- Department of Psychology, University of Alberta, Edmonton, AB, Canada

While the effects of reward, affect, and motivation on learning have each developed into their own fields of research, they largely have been investigated in isolation. As all three of these constructs are highly related, and use similar experimental procedures, an important advance in research would be to consider the interplay between these constructs. Here we first define each of the three constructs, and then discuss how they may influence each other within a common framework. Finally, we delineate several sources of evidence supporting the framework. By considering the constructs of reward, affect, and motivation within a single framework, we can develop a better understanding of the processes involved in learning and how they interplay, and work toward a comprehensive theory that encompasses reward, affect, and motivation.

Introduction

Reward, affect, and motivation are three highly related constructs, but are often investigated in isolation despite using similar experimental procedures. As an example, contextual fear conditioning is a common task used to investigate affective learning in rats. In this task, a rat is kept in a two-compartment chamber. Over time the rat gradually learns that when a tone is presented, the floor of one compartment of the chamber will deliver an electric shock. With respect to the affect construct, this task is described as eliciting a fear-related response (i.e., fleeing or freezing) when the tone occurs. However, this procedure is nearly identical to a conditioned place preference task, where the dependent measure is the proportion of time that the rat spends in each compartment, after the rat has been conditioned with shocks. Here the task is described as measuring motivational effects, e.g., approach vs. avoidance. Furthermore, it is important to note that an integral aspect of the task is the use of shocks, an aversive stimulus with respect to the reward construct, to elicit learning.

While it is possible to disentangle these constructs experimentally, they often coincide in real-world experiences and can converge and conflict in important ways. Here we briefly define each construct and discuss how they function in concert, as described by the proposed SIMON framework. By discussing the interplay of the constructs, we can lay the foundation for the development of a common theory encompassing reward, affect, and motivation.

Defining the Constructs

Before we can discuss interactions of reward, affect, and motivation, it is important to operationalize the three constructs independently. As the descriptions below are relatively brief, readers should refer to the cited reviews for further details.

Reward

Reward is the most clearly defined of the three constructs, particularly when viewed from an operant conditioning perspective: an organism learns that by responding (R) to a stimulus (S), an outcome (O) is presented. The outcome can either be appetitive (i.e., elicit an approach response), such as food, or aversive (i.e., elicit avoidance), such as an electric shock. Thus, in this simplified form, reward-based learning can be described as a S–R–O association (i.e., operant conditioning). To clarify the reward construct, it is important to note that while often used interchangeably, “reward” and “reinforcement” do not have identical meanings (White, 1989; Berridge and Robinson, 1998). Specifically, while rewards (i.e., appetitive stimuli) elicit approach responses, reinforcement should be used to describe the increase of responses to a stimulus. For further clarity, we will define “reward” as the construct itself, where outcomes can be either “appetitive” or “aversive,” rather than refer to outcomes as being “rewarding” (i.e., appetitive). It is also important to note that many different types of stimuli can be appetitive, such as monetary, food, and erotic stimuli (see Sescousse et al., 2013 for a review), while aversive stimuli usually are either monetary losses or electric shocks. Kirsch et al. (2004) provide a comprehensive discussion on the role of reward-based learning (specifically, conditioning) on cognition.

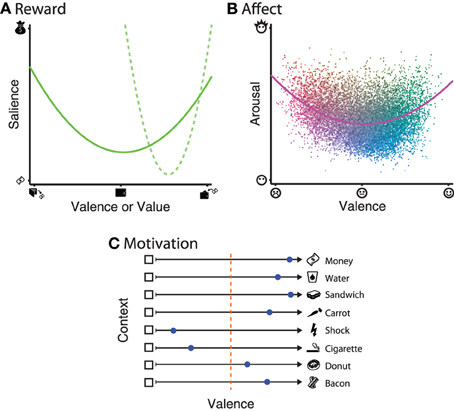

It is unarguable that rewards can vary along at least one dimension: value; when gains vs. losses are included, this dimension is often referred to as reward valence. However, recent findings suggest that reward is coded in the brain along two orthogonal dimensions: valence and salience (Figure 1A). Briefly, reward valence ranges from appetitive to aversive. Reward salience is defined by a quadratic relationship relative to value, such that the highest and lowest values experienced are highest in salience. Separable coding of reward salience is mainly supported by neural activations that correlate with the magnitude of outcomes, independent of the valence (Zink et al., 2004; Jensen et al., 2007; Cooper and Knutson, 2008; Litt et al., 2011). Recent behavioral studies have supported the notion of reward salience, even when the range of experienced outcomes is constrained to only the gain or loss domain (Ludvig et al., 2013; Madan and Spetch, 2010; e.g., Figure 1A, dashed line).
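The quadratic relationship between value and salience can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `reward_salience`, the squared-distance form, and the use of the mean of the experienced values as the reference midpoint are hypothetical choices, not taken from the cited studies.

```python
def reward_salience(value, midpoint=0.0):
    """Hypothetical salience: squared distance of a value from a reference midpoint."""
    return (value - midpoint) ** 2

# Outcomes spanning losses and gains: both extremes are equally salient.
outcomes = [-40, -20, 0, 20, 40]
salience = [reward_salience(v) for v in outcomes]

# Outcomes constrained to the gain domain (cf. the dashed line in Figure 1A).
# Assumption: the midpoint is the mean of the values actually experienced.
gains = [0, 20, 40]
gain_midpoint = sum(gains) / len(gains)
gain_salience = [reward_salience(v, gain_midpoint) for v in gains]
```

Under these assumptions, the lowest and highest experienced values receive the highest salience in both cases, while valence continues to vary monotonically with value.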

Figure 1. Illustrations of the dimensionality of each of the three constructs. (A) Reward: the solid line denotes that reward salience has a quadratic relationship relative to reward valence. Recent results have also shown that this relationship can be observed even when the range of values experienced is constrained to either the gain or loss domain, as in the dashed line. (B) Affect: each dot represents a word from a large normative database (Warriner et al., 2013). Dot color varies between blue–yellow (based on arousal) and red–green (based on valence), with variability in luminance added to improve item discriminability. The solid line represents a quadratic model fit to all words in the database. (C) Motivation: approach–avoidance tendencies are context-dependent, based not only on the stimulus itself, but also on the current state of the individual (e.g., thirst, hunger) and inter-individual differences (e.g., economic status, smoker, dieter, vegetarian). Individual preferences within a given context are denoted by the blue dots, and range from approach (dot closer to the stimulus) to avoid (dot closer to the empty box). The orange dotted line denotes the point of indifference.

Neuroimaging results suggest that reward-related activations in the medial orbitofrontal cortex, rostral anterior cingulate cortex, and dorsal posterior cingulate correspond to reward value, while activations in the dorsal anterior cingulate cortex and insula correspond to reward salience (Litt et al., 2011). Activations in the ventral striatum, and particularly the nucleus accumbens, correspond to a mixture of reward value and salience, with salience playing a stronger role. There is also evidence of dissociable brain regions associated with gain vs. loss outcomes (Yacubian et al., 2006; Eppinger et al., 2013).

Affect

Affect can be defined as the conscious experience of emotions (Panksepp, 2000; Yik et al., 2011), though affect and emotion are often used synonymously [also see Kleinginna and Kleinginna (1981a), Lang (2010), and Izard (2010), for in-depth definitions of emotion]. To describe the affective space, Russell (1980) proposed the circumplex model of affect (also see Yik et al., 2011), which suggests that affect is comprised of two orthogonal dimensions: valence and arousal. Valence ranges from unpleasant to pleasant, while arousal ranges from bored to excited. The orthogonality of these two dimensions is also supported by neuroimaging results (Kensinger and Corkin, 2004; Posner et al., 2009): the valence-specific network was associated with the insula, the arousal-specific network with the medial prefrontal cortex and posterior cingulate, while both networks included the dorsolateral prefrontal cortex, anterior cingulate cortex, and the amygdala.

Within an experimental setting, words and images are used to elicit affect within the participant, most commonly using the International Affective Picture System (IAPS; Lang et al., 2008) and Affective Norms for English Words (ANEW; Bradley and Lang, 1999; but also see Warriner et al., 2013) databases. While affective states fill the complete circumplex space, stimuli often show a U- or boomerang-shaped distribution (Lang et al., 1998; e.g., Figure 1B).

Motivation

Here we define motivation primarily based on the hedonistic principle (e.g., Young, 1959; White, 1989): motivation is the process of maximizing pleasure (i.e., appetitive, positive affect) and minimizing pain (i.e., aversive, negative affect). The ends of this motivational valence continuum correspond to approach and avoidance behavior [see Young (1959), Kleinginna and Kleinginna (1981b), and Elliot and Covington (2001), for detailed definitions of motivation]. Based on this definition, it is clear that motivation is closely related to reward and affect. Additionally, motivation is intrinsically defined in terms of motor movements that either approach or avoid a stimulus. This perspective also overlaps highly with the idea of motor affordances (Gibson, 1977; Cisek and Kalaska, 2010).

It is also important to note that motivation incorporates contextual information that may influence stimulus processing outside of what could be explained by reward and affective processing alone (e.g., Berridge, 2004; see Figure 1C). As an example, money can be used as an appetitive outcome, but an individual's drive to obtain money is not always constant. A simpler example would be one's drive for food and water: both are appetitive, but an individual is not always hungry or thirsty, and when over-satiated may temporarily not want to consume more, so that food or water is not approached. Other stimuli may be generally aversive, such as electric shocks; stimuli that reliably predict shocks will lead to avoidance behavior after sufficient learning. However, there are individual differences in approach–avoidance motivation. For instance, smoking is highly aversive to many, but considered appetitive to some. Foods like donuts and bacon can demonstrate even more inter-individual variability: despite being foods and thus generally appetitive, an individual who is dieting should avoid donuts and a vegetarian would actively avoid the bacon.

Summary

Reward, affect, and motivation are related constructs. However, all constructs are discrete and dissociable: affect is largely an internal state, whereas a reward is related to an outcome to be obtained (i.e., a goal) or avoided. While obtaining the outcome, e.g., food, likely also leads to a positive affective state, these are two dissociable processes, such as in the case of over-satiation. The food is still appetitive, but due to over-satiation, motivation is attenuated and the resulting affective state changes accordingly. Despite the strong intrinsic relationships between the constructs, they do not co-vary in all situations. Studying these instances of disagreement is critical for the development of a comprehensive theory that encompasses all three constructs.

The SIMON Framework

While prior studies have discussed portions of their interplay, all three have not been discussed within the same framework. The SIMON framework serves this purpose by delineating a simple framework where the constructs are considered in concert. Here we propose the structure of the SIMON framework and describe prior evidence supporting portions of the framework:

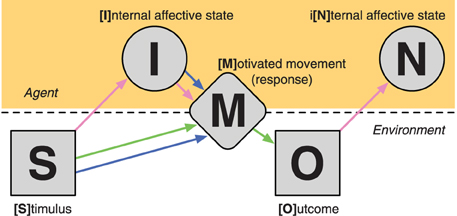

The proposed SIMON framework suggests that after a [S]timulus is presented, it leads to an [I]nternal affective state. The stimulus and the elicited affective state both influence the resulting [M]otivated movement (i.e., response) where the individual responds to the stimulus. Based on the movement (or lack thereof), an [O]utcome occurs that then also leads to an i[N]ternal affective state. See Figure 2 for a graphical representation of the SIMON framework. Here we have the three constructs overlaid such that the reward construct is described by the Stimulus–Movement–Outcome (S–M–O) portion of the framework, which is a S–R–O association, i.e., operant conditioning. The affect construct is denoted by the S–I(–M) and O–N portions of the framework, where presented stimuli, including the outcome itself, elicit an affective state, and this state can also lead to a response. The motivation construct is described by the S–I–M portion of the framework, where a stimulus and its resulting affective state both lead to a motivated movement.

Figure 2. Illustration of the processes involved in the SIMON framework. Line arrows correspond to the portions of the framework that are intrinsic to each of the three component constructs: reward (green), affect (pink), and motivation (blue).
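The mapping of constructs onto portions of the framework can be written down as a small data structure. The sketch below is a hypothetical encoding for illustration only: the names `SIMON_COMPONENTS`, `CONSTRUCT_PATHS`, and `constructs_involving` are assumptions, and the paths simply restate the S–M–O, S–I(–M), O–N, and S–I–M portions described above.

```python
# Components of the SIMON framework, keyed by their letter.
SIMON_COMPONENTS = {
    "S": "Stimulus",
    "I": "Internal affective state (elicited by the stimulus)",
    "M": "Motivated movement",
    "O": "Outcome",
    "N": "iNternal affective state (elicited by the outcome)",
}

# Portions of the framework intrinsic to each construct, as described in the text.
CONSTRUCT_PATHS = {
    "reward": [("S", "M", "O")],
    "affect": [("S", "I", "M"), ("O", "N")],  # the final M of S-I(-M) is optional
    "motivation": [("S", "I", "M")],
}

def constructs_involving(component):
    """Return the constructs whose portion of the framework includes a component."""
    return sorted(
        name
        for name, paths in CONSTRUCT_PATHS.items()
        if any(component in path for path in paths)
    )

shared_by_all = constructs_involving("M")   # the motivated movement
affect_only = constructs_involving("N")     # the post-outcome affective state
```

In this encoding the motivated movement is the one component shared by all three constructs, which is one way to see why the constructs are difficult to isolate experimentally.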

Evidence for Reward → Motivation: Can Reward Learning Lead to Motivated Movements?

Learning that a specific action leads to a reward-related outcome is the basis of operant conditioning and much of animal learning as a field (e.g., Balleine and Dickinson, 1998). Additionally, in certain circumstances, this type of learning can lead to the development of superstitious behaviors in both human and non-human animals (e.g., “lucky numbers”; Brown, 1986). An illustration of this type of learning is outlined by Cardinal et al. (2002, Figure 2), where the behaviors resulting from learning a stimulus–outcome association are motivated movements, such as lever-pressing and locomotor approach.

Consider two theoretical perspectives that bear on the relation of these two constructs. From a reinforcement learning perspective, an agent's goal is to obtain as much reward (i.e., appetitive stimuli) as possible, by learning from the outcomes of prior actions (Woergoetter and Porr, 2008). In other words, seeking of rewards drives motivated movements, a notion supported by a number of studies (e.g., Hikosaka et al., 2008), and supporting the S–M–O portion of the SIMON framework. This rationale is also supported by the motor chauvinist perspective: the purpose of the brain is to produce movements, and sequences of motor actions are constructed to achieve high-level goals, such as acquiring appetitive outcomes (Wolpert et al., 2001). Despite markedly different theoretical backgrounds, both perspectives suggest that at a basic level, motor movements are important to acquiring appetitive outcomes and that learning from reward-related experiences can reinforce the production of preceding movements.
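As a minimal sketch of learning from the outcomes of prior actions, the following Python example updates an estimated value for each action with a simple delta rule and then selects the action with the highest estimate. The delta rule is one common choice swapped in here for illustration; the text does not commit to a particular learning algorithm, and the action names, outcomes, and learning rate are hypothetical.

```python
def update_value(value, outcome, learning_rate=0.1):
    """Delta-rule update: move the estimate toward the observed outcome."""
    return value + learning_rate * (outcome - value)

def learn_action_values(history, learning_rate=0.1):
    """Estimate the value of each action from a sequence of (action, outcome) pairs."""
    values = {}
    for action, outcome in history:
        current = values.get(action, 0.0)  # unseen actions start at zero
        values[action] = update_value(current, outcome, learning_rate)
    return values

# Hypothetical history: lever presses are usually followed by an appetitive outcome.
history = [
    ("press_lever", 1.0),
    ("wait", 0.0),
    ("press_lever", 1.0),
    ("press_lever", 0.0),
]
values = learn_action_values(history, learning_rate=0.5)
preferred_action = max(values, key=values.get)
```

Here the movement that was followed by appetitive outcomes ends up with the higher estimated value and is therefore the one selected, which corresponds to the S–M–O portion of the framework.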

Evidence for Affect → Motivation: Can Affect Drive Motivated Movements?

Stimuli can often elicit affective states, and the combination of the stimuli and affective states can lead to a motor response (I–M portion of the SIMON framework). Well-known examples of this phenomenon are reflex potentiation (fight-or-flight response) and freezing; affective states can also influence physiological measures such as pupil dilation, heart rate (e.g., fear bradycardia; Campbell et al., 1997), and skin conductance (Bradley et al., 2008). Furthermore, research has shown that a variety of mammals use similar facial expressions (i.e., movements of the facial muscles) as humans to express positive/appetitive and negative/aversive states (e.g., Berridge, 2004). Lang and Bradley (2010) discuss evidence that affective stimuli can lead to greater motor potentiation, as measured by neural activation in supplementary motor area, among other brain regions, indicating higher-level cortical involvement, rather than only reflexive motor potentiation due to affect. Also see Carver (2006) and Harmon-Jones et al. (2013) for further discussions of the coupling between affect and motivation/motor-actions.

Evidence for Reward → Affect: Can Rewards Lead to Affective States?

Rewards and affect both have important influences on learning, but are often discussed in isolation and use different procedures: studies of reward learning usually use a conditioning-based approach, where the task is learned through trial-and-error with the goal of obtaining the maximal cumulative reward. Studies using affective stimuli usually simply present the affective words/images, though there are instances where affect is conditioned (e.g., Mather and Knight, 2008; Schwarze et al., 2012). Nonetheless, one would expect that earning an appetitive stimulus should elicit positive affect, whereas a negative outcome, such as a shock, is both aversive and elicits negative affect. This notion is also suggested by Rolls (2000), and would comprise the O–N portion of the framework. Results from Dixon et al. (2010) also support this idea, where physiological measures of arousal were greater for appetitive outcomes (also see Bechara et al., 2005). Brown (1986) also suggests that arousal can play an important role in problem gambling.

Another line of research supporting the influence of rewards on affect is decision affect theory (Mellers et al., 1997). Here participants were presented with pie charts denoting probabilities of either gaining/losing a specified amount of money or receiving an outcome of $0. After each trial, participants were asked to provide a rating of the emotional state on a Likert scale, ranging from extremely elated (+50) to extremely disappointed (−50), and affective responses were found to follow directly from predictions based on the reward outcome obtained. According to this line of research, in the event of a choice, “elation” is experienced if the outcome exceeds expectations, but “disappointment” is experienced if the outcome is worse than expected (Bell, 1985). If feedback on the forgone/unchosen option is also presented, “regret” is experienced if the chosen option is worse than the unchosen option's outcome, while “relief” is experienced when the chosen outcome led to the better outcome (Bell, 1982; Bryne, 2002). In other words, affective responses are operationalized based on outcomes experienced.
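The four outcome-based emotion labels can be expressed as simple comparisons. The sketch below covers only the qualitative labels described above, not the quantitative predictions of decision affect theory; the function name `outcome_emotions` and the choice to return no label when values are equal are assumptions made for illustration.

```python
def outcome_emotions(obtained, expected, forgone=None):
    """Label the emotions associated with an outcome.

    obtained: value of the outcome received from the chosen option
    expected: value the individual expected from the chosen option
    forgone:  outcome of the unchosen option, if feedback on it was shown
    """
    labels = []
    # Comparison with expectations: elation vs. disappointment.
    if obtained > expected:
        labels.append("elation")
    elif obtained < expected:
        labels.append("disappointment")
    # Comparison with the unchosen option: relief vs. regret.
    if forgone is not None:
        if obtained < forgone:
            labels.append("regret")
        elif obtained > forgone:
            labels.append("relief")
    return labels

better_than_expected = outcome_emotions(obtained=10, expected=5)
worse_on_both = outcome_emotions(obtained=0, expected=5, forgone=10)
```

Keeping the two comparisons separate mirrors the text: expectations determine elation or disappointment, while feedback on the forgone option determines relief or regret.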

Evidence Supporting the Framework

When the relations between reward, affect, and motivation are considered together, a myriad of behavioral findings support the notion of a single framework. Here we outline a handful of such examples, along with their underlying principles as outlined by the SIMON framework.

Affective Stimuli and Motor Movements

One of the most straightforward sources of evidence for the SIMON framework is that positive stimuli should be more congruent with an approach response, while negative stimuli should be more congruent with an avoid response. In lexical decision, where participants are presented with a letter-string that may or may not be a word and must judge its lexicality, participants have been shown to respond relatively faster to positive words and relatively slower to negative words, when compared to neutral words (Estes and Adelman, 2008). Furthermore, in a go/no-go task, participants were slower to respond to images of fearful faces relative to happy faces (Hare et al., 2005). In both instances, participants exhibited a tendency to avoid negative stimuli, in conflict with the instruction to respond to (i.e., approach) the stimuli. However, Hare et al. (2005) also reported that when participants are asked to inhibit their responses (i.e., no-go trials), false alarm rates are higher for happy than fearful faces as it is more difficult to suppress the approach response to the positive stimuli. Through similar principles, it has been suggested that approach/avoidance movements can provide information about an animal's affective preferences (Kirkden and Pajor, 2006).

Motor Movements and Rewards

Another interesting line of evidence for the SIMON framework is the intrinsic relationship between motor actions and rewards. One example is that motor movements should optimize the rewards earned in a task. Consider a reaching task where there are two target areas, each associated with a different reward value, e.g., similar to a dartboard (see Trommershäuser et al., 2008; Cisek, 2012). Participants have been found to aim for a point that would maximize their earnings and minimize potential losses, while also accounting for variability in precision.
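The trade-off between earnings, losses, and motor variability can be illustrated with a simplified expected-gain calculation. The sketch below is a one-dimensional simplification with hypothetical payoffs and region boundaries, not the procedure of the cited studies: movement endpoints are assumed to be normally distributed around the aim point, a reward region lies next to a penalty region, and the best aim point is found by a grid search.

```python
import math

def normal_cdf(x, mean, sd):
    """Cumulative distribution function of a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))

def expected_gain(aim, sd, reward=(0.0, 1.0, 100.0), penalty=(-1.0, 0.0, -500.0)):
    """Expected payoff of aiming at `aim` with endpoint noise `sd`.

    Each region is (lower edge, upper edge, payoff); all values are hypothetical.
    """
    total = 0.0
    for low, high, payoff in (reward, penalty):
        p_land = normal_cdf(high, aim, sd) - normal_cdf(low, aim, sd)
        total += payoff * p_land
    return total

def best_aim(sd, step=0.01):
    """Grid search for the aim point with the highest expected gain."""
    candidates = [i * step for i in range(-100, 201)]  # aim points from -1.0 to 2.0
    return max(candidates, key=lambda aim: expected_gain(aim, sd))

precise_aim = best_aim(sd=0.15)
variable_aim = best_aim(sd=0.30)
```

In this sketch the best aim point lies beyond the center of the reward region, on the side away from the penalty, and it shifts further away as motor variability increases.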

A second interaction between motor movements and rewards can be observed in the influence of medication to treat motor dysfunction on reward-related behavior. It is known that Parkinson's patients taking dopamine agonists are more likely to develop problem gambling behavior (Dodd et al., 2005), a result found to generalize to other disorders also treated with dopamine agonists (e.g., d'Orsi et al., 2011). A likely cause for this interaction is that even though both gain outcomes and motor movements normally rely on the phasic release of dopamine (e.g., Steinberg et al., 2013), dopamine agonists increase the tonic level of dopamine, still leaving a dysregulation of the dopamine system.

Conflict in Affect and Reward to Improve Executive Control

Another source of support for the SIMON framework is the use of affective stimuli that conflict with a reward. For instance, cigarette packages in North America often depict graphical images of the negative consequences of smoking, and have been shown to help individuals quit smoking (Farrelly et al., 2012). Extending this to food stimuli, Veling et al. (2011) presented participants with palatable food images in a go/no-go task, but preceded the food images with affective faces. Images of fearful faces were found to increase response time, suggesting that the conflict between reward and affect can be used to increase impulse control. Hollands et al. (2011) used a similar approach, but instead conditioned individuals to form associations between snack images and aversive bodily images (e.g., obese individuals) and found that the intervention improved healthy food choices relative to a control group. Similar interventions have also been used to treat phobias (e.g., Hekmat and Vanian, 1971).

Conclusion

In the laboratory we aim to isolate a single construct and research it experimentally. However, in the real world learning is influenced by a multitude of concurrent effects that can be closely inter-related. By considering the constructs of reward, affect, and motivation within a single framework, we can work toward a better understanding of the processes involved in learning and provide an opportunity to refine the definitions of each of the component constructs. Finally, by considering the interplay of these three constructs, several current lines of research can be predicted in a common framework, and we can begin to work toward a comprehensive theory that encompasses reward, affect, and motivation.

Conflict of Interest Statement

The author declares that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

This work was partly funded by an Alexander Graham Bell Canada Graduate Scholarship (doctoral-level) from the Natural Sciences and Engineering Research Council of Canada as well as doctoral scholarships from the Alberta Gambling Research Institute. I would like to thank Marcia Spetch, Craig Chapman, and Elizabeth Kensinger for feedback on an earlier draft of the manuscript. I would also like to thank the following designers from The Noun Project (http://www.thenounproject.com) for the icons used in Figure 1: Ben Rex Furneaux (wallet), Tom Glass Jr. (sandwich), Jacob Jalton (bacon, doughnut), Karthick Nagajaran (money), Sergey Shmidt (money), Kate Vogel (carrot), Hakan Yalcin (wallet), along with a number of unknown designers (electrical shock warning, smoking, water), as well as GoSquared Ltd. (http://www.gosquared.com) for the face icons used in Figure 1B.

References

Balleine, B. W., and Dickinson, A. (1998). Goal-directed instrumental action: contingency and incentive learning and their cortical substrates. Neuropharmacology 37, 407–419. doi: 10.1016/S0028-3908(98)00033-1

Bechara, A., Damasio, H., Tranel, D., and Damasio, A. R. (2005). The Iowa Gambling Task and the somatic marker hypothesis: some questions and answers. Trends Cogn. Sci. 9, 159–162. doi: 10.1016/j.tics.2005.02.002

Bell, D. E. (1982). Regret in decision making under uncertainty. Oper. Res. 30, 961–981. doi: 10.1287/opre.30.5.961

Bell, D. E. (1985). Disappointment in decision making under uncertainty. Oper. Res. 33, 1–27. doi: 10.1287/opre.33.1.1

Berridge, K. C. (2004). Motivation concepts in behavioral neuroscience. Physiol. Behav. 81, 179–209. doi: 10.1016/j.physbeh.2004.02.004

Berridge, K. C., and Robinson, T. E. (1998). What is the role of dopamine in reward: hedonic impact, reward learning, or incentive salience? Brain Res. Rev. 28, 209–369. doi: 10.1016/S0165-0173(98)00019-8

Bradley, M. M., and Lang, P. J. (1999). “Affective norms for English words (ANEW): stimuli, instruction manual and affective ratings,” Technical Report C-1 (Gainesville, FL: University of Florida).

Bradley, M. M., Miccoli, L., Escrig, M. A., and Lang, P. J. (2008). The pupil as a measure of emotional arousal and autonomic activation. Psychophysiology 45, 602–607. doi: 10.1111/j.1469-8986.2008.00654.x

Brown, R. I. F. (1986). Arousal and sensation-seeking components in the general explanation of gambling and gambling addictions. Int. J. Addict. 21, 1001–1016.

Bryne, R. M. J. (2002). Mental models and counterfactual thoughts about what might have been. Trends Cogn. Sci. 6, 426–431. doi: 10.1016/S1364-6613(02)01974-5

Campbell, B. A., Wood, G., and McBride, T. (1997). “Origins of orienting and defense responses: an evolutionary perspective,” in Attention and Orienting: Sensory and Motivational Processes, eds P. J. Lang, R. F. Simmons, and M. T. Balaban, (Hillsdale, NJ: Lawrence Erlbaum Associates), 41–67.

Cardinal, R. N., Parkinson, J. A., Hall, J., and Everitt, B. J. (2002). Emotion and motivation: the role of the amygdala, ventral striatum, and prefrontal cortex. Neurosci. Biobehav. Rev. 26, 321–352. doi: 10.1016/S0149-7634(02)00007-6

Carver, C. S. (2006). Approach, avoidance, and the self-regulation of affect and action. Motiv. Emot. 30, 105–110. doi: 10.1007/s11031-006-9044-7

Cisek, P. (2012). Making decisions through a distributed consensus. Curr. Opin. Neurobiol. 22, 927–936. doi: 10.1016/j.conb.2012.05.007

Cisek, P., and Kalaska, J. F. (2010). Neural mechanisms for interacting with a world full of action choices. Annu. Rev. Neurosci. 33, 269–298. doi: 10.1146/annurev.neuro.051508.135409

Cooper, J. C., and Knutson, B. (2008). Valence and salience contribute to nucleus accumbens activation. Neuroimage 39, 538–547. doi: 10.1016/j.neuroimage.2007.08.009

Dixon, M. J., Harrigan, K. A., Sandhu, R., Collins, K., and Fugelsang, J. A. (2010). Losses disguised as wins in modern multi-line video slot machines. Addiction 105, 1819–1824. doi: 10.1111/j.1360-0443.2010.03050.x

Dodd, M. L., Klos, K. J., Bower, J. H., Geda, Y. E., Josephs, K. A., and Ahlskog, J. E. (2005). Pathological gambling caused by drugs used to treat Parkinson disease. Arch. Neurol. 62, 1377–1381. doi: 10.1001/archneur.62.9.noc50009

d'Orsi, G., Demaio, V., and Specchio, L. M. (2011). Pathological gambling plus hypersexuality in Restless Legs Syndrome: a new case. Neurol. Sci. 32, 707–709. doi: 10.1007/s10072-011-0605-5

Elliot, A. J., and Covington, M. V. (2001). Approach and avoidance motivation. Educ. Psychol. Rev. 13, 73–92. doi: 10.1023/A:1009009018235

Eppinger, B., Schuck, N. W., Nystrom, L. E., and Cohen, J. D. (2013). Reduced striatal responses to reward prediction errors in older compared with younger adults. J. Neurosci. 33, 9905–9912. doi: 10.1523/JNEUROSCI.2942-12.2013

Estes, Z., and Adelman, J. S. (2008). Automatic vigilance for negative words in lexical decision and naming: comment on Larsen, Mercer, and Balota (2006). Emotion 8, 441–444. doi: 10.1037/1528-3542.8.4.441

Farrelly, M. C., Duke, J. C., Davis, K. C., Nonnemaker, J. M., Kamyab, K., Willett, J. G., et al. (2012). Promotion of smoking cessation with emotional and/or graphic antismoking advertising. Am. J. Prev. Med. 43, 475–482. doi: 10.1016/j.amepre.2012.07.023

Gibson, J. J. (1977). “The theory of affordances,” in Perceiving, Acting, and Knowing: Toward an Ecological Psychology, eds R. Shaw and J. Bransford (Hillsdale, NJ: Lawrence Erlbaum), 67–82.

Hare, T. A., Tottenham, N., Davidson, M. C., Glover, G. H., and Casey, B. J. (2005). Contributions of amygdala and striatal activity in emotion regulation. Biol. Psychiatry 57, 624–632. doi: 10.1016/j.biopsych.2004.12.038

Harmon-Jones, E., Gable, P. A., and Price, T. F. (2013). Does negative affect always narrow and positive affect always broaden the mind? Considering the influence of motivational intensity on cognitive scope. Curr. Dir. Psychol. 22, 301–307. doi: 10.1177/0963721413481353

Hekmat, H., and Vanian, D. (1971). Behavior modification through covert semantic desensitization. J. Consult Clin. Psychol. 36, 248–251. doi: 10.1037/h0030734

Hikosaka, O., Bromberg-Martin, E., Hong, S., and Matsumoto, M. (2008). New insights on the subcortical representation of reward. Curr. Opin. Neurobiol. 18, 203–208. doi: 10.1016/j.conb.2008.07.002

Hollands, G. J., Prestwich, A., and Marteau, T. M. (2011). Using aversive images to enhance healthy food choices and implicit attitudes: an experimental test of evaluative conditioning. Health Psychol. 30, 195–203. doi: 10.1037/a0022261

Izard, C. E. (2010). The many meanings/aspects of emotion: definitions, functions, activation, and regulation. Emot. Rev. 2, 363–370. doi: 10.1177/1754073910374661

Jensen, J., Smith, A. J., Willeit, M., Crawley, A. P., Mikulis, D. J., Vitcu, I., et al. (2007). Separate brain regions code for salience vs. valence during reward prediction in humans. Hum. Brain Mapp. 28, 294–302. doi: 10.1002/hbm.20274

Kensinger, E. A., and Corkin, S. (2004). Two routes to emotional memory: distinct neural processes for valence and arousal. Proc. Natl. Acad. Sci. U.S.A. 101, 3310–3315. doi: 10.1073/pnas.0306408101

Kirkden, R. D., and Pajor, E. A. (2006). Using preference, motivation and aversion tests to ask scientific questions about animals' feelings. Appl. Anim. Behav. Sci. 100, 29–47. doi: 10.1016/j.applanim.2006.04.009

Kirsch, I., Lynn, S. J., Vigorito, M., and Miller, R. R. (2004). The role of cognition in classical and operant conditioning. J. Clin. Psychol. 60, 369–392. doi: 10.1002/jclp.10251

Kleinginna, P. R. Jr., and Kleinginna, A. M. (1981a). A categorized list of emotion definitions, with suggestions for a consensual definition. Motiv. Emot. 5, 345–379. doi: 10.1007/BF00992553

Kleinginna, P. R. Jr., and Kleinginna, A. M. (1981b). A categorized list of motivation definitions, with a suggestion for a consensual definition. Motiv. Emot. 5, 263–291. doi: 10.1007/BF00993889

Lang, P. J. (2010). Emotion and motivation: toward consensus definitions and a common research purpose. Emot. Rev. 2, 229–233. doi: 10.1177/1754073910361984

Lang, P. J., and Bradley, M. M. (2010). Emotion and the motivational brain. Biol. Psychol. 84, 437–450. doi: 10.1016/j.biopsycho.2009.10.007

Lang, P. J., Bradley, M. M., and Cuthbert, B. N. (1998). Emotion, motivation, and anxiety: brain mechanisms and psychophysiology. Biol. Psychiatry 44, 1248–1263. doi: 10.1016/S0006-3223(98)00275-3

Lang, P. J., Bradley, M. M., and Cuthbert, B. N. (2008). “International affective picture system (IAPS): affective ratings of pictures and instruction manual,” Technical Report A-8 (Gainesville, FL: University of Florida).

Litt, A., Plassmann, H., Shiv, B., and Rangel, A. (2011). Dissociating valuation and saliency signals during decision-making. Cereb. Cortex 21, 95–102. doi: 10.1093/cercor/bhq065

Ludvig, E. A., Madan, C. R., and Spetch, M. L. (2013). Extreme outcomes sway risky decisions from experience. J. Behav. Decis. Making. doi: 10.1002/bdm.1792

Madan, C. R., and Spetch, M. L. (2010). Is the enhancement of memory due to reward driven by value or salience? Acta Psychol. 139, 343–349. doi: 10.1016/j.actpsy.2011.12.010

Mather, M., and Knight, M. (2008). The emotional harbinger effect: poor context memory for cues that previously predicted something arousing. Emotion 8, 850–860. doi: 10.1037/a0014087

Mellers, B. A., Schwartz, A., Ho, K., and Ritov, I. (1997). Decision affect theory: emotional reactions to the outcomes of risky options. Psychol. Sci. 8, 423–429. doi: 10.1111/j.1467-9280.1997.tb00455.x

Panksepp, J. (2000). “Affective consciousness and the instinctual motor system: the neural sources of sadness and joy,” in The Caldron of Consciousness: Motivation, Affect, and Self-Organization, eds R. D. Ellis and N. Newton (Amsterdam: John Benjamins), 27–54.

Posner, J., Russell, J. A., Gerber, A., Gorman, D., Colibazzi, T., Yu, S., et al. (2009). The neurophysiological bases of emotion: an fMRI study of the affective circumplex using emotion-denoting words. Hum. Brain Mapp. 30, 883–895. doi: 10.1002/hbm.20553

Rolls, E. T. (2000). Précis of the brain and emotion. Behav. Brain Sci. 23, 177–234. doi: 10.1017/S0140525X00002429

Russell, J. A. (1980). A circumplex model of affect. J. Pers. Soc. Psychol. 39, 1161–1178. doi: 10.1037/h0077714

Schwarze, U., Bingel, U., and Sommer, T. (2012). Event-related nociceptive arousal enhances memory consolidation for neutral scenes. J. Neurosci. 32, 1481–1487. doi: 10.1523/JNEUROSCI.4497-11.2012

Sescousse, G., Caldú, X., Segura, B., and Dreher, J.-C. (2013). Processing of primary and secondary rewards: a quantitative meta-analysis and review of human functional neuroimaging studies. Neurosci. Biobehav. Rev. 37, 681–696. doi: 10.1016/j.neubiorev.2013.02.002

Steinberg, E. E., Keiflin, R., Boivin, J. R., Witten, I. B., Deisseroth, K., and Janak, P. H. (2013). A causal link between prediction errors, dopamine neurons and learning. Nat. Neurosci. 16, 966–972. doi: 10.1038/nn.3413

Trommershäuser, J., Maloney, L. T., and Landy, M. S. (2008). Decision making, movement planning and statistical decision theory. Trends Cogn. Sci. 12, 291–297. doi: 10.1016/j.tics.2008.04.010

Veling, H., Aarts, H., and Stroebe, W. (2011). Fear signals inhibit impulsive behavior toward rewarding food objects. Appetite 56, 643–648. doi: 10.1016/j.appet.2011.02.018

Warriner, A. B., Kuperman, V., and Brysbaert, M. (2013). Norms of valence, arousal, and dominance for 13,915 English lemmas. Behav. Res. Methods. doi: 10.3758/s13428-012-0314-x. [Epub ahead of print].

White, N. M. (1989). Reward or reinforcement: what's the difference? Neurosci. Biobehav. Rev. 13, 181–186. doi: 10.1016/S0149-7634(89)80028-4

Woergoetter, F., and Porr, B. (2008). Reinforcement learning. Scholarpedia J. 3:1448. doi: 10.4249/scholarpedia.1448

Wolpert, D. M., Ghahramani, Z., and Flanagan, J. R. (2001). Perspectives and problems in motor learning. Trends Cogn. Sci. 5, 487–494. doi: 10.1016/S1364-6613(00)01773-3

Yacubian, J., Gläscher, J., Schroeder, K., Sommer, T., Braus, D. F., and Büchel, C. (2006). Dissociable systems for gain- and loss-related value predictions and errors of prediction in the human brain. J. Neurosci. 26, 9530–9537. doi: 10.1523/JNEUROSCI.2915-06.2006

Yik, M., Russell, J. A., and Steiger, J. H. (2011). A 12-point circumplex structure of core affect. Emotion 11, 705–731. doi: 10.1037/a0023980

Young, P. T. (1959). The role of affective processes in learning and motivation. Psychol. Rev. 66, 104–125. doi: 10.1037/h0045997

Keywords: reward, affect, motivation, movements, emotion, context, arousal, valence

Citation: Madan CR (2013) Toward a common theory for learning from reward, affect, and motivation: the SIMON framework. Front. Syst. Neurosci. 7:59. doi: 10.3389/fnsys.2013.00059

Received: 11 July 2013; Accepted: 12 September 2013;

Published online: 07 October 2013.

Edited by:

Dave J. Hayes, University of Toronto, Canada

Reviewed by:

Raúl G. Paredes, National University of Mexico, Mexico

Nicole El Massioui, National Center of Scientific Research, France

Copyright © 2013 Madan. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) or licensor are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Christopher R. Madan, Department of Psychology, University of Alberta, P-217 Biological Sciences Building, Edmonton, AB T6G 2E9, Canada. e-mail: cmadan@ualberta.ca

Christopher R. Madan