- Department of Psychology, UCLA, USA

In four experiments we assessed the effect of systemic amphetamine on the ability of a stimulus paired with reward, and of a stimulus not paired with reward, to support instrumental conditioning; i.e., we trained rats to press two levers, one followed by a stimulus that had been trained in a predictive relationship with a food outcome and the other by a stimulus unpaired with that outcome. Here we show, in general accord with predictions from the dopamine re-selection hypothesis [Redgrave and Gurney (2006). Nat. Rev. Neurosci. 7, 967–975], that systemic amphetamine greatly enhanced the performance of lever press responses that delivered a visual stimulus, whether or not that stimulus had been paired with reward. In contrast, amphetamine had no effect on the performance of responses on an inactive lever that had no stimulus consequences. These results support the notion that dopaminergic activity serves to mark or tag actions associated with stimulus change for subsequent selection (or re-selection), and they argue against the more specific suggestion that dopaminergic activity is solely related to the prediction of reward.

Introduction

Theoretical interest in the role of dopamine (DA) in learning has focused primarily on reward processing, particularly the suggestion that the burst firing of midbrain dopamine neurons acts as, or reflects, an error in the prediction of reward within the circuitry associated with predictive learning (Montague et al., 1996; Schultz, 1998). In contrast, Redgrave and Gurney (2006) have recently advanced an alternative “re-selection” hypothesis, according to which the phasic DA signal promotes the re-selection, or repetition, of those actions/movements that immediately precede unpredicted events, irrespective of their immediate reward value. In support of this view they point out that (i) the rapidity of the dopaminergic response precludes the identity of the unpredicted activating event from entering any concomitant processing of error signals, and (ii) the promiscuous nature of the dopamine response is discordant with the idea that the circuitry is focused solely on reward processing. This latter problem is especially salient from a learning-theoretic point of view. It is well established that stimuli without apparent rewarding properties support the acquisition of both Pavlovian (Bitterman et al., 1953) and instrumental (Kish, 1966) forms of conditioning. Given that sensory events reliably evoke burst firing in dopaminergic cells across both stimulus types and species (Ljungberg et al., 1992; Steinfels et al., 1983), this finding is compatible with a general role for dopamine in the acquisition of these neutral, stimulus-supported associations.

Although derived from patterns of dopamine neuronal activity recorded in the roughly 250-ms time range that constitutes their phasic activity, the hypothesis that dopamine is involved specifically in reward prediction has lately been developed into a more general view of dopamine function in the literatures dealing with both human learning (Bray and O'Doherty, 2007; Holroyd and Coles, 2002) and computational theories of adaptive behavior (Joel et al., 2002; McClure et al., 2003). Theories relating dopamine to reward have, in one form or another, been around for some time. It has long been proposed, for example, that dopamine mediates the ability of cues associated with reward to act as conditioned reinforcers. This proposal rests on two findings: such cues support the acquisition of new instrumental actions that they reinforce, and the indirect dopamine agonist amphetamine, which both releases DA from presynaptic terminals and inhibits DA reuptake (Heikkila et al., 1975; Seiden et al., 1993), substantially facilitates the acquisition and performance of these new actions (Robbins, 1975; Robbins, 1976; Robbins et al., 1989). In general accord with a role for dopamine specifically in reward prediction, the results of these experiments were interpreted as suggesting that amphetamine affects the performance of actions associated with predictors of reward and, against the re-selection hypothesis, does not affect the performance of other actions (Robbins et al., 1989).

However, the effect of amphetamine on the ability of a purely Pavlovian predictor of reward, and of a similarly treated neutral stimulus, to support the acquisition and performance of a new instrumental action has yet to be compared. Several experiments have compared the effects of amphetamine on responding for cues that served as instrumental discriminative stimuli, i.e., cues previously trained to signal the reinforcement and non-reinforcement of another instrumental action, but cues simply paired or unpaired with reward have not been assessed. The present series of experiments therefore constitutes an initial test of these two general accounts of dopamine function: we assessed the effect of d-amphetamine on the acquisition of two lever press actions, one followed by a stimulus trained in a predictive relationship with a rewarding food outcome (either grain food pellets or sucrose solution) and the other by a stimulus unpaired with any reward. To the extent that amphetamine selectively enhances the acquisition of responding for stimuli that predict reward, as opposed to similarly exposed stimuli with no such history, the results would provide evidence for a role of dopamine in reward prediction. To the extent that amphetamine affects the performance of an action delivering a stimulus that has not been paired with reward, the effect of dopamine in this task would appear to depend on the immediate sensory consequences of behavior rather than on reward prediction per se. This latter finding would be in keeping with the predictions of Redgrave and Gurney's (2006) response re-selection hypothesis.

Materials and Methods

Subjects and apparatus

Male Long-Evans rats (Harlan, USA), approximately 100 days old at the beginning of training, were used in these experiments. Rats were housed in pairs and maintained on a food deprivation schedule under which they were given, once daily, sufficient chow to maintain them at 85% of their free-feeding body weight. Training and testing were conducted in 24 operant chambers (Med Associates, East Fairfield, VT) equipped with exhaust fans that generated background noise (approx. 60 dB) and shells that prevented outside sound and light from entering the chamber. Stimuli were produced by two (3 W, 28 V) stimulus lights on the front panel, one above and to the left and one above and to the right of the centrally placed, recessed magazine. A pump fitted with a syringe delivered 0.1 ml of a 20% w/v sucrose solution to a well in the recessed magazine, and a pellet dispenser delivered single 45-mg food pellets (Bio-Serv, Frenchtown, NJ) to the magazine. Magazine entries were detected by interruptions of an infrared photobeam spanning the magazine horizontally and located over the front of the fluid and pellet delivery cups. Two retractable levers were also present in each chamber, one to the left and one to the right of the magazine. Illumination was provided by a house light (3 W, 28 V) mounted on the wall opposite the magazine. All housing and experimental procedures were in accordance with National Institutes of Health guidelines and with the requirements of the UCLA Institutional Animal Care and Use Committee.

Procedures

Pavlovian conditioning. In Experiments 1–3, Pavlovian conditioning was conducted to establish stimuli with differential histories of reward prediction. One session was conducted each day, consisting of 12 S+ trials and 12 S− trials; the order of S+ and S− trials was random and unconstrained. For half the animals the S+ was the left, flashing (2 Hz) keylight and the S− was the right, steady keylight, while for the other half these identities were reversed. Both S+ and S− were 10 s in duration. During the S+, sucrose solution was delivered with a probability of 0.2 in each second, yielding on average two sucrose deliveries per S+ (10 × 0.2) for animals in Experiments 1 and 2; pellets were delivered in an identical manner in Experiment 3. In this and subsequent studies, the S− was never paired with any reward. Sessions were divided into 320 10-s bins, and the 12 S+ and 12 S− stimulus periods were randomly distributed across these bins, with the constraint that no two stimuli occurred less than 30 s (3 bins) apart. Responding during the S− was assessed by subtracting magazine entries during the 10-s period preceding the S− (PreS−) from entries during the 10-s S− period. Responding during the S+ was likewise measured as a difference score, but the lengths of the PreS+ and S+ periods were defined trial by trial as the time from S+ onset to the first US delivery, if any delivery occurred, and as 10 s if no delivery occurred. This provided a measure of entries driven by expectancy (and by S+ onset) that was uncontaminated by changes induced by US delivery.
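The session structure and difference scores described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the control program actually used: the function names are hypothetical, the 30-s spacing rule is read as a minimum distance between stimulus onsets, and a US delivery is assumed to be possible at the end of each 1-s step of the S+ (latencies 1 to 10 s). With a per-second probability of 0.2 over 10 s, the expected number of deliveries per S+ is 10 × 0.2 = 2.

```python
import random

N_BINS = 320           # 10-s bins per session
N_PER_TYPE = 12        # S+ trials and S- trials per session
MIN_GAP_BINS = 3       # assumption: onsets at least 3 bins (30 s) apart
STIM_SECONDS = 10      # stimulus duration in seconds
P_US_PER_SECOND = 0.2  # probability of a US delivery in each second of the S+


def make_schedule(rng):
    """Return (onset_bin, trial_type) pairs sorted by onset."""
    n = 2 * N_PER_TYPE
    # Pick n distinct slots from a shortened range, then add back the
    # mandatory spacing; every valid placement is equally likely.
    slack = N_BINS - (n - 1) * (MIN_GAP_BINS - 1)
    slots = sorted(rng.sample(range(slack), n))
    onsets = [s + i * (MIN_GAP_BINS - 1) for i, s in enumerate(slots)]
    types = ["S+"] * N_PER_TYPE + ["S-"] * N_PER_TYPE
    rng.shuffle(types)  # the order of trial types is unconstrained
    return list(zip(onsets, types))


def us_latencies(rng):
    """Seconds after S+ onset at which the US is delivered (assumed 1..10)."""
    return [sec for sec in range(1, STIM_SECONDS + 1) if rng.random() < P_US_PER_SECOND]


def s_plus_window(latencies):
    """Trial-wise S+ window: time to the first US, or the full 10 s if none."""
    return min(latencies) if latencies else STIM_SECONDS


def difference_score(entry_times, onset, window):
    """Magazine entries in [onset, onset + window) minus entries in the
    equal-length period immediately before onset."""
    during = sum(onset <= t < onset + window for t in entry_times)
    before = sum(onset - window <= t < onset for t in entry_times)
    return during - before
```

For an S− trial the window is always 10 s; for an S+ trial, passing `s_plus_window(us_latencies(rng))` as `window` shortens both the S+ and PreS+ periods so that entries provoked by the US itself are excluded.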

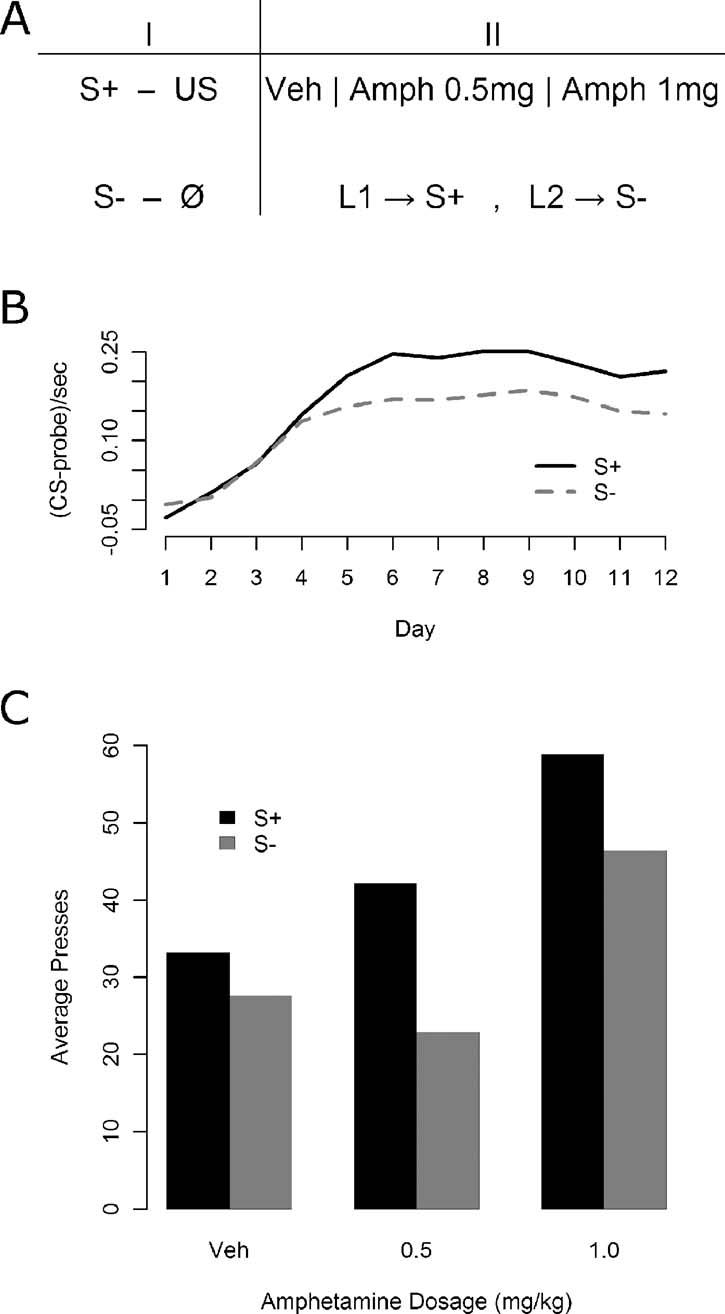

Instrumental conditioning: Experiment 1. In Experiment 1, immediately following Pavlovian conditioning, all 30 animals were given daily 15-min test sessions in which they acquired two lever press responses: one delivering the S+ and the other the S−. Each stimulus was delivered on a variable ratio 2 (VR 2) schedule of lever pressing. No nutritive outcomes were delivered during these sessions. Five minutes before each of the three sessions, each animal was given an i.p. injection of d-amphetamine (1.5 mg/kg or 0.5 mg/kg) or saline vehicle; all animals received each dose in a fully counterbalanced order. During these sessions, pressing the left lever delivered the flashing light and pressing the right lever delivered the steady light. Thus, for half of the animals the left lever delivered the S+ and the right lever the S−, whereas for the other half these contingencies were reversed. The total number of presses on each lever was recorded in each session. The design of Experiment 1 is presented in Figure 1A.
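A VR 2 schedule delivers the stimulus after a number of presses that varies from delivery to delivery but averages two. The sketch below is one plausible implementation, not the one used in the study: the class name is hypothetical, and drawing each requirement uniformly from {1, 2, 3} is an assumption, since the paper does not state how the ratio values were generated (a random-ratio schedule with a 0.5 probability of delivery per press is a common alternative).

```python
import random


class VariableRatio:
    """Hypothetical VR schedule for one lever.

    Assumption: each requirement is drawn uniformly from 1..(2*mean_ratio - 1),
    i.e. {1, 2, 3} for VR 2, which gives a mean requirement of mean_ratio.
    """

    def __init__(self, mean_ratio, rng):
        self.values = list(range(1, 2 * mean_ratio))
        self.rng = rng
        self._draw_requirement()

    def _draw_requirement(self):
        self.remaining = self.rng.choice(self.values)

    def press(self):
        """Register one press; return True if the stimulus is delivered."""
        self.remaining -= 1
        if self.remaining == 0:
            self._draw_requirement()
            return True
        return False


# Each lever keeps its own schedule: one for the S+ lever, one for the S- lever.
rng = random.Random(0)
levers = {"S+": VariableRatio(2, rng), "S-": VariableRatio(2, rng)}
```

Keeping a separate counter per lever means presses on one lever never advance the other lever's requirement.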

Figure 1. The effect of d-amphetamine on sensory and secondary reward processes. (A) The phases of Experiment 1. First, animals were given Pavlovian discrimination training in which the S+ predicted on average two outcome deliveries per 10-s presentation while the S− was never paired with an outcome. Following Pavlovian conditioning, animals were given daily training sessions in which one lever (L1) delivered the S+ and the other (L2) delivered the S−. These sessions were conducted under vehicle, a low dose of amphetamine (0.5 mg/kg), and a high dose of amphetamine (1.5 mg/kg) in a completely counterbalanced fashion. (B) Results of the Pavlovian discrimination training. Magazine entry rate was reliably higher during the S+ than during the S−, although some generalization may have occurred. (C) Results of instrumental conditioning under amphetamine. Main effects of both the stimulus delivered by the lever (S+ vs. S−) and the drug were detected, but no interaction emerged.

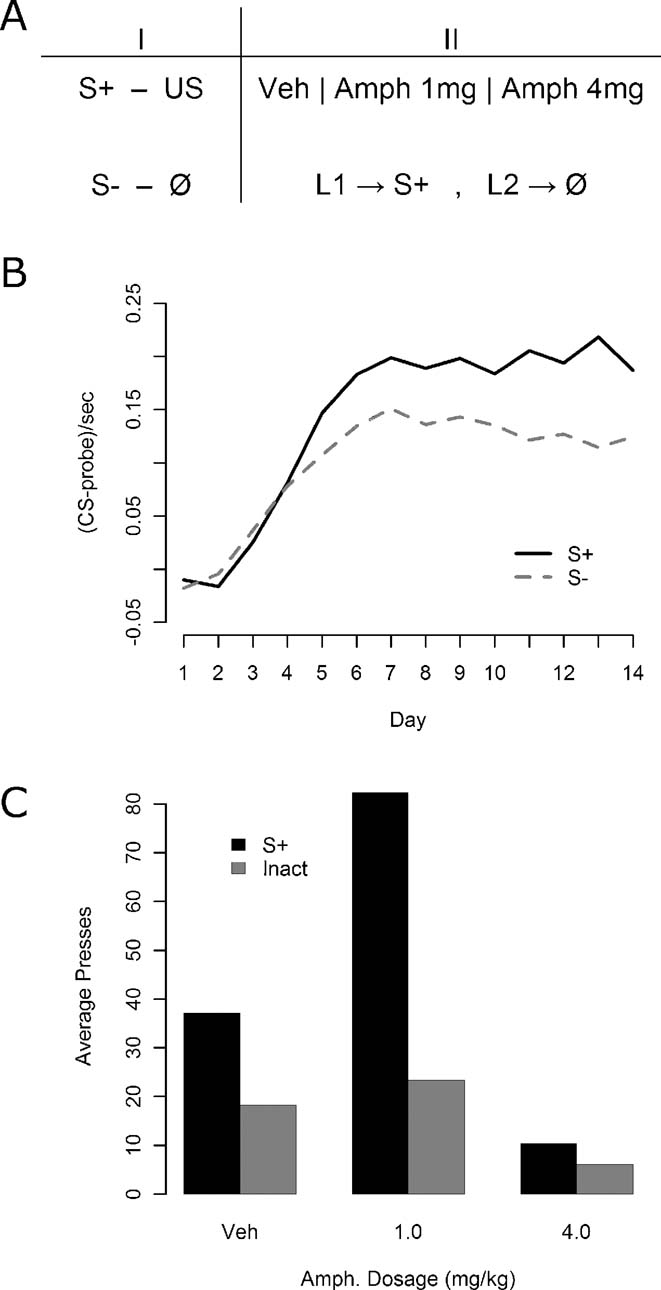

Instrumental conditioning: Experiment 2. In Experiment 2, all 24 rats were given daily 15-min test sessions in which pressing one lever delivered the S+ whereas pressing the other had no programmed stimulus consequences. Half of the animals received the S+ for presses on the left lever and the other half for presses on the right lever. Three i.p. doses were used: 4.0 mg/kg amphetamine, 1.0 mg/kg amphetamine, or saline vehicle. All rats again received each dose, in a fully counterbalanced order. Again, no outcomes were delivered during the instrumental training sessions.
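One way to realize a fully counterbalanced within-subjects dose sequence is to use every possible order of the three treatments equally often: 3! = 6 orders, i.e., 5 rats per order in Experiment 1 (n = 30) and 4 per order in Experiment 2 (n = 24). The sketch below assumes that this is what "fully counterbalanced" means here; the function name and the random mapping of rats to orders are illustrative.

```python
from itertools import permutations
import random


def counterbalanced_orders(doses, n_rats, rng):
    """Give each rat one of the k! dose orders, each order used equally often."""
    orders = list(permutations(doses))
    if n_rats % len(orders):
        raise ValueError("n_rats must be a multiple of the number of orders")
    assignment = orders * (n_rats // len(orders))
    rng.shuffle(assignment)  # random mapping of rats (list index) to orders
    return assignment


# Experiment 2 doses in mg/kg; 0.0 denotes saline vehicle.
plan = counterbalanced_orders([0.0, 1.0, 4.0], n_rats=24, rng=random.Random(7))
```

Because every order is used equally often, each dose also falls on each test day equally often (8 rats per dose per day for n = 24), so day-to-day changes in responding are not confounded with dose.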

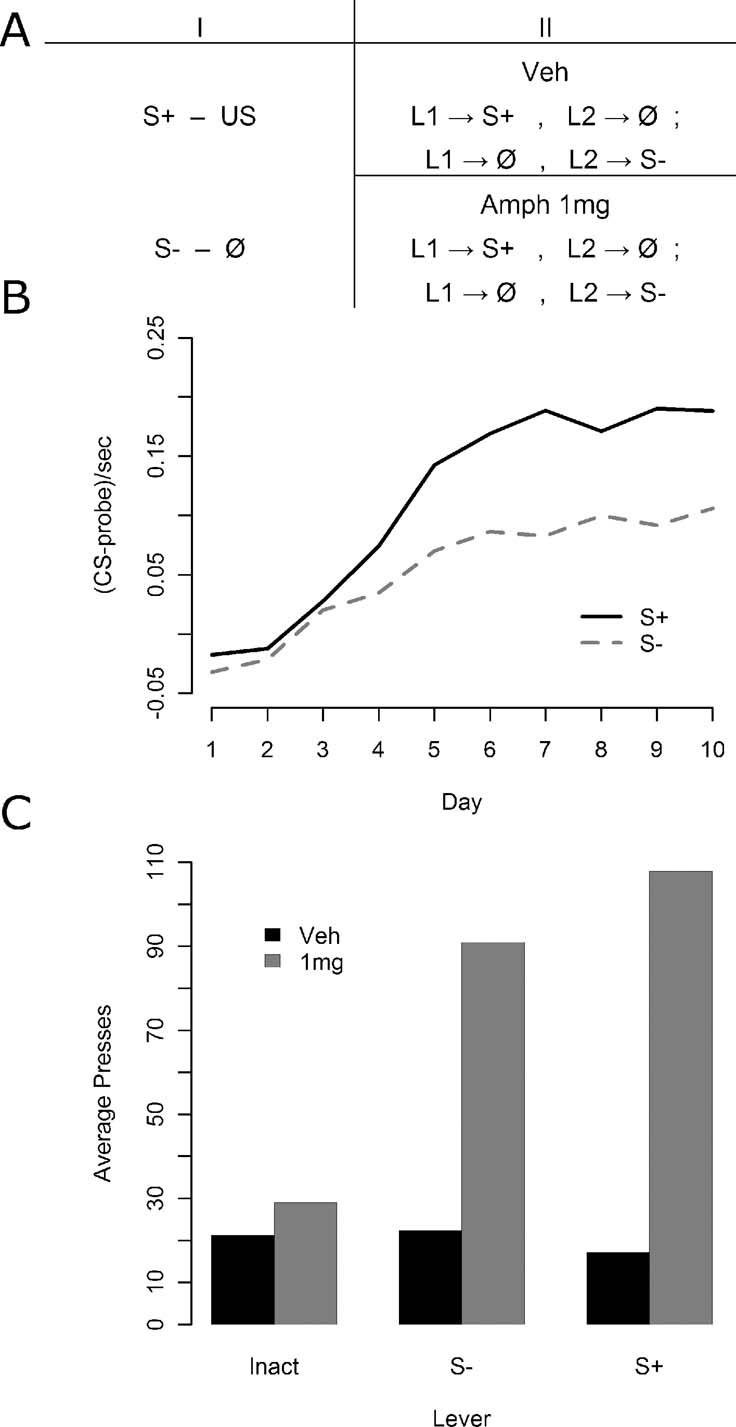

Instrumental conditioning: Experiment 3. In Experiment 3, immediately after Pavlovian conditioning, 32 animals were given 15-min test sessions in which they could press one lever for the S+ or the S− while a second lever remained inactive (see Figure 3A). Again, no outcomes were delivered during these sessions. Animals were given two sessions of such instrumental conditioning, one per day. Half of the animals were injected with saline before both sessions, whereas the other half were injected with 1 mg/kg d-amphetamine before both sessions; all injections were given approximately 5 min before the session. During each session, presses on one lever delivered either the S+ or the S− on a VR 2 schedule, whereas the other lever had no programmed consequences. The stimulus lever and the inactive lever were switched from day 1 to day 2. Half of the animals could press for the S+ on the first day and for the S− on the second, whereas for the other half this order was reversed.

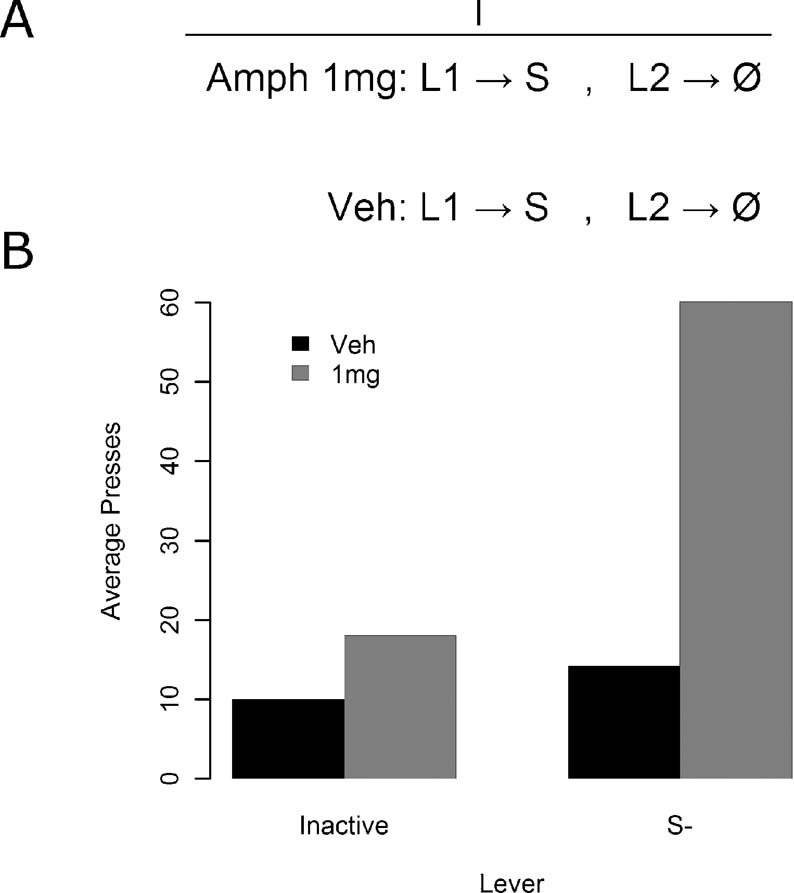

Instrumental conditioning: Experiment 4. Rats in Experiment 4 were not given Pavlovian training but were given instrumental conditioning in two daily sessions (see Figure 5A). Half of the 14 rats were injected with 1 mg/kg d-amphetamine before the first session and with saline 5 min before the second session, whereas the other half received the opposite order of drug treatments. During each session, all animals could press the left lever for delivery of the left, flashing keylight; the right lever was inactive and had no programmed consequences. No primary rewards were delivered at any time during this experiment.

Results

Experiment 1

As illustrated in Figure 1B, Pavlovian conditioning proceeded smoothly, with reliable discrimination emerging by the end of conditioning, as assessed using a one-way, within-subjects ANOVA on the final-day elevation of S+ magazine entries relative to S− entries [F(1, 29) = 19.62, MSe = 0.0047, p < 0.05]. The results for the instrumental conditioning phase are presented in Figure 1C, which shows responding on the lever leading to the S+ and on the lever leading to the S−, both under vehicle and under two increasing doses of amphetamine. The S+ appears to support more responding than the S− at all doses, and amphetamine appears to increase responding generally, at least at the higher dose. A within-subjects factorial ANOVA confirmed both main effects, with a lever effect [F(1, 29) = 13.45, MSe = 489.7, p < 0.05] and an amphetamine effect [F(2, 58) = 10.06, MSe = 923, p < 0.05], but the apparent interaction was not reliable [F(2, 58) = 2.16, MSe = 378.5, p > 0.05].
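For readers who wish to check analyses of this kind, the sketch below computes the F ratios and error mean squares (MSe) of a two-way within-subjects ANOVA, testing each effect against its own subject-by-effect interaction term. It is a textbook formulation written in plain Python for illustration, not the software used in the original analysis; the function name is hypothetical, and p-values would additionally require the F distribution (e.g., `scipy.stats.f.sf`). With 30 subjects, 2 levers, and 3 doses, the degrees of freedom come out as (1, 29) for lever and (2, 58) for drug and for the interaction, matching those reported above.

```python
def rm_anova_2way(y):
    """Two-way within-subjects (repeated-measures) ANOVA.

    y[s][a][b] is the score of subject s at level a of factor A (e.g. lever)
    and level b of factor B (e.g. drug dose). Returns a dict mapping
    "A", "B" and "AxB" to (F, df_effect, df_error, MSe), where each effect
    is tested against its own subject-by-effect interaction.
    """
    n, p, q = len(y), len(y[0]), len(y[0][0])
    S, A, B = range(n), range(p), range(q)
    grand = sum(y[s][a][b] for s in S for a in A for b in B) / (n * p * q)
    m_s = [sum(y[s][a][b] for a in A for b in B) / (p * q) for s in S]
    m_a = [sum(y[s][a][b] for s in S for b in B) / (n * q) for a in A]
    m_b = [sum(y[s][a][b] for s in S for a in A) / (n * p) for b in B]
    m_ab = [[sum(y[s][a][b] for s in S) / n for b in B] for a in A]
    m_sa = [[sum(y[s][a][b] for b in B) / q for a in A] for s in S]
    m_sb = [[sum(y[s][a][b] for a in A) / p for b in B] for s in S]

    # Sums of squares for the effects and their subject interactions.
    ss_a = n * q * sum((m_a[a] - grand) ** 2 for a in A)
    ss_b = n * p * sum((m_b[b] - grand) ** 2 for b in B)
    ss_ab = n * sum((m_ab[a][b] - m_a[a] - m_b[b] + grand) ** 2 for a in A for b in B)
    ss_sa = q * sum((m_sa[s][a] - m_s[s] - m_a[a] + grand) ** 2 for s in S for a in A)
    ss_sb = p * sum((m_sb[s][b] - m_s[s] - m_b[b] + grand) ** 2 for s in S for b in B)
    ss_sab = sum(
        (y[s][a][b] - m_ab[a][b] - m_sa[s][a] - m_sb[s][b]
         + m_a[a] + m_b[b] + m_s[s] - grand) ** 2
        for s in S for a in A for b in B
    )

    def test(ss_effect, df_effect, ss_error, df_error):
        mse = ss_error / df_error
        return (ss_effect / df_effect) / mse, df_effect, df_error, mse

    return {
        "A": test(ss_a, p - 1, ss_sa, (p - 1) * (n - 1)),
        "B": test(ss_b, q - 1, ss_sb, (q - 1) * (n - 1)),
        "AxB": test(ss_ab, (p - 1) * (q - 1), ss_sab, (p - 1) * (q - 1) * (n - 1)),
    }
```

When a factor has only two levels, its F equals the square of a paired t statistic computed on each subject's difference between those levels, which is why the one-way, two-level within-subjects comparisons reported in this paper are equivalent to paired t-tests.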

All component 2 × 2 interactions were assessed to examine further the apparent interaction between lever and drug state, using within-subjects factorial ANOVAs with a Bonferroni correction (alpha = 0.0167). When comparing the 0 mg/kg versus the 0.5 mg/kg levels of drug, neither an effect of drug dose (F < 1; MSe = 570.8) nor an interaction [F(1, 29) = 4.91, MSe = 331.4, p > 0.0167] was detected, but an effect of lever was present [F(1, 29) = 12.76, MSe = 333.0, p < 0.0167]. The 0.5 mg/kg versus 1.5 mg/kg comparison again yielded no interaction [F(1, 29) = 1.02, MSe = 336.1, p > 0.0167] but produced reliable effects of both drug [F(1, 29) = 11.43, MSe = 1059, p < 0.0167] and lever [F(1, 29) = 10.99, MSe = 688.7, p < 0.0167]. Finally, the comparison of 0 and 1.5 mg/kg produced no reliable interaction [F(1, 29) = 1.02, MSe = 467.9, p > 0.0167], but the effects of both drug dose [F(1, 29) = 13.64, MSe = 1138, p < 0.0167] and lever [F(1, 29) = 6.47, MSe = 336.2, p < 0.0167] were reliable.
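The corrected criterion follows from dividing the familywise alpha of 0.05 equally among the m = 3 component comparisons; the same rule with m = 4 gives the 0.0125 criterion used for the four contrasts in Experiment 3:

$$
\alpha_{\text{per comparison}} = \frac{\alpha_{\text{family}}}{m} = \frac{0.05}{3} \approx 0.0167,
\qquad
\frac{0.05}{4} = 0.0125
$$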

The consistency of the lever effect, in the absence of any interaction, suggests that at all drug doses the animals preferred responding on the S+ lever to responding on the S− lever. The pattern of drug effects differs somewhat from that suggested by the figure: the higher dose elevated responding, whereas the lower dose failed to differ from vehicle. Because the lower dose produced no elevation of responding relative to vehicle, and the higher dose elevated responding relative to both vehicle and the lower dose, with no interaction with lever in either case, the only additional conclusion provided by interrogating the component interactions is that the 0.5 mg/kg dose was insufficient to produce any reliable effect on behavior.

These results suggest that amphetamine may enhance responding not only for the S+ but also for the S−. They should, however, be treated with caution for two reasons. First, Robbins (1976), using a between-subjects design with broadly similar logic but differing in a few (potentially crucial) procedural details, found that pipradrol, a psychostimulant similar to amphetamine, had no effect on responding for an S−. Second, the direct comparison of S+ and S− in this study provided no control for any effect of general arousal on the rats' performance, so drug-induced arousal could explain the effect observed with both the S+ and the S−. To begin to examine this possibility, Experiment 2 compared the effect of amphetamine on responding for an S+ with its effect on performance on a control lever that produced no stimulus consequences. The design of this experiment is illustrated in Figure 2A. If amphetamine affects responding for cues specifically, it should elevate responding on the S+ lever, as in Experiment 1, but should not affect responding on a lever that produces no stimulus consequences. If, however, general arousal was the source of the effect in Experiment 1, then an increase in responding on both levers should be anticipated.

Figure 2. The effect of amphetamine on responding for the S+ and on an inactive lever in Experiment 2. (A) Experiment 2 design. All rats were given Pavlovian discrimination training, with the S+ predicting reward and the S− never paired with reward. In the instrumental phase, one lever delivered the S+ and the other, an inactive control lever, produced no stimulus consequences. All animals were tested under vehicle, 1 mg/kg amphetamine, and 4 mg/kg amphetamine. (B) Results of Pavlovian conditioning. The S+ prompted reliably more magazine entries than the S−, although some generalization may again have occurred. (C) Effect of various doses of amphetamine on instrumental responding for the S+ and on the inactive lever.

Experiment 2

Given the results of Experiment 1, Experiment 2 assessed the effects of amphetamine against an inactive lever, the standard control condition employed in conditioned reinforcement experiments (Robbins et al., 1989). Performance on an inactive lever has previously been found to be unaffected by amphetamine administration, ruling out general motor effects of amphetamine as the source of its enhancement, and we felt it important to replicate this finding before comparing the inactive lever with the S− control in Experiment 3. As illustrated in Figure 2B, Pavlovian conditioning again proceeded smoothly, with reliable discrimination emerging by the end of conditioning, as assessed by the final-day elevation of S+ magazine entries relative to S− entries [F(1, 47) = 22.99, MSe = 0.0041, p < 0.05]. The results for the instrumental conditioning phase are presented in Figure 2C. The 4 mg/kg dose strongly suppressed performance, and this effect persisted for at least an hour after each session. Because this dose suppressed responding uniformly, these data were excluded from further analysis so as to concentrate on the effects of the lower dose. More importantly, there appeared to be a strong overall advantage for responding on the S+ lever, and the moderate (1 mg/kg) dose of amphetamine appeared to enhance actions leading to the S+ selectively, without affecting performance on the inactive control lever.

A factorial ANOVA was conducted with within-subjects factors of drug and lever. In this analysis, the effects of lever [F(1, 23) = 47.83, MSe = 780, p < 0.05] and drug [F(1, 23) = 12.06, MSe = 1207, p < 0.05] were significant. Importantly, there was also a significant lever by drug interaction [F(1, 23) = 15.41, MSe = 654.4, p < 0.05]. Simple effects were therefore analyzed to determine whether responding on the inactive lever was affected by amphetamine. One-way within-subjects ANOVAs were used both to compare inactive lever responding at 0 and 1 mg/kg, where no significant elevation by amphetamine was detected (F < 1, MSe = 261.1), and to examine the lever effect under vehicle, which was reliable [F(1, 23) = 15.66, MSe = 274.2, p < 0.05]. These results, together with the reliable omnibus effects, confirm the pattern suggested visually in Figure 2C.

In this experiment, responding on an inactive lever that was followed by no stimulus consequences was not affected by amphetamine. This finding suggests that the specific effect of amphetamine on responding for the S− in Experiment 1 was mediated by an increase in instrumental responding directed at sensory reinforcement rather than by the general arousing effects of the drug. Two problems remain, however. First, the decision to include a 4 mg/kg dose, which had a general suppressive effect on behavior, rendered the statistical analysis far less powerful than was hoped. Second, the effect of amphetamine on the S+ lever when contrasted with the inactive control lever appeared far more powerful than its effect on the S+ lever when contrasted with the S− control lever in the previous experiment. This difference may, however, reflect the fact that, in choice situations, reduced performance of one action provides the opportunity for increased responding on another; indeed, the overall operant rate was comparable across the two studies.

Experiment 3

Experiment 3 was an attempt to replicate the findings of Experiment 2 in a design more sensitive to the effects of amphetamine on instrumental responses for the S−. The design is illustrated in Figure 3A. Pavlovian conditioning, illustrated in Figure 3B, was similar to that observed in the previous experiments, with reliable discrimination emerging by the end of conditioning, as assessed by the final-day elevation of S+ responding relative to S− responding [F(1, 31) = 23.92, MSe = 0.0045, p < 0.05]. The results for the instrumental conditioning phase, illustrated in Figure 3C, showed once again that amphetamine enhanced responding for the S+ and for the S− relative to the inactive control lever.

Figure 3. The effect of amphetamine on sensory and secondary reward in Experiment 3. (A) Experiment 3 again began with Pavlovian discrimination training. Instrumental conditioning then proceeded, this time in two groups: one that received vehicle injections before every session and one that received 1 mg/kg d-amphetamine before every session. Within each group, all animals experienced two kinds of session: one in which one lever delivered the S+ and the other nothing, and another in which the former lever delivered nothing and the latter delivered the S−. (B) Pavlovian conditioning data. A reliable discrimination was observed by the end of training. (C) Instrumental conditioning data. The vehicle group showed little preference for one lever over the other, perhaps because the shifting location of the inactive lever made it worth checking at the beginning of sessions after the first day of training. The drug group, however, showed a clear preference for the active levers, although this preference did not discriminate between the levers delivering the S+ and the S−.

A mixed ANOVA was conducted with drug as a between-subjects factor and lever as a within-subjects factor. The apparent overall effects of lever [F(2, 60) = 10.66, MSe = 1652, p < 0.05] and of drug [F(1, 30) = 19.47, MSe = 3745, p < 0.05] were both reliable, as was the lever by drug interaction [F(2, 60) = 10.49, MSe = 1652, p < 0.05]. Four contrasts with a Bonferroni correction (alpha = 0.0125) were used to identify the source of the interaction. First, to test for evidence of secondary reward above and beyond sensory reward, lever and drug effects were analyzed for the S+ and S− levers alone (excluding the inactive lever) using a mixed ANOVA; neither a reliable interaction nor a lever effect was observed (Fs < 1, MSe = 2109), although a clear effect of drug was detected [F(1, 30) = 24.40, MSe = 4165, p < 0.0125]. Second, the drug effect on the inactive lever was examined via a one-way, between-subjects ANOVA and found not to be reliable [F(1, 30) = 5.14, MSe = 775.1, p > 0.0125]. Third, the lever effect in the vehicle group was examined in a one-way, within-subjects ANOVA and found not to be reliable (F < 1, MSe = 190.8). Finally, a one-way, within-subjects ANOVA showed that the lever effect in the 1 mg/kg amphetamine group was reliable [F(2, 30) = 11.18, MSe = 3113, p < 0.0125]. These results therefore suggest that the overall interaction was carried solely by the failure of amphetamine to affect responding on the inactive lever, whereas it clearly facilitated responding on the S− lever as well as on the S+ lever.

An unfortunate feature of the mean response rates during the instrumental training phase in Experiment 3 was the lack of a difference in performance between S+ and S− under vehicle. Our conclusions rely on the argument that Pavlovian conditioning in the current experiment conferred a differential predictive status on the S+ relative to the S−. If it did not, the failure to find a general preference for the S+ over the S− in instrumental performance could be explained by a simple failure to transfer the Pavlovian discrimination from the training phase to the instrumental phase. To test this possibility, we examined the correlation between S+ versus S− discrimination during Pavlovian conditioning and the degree of preference for the S+ or S− lever relative to the inactive lever during instrumental conditioning. Because the second session of instrumental training reversed the active and inactive levers, the correlational analysis was confined to the first session, providing a measure of relative lever preference not confounded by any influence of earlier instrumental learning. Although animals did not directly experience a contrast between levers leading to the S+ and the S− within that session, a between-groups comparison allowed us to assess the relative reward values of the two stimuli. The correlation between the degree of Pavlovian discrimination expressed in magazine entry performance and the relative preference for the cued lever over the inactive lever was therefore examined separately in animals able to press for the S+ and in animals able to press for the S−.

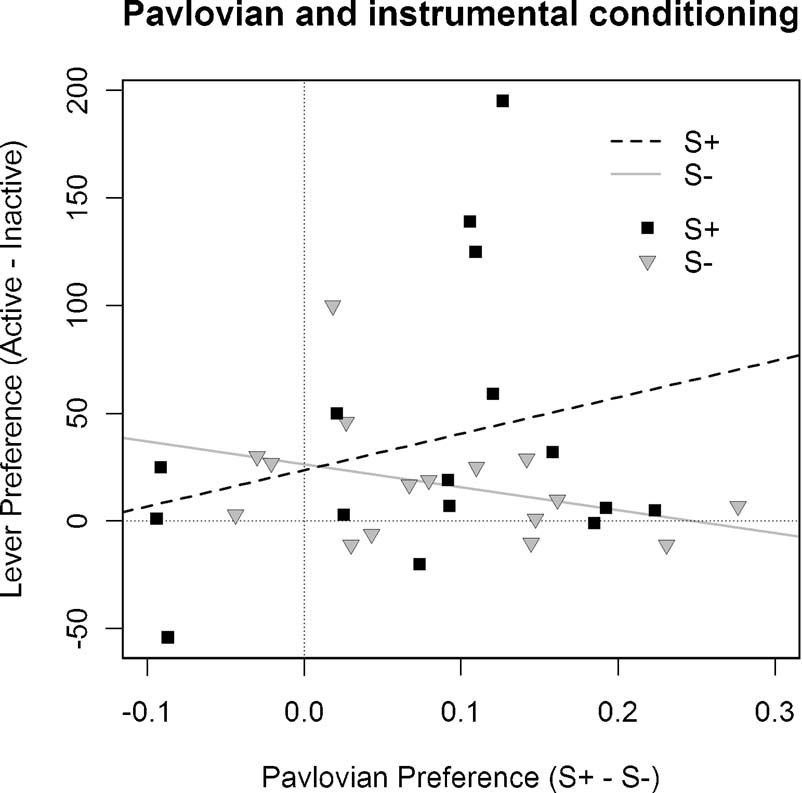

As illustrated in Figure 4, in rats pressing for the S+ the degree of preference for the active lever relative to the inactive lever was positively correlated with the degree of expressed Pavlovian discrimination (r = 0.26). In contrast, rats pressing for the S− showed a negative correlation between the degree of preference for the active lever relative to the inactive lever and the magnitude of Pavlovian discrimination (r = −0.35). The difference between these correlations was evaluated using a randomization test (Edgington, 1995), in which a frequency distribution of the difference between correlations was constructed by re-sampling the subjects without replacement and assigning them to groups at random. The probability of obtaining a difference between the correlations in Groups S+ and S− at least as large as that observed in Experiment 3, had the two groups not differed, was p = 0.038, allowing us to reject the null hypothesis. Contrary to the argument developed above, therefore, this analysis suggests that the different treatments given during Pavlovian conditioning to the stimuli used in Groups S+ and S− were a significant determinant of their effects on subsequent instrumental performance.
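The randomization test described above can be sketched as follows. This is an illustrative reconstruction under assumptions, not the authors' code: each rat is represented as a hypothetical (Pavlovian discrimination, lever preference) pair, the test statistic is r(Group S+) − r(Group S−), the p-value is taken as two-sided, and a fixed number of random re-deals is drawn rather than enumerating every possible split. All function names are hypothetical.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def group_r(group):
    """Correlation within a list of (pavlovian_score, lever_preference) pairs."""
    xs, ys = zip(*group)
    return pearson_r(xs, ys)


def correlation_difference_test(group1, group2, n_perm=10000, seed=0):
    """Randomization test for the difference r(group1) - r(group2).

    Subjects are pooled and repeatedly re-dealt, without replacement, into
    two groups of the original sizes. Returns (observed difference, p), where
    p is the two-sided proportion of re-deals at least as extreme.
    """
    observed = group_r(group1) - group_r(group2)
    pooled = list(group1) + list(group2)
    k = len(group1)
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = group_r(pooled[:k]) - group_r(pooled[k:])
        if abs(diff) >= abs(observed) - 1e-12:  # tolerance for float ties
            extreme += 1
    # Count the observed labelling as one of the re-deals so p is never 0.
    return observed, (extreme + 1) / (n_perm + 1)
```

Shuffling the pooled subjects and splitting them at the original group size is exactly re-sampling without replacement; a one-sided version would compare `diff >= observed` instead of absolute values.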

Figure 4. Correlation between Pavlovian discrimination and instrumental performance in Experiment 3. The difference in magazine entries during the S+ and S− stimuli in Pavlovian conditioning was compared with the difference between S+ and inactive lever responding in Group S+, and with the difference between S− and inactive lever responding in Group S−. The figure shows these values for each subject in the two groups, together with the linear regression lines: for Group S+, r = 0.26; for Group S−, r = −0.35.

Together, these results indicate that although amphetamine was again ineffective at increasing responding on an inactive lever, it increased responding for the S+ and for the S− to a similar degree. The failure, under vehicle, to detect the standard elevation of responding on the S+ and S− levers relative to the inactive lever may be a consequence of the reversal employed on the second day of testing: the formerly active lever became the inactive lever, which may have produced spurious presses on the inactive lever on that second day. More troubling, perhaps, was the failure of this experiment to produce evidence of any clear preference for the S+ in instrumental performance. There are, however, two potential reasons for the failure to detect this effect. First, considerable overtraining on the Pavlovian discrimination may be required for the S+ to act as a secondary reward relative to the S−. Indeed, Pavlovian and instrumental incentive processes are known to be largely separate (Corbit et al., 2001), so it would not be surprising if what appears to be discrimination during Pavlovian conditioning actually supports generalization with respect to secondary rewarding stimuli. By this account, both the S− here and the S+ would be acting primarily as apparent signals of reward. This would also reconcile these data with past studies showing no effect of psychostimulants on familiar, but unreinforced, Pavlovian stimuli. However, given the results of the following experiment, this explanation seems less than persuasive. Second, and more likely, under amphetamine the inactive control lever contrasts poorly with reward of either type on the active lever and is so unappealing a target for behavior that the animals simply direct all of their responding towards the active lever. Because this experiment included no direct choice test between the S+ and S− levers, it precluded the most sensitive measure of the animals' preference for one source of reward over the other.

Experiment 4

The previous experiments strongly suggest that responding for an S− is affected by amphetamine, but they all leave open the possibility that the S−, in the instrumental phase, acts as a source of reward via generalization of the prediction from the S+. Experiment 4 therefore provided a direct test of amphetamine's ability to affect lever presses that delivered an S−, using a design formally identical to the standard test of drug effects on an S+ but omitting Pavlovian discrimination training with the to-be-delivered stimulus (see Figure 5A). Over 2 days, all animals were given the opportunity to press one lever for a sensory stimulus and another lever with no programmed consequences, and all animals experienced 1 day under 1 mg/kg amphetamine and 1 day under vehicle. If amphetamine potentiates lever pressing that delivers an S−, pressing on that lever should be elevated under amphetamine relative to vehicle. The inactive lever provided a control for the general excitatory effects of amphetamine and, as in Experiments 2 and 3, was not expected to be affected by the drug treatment.

Figure 5. The effect of amphetamine on sensory reward in Experiment 4. (A) Experiment 4 design. All animals were given sessions both under vehicle and under 1 mg/kg d-amphetamine. In these sessions they could press one lever for stimulus delivery, while the other lever had no stimulus consequences. (B) Responding on the S− lever was elevated under amphetamine; no other conditions differed reliably.

Results for the instrumental conditioning phase are presented in Figure 5B. Amphetamine appeared to selectively enhance responding on the lever leading to the S− relative to the lever leading to no consequences. To examine this effect, a two-way, within-subjects ANOVA was conducted with lever identity and drug dosage as factors. Both the lever [F(1, 13) = 9.35, MSe = 492.9, p < 0.05] and drug [F(1, 13) = 8.15, MSe = 831.7, p < 0.05] effects were reliable but, more importantly, so was the lever by drug interaction [F(1, 13) = 7.63, MSe = 356.0, p < 0.05]. The source of the interaction was examined via four contrasts, using the Bonferroni–Holm procedure for multiple comparisons to adjust the alpha value for each successive contrast. As assessed via one-way, within-subjects ANOVAs, responding under amphetamine on the S− lever was greater than responding on the inactive lever under vehicle [F(1, 13) = 9.65, MSe = 1169.1, p < 0.0125], greater than responding on the inactive lever under amphetamine [F(1, 13) = 9.06, MSe = 794.7, p < 0.0167], and greater than responding on the S− lever under vehicle [F(1, 13) = 8.42, MSe = 1072.7, p < 0.025]. Finally, a one-way, within-subjects ANOVA found responding on the inactive lever at both drug doses and on the S− lever under vehicle to be comparable [F(2, 26) = 2.11, MSe = 108.29, p > 0.05]. Amphetamine therefore reliably enhanced responding on the S− lever without reliably affecting responding in any other condition.
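The Bonferroni–Holm procedure used above tests the contrasts in order of increasing p value, comparing the k-th smallest p value against α/(m − k + 1). With m = 4 contrasts and α = 0.05, this gives the successive criteria of 0.0125, 0.0167, 0.025, and 0.05 reported above. The following is a minimal Python sketch of that step-down rule; the function names are hypothetical, and any p values passed to it in the usage below are illustrative, not data from this experiment.

```python
def holm_thresholds(n_tests: int, alpha: float = 0.05) -> list[float]:
    """Per-step criteria for the Holm step-down procedure.

    The k-th smallest p value (k = 0, 1, ..., counting from zero) is compared
    against alpha / (n_tests - k)."""
    return [alpha / (n_tests - k) for k in range(n_tests)]


def holm_reject(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Return a reject decision for each p value, in the original order."""
    order = sorted(range(len(p_values)), key=lambda i: p_values[i])
    thresholds = holm_thresholds(len(p_values), alpha)
    reject = [False] * len(p_values)
    for step, idx in enumerate(order):
        if p_values[idx] <= thresholds[step]:
            reject[idx] = True
        else:
            # Step-down rule: stop at the first non-significant test;
            # every remaining (larger) p value is retained as well.
            break
    return reject


# Criteria for four contrasts at alpha = 0.05
print(holm_thresholds(4))
# Illustrative (made-up) p values
print(holm_reject([0.010, 0.015, 0.020, 0.30]))
```

The step-down structure is what distinguishes Holm from a plain Bonferroni correction: only the smallest p value faces the full α/m criterion, and each later contrast is tested against a progressively less stringent one.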

This experiment clearly demonstrates that amphetamine can strongly potentiate responding that is supported solely by a stimulus change contingent on lever pressing, i.e., responding for a sensory event never paired with reward. Although a preference for the S− was not apparent without amphetamine in this experiment, the fragility of this effect is comparable to that of responding for the S+ in the absence of amphetamine. Under amphetamine, a sensory stimulus with no history of Pavlovian conditioning of any kind supported vigorous responding on the lever that delivered it, compared with a lever that had no stimulus consequences.

Discussion

These results demonstrate that amphetamine can augment the performance of actions that deliver a stimulus previously paired with reward and of actions that deliver a stimulus never paired with reward. Both the S− from Pavlovian training and a similar novel stimulus supported vigorous responding for sensory events unpaired with reward, compared with responding on an inactive lever, and this responding was consistently elevated by amphetamine. Indeed, direct comparison of the elevation of responding for a putative conditioned reinforcer (S+) with that for a sensory event unpaired with reward (S−) seemed to favor the argument that only the impact of the sensory event itself (the component common to all stimulus delivery conditions in these experiments) was affected by amphetamine, in that no interaction with stimulus identity was ever detected. Instead, all earned cues were similarly affected by amphetamine. Given that these cues shared the potential for sensory reinforcement but not for conditioned reinforcement, the current experiments do not support an effect of amphetamine solely on conditioned reinforcement. This series of findings, combined with the inability to separate potential sources of sensory reinforcement from the stimuli used to examine conditioned reinforcement, makes it imperative that demonstrations of drug (and other) effects on conditioned reinforcement employ sensory controls, i.e., stimuli that have never been paired with any primary reward.

These controls, however, are consistently neglected in contemporary examinations of the effects of stimulant drugs of abuse on nominal conditioned reinforcement processes (Olausson et al., 2004; Robbins et al., 1983). The most extensive body of work examining drug effects on conditioned reinforcement has been produced by Robbins (Robbins, 1975; Robbins, 1976; Robbins and Koob, 1978; Robbins et al., 1983; Robbins et al., 1989). The vast majority of these studies employed the inactive control lever paradigm, but three stand out for their use of an active, sensory reinforcer on the control lever. Some feature(s) of the broader procedures employed, however, appear to have prevented Robbins from detecting the considerable amphetamine effect on actions delivering an unpaired sensory event reported here. His most closely analogous design (Robbins and Koob, 1978), for example, differed from the present studies in several important ways. In that study, animals first learned to perform an instrumental action, a panel push response, in order to receive (presumably rewarding) brain stimulation. The S+ and S− cues were then established by allowing panel pushing in a discrimination paradigm in which pushing during the S+ was reinforced but pushing during the S− was not; indeed, pushing during the S− was punished by delaying later opportunities for reinforcement. Following discrimination training, the cues were presented contingent on presses on two novel levers. Pipradrol enhanced pressing for the S+ but did not affect responding for the S−. It is not particularly surprising that pipradrol had no effect on S− responding, given that instrumental responses during the S− were actively punished in the discrimination training phase. It is quite reasonable that the animals should later have avoided producing, through instrumental behavior, a cue that was in effect a conditioned punisher.
Indeed, if, as has been suggested, amphetamine's effects on reward and punishment are symmetrical (Killcross et al., 1997), punished S− responding could well have been mildly decreased under amphetamine, thereby offsetting any concurrent increase due to sensory reward, although such a decrease might have been difficult to detect in the Robbins and Koob (1978) design because responding for the S− was already very low without amphetamine.

Another result (Robbins, 1976) is more difficult to reconcile with the present studies. In that study, Robbins used water reward for thirsty rats and eliminated explicit punishment for responding during the S− in the discrimination training phase. Rats were first trained to panel push to gain access to water and were then given discrimination training in which pushes during the S+ led to water but pushes during the S− did not. Finally, half of the animals were allowed to press one lever for delivery of the S+ and another with no programmed consequences, and the other half were allowed to press one lever for delivery of the S− and another with no programmed consequences. This phase is therefore similar to that used in Experiment 2, but with a between-subjects design. Again, however, Robbins detected no elevation in responding on the lever that delivered the S−, and no potentiation of that responding by amphetamine. It is important to note, though, that this design and the Robbins and Koob (1978) design share the use of instrumental discrimination training to establish the S+ and S− stimuli. If the S− has a history of inhibiting instrumental responses, it is quite likely that this inhibition transfers to the acquisition of new responses that produce the S−. The result of Killcross et al. (1997) lends additional support to this hypothesis. They examined the effects of amphetamine (amongst other drugs) on both conditioned punishment and conditioned reinforcement using the appropriate sensory reward control, and yet detected no effect of amphetamine on that control. Once again, however, the S+ and S− were established using instrumental discrimination training.

There is, in fact, considerable evidence that the predictive status of a stimulus established by instrumental discriminative training (i.e., training in which the stimulus signals when a particular response will be followed by the US; CS: R-US) is quite different from that established by a simple Pavlovian pairing of CS and US. For example, although it is well documented that a discriminative stimulus can block associations between another discriminative stimulus and the US (Neely and Wagner, 1974), Holman and Mackintosh (1981) found that a CS established through simple pairing with US delivery exerted no such effect. Furthermore, Colwill and Rescorla (1988) reported that instrumental discriminative stimuli transfer their control to other instrumental actions whereas simple CSs do not [see also (Azrin and Hake, 1969; Konorski, 1948)]. The difficulty Robbins' studies have had in detecting effects of amphetamine on responding for a sensory event unpaired with reward is, therefore, very likely due to the difference in predictive status between a stimulus trained to discriminate periods when an instrumental response is rewarded from periods when it is not, and a CS that has merely been presented unpaired with a food US, as in the current study. Here, simple Pavlovian training was used to establish both the S+ and its S− control, and only then was instrumental training given, in which separate levers produced the two stimuli. This approach clearly provided sufficient sensitivity to detect unequivocal effects of amphetamine on both the S+ and the S−.

Finally, the current results appear to provide clear support for Redgrave and Gurney's (2006) action re-selection hypothesis of dopamine function. Throughout this series, amphetamine generally elevated responding on the lever that delivered a stimulus paired with reward, i.e., the S+. This could be due to amphetamine enhancing the dopaminergic response elicited by the S+ on its presentation, a response acquired through the S+'s status as a predictor of the food US during the conditioning phase. Several theories predict this kind of result, not least recent accounts based on the actor–critic version of reinforcement learning theory (Sutton and Barto, 1998). The critic uses a temporal difference prediction error signal to update successive predictions of future reward associated with a state of the environment, whereas the actor uses the same error signal to modify stimulus-response associations in the form of a policy, so that actions associated with greater long-term reward are subsequently chosen more frequently. In the current case, actions that produce the S+ should be preferred because they deliver a stimulus predictive of future primary reward. More recently, drawing on evidence that dopamine neurons provide a reward prediction error signal (Mirenowicz and Schultz, 1994), several theorists have suggested that this signal could be used both to set values and to improve action selection (Montague et al., 1996; Schultz et al., 1997). In this case, the better predictor of reward should produce a larger dopaminergic response and so signal a higher value, and actions associated with that stimulus should be preferred over other actions. Nevertheless, although this dopamine-reinforcement learning view has produced an elegant computational approach to the neural bases of adaptive behavior, it does not anticipate the effect of amphetamine on the selection of actions that deliver the S− in the current experiments.
Here we found substantial performance of an action that delivered a stimulus that had been presented, unpaired with the US, throughout the preceding Pavlovian conditioning phase. Furthermore, performance of the action delivering the unpaired stimulus was substantially elevated by amphetamine even when there were no grounds for generalization between the S− and an S+ (as in Experiment 4).
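The reasoning about the actor–critic account can be made concrete with a toy simulation. The Python sketch below is an illustrative assumption, not a model fitted to these data: a critic first learns cue values in a Pavlovian phase (S+ followed by food, S− by nothing); then, in a lever-pressing phase in which each lever yields only its cue, the actor's preferences are updated with the same temporal difference error. The parameter values (GAMMA, ALPHA, BETA), trial counts, and the decision to hold cue values fixed during the test are all assumptions, and each update is weighted by choice probability (the expected update) so that the sketch is deterministic.

```python
import math

GAMMA = 0.9  # discount factor (assumed value)
ALPHA = 0.1  # critic learning rate (assumed value)
BETA = 0.5   # actor learning rate (assumed value)


def softmax(prefs: dict[str, float]) -> dict[str, float]:
    """Choice probabilities from action preferences."""
    exps = {a: math.exp(p) for a, p in prefs.items()}
    total = sum(exps.values())
    return {a: e / total for a, e in exps.items()}


def pavlovian_phase(n_trials: int = 200) -> dict[str, float]:
    """Critic learns cue values: S+ is followed by food (r = 1), S- by nothing (r = 0)."""
    V = {"S+": 0.0, "S-": 0.0}
    for _ in range(n_trials):
        for cue, food in (("S+", 1.0), ("S-", 0.0)):
            delta = food - V[cue]  # food ends the trial, so there is no successor value
            V[cue] += ALPHA * delta
    return V


def instrumental_phase(V_cue: dict[str, float], n_trials: int = 300) -> dict[str, float]:
    """Three levers; each press yields only its cue (no food), as in a conditioned
    reinforcement test. Cue values are held fixed here (simplifying assumption)."""
    outcome = {"lever_S+": "S+", "lever_S-": "S-", "inactive": None}
    prefs = {a: 0.0 for a in outcome}  # actor: action preferences
    v_start = 0.0                      # critic: value of the pre-choice state
    for _ in range(n_trials):
        pi = softmax(prefs)
        # TD error for each action: discounted value of the cue it produces minus v_start
        deltas = {
            a: GAMMA * (V_cue[c] if c is not None else 0.0) - v_start
            for a, c in outcome.items()
        }
        # Expected (probability-weighted) actor and critic updates
        for a in prefs:
            prefs[a] += BETA * pi[a] * deltas[a]
        v_start += ALPHA * sum(pi[a] * deltas[a] for a in prefs)
    return prefs


V = pavlovian_phase()
print(V)
print(instrumental_phase(V))
```

Because the S− carries no predicted reward, the TD error it generates is never positive, so in this sketch the S− lever ends with exactly the same preference as the inactive lever while the S+ lever gains preference. Sustained, amphetamine-sensitive S− responding of the kind reported here is not what such a learner produces.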

As it stands, it is possible to maintain the function of dopamine as an error signal if its application is limited specifically to establishing the predictive status of Pavlovian CSs with respect to the US, while accepting that the dopamine signal could function differently to control action selection. In fact, given the current state of knowledge, there seems little reason to deny that dopamine firing could serve several independent functions. The alternative is to propose that salient sensory events unpaired with reward nevertheless have substantial reward value. This may well be true, and it has been suggested many times before (Berlyne, 1960), but if so it raises interesting and important questions regarding the definition of “reward” generally. Although unpredicted sensory stimuli like those used in the current experiments do produce a strong dopaminergic response, it seems somewhat circular to base their status as rewards either on that effect or on their ability to support instrumental performance alone; establishing some other reward-related property of sensory events will be required to make the case. Previously, we have argued that reward value is determined solely by the emotional response elicited in conjunction with a sensory stimulus, whether this is elicited on contact with a biologically “neutral” event or via the primary motivational and affective processes engaged by biologically significant events (Balleine, 2001; Balleine, 2005; Ostlund et al., 2008). If this is true, then the ability of biologically neutral stimuli to engage instrumental actions should be open to modulation by treatments that influence emotional processing, a hypothesis we have yet to test.
For the present, at least with regard to its role in instrumental conditioning, the suggestion that dopamine marks actions for “re-selection” has the virtue of accounting for the robust lever pressing maintained by the S− and the potentiation of that performance by amphetamine, effects that are not anticipated by alternative views of dopamine function.
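For comparison with an actor–critic learner, a toy version of the re-selection idea can be sketched by changing only the teaching signal: a phasic signal follows any stimulus change produced by an action, regardless of that stimulus's reward history, and reinforces the action that preceded it. This Python sketch is a deliberately simplified illustration under stated assumptions, not Redgrave and Gurney's (2006) model. The signal values, the learning rate BETA, and the `gain` parameter (a hypothetical stand-in for amphetamine-enhanced phasic signalling) are all assumptions, and updates are probability-weighted so that the result is deterministic.

```python
import math

BETA = 0.5  # learning rate for action preferences (assumed value)


def softmax(prefs: dict[str, float]) -> dict[str, float]:
    """Choice probabilities from action preferences."""
    exps = {a: math.exp(p) for a, p in prefs.items()}
    total = sum(exps.values())
    return {a: e / total for a, e in exps.items()}


def reselection_phase(gain: float = 1.0, n_trials: int = 200) -> dict[str, float]:
    """Toy 're-selection' rule: the teaching signal depends only on whether the
    action produced a stimulus change, not on the stimulus's reward history.
    `gain` is a hypothetical multiplier on the phasic signal."""
    # Hypothetical signal: 1 for any visual-stimulus onset, 0 when nothing changes
    sensory_signal = {"lever_S+": 1.0, "lever_S-": 1.0, "inactive": 0.0}
    prefs = {a: 0.0 for a in sensory_signal}
    for _ in range(n_trials):
        pi = softmax(prefs)  # probabilities computed before any update this trial
        for a in prefs:
            prefs[a] += BETA * pi[a] * gain * sensory_signal[a]
    return prefs


vehicle = reselection_phase(gain=1.0)
drug = reselection_phase(gain=2.0)
print(vehicle)
print(drug)
```

In this sketch both stimulus levers gain preference equally, the inactive lever stays at zero, and raising `gain` increases preference for the stimulus levers only. That parallels, in qualitative form, the drug effects reported here, whereas the actor–critic sketch leaves the S− lever tied with the inactive lever.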

Conflict of Interest Statement

The authors declare that this research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgements

The research reported in this paper was supported by a grant from the National Institute of Mental Health #56446 to Bernard Balleine.

References

Azrin, N. H., and Hake, D. F. (1969). Positive conditioned suppression: conditioned suppression using positive reinforcers as the unconditioned stimuli. J. Exp. Anal. Behav. 12, 167–173.

Balleine, B. W. (2001). Incentive processes in instrumental conditioning. In Handbook of Contemporary Learning Theories, R. Mowrer and S. Klein, eds. (Hillsdale, NJ, LEA), pp. 307–366.

Balleine, B. W. (2005). Neural bases of food seeking: affect, arousal and reward in corticostriatolimbic circuits. Physiol. Behav. 86, 717–730.

Bitterman, M. E., Reed, P. C., and Kubala, A. L. (1953). The strength of sensory preconditioning. J. Exp. Psychol. 46, 178–182.

Bray, S., and O'Doherty, J. (2007). Neural coding of reward-prediction error signals during classical conditioning with attractive faces. J. Neurophysiol. 97, 3036–3045.

Colwill, R. M., and Rescorla, R. A. (1988). Associations between the discriminative stimulus and the reinforcer in instrumental learning. J. Exp. Psychol.: Anim. Behav. Process. 14, 155–164.

Corbit, L. H., Muir, J. L., and Balleine, B. W. (2001). The role of the nucleus accumbens in instrumental conditioning: evidence of a functional dissociation between accumbens core and shell. J. Neurosci. 21, 3251–3260.

Heikkila, R. E., Orlansky, H., Mytilineou, C., and Cohen, G. (1975). Amphetamine: evaluation of d- and l-isomers as releasing agents and uptake inhibitors for 3H-dopamine and 3H-norepinephrine in slices of rat neostriatum and cerebral cortex. J. Pharmacol. Exp. Ther. 194, 47–56.

Holman, J. G., and Mackintosh, N. J. (1981). The control of appetitive instrumental responding does not depend on classical conditioning to the discriminative stimulus. Q. J. Exp. Psychol. 33B, 21–31.

Holroyd, C. B., and Coles, M. G. (2002). The neural basis of human error processing: reinforcement learning, dopamine, and the error-related negativity. Psychol. Rev. 109, 679–709.

Joel, D., Niv, Y., and Ruppin, E. (2002). Actor-critic models of the basal ganglia: new anatomical and computational perspectives. Neural Netw. 15, 535–547.

Killcross, A. S., Everitt, B. J., and Robbins, T. W. (1997). Symmetrical effects of amphetamine and alpha-flupenthixol on conditioned punishment and conditioned reinforcement: contrasts with midazolam. Psychopharmacology (Berl.) 129, 141–152.

Kish, G. B. (1966). Studies of sensory reinforcement. In Operant Behavior: Areas of Research and Application, W. K. Honig, ed. (New York, Appleton-Century-Crofts), pp. 109–159.

Konorski, J. (1948). Conditioned reflexes and neuron organization (Cambridge, Cambridge University Press).

Ljungberg, T., Apicella, P., and Schultz, W. (1992). Responses of monkey dopamine neurons during learning of behavioral reactions. J. Neurophysiol. 67, 145–163.

McClure, S. M., Daw, N. D., and Montague, P. R. (2003). A computational substrate for incentive salience. Trends Neurosci. 26, 423–428.

Mirenowicz, J., and Schultz, W. (1994). Importance of unpredictability for reward responses in primate dopamine neurons. J. Neurophysiol. 72, 1024–1027.

Montague, P. R., Dayan, P., and Sejnowski, T. J. (1996). A framework for mesencephalic dopamine systems based on predictive Hebbian learning. J. Neurosci. 16, 1936–1947.

Neely, J. H., and Wagner, A. R. (1974). Attenuation of blocking with shifts in reward: involvement of schedule-generated context cues. J. Exp. Psychol. 102, 751–763.

Olausson, P., Jentsch, J. D., and Taylor, J. R. (2004). Nicotine enhances responding with conditioned reinforcement. Psychopharmacology (Berl.) 171, 173–178.

Ostlund, S. B., Winterbauer, N. E., and Balleine, B. W. (2008). Theory of reward systems. In Learning and Memory: A Comprehensive Reference, Vol. 2: Learning Theory and Behavior, J. Byrne, ed. (Oxford, Elsevier).

Redgrave, P., and Gurney, K. (2006). The short-latency dopamine signal: a role in discovering novel actions? Nat. Rev. Neurosci. 7, 967–975.

Robbins, T. W. (1975). The potentiation of conditioned reinforcement by psychomotor stimulant drugs: a test of Hill's hypothesis. Psychopharmacologia 45, 103–114.

Robbins, T. W. (1976). Relationship between reward-enhancing and stereotypical effects of psychomotor stimulant drugs. Nature 264, 57–59.

Robbins, T. W., and Koob, G. F. (1978). Pipradrol enhances reinforcing properties of stimuli paired with brain stimulation. Pharmacol. Biochem. Behav. 8, 219–222.

Robbins, T. W., Watson, B. A., Gaskin, M., and Ennis, C. (1983). Contrasting interactions of pipradrol, d-amphetamine, cocaine, cocaine analogues, apomorphine and other drugs with conditioned reinforcement. Psychopharmacology (Berl.) 80, 113–119.

Robbins, T. W., Cador, M., Taylor, J. R., and Everitt, B. J. (1989). Limbic-striatal interactions in reward-related processes. Neurosci. Biobehav. Rev. 13, 155–162.

Schultz, W., Dayan, P., and Montague, P. R. (1997). A neural substrate of prediction and reward. Science 275, 1593–1599.

Seiden, L. S., Sabol, K. E., and Ricaurte, G. A. (1993). Amphetamine: effects on catecholamine systems and behavior. Annu. Rev. Pharmacol. Toxicol. 33, 639–677.

Keywords: conditioned reinforcement, sensory reinforcement, instrumental conditioning, dopamine

Citation: Neil E. Winterbauer and Bernard W. Balleine (2007). The influence of amphetamine on sensory and conditioned reinforcement: evidence for the re-selection hypothesis of dopamine function. Front. Integr. Neurosci. 1:9. doi: 10.3389/neuro.07/009.2007

Received: 8 October 2007;

Paper pending published: 30 October 2007;

Accepted: 19 November 2007;

Published online: 30 November 2007

Edited by:

Sidney A. Simon, Duke University Medical Center, USA

Reviewed by:

Albino J. Oliveira-Maia, Universidade do Porto, Portugal; Duke University Medical Center, USA

Ivan E. de Araujo, The J. B. Pierce Laboratory, USA; Yale University School of Medicine, USA

Jennifer Stapleton, Duke University, USA

Copyright: © 2007 Winterbauer and Balleine. This is an open-access article subject to an exclusive license agreement between the authors and the Frontiers Research Foundation, which permits unrestricted use, distribution, and reproduction in any medium, provided the original authors and source are credited.

*Correspondence: Bernard W. Balleine, Department of Psychology, UCLA, Box 951563, Los Angeles, CA 90095-1563, USA. e-mail: balleine@psych.ucla.edu