- 1Department of Neurology, Barrow Neurological Institute, Phoenix, AZ, USA

- 2Department of Neurosurgery, Barrow Neurological Institute, Phoenix, AZ, USA

Well-documented differences in the psychology and behavior of men and women have spurred extensive exploration of gender's role within the brain, particularly regarding emotional processing. While neuroanatomical studies clearly show differences between the sexes, the functional effects of these differences are less understood. Neuroimaging studies have shown inconsistent locations and magnitudes of gender differences in brain hemodynamic responses to emotion. To better understand the neurophysiology of these gender differences, we analyzed recordings of single neuron activity in the human brain as subjects of both genders viewed emotional expressions. This study included recordings of single-neuron activity of 14 (6 male) epileptic patients in four brain areas: amygdala (n = 236), hippocampus (n = 270), anterior cingulate cortex (n = 256), and ventromedial prefrontal cortex (n = 174). Neural activity was recorded while participants viewed a series of avatar male faces portraying positive, negative or neutral expressions. Significant gender differences were found in the left amygdala, where 23% (n = 15∕66) of neurons in men were significantly affected by facial emotion, vs. 8% (n = 6∕76) of neurons in women. A Fisher's exact test comparing the two ratios found a highly significant difference between the two (p < 0.01). These results show specific differences between genders at the single-neuron level in the human amygdala. These differences may reflect gender-based distinctions in evolved capacities for emotional processing and also demonstrate the importance of including subject gender as an independent factor in future studies of emotional processing by single neurons in the human amygdala.

Introduction

Since gender is such an integral part of human identity, gender differences in the human brain have long been a source of interest and dissent amongst researchers. Anatomically, there are well-documented gender differences in relative volumes of neural structures including the frontal cortices, the hypothalamus, and the amygdala (Goldstein et al., 2001). While researchers have offered evidence of the biochemical processes which cause these anatomical differences to arise (Nugent and McCarthy, 2011; Uddin et al., 2013), it is less understood how these differences are translated into a difference in emotional processing or behavior.

In total, more than two thousand neuroimaging studies exploring emotional processing have been published since 1990. While many have reported significant gender differences in hemodynamic changes, the location, extent and direction of these differences have varied widely, leading several groups of researchers to search for consistent patterns by performing meta-analyses. The results of these analyses have been mixed, particularly with regards to the present study's region of interest: the amygdala.

The most recent meta-analysis, by Stevens and Hamann (2012), evaluated studies whose stimuli evoked emotions broadly classified as “positive” (amusing, pleasant or erotic) or “negative” (anger, fear, disgust, sadness) and evaluated results in terms of a positive vs. negative valence. The authors found that men were significantly more often reported as having higher responses to positive stimuli in the left amygdala (amongst other structures), while women were reported as being more responsive to negative stimuli in the same region. By contrast, Fusar-Poli et al. (2009) performed a meta-analysis of 105 studies in which the stimuli were limited to emotional faces, and found that these stimuli significantly increased activity in the right amygdalae of men compared to women, with no significant effect of gender in the left amygdala. A prior meta-analysis by Sergerie et al. (2008) of emotional processing imaging studies reporting amygdala activation did not find gender to be a predictive variable for lateralization of activity. While the contradictory results of these meta-analyses may have been partly due to variations in study inclusion criteria and statistical methods, their inconsistencies make it difficult to predict what types of differences in single neuron firing would be expected between male and female participants.

To clarify the effects of gender on human neural response to emotional faces, we examined single neuron firing recorded in the human brain during an experiment showing faces which incidentally varied in emotional expression. While this experiment was designed to vary the race of synthetic faces, the faces also depicted positive, neutral, and negative expressions. This allowed us to directly measure differences in the response of single neurons to emotional expressions between the male and female participants. We recorded from four clinically mandated brain areas, including the amygdala, a structure proven in lesion studies to be essential for correctly perceiving the emotions of others (Adolphs et al., 1994; Becker et al., 2012), and which has been shown to contain neurons significant for facial emotional processing in single-unit recording experiments in monkeys (Kuraoka and Nakamura, 2007). Since we recorded from both hemispheres, we were also able to determine whether neural activity was lateralized.

In brief, we found a significant gender difference in single-neuron firing rates in response to emotional faces. This difference was localized to the left amygdala, where a greater number of neurons in men fired significantly in response to stimuli than neurons in the same location in women. The men and women in the study also differed behaviorally in response time and accuracy in an emotion identification task.

Methods

Participants

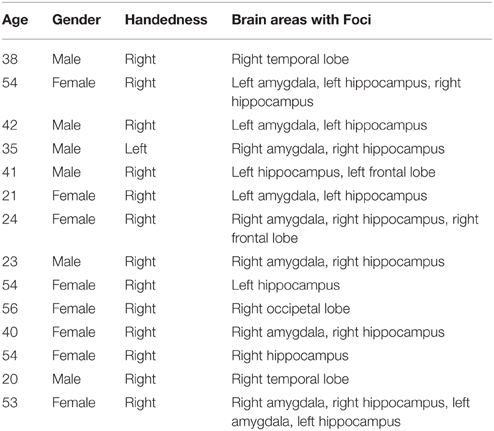

Single-neuron firing activity was recorded from microwires implanted in 14 pharmaco-resistant epilepsy patients, 6 male and 8 female (13 right handed, ages 21–56, mean age = 29) at the Barrow Neurological Institute. Table 1 shows the age and characteristics of illness for each subject enrolled in this study. Patients were being evaluated for possible resection of an epileptogenic focus. Each patient receiving depth electrode monitoring was asked to participate in the study, so no attempt was made to balance the gender of the patients enrolled. Data were recorded from clinically mandated brain areas including the amygdala, the hippocampus, the anterior cingulate cortex and the prefrontal cortex. All patients granted consent to participate in the experiment using a protocol approved by the Institutional Review Board of Saint Joseph's Hospital and Medical Center.

Experimental Procedures

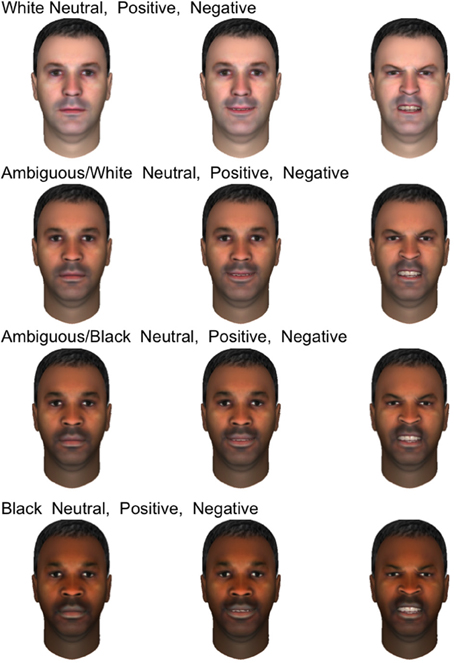

Participants were presented with a set of 120 synthetic male faces which were originally designed to test the effects of race on the firing of human single neurons while also displaying varying emotional expressions. These faces were created using FaceGen Modeler (Inversions, 2006) to permit smooth variation between prototypical Caucasian and African-American. Because these synthetic faces contained variation in emotional expression as well as race, we were able to examine how neural responses to both the displayed emotion and race of the face depended on the gender of the participant. Only male faces were shown in the original design to minimize additional independent variation and maximize power to distinguish between races. Given the adventitious design of this analysis, we did not show both male and female faces. Figure 1 displays 12 of the emotional faces utilized in the present study which were generated from a single prototype face image. Ten prototype face images were used to generate the entire set. All three expressions appeared in equal numbers throughout the experiment (40 of each, presented in random order), and the emotions depicted were validated in two perceptual experiments with undergraduate volunteers from Arizona State University (see SI Methods). Stimulus race had a significant effect on firing rate (will be reported separately), but men and women did not differ with regards to this response, making stimulus race of lesser importance to the present study.

Figure 1. Examples of emotional stimuli presented to subjects. The faces shown are generated from a single prototype face image and depict four races, from top to bottom rows: white, ambiguous white, ambiguous black, and black. In each row, three emotional expressions are shown: neutral, positive, and negative.

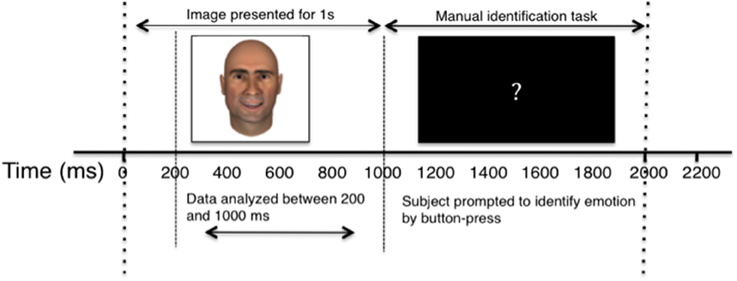

Participants were presented with centrally-located facial images appearing on a laptop screen subtending approximately 11° visual angle. Images appeared for 1000 ms, and were followed by a black screen with a centrally-located white question mark for 2000 ms, during which time the participants were instructed to classify the emotion of the preceding face. Participants identified emotion by pressing one of three buttons on a trackpad labeled “Sad,” “Happy,” and “Neutral” and were instructed to use the “Sad” button for any sad, angry, or negative emotion. Each face in the study was presented six times, with each experiment consisting of 720 trials in total. For a diagrammatic representation of the behavioral task, see Figure 2.

Figure 2. Diagram of single experimental trial. The horizontal axis depicts the passage of time, in milliseconds. Dashed lines separate key stages of the experiment, and labeled arrow bars distinguish between the recording and behavioral segments.

Cells were recorded continuously, but only firing between 200 ms and 1000 ms after image presentation (prior to manual identification task) was included in the analysis. Trials in which participants responded early, pressed more than one identifying key, or failed to respond were eliminated from analyses. The elimination of error trials did not affect the reported results.
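The analysis window described above can be illustrated with a minimal sketch. It assumes spike times and stimulus onsets are available in seconds; the function name, argument names, and the example spike times are hypothetical and are not taken from the original analysis code.

```python
def windowed_firing_rate(spike_times_s, stimulus_onset_s, start_s=0.2, end_s=1.0):
    """Return the firing rate (spikes/s) in the window
    [onset + start_s, onset + end_s), i.e. 200-1000 ms after image onset."""
    window_start = stimulus_onset_s + start_s
    window_end = stimulus_onset_s + end_s
    n_spikes = sum(1 for t in spike_times_s if window_start <= t < window_end)
    return n_spikes / (end_s - start_s)


# Hypothetical spike times (s) around one image presented at t = 10.0 s.
spikes = [9.95, 10.10, 10.25, 10.40, 10.75, 10.99, 11.20]
rate = windowed_firing_rate(spikes, stimulus_onset_s=10.0)
print(rate)
```

Spikes before 200 ms or after image offset are ignored, so the rate reflects only the period before the manual identification task begins.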

Microwire Implantation, Signal Amplification, and Spike Sorting

We used surgical and recording methods which we have previously described in Valdez et al. (2013). In brief, nine microwires were implanted stereotactically (Medtronic StealthStation) with a 1.5T structural MRI through skull bolts at each recording site protruding from the clinical depth electrodes used to locate epileptogenic focus (Dymond et al., 1972; Fried et al., 1999). In the hippocampus, the target for microwire tip placement was the mid-body of the hippocampus. In the amygdala, the target was the center of the amygdala; in the anterior cingulate cortex, the target was the anterior cingulate gyrus, above and behind the genu of the corpus callosum; in the ventromedial prefrontal cortex, the target was just below the anterior cingulate gyrus and corpus callosum, in the most anterior portion of the gyrus rectus. Using these techniques, the error in tip placement is estimated to be ±2 mm (Mehta et al., 2005). While this resolution is insufficient to determine subfields within the hippocampus or nuclei within the amygdala, it is sufficient to allow discovery of neural firing differences between major brain areas and sides of the brain.

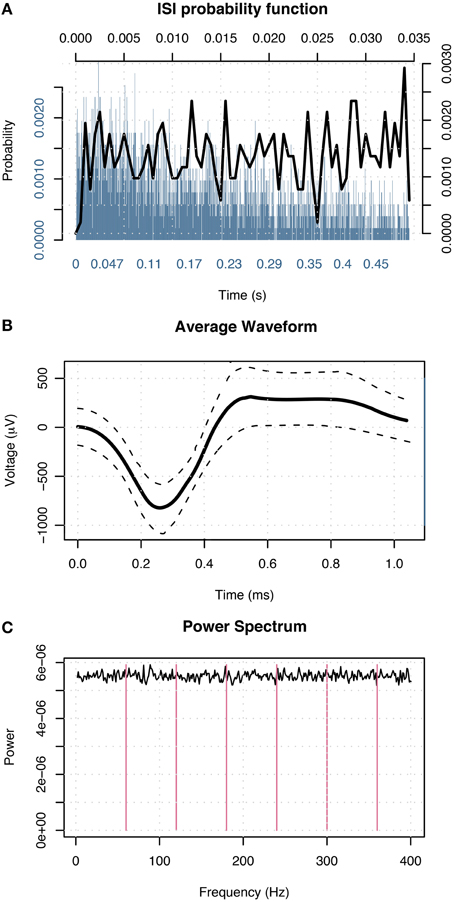

Following patients' recovery from surgery, the microwires were connected to headstage amplifiers which applied a 400x gain to yield eight recording channels. Microwire tips continuously recorded extracellular action potentials corresponding to single-neuron activity. Possible action potentials were high-pass filtered to determine event shape, and all events recorded from individual channels were grouped into sets of similar waveform shape (clusters) with the open-source clustering program KlustaKwik (http://klustakwik.sourceforge.net). Post-sorting, each cluster was classified as either noise, multi-unit or single-unit activity per the criteria listed in Valdez et al. (2013) Table 1. Figure 3 illustrates events in a cluster classified as single-unit activity after sorting.

Figure 3. Events in a cluster in the left amygdala of a male classified as single-unit activity. (A) Distribution of interspike intervals over two duration scales: broad range 0–0.5 s (blue) and narrow range 0–0.035 s (black) shown on x-axis. The y-axis designates probability of interval. (B) Average waveform shape. Time (ms) is shown on the x-axis. The y-axis denotes scale in μV. The dashed lines indicate ± 1 SD at each sample point. (C) Power spectral density of event times. The x-axis displays frequency in Hz; magenta lines indicate primary and harmonics of the power line frequency (60 Hz). The y-axis shows power spectral density in events²/Hz.

This spike-sorting technique has been previously used in multiple publications (Steinmetz et al., 2013; Valdez et al., 2013, 2015; Wixted et al., 2014). In our experience, this technique (Valdez et al., 2013) produces results comparable to prior reports in other laboratories (Viskontas et al., 2007) in terms of recorded waveform shapes, inter-spike intervals, and firing rates (also see Wild et al., 2012 regarding variability in spike sorting depending on the particular waveform shapes being detected). While it is important to note that these and other reports of human single-unit recordings (Kreiman et al., 2000; Steinmetz, 2009) do not achieve the quality of unit separation achievable in animal recordings (Hill et al., 2011), they nonetheless represent neural activity at a much finer spatial and temporal scale than otherwise achievable.

Analysis

Each neuron in the study was classified according to brain area, side, recording quality, and gender of participant. Only neurons with well-isolated single unit activity were included in analyses. Our analysis here parallels that we recently used to examine object encoding (Valdez et al., 2015). We created a nested set of generalized linear models (McCullagh and Nelder, 1989) in R (R Development Core Team, 2009) testing firing rate as a function of stimulus affect for each neuron in each experiment (n = 936). Image luminance and contrast were included as additional factors given their recently demonstrated effect on firing rate in the amygdala (Steinmetz et al., 2011). Model 1 contained only a constant. Model 2 contained a constant plus the addition of luminance and contrast factors. Model 3 contained constant, luminance and contrast factors plus the addition of the stimulus emotion. Models 2 and 3 were compared with an ANOVA F-test (df = 2) for each neuron in the study to determine whether the addition of the affect stimuli factor improved goodness of fit (McCullagh and Nelder, 1989). Neurons with a resultant p-value < 0.05 were deemed significantly affected by stimulus emotion.
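As a rough illustration of the Model 2 vs. Model 3 comparison, the sketch below computes the F statistic for two nested models from their residual sums of squares. It assumes the simplest (Gaussian) case, and assumes that luminance and contrast each contribute one parameter, so that the three-level emotion factor adds two parameters (df = 2). The function name and the numbers are hypothetical; the original analysis was carried out in R.

```python
def nested_f_statistic(rss_reduced, rss_full, n_obs, n_params_reduced, n_params_full):
    """F statistic comparing a reduced model to a full model that nests it.

    rss_* are residual sums of squares; n_params_* count fitted coefficients.
    """
    df_extra = n_params_full - n_params_reduced   # parameters added by the full model
    df_resid = n_obs - n_params_full              # residual df of the full model
    if df_extra <= 0 or df_resid <= 0:
        raise ValueError("models must be nested with positive degrees of freedom")
    return ((rss_reduced - rss_full) / df_extra) / (rss_full / df_resid)


# Hypothetical values for one neuron across 720 trials:
# Model 2 = constant + luminance + contrast (3 parameters, assumed),
# Model 3 = Model 2 + emotion with three levels (5 parameters, assumed).
f_value = nested_f_statistic(rss_reduced=1500.0, rss_full=1450.0,
                             n_obs=720, n_params_reduced=3, n_params_full=5)
print(f_value)
```

Converting the statistic to a p-value requires the upper tail of the F distribution with (df_extra, df_resid) degrees of freedom, for example `scipy.stats.f.sf` if SciPy is available.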

Binomial tests were used to determine if firing activity within brain areas was significantly affected by differences in stimuli facial emotion. Using a binomial distribution, we tested the probability of the observed outcome plus all less likely outcomes against the outcome expected by random chance (Stuart et al., 1999). Our expected outcome was that 5% of neurons in each area were significant for stimuli affect and the p-values for this test were adjusted using a Benjamini–Hochberg (BH, Benjamini and Hochberg, 1995) adjustment for false discovery rate.
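The two steps in this paragraph can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the binomial test sums the probabilities of all outcomes no more likely than the observed count, and the adjustment is the usual Benjamini–Hochberg step-up procedure. The function names are hypothetical, and the counts in the usage lines are the left amygdala counts reported in this paper.

```python
from math import comb


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n trials with success rate p."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)


def binomial_test(k, n, p=0.05):
    """Two-sided exact test: sum the probabilities of every outcome that is
    no more likely than the observed one under chance rate p."""
    observed = binomial_pmf(k, n, p)
    cutoff = observed * (1 + 1e-7)  # small tolerance for floating-point ties
    total = 0.0
    for i in range(n + 1):
        prob = binomial_pmf(i, n, p)
        if prob <= cutoff:
            total += prob
    return min(1.0, total)


def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Left amygdala counts reported in this paper: 15 of 66 (men), 6 of 76 (women).
p_men = binomial_test(15, 66)
p_women = binomial_test(6, 76)
print(p_men, p_women, benjamini_hochberg([p_men, p_women]))
```

The set of tests actually adjusted together in the study is not specified here, so the two-element adjustment in the last line is purely illustrative.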

To determine whether a sex difference was present within a tested brain area, neurons were classified by the gender of the subject. A Fisher's Exact Test (Fisher, 1922) was conducted to determine whether the ratios of neurons with a significant or non-significant response in each brain area in men and women differed significantly, based on a null hypothesis that these ratios are equal. The p-values for these tests were also corrected using a BH correction (Benjamini and Hochberg, 1995).
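A two-sided Fisher's exact test on a 2×2 table can be written directly from the hypergeometric distribution. The sketch below is a minimal standard-library version with a hypothetical function name. It uses the common convention of summing all tables that are no more probable than the observed one, which may differ in detail from the implementation used in the original analysis, so the value it returns need not match the reported p-value exactly.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]].

    Rows are groups (e.g. men, women); columns are significant and
    non-significant neurons. All margins are treated as fixed.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    cutoff = prob(a) * (1 + 1e-7)  # tolerance for floating-point ties
    total = sum(prob(x) for x in range(lowest, highest + 1) if prob(x) <= cutoff)
    return min(1.0, total)


# Left amygdala counts from this paper: men 15 significant of 66,
# women 6 significant of 76.
p_value = fisher_exact_two_sided(15, 66 - 15, 6, 76 - 6)
print(p_value)
```

Under the null hypothesis the proportion of significant neurons is the same in both groups, so a small p-value indicates that the two ratios differ.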

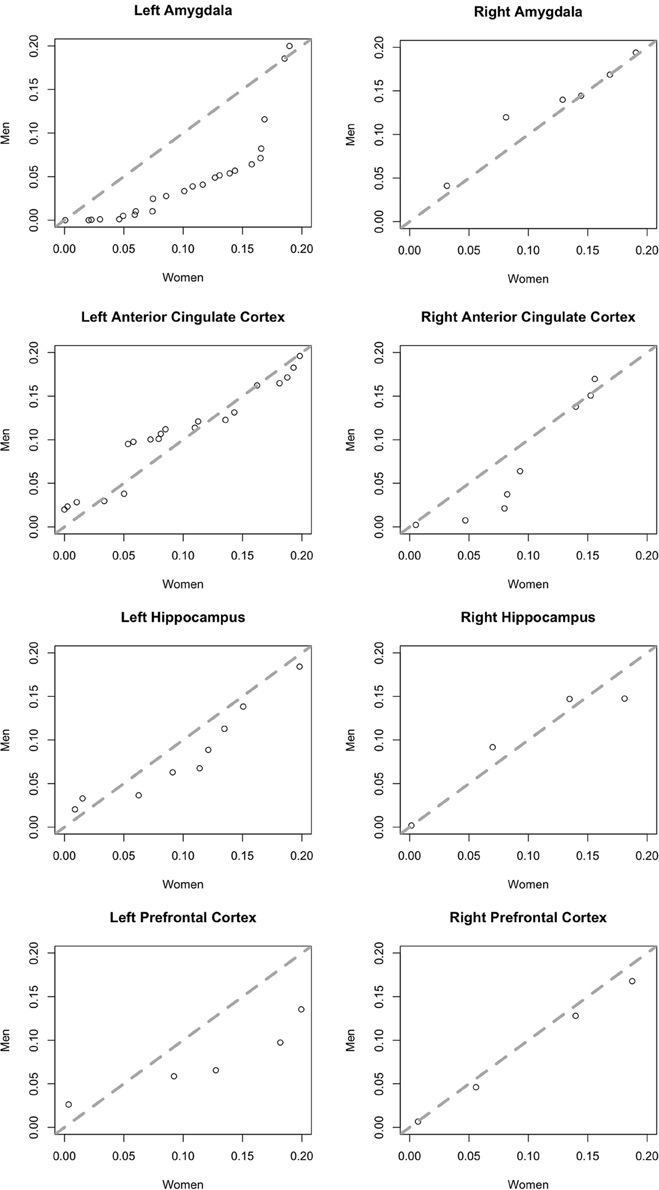

To ensure that these gender-based trends did not result from the presence of a small number of data outliers, we examined the distribution of F-test p-values for both genders in addition to ratios of significant and non-significant neurons. We plotted the quantiles of the probability distributions of p-values < 0.2 for neurons in men against those in women in Quantile-Quantile (Q-Q) plots (Chambers et al., 1983). Equal distributions generate points along the line y = x. Deviation from this 45° line indicates that the distributions differ with regards to dispersal. Since we plotted p-values of neurons in males along the y-axis and those from females along the x-axis, points deviating rightward from the central line indicate greater dispersal of values in females than in males.
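The construction of such a Q-Q plot can be sketched as follows. The code pairs matching quantiles of the two p-value sets after keeping only values below 0.2. The function names, the linear interpolation rule, and the choice of one point per observation in the smaller group are assumptions made for illustration, and the example p-values are hypothetical.

```python
def quantile(sorted_values, q):
    """Linearly interpolated quantile of an already sorted list, 0 <= q <= 1."""
    position = q * (len(sorted_values) - 1)
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    fraction = position - lower
    return sorted_values[lower] * (1 - fraction) + sorted_values[upper] * fraction


def qq_points(p_female, p_male, threshold=0.2):
    """Return (x, y) pairs: female quantiles on x, male quantiles on y."""
    x = sorted(p for p in p_female if p < threshold)
    y = sorted(p for p in p_male if p < threshold)
    if not x or not y:
        return []
    n = min(len(x), len(y))
    probs = [0.5] if n == 1 else [i / (n - 1) for i in range(n)]
    return [(quantile(x, q), quantile(y, q)) for q in probs]


# Hypothetical p-values for illustration only.
female_p = [0.01, 0.05, 0.10, 0.50]
male_p = [0.01, 0.05, 0.10, 0.30]
points = qq_points(female_p, male_p)
print(points)
```

With identical distributions below the threshold, as in this toy input, every point lies on the line y = x; systematic departures from that line indicate that the two distributions differ.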

While prior studies have often restricted analysis of the effects of independent factors, such as emotion, to neurons with responses that differ from background firing, we do not do so, because this form of pre-selection can lead to erroneous conclusions (Steinmetz and Thorp, 2013). Instead, we applied multinomial logistic regression (MLR; McCullagh and Nelder, 1989) to determine which categories of facial emotion yielded firing rates which differed most significantly from background firing (essentially a simplified version of the point-process framework proposed by Truccolo et al., 2005). This technique provides a means of examining the relative effects of multiple explanatory covariates on a nominal dependent variable by constructing linear predictor functions for each covariate. For our purposes, we created a model for each cluster to predict the affect category of the image shown given the neuron's firing rate. Examination of the model coefficient for each category indicates the likelihood of obtaining observed firing data in each category assuming it was background firing. Statistically reliable changes in coefficient values from zero were determined using multivariate t-tests (Hosmer and Lemeshow, 2000, Chap. 2), one for each neuron. Once again, neurons were classified by brain area, side, and gender of participant. Fisher's Exact Tests were applied to the resulting tables to determine whether males and females differed significantly in response to the different categories of facial emotion.

Results

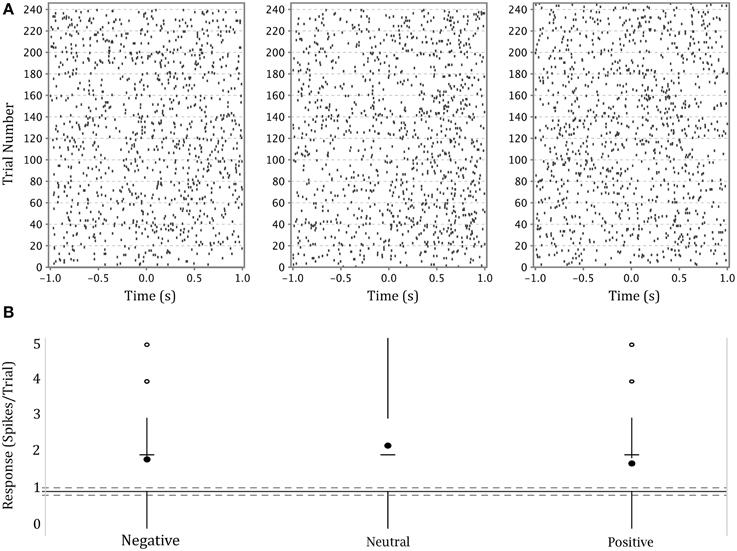

The firing activity of a significant number of neurons depended on stimulus affect. Figure 4 shows an example of a neuron in the left amygdala of a male subject with a significant effect of emotion (This is the same neuron whose waveform is shown in Figure 3). The higher density of dots in each raster plot following presentation of the stimulus demonstrates a generalized heightened firing in response to the presentation of any emotional faces as compared to the preceding black screen. The neuron represented in this figure showed a stronger response to neutral expressions (center panel), particularly between 500 and 1000 ms after stimulus presentation. This is also apparent in the modified box plot in the lower panel where the mean of firing for this emotion is above that for other emotions. (Note that we show a modified box-plot in order to visually compare responses to firing in all background intervals, rather than simply comparing to background intervals preceding trials depicting a particular emotion.)

Figure 4. (A) Upper panel: raster plots of the responses of a single neuron in the left amygdala of a male participant to each of three categories of affect. Y axis: trial numbers. The points on each line represent the firing of action potentials during each trial. X axis: time in seconds from onset of stimulus. The rasters are specific to the affect categories labeled at the base of part B. (B) Lower panel: Modified box-plot of the firing rate of the same neuron in the left amygdala of a male participant as a function of stimulus affect. The center dots show mean response per affect category. The horizontal dashes show median response per affect category. Vertical lines extend from ±1.58 IQR/√n around the median (where IQR = interquartile range, n = number of observations; equivalent to a 95% confidence interval for differences between medians, Chambers et al., 1983, p. 62) to the data point furthest from the median which is no more than ±(1.5*IQR) beyond the first or third quartiles. Open circles show responses outside that range. Solid gray line shows the mean of background firing; dashed gray lines at ±1.58 IQR/√n of the background firing, representing a 95% confidence interval for the median of background firing.

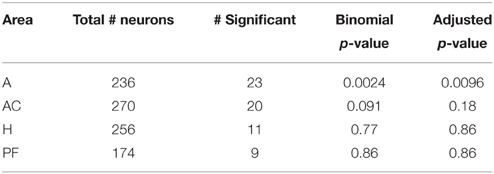

To compare the activity of neurons in populations across several brain areas, we tested for a selective response to emotion in all neurons in all brain areas. (We do not limit analysis to only neurons with a generalized visual response to avoid the errors which can arise from such pre-selection, Steinmetz and Thorp, 2013). Table 2 shows the number of neurons in each brain area with firing rates significantly affected by stimulus emotion. The rightmost column lists the p-values of a binomial test of whether the fractions of neurons with a significant response to emotion are greater than that expected by chance. The amygdala was the only brain area in which the firing rates of a significant number of neurons were influenced by stimulus emotion.

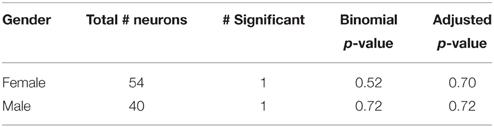

Within the amygdala, we found this activity was highly lateralized, and differed measurably between men and women. Tables 3 and 4 show the numbers of neurons with a significant response split by gender in the left and right amygdala, respectively. In the left amygdala, 15% of neurons had a significant effect of affect. When amygdalar neurons were split by gender of participant, a clear difference emerged. In the left amygdala of males, 23% of neurons had a significant effect of affect, whereas only 7.9% of neurons in females had such an effect. Binomial tests found this fraction to be significant in males (p = 7.2E-07) but not in females (p = 0.28). A Fisher's Exact Test comparing these two ratios found them to be significantly different between males and females (p = 0.013).

To better illustrate differences between brain areas in the fractions of neurons with a significant effect of affect, we generated quantile-quantile (Q-Q) plots of the p-value distributions of tests of the effect of affect in male vs. female subjects. The distribution in the left amygdala (Figure 5) demonstrates that the significant difference we found in this area between men and women is not due to the presence of a small number of potential outliers, but rather an overall shift in the distribution. This plot contrasts sharply with the plots of brain areas in which no significant gender difference was found (Figure 5), and in which the data points fall close to the central diagonal line. The only other brain area in which the Q-Q plot of p-value distributions revealed a potential trend is the right amygdala (Figure 5), though this difference failed to reach significance.

Figure 5. Quantile-quantile plots of the distributions of p-values of an F-test for an effect of affect on neurons in men (y-axis) plotted against those in women (x-axis). Plots include only the more significant values (p < 0.2). The central diagonal dashed line shows the expected result if the distributions in men and women were the same.

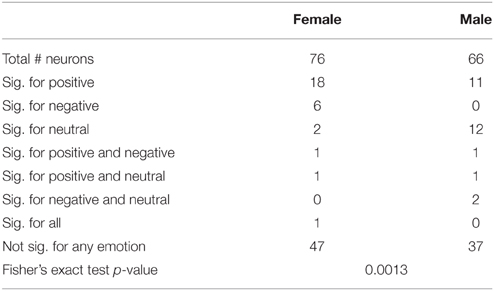

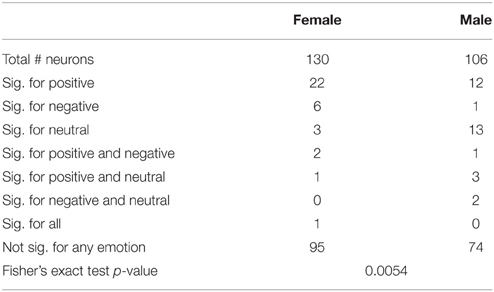

We next used multinomial logistic regression (MLR) to determine which categories of emotion (negative, neutral, or positive) particular neurons were responding to. This regression determines whether a category of emotion can reliably be predicted from changes in the firing of a neuron relative to background. It provides a single test for each neuron which both establishes that the response differs from background firing and distinguishes between different emotions. The results of MLR analyses of the total and left amygdala are described in Tables 5 and 6. Notably, neurons in males were most highly responsive to stimuli with neutral affect, whereas neurons in females were most responsive to positive affect. Fisher's exact tests comparing the proportions of neurons affected by specific categories of emotion in men and women found a significant difference between genders in the total amygdala (p = 0.0054) and left amygdala (p = 0.0013).

Table 5. Neurons significant for specific emotions relative to background firing in the total amygdala.

As an additional check that neuronal responses to the three emotions differed from background firing, we tested whether neurons with a significant effect of emotion had responses which differed significantly from background firing, using the changes from background test (Steinmetz and Thorp, 2013). We found that 89% of neurons with a significant effect of emotion also had firing which differed significantly from background. Overall, the percentage of neurons whose responses differed significantly from background across all brain areas was 28%, which demonstrates a general effect of viewing an image of a face vs. the black background.

Finally, we note that men and women differed in performance of the manual emotion identification task. Consistent with the findings of prior studies (Hampson et al., 2006), we found that women identified the facial emotions of the stimulus images more quickly than men (see Figure S1). Additionally, males in the study correctly identified stimulus affect nearly 20% more often than females did (see Figure S2). We did not, however, find a significant correlation between behavior and neural responses on a trial by trial basis.

Discussion

We found that neurons in the left amygdalae of men were more responsive to emotional faces than neurons in the same location in women. Logistic regression analyses demonstrated that neurons in men were most responsive to faces with neutral expressions, while those in women were most responsive to faces with positive expressions.

One prior publication reported human amygdalar neuron firing in response to the presentation of emotional faces (Fried et al., 1997). Those authors found that 10–20% of neurons in the amygdala responded to the emotion shown on a face (depending on mnemonic task) and that 10% of these neurons responded to the gender of the presented face, though they did not examine differences between genders of the subjects. Our results in Table 2 are in good agreement with these prior results, as 10% of neurons in the amygdala overall in the present study responded to emotion. Our results differ from those reported by Kawasaki et al. (2005), who found that 21% of neurons in the human ventromedial prefrontal cortex (VMPFC) responded selectively to the presentation of complex emotional scenes. Results in Table 2 show that 12% (9/174) of neurons in ventromedial prefrontal cortex respond selectively to the emotion shown on a face. One possible cause of this difference in the reported fraction of responsive neurons in the VMPFC is that Kawasaki et al. (2005) showed complex scenes designed to reliably signal strong and specific emotions (Lang et al., 2005), whereas our adventitious analysis was limited to faces depicting specific emotions.

Our experimental design required subjects to actively assess and identify the emotional state of presented faces. Completion of task trials therefore involved explicit emotional processing, as opposed to implicit processing, which pertains to the assessment of non-emotional criteria (e.g., gender or age). While inconsistencies within the neuroimaging literature have called into question whether the amygdala has greater activation under explicit or implicit experimental conditions (Habel et al., 2007), this work demonstrates unequivocally that the amygdala indeed plays an active role in emotional processing under explicit conditions.

Although previous work utilizing non-invasive techniques to assess gender differences in hemodynamic responses to emotional stimuli has produced conflicting results (Sergerie et al., 2008; Fusar-Poli et al., 2009; Stevens and Hamann, 2012), women are most frequently reported to be the more responsive gender in the neuroimaging literature. Heightened BOLD signals in women, particularly within the amygdala, have been reported to correlate with negative imagery (Klein et al., 2003; Domes et al., 2010; Frewen et al., 2011; Young et al., 2013); this finding is commonly concluded to contribute to higher rates of affective disorders in women (Ohrmann et al., 2010).

By using single-neuron recording to directly measure neural activity within the amygdala, we obtained results which suggest the opposite; namely, that neurons in amygdalae of men are more responsive to emotional stimuli than those in women. There are several possible explanations for the difference between our results and the bulk of those obtained via neuroimaging. Firstly, the relationship between the BOLD signal and underlying neural activity is still not well-understood (Sirotin and Das, 2009; Ekstrom, 2010; Boynton, 2011; Handwerker and Bandettini, 2011; Kleinfeld et al., 2011; Martin, 2014), and may well depend on the particular task being performed and the brain area involved (Sirotin and Das, 2009; Conner et al., 2011; Cardoso et al., 2012; Huo et al., 2014; Lima et al., 2014). Thus, the discrepancy between these results may simply reflect a different relationship between neural firing and the BOLD signal in the amygdala.

Secondly, discrepancies within the neuroimaging literature have been attributed, amongst other factors, to variations in experiment design (Derntl et al., 2012), and our behavioral task differed from those used in most prior studies, particularly regarding the nature of the stimuli. All emotional faces presented as stimuli were ostensibly male, designed originally to test neural response to race in a task in which gender was standardized. Since viewer sex and sex of presented images have been shown to influence results of fMRI studies as well as behavioral identification tasks (Proverbio et al., 2012; Spreckelmeyer et al., 2013), it is possible that neural responses are largest for own-sex faces, as has also been shown in an ERP study (Doi et al., 2010).

Both of these potential explanations suggest an interesting avenue for future research: how would single-neuron firing rates differ between men and women when viewing own-sex vs. opposite-sex faces? If sample sizes permit, it may also prove intriguing to include additional subject variables which have been shown to influence BOLD signals in neuroimaging studies, including participant sexual preference (Perry et al., 2013) and menstrual cycle phase in female participants (Derntl et al., 2008). Since women have been shown to respond significantly more quickly and accurately to human vs. avatar faces in an emotion identification task, with no comparable result observed in men (Moser et al., 2006), it would also be desirable to conduct this study using actual photographs showing emotional expressions rather than avatar faces.

Intriguingly in this study, males were more accurate in identifying the emotion expressed on faces whereas women had shorter response times. While these differences did not correlate with neural firing on a trial-by-trial basis, the overall changes in neural firing suggest that the higher accuracy of males may be related to larger changes in neural firing which are present in the left amygdala of male subjects. We did not observe a specific neural firing rate correlate of the faster responses in females.

Several limitations of this study should be noted. Firstly, the stimulus images in this study were designed to portray, as opposed to evoke, emotions. While these images were not designed to evoke emotion, it has been shown repeatedly that the viewing of emotional faces evokes the presented emotion in the participant, particularly with regards to “strong” emotions including happiness and sadness (Hess and Blairy, 2001; Wild et al., 2001).

Secondly, due to the invasive nature of single-unit recording, participants could not be chosen at random. All subjects shared a common diagnosis of refractory epilepsy, and were thus neurologically distinct from the majority of the population. We are not aware, however, of any reports that epileptics process emotion in a fashion distinct from that of the general public, nor that it has been demonstrated that men and women with epilepsy have gender-linked differences in emotional responses unequal with those observed in healthy individuals.

What is the potential cause of these gender-based differences in neural responses to emotional faces? Given the limitations of this adventitious experimental design, we can speculate that these differences may reflect evolutionary differences accrued as a means of survival under respective selective pressures. Men have borne the brunt of intergroup conflict dating back to hunter-gatherer societies (Van Vugt, 2009), and behavioral vestiges of this pattern have been consistently shown to persist to modern day. Cross-culturally, male-on-male violence accounts for more than half of homicide crimes (Jason et al., 1983; Eckhardt and Pridemore, 2009; Häkkänen-Nyholm et al., 2009), while the vast majority of homicides that do involve female victims or perpetrators take place between family members or acquaintances (Kellermann and Mercy, 1992). Extending to the modern day, males near-exclusively bear the brunt of violence from strangers or outgroup individuals. Men may have evolved greater responsiveness to potential social cues in male expressions due to their higher likelihood of encountering direct physical threat from other males in the evolutionary landscape (McDonald and Navarrete, 2012) (women, by contrast, would have been more likely to face sexual threat, the results of which would not necessarily impair reproductive fitness). This hypothesis is supported by our finding that males are most responsive to neutral faces. Also supporting this hypothesis, a study by Hareli et al. (2009) found that neutral expressions in men, but not in women, were perceived as being more dominant than men expressing sadness or happiness, and thus sensitivity to this expression would enable male viewers to better avoid physical altercation.

Alternatively, the observed results may be due to differences in inter- vs. intra-sex male expression of empathy, an emotion whose neuronal network has been shown in imaging studies to include the amygdala (Völlm et al., 2006). Prior work demonstrating that men are better able to correctly identify the expressions of male faces attributed this result in part to greater activation of the amygdala, specifically with regard to its role in empathy (Schiffer et al., 2013). While this may account for the heightened firing rate in this structure in males following the presentation of male stimuli, it fails to account for the lack of a similarly heightened rate in females, as the latter have been shown to be the more empathetic sex across a myriad of tests utilizing a wide range of techniques (Christov-Moore et al., 2014).

In summary, our findings demonstrate emotional differences between men and women at the single neuron level, thereby illustrating the profound effect of gender on the human brain. This finding necessitates the inclusion of subject gender as a potential variable in single-unit recording studies, particularly those whose scopes include firing rates within the amygdala. Gender should also be a factor of interest in single-unit experiments which utilize emotional imagery or human expressions.

Author Contributions

MN analyzed data and created text and figures. PS designed and conducted experiments and created text and figures. KS performed patient surgeries. DT supervised patient safety and clinical compliance.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We would first like to thank the patients at the Barrow Neurological Institute who volunteered for these experiments. We also thank Drs. S. Goldinger and M. Papesh for the creation of the stimulus set and Dr. R. Adolphs for comments on the manuscript. Finally, we would like to thank E. Cabrales and Dr. A. Valdez for providing technical assistance. This research was funded by NIH Grant 1R21DC009871-0, the Barrow Neurological Foundation, and the Arizona Biomedical Research Commission #09084092.

Supplementary Material

The Supplementary Material for this article can be found online at: http://journal.frontiersin.org/article/10.3389/fnhum.2015.00499

SI Methods

Validation of Designations of Emotion

The intended emotion designations were validated in two perceptual experiments. Subjects were undergraduate volunteers from ASU with normal or corrected vision. (Epilepsy patients from the present study did not participate in validation testing.) In the first experiment (n = 25), subjects were shown all faces in random order and were asked to quickly classify each one as either “positive” or “negative” in expression, with no option to respond “neutral.” Variations in apparent race were included in the experiment but were orthogonal to the task. Positive faces were classified as positive in 93.2% of all trials, with a mean correct response time (RT) of 515 ms. Negative faces were classified as negative in 98.1% of all trials, with a mean correct RT of 499 ms. Neutral faces were classified as positive in 41.5% of trials, with a mean RT of 688 ms and as negative in the remaining 58.5% of trials, with a mean RT of 601 ms. This indicated that positive and negative expressions were quickly and accurately appreciated. Neutral faces generated more evenly divided responses, and all responses were far slower relative to non-ambiguous faces. There was, however, a bias toward interpreting neutral expressions as being slightly more negative.

Figure S1. Tufte-style box plot (21) of mean first response time for each experiment by affect and gender. Response times are grouped by affect and gender. The center dots show intragender means. Vertical lines extend from (where IQR = interquartile range, n = number of observations; equivalent to a 95% confidence interval for differences between medians, Chambers et al., 1983, p. 62) to the data point furthest from the median which is no more than ±(1.5 × IQR) beyond the first or third quartiles. Both males and females were slowest to identify neutral expressions, and the largest gap in response time was in response to negative faces.

Figure S2. Tufte-style box plot (21) of mean percentage correct of identification of affect of stimuli by experiment. Percentage correct is grouped by gender. The center dots show intragender means. Vertical lines extend from (where IQR = interquartile range, n = number of observations; equivalent to a 95% confidence interval for differences between medians, Chambers et al., 1983, p. 62) to the data point furthest from the median which is no more than ±(1.5 × IQR) beyond the first or third quartiles. Open circles show responses outside that range. Males had higher accuracy in the expression identification task than females across all categories of affect.
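The whisker rule described in the captions of Figures S1 and S2 (whiskers end at the data point furthest from the median that lies no more than 1.5 × IQR beyond the first or third quartile, with points outside that range drawn as open circles) can be sketched as follows. This is a minimal Python illustration, not the authors' plotting code; the function name `whisker_summary`, the choice of quartile method, and the sample response times are assumptions made purely for illustration and are not study data.

```python
from statistics import quantiles


def whisker_summary(values):
    """Return (lower_whisker, upper_whisker, outliers) under the 1.5*IQR rule.

    Assumes at least two observations. Quartiles use the "inclusive"
    method, which is an illustrative choice; other software may use a
    slightly different quartile definition.
    """
    data = sorted(values)
    q1, _median, q3 = quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence = q1 - 1.5 * iqr   # lowest value a whisker may reach
    high_fence = q3 + 1.5 * iqr  # highest value a whisker may reach
    inside = [v for v in data if low_fence <= v <= high_fence]
    outliers = [v for v in data if v < low_fence or v > high_fence]
    # Whiskers stop at the most extreme observations inside the fences.
    return inside[0], inside[-1], outliers


# Hypothetical response times in ms (illustrative values only).
rts = [480, 495, 500, 510, 515, 520, 530, 540, 900]
low, high, out = whisker_summary(rts)
print(low, high, out)
```

With these illustrative values the quartiles are 500 and 530 ms, so the fences fall at 455 and 575 ms; the whiskers end at 480 and 540 ms, and the 900 ms observation would be drawn as an open circle.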

References

Adolphs, R., Tranel, D., Damasio, H., and Damasio, A. (1994). Impaired recognition of emotion in facial expressions following bilateral damage to the human amygdala [see comments]. Nature 372, 669–672. doi: 10.1038/372669a0

Becker, B., Mihov, Y., Scheele, D., Kendrick, K. M., Feinstein, J. S., Matusch, A., et al. (2012). Fear processing and social networking in the absence of a functional amygdala. Biol. Psychiatry 72, 70–77. doi: 10.1016/j.biopsych.2011.11.024

Benjamini, Y., and Hochberg, Y. (1995). Controlling the false discovery rate: a practical and powerful approach to multiple testing. J. R. Stat. Soc. B 57, 289–300.

Boynton, G. M. (2011). Spikes, BOLD, attention, and awareness: a comparison of electrophysiological and fMRI signals in V1. J. Vis. 11:12. doi: 10.1167/11.5.12

Cardoso, M. M., Sirotin, Y. B., Lima, B., Glushenkova, E., and Das, A. (2012). The neuroimaging signal is a linear sum of neurally distinct stimulus- and task-related components. Nat. Neurosci. 15, 1298–1306. doi: 10.1038/nn.3170

Chambers, J. M., Cleveland, W. S., Kleiner, B., and Tukey, P. A. (1983). Graphical Methods for Data Analysis. California, CA: Wadsworth & Brooks.

Christov-Moore, L., Simpson, E. A., Coudé, G., Grigaityte, K., Iacoboni, M., and Ferrari, P. F. (2014). Empathy: gender effects in brain and behavior. Neurosci. Biobehav. Rev. 46(Pt 4), 604–627. doi: 10.1016/j.neubiorev.2014.09.001

Conner, C. R., Ellmore, T. M., Pieters, T. A., Disano, M. A., and Tandon, N. (2011). Variability of the relationship between electrophysiology and BOLD-fMRI across cortical regions in humans. J. Neurosci. 31, 12855–12865. doi: 10.1523/JNEUROSCI.1457-11.2011

Derntl, B., Habel, U., Robinson, S., Windischberger, C., Kryspin-Exner, I., Gur, R. C., et al. (2012). Culture but not gender modulates amygdala activation during explicit emotion recognition. BMC Neurosci. 13:54. doi: 10.1186/1471-2202-13-54

Derntl, B., Windischberger, C., Robinson, S., Lamplmayr, E., Kryspin-Exner, I., Gur, R. C., et al. (2008). Facial emotion recognition and amygdala activation are associated with menstrual cycle phase. Psychoneuroendocrinology 33, 1031–1040. doi: 10.1016/j.psyneuen.2008.04.014

Doi, H., Amamoto, T., Okishige, Y., Kato, M., and Shinohara, K. (2010). The own-sex effect in facial expression recognition. Neuroreport 21, 564–568. doi: 10.1097/WNR.0b013e328339b61a

Domes, G., Schulze, L., Böttger, M., Grossmann, A., Hauenstein, K., Wirtz, P. H., et al. (2010). The neural correlates of sex differences in emotional reactivity and emotion regulation. Hum. Brain Mapp. 31, 758–769. doi: 10.1002/hbm.20903

Dymond, A. M., Babb, T. L., Kaechele, L. E., and Crandall, P. H. (1972). Design considerations for the use of fine and ultrafine depth brain electrodes in man. Biomed. Sci. Instrum. 9, 1–5.

Eckhardt, K., and Pridemore, W. A. (2009). Differences in female and male involvement in lethal violence in Russia. J. Crim. Justice 37, 55–64. doi: 10.1016/j.jcrimjus.2008.12.009

Ekstrom, A. (2010). How and when the fMRI BOLD signal relates to underlying neural activity: the danger in dissociation. Brain Res. Rev. 62, 233–244. doi: 10.1016/j.brainresrev.2009.12.004

Fisher, R. A. (1922). On the interpretation of chi-squared from contingency tables, and the calculation of p. J. R. Stat. Soc. 85, 87–94. doi: 10.2307/2340521

Frewen, P. A., Dozois, D. J., Neufeld, R. W., Densmore, M., Stevens, T. K., and Lanius, R. A. (2011). Neuroimaging social emotional processing in women: fMRI study of script-driven imagery. Soc. Cogn. Affect. Neurosci. 6, 375–392. doi: 10.1093/scan/nsq047

Fried, I., MacDonald, K. A., and Wilson, C. L. (1997). Single neuron activity in human hippocampus and amygdala during recognition of faces and objects. Neuron 18, 753–765. doi: 10.1016/S0896-6273(00)80315-3

Fried, I., Wilson, C. L., Maidment, N. T., Engel, J. Jr., Behnke, E., Fields, T. A., et al. (1999). Cerebral microdialysis combined with single-neuron and electroencephalographic recording in neurosurgical patients. Technical note. J. Neurosurg. 91, 697–705. doi: 10.3171/jns.1999.91.4.0697

Fusar-Poli, P., Placentino, A., Carletti, F., Landi, P., Allen, P., Surguladze, S., et al. (2009). Functional atlas of emotional faces processing: a voxel-based meta-analysis of 105 functional magnetic resonance imaging studies. J. Psychiatry Neurosci. 34, 418–432.

Goldstein, J. M., Seidman, L. J., Horton, N. J., Makris, N., Kennedy, D. N., Caviness, V. S. Jr., et al. (2001). Normal sexual dimorphism of the adult human brain assessed by in vivo magnetic resonance imaging. Cereb. Cortex 11, 490–497. doi: 10.1093/cercor/11.6.490

Habel, U., Windischberger, C., Derntl, B., Robinson, S., Kryspin-Exner, I., Gur, R. C., et al. (2007). Amygdala activation and facial expressions: explicit emotion discrimination versus implicit emotion processing. Neuropsychologia 45, 2369–2377. doi: 10.1016/j.neuropsychologia.2007.01.023

Häkkänen-Nyholm, H., Putkonen, H., Lindberg, N., Holi, M., Rovamo, T., and Weizmann-Henelius, G. (2009). Gender differences in Finnish homicide offence characteristics. Forensic Sci. Int. 186, 75–80. doi: 10.1016/j.forsciint.2009.02.001

Hampson, E., van Anders, S., and Mullin, L. (2006). A female advantage in the recognition of emotional facial expression: test of an evolutionary hypothesis. Evol. Hum. Behav. 27, 401–416. doi: 10.1016/j.evolhumbehav.2006.05.002

Handwerker, D. A., and Bandettini, P. A. (2011). Simple explanations before complex theories: alternative interpretations of Sirotin and Das' observations. Neuroimage 55, 1419–1422. doi: 10.1016/j.neuroimage.2011.01.029

Hareli, S., Shomrat, N., and Hess, U. (2009). Emotional versus neutral expressions and perceptions of social dominance and submissiveness. Emotion 9, 378–384. doi: 10.1037/a0015958

Hess, U., and Blairy, S. (2001). Facial mimicry and emotional contagion to dynamic emotional facial expressions and their influence on decoding accuracy. Int. J. Psychophysiol. 40, 129–141. doi: 10.1016/S0167-8760(00)00161-6

Hill, D. N., Mehta, S. B., and Kleinfeld, D. (2011). Quality metrics to accompany spike sorting of extracellular signals. J. Neurosci. 31, 8699–8705. doi: 10.1523/JNEUROSCI.0971-11.2011

Huo, B. X., Smith, J. B., and Drew, P. J. (2014). Neurovascular coupling and decoupling in the cortex during voluntary locomotion. J. Neurosci. 34, 10975–10981. doi: 10.1523/JNEUROSCI.1369-14.2014

Singular Inversions. (2006). “FaceGen 3.1 Full Software Development Kit Documentation.” Available online at: www.facegen.com

Jason, J., Flock, M., and Tyler, C. W. Jr. (1983). Epidemiologic characteristics of primary homicides in the United States. Am. J. Epidemiol. 117, 419–428.

Kawasaki, H., Adolphs, R., Oya, H., Kovach, C., Damasio, H., Kaufman, O., et al. (2005). Analysis of single-unit responses to emotional scenes in human ventromedial prefrontal cortex. J. Cogn. Neurosci. 17, 1509–1518. doi: 10.1162/089892905774597182

Kellermann, A. L., and Mercy, J. A. (1992). Men, women, and murder: gender-specific differences in rates of fatal violence and victimization. J. Trauma 33, 1–5. doi: 10.1097/00005373-199207000-00001

Klein, S., Smolka, M. N., Wrase, J., Grusser, S. M., Mann, K., Braus, D. F., et al. (2003). The influence of gender and emotional valence of visual cues on FMRI activation in humans. Pharmacopsychiatry 36(Suppl. 3), S191–S194. doi: 10.1055/s-2003-45129

Kleinfeld, D., Blinder, P., Drew, P. J., Driscoll, J. D., Muller, A., Tsai, P. S., et al. (2011). A guide to delineate the logic of neurovascular signaling in the brain. Front. Neuroenergetics 3:1. doi: 10.3389/fnene.2011.00001

Kreiman, G., Koch, C., and Fried, I. (2000). Category-specific visual responses of single neurons in the human medial temporal lobe. Nat. Neurosci. 3, 946–953. doi: 10.1038/78868

Kuraoka, K., and Nakamura, K. (2007). Responses of single neurons in monkey amygdala to facial and vocal emotions. J. Neurophysiol. 97, 1379–1387. doi: 10.1152/jn.00464.2006

Lang, P. J., Bradley, M. M., and Cuthbert, B. N. (2005). “International Affective Picture System (IAPS),” in Technical Manual and Affective Ratings (Gainesville, FL).

Lima, B., Cardoso, M. M., Sirotin, Y. B., and Das, A. (2014). Stimulus-related neuroimaging in task-engaged subjects is best predicted by concurrent spiking. J. Neurosci. 34, 13878–13891. doi: 10.1523/JNEUROSCI.1595-14.2014

Martin, C. (2014). Contributions and complexities from the use of in vivo animal models to improve understanding of human neuroimaging signals. Front. Neurosci. 8:211. doi: 10.3389/fnins.2014.00211

McCullagh, P., and Nelder, J. A. (1989). Generalized Linear Models, 2nd Edn. (Chapman & Hall/CRC Monographs on Statistics & Applied Probability). Boca Raton, FL: Chapman and Hall/CRC.

McDonald, M. M., and Navarrete, C. D. (2012). Evolution and the psychology of intergroup conflict: the male warrior hypothesis. Philos. Trans. R. Soc. Lond. B Biol. Sci. 367, 670–679. doi: 10.1098/rstb.2011.0301

Mehta, A. D., Labar, D., Dean, A., Harden, C., Hosain, S., Pak, J., et al. (2005). Frameless stereotactic placement of depth electrodes in epilepsy surgery. J. Neurosurg. 102, 1040–1045. doi: 10.3171/jns.2005.102.6.1040

Moser, E., Derntl, B., Robinson, S., Fink, B., Gur, R. C., and Grammer, K. (2006). Amygdala activation at 3T in response to human and avatar facial expressions of emotions. J. Neurosci. Methods 161, 126–133. doi: 10.1016/j.jneumeth.2006.10.016

Nugent, B. M., and McCarthy, M. M. (2011). Epigenetic underpinnings of developmental sex differences in the brain. Neuroendocrinology 93, 150–158. doi: 10.1159/000325264

Ohrmann, P., Pedersen, A., Braun, M., Bauer, J., Kugel, H., Kersting, A., et al. (2010). Effect of gender on processing threat-related stimuli in patients with panic disorder: sex does matter. Depress Anxiety 27, 1034–1043. doi: 10.1002/da.20721

Perry, D., Walder, K., Hendler, T., and Shamay-Tsoory, S. G. (2013). The gender you are and the gender you like: sexual preference and empathic neural responses. Brain Res. 1534, 66–75. doi: 10.1016/j.brainres.2013.08.040

Proverbio, A. M., Riva, F., Martin, E., and Zani, S. (2012). Neural markers of opposite-sex bias in face processing. Front. Psychol. 1:169. doi: 10.3389/fpsyg.2010.00169

R Development Core Team. (2009). R: A Language and Environment for Statistical Computing. Vienna: R Foundation for Statistical Computing.

Schiffer, B., Pawliczek, C., Müller, B. W., Gizewski, E. R., and Walter, H. (2013). Why don't men understand women? Altered neural networks for reading the language of male and female eyes. PLoS ONE 8:e60278. doi: 10.1371/journal.pone.0060278

Sergerie, K., Chochol, C., and Armony, J. L. (2008). The role of the amygdala in emotional processing: a quantitative meta-analysis of functional neuroimaging studies. Neurosci. Biobehav. Rev. 32, 811–830. doi: 10.1016/j.neubiorev.2007.12.002

Sirotin, Y. B., and Das, A. (2009). Anticipatory haemodynamic signals in sensory cortex not predicted by local neuronal activity. Nature 457, 475–479. doi: 10.1038/nature07664

Spreckelmeyer, K. N., Rademacher, L., Paulus, F. M., and Gründer, G. (2013). Neural activation during anticipation of opposite-sex and same-sex faces in heterosexual men and women. Neuroimage 66, 223–231. doi: 10.1016/j.neuroimage.2012.10.068

Steinmetz, P. N. (2009). Alternate task inhibits single neuron category selective responses in the human hippocampus while preserving selectivity in the amygdala. J. Cogn. Neurosci. 21, 347–358. doi: 10.1162/jocn.2008.21017

Steinmetz, P. N., and Thorp, C. K. (2013). Testing for effects of different stimuli on neuronal firing relative to background activity. J. Neural Eng. 10:056019. doi: 10.1088/1741-2560/10/5/056019

Steinmetz, P. N., Cabrales, E., Wilson, M. S., Baker, C. P., Thorp, C. K., Treiman, D. M., et al. (2011). Neurons in the human hippocampus and amygdala respond to both low and high level image properties. J. Neurophysiol. 105, 2874–2884. doi: 10.1152/jn.00977.2010

Steinmetz, P. N., Wait, S. D., Lekovic, G. P., Rekate, H. L., and Kerrigan, J. F. (2013). Firing behavior and network activity of single neurons in human epileptic hypothalamic hamartoma. Front. Neurol. 4:210. doi: 10.3389/fneur.2013.00210

Stevens, J. S., and Hamann, S. (2012). Sex differences in brain activation to emotional stimuli: a meta-analysis of neuroimaging studies. Neuropsychologia 50, 1578–1593. doi: 10.1016/j.neuropsychologia.2012.03.011

Stuart, A., Ord, J. K., and Arnold, S. (1999). Kendall's Advanced Theory of Statistics. London: Arnold.

Truccolo, W., Eden, U. T., Fellows, M. R., Donoghue, J. P., and Brown, E. N. (2005). A point process framework for relating neural spiking activity to spiking history, neural ensemble, and extrinsic covariate effects. J. Neurophysiol. 93, 1074–1089. doi: 10.1152/jn.00697.2004

Uddin, M., Sipahi, L., and Koenen, K. C. (2013). Sex differences in DNA methylation may contribute to risk of PTSD and depression: a review of existing evidence. Depress Anxiety 30, 1151–1160. doi: 10.1002/da.22167

Valdez, A. B., Hickman, E. N., Treiman, D. M., Smith, K. A., and Steinmetz, P. N. (2013). A statistical method for predicting seizure onset zones from human single-neuron recordings. J. Neural Eng. 10:016001. doi: 10.1088/1741-2560/10/1/016001

Valdez, A. B., Papesh, M. H., Treiman, D. M., Smith, K. A., Goldinger, S. D., and Steinmetz, P. N. (2015). Distributed representation of visual objects by single neurons in the human brain. J. Neurosci. 35, 5180–5186. doi: 10.1523/JNEUROSCI.1958-14.2015

Van Vugt, M. (2009). Sex differences in intergroup competition, aggression, and warfare: the male warrior hypothesis. Ann. N.Y. Acad. Sci. 1167, 124–134. doi: 10.1111/j.1749-6632.2009.04539.x

Viskontas, I. V., Ekstrom, A. D., Wilson, C. L., and Fried, I. (2007). Characterizing interneuron and pyramidal cells in the human medial temporal lobe in vivo using extracellular recordings. Hippocampus 17, 49–57. doi: 10.1002/hipo.20241

Völlm, B. A., Taylor, A. N., Richardson, P., Corcoran, R., Stirling, J., McKie, S., et al. (2006). Neuronal correlates of theory of mind and empathy: a functional magnetic resonance imaging study in a nonverbal task. Neuroimage 29, 90–98.

Wild, B., Erb, M., and Bartels, M. (2001). Are emotions contagious? Evoked emotions while viewing emotionally expressive faces: quality, quantity, time course and gender differences. Psychiatry Res. 102, 109–124. doi: 10.1016/S0165-1781(01)00225-6

Wild, J., Prekopcsak, Z., Sieger, T., Novak, D., and Jech, R. (2012). Performance comparison of extracellular spike sorting algorithms for single-channel recordings. J. Neurosci. Methods 203, 369–376. doi: 10.1016/j.jneumeth.2011.10.013

Wixted, J. T., Squire, L. R., Jang, Y., Papesh, M. H., Goldinger, S. D., Kuhn, J. R., et al. (2014). Sparse and distributed coding of episodic memory in neurons of the human hippocampus. Proc. Natl. Acad. Sci. U.S.A. 111, 9621–9626. doi: 10.1073/pnas.1408365111

Keywords: gender differences, human single neuron, amygdala, emotional expression, facial expression

Citation: Newhoff M, Treiman DM, Smith KA and Steinmetz PN (2015) Gender differences in human single neuron responses to male emotional faces. Front. Hum. Neurosci. 9:499. doi: 10.3389/fnhum.2015.00499

Received: 12 May 2015; Accepted: 28 August 2015;

Published: 14 September 2015.

Edited by:

John J. Foxe, Albert Einstein College of Medicine, USA

Reviewed by:

Bonnie J. Nagel, Oregon Health & Science University, USA
Arun Bokde, Trinity College Dublin, Ireland

Copyright © 2015 Newhoff, Treiman, Smith and Steinmetz. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) or licensor are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Peter N. Steinmetz, Nakamoto Brain Research Institute, 7650 S. McClintock Drive, Ste. 103-432, Tempe, AZ 85284, USA, peternsteinmetz@steinmetz.org
