- 1Department of Software Engineering and Theoretical Computer Science, Technische Universität Berlin, Berlin, Germany

- 2Bernstein Center for Computational Neuroscience Berlin, Berlin, Germany

Neural mass signals from in-vivo recordings often show oscillations with frequencies ranging from <1 to 100 Hz. Fast rhythmic activity in the beta and gamma range can be generated by network-based mechanisms such as recurrent synaptic excitation-inhibition loops. Slower oscillations might instead depend on neuronal adaptation currents whose timescales range from tens of milliseconds to seconds. Here we investigate how the dynamics of such adaptation currents contribute to spike rate oscillations and resonance properties in recurrent networks of excitatory and inhibitory neurons. Based on a network of sparsely coupled spiking model neurons with two types of adaptation current and conductance-based synapses with heterogeneous strengths and delays we use a mean-field approach to analyze oscillatory network activity. For constant external input, we find that spike-triggered adaptation currents provide a mechanism to generate slow oscillations over a wide range of adaptation timescales as long as recurrent synaptic excitation is sufficiently strong. Faster rhythms occur when recurrent inhibition is slower than excitation and oscillation frequency increases with the strength of inhibition. Adaptation facilitates such network-based oscillations for fast synaptic inhibition and leads to decreased frequencies. For oscillatory external input, adaptation currents amplify a narrow band of frequencies and cause phase advances for low frequencies in addition to phase delays at higher frequencies. Our results therefore identify the different key roles of neuronal adaptation dynamics for rhythmogenesis and selective signal propagation in recurrent networks.

Introduction

A prominent characteristic of cortical activity is its rhythmicity as shown by electroencephalography or the local field potential. Dominant oscillation frequencies in these signals range from <1 to 100 Hz and reflect synchronous activity of populations of neurons. Such oscillations are linked to behavioral states (Wang, 2010) and involved in a variety of cognitive functions (Engel et al., 2001; Fries, 2001; Melloni et al., 2007; Ghazanfar et al., 2008; Wang, 2010) as well as pathological conditions (Hammond et al., 2007; Zijlmans et al., 2009; Uhlhaas and Singer, 2010). It is therefore important to understand the mechanisms of oscillations in neuronal networks, how they are initiated and terminated, and how their frequency is determined.

Fast rhythmic activity in the beta and gamma band (>20 Hz) can be generated by network-based mechanisms, such as synaptic excitation-inhibition loops or feedback inhibition alone (Isaacson and Scanziani, 2011). In these scenarios the oscillation frequency is largely determined by the inhibitory decay time constant (Brunel and Wang, 2003; Tiesinga and Sejnowski, 2009). Low-frequency oscillations, on the other hand, could depend on slow transmembrane outward currents (Compte et al., 2003; Gigante et al., 2007b; Destexhe, 2009), which are mediated by low-threshold voltage-dependent muscarinic (M) K+ channels and by high-threshold calcium-gated afterhyperpolarization (AHP) K+ channels (Brown and Adams, 1980; Connors et al., 1982; Stocker, 2004). These currents cause spike frequency adaptation and are typically more pronounced in cortical regular spiking pyramidal (excitatory) neurons than in fast spiking (inhibitory) interneurons (La Camera et al., 2006). Both the M-type and the AHP-type K+ currents are susceptible to cholinergic modulation (McCormick, 1992). Their kinetic time constants range from milliseconds to seconds (Abel et al., 2004; Manuel et al., 2005) and can be pharmacologically manipulated (Pedarzani et al., 2001).

Here we study the interplay of the dynamics of such adaptation currents with synaptic excitation and inhibition in recurrent networks of excitatory and inhibitory neurons. Specifically, we ask (1) how adaptation can generate slow oscillations, (2) how it modulates faster rhythms based on synaptic interaction, and (3) how adaptation affects resonance properties of the network.

In-vivo recordings from behaving animals have revealed that even when the population activity oscillates, the spike trains of the constituent neurons are rather irregular and display Poisson-like characteristics (Fries, 2001; Wang, 2010). This stochasticity in neuronal responses allows us to derive a mean-field model from a recurrent network of adaptive spiking model neurons coupled through conductance-based synapses with heterogeneous strengths and delays. Our approach is based on the Fokker–Planck (FP) formalism (Brunel, 2000; Deco et al., 2008) and efficiently describes the activity of large networks where the features of the spiking neurons (i.e., the model parameters) are retained. Using this method we analyze network responses to constant as well as rhythmic external input. In particular we describe asynchronous irregular states with constant steady-state activity as well as oscillatory states and their properties. We validate our mean-field results qualitatively by large-scale network simulations.

Methods

We first describe our network model containing two populations (excitatory and inhibitory) of adaptive spiking neurons with delayed conductance-based synaptic coupling. Based on that model we then derive mean-field model equations and solve them numerically to obtain distributions of the membrane potentials and instantaneous spike rates.

Network Model

We consider a network of N = Nℰ + Nℐ adaptive exponential integrate-and-fire neurons (aEIF) proposed by Brette and Gerstner (2005), where Nℰ and Nℐ are the numbers of excitatory and inhibitory neurons, respectively. The dynamics of the i-th neuron of population α ∈ {ℰ, ℐ} is described by

with reset condition

The first Equation (1) is for the membrane potential Vαi, where the capacitive current through the membrane with capacitance C equals the sum of ionic currents Iion, the adaptation current wαi and the synaptic current Iαsyn, i. The ionic currents are given by

where the first term on the right-hand side describes an Ohmic leak current with conductance gL and reversal potential EL. The exponential term with threshold slope factor ΔT and threshold potential VT approximates the Na+-current which is responsible for the generation of spikes, assuming that the activation of Na+-channels is instantaneous and neglecting their inactivation (Fourcaud-Trocme et al., 2003). Equation (2) governs the dynamics of the adaptation current wαi, where τw denotes the adaptation time constant and a quantifies a conductance that mediates subthreshold adaptation. A spike is said to occur at the time when Vαi diverges to infinity, but in practice a finite “cutoff” value Vcut is chosen. When Vαi crosses Vcut from below, Vαi is set to the reset potential Vr and wαi is incremented by b, cf. condition (3). In this way spike-triggered adaptation is included in the model. Immediately after the reset, Vαi and wαi are clamped for a refractory period Tref.
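For reference, the dynamics described in this paragraph can be written out as follows. This is a reconstruction from the definitions in the text and the standard aEIF formulation of Brette and Gerstner (2005), with the numbering chosen to match the equation references used here. The sign convention, in which the adaptation current w enters Equation (1) as an outward (negative) current, is an assumption taken from that formulation.

```latex
\begin{align}
C \frac{dV_i^{\alpha}}{dt} &= I_{\mathrm{ion}}(V_i^{\alpha}) - w_i^{\alpha} + I_{\mathrm{syn},i}^{\alpha}, \tag{1}\\
\tau_w \frac{dw_i^{\alpha}}{dt} &= a\,(V_i^{\alpha} - E_L) - w_i^{\alpha}, \tag{2}\\
V_i^{\alpha} \ge V_{\mathrm{cut}} \;&\Rightarrow\; V_i^{\alpha} \to V_r, \quad w_i^{\alpha} \to w_i^{\alpha} + b, \tag{3}\\
I_{\mathrm{ion}}(V) &= -g_L\,(V - E_L) + g_L\,\Delta_T \exp\!\left(\frac{V - V_T}{\Delta_T}\right). \tag{4}
\end{align}
```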

The aEIF model has been shown to reproduce a broad range of subthreshold dynamics (Touboul and Brette, 2008) and spike patterns of cortical neurons (Naud et al., 2008) and can well predict their spike times (Jolivet et al., 2008) and post-stimulus time histograms (Pospischil et al., 2011). Importantly, the subthreshold and spike-triggered adaptation components of this model have been shown to capture the effects of the M and AHP currents in a detailed biophysical neuron model, respectively (Ladenbauer et al., 2012).
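To illustrate the single-neuron dynamics, the following minimal sketch integrates one aEIF neuron with the forward Euler method. It is not the simulation code used for the results. The constant input current `I_ext` is a hypothetical stand-in for the synaptic input, and the default parameter values are taken from the ranges given in this section (units: mV, ms, pF, nS, pA).

```python
import math

def simulate_aeif(I_ext=300.0, t_max=1000.0, dt=0.05,
                  C=200.0, g_L=10.0, E_L=-70.0, Delta_T=1.0, V_T=-50.0,
                  V_r=-70.0, V_cut=-40.0, T_ref=1.4,
                  tau_w=200.0, a=0.0, b=50.0):
    """Forward-Euler integration of a single aEIF neuron.

    I_ext is a hypothetical constant input current (pA) that replaces
    the synaptic current of the network model.
    Returns the list of spike times in ms.
    """
    V, w = E_L, 0.0
    refractory_until = -1.0
    spikes = []
    for step in range(int(round(t_max / dt))):
        t = step * dt
        if t < refractory_until:
            continue  # V and w stay clamped during the refractory period
        # Leak current plus exponential spike-initiation current
        I_ion = -g_L * (V - E_L) + g_L * Delta_T * math.exp((V - V_T) / Delta_T)
        dV = (I_ion - w + I_ext) / C
        dw = (a * (V - E_L) - w) / tau_w
        V += dt * dV
        w += dt * dw
        if V >= V_cut:          # spike: reset V, increment w by b
            spikes.append(t + dt)
            V = V_r
            w += b
            refractory_until = t + dt + T_ref
    return spikes

spike_times = simulate_aeif()
isis = [t2 - t1 for t1, t2 in zip(spike_times, spike_times[1:])]
print(len(spike_times), isis[0], isis[-1])
```

With spike-triggered adaptation (b > 0) the inter-spike intervals lengthen over the course of the stimulus, which is the spike frequency adaptation the model is meant to capture.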

Neuron i of population α receives total synaptic current

which is the superposition of synaptic inputs Iα, extij from Kext external excitatory neurons, Iα, ℰij from Kℰ excitatory neurons of the network and Iα, ℐij from Kℐ inhibitory neurons of the network, where j is the index of the respective presynaptic neuron. The synaptic current Iα, γij caused by neuron j of population γ ∈ {ext, ℰ, ℐ} is modeled using delta functions,

where β ∈ {ℰ, ℐ} denotes the presynaptic population. Jα, γij are dimensionless synaptic efficacies drawn from a Gaussian distribution with mean Jα, γ and standard deviation ΔJα, γ. Here we consider that Jα, γ ≡ Jγ and ΔJα, γ ≡ ΔJγ depend only on the presynaptic population γ. tkj is the k-th spike time of neuron j from the respective population. Eℰ and Eℐ denote the excitatory and inhibitory reversal potentials, respectively. dα, βij is the synaptic delay, sampled using a bi-exponential probability density

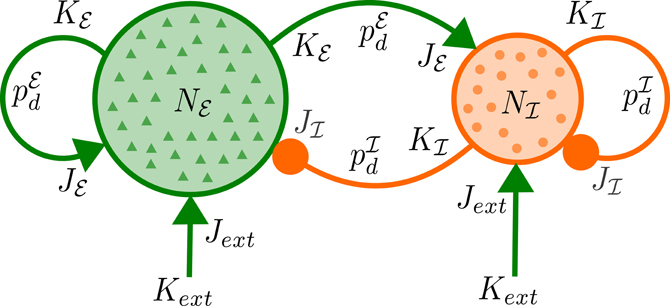

for positive delays d, where d0 is the minimal delay and τr, τd are the rise and decay time constants, for each pair of populations. In the model we use two different delay distributions pℰd and pℐd which, like the synaptic weights, depend only on the presynaptic population. For a schematic diagram of the network, see Figure 1.
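Delays with a bi-exponential density can be drawn without rejection sampling. The sketch below assumes that the density has the normalized difference-of-exponentials form p(d) = [exp(−(d − d0)/τd) − exp(−(d − d0)/τr)] / (τd − τr) for d ≥ d0. That form is an assumption about the density given above, not a quotation of it. Under this assumption a delay is d0 plus the sum of two independent exponential random variables with means τr and τd. The function name `sample_delays` is hypothetical.

```python
import numpy as np

def sample_delays(n, d0, tau_r, tau_d, rng):
    """Draw n synaptic delays (ms) from an assumed bi-exponential density.

    The sum of two independent exponentials with means tau_r and tau_d has
    the density (exp(-x/tau_d) - exp(-x/tau_r)) / (tau_d - tau_r), x >= 0.
    """
    return d0 + rng.exponential(tau_r, size=n) + rng.exponential(tau_d, size=n)

rng = np.random.default_rng(1)
delays = sample_delays(200_000, d0=1.0, tau_r=1.5, tau_d=2.0, rng=rng)
print(delays.min(), delays.mean())  # mean should be close to d0 + tau_r + tau_d
```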

Figure 1. Network architecture. Each of Nℰ excitatory and Nℐ inhibitory neurons receives excitatory input from Kext external neurons with mean synaptic strength Jext as well as synaptic input from Kℰ (Kℐ) excitatory (inhibitory) neurons of the network with mean strength Jℰ (Jℐ) and delays distributed according to pℰd (pℐd).

We assume the neurons from the external population generate spike times according to Poisson processes with rates rαext(t). The spike rate of each population α ∈ {ℰ, ℐ} at time t is given by the average number of spikes of neurons from the corresponding population in the interval [t, t + Δt],

In the mean-field limit N → ∞, Δt → 0 we obtain a continuous population spike rate rα(t) (see below).
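In a simulation with finite N and Δt, the population spike rate defined above is estimated by counting the spikes of all neurons of a population in each time bin and dividing by the number of neurons and the bin width. A minimal sketch, with the hypothetical helper name `population_rate` and spike times pooled over neurons:

```python
import numpy as np

def population_rate(spike_times, n_neurons, t_start, t_stop, dt):
    """Population spike rate (Hz) from pooled spike times (s).

    Counts the spikes of all neurons in bins [t, t + dt) and divides
    by n_neurons * dt.
    """
    edges = np.arange(t_start, t_stop + 0.5 * dt, dt)
    counts, _ = np.histogram(spike_times, bins=edges)
    return edges[:-1], counts / (n_neurons * dt)

# Toy example: 2 neurons, 4 spikes within 3 ms, 1 ms bins
pooled = np.array([0.0005, 0.0015, 0.0016, 0.0025])
t_bins, rate = population_rate(pooled, n_neurons=2, t_start=0.0, t_stop=0.003, dt=0.001)
print(rate)
```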

We selected the following parameters for the neuron model: C = 200 pF, gL = 10 nS, EL = −70 mV, ΔT = 1 mV, VT = −50 mV, Vr = −70 mV, Vcut = −40 mV, and Tref = 1.4 ms (Badel et al., 2008; Destexhe, 2009). For excitatory neurons the adaptation parameters were varied within reasonable ranges: τw ∈ [5, 1000] ms, a ∈ [0, 10] nS, b ∈ [0, 50] pA. For inhibitory neurons adaptation was neglected (a = b = 0) since it was found to be weak in fast spiking interneurons compared to pyramidal cells (La Camera et al., 2006).

The network parameter values were Nℰ = 40,000, Nℐ = 10,000, Kext = 1600, Kℰ = 1600, Kℐ = 400, Eℰ = 0 mV, Eℐ = −80 mV, Jext = 0.003, Jℰ = 0.003, and ΔJγ = 0.1Jγ with γ ∈ {ext, ℰ, ℐ} (Brunel and Wang, 2003). To adjust the balance of recurrent synaptic excitation and inhibition we introduce the parameter

which is the ratio of total charges induced at rest (Kumar et al., 2008). g determines Jℐ and thus ΔJℐ for fixed Jℰ and was varied in [0.8, 4] which yields a physiological range of inhibitory postsynaptic potential amplitudes (Tamas et al., 1997). Note that the value of g that corresponds to balanced mean recurrent excitatory and inhibitory synaptic currents depends on the mean membrane potential for each population. The effect of a spike of presynaptic neuron j on neuron i is mediated by a delayed instantaneous increment or decrement of the postsynaptic membrane potential, cf. Equations (1), (5), and (7). This implies that dα, βij reflects the conduction delay as well as delays in the synaptic kinetics. We therefore chose the parameter values of pℰd and pℐd such that conduction delays as well as typical time courses of excitatory AMPA and inhibitory GABAA synaptic receptors are taken into account. The values we selected were d0 = 1 ms, τℰr ∈ [1.25, 1.5] ms, τℰd ∈ [1.5, 2] ms, τℐr ∈ [0.55, 1.25] ms, and τℐd ∈ [1.5, 5] ms. The input rate of the excitatory population rℰext was varied in [1, 12.5] Hz. rℐext was chosen such that rℰ = rℐ in case of uncoupled populations of neurons, i.e., Jℰ = Jℐ = 0.
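As a worked example of how g fixes the inhibitory efficacy, the sketch below assumes that the ratio of total charges at rest is g = Kℐ Jℐ |Eℐ − EL| / (Kℰ Jℰ |Eℰ − EL|). This explicit form is an assumption consistent with the verbal definition above. The function name `inhibitory_efficacy` is hypothetical, and the default values are the network parameters listed in this section.

```python
def inhibitory_efficacy(g, J_E=0.003, K_E=1600, K_I=400,
                        E_E=0.0, E_I=-80.0, E_L=-70.0):
    """Mean inhibitory efficacy J_I implied by the balance parameter g.

    Assumes g = K_I * J_I * |E_I - E_L| / (K_E * J_E * |E_E - E_L|).
    """
    return g * K_E * J_E * abs(E_E - E_L) / (K_I * abs(E_I - E_L))

for g in (0.8, 1.0, 4.0):
    J_I = inhibitory_efficacy(g)
    ipsp_at_rest = J_I * abs(-80.0 - (-70.0))  # mV, voltage jump at V = E_L
    print(g, J_I, ipsp_at_rest)
```

Under this assumption, g = 1 gives Jℐ = 0.084, which corresponds to an inhibitory voltage jump of 0.84 mV at rest.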

Mean-Field Model

We reduce the two-population network of aEIF neurons to the mean-field model in three steps. First, we replace the synaptic current fluctuations by a Gaussian white noise process via the diffusion approximation. Next, we take a mean-field limit to formulate the stochastic network model in terms of two coupled deterministic scalar partial differential equations (PDE). Finally, to allow for efficient numerical computation we reduce the number of variables in these equations using an adiabatic approximation.

Diffusion approximation

We approximate the total synaptic current Iαsyn, i of Equation (5) by its mean plus a fluctuating Gaussian part, which is justified by the following physiologically plausible assumptions: (1) The number of synaptic inputs to a neuron is large, i.e., Kext, Kℰ, Kℐ ≫ 1 (Destexhe et al., 2003) and (2) the postsynaptic potential amplitudes elicited by individual presynaptic spikes are small, i.e., Jext|Eℰ − V|, Jℰ|Eℰ − V|, Jℐ|Eℐ − V| ≪ Vcut − Vr (Williams and Stuart, 2002). We further assume that (3) the network connectivity is random and sparse, i.e., Kℰ, Kℐ ≪ N, and that (4) presynaptic spike times are represented by Poisson processes which are homogeneous in each small time interval. The total synaptic current can then be written as (Brunel, 2000; Nykamp and Tranchina, 2000; Renart et al., 2004; Richardson, 2004; Gigante et al., 2007b)

where μα, i and σα, i are the infinitesimal mean and standard deviation of Iαsyn, i, respectively, and ηi is a Gaussian white noise process with δ-autocorrelation. The infinitesimal mean is given by

with

where 〈·〉 denotes the expectation operator. The infinitesimal variance is

with

where β ∈ {ℰ, ℐ} and * denotes convolution. In Equations (13), (14), (16), and (17) we have used that the presynaptic Poisson processes, the synaptic weights and delays are independent.
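The core of the diffusion approximation can be checked numerically in a simplified setting. For K independent Poisson inputs with rate r and fixed efficacies Jj, the summed input over a window of length T has mean r T Σj Jj and variance r T Σj Jj² (Campbell's theorem). The sketch below verifies this. It deliberately omits the voltage-dependent driving force and the delay distributions of the full model, and the values of `r`, `T` and `n_windows` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
K, J, dJ = 400, 0.003, 0.0003   # in-degree, mean and s.d. of the efficacies
r, T = 5.0, 0.01                # presynaptic rate (Hz), window length (s)
n_windows = 5000

weights = rng.normal(J, dJ, size=K)                # one fixed efficacy per synapse
counts = rng.poisson(r * T, size=(n_windows, K))   # spike counts per window and synapse
total = counts @ weights                           # summed input per window

mean_pred = r * T * weights.sum()
var_pred = r * T * (weights ** 2).sum()
print(total.mean(), mean_pred)
print(total.var(), var_pred)
```

For large K the two sums approach K J and K (J² + ΔJ²), so that mean and variance of the input depend only on the presynaptic rate and the statistics of the efficacies.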

Mean-field limit

We analyze networks of sparsely coupled neurons, i.e., the probability for a connection between any pair of neurons is low, cf. assumption (3) above. For large N correlations between the fluctuations of synaptic currents of different neurons become negligible, i.e., 〈 ηi(t) ηj(t) 〉 = 0 for i ≠ j. In the mean-field limit N → ∞ the network model Equations (1)–(4), Equations (11)–(17) can be described by two FP equations—one for each population α—which are delay-coupled by the population spike rates rℰ and rℐ,

with

pα(V, w, t) is the probability density to find a neuron of population α in the state (V, w) at time t. SVα(V, w, t) and Swα(V, w, t) are the probability fluxes in positive V and w direction, respectively. Note that we used the Stratonovich interpretation of the underlying stochastic equations (Risken, 1996; Richardson, 2004). To account for the reset condition (3) the flux through the cutoff voltage Vcut at w is re-injected after the refractory period Tref at Vr, w + b, i.e.,

This implies that in general pα is not differentiable at the line V = Vr. The boundary conditions are reflecting for w → ±∞, V → −∞ and absorbing for V = Vcut,

The spike rate of population α is given by the integral of the cutoff fluxes,

At any timepoint t the histogram of the membrane potentials of neurons in population α can be seen as a sample drawn from the probability density pα(V, t) which is governed by the FP equation.

Adiabatic approximation

Solving the 2 + 1 dimensional PDE system, Equations (18)–(20), with the corresponding reset and boundary conditions (21)–(24) numerically is possible but computationally demanding. We therefore reduce the dimensionality of the FP system by assuming that the timescales of membrane voltage and adaptation current dynamics are separable. This is justified by the observation that the dynamics of neuronal adaptation is significantly slower than the other timescales in the model system, such as the membrane time constant and the average inter-spike interval (Womble and Moises, 1992; Stocker, 2004). Under this assumption, the adaptation current of each neuron can be seen as an efficient integrator that filters the fluctuations in the neuronal activity. We approximate wαi(t) in Equation (2) by its population average wα(t), which evolves according to

where 〈·〉p denotes the average over the density p (Brunel et al., 2003; Gigante et al., 2007b). The probability density pα(V, t) then satisfies the 1 + 1 dimensional FP equation

where again SVα is the probability flux defined in Equation (19) and w : = wα(t) appears as a system parameter. The reset condition is

and the boundary conditions (23)–(24) become

The population spike rates are given by the corresponding fluxes through the cutoff voltage,

Note that the adiabatic approximation described above could be applied repeatedly for additional slow variables.
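The evolution equation for the mean adaptation current follows from averaging Equation (2) over the population and replacing the spike-triggered increments b by their expected rate of occurrence b rα(t). A sketch of the resulting equation, reconstructed from these definitions and neglecting the clamping of w during the refractory period (an assumption), is:

```latex
\tau_w \frac{dw_{\alpha}}{dt}
  = a \left( \langle V \rangle_{p_{\alpha}} - E_L \right) - w_{\alpha}
  + \tau_w \, b \, r_{\alpha}(t),
\qquad
\langle V \rangle_{p_{\alpha}} = \int V \, p_{\alpha}(V, t) \, dV .
```

For constant rα and a = 0 this gives the steady-state value wα = τw b rα, which makes explicit that the spike-triggered component acts as a rate-dependent negative feedback whose gain grows with τw and b.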

Numerical Solution

We solved the reduced FP Equation (27) subject to conditions (28)–(30) and mean adaptation current dynamics (Equation 26) forward in time until either steady states r∞ℰ, r∞ℐ with r∞α := limt → ∞ rα(t) or stable oscillatory states were reached. The probability densities pℰ, pℐ were initialized using normalized Gaussians with mean 0.5 · (Vr + VT) and standard deviation 0.2 · (VT − Vr). We applied a first-order finite volume method on a finite and non-uniform grid V0 < V1 < ··· < VNV using upwind fluxes to stabilize the numerical solution (LeVeque, 2002). Time was discretized using the implicit Euler method on an equidistant grid, i.e., tn = nΔt with a constant time step Δt. The resulting linear systems of equations were solved with a preconditioned Krylov subspace method in each time step. Specifically, BiCGSTAB (van der Vorst, 1992) was used in combination with an incomplete LU decomposition preconditioner (Saad, 2003) that strongly improved the convergence speed.

wℰ was initialized with values wℰ(0) ∈ [0, 500] pA (and wℐ ≡ 0). The other parameters were , minm ΔVm = 1 μV with ΔVm := Vm+1 − Vm, V0 := −100 mV, VNV = Vcut and NV = 256.
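The structure of the scheme described above (upwind finite volumes, implicit Euler, BiCGSTAB with an incomplete LU preconditioner) can be sketched with SciPy on a toy problem. The example is not the solver used here. It assumes a linear drift toward `mu`, a constant diffusion coefficient `D`, a uniform grid and zero-flux boundaries, and it omits the reset, the exponential term, the coupling between populations and the adaptation dynamics. All parameter values are hypothetical.

```python
import numpy as np
from scipy.sparse import diags, identity
from scipy.sparse.linalg import LinearOperator, bicgstab, spilu

# Toy problem: dp/dt = -dS/dV with S = -(V - mu)/tau * p - D * dp/dV
mu, tau, D = -60.0, 20.0, 0.45            # mV, ms, mV^2/ms
n_cells, V_lo, V_hi = 256, -100.0, -40.0
h = (V_hi - V_lo) / n_cells
centers = V_lo + h * (np.arange(n_cells) + 0.5)
faces = V_lo + h * np.arange(1, n_cells)  # interior cell interfaces

# Upwind flux at each interface: S = c_left * p_left + c_right * p_right
drift = -(faces - mu) / tau
c_left = np.maximum(drift, 0.0) + D / h
c_right = np.minimum(drift, 0.0) - D / h

# Finite volume operator L with dp/dt = L p; boundary fluxes are zero
main = np.zeros(n_cells)
main[:-1] -= c_left / h
main[1:] += c_right / h
L = diags([c_left / h, main, -c_right / h], offsets=[-1, 0, 1], format="csc")

# Implicit Euler: (I - dt * L) p_new = p_old, solved by preconditioned BiCGSTAB
dt = 0.1
A = (identity(n_cells, format="csc") - dt * L).tocsc()
ilu = spilu(A)
M = LinearOperator(A.shape, matvec=ilu.solve)

p = np.exp(-0.5 * ((centers + 70.0) / 2.0) ** 2)
p /= p.sum() * h                          # normalize the initial density
for _ in range(2000):
    p, info = bicgstab(A, p, M=M)
    if info != 0:
        raise RuntimeError("BiCGSTAB did not converge")

mass = p.sum() * h
mean_V = (centers * p).sum() * h
print(mass, mean_V)
```

Because each interface flux is subtracted from one cell and added to its neighbor, the scheme conserves probability mass up to the solver tolerance, and the implicit time step remains stable for step sizes that would be prohibitive for an explicit scheme.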

We complemented the mean-field results with numerical simulations of the network model Equations (1)–(4) using a Runge–Kutta second order method implemented in Brian 1.4 (Goodman and Brette, 2009) with a time step of 50 μs.

In case of stable periodic population spike rates the oscillation frequency was determined by the dominant frequency of the Fourier spectrum of rℰ over the last 2 s of runtime.
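The frequency estimate described above can be sketched as follows, assuming the rate is sampled on an equidistant time grid. The function name `dominant_frequency` is hypothetical. The mean is removed before the transform so that the zero-frequency component does not dominate the spectrum.

```python
import numpy as np

def dominant_frequency(rate, dt, window=2.0):
    """Dominant frequency (Hz) of a rate trace over its last `window` seconds.

    rate: samples of the population rate, dt: sampling interval (s).
    """
    n = int(round(window / dt))
    x = np.asarray(rate, dtype=float)[-n:]
    x = x - x.mean()                       # remove the DC component
    spectrum = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(len(x), d=dt)
    return freqs[np.argmax(spectrum)]

# Toy trace: 7 Hz oscillation around a baseline of 10 Hz
dt = 0.001
t = np.arange(0.0, 5.0, dt)
r_E = 10.0 + 3.0 * np.sin(2.0 * np.pi * 7.0 * t)
f_dom = dominant_frequency(r_E, dt)
print(f_dom)
```

With a 2 s window the frequency resolution of this estimate is 0.5 Hz.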

Results

Adaptation Mediates Oscillations

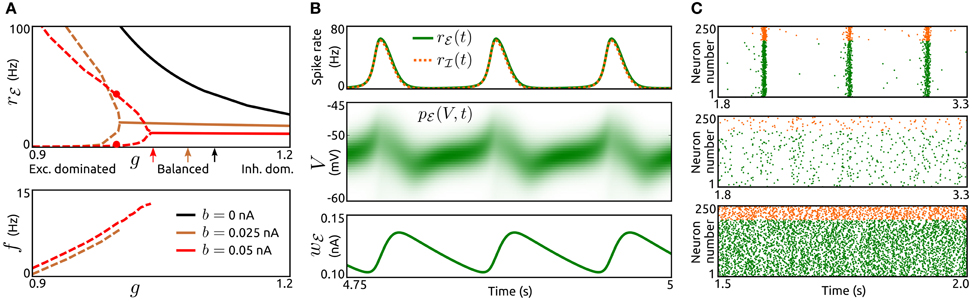

To examine how the interplay of adaptation and recurrent synaptic input shapes network dynamics, we vary the type, strength and timescale of adaptation (parameters a, b, and τw) for excitatory neurons as well as the strength of synaptic inhibition (parameter g) across networks. Adaptation currents are disregarded for inhibitory neurons, which is supported by experimental observations (see the Methods section). We consider constant rates rℰext, rℐext for the external Poisson inputs and identical delay distributions pℰd ≡ pℐd. First, we examine steady-state spike rates, oscillation amplitudes and frequencies for networks with different values of spike-triggered adaptation b and inhibition strength g, see Figure 2A. All networks without adaptation (a = b = 0) settle into asynchronous states with constant population rates that decrease with increasing g. For networks with increased b, slow oscillatory states become stable if recurrent excitation is sufficiently strong. The larger b is, the less recurrent excitation is necessary for sustained oscillations. Amplitude and period of the oscillatory rate decrease with an increase of b and g, respectively. Thus, in networks where recurrent synaptic excitation dominates inhibition at least slightly, spike-triggered adaptation b generates spike rate oscillations. The dynamics of an example network are shown in Figure 2B. The time courses of the population spike rates rℰ, rℐ, the membrane potential probability densities pℰ, pℐ and the adaptation current wℰ display periodic bursts of population activity. To validate these mean-field findings, the activity of simulated large networks of spiking neurons is shown in Figure 2C. The raster plots reveal population bursts when b is increased and g is small. An asynchronous state with low population activity occurs if g is increased. If in addition adaptation is removed (a = b = 0), the network settles into an asynchronous state with increased spike rates.

Figure 2. Population bursts caused by spike-triggered adaptation. (A) Top: Spike rate rℰ of the excitatory population as a function of the strength of inhibition g for networks without spike-triggered adaptation (b = 0, black) and with increased levels of b (0.025 nA, brown and 0.05 nA, red). In case of stable oscillatory states the maxima and minima of the periodic rℰ are shown by dashed lines. Solid lines represent asynchronous states. Arrows indicate balance of recurrent excitation and inhibition for both populations. Bottom: Corresponding oscillation frequencies f. τw = 200 ms, a = 0, and rℰext = 6.25 Hz. The parameter values for both delay distributions pℰd, pℐd were τr = 1.5 ms and τd = 2 ms. For other model parameters see the section Methods. (B), Top: Time-dependent spike rates rℰ(t) (green) and rℐ(t) (orange, dashed) for the parameter values b = 0.05 nA and g = 1, as indicated in (A) by red dots. Center: Corresponding membrane potential density pℰ(V, t). Bottom: Corresponding mean adaptation current wℰ(t). (C): Raster plots of simulated networks of N = 50,000 aEIF neurons for b = 0.05 nA, g = 0.85 (top), b = 0.05 nA, g = 1.05 (center) and b = 0, g = 1 (bottom). The spike times of 200 excitatory neurons and 50 inhibitory neurons, all randomly selected, are shown by green and orange dots, respectively. τw = 200 ms, a = 0, and rℰext = 3.75 Hz. Other parameter values as in (A).

The mechanism that generates these oscillations is a loop of recurrent excitation and the build-up and decay of the adaptation current, as indicated in Figure 2B. A low level of population activity is initiated by the external input rℰext and recurrent synaptic excitation boosts the activity, thereby increasing the adaptation current wℰ through b in a spike rate dependent way. The adaptation current in turn acts as a negative feedback which eventually outweighs the recurrent excitation. The population activity then drops rapidly and the adaptation current decays slowly. Upon recovery from the adaptation current the cycle starts again.

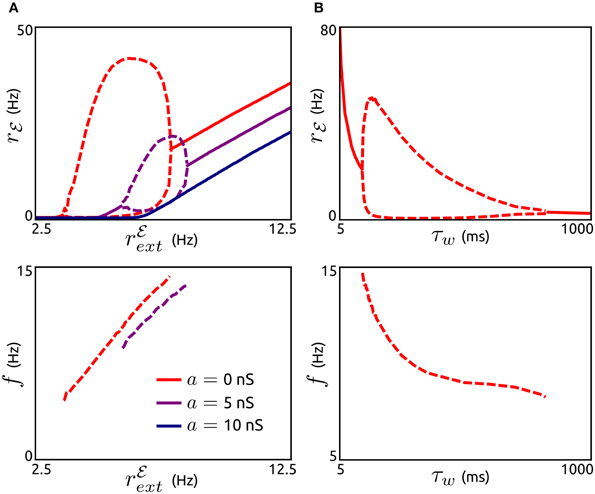

Next, we investigate how these oscillations are affected by the external input rℰext, the subthreshold adaptation conductance a and the adaptation timescale τw, see Figure 3. The existence of adaptation-induced oscillations is quite sensitive to the level of rℰext (Figure 3A). Periodic activity is stable for small values of rℰext (above threshold). While oscillation frequencies increase monotonically with increasing rℰext, oscillation amplitudes increase initially for a small interval of rℰext values and decrease over the following interval. For larger values of rℰext oscillatory activity is destabilized and asynchronous states occur. Interestingly, an increase in a does not lead to oscillations. On the contrary, periodic population bursts are destabilized by a. The dependence of oscillation amplitude and frequency on τw is shown in Figure 3B. Stable oscillations exist for a large range of values of τw, where the frequencies decrease with increasing τw. Oscillations are unstable for small adaptation timescales in the range of the membrane time constant and for very large values of τw.

Figure 3. Effects of subthreshold adaptation, external input, and adaptation timescale on population bursts. (A) Top: Spike rate rℰ depending on the external input rℰext for networks without subthreshold adaptation (a = 0, red) and with increased levels of a (5 nS, violet and 10 nS, dark blue). Maxima and minima of oscillating rℰ are shown by dashed lines. Bottom: Corresponding frequencies f. b = 0.05 nA, τw = 200 ms, g = 1, and other parameter values as in Figure 2A. (B) Maxima and minima of rℰ (top) and oscillation frequency (bottom) as a function of the adaptation time constant τw. a = 0, rℰext = 6.25 Hz, and other parameter values as in (A).

Adaptation Modulates Frequencies of Network-Based Oscillations

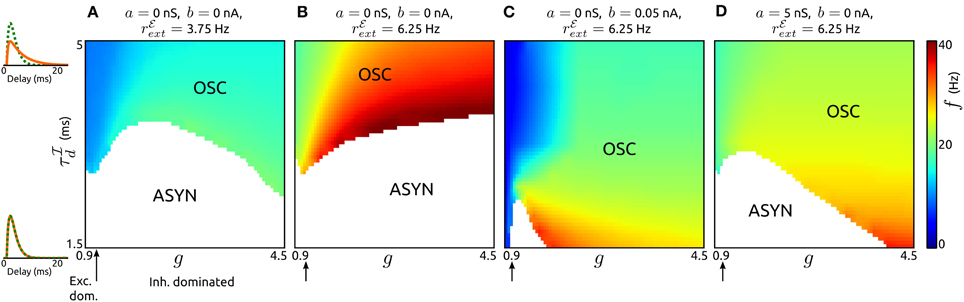

Here we study the influence of adaptation on oscillations generated by recurrent synaptic excitation-inhibition (ℰ-ℐ) loops. The pace of such oscillations is believed to be largely determined by the decay of inhibition. To describe their dependence on the timescale of inhibition for various recurrent network regimes (from excitation dominated to inhibition dominated) we first consider networks of neurons without an adaptation current (a = b = 0), see Figures 4A,B. By varying the decay time constant τℐd of inhibition and its strength (parameter g) across networks we find that stable oscillatory states occur if inhibition is sufficiently slow in comparison to excitation. The oscillation frequencies increase with increasing external input spike rate rℰext, increasing g and decreasing τℐd. A low value of rℰext leads to frequencies in the low beta band (Figure 4A), whereas for a higher value of rℰext the frequencies span the beta and low gamma bands (Figure 4B). Note that the network parameters can be adjusted to obtain higher oscillation frequencies. The generating mechanism underlying the oscillations is a loop of recurrent synaptic excitation and inhibition, initiated by the excitatory external input. We verified this by removing the recurrent excitatory input to the inhibitory population, which led to a destabilization of the oscillations. For values of g larger than those used in Figure 4, the ℰ-ℐ-loop mechanism is replaced by an ℐ-ℐ-loop that does not depend on recurrent excitation (not shown). Since adaptation is only exhibited by excitatory neurons, we disregard the parameter space where ℐ-ℐ-loop-based rhythmic activity occurs and focus on ℰ-ℐ-loop-based oscillations instead.

Figure 4. Influence of synaptic inhibition and adaptation on network-based oscillations. (A–D): Existence of oscillatory states (OSC) and corresponding frequencies f as a function of the strength g and timescale τℐd of synaptic inhibition for networks with adaptation parameters and external input strengths as specified. Asynchronous states (ASYN) are indicated by white regions in the parameter space. Arrows mark balance of recurrent excitation and inhibition. On the left pℰd (green) and pℐd (orange) are shown for τℐd = 1.5 ms, τℐd = 5 ms. τℐr was chosen such that the peaks of pℰd and pℐd occur at the same delay value. τw = 200 ms, τℰr = 1.25 ms, and τℰd = 1.5 ms. For other parameter values see the Methods section.

An increase of either the spike-triggered or the subthreshold adaptation component stabilizes oscillatory states also for faster recurrent inhibition, see Figures 4C,D. This change in single neuron dynamics causes oscillations in large parts of the explored (g, τℐd)-space. In particular, for spike-triggered adaptation asynchronous states only occur in a small region of the parameter space. Interestingly, the oscillation frequencies are significantly reduced by either type of adaptation.

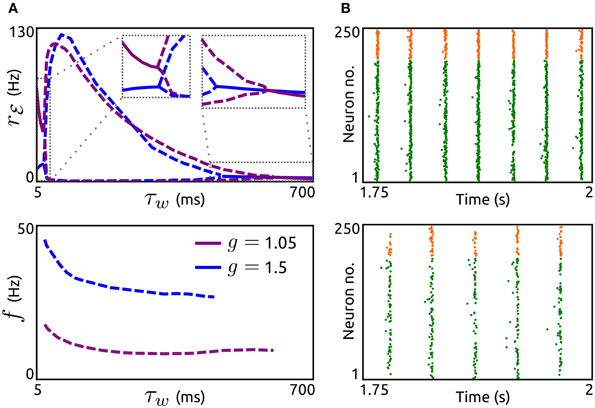

Next, we investigate how the timescale of adaptation τw affects oscillations mediated by an ℰ-ℐ-loop. In Figure 5A we show the dependence of amplitude and frequency of such oscillations on τw for networks with both adaptation components increased (a = 5 nS, b = 0.05 nA) and either dominant recurrent excitation (g = 1.05) or inhibition (g = 1.5). In both cases, stable oscillatory states exist for a large range of time constants. As τw increases the oscillation frequencies decrease while the amplitudes first increase abruptly and then decrease. The networks settle into asynchronous states for small τw (on the order of the membrane time constant) or large τw (several hundreds of milliseconds). Note that these effects of τw are similar if either a or b is increased individually (not shown). We validated these effects by simulations of aEIF neuron networks, see Figure 5B. The raster plots show that an increase in τw leads to a decrease in oscillation frequency and amplitude.

Figure 5. Effects of adaptation timescale on network-based oscillations. (A) Top: Spike rate rℰ as a function of the adaptation time constant τw for networks with dominant recurrent excitation (g = 1.05, violet) and inhibition (g = 1.5, blue). Dashed lines indicate maxima and minima of oscillating rℰ, solid lines represent constant rℰ. Bottom: Corresponding oscillation frequencies f. a = 5 nS and b = 0.05 nA. rℰext = 7.5 Hz, τℰr = 1.25 ms, τℰd = 1.55 ms, τℐr = 0.98 ms, and τℐd = 2 ms. Other parameters as in Figure 4. (B): Raster plots of simulated networks of size N = 50,000 with g = 1.5 and τw = 100 ms (top) as well as τw = 400 ms (bottom), showing the spike times of 200 excitatory and 50 inhibitory aEIF neurons. Other parameter values as in (A).

Adaptation Promotes Periodic Signal Propagation

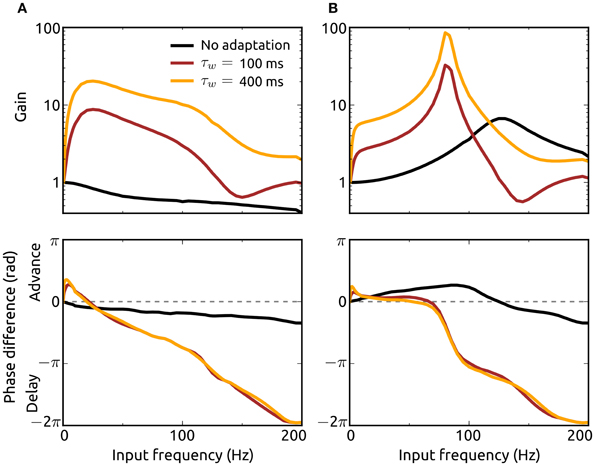

To analyze how the resonance properties of recurrent networks in asynchronous states are influenced by adaptation currents, we here consider external Poisson inputs whose rates oscillate with frequency f. Figures 6A,B show the gain of the input spike rate and the phase difference between network and input spike rates as a function of input frequency for networks without adaptation (a = b = 0) and with adaptation (a = 5 nS, b = 0.05 nA) for two adaptation time constants. Excitation dominated networks without adaptation do not exhibit resonance at any frequency and show only phase delays. The presence of an adaptation current leads to a significant amplification of oscillations in the input which is particularly strong at lower frequencies (of the beta band). This effect is more pronounced for an increased adaptation timescale. In addition, adaptation causes a phase advance for low oscillation frequencies.

Figure 6. Effects of adaptation on resonance properties of recurrent networks. Gain (top) and phase shift (bottom) of the spike rate rℰ for networks with dominant recurrent excitation [g = 1.05, (A)] and inhibition [g = 1.5, (B)] as a function of the input frequency f. The gain is defined as the quotient of the oscillation amplitude in rℰ for the input with frequency f and the amplitude for the lowest frequency (fmin = 0.5 Hz). Adaptation parameter values are a = b = 0 (black), a = 5 nS, b = 0.05 nA, τw = 100 ms (dark red), and a = 5 nS, b = 0.05 nA, τw = 400 ms (orange). Delay distributions are identically parameterized (pℰd ≡ pℐd) with τr = 1.25 ms and τd = 1.5 ms. The Poisson-input rates rℰext, rℐext each consist of a baseline rate plus a sinusoidal component of small amplitude (1/1000th of the baseline) with frequency f. The baseline of rℰext is chosen to yield a steady-state spike rate r∞ℰ of 50 Hz with constant input rate. The baseline rate of rℐext is chosen as explained in the Methods section.

In networks where recurrent inhibition dominates excitation, on the other hand, resonance in a high frequency band and phase advances at lower frequencies occur even in the absence of adaptation currents. Adaptation greatly enhances resonance and shifts the preferred frequency band to the high gamma range. The resonance effect is even stronger if the adaptation current is slower, i.e., if τw is increased. Although these effects of adaptation on resonance properties of recurrent networks are similar when either the subthreshold (a) or spike-triggered adaptation component (b) is increased individually, the dominant contribution to the frequency amplifications comes from b (not shown). We additionally examined the response of single neurons to oscillatory noisy inputs using our mean-field model and found that adaptation mediates resonance even in the absence of recurrent input (not shown). These results emphasize the importance of adaptation for the amplification and thus propagation of oscillatory signals in neuronal networks.
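Gain and phase shift of the kind shown in Figure 6 can be extracted from rate traces by projecting them onto a sine and a cosine at the input frequency. The sketch below assumes that the traces cover an integer number of input periods on an equidistant grid. The helper name `amplitude_and_phase` and the example response `r_out` are hypothetical.

```python
import numpy as np

def amplitude_and_phase(rate, t, f):
    """Amplitude and phase (rad) of the component of `rate` at frequency f.

    For rate = r0 + A * sin(2*pi*f*t + phi) sampled over an integer number
    of periods, this returns (A, phi).
    """
    x = np.asarray(rate, dtype=float)
    x = x - x.mean()
    c = 2.0 * np.mean(x * np.cos(2.0 * np.pi * f * t))  # equals A * sin(phi)
    s = 2.0 * np.mean(x * np.sin(2.0 * np.pi * f * t))  # equals A * cos(phi)
    return np.hypot(c, s), np.arctan2(c, s)

t = np.arange(0.0, 2.0, 0.001)   # 2 s, i.e., 10 periods at 5 Hz
f = 5.0
r_in = 100.0 * (1.0 + 0.001 * np.sin(2.0 * np.pi * f * t))   # baseline plus small modulation
r_out = 50.0 + 0.2 * np.sin(2.0 * np.pi * f * t + 0.3)       # hypothetical network response

amp_in, phase_in = amplitude_and_phase(r_in, t, f)
amp_out, phase_out = amplitude_and_phase(r_out, t, f)
phase_shift = phase_out - phase_in   # positive values are phase advances
print(amp_out, phase_shift)
```

Following the definition in the caption of Figure 6, the gain at frequency f is then the output amplitude at f divided by the output amplitude obtained for the lowest input frequency fmin.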

Discussion

In this work we have investigated the role of neuronal adaptation currents in shaping spike rate oscillations in large recurrent networks of excitatory and inhibitory neurons. Based on a network of aEIF model neurons sparsely coupled through conductance-based synapses with heterogeneous delays and strengths driven by noisy external input, we used a mean-field method taking advantage of the FP equation. We simplified the problem by applying an adiabatic approximation and solved the resulting equations numerically. Using this method we obtain membrane potential distributions and population averages of spike rates and adaptation currents. At the same time, the dynamical properties of single neurons, i.e., the neuron model parameters, are retained in the derived mean-field network model.

Alternative mean-field methods have been developed for conductance-based model neurons (Robinson et al., 2008) and recurrent networks thereof in asynchronous states (Shriki et al., 2003), where spike rates are obtained without having to solve a PDE. Our approach based on the FP equation on the other hand treats noise in the synaptic inputs in more detail and allows for the calculation of membrane potential distributions in addition to spike rates.

We chose the aEIF model because it provides a rich yet low-dimensional description of neuronal dynamics and includes a proper phenomenological description of the M and AHP adaptation currents. The effects of subthreshold (a) and spike-triggered adaptation (b) on response properties of aEIF neurons (measured by spike rate-input current relationships and phase response curves) match those of M and AHP adaptation currents in a Hodgkin–Huxley type neuron model, respectively (Ladenbauer et al., 2012). Furthermore, fitting the aEIF model parameters to a detailed biophysical model using standard electrophysiological paradigms revealed a clear relationship between parameter a and the conductance for the M current as well as between parameter b and the AHP current (not shown).
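To make the roles of the parameters a, b, and τw concrete, the sketch below integrates a single aEIF neuron (Brette and Gerstner, 2005) driven by a constant current, once without and once with adaptation. It is a plain forward-Euler illustration, not the network or mean-field implementation used in this study. The adaptation values follow the caption of Figure 6 (a = 5 nS, b = 0.05 nA, τw = 100 ms); all other parameter values, the input current, and the function name `simulate_aeif` are assumptions chosen for illustration.

```python
import math

def simulate_aeif(a_nS, b_pA, tau_w_ms, I_pA=500.0, T_ms=1000.0, dt_ms=0.05):
    """Forward-Euler integration of one aEIF neuron; returns spike times (ms).

    C dV/dt     = -g_L (V - E_L) + g_L Delta_T exp((V - V_T)/Delta_T) - w + I
    tau_w dw/dt = a (V - E_L) - w
    On V >= V_cut: V -> V_reset and w -> w + b.
    Units: mV, ms, nS, pF, pA. Membrane parameters are assumed values.
    """
    C, g_L, E_L = 200.0, 10.0, -65.0
    V_T, Delta_T = -50.0, 1.5
    V_cut, V_reset = -30.0, -60.0
    V, w = E_L, 0.0
    spikes = []
    for step in range(int(round(T_ms / dt_ms))):
        dV = (-g_L * (V - E_L)
              + g_L * Delta_T * math.exp((V - V_T) / Delta_T)
              - w + I_pA) / C
        dw = (a_nS * (V - E_L) - w) / tau_w_ms
        V += dt_ms * dV
        w += dt_ms * dw
        if V >= V_cut:              # spike: reset voltage, increment adaptation
            V = V_reset
            w += b_pA
            spikes.append((step + 1) * dt_ms)
    return spikes

spikes_plain = simulate_aeif(a_nS=0.0, b_pA=0.0, tau_w_ms=100.0)
spikes_adapt = simulate_aeif(a_nS=5.0, b_pA=50.0, tau_w_ms=100.0)
isi_plain = [t2 - t1 for t1, t2 in zip(spikes_plain, spikes_plain[1:])]
isi_adapt = [t2 - t1 for t1, t2 in zip(spikes_adapt, spikes_adapt[1:])]

print(f"no adaptation:   first ISI {isi_plain[0]:.2f} ms, last ISI {isi_plain[-1]:.2f} ms")
print(f"with adaptation: first ISI {isi_adapt[0]:.2f} ms, last ISI {isi_adapt[-1]:.2f} ms")
```

Without adaptation the interspike intervals are constant, whereas with adaptation they lengthen over the course of the step response, which is the spike frequency adaptation that the parameters a and b control.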

Our method is based on several assumptions that allow us to derive the mean-field equations. The Poisson approximation of spike train statistics is justified by experimental findings (Tolhurst et al., 1983; McAdams and Maunsell, 1999) although spiking seems to be more regular in some cortical areas (Maimon and Assad, 2009). The sparse random connectivity implies vanishing noise correlations between neurons in the large network limit, and an experimental study in primary visual cortex of awake monkeys has reported almost zero noise correlations (Ecker et al., 2010). However, there is an ongoing debate about the strength of correlations in experimental data (Cohen and Kohn, 2011). We have used an adiabatic approximation, which relies on separable time scales of adaptation current and membrane voltage. Although this assumption is violated for small values of τw, numerically solving the unreduced FP system, Equations (18)–(24), showed that our results are robust to this violation. The results we obtained by simulations of aEIF networks and the mean-field results show quantitative differences. However, the effects described using the mean-field model are validated qualitatively by the network simulations.

We have shown that spike-triggered adaptation provides a mechanism to generate spike rate oscillations in a low frequency range (alpha band and lower) if recurrent excitation is sufficiently strong. Increased subthreshold adaptation on the other hand does not contribute to this mechanism but rather dampens such oscillations. The type of adaptation current therefore strongly determines rhythmic activity in excitation dominated networks. The importance of activity-driven adaptation for slow oscillations is consistent with results from simulations of detailed (thalamo-)cortical spiking neuron network models (Bazhenov et al., 2002; Compte et al., 2003; Destexhe, 2009), mean-field studies based on networks of excitatory neurons under the assumption of sparse (Gigante et al., 2007b) and all-to-all connectivity (Nesse et al., 2008), as well as phenomenological rate models (Latham et al., 2000). We have further shown that reducing inhibitory synaptic strength leads to a reduction in oscillation frequency, which is in agreement with similar experimental findings (Sanchez-Vives et al., 2010).
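The interplay of recurrent excitation and activity-driven adaptation can be illustrated with a toy two-variable rate model in the spirit of the phenomenological rate models mentioned above. This is not the mean-field model derived in this work: the equations, the sigmoidal transfer function, the dimensionless parameters (J for recurrent excitation, beta for activity-driven adaptation), and the function name `simulate_rate_model` are all assumptions made for this sketch.

```python
import math
import numpy as np

def simulate_rate_model(J, beta=3.0, I=0.5, tau_r=10.0, tau_w=200.0,
                        T=5000.0, dt=0.1):
    """Euler integration of a toy excitatory rate model with adaptation.

    tau_r dr/dt = -r + F(J*r - w + I)   (population rate, dimensionless)
    tau_w dw/dt = -w + beta*r           (activity-driven adaptation)
    Times are in ms; F is a sigmoid with unit slope at its midpoint.
    """
    F = lambda x: 1.0 / (1.0 + math.exp(-4.0 * x))
    n = int(round(T / dt))
    r = np.empty(n)
    w = np.empty(n)
    r[0], w[0] = 0.1, 0.0
    for i in range(n - 1):
        x = J * r[i] - w[i] + I
        r[i + 1] = r[i] + dt * (-r[i] + F(x)) / tau_r
        w[i + 1] = w[i] + dt * (-w[i] + beta * r[i]) / tau_w
    return r, w

r_strong, _ = simulate_rate_model(J=2.0)   # strong recurrent excitation
r_weak, _ = simulate_rate_model(J=0.5)     # weak recurrent excitation

# Fluctuation of the rate after the initial transient (second half of the run).
std_strong = float(np.std(r_strong[len(r_strong) // 2:]))
std_weak = float(np.std(r_weak[len(r_weak) // 2:]))
print(f"rate std, J = 2.0: {std_strong:.3f}")
print(f"rate std, J = 0.5: {std_weak:.2e}")
```

With these assumed parameters the fixed point of the toy model is unstable for J = 2, so the rate settles on a slow limit cycle whose period is governed by the adaptation time constant, while for J = 0.5 the rate relaxes to a steady state.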

The M and AHP K+ currents, which mediate spike frequency adaptation in pyramidal neurons, are known to be deactivated by acetylcholine (McCormick, 1992), with the AHP current showing higher sensitivity. Since the adaptation parameter b is strongly related to AHP type adaptation, our results support the hypothesis that the cholinergically induced activating transition from slow-wave oscillations to asynchronous irregular states (Lee and Dan, 2012) is mediated (at least in part) by a reduction of spike-triggered adaptation (Destexhe, 2009).

We have demonstrated that an increase of either type of adaptation current leads to a reduction in the frequency of oscillations generated by a loop of recurrent excitation and inhibition. This shows that the dynamical properties of neurons in addition to coupling characteristics strongly affect the network frequency. The passive (integrative) membrane properties also significantly influence such network oscillations, as has been described previously (Geisler et al., 2005). Our additional finding of decreased frequencies for increased adaptation time constants is consistent with the results from a computational study on clustering effects of spike-triggered adaptation in gamma oscillations (Kilpatrick and Ermentrout, 2011).

Low input frequencies have been shown to be suppressed in the output of single excitatory neurons with increased spike-triggered (Gigante et al., 2007a) or subthreshold adaptation (Richardson et al., 2003; Prescott and Sejnowski, 2008), which we confirmed using our aEIF-based mean-field model. Such a high pass property of single neurons has also been found using a more general model of adaptation (Benda and Herz, 2003). We have demonstrated that both adaptation currents cause spike rate resonance in excitation dominated recurrent networks. Inhibition dominated networks, on the other hand, exhibit resonance without adaptation and we have shown that increased adaptation of excitatory neurons strongly amplifies this resonance. A similar effect has been described for purely inhibitory networks (Richardson, 2009). In addition, our results show that adaptation shifts the resonance frequency to lower values.

In excitation dominated networks, adaptation further leads to phase advances for low input frequencies in addition to phase delays for higher frequencies as observed in previous studies on single excitatory neurons (Fuhrmann et al., 2002; Gigante et al., 2007a). These adaptation-induced phase advances enable synchronization of periodic activity between distant neurons (and populations of neurons) in different areas of the brain if the strength of adaptation is controlled appropriately, e.g., through cholinergic neuromodulation.
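The high-pass, resonance, and phase effects discussed in the two preceding paragraphs can be reproduced qualitatively with a deliberately simple linear rate model with one adaptation variable. This is not the aEIF-based mean-field model used in this work: the equations τr dr/dt = −r + I − w and τw dw/dt = −w + k r, the function name `transfer`, and all parameter values are assumptions chosen for illustration. As in Figure 6, the gain is normalized to the response at the lowest frequency (0.5 Hz).

```python
import numpy as np

def transfer(f, k, tau_r=0.01, tau_w=0.1):
    """Linear response H(f) = r_hat / I_hat of the assumed toy model.

    tau_r dr/dt = -r + I - w,   tau_w dw/dt = -w + k*r
    gives H = (1 + s*tau_w) / ((1 + s*tau_r)*(1 + s*tau_w) + k), s = 2*pi*i*f.
    Time constants are in seconds, k is a dimensionless adaptation strength.
    """
    s = 2j * np.pi * np.asarray(f, dtype=float)
    return (1 + s * tau_w) / ((1 + s * tau_r) * (1 + s * tau_w) + k)

freqs = np.logspace(np.log10(0.5), 2.0, 200)    # 0.5 Hz to 100 Hz
results = {}
for label, k in [("no adaptation", 0.0), ("adaptation", 2.0)]:
    H = transfer(freqs, k)
    gain = np.abs(H) / np.abs(H[0])             # relative to f_min = 0.5 Hz
    phase = np.angle(H)                         # > 0: advance, < 0: delay
    results[label] = (gain, phase)
    i_max = int(np.argmax(gain))
    print(f"{label}: peak gain {gain[i_max]:.2f} at {freqs[i_max]:.1f} Hz, "
          f"phase at 0.5 Hz {phase[0]:+.2f} rad, at 100 Hz {phase[-1]:+.2f} rad")
```

Without adaptation (k = 0) the toy model is a pure low-pass filter with phase delays at all frequencies. With adaptation the gain peaks at an intermediate frequency, and the response leads the input at low frequencies and lags it at high frequencies.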

Here we have considered one adaptation current for each neuron of the excitatory population. To account for the multimodal distribution of adaptation timescales found experimentally (La Camera et al., 2006) our approach can be easily extended to include multiple adaptation currents.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We thank Timm Lochmann for helpful comments on the manuscript. This work was supported by the DFG Collaborative Research Center SFB910.

References

Abel, H. J., Lee, J. C. F., Callaway, J. C., and Foehring, R. C. (2004). Relationships between intracellular calcium and afterhyperpolarizations in neocortical pyramidal neurons. J. Neurophysiol. 91, 324–335.

Badel, L., Lefort, S., Brette, R., Petersen, C. C. H., Gerstner, W., and Richardson, M. J. E. (2008). Dynamic I-V curves are reliable predictors of naturalistic pyramidal-neuron voltage traces. J. Neurophysiol. 99, 656–666.

Bazhenov, M., Timofeev, I., Steriade, M., and Sejnowski, T. J. (2002). Model of thalamocortical slow-wave sleep oscillations and transitions to activated states. J. Neurosci. 22, 8691–8704.

Benda, J., and Herz, A. V. M. (2003). A universal model for spike-frequency adaptation. Neural Comput. 15, 2523–2564.

Brette, R., and Gerstner, W. (2005). Adaptive exponential integrate-and-fire model as an effective description of neuronal activity. J. Neurophysiol. 94, 3637–3642.

Brown, D. A., and Adams, P. R. (1980). Muscarinic suppression of a novel voltage-sensitive K+ current in a vertebrate neurone. Nature 283, 673–676.

Brunel, N. (2000). Dynamics of sparsely connected networks of excitatory and inhibitory spiking neurons. J. Comput. Neurosci. 8, 183–208.

Brunel, N., Hakim, V., and Richardson, M. (2003). Firing-rate resonance in a generalized integrate-and-fire neuron with subthreshold resonance. Phys. Rev. E 67, 051916.

Brunel, N., and Wang, X.-J. (2003). What determines the frequency of fast network oscillations with irregular neural discharges? I. Synaptic dynamics and excitation-inhibition balance. J. Neurophysiol. 90, 415–430.

Cohen, M. R., and Kohn, A. (2011). Measuring and interpreting neuronal correlations. Nat. Neurosci. 14, 811–819.

Compte, A., Sanchez-Vives, M. V., McCormick, D. A., and Wang, X.-J. (2003). Cellular and network mechanisms of slow oscillatory activity (<1 Hz) and wave propagations in a cortical network model. J. Neurophysiol. 89, 2707–2725.

Connors, B., Gutnick, M., and Prince, D. (1982). Electrophysiological properties of neocortical neurons in vitro. J. Neurophysiol. 48, 1302–1320.

Deco, G., Jirsa, V. K., Robinson, P. A., Breakspear, M., and Friston, K. (2008). The dynamic brain: from spiking neurons to neural masses and cortical fields. PLoS Comput. Biol. 4:e1000092. doi: 10.1371/journal.pcbi.1000092

Destexhe, A. (2009). Self-sustained asynchronous irregular states and up-down states in thalamic, cortical and thalamocortical networks of nonlinear integrate-and-fire neurons. J. Comput. Neurosci. 27, 493–506.

Destexhe, A., Rudolph, M., and Paré, D. (2003). The high-conductance state of neocortical neurons in vivo. Nat. Rev. Neurosci. 4, 739–751.

Ecker, A. S., Berens, P., Keliris, G. A., Bethge, M., Logothetis, N. K., and Tolias, A. S. (2010). Decorrelated neuronal firing in cortical microcircuits. Science 327, 584–587.

Engel, A., Fries, P., and Singer, W. (2001). Dynamic predictions: oscillations and synchrony in top-down processing. Nat. Rev. Neurosci. 2, 704–716.

Fourcaud-Trocmé, N., Hansel, D., van Vreeswijk, C., and Brunel, N. (2003). How spike generation mechanisms determine the neuronal response to fluctuating inputs. J. Neurosci. 23, 11628–11640.

Fries, P. (2001). Modulation of oscillatory neuronal synchronization by selective visual attention. Science 291, 1560–1563.

Fuhrmann, G., Markram, H., and Tsodyks, M. (2002). Spike frequency adaptation and neocortical rhythms. J. Neurophysiol. 88, 761–770.

Geisler, C., Brunel, N., and Wang, X.-J. (2005). Contributions of intrinsic membrane dynamics to fast network oscillations with irregular neuronal discharges. J. Neurophysiol. 94, 4344–4361.

Ghazanfar, A., Chandrasekaran, C., and Logothetis, N. (2008). Interactions between the superior temporal sulcus and auditory cortex mediate dynamic face/voice integration in rhesus monkeys. J. Neurosci. 28, 4457–4469.

Gigante, G., Del Giudice, P., and Mattia, M. (2007a). Frequency-dependent response properties of adapting spiking neurons. Math. Biosci. 207, 336–351.

Gigante, G., Mattia, M., and Del Giudice, P. (2007b). Diverse population-bursting modes of adapting spiking neurons. Phys. Rev. Lett. 98, 1–4.

Goodman, D. F. M., and Brette, R. (2009). The brian simulator. Front. Neurosci. 3, 192–197. doi: 10.3389/neuro.01.026.2009

Hammond, C., Bergman, H., and Brown, P. (2007). Pathological synchronization in Parkinson's disease: networks, models and treatments. Trends Neurosci. 30, 357–364.

Isaacson, J. S., and Scanziani, M. (2011). How inhibition shapes cortical activity. Neuron 72, 231–243.

Jolivet, R., Schürmann, F., Berger, T. K., Naud, R., Gerstner, W., and Roth, A. (2008). The quantitative single-neuron modeling competition. Biol. Cybern. 99, 417–426.

Kilpatrick, Z. P., and Ermentrout, B. (2011). Sparse gamma rhythms arising through clustering in adapting neuronal networks. PLoS Comput. Biol. 7:e1002281. doi: 10.1371/journal.pcbi.1002281

Kumar, A., Schrader, S., Aertsen, A., and Rotter, S. (2008). The high-conductance state of cortical networks. Neural Comput. 20, 1–43.

La Camera, G., Rauch, A., Thurbon, D., Lüscher, H.-R., Senn, W., and Fusi, S. (2006). Multiple time scales of temporal response in pyramidal and fast spiking cortical neurons. J. Neurophysiol. 96, 3448–3464.

Ladenbauer, J., Augustin, M., Shiau, L., and Obermayer, K. (2012). Impact of adaptation currents on synchronization of coupled exponential integrate-and-fire neurons. PLoS Comput. Biol. 8:e1002478. doi: 10.1371/journal.pcbi.1002478

Latham, P. E., Richmond, B. J., Nelson, P. G., and Nirenberg, S. (2000). Intrinsic dynamics in neuronal networks. I. Theory. J. Neurophysiol. 83, 808–827.

LeVeque, R. (2002). Finite Volume Methods for Hyperbolic Problems. Cambridge: Cambridge University Press.

Maimon, G., and Assad, J. A. (2009). Beyond Poisson: increased spike-time regularity across primate parietal cortex. Neuron 62, 426–440.

Manuel, M., Meunier, C., Donnet, M., and Zytnicki, D. (2005). How much afterhyperpolarization conductance is recruited by an action potential? A dynamic-clamp study in cat lumbar motoneurons. J. Neurosci. 25, 8917–8923.

McAdams, C. J., and Maunsell, J. H. (1999). Effects of attention on the reliability of individual neurons in monkey visual cortex. Neuron 23, 765–773.

McCormick, D. A. (1992). Neurotransmitter actions in the thalamus and cerebral cortex and their role in thalamocortical activity. Progr. Neurobiol. 39, 337–388.

Melloni, L., Molina, C., Pena, M., Torres, D., Singer, W., and Rodriguez, E. (2007). Synchronization of neural activity across cortical areas correlates with conscious perception. J. Neurosci. 27, 2858–2865.

Naud, R., Marcille, N., Clopath, C., and Gerstner, W. (2008). Firing patterns in the adaptive exponential integrate-and-fire model. Biol. Cybern. 99, 335–347.

Nesse, W. H., Borisyuk, A., and Bressloff, P. C. (2008). Fluctuation-driven rhythmogenesis in an excitatory neuronal network with slow adaptation. J. Comput. Neurosci. 25, 317–333.

Nykamp, D. Q., and Tranchina, D. (2000). A population density approach that facilitates large-scale modeling of neural networks: analysis and an application to orientation tuning. J. Comput. Neurosci. 8, 19–50.

Pedarzani, P., Mosbacher, J., Rivard, A., Cingolani, L. A., Oliver, D., Stocker, M., et al. (2001). Control of electrical activity in central neurons by modulating the gating of small conductance Ca(2+)-activated K+ channels. J. Biol. Chem. 276, 9762–9769.

Pospischil, M., Piwkowska, Z., Bal, T., and Destexhe, A. (2011). Comparison of different neuron models to conductance-based post-stimulus time histograms obtained in cortical pyramidal cells using dynamic-clamp in vitro. Biol. Cybern. 105, 167–180.

Prescott, S. A., and Sejnowski, T. J. (2008). Spike-rate coding and spike-time coding are affected oppositely by different adaptation mechanisms. J. Neurosci. 28, 13649–13661.

Renart, A., Brunel, N., and Wang, X.-J. (2004). “Mean-field theory of irregularly spiking neuronal populations and working memory in recurrent cortical networks,” in Computational Neuroscience: A Comprehensive Approach, ed J. Feng (Boca Raton, FL: CRC Press), 431–490.

Richardson, M. (2004). Effects of synaptic conductance on the voltage distribution and firing rate of spiking neurons. Phys. Rev. E 69, 1–8.

Richardson, M. (2009). Dynamics of populations and networks of neurons with voltage-activated and calcium-activated currents. Phys. Rev. E 80, 1–16.

Richardson, M. J. E., Brunel, N., and Hakim, V. (2003). From subthreshold to firing-rate resonance. J. Neurophysiol. 89, 2538–2554.

Risken, H. (1996). The Fokker–Planck Equation: Methods of Solutions and Applications. Berlin: Springer.

Robinson, P. A., Wu, H., and Kim, J. W. (2008). Neural rate equations for bursting dynamics derived from conductance-based equations. J. Theor. Biol. 250, 663–672.

Sanchez-Vives, M. V., Mattia, M., Compte, A., Perez-Zabalza, M., Winograd, M., Descalzo, V. F., et al. (2010). Inhibitory modulation of cortical up states. J. Neurophysiol. 104, 1314–1324.

Shriki, O., Hansel, D., and Sompolinsky, H. (2003). Rate models for conductance-based cortical neuronal networks. Neural Comput. 15, 1809–1841.

Stocker, M. (2004). Ca(2+)-activated K+ channels: molecular determinants and function of the SK family. Nat. Rev. Neurosci. 5, 758–770.

Tamas, G., Buhl, E. H., and Somogyi, P. (1997). Fast IPSPs elicited via multiple synaptic release sites by different types of GABAergic neurone in the cat visual cortex. J. Physiol. 500, 715–738.

Tiesinga, P., and Sejnowski, T. J. (2009). Cortical enlightenment: are attentional gamma oscillations driven by ING or PING? Neuron 63, 727–732.

Tolhurst, D. J., Movshon, J. A., and Dean, A. F. (1983). The statistical reliability of signals in single neurons in cat and monkey visual cortex. Vision Res. 23, 775–785.

Touboul, J., and Brette, R. (2008). Dynamics and bifurcations of the adaptive exponential integrate-and-fire model. Biol. Cybern. 99, 319–334.

Uhlhaas, P. J., and Singer, W. (2010). Abnormal neural oscillations and synchrony in schizophrenia. Nat. Rev. Neurosci. 11, 100–113.

van der Vorst, H. A. (1992). Bi-CGSTAB: a fast and smoothly converging variant of Bi-CG for the solution of nonsymmetric linear systems. SIAM J. Sci. Stat. Comput. 13, 631–644.

Wang, X.-J. (2010). Neurophysiological and computational principles of cortical rhythms in cognition. Physiol. Rev. 90, 1195–1268.

Williams, S. R., and Stuart, G. J. (2002). Dependence of EPSP efficacy on synapse location in neocortical pyramidal neurons. Science 295, 1907–1910.

Womble, M. D., and Moises, H. C. (1992). Muscarinic inhibition of M-current and a potassium leak conductance in neurones of the rat basolateral amygdala. J. Physiol. 457, 93–114.

Keywords: spike frequency adaptation, adaptation, oscillations, rate models, network dynamics, Fokker–Planck, mean-field, recurrent network

Citation: Augustin M, Ladenbauer J and Obermayer K (2013) How adaptation shapes spike rate oscillations in recurrent neuronal networks. Front. Comput. Neurosci. 7:9. doi: 10.3389/fncom.2013.00009

Received: 01 December 2012; Paper pending published: 30 December 2012;

Accepted: 08 February 2013; Published online: 27 February 2013.

Edited by:

Peter Robinson, The University of Sydney, Australia

Reviewed by:

Alessandro Treves, Scuola Internazionale Superiore di Studi Avanzati, Italy

Peter Robinson, The University of Sydney, Australia

Copyright © 2013 Augustin, Ladenbauer and Obermayer. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits use, distribution and reproduction in other forums, provided the original authors and source are credited and subject to any copyright notices concerning any third-party graphics etc.

*Correspondence: Moritz Augustin and Josef Ladenbauer, Department of Software Engineering and Theoretical Computer Science, Technische Universität Berlin, Neural Information Processing Group, Marchstr. 23, MAR 5-6, 10587 Berlin, Germany. e-mail: augustin@ni.tu-berlin.de; jl@ni.tu-berlin.de

†These authors have contributed equally to this work.