- 1 Population Health Sciences, Weill Cornell Medical College, New York, NY, United States

- 2 Broadmoor Solutions Inc., Sinking Spring, PA, United States

- 3 Cerner Corporation, North Kansas City, MO, United States

- 4 Department of Cardiology, NewYork-Presbyterian Medical Group Queens, New York, NY, United States

- 5 Columbia University School of Nursing, New York, NY, United States

Shared decision-making (SDM) empowers patients and care teams to determine the best treatment plan in alignment with the patient's preferences and goals. Decision aids are proven tools to support high-quality SDM. Patients with atrial fibrillation (AF), the most common cardiac arrhythmia, struggle to identify optimal rhythm and symptom management strategies and could benefit from a decision aid. In this Brief Research Report, we describe the development and preliminary evaluation of an interactive decision-making aid for patients with AF. We employed an iterative, user-centered design method to develop prototypes of the decision aid. Here, we describe multiple iterations of the decision aid, informed by the literature, expert feedback, and mixed-methods design sessions with AF patients. Results highlight unique design requirements for this population, but overall indicate that an interactive decision aid with visualizations has the potential to assist patients in making AF treatment decisions. Future work can build upon these design requirements to create and evaluate a decision aid for AF rhythm and symptom management.

Introduction

Shared decision-making (SDM) is an increasingly embraced practice in modern medicine for situations in which there is clinical equipoise among the available treatment options, so that a patient's values and goals of care should be considered alongside the evidence about outcomes (1). The SDM process is aided by the use of decision aids, which are structured tools that explicitly describe the decision to be made and present unbiased information about options, including the option of taking no action. Prior studies have established that decision aids improve patient knowledge, patient involvement, and decision quality (2, 3). Decision aids are commonly delivered in a digital format, which allows the information to be rapidly updated, tailored to the individual person, and delivered at more precise points in the decision-making process (4).

Patients with atrial fibrillation (AF) could benefit from a decision aid to compare AF treatment outcomes, risks and benefits, and alignment with personal care goals. AF is the most common type of cardiac arrhythmia, and its prevalence is steadily rising (5). Treatments for AF include medications or catheter ablation, a minimally invasive procedure that involves destroying the cardiac tissue believed to be causing the arrhythmia. Both treatment pathways have their own set of associated risks, benefits, and outcomes. The decision is complicated by the fact that, while catheter ablations are recommended in evidence-based guidelines for symptomatic patients (6), patients may continue to experience persistent AF and associated symptoms even after the procedure (7, 8). Thus, the treatment choice should come from a nuanced consideration of the anticipated benefits and potential risks.

Although choosing an AF treatment is an ideal scenario for SDM, little research or decision aid development has been conducted to support patients as they choose a rhythm and symptom control strategy for AF. In fact, a recent study demonstrated that very few AF patients engage in SDM with their care teams or even understand their treatment options (9). In our previous work, we reported that AF patients have unique needs that create a challenging set of design requirements: specifically, a propensity for anxiety about their cardiac status but a desire for knowledge and data (10).

With these design challenges in mind, the aim of this Brief Research Report is to describe the development and preliminary evaluation of an interactive decision aid for patients with AF. A secondary objective was to explore data visualizations for communicating the risk of outcomes from each treatment option by evaluating participants' comprehension and preferences.

Materials and methods

Study design

We followed the International Patient Decision Aid Standards (IPDAS) Collaboration guidelines for creating high-quality patient decision aids (3), which outline several steps to take when developing decision aids. In prior work following the first several IPDAS steps, we defined the scope of the decision aid, conducted needs assessments with patients and clinicians, determined the format and distribution plan, and reviewed and synthesized evidence about treatment options and optimal decision aid design. We defined the scope as helping patients with AF learn about two treatment options for rhythm and symptom management, antiarrhythmic medication or catheter ablation, including how each option works and its risks and benefits. The decision aid is intended to be used by patients during a cardiac electrophysiology visit to discuss treatment options for AF, as well as before or after the visit. Our needs assessment with 15 patients and 5 clinicians underscored the need for a decision aid for this specific treatment decision and generated suggestions regarding its format and delivery (10). In the present study, we build on this prior work by describing the next two steps of the IPDAS guidelines: (1) prototyping and (2) alpha testing to evaluate comprehensibility and acceptability. This study was approved by the Weill Cornell Medicine Institutional Review Board.

Prototype design and development

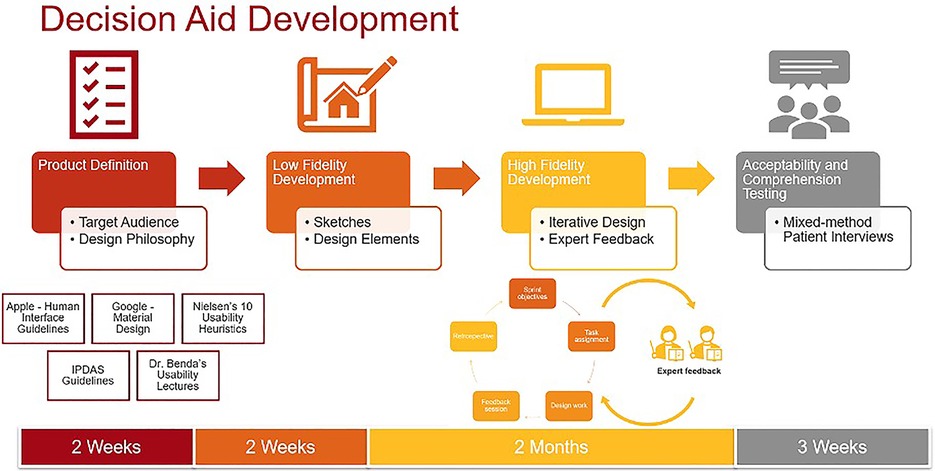

Prototype development occurred in three phases: low-fidelity prototyping, high-fidelity prototyping, and expert feedback incorporation. Figure 1 outlines the design process. During low-fidelity prototyping, we created a set of rough hand-drawn sketches, which we iterated on until we agreed on a design theme and common elements (Supplementary Figure S1). During this stage, we sought feedback from clinical experts, who provided input on the content, color palettes, and general flow of the decision aid. We then created high-fidelity prototypes using Adobe XD, a prototyping software suite chosen because it supports interactive prototypes, real-time collaboration, and extensive version histories. We again iterated on these prototypes until the entire research team was satisfied with their content and visual elements. During this stage, we sought feedback from experts in SDM, decision aid design, and data visualization, which led to further changes to the prototypes. Specifically, the experts suggested personalizing results by demographics and medical history to avoid a “one size fits all” message about treatment outcomes, incorporating more information about AF and treatment options so patients can explore the decision aid on their own before visits, and adding an open-ended question section where patients can record their preferences and questions. They also recommended studying visualizations for communicating symptoms and quality of life, given the dearth of literature on this topic, as we describe below.

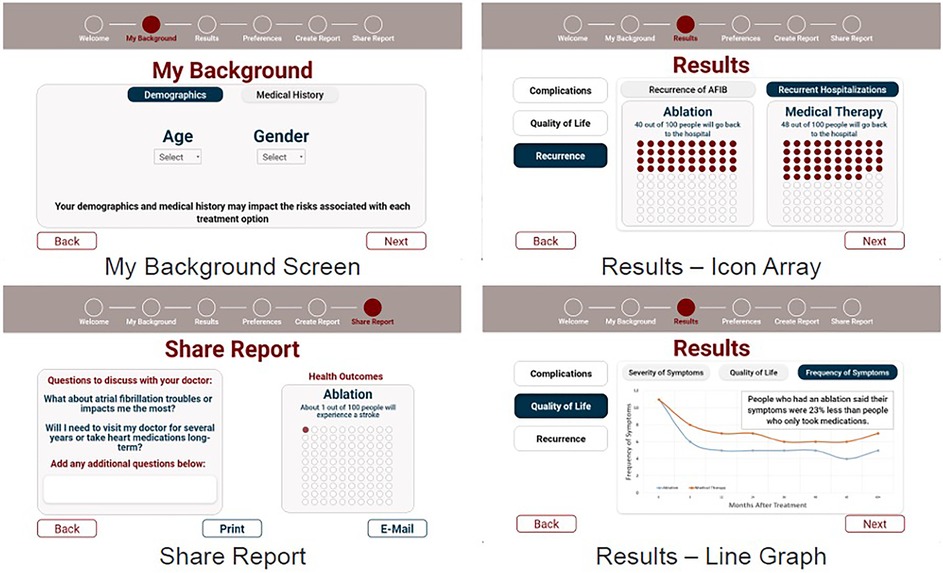

The final interactive prototypes, shown in Figure 2, were used for alpha testing with patients.

Supplementary Table S1 describes key design choices of our final prototype after incorporating feedback from our project team and external experts. For user experience (UX) design, we drew on software industry standards for icon layout, color palette, and font choices, basing our UX standards on Apple's Human Interface Guidelines (11), Google's Material Design (12), and Nielsen's 10 usability heuristics (13). Accessibility and inclusive design were prioritized to ensure that the decision aid can meet the needs of a diverse target audience; this includes attention to the font, size, shape, and color of each component (14). For the same reason, we also followed gerontological design principles (15), such as consistent linear navigation and large touch targets, to support usability among older adults, who are the predominant age group of AF patients.
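Minimum touch-target size is one place where platform guidance becomes a concrete, checkable rule. The sketch below is an illustration, not part of the study's tooling: it assumes the commonly cited minimums of 44 x 44 pt (Apple Human Interface Guidelines) and 48 x 48 dp (Material Design), and the `Component` class and `undersized_targets` helper are hypothetical names for auditing prototype elements measured in the matching unit.

```python
from dataclasses import dataclass

# Commonly cited minimum touch-target sizes: 44 x 44 pt (Apple Human Interface
# Guidelines) and 48 x 48 dp (Material Design). These are lower bounds, not the
# sizes used in the prototype described in this study.
MIN_TOUCH_TARGET = {"ios_pt": 44, "android_dp": 48}


@dataclass
class Component:
    """A hypothetical on-screen element, sized in the same unit as the chosen minimum."""
    name: str
    width: float
    height: float


def undersized_targets(components: list[Component], minimum: float) -> list[str]:
    """Return the names of components whose width or height falls below the minimum."""
    return [c.name for c in components if c.width < minimum or c.height < minimum]


if __name__ == "__main__":
    screen = [
        Component("Next button", width=120, height=56),
        Component("Info icon", width=24, height=24),
    ]
    print(undersized_targets(screen, MIN_TOUCH_TARGET["android_dp"]))  # ['Info icon']
```

A check like this only covers one gerontological principle; consistent linear navigation and legibility still need to be judged in usability testing.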

The information presented in the decision aid came from a recent meta-analysis of catheter ablation vs. medication therapy (16) and a separate clinical trial reporting symptoms and quality of life outcomes (8).

To determine how to present the information, we performed a literature review of past decision aid studies to identify evidence about which visualizations are most effective at communicating evidence. We chose to use data visualizations because numerous studies have shown that visualizations are better understood than, or preferred over, text alone for communicating the probability of an outcome (17–21). Prior studies specifically report that pictographs are the most widely comprehended visualization for communicating binary outcomes (e.g., having a stroke or not after the treatment) compared to other visualizations or text alone (17, 18, 20, 22, 23). Prior studies also recommend using the same denominator (e.g., 5 in 100 people will experience this outcome) for consistency when presenting multiple outcomes using ratios and percentages (21, 24, 25). Therefore, we adopted these visualization principles when presenting information about binary outcomes in the prototypes.
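To make the same-denominator principle concrete, here is a minimal Python sketch of a text-based icon array (pictograph). It assumes a fixed denominator of 100 and a 10 x 10 grid; `count_per_denominator` and `icon_array` are hypothetical helpers, and the risks in the usage example are placeholders rather than values from the decision aid or the cited meta-analysis.

```python
import math


def count_per_denominator(probability: float, denominator: int = 100) -> int:
    """Express a probability (0-1) as a whole-number 'X in <denominator>' count.

    Rounds halves up; Python's built-in round() would use round-half-to-even instead.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return math.floor(probability * denominator + 0.5)


def icon_array(probability: float, denominator: int = 100, per_row: int = 10,
               affected: str = "●", unaffected: str = "○") -> str:
    """Render filled icons for people with the outcome and hollow icons for the rest."""
    count = count_per_denominator(probability, denominator)
    icons = [affected] * count + [unaffected] * (denominator - count)
    rows = ["".join(icons[i:i + per_row]) for i in range(0, denominator, per_row)]
    return "\n".join(rows)


if __name__ == "__main__":
    # Placeholder risks for two hypothetical options; NOT values from the decision aid.
    for label, risk in [("Option A", 0.05), ("Option B", 0.12)]:
        print(f"{label}: {count_per_denominator(risk)} in 100 people")
        print(icon_array(risk))
```

Because both options are drawn against the same 100 icons, the difference between them can be read directly from the filled icons without converting between denominators.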

However, we found far less literature on how to communicate symptom experiences and quality of life in decision aids. One prior study compared comprehension of symptom visualizations across text alone, text plus a visual analogy (such as a gas gauge or weather icon representing symptom status), text with a number line, and text with a line graph, and found that comprehension of the visual analogy was significantly higher than that of text alone or the other visualizations (26). However, this study focused on returning patients' personal symptom data to them, rather than on projected population-level symptom outcomes in a decision aid.

Therefore, we explored comprehension of similar visualizations in the different context of SDM. Specifically, we created four visualization options showing symptom and quality of life outcomes: line graph, gauge, text with cartoon, and text alone (Supplementary Figure S2). The text alone option was the control condition. We created a version of the text alone that also included a cartoon image explaining that information should be contextualized to the individual patient, at the suggestion of experts who evaluated our high-fidelity prototypes. The gauge was selected because visual analogies were previously reported as well comprehended in older adults with cardiovascular disease (26). The line graph, although less well comprehended in prior work, most easily allowed us to display multiple data points over time. We evaluated comprehension of the four visualization options during alpha testing.

Alpha testing

Alpha testing involves evaluating early-stage prototypes with patients for usability and comprehension (27). The outcomes of interest in alpha testing were (1) objective comprehension of the data visualizations included in the decision aid, measured using the International Organization for Standardization (ISO) 9186 method (28), and (2) decision aid acceptability, measured using the Decision Aid Acceptability Scale (29). We aimed to recruit 15 participants based on our prior experience with user-centered design studies and published guidance (10, 30–32), with the option to terminate recruitment early if thematic saturation in qualitative data was reached. Thematic saturation occurs when no new information is being obtained and participant responses become redundant with prior responses (33).
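In this study, saturation was judged through coder discussion rather than a numeric rule. Purely as an illustration of the definition above, the following sketch assumes saturation is declared once a chosen number of consecutive sessions (`window`, a hypothetical parameter) contributes no codes that were not already seen; `saturation_session` and the code labels are hypothetical.

```python
from typing import Iterable, Optional


def saturation_session(session_codes: Iterable[set[str]], window: int = 2) -> Optional[int]:
    """Return the 1-based session at which `window` consecutive sessions added no new codes.

    Returns None if that never happens within the sessions provided.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    seen: set[str] = set()
    sessions_without_new = 0
    for session, codes in enumerate(session_codes, start=1):
        new_codes = set(codes) - seen  # codes not observed in any earlier session
        seen |= new_codes
        sessions_without_new = 0 if new_codes else sessions_without_new + 1
        if sessions_without_new >= window:
            return session
    return None


if __name__ == "__main__":
    # Hypothetical code labels for four sessions; not the study's codebook.
    sessions = [{"wants_data", "anxiety"}, {"wants_data", "print_copy"}, {"anxiety"}, {"print_copy"}]
    print(saturation_session(sessions))  # 4
```

A fixed window makes the stopping point reproducible, but it cannot capture whether the codes themselves are well developed, which is why qualitative teams usually confirm saturation by discussion.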

To conduct alpha testing, we recruited patients who had recently undergone catheter ablation at an urban hospital affiliated with New York Presbyterian-Cornell hospital in Queens, New York. The cardiology team at the hospital generated a list of potential patients, who were then contacted by phone or email and invited to participate via Zoom. All participants provided verbal consent to participate before each session. Each participant was compensated for their time with a $25 gift card.

During each session, we collected baseline socio-demographic information, preferences for involvement in medical decision-making measured using the Control Preferences Scale (34), health literacy (35), subjective numeracy (36), graph literacy (37), and experiences of decisional conflict relating to the decision to undergo ablation measured using the Decisional Conflict Scale (38).
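Of these instruments, the Decisional Conflict Scale has a simple published scoring rule. The sketch below assumes the original 16-item version, with items scored 0 (strongly agree) to 4 (strongly disagree), summed, divided by the number of items, and multiplied by 25 to give a total from 0 to 100; the section does not state which version or scoring was used, and `decisional_conflict_score` is a hypothetical helper name.

```python
from typing import Sequence


def decisional_conflict_score(item_scores: Sequence[int]) -> float:
    """Total Decisional Conflict Scale score on a 0-100 scale.

    Assumes the original 16-item DCS with every item scored 0 (strongly agree) to
    4 (strongly disagree): sum / number of items * 25. Higher scores indicate
    more decisional conflict.
    """
    if len(item_scores) != 16:
        raise ValueError("expected 16 item scores")
    if any(score not in range(5) for score in item_scores):
        raise ValueError("each item must be an integer from 0 to 4")
    return sum(item_scores) / len(item_scores) * 25


if __name__ == "__main__":
    # Hypothetical responses: neutral (2) on every item.
    print(decisional_conflict_score([2] * 16))  # 50.0
```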

After completing baseline surveys, participants were shown a series of screens displaying the high-fidelity prototype. We collected qualitative data regarding general reactions and suggestions for improved usability, appearance, and satisfaction, and administered the Decision Aid Acceptability Scale.

Participants were then shown the four visualization options showing symptom and quality of life outcomes: line graph, gauge, text with cartoon, and text alone. The order in which visualizations were shown was randomized for each participant, known as counterbalancing, to prevent potential order effects (39). Objective comprehension was measured for each of the four visualizations.
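A per-participant random order can be generated with a seeded shuffle so that the schedule is reproducible. This is a sketch, not the study's procedure: the participant IDs and the `presentation_orders` helper are hypothetical, and only the four format names come from the text.

```python
import random
from typing import Hashable, Iterable, Optional

# The four symptom and quality of life formats compared during alpha testing.
VISUALIZATIONS = ("line graph", "gauge", "text with cartoon", "text alone")


def presentation_orders(participant_ids: Iterable[Hashable],
                        seed: Optional[int] = None) -> dict:
    """Assign each participant an independently shuffled viewing order."""
    rng = random.Random(seed)  # seeded generator so the schedule can be reproduced
    orders = {}
    for pid in participant_ids:
        order = list(VISUALIZATIONS)
        rng.shuffle(order)
        orders[pid] = order
    return orders


if __name__ == "__main__":
    for pid, order in presentation_orders(["P1", "P2", "P3", "P4", "P5"], seed=2022).items():
        print(pid, " -> ".join(order))
```

With only five participants, independent shuffles do not guarantee that each format appears equally often in each position; a 4 x 4 Latin square, in which each format occupies each position exactly once across four orders, is a common alternative when balanced positions matter.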

All sessions were audio-recorded and transcribed using NVivo automated transcription software. Two members of the research team then reviewed the transcripts and verified them against the original recordings to confirm accuracy. Qualitative data were analyzed using general thematic analysis (40). To ensure rigor in qualitative approaches, we conducted independent coding, triangulated results with quantitative surveys, and discussed results with other stakeholders to confirm credibility. During the analysis, one coder analyzed the transcripts to identify themes, which were reviewed and confirmed by a second coder. The emerging findings were discussed, and coders independently confirmed when thematic saturation had been reached. Quantitative survey data were analyzed using basic descriptive statistics (means, standard deviations, and frequencies). Qualitative and quantitative data were triangulated, and the integrated findings were discussed with other key stakeholders (cardiologists and cardiac nurses) for veracity.
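Survey results are reported as mean (SD) or n (%). A minimal sketch of that formatting, assuming the sample standard deviation (n − 1 denominator; the section does not specify) and using hypothetical ratings for five participants rather than study data; `mean_sd` and `n_percent` are hypothetical helper names.

```python
from statistics import mean, stdev
from typing import Sequence


def mean_sd(values: Sequence[float], digits: int = 1) -> str:
    """Format as 'mean (SD)' using the sample standard deviation (n - 1 denominator)."""
    return f"{mean(values):.{digits}f} ({stdev(values):.{digits}f})"


def n_percent(flags: Sequence[bool]) -> str:
    """Format a yes/no item (e.g., correct comprehension) as 'n (%)'."""
    n = sum(bool(flag) for flag in flags)
    return f"{n} ({100 * n / len(flags):.0f}%)"


if __name__ == "__main__":
    # Hypothetical ratings and comprehension answers for five participants (not study data).
    print(mean_sd([4, 5, 3, 4, 4]))                     # 4.0 (0.7)
    print(n_percent([True, True, True, False, False]))  # 3 (60%)
```

With five participants, each person shifts a percentage by 20 points, so n (%) values in this design are best read alongside the raw counts.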

Results

Participant characteristics

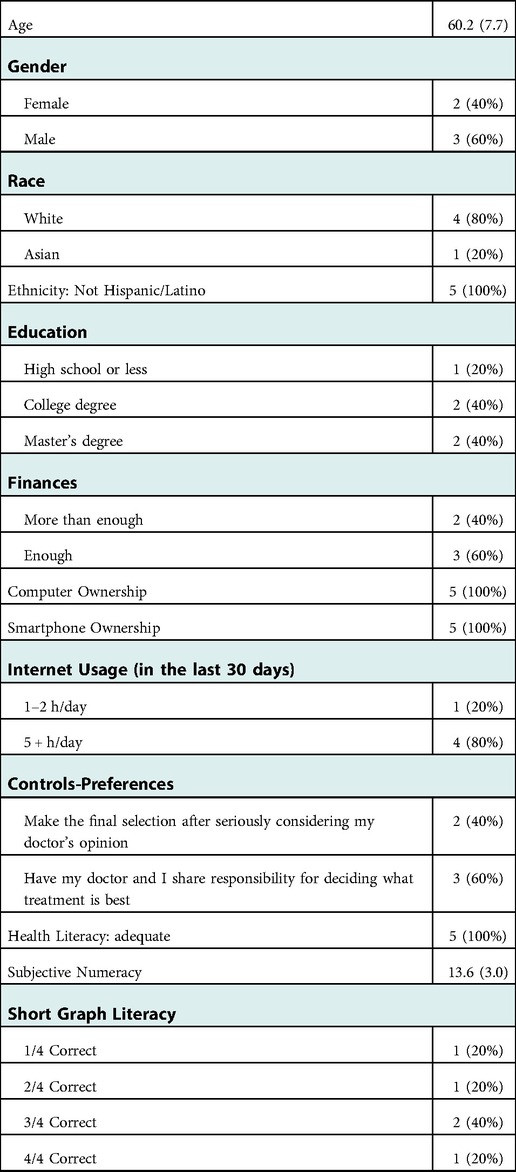

Recruitment concluded after five participants were enrolled in alpha testing because thematic saturation was reached. Participants (two female and three male) had an average age of 60.2 years (SD = 7.7) (Table 1). The majority of participants were non-Hispanic/Latino White with high education levels and high technology experience. All had adequate or more than adequate financial resources and owned a laptop and an iPhone. The majority also had a high willingness to engage in decision making with their care teams (Control Preferences Scale), high health literacy, and moderate subjective numeracy (mean score 13.6 out of 18; higher scores indicate higher numeracy), but mixed levels of graph literacy.

Acceptability and comprehension

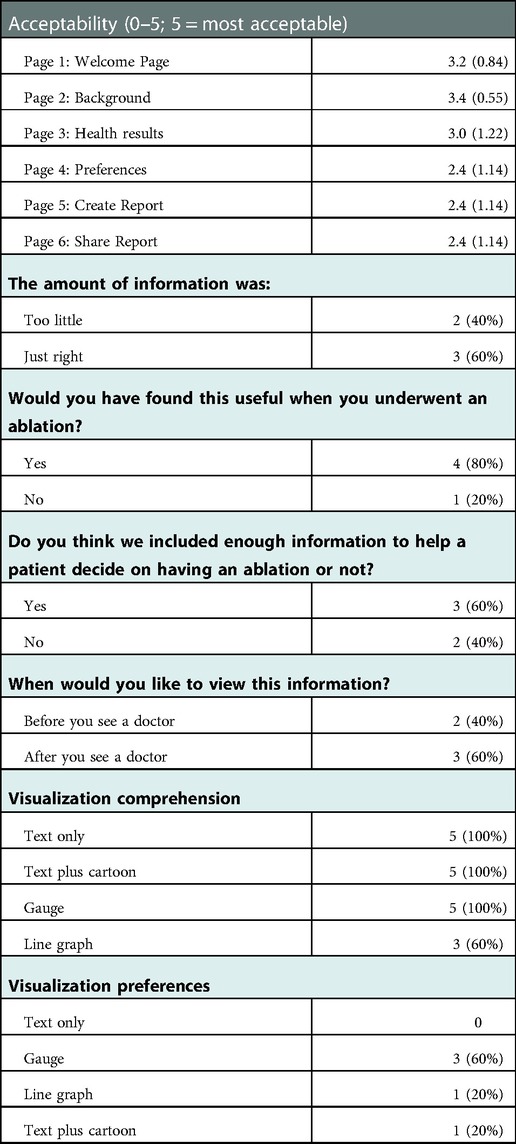

The acceptability of the decision aid and objective comprehension of the visualizations are presented in Table 2. Mean acceptability scores for the Welcome Page, Background, and Health Results screens were higher than those for the Preferences, Create Report, and Share Report screens. Four of the five participants found the decision aid helpful, but two thought it provided too little information to help a patient reach a treatment decision.

Table 2. Decision aid acceptability and visualization comprehension survey results (n = 5); mean (SD) or n (%).

Regarding objective comprehension, all five participants correctly comprehended the text only, text plus cartoon, and gauge visualizations. Three of the five participants correctly comprehended the line graph. The majority of participants (three of the five) reported that the gauge visualization was their most preferred visualization.

Qualitative feedback

Themes from the qualitative analysis are provided below. Illustrative quotes are provided in Supplementary Table S2.

Theme 1: desire for data and evidence

Most participants showed a strong desire for data and evidence, some even requesting more data than was presented in the prototypes. All participants stated they would like to understand more about where the evidence came from, with citations to the original trials or guidelines providing the evidence, and guidance on how to contextualize the evidence for themselves.

Theme 2: preference for simplified language rather than medical terms

Since all participants were already familiar with AF and had been exposed to many AF-related terms before the interview, they mostly comprehended the language used in the prototype. However, they still preferred simplified language to medical terms, and on some screens they needed more detailed explanations of certain terms.

Theme 3: more details on treatment options are required

Most participants wanted more information about the treatment options available to them. One participant stated that they would like to see more treatment options other than ablation and medication, and what could be the potential outcome if the treatment did not work. Another participant suggested that patients tended to overestimate the benefits of surgical treatment and thought it would be beneficial for the decision aid to temper expectations by providing more details on potential treatment outcomes and pushing for discussions with a provider.

Theme 4: both digital and physical versions are important

All participants responded positively to accessing the decision aid electronically, which participants noted was especially helpful when the COVID-19 pandemic caused anxiety around in-person visits. They also noted it facilitated communication around decision-making with care teams and caregivers. Email, text, website, participant portal, and mobile app were all mentioned by participants as preferred strategies for electronically accessing a decision aid. However, participants also expressed the need to obtain physical copies of results for people with lower digital literacy, and liked having an option to print results from an electronic decision aid.

Theme 5: preference to use decision aid with care teams

Despite the overall high acceptability of the decision aid, participants reported a preference to review it with their doctor or another member of their care team to weigh the risks and benefits of each option. Participants were mixed regarding whether they would prefer to view the decision aid before or after consulting with their doctor.

Theme 6: visualizations could affect participant sentiments

Visualizations provoked both positive and negative emotional responses from participants. One participant stated that certain images in the prototype, such as the heartbeat graphic on the welcome screen, caused anxiety and triggered negative sentiments. Another participant reported that viewing the cardiac outcomes caused anxiety and that cartoon images of patients caused confusion and concern. However, another participant reported that the gauge data visualization was visually appealing and lifted their mood.

Discussion

Summary of findings

In this study, we developed and evaluated prototypes of an AF decision aid using the steps outlined in the IPDAS guidelines for decision-aid development. Our evaluation of the interactive decision aid prototype revealed high acceptability of many pages of the decision aid. However, three important design challenges emerged: managing patient anxiety, visualizing symptom outcomes, and designing for broad accessibility. These design challenges will be critically important to address as the prevalence of AF continues to rise and the number of patients needing decision support around treatment options rises with it. In AF, many decision aids have been developed to help patients choose a stroke-preventing medication (anticoagulant); these decision aids have led to more SDM occurring between clinicians and patients and lowered patients' cognitive load and decisional conflict (41–44). Thus, well-designed decision aids for patients selecting a rhythm and symptom control strategy may have an equally positive impact on decisional outcomes. Below we describe these design challenges in greater detail and potential solutions to explore in future work.

Challenge 1: manage patient anxiety without withholding information

Patients in our study wanted more information, but also noted how easily they could become anxious about their cardiac status. Patients requested detailed data about treatment pathways and potential adverse outcomes. At the same time, they described worrying constantly about their cardiac status and fear of those same adverse outcomes. In some cases, viewing a graphic image of a heart in our prototypes was enough to generate worry. Prior studies have indeed reported that many patients with AF struggle with anxiety symptoms (45–47). Moreover, some studies have shown that providing too much information can, in some cases, deteriorate decision quality (48).

Therefore, there is an interesting paradox in this patient population's information needs. Our findings suggest that patients need to see more comprehensive information presented in a straightforward manner in medical decision aids. Specifically, sources of evidence for the data being displayed should be clearly cited with hyperlinks for further reading, because patients reported wanting to verify sources of data themselves. Patients also expressed a clear desire for explanations in simple, non-medical language, even when they were familiar with certain medical terms; consistent, non-medical terms have been shown to reduce patient confusion (49). As in prior studies (42, 44), patients in our study strongly preferred to discuss their treatment options with their care team rather than view the decision aid independently. The context provided by healthcare professionals could also ameliorate anxiety. Finally, visualizations should be carefully examined to avoid causing anxiety and fear.

Challenge 2: determine how to visualize symptom outcomes

Prior work has established the benefits of using visualizations to communicate evidence; patients report increased comprehension of probabilities of different outcomes occurring with each treatment option (17–23, 42). In our study, patients preferred and comprehended visualizations better than text alone. For probabilities with binary outcomes (e.g., likelihood of an adverse event occurring), studies support the use of icon arrays as the most comprehended visualization (50).

However, less is understood about the best visualizations for potential symptom and quality of life outcomes. Symptoms and quality of life are typically measured through patient-reported outcome measures (PROMs), which have different scoring mechanisms, making numerical comparisons difficult. In prior studies, visual analogies such as a gauge visualization of personal PROM scores were better comprehended than text alone or line graphs (26). However, patients in our study reported wanting to see numerical scores and felt that visual analogies oversimplify these measures and do not capture nuanced changes in PROMs over time. At the same time, only three of the five participants objectively comprehended the line graph (which displayed nuanced changes in more detail), and only one participant preferred it. Adding another layer of complexity is patients' desire to personalize data visualizations based on their health history, demographics, and other factors that may affect outcomes. Visual analogies paired with a “details on demand” approach, providing numerical symptom and quality of life scores plotted over time and customized to the patient, may be a promising visualization option that should be further explored.
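A “details on demand” gauge could work roughly as follows: rescale each PROM onto a common 0 to 100, higher-is-better range so that instruments with different scoring mechanisms can share one gauge, show only a coarse zone by default, and keep the normalized and raw scores for patients who ask for them. The min-max rescaling, the equal-width zone cut points, and the names `GaugeView` and `gauge_view` are all assumptions for illustration; they are not validated thresholds or part of the prototype.

```python
from dataclasses import dataclass


@dataclass
class GaugeView:
    zone: str          # coarse visual analogy shown by default
    normalized: float  # 0-100 value behind the gauge, revealed on demand
    raw: float         # original PROM score, revealed on demand


def gauge_view(raw: float, scale_min: float, scale_max: float,
               higher_is_better: bool = True) -> GaugeView:
    """Map a PROM score onto a 0-100 'higher is better' gauge, keeping the numbers for drill-down."""
    if scale_max <= scale_min:
        raise ValueError("scale_max must exceed scale_min")
    if not scale_min <= raw <= scale_max:
        raise ValueError("raw score is outside the instrument's range")
    normalized = (raw - scale_min) / (scale_max - scale_min) * 100
    if not higher_is_better:
        normalized = 100 - normalized  # flip instruments where lower scores are better
    # Hypothetical equal-width zones; a real aid would need clinically meaningful cut points.
    if normalized < 100 / 3:
        zone = "worse"
    elif normalized < 200 / 3:
        zone = "moderate"
    else:
        zone = "better"
    return GaugeView(zone=zone, normalized=round(normalized, 1), raw=raw)


if __name__ == "__main__":
    # Hypothetical scores on two differently scaled instruments.
    print(gauge_view(75, 0, 100))
    print(gauge_view(2, 0, 10, higher_is_better=False))
```

Extending this to scores over time would mean calling `gauge_view` once per time point and plotting the `normalized` values only when the patient expands the details.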

Challenge 3: design for broad accessibility

Inclusive design principles ensure that applications “are accessible to, and usable by, people with the widest range of abilities within the widest range of situations” (51) and should guide every user-centered design project. While we consulted gerontological design principles (15) when creating prototypes, additional user needs and user groups should be considered. For example, the unique design needs of people with disabilities should be solicited (52). Many patients engage in SDM with the support of their caregivers (53), who should also be considered end users in usability studies.

More fundamentally, choosing an electronic rather than a paper-based decision aid creates barriers to access that should be carefully considered. In general, Internet use among racial and ethnic minority, low-income, and older adult populations is steadily rising (54). However, one study found that use of digital information declined in older cohorts, although physical vs. digital disparities were significantly smaller among people with no college education (55). In another study, patients preferred printed medication information and had mixed responses to electronic information (56). In our study, patients preferred to have both physical and digital copies of our decision aid's information available. Creating printable screens of an electronic decision aid is one way to create broad accessibility for patients depending on their preferences.

Strengths and limitations

In this study, we followed IPDAS guidelines closely and were able to demonstrate effectiveness and quality in the development and evaluation of the decision aid. We leveraged several sources of widely accepted knowledge, including existing literature (for data visualization strategies), experts in atrial fibrillation and decision aids (for feedback), and industry design and heuristic standards (for our design philosophy). Our study was limited primarily by its small sample size, as thematic saturation was reached after only five participants were enrolled, which may limit the generalizability of findings. Moreover, the sample did not include a wide range of older adults in terms of age or technology comfort, which may further limit generalizability. In future work, we plan to refine the prototype based on the feedback provided and continue testing with larger samples of participants. This will be a critically important step to avoid creating intervention-generated inequities (57) and to advance the goal of creating a highly usable and useful decision aid for AF patients to be tested in clinical trials.

Data availability statement

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation.

Ethics statement

The studies involving human participants were reviewed and approved by the Weill Cornell Medicine Institutional Review Board. Written informed consent for participation was not required for this study in accordance with the national legislation and the institutional requirements.

Author contributions

JF, BW, and EZ: drafted the manuscript and participated in data collection and analysis. MRT: conceived of the study, obtained funding, led data collection, and provided critical feedback on manuscript drafts. DJS: provided critical feedback during data collection, analysis, and on the manuscript drafts. All authors contributed to the article and approved the submitted version.

Funding

This study was supported by the National Institute of Nursing Research of the National Institutes of Health (4R00NR019124-03; PI: MRT).

Acknowledgments

We would like to acknowledge the patients and experts who provided feedback and guidance for this project.

Conflict of interest

MRT is a consultant for Boston Scientific Corp. and has equity ownership in Iris OB Health, Inc. BW is employed by Oracle/Cerner. EZ is employed by Broadmoor Solutions Inc. and is a consultant for Sanofi Pasteur.

The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher's note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Supplementary material

The Supplementary Material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/fdgth.2022.1086652/full#supplementary-material.

References

1. Armstrong MJ, Shulman LM, Vandigo J, Mullins CD. Patient engagement and shared decision-making: what do they look like in neurology practice? Neurol Clin Pract. (2016) 6(2):190–7. doi: 10.1212/CPJ.0000000000000240

2. Medicines and Prescribing Centre (UK). Patient decision aids used in consultations involving medicines. Manchester, UK: National Institute for Health and Care Excellence (NICE) (2015).

3. International Patient Decision Aids Standards (IPDAS) Collaboration. Available at: http://ipdas.ohri.ca/index.html (cited 2021 Sep 23).

4. Hoffman AS, Volk RJ, Saarimaki A, Stirling C, Li LC, Härter M, et al. Delivering patient decision aids on the Internet: definitions, theories, current evidence, and emerging research areas. BMC Med Inform Decis Mak. (2013) 13(Suppl 2):S13. doi: 10.1186/1472-6947-13-S2-S13

5. Colilla S, Crow A, Petkun W, Singer DE, Simon T, Liu X. Estimates of current and future incidence and prevalence of atrial fibrillation in the U.S. adult population. Am J Cardiol. (2013) 112(8):1142–7. doi: 10.1016/j.amjcard.2013.05.063

6. January CT, Wann LS, Calkins H, Chen LY, Cigarroa JE, Cleveland JC, et al. 2019 AHA/ACC/HRS focused update of the 2014 AHA/ACC/HRS guideline for the management of patients with atrial fibrillation: a report of the American College of Cardiology/American Heart Association Task Force on Clinical Practice Guidelines and the Heart Rhythm Society in collaboration with the Society of Thoracic Surgeons. Circulation. (2019) 140(2):e125–51. doi: 10.1016/j.jacc.2019.01.011

7. Packer DL, Mark DB, Robb RA, Monahan KH, Bahnson TD, Poole JE, et al. Effect of catheter ablation vs. antiarrhythmic drug therapy on mortality, stroke, bleeding, and cardiac arrest among patients with atrial fibrillation: the CABANA randomized clinical trial. JAMA. (2019) 321(13):1261–74. doi: 10.1001/jama.2019.0693

8. Mark DB, Anstrom KJ, Sheng S, Piccini JP, Baloch KN, Monahan KH, et al. Effect of catheter ablation vs. medical therapy on quality of life among patients with atrial fibrillation: the CABANA randomized clinical trial. JAMA. (2019) 321(13):1275–85. doi: 10.1001/jama.2019.0692

9. Ali-Ahmed F, Pieper K, North R, Allen LA, Chan PS, Ezekowitz MD, et al. Shared decision-making in atrial fibrillation: patient-reported involvement in treatment decisions. Eur Heart J Qual Care Clin Outcomes. (2020) 6(4):263–72. doi: 10.1093/ehjqcco/qcaa040

10. Turchioe MR, Mangal S, Ancker JS, Gwyn J, Varosy P, Slotwiner D. “Replace uncertainty with information”: shared decision-making and decision quality surrounding catheter ablation for atrial fibrillation. Eur J Cardiovasc Nurs. (2022):zvac078. doi: 10.1093/eurjcn/zvac078

11. Apple Inc. Human interface guidelines. Available at: https://developer.apple.com/design/human-interface-guidelines/ (cited 2022 Mar 30).

12. Material Design. Material Design. Available at: https://material.io/design (cited 2022 Mar 30).

13. Nielsen J. 10 usability heuristics for user interface design. Nielsen Norman Group. Available at: https://www.nngroup.com/articles/ten-usability-heuristics/ (cited 2022 Mar 25).

14. Benda NC, Montague E, Valdez RS. Design for inclusivity. In: Sethumadhavan A, Sasangohar F, editors. Design for Health: Applications of Human Factors. Elsevier Science (2020).

15. Masterson Creber RM, Hickey KT, Maurer MS. Gerontechnologies for older patients with heart failure: what is the role of smartphones, tablets, and remote monitoring devices in improving symptom monitoring and self-care management? Curr Cardiovasc Risk Rep. (2016) 10(10):30. doi: 10.1007/s12170-016-0511-8

16. Asad ZUA, Yousif A, Khan MS, Al-Khatib SM, Stavrakis S. Catheter ablation versus medical therapy for atrial fibrillation: a systematic review and meta-analysis of randomized controlled trials. Circ Arrhythm Electrophysiol. (2019) 12(9):e007414. doi: 10.1161/CIRCEP.119.007414

17. Hawley ST, Zikmund-Fisher B, Ubel P, Jancovic A, Lucas T, Fagerlin A. The impact of the format of graphical presentation on health-related knowledge and treatment choices. Patient Educ Couns. (2008) 73(3):448–55. doi: 10.1016/j.pec.2008.07.023

18. Tait AR, Voepel-Lewis T, Brennan-Martinez C, McGonegal M, Levine R. Using animated computer-generated text and graphics to depict the risks and benefits of medical treatment. Am J Med. (2012) 125(11):1103–10. doi: 10.1016/j.amjmed.2012.04.040

19. Tait AR, Voepel-Lewis T, Zikmund-Fisher BJ, Fagerlin A. Presenting research risks and benefits to parents: does format matter? Anesth Analg. (2010) 111(3):718–23. doi: 10.1213/ANE.0b013e3181e8570a

20. Tait AR, Voepel-Lewis T, Zikmund-Fisher BJ, Fagerlin A. The effect of format on parents’ understanding of the risks and benefits of clinical research: a comparison between text, tables, and graphics. J Health Commun. (2010) 15(5):487–501. doi: 10.1080/10810730.2010.492560

21. Waters EA, Weinstein ND, Colditz GA, Emmons K. Formats for improving risk communication in medical tradeoff decisions. J Health Commun. (2006) 11(2):167–82. doi: 10.1080/10810730500526695

22. Zikmund-Fisher BJ, Witteman HO, Fuhrel-Forbis A, Exe NL, Kahn VC, Dickson M. Animated graphics for comparing two risks: a cautionary tale. J Med Internet Res. (2012) 14(4):e106. doi: 10.2196/jmir.2030

23. Fraenkel L, Peters E, Tyra S, Oelberg D. Shared medical decision making in lung cancer screening: experienced versus descriptive risk formats. Med Decis Making. (2016) 36(4):518–25. doi: 10.1177/0272989X15611083

24. Trevena L, Zikmund-Fisher B, Edwards A, Gaissmaier W, Galesic M, Han P, et al. Presenting probabilities. In: Volk R, Llewellyn-Thomas H, editors. 2012 Update of the International Patient Decision Aids Standards (IPDAS) Collaboration's Background Document. Chapter C (2012). Available at: http://ipdas.ohri.ca/resources.html

25. Waller J, Whitaker KL, Winstanley K, Power E, Wardle J. A survey study of women's responses to information about overdiagnosis in breast cancer screening in Britain. Br J Cancer. (2014) 111(9):1831–5. doi: 10.1038/bjc.2014.482

26. Turchioe MR, Grossman LV, Myers AC, Baik D, Goyal P, Masterson Creber RM. Visual analogies, not graphs, increase patients’ comprehension of changes in their health status. J Am Med Inform Assoc. (2020) 27(5):677–89. doi: 10.1093/jamia/ocz217

27. Coulter A, Stilwell D, Kryworuchko J, Mullen PD, Ng CJ, van der Weijden T. A systematic development process for patient decision aids. BMC Med Inform Decis Mak. (2013) 13 Suppl 2(Suppl 2):S2. doi: 10.1186/1472-6947-13-S2-S2

28. Arcia A, Grossman LV, George M, Turchioe MR, Mangal S, Creber RMM. Modifications to the ISO 9186 method for testing comprehension of visualizations: successes and lessons learned. In: 2019 IEEE Workshop on Visual Analytics in Healthcare (VAHC) (2019). p. 41–7

29. O’Connor AM, Cranney A. User manual: acceptability. Ottawa Hospital Research Institute (1996). Available at: https://decisionaid.ohri.ca/docs/develop/User_Manuals/UM_Acceptability.pdf (published 2002).

30. Turchioe MR, Heitkemper EM, Lor M, Burgermaster M, Mamykina L. Designing for engagement with self-monitoring: a user-centered approach with low-income, Latino adults with Type 2 Diabetes. Int J Med Inform. (2019) 130:103941. doi: 10.1016/j.ijmedinf.2019.08.001

31. Creswell JW. Qualitative inquiry and research design: Choosing among five approaches. Thousand Oaks, California: SAGE Publications (2012).

32. Reading M, Baik D, Beauchemin M, Hickey KT, Merrill JA. Factors influencing sustained engagement with ECG self-monitoring: perspectives from patients and health care providers. Appl Clin Inform. (2018) 9(4):772–81. doi: 10.1055/s-0038-1672138

33. Saunders B, Sim J, Kingstone T, Baker S, Waterfield J, Bartlam B, et al. Saturation in qualitative research: exploring its conceptualization and operationalization. Qual Quant. (2018) 52(4):1893–907. doi: 10.1007/s11135-017-0574-8

34. Degner LF, Sloan JA, Venkatesh P. The control preferences scale. Can J Nurs Res. (1997) 29(3):21–43. PMID: 9505581

35. Chew LD, Bradley KA, Boyko EJ. Brief questions to identify patients with inadequate health literacy. Fam Med. (2004) 36(8):588–94. PMID: 15343421

36. McNaughton CD, Cavanaugh KL, Kripalani S, Rothman RL, Wallston KA. Validation of a short, 3-item version of the subjective numeracy scale. Med Decis Making. (2015) 35(8):932–6. doi: 10.1177/0272989X15581800

37. Okan Y, Janssen E, Galesic M, Waters EA. Using the short graph literacy scale to predict precursors of health behavior change. Med Decis Making. (2019) 39(3):183–95. doi: 10.1177/0272989X19829728

38. O’Connor AM. Validation of a decisional conflict scale. Med Decis Making. (1995) 15(1):25–30. doi: 10.1177/0272989X9501500105

39. MacKenzie IS. Human-Computer Interaction: an Empirical Research Perspective (1st ed.). San Francisco, CA, USA: Morgan Kaufmann Publishers Inc. (2013).

40. Patton MQ. Enhancing the quality and credibility of qualitative analysis. Health Serv Res. (1999) 34(5):1189–208. PMID: 10591279

41. Thomson R, Robinson A, Greenaway J, Lowe P, DARTS Team. Development and description of a decision analysis based decision support tool for stroke prevention in atrial fibrillation. Qual Saf Health Care. (2002) 11(1):25–31. doi: 10.1136/qhc.11.1.25

42. Fatima S, Holbrook A, Schulman S, Park S, Troyan S, Curnew G. Development and validation of a decision aid for choosing among antithrombotic agents for atrial fibrillation. Thromb Res. (2016) 145:143–8. doi: 10.1016/j.thromres.2016.06.015

43. Kunneman M, Branda ME, Hargraves IG, Sivly AL, Lee AT, Gorr H, et al. Assessment of shared decision-making for stroke prevention in patients with atrial fibrillation: a randomized clinical trial. JAMA Intern Med. (2020) 180(9):1215–24. doi: 10.1001/jamainternmed.2020.2908

44. Zeballos-Palacios CL, Hargraves IG, Noseworthy PA, Branda ME, Kunneman M, Burnett B, et al. Developing a conversation aid to support shared decision making: reflections on designing anticoagulation choice. Mayo Clin Proc. (2019) 94(4):686–96. doi: 10.1016/j.mayocp.2018.08.030

45. Risom SS, Zwisler AD, Thygesen LC, Svendsen JH, Berg SK. High readmission rates and mental distress 1 yr after ablation for atrial fibrillation or atrial flutter: a nationwide survey. J Cardiopulm Rehabil Prev. (2019) 39(1):33–8. doi: 10.1097/HCR.0000000000000395

46. Joensen AM, Dinesen PT, Svendsen LT, Hoejbjerg TK, Fjerbaek A, Andreasen J, et al. Effect of patient education and physical training on quality of life and physical exercise capacity in patients with paroxysmal or persistent atrial fibrillation: a randomized study. J Rehabil Med. (2019) 51(6):442–50. doi: 10.2340/16501977-2551

47. Severino P, Mariani MV, Maraone A, Piro A, Ceccacci A, Tarsitani L, et al. Triggers for atrial fibrillation: the role of anxiety. Cardiol Res Pract. (2019) 2019:1208505. doi: 10.1155/2019/1208505

48. Chan SY. The use of graphs as decision aids in relation to information overload and managerial decision quality. J Inf Sci. (2001) 27(6):417–25. doi: 10.1177/016555150102700607

49. Gao L, Zhao CW, Hwang DY. End-of-life care decision-making in stroke. Front Neurol. (2021) 12:702833. doi: 10.3389/fneur.2021.702833

50. Zikmund-Fisher BJ, Witteman HO, Dickson M, Fuhrel-Forbis A, Kahn VC, Exe NL, et al. Blocks, ovals, or people? Icon type affects risk perceptions and recall of pictographs. Med Decis Making. (2014) 34(4):443–53. doi: 10.1177/0272989X13511706

51. Keates S. BS 7000-6:2005 Design management systems. Managing inclusive design. Guide. 2005 Feb 4. Available at: http://gala.gre.ac.uk/id/eprint/12997/ (cited 2022 Jun 29).

52. Valdez RS, Rogers CC, Claypool H, Trieshmann L, Frye O, Wellbeloved-Stone C, et al. Ensuring full participation of people with disabilities in an era of telehealth. J Am Med Inform Assoc. (2020) 28(2):389–92. doi: 10.1093/jamia/ocaa297

53. Zeliadt SB, Penson DF, Moinpour CM, Blough DK, Fedorenko CR, Hall IJ, et al. Provider and partner interactions in the treatment decision-making process for newly diagnosed localized prostate cancer. BJU Int. (2011) 108(6):851–6. doi: 10.1111/j.1464-410X.2010.09945.x

54. Internet/broadband fact sheet (2021). Pew Research Center: Internet, Science & Tech. Available at: https://www.pewresearch.org/internet/fact-sheet/internet-broadband/ (cited 2022 Sep 13).

55. Gordon NP, Crouch E. Digital information technology use and patient preferences for internet-based health education modalities: cross-sectional survey study of middle-aged and older adults with chronic health conditions. JMIR Aging. (2019) 2(1):e12243. doi: 10.2196/12243

56. Hammar T, Nilsson AL, Hovstadius B. Patients’ views on electronic patient information leaflets. Pharm Pract. (2016) 14(2):702. doi: 10.18549/PharmPract.2016.02.702

Keywords: shared decision-making, atrial fibrillation, prototype, decision aids, iterative design, health informatics, mixed-methods

Citation: Fanio J, Zeng E, Wang B, Slotwiner DJ and Reading Turchioe M (2023) Designing for patient decision-making: Design challenges generated by patients with atrial fibrillation during evaluation of a decision aid prototype. Front. Digit. Health 4:1086652. doi: 10.3389/fdgth.2022.1086652

Received: 1 November 2022; Accepted: 14 December 2022;

Published: 6 January 2023.

Edited by:

Patrick C. Shih, Indiana University Bloomington, United States

Reviewed by:

Amalie Dyda, The University of Queensland, Australia

Laurie Lovett Novak, Vanderbilt University Medical Center, United States

© 2023 Fanio, Zeng, Wang, Slotwiner and Reading Turchioe. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Meghan Reading Turchioe mr3554@cumc.columbia.edu

Specialty Section: This article was submitted to Human Factors and Digital Health, a section of the journal Frontiers in Digital Health
