- WISE Lab, Faculty of Humanities and Social Sciences, Dalian University of Technology, Dalian, China

To better understand the role of social media in disseminating scholarly articles, we analyze the relationship between social media attention and the article visitors directed by social media, using daily updated referral data for 110 PeerJ articles collected over a period of 345 days. Our results show that the social media presence of PeerJ articles is high: about 68.18% of the papers receive at least one tweet from Twitter accounts other than @thePeerJ, the official account of the journal. Social media attention increases the dissemination of scholarly articles. Altmetrics can not only complement traditional citation measures but also play an important role in increasing article downloads and promoting the impact of scholarly articles. There is also a significant correlation among the online attention from different social media platforms: articles with more Facebook shares tend to get more tweets. The temporal trends show that social media attention comes immediately after publication but does not last long, and neither do the article views directed by social media.

Introduction

Social Media Attention about Scholarly Articles

Social media, such as Facebook and Twitter, has become a critical tool in scholarly communication. In the traditional model, the dissemination of research depends on users searching for, or “pulling,” relevant knowledge from the literature base. Social media, instead, “pushes” knowledge directly to the user (Allen et al., 2013). Not only the general public but also scientists are active users of social media (Rowlands et al., 2011; Van Noorden, 2014; Veletsianos, 2016). Altmetric.com estimates that around 15,000 unique research outputs are shared or mentioned online each day (Altmetric, 2016). About 21.5% of papers receive at least one tweet overall; however, Twitter density varies considerably across fields: it is higher in Social Sciences, Biomedical and Health Sciences, and Life and Earth Sciences, but very low in Mathematics and Computer Science and in Natural Sciences and Engineering (Haustein et al., 2015). Open access is another important factor in disseminating articles on social media: open access articles receive more social media attention and more downloads than non-open access papers (Wang et al., 2015).

Relationship among Social Media Attention, Downloads, and Citation

Altmetrics can provide indications of online attention from the public. Moreover, altmetrics are to some extent produced by scholars as part of their academic communication (Lăzăroiu, 2017). The relationships between altmetrics and citations are complex. First, Mendeley readership correlates with times cited (Priem et al., 2012; Zahedi et al., 2017). Second, findings on the correlation between tweets and citations are controversial. For example, an early study found that tweets within the first 3 days after publication can predict highly cited articles (Eysenbach, 2011); however, other studies draw different conclusions. Thelwall et al. (2013) found that tweets are associated with citation counts, although their method did not measure the magnitude of any correlation between altmetrics and citations. Haustein et al. (2014) and Costas et al. (2015) found only a very weak correlation between the number of tweets and the number of citations of papers. Third, downloads and citations are positively correlated: the most downloaded articles are more likely to receive citations (O’Leary, 2008; Lippi and Favaloro, 2012). Article downloads were among the first alternative metrics introduced in digital libraries (Bollen et al., 2009; Kurtz and Bollen, 2010), while article-level link analysis was used even earlier as an altmetric indicator for research evaluation (Kousha and Thelwall, 2007a,b). As early as 2004, the BMJ made article view counts public. Nowadays, article usage data are available on article pages on many publishers’ and individual journals’ websites, including Springer Nature, Frontiers, IEEE Xplore Digital Library, ACM Digital Library, Taylor & Francis, Oxford University Press, Science, PNAS, and PeerJ (Wang et al., 2014, 2016).
The growing availability of usage data has inspired many studies from various perspectives, e.g., exploring researchers’ working habits through the times at which articles are downloaded (Wang et al., 2012) and the temporal trends of article downloads after publication (Wang et al., 2014; Duan and Xiong, 2017; Khan and Younas, 2017). Citations usually lag downloads by about 2 years, so download statistics provide a useful early indicator of eventual citations (Watson, 2009). More downloads during a limited time period indicate more citations to the article over the long term (Jahandideh et al., 2007). Yan and Gerstein (2011) found intrinsic differences among different types of article usage (HTML views and PDF downloads versus XML downloads). PDF downloads increase the probability that people will later read the article (Allen et al., 2013). The fourth aspect concerns the relationship between social media attention and article downloads. Some argue that people rarely read the articles they tweet about; for example, Haile (2014) stated that they “found effectively no correlation between social shares and people actually reading.” Using a small dataset (16 articles), Allen et al. (2013) reported that releasing a research article in the clinical pain sciences on social media increases the number of article visitors. In our previous study of PeerJ referral data, we found that referrals from social media account for a significant number of visits to articles, especially in the days shortly after publication; however, this fast initial accumulation soon gives way to a rapid decay (Wang et al., 2016). Winter (2015) found a clear association between the number of tweets and the number of views of PLOS ONE articles.

It is necessary to point out that, within altmetrics, article-level metrics are distinct from author-level metrics; the latter measure the impact of individual authors through varied indicators, including bibliometrics, usage, participation, rating, social connectivity, and composite indicators (Torres-Salinas and Milanes-Guisado, 2014; Orduña-Malea et al., 2016).

Adoption of Altmetrics

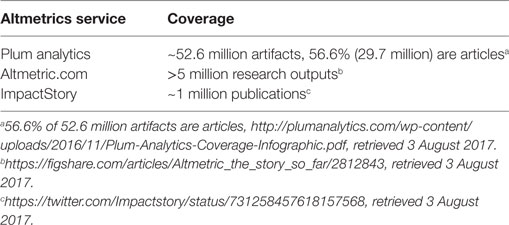

Three major services calculate altmetrics: Altmetric.com, Plum Analytics, and Impactstory. Plum Analytics covers the largest number of papers: according to its statistics, it covers 52.6 million research outputs, of which 56.6% (29.7 million) are articles.1 Altmetric.com covers over five million research outputs, and Impactstory tracks around 1 million publications, as shown in Table 1.

Publishers have recognized the importance of social media in disseminating scholarly articles. Nowadays, almost all publishers have integrated social sharing tools into their article pages, making it easy for readers to share articles on social media platforms. As two pioneers, the Journal of Medical Internet Research (in 2008) and PLoS (in 2009) started to systematically collect tweets about their articles. Many publishers now provide altmetrics statistics to readers. In 2017, PlumX from Plum Analytics was integrated into Scopus. According to Altmetric.com, over 70 publishers now display Altmetric data on their article pages, including Springer Nature, Wiley, Frontiers, and PeerJ.

Research Gap and Research Questions

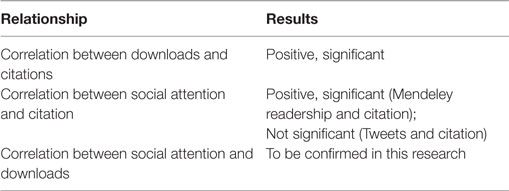

Previous studies have confirmed the correlation between downloads and citations and the correlation between Mendeley readership and citations. Some studies have also found an overall association between tweets and article views, using tweet counts and total article views; however, direct evidence is lacking, as shown in Table 2. If we know both the number of tweets about an article and the number of article views directed by those tweets, rather than only the total article views, we can confirm the causal relationship from social media attention to the directed article views.

In this study, the availability of article-level referral data, introduced in the Method section, allows us to examine this kind of causal relationship. Our research questions are as follows. First, what is the relationship between social media attention and article views? Does more social media attention bring more article visitors? Second, what is the relationship between different kinds of social media attention? Is the number of tweets an article receives associated with its activity on other social media?

Answering these questions will help validate the effect of social media in promoting the impact of scholarly articles and shed light on the mechanism of altmetrics in scholarly communication.

Method

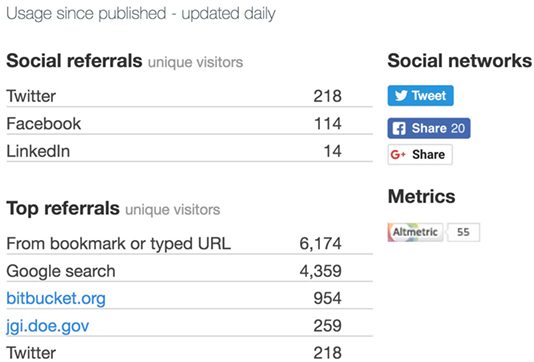

PeerJ, an open access, peer-reviewed scholarly journal, provides data on the referral sources of visitors on every PeerJ article page, as shown in Figure 1. This is unique because such data are not available on other publishers’ or journals’ websites. Although Frontiers also provides partial referral data for each Frontiers article, it only includes the top five referring sites; because a single paper usually has hundreds of referrals, data on only five referrals are far from sufficient for study. PeerJ’s metrics list all referrals of each paper, however many there are, and are updated daily starting from the day after an article’s publication, which allows us to track the digital footprints of scholarly articles.
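Referral data of this kind list referring sites rather than platforms, so counting social media-directed visitors first requires grouping referrers by platform. The following minimal Python sketch shows one way this might be done. The host-to-platform mapping `SOCIAL_HOSTS` and the helper names are hypothetical illustrations, not PeerJ’s own categories or the authors’ code.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical host-to-platform mapping, for illustration only.
SOCIAL_HOSTS = {
    "t.co": "Twitter",
    "twitter.com": "Twitter",
    "mobile.twitter.com": "Twitter",
    "facebook.com": "Facebook",
    "m.facebook.com": "Facebook",
    "l.facebook.com": "Facebook",
    "lm.facebook.com": "Facebook",
}

def classify_referrer(url):
    """Map a referrer URL to a social platform name, or 'Other'."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]
    return SOCIAL_HOSTS.get(host, "Other")

def visitors_by_platform(referrals):
    """Sum visitors per platform from (referrer_url, visitors) pairs."""
    totals = Counter()
    for url, visitors in referrals:
        totals[classify_referrer(url)] += visitors
    return totals

# Invented referral rows for one hypothetical article.
referrals = [
    ("https://t.co/abc123", 12),
    ("https://www.facebook.com/", 7),
    ("https://l.facebook.com/l.php", 3),
    ("https://www.google.com/", 40),
]
print(visitors_by_platform(referrals))
```

A lookup table keeps the classification explicit and easy to audit; in practice the list of hosts would need to be checked against the referrer names that actually appear in the data.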

The metadata and article visit data are collected from the peerj.com website, while the tweets and Facebook shares for each article are collected from Plum Analytics. We use the same dataset as in our previous study (Wang et al., 2016). Because we study the temporal trend of article visits from the first day of publication, a long publication period for the sample is not appropriate. We selected articles published from January 21, 2016 to February 18, 2016 as the research objects: a total of 110 articles, accounting for about 6.5% of all PeerJ papers published up to then. Although the dataset includes only a small fraction of the journal’s papers, it covers all the main subjects of the journal, which we consider sufficient for this study. The referral data are collected and updated daily. Compared with the 90-day time window used in our previous study (Wang et al., 2016), the time window of this study is extended to 345 days, covering January 22, 2016 to December 31, 2016.

The altmetrics data (social media attention), namely the tweets and Facebook shares for each article, are retrieved from Plum Analytics, which is now integrated into Scopus; we collected the Plum Analytics data from Scopus manually.

Finally, the metadata, referral data, and altmetrics data are processed and parsed into an SQL database that we designed for the analysis.
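The database design is not specified in the text. As an illustration only, the sketch below assumes a hypothetical SQLite schema in which each daily snapshot stores the visitors per article and referral source, and shows how social media-directed visitors could be aggregated for a single snapshot date. The table names, column names, article IDs, and rows are all invented.

```python
import sqlite3

# Hypothetical schema for illustration; the study's actual tables are not described.
SCHEMA = """
CREATE TABLE article (
    article_id INTEGER PRIMARY KEY,
    published TEXT                 -- ISO 8601 date
);
CREATE TABLE referral (
    article_id INTEGER REFERENCES article(article_id),
    snapshot_date TEXT,            -- day the counts were collected (ISO 8601)
    source TEXT,                   -- referring platform or site
    visitors INTEGER,              -- visitors from this source as of snapshot_date
    PRIMARY KEY (article_id, snapshot_date, source)
);
"""

def social_visitors_on(conn, snapshot_date, platforms=("Twitter", "Facebook")):
    """Return [(article_id, visitors)] summed over `platforms` for one snapshot."""
    placeholders = ",".join("?" for _ in platforms)
    sql = (
        "SELECT article_id, SUM(visitors) FROM referral "
        f"WHERE snapshot_date = ? AND source IN ({placeholders}) "
        "GROUP BY article_id ORDER BY article_id"
    )
    return conn.execute(sql, (snapshot_date, *platforms)).fetchall()

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO article VALUES (?, ?)",
                 [(1, "2016-01-21"), (2, "2016-02-18")])
conn.executemany("INSERT INTO referral VALUES (?, ?, ?, ?)", [
    (1, "2016-12-31", "Twitter", 120),
    (1, "2016-12-31", "Facebook", 80),
    (1, "2016-12-31", "Google", 300),   # not a social referral, so excluded
    (2, "2016-12-31", "Twitter", 15),
])
print(social_visitors_on(conn, "2016-12-31"))
```

Storing one row per article, date, and source keeps daily snapshots comparable, so the same query can be repeated for each date to build a time series.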

In this study, we use correlation analysis and one-way ANOVA. Correlation analysis is used to examine the relationship between social media attention and the number of social media-directed visitors, as well as the relationship between attention on different social media platforms. One-way ANOVA is used to test whether the number of tweets and the number of Twitter-directed visitors differ significantly between two periods: within 7 days and after 7 days of publication.

Results

Descriptive Analysis

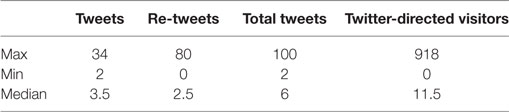

All the papers received at least two tweets. The median number of total tweets is 6, and the median number of Twitter-directed visitors is 11.5, as shown in Table 3. However, @thePeerJ, the journal’s official Twitter account, tweets each article twice: on the day of publication and on the following day. If we exclude the tweets from @thePeerJ, the results differ slightly: about 68.18% of the papers receive at least one tweet. The median number of total tweets is also six, and the median number of Twitter-directed visitors is 10.5.
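Excluding the journal’s own tweets before computing these statistics could be done as in the following sketch. It assumes, hypothetically, that each article’s tweets are available as a list of the posting accounts’ handles; `summarize_tweets` and the example data are illustrative. As a consistency check, 68.18% of 110 papers corresponds to 75 papers.

```python
from statistics import median

OFFICIAL_HANDLE = "thePeerJ"  # the journal's own Twitter account, per the text

def summarize_tweets(tweets_by_article, exclude=OFFICIAL_HANDLE):
    """Return (median tweet count, share of articles with >= 1 tweet),
    ignoring tweets posted by the `exclude` account.

    `tweets_by_article` maps a (hypothetical) article id to the list of
    account handles that tweeted it, one entry per tweet.
    """
    counts = [sum(1 for handle in handles if handle != exclude)
              for handles in tweets_by_article.values()]
    share_tweeted = sum(1 for c in counts if c >= 1) / len(counts)
    return median(counts), share_tweeted

# Invented example: each article is tweeted twice by the official account.
example = {
    "article-a": ["thePeerJ", "thePeerJ"],
    "article-b": ["thePeerJ", "thePeerJ", "reader1", "reader2"],
    "article-c": ["thePeerJ", "thePeerJ", "reader3"],
}
print(summarize_tweets(example))
```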

The paper most shared on Facebook is “The furculae of the dromaeosaurid dinosaur Dakotaraptor steini are trionychid turtle entoplastra,”2 a paleontology study. It received 196 Facebook shares and 63 tweets (ranked sixth among all papers). The most tweeted paper is “Evaluation of the global impacts of mitigation on persistent, bioaccumulative and toxic pollutants in marine fish,”3 a study about marine environmental protection. It received 100 tweets and 49 Facebook shares (ranked 20th among all papers). The most visited paper is “The effect of habitual and experimental antiperspirant and deodorant product use on the armpit microbiome,”4 a study about personal health. It received 105 Facebook shares (ranked eighth among all papers) and 97 tweets (ranked second among all papers). In general, most of the top shared and tweeted articles concern issues such as health, animals, and the environment.

Correlation Analysis

Correlation between Total Visitors and Visitors Directed from Social Referrals

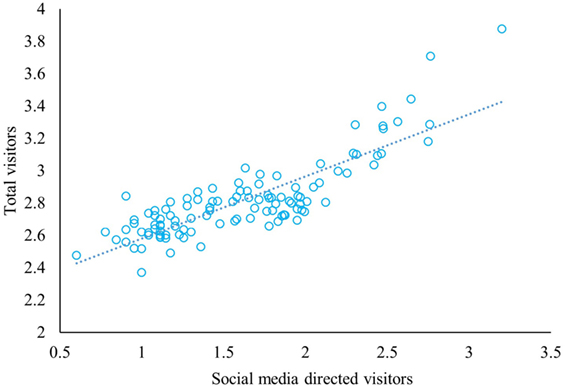

Since the data distribution is positively skewed, we apply a log transformation. After log transformation, the data (including the data in Figures 2, 3, and 4) follow a normal distribution, as tested by the Shapiro–Wilk test. Figure 2 shows the relationship between visitors directed from social referrals and total article visitors, after log transformation, as of December 31, 2016. Because the Spearman correlation test makes no assumptions about the distribution of the data and is appropriate when the variables are measured on at least an ordinal scale, we adopt Spearman correlation analysis in this research. The result indicates a strong positive association between the two variables: social media mentions are strongly and positively correlated with the resulting article visits (r = 0.785, p < 0.001). In other words, the more social media mentions an article receives, the more visitors it attracts from social media referrals.
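For reference, Spearman’s coefficient is the Pearson correlation of the ranked data, with tied values sharing their mean rank. The self-contained sketch below is a generic implementation, not the authors’ code, and it returns only the coefficient; `scipy.stats.spearmanr` also returns a p-value. Because the logarithm is strictly increasing, log-transforming the data leaves the ranks, and therefore Spearman’s coefficient, unchanged, as the last lines illustrate with invented numbers.

```python
import math

def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))

# Invented counts for five hypothetical articles.
social_visitors = [12, 40, 7, 150, 33]
total_visitors = [300, 900, 250, 2400, 1100]
rho_raw = spearman(social_visitors, total_visitors)
rho_log = spearman([math.log(v) for v in social_visitors],
                   [math.log(v) for v in total_visitors])
assert rho_raw == rho_log  # identical ranks, identical coefficient
```

If some articles had zero counts, a transformation such as `math.log1p` would be needed instead of `math.log`; the rank argument above still applies because `log1p` is also strictly increasing.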

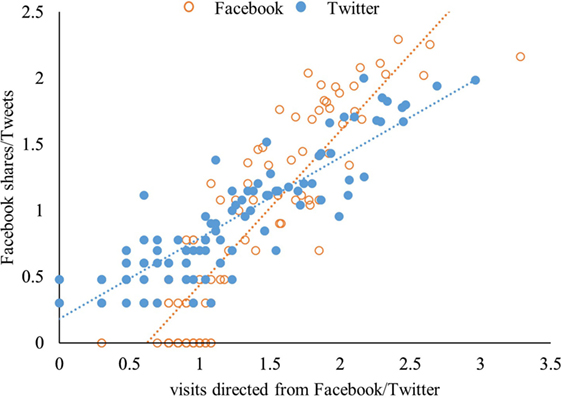

Correlation between Facebook Shares/Tweets, and Visitors Directed from Facebook/Twitter

Facebook and Twitter are the two dominant social referrers directing people to scholarly articles, accounting for more than 95% of all social referrals, and individually they are roughly equivalent to one another (Wang et al., 2016). Here, the Facebook and Twitter data are separated. In Figure 3, the blue dots represent the Twitter data, and the orange circles represent the Facebook data. The y-axis corresponds to Facebook shares or tweets, and the x-axis to the visitors directed from Facebook or Twitter. As Figure 3 shows, there is an obvious stratification between the Twitter dots and the Facebook circles. The Twitter dots lie closer to the horizontal axis than the Facebook circles, which indicates that, per mention, tweets directed more people to scholarly articles than Facebook shares did. Moreover, the correlation coefficient is r = 0.854 (p < 0.001) for Facebook and r = 0.869 (p < 0.001) for Twitter; both correlations are significant.
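One way to put a number on the stratification visible in Figure 3 is to compare, for each platform, the ratio of directed visitors to mentions. With mentions on the y-axis and visitors on the x-axis, a point lying closer to the horizontal axis corresponds to a larger ratio. The sketch below uses a hypothetical helper and invented counts, and it is not an analysis reported in this study.

```python
from statistics import median

def visitors_per_mention(mentions, visitors):
    """Per-article ratio of directed visitors to mentions (articles with 0 mentions skipped)."""
    return [v / m for m, v in zip(mentions, visitors) if m > 0]

# Invented per-article counts for four hypothetical articles.
tweets, twitter_visitors = [2, 6, 10, 0], [4, 15, 30, 1]
shares, facebook_visitors = [5, 12, 20, 1], [3, 10, 18, 0]

twitter_ratio = median(visitors_per_mention(tweets, twitter_visitors))
facebook_ratio = median(visitors_per_mention(shares, facebook_visitors))
print(twitter_ratio, facebook_ratio)
```

The median is used here rather than the mean because, as noted above, the counts are positively skewed.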

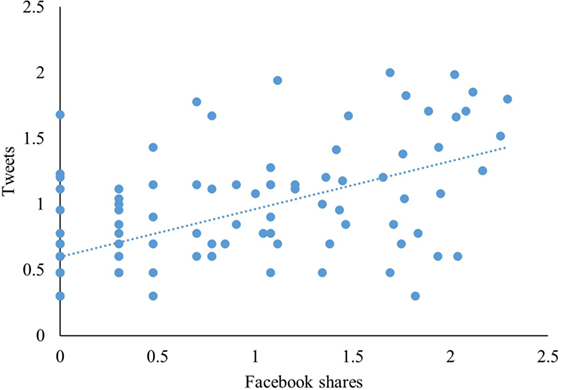

Correlation between Facebook Shares and Tweets

Do articles get equivalent attention on different social media platforms? In other words, do articles that receive more tweets also get more Facebook shares? To investigate this issue, we perform a correlation analysis of social media attention on Facebook and Twitter. Although the data in Figure 4 do not show a trend as obvious as those in Figures 2 and 3, they still indicate a positive relationship between Facebook shares and tweets.

According to the correlation analysis, Facebook shares are positively and significantly correlated with tweets, with a moderate correlation coefficient (r = 0.594, p < 0.001).

Temporal Trends

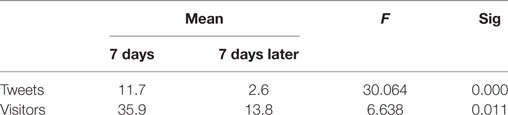

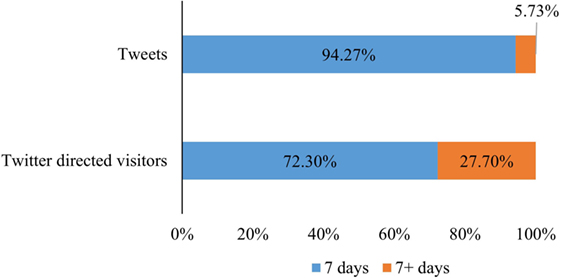

For each paper, we record the tweeting time and calculate the number of days between tweeting and publication. The tweets over time after publication show that most articles received tweets shortly after publication. Here, we set a cutoff of 7 days, as Eysenbach (2011) did, and calculate the total tweets (including tweets and retweets) within and after 7 days of article publication; correspondingly, we count the Twitter-directed visitors for each article within and after 7 days of publication. Figure 5 summarizes the data for all articles in these two periods. The 110 papers were tweeted 384 times in total; 94.27% of these tweets were received within 7 days of publication, and only 5.73% in the later period. Twitter directed 5,463 visitors to the 110 articles, 72.30% of whom came in the first 7 days after publication.
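The split into the first 7 days and the later period can be sketched as follows. The exact cutoff convention is not stated in the text, so the sketch assumes, as an illustration, that the publication date is day 0 and that “within 7 days” means days 0 to 6; the helper name and the example dates are hypothetical. The final lines check that 362 of the 384 tweets correspond to 94.27% and 22 to 5.73%.

```python
from datetime import date

def split_by_window(published, events, window_days=7):
    """Sum event counts within `window_days` of publication and afterwards.

    `events` is an iterable of (event_date, count) pairs, e.g. one pair per
    tweet with count 1, or daily Twitter-directed visitor counts.
    Assumption: day 0 is the publication date, so "early" covers days 0..6.
    """
    early = late = 0
    for event_date, count in events:
        if (event_date - published).days < window_days:
            early += count
        else:
            late += count
    return early, late

# Hypothetical article published on 2016-01-21 with five tweets.
published = date(2016, 1, 21)
tweets = [(date(2016, 1, 21), 1), (date(2016, 1, 22), 1), (date(2016, 1, 27), 1),
          (date(2016, 1, 28), 1), (date(2016, 3, 1), 1)]
print(split_by_window(published, tweets))  # → (3, 2)

# Reported totals: 384 tweets in all.
early_share = round(362 / 384 * 100, 2)
late_share = round(22 / 384 * 100, 2)
```

Passing (date, count) pairs lets the same helper handle both tweets and daily visitor counts.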

Figure 5. Distribution of total tweets and total Twitter directed visitors in two periods after publication.

One-way ANOVA is used to test whether the number of tweets differs significantly between the two periods, i.e., within 7 days and after 7 days of publication. We perform the same analysis on the number of Twitter-directed article visitors. The alpha level is set to 0.05. As shown in Table 4, both results are significant: the sig values of both tests are less than 0.05, which means that for both the number of tweets and the number of Twitter-directed article visitors, there are significant differences between the first 7 days and the later period.
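For reference, the one-way ANOVA F statistic is the ratio of the between-group mean square to the within-group mean square. The sketch below computes it in plain Python for any number of groups. The p-value comes from the F distribution with (k − 1, n − k) degrees of freedom and is omitted here; `scipy.stats.f_oneway` returns both F and p. The per-article counts are invented for illustration.

```python
def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for a one-way ANOVA over the groups."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    means = [sum(g) / len(g) for g in groups]
    grand_mean = sum(sum(g) for g in groups) / n
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-group sum of squares: spread of observations around their group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Invented per-article tweet counts in the two periods.
first_7_days = [5, 3, 8, 2, 6]
after_7_days = [0, 1, 0, 0, 2]
print(one_way_anova_f(first_7_days, after_7_days))
```

With exactly two groups, this F equals the square of the pooled two-sample t statistic.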

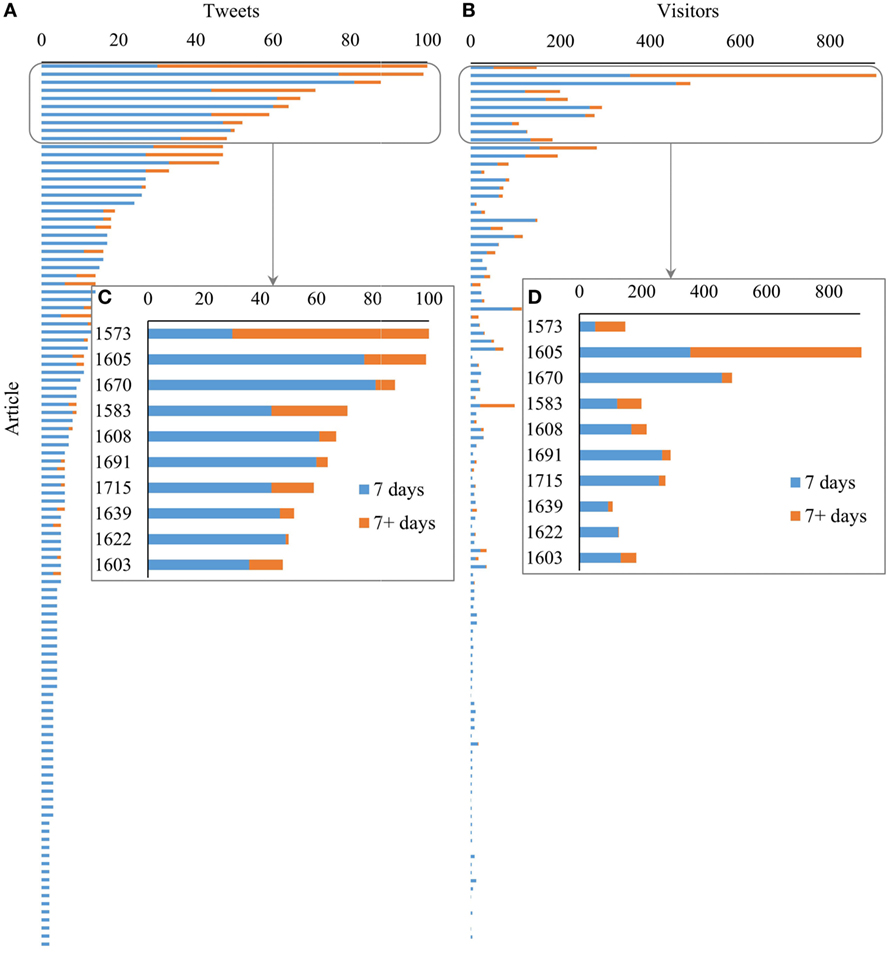

Figure 6 shows the statistics for each paper. Figure 6A shows the tweet counts, and Figure 6C enlarges the top 10 papers with the most tweets; Figure 6B shows the visitor counts, and Figure 6D enlarges the top 10 papers corresponding to Figure 6C. Each stacked bar represents the number of tweets or visitors for one article, and the bar length is determined by the paper’s total number of tweets or visitors. The data in both panels are ranked by each paper’s total number of tweets. As Figure 6A shows, for most articles the blue bar is much longer than the orange bar, which indicates that most articles received most of their tweets in the first 7 days after publication. Only one paper5 received more tweets in the late period (7+ days) than in the early period (the first 7 days). For papers with only a few tweets in particular, almost all tweets were received in the first 7 days. Figure 6B shows the Twitter-directed visitors for each paper. Generally, articles with more tweets tend to have more visitors, but there are exceptions. For example, paper 1,573 (see text footnote 5) has the most tweets but relatively few visitors directed from Twitter.

Figure 6. Distribution of tweets and Twitter-directed visitors for each paper in two periods after publication. (A) Distribution of tweets for each paper. (B) Distribution of visitors for each paper. (C) The enlargement of the top 10 papers with the most tweets. (D) The enlargement of 10 papers with the most visitors.

Conclusion and Discussion

First, social media attention increases the number of views of scholarly articles, as confirmed by the direct evidence of social media-directed article visitors. More social media attention suggests more article views, and some social media-directed visitors may not have been reached through traditional channels. Second, there are significant correlations among activity on different social media platforms: articles with more Facebook shares tend to get more tweets, and vice versa. Third, the temporal trends show that social media attention comes immediately after publication. However, what comes easily often goes quickly: social media attention around scholarly articles does not last long, and the same applies to social media-directed article views.

To better understand the role of social media in directing people to scholarly articles, this paper investigates the relationship between social media attention and article visitors at the article level. We employ unique referral data for 110 PeerJ articles, which better illustrate the relationship between social media attention and social media-directed visitors for each article. We record and analyze the daily updated visit data of each article over a period of 345 days.

Our results show that the social media presence of PeerJ articles is high. About 68.18% of the papers received at least one tweet from Twitter accounts other than the official account of the journal.

Social media brings scholarly articles to the public. Not only researchers but also many members of the general public are directed to scholarly articles by social media attention, although more evidence is needed for a deeper and more detailed analysis.

Besides complementing traditional, citation-based metrics (Priem et al., 2012), online attention can be transformed into other kinds of impact, e.g., article downloads. Social media attention increases the dissemination of scholarly articles: articles attract visitors through their social media presence, and articles with more social media attention have more visitors. Social media-directed visitors contribute significantly to total article visitors, for both Facebook and Twitter.

There are also significant correlations among the online attention from different social media platforms: articles with more Facebook shares tend to attract more tweets. Two explanations are possible. First, an article may independently attract users on Facebook and on Twitter to share it, without either platform influencing the other. Second, the user groups of Facebook and Twitter may overlap. According to a 2013 Pew Research Center report, 90% of Twitter users also use Facebook and 22% of Facebook users also use Twitter (Duggan and Smith, 2013). Visitors directed by Twitter referrals may therefore share the paper on Facebook, and vice versa.

The temporal trends show that social media attention comes soon. Most tweets (94.27%) and Twitter-directed visitors (72.30%) are concentrated in the first few days after publication, which is consistent with Eysenbach (2011), who found that the majority of tweets were sent within 7 days of article publication, especially on the day of publication and the following day. Although we set a 7-day time window in this study, we observe that tweets concentrate even earlier: the day of publication and the following day have the most tweets. However, what comes easily often goes quickly; social media attention around scholarly articles does not last long. Only 5.73% of tweets were posted after the first 7 days (up to the 345th day after publication), while 27.70% of all Twitter-directed visitors arrived in that later period.

This study has some limitations. First, only 110 articles are included in the dataset, so the small sample size may bias the results. Second, beyond the correlation between social media attention and social media-directed visitors, the causal relationship between the two factors may tell us more. Third, we only collect referral data from PeerJ, a journal that publishes articles in the specific fields of life, biology, and health sciences, so there may be disciplinary bias; the generality of the findings needs to be examined in other disciplines. Moreover, altmetrics have some disadvantages, including commercialization, data quality, missing evidence, and manipulation (Bornmann, 2014); these shortcomings may influence the results.

Author Contributions

XW, as the corresponding author, designed the work, collected the data, performed the analysis, interpreted the data, and wrote the manuscript. QL and XG helped write the manuscript. YC helped with data processing and manuscript writing.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

This research is supported by the “National Natural Science Foundation of China” (71673038, 61301227).

Funding

This research is supported by the “National Natural Science Foundation of China” (71673038, 61301227).

Footnotes

References

Allen, H., Stanton, T., Pietro, F., and Moseley, G. (2013). Social media release increases dissemination of original articles in the clinical pain sciences. PLoS ONE 8:e68914. doi: 10.1371/journal.pone.0068914

Altmetric. (2016). Altmetric: The Story So Far. Figshare. Available at: https://doi.org/10.6084/m9.figshare.2812843.v1.

Bollen, J., Van de Sompel, H., Hagberg, A., and Chute, R. (2009). A principal component analysis of 39 scientific impact measures. PLoS ONE 4:e6022. doi:10.1371/journal.pone.0006022

Bornmann, L. (2014). Do altmetrics point to the broader impact of research? An overview of benefits and disadvantages of altmetrics. J. Informetr. 8, 895–903. doi:10.1016/j.joi.2014.09.005

Costas, R., Zahedi, Z., and Wouters, P. (2015). Do “altmetrics” correlate with citations? Extensive comparison of altmetric indicators with citations from a multidisciplinary perspective. J. Assoc. Info. Sci. Technol. 66, 2003–2019. doi:10.1002/asi.23309

Duan, Y., and Xiong, Z. (2017). Download patterns of journal papers and their influencing factors. Scientometrics 112, 1761–1775. doi:10.1007/s11192-017-2456-1

Duggan, M., and Smith, A. (2013). Social Media Update 2013. Pew Research Center. Available at: http://www.pewinternet.org/2013/12/30/social-media-update-2013/

Eysenbach, G. (2011). Can tweets predict citations? Metrics of social impact based on Twitter and correlation with traditional metrics of scientific impact. J. Med. Internet Res. 13, e123. doi:10.2196/jmir.2012

Haile, T. (2014). We’ve Found Effectively No Correlation between Social Shares and People Actually Reading [Tweet]. Available at: https://twitter.com/arctictony/statuses/430008055263408128.

Haustein, S., Costas, R., and Larivière, V. (2015). Characterizing social media metrics of scholarly papers: the effect of document properties and collaboration patterns. PLoS ONE 10:e0120495. doi:10.1371/journal.pone.0120495

Haustein, S., Peters, I., Sugimoto, C. R., Thelwall, M., and Larivière, V. (2014). Tweeting biomedicine: an analysis of tweets and citations in the biomedical literature. J. Assoc. Info. Sci. Technol. 65, 656–669. doi:10.1002/asi.23101

Jahandideh, S., Abdolmaleki, P., and Asadabadi, E. B. (2007). Prediction of future citations of a research paper from number of its internet downloads. Med. Hypotheses 69, 458–459. doi:10.1016/j.mehy.2007.01.007

Khan, M. S., and Younas, M. (2017). Analyzing readers behavior in downloading articles from IEEE digital library: a study of two selected journals in the field of education. Scientometrics 110, 1523–1537. doi:10.1007/s11192-016-2232-7

Kousha, K., and Thelwall, M. (2007a). Google Scholar citations and Google Web/URL citations: a multi-discipline exploratory analysis. J. Am. Soc. Inf. Sci. Technol. 58, 1055–1065. doi:10.1002/asi.20584

Kousha, K., and Thelwall, M. (2007b). How is science cited on the Web? A classification of Google unique Web citations. J. Am. Soc. Inf. Sci. Technol. 58, 1631–1644. doi:10.1002/asi.20649

Kurtz, M. J., and Bollen, J. (2010). Usage bibliometrics. Annu. Rev. Inf. Sci. Technol. 44, 1–64. doi:10.1002/aris.2010.1440440108

Lăzăroiu, G. (2017). What do altmetrics measure? Maybe the broader impact of research on society. Educ. Philos. Theory 49, 309–311. doi:10.1080/00131857.2016.1237735

Lippi, G., and Favaloro, E. J. (2012). Article downloads and citations: is there any relationship? Clinica Chimica Acta 415, 195. doi:10.1016/j.cca.2012.10.037

O’Leary, D. (2008). The relationship between citations and number of downloads in Decision Support Systems. Decis. Support Syst. 45, 972–980. doi:10.1016/j.dss.2008.03.008

Orduña-Malea, E., Martín-Martín, A., and Delgado-López-Cózar, E. (2016). The next bibliometrics: ALMetrics (Author Level Metrics) and the multiple faces of author impact. Profesional de la Información 25, 485–496. doi:10.3145/epi.2016.may.18

Priem, J., Piwowar, H. A., and Hemminger, B. M. (2012). Altmetrics in the wild: using social media to explore scholarly impact. arXiv:1203.4745.

Rowlands, I., Nicholas, D., Russell, B., Canty, N., and Watkinson, A. (2011). Social media use in the research workflow. Learn. Pub. 24, 183–195. doi:10.1087/20110306

Thelwall, M., Haustein, S., Larivière, V., and Sugimoto, C. R. (2013). Do altmetrics work? Twitter and ten other social web services. PLoS ONE 8:e64841.

Torres-Salinas, D., and Milanes-Guisado, Y. (2014). Presence on social networks and altmetrics of authors frequently published in the journal El profesional de la información. Profesional de la Información 23, 367–372. doi:10.3145/epi.2014.jul.04

Wang, X., Fang, Z., and Sun, X. (2016). Usage patterns of scholarly articles on Web of Science: a study on Web of Science usage count. Scientometrics 109, 917–926. doi:10.1007/s11192-016-2093-0

Wang, X., Liu, C., Mao, W., and Fang, Z. (2015). The open access advantage considering citation, article usage and social media attention. Scientometrics 103, 555–564. doi:10.1007/s11192-015-1589-3

Wang, X., Mao, W., Xu, S., and Zhang, C. (2014). Usage history of scientific literature: nature metrics and metrics of Nature publications. Scientometrics 98, 1923–1933. doi:10.1007/s11192-013-1167-5

Wang, X., Xu, S., Peng, L., Wang, Z., Wang, C., Zhang, C., et al. (2012). Exploring scientists’ working timetable: do scientists often work overtime? J. Informetr. 6, 655–660. doi:10.1016/j.joi.2012.07.003

Watson, A. B. (2009). Comparing citations and downloads for individual articles at the Journal of Vision. J Vision 9, i,1–4. doi:10.1167/9.4.i

Winter, J. C. F. D. (2015). The relationship between tweets, citations, and article views for Plos One articles. Scientometrics 102, 1773–1779. doi:10.1007/s11192-014-1445-x

Yan, K., and Gerstein, M. (2011). The spread of scientific information: insights from the web usage statistics in PLoS Article-Level Metrics. PLoS ONE 6:e19917. doi:10.1371/journal.pone.0019917

Keywords: altmetrics, social media, Twitter, PeerJ, referral

Citation: Wang X, Cui Y, Li Q and Guo X (2017) Social Media Attention Increases Article Visits: An Investigation on Article-Level Referral Data of PeerJ. Front. Res. Metr. Anal. 2:11. doi: 10.3389/frma.2017.00011

Received: 23 August 2017; Accepted: 05 December 2017;

Published: 20 December 2017

Edited by:

Zaida Chinchilla-Rodríguez, Consejo Superior de Investigaciones Científicas (CSIC), Spain

Reviewed by:

Ehsan Mohammadi, University of South Carolina, United States

Enrique Orduna-Malea, Universitat Politècnica de València, Spain

Han Woo Park, Yeungnam University, South Korea

Copyright: © 2017 Wang, Cui, Li and Guo. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) or licensor are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Xianwen Wang, xianwenwang@dlut.edu.cn, xwang.dlut@gmail.com
