- ¹Building Simplexity Laboratory, Department of Architecture, The University of Hong Kong, Pokfulam, Hong Kong SAR, China

- ²School of Architecture, The Chinese University of Hong Kong, Shatin, Hong Kong SAR, China

Enhanced virtual communicative technologies extend human perception in extraordinary ways, functioning either to detach their operator from physical reality or to augment that reality. Prospects of the metaverse are progressing towards a possibly fully immersive cyberspace in which users will be entirely disconnected from their analogue physical surroundings. As we advance towards such an alternative reality, the practice-based research project discussed in this paper, titled “Resonance-In-Sight,” foresees and demonstrates the near-future of the metaverse to be a blended hyper-reality in which fiction and reality seamlessly merge into a “Mixed Reality” (MR)-based, co-urban design environment. As digital content in such an environment creatively coexists with our physical cityscape, the research question posed by this project is how such a media environment can be used as a proactive cultural educational tool to engage with and inform user audiences of otherwise challenging art-historical content. Through this demonstrator project, the article discusses and reflects on applied methods for user experience (UX) design and the development of MR mobile applications for effective mixed-reality installations. It further reports on employed “Simultaneous Localisation And Mapping” (SLAM) techniques, which are essential for merging co-virtual design content in a hybrid metaverse, and concludes with an assessment of the project’s community impact from an analysis of available user data.

1 Introduction

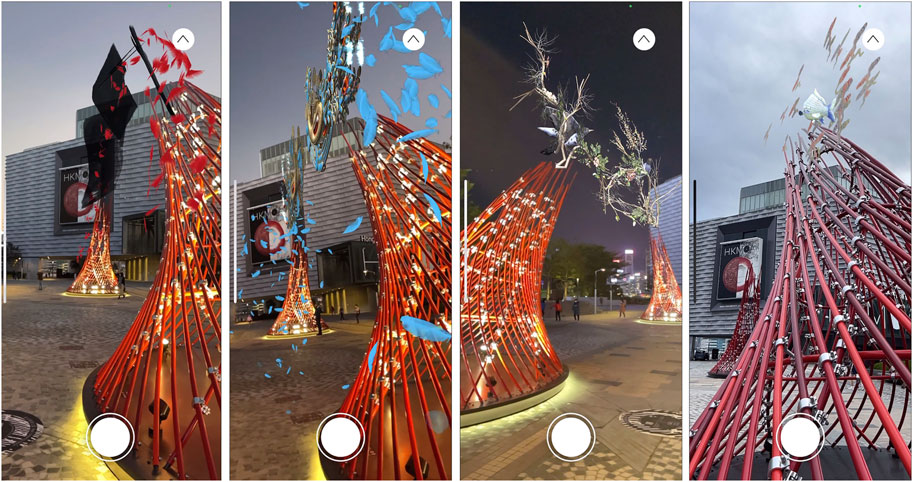

“Resonance-In-Sight” (see Figures 1, 2) is an urban mixed-reality artwork installation made for the Hong Kong Museum of Art (HKMoA) as part of the “Redefining Reality” exhibition that launched in December 2021. HKMoA is Hong Kong’s first and main public art museum and the custodian of over 17,000 items grouped in four art collections: Chinese Antiquities, Modern and Hong Kong Art, China Trade Art, and Chinese Painting and Calligraphy (HKMoA 2022a). Following a major building renovation and expansion, the institute reopened to the public on 30 November 2019 (HKMoA 2019), right before the start of the COVID-19 global health pandemic, which has profoundly disrupted Hong Kong’s urban life since January 2020 (Choy et al., 2022) and later forced the institute to close for 4 months during the project’s exhibition period, between January 7th and April 21st of 2022 (HKMoA 2022a; Bala 2022). This disruption challenged the museum’s mission statement to “connect art to people by curating a world of contrasts with the Hong Kong viewpoint, offering fresh experiences and understanding” (HKMoA 2022b), and the artwork was developed in response to this challenge.

“Resonance-In-Sight” uses the latest advancements in design, fabrication, and multimedia communication technology for its realisation and combines an analogue or physical component with a tailored virtual element, accessible through visitors’ own handheld mobile devices (see Figure 3). This setup enables the artwork to playfully engage with the public and introduce them, through virtual content, to the various highlights of the art collection over time. Both design components are essential to the experience, and the ‘resonance’ between them is required for a full understanding of the artwork. As one of the few artworks placed outside of the museum building, the project was never closed and continued its function of audience interaction even when the museum was closed.

1.1 Mixed-reality installation

We are currently moving from the internet age towards what has been called the “Augmented Age” (King 2016) or the “Metaverse” (Stephenson 1992; Meta 2021). These have been described as a three-dimensional version of the internet that can be appreciated through immersive experiences mediated by the rise of extended reality technologies (Reaume 2022). These immersive experiences may be situated in entirely virtual realities that detach users from their real-world surroundings, or they can be mixed realities in which digital content is superimposed onto our analogue real-world environment. Baudrillard labelled this “Hyper-Reality” (Baudrillard 1983), a reality in which digital content blurs with the analogue or physical and both interact with one another through contextualised personal digital content. The ideation for “Resonance-In-Sight” originated from concepts about such a hyper-reality and capitalised on the rise of extended reality wearables as vehicles that allow the digital and physical world to merge by overlaying digital information on our field of vision.

This is not a new idea: A similar concept is visible in, among others, the speculative short film “Hyper Reality” (see Figure 4) by Keiichi Matsuda (Matsuda 2016), in which the cityscape of Medellin is excessively overlaid with digital holograms. More portrayals of often dystopian future visions of such hyper-realities or metaverses can be found in artistic works like Neal Stephenson’s novel “Snow Crash” (Stephenson 1992), which coined the term metaverse; William Gibson’s novel “Neuromancer” (Gibson 1984), which popularised the term cyberspace; Lana and Lilly Wachowski’s movie “The Matrix” (Wachowski and Lilly, 1999); Philip K. Dick’s story “The Trouble with Bubbles” (Dick 1953), in which users shape and own their own worlds through “Worldcraft”; Stanley G. Weinbaum’s story “Pygmalion’s Spectacles” (Weinbaum 1935), about immersion through VR-like wearables; and Ray Bradbury’s story “The Veldt” (Bradbury 1950), which places children in a virtual reality nursery that they never want to leave.

FIGURE 4. Holograms as part of our daily lives: still from HYPER-REALITY by Matsuda (2016).

These metaverse futures are creative science fictions that raise important questions about what a more realistic future hyper-reality cityscape could become and about who controls the creation and experience of digital objects at a possibly city-wide scale. This paper postulates that today’s architects and urban designers are particularly well-placed to proactively and constructively engage in the formation of not only the physical but also the digital spectrum of our future hybrid cityscape.

As a timely demonstrator project, “Resonance-In-Sight” does precisely that: The installation mixes realities to engage, inform, and educate users through an artwork that treats its digital components with the same admiration as the more traditional physical object itself.

1.2 Context

“Resonance-In-Sight” is situated within a broader context of recent artworks that engage with hyper-reality and expand beyond purely physical methods of representation by adding a digital animated overlay. Augmented Reality (AR) artworks allow digital content to be placed in real-world surroundings, for example, through mobile AR applications that project art at any location (Acute Art 2022). As these projections either display entirely digitally designed content or refer to digitalised physical content, they do not have to be related to a specific location, which in principle allows users to display them anywhere. Mixed Reality (MR) interventions, however, reference physical objects with digital content by contextualising digital information in a specific location in space. Artists are utilising this technology through MR applications to augment specific physical artworks, for example, by using 2D paintings as trackers upon which a digital object is overlaid in static, interactive, or animated form (Art For Everyone, 2021).

While many of these artworks involve augmentation of small-scale, primarily two-dimensional objects placed in controlled indoor environments, this project positions itself at an expanded concept scale through large three-dimensional objects situated in a lively urban context. In doing so, it outperforms several examples of recently emerged pavilion-scale applications in which designers used physical structures to trigger animated 3D content through mixed reality apps on handheld mobile devices, for example, on colourful flattened 2D printed sheet walls (Belitskaja et al., 2021) or in between wooden frame structures (Goepel et al., 2022).

At building scale, digital content has been animated as an MR overlay on building facades such as that of the MUMOK museum in Vienna (Artefact, 2019), which allowed users to interact with the transformation of the main building by revealing museum-related content of current exhibitions. AR content has also been overlaid onto sports stadiums with, for example, digital dragons flying over spectators (SK Telecom 2019) or with AR-animated light shows that showcase player formations and reactions to actions on the field, like goals (Rakuten Mobile 2021).

2 Methods

“Resonance-In-Sight” is an explorative design project that was designed and built by an interdisciplinary team following a heuristic process. An iterative experimental problem-solving design procedure was set up in which digital and physical prototypes were subjected to critical self-reflection to identify and resolve gradually discovered issues. This happened through extensive discussions with all parties involved, including the museum client and its curators, artists, architects, graphic designers, app developers, steel manufacturers, and builders.

The design process originally started off with the idea to make a single, large-scale, bridge-like structure that would symbolically connect two ends of the public square. Manufacturing constraints and available budgets quickly altered this ambition and triggered a conversation about connecting both ends of the public square through a symbolic conceptual bridge, only visible virtually if people made the effort to personally engage with the artwork and discover the connection. This evolved into the idea of using a virtual component to expand on the museum’s aim to introduce and connect with visitors through its art collection. As its collection largely consisted of historical pieces, the idea arose to reinvent those and bring them to life through playful graphic animated interpretations. These interpretations would then be changed over time to encourage people to return again and again to discover new items and thus learn more about the historic art collection. This learning experience could easily be shared with visitors’ personal social networks through various social media channels already directly accessible through their handheld mobile devices. To amplify this last component, an additional series of Instagram filters related to the covered VR artworks was set up and launched at the beginning of phases 1 and 3 of the exhibition.

2.1 Physical component

The physical component of “Resonance-In-Sight” consists of a dramatic pair of elegantly curved, brightly coloured steel structures placed several meters apart to create a tangible tension between them. Their shell structure, which relies on its double curvature for greater strength, was developed to minimise material use. Inspired by fibrous light-weight structures found in nature in the skulls of birds or in eggshells, the structure is built up of interconnected layers made from the thinnest possible three-dimensionally curved steel pipes that swoop in opposite directions. The doubly curved pipe geometry was fabricated through a combination of manual craftsmanship and strategic digital fabrication technology. Moulds cut from thin sheet metal by computer numerical control (CNC) were used as guides for craftsmen to manually bend the steel pipes using traditional tools, like blowtorches and standard pipe benders. This simple system provided the necessary control to fabricate the geometry accurately, as overall geometries were developed to connect all pipes with one single standard connector: an off-the-shelf swivel shackle, thus further reducing cost. For this to be possible, all pipe interconnections needed to be spaced at precisely the same distance and perpendicular to the crossing curve axes, which were modelled through customised parametric tools. The assembly of the bent pipes was simplified through a set of reusable CNC-cut moulds that guided the exact placement of each curved member. This allowed for quick assembly and disassembly of the structure.
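The equal-spacing requirement for the pipe interconnections can be illustrated with a minimal sketch. The customised parametric tools used in the project are not described in detail, so the following is only an assumption of how such a constraint might be expressed: a pipe axis is approximated as a 3D polyline, and candidate connection points are placed at a constant distance measured along that polyline. The function name `resample_equal_arc_length` and the sample coordinates are hypothetical.

```python
import math


def resample_equal_arc_length(points, spacing):
    """Place points every `spacing` units along a 3D polyline.

    Distances are measured along the polyline, which only approximates
    the arc length of the smooth pipe axis it stands in for.
    """
    result = [points[0]]
    distance_to_next = spacing
    for start, end in zip(points, points[1:]):
        segment_length = math.dist(start, end)
        travelled = 0.0
        # Drop as many connection points on this segment as will fit.
        while segment_length - travelled >= distance_to_next - 1e-12:
            travelled += distance_to_next
            t = travelled / segment_length
            result.append(tuple(a + t * (b - a) for a, b in zip(start, end)))
            distance_to_next = spacing
        # Carry the unused remainder of this segment over to the next one.
        distance_to_next -= segment_length - travelled
    return result


# Hypothetical pipe axis: 3 units along x, then 4 units along y.
axis = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
connection_points = resample_equal_arc_length(axis, spacing=1.0)
print(len(connection_points))
```

A finer polyline gives a closer approximation of the true curve; the perpendicularity condition between crossing pipes mentioned above is not covered by this sketch.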

2.2 Virtual component

The virtual component of the artwork is overlaid on top of the physical structure in the form of superimposed graphic holograms which were carefully designed and curated to echo the museum’s collection. As these digital objects have no physical mass and need not follow the rules of physics, endless possibilities emerged for storytelling and engagement with the audiences. Certain medium-specific rules still apply, though, for the digital content to become visible, like maximum file size or polygon count, limited animation length and complexity, permissible degrees of user interaction, etc. A tailored application (or app), accessible through a smartphone or tablet computer, overlays the digital content onto the physical installation through “Simultaneous Localisation And Mapping” (SLAM) of the device in its surroundings. This technology allows the digital objects to be precisely aligned with the physical installation in the real-world environment.

2.3 Mixed-reality app experience

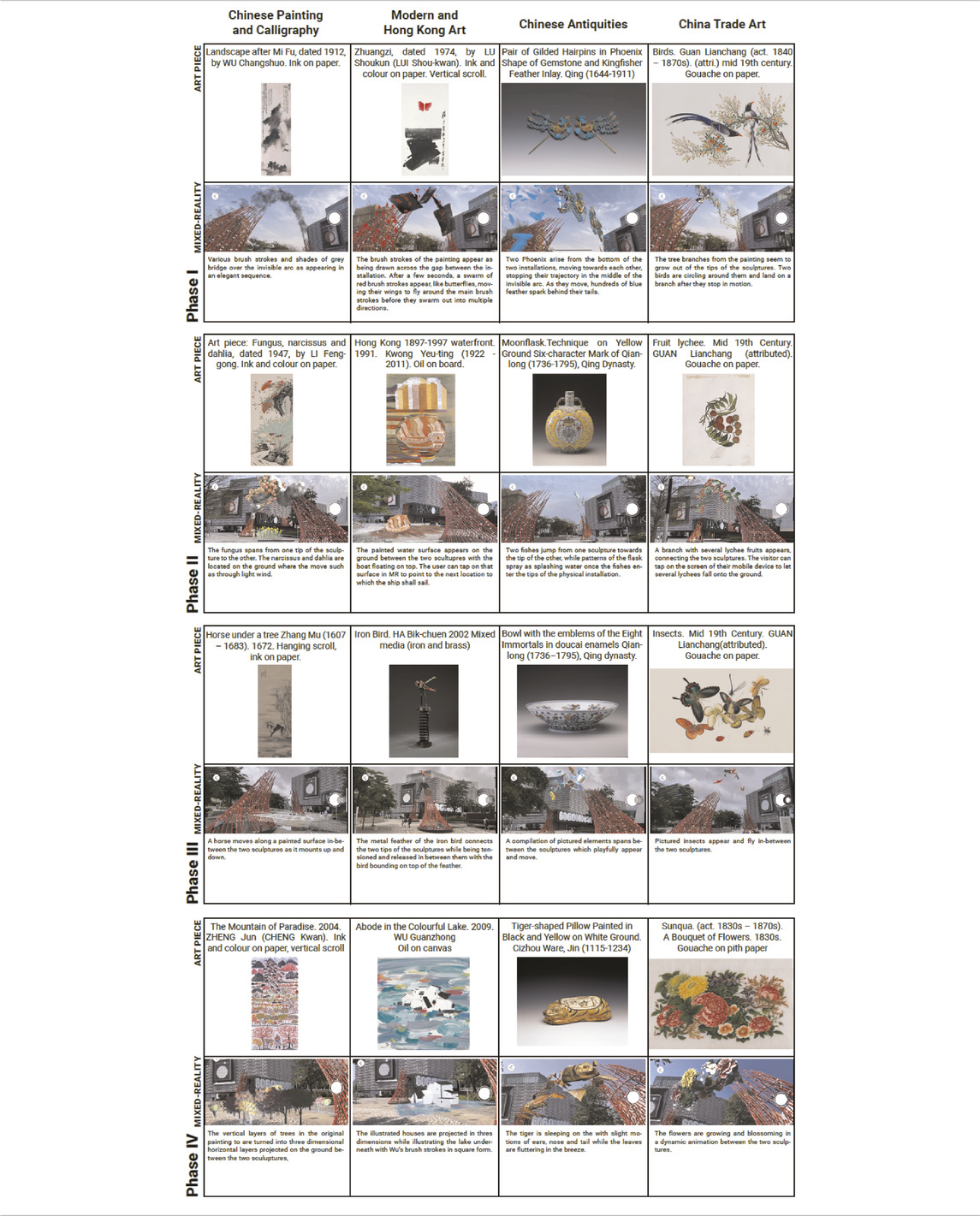

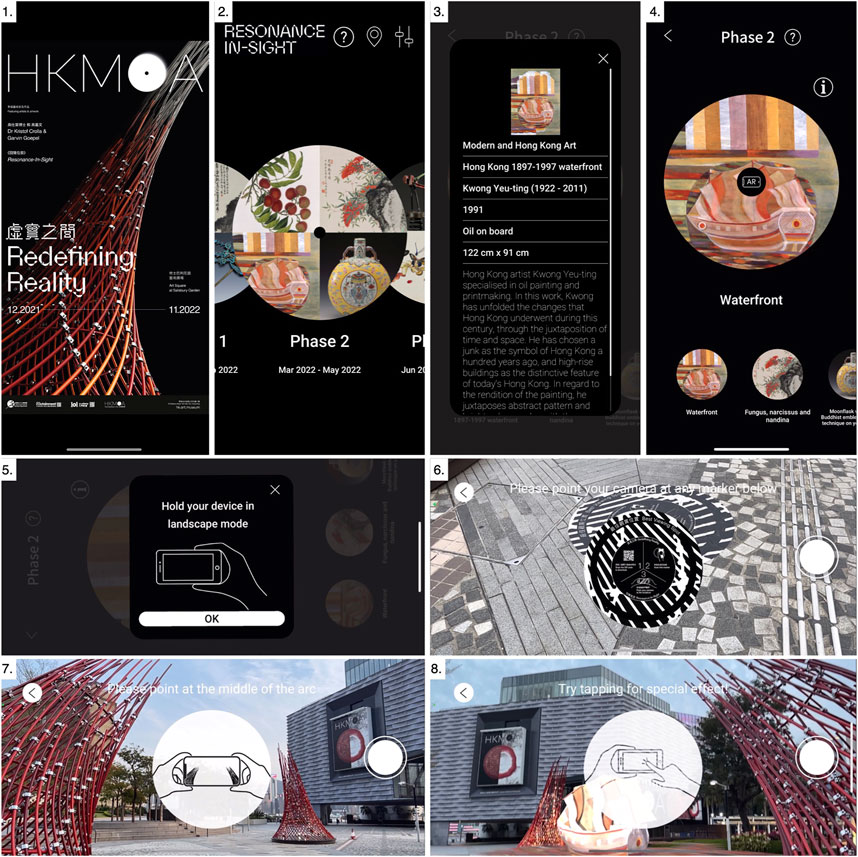

The mixed-reality app “RiS@HKMoA” was developed specifically for the project (see Figure 5). During each of the four three-month-long phases of the project, it allows users to activate four selected artwork interpretations (see Table 1). Information on the original artworks and their importance within the overall collection is included in the app to further educate the audience.

FIGURE 5. App user interface screenshots: 1. Start page; 2. Selection of Phase; 3. Detailed artwork information; 4. Tap to enter AR; 5. Instructions to rotate device from portrait to landscape mode; 6. Instructions to scan the Image Tracker; 7. Instructions to move the device towards the sculpture; 8. Special effect instruction for selected assets.

Users are guided to a download site where they can access the application on their mobile devices through a QR code incorporated in the name plaque adjoining the artwork onsite. Once installed, users can activate the app’s user interface (UI), which opens with a main page where the user can find information about the exhibition “Redefining Reality” and the location of the museum and can customise settings, including a choice of Mandarin, Cantonese, or English language. They can select one of the four project phases and can then choose from four menu items to activate the mixed-reality experience. Each option first displays an image of the original artwork from the museum collection on which the MR experience is based and provides the user with a short description of its author, date, material, and origin. Tapping the AR icon above the art piece opens a window that asks the user to rotate the device from portrait to landscape mode to activate the MR experience. The back camera of the handheld device is then turned on and graphics appear that guide the user to orient the device towards an image tracker, which can be any one of four large one-meter-radius stickers placed in front of and around the installation. Once a tracker is detected, the instructions ask the user to slowly move the device towards the tip of the physical installation, which positions the 3D assets in the correct location on the physical installation (see Figure 6).

The app is designed to allow for immediate experience sharing on common social media platforms, with the aim of attracting a wider audience to the museum and encouraging community creation. Every 3 months a new selection of four artworks is brought to life through app updates, inviting visitors to return and discover more of the museum’s unique collection.

2.4 Tools and techniques

All digital assets were created and animated in Cinema4D (Losch, 2021) and then exported in the Filmbox (.fbx) file format to the game engine Unity 3D (Unity Technologies 2020), where the main app was developed. Development of AR functionalities relied on the ARCore (Google Developers 2021) and ARKit (Apple Developer 2021) plugins for Unity 3D. Some assets were enhanced with gameplay in Unity 3D that triggers animations when an on-screen touch is detected. This was, for example, used to activate falling lychee (Fruit lychee. Lianchang) or to interactively change a ship’s position (Hong Kong 1897–1997 waterfront. Kwong Yeu-ting). The apps were developed for AR-ready Android and iOS mobile devices and launched on the Google Play store (Google 2022) and the Apple App Store (Apple 2022) in December 2021.

SLAM tracking locates the position of the mobile device in relation to the detected image tracker and correctly positions the digital objects by recognising the physical surroundings of the sculpture through a predefined set of feature points that are identified in the camera feed. The bright red colour of the installation was specifically chosen to increase colour contrast with the sculpture’s surroundings in order to obtain a more stable tracking result. Once the tracking points are correctly identified, the digital objects can be accurately positioned between the two physical sculptures.
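The positioning step described above can be illustrated with a simplified sketch. In the actual app this work is handled internally by the ARCore and ARKit plugins for Unity 3D, so the code below is not the project’s implementation. It only shows the underlying geometric idea under stated assumptions: once a tracker’s position and orientation are known in the AR session’s coordinate frame, an asset whose offset from that tracker was fixed in advance can be mapped into the same frame. The function name `tracker_to_session`, the right-handed axis convention with y pointing up, and all numeric values are hypothetical.

```python
import math


def tracker_to_session(offset, tracker_position, tracker_yaw_deg):
    """Map a point from the tracker's local frame into AR-session coordinates.

    Assumes the tracker sticker lies flat on the ground, so its orientation
    reduces to a yaw rotation about the vertical (y) axis.
    """
    yaw = math.radians(tracker_yaw_deg)
    x, y, z = offset
    # Standard right-handed rotation about the y axis.
    rotated_x = math.cos(yaw) * x + math.sin(yaw) * z
    rotated_z = -math.sin(yaw) * x + math.cos(yaw) * z
    px, py, pz = tracker_position
    return (px + rotated_x, py + y, pz + rotated_z)


# Hypothetical values: an asset placed 2 m above and 3 m in front of a tracker
# that was detected at (2, 0, 5) with no rotation.
asset_position = tracker_to_session((0.0, 2.0, 3.0), (2.0, 0.0, 5.0), 0.0)
print(asset_position)
```

Because the asset offset is defined relative to the tracker, any of the four trackers can serve as the entry point, provided each one stores its own offset to the sculpture.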

2.5 Digital content and gameplay of the four phases

Each of the four virtual reality content phases contains four selected art pieces, one from each of HKMoA’s core collections. A team of artists, graphic designers and app developers turned these into bespoke sets of assets by digitising and animating pictures, objects, or scenes from the artworks followed by their optimisation for mixed reality experience. The following compilation lists each selected art piece and the choreographed abstraction into a digital object and user gameplay.

2.5.1 Instagram filters

To further enhance social media engagement, a set of bespoke Instagram (IG) filters was additionally developed based on elements from the selected artworks used in VR (see Figure 7). These allow visitors to directly interact with the Museum’s collection anywhere and at any time. Four IG filters were developed, three of which decorate the user’s head with a flying phoenix (Kingfisher Feather Inlay, Qing dynasty 1644–1911), flowers (Birds, Guan Lianchang 1840–1870) and butterflies (Insects, Guan Lianchang 1840–1870). The decorations are designed to respond to user actions, like blinking to make flowers bloom or shaking the head to make butterflies fly away. The fourth IG filter uses the back camera of the mobile device and allows users to place an animated ship (Hong Kong waterfront, Kwong Yeu-ting 1991) on any surface in their surroundings.

FIGURE 7. Instagram filters available on HKMoA’s Instagram page. From left to right: Kingfisher Feather Inlay, Qing dynasty; Birds, Guan Lianchang; Insects, Guan Lianchang; Hong Kong waterfront, Kwong Yeu-ting.

3 Results and discussion

3.1 Results

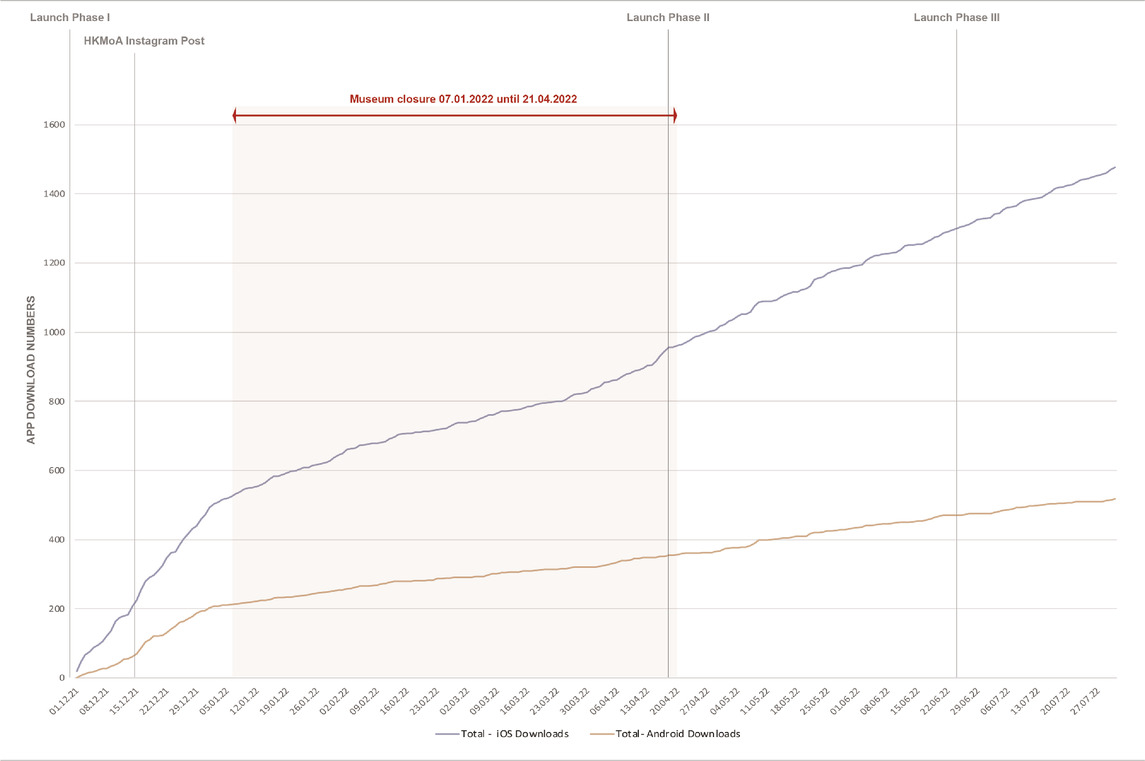

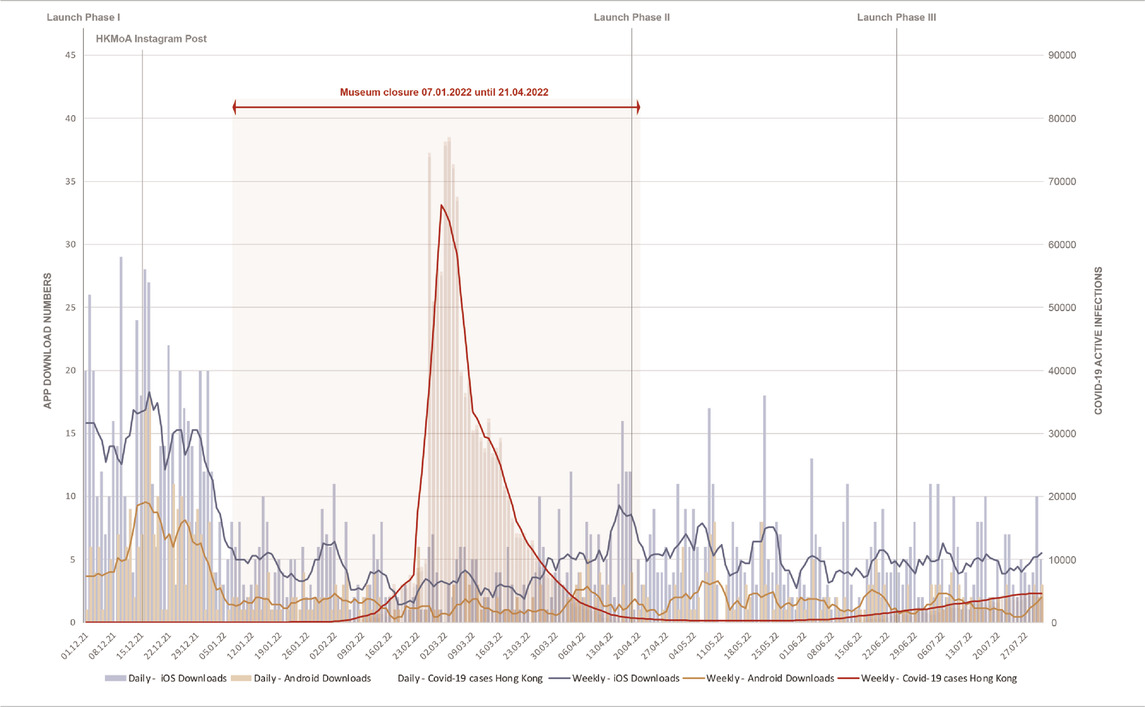

Even when the museum was forcibly closed for months on end due to COVID-related restrictions on public gathering, the freely accessible public outdoor installation continued to playfully engage with the public and expose them to the museum’s rich art collection. This is visible in the data on the number of new app downloads onto iOS (Apple) or Android-based smartphones and devices. Following enthusiastic use at the exhibition’s opening in December, a steep decline in app downloads is visible from the end of January onwards as the Omicron subvariant found its way into Hong Kong, forcing the temporary closure of the museum. Despite government publicity for public events being kept at an absolute minimum during that period, app downloads continued steadily and then picked up again once the museum reopened towards the end of April 2022.

Tables 2, 3 show nearly three times as many downloads by iOS users (1476) as by Android users (518), with a total of 1994 downloads by 31 July 2022, by which time three phases had been launched. The data shows that the opening period of the exhibition, from December until the COVID-induced museum closure in January (Ritchie et al., 2020), was the most popular period in terms of app download numbers. The combination of social restrictions by the government (Choy et al., 2022) and closure of the museum from January to late April seriously affected but did not eliminate downloads of the app (Tables 2, 3). Download numbers increased again after the COVID wave and after the museum reopened. Unfortunately, the launches of new phases seem to have had little impact on the download numbers. This may be attributed to the fact that the museum was not allowed to encourage social gatherings during these times by advertising the exhibition at a larger scale.

TABLE 2. App downloads in comparison to museum closure and COVID-19 active cases in Hong Kong. COVID-19 case data retrieved from “Our World in Data” (Ritchie et al., 2020).
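The reported download totals can be cross-checked with a few lines of arithmetic. The sketch below uses only the two platform figures stated in the text (1476 iOS and 518 Android downloads by 31 July 2022); the variable names are illustrative.

```python
ios_downloads = 1476
android_downloads = 518

total_downloads = ios_downloads + android_downloads
ios_to_android_ratio = ios_downloads / android_downloads
ios_share = ios_downloads / total_downloads

print(f"Total downloads: {total_downloads}")
print(f"iOS to Android ratio: {ios_to_android_ratio:.2f}")
print(f"iOS share of all downloads: {ios_share:.1%}")
```

The sum matches the stated total of 1994, and the ratio comes out slightly below three, so iOS accounts for roughly three quarters of all downloads.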

3.2 Discussion

This discussion section argues for a hyper-reality future vision of the metaverse. It discusses current limitations and future opportunities for designers regarding how spatial computing technologies can be applied to enhance our cityscape. It takes an optimistic stance that contrasts with frequent dystopian prognostications of such a hyper-reality.

3.2.1 Hyper-reality

Designers are faced with the challenge to actively engage in the early formation of meaningful and constructive digital objects and content in our co-urban cityscape. As spatial computing technologies are exponentially advancing with the promise of 6G networks, predictions of full submersion in a hyper-reality through, for example, smart lenses are becoming more plausible and convincing each day (Guzman 2021). Fundamental questions must be asked on where this digital content is coming from, who develops it, what purpose it will serve and who it will be presented to. Will we all be immersed in the same hyper-reality? Will digital objects become personalised or commonly shared? Which digital objects are public; which ones are private; and who owns the cloud in which these are stored? Will there be one metaverse like there is one main internet? Will there be a dark web equivalent? Or will we be able to switch through parallel metaverses of customised versions of personalised cityscapes?

Objects, digital or physical, are in any case perceived as a construct in our brains: as per Schopenhauer, the whole of the objective world is supposed to be constructed in our head (Schopenhauer 1966). A building façade in a hyper-reality does not need to be constructed as a physical ornament as it is only being perceived visually in our urban cityscape. Architects and urban planners may therefore split into two groups, one focusing on physical objects and one on the digital object overlay. Soon, the only physical objects remaining will be those with which we physically interact. Physical objects that are only perceived visually may be exchanged for digital objects.

3.2.2 “Resonance-In-Sight”

As we discover properties and necessities of digital and physical objects, one may argue for the physical component of “Resonance-In-Sight” to also become digital: The physical component does not per se require (or permit) touch, nor does it have any haptic function or properties requiring it to be of mass. Therefore, it may be replaced by a digital object.

However, there are still several arguments for its physicality: There is the technical claim that, while wearable extended reality devices are becoming increasingly popular, we are still carrying our XR media in our pockets, and manual activation of XR-enabled mobile applications is still required. Apps need reference points in space, such as a physical object or image tracker, to locate digital content. But so do we humans. Without the physical installation, there would be no spatial attractor point for us to even know that digital content exists. The physical sculpture becomes the magnet that brings people together at a physical location where they can meet and discuss the events unfolding in front of them. In doing so, communities are formed based on shared experiences fixed in physical space and time.

Future technologies could eliminate the need for such a physical component, and the argumentation for it might fade once shared digital overlays have become ubiquitous in our everyday lives. But we are not there yet, and the mixed-reality installation “Resonance-In-Sight” is therefore a construct of our time as we are moving towards a possible future hyper-reality. The project describes an in-between stage of physical reality and hyper-reality, requiring physical components to inform digital counterparts.

3.2.3 Future research

The concept of merging physical sculptures with digital content can be augmented from an art installation towards an architectural mixed-reality prototype. Future research will question how a digital overlay can enhance architecture in a hyper-reality.

Future research could also explore ways for each user to leave traces that add to the existing art piece. The exhibition of “Resonance-In-Sight” was supported by a governmental institution for which the digital content of the mobile application was carefully selected and created to be objective and politically neutral. This limited early design ideas of collective user design input, as such input could not be guaranteed to be unbiased. These traces, however, could easily be incorporated and could include AR drawings, comments, tags, selfies and so on, allowing users to manifest themselves during the exhibition period. This would potentially increase app usage per device. Collection of additional app analytics data and on-site surveys would be needed to investigate such user behaviour in future studies.

Future applications should also investigate how SLAM technologies can be further improved to eliminate the need for additional image trackers and instead only use the sculpture itself and its surroundings to localize the digital content in reference to the physical space through object- and/or scene-tracking. This would result in fewer in-app instructions and more intuitive user behaviour, as users would only need to point the camera straight towards the installation, which in turn would result in a higher first-use success rate.

4 Conclusion

“Resonance-In-Sight” showcases how contextualised and superimposed digital information has the potential to expand the design solution space of our cityscape towards a co-urban and co-virtual city design. It demonstrates how physical and digital objects may coexist in a hyper-reality and be used constructively and productively to perform an educational and community-building role. The project critically reflects on the timely notion of the necessity for digital objects as we proceed towards a more complete hyper-reality.

Data availability statement

The raw data supporting the conclusion of this article will be made available by the authors, without undue reservation.

Ethics statement

Written informed consent was obtained from the individual(s) for the publication of any potentially identifiable images or data included in this article.

Author contributions

All authors listed have made a substantial, direct, and intellectual contribution to the work and approved it for publication.

Acknowledgments

ARTWORK: 回聲在目—Resonance-In-Sight; EXHIBITION: 虛實之間—Redefining Reality; LOCATION: 梳士巴利花園 藝術廣場—Art Square at Salisbury Garden; EXHIBITION PERIOD: 12.2021—11.2022; INSTITUTE: 香港藝術館—Hong Kong Museum of Art; ARTISTS: Kristof Crolla + Garvin Goepel; PROJECT DESIGN: Laboratory for Explorative Architecture & Design Ltd. (LEAD); DESIGN TEAM: Kristof Crolla and Julien Klisz (LEAD), Garvin Goepel; GRAPHIC & AUGMENTED REALITY DESIGN: Daniel Lam & Ester Wong; AUGMENTED REALITY EXPERIENCE DESIGN & IMPLEMENTATION: Joy Aether Ltd.; STRUCTURAL ENGINEERING: Buro Happold International (Hong Kong) Ltd.; MANUFACTURING & INSTALLATION: Program Contractors Ltd. (PCL); PHOTOGRAPHY: Kris Provoost; APP DOWNLOAD LINK: iOS: https://apple.co/3rLBgAf, Android: https://bit.ly/3lNCsPL; INSTAGRAM FILTERS: https://bit.ly/3IE5zyM, https://bit.ly/3rSMXVJ; PROJECT VIDEO: https://vimeo.com/659238642

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

References

Acute Art (2022). Acute art app - virtual reality & augmented reality art production. London: Acute Art. https://acuteart.com/.

Apple (2022). App store. Hong Kong: Apple. https://www.apple.com/hk/en/app-store/.

Apple Developer, (2021). Augmented reality. Cupertino: Apple Developer. https://developer.apple.com/augmented-reality/.

Art For Everyone (2021). ArtForEveryone. Hong Kong: ArtForEveryone App - Hong Kong Museum of Art. https://www.artforeveryone.hk/.

Artefact (2019). MUMOK AR app. Vienna: Artefact. https://www.artefact.at.

Bala, Sumathi (2022). Covid in Hong Kong: Here’s a list of everything that will Be Shut down starting Tomorrow. CNBC. https://www.cnbc.com/2022/01/06/hong-kong-covid-what-will-be-shut-down-starting-jan-7.html.

Baudrillard, Jean (1983). Simulations. New York City, N.Y., U.S.A: Semiotext. Translated by Phil Beitchman, Paul Foss, and Paul Patton.

Belitskaja, Sasha, Ben, James, and Shaun, McCallum (2021). Blob House—Iheartblob. Vancouver: BLOB HOUSE by Iheartblob. https://www.iheartblob.com/blob-house/.

Choy, Gigi, Kathleen, Magramo, Nadia, Lam, and Sammy, Heung (2022). Bars, Gyms to close, 6pm Restaurant Curfew as Hong Kong Ramps up Omicron Battle. Hong Kong: South China Morning Post. https://www.scmp.com/news/hong-kong/health-environment/article/3162190/coronavirus-hong-kongs-fifth-wave-has-already (Accessed January 5, 2022).

Google (2022). Android apps on Google play. Mountain View: Google. https://play.google.com/store/games?hl=en&gl=HK (Accessed August 23, 2022).

Google Developers (2021). Build new augmented reality experiences that seamlessly blend the digital and physical worlds. ARCore. Google Developers. https://developers.google.com/ar.

Goepel, Garvin, George, Guida, and Ana Gabriela Loayza, Nolasco (2022). Self-compass. Boston: Goepel. https://www.garvingoepel.com/self-compass.

HKMoA (2019). Flagstaff House of museum of Tea Ware - Whatsnew10. Hong Kong: Hong Kong Museum of Art. https://web.archive.org/web/20191008190906/https://hk.art.museum/en_US/web/ma/whatsnew10.html.

HKMoA (2022a). Home. Hong Kong: Hong Kong Museum of Art. https://hk.art.museum/en_US/web/ma/home.html.

HKMoA (2022b). Mission statement. Hong Kong: Hong Kong Museum of Art. https://hk.art.museum/en_US/web/ma/about-us.html.

Joy Aether Ltd. (2022). RiS@HKMoA. Hong Kong. iOS: https://apps.apple.com/us/app/ris-hkmoa/id1579643324. Android: https://bit.ly/3lNCsPL.

Matsuda, Keiichi (2016). HYPER-REALITY. London. https://www.youtube.com/watch?v=YJg02ivYzSs.

Meta, dir (2021). The metaverse and how We’ll Build it together -- connect 2021, Menlo Park. https://www.youtube.com/watch?v=Uvufun6xer8.

Rakuten Mobile (2021). Rakuten mobile and Rakuten Vissel Kobe Successfully demonstrate a new spectator experience using 5G and VPS technology. Tokyo: Rakuten Mobile. https://corp.mobile.rakuten.co.jp/english/news/press/2021/1110_01/.

Reaume, Amanda (2022). What is the metaverse? Meaning and what You should know. Vancouver: Seeking Alpha. https://seekingalpha.com/article/4472812-what-is-metaverse.

Ritchie, Hannah, Edouard, Mathieu, Lucas, Rodés-Guirao, Cameron, Appel, Charlie, Giattino, Esteban, Ortiz-Ospina, et al. (2020). Coronavirus pandemic (COVID-19). Wales: Our World in Data. https://ourworldindata.org/coronavirus.

Schopenhauer, Arthur (1966). The world as will and representation. Editor E. F. J. Payne. 2nd Revised ed. (New York: Dover Publications).

SK telecom, dir (2019). [SKTelecom 5G]SK telecom uses 5G AR to bring fire-breathing dragon to baseball park, Seoul. https://www.youtube.com/watch?v=u5hQpRbHERg.

Wachowski, Lana, and Lilly, dir (1999). The Matrix. Australia: Warner Bros. (worldwide) Roadshow Entertainment.

Keywords: co-virtual, city design, participatory, XR realities, metaverse

Citation: Crolla K and Goepel G (2022) Entering hyper-reality: “Resonance-In-Sight,” a mixed-reality art installation. Front. Virtual Real. 3:1044021. doi: 10.3389/frvir.2022.1044021

Received: 14 September 2022; Accepted: 24 October 2022;

Published: 21 November 2022.

Edited by:

Marc Aurel Schnabel, Victoria University of Wellington, New Zealand

Reviewed by:

Ding Wen Bao, RMIT University, Australia

Anastasia Globa, The University of Sydney, Australia

Copyright © 2022 Crolla and Goepel. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Kristof Crolla, kcrolla@hku.hk

†These authors share first authorship
