- 1Institute of Orthopedic Research and Biomechanics, Center of Trauma Research Ulm, Ulm University Medical Center, Ulm, Germany

- 2Department of Orthopedic Surgery, Universitäts- und Rehabilitationskliniken Ulm (RKU), Ulm University Medical Center, Ulm, Germany

An exact understanding of the interplay between the articulating tissues of the knee joint in relation to the osteoarthritis (OA)-related degeneration process is of considerable interest. Therefore, the aim of the present study was to characterize the biomechanical properties of mildly and severely degenerated human knee joints, including their menisci and tibial and femoral articular cartilage (AC) surfaces. A spatial biomechanical mapping of the articulating knee joint surfaces of 12 mildly and 12 severely degenerated human cadaveric knee joints was assessed using a multiaxial mechanical testing machine. To do so, indentation stress relaxation tests were combined with thickness and water content measurements at the lateral and medial menisci and the AC of the tibial plateau and femoral condyles to calculate the instantaneous modulus (IM), relaxation modulus, relaxation percentage, maximum applied force during the indentation, and the water content. With progressing joint degeneration, we found an increase in the lateral and the medial meniscal instantaneous moduli (p < 0.02), relaxation moduli (p < 0.01), and maximum applied forces (p < 0.01), while for the underlying tibial AC, the IM (p = 0.01) and maximum applied force (p < 0.01) decreased only at the medial compartment. Degeneration had no influence on the relaxation percentage of the soft tissues. While the water content of the menisci did not change with progressing degeneration, the severely degenerated tibial AC contained more water (p < 0.04) compared to the mildly degenerated tibial cartilage. The results of this study indicate that degeneration-related (bio-)mechanical changes seem likely to be first detectable in the menisci before the articular knee joint cartilage is affected. 
Should these findings be further reinforced by structural and imaging analyses, the treatment and diagnostic paradigms of OA might be modified, focusing on the early detection of meniscal degeneration and its respective treatment, with the final aim to delay osteoarthritis onset.

Introduction

Knee joint osteoarthritis (OA) is a prevalent and disabling disease that incurs increasing socioeconomic costs worldwide (Neogi, 2013). It has incidence and progression rates of approximately 2.5% and 3.6%, respectively (Cooper et al., 2000). While total knee replacement is a highly cost-effective and quality of life-improving approach to treat patients suffering from end-stage OA (Cross et al., 2006; Ruiz et al., 2013), the early detection and consequent treatment of early or pre-OA remain major challenges (Chu et al., 2012; Ryd et al., 2015). While dependencies between knee OA and meniscal degeneration have been previously described (Bhattacharyya et al., 2003; Hunter et al., 2006; Lohmander et al., 2007; Englund, 2009; Englund et al., 2009, 2012), it remains unknown which of these entities is the cause and which is the consequence. On the one hand, studies have shown an increased OA incidence in knees affected by meniscal injuries (Lohmander et al., 2007; Englund, 2009). On the other hand, degenerated knee joints also show degenerative changes of the menisci, including tears, macerations, and tissue loss (Bhattacharyya et al., 2003; Hunter et al., 2006; Englund et al., 2009), thereby leading to controversy in the treatment of knee joint OA, as summed up by Englund et al. (2009): “A meniscal tear can lead to knee OA, but knee OA can also lead to a meniscal tear.” Both articular cartilage (AC) (Silvast et al., 2009; Marchiori et al., 2019; Ebrahimi et al., 2020) and menisci (Fithian et al., 1990; Fox et al., 2012; Son et al., 2013; Danso et al., 2017; Travascio et al., 2020a, b; Warnecke et al., 2020; Morejon et al., 2021) are highly anisotropic and inhomogeneous tissues that exhibit strong structure–function relationships that change during the course of OA degeneration.
It is well accepted that biomechanical factors like altered joint loading caused by obesity and joint malalignment, trauma, or instability contribute substantially to the initiation and progression of knee joint OA (Hochberg et al., 1995; Jackson et al., 2004; Lohmander et al., 2007; Englund, 2010; Guilak, 2011; Willinger et al., 2019). In the initiation phase, the articulating surfaces already experience structural changes (Andriacchi et al., 2004; Loeser et al., 2012), for example, softening of the AC (Hosseini et al., 2013), while the meniscus loses its elasticity (Fox et al., 2012; Tsujii et al., 2017). Osteoarthritic AC exhibits a decrease in the tensile modulus of up to 90% compared with healthy samples (Akizuki et al., 1986; Setton et al., 1999; Temple-Wong et al., 2009), while Kwok et al. (2014), who examined healthy and degenerated human menisci using nanoindentation, found that the Young’s modulus increased with progressing meniscal degeneration. These findings were supported by a more recent study utilizing shear wave elastography (Park et al., 2020). In summary, these opposing degeneration effects, with softening cartilage articulating against stiffening menisci, might result in excessive abrasion of the cartilage tissue accompanied by meniscal tissue calcification, which may finally accelerate knee joint OA progression.

Despite several authors having investigated the biomechanical properties of isolated meniscal and tibial and femoral cartilage specimens utilizing indentation mapping (Sim et al., 2014, 2017; Hadjab et al., 2018; Pflieger et al., 2019; Seidenstuecker et al., 2019), a combined complementary characterization of the articulating partners within the knee joint remains lacking. Consequently, an exact understanding of the interplay between these tissues in relation to the degenerative process is of considerable interest. Therefore, the aim of the present study was to characterize the biomechanical properties of mildly and severely degenerated human knee joints, including their menisci and tibial and femoral AC. Specifically, we wanted to explore the question of whether the degeneration of one of the structures might be the cause of the degenerative processes of the other structure(s), as hypothesized by Englund et al. (2009).

Materials and Methods

Study Design

A spatial biomechanical mapping of the articulating knee joint surfaces of 12 mildly and 12 severely degenerated knee joints was assessed using a multiaxial mechanical testing machine. To do so, indentation stress relaxation tests were combined with thickness measurements at the lateral and medial menisci and the AC of the tibial plateau and femoral condyles to finally calculate the instantaneous modulus (IM), the modulus after a relaxation time of 20 s (Et20), the maximum applied load (Pmax), and the relaxation percentage over the maximum stress (Δσrelax). Additionally, we measured the water content of the tissues and correlated it to the biomechanical data. Non-parametric statistical analyses and correlation analyses were performed to interpret the results.

Specimen Preparation

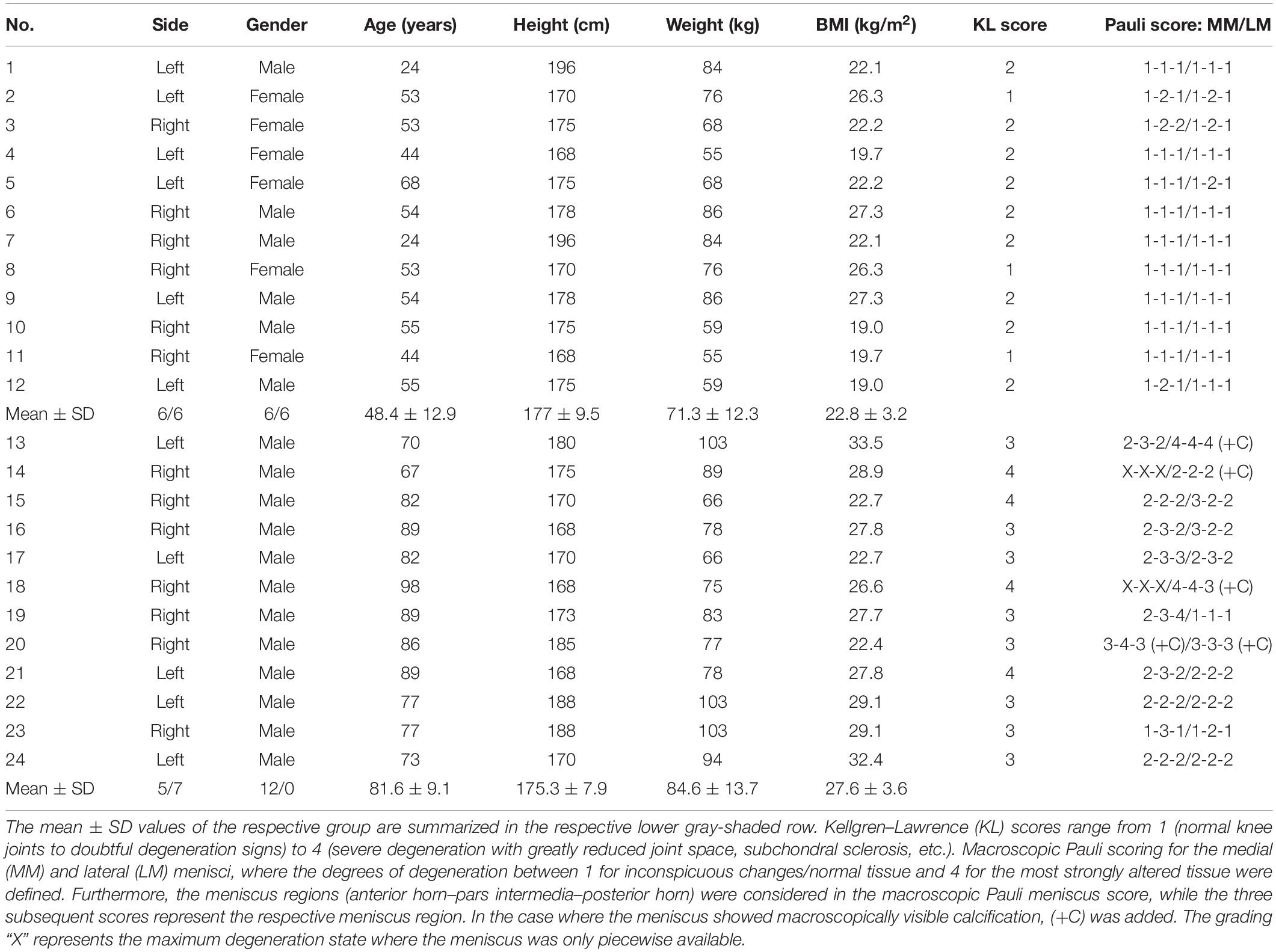

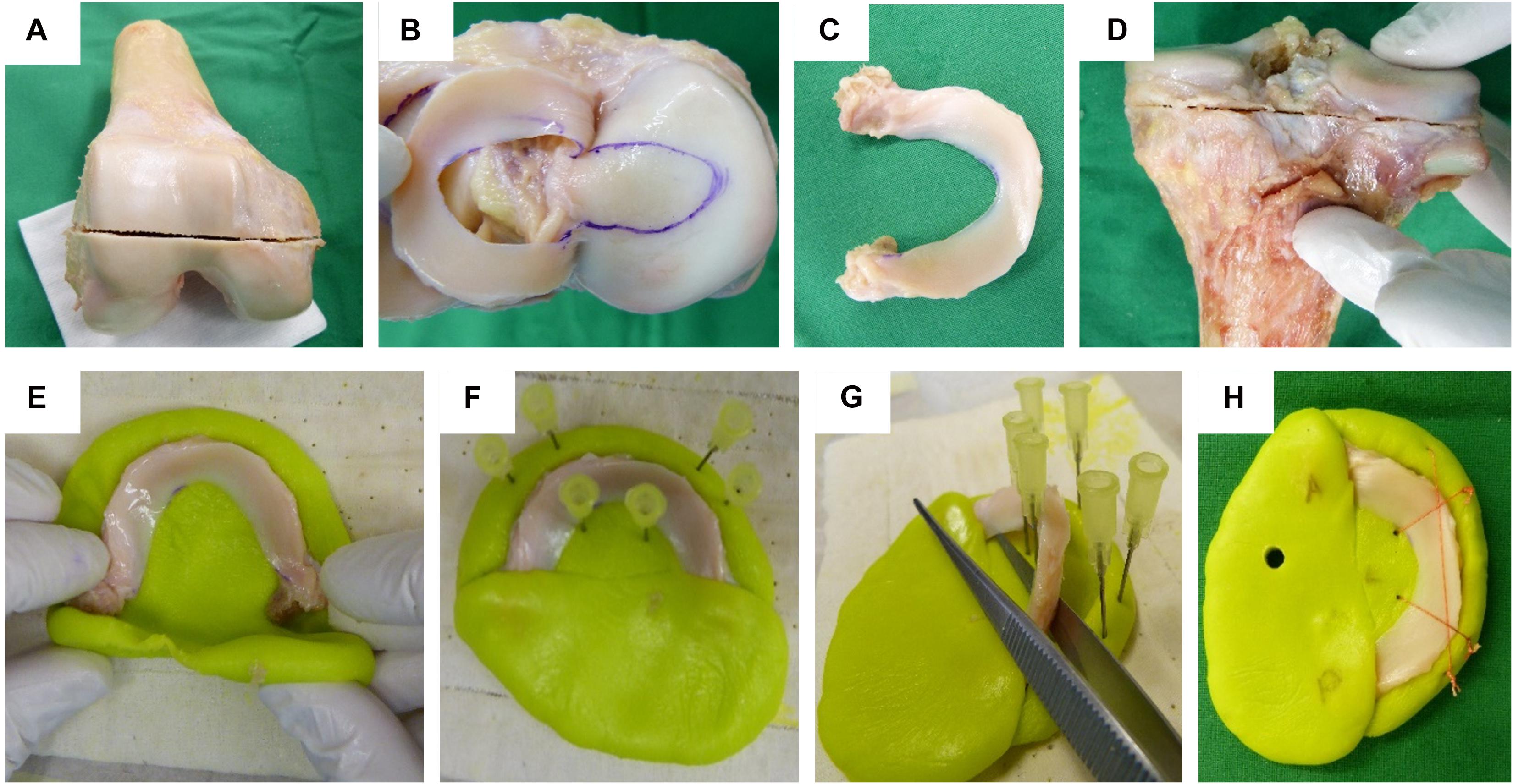

Following IRB approval (no. 70/16, Ulm University, Germany), 24 human knee joints were obtained from an official tissue bank (Science Care Inc., Phoenix, AZ, United States). On the basis of Kellgren–Lawrence (KL) grading (Kellgren and Lawrence, 1957) by three independent orthopedic surgeons, the joints were equally assigned to a mild (KL 1–2) and a severe (KL 3–4) degeneration group (Table 1). Prior to dissection, the joints were thawed at room temperature and the skin and soft tissues were removed. Following separation of the femur from the tibial plateau with the menisci remaining attached, the distal part of the femur was cut through a horizontal plane with a band saw to permit mounting on a sample holder (Figure 1A), which was then installed in a testing chamber filled with phosphate-buffered saline (PBS). Then, the menisci were additionally graded using the macroscopic Pauli scoring (Pauli et al., 2011) (Table 1). Subsequently, the meniscus-uncovered cartilage-to-cartilage contact (CtC) area on the lateral and medial compartments of the tibial plateau was marked using a tissue marker (Figure 1B). Following careful detachment of the coronary menisco-tibial ligaments and the connection to the medial collateral ligament of the medial meniscus, both menisci were chiseled out at their anterior and posterior root attachments (Figure 1C). The remaining tibial plateau was cut at a distance of 15 mm parallel to the joint line using a band saw (Figure 1D). Throughout the preparation process, the specimens were kept moist with PBS. During pretests, we recognized a significant circumferential deflection of the menisci when applying normal loading to their femoral surface. Therefore, each meniscus was embedded at its bony attachments by means of a customized polymethylmethacrylate (PMMA; Technovit 3040, Heraeus-Kulzer GmbH, Hanau, Germany) cast, which confined the meniscus along its circumference (Figures 1E,F). 
During the exothermic setting of the PMMA, special care was taken to avoid any heat-related impact on the menisci (Figure 1G). Furthermore, a trapezoidal interlinked suture material (Figure 1H) secured the menisci in the axial direction and prevented them from lifting off during the thickness measurements. After the mechanical mapping and thickness measurements were completed, cylindrical samples were extracted from the center of each anatomical region of the menisci [anterior horn (AH), pars intermedia (PI), and posterior horn (PH)] using a biopsy punch (Ø = 4.6 mm, GlaxoSmithKline GmbH, Munich, Germany) (Martin Seitz et al., 2013; Warnecke et al., 2020). The AC samples of the tibial plateau were extracted by means of a trephine drill (Ø = 5.0 mm) at the subregions covered by the corresponding meniscal regions (AH, PI, and PH) and at the CtC area. In a further preparation step, the cartilage layer was separated from the subchondral bone using a previously introduced cutting device (Martin Seitz et al., 2013). Immediately after the preparation process, the wet weight of both the meniscus and AC samples was determined using a precision scale (AC120S, Sartorius AG, Göttingen, Germany). All samples were then stored in special containers, lyophilized (Lyovac GT2, Finn-Aqua Santasalo-Sohlberg GmbH, Cologne, Germany), and dry weighed, and the respective water content was determined.
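
The water content determination from the wet and dry weights reduces to a single ratio. The following is a minimal Python sketch, assuming that the water content is expressed relative to the wet weight (the normalization is not stated explicitly in the text); the function name and the example weights are hypothetical.

```python
def water_content_percent(wet_weight_mg: float, dry_weight_mg: float) -> float:
    """Water content in percent of the wet weight (assumed normalization)."""
    if wet_weight_mg <= 0:
        raise ValueError("wet weight must be positive")
    if not 0 <= dry_weight_mg <= wet_weight_mg:
        raise ValueError("dry weight must lie between 0 and the wet weight")
    # Mass lost during lyophilization is attributed entirely to water.
    return (wet_weight_mg - dry_weight_mg) / wet_weight_mg * 100.0


# Hypothetical sample: 40.0 mg wet, 10.0 mg after lyophilization.
print(water_content_percent(40.0, 10.0))  # 75.0
```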

Figure 1. Human cartilage and meniscus sample preparation: (A) Horizontal plane cut at the distal femur. (B) Highlighting the cartilage-to-cartilage contact area at the tibial plateau using a permanent tissue marker. (C) Separated lateral meniscus with its intact anterior and posterior root attachments. (D) Horizontal plane cut at the proximal tibia. Meniscus cast preparation steps: (E) The meniscus was initially embedded at a time point when the polymethylmethacrylate (PMMA) had a rubber-like texture. (F) Insertion of six needles to allow for a later positioning of security sutures. (G) During the exothermic hardening reaction of the PMMA, the meniscus body was temporarily removed. (H) Final meniscus sample including interlinked security sutures and anterior (A) and posterior (P) cast markings.

Biomechanical Testing

A multiaxial mechanical tester (MACH-1 v500css, Biomomentum Inc., Laval, QC, Canada) was used to biomechanically map the articulating surfaces of the menisci, tibial plateau, and femoral condyles of each of the 24 donor knees. Firstly, the built-in camera registration system was used to define repeatable patterns of the measurement points for each surface: the lateral and medial menisci were subdivided into their anatomical subregions (AH, PI, and PH); the tibial plateau was divided into a lateral and a medial CtC area and into the areas that were covered by the corresponding parts of the menisci (AH, PI, and PH). Finally, the distal femur was divided into its lateral and medial condyles. While the AC structures allowed for repeatable mapping grid patterns (tibial plateau compartment: n = 7 × 4; femoral condyles: n = 3 × 6; see Supplementary Material) with similar distances between each measurement point, the lateral and medial menisci received individual grid patterns for the biomechanical mapping. This was necessary because each meniscus was different in its anatomical appearance and degeneration state. In some cases, additional points were manually added. However, a minimum of four measurement points was defined for each meniscal subregion (AH, PI, and PH). An established algorithm (Sim et al., 2017) was applied to create the biomechanical mapping of the respective surfaces: firstly, the surface angle of the structure to be examined is determined. Secondly, according to this surface angle, a normal force is generated by correspondingly moving the three axes of the materials testing machine in a highly accurate coordinated manner. In doing so, a non-destructive indentation test (Table 2) utilizing a spherical indenter (diameter = 2 mm) was conducted to determine the relaxation behavior of each structure. Subsequently, the indenter was replaced with a 26-G 3/8-in. 
Precision-Glide intradermal bevel needle (BD, Franklin Lakes, NJ, United States) and inserted into the tissue at a test velocity of 0.5 mm/s and a stop criterion of 8 N while recording the force and indentation depth. Once the needle penetrated the tissue surface, the force started to increase. Reaching the subchondral bone or the underlying PMMA cast of the meniscus, respectively, the force response showed a sudden steep increase until the stop criterion was attained. The thickness (h) of the cartilage and menisci was then determined as the needle penetration depth between the point of first force increase and the sudden steep force increase (Jurvelin et al., 1995). Finally, this value was corrected for the surface angulation at the tested position by the cosine of the previously determined angle during the normal indentation procedure (Sim et al., 2017). On the basis of a mathematical least squares fitting of an elastic model in indentation (Hayes et al., 1972) on the experimental data, the IM at each measurement point was determined using the following equation:
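
For orientation, the elastic indentation solution of Hayes et al. (1972) is commonly written in the following form, with P the applied load, H the indentation depth, ν the Poisson's ratio, a the radius of the contact region, h the local tissue thickness, and κ a correction factor depending on a/h and ν. It is given here as an assumed form consistent with these symbols, not as a verbatim reproduction of the typeset equation:

```latex
\mathrm{IM} = \frac{P}{H} \cdot \frac{1 - \nu^{2}}{2\, a\, \kappa\!\left(\frac{a}{h},\, \nu\right)}
```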

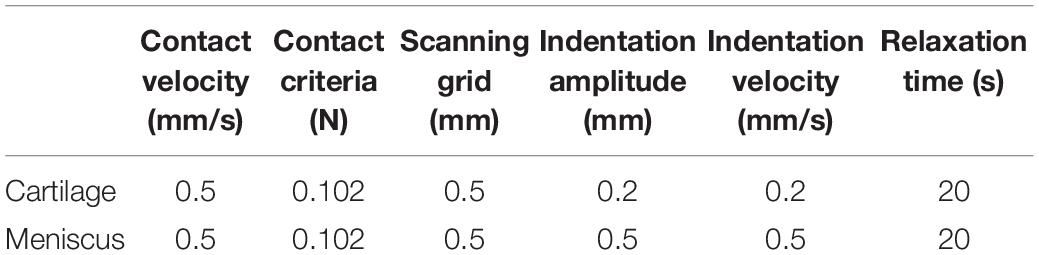

Table 2. Setup data to perform the biomechanical mapping of the cartilaginous (femur and tibia) and meniscus surfaces.

where P is the applied load in newtons, H the indentation depth in millimeters, ν the Poisson’s ratio, a the radius of the contact region in millimeters, hi the thickness at each position in millimeters, and κ a correction factor that depends on a/h and ν.
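
The thickness used in the modulus calculation comes from the needle measurement described above. The following is a minimal Python sketch of that step, assuming that the tissue surface is detected with a small force threshold and the bone or PMMA contact with a slope threshold, and that the surface angle is measured against the needle axis. The function name, both threshold values, and the example curve are hypothetical and do not come from the study.

```python
import math


def needle_thickness_mm(depth_mm, force_n, contact_force_n=0.05,
                        steep_slope_n_per_mm=20.0, surface_angle_deg=0.0):
    """Thickness from a needle force-depth curve, corrected for surface angle."""
    # First sample at which the force rises above the contact threshold.
    start = next((i for i, f in enumerate(force_n) if f > contact_force_n), None)
    if start is None:
        raise ValueError("no tissue contact found")
    # Last sample before the local slope exceeds the steep-slope threshold.
    end = None
    for i in range(start + 1, len(depth_mm)):
        slope = (force_n[i] - force_n[i - 1]) / (depth_mm[i] - depth_mm[i - 1])
        if slope > steep_slope_n_per_mm:
            end = i - 1
            break
    if end is None:
        raise ValueError("no steep force increase found")
    needle_path = depth_mm[end] - depth_mm[start]
    # Project the needle path onto the surface normal (cosine correction).
    return needle_path * math.cos(math.radians(surface_angle_deg))


# Hypothetical curve: contact at 1.0 mm, steep increase after 3.0 mm.
depth = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.1]
force = [0.0, 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 8.0]
print(needle_thickness_mm(depth, force, surface_angle_deg=0.0))
```

With a surface angle of 0° the needle path is returned unchanged; any tilt shortens the reported thickness by the cosine of the angle.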

In accordance with Seidenstuecker et al. (2019), we identified the Pmax, which equals the force registered by the load cell upon reaching the AC- or meniscus-specific indentation amplitude. The initial viscous response of the tissues was examined by the determination of the Et20:

where σt20 is the stress in megapascal and εi is the strain in millimeters per millimeter. Pt20 is the load in newtons after the relaxation time of 20 s, and Asseg is the resultant area of the spherical indentation segment in square millimeters after reaching the corresponding indentation depth (H). The Δσrelax in percent (Coluccino et al., 2017) was determined to gain further insight into the viscoelastic response of the biphasic materials:

where σmax is the maximum stress in megapascal.
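
The two relaxation parameters can be illustrated with a short Python sketch built only from the symbol definitions above. Several points are assumptions rather than statements from the text: Asseg is taken as the curved surface of a spherical cap (2πRH) for the 2 mm diameter indenter, the strain as εi = H/hi, the stresses as load divided by Asseg, Et20 as σt20/εi, and Δσrelax as (σmax − σt20)/σmax × 100. All names and example values are hypothetical.

```python
import math

INDENTER_RADIUS_MM = 1.0  # spherical indenter with a diameter of 2 mm


def cap_area_mm2(indentation_depth_mm, radius_mm=INDENTER_RADIUS_MM):
    """Curved surface of the spherical cap in contact (assumed form of Asseg)."""
    return 2.0 * math.pi * radius_mm * indentation_depth_mm


def relaxation_metrics(p_max_n, p_t20_n, indentation_depth_mm, thickness_mm):
    """Return (Et20 in MPa, relaxation percentage) under the stated assumptions."""
    area = cap_area_mm2(indentation_depth_mm)
    sigma_max = p_max_n / area   # N/mm^2 equals MPa
    sigma_t20 = p_t20_n / area
    strain = indentation_depth_mm / thickness_mm  # assumed: depth over thickness
    e_t20 = sigma_t20 / strain
    relax_percent = (sigma_max - sigma_t20) / sigma_max * 100.0
    return e_t20, relax_percent


# Hypothetical measurement point: 1.0 N peak load, 0.5 N after 20 s,
# 0.2 mm indentation depth, 2.0 mm tissue thickness.
e_t20, relax = relaxation_metrics(1.0, 0.5, 0.2, 2.0)
print(f"Et20 = {e_t20:.2f} MPa, relaxation = {relax:.1f}%")
```

Because both stresses share the same contact area, the relaxation percentage depends only on the ratio of the two loads, whereas Et20 scales with the chosen area definition.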

Statistical Analysis

On the basis of the results of a similar study investigating IM differences between healthy and degenerated tibial cartilage (Seidenstuecker et al., 2019), an a priori sample size calculation [G*Power 3.1 (Faul et al., 2007): α = 0.05, Power (1 − β) = 0.95, effect size (dz) = 4.71, n = 6] was performed to ensure sufficient statistical power of the study. Because no data exist on IM measurements on meniscus samples, the medical epidemiology and statistics department recommended increasing the sample size to n = 12 for both groups, resulting in a total sample size of n = 24. The data were tested for Gaussian distribution using the Shapiro–Wilk test, which indicated that they were not normally distributed. Consequently, non-parametric statistical analyses were performed using a statistical software package (SPSS v24, IBM Corp., Armonk, NY, United States). A p < 0.05 was considered statistically significant, and Bonferroni correction of the p values was applied where necessary. Spearman’s rank order correlation coefficients (rs) were calculated for the h, IM, Et20, Pmax, Δσrelax, and water content measurements of the mildly and severely degenerated articulating partners: menisci vs. tibial plateau AC, menisci vs. distal femoral AC, and tibial plateau AC vs. distal femoral AC. For detailed analysis, the articulating partners were separated into their lateral and medial compartments to detect possible regional correlations. Additionally, degeneration-specific rs were calculated for the biomechanical parameters (IM, Pmax, Et20, and Δσrelax) and the water content of the articulating partners.
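
The analyses themselves were run in SPSS. Purely as an illustration of two of the steps named above, the following self-contained Python sketch computes a Spearman rank correlation coefficient (with average ranks for ties) and a Bonferroni adjustment. It is not the study's implementation, and the function names and example values are hypothetical.

```python
import math


def average_ranks(values):
    """Ranks starting at 1; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rs(x, y):
    """Spearman's rs as the Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y need the same length of at least 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rs is undefined for constant input")
    return cov / (sx * sy)


def bonferroni(p_values):
    """Multiply each p value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Hypothetical paired values, e.g. meniscal IM vs. tibial AC IM per joint.
print(spearman_rs([0.2, 0.5, 0.9, 1.4], [6.0, 4.5, 3.0, 1.0]))
print(bonferroni([0.01, 0.02, 0.5]))
```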

Results

Menisci

The thickness of the lateral menisci ranged between 1.4 and 8.1 mm and that of the medial menisci between 1.6 and 7.0 mm (Table 3). Meniscal thickness of the different anatomical regions was neither different for the mildly degenerated menisci (Friedman test: p > 0.56) nor for the severely degenerated menisci (p > 0.34). By tendency, the severely degenerated lateral and medial menisci were 23% and 16% thicker, respectively, compared to their mildly degenerated counterparts. Mann–Whitney U tests revealed an 88% greater meniscal thickness at the PH of the severely degenerated lateral menisci (p < 0.01) and a 69% thicker PI at the severely degenerated medial menisci (p = 0.03) compared to their mildly degenerated counterparts.

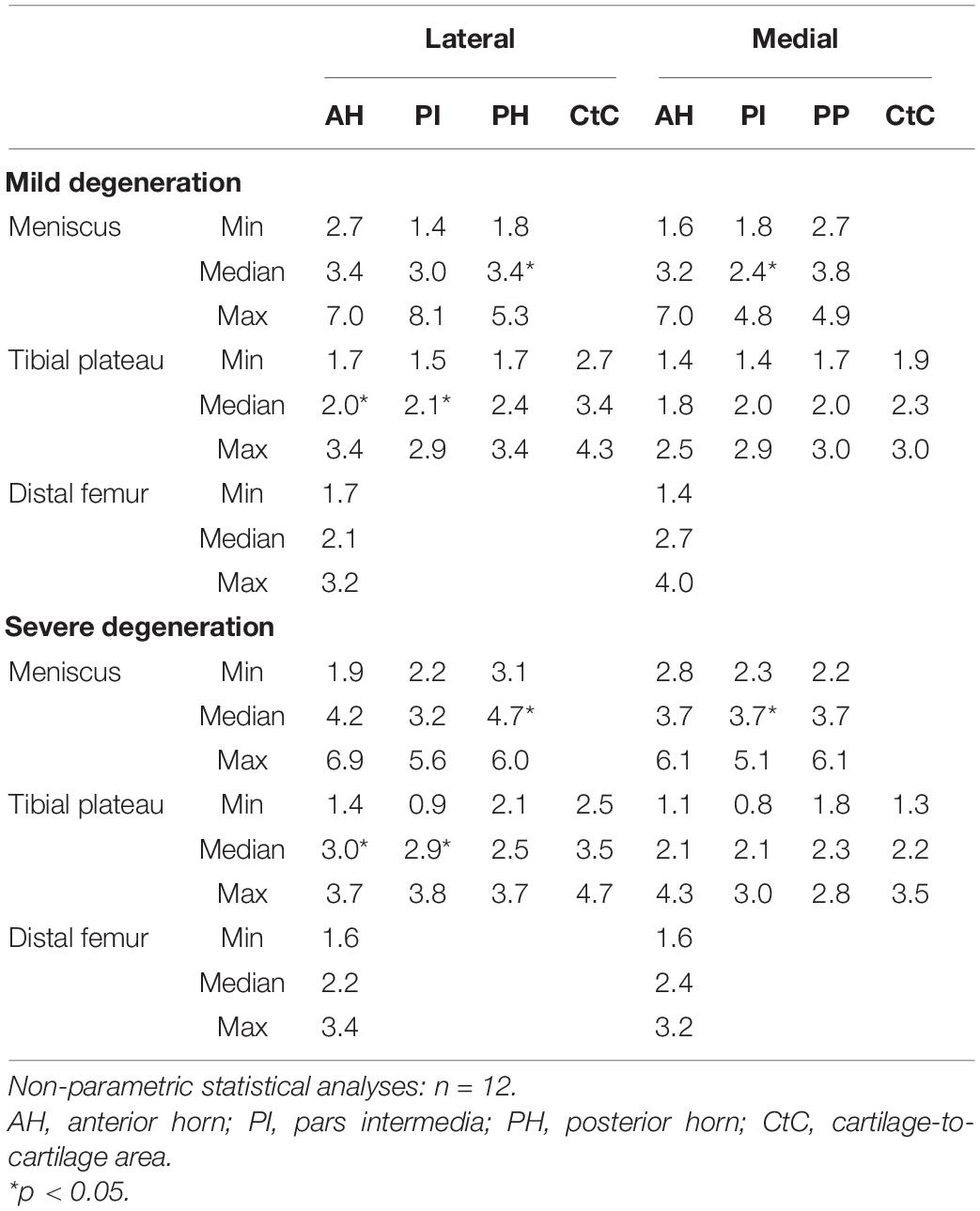

Table 3. Thickness measurement results (minimum, median, and maximum values) of the cartilaginous (femur and tibia) and meniscus (AH, PI, PH, and CtC area) surface localizations.

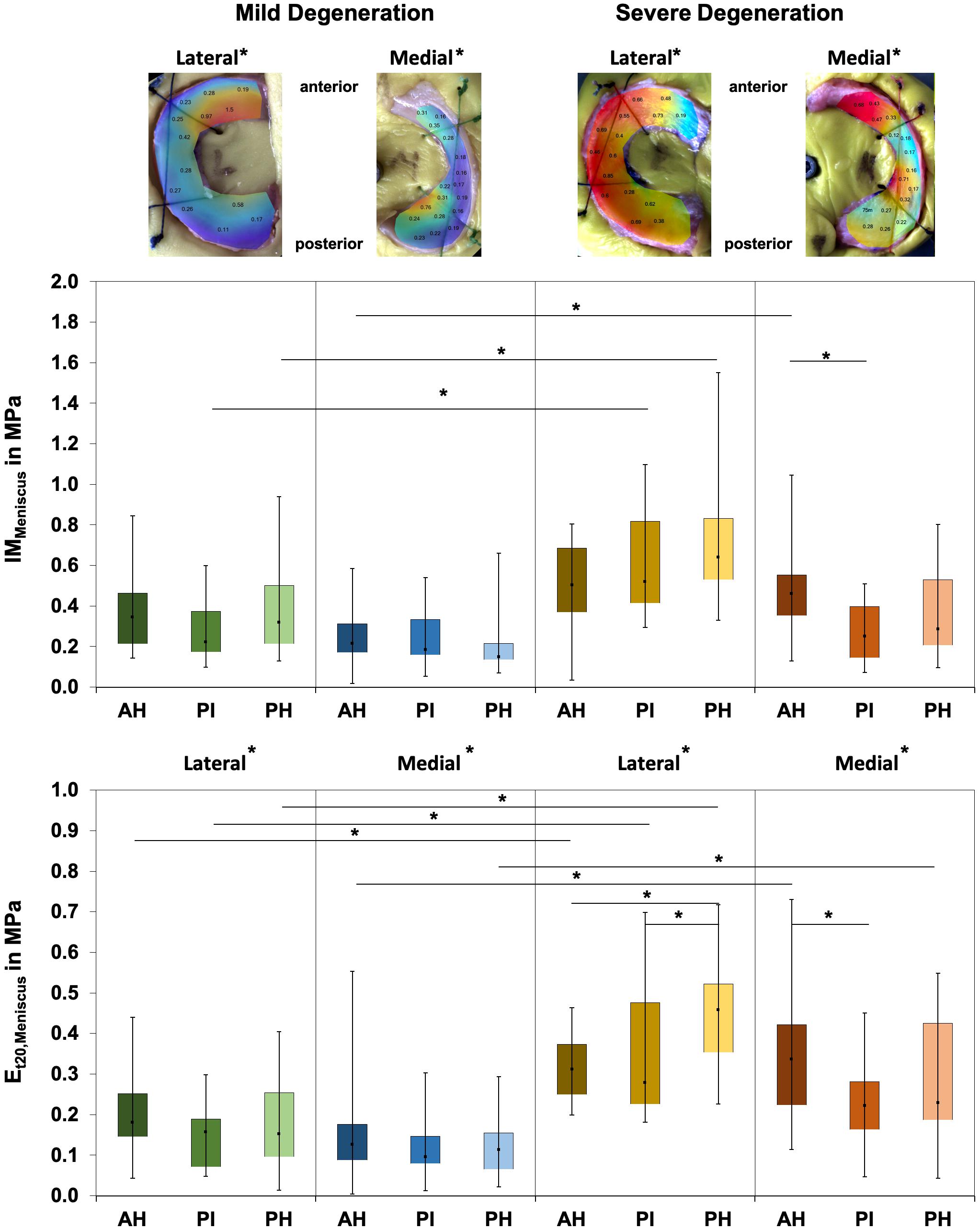

Friedman testing revealed significant differences for the IM, which ranged between 0.1 and 1.58 MPa, only at the severely degenerated medial menisci (p = 0.01; Figure 2) and for the localizations AH vs. PI (p = 0.01). Lateral menisci had a higher IM than medial menisci for both the mild (p = 0.01) and severe (p = 0.01) degeneration groups. Compared to the corresponding mildly degenerated menisci, the severely degenerated lateral and medial menisci displayed a 64% higher (Mann–Whitney U test: p = 0.01) and a 54% higher (p = 0.02) IM, respectively. In detail, the severe lateral PI (Mann–Whitney U test: p < 0.01) and lateral PH (p < 0.01) indicated 100% and 91%, respectively, higher IM values compared to their mildly degenerated lateral PI and PH counterparts. Regarding the anatomical region degeneration comparison of the medial menisci, only the AH of the severely degenerated menisci indicated a statistically relevant 66% higher IM value (p = 0.03) compared to the mildly degenerated menisci. The Et20 of the mildly degenerated menisci ranged between 0.01 and 0.55 MPa (Figure 2) and those of the severely degenerated menisci between 0.04 and 0.73 MPa. While Friedman testing revealed no differences regarding the anatomical subregions of the mildly degenerated menisci, the severely degenerated menisci indicated differences both at the lateral (p = 0.04) and medial (p < 0.01) menisci. Consecutive Wilcoxon testing showed a statistically higher Et20 of the lateral PH vs. AH (p = 0.03) and PH vs. PI (p = 0.01), while for the severely degenerated medial menisci, the Et20 of the AH was higher compared to the PI (p < 0.01). Compared to the mildly degenerated menisci, the Et20 values of the severely degenerated menisci were 127% (Mann–Whitney U test: p < 0.01) and 75% (p < 0.01) higher on the lateral and medial sides, respectively. 
All anatomical subregions of the severely degenerated menisci indicated significantly higher Et20 values (Mann–Whitney U test: AH: 91%, p < 0.01; PI: 137%, p < 0.01; PH: 136%, p < 0.01) compared to their mildly degenerated counterparts. The severely degenerated AH showed a 78% higher (p = 0.02) and the PH an 84% higher (p = 0.01) Et20 compared to their mildly degenerated counterparts.

Figure 2. Representative biomechanical mappings of the instantaneous modulus (IM) measurements of mildly and severely degenerated menisci, with all values given in megapascal. Middle row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial IMMeniscus values in megapascal. Lower row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial Et20 values in megapascal. Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn. Non-parametric statistical analyses: n = 12; *p < 0.05. For reasons of readability, we marked significant differences between the mild and severe degeneration of the medial and lateral sides above the representative biomechanical mappings.

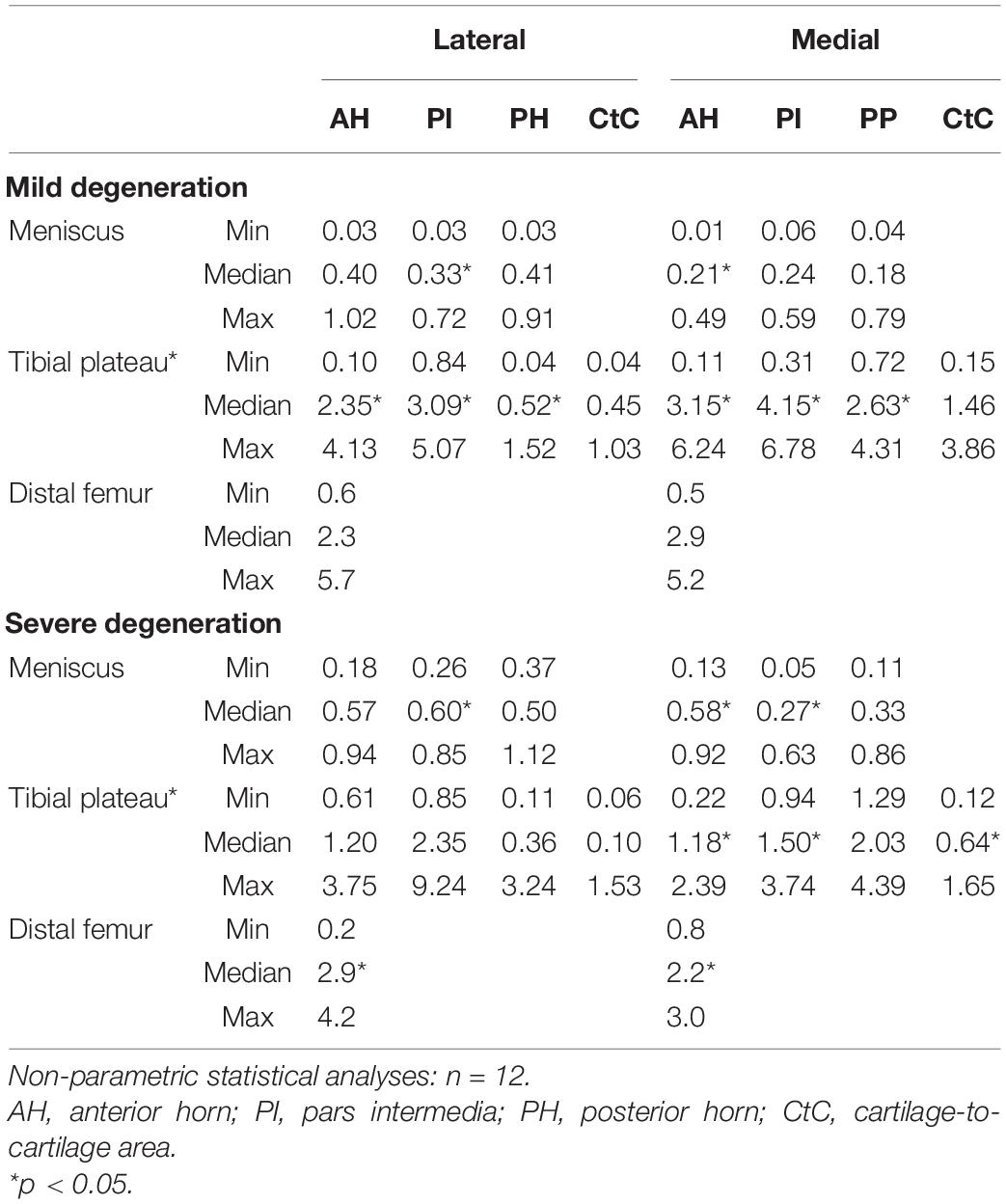

The Pmax values of the mildly degenerated menisci ranged between 0.01 and 1.02 N without subregional differences (Friedman test: p > 0.17; Table 4). The severely degenerated medial menisci displayed subregional differences (p = 0.02), with a 114% higher Pmax only for the comparison between the AH and PI (Wilcoxon test: p = 0.02). Both lateral and medial severely degenerated menisci displayed a 47% (p < 0.01) and 53% (p < 0.01), respectively, higher Pmax than their mildly degenerated comparators. In detail, compared to the mildly degenerated menisci, the severely degenerated lateral PI (Mann–Whitney U test: p < 0.01) and medial AH (p < 0.01) indicated 88% and 105% higher Pmax values, respectively.

Table 4. Maximum applied load, Pmax (minimum, median, and maximum values), of the cartilaginous (femur and tibia) and meniscus (AH, PI, PH, and CtC area) surface localizations.

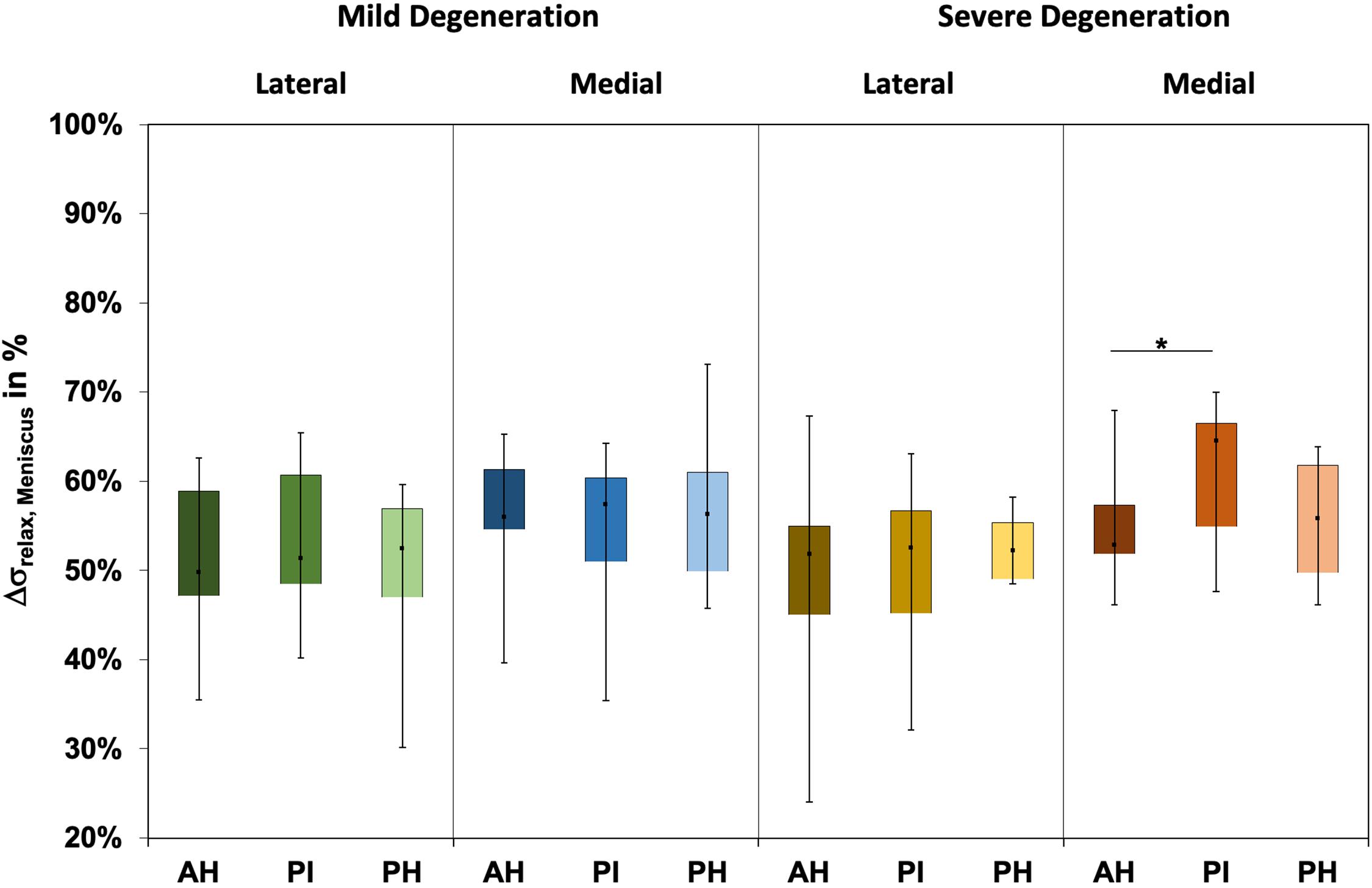

The Δσrelax (Figure 3) ranged between 24% and 73% and indicated subregional differences only for the severely degenerated medial menisci (Friedman test: p = 0.01) between the AH and PI (Wilcoxon test: p = 0.03). The Δσrelax did not differ between the mildly and severely degenerated menisci (Mann–Whitney U test: p > 0.71).

Figure 3. Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the relaxation percentages over the maximum stress (Δσrelax) of the mildly and severely degenerated menisci. Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn. Non-parametric statistical analyses: n = 12; *p < 0.05.

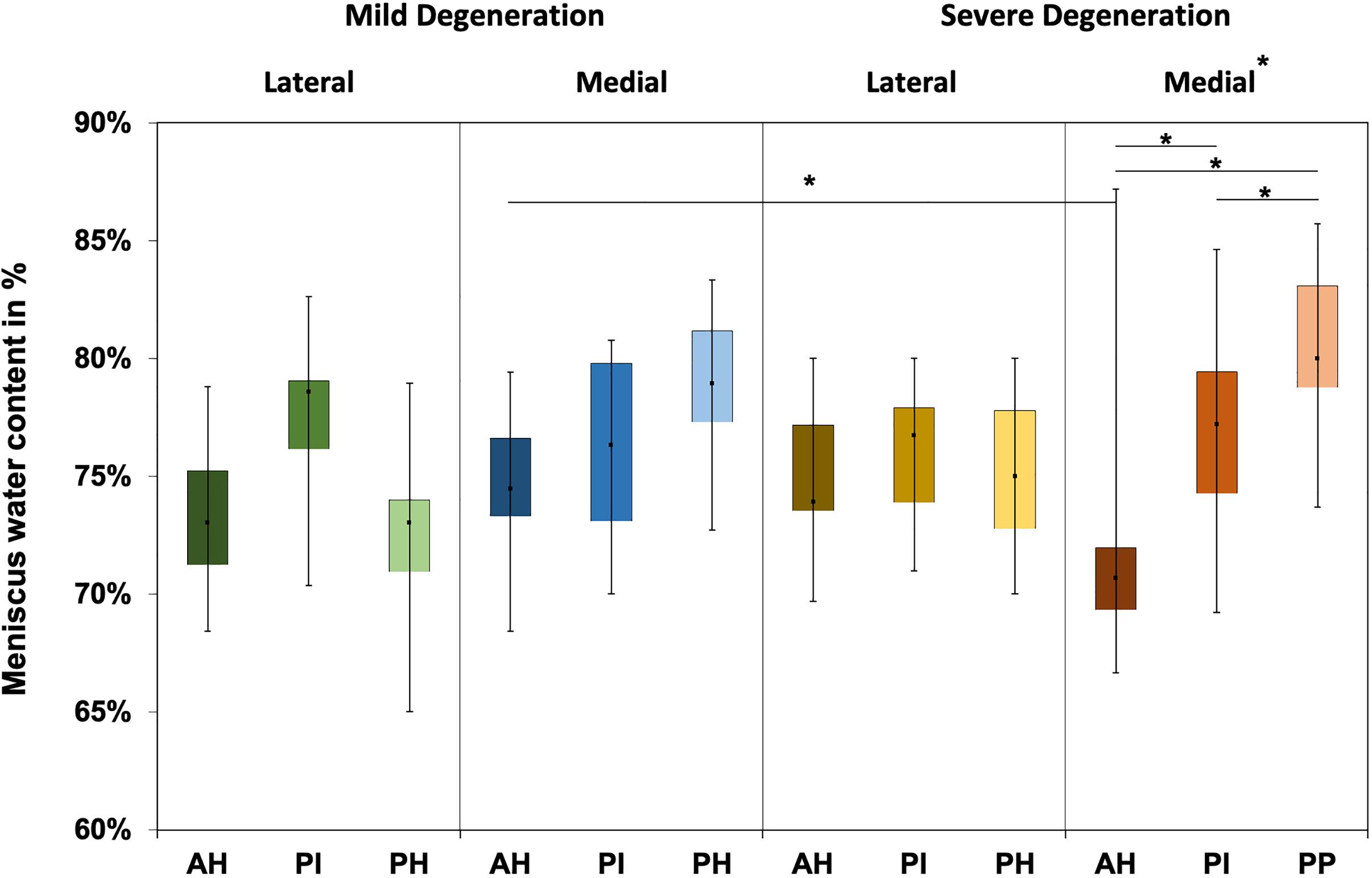

The water content of the menisci ranged between 65% and 87% (Figure 4), while only the severely degenerated medial menisci indicated subregional differences (Friedman test: p < 0.01). In detail, the AH indicated significantly less water content compared to the corresponding PI (−8%, p = 0.04) and PH (−12%, p = 0.01), and the PI contained more water (4%, p = 0.01) than the PH. By tendency, the severely degenerated menisci showed a higher water content both for the lateral (∼2%) and medial (∼1%) compared to the mildly degenerated menisci.

Figure 4. Water content value box plots (minimum, maximum, median, and 25th and 75th percentiles) in percent of the mildly and severely degenerated menisci. Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn. Non-parametric statistical analyses: n = 12; *p < 0.05.

Tibial Cartilage

The thickness of the AC of the tibial plateau ranged between 0.8 mm, measured at the PI at the medial compartment of the severely degenerated joints, and 4.7 mm, measured at the CtC area at the lateral compartment of the severely degenerated joints (Table 3). Friedman testing revealed a statistical difference for the thickness measurements between both the medial and lateral subregions (AH, PI, and PH) of the mildly degenerated knees (p < 0.01). Regarding the severely degenerated knees, only the lateral compartment indicated subregional differences (Friedman test: p < 0.01). In general, the cartilage at the CtC area was always thicker compared to the meniscus-covered cartilage. Severely degenerated tibial cartilage was, on average, 29% (Mann–Whitney U test: p < 0.01) thicker compared to the mildly degenerated tibial cartilage. Detailed analyses revealed that the severely degenerated tibial cartilage was thicker at the lateral AH (73%, p = 0.02) and lateral PI (75%, p = 0.02) compared to its mildly degenerated counterpart.

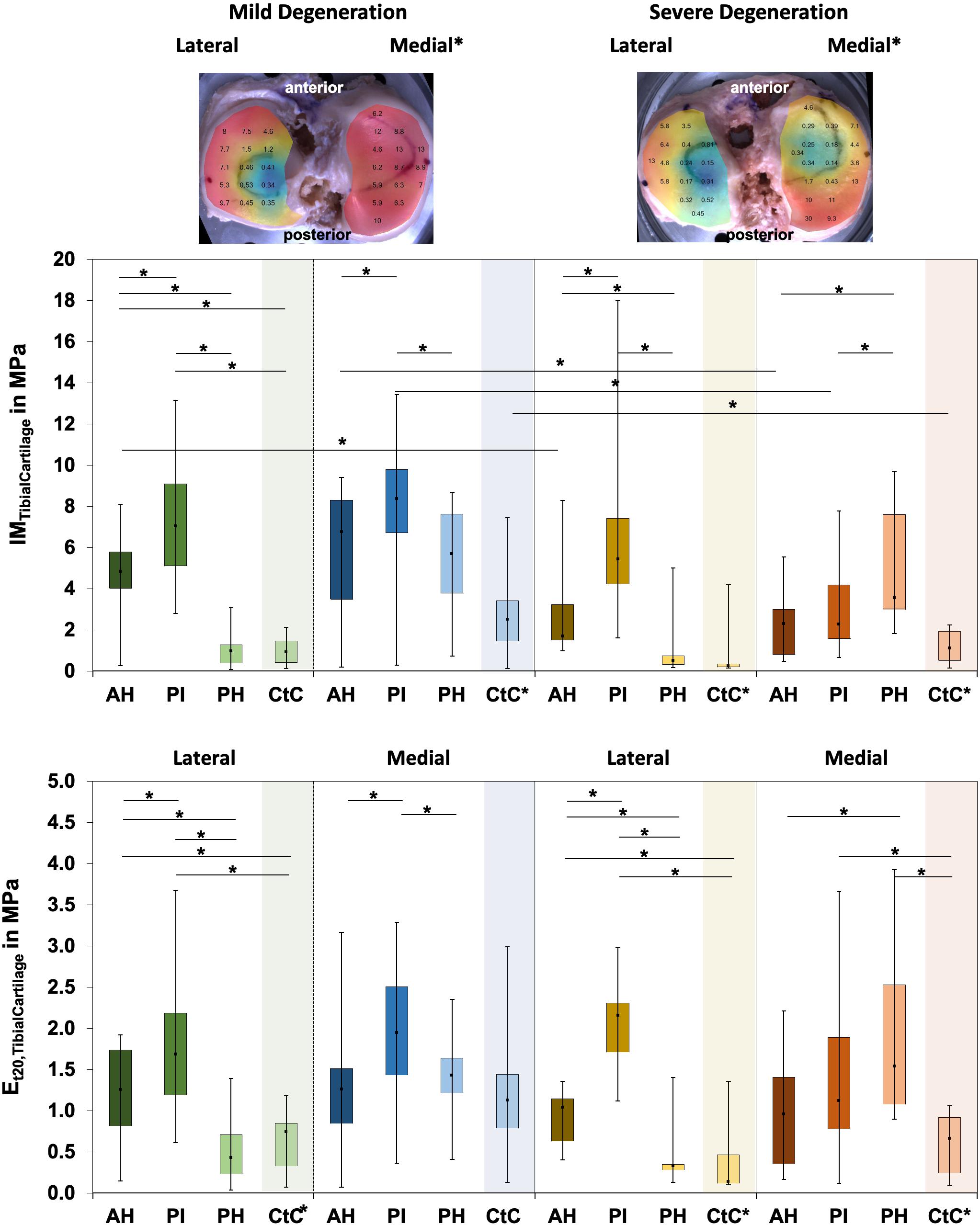

The IM values for tibial AC covered by the menisci were localization-dependent (Friedman test: p < 0.02; Figure 5) and ranged between 0.1 and 17.6 MPa. In detail, the lateral compartment of the mildly degenerated knees displayed differences for all localizations (p < 0.01), while the medial compartment showed differences at the AH vs. PI (p < 0.01) and the PI vs. PH (p = 0.01). For the severely degenerated joints, the IM was different at all localizations on the lateral compartment (p < 0.01) and on the medial compartment at the AH vs. PH (p < 0.01) and the PI vs. PH (p = 0.01). Comparing the areas covered by the menisci with the uncovered CtC area showed lower IM values for the CtC area (p < 0.03), except for the lateral PH of the mildly degenerated joints (p = 0.53). At the mildly degenerated joints, the IM was higher for the medial than for the lateral side (Mann–Whitney U test: p < 0.01), while for the severely degenerated joints the IM was similar for both compartments. Degeneration had no influence on the lateral cartilage IM (Mann–Whitney U: p = 0.17), while the medial compartment cartilage of the mildly degenerated joints had higher IM values (p < 0.01) than those of the severely degenerated joints. Mann–Whitney U tests indicated that the lateral AC compartments displayed a significantly higher IM (68%, p = 0.03) for the mildly degenerated AH subregion compared to its severely degenerated counterpart. On the medial side, the mildly degenerated AC indicated higher IM values at the subregions of the AH (84%, p = 0.02) and PI (94%, p < 0.01) and at the meniscus-uncovered CtC region (65%, p = 0.04) compared to their severely degenerated counterparts. Comparable to the IM results, the Et20 values for the meniscus-covered tibial AC were also different for the subregions (Friedman test: p < 0.02) and ranged between 0.04 and 3.93 MPa (Figure 5). 
The lateral mildly (Wilcoxon test: p < 0.03) and severely (p < 0.01) degenerated tibial AC showed differences between all anatomical localizations, with the highest values at the PI subregion. The mildly degenerated medial tibial AC indicated differences between the Et20 values of the AH vs. PI (p < 0.01) and the PI vs. PH (p < 0.01), also with the highest values at the PI subregion. The severely degenerated medial tibial AC indicated statistically higher Et20 values only for the PH vs. AH (60%, p = 0.04). Comparisons between the meniscus-covered and uncovered AC regions indicated differences between the mildly degenerated lateral AH (p < 0.01) and PI (p < 0.01), with significantly higher values for the meniscus-covered subregions compared to the CtC region. Severely degenerated meniscus-covered lateral AC had a 7.6 times higher Et20 (p < 0.01) at the AH and 16 times higher (p < 0.01) at the PI compared to the CtC region. Both the PI (p = 0.04) and PH (p < 0.01) subregions of the severely degenerated medial tibial AC had significantly higher values compared to the meniscus-uncovered CtC region. The Et20 values did not differ between the mild and severe degeneration states for either the lateral or the medial tibial AC compartment (Mann–Whitney U test: p > 0.07).

Figure 5. Representative biomechanical mappings of the instantaneous modulus (IM) measurements of the mildly and severely degenerated tibial plateau articular cartilage (AC), with all values given in megapascal. Middle row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial IMTibialCartilage values in megapascal. Lower row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial Et20 values in megapascal. Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn; CtC, cartilage-to-cartilage contact area. Non-parametric statistical analyses: n = 12; *p < 0.05. For reasons of readability, we marked significant differences between the mild and severe degeneration of the medial and lateral sides above the representative biomechanical mappings and also between the CtC and other anatomical compartments only at the legend of the category axis (e.g., CtC*).

The Pmax of the degenerated tibial AC ranged between 0.04 and 9.24 N (Table 4). The Pmax showed subregional dependencies for the lateral and medial compartments and for both degeneration conditions (Friedman test: p < 0.03). In detail, the Pmax values of the mildly and severely degenerated lateral tibial AC subregions were different (Wilcoxon test: p < 0.01) and indicated a descending Pmax from the PI (3.11 and 2.35 N, respectively) to the AH (72% and 51%, respectively) to the PH (21% and 15%, respectively). The mildly degenerated medial AC also showed the highest Pmax value at the PI, which was statistically higher, compared to the AH (31%, p = 0.02) and the PH (46%, p < 0.01). The severely degenerated tibial medial AC was only different for the comparison between the AH and PH (p < 0.01). The meniscus-covered tibial AC indicated statistically higher Pmax values (Wilcoxon test: p < 0.04) compared to the respective CtC regions, with the exception of the mildly degenerated lateral AC at the PH subregion (p = 0.31). Progressing degeneration significantly decreased the Pmax (−34%; Mann–Whitney U test: p < 0.01) of the medial tibial AC, while the lateral side remained unaffected (p = 0.16). In detail, the Pmax at the medial tibial AC decreased with progressing degeneration at the AH (−43%; Mann–Whitney U test: p = 0.03), PI (−50%, p < 0.01), and the meniscus-uncovered CtC region (−39%, p = 0.05).

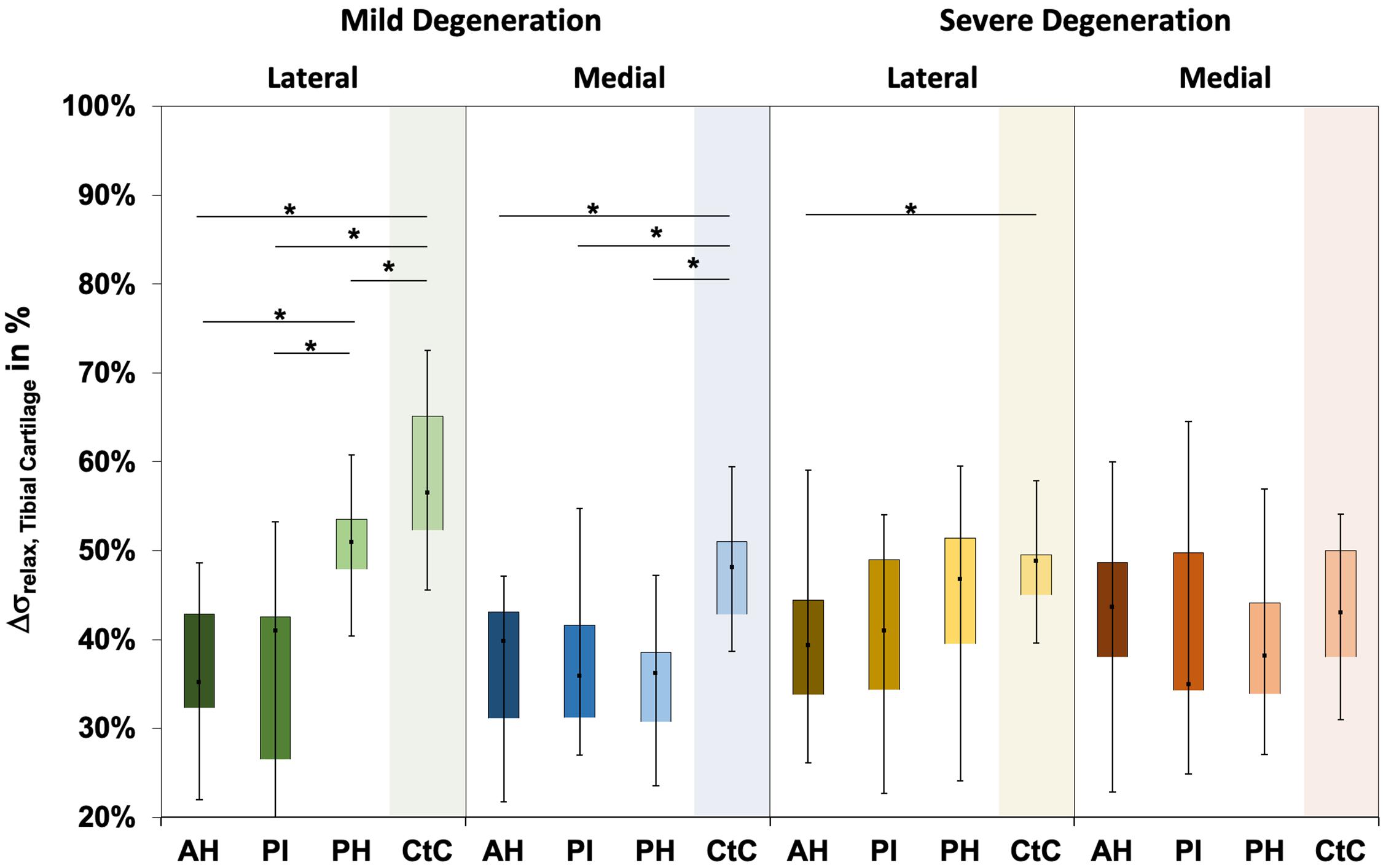

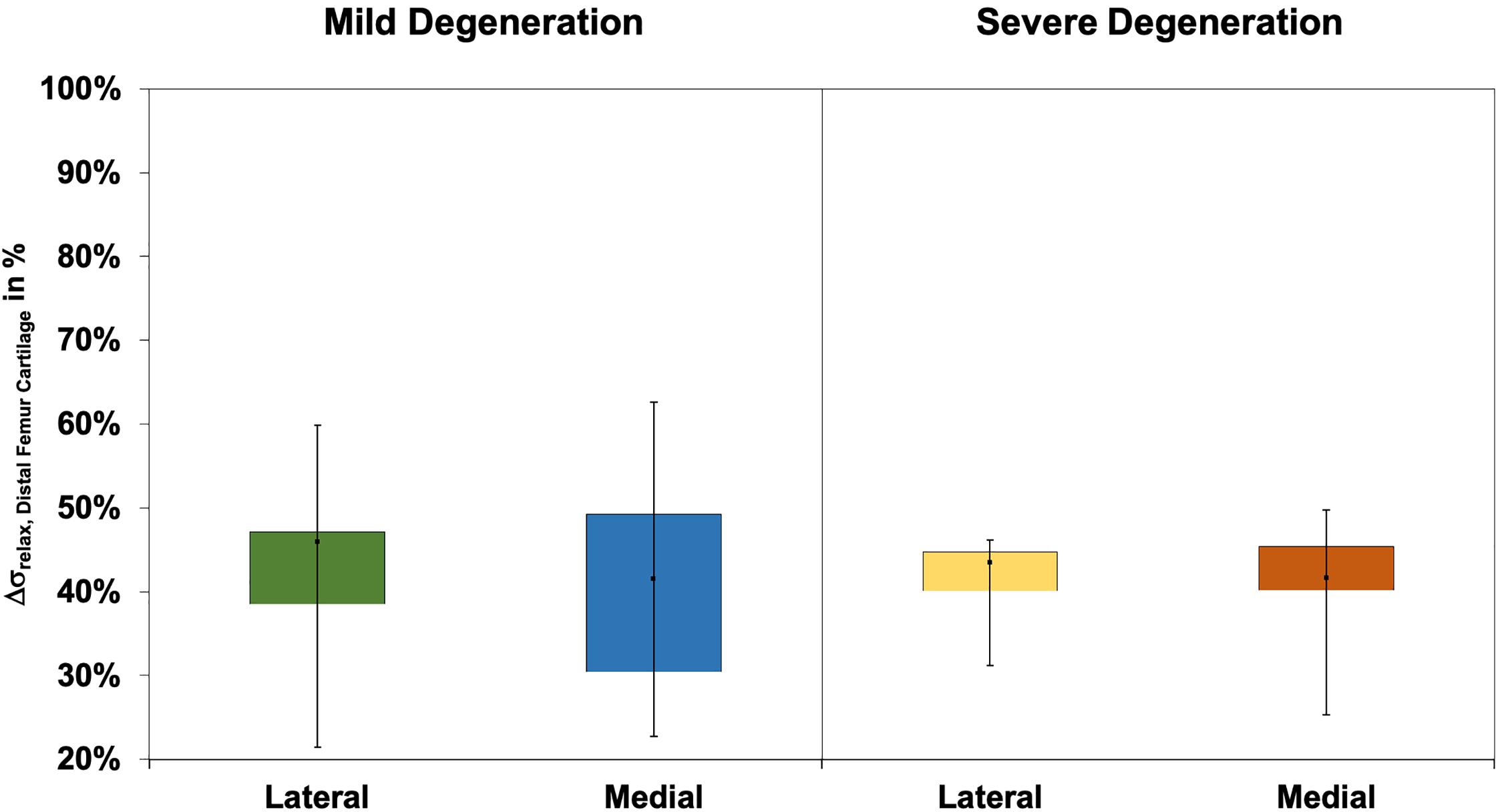

The Δσrelax ranged between 15% and 73% for the mildly and between 23% and 65% for the severely degenerated tibial AC (Figure 6). Friedman testing indicated subregional differences for the mildly degenerated lateral tibial AC (p < 0.01) between the AH and PH (Wilcoxon test: p < 0.01) and the PI and PH (p < 0.01). Mildly degenerated joints indicated significantly higher Δσrelax values of the uncovered CtC area compared to all lateral (Wilcoxon test: p < 0.01) and medial meniscus-covered subregions (p < 0.01). Regarding the severely degenerated tibial AC, only the lateral AH and the lateral CtC area showed significant differences (p = 0.03). There was no statistical difference for the Δσrelax values between the mildly and severely degenerated tibial AC (Mann–Whitney U test: p > 0.05).

Figure 6. Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the relaxation percentages over the maximum stress (Δσrelax) of the mildly and severely degenerated tibial plateau articular cartilage (AC). Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn; CtC, cartilage-to-cartilage contact area. Non-parametric statistical analyses: n = 12; *p < 0.05.

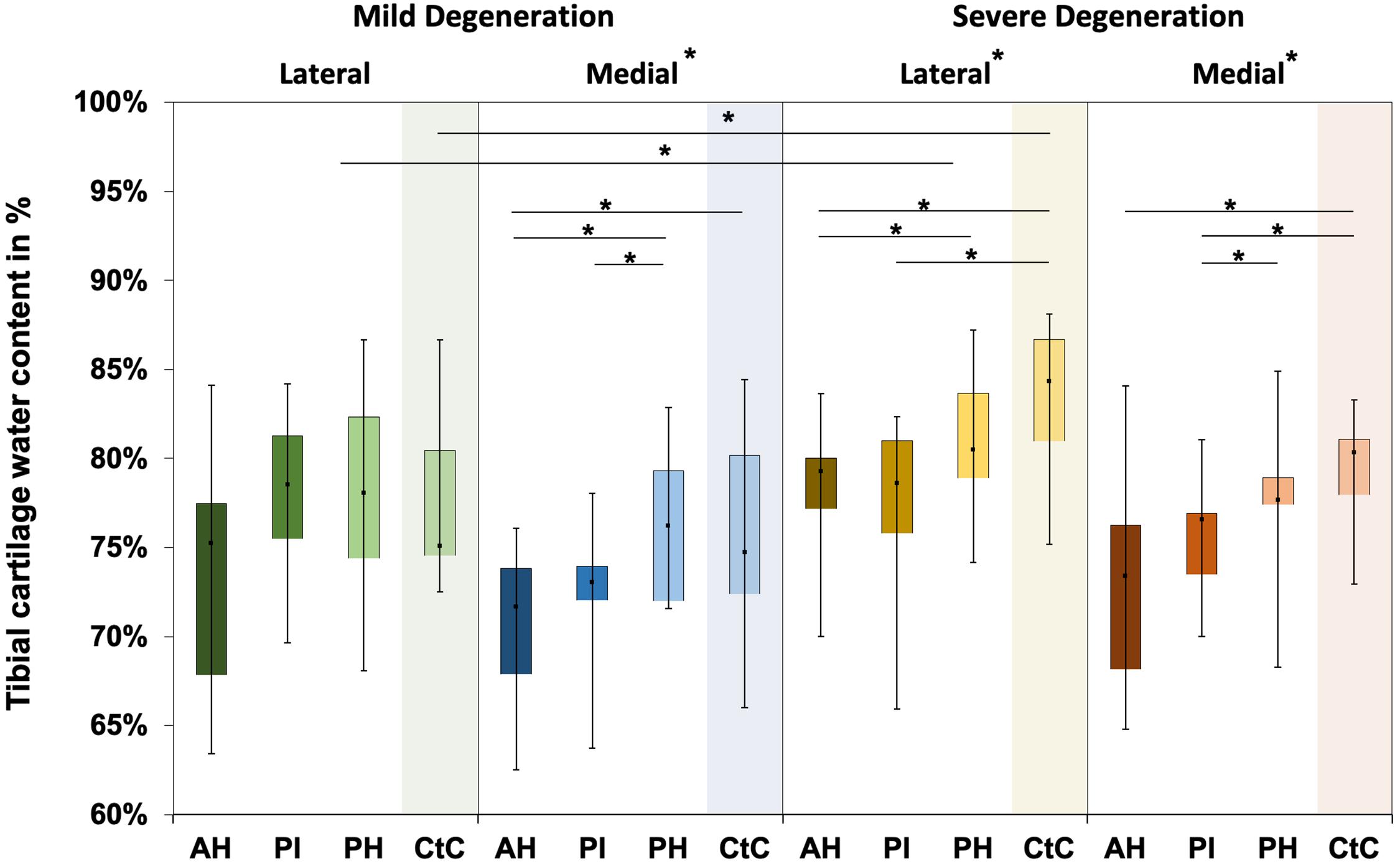

Water content ranged between 63% and 87% for the mildly degenerated and between 65% and 88% for the severely degenerated tibial AC (Figure 7). With the exception of the mildly degenerated lateral tibial AC, Friedman testing revealed subregional water content differences at the mildly degenerated medial (p < 0.01) and both severely degenerated (lateral: p = 0.04; medial: p = 0.03) tibial AC compartments. In general, the AH indicated the lowest water content of all the subregions. Wilcoxon testing indicated an 11% higher water content for the PH vs. AH (p < 0.01), 10% higher for the CtC vs. AH (p = 0.02), and 6% higher for the PH vs. PI (p = 0.05) at the mildly degenerated medial tibial AC. Detailed comparisons of the severely degenerated lateral AC indicated a 6% higher water content for the PH vs. AH (p = 0.02), an 11% higher water content for the CtC area vs. AH (p = 0.01), and a 10% higher water content for the CtC area vs. PI (p = 0.01). The severely degenerated medial PI subregion indicated 7% less water content compared to the PH (p < 0.01) and 11% less compared to the CtC area (p = 0.02). Moreover, the CtC area indicated 12% more (p = 0.02) water content than the AH subregion. The severely degenerated tibial AC contained significantly more water than the mildly degenerated tibial AC (lateral: 9%, p < 0.01; medial: 3%, p = 0.04). Consecutive Mann–Whitney U tests revealed a statistically higher water content at the severely degenerated lateral PH (10%, p = 0.03) and lateral CtC area (18%, p = 0.03) compared to their mildly degenerated counterparts.

Figure 7. Water content in percent of the mildly and severely degenerated tibial plateau articular cartilage (AC). Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the tibial cartilage water content values in percent. Subdivided anatomical regions are: AH, anterior horn; PI, pars intermedia; PH, posterior horn; CtC, cartilage-to-cartilage contact area. Non-parametric statistical analyses: n = 12; *p < 0.05.

Distal Femur Cartilage

The thickness of the mildly and severely degenerated distal femur cartilage ranged between 1.4 and 4.0 mm and showed similar values (p > 0.24) for both the lateral and medial compartments (Table 3).

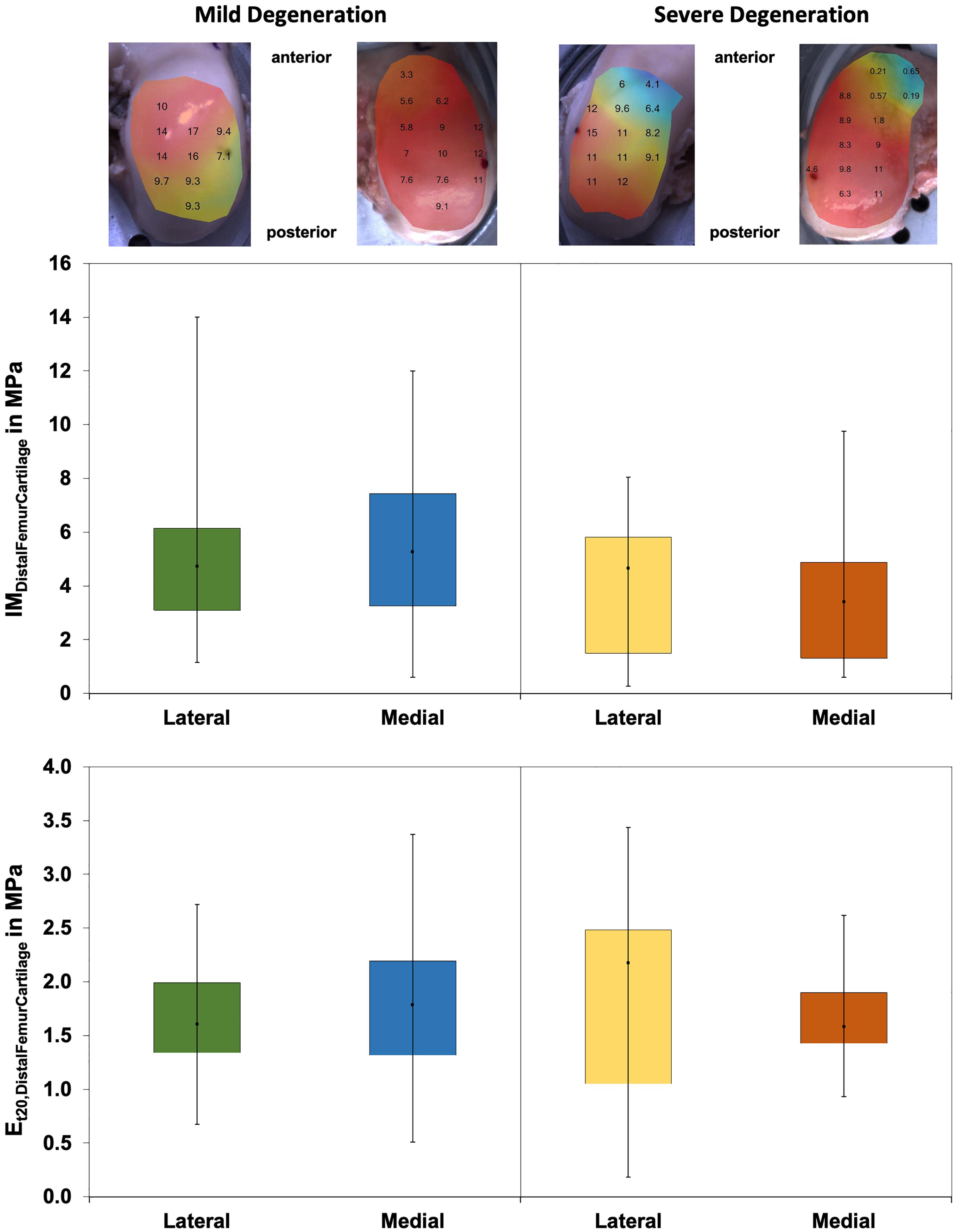

Wilcoxon testing indicated differences neither between the medial and lateral distal femur cartilage IM values (p > 0.58; Figure 8) nor in the Et20 values (p > 0.45). Although the IM values of the severely degenerated medial distal femur cartilage were 35% lower (lateral: 2% lower) compared to their mildly degenerated counterparts, the differences were not significant (p > 0.21). The Et20 values were also not different (Mann–Whitney U test: p > 0.57) between the mild and severe degeneration groups.
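As an illustration of the kind of group comparison reported above, the following minimal sketch computes a Mann–Whitney U statistic for two independent groups. It is not the authors' analysis script: the function name `mann_whitney_u`, the sample values, and the use of a normal approximation without tie or continuity correction are all assumptions made for brevity.

```python
import math

def mann_whitney_u(group_a, group_b):
    """Return (U, two-sided p) for two independent samples.

    U is the smaller of the two U statistics. The p-value uses the normal
    approximation without tie or continuity correction, which is a
    simplification assumed here and may differ from statistics packages.
    """
    n_a, n_b = len(group_a), len(group_b)
    if n_a == 0 or n_b == 0:
        raise ValueError("both groups must contain at least one value")
    # Count the pairs in which a value of group_a exceeds one of group_b;
    # tied pairs contribute one half.
    u_a = sum(
        1.0 if a > b else 0.5 if a == b else 0.0
        for a in group_a
        for b in group_b
    )
    u_b = n_a * n_b - u_a
    u = min(u_a, u_b)
    mean_u = n_a * n_b / 2.0
    sd_u = math.sqrt(n_a * n_b * (n_a + n_b + 1) / 12.0)
    z = (u - mean_u) / sd_u
    p_two_sided = math.erfc(abs(z) / math.sqrt(2.0))
    return u, p_two_sided

# Hypothetical IM values in MPa (n = 12 per group), invented for illustration.
mild = [2.1, 3.4, 2.8, 4.0, 3.1, 2.5, 3.7, 2.9, 3.3, 2.2, 3.9, 3.0]
severe = [1.9, 3.2, 2.6, 3.8, 2.7, 2.4, 3.5, 2.0, 3.6, 2.3, 4.1, 1.8]
u_stat, p_value = mann_whitney_u(mild, severe)
print(f"U = {u_stat:.1f}, p = {p_value:.3f}")
```

For small samples with ties, an exact or tie-corrected test from a statistics package would be preferable to this approximation.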

Figure 8. Representative maps of the instantaneous modulus (IM) measurements of the mildly degenerated and severely degenerated distal femora, with all values given in megapascal. Middle row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial IMDistalFemurCartilage values in megapascal. Lower row: Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the lateral and medial Et20 values in megapascal. Non-parametric statistical analyses: n = 12.

The Pmax values were different between the severely degenerated lateral and medial distal femoral AC, indicating significantly higher values for the lateral side (Wilcoxon test: 34%, p = 0.03; Table 4). Progressing degeneration had no influence on the Pmax of the distal femoral AC (Mann–Whitney U test: p > 0.18).

The Δσrelax values (Figure 9) of the distal femur AC ranged between 21% and 63% and indicated no difference between the lateral and medial condyles (p > 0.39). The degeneration progression also had no influence on the distal femur AC Δσrelax (Mann–Whitney U test: p > 0.32).

Figure 9. Box plots (minimum, maximum, median, and 25th and 75th percentiles) of the relaxation percentages over the maximum stress (Δσrelax) of the mildly and severely degenerated distal femur articular cartilage (AC). Non-parametric statistical analyses: n = 12.

Correlations

The thickness of the severely degenerated lateral menisci and the corresponding tibial plateau thickness indicated a significant correlation (rs = 0.36, p = 0.03), whereas the menisci and femur AC thickness did not correlate with each other. The thickness of the severely degenerated lateral tibial CtC and the corresponding severely degenerated lateral femoral AC correlated well (rs = 0.86, p < 0.01). Neither the IM (p > 0.12) nor the Et20 (p > 0.12) values of the mildly and severely degenerated menisci, tibial AC, and femoral AC correlated with each other. The Pmax values of the meniscus and tibia (p > 0.68) as well as the meniscus and femur (p > 0.37) did not correlate. The Pmax of the mildly degenerated tibial CtC AC and the mildly degenerated corresponding femoral AC showed a significant correlation (rs = 0.62, p = 0.03). The Δσrelax of the severely degenerated medial menisci and of the severely degenerated tibial AC indicated a significant negative correlation (rs = −0.42, p = 0.04), whereas the Δσrelax of the menisci and femur, as well as of the tibial CtC and femur AC, did not correlate with each other. The water content of the menisci and the corresponding tibial plateau AC did not correlate (p > 0.15).

There were significant correlations for both mildly and severely degenerated lateral and medial menisci for the IM vs. Et20 (rs > 0.64, p < 0.01), IM vs. Pmax (rs > 0.48, p < 0.01), IM vs. Δσrelax (rs < −0.36, p < 0.03), Et20 vs. Pmax (rs > 0.34, p < 0.04), and Pmax vs. Δσrelax (rs < −0.55, p < 0.01). The water content showed only a correlation at the severely degenerated medial menisci for the IM measurements (rs = −0.55, p < 0.01).

Tibial plateau AC indicated significant Spearman’s correlations for the IM vs. Et20 (rs > 0.79, p < 0.01), IM vs. Pmax (rs > 0.80, p < 0.01), and Et20 vs. Pmax (rs > 0.69, p < 0.01), while the Et20 vs. Δσrelax (rs = 0.50, p < 0.01) correlated only for the severely degenerated medial tibial plateau AC. Additionally, the mildly and severely degenerated lateral tibial plateau AC indicated correlations for the IM vs. Δσrelax (rs < −0.43, p < 0.01) and Pmax vs. Δσrelax (rs < −0.55, p < 0.01). The biomechanical properties of the lateral tibial plateau and the mildly degenerated medial tibial plateau AC did not correlate with the water content. The water content showed a correlation only for the Et20 (rs = 0.45, p = 0.03) at the severely degenerated medial tibial AC.

The mildly and severely degenerated CtC area of the lateral and medial tibial plateaus showed significant correlations for the IM vs. Et20 (rs > 0.79, p < 0.01), IM vs. Pmax (rs > 0.77, p < 0.01), and Et20 vs. Pmax (rs > 0.62, p < 0.05).

Both mildly and severely degenerated lateral femoral AC indicated significant correlations for the IM vs. Et20 (rs > 0.74, p < 0.01), IM vs. Pmax (rs > 0.70, p < 0.02), and Et20 vs. Pmax (rs > 0.60, p < 0.05). The mildly degenerated medial femoral AC correlated for the IM vs. Pmax (rs = 0.99, p < 0.01), while the severely degenerated medial femoral AC showed significant correlations for the IM vs. Δσrelax (rs = −0.66, p = 0.04), Et20 vs. Pmax (rs = 0.64, p = 0.05), and Pmax vs. Δσrelax (rs = −0.79, p < 0.01).

The water content did not correlate with the meniscus thickness. Only the mildly degenerated lateral tibial plateau cartilage and the corresponding CtC area showed a positive correlation between the water content and cartilage thickness (rs = 0.50, p < 0.01, and rs = 0.69, p < 0.03, respectively).
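The rs values reported in this section are Spearman rank correlation coefficients. As a minimal sketch of how such a coefficient can be obtained, the code below ranks both variables (tied values receive the mean of their ranks) and computes the Pearson correlation of the ranks. The helper names `average_ranks` and `spearman_rs` and the paired values are hypothetical and are not taken from the study.

```python
import math

def average_ranks(values):
    """Rank values from 1 to n; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman_rs(x, y):
    """Spearman's rs, computed as the Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rs is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical paired values (IM in MPa, Pmax in N), invented for illustration.
im_values = [0.31, 0.45, 0.52, 0.60, 0.74, 0.90]
pmax_values = [0.10, 0.16, 0.15, 0.22, 0.27, 0.35]
print(f"rs = {spearman_rs(im_values, pmax_values):.2f}")
```

The sketch returns only the coefficient; the associated p-values would come from a statistics package.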

Discussion

The most important finding of this study is that, with progressing joint degeneration, there was an increase in the lateral and medial meniscal IM, Et20, and Pmax values, while the underlying tibial AC indicated an IM and Pmax decrease only at the medial compartment. The stress relaxation percentage Δσrelax, which could be interpreted as a measure of the viscous properties of the menisci (Coluccino et al., 2017), was not affected by the degeneration of any of the investigated articulating partners. OA progression had no influence on any of the investigated biomechanical parameters of the distal femoral AC. Furthermore, our water content analyses indicated that, with progressing OA degeneration, the tibial AC water content significantly increased, while the menisci showed only a non-significant tendency toward an increase.

Our results suggest that, in severely degenerated joints, the meniscal stiffness increased, whereas the femoral and tibial AC softened. Particularly at the lateral compartment, changes of the biomechanical parameters (IM, Et20, and Pmax) were detectable in the menisci prior to the AC. This could be interpreted as a first sign that OA-related knee joint degeneration first initiates a stiffening of the meniscus surface, followed by a softening of the AC. This could lead to disturbed tibiofemoral contact transmission, where the menisci might play an active role by causing premature cartilage degeneration. In contrast to the severely degenerated joints, the mildly degenerated joints displayed positive correlation values for the IM, where the stiffness for both the meniscus and AC increased. Therefore, it appears that there is a degeneration-dependent pivot point of correlation. Furthermore, we believe that this is the first study that comprehensively investigated the biomechanical changes of the articulating knee joint partners during different degeneration grades. In particular, the preparation technique to allow for the biomechanical mapping of the menisci is described here for the first time.

Thickness Measurements

Although the needle indentation method is the gold standard for AC thickness measurement (Horbert et al., 2019), to our knowledge, this is the first time that this method has been applied to degenerated menisci. Indeed, the ranges measured in the present study were in accordance with those obtained with in vivo magnetic resonance imaging (Erbagci et al., 2004; Bamac et al., 2006; Hunter et al., 2006) and ex vivo caliper measurements (Takroni et al., 2016). The AC thickness results of the present study are in agreement with those given in the literature. In the early degeneration phase, the cartilage matrix indicates hypertrophic swelling (Buck et al., 2010), resulting in increased thickness (Hunter et al., 2008; Buck et al., 2010, 2013; Sim et al., 2017), which is also shown in the CtC area of our mildly degenerated joints. Further progression of cartilage degeneration leads to reduced structural integrity (Waldstein et al., 2016). This, combined with increased wear, culminates in substantial cartilage volume loss associated with decreased cartilage thickness (Stefanik et al., 2016; Waldstein et al., 2016; Horbert et al., 2019; Seidenstuecker et al., 2019), which is in accordance with the measurements found particularly at the medial compartments of the severely degenerated knees of the present study. Furthermore, the mild (six females/six males) and severe degeneration (no females/12 males) groups were clearly different in gender distribution. It is known that male knee joints display not only up to 46.6% higher cartilage volumes compared to female knee joints but also a 13.3% higher mean cartilage thickness (Faber et al., 2001). This unequal gender distribution might have further amplified the AC thickness difference between the mild and severe degeneration groups.

Biomechanical Mapping

We found an increasing IM with progressing meniscal degeneration for both the lateral and medial menisci, ranging from 0.1 to 1.6 MPa. This range is in agreement with the values published for healthy and degenerated human menisci (Moyer et al., 2012, 2013; Kwok et al., 2014; Danso et al., 2015; Fischenich et al., 2015). While Danso et al. (2015) and Fischenich et al. (2015) used a method likely similar to that of the present study, Kwok et al. (2014) applied atomic force microscopy and Moyer et al. (2012, 2013) used nanoindentation to determine the IM of human meniscal tissue. However, only two of these studies (Kwok et al., 2014; Fischenich et al., 2015) focused on the impact of meniscal degeneration on the IM. Whereas Fischenich et al. (2015) observed an IM decrease with progressing degeneration, Kwok et al. (2014) reported, similar to the results in the present study, an IM increase with progressing degeneration. A possible explanation for the contrary IM trends between the study of Fischenich et al. (2015) and the present study might be the different scoring methods that were principally used to group the specimens. While Fischenich et al. (2015) used the macroscopic Pauli score (Pauli et al., 2011) with detailed subcategories from 1 to 4, we applied the radiographic KL score to assign the specimens initially into two groups, combining the lower and higher KL scores, respectively. Furthermore, the different testing methods might also have an impact on the contrary outcomes. While we analyzed multiple testing points within one anatomical region and extracted the respective median values, Fischenich et al. (2015) used only one testing point within each region. However, at the posterior meniscus region, which was the region with the most homogeneous sample distribution with regard to the degeneration state, Fischenich et al. (2015) also reported both an increase in the instantaneous compressive modulus and an increase in the equilibrium compressive modulus with progressing degeneration. Additionally, we confined the specimen along the circumference because we observed during pretests a recognizable radial deflection of the menisci during the normal indentation tests, particularly on the femoral-facing outer meniscal rim. The region-specific differences observed in the current study coincide with the findings from other studies (Moyer et al., 2012; Danso et al., 2017).
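The reduction of several testing points per anatomical region to one median value, as described above, can be sketched as follows. This is an illustrative example only: the function name `regional_medians`, the `(region, value)` data layout, and the numbers are assumptions, not the study's data or software.

```python
from collections import defaultdict
from statistics import median

def regional_medians(points):
    """Collapse per-point measurements into one median per anatomical region.

    `points` is an iterable of (region, value) pairs, where region is a label
    such as "AH", "PI", or "PH" and value is the measured parameter.
    """
    by_region = defaultdict(list)
    for region, value in points:
        by_region[region].append(value)
    return {region: median(values) for region, values in by_region.items()}

# Hypothetical meniscal IM values in MPa for one specimen (invented numbers).
indentation_points = [
    ("AH", 0.42), ("AH", 0.55), ("AH", 0.48),
    ("PI", 0.61), ("PI", 0.70),
    ("PH", 0.35), ("PH", 0.39), ("PH", 0.90), ("PH", 0.37),
]
print(regional_medians(indentation_points))
```

Using the median rather than the mean limits the influence of a single outlying point, such as the 0.90 MPa value in the hypothetical PH region.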

In contrast to the increased lateral and medial meniscal IM with progressing degeneration, we found a statistically significant IM decrease only at the medial tibial plateau AC. Although not significant, there was a softening tendency of both the tibial plateau and femoral condyle surfaces. This trend and the IM values of the femoral condyles measured in the current study (range = 0.5–9 MPa) are in accordance with those reported by Sim et al. (2014, 2017). However, unlike in the study of Sim et al. (2017), the IM values of the AC of the femoral condyles in the present study did not display significant changes with progressing degeneration, although a trend toward a decrease in the IM with degeneration was observed, particularly on the medial side. This difference could be explained by the different donor groups and the different non-correlating OA grading scores (Down et al., 2011) used in the comparison studies. While Sim et al. post hoc graded the asymptomatic articular surfaces of their eight specimens on an adapted macroscopic Committee of the International Cartilage Repair Society (ICRS) score (Mainil-Varlet et al., 2003) and histological Mankin scoring (Mankin et al., 1981), we grouped our 24 knees a priori into two different degeneration groups based on KL and macroscopic Pauli scorings. Despite the use of different measurement methods, the IM values for the tibial AC were similar to those reported in the literature (Deneweth et al., 2013; Sim et al., 2014, 2017; Seidenstuecker et al., 2019): while Sim et al. (2014, 2017) and Seidenstuecker et al. (2019) used the same automated indentation mapping method, Deneweth et al. (2013) performed a manual mapping method, where full-thickness 4-mm diameter cylindrical AC explants were extracted from the tibial plateau and exposed to unconfined compression. The IM values were lower in the study of Seidenstuecker et al. (2019), which might be due to the fact that no section-specific subdivision of the tibial plateau was performed during their examinations. While they divided their tibial AC into meniscus-covered and uncovered regions, we additionally grouped the covered parts in the three anatomical regions of the menisci, resulting in more diverging IM results. The values of Deneweth et al. (2013) were generally higher than those reported in any other study, which could be explained by the non-osteoarthritic knees they used in their study and by the different test method. Even so, they observed the same region-specific tendencies for both the meniscus-covered and CtC areas as we saw in the mildly degenerated knee joints. Additionally, Seidenstuecker et al. (2019), Swann and Seedhom (1993), and Yao and Seedhom (1993) measured significantly softer cartilage at the CtC area compared to that covered by the meniscus, which was further underlined by the present study and also found at both the mildly and severely degenerated AC of the tibial plateau.

The Pmax values during the spherical indentation mappings of the knee joint AC and menisci have been investigated very recently (Seidenstuecker et al., 2019; Pordzik et al., 2020a, b) by the same group. In their studies, similar indentation mappings were used: Pordzik et al. (2020a, b) found mean Pmax values of 0.01 ± 0.01 N for healthy and 0.02 ± 0.02 N for OA menisci. The present study confirmed these findings, in that the severely degenerated menisci exhibited a significantly higher Pmax compared to the mildly degenerated menisci. However, our Pmax values were higher than those of Pordzik et al., which might be explained by the larger indenter diameter (2 vs. 1 mm), deeper indentation depth (0.5 vs. 0.2 mm), and greater indentation speed (0.5 vs. 0.2 mm/s) used in the present study. Seidenstuecker et al. (2019) and Pordzik et al. (2020b) also investigated the Pmax of degenerated and healthy tibial AC. Although different indenter sizes and indentation parameters were used, the values of the present study were in the same range as those reported in the literature. However, in contrast to the decrease in the Pmax with progressing degeneration found in the present study, Seidenstuecker et al. (2019) reported higher Pmax values for their OA cartilage samples compared to healthy samples. Several authors have reported a softening of AC with OA progression (Akizuki et al., 1986; Setton et al., 1999; Temple-Wong et al., 2009; Hosseini et al., 2013), which is consistent with the Pmax decrease observed here and with the findings of Pordzik et al. (2020b), who also found a Pmax decrease with progressing OA.

In the present study, we were unable to combine the time-consuming mapping procedure with a stress relaxation test until the equilibrium state of the AC and menisci was reached. Such relaxation procedures are normally conducted for at least 30–60 min (Korhonen et al., 2002; Chia and Hull, 2008; Martin Seitz et al., 2013; Danso et al., 2017; Warnecke et al., 2020) to obtain the matrix stiffness. However, in the current test setup, such long relaxation times would have resulted in testing times of more than 24 h, which might result in severe autolysis. We would like to point out that the Et20 parameter established here is rather a measure of viscoelasticity: it is generally accepted that the time-dependent behavior of the AC and meniscus tissue is due to the fluid flow inside the porous matrix. Coluccino et al. (2017) further concluded that the percentage of stress relaxation (Δσrelax) can be interpreted as a measure of the viscous properties of the structure that are mainly governed by the proteoglycan content and tissue porosity. During their biomechanical analyses of healthy bovine meniscus samples of different orientations, they identified stress relaxation percentages between 79% and 88% after reaching the equilibrium state (Coluccino et al., 2017). In our study, we found Δσrelax values for mildly and severely degenerated human menisci ranging from 50% to 65% after a relaxation time of 20 s. This reflects the typical negative exponential shape of the stress relaxation curve of biphasic cartilage and meniscus samples, indicating a rapidly decreasing stress. Therefore, we assume that it reflects, to some extent, the viscoelastic behavior of the biphasic structures and allows for a comparison between the different tissues and degeneration groups. Consequently, based on the parameters IM and Et20 obtained here in combination with Δσrelax, it is possible to characterize the viscoelastic properties of the mildly and severely degenerated AC and meniscus tissue.
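To make the relaxation percentage concrete, the sketch below evaluates it on a synthetic stress relaxation curve. It assumes that Δσrelax is the stress decay 20 s after the peak, expressed as a percentage of the peak stress; this section does not restate the exact formula, so that definition is an assumption. The function name `relaxation_percentage`, the single-exponential curve, and its parameters are likewise hypothetical.

```python
import math

def relaxation_percentage(times_s, stresses, t_relax_s=20.0):
    """Percent stress decay between the peak and the sample closest to
    t_relax_s after the peak (assumed definition of the relaxation percentage)."""
    if len(times_s) != len(stresses) or not stresses:
        raise ValueError("times_s and stresses must be non-empty and equally long")
    peak_idx = max(range(len(stresses)), key=lambda i: stresses[i])
    peak = stresses[peak_idx]
    if peak <= 0:
        raise ValueError("peak stress must be positive")
    target = times_s[peak_idx] + t_relax_s
    # Only samples at or after the peak belong to the relaxation phase.
    relax_idx = min(
        range(peak_idx, len(times_s)),
        key=lambda i: abs(times_s[i] - target),
    )
    return 100.0 * (peak - stresses[relax_idx]) / peak

# Synthetic single-exponential relaxation; all three parameters are
# hypothetical and chosen only to give a plausible-looking decay.
SIGMA_PEAK, SIGMA_EQ, TAU_S = 1.0, 0.4, 5.0
times = [0.1 * i for i in range(201)]  # 0 s to 20 s in 0.1 s steps
stresses = [
    SIGMA_EQ + (SIGMA_PEAK - SIGMA_EQ) * math.exp(-t / TAU_S) for t in times
]
print(f"relaxation after 20 s: {relaxation_percentage(times, stresses):.1f} %")
```

Because most of the decay in such a curve occurs early, a 20 s window captures a large share of the total relaxation. The remaining gap to the equilibrium value depends on the time constant of the tissue.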

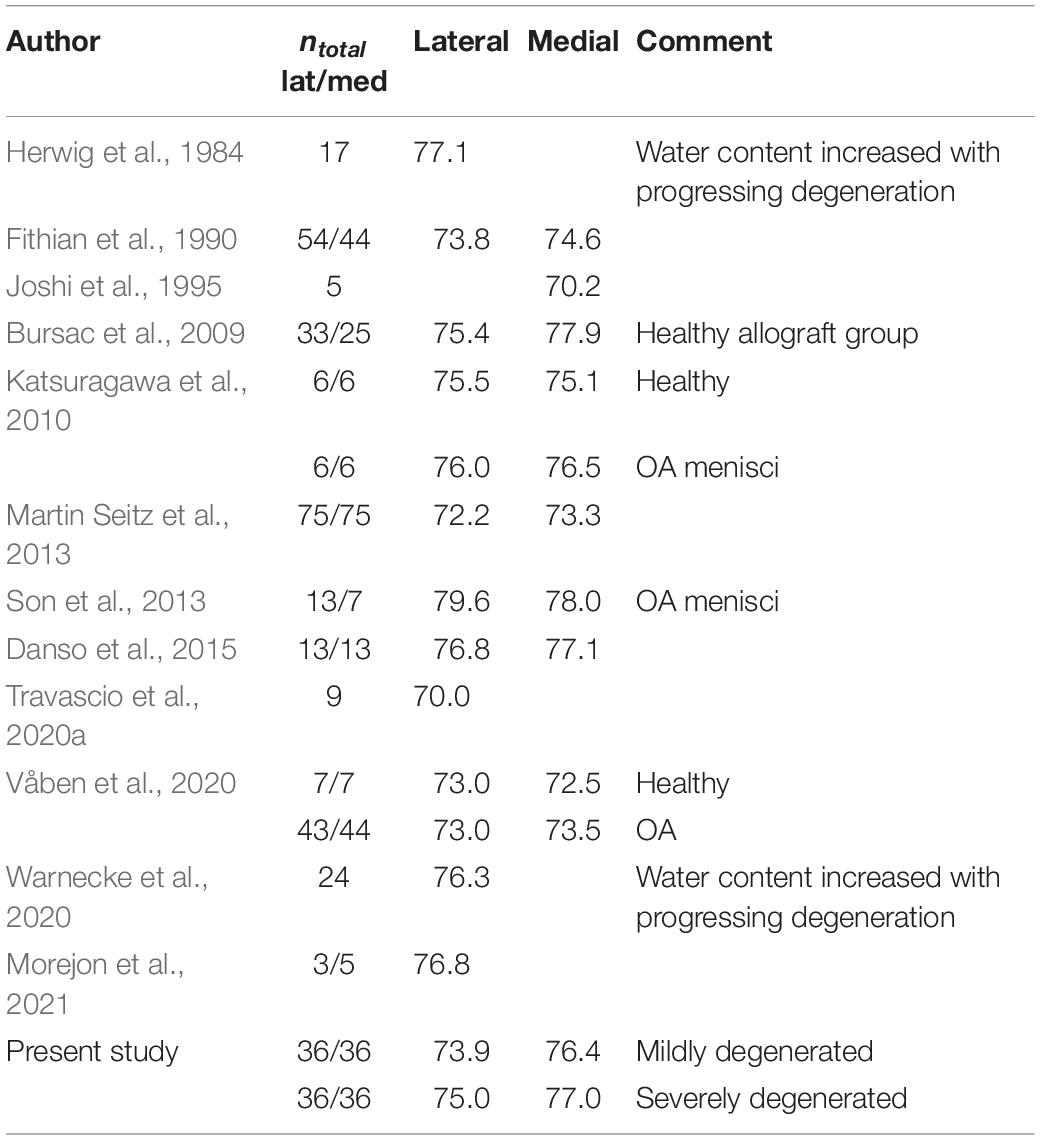

Water Content

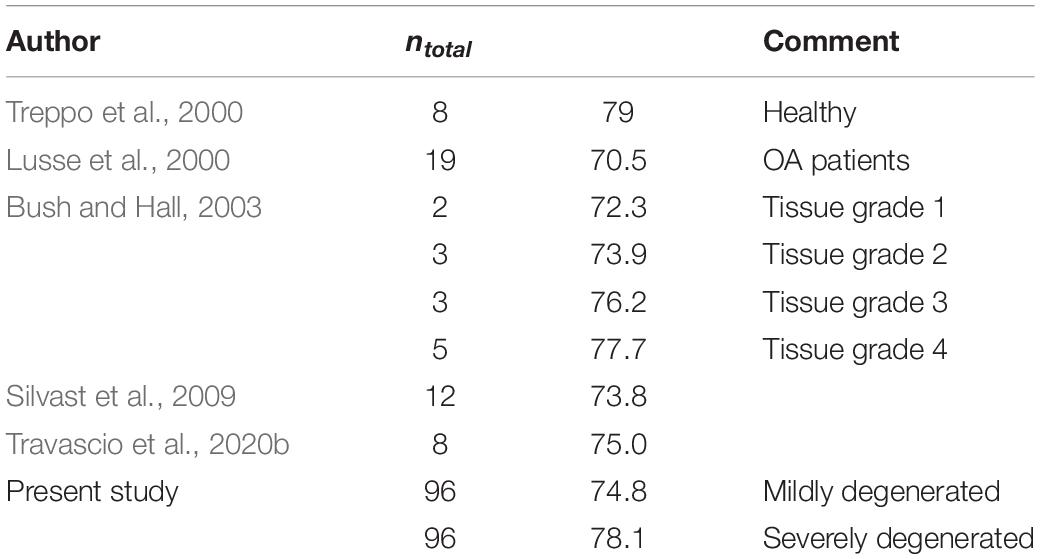

The water content found in the present study was comparable to the data presented in previous studies, indicating a mean water content ranging from 73.9% for the lateral to 76.4% for the medial mildly degenerated menisci (Table 5). Additionally, regarding the severely degenerated meniscal tissue, we were also able to confirm the results of Herwig et al. (1984), Katsuragawa et al. (2010), Son et al. (2013), Våben et al. (2020), and Warnecke et al. (2020), who found an increased water content in more degenerated menisci. However, similar to the literature, our findings also indicated that this water content increase was significant for neither the medial nor the lateral menisci.

Regarding the water content of the tibial AC, we found an increase of approximately 3% with progressing degeneration. This is in agreement with the findings of Bush and Hall (2003), who found an identical increase in the water content of degenerating tibial AC. In general, the water content in the present study fitted well with the previously published values of human tibial AC (Table 6).
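For orientation, a water content in percent is commonly derived gravimetrically from the wet and dry mass of a sample. The sketch below assumes this conventional definition relative to the wet mass; the exact procedure of the present study is described in its methods and is not restated here, and the function name `water_content_percent` and the masses are hypothetical.

```python
def water_content_percent(wet_mass_mg, dry_mass_mg):
    """Water content as a percentage of the wet mass (assumed gravimetric definition)."""
    if wet_mass_mg <= 0:
        raise ValueError("wet mass must be positive")
    if not 0 <= dry_mass_mg <= wet_mass_mg:
        raise ValueError("dry mass must lie between 0 and the wet mass")
    return 100.0 * (wet_mass_mg - dry_mass_mg) / wet_mass_mg

# Hypothetical cartilage sample: 40 mg wet, 10 mg after drying.
print(f"{water_content_percent(40.0, 10.0):.1f} % water")
```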

Limitations

Several factors should be considered when interpreting the results of the present study. Firstly, the preparation technique, particularly the confinement of the menisci using a PMMA cast, might have influenced the biomechanical measurements. As with other in vitro indentation setups using cadaveric specimens, this system does not replicate the complex loading behavior of the knee joint, and in particular does not reproduce the circumferential stress induced during physiological loading. Therefore, the indentation properties of the menisci obtained here do not provide bulk properties of the entire tissue and are mainly influenced by the superficial layer and the locally defined region around the indenter. It is possible that, at the time point when an indentation test was performed, the amount of collagen, fluid, or calcification around the indenter influenced the measured biomechanical properties. Even so, during extensive pretesting, we observed a very high reproducibility (>96%) of the measured biomechanical properties.

The setup data to perform the biomechanical mapping, based on the indentation, including the indentation depth and velocity and relaxation time, were not based on physiological strains or loads. Instead, we adapted these setup data from a prior study (Sim et al., 2017) in order to be comparable to the literature. In contrast to the AC mapping setup data, there were no data available in the literature for meniscal mapping. During pretests using both porcine and human menisci, we identified the indentation amplitude and velocity to be the most critical parameters to achieve convergence. Furthermore, the anatomical subregions were based on a standardized method, which did not consider individual anatomy. Firstly, the menisci and meniscus-covered regions at the associated tibial plateau were equally separated into the AH, PI, and PH. This division was based on the middle point between the respective anterior and posterior meniscal attachments. Secondly, the meniscus-covered and uncovered regions were defined in a static position, although the menisci significantly translate on the tibial plateau during knee flexion (Scholes et al., 2015). Both these factors, together with the individual donor variabilities (gender, age, BMI, and knee condition), might explain the deviation in the results between the meniscus-covered regions and the CtC area in the present study.

Another limitation is related to the group grading, which was based on the commonly used clinical radiographic KL scores. Because the KL score rates the knee as a single entity, knees might have been assigned to the severely degenerated group on the basis of a severely degenerated medial compartment while the lateral compartment remained in good condition, potentially leading to a scattered range of results within one group. Therefore, it might be beneficial for future studies to divide and group the compartments of each knee joint separately. However, the consecutively conducted macroscopic Pauli scoring of the menisci corroborated the initial degeneration state of the knee joints.

Conclusion and Outlook

With progressing OA degeneration, we identified a significant increase of both the elastic (IM and Pmax) and viscous (Et20) properties of the lateral and medial menisci, while the relaxation percentage and the water content were not affected. This can be interpreted to mean that the matrix of the menisci becomes stiffer with progressing degeneration while the relaxation behavior seems to be less impaired. In contrast, the elastic properties (IM and Pmax) of the adjacent tibial cartilage decreased only in the medial compartment. Therefore, we can conclude that the medial compartment of the knee joints investigated here was much more affected by degenerative changes than the lateral compartment. This coincides with the fact that OA-related medial gonarthrosis is much more frequent than lateral gonarthrosis (Wise et al., 2012). Furthermore, this suggests that the lateral compartments were in an earlier stage of joint degeneration, with the AC still unaffected but the menisci already impaired. Therefore, the results of this biomechanical study suggest that the menisci potentially degenerate earlier than the adjacent AC.

In particular, localization-dependent meniscal stiffening might be attributed to a degeneration-induced crystallization of calcium phosphate within the menisci (Bocher et al., 1965; Hubert et al., 2020). To elucidate the hypothesized crystallization process of the degenerating menisci and their associated role in the degeneration process of the adjacent AC, we are currently working to obtain other knee scores and perform histological analyses and structural quantifications of the collagen and proteoglycan contents. Should our biomechanical findings be reinforced by these additional analyses, the diagnostic paradigms and treatment of OA might be changed, with the focus on the early detection of meniscal degeneration and its respective treatment with the final aim to delay OA onset. Furthermore, the material parameters could be used in combination with finite element programs with automatic optimization subroutines (e.g., simple biphasic model in FEBio) (Maas et al., 2012). This information will be extremely valuable to computational biomechanists and could enrich the current knowledge of the scientific community.

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation.

Ethics Statement

The studies involving human participants were reviewed and approved by IRB No. 70/16, Ulm University, Germany. The patients/participants provided their written informed consent to participate in this study.

Author Contributions

AS, FO, and JS performed the preparation procedure, mechanical testing, data analysis, and statistics. AS drafted the manuscript. DW assisted in the mechanical testing. MF and MS performed clinical analyses. AI and DW participated in the design and coordination of the study. LD and FO conceived the study and helped in drafting the manuscript. All authors read and approved the final manuscript.

Funding

This study was funded by the German Research Foundation (DFG DU254/10-1).

Conflict of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We would like to thank Patrizia Horny from the Institute of Orthopedic Research and Biomechanics Ulm for her art design support.

Supplementary Material

The Supplementary Material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/fbioe.2021.659989/full#supplementary-material

References

Akizuki, S., Mow, V. C., Muller, F., Pita, J. C., Howell, D. S., and Manicourt, D. H. (1986). Tensile properties of human knee joint cartilage: I. Influence of ionic conditions, weight bearing, and fibrillation on the tensile modulus. J. Orthop. Res. 4, 379–392. doi: 10.1002/jor.1100040401

Andriacchi, T. P., Mundermann, A., Smith, R. L., Alexander, E. J., Dyrby, C. O., and Koo, S. (2004). A framework for the in vivo pathomechanics of osteoarthritis at the knee. Ann. Biomed. Eng. 32, 447–457.

Bamac, B., Ozdemir, S., Sarisoy, H. T., Colak, T., Ozbek, A., and Akansel, G. (2006). Evaluation of medial and lateral meniscus thicknesses in early osteoarthritis of the knee with magnetic resonance imaging. Saudi Med. J. 27, 854–857.

Bhattacharyya, T., Gale, D., Dewire, P., Totterman, S., Gale, M. E., Mclaughlin, S., et al. (2003). The clinical importance of meniscal tears demonstrated by magnetic resonance imaging in osteoarthritis of the knee. J. Bone Joint Surg. Am. 85, 4–9. doi: 10.2106/00004623-200301000-00002

Bocher, J., Mankin, H. J., Berk, R. N., and Rodnan, G. P. (1965). Prevalence of calcified meniscal cartilage in elderly persons. N. Engl. J. Med. 272, 1093–1097. doi: 10.1056/nejm196505272722103

Buck, R. J., Wirth, W., Dreher, D., Nevitt, M., and Eckstein, F. (2013). Frequency and spatial distribution of cartilage thickness change in knee osteoarthritis and its relation to clinical and radiographic covariates - data from the osteoarthritis initiative. Osteoarthr. Cartil. 21, 102–109. doi: 10.1016/j.joca.2012.10.010

Buck, R. J., Wyman, B. T., Le Graverand, M. P., Hudelmaier, M., Wirth, W., Eckstein, F., et al. (2010). Osteoarthritis may not be a one-way-road of cartilage loss–comparison of spatial patterns of cartilage change between osteoarthritic and healthy knees. Osteoarthr. Cartil. 18, 329–335. doi: 10.1016/j.joca.2009.11.009

Bursac, P., York, A., Kuznia, P., Brown, L. M., and Arnoczky, S. P. (2009). Influence of donor age on the biomechanical and biochemical properties of human meniscal allografts. Am. J. Sports Med. 37, 884–889. doi: 10.1177/0363546508330140

Bush, P. G., and Hall, A. C. (2003). The volume and morphology of chondrocytes within non-degenerate and degenerate human articular cartilage. Osteoarthr. Cartil. 11, 242–251. doi: 10.1016/s1063-4584(02)00369-2

Chia, H. N., and Hull, M. L. (2008). Compressive moduli of the human medial meniscus in the axial and radial directions at equilibrium and at a physiological strain rate. J. Orthop. Res. 26, 951–956. doi: 10.1002/jor.20573

Chu, C. R., Williams, A. A., Coyle, C. H., and Bowers, M. E. (2012). Early diagnosis to enable early treatment of pre-osteoarthritis. Arthritis Res. Ther. 14:212. doi: 10.1186/ar3845

Coluccino, L., Peres, C., Gottardi, R., Bianchini, P., Diaspro, A., and Ceseracciu, L. (2017). Anisotropy in the viscoelastic response of knee meniscus cartilage. J. Appl. Biomater. Funct. Mater. 15, e77–e83.

Cooper, C., Snow, S., Mcalindon, T. E., Kellingray, S., Stuart, B., Coggon, D., et al. (2000). Risk factors for the incidence and progression of radiographic knee osteoarthritis. Arthritis Rheum 43, 995–1000. doi: 10.1002/1529-0131(200005)43:5<995::aid-anr6>3.0.co;2-1

Cross, W. W. III, Saleh, K. J., Wilt, T. J., and Kane, R. L. (2006). Agreement about indications for total knee arthroplasty. Clin. Orthop. Relat. Res. 446, 34–39. doi: 10.1097/01.blo.0000214436.49527.5e

Danso, E. K., Makela, J. T., Tanska, P., Mononen, M. E., Honkanen, J. T., Jurvelin, J. S., et al. (2015). Characterization of site-specific biomechanical properties of human meniscus-Importance of collagen and fluid on mechanical nonlinearities. J. Biomech. 48, 1499–1507. doi: 10.1016/j.jbiomech.2015.01.048

Danso, E. K., Oinas, J. M. T., Saarakkala, S., Mikkonen, S., Toyras, J., and Korhonen, R. K. (2017). Structure-function relationships of human meniscus. J. Mech. Behav. Biomed. Mater. 67, 51–60.

Deneweth, J. M., Newman, K. E., Sylvia, S. M., Mclean, S. G., and Arruda, E. M. (2013). Heterogeneity of tibial plateau cartilage in response to a physiological compressive strain rate. J. Orthop. Res. 31, 370–375. doi: 10.1002/jor.22226

Down, C., Xu, Y., Osagie, L. E., and Bostrom, M. P. G. (2011). The lack of correlation between radiographic findings and cartilage integrity. J. Arthroplast. 26, 949–954. doi: 10.1016/j.arth.2010.09.007

Ebrahimi, M., Turunen, M. J., Finnila, M. A., Joukainen, A., Kroger, H., Saarakkala, S., et al. (2020). Structure-function relationships of healthy and osteoarthritic human tibial cartilage: experimental and numerical investigation. Ann. Biomed. Eng. 48, 2887–2900. doi: 10.1007/s10439-020-02559-0

Englund, M. (2009). The role of the meniscus in osteoarthritis genesis. Med. Clin. North Am. 93, 37–43. doi: 10.1016/j.mcna.2008.08.005

Englund, M. (2010). The role of biomechanics in the initiation and progression of OA of the knee. Best Pract. Res. Clin. Rheumatol. 24, 39–46. doi: 10.1016/j.berh.2009.08.008

Englund, M., Guermazi, A., and Lohmander, S. L. (2009). The role of the meniscus in knee osteoarthritis: a cause or consequence? Radiol. Clin. North Am. 47, 703–712. doi: 10.1016/j.rcl.2009.03.003

Englund, M., Roemer, F. W., Hayashi, D., Crema, M. D., and Guermazi, A. (2012). Meniscus pathology, osteoarthritis and the treatment controversy. Nat. Rev. Rheumatol. 8, 412–419. doi: 10.1038/nrrheum.2012.69

Erbagci, H., Gumusburun, E., Bayram, M., Karakurum, G., and Sirikci, A. (2004). The normal menisci: in vivo MRI measurements. Surg. Radiol. Anat. 26, 28–32. doi: 10.1007/s00276-003-0182-2

Faber, S. C., Eckstein, F., Lukasz, S., Muhlbauer, R., Hohe, J., Englmeier, K. H., et al. (2001). Gender differences in knee joint cartilage thickness, volume and articular surface areas: assessment with quantitative three-dimensional MR imaging. Skeletal Radiol. 30, 144–150. doi: 10.1007/s002560000320

Faul, F., Erdfelder, E., Lang, A. G., and Buchner, A. (2007). G*Power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behav. Res. Methods 39, 175–191. doi: 10.3758/bf03193146

Fischenich, K. M., Lewis, J., Kindsfater, K. A., Bailey, T. S., and Haut Donahue, T. L. (2015). Effects of degeneration on the compressive and tensile properties of human meniscus. J. Biomech. 48, 1407–1411. doi: 10.1016/j.jbiomech.2015.02.042

Fithian, D. C., Kelly, M. A., and Mow, V. C. (1990). Material properties and structure-function relationships in the menisci. Clin. Orthop. Relat. Res. 252, 19–31.

Fox, A. J., Bedi, A., and Rodeo, S. A. (2012). The basic science of human knee menisci: structure, composition, and function. Sports Health 4, 340–351. doi: 10.1177/1941738111429419

Guilak, F. (2011). Biomechanical factors in osteoarthritis. Best Pract. Res. Clin. Rheumatol. 25, 815–823. doi: 10.1016/j.berh.2011.11.013

Hadjab, I., Sim, S., Karhula, S. S., Kauppinen, S., Garon, M., Quenneville, E., et al. (2018). Electromechanical properties of human osteoarthritic and asymptomatic articular cartilage are sensitive and early detectors of degeneration. Osteoarthr. Cartil. 26, 405–413. doi: 10.1016/j.joca.2017.12.002

Hayes, W. C., Keer, L. M., Herrmann, G., and Mockros, L. F. (1972). A mathematical analysis for indentation tests of articular cartilage. J. Biomech. 5, 541–551. doi: 10.1016/0021-9290(72)90010-3

Herwig, J., Egner, E., and Buddecke, E. (1984). Chemical changes of human knee joint menisci in various stages of degeneration. Ann. Rheum. Dis. 43, 635–640. doi: 10.1136/ard.43.4.635

Hochberg, M. C., Lethbridge-Cejku, M., Scott, W. W. Jr., Reichle, R., Plato, C. C., and Tobin, J. D. (1995). The association of body weight, body fatness and body fat distribution with osteoarthritis of the knee: data from the Baltimore Longitudinal Study of Aging. J. Rheumatol. 22, 488–493.

Horbert, V., Lange, M., Reuter, T., Hoffmann, M., Bischoff, S., Borowski, J., et al. (2019). Comparison of near-infrared spectroscopy with needle indentation and histology for the determination of cartilage thickness in the large animal model sheep. Cartilage 10, 173–185. doi: 10.1177/1947603517731851

Hosseini, S. M., Veldink, M. B., Ito, K., and Van Donkelaar, C. (2013). Is collagen fiber damage the cause of early softening in articular cartilage? Osteoarthr. Cartil. 21, 136–143. doi: 10.1016/j.joca.2012.09.002

Hubert, J., Beil, F. T., Rolvien, T., Butscheidt, S., Hischke, S., Puschel, K., et al. (2020). Cartilage calcification is associated with histological degeneration of the knee joint: a highly prevalent, age-independent systemic process. Osteoarthr. Cartil. 28, 1351–1361. doi: 10.1016/j.joca.2020.04.020

Hunter, D. J., Niu, J. B., Zhang, Y., Lavalley, M., Mclennan, C. E., Hudelmaier, M., et al. (2008). Premorbid knee osteoarthritis is not characterised by diffuse thinness: the Framingham Osteoarthritis Study. Ann. Rheum. Dis. 67, 1545–1549. doi: 10.1136/ard.2007.076810

Hunter, D. J., Zhang, Y. Q., Niu, J. B., Tu, X., Amin, S., Clancy, M., et al. (2006). The association of meniscal pathologic changes with cartilage loss in symptomatic knee osteoarthritis. Arthritis Rheum. 54, 795–801. doi: 10.1002/art.21724

Jackson, B. D., Wluka, A. E., Teichtahl, A. J., Morris, M. E., and Cicuttini, F. M. (2004). Reviewing knee osteoarthritis: a biomechanical perspective. J. Sci. Med. Sport 7, 347–357. doi: 10.1016/s1440-2440(04)80030-6

Joshi, M. D., Suh, J. K., Marui, T., and Woo, S. L. (1995). Interspecies variation of compressive biomechanical properties of the meniscus. J. Biomed. Mater. Res. 29, 823–828. doi: 10.1002/jbm.820290706

Jurvelin, J. S., Rasanen, T., Kolmonen, P., and Lyyra, T. (1995). Comparison of optical, needle probe and ultrasonic techniques for the measurement of articular cartilage thickness. J. Biomech. 28, 231–235. doi: 10.1016/0021-9290(94)00060-h

Katsuragawa, Y., Saitoh, K., Tanaka, N., Wake, M., Ikeda, Y., Furukawa, H., et al. (2010). Changes of human menisci in osteoarthritic knee joints. Osteoarthr. Cartil. 18, 1133–1143. doi: 10.1016/j.joca.2010.05.017

Kellgren, J. H., and Lawrence, J. S. (1957). Radiological assessment of osteo-arthrosis. Ann. Rheum. Dis. 16, 494–502. doi: 10.1136/ard.16.4.494

Korhonen, R. K., Laasanen, M. S., Toyras, J., Rieppo, J., Hirvonen, J., Helminen, H. J., et al. (2002). Comparison of the equilibrium response of articular cartilage in unconfined compression, confined compression and indentation. J. Biomech. 35, 903–909. doi: 10.1016/s0021-9290(02)00052-0

Kwok, J., Grogan, S., Meckes, B., Arce, F., Lal, R., and D’lima, D. (2014). Atomic force microscopy reveals age-dependent changes in nanomechanical properties of the extracellular matrix of native human menisci: implications for joint degeneration and osteoarthritis. Nanomedicine 10, 1777–1785. doi: 10.1016/j.nano.2014.06.010

Loeser, R. F., Goldring, S. R., Scanzello, C. R., and Goldring, M. B. (2012). Osteoarthritis: a disease of the joint as an organ. Arthritis Rheum. 64, 1697–1707. doi: 10.1002/art.34453

Lohmander, L. S., Englund, P. M., Dahl, L. L., and Roos, E. M. (2007). The long-term consequence of anterior cruciate ligament and meniscus injuries: osteoarthritis. Am. J. Sports Med. 35, 1756–1769. doi: 10.1177/0363546507307396

Lusse, S., Claassen, H., Gehrke, T., Hassenpflug, J., Schunke, M., Heller, M., et al. (2000). Evaluation of water content by spatially resolved transverse relaxation times of human articular cartilage. Magn. Reson. Imaging 18, 423–430. doi: 10.1016/s0730-725x(99)00144-7

Maas, S. A., Ellis, B. J., Ateshian, G. A., and Weiss, J. A. (2012). FEBio: finite elements for biomechanics. J. Biomech. Eng. 134:011005.

Mainil-Varlet, P., Aigner, T., Brittberg, M., Bullough, P., Hollander, A., Hunziker, E., et al. (2003). Histological assessment of cartilage repair: a report by the histology endpoint committee of the international cartilage repair society (ICRS). J. Bone Joint Surg. Am. 85-A (Suppl. 2), 45–57. doi: 10.2106/00004623-200300002-00007

Mankin, H. J., Johnson, M. E., and Lippiello, L. (1981). Biochemical and metabolic abnormalities in articular cartilage from osteoarthritic human hips. III. Distribution and metabolism of amino sugar-containing macromolecules. J. Bone Joint Surg. Am. 63, 131–139. doi: 10.2106/00004623-198163010-00017

Marchiori, G., Berni, M., Boi, M., and Filardo, G. (2019). Cartilage mechanical tests: evolution of current standards for cartilage repair and tissue engineering. A literature review. Clin. Biomech. (Bristol, Avon) 68, 58–72. doi: 10.1016/j.clinbiomech.2019.05.019

Martin Seitz, A., Galbusera, F., Krais, C., Ignatius, A., and Durselen, L. (2013). Stress-relaxation response of human menisci under confined compression conditions. J. Mech. Behav. Biomed. Mater. 26, 68–80. doi: 10.1016/j.jmbbm.2013.05.027

Morejon, A., Norberg, C. D., De Rosa, M., Best, T. M., Jackson, A. R., and Travascio, F. (2021). Compressive properties and hydraulic permeability of human meniscus: relationships with tissue structure and composition. Front. Bioeng. Biotechnol. 8:622552. doi: 10.3389/fbioe.2020.622552

Moyer, J. T., Abraham, A. C., and Haut Donahue, T. L. (2012). Nanoindentation of human meniscal surfaces. J. Biomech. 45, 2230–2235. doi: 10.1016/j.jbiomech.2012.06.017

Moyer, J. T., Priest, R., Bouman, T., Abraham, A. C., and Haut Donahue, T. L. (2013). Indentation properties and glycosaminoglycan content of human menisci in the deep zone. Acta Biomater. 9, 6624–6629. doi: 10.1016/j.actbio.2012.12.033

Neogi, T. (2013). The epidemiology and impact of pain in osteoarthritis. Osteoarthr. Cartil. 21, 1145–1153. doi: 10.1016/j.joca.2013.03.018

Park, J. Y., Kim, J. K., Cheon, J. E., Lee, M. C., and Han, H. S. (2020). Meniscus stiffness measured with shear wave elastography is correlated with meniscus degeneration. Ultrasound Med. Biol. 46, 297–304. doi: 10.1016/j.ultrasmedbio.2019.10.014

Pauli, C., Grogan, S. P., Patil, S., Otsuki, S., Hasegawa, A., Koziol, J., et al. (2011). Macroscopic and histopathologic analysis of human knee menisci in aging and osteoarthritis. Osteoarthr. Cartil. 19, 1132–1141. doi: 10.1016/j.joca.2011.05.008

Pflieger, I., Stolberg-Stolberg, J., Foehr, P., Kuntz, L., Tubel, J., Grosse, C. U., et al. (2019). Full biomechanical mapping of the ovine knee joint to determine creep-recovery, stiffness and thickness variation. Clin. Biomech. (Bristol, Avon) 67, 1–7. doi: 10.1016/j.clinbiomech.2019.04.015

Pordzik, J., Bernstein, A., Mayr, H. O., Latorre, S. H., Maks, A., Schmal, H., et al. (2020a). Analysis of proteoglycan content and biomechanical properties in arthritic and arthritis-free menisci. Appl. Sci. (Basel) 10:9012. doi: 10.3390/app10249012

Pordzik, J., Bernstein, A., Watrinet, J., Mayr, H. O., Latorre, S. H., Schmal, H., et al. (2020b). Correlation of biomechanical alterations under gonarthritis between overlying menisci and articular cartilage. Appl. Sci. (Basel) 10:8673. doi: 10.3390/app10238673

Ruiz, D. Jr., Koenig, L., Dall, T. M., Gallo, P., Narzikul, A., Parvizi, J., et al. (2013). The direct and indirect costs to society of treatment for end-stage knee osteoarthritis. J. Bone Joint Surg. Am. 95, 1473–1480. doi: 10.2106/jbjs.l.01488

Ryd, L., Brittberg, M., Eriksson, K., Jurvelin, J. S., Lindahl, A., Marlovits, S., et al. (2015). Pre-Osteoarthritis: definition and diagnosis of an elusive clinical entity. Cartilage 6, 156–165. doi: 10.1177/1947603515586048

Scholes, C., Houghton, E. R., Lee, M., and Lustig, S. (2015). Meniscal translation during knee flexion: what do we really know? Knee Surg. Sports Traumatol. Arthrosc. 23, 32–40. doi: 10.1007/s00167-013-2482-3

Seidenstuecker, M., Watrinet, J., Bernstein, A., Suedkamp, N. P., Latorre, S. H., Maks, A., et al. (2019). Viscoelasticity and histology of the human cartilage in healthy and degenerated conditions of the knee. J. Orthop. Surg. Res. 14:256.

Setton, L. A., Elliott, D. M., and Mow, V. C. (1999). Altered mechanics of cartilage with osteoarthritis: human osteoarthritis and an experimental model of joint degeneration. Osteoarthr. Cartil. 7, 2–14. doi: 10.1053/joca.1998.0170

Silvast, T. S., Jurvelin, J. S., Lammi, M. J., and Toyras, J. (2009). pQCT study on diffusion and equilibrium distribution of iodinated anionic contrast agent in human articular cartilage: associations to matrix composition and integrity. Osteoarthr. Cartil. 17, 26–32. doi: 10.1016/j.joca.2008.05.012

Sim, S., Chevrier, A., Garon, M., Quenneville, E., Lavigne, P., Yaroshinsky, A., et al. (2017). Electromechanical probe and automated indentation maps are sensitive techniques in assessing early degenerated human articular cartilage. J. Orthop. Res. 35, 858–867. doi: 10.1002/jor.23330

Sim, S., Chevrier, A., Garon, M., Quenneville, E., Yaroshinsky, A., Hoemann, C. D., et al. (2014). Non-destructive electromechanical assessment (Arthro-BST) of human articular cartilage correlates with histological scores and biomechanical properties. Osteoarthr. Cartil. 22, 1926–1935. doi: 10.1016/j.joca.2014.08.008

Son, M., Goodman, S. B., Chen, W., Hargreaves, B. A., Gold, G. E., and Levenston, M. E. (2013). Regional variation in T1rho and T2 times in osteoarthritic human menisci: correlation with mechanical properties and matrix composition. Osteoarthr. Cartil. 21, 796–805. doi: 10.1016/j.joca.2013.03.002

Stefanik, J. J., Guermazi, A., Roemer, F. W., Peat, G., Niu, J., Segal, N. A., et al. (2016). Changes in patellofemoral and tibiofemoral joint cartilage damage and bone marrow lesions over 7 years: the Multicenter Osteoarthritis Study. Osteoarthr. Cartil. 24, 1160–1166. doi: 10.1016/j.joca.2016.01.981

Swann, A. C., and Seedhom, B. B. (1993). The stiffness of normal articular cartilage and the predominant acting stress levels: implications for the aetiology of osteoarthrosis. Br. J. Rheumatol. 32, 16–25. doi: 10.1093/rheumatology/32.1.16

Takroni, T., Laouar, L., Adesida, A., Elliott, J. A., and Jomha, N. M. (2016). Anatomical study: comparing the human, sheep and pig knee meniscus. J. Exp. Orthop. 3:35.

Temple-Wong, M. M., Bae, W. C., Chen, M. Q., Bugbee, W. D., Amiel, D., Coutts, R. D., et al. (2009). Biomechanical, structural, and biochemical indices of degenerative and osteoarthritic deterioration of adult human articular cartilage of the femoral condyle. Osteoarthr. Cartil. 17, 1469–1476. doi: 10.1016/j.joca.2009.04.017

Travascio, F., Devaux, F., Volz, M., and Jackson, A. R. (2020a). Molecular and macromolecular diffusion in human meniscus: relationships with tissue structure and composition. Osteoarthr. Cartil. 28, 375–382. doi: 10.1016/j.joca.2019.12.006

Travascio, F., Valladares-Prieto, S., and Jackson, A. R. (2020b). Effects of solute size and tissue composition on molecular and macromolecular diffusivity in human knee cartilage. Osteoarthr. Cartil. Open 2:100087. doi: 10.1016/j.ocarto.2020.100087

Treppo, S., Koepp, H., Quan, E. C., Cole, A. A., Kuettner, K. E., and Grodzinsky, A. J. (2000). Comparison of biomechanical and biochemical properties of cartilage from human knee and ankle pairs. J. Orthop. Res. 18, 739–748. doi: 10.1002/jor.1100180510

Tsujii, A., Nakamura, N., and Horibe, S. (2017). Age-related changes in the knee meniscus. Knee 24, 1262–1270. doi: 10.1016/j.knee.2017.08.001

Våben, C., Heinemeier, K. M., Schjerling, P., Olsen, J., Petersen, M. M., Kjaer, M., et al. (2020). No detectable remodelling in adult human menisci: an analysis based on the C14 bomb pulse. Br. J. Sports Med. 54, 1433–1437. doi: 10.1136/bjsports-2019-101360