- 1 Department of Neurosurgery, Graduate School of Medicine, Osaka University, Suita, Japan

- 2 Department of Neuroinformatics, ATR Computational Neuroscience Laboratories, Seika-cho, Japan

- 3 Center for Information and Neural Networks, National Institute of Information and Communications Technology, Suita, Japan

- 4 Institute for Advanced Co-Creation Studies, Osaka University, Suita, Japan

- 5 Endowed Research Department of Clinical Neuroengineering, Global Center for Medical Engineering and Informatics, Osaka University, Suita, Japan

- 6 Department of Mechanical Engineering and Intelligent Systems, University of Electro-Communications, Chofu, Japan

- 7 Department of Neuromodulation and Neurosurgery, Graduate School of Medicine, Osaka University, Suita, Japan

- 8 Graduate School of Informatics, Kyoto University, Kyoto, Japan

Objective: Brain-machine interfaces (BMIs) are useful for inducing plastic changes in cortical representation. A BMI first decodes hand movements using cortical signals and then converts the decoded information into movements of a robotic hand. By using the BMI robotic hand, the cortical representation decoded by the BMI is modulated to improve decoding accuracy. We developed a BMI based on real-time magnetoencephalography (MEG) signals to control a robotic hand using decoded hand movements. Subjects were trained to use the BMI robotic hand freely for 10 min to evaluate plastic changes in the cortical representation due to the training.

Method: We trained nine young healthy subjects with normal motor function. In open-loop conditions, they were instructed to grasp or open their right hands during MEG recording. Time-averaged MEG signals were then used to train a real decoder to control the robotic hand in real time. Then, subjects were instructed to control the BMI robotic hand by moving their right hands for 10 min while watching the robot's movement. During this closed-loop session, subjects tried to improve their ability to control the robot. Finally, subjects performed the same offline task to compare cortical activities related to the hand movements. As a control, we used a random decoder trained on the MEG signals with shuffled movement labels. We performed the same experiments with the random decoder as a crossover trial. To evaluate the cortical representation, cortical currents were estimated using a source localization technique. Hand movements were also decoded by a support vector machine using the MEG signals during the offline task. The classification accuracy of the movements was compared among offline tasks.

Results: During the BMI training with the real decoder, the subjects improved their accuracy in controlling the BMI robotic hand, with the correct rate rising from 0.28 ± 0.13 to 0.50 ± 0.11 (p = 0.017, n = 8, paired Student's t-test). Moreover, the classification accuracy of hand movements during the offline task increased significantly after BMI training with the real decoder, from 62.7 ± 6.5 to 70.0 ± 11.1% (p = 0.022, n = 8, t(7) = 2.93, paired Student's t-test), whereas accuracy did not change significantly after BMI training with the random decoder (63.0 ± 8.8 to 66.4 ± 9.0%; p = 0.225, n = 8, t(7) = 1.33).

Conclusion: BMI training is a useful tool to train the cortical activity necessary for BMI control and to induce some plastic changes in the activity.

Introduction

Brain–machine interfaces (BMIs) can reconstruct motor function in paralyzed subjects (Hochberg et al., 2006, 2012; Yanagisawa et al., 2012a; Collinger et al., 2013; Bouton et al., 2016) as well as induce functional alterations in cortical activity (Ganguly et al., 2011; Wander et al., 2013; Orsborn et al., 2014; Yanagisawa et al., 2016). A BMI works by first recording neural activity and then converting the recorded activity into control of some machine, such as a robotic hand or computer (Yanagisawa et al., 2009, 2011, 2012a,b; Nakanishi et al., 2013, 2014; Fukuma et al., 2015, 2016). Recent studies demonstrated that neurofeedback training using BMI induces plastic changes in neural activities in accordance with some functional alterations in the neural system. The neurofeedback of decoded information using functional magnetic resonance imaging (fMRI) demonstrated that the training induced alteration of cortical activities in accordance with alterations in cognition (Shibata et al., 2011, 2016; Amano et al., 2016; Ordikhani-Seyedlar et al., 2016). In addition, using a certain power spectrum of electroencephalographic signals, motor rehabilitation was improved in stroke patients (Shindo et al., 2011; Ramos-Murguialday et al., 2013). Moreover, we recently reported that BMI training to control a robotic hand induced plastic changes in the motor cortical representation of phantom limb pain patients and changed their pain in accordance with the plastic changes (Yanagisawa et al., 2016).

Such plastic changes are attributed to reinforcement learning with the BMI feedback (Watanabe et al., 2017). The closed-loop system with decoded information enables subjects to modulate the decoded information based on the feedback as a reward. Therefore, we expect that training to use a BMI based on the decoding information would improve the decoding accuracy better than training to use a BMI that is not based on the decoding information.

In this study, we demonstrate that BMIs based on magnetoencephalography (MEG) signals precisely decode hand movements in real time (Bradberry et al., 2009; Toda et al., 2011; Fukuma et al., 2015) and that training to use the BMIs induces plastic changes in the cortical activity of healthy subjects (Nishimura et al., 2013; Clancy et al., 2014; Luu et al., 2017), especially in the accuracy of decoding hand movements.

Subjects and Methods

Subjects

Nine young right-handed volunteers with normal neurological function (2 males and 7 females; mean age, 24.1 years; range, 21–30 years) participated in this study. The study adhered to the Declaration of Helsinki and was performed in accordance with protocols approved by the Ethics Committee of Osaka University Clinical Trial Center (no. 12107, UMIN000010180). All participants were informed of the purpose and possible consequences of this study, and written informed consent was obtained. We recruited subjects aged 20 years and older with normal neurological functioning. Inclusion criteria did not consider gender, race or any special experience.

MEG Recording

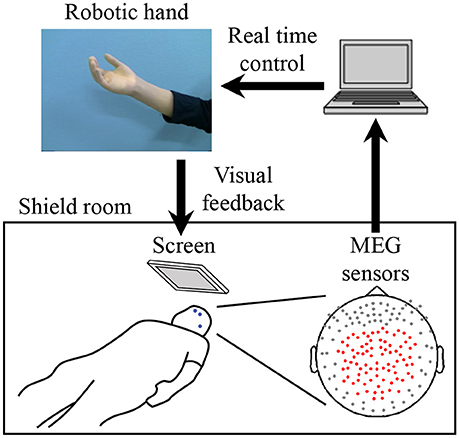

For the MEG recording, subjects were in the supine position with the head centered in the gantry. A projection screen in front of the face provided stimuli using a visual stimulus presentation system (Presentation; Neurobehavioral Systems, Albany, CA, USA) and a liquid crystal projector (LVP-HC6800; Mitsubishi Electric, Tokyo, Japan) (Figure 1). MEG signals were measured by a 160-channel whole-head MEG equipped with coaxial-type gradiometers (MEGvision NEO; Yokogawa Electric Corporation, Kanazawa, Japan) housed in a magnetically shielded room.

Figure 1. System overview for training to use a robotic hand. MEG signals from 84 parietal sensors (shown with red dots) were acquired in real-time to decode performed movement. The robotic hand was controlled according to the results of the decoder. The participant received visual feedback of the robotic hand presented on the screen. Blue dots on the participant's face denote head marker coils used to determine position and orientation of MEG sensors relative to the head. Three marker coils (at the center of the forehead, above the left eyebrow, and on the left preauricular area) are shown.

The MEG signals were sampled at 1,000 Hz with an online low-pass filter at 200 Hz and acquired online by FPGA DAQ boards (PXI-7854R; National Instruments, Austin, TX, USA) after passing through an optical isolation circuit. For the online control of the robotic hand, signals from 84 selected sensors (Figure 1) were used, except for one experiment in which 81 sensors were used for technical reasons. The same 84 sensors were used for offline analysis. Subjects were instructed to not move the head to avoid motion artifacts. A cushion was placed under the elbows to reduce motion artifacts.

Five head marker coils were attached to the subject's face before beginning the MEG recording, to provide the position and orientation of MEG sensors relative to the head (Figure 1). The positions of the five marker coils were measured to evaluate differences in the head position before and after each MEG recording. The maximum acceptable difference was 5 mm.

We also recorded electromyograms of the face and forearm to monitor muscle activities. Subjects were monitored by two video cameras to confirm their arousal.

Experimental Design

A crossover trial consisting of two experiments was performed with a washout period of more than 2 weeks. Each experiment consisted of three tasks: an offline task (pre-BMI), BMI training, and an offline task (post-BMI). In the training task of each experiment, the participant controlled the robotic hand using one of two decoders: a real decoder or a sham decoder. To balance which decoder type was used first, the order of the real and sham decoders was randomized. Subjects were not informed about the order. Seven subjects participated in both experiments, one subject participated only in the experiment with the real decoder, and another participated only in the experiment with the sham decoder.

First, in the pre-BMI offline task, the subjects attempted to move their right hands (grasping and opening) at the presented times (Yanagisawa et al., 2012a) while MEG signals of the selected sensors were recorded (Figure 1). The subjects were visually instructed which movement to perform with the Japanese word for “grasp” or “open.” After the instruction for movement type, four execution cues were given to the subject every 5.5 s. The execution cue was given both visually and aurally, and was presented 40 times for each movement type. The order of the requested movement type was randomized. We instructed the subjects to slightly move the hand once at the cued time, without moving other body parts.

The MEG signals from the selected sensors were recorded during the task (Figure 1) and then time-averaged using windows of 500 ms from −2,000 to 1,000 ms at 100-ms intervals, with respect to the time of the execution cues. The averaged signals were converted into z-scores using the mean and standard deviation estimated from the initial 50 s of the offline task. The resulting z-scores were used to construct the decoder to control the robotic hand (Fukuma et al., 2015).

During the BMI training task, the subjects were instructed to control the robotic hand in real time using the trained decoder. The screen fixed in front of the subject showed a picture of the robotic hand in real time as visual feedback (Figure 1). Subjects were instructed to control the robotic hand freely for 10 min to improve their ability to control it by moving their hands (see Supplementary Video 1). Just before starting the training, the experimenter changed the threshold for detecting movement onset, because the threshold estimated from the offline task was sometimes too low, resulting in the detection of movement onsets even during the resting state in the online task. The other parameters estimated from the offline task were not changed (Fukuma et al., 2016). The selected parameters were fixed for the 10 min of training. After the BMI training task, the post-BMI offline task was performed in the same way as the pre-BMI task.

The BMI training to control the robotic hand was performed as a randomized crossover trial consisting of two training sessions on different days. Each training session used one of two decoders to control the robotic hand: a real decoder or a sham decoder. Using the z-scored MEG sensor signals from the offline task of moving the right hand, we constructed a decoder to infer hand movements at an arbitrary time, in order to control the robotic hand in real time (Fukuma et al., 2015). Each experiment was performed more than 2 weeks after the previous experiment. The order of the experiments with the real and sham decoders was randomly assigned to the subjects. The experimenter was not blinded to the group allocation.

At the time of enrollment in this trial, we instructed the subjects to use their brain activity to control the robotic hand, but they were not informed of the decoder they used.

Decoder to Control the Prosthetic Hand

MATLAB R2013a (Mathworks, Natick, MA, USA) was used to calculate the decoding parameters and for online robotic hand control. First, MEG signals from the 84 selected sensors during the offline task were averaged in a 500-ms time window and converted to z-scores using the mean and standard deviation estimated from the initial 50 s of data during the offline task. The time-averaged MEG signals were calculated for the period from −2,000 to 1,000 ms at 100-ms intervals relative to the execution cue.
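The windowing and z-scoring described above can be sketched as follows. This is a minimal illustration in Python with NumPy, not the MATLAB code used in the study. The function names are hypothetical, and two details are assumptions because the text does not specify them: each window is taken to start at the listed latency, and the baseline mean and standard deviation are computed from the raw samples of the first 50 s.

```python
import numpy as np

FS_HZ = 1000      # sampling rate stated in the text
WIN_MS = 500      # length of each averaging window
STEP_MS = 100     # interval between successive windows
BASELINE_S = 50   # initial segment used for the mean and SD


def baseline_stats(recording, fs_hz=FS_HZ, baseline_s=BASELINE_S):
    """Per-sensor mean and SD over the first `baseline_s` seconds.

    recording: array of shape (n_sensors, n_samples).
    Assumption: statistics are taken over raw samples.
    """
    n = int(baseline_s * fs_hz)
    segment = recording[:, :n]
    return segment.mean(axis=1), segment.std(axis=1, ddof=1)


def windowed_zscores(recording, cue_sample, mean, sd,
                     start_ms=-2000, stop_ms=1000):
    """Time-averaged z-scores around one execution cue.

    Assumption: each window starts at the listed latency.
    Returns an array of shape (n_windows, n_sensors).
    """
    features = []
    for onset_ms in range(start_ms, stop_ms + 1, STEP_MS):
        first = cue_sample + onset_ms * FS_HZ // 1000
        last = first + WIN_MS * FS_HZ // 1000
        averaged = recording[:, first:last].mean(axis=1)
        features.append((averaged - mean) / sd)
    return np.array(features)
```

With latencies from −2,000 to 1,000 ms in 100-ms steps, this yields 31 windows per cue, each holding one z-score per sensor.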

The z-scored signals from the offline task were used to train the online decoder, which consisted of an onset detector and a class decoder, to control the robotic hand online in the following BMI training task. The class decoder was trained by a support vector machine (SVM) at the time of peak classification accuracy in the offline task. The onset detector was trained on the z-scored signals, using SVM and Gaussian process regression (GPR), to differentiate periods of hand movement from periods of rest. The details of the construction of the decoder are available in our previous reports (Fukuma et al., 2015; Yanagisawa et al., 2016).

Here, we constructed two types of online decoders depending on the data used to train the decoder. The real decoder was trained by the MEG signals of the offline task to move the hand. The sham decoder was trained by the MEG signals of the same offline task with randomized types of movements (grasp or open).
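The difference between the two training sets can be illustrated with a short sketch. It assumes that "randomized types of movements" means a random permutation of the grasp/open labels across trials, as the abstract's "shuffled movement labels" suggests. The function name and the seed are hypothetical.

```python
import random


def shuffle_labels(labels, seed=None):
    """Return a permuted copy of the movement labels.

    The permutation keeps the number of 'grasp' and 'open' trials
    unchanged but breaks the link between each trial's MEG features
    and its label.
    """
    rng = random.Random(seed)
    shuffled = list(labels)
    rng.shuffle(shuffled)
    return shuffled


# 40 execution cues per movement type, as in the offline task.
real_labels = ["grasp"] * 40 + ["open"] * 40
sham_labels = shuffle_labels(real_labels, seed=0)
```

A decoder trained on the same features paired with `sham_labels` would still output grasp or open commands, but those outputs would not be expected to track the subject's actual movement type.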

Evaluation of Online BMI Control

The movements of the subject's hand and the robotic hand were evaluated from the video recording. We counted the subject's hand movements. Then, we evaluated the robotic hand movements within 1 s after each movement of the subject's hand. If the robotic hand moved into the same posture (grasp or open) as the subject's hand, we counted the movement as a correctly controlled movement. The correct rate of BMI control was calculated as the number of correctly controlled movements divided by the total number of hand movements. The correct rate was calculated for the 1 min at the beginning and the 1 min at the end of the 10-min training.
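The scoring rule above can be sketched as follows. In the study the events were counted from video, so the timestamped event lists, the function name, and the example values here are hypothetical. The sketch also assumes that a hand movement counts as correct if any robot movement into the matching posture occurs within the 1-s window.

```python
def correct_rate(hand_events, robot_events, window_s=1.0):
    """Fraction of hand movements followed by a matching robot posture.

    hand_events, robot_events: lists of (time_s, posture) tuples,
    where posture is 'grasp' or 'open'.
    """
    if not hand_events:
        raise ValueError("no hand movements to score")
    correct = 0
    for t_hand, posture in hand_events:
        matched = any(
            0.0 <= t_robot - t_hand <= window_s and p_robot == posture
            for t_robot, p_robot in robot_events
        )
        correct += matched
    return correct / len(hand_events)


# Hypothetical events: one match, one wrong posture, one late match, one match.
hand = [(1.0, "grasp"), (4.0, "open"), (8.0, "grasp"), (12.0, "open")]
robot = [(1.4, "grasp"), (4.5, "grasp"), (9.5, "grasp"), (12.2, "open")]
rate = correct_rate(hand, robot)  # 2 of 4 hand movements are matched
```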

Cortical Current Estimation by VBMEG

A polygonal model of the cortical surface was constructed based on structural MRI (T1-weighted; Signa HDxt Excite 3.0T; GE Healthcare UK Ltd., Buckinghamshire, UK) using the Freesurfer software (Martinos Center Software) (Dale et al., 1999). To align MEG data with individual MRI data, we scanned the three-dimensional facial surface and 50 points on the scalp of each participant (FastSCAN Cobra; Polhemus, Colchester, VT, USA). Three-dimensional facial surface data were superimposed on the anatomical facial surface provided by the MRI data. The positions of five marker coils before each recording were used to estimate cortical current with variational Bayesian multimodal encephalography (VBMEG) (Sato et al., 2004). VBMEG is free software for estimating cortical currents from MEG data (ATR Neural Information Analysis Laboratories, Kyoto, Japan; Cohen et al., 1991; Yoshioka et al., 2008). VBMEG estimated 4004 single current dipoles that were equidistantly distributed on and perpendicular to the cortical surface. An inverse filter was calculated to estimate the cortical current of each dipole from the selected 84 MEG sensor signals. The hyperparameters m0 and γ0 were set to 100 and 10, respectively. The inverse filter was estimated by using MEG signals in all trials from 0 to 1000 ms in the offline task, with the baseline of the current variance estimated from the signals from −1,500 to −500 ms. The filter was then applied to sensor signals in each trial to calculate cortical currents.

Evaluation of Cortical Representation

We evaluated the cortical representation during the offline task using cortical current source estimation. First, VBMEG was used to estimate the cortical currents from the obtained MEG signals. Next, the estimated cortical currents were averaged using a 500-ms window starting from the execution cue and compared between two types of movements with a one-way analysis of variance (ANOVA) for each vertex. The F-value of the ANOVA was averaged for all subjects and color-coded on the normalized brain surface.
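The per-vertex comparison can be sketched as below. This is an illustrative NumPy implementation of a one-way ANOVA F-value for two groups, not the analysis code used in the study. The function name and array layout are assumptions.

```python
import numpy as np


def f_values(grasp, open_):
    """One-way ANOVA F-value per vertex for two movement types.

    grasp, open_: arrays of shape (n_trials, n_vertices) holding the
    500-ms time-averaged cortical current of each trial.
    """
    groups = [np.asarray(grasp, dtype=float), np.asarray(open_, dtype=float)]
    n_total = sum(g.shape[0] for g in groups)
    grand_mean = np.concatenate(groups).mean(axis=0)
    # Between-group and within-group sums of squares, one value per vertex.
    ss_between = sum(
        g.shape[0] * (g.mean(axis=0) - grand_mean) ** 2 for g in groups
    )
    ss_within = sum(((g - g.mean(axis=0)) ** 2).sum(axis=0) for g in groups)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)
```

With only two groups, the F-value at each vertex equals the square of the corresponding unpaired t statistic, so a larger F indicates a clearer separation between grasping and opening at that vertex.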

Evaluation of Classification Accuracy of Movement Types in the Offline Task

A nested cross-validation (Quian Quiroga and Panzeri, 2009) was performed with a linear support vector machine using the z-scores of the MEG signals from selected sensors (Fukuma et al., 2015) to evaluate the accuracy of classifying the performed movement types. The z-scores from 11 time windows (ranging from −500 to 500 ms at 100-ms intervals, with respect to the timing of the instruction to move) were concatenated to form a decoding feature. To calculate the classification accuracy, 10-fold cross-validation was applied. For each fold, the testing data set was classified with a decoder that was trained completely independently of the testing data set. To optimize the hyperparameters of the decoder independently of the testing data set, another 10-fold cross-validation was applied to the training data set, and the hyperparameters that achieved the highest classification accuracy within the training data set were selected. Finally, classification accuracies during the two offline tasks before and after the BMI training session were compared using a paired Student's t-test. The significance threshold for this t-test was set to 0.025 because this study employs two t-tests, one for training with the real decoder and another for training with the sham decoder (Bonferroni correction).
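The nested procedure can be sketched with scikit-learn, which is an assumption made for illustration since the study used MATLAB. The candidate values for the SVM regularization parameter `C`, the shuffled stratified folds, and the function name are likewise hypothetical. The inner 10-fold search selects `C` using only the training portion of each outer fold, so the outer test trials never influence hyperparameter selection.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.svm import SVC


def nested_cv_accuracy(X, y, c_grid=(0.01, 0.1, 1.0, 10.0), seed=0):
    """Mean outer-fold accuracy of a linear SVM tuned by inner 10-fold CV.

    X: array of shape (n_trials, n_features), e.g. 84 sensors x 11 windows.
    y: array of shape (n_trials,) with the movement type of each trial.
    """
    inner = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed + 1)
    # GridSearchCV refits the best model on the whole outer training set.
    search = GridSearchCV(SVC(kernel="linear"), {"C": list(c_grid)}, cv=inner)
    scores = cross_val_score(search, X, y, cv=outer)
    return scores.mean()
```

For the offline task described here, `X` would hold one row per execution cue (80 trials) and 84 × 11 = 924 concatenated z-scores per row.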

Results

BMI Training With a Robotic Hand

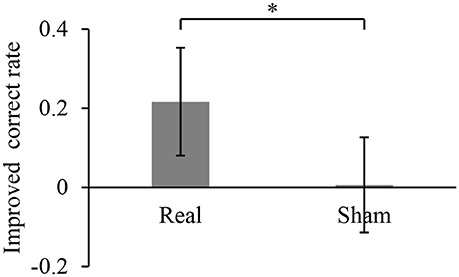

During the 10-min BMI training, the accuracy in controlling the robotic hand improved. The hand movements at an arbitrary timing were successfully detected and classified, with a correct rate of 0.28 ± 0.13 during the first 1 min of the BMI training with the real decoder. The correct rate increased significantly to 0.50 ± 0.11 for the final 1 min of the BMI training (p = 0.017, n = 8, paired Student's t-test). On the other hand, the correct rates during the BMI training with the random decoder did not change significantly between the first 1 min and the last 1 min (0.51 ± 0.13 to 0.52 ± 0.10, p = 0.92, n = 8, paired Student's t-test). Notably, the increase in the correct rate during the BMI training with the real decoder was significantly larger than that during the BMI training with the random decoder (Figure 2). It should also be noted that correct rates during the first 1 min of the BMI training were not significantly different between the BMI trainings with the real decoder and the random decoder (p = 0.11, n = 7, paired Student's t-test).

Figure 2. Improved accuracy of controlling the robotic hand during online BMI training. The correct rate for robotic hand control was calculated for the first 1 min and the last 1 min of the 10-min training. Each bar shows the averaged improvement of the correct rate for the training with the real and sham decoders. Error bars are 95% confidence intervals of the improved correct rate. *p < 0.05 significant difference between two different decoders (unpaired Student's t-test).

BMI Training Changed the Cortical Representation of Hand Movements

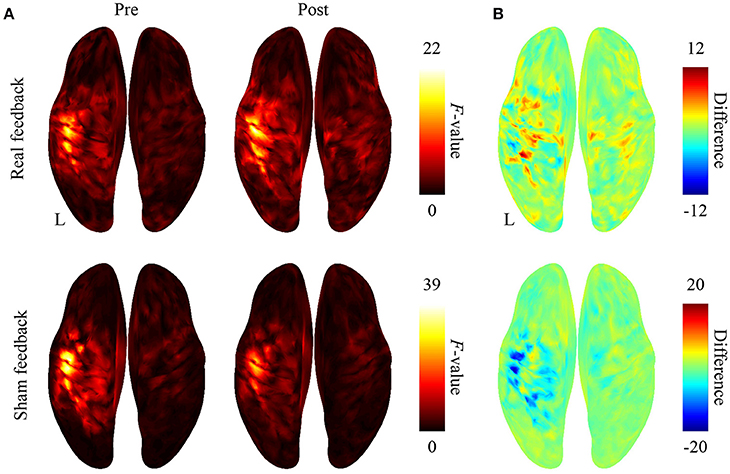

After BMI training with the real decoder, the F-values increased in the contralateral sensorimotor cortex (Figure 3A), although the difference in the F-values (Figure 3B) between pre-BMI and post-BMI offline tasks was not statistically significant (p > 0.05, paired t-test, FDR corrected). After training with the random decoder, the F-value of the contralateral sensorimotor cortex did not increase (Figures 3A,B), although the subjects were instructed in the same way as during the experiment with the real decoder. These findings suggest that the BMI training with the real decoder increased the discriminability of the cortical activity representing the hand movements.

Figure 3. Difference in cortical activation evoked by two types of movements during the offline task. (A) The averaged F-values of one-way ANOVA between 500-ms time-averaged cortical currents estimated during hand grasping or opening were color-coded and plotted on the normalized brain surface. (B) The differences of F-values shown in plot (A) were color-coded on the normalized brain surface.

BMI Training Altered Classification Accuracy of Hand Movements

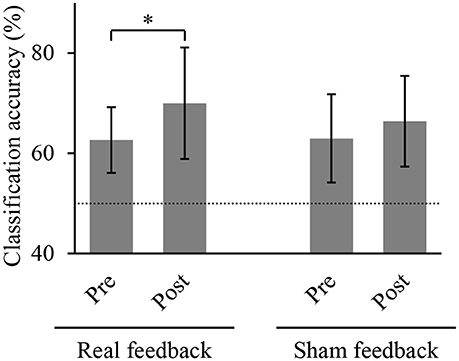

We compared the accuracies for classifying the hand movements using the z-scored MEG signals at the selected sensors. Figure 4 shows the classification accuracies of hand movements in the offline task before and after the training task. The accuracy increased significantly after BMI training with the real decoder, from 62.7 ± 6.5 to 70.0 ± 11.1% (p = 0.022, n = 8, t(7) = 2.93, paired Student's t-test). In contrast, the BMI training with the random decoder did not significantly increase the accuracy (63.0 ± 8.8 to 66.4 ± 9.0%; p = 0.225, n = 8, t(7) = 1.33). Thus, the BMI training with the real decoder significantly improved how well the hand movements could be decoded from the cortical activity.
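The paired comparison reported above can be sketched using only the Python standard library. The function below computes the paired Student's t statistic and its degrees of freedom from per-subject accuracies before and after training. The function name is hypothetical, and no subject-level data from the study are reproduced here. Converting the statistic into a p-value additionally requires the cumulative distribution of Student's t, which this sketch leaves out.

```python
import math
import statistics


def paired_t(pre, post):
    """Paired Student's t statistic and degrees of freedom.

    pre, post: per-subject values in the same subject order.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Standard error of the mean difference (sample SD, n - 1 denominator).
    standard_error = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.mean(diffs) / standard_error, n - 1


BONFERRONI_ALPHA = 0.05 / 2  # two planned t-tests, as stated in the Methods
```

With eight subjects per comparison, the degrees of freedom are 7, matching the reported t(7) values, and each p-value is judged against the corrected threshold of 0.025.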

Figure 4. Classification accuracy of hand movements before and after training. Each bar shows the averaged classification accuracy of hand movements during the offline task. Error bars are 95% confidence intervals of classification accuracy. Dotted line denotes chance level. *p < 0.05 significant difference between offline tasks before and after 10-min BMI training with feedback (paired Student's t-test with Bonferroni correction).

Discussion

Our findings demonstrated that MEG-based BMI training to control a robotic hand significantly improved the accuracy of controlling the robotic hand and induced significant changes in the cortical representation of hand movements in terms of classification accuracy. These results suggest that the BMI training will be useful for two important applications.

First, the non-invasive BMI training will be beneficial in training patients before applying invasive BMI. Previous studies demonstrated that the ability to control the BMI varies among patients (Yanagisawa et al., 2012a; Fukuma et al., 2016; Pandarinath et al., 2017). Before applying an invasive BMI for paralyzed patients, we need to evaluate their ability to control the BMI and to train them when the ability is poor. Our BMI training succeeded in improving the accuracy of controlling the BMI with improved cortical activities, which are also used for invasive BMI. Therefore, the proposed MEG-based BMI training will be beneficial for preoperative evaluation of the invasive BMI.

Second, the BMI training will be useful for inducing plastic changes in the cortical representation. Even for these subjects with normal motor function, the BMI training succeeded in improving the classification accuracy of the hand movements using the MEG signals. Our findings suggest that the BMI training did not induce the changes by normalizing the cortical activity but by modulating the activity depending on the decoder. The BMI training could be applied in clinical therapy to change maladapted cortical representation (Kuner and Flor, 2016).

Recent studies have revealed that BMI training in a closed-loop condition improves BMI performance. It has been demonstrated that closed-loop training improves the control of a neuroprosthetic device using multi-unit activities in accordance with some network plasticity and reorganization (Orsborn et al., 2014; Balasubramanian et al., 2017). Similarly, the performance of non-invasive BMI can be predicted by cortical activities and improved by closed-loop neurofeedback training (Hwang et al., 2009; Blankertz et al., 2010; Sugata et al., 2016; Wan et al., 2016). On the other hand, performance improvement depends on the properties of the cortical activities used by the BMI (Sadtler et al., 2014). Further studies are necessary to optimize the improvement of BMI performance for some clinical uses.

It should be noted that BMI training was effective to induce significant differences even with a limited number of subjects. Although the correct rate of robotic control varied among subjects, our BMI training induced a consistent effect on the correct rates. Indeed, our results successfully demonstrated that BMI training significantly improved classification accuracy during the offline task and the correct rates during the online BMI training even among a limited number of subjects.

In summary, neurofeedback training using MEG-based BMI provides a novel method to directly change the information content of motor representations by induced plasticity in the sensorimotor cortex.

Data Availability

The data that support the findings of this study are available on request from the corresponding author.

Author Contributions

RF and TaY performed the research, wrote the manuscript, and prepared all the figures. TaY designed the study. HY, MH, ToY, YS, YK, and HK reviewed the manuscript.

Funding

This research was conducted under SRPBS by MEXT and AMED. This research was also supported in part by JST PRESTO; Grants-in-Aid for Scientific Research KAKENHI (JP24700419, JP26560467, JP22700435, JP17H06032 and JP15H05710); Brain/MINDS and SICP from AMED; ImPACT; Ministry of Health, Labor, and Welfare (18261201); the Japan Foundation of Aging and Health; the CANON Foundation; and the TERUMO Foundation for Life Sciences and Arts.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We thank Ms. Yuki Kataoka and Ms. Miho Aoki of the Division of Functional Diagnostic Science, Osaka University Medical School for helping with the MEG recordings in this study.

Supplementary Material

The Supplementary Material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/fnins.2018.00478/full#supplementary-material

References

Amano, K., Shibata, K., Kawato, M., Sasaki, Y., and Watanabe, T. (2016). Learning to associate orientation with color in early visual areas by associative decoded fMRI neurofeedback. Curr. Biol. 26, 1861–1866. doi: 10.1016/j.cub.2016.05.014

Balasubramanian, K., Vaidya, M., Southerland, J., Badreldin, I., Eleryan, A., Takahashi, K., et al. (2017). Changes in cortical network connectivity with long-term brain-machine interface exposure after chronic amputation. Nat. Commun. 8:1796. doi: 10.1038/s41467-017-01909-2

Blankertz, B., Sannelli, C., Halder, S., Hammer, E. M., Kubler, A., Muller, K. R., et al. (2010). Neurophysiological predictor of SMR-based BCI performance. Neuroimage 51, 1303–1309. doi: 10.1016/j.neuroimage.2010.03.022

Bouton, C. E., Shaikhouni, A., Annetta, N. V., Bockbrader, M. A., Friedenberg, D. A., Nielson, D. M., et al. (2016). Restoring cortical control of functional movement in a human with quadriplegia. Nature 533, 247–250. doi: 10.1038/nature17435

Bradberry, T. J., Rong, F., and Contreras-Vidal, J. L. (2009). Decoding center-out hand velocity from MEG signals during visuomotor adaptation. Neuroimage 47, 1691–1700. doi: 10.1016/j.neuroimage.2009.06.023

Clancy, K. B., Koralek, A. C., Costa, R. M., Feldman, D. E., and Carmena, J. M. (2014). Volitional modulation of optically recorded calcium signals during neuroprosthetic learning. Nat. Neurosci. 17, 807–809. doi: 10.1038/nn.3712

Cohen, L. G., Bandinelli, S., Findley, T. W., and Hallett, M. (1991). Motor reorganization after upper limb amputation in man. A study with focal magnetic stimulation. Brain 114, 615–627. doi: 10.1093/brain/114.1.615

Collinger, J. L., Wodlinger, B., Downey, J. E., Wang, W., Tyler-Kabara, E. C., Weber, D. J., et al. (2013). High-performance neuroprosthetic control by an individual with tetraplegia. Lancet 381, 557–564. doi: 10.1016/S0140-6736(12)61816-9

Dale, A. M., Fischl, B., and Sereno, M. I. (1999). Cortical surface-based analysis. I. Segmentation and surface reconstruction. NeuroImage 9, 179–194. doi: 10.1006/nimg.1998.0395

Fukuma, R., Yanagisawa, T., Saitoh, Y., Hosomi, K., Kishima, H., Shimizu, T., et al. (2016). Real-time control of a neuroprosthetic hand by magnetoencephalographic signals from paralysed patients. Sci. Rep. 6:21781. doi: 10.1038/srep21781

Fukuma, R., Yanagisawa, T., Yorifuji, S., Kato, R., Yokoi, H., Hirata, M., et al. (2015). Closed-loop control of a neuroprosthetic hand by magnetoencephalographic signals. PLoS ONE 10:e0131547. doi: 10.1371/journal.pone.0131547

Ganguly, K., Dimitrov, D. F., Wallis, J. D., and Carmena, J. M. (2011). Reversible large-scale modification of cortical networks during neuroprosthetic control. Nat. Neurosci. 14, 662–667. doi: 10.1038/nn.2797

Hochberg, L. R., Bacher, D., Jarosiewicz, B., Masse, N. Y., Simeral, J. D., Vogel, J., et al. (2012). Reach and grasp by people with tetraplegia using a neurally controlled robotic arm. Nature 485, 372–375. doi: 10.1038/nature11076

Hochberg, L. R., Serruya, M. D., Friehs, G. M., Mukand, J. A., Saleh, M., Caplan, A. H., et al. (2006). Neuronal ensemble control of prosthetic devices by a human with tetraplegia. Nature 442, 164–171. doi: 10.1038/nature04970

Hwang, H. J., Kwon, K., and Im, C. H. (2009). Neurofeedback-based motor imagery training for brain-computer interface (BCI). J. Neurosci. Methods 179, 150–156. doi: 10.1016/j.jneumeth.2009.01.015

Kuner, R., and Flor, H. (2016). Structural plasticity and reorganisation in chronic pain. Nat. Rev. Neurosci. 18, 20–30. doi: 10.1038/nrn.2016.162

Luu, T. P., Nakagome, S., He, Y., and Contreras-Vidal, J. L. (2017). Real-time EEG-based brain-computer interface to a virtual avatar enhances cortical involvement in human treadmill walking. Sci. Rep. 7:8895. doi: 10.1038/s41598-017-09187-0

Nakanishi, Y., Yanagisawa, T., Shin, D., Chen, C., Kambara, H., Yoshimura, N., et al. (2014). Decoding fingertip trajectory from electrocorticographic signals in humans. Neurosci. Res. 85, 20–27. doi: 10.1016/j.neures.2014.05.005

Nakanishi, Y., Yanagisawa, T., Shin, D., Fukuma, R., Chen, C., Kambara, H., et al. (2013). Prediction of three-dimensional arm trajectories based on ECoG signals recorded from human sensorimotor cortex. PLoS ONE 8:e72085. doi: 10.1371/journal.pone.0072085

Nishimura, Y., Perlmutter, S. I., Eaton, R. W., and Fetz, E. E. (2013). Spike-timing-dependent plasticity in primate corticospinal connections induced during free behavior. Neuron 80, 1301–1309. doi: 10.1016/j.neuron.2013.08.028

Ordikhani-Seyedlar, M., Lebedev, M. A., Sorensen, H. B., and Puthusserypady, S. (2016). Neurofeedback therapy for enhancing visual attention: state-of-the-art and challenges. Front. Neurosci. 10:352. doi: 10.3389/fnins.2016.00352

Orsborn, A. L., Moorman, H. G., Overduin, S. A., Shanechi, M. M., Dimitrov, D. F., and Carmena, J. M. (2014). Closed-loop decoder adaptation shapes neural plasticity for skillful neuroprosthetic control. Neuron 82, 1380–1393. doi: 10.1016/j.neuron.2014.04.048

Pandarinath, C., Nuyujukian, P., Blabe, C. H., Sorice, B. L., Saab, J., Willett, F. R., et al. (2017). High performance communication by people with paralysis using an intracortical brain-computer interface. Elife 6:e18554. doi: 10.7554/eLife.18554

Quian Quiroga, R., and Panzeri, S. (2009). Extracting information from neuronal populations: information theory and decoding approaches. Nat. Rev. Neurosci. 10, 173–185. doi: 10.1038/nrn2578

Ramos-Murguialday, A., Broetz, D., Rea, M., Laer, L., Yilmaz, O., Brasil, F. L., et al. (2013). Brain-machine-interface in chronic stroke rehabilitation: a controlled study. Ann. Neurol. 74, 100–108. doi: 10.1002/ana.23879

Sadtler, P. T., Quick, K. M., Golub, M. D., Chase, S. M., Ryu, S. I., Tyler-Kabara, E. C., et al. (2014). Neural constraints on learning. Nature 512, 423–426. doi: 10.1038/nature13665

Sato, M. A., Yoshioka, T., Kajihara, S., Toyama, K., Goda, N., Doya, K., et al. (2004). Hierarchical Bayesian estimation for MEG inverse problem. Neuroimage 23, 806–826. doi: 10.1016/j.neuroimage.2004.06.037

Shibata, K., Watanabe, T., Kawato, M., and Sasaki, Y. (2016). Differential activation patterns in the same brain region led to opposite emotional states. PLoS Biol. 14:e1002546. doi: 10.1371/journal.pbio.1002546

Shibata, K., Watanabe, T., Sasaki, Y., and Kawato, M. (2011). Perceptual learning incepted by decoded fMRI neurofeedback without stimulus presentation. Science 334, 1413–1415. doi: 10.1126/science.1212003

Shindo, K., Kawashima, K., Ushiba, J., Ota, N., Ito, M., Ota, T., et al. (2011). Effects of neurofeedback training with an electroencephalogram-based brain-computer interface for hand paralysis in patients with chronic stroke: a preliminary case series study. J. Rehabil. Med. 43, 951–957. doi: 10.2340/16501977-0859

Sugata, H., Hirata, M., Yanagisawa, T., Matsushita, K., Yorifuji, S., and Yoshimine, T. (2016). Common neural correlates of real and imagined movements contributing to the performance of brain-machine interfaces. Sci. Rep. 6:24663. doi: 10.1038/srep24663

Toda, A., Imamizu, H., Kawato, M., and Sato, M. A. (2011). Reconstruction of two-dimensional movement trajectories from selected magnetoencephalography cortical currents by combined sparse Bayesian methods. Neuroimage 54, 892–905. doi: 10.1016/j.neuroimage.2010.09.057

Wan, F., da Cruz, J. N., Nan, W., Wong, C. M., Vai, M. I., and Rosa, A. (2016). Alpha neurofeedback training improves SSVEP-based BCI performance. J. Neural Eng. 13:036019. doi: 10.1088/1741-2560/13/3/036019

Wander, J. D., Blakely, T., Miller, K. J., Weaver, K. E., Johnson, L. A., Olson, J. D., et al. (2013). Distributed cortical adaptation during learning of a brain-computer interface task. Proc. Natl. Acad. Sci. U.S.A. 110, 10818–10823. doi: 10.1073/pnas.1221127110

Watanabe, T., Sasaki, Y., Shibata, K., and Kawato, M. (2017). Advances in fMRI real-time neurofeedback. Trends Cogn. Sci. (Regul. Ed.) 21, 997–1010. doi: 10.1016/j.tics.2017.09.010

Yanagisawa, T., Fukuma, R., Seymour, B., Hosomi, K., Kishima, H., Shimizu, T., et al. (2016). Induced sensorimotor brain plasticity controls pain in phantom limb patients. Nat. Commun. 7:13209. doi: 10.1038/ncomms13209

Yanagisawa, T., Hirata, M., Saitoh, Y., Goto, T., Kishima, H., Fukuma, R., et al. (2011). Real-time control of a prosthetic hand using human electrocorticography signals. J. Neurosurg. 114, 1715–1722. doi: 10.3171/2011.1.JNS101421

Yanagisawa, T., Hirata, M., Saitoh, Y., Kato, A., Shibuya, D., Kamitani, Y., et al. (2009). Neural decoding using gyral and intrasulcal electrocorticograms. Neuroimage 45, 1099–1106. doi: 10.1016/j.neuroimage.2008.12.069

Yanagisawa, T., Hirata, M., Saitoh, Y., Kishima, H., Matsushita, K., Goto, T., et al. (2012a). Electrocorticographic control of a prosthetic arm in paralyzed patients. Ann. Neurol. 71, 353–361. doi: 10.1002/ana.22613

Yanagisawa, T., Yamashita, O., Hirata, M., Kishima, H., Saitoh, Y., Goto, T., et al. (2012b). Regulation of motor representation by phase-amplitude coupling in the sensorimotor cortex. J. Neurosci. 32, 15467–15475. doi: 10.1523/JNEUROSCI.2929-12.2012

Keywords: brain-machine interface, robotic hand, magnetoencephalography, cortical plasticity, neurofeedback, closed-loop training, online decoding

Citation: Fukuma R, Yanagisawa T, Yokoi H, Hirata M, Yoshimine T, Saitoh Y, Kamitani Y and Kishima H (2018) Training in Use of Brain–Machine Interface-Controlled Robotic Hand Improves Accuracy Decoding Two Types of Hand Movements. Front. Neurosci. 12:478. doi: 10.3389/fnins.2018.00478

Received: 26 January 2018; Accepted: 25 June 2018; Published: 11 July 2018.

Edited by:

Christoph Guger, Guger Technologies (Austria), Austria

Reviewed by:

Jose Luis Contreras-Vidal, University of Houston, United States

Ali Yadollahpour, Ahvaz Jundishapur University of Medical Sciences, Iran

Copyright © 2018 Fukuma, Yanagisawa, Yokoi, Hirata, Yoshimine, Saitoh, Kamitani and Kishima. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Takufumi Yanagisawa, tyanagisawa@nsurg.med.osaka-u.ac.jp

†These authors have contributed equally to this work.
