- 1 Oral and Maxillo-Facial Surgery Unit, IRCCS Azienda Ospedaliero-Universitaria di Bologna, Bologna, Italy

- 2 Orthopedics and Traumatology Department, IRCCS Azienda Ospedaliero-Universitaria di Bologna, Bologna, Italy

- 3 eDIMES Lab-Laboratory of Bioengineering, Department of Medical and Surgical Sciences, University of Bologna, Bologna, Italy

- 4 Maxillofacial Surgery Unit, Department of Biomedical and Neuromotor Science, University of Bologna, Bologna, Italy

This systematic review offers an overview of the clinical and technical aspects of augmented reality (AR) applications in orthopedic and maxillofacial oncological surgery. The review also provides a summary of the included articles with objectives and major findings for both specialties. The search was conducted on the PubMed/Medline and Scopus databases and was completed on 31 May 2023. All articles from the last 10 years found using the keywords augmented reality, mixed reality, maxillofacial oncology and orthopedic oncology were considered in this study. For orthopedic oncology, a total of 93 articles were found and only 9 articles were selected following the defined inclusion criteria. These articles were further subclassified based on study type, AR display type, registration/tracking modality and involved anatomical region. Similarly, out of 958 articles on maxillofacial oncology, 27 articles were selected for this review and categorized in the same manner. The main outcomes reported for both specialties are related to registration error (i.e., how far the virtual objects displayed in AR appear from their correct position relative to the real environment) and surgical accuracy (i.e., resection error) obtained under AR navigation. However, meta-analysis of these outcomes was not possible due to data heterogeneity. Despite certain limitations related to the still immature technology, we believe that AR is a viable tool for oncological surgery in the orthopedic and maxillofacial fields, especially if it is integrated with an external navigation system to improve accuracy. More research and pre-clinical testing are needed before the wide adoption of AR in clinical settings.

1 Introduction

Augmented reality (AR) is a technology that allows the fusion of digital content into the real environment. The achieved augmented continuum is a virtual world in which virtual objects are overlaid on real elements, in the surrounding actual environment (Azuma et al., 2001).

The first AR system using a Head Mounted Display (HMD) was developed by Sutherland in 1968 (Feiner, 2002). Since its invention, AR technology has been utilized by experts in many areas, such as entertainment, sports, gaming, retail, and also medicine. Indeed, recent technological advancements in headsets and computer hardware have led many companies, especially in the entertainment sector, to invest in AR devices, which have become increasingly available and accessible. As a result, these devices are also employed in health-related applications, particularly in the surgical fields.

When applied to surgery, AR improves the user’s perception and comprehension by projecting the three-dimensional underlying anatomy directly onto the user’s retina (via HMDs) or on a display screen.

AR in surgery has enormous potential to help the surgeon in identifying tumor locations, delineating the planned dissection planes, and reducing the risk of injury to invisible structures. Therefore, using AR in the operating room (OR) could help surgeons perform surgical tasks more accurately. HMDs are particularly beneficial for AR surgical applications since they intrinsically provide the surgeon with an egocentric viewpoint and offer improved ergonomics compared to traditional computer-assisted surgical systems. This allows surgeons to concentrate on the task at hand without having to turn their heads away from the surgical field to constantly look at an imaging monitor. The most ambitious goal in surgery is to use AR for intraoperative navigation. This involves taking data from preoperative imaging and using anatomical anchors in the operating field to register the two representations in real time.

Registration is an important step in computer-assisted surgical navigation, since it correlates the virtual content with the real surgical scene. In this context, the registration error can be defined as the measurement of how much the virtual objects displayed in AR appear incorrectly positioned relative to the real environment.
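As a minimal illustration of this definition, the sketch below computes a registration error as the root-mean-square distance between the displayed positions of virtual landmarks and the measured positions of the corresponding real landmarks. The function name `registration_rmse` and all coordinates are hypothetical and are not taken from any of the included studies, which report heterogeneous error metrics.

```python
import math

def registration_rmse(virtual_points, real_points):
    """Root-mean-square distance (mm) between paired 3D landmarks."""
    if len(virtual_points) != len(real_points) or not virtual_points:
        raise ValueError("need two non-empty point lists of equal length")
    # Squared Euclidean distance for each virtual/real landmark pair
    squared_distances = [
        sum((v - r) ** 2 for v, r in zip(virtual, real))
        for virtual, real in zip(virtual_points, real_points)
    ]
    return math.sqrt(sum(squared_distances) / len(squared_distances))

# Hypothetical landmark coordinates in millimetres (illustration only)
virtual = [(10.0, 20.0, 30.0), (15.0, 25.0, 35.0), (40.0, 10.0, 5.0)]
real = [(11.0, 20.0, 30.0), (15.0, 27.0, 35.0), (40.0, 10.0, 7.0)]

error_mm = registration_rmse(virtual, real)
print(f"registration RMSE: {error_mm:.2f} mm")  # prints "registration RMSE: 1.73 mm"
```

Here the three landmark pairs are offset by 1 mm, 2 mm and 2 mm, so the mean squared distance is 3 mm² and the RMSE is about 1.73 mm.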

For virtual-to-real surgical scene registration, AR systems typically use a camera coupled to a marker, such as a QR code, anchored to the patient (marker-based registration). Another option is marker-less registration, which includes a combination of location data (from the Global Positioning System), inertial measurement unit (IMU) data, and computer vision to track image features such as scene depth, the object surface, and object edges (Venkatesan et al., 2021).

The core of the registration modality is tracking, that is, determining and following the position and orientation of an object with respect to a reference coordinate system over time.
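To make the notion of position and orientation relative to a reference coordinate system concrete, the following sketch represents a tracked pose as a 4x4 homogeneous transform and uses it to map a planned point from a marker frame into a camera frame. This is only an illustration under simplifying assumptions: the rotation is restricted to the z axis, and the names `pose_matrix`, `transform_point`, `camera_from_marker` and all numeric values are hypothetical.

```python
import math

def pose_matrix(yaw_deg, translation):
    """4x4 homogeneous transform: rotation about z by yaw_deg, then translation (mm)."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    tx, ty, tz = translation
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

def transform_point(matrix, point):
    """Apply a homogeneous transform to a 3D point."""
    x, y, z = point
    vec = (x, y, z, 1.0)
    return tuple(sum(matrix[i][j] * vec[j] for j in range(4)) for i in range(3))

# Hypothetical pose of a patient-anchored marker as seen by the tracking camera
camera_from_marker = pose_matrix(90.0, (100.0, 0.0, 500.0))

# Hypothetical planned point expressed in the marker's own coordinate frame
point_in_marker = (10.0, 0.0, 0.0)

# Position at which the virtual content should be drawn in the camera frame
point_in_camera = transform_point(camera_from_marker, point_in_marker)
```

In a tracked system the pose would be re-estimated for every camera frame, so the same planned point follows the patient as the marker moves.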

Over the past decade, with the advent of multimodal and highly detailed 4D medical imaging (Bradley, 2008), numerous surgical specialties have integrated AR into their surgical workflow, namely neurosurgery (Cannizzaro et al., 2022), urological surgery (Bianchi et al., 2021; Schiavina et al., 2021; Roberts et al., 2022), ophthalmology (Li et al., 2021), gastrointestinal endoscopy (Mahmud et al., 2015), cardiovascular surgery (Rad et al., 2022), spinal surgery (Molina et al., 2021a), breast surgery (Gouveia et al., 2021), and thyroid surgery (Lee et al., 2020). Some authors utilized AR to perform lateral skull base surgery for cerebellopontine angle tumor (Schwam et al., 2021) and others used it in open hepatic surgery (Golse et al., 2021). Moreover, AR has also been employed in procedures such as perforator flap transfer (Jiang et al., 2020) and percutaneous nephrolithotomy (Ferraguti et al., 2022).

Orthopedic and Maxillofacial surgeries have been pioneers in the use of AR in a surgical setting (Barcali et al., 2022).

These two specialties may represent very promising fields for the future clinical implementation of AR, since they deal with bony hard tissues, which provide fixed references for ensuring an accurate registration between the preoperative (virtual) and intraoperative (real) views.

Regarding orthopedic surgery, Alexander et al. (2020) developed a 3D augmented reality system for the placement of the acetabular component during total hip arthroplasty (THA) and found it to be more precise and faster than standard fluoroscopic guidance. Similarly, Ogawa et al. (2018) found AR to be more accurate than a conventional goniometer for acetabular cup placement during THA. Tsukada et al. (2019) conducted an in vitro study on sawbone models employing AR during total knee arthroplasty and concluded that the system provided accurate measurements for tibial bone resection. Subsequently, in 2021, the same authors conducted a prospective cohort study on 72 patients. They emphasized that AR-assisted navigation for distal femur resection is more precise than the conventional method (Tsukada et al., 2021).

Augmented reality and its tools have also emerged as a new paradigm in spinal surgery. Many authors have validated the use of AR navigation for the precise placement of pedicle screws (Elmi-Terander et al., 2018; Elmi-Terander et al., 2019; Gibby et al., 2019; Dennler et al., 2020) and some compared its accuracy with the free-hand approach (Elmi-Terander et al., 2020). In 2021, Molina et al. (2021b) conducted the first human trial using an FDA-approved AR-HMD (X-vision Spine System, Augmedics) and demonstrated its clinical and technical accuracy in spine surgery.

In the context of oral and cranio-maxillofacial surgery, AR applications are of increasing interest and adoption (Badiali et al., 2020).

Sharma et al. (2021) proposed a marker-less AR navigation system algorithm with greater precision and faster processing time for jaw surgery. Similar to the article on marker-less image registration for jaw experiments published by Wang et al. (2019), this study demonstrated its clinical viability through minimal registration error and processing time.

Some other experiences of marker-less AR navigation have been reported for assisting the harvesting of periosteum pedicle flap and osteomyocutaneous fibular flap in head and neck reconstruction (Battaglia et al., 2020), as well as for guiding osteotomies in pediatric cranio-facial surgery (Ruggiero et al., 2023).

Similarly, recent studies in dental implantology have demonstrated the efficacy of AR for displaying dynamic navigation systems (Pellegrino et al., 2019; Shrestha et al., 2021). Ma et al. (2019) proposed an AR-assisted navigation with cone beam computed tomography (CBCT) registration method to attain the desired dental implant precision. They compared the navigation method to reliance on the physician’s experience and concluded that AR guidance had better outcomes in terms of mean target error and mean angle error. Budhathoki et al. (2020) emphasized the use of AR navigation to visualize deep-seated anatomy and narrow areas and to guide the positioning of surgical instruments, thereby avoiding complications from positioning errors during jaw surgery. Moreover, Gao et al. (2019) employed AR in mandibular split osteotomy. Similarly, Pietruski et al. (2019) incorporated AR navigation and cutting guides for mandibular osteotomies and concluded that this technology can enhance the surgeon’s perception and hand-eye coordination during mandibular resection and reconstruction procedures.

Although AR technology has a long history in orthopedic and maxillofacial surgery, a complete analysis of its clinical and technical application on oncological cases is still lacking.

In this literature review, we provide a comprehensive, up-to-date overview of current clinical applications of AR in orthopedic oncology and maxillofacial oncology, pointing out its benefits and current limitations. Moreover, we elucidate the different technological aspects of AR used in each of these experiences to give an insight into how AR can be applied in oncological surgical scenarios.

2 Materials and methods

2.1 Searching criteria

This systematic literature review was conducted on PubMed/Medline and Scopus databases using the terms “Augmented Reality” AND “Orthopedic oncology,” “Mixed Reality” AND “Orthopedic oncology,” “Augmented Reality” AND “Maxillofacial” AND “oncology,” “Mixed Reality” AND “Maxillofacial” AND “oncology” and “Augmented Reality” AND “Head and Neck” AND “cancer.” The same search was also attempted using the term “Cranio-maxillofacial” AND “oncology” in place of “Maxillofacial” AND “oncology,” which, however, yielded no additional results. Searches for the two specialty domains were done separately, and 2 independent users performed the search until 31 May 2023. Only relevant articles from the last 10 years were included in this review. The reference lists of the retrieved papers were also searched manually to identify any relevant paper that had been missed. The PRISMA guidelines were taken into account while preparing this review article.

The SPIDER (Sample, Phenomena of Interest, Design, Evaluation, Research type) method was used to construct the suitable research question: “Can augmented reality be considered a beneficial tool in orthopedic and maxillofacial oncological fields in achieving surgical accuracy?”

This review addresses this question by focusing on both clinical and technical aspects of AR in these two surgical disciplines, as well as on reporting current limitations and benefits.

Due to the qualitative and mixed-method nature of the included articles and the heterogeneity of the data, the term “evaluation” was left intentionally broad.

We performed the study selection based on the following inclusion criteria: studies on augmented reality applications in either the orthopedic or the maxillofacial field, focused only on oncology; studies reporting applications on different targets (e.g., phantoms, cadavers, animals and patients). All articles reporting quantitative or qualitative outcomes on augmented reality within these two specialties, including case reports, were included.

Exclusion criteria were the following: articles “not relevant” (i.e., not related to augmented reality, not strictly related to oncological surgery, not related to orthopedic or maxillofacial surgery); articles with language of publication other than English; theses; conference papers; editorials; book chapters; review articles (review articles typically do not include sufficient specifics regarding the recommended solutions and are considered secondary sources, so they cannot be used in the data extraction process).

2.2 Data extraction and analysis

All articles retrieved by the search up to 31 May 2023 were screened first by title and then by abstract.

The authors, date of publication, study design, and data from the eligible articles were tabulated in Microsoft Excel® (Microsoft Corporation, WA). All articles meeting the inclusion criteria were read carefully and stratified following two parallel perspectives: a clinical one, focusing on the specific surgical application, the type of study (on phantoms, on cadavers, on animals, on humans), the anatomical region of interest, and the virtual information provided to the surgeon; and a technical one, focusing on the registration/tracking modality, the type of AR display, and the achieved registration and surgical accuracy.

Key findings of each article are also reported in tables in the Results section for both orthopedic and maxillofacial specialties, and are depicted in bar histograms. However, meta-analysis could not be performed due to the heterogeneity of the literature. All these findings were validated by a second independent investigator to ensure correct data acquisition and selection of the relevant literature.

The included study designs exhibited a significant level of variability, as is often observed with new technologies, since each system was developed individually with distinct features. Consequently, conventional approaches for evaluating the risk of bias were not suitable for use in this context. The authors judged the risk of bias to be low or negligible for data description, but potentially high for the analysis of the effectiveness of the approaches used in these studies. Furthermore, none of the articles included in the review refers to a specific methodological protocol.

3 Results

The initial search of the PubMed/Medline and Scopus databases was completed on 31 May 2023, and all available articles were scrutinized using the above-described criteria.

In the following paragraphs, an analytic overview of the selected papers and their classification is presented for both orthopedic oncology and maxillofacial oncology.

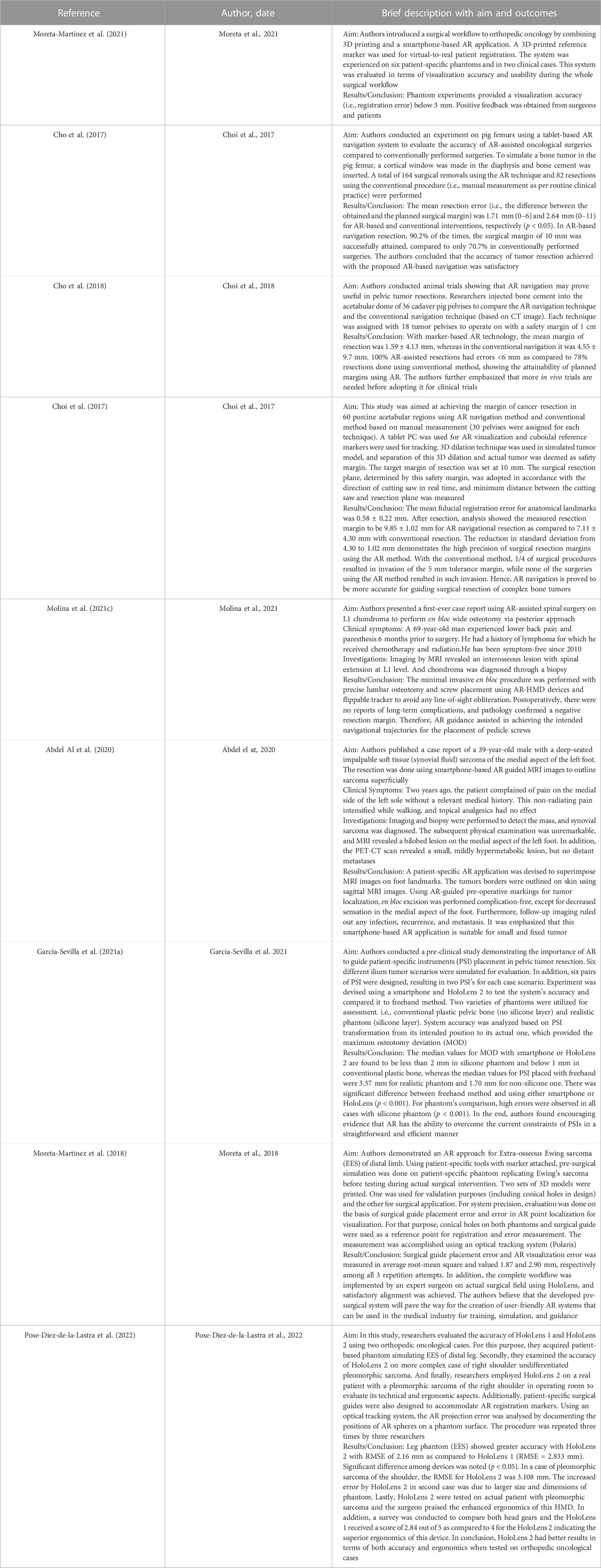

As depicted in the flowchart (Figure 1), the database search for orthopedic oncology returned 89 results, while manual search yielded 4 publications. Fifty-nine articles remained after removing duplicates based on titles and abstracts. According to the inclusion criteria, only 9 of the 59 articles were included in this review. The other articles were excluded for the following reasons: “not-relevant” (n = 27), review articles/book chapters/editorials/conference papers (n = 22), non-English articles (n = 1).

FIGURE 1. A flowchart showing inclusion and exclusion criteria used for the search, and the resulting selected papers.

Similarly, for maxillofacial oncology, 951 articles were found through the PubMed/Medline and Scopus search and 7 through manual search. Out of the total of 958, 738 articles remained after removal of duplicates. Based on the criteria defined above, 27 publications were included and the others were excluded for the following reasons: “not-relevant” (n = 395), review articles/book chapters/editorials/conference papers (n = 286), non-English language (n = 30).
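The selection counts reported in the two paragraphs above can be cross-checked with simple arithmetic. The short sketch below does this; the dictionary layout and the function name `summarize` are illustrative choices, while the numbers are copied from the text.

```python
# Counts copied from the two selection paragraphs above
flows = {
    "orthopedic": {
        "database": 89,
        "manual": 4,
        "after_duplicates": 59,
        "excluded": {"not_relevant": 27, "secondary_literature": 22, "non_english": 1},
    },
    "maxillofacial": {
        "database": 951,
        "manual": 7,
        "after_duplicates": 738,
        "excluded": {"not_relevant": 395, "secondary_literature": 286, "non_english": 30},
    },
}

def summarize(flow):
    """Return (records identified, articles included) for one specialty."""
    identified = flow["database"] + flow["manual"]
    included = flow["after_duplicates"] - sum(flow["excluded"].values())
    return identified, included

summary = {name: summarize(flow) for name, flow in flows.items()}
for name, (identified, included) in summary.items():
    print(f"{name}: identified={identified}, included={included}")
# orthopedic: identified=93, included=9
# maxillofacial: identified=958, included=27
```

Both totals agree with the figures stated in the abstract (93 and 958 articles found, 9 and 27 articles selected).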

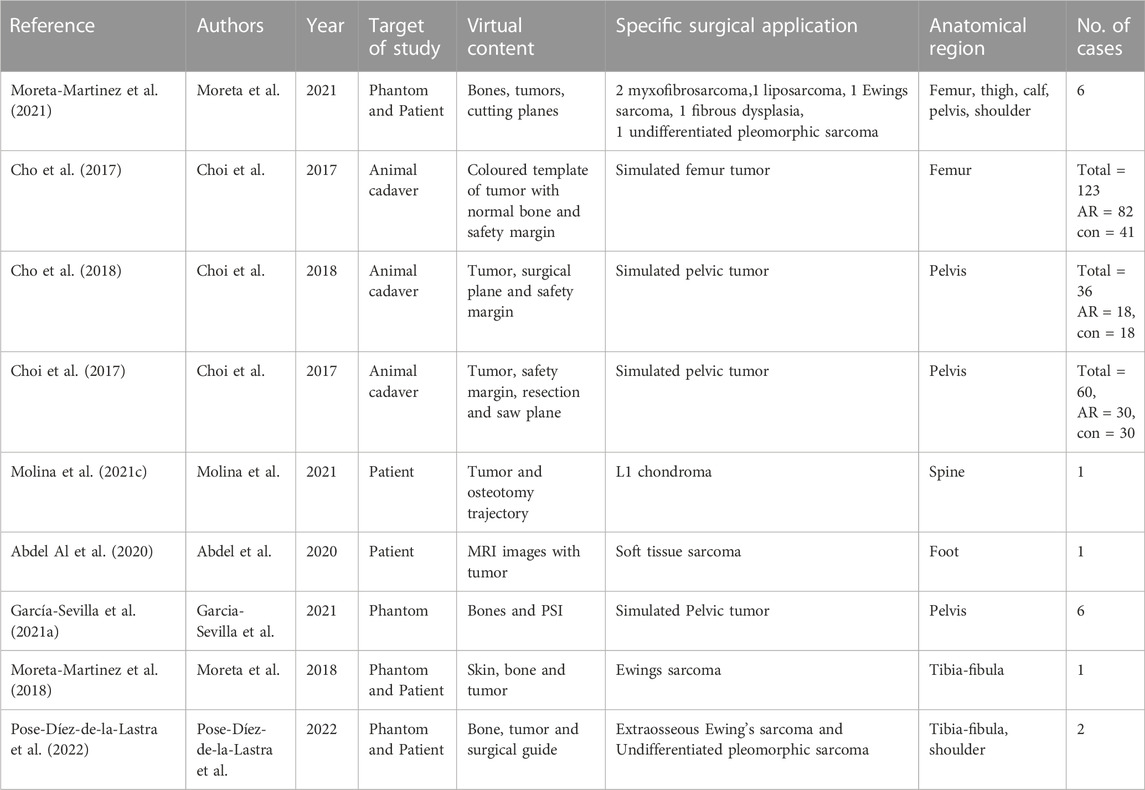

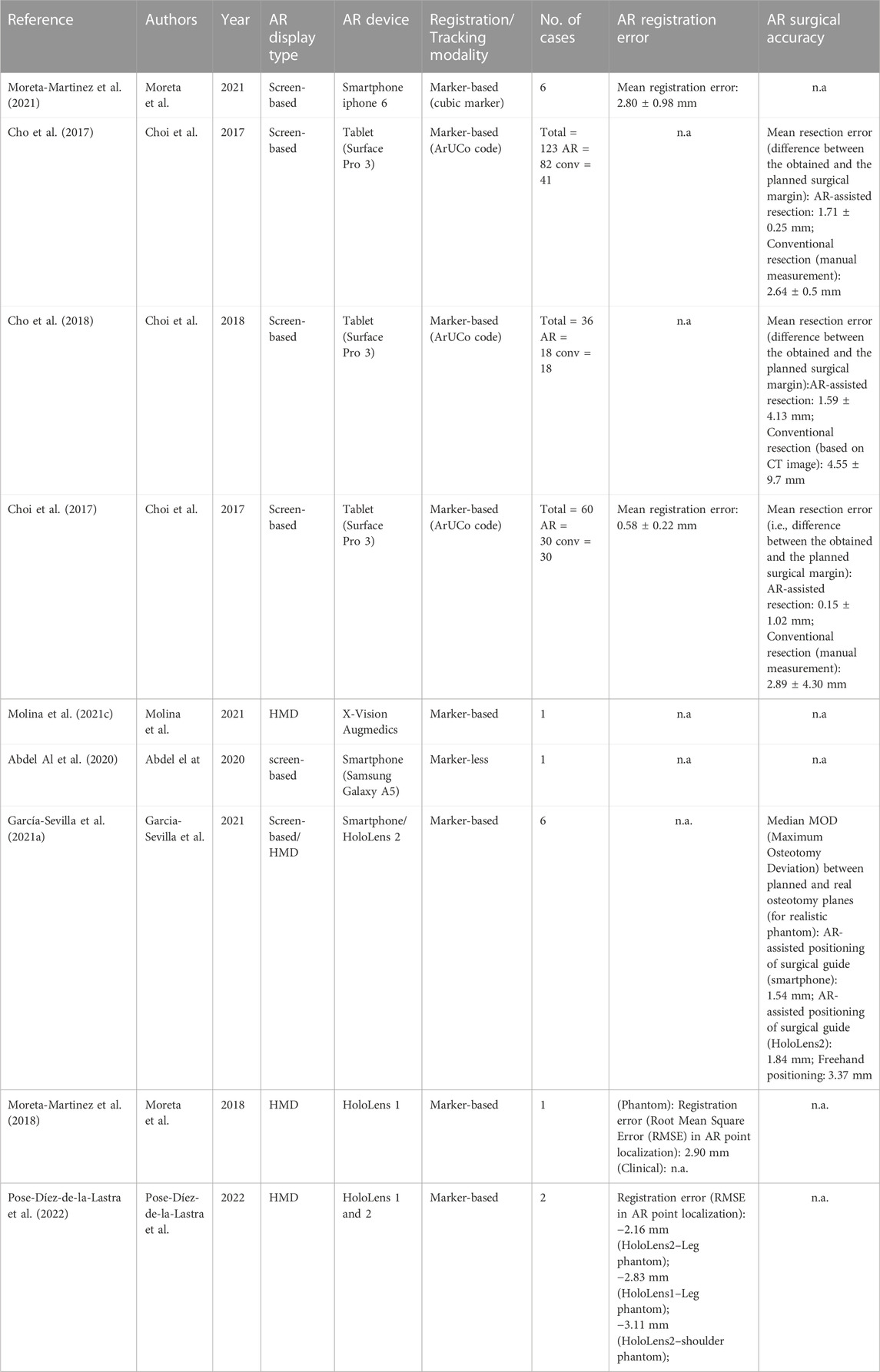

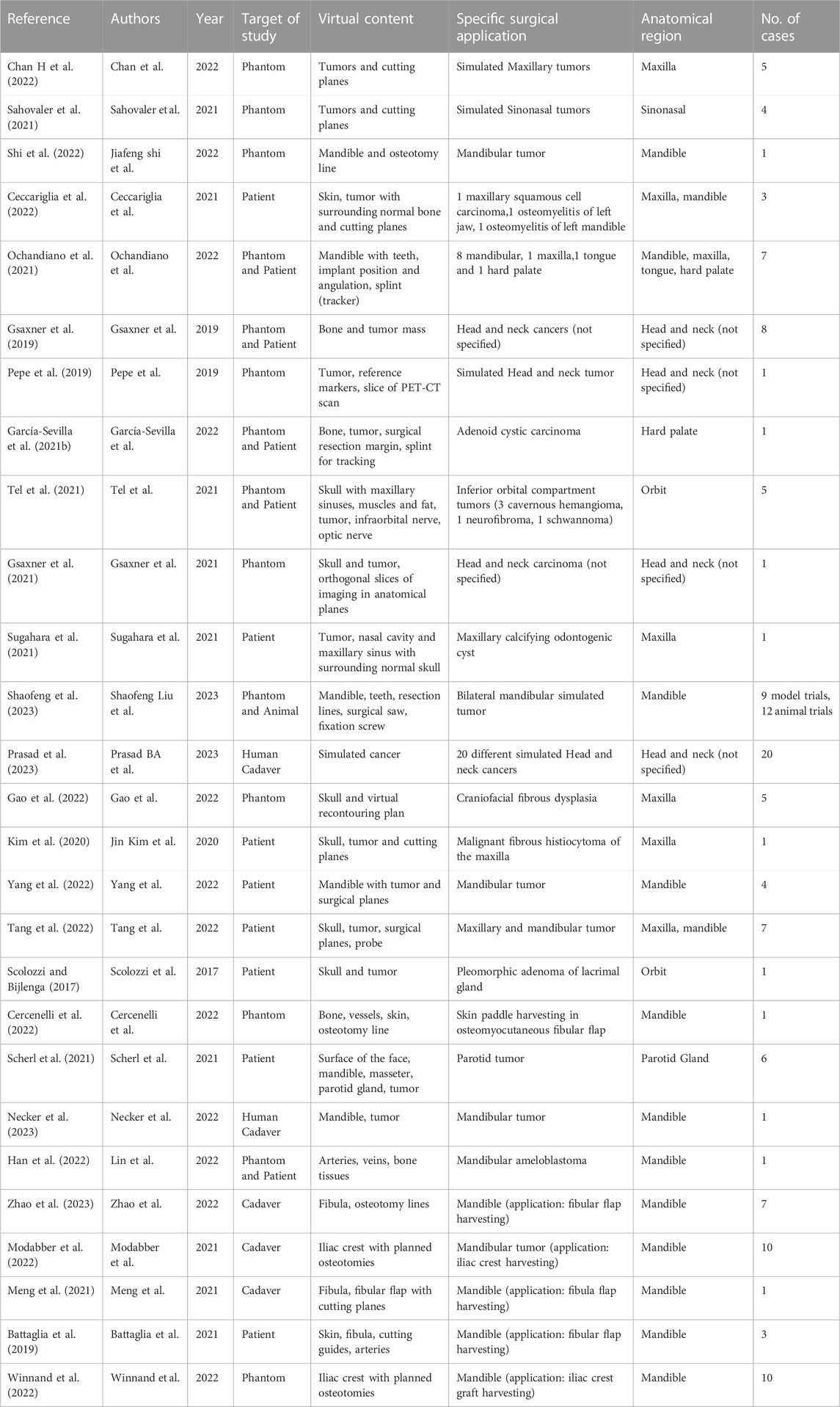

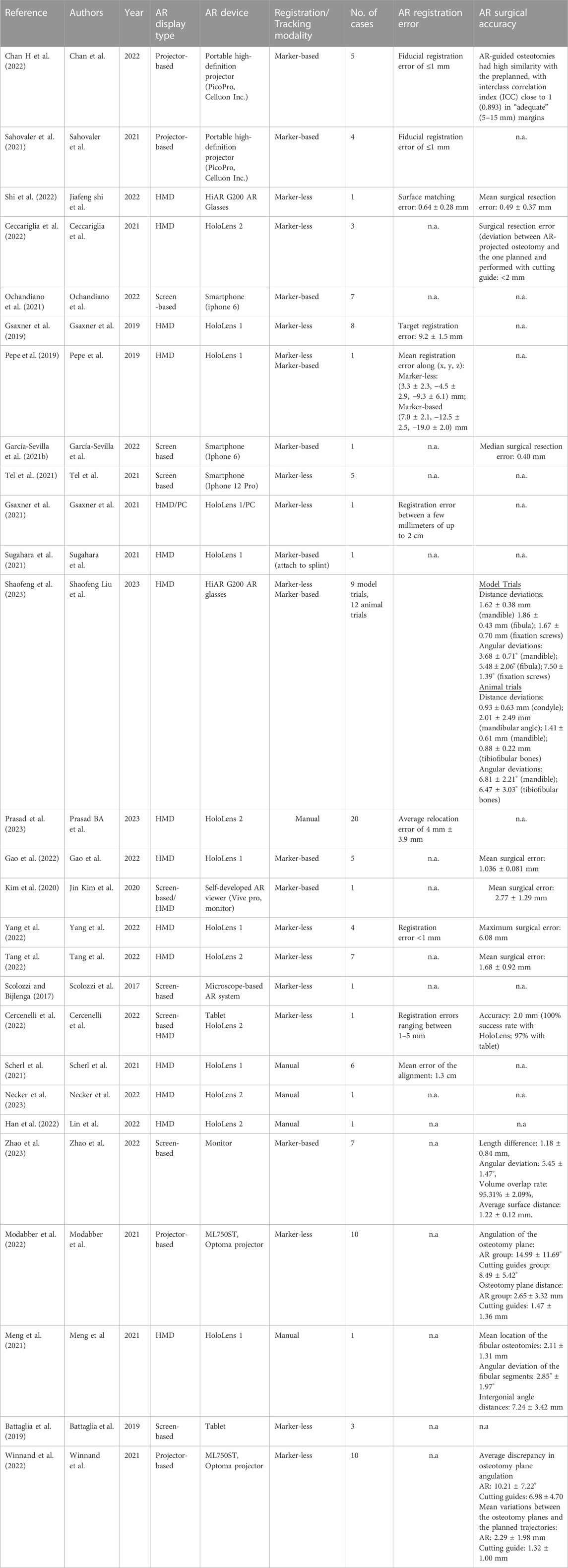

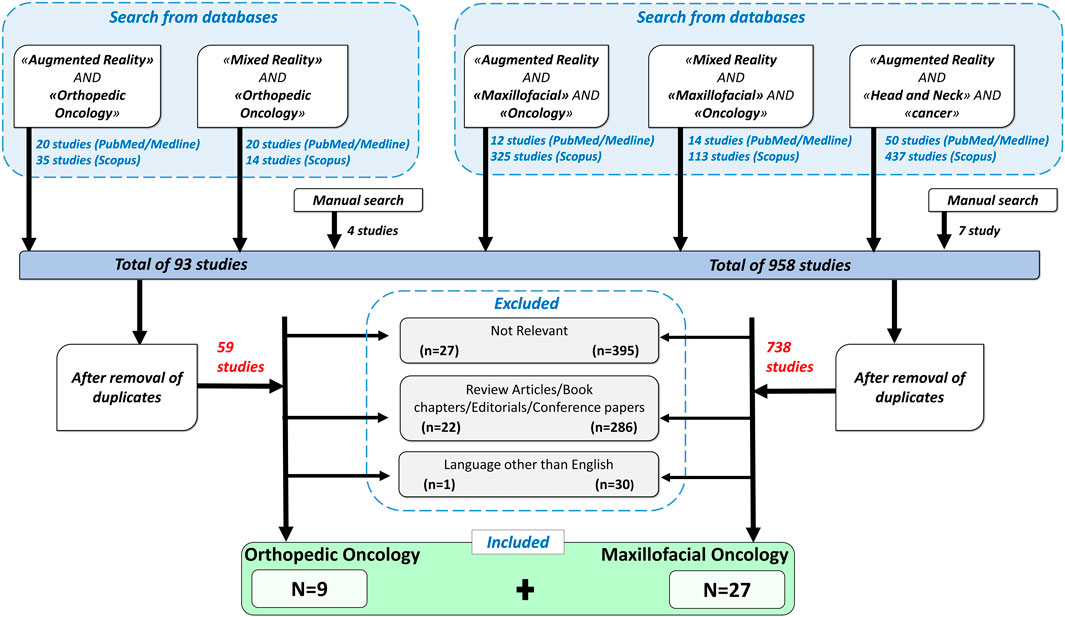

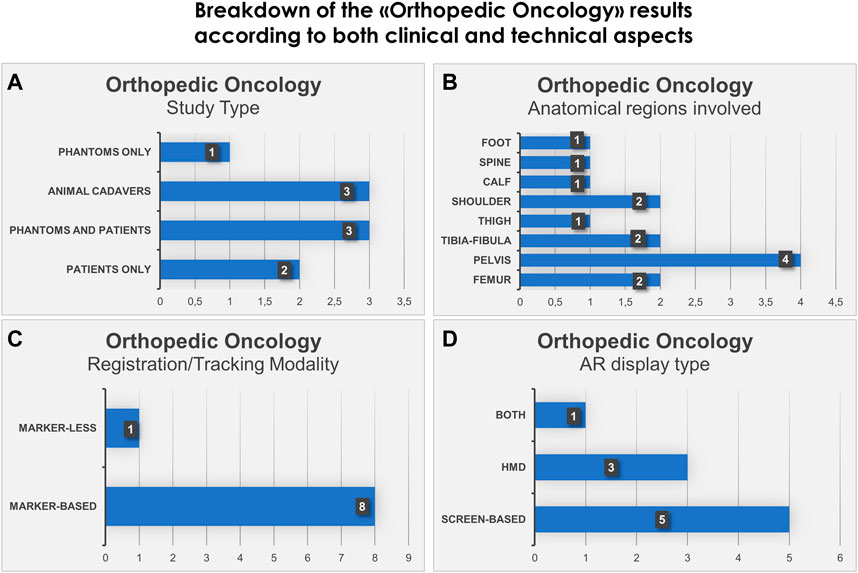

For the included articles, both clinical and technical aspects are summarized separately in given tables for both specialties (Tables 1–4). For the clinical aspects, we classified the papers according to: the specific surgical application, the number of cases involved, the involved anatomical region, the type of study (i.e., on phantom, on cadaver, on patient), the virtual information provided to augment the surgeon’s view (Tables 1, 3). For the technical aspects, we considered the type of AR display and device, the used registration/tracking modality, as well as the achieved registration error and surgical accuracy (measured in mm) (Tables 2, 4). For each included article we provided in a separate table a brief description of the study and the major outcomes (Tables 5, 6).

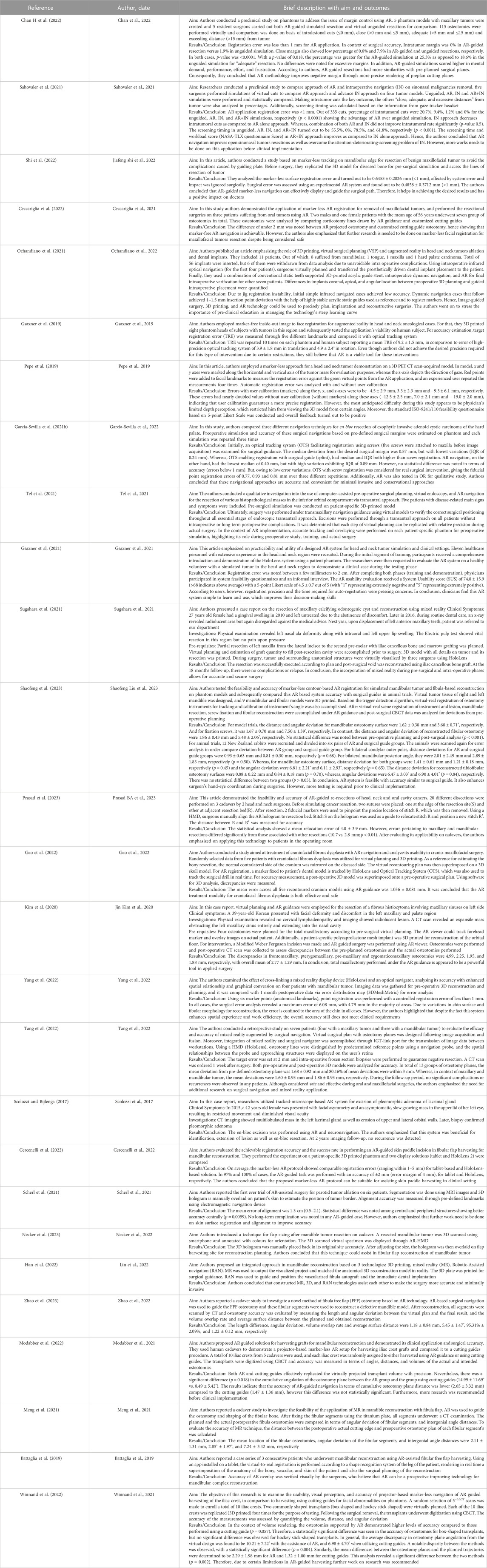

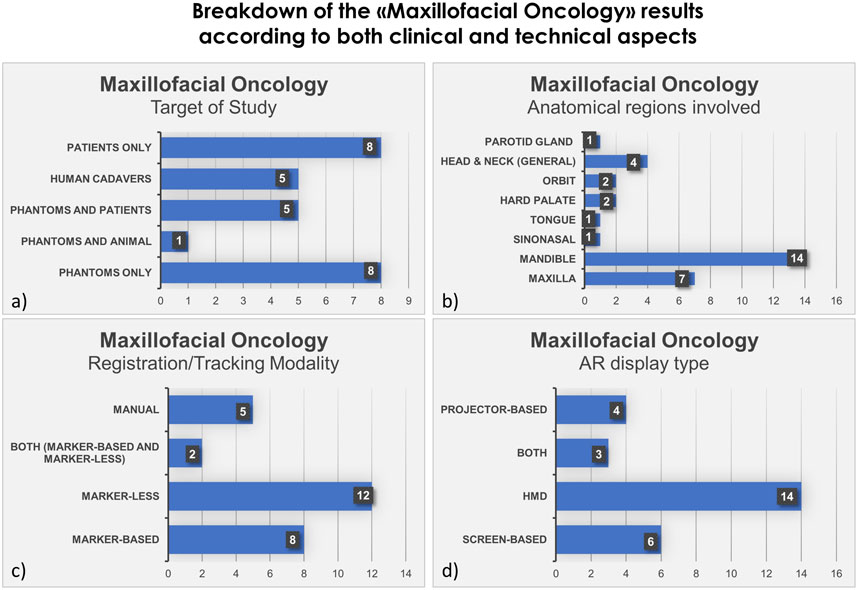

We also show in bar histograms the breakdown of the studies according to some of the most interesting aspects above mentioned (Figures 2, 3).

FIGURE 2. Bar histograms depicting types of study (A), anatomical regions involved in the surgery (B), registration/tracking modality (C) and AR display type (D), for Orthopedic Oncology.

FIGURE 3. Bar histograms depicting types of study (A), anatomical regions involved in the surgery (B), registration/tracking modality (C) and AR display type (D), for Maxillofacial Oncology.

3.1 Orthopedic oncology

For orthopedic oncology (Cho et al., 2017; Choi et al., 2017; Cho et al., 2018; Moreta-Martinez et al., 2018; Abdel Al et al., 2020; García-Sevilla et al., 2021a; Molina et al., 2021c; Moreta-Martinez et al., 2021; Pose-Díez-de-la-Lastra et al., 2022), four studies were conducted on phantoms only (n = 1) or on both phantoms and, prospectively, patients (n = 3). Three studies were conducted on animal cadavers, whereas two studies directly employed AR on patients in the OR. Regarding AR display and registration/tracking modality, five studies used a screen-based display and eight studies opted for a marker-based registration/tracking modality. The pelvis was the most frequently involved anatomical region in the selected studies (n = 4).

The main outcomes reported in the included articles referred to the registration error of the AR systems, the AR-guided surgical accuracy in performing tumor resection compared to preoperative planning and/or to standard procedures (i.e., manual measurements), the placement error in positioning surgical guides or patient-specific implant under AR assistance.

Despite the fact that the application of AR in the field of orthopedic oncology has been relatively limited compared to maxillofacial oncology to date, the included studies demonstrate the potential future significance of AR technology in this surgical field.

3.2 Maxillofacial oncology

In the field of maxillofacial oncology (Scolozzi and Bijlenga, 2017; Battaglia et al., 2019; Gsaxner et al., 2019; Pepe et al., 2019; Kim et al., 2020; García-Sevilla et al., 2021b; Gsaxner et al., 2021; Meng et al., 2021; Ochandiano et al., 2021; Sahovaler et al., 2021; Scherl et al., 2021; Sugahara et al., 2021; Tel et al., 2021; Ceccariglia et al., 2022; Cercenelli et al., 2022; Chan H et al., 2022; Gao et al., 2022; Han et al., 2022; Modabber et al., 2022; Shi et al., 2022; Tang et al., 2022; Winnand et al., 2022; Yang et al., 2022; Necker et al., 2023; Prasad et al., 2023; Shaofeng et al., 2023; Zhao et al., 2023), the proposed AR systems were tested on phantoms in the majority of articles (n = 14) and on patients only in 8 articles. Five research groups carried out pre-clinical research on phantoms before using AR on patients. The marker-less registration approach (n = 14) and wearable AR displays, i.e., HMDs (n = 14), were utilized in the majority of the studies, each accounting for 52% of the 27 included studies. The mandible was the most frequently involved anatomical area for AR implementation, also accounting for 52% of the studied areas (n = 14).

The primary findings covered in the articles are the following: the registration error, the AR-guided surgical accuracy in performing tumor resection or flap harvesting compared to preoperative planning, and the AR-guided surgical accuracy compared to the more conventional use of 3D printed cutting guides. Interestingly, some studies in maxillofacial oncology have also combined external tracking navigation systems with augmented reality to improve accuracy and spatial relationships. The included articles have shown the importance of AR and its future perspectives in this field. Nevertheless, the majority of the studies underlined the need for additional research before clinical application.

4 Discussion

In the set of orthopedic oncology articles, all studies used markers for registration except one (Abdel Al et al., 2020), which opted for marker-less registration. Where measured (not all articles reported the registration error), the mean registration error was less than 3 mm, except in the case of a complex shoulder phantom (Pose-Díez-de-la-Lastra et al., 2022), where the RMSE was slightly more than 3 mm with HoloLens 2.

Out of nine articles, only three were randomized controlled trials (RCTs), all performed on animal cadavers. In these studies, the mean AR-assisted resection error was less than 2 mm, whereas the conventional approach (e.g., manual resection) had a mean resection error of more than 2.5 mm (Cho et al., 2017; Choi et al., 2017; Cho et al., 2018).

The included orthopedic oncology studies also reported two clinical cases, both of which achieved the intended outcomes using AR without any complications (Abdel Al et al., 2020; Molina et al., 2021c). Moreover, one study demonstrated better positioning of a surgical guide in a simulated pelvic tumor through AR as compared to the freehand method (García-Sevilla et al., 2021a).

Regarding usability, only one study conducted a questionnaire survey with patients and surgeons, and the results turned out to be satisfactory (Moreta-Martinez et al., 2021). Ergonomics of the most popular AR devices, HoloLens 1 and 2, were discussed by Pose-Díez-de-la-Lastra et al. (2022) while comparing them on two orthopedic cases. They indicated that HoloLens 2 has superior ergonomics (score 4 out of 5) as compared to HoloLens 1 (score 2.84 out of 5).

The primary outcomes of the included articles in the orthopedic segment demonstrated the utility of AR and, in some cases, showed even better results than conventional procedures. However, one limitation of the orthopedic segment is that few articles discuss the usability and ergonomics of this technology. Only three out of nine articles are randomized controlled trials (RCTs), making it difficult to derive a statistical comparison from the current studies. Furthermore, only four articles provided surgical accuracy (Cho et al., 2017; Choi et al., 2017; Cho et al., 2018; García-Sevilla et al., 2021a) and only four provided registration error (Choi et al., 2017; Moreta-Martinez et al., 2018; Moreta-Martinez et al., 2021; Pose-Díez-de-la-Lastra et al., 2022), which further limits the available evidence.

Overall, considering the sufficient accuracy achieved in terms of registration error and surgical accuracy during surgical simulations, it can be said that AR is beneficial and helpful in orthopedic oncological surgeries. However, due to the limited literature in orthopedic oncology to date, further testing is recommended.

In maxillofacial oncology, out of eight marker-based studies, only two reported the AR registration error in terms of fiducial registration error (<1 mm) (Sahovaler et al., 2021; Chan H et al., 2022). The marker-less registration error (where measured) ranges from less than 1 mm up to 2 cm (Gsaxner et al., 2019; Gsaxner et al., 2021; Cercenelli et al., 2022; Shi et al., 2022; Yang et al., 2022). This shows that some articles did not achieve the desired precision in AR registration through the marker-less approach.

Marker-based AR surgical accuracy was reported in five articles, in terms of deviation from the pre-planned surgical resection. The mean surgical resection error was less than 3 mm among these articles (Kim et al., 2020; García-Sevilla et al., 2021b; Chan H et al., 2022; Gao et al., 2022; Zhao et al., 2023). Seven articles, in turn, reported surgical accuracy using the marker-less approach. The accuracy ranges from 0.49 to 2.77 mm in six articles (Ceccariglia et al., 2022; Cercenelli et al., 2022; Modabber et al., 2022; Shi et al., 2022; Tang et al., 2022; Winnand et al., 2022), whereas one study had a maximum surgical error of 6.08 mm, which did not meet surgical requirements (Yang et al., 2022).

Two studies employed both marker-less and marker-based registration, the latter being used mainly when easily recognizable surface features were lacking (Shaofeng et al., 2023) or to introduce a calibration procedure aimed at improving registration accuracy (Pepe et al., 2019). In particular, in Shaofeng et al. (2023) the surgical accuracy, in terms of distance deviation between the planned osteotomies and the postoperative cuts performed under AR guidance, was measured in the range of 0.88–2.01 mm. Manual alignment for virtual-to-real registration was performed in five articles (Meng et al., 2021; Scherl et al., 2021; Han et al., 2022; Necker et al., 2023; Prasad et al., 2023) and only two of them reported errors in terms of overlaying AR images. One study showed an average relocation error of 4 mm ± 3.9 mm with HoloLens 2 (Prasad et al., 2023), whereas the other measured a mean registration error of 1.3 cm with HoloLens 1 (Scherl et al., 2021). Even though it is hard to draw conclusions from only two results, errors appear to be larger with manual registration than with the marker-less or marker-based approach.

Two of the three case reports on maxillofacial oncological surgery were qualitative, while one case of fibrous histiocytoma had quantified findings with an overall mean discrepancy of 2.77 ± 1.29 mm using AR (Kim et al., 2020). According to qualitative studies, the incorporation of mixed reality during pre-surgical and intra-operative phases allows for precise surgical outcomes and is helpful for lesion identification and determination of its extension (Scolozzi and Bijlenga, 2017; Sugahara et al., 2021).

A total of seven studies have examined the utilization and precision of AR in the context of flap harvesting for mandibular restoration (Battaglia et al., 2019; Meng et al., 2021; Cercenelli et al., 2022; Han et al., 2022; Modabber et al., 2022; Winnand et al., 2022; Zhao et al., 2023). Two of them compared marker-less AR guidance with cutting guides in the context of iliac crest harvesting (Modabber et al., 2022; Winnand et al., 2022). The results indicated that cutting guides exhibited superior precision compared to AR navigation in terms of both distance and angular deviation from pre-determined trajectories. However, the distance deviations were less than 2.7 mm in the AR group. In contrast, Zhao et al. (2023) employed a marker-based methodology to assess the fibular flap, yielding an average distance deviation of 1.22 ± 0.12 mm. One possible explanation is that the marker-based approach tends to be more accurate than the marker-less approach for lower limb bones. This might be attributed to the specific shape and contour of these bones, which pose challenges for marker-less registration techniques.

It is worth noting that five of the studies used an external navigation system together with mixed reality. Four of them incorporated external navigation into mixed reality to enhance accuracy and spatial relationships (Scolozzi and Bijlenga, 2017; Gao et al., 2022; Tang et al., 2022; Yang et al., 2022) and one study compared the accuracy of AR and an optical tracking system (OTS) (García-Sevilla et al., 2021b).

García-Sevilla et al. (2021b) compared AR and OTS for surgical navigation and concluded that they have similar accuracy with errors below 1 mm. Gao et al. (2022) used HoloLens and OTS on five patients of cranio-fibrous dysplasia and the mean registration error across all cranium models was 1.036

Feasibility and usability studies were conducted in two articles in maxillofacial oncology. In one study, the standard ISO-9241/110 feasibility questionnaire based on the 5-point Likert Scale was conducted on both medical staff and AR experts, and overall feedback turned out to be positive (Pepe et al., 2019). In another article by Gsaxner et al. (2021), AR usability evaluation received a SUS of 74.8

In addition, in a preclinical study conducted on phantoms by Chan H et al. (2022), AR-guided simulations scored higher than virtual unguided resections in mental demand, performance, effort and frustration.

Some clinicians find the AR system simple to learn and use, which improves their decision-making skills (Gsaxner et al., 2021), whereas some authors stressed the importance of pre-clinical education in managing the technology’s steep learning curve (Ochandiano et al., 2021).

Although a few studies did not achieve sufficient accuracy in terms of registration error and surgical accuracy in maxillofacial oncology, the overall outcomes suggest a positive impact of AR in maxillofacial oncological surgeries. For instance, according to Chan H et al. (2022), AR-guided resection improved negative margins and was more similar to the pre-planned cutting planes. Similarly, Shaofeng et al. (2023) concluded that the accuracy of the AR system is similar to that of a surgical guide when testing it on mandibular tumor and fibular reconstruction. They also emphasized that it enhances the surgeon’s hand-eye coordination in executing surgeries. Ceccariglia et al. (2022) found a discrepancy of under 2 mm between the AR-projected osteotomy and the customized cutting guide osteotomy. Shi et al. (2022) concluded that AR navigation can effectively display and guide the surgical path and helps in achieving the desired results. Furthermore, Sahovaler et al. (2021) showed an advantage of AR over unguided simulations. However, some pressing concerns, such as limited depth perception and the time required for auto-registration, were also mentioned in some studies (Pepe et al., 2019; Gsaxner et al., 2021; Modabber et al., 2022).

As with orthopedic oncology, the maxillofacial oncology literature is lacking in several respects. Feasibility questionnaire surveys and ergonomics are discussed in only a few articles. The different methods of evaluation used in the articles limited the ability to provide quantifiable results. Additionally, only 10 out of 27 articles reported on registration error and only 14 reported on surgical accuracy. Consequently, further pre-clinical research is needed before implementing AR in operating rooms.

We advocate the development of a uniform and consistent evaluation methodology for investigating this new technology, so that meta-analyses become possible in future investigations. This step is crucial for gathering data to support the use of AR in oncological procedures in both disciplines. Additionally, we stress the importance of including external navigation in AR in future experiments in order to enhance the precision and depth perception of this young, yet useful, technology. Moreover, feasibility and ergonomics should be evaluated through standardized questionnaires, such as the System Usability Scale (SUS) and questionnaires based on a 5-point Likert scale.
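For readers unfamiliar with SUS, the sketch below shows the standard scoring rule: ten items are answered on a 5-point scale, odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a score between 0 and 100. The function name `sus_score` and the example responses are hypothetical; the included studies report only aggregate scores, not individual answers.

```python
def sus_score(responses):
    """Compute a System Usability Scale score (0-100) from ten 1-5 responses."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS requires ten responses, each between 1 and 5")
    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded
            total += 5 - response
    return total * 2.5

# Hypothetical answers from a single participant (illustration only)
example_responses = [4, 2, 4, 2, 4, 2, 4, 2, 4, 2]
score = sus_score(example_responses)
print(score)  # prints 75.0
```

Reporting usability with the same instrument and scoring rule across studies would make the results directly comparable, which is the kind of uniformity advocated above.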

We observed from the collected papers that an important aspect of AR implementation is three-dimensional printing (3D printing), also referred to as rapid prototyping, which is typically used to obtain pre-operative patient-specific phantoms replicating the anatomical structures of interest. In the reviewed papers, these phantoms are typically used to perform the surgical task preoperatively under AR guidance, as well as to evaluate both the registration error and the surgical accuracy (Moreta-Martinez et al., 2018; Gsaxner et al., 2019; Pepe et al., 2019; García-Sevilla et al., 2021a; García-Sevilla et al., 2021b; Gsaxner et al., 2021; Moreta-Martinez et al., 2021; Ochandiano et al., 2021; Sahovaler et al., 2021; Tel et al., 2021; Chan H et al., 2022; Gao et al., 2022; Pose-Díez-de-la-Lastra et al., 2022; Shi et al., 2022; Shaofeng et al., 2023). When a marker-based tracking approach is used, the reference marker, which is designed to fit in a unique position on the patient, is produced by 3D printing (Moreta-Martinez et al., 2021; Cho et al., 2017; Choi et al., 2017; Cho et al., 2018; Moreta-Martinez et al., 2018; García-Sevilla et al., 2021b; Ochandiano et al., 2021; Sugahara et al., 2021; Pose-Díez-de-la-Lastra et al., 2022; Shaofeng et al., 2023). Moreover, in some cases (Moreta-Martinez et al., 2018; Ceccariglia et al., 2022), the patient-specific surgical guides used for comparative evaluation of surgical accuracy against AR guidance are manufactured via 3D printing. Finally, some studies explicitly suggest using AR and 3D printing in combination to improve surgical efficacy, accuracy, and patient experience (García-Sevilla et al., 2021a; Moreta-Martinez et al., 2021).

4.1 Limitations of AR in surgery

Despite the fact that AR is a growing technology, it is not without limitations and complications. Surgeons should be well aware of the limitations of augmented reality in surgery, including technical challenges, limited field of view which limits the amount of virtual content available to the user, high implementation costs and limited user experience. In addition, they should consider how these limitations may impact the accuracy and efficacy of AR systems, as well as the surgical outcomes. Before incorporating AR into clinical practice, it is essential to execute a comprehensive analysis of its viability and benefits.

For instance, the viewing distance and angle of commercially available HMDs, such as HoloLens 2, are not optimized for use in surgery since the focus distance is suboptimal for medical procedures that are typically carried out at arm’s length and with the head bowed to observe the operative field (Wong et al., 2022).

To the authors’ knowledge, today only two “surgery-specific” headsets are available for AR-based intraoperative guidance: the X-vision Spine System by Augmedics, which received FDA approval (https://www.augmedics.com/), and the VOSTARS system, still under investigation (https://www.vostars.eu/). VOSTARS is a promising new wearable AR system designed as a hybrid Optical-See-Through (OST)/Video-See-Through (VST) HMD capable of offering a highly advanced navigation tool for maxillofacial surgery and other open surgeries. An early prototype of the VOSTARS system (Ruggiero et al., 2023) has already been evaluated in phantom tests and demonstrated sub-millimetric to millimetric accuracy (0.5–1 mm) in the execution of high-precision maxillofacial tasks (Cercenelli et al., 2020; Condino et al., 2020).

The issue of depth perception is another challenge that surgeons have to consider when applying AR technology during surgical procedures (Sielhorst et al., 2006). Surgeons must accurately gauge the distance between their instruments and the intended targets for AR surgery to be successful. However, accurate distance estimation during AR-assisted surgery is complicated by the fact that tools and target landmarks are 3D-rendered (Choi et al., 2016).

In order to implement AR in surgery, complex technical solutions, including medical-grade software and hardware systems, are required. For example, consumer-grade computer systems are suboptimal for displaying high-quality 3D rendered objects, and HMDs have a limited battery life (2–3 h), which can result in technical issues such as system failure, calibration errors, and latency. These obstacles may limit the accuracy of AR systems and result in surgical complications (Wong et al., 2022).

In addition, surgeons using AR-HMDs must contend with the limited field of view, restricted binocular field and projection size (Lareyre et al., 2021).

Virtual-to-real scene registration with HMDs is another major issue when using AR for surgical guidance and simulation, since registration errors result in inaccurate localization of the deep anatomical structures of interest. Because these display devices were not designed for medical purposes, their technical characteristics are poorly suited to surgical procedures (Badiali et al., 2020).
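Registration error, as used in this review, describes how far virtual content appears from its real counterpart. One simple way to quantify it, assumed here purely for illustration because the included studies used heterogeneous methods, is the Euclidean distance between corresponding landmarks measured on the virtual overlay and on the physical phantom or patient. The function names, the dictionary keys, and the millimetre unit in the following Python sketch are hypothetical.

```python
import math


def landmark_errors(virtual_points, real_points):
    """Euclidean distance between each pair of corresponding 3D landmarks.

    Both arguments are sequences of (x, y, z) coordinates expressed in the
    same reference frame and the same unit (millimetres assumed here).
    """
    if len(virtual_points) != len(real_points) or not virtual_points:
        raise ValueError("need equal, non-empty lists of corresponding points")
    return [math.dist(v, r) for v, r in zip(virtual_points, real_points)]


def summarize_errors(errors):
    """Mean, root-mean-square, and maximum of a list of distances."""
    n = len(errors)
    return {
        "mean_mm": sum(errors) / n,
        "rms_mm": math.sqrt(sum(e * e for e in errors) / n),
        "max_mm": max(errors),
    }


# Usage with made-up coordinates (not data from any reviewed study)
virtual = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
real = [(0.0, 0.0, 1.0), (10.0, 0.0, 3.0)]
summary = summarize_errors(landmark_errors(virtual, real))
```

Reporting the same summary statistics over a stated number of landmarks is one way in which future studies could make registration results comparable across systems.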

Marker-less registration, i.e., registration without fiducial markers or trackers anchored to the patient, is clearly preferable in surgery; however, it is not always feasible. As also emerged from our analysis, a marker-less approach seems more viable in maxillofacial oncological surgery, since the edges of anatomical parts (e.g., mandible or skull) are more accessible and trackable during surgery; conversely, in the orthopedic field the intraoperative recognition of bone edges may be more difficult.

During surgery, a surgeon using an AR headset may experience discomfort, fatigue, eye strain, and headache. Furthermore, the surgeon’s ability to focus on the operative field might be impaired by the visualization of augmented information. However, a 2018 study on simulator sickness showed that, out of 142 HMD users from various fields, only a few experienced mild discomfort (Vovk et al., 2018).

Because this technology is high priced and requires significant investment to initiate and implement in hospitals, it is not widely accessible in all medical settings. Consequently, the data associated with augmented reality in the surgical field are rather preliminary and require further testing and analysis.

AR applications have a steep and costly learning curve. They require expertise, and most surgeons are unfamiliar with the use of AR, so they must collaborate with biomedical engineers to implement this technology in the operating room. Personnel must undergo time-consuming hands-on training before AR can be implemented in hospital surgical setups.

When information needs to be electronically distributed to several departments in order to make patient-specific tools for AR, patient data privacy is another concern that must be addressed. When dealing with sensitive information pertaining to patients, certain national regulations should be followed in order to protect and secure both their safety and their privacy.

4.2 Future of AR

Augmented reality is increasingly recognized as a promising tool for enhancing the outcomes and standard of care for orthopedic and maxillofacial oncology patients. Especially in complex oncological cases that require strategic planning for comprehension and execution, surgeons should consider using augmented reality where applicable, in combination with 3D printing. However, the implementation of AR and its tools in surgery and healthcare has certain shortcomings, which may be reduced by future advances in technology. Depending on the complexity of the case, the process from acquiring CT/MRI images to 3D printing pre-surgical phantoms and developing a patient-specific AR application takes days, sometimes even months, including preoperative surgical planning and simulation training. Surgeons and biomedical engineers must work together to refine and successfully execute the procedure. Therefore, for the time being, AR cannot be used in emergency cases and can be advised only for elective surgeries. Despite these facts, we continue to believe that AR technology has the potential to revolutionize conventional oncological surgical procedures and to overcome these limitations. Augmented reality is expected to help surgeons better understand tumor anatomy and plan the resection accordingly. This should improve outcomes and the standard of care by limiting the recurrence rate while attaining the desired surgical margins with accuracy.

5 Conclusion

Currently, augmented reality is one of the most innovative technologies in the field of surgery, particularly orthopedic and maxillofacial surgery. Because it visualizes preoperative images and planning in real time directly on the patient, AR can be particularly beneficial for oncological surgeries in both fields to achieve the desired surgical accuracy. In oncological surgery, AR makes it possible to overcome some limitations of conventional computer-assisted surgical navigation, such as the shift of the surgeon’s attention from the operative field to the navigation monitor, and to avoid the lead time involved in manufacturing 3D-printed cutting guides. Indeed, AR should be used as a complementary tool to other computer-assisted technologies, as suggested by our literature review: particularly in maxillofacial oncology, surgeons have begun to incorporate external navigation systems into AR to track the surgical probe or instruments, in order to further improve accuracy and the perception of spatial relationships.

Even though AR technology is still in its infancy and has certain limitations, the current outcomes of its application in both disciplines are promising and support its clinical use. Certain concerns remain regarding image-to-patient registration and surgical accuracy. In the present review, we attempted to identify a range of registration error and surgical accuracy based on results from both surgical domains. Although it is difficult to derive general ranges because of the variety of anatomical regions involved and the different complexity of each domain, it can be observed that AR-guided resection exhibited greater accuracy than conventional unguided resection, attaining the desired goals without any associated complications. Additionally, in some studies AR navigation showed accuracy comparable to that of pre-planned virtual cutting planes and customized cutting guides.

We believe that the remaining technical limitations concerning registration and surgical accuracy can be overcome with further development of the hardware and software used for AR. To this end, industry-academic partnerships are essential to advance the technology, in conjunction with clinical studies to assess its benefits and role in clinical practice.

In conclusion, although AR appears to have the capacity to enhance surgical efficiency and ensure patient safety, further pre-clinical research is needed to improve its accuracy before it can be widely adopted in clinical settings.

Data availability statement

The raw data supporting the conclusion of this article will be made available by the authors, without undue reservation.

Author contributions

NN: Conceptualization, Data curation, Methodology, Writing–original draft, Writing–review and editing. LC: Conceptualization, Data curation, Methodology, Writing–original draft, Writing–review and editing. AT: Resources, Supervision, Writing–review and editing. EM: Resources, Supervision, Writing–review and editing.

Funding

The authors declare that no financial support was received for the research, authorship, and/or publication of this article.

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

References

Abdel Al, S., Chaar, M. K. A., Mustafa, A., Al-Hussaini, M., Barakat, F., and Asha, W. (2020). Innovative surgical planning in resecting soft tissue sarcoma of the foot using augmented reality with a smartphone. J. Foot Ankle Surg. 59 (5), 1092–1097. doi:10.1053/j.jfas.2020.03.011

Alexander, C., Loeb, A. E., Fotouhi, J., Navab, N., Armand, M., and Khanuja, H. S. (2020). Augmented reality for acetabular component placement in direct anterior total hip arthroplasty. J. Arthroplasty 35 (6), 1636–1641.e3. doi:10.1016/j.arth.2020.01.025

Azuma, R., Baillot, Y., Behringer, R., Feiner, S., Julier, S., and MacIntyre, B. (2001). Recent advances in augmented reality. IEEE Comput. Graph. Appl. 21 (6), 34–47. doi:10.1109/38.963459

Badiali, G., Cercenelli, L., Battaglia, S., Marcelli, E., Marchetti, C., Ferrari, V., et al. (2020). Review on augmented reality in oral and cranio-maxillofacial surgery: toward “surgery-specific” head-up displays. IEEE Access 8, 59015–59028. doi:10.1109/access.2020.2973298

Barcali, E., Iadanza, E., Manetti, L., Francia, P., Nardi, C., and Bocchi, L. (2022). Augmented reality in surgery: a scoping review. Appl. Sci. 12 (14), 6890. doi:10.3390/app12146890

Battaglia, S., Badiali, G., Cercenelli, L., Bortolani, B., Marcelli, E., Cipriani, R., et al. (2019). Combination of CAD/CAM and augmented reality in free fibula bone harvest. Plastic Reconstr. Surg. Glob. open 7 (11), e2510. doi:10.1097/gox.0000000000002510

Battaglia, S., Ratti, S., Manzoli, L., Marchetti, C., Cercenelli, L., Marcelli, E., et al. (2020). Augmented reality-assisted periosteum pedicled flap harvesting for head and neck reconstruction: an anatomical and clinical viability study of a galeo-pericranial flap. J. Clin. Med. 9 (7), 2211. doi:10.3390/jcm9072211

Bianchi, L., Chessa, F., Angiolini, A., Cercenelli, L., Lodi, S., Bortolani, B., et al. (2021). The use of augmented reality to guide the intraoperative frozen section during robot-assisted radical prostatectomy. Eur. Urol. 80 (4), 480–488. doi:10.1016/j.eururo.2021.06.020

Budhathoki, S., Alsadoon, A., Prasad, P. W. C., Haddad, S., and Maag, A. (2020). Augmented reality for narrow area navigation in jaw surgery: modified tracking by detection volume subtraction algorithm. Int. J. Med. robotics + computer assisted surgery MRCAS 16 (3), e2097. doi:10.1002/rcs.2097

Cannizzaro, D., Zaed, I., Safa, A., Jelmoni, A. J. M., Composto, A., Bisoglio, A., et al. (2022). Augmented reality in neurosurgery, state of art and future projections. A systematic review. Frontiers in surgery 9, 864792. doi:10.3389/fsurg.2022.864792

Ceccariglia, F., Cercenelli, L., Badiali, G., Marcelli, E., and Tarsitano, A. (2022). Application of augmented reality to maxillary resections: a three-dimensional approach to maxillofacial oncologic surgery. J. Pers. Med. 12 (12), 2047. doi:10.3390/jpm12122047

Cercenelli, L., Babini, F., Badiali, G., Battaglia, S., Tarsitano, A., Marchetti, C., et al. (2022). Augmented reality to assist skin paddle harvesting in osteomyocutaneous fibular flap reconstructive surgery: a pilot evaluation on a 3D-printed leg phantom. Front. Oncol. 11, 804748. doi:10.3389/fonc.2021.804748

Cercenelli, L., Carbone, M., Condino, S., Cutolo, F., Marcelli, E., Tarsitano, A., et al. (2020). The wearable VOSTARS system for augmented reality-guided surgery: preclinical phantom evaluation for high-precision maxillofacial tasks. Journal of clinical medicine 9 (11), 3562. doi:10.3390/jcm9113562

Chan H, H. L., Sahovaler, A., Daly, M. J., Ferrari, M., Franz, L., Gualtieri, T., et al. (2022). Projected cutting guides using an augmented reality system to improve surgical margins in maxillectomies: a preclinical study. Oral oncology 127, 105775. doi:10.1016/j.oraloncology.2022.105775

Cho, H. S., Park, M. S., Gupta, S., Han, I., Kim, H. S., Choi, H., et al. (2018). Can augmented reality Be helpful in pelvic bone cancer surgery? An in vitro study. Clinical orthopaedics and related research 476 (9), 1719–1725. doi:10.1007/s11999.0000000000000233

Cho, H. S., Park, Y. K., Gupta, S., Yoon, C., Han, I., Kim, H. S., et al. (2017). Augmented reality in bone tumour resection: an experimental study. Bone Joint Res 6 (3), 137–143. doi:10.1302/2046-3758.63.bjr-2016-0289.r1

Choi, H., Cho, B., Masamune, K., Hashizume, M., and Hong, J. (2016). An effective visualization technique for depth perception in augmented reality-based surgical navigation. The international journal of medical robotics + computer assisted surgery MRCAS 12 (1), 62–72. doi:10.1002/rcs.1657

Choi, H., Park, Y., Lee, S., Ha, H., Kim, S., Cho, H. S., et al. (2017). A portable surgical navigation device to display resection planes for bone tumor surgery. Minimally Invasive Therapy & Allied Technologies 26 (3), 144–150. doi:10.1080/13645706.2016.1274766

Condino, S., Fida, B., Carbone, M., Cercenelli, L., Badiali, G., Ferrari, V., et al. (2020). Wearable augmented reality platform for aiding complex 3D trajectory tracing. Sensors (Basel). 20 (6), 1612. doi:10.3390/s20061612

Dennler, C., Jaberg, L., Spirig, J., Agten, C., Götschi, T., Fürnstahl, P., et al. (2020). Augmented reality-based navigation increases precision of pedicle screw insertion. Journal of Orthopaedic Surgery and Research 15 (1), 174. doi:10.1186/s13018-020-01690-x

Elmi-Terander, A., Burström, G., Nachabé, R., Fagerlund, M., Ståhl, F., Charalampidis, A., et al. (2020). Augmented reality navigation with intraoperative 3D imaging vs fluoroscopy-assisted free-hand surgery for spine fixation surgery: a matched-control study comparing accuracy. Sci Rep 10 (1), 707. doi:10.1038/s41598-020-57693-5

Elmi-Terander, A., Burström, G., Nachabe, R., Skulason, H., Pedersen, K., Fagerlund, M., et al. (2019). Pedicle screw placement using augmented reality surgical navigation with intraoperative 3D imaging: a first in-human prospective cohort study. Spine 44 (7), 517–525. doi:10.1097/brs.0000000000002876

Elmi-Terander, A., Nachabe, R., Skulason, H., Pedersen, K., Söderman, M., Racadio, J., et al. (2018). Feasibility and accuracy of thoracolumbar minimally invasive pedicle screw placement with augmented reality navigation technology. Spine 43 (14), 1018–1023. doi:10.1097/brs.0000000000002502

Feiner, S. K. (2002). Augmented reality: a new way of seeing. Scientific American 286 (4), 48–55. doi:10.1038/scientificamerican0402-48

Ferraguti, F., Farsoni, S., and Bonfè, M. (2022). Augmented reality and robotic systems for assistance in percutaneous nephrolithotomy procedures: recent advances and future perspectives. Electronics 11 (19), 2984. doi:10.3390/electronics11192984

Gao, Y., Lin, L., Chai, G., and Xie, L. (2019). A feasibility study of a new method to enhance the augmented reality navigation effect in mandibular angle split osteotomy. Journal of cranio-maxillo-facial surgery official publication of the European Association for Cranio-Maxillo-Facial Surgery 47 (8), 1242–1248. doi:10.1016/j.jcms.2019.04.005

Gao, Y., Liu, K., Lin, L., Wang, X., and Xie, L. (2022). Use of augmented reality navigation to optimise the surgical management of craniofacial fibrous dysplasia. British Journal of Oral and Maxillofacial Surgery 60 (2), 162–167. doi:10.1016/j.bjoms.2021.03.011

García-Sevilla, M., Moreta-Martinez, R., García-Mato, D., Arenas de Frutos, G., Ochandiano, S., Navarro-Cuéllar, C., et al. (2021b). Surgical navigation, augmented reality, and 3D printing for hard palate adenoid cystic carcinoma en-bloc resection: case report and literature review. Frontiers in oncology 11, 741191. doi:10.3389/fonc.2021.741191

García-Sevilla, M., Moreta-Martinez, R., García-Mato, D., Pose-Diez-de-la-Lastra, A., Pérez-Mañanes, R., Calvo-Haro, J. A., et al. (2021a). Augmented reality as a tool to guide PSI placement in pelvic tumor resections. Sensors (Basel) 21 (23), 7824. doi:10.3390/s21237824

Gibby, J. T., Swenson, S. A., Cvetko, S., Rao, R., and Javan, R. (2019). Head-mounted display augmented reality to guide pedicle screw placement utilizing computed tomography. International journal of computer assisted radiology and surgery 14 (3), 525–535. doi:10.1007/s11548-018-1814-7

Golse, N., Petit, A., Lewin, M., Vibert, E., and Cotin, S. (2021). Augmented reality during open liver surgery using a markerless non-rigid registration system. Journal of gastrointestinal surgery official journal of the Society for Surgery of the Alimentary Tract 25 (3), 662–671. doi:10.1007/s11605-020-04519-4

Gouveia, P. F., Costa, J., Morgado, P., Kates, R., Pinto, D., Mavioso, C., et al. (2021). Breast cancer surgery with augmented reality. The Breast 56, 14–17. doi:10.1016/j.breast.2021.01.004

Gsaxner, C., Pepe, A., Li, J., Ibrahimpasic, U., Wallner, J., Schmalstieg, D., et al. (2021). Augmented reality for head and neck carcinoma imaging: description and feasibility of an instant calibration, markerless approach. Computer Methods and Programs in Biomedicine 200, 105854. doi:10.1016/j.cmpb.2020.105854

Gsaxner, C., Pepe, A., Wallner, J., Schmalstieg, D., and Egger, J. (2019). “Markerless image-to-face registration for untethered augmented reality in head and neck surgery,” in 22nd International Conference, Shenzhen, China, October 2019, 236–244.

Han, J. J., Sodnom-Ish, B., Eo, M. Y., Kim, Y. J., Oh, J. H., Yang, H. J., et al. (2022). Accurate mandible reconstruction by mixed reality, 3D printing, and robotic-assisted navigation integration. The Journal of craniofacial surgery 33 (6), e701–e706. doi:10.1097/SCS.0000000000008603

Jiang, T., Yu, D., Wang, Y., Zan, T., Wang, S., and Li, Q. (2020). HoloLens-based vascular localization system: precision evaluation study with a three-dimensional printed model. Journal of medical Internet research 22 (4), e16852. doi:10.2196/16852

Kim, H.-J., Jo, Y.-J., Choi, J.-S., Kim, H.-J., Park, I.-S., You, J.-S., et al. (2020). Virtual reality simulation and augmented reality-guided surgery for total maxillectomy: a case report. Appl. Sci. 10 (18), 6288. doi:10.3390/app10186288

Lareyre, F., Chaudhuri, A., Adam, C., Carrier, M., Mialhe, C., and Raffort, J. (2021). Applications of head-mounted displays and smart glasses in vascular surgery. Annals of Vascular Surgery 75, 497–512. doi:10.1016/j.avsg.2021.02.033

Lee, D., Yu, H. W., Kim, S., Yoon, J., Lee, K., Chai, Y. J., et al. (2020). Vision-based tracking system for augmented reality to localize recurrent laryngeal nerve during robotic thyroid surgery. Scientific Reports 10 (1), 8437. doi:10.1038/s41598-020-65439-6

Li, T., Li, C., Zhang, X., Liang, W., Chen, Y., Ye, Y., et al. (2021). Augmented reality in ophthalmology: applications and challenges. Front Med (Lausanne) 8, 733241. doi:10.3389/fmed.2021.733241

Ma, L., Jiang, W., Zhang, B., Qu, X., Ning, G., Zhang, X., et al. (2019). Augmented reality surgical navigation with accurate CBCT-patient registration for dental implant placement. Medical & biological engineering & computing 57 (1), 47–57. doi:10.1007/s11517-018-1861-9

Mahmud, N., Cohen, J., Tsourides, K., and Berzin, T. (2015). Computer vision and augmented reality in gastrointestinal endoscopy: figure 1. Gastroenterology Report 3, 179–184. doi:10.1093/gastro/gov027

Meng, F. H., Zhu, Z. H., Lei, Z. H., Zhang, X. H., Shao, L., Zhang, H. Z., et al. (2021). Feasibility of the application of mixed reality in mandible reconstruction with fibula flap: a cadaveric specimen study. Journal of stomatology, oral and maxillofacial surgery 122 (4), e45–e49. doi:10.1016/j.jormas.2021.01.005

Modabber, A., Ayoub, N., Redick, T., Gesenhues, J., Kniha, K., Möhlhenrich, S. C., et al. (2022). Comparison of augmented reality and cutting guide technology in assisted harvesting of iliac crest grafts - a cadaver study. Annals of anatomy = Anatomischer Anzeiger official organ of the Anatomische Gesellschaft 239, 151834. doi:10.1016/j.aanat.2021.151834

Molina, C. A., Dibble, C. F., Lo, S.-fL., Witham, T., and Sciubba, D. M. (2021c). Augmented reality–mediated stereotactic navigation for execution of en bloc lumbar spondylectomy osteotomies. Journal of Neurosurgery Spine 34 (5), 700–705. doi:10.3171/2020.9.spine201219

Molina, C. A., Dibble, C. F., Lo, S. L., Witham, T., and Sciubba, D. M. (2021a). Augmented reality-mediated stereotactic navigation for execution of en bloc lumbar spondylectomy osteotomies. J Neurosurg Spine 34, 700–705. doi:10.3171/2020.9.spine201219

Molina, C. A., Sciubba, D. M., Greenberg, J. K., Khan, M., and Witham, T. (2021b). Clinical accuracy, technical precision, and workflow of the first in human use of an augmented-reality head-mounted display stereotactic navigation system for spine surgery. Operative neurosurgery (Hagerstown, Md) 20 (3), 300–309. doi:10.1093/ons/opaa398

Moreta-Martinez, R., García-Mato, D., García-Sevilla, M., Pérez-Mañanes, R., Calvo-Haro, J., and Pascau, J. (2018). Augmented reality in computer-assisted interventions based on patient-specific 3D printed reference. Healthc Technol Lett 5 (5), 162–166. doi:10.1049/htl.2018.5072

Moreta-Martinez, R., Pose-Díez-de-la-Lastra, A., Calvo-Haro, J. A., Mediavilla-Santos, L., Pérez-Mañanes, R., and Pascau, J. (2021). Combining augmented reality and 3D printing to improve surgical workflows in orthopedic oncology: smartphone application and clinical evaluation. Sensors (Basel, Switzerland) 21 (4), 1370. doi:10.3390/s21041370

Necker, F. N., Chang, M., Leuze, C., Topf, M. C., Daniel, B. L., and Baik, F. M. (2023). Virtual resection specimen interaction using augmented reality holograms to guide margin communication and flap sizing. Otolaryngology--head and neck surgery 169, 1083–1085. doi:10.1002/ohn.325

Ochandiano, S., García-Mato, D., Gonzalez-Alvarez, A., Moreta-Martinez, R., Tousidonis, M., Navarro-Cuellar, C., et al. (2021). Computer-assisted dental implant placement following free flap reconstruction: virtual planning, CAD/CAM templates, dynamic navigation and augmented reality. Frontiers in oncology 11, 754943. doi:10.3389/fonc.2021.754943

Ogawa, H., Hasegawa, S., Tsukada, S., and Matsubara, M. (2018). A pilot study of augmented reality technology applied to the acetabular cup placement during total hip arthroplasty. J Arthroplasty 33 (6), 1833–1837. doi:10.1016/j.arth.2018.01.067

Pellegrino, G., Mangano, C., Mangano, R., Ferri, A., Taraschi, V., and Marchetti, C. (2019). Augmented reality for dental implantology: a pilot clinical report of two cases. BMC Oral Health 19 (1), 158. doi:10.1186/s12903-019-0853-y

Pepe, A., Trotta, G. F., Mohr-Ziak, P., Gsaxner, C., Wallner, J., Bevilacqua, V., et al. (2019). A marker-less registration approach for mixed reality-aided maxillofacial surgery: a pilot evaluation. Journal of digital imaging 32 (6), 1008–1018. doi:10.1007/s10278-019-00272-6

Pietruski, P., Majak, M., Światek-Najwer, E., Żuk, M., Popek, M., Mazurek, M., et al. (2019). Supporting mandibular resection with intraoperative navigation utilizing augmented reality technology - a proof of concept study. Journal of cranio-maxillo-facial surgery 47 (6), 854–859. doi:10.1016/j.jcms.2019.03.004

Pose-Díez-de-la-Lastra, A., Moreta-Martinez, R., García-Sevilla, M., García-Mato, D., Calvo-Haro, J. A., Mediavilla-Santos, L., et al. (2022). HoloLens 1 vs. HoloLens 2: improvements in the new model for orthopedic oncological interventions. Sensors (Basel) 22 (13), 4915. doi:10.3390/s22134915

Prasad, K., Miller, A., Sharif, K., Colazo, J. M., Ye, W., Necker, F., et al. (2023). Augmented-reality surgery to guide head and neck cancer Re-resection: a feasibility and accuracy study. Annals of Surgical Oncology 30, 4994–5000. doi:10.1245/s10434-023-13532-1

Rad, A. A., Vardanyan, R., Lopuszko, A., Alt, C., Stoffels, I., Schmack, B., et al. (2022). Virtual and augmented reality in cardiac surgery. Brazilian journal of cardiovascular surgery 37 (1), 123–127. doi:10.21470/1678-9741-2020-0511

Roberts, S., Desai, A., Checcucci, E., Puliatti, S., Taratkin, M., Kowalewski, K. F., et al. (2022). “Augmented reality” applications in urology: a systematic review. Minerva urology and nephrology 74 (5), 528–537. doi:10.23736/s2724-6051.22.04726-7

Ruggiero, F., Cercenelli, L., Emiliani, N., Badiali, G., Bevini, M., Zucchelli, M., et al. (2023). Preclinical application of augmented reality in pediatric craniofacial surgery: an accuracy study. J. Clin. Med. 12 (7), 2693. doi:10.3390/jcm12072693

Sahovaler, A., Chan, H. H. L., Gualtieri, T., Daly, M., Ferrari, M., Vannelli, C., et al. (2021). Augmented reality and intraoperative navigation in sinonasal malignancies: a preclinical study. Frontiers in oncology 11, 723509. doi:10.3389/fonc.2021.723509

Scherl, C., Stratemeier, J., Rotter, N., Hesser, J., Schönberg, S. O., Servais, J. J., et al. (2021). Augmented reality with HoloLens® in parotid tumor surgery: a prospective feasibility study. ORL 83 (6), 439–448. doi:10.1159/000514640

Schiavina, R., Bianchi, L., Chessa, F., Barbaresi, U., Cercenelli, L., Lodi, S., et al. (2021). Augmented reality to guide selective clamping and tumor dissection during robot-assisted partial nephrectomy: a preliminary experience. Clinical genitourinary cancer 19 (3), e149–e155. doi:10.1016/j.clgc.2020.09.005

Schwam, Z. G., Kaul, V. F., Bu, D. D., Iloreta, A. C., Bederson, J. B., Perez, E., et al. (2021). The utility of augmented reality in lateral skull base surgery: a preliminary report. Am J Otolaryngol 42 (4), 102942. doi:10.1016/j.amjoto.2021.102942

Scolozzi, P., and Bijlenga, P. (2017). Removal of recurrent intraorbital tumour using a system of augmented reality. British Journal of Oral and Maxillofacial Surgery 55 (9), 962–964. doi:10.1016/j.bjoms.2017.08.360

Shaofeng, L., Yunyang, L., Bingwei, H., Bowen, D., Zhaoju, Z., Jiafeng, S., et al. (2023). Mandibular resection and defect reconstruction guided by a contour registration-based augmented reality system: a preclinical trial. Journal of Cranio-Maxillofacial Surgery 51, 360–368. doi:10.1016/j.jcms.2023.05.007

Sharma, A., Alsadoon, A., Prasad, P. W. C., Al-Dala’in, T., and Haddad, S. (2021). A novel augmented reality visualization in jaw surgery: enhanced ICP based modified rotation invariant and modified correntropy. Multimedia Tools and Applications 80 (16), 23923–23947. doi:10.1007/s11042-021-10787-2

Shi, J., Liu, S., Zhu, Z., Deng, Z., Bian, G., and He, B. (2022). Augmented reality for oral and maxillofacial surgery: the feasibility of a marker-free registration method. The international journal of medical robotics + computer assisted surgery MRCAS 18 (4), e2401. doi:10.1002/rcs.2401

Shrestha, L., Alsadoon, A., Prasad, P. W. C., AlSallami, N., and Haddad, S. (2021). Augmented reality for dental implant surgery: enhanced ICP. The Journal of Supercomputing 77 (2), 1152–1176. doi:10.1007/s11227-020-03322-x

Sielhorst, T., Bichlmeier, C., Heining, S. M., and Navab, N. (2006). “Depth perception – a major issue in medical AR: evaluation study by twenty surgeons,” in Medical image computing and computer-assisted intervention – miccai 2006 (Berlin, Germany: Springer).

Sugahara, K., Koyachi, M., Koyama, Y., Sugimoto, M., Matsunaga, S., Odaka, K., et al. (2021). Mixed reality and three dimensional printed models for resection of maxillary tumor: a case report. Quantitative imaging in medicine and surgery 11 (5), 2187–2194. doi:10.21037/qims-20-597

Tang, Z.-N., Hu, L.-H., Soh, H. Y., Yu, Y., Zhang, W.-B., and Peng, X. (2022). Accuracy of mixed reality combined with surgical navigation assisted oral and maxillofacial tumor resection. Front Oncol 11, 715484. doi:10.3389/fonc.2021.715484

Tel, A., Arboit, L., Sembronio, S., Costa, F., Nocini, R., and Robiony, M. (2021). The transantral endoscopic approach: a portal for masses of the inferior orbit—improving surgeons' experience through virtual endoscopy and augmented reality. Front Surg 8, 715262. doi:10.3389/fsurg.2021.715262

Tsukada, S., Ogawa, H., Nishino, M., Kurosaka, K., and Hirasawa, N. (2019). Augmented reality-based navigation system applied to tibial bone resection in total knee arthroplasty. Journal of Experimental Orthopaedics 6 (1), 44. doi:10.1186/s40634-019-0212-6

Tsukada, S., Ogawa, H., Nishino, M., Kurosaka, K., and Hirasawa, N. (2021). Augmented reality-assisted femoral bone resection in total knee arthroplasty. JBJS Open Access 6 (3), e21.00001. doi:10.2106/jbjs.oa.21.00001

Venkatesan, M., Mohan, H., Ryan, J. R., Schürch, C. M., Nolan, G. P., Frakes, D. H., et al. (2021). Virtual and augmented reality for biomedical applications. Cell Reports Medicine 2 (7), 100348. doi:10.1016/j.xcrm.2021.100348

Vovk, A., Wild, F., Guest, W., and Kuula, T. (2018). “Simulator sickness in augmented reality training using the Microsoft HoloLens,” in Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal, QC, Canada, April, 2018, 1–9.

Wang, J., Shen, Y., and Yang, S. (2019). A practical marker-less image registration method for augmented reality oral and maxillofacial surgery. International Journal of Computer Assisted Radiology and Surgery 14 (5), 763–773. doi:10.1007/s11548-019-01921-5

Winnand, P., Ayoub, N., Redick, T., Gesenhues, J., Heitzer, M., Peters, F., et al. (2022). Navigation of iliac crest graft harvest using markerless augmented reality and cutting guide technology: a pilot study. The International Journal of Medical Robotics and Computer Assisted Surgery 18 (1), e2318. doi:10.1002/rcs.2318

Wong, K. C., Sun, Y. E., and Kumta, S. M. (2022). Review and future/potential application of mixed reality technology in orthopaedic oncology. Orthopedic Research and Reviews 14, 169–186. doi:10.2147/orr.s360933

Yang, R., Li, C., Tu, P., Ahmed, A., Ji, T., and Chen, X. (2022). Development and application of digital maxillofacial surgery system based on mixed reality technology. Front Surg 8, 719985. doi:10.3389/fsurg.2021.719985

Keywords: augmented reality, mixed reality, orthopedics oncology, maxillofacial oncology, surgery, tracking

Citation: Nasir N, Cercenelli L, Tarsitano A and Marcelli E (2023) Augmented reality for orthopedic and maxillofacial oncological surgery: a systematic review focusing on both clinical and technical aspects. Front. Bioeng. Biotechnol. 11:1276338. doi: 10.3389/fbioe.2023.1276338

Received: 11 August 2023; Accepted: 03 November 2023; Published: 22 November 2023.

Edited by: Run Zhang, The University of Queensland, Australia

Reviewed by: Cosima Prahm, University of Tübingen, Germany; Wen Qi, Polytechnic University of Milan, Italy

Copyright © 2023 Nasir, Cercenelli, Tarsitano and Marcelli. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Naqash Nasir, naqash.nasir2@unibo.it; Laura Cercenelli, laura.cercenelli@unibo.it

†These authors have contributed equally to this work and share first authorship

‡These authors have contributed equally to this work and share last authorship
