- 1Department of Electrical Engineering and Information Systems, Graduate School of Engineering, The University of Tokyo, Tokyo, Japan

- 2Institute of Industrial Science, The University of Tokyo, Tokyo, Japan

Trade-off between reproducibility of neuronal activities and computational efficiency is one of crucial subjects in computational neuroscience and neuromorphic engineering. A wide variety of neuronal models have been studied from different viewpoints. The digital spiking silicon neuron (DSSN) model is a qualitative model that focuses on efficient implementation by digital arithmetic circuits. We expanded the DSSN model and found appropriate parameter sets with which it reproduces the dynamical behaviors of the ionic-conductance models of four classes of cortical and thalamic neurons. We first developed a four-variable model by reducing the number of variables in the ionic-conductance models and elucidated its mathematical structures using bifurcation analysis. Then, expanded DSSN models were constructed that reproduce these mathematical structures and capture the characteristic behavior of each neuron class. We confirmed that statistics of the neuronal spike sequences are similar in the DSSN and the ionic-conductance models. Computational cost of the DSSN model is larger than that of the recent sophisticated Integrate-and-Fire-based models, but smaller than the ionic-conductance models. This model is intended to provide another meeting point for above trade-off that satisfies the demand for large-scale neuronal network simulation with closer-to-biology models.

1. Introduction

Silicon neuronal networks have gained remarkable attention in recent years. The silicon neuronal network is composed of dedicated circuit that solves the differential equations of a neuron and synapse model. On account of their parallel and distributed structures, silicon neuronal networks can simulate neuronal activities with low power consumption and in high speed, potentially realizing an extremely large-scale network comparable to that of the human brain in future.

Their analog circuit implementation consumes ultra-low power down to several nano watts per silicon neuron (Brink et al., 2013; Kohno and Aihara, 2014; Mandloi et al., 2014), however, it includes technical hurdles of fabrication mismatch and temperature dependence to construct a large-scale network. On the other hand, digital circuit implementation solves this limitation because it is far less sensitive to these factors, though power consumption tends to be higher than the analog circuit implementations. A digital silicon neuronal network comprising 1 million spiking neurons and 256 million synapses has been implemented on a 5.4-billion transistor chip (Merolla et al., 2014). This Application Specific Integrated Circuit (ASIC) chip calculates asynchronously in real-time and consumes just 63 mW. Merolla et al. adopted the leaky integrate-and-fire (LIF) model, one of the most simple neuronal models. The LIF model describes the dynamics of the neuronal membrane potential, which is perturbed by a stimulus inputs over time and converges slowly to the resting potential. It is computationally efficient and suitable for the large-scale implementation. After refining the synaptic efficacy by a learning process, the ASIC was applied to a multi-object detection and classification.

In silicon neuronal networks, diversified neuronal models are used due to a trade-off between the reproducibility of neuronal activity and computational efficiency. For instance, ionic-conductance models can reproduce a neuronal activity accurately but demands excessive computational resources in large-scale implementations. In contrast, neuronal models that approximate a spiking process by resetting the state variable [integrate-and-fire (I&F) -based models], such as the LIF, exponential I&F (Fourcaud-Trocmé et al., 2003), adaptive exponential I&F (Brette and Gerstner, 2005), and Izhikevich (IZH) models, can be implemented at low computational cost. However, it has reduced reproducibility of complex neuronal activities. For example, these models assume fixed maximum membrane potentials during the spike process, whereas these potentials are nonuniform in the nervous system (Alle and Geiger, 2006). Reduction of computational cost benefits the power consumption, system size, and response speed of silicon neuronal networks regardless of implementation types (ASIC, Field Programmable Gate Array, and massively-parallel CPUs). These factors are particularly important for their application to neuromorphic systems (e.g., for robot control) that are required to occupy a small space and operate with restricted power supply. They are also important to implement with realistic power consumption and system size a large-scale network comparable to the human brain that is composed of about a hundred billion neurons. The DSSN model (Kohno and Aihara, 2007) is a qualitative neuronal model designed for efficient implementation in a digital arithmetic circuit. It is a non-I&F-based model that can realize several neuronal activities including the Class I and II in the Hodgkin's classification (Hodgkin, 1948). Because this model does not abbreviate the calculation of the spiking process, it can reproduce the gradient response in Class II neurons (Wang and Rinzel, 2003). Li et al. (2012) constructed an auto-associative memory with 256 fully connected digital spiking silicon neuron (DSSN) models on an FPGA. They reported that a network of Class II neurons yields higher retrieval performance than that of Class I neurons in the associative memory task. Recently, a Hebbian learning rule was applied to this network (Li et al., 2013). In Osawa and Kohno (2015), it was reported that the Class II mode of the IZH model with a standard parameter setting has discontinuous phase resetting curve (PRC) and the auto-associative memory constructed in the same way does not deliver higher performance than the Class I-mode IZH model. Increasing the value of a parameter can solve this problem, however, it considerably distorts the spiking waveform.

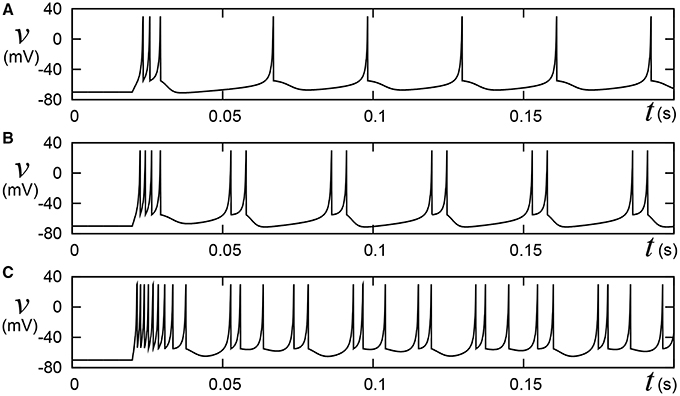

Pospischil et al. (2008) have found appropriate parameter sets for an ionic-conductance model that replicate experimental data in the well-known four classes of cortical and thalamic neurons; regular spiking (RS), fast spiking (FS), intrinsically bursting (IB), and low-threshold spike (LTS). A most typical cortical neuron class, RS is characterized by spike-frequency adaptation; that is, the spike frequency decreases over time in response to a constant stimulus input. Conversely, FS neurons maintain firing at a constant frequency. IB and LTS are the neuron classes with bursting ability. Neurons in the IB class generate a burst firing immediately at the onset of a stimulus, then continue spiking until its termination. The LTS class also exhibits the bursting. Neurons in the LTS class generate a burst firing just after the termination of a sufficient hyperpolarizing stimulus. Their model equations were constructed by integrating several ionic-conductance models reported in the previous researches and contains up to seven variables. For the RS and FS classes, they applied an auto fitting procedure that minimizes the error function of spike intervals by the simulated annealing method. The IZH model supports these four classes. The Pospischils' ionic-conductance model for the IB class generates a long silent phase following the burst firing (Figure 11-left), but the IZH model produced uniform silent phases (Figure 1). Moreover, the response to larger inputs evidently differed from that of Pospischils' model. Because it is not elucidated completely what properties of the neuronal activities are playing the key roles in the information processing in the brain, developing a simple non-I&F model that supports a wide variety of neuronal activities can be significant for both scientific and engineering purposes, even if it consumes computational resources than I&F-based models.

Figure 1. Behaviors of the Izhikevich model in the IB class. Stimulus step input Istim rise at t = 0.02, where (A) Istim = 10, (B) 15, and (C) 30, respectively. A numerical integration step dt is 0.05 ms.

In Nanami and Kohno (2015), we reduced the dimensions of Pospischils models by Keplers method and analyzed their mathematical structures by bifurcation analysis. We also reported parameter sets where the three-variable DSSN model (Kobayashi et al., 2011) produces similar activities to our target classes. While we did not evaluate their similarity, it was clear that the parameter set for the IB class could not reproduce the transient firing patterns from the first bursting phase to the later spiking phase. In this work, we cleared this limitation as follows. Firstly, we repeated the parameter search and found three-variable DSSN model parameter sets for RS, FS, and LTS modes that reproduce corresponding mathematical structures of the reduced Pospischils models and statistical characteristics of spiking patterns. Secondly, for the IB class, we incorporated an additional slow variable and found a parameter set that reproduces corresponding mathematical structures and the statistical characteristics of spiking patterns. The statistical evaluation was performed using Cv and Lv (Shinomoto et al., 2003) that are utilized to characterize the spiking activities of neuronal cells.

The remainder of this paper is organized as follows. Section 2 introduces our neuron model, its basic concepts, and details of its construction. The model is tested and evaluated by simulation in Section 3. Section 4 summarizes the work and suggests ideas for future.

2. Methods

In the nervous system, the neuronal cells are classified by their intrinsic spike properties (Llinas, 1988; Connors and Gutnick, 1990; Gupta et al., 2000). The quantitative characteristics of neuronal activity, including the degree of adaptation and duration of the burst firing, differ even among neurons of the same class. To accommodate this fact, Pospischil et al. assembled various experimental data of different nerve cells in each neuron class, and adjusted the parameters of their ionic-conductance models' equations to each dataset. We expanded the DSSN model so that it has a compatible dynamics and behaviors to a typical parameter of the Pospischil's model in each class.

2.1. DSSN Model

The DSSN model (Kohno and Aihara, 2007) is a qualitative neuron model that can simulate several classes of neuronal activities by Euler's method with fixed point operation. Given appropriate parameter sets, it can reproduce the Class I and II in the Hodgkin's classification as well as Class I* (Fujii and Tsuda 2004) which is defined by a unique mathematical structure named narrow channel. The differential equations of the model is written as follows:

And Kobayashi et al. derived three-variable DSSN model (Kobayashi et al., 2011) that realize autonomous bursting including the square-wave bursting and the elliptic bursting. As explained in the introduction, we reported parameter sets with which this model can produce firing patterns similar to the four classes of cortical and thalamic neuronal activities in Pospischil et al. (2008) but with limited affinity. The equations of this model are given by

where v corresponds to the membrane potential, and n and q are the fast and slow variables, respectively, that abstractly describe the activity of the ion channels. The Parameter I0 is a bias constant and Istim represents the input stimulus. Parameters ϕ, ϵ, and τ control the time constants of the variables. Parameters rx, ax, bx, and cx, where x = fn, fp, gn, gp, hn, or hp, are constants that adjust the nullclines of the variables. All of the variables and constants in this qualitative model are purely abstract with no physical units. Most existing qualitative neuronal models replicate the spiking dynamics by a cubed variable term (FitzHugh, 1961; Nagumo et al., 1962; Hindmarsh and Rose, 1984). Because multiplication consumes significant circuit resources in a digital arithmetic circuit, the DSSN model adopts a piecewise quadratic function so that its numerical integration step includes only one multiplication between variables.

2.2. Reduced Model

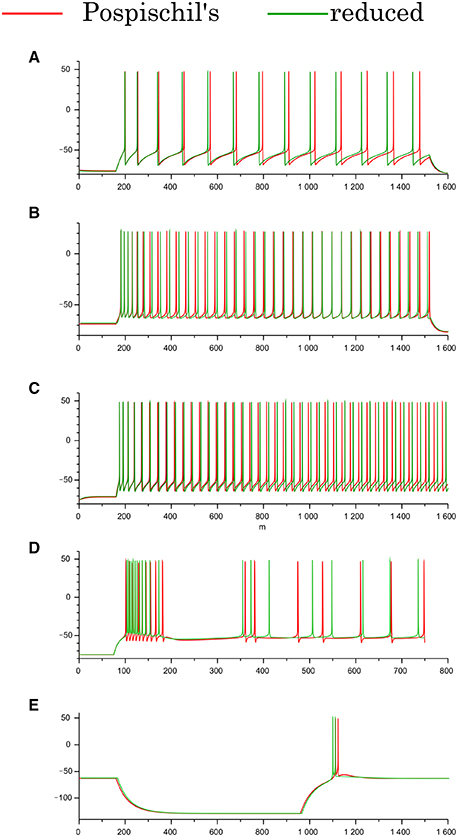

The Pospischils' models contain up to seven variables, which makes an analysis of their mathematical structure difficult. Therefore, by applying Keplers' method (Kepler et al., 1992), we derived three-variable reduced models for the RS, FS, and LTS classes, and a four-variable reduced model for the IB class. Their equations and parameter sets are listed in the Appendix of Supplementary Material. This process reduces the number of variables without critically altering the models' intrinsic structure. The reduced model of the IB class has four variables, because the time-scale differences among the variables prevent the division of the variables into three groups without altering the neuronal activity. Comparing the waveforms of the reduced and Pospischils' models, we confirmed that the dimensional reduction imposed no significant changes on their dynamical properties (Figure 2). In both models, the RS classes have the spike-frequency adaptation (Figures 2A,B). The FS classes have little adaptation. The IB classes have burst firing followed by repetitive single spikes (Figure 2C). The LTS classes generate rebound bursting after removing the long hyperpolarizing stimuli (Figure 2D).

Figure 2. Waveforms of the Pospischils' and reduced models in the cortical and thalamic neuron classes. (A) The excitatory RS class, (B) the inhibitory RS class, (C) the FS class, (D) the IB class, and (E) the LTS class, respectively. An excitatory step stimulus is applied in (A–D). An inhibitory pulse stimulus that starts at 180 ms and ends at 980 ms is applied in (E).

2.3. Analysis of Mathematical Structure

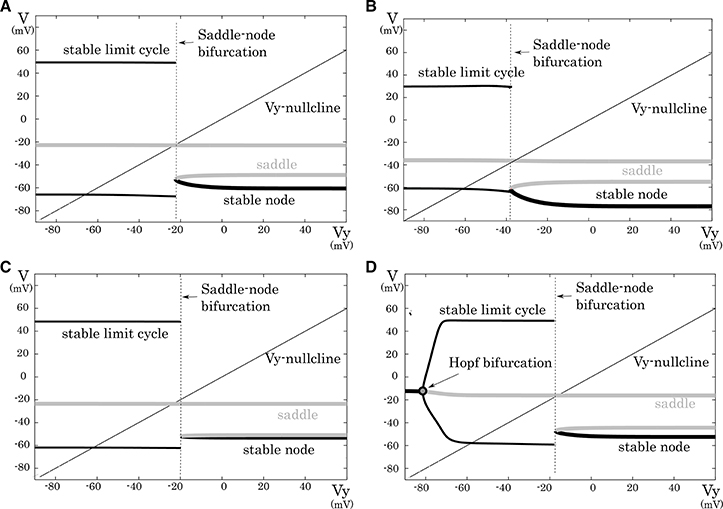

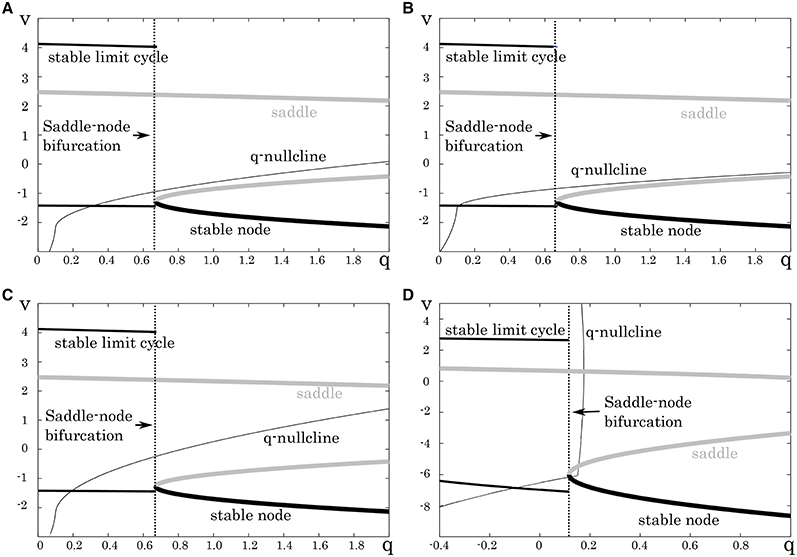

This subsection analyzes mathematical structures of the reduced models derived in the preceding section. The bifurcation diagrams of the reduced models of the excitatory RS, inhibitory RS, FS, and LTS classes are displayed on a V – Vy plane with a Vy–nullcline (Figure 3). The bifurcation analysis was conducted on the fast subsystem including V and Vx while varying the remaining slow variable as a bifurcation parameter. Their mathematical structures are projected onto the V − Vy planes. The fast subsystem of these neuron classes undergo a saddle- node on invariant circle bifurcation. Limit cycles which correspond to the periodic spiking are represented by two curves that correspond to their maximum and minimum values of V. The Vy–nullcline represents the continuous points where is 0. The state point on the stable limit cycle slows down as it approaches to the saddle-node point. Therefore, the slow variable Vy can control the firing frequency, and the variations in the dynamics of Vy induce the different properties of the neuron classes.

Figure 3. Mathematical structures of the reduced models. Projected mathematical structures onto the Vy − V plane. A stimulus sufficiently strong for periodic spiking is applied. The saddle-node bifurcation is observed in (A) the excitatory RS, (B) the inhibitory RS, (C) the FS, and (D) the LTS classes.

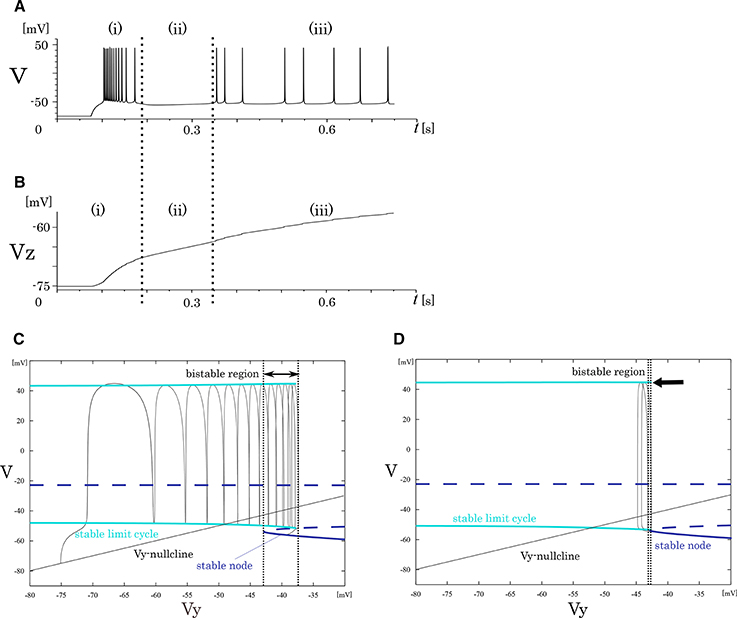

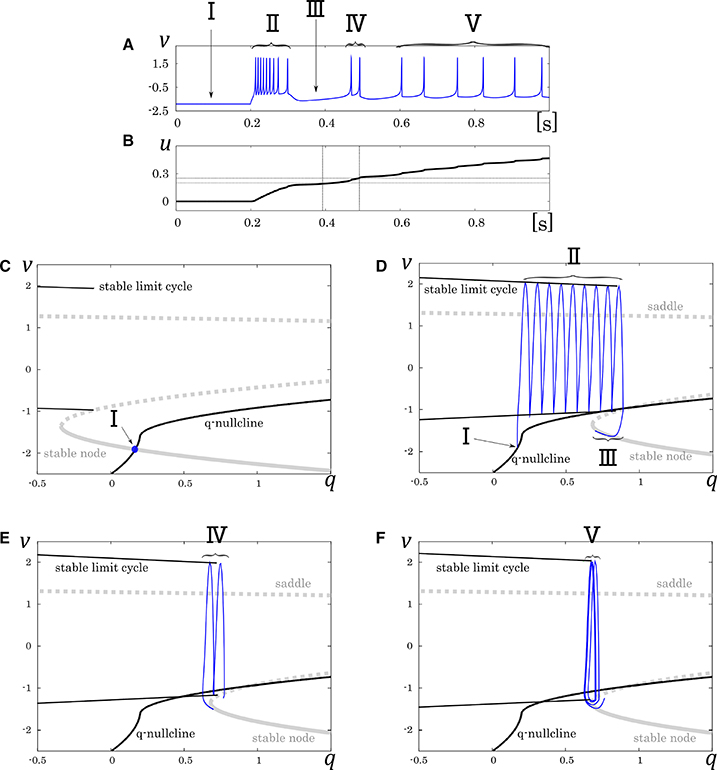

In our analysis of the IB class, we fixed the slowest variable Vz while varying the second slowest variable Vy as the parameter because Vz is sufficiently slower than Vy. The transitions in the mathematical structure of the fast subsystem induced by the change in Vz are presented in Figure 4. The fast subsystem exhibits a homoclinic loop bifurcation and the bistable range of Vy depends on the slowest variable Vz. Here, the bistable region is where the stable limit cycle overlaps the stable node with respect to the Vy-axis. In response to an appropriate step input, the system produces three qualitatively different phases (Figure 4A). They are burst firing, subsequent long silent, and monotonic single spiking phase. This phase transition proceeds as the slowest variable Vz increases (Figure 4B). When Vz is relatively small, a bursting with many spikes is followed by a long silent phase, because the system has a wide bistability region where the state point takes long time to go back to stable limit cycle (Figure 4C). When Vz gets larger, this region is small and the state point can generate only one spike while it is on the limit cycle (Figure 4D).

Figure 4. Mathematical structure of the reduced model in the IB class. (A) Periodic spike waveform of the membrane potential in response to an excitatory step input. (B) Transition of the slowest variable Vz that controls the mathematical structure of the fast system. The projected mathematical structure for the (C) Vz = –70 mV and (D) Vz = −58 mV.

2.4. Parameter Tuning and the Four-Variable DSSN Model

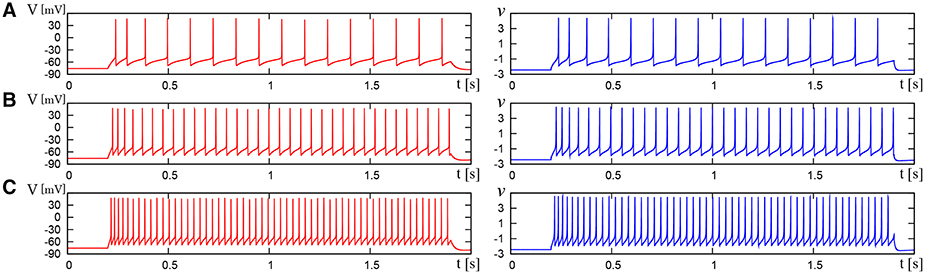

To support the RS, FS, and LTS classes by the DSSN model, we found appropriate parameter sets for it that produce the same structures as those in the above reduced models. Firstly, we determined appropriate parameters of the fast subsystem. They were selected so that the system undergoes a saddle-node bifurcation as observed in the reduced models for the RS, FS, and LTS classes (Figure 5). The parameters of the fast subsystem for the LTS mode was tuned to mimic the hyperpolarizing response in the reduced model. Secondly, we tuned the remaining parameters, which control the dynamics of the slow system, to precisely reproduce the distinctive neuronal activities of the corresponding neuron classes. In the RS mode, we placed the q-nullcline at relatively low area of the Vy plane so that the system state converges to the limit cycle at near the bifurcation point. It induces spike frequency adaptation because the firing frequency decreases as the slow variable q approaches the saddle-node bifurcation point. In the FS mode, the q-nullcline was adjusted in the inverse direction to suppress the adaptation. For the LTS mode, the parameters for the fast subsystem were selected to mimic the resting membrane potential in the Pospischils' model which is higher than that in the other modes. Parameters of the slow variable q were selected so that it decreases under a hyperpolarizing input. Just after the injection of the hyperpolarizing input has been terminated, because the state point is at far from the saddle-node point, it rapidly moves along the stable limit cycle. This dynamics produces the rebound bursting.

Figure 5. Mathematical structures of the DSSN models. Projected mathematical structures onto the q-v plane. A stimulus sufficiently strong for periodic spiking is applied. A saddle-node on invariant circle bifurcation is seen in (A) the excitatory RS, (B) inhibitory RS, (C) FS, and (D) LTS.

To support the IB class by the DSSN model, we added a slowest variable in order to vary the bistable area, which was explained in the last part of the previous subsection. The four-variable DSSN model is written as follows:

where, the new variable u dynamically tunes the value of ϕ. Because the equation of u does not include multiplication between variables, and ϕ is selected in the list of possible values (ϕ0, ϕ1, and ϕ2), it does not increase the number of multiplications in a numerical integration step. Its mathematical structure is illustrated in Figure 6. With no stimulus, the state point resides at a point which corresponds to the resting state (Figure 6, I). This point is at the intersection of the q-nullcline and the stable node. In response to a positive sustained input, the state point jumps into the stable limit cycle and keep firing repetitively (Figure 6, II). The state point drifts rightward because most part of the limit cycle is at above the q-nullcline. Therefore, the state point arrives at the right endpoint of the stable limit cycle and falls to the stable node. Subsequently, the state point moves leftward along the stable node because it is at below the q-nullcline, which corresponds to the long silent phase (Figure 6, III). Meanwhile, the slowest variable u exceeds ru0, and the stable limit cycle shifts leftward. Therefore, the state point goes into the limit cycle again and makes a few spikes along the limit cycle before returning to the stable node (Figure 6, IV). When u exceeds ru1, a single spike is generated on the limit cycle before the state point goes back to the stable node (Figure 6, V). The DSSN model qualitatively reproduces the behaviors of the four neuron classes by numerical integration with the Euler's method (dt = 0.0001 s).

Figure 6. Mathematical structure of the DSSN model in the IB class. (A) Waveform of the membrane potential in response to a step input that rises at t = 0.2 s. (B) Transition of the complementary variable u. (C) Projected mathematical structure without any input stimulus. Projected mathematical structure under a sustained stimulus where (D) u < ru0, (E) ru0 < u < ru1, and (F) ru1 < u. Each roman number corresponds to a spiking phase.

The DSSN model qualitatively reproduces the behaviors of the four neuron classes by numerical integration with the Euler's method (dt = 0.0001 s). For example, the calculation of v for IB classes is conducted as follows;

where, i denotes 0, 1, or 2 and j denotes n or p. In these equations, we can calculate a product of parameters in advance and store it as a constant value. Parameters are a sum of at most 7 (in average 3.78) positive or negative power-of-two numbers so that the multiplication of a variable and a parameter is realized by combination of bit shift and add operations. Multiplication is only used for the calculation of v2.

3. Results

3.1. Comparison of the Waveforms

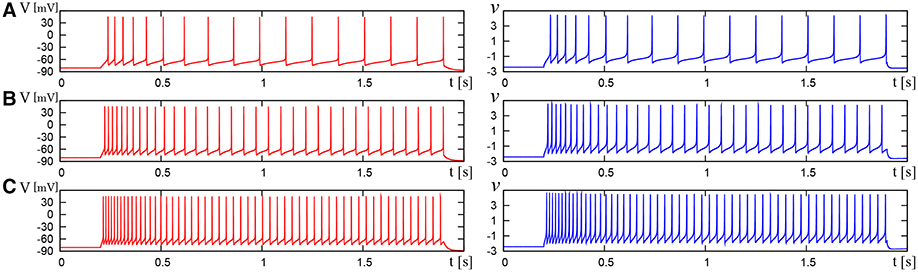

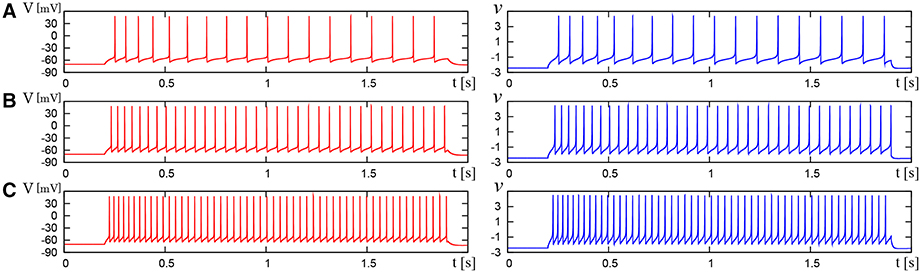

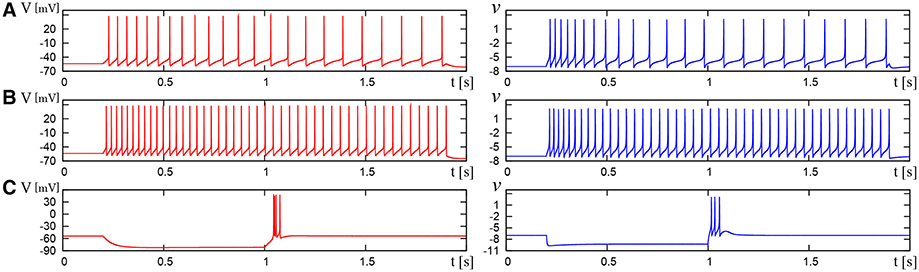

We compared the waveforms generated by the DSSN and Pospischils' ionic-conductance models for several magnitudes of step inputs. The DSSN model accurately reproduce Pospischils' model in each neuron class (Figures 7–11). In the excitatory RS mode, both models exhibit the spike-frequency adaptation, and their firing frequencies increase as input stimuli become large (Figure 7). Figure 8 shows the inhibitory RS mode that has more stronger spike-frequency adaptation than the excitatory RS mode. Both models in the FS mode fire with almost constant frequency, which increases as the stimulus is strengthened. In the LTS mode (Figure 10), both models generate periodic spiking with spike-frequency adaptation in response to positive step stimulus and a rebound bursting at the end of a negative stimulus. Both models in the IB mode exhibit repetitive spiking following a burst firing in response to a weak positive input (Figure 11). The frequency of the repetitive spiking gets higher in response to a stronger positive stimulus.

Figure 7. Waveforms of Pospischil and DSSN models, corresponding to an excitatory neuron in the RS class. The Pospischils' model (left) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 1.1, (B) 1.5, (C) 2.1 nA. The DSSN model (right) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 2.9221, (B) 3.2521, (C) 3.7921.

Figure 8. Waveforms of Pospischil and DSSN models, corresponding to an inhibitory neuron in the RS class. The Pospischils' model (left) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 0.6, (B) 1.1, (C) 1.7 nA. The DSSN model (right) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 2.97, (B) 3.57, (C) 4.29.

Figure 9. Waveforms of Pospischil and DSSN models, corresponding to a neuron in the FS class. The Pospischils' model (left) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 0.78, (B) 0.98, (C) 1.22 nA. The DSSN model (right) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 2.7, (B) 2.95, (C) 3.25.

Figure 10. Waveforms of Pospischil and DSSN models, corresponding to a neuron in the LTS class. The Pospischils' model (left) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 0.34, (B) 0.79, (C) –0.6 nA. The DSSN model (right) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 0.278, (B) 0.308, (C) –1.7. (A,B) Pospischils' model (left) and DSSN model (right) generate periodic spike in response to a depolarizing step input rising at t = 0.2. (C) A hyperpolarizing step input is applied from t = 0.2 to t = 1.0.

Figure 11. Waveforms of Pospischils' model and DSSN model which correspond to a neuron in the IB class. The Pospischils' model (left) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 2.38, (B) 2.6645, (C) 3.0495 nA. The DSSN' model (right) generates periodic spikes in response to a step input rising at t = 0.2 where Istim is (A) 0.89, (B) 1.42, (C) 1.81.

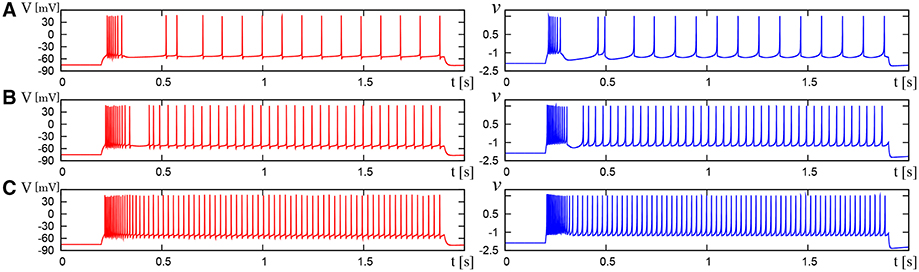

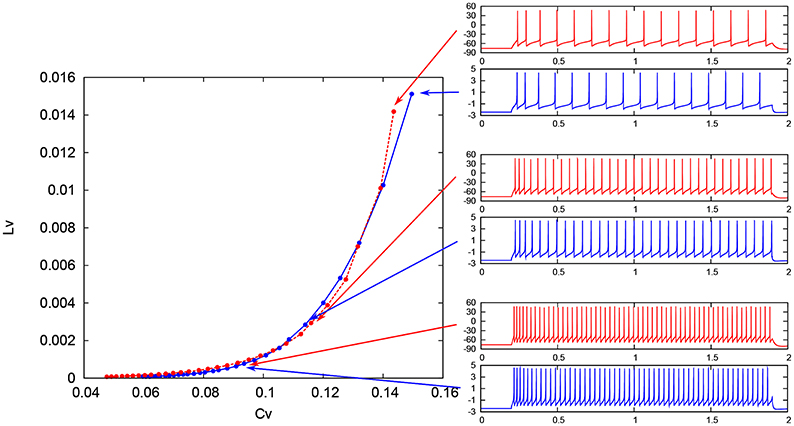

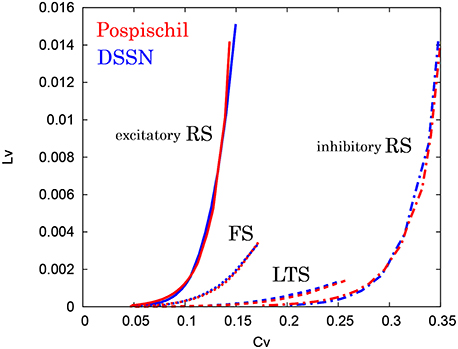

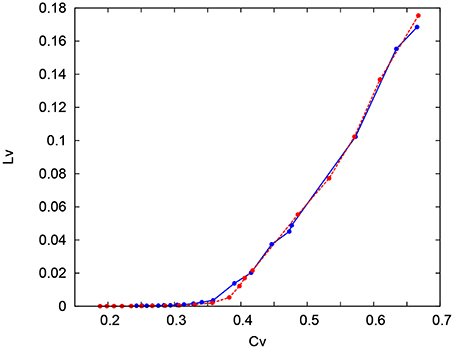

3.2. CV − LV

To evaluate quantitatively our DSSN models, we adopted the coefficient of variation (CV) and local variation (LV) (Shinomoto et al., 2003), because the spike frequency cannot capture the spiking characteristics that changes over time. These statistics were developed by Shinomoto et al. to classify the spike sequences in the cortical areas of awake monkeys (Shinomoto et al., 2003), which have been used at various situations (Koyama and Shinomoto, 2005; Miura et al., 2006; Shinomoto et al., 2009). They are defined as follows:

where Ti denotes the ith interspike interval, is the average of Ti, and n is the number of spikes in the sequence. The coefficient 3 in Equation (21) is chosen so that the expectation value of LV in the Poisson spike sequence equals one. The CV is the standard deviation divided by the mean spike interval, and LV becomes large when the duration of the spike intervals locally fluctuates. Both of CV and LV are zero for a regular spike sequence with constant Ti. We measured the CV and LV of the spike sequences generated by Pospischils' and our DSSN models while varying the strength of the step input. As shown in the Figure 12, the results are compared by their plot on the CV − LV plane. The CV − LV characteristics of the Pospischils' and the DSSN models in each neuron class are plotted in Figures 13, 14. We confirmed that both neuron models have similar statistical properties in each neuron class.

Figure 12. Spike sequences and their representation in the CV − LV plane. The LV vs. CV for spike sequences generated by the Pospischil (red) and the DSSN (blue) models in the excitatory RS mode in response to various magnitudes of step input.

Figure 13. CV − LV characteristics in the RS, FS, and LTS classes. The CV − LV characteristics of the Pospischil (red) and the DSSN (blue) models in the excitatory and inhibitory RS, FS, and LTS classes.

Figure 14. CV − LV characteristics in the IB classes. The CV − LV characteristics of the Pospischil (red) and DSSN (blue) model.

4. Conclusion

In this paper, we expanded the DSSN model so that it can support the RS, FS, LTS, and IB classes, according to the following steps. Firstly, we reduced the number of variables of the Pospischils' ionic-conductance model utilizing the Keplers' method. The reduced models contained three variables for the RS, FS, and LTS classes and four variables for the IB class. We then elucidated their mathematical structures, including their structures on the phase portraits. The FS class is generically considered to exhibit a Hopf bifurcation; however, it yielded a saddle-node bifurcation in the Pospischils' model. Secondly, we determined the appropriate parameter sets that produce the same mathematical structures in the DSSN model as those in the reduced models. To support the IB class, the four-variable DSSN was developed by adding a slowest variable. It dynamically tunes the structure of the fast system. If ϕ0, ϕ1, and ϕ2 are equal to ϕ, the four-variable DSSN model is compatible with the three-variable one. Finally, it was confirmed that the DSSN models behave very similarly to the Pospischils' models in response to several magnitudes of step input. The similarity was quantitatively confirmed by measuring the CV and LV statistics of the spike sequences, and confirming that both neuron models have the same statistical properties in each neuron class. The model is solved by Euler's method (Δt = 0.0001[s]), and is realized by only one multiplier that consumes significant digital circuit resources.

The DSSN model will consume more circuit resources than the IZH model because it has more variables, while the number of multiplication per a numerical integration step is the same. But it captures more aspects of the neuronal activity because it is not an I& F-based model. As is apparent from the equations, the implementation cost of the DSSN model is far lower than the ionic-conductance models. Circuit implementation of this model will be reported in our future publication. In software simulation, the computational cost of the DSSN mode is much lower than that of the ionic-conductance models, and comparable with that of the IZH per a numerical integration step. We conducted simulation of two million spikes, the DSSN and IZH models in the RS mode consumed 18.51 and 2.76 s, respectively. The DSSN model required over five times longer calculation, because the step of the DSSN model (0.0001[s]) is five times smaller than that of the IZH model (0.0005[s]). If the fixed point operation is used, it will be simulated faster. The DSSN model may be also useful for computer simulation in which more realistic model than I&F-based models are required.

By the expansion in this work, the DSSN model supports the RS, FS, LTS, and IB classes as well as the Class I and II in the Hodgkin's classification, Class I*, square-wave bursting, elliptic bursting. We expect it can be a basis for silicon- and software- based spiking neuronal networks that capture the dynamics in the nervous system approximately, which can contribute to the neuroscience from the viewpoint of analysis by construction and the neuromorphic engineering. In this work, we tuned the parameters manually. We will work on an auto-fitting method for the DSSN model with an error function based on the mathematical structure.

Author Contributions

TN performed the study and TK supervised it.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

This work was supported by JSPS Grant-in-Aid for scientific Exploratory Research Number 25240045.

Supplementary Material

The Supplementary Material for this article can be found online at: http://journal.frontiersin.org/article/10.3389/fnins.2016.00181

References

Alle, H., and Geiger, J. R. P. (2006). Combined analog and action potential coding in hippocampal mossy fibers. Science 311, 1290–1293. doi: 10.1126/science.1119055

Brette, R., and Gerstner, W. (2005). Adaptive exponential integrate-and-fire model as an effective description of neuronal activity. J. Neurophysiol. 94, 3637–3642. doi: 10.1152/jn.00686.2005

Brink, S., Nease, S., and Hasler, P. E. (2013). Computing with networks of spiking neurons on a biophysically motivated floating-gate based neuromorphic integrated circuit. Neural Netw. 45, 39–49. doi: 10.1016/j.neunet.2013.02.011

Connors, B. W., and Gutnick, M. J. (1990). Intrinsic firing patterns of diverse neocortical neurons. Trends Neurosci. 13, 99–104.

FitzHugh, R. (1961). Impulses and physiological states in theoretical models of nerve membrane. Biophys. J. 1, 445–466.

Fourcaud-Trocmé, N., Hansel, D., van Vreeswijk, C., and Brunel, N. (2003). How spike generation mechanisms determine the neuronal response to fluctuating inputs. J. Neurosci. 23, 11628–11640.

Fujii, H., and Tsuda, I. (2004). “Itinerant dynamics of class I* neurons coupled by gap junctions,” in Computational Neuroscience: Cortical Dynamics, Vol. 3146, eds P. Érdi, A. Esposito, M. Marinaro, and S. Scarpetta (Berlin; Heidelberg: Springer), 140–160.

Gupta, A., Wang, Y., and Markram, H. (2000). Organizing principles for a diversity of gabaergic interneurons and synapses in the neocortex. Science 287, 273. doi: 10.1126/science.287.5451.273

Hindmarsh, J. L., and Rose, R. M. (1984). A model of neuronal bursting using tree coupled first order differential equations. Philos. Trans. R. Soc. Lond. B221, 87–102.

Hodgkin, A. L. (1948). The local electric changes associated with repetitive action in a non-medullated axon. J. Physiol. 107, 165–181.

Kepler, T. B., Abbott, L. F., and Marder, E. (1992). Reduction of conductance-based neuron models. Biol. Cybern. 66, 381–387.

Kobayashi, W., Kohno, T., and Aihara, K. (2011). “3-variable digital spiking silicon neuron,” in Proceedings of the 24th Workshop on Circuit and Systems (in Japanese) (Hyogo), 1–5.

Kohno, T., and Aihara, K. (2007). “Digital spiking silicon neuron: concept and behaviors in GJ-coupled network,” in Proceedings of International Symposium on Artificial Life and Robotics (Beppu).

Kohno, T., and Aihara, K. (2014). “A qualitative-modeling-based low-power silicon nerve membrane,” in Electronics, Circuits and Systems (ICECS), 2014 21st IEEE International Conference on (Kitakyushu), 199–202.

Koyama, S., and Shinomoto, S. (2005). Empirical bayes interpretations of random point events. J. Phys. A Math. Gen. 38, L531–L537. doi: 10.1088/0305-4470/38/29/l04

Li, J., Katori, Y., and Kohno, T. (2012). An FPGA-based silicon neuronal network with selectable excitability silicon neurons. Front. Neurosci. 6:183. doi: 10.3389/fnins.2012.00183

Li, J., Katori, Y., and Kohno, T. (2013). “Hebbian learning in FPGA silicon neuronal network,” in The 1st IEEE/IIAE International Conference on Intelligent Systems and Image Processing (Kitakyushu).

Llinas, R. R. (1988). The intrinsic electrophysiological properties of mammalian neurons: insights into central nervous system function. Science 23, 1654–1664.

Mandloi, N., Bartolozzi, C., and Indiveri, G. (2014). “Smart motion sensing for autonomous robots,” in Biomedical Circuits and Systems Conference (BioCAS) (Atlanta, GA: IEEE), 520–523.

Merolla, P., Arthur, J., Alvarez-Icaza, R., Cassidy, A., Sawada, J., Akopyan, F., et al. (2014). A million spiking-neuron integrated circuit with a scalable communication network and interface. Science 345, 668–673. doi: 10.1126/science.1254642

Miura, K., Okada, M., and Amari, S.-I. (2006). Estimating spiking irregularities under changing environments. Neural Comput. 18, 2359–2386. doi: 10.1162/neco.2006.18.10.2359

Nagumo, J. S., Arimoto, S., and Yoshizawa, S. (1962). An active pulse transmission line simulating nerve axon. Proc. IRE 50, 2061–2071.

Nanami, T., and Kohno, T. (2015). Cortex and thalamic neuron models for digital arithmetic circuit. Seisan Kenkyu 67, 297–300. doi: 10.11188/seisankenkyu.67.297

Osawa, Y., and Kohno, T. (2015). “Associative memory with class I and II Izhikevich model,” in The 2015 International Conference on Artificial Life and Robotics (ICAROB 2015) (Oita).

Pospischil, M., Toledo-Rodriguez, M., Monier, C., Piwkowska, Z., Bal, T., Fregnac, Y., et al. (2008). Minimal hodgkin-huxley type models for different classes of cortical and thalamic neurons. Biol. Cybern. 99, 427–441. doi: 10.1007/s00422-008-0263-8

Shinomoto, S., Kim, H., Shimokawa, T., Matsuno, N., Funahashi, S., Shima, K., et al. (2009). Relating neuronal firing patterns to functional differentiation of cerebral cortex. PLoS Comput. Biol. 5:e1000433. doi: 10.1371/journal.pcbi.1000433

Keywords: digital silicon neuronal network, qualitative neuron model, nonlinear dynamics, cerebral cortex, thalamus

Citation: Nanami T and Kohno T (2016) Simple Cortical and Thalamic Neuron Models for Digital Arithmetic Circuit Implementation. Front. Neurosci. 10:181. doi: 10.3389/fnins.2016.00181

Received: 18 January 2016; Accepted: 08 April 2016;

Published: 13 May 2016.

Edited by:

Tim Pearce, University of Leicester, UKReviewed by:

Theodore Yu, Texas Instruments Inc., USASiddharth Joshi, University of California, San Diego, USA

Copyright © 2016 Nanami and Kohno. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) or licensor are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Takuya Nanami, bmFuYW1pQHNhdC50LnUtdG9reW8uYWMuanA=

Takuya Nanami

Takuya Nanami Takashi Kohno

Takashi Kohno