Abstract

Integrated Information Theory is one of the leading models of consciousness. It aims to describe both the quality and quantity of the conscious experience of a physical system, such as the brain, in a particular state. In this contribution, we propound the mathematical structure of the theory, separating the essentials from auxiliary formal tools. We provide a definition of a generalized IIT which has IIT 3.0 of Tononi et al., as well as the Quantum IIT introduced by Zanardi et al. as special cases. This provides an axiomatic definition of the theory which may serve as the starting point for future formal investigations and as an introduction suitable for researchers with a formal background.

1 Introduction

Integrated Information Theory (IIT), developed by Giulio Tononi and collaborators [5, 45–47], has emerged as one of the leading scientific theories of consciousness. At the heart of the latest version of the theory [19, 25, 26, 31, 40] is an algorithm which, based on the level of integration of the internal functional relationships of a physical system in a given state, aims to determine both the quality and quantity (‘ value’) of its conscious experience.

While promising in itself [12, 43], the mathematical formulation of the theory is not satisfying to date. The presentation in terms of examples and accompanying explanation veils the essential mathematical structure of the theory and impedes philosophical and scientific analysis. In addition, the current definition of the theory can only be applied to comparably simple classical physical systems [1], which is problematic if the theory is taken to be a fundamental theory of consciousness, and should eventually be reconciled with our present theories of physics.

To resolve these problems, we examine the essentials of the IIT algorithm and formally define a generalized notion of Integrated Information Theory. This notion captures the inherent mathematical structure of IIT and offers a rigorous mathematical definition of the theory which has ‘classical’ IIT 3.0 of Tononi et al. [25, 26, 31] as well as the more recently introduced Quantum Integrated Information Theory of Zanardi, Tomka and Venuti [50] as special cases. In addition, this generalization allows us to extend classical IIT, freeing it from a number of simplifying assumptions identified in [3]. Our results are summarised in Figure 1.

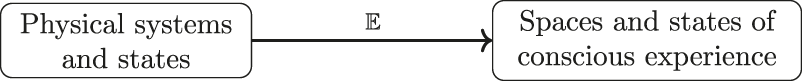

FIGURE 1

An Integrated Information Theory specifies for every system in a particular state its conscious experience, described formally as an element of an experience space. In our formalization, this is a map from the system class Sys into a class Exp of experience spaces, which, first, sends each system S to its space of possible experiences , and, second, sends each state to the actual experience the system is having when in that space, The definition of this map in terms of axiomatic descriptions of physical systems, experience spaces and further structure used in classical IIT is given in the first half of this paper.

In the associated article [44] we show more generally how the main notions of IIT, including causation and integration, can be treated, and an IIT defined, starting from any suitable theory of physical systems and processes described in terms of category theory. Restricting to classical or quantum process then yields each of the above as special cases. This treatment makes IIT applicable to a large class of physical systems and helps overcome the current restrictions.

Our definition of IIT may serve as the starting point for further mathematical analysis of IIT, in particular if related to category theory [30, 49]. It also provides a simplification and mathematical clarification of the IIT algorithm which extends the technical analysis of the theory [1, 41, 42] and may contribute to its ongoing critical discussion [2, 4, 8, 23, 27, 28, 33]. The concise presentation of IIT in this article should also help to make IIT more easily accessible for mathematicians, physicists and other researchers with a strongly formal background.

This work is concerned with the most recent version of IIT as proposed in [25, 26, 31, 40] and similar papers quoted below. Thus our constructions recover the specific theory of consciousness referred to as IIT 3.0 or IIT 3.x, which we will call classical IIT in what follows. Earlier proposals by Tononi et al. that also aim to explicate the general idea of an essential connection between consciousness and integrated information constitute alternative theories of consciousness which we do not study here. A yet different approach would be to take the term ‘Integrated Information Theory’ to refer to the general idea of associating conscious experience with some pre-theoretic notion of integrated information, and to explore the different ways that this notion could be defined in formal terms [4, 27, 28, 37].

Relation to Other Work

This work develops a thorough mathematical perspective of one of the promising contemporary theories of consciousness. As such it is part of a number of recent contributions which seek to explore the role and prospects of mathematical theories of consciousness [11, 15, 18, 30, 49], to help overcome problems of existing models [17, 18, 34] and to eventually develop new proposals [6, 13, 16, 20, 22, 29, 39].

1.1 Structure of Article

We begin by introducing the necessary ingredients of a generalised Integrated Information Theory in Sections 2–4, namely physical systems, experience spaces and cause-effect repertoires. Our approach is axiomatic in that we state only the precise formal structure which is necessary to apply the IIT algorithm. We neither motivate nor criticize these structures as necessary or suitable to model consciousness. Our goal is simply to recover IIT 3.0. In Section 5, we introduce a simple formal tool which allows us to present the definition of the algorithm of an IIT in a concise form in Sections 6 and 7. Finally, in Section 8, we summarise the full definition of such a theory. The result is the definition of a generalized IIT. We call any application of this definition ‘an IIT’.

Following this we give several examples including IIT 3.0 in Section 9 and Quantum IIT in Section 10. In Section 11 we discuss how our formulation allows one to extend classical IIT in several fundamental ways, before discussing further modifications to our approach and other future work in Section 12. Finally, the appendix includes a detailed explanation of how our generalization of IIT coincides with its usual presentation in the case of classical IIT.

2 Systems

The first step in defining an Integrated Information Theory (IIT) is to specify a class of physical systems to be studied. Each element is interpreted as a model of one particular physical system. In order to apply the IIT algorithm, it is only necessary that each element S come with the following features.

Definition 1.

A

system classis a class each of whose elements

S, called

systems, come with the following data:

- 1.

A set of states;

- 2.

for every , a set of subsystems and for each an induced state ;

- 3.

a set of decompositions, with a given trivial decomposition;

- 4.

for each a corresponding cut system and for each state a corresponding cut state.

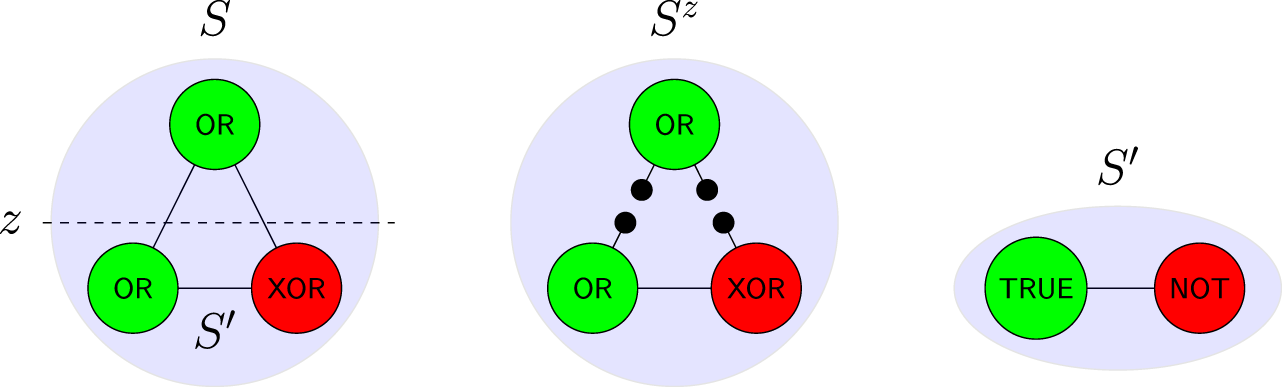

FIGURE 2

As an example of Definition 1 similar to IIT 3.0, consider simple systems given by sets of nodes (or ‘elements’), with a state assigning each node the state ‘on’ (depicted green) or ‘off’ (red). Each system comes with a time evolution shown by labelling each node with how its state in the next time-step depends on the states of the others. Decompositions of a system S correspond to binary partition of the nodes, such as z above. The cut system is given by modifying the time evolution of S so that the two halves do not interact; in this case all connections between the halves are replaced by sources of noise which send ‘on’ or ‘off’ with equal likelihood, depicted as black dots above. Given a current state s of S, any subset of the nodes (such as those below the dotted line) determines a subsystem , with time evolution obtained from that of S by fixing the nodes in (here, the upper node) to be in the state specified by s. Note that while in this example any subsystem (subset of S) determines a decomposition (partition of S) we do not require such a relationship in general.

3 Experience

An IIT aims to specify for each system in a particular state its conscious experience. As such, it will require a mathematical model of such experiences. Examining classical IIT, we find the following basic features of the final experiential states it describes which are needed for its algorithm.

Firstly, each experience e should crucially come with an intensity, given by a number in the non-negative reals (including zero). This intensity will finally correspond to the overall intensity of experience, usually denoted by . Next, in order to compare experiences, we require a notion of distance d(e,e′) between any pair of experiences e,e′. Finally, the algorithm will require us to be able to rescale any given experience e to have any given intensity. Mathematically, this is most easily encoded by letting us multiply any experience e by any number . In summary, a minimal model of experience in a generalized IIT is the following.

Definition 2.

An

experience spaceis a set

Ewith:

- 1.

An intensity function

- 2.

A distance function

- 3.

A scalar multiplication, denoted , satisfying

for all and .

Example 3. Any metric space may be extended to an experience space in various ways. E.g., one can define , and define the distance asThis is the definition used in classical IIT (cf. Section 9 and Appendix A). An important operation on experience spaces is taking their product.

Definition 4. For experience spaces E and F, we define the product to be the space with distanceintensity and scalar multiplication . This generalizes to any finite product of experience spaces.

4 Repertoires

In order to define the experience space and individual experiences of a system S, an IIT utilizes basic building blocks called ‘repertoires’, which we will now define. Next to the specification of a system class, this is the essential data necessary for the IIT algorithm to be applied.

Each repertoire describes a way of ‘decomposing’ experiences, in the following sense. Let D denote any set with a distinguished element 1, for example the set of decompositions of a system S, where the distinguished element is the trivial decomposition .

Definition 5. Let e be an element of an experience space E. A decomposition of e over D is a mapping with .In more detail, a repertoire specifies a proto-experience for every pair of subsystems and describes how this experience changes if the subsystems are decomposed. This allows one to assess how integrated the system is with respect to a particular repertoire. Two repertoires are necessary for the IIT algorithm to be applied, together called the cause-effect repertoire.For subsystems , define . This set describes the decomposition of both subsystems simultaneously. It has a distinguished element .

Definition 6. A cause-effect repertoire at S is given by a choice of experience space , called the space of proto-experiences, and for each and , a pair of elementsand for each of them a decomposition over .Examples of cause-effect repertoires will be given in Sections 9 and 10. A general definition in terms of process theories is given in [44]. For the IIT algorithm, a cause-effect repertoire needs to be specified for every system S, as in the following definition.

Definition 7. A cause-effect structure is a specification of a cause-effect repertoire for every such thatThe names ‘cause’ and ‘effect’ highlight that the definitions of and in classical and Quantum IIT describe the causal dynamics of the system. They are intended to capture the manner in which the ‘current’ state s of the system, when restricted to M, constrains the ‘previous’ or ‘next’ state of P, respectively.

5 Integration

We have now introduced all of the data required to define an IIT; namely, a system class along with a cause-effect structure. From this, we will give an algorithm aiming to specify the conscious experience of a system. Before proceeding to do so, we introduce a conceptual short-cut which allows the algorithm to be stated in a concise form. This captures the core ingredient of an IIT, namely the computation of how integrated an entity is.

Definition 8. Let E be an experience space and e an element with a decomposition over some set D. The integration level of e relative to this decomposition isHere, d denotes the distance function of E, and the minimum is taken over all elements of D besides 1. The integration scaling of e is then the element of E defined bywhere denotes the normalization of e, defined asFinally, the integration scaling of a pair of such elements is the pairwhere is the minimum of their integration levels.We will also need to consider indexed collections of decomposable elements. Let S be a system in a state and assume that for every an element of some experience space with a decomposition over some is given. We call a collection of decomposable elements, and denote it as .

Definition 9. The core of the collection is the subsystem for which is maximal.1 The core integration scaling of the collection is . The core integration scaling of a pair of collections is , where are the cores of and , respectively.

6 Constructions: Mechanism Level

Let be a physical system whose experience in a state is to be determined. The first level of the algorithm involves fixing some subsystem , referred to as a ‘mechanism’, and associating to it an object called its ‘concept’ which belongs to the concept space

For every choice of , called a ‘purview’, the repertoire values and are elements of with given decompositions over . Fixing M, they provide elements with decompositions over Sub(S) given by

The concept of M is then defined as the core integration scaling of this pair of collections,

It is an element of . Unraveling our definitions, the concept thus consists of the values of the cause and effect repertoires at their respective ‘core’ purviews , i.e. those which make them ‘most integrated’. These values and are then each rescaled to have intensity given by the minima of their two integration levels.

7 Constructions: System Level

The second level of the algorithm specifies the experience of system S in state s. To this end, all concepts of a system are collected to form its Q-shape, defined as

This Is an Element of the Spacewhere , which is finite and independent of the state s according to our assumptions. We can also define a Q-shape for any cut of S. Let be a decomposition, the corresponding cut system and be the corresponding cut state. We defineBecause of Eq. 4, and since the number of subsystems remains the same when cutting, is also an element of . This gives a mapwhich is a decomposition of over . Considering this map for every subsystem of S gives a collection of decompositions defined asThis is the system level-object of relevance and is what specifies the experience of a system according to IIT.

Definition 10. The experience of system S in the state isThe definition implies that , where is the core of the collection , called the major complex. It describes which part of system S is actually conscious. In most cases there will be a natural embedding for a subsystem M of S, allowing us to view as an element of itself. Assuming this embedding to exist allows us to define an Integrated Information Theory concisely in the next section.

8 Integrated Information Theories

We can now summarize all that we have said about IITs.

Definition 11. An Integrated Information Theory is determined as follows. The data of the theory is a system class along with a cause-effect structure. The theory then gives a mappinginto the class of all experience spaces, sending each system S to its space of experiences defined in Eq. 12, and a mappingwhich determines the experience of the system when in a state s, defined in Eq. 14.The quantity of the system’s experience is given byand the quality of the system’s experience is given by the normalized experience . The experience is “located” in the core of the collection , called the major complex, which is a subsystem of S.In the next sections we specify the data of several example IITs.

9 Classical IIT

In this section we show how IIT 3.0 [25, 26, 31, 48] fits in into the framework developed here. A detailed explanation of how our earlier algorithm fits with the usual presentation of IIT is given in Appendix A. In [44] we give an alternative categorical presentation of the theory.

9.1 Systems

We first describe the system class underlying classical IIT. Physical systems S are considered to be built up of several components , called elements. Each element comes with a finite set of states , equipped with a metric. A state of S is given by specifying a state of each element, so thatWe define a metric d on by summing over the metrics of the element state spaces and denote the collection of probability distributions over by . Note that we may view as a subset of by identifying any with its Dirac distribution , which is why we abbreviate by s occasionally in what follows.

Additionally, each system comes with a probabilistic (discrete) time evolution operator or transition probability matrix, sending each to a probabilistic state . Equivalently it may be described as a convex-linear mapFurthermore, the evolution T is required to satisfy a property called conditional independence, which we define shortly.

The class consists of all possible tuples of this kind, with the trivial system I having only a single element with a single state and trivial time evolution.

9.2 Conditioning and Marginalizing

In what follows, we will need to consider two operations on the map T. Let M be any subset of the elements of a system and its complement. We again denote by the Cartesian product of the states of all elements in M, and by the probability distributions on . For any , we define the conditioning [26] of T on p as the mapwhere denotes the multiplication of these probability distributions to give a probability distribution over S. Next, we define marginalisation over M as the mapsuch that for each and we have

In particular for any map T as above we call the marginal of T over M and we write for each . Conditional independence of T may now be defined as the requirement thatwhere the right-hand side is again a probability distribution over .

9.3 Subsystems, Decompositions and Cuts

Let a system S in a state be given. The subsystems are characterized by subsets of the elements that constitute S. For any subset of the elements of S, the corresponding subsystem is also denoted M and is again given by the product of the , with time evolutionwhere is the restriction of the state s to and denotes the conditioning on the Dirac distribution .

The decomposition set of a system S consists of all partitions of the set N of elements of S into two disjoint sets M and . We denote such a partition by . The trivial decomposition 1 is the pair .

For any decomposition the corresponding cut system is the same as S but with a new time evolution . Using conditional independence, it may be defined for each aswhere denotes the uniform distribution on . This is interpreted in the graph depiction as removing all those edges from the graph whose source is in and whose target is in M. The corresponding input of the target element is replaced by noise, i.e. the uniform probability distribution over the source element.

9.4 Proto-Experiences

For each system S, the first Wasserstein metric (or ‘Earth Mover’s Distance’) makes a metric space . The space of proto-experiences of classical IIT iswhere is defined in Example 3. Thus elements of are of the form for some and , with distance function, intensity and scalar multiplication as defined in the example.

9.5 Repertoires

It remains to define the cause-effect repertoires. Fixing a state s of S, the first step will be to define maps and which send any choice of to an element of . These should describe the way in which the current state of M constrains that of P in the next or previous time-steps. We begin with the effect repertoire. For a single element purview we definewhere denotes (the Dirac distribution of) the restriction of the state s to M. While it is natural to use the same definition for arbitrary purviews, IIT 3.0 in fact uses another definition based on consideration of ‘virtual elements’ [25, 26, 48], which also makes calculations more efficient (Supplementary Material S1 of [26]). For general purviews P, this definition istaking the product over all elements in the purview P. Next, for the cause repertoire, for a single element mechanism and each , we definewhere λ is the unique normalisation scalar making a valid element of . Here, for clarity, we have indicated evaluation of probability distributions at particular states by square brackets. If the time evolution operator has an inverse , this cause repertoire could be defined similarly to (25) by but classical IIT does not utilize this definition.

For General Mechanisms M, we Then Definewhere the product is over all elements in M and where is again a normalisation constant. We may at last now definewith intensity 1 when viewed as elements of . Here, the dot indicates again the multiplication of probability distributions and denotes the empty mechanism.

The distributions and are called the unconstrained cause and effect repertoires over .

Remark 12. It is in fact possible for the right-hand side of Eq. 28 to be equal to 0 for all for some . In this case we set in .Finally we must specify the decompositions of these elements over . For any partitions of M and of P, we definewhere we have abused notation by equating each subset and of nodes with their induced subsystems of S via the state s. This concludes all data necessary to define classical IIT. If the generalized definition of Section 8 is applied to this data, it yields precisely classical IIT 3.0 defined by Tononi et al. In Appendix A, we explain in detail how our definition of IIT, equipped with this data, maps to the usual presentation of the theory.

10 Quantum IIT

In this section, we consider Quantum IIT defined in [50]. This is also a special case of the definition in terms of process theories we give in [44].

10.1 Systems

Similar to classical IIT, in Quantum IIT systems are conceived as consisting of elements . Here, each element is described by a finite dimensional Hilbert space and the state space of system S is defined in terms of the element Hilbert spaces aswhere describes the positive semidefinite Hermitian operators of unit trace on , i.e. density matrices. The time evolution of the system is again given by a time evolution operator, which here is assumed to be a trace preserving (and in [50] typically unital) completely positive map

10.2 Subsystems, Decompositions and Cuts

Subsystems are again defined to consist of subsets M of the elements of the system, with corresponding Hilbert space . The time-evolution is defined aswhere and denotes the trace over the Hilbert space .

Decompositions are also defined via partitions of the set of elements N into two disjoint subsets D and whose union is N. For any such decomposition, the cut system is defined to have the same set of states but time evolutionwhere is the maximally mixed state on , i.e. .

10.3 Proto-Experiences

For any , the trace distance defined asturns into a metric space. The space of proto-experiences is defined based on this metric space as described in Example 3,

10.4 Repertoires

We finally come to the definition of the cause-effect repertoire. Unlike classical IIT, the definition in [50] does not consider virtual elements. Let a system S in state be given. As in Section 9.5, we utilize maps and which here map subsystems M and P to . They are defined aswhere is the Hermitian adjoint of . We then defineeach with intensity 1, where again denotes the empty mechanism. Similarly, decompositions of these elements over are defined asagain with intensity 1, where and .

11 Extensions of Classical IIT

The physical systems to which IIT 3.0 may be applied are limited in a number of ways: they must have a discrete time-evolution, satisfy Markovian dynamics and exhibit a discrete set of states [3]. Since many physical systems do not satisfy these requirements, if IIT is to be taken as a fundamental theory about reality, it must be extended to overcome these limitations.

In this section, we show how IIT can be redefined to cope with continuous time, non-Markovian dynamics and non-compact state spaces, by a redefinition of the maps Eqs. 26 and 28 and, in the case of non-compact state spaces, a slightly different choice of Eq. 24, while leaving all of the remaining structure as it is. While we do not think that our particular definitions are satisfying as a general definition of IIT, these results show that the disentanglement of the essential mathematical structure of IIT from auxiliary tools (the particular definition of cause-effect repertoires used to date) can help to overcome fundamental mathematical or conceptual problems.

In Section 11.3, we also explain which solution to the problem of non-canonical metrics is suggested by our formalism.

11.1 Discrete Time and Markovian Dynamics

In order to avoid the requirement of a discrete time and Markovian dynamics, instead of working with the time evolution operator Eq. 18, we define the cause- and effect repertoires in reference to a given trajectory of a physical state . The resulting definitions can be applied independently of whether trajectories are being determined by Markovian dynamics in a particular application, or not.

Let denote the time parameter of a physical system. If time is discrete, is an ordered set. If time is continuous, is an interval of reals. For simplicity, we assume . In the deterministic case, a trajectory of a state is simply a curve in , which we denote by with . For probabilistic systems (such as neural networks with a probabilistic update rule), it is a curve of probability distributions , which we denote by , with equal to the Dirac distribution . The latter case includes the former, again via Dirac distributions.

In what follows, we utilize the fact that in physics, state spaces are defined such that the dynamical laws of a system allow to determine the trajectory of each state. Thus for every , there is a trajectory which describes the time evolution of s.

The idea behind the following is to define, for every , a trajectory in which quantifies how much the state of the purview P at time t is being constrained by imposing the state s at time on the mechanism M. This gives an alternative definition of the maps (26) and (28), while the rest of classical IIT can be applied as before.

Let now and be given. We first consider the time evolution of the state , where denotes the restriction of s to as before and where is an arbitrary state of . We denote the time evolution of this state by . Marginalizing this distribution over gives a distribution on the states of P, which we denote as . Finally, we average over v using the uniform distribution . Because state spaces are finite in classical IIT, this averaging can be defined pointwise for every bywhere κ is the unique normalization constant which ensures that .

The probability distribution describes how much the state of the purview P at time t is being constrained by imposing the state s on M at time as desired. Thus, for every , we have obtained a mapping of two subsystems to an element of which has the same interpretation as the map Eq. 26 considered in classical IIT. If deemed necessary, virtual elements could be introduced just as in Eqs 27 and 29.

So far, our construction can be applied for any time . It remains to fix this freedom in the choice of time. For the discrete case, the obvious choice is to define Eqs 27 and 29 in terms of neighboring time-steps. For the continuous case, several choices exist. E.g., one could consider the positive and negative semi-derivatives of at , in case they exist, or add an arbitrary but fixed time scale to define the cause-effect repertoires in terms of . However, the most reasonable choice is in our eyes to work with limits, in case they exist, by definingto replace Eq. 27 andto replace Eq. 29. The remainder of the definitions of classical IIT can then be applied as before.

11.2 Discrete Set of States

The problem with applying the definitions of classical IIT to systems with continuous state spaces (e.g., neuron membrane potentials [3]) is that in certain cases, uniform probability distributions do not exist. E.g., if the state space of a system S consists of the positive real numbers , no uniform distribution can be defined which has a finite total volume, so that no uniform probability distribution exists.

It is important to note that this problem is less universal than one might think. E.g., if the state space of the system is a closed and bounded subset of , e.g. an interval , a uniform probability distribution can be defined using measure theory, which is in fact the natural mathematical language for probabilities and random variables. Nevertheless, the observation in [3] is correct that if a system has a non-compact continuous state space, might not exist, which can be considered a problem w.r.t. the above-mentioned working hypothesis.

This problem can be resolved for all well-understood physical systems by replacing the uniform probability distribution by some other mathematical entity which allows to define a notion of averaging states. For all relevant classical systems with non-compact state spaces (whether continuous or not), there exists a canonical uniform measure which allows to define the cause-effect repertoires similar to the last section, as we now explain. Examples for this canonical uniform measure are the Lebesgue measure for subsets of [35], or the Haar measure for locally compact topological groups [36] such as Lie-groups.

In what follows, we explain how the construction of the last section needs to be modified in order to be applied to this case. In all relevant classical physical theories, is a metric space in which every probability measure is a Radon measure, in particular locally finite, and where a canonical locally finite uniform measure exists. We define to be the space of probability measures whose first moment is finite. For these, the first Wasserstein metric (or ‘Earth Mover’s Distance’) exists, so that is a metric space.

As before, the dynamical laws of the physical systems determine for every state a time evolution , which here is an element of . Integration of this probability measure over yields the marginal probability measure . As in the last section, we may consider these probability measures for the state , where . Since is not normalizable, we cannot define as in (32), for the result might be infinite.

Using the fact that is locally finite, we may, however, define a somewhat weaker equivalent. To this end, we note that for every state , the local finiteness of implies that there is a neighborhood in for which is finite. We choose a sufficiently large neighborhood which satisfies this condition. Assuming to be a measurable function in v, for every A in the σ-algebra of , we can thus definewhich is a finite quantity. The so defined is non-negative, vanishes for and satisfies countable additivity. Hence it is a measure on as desired, but might not be normalizable.

All that remains for this to give a cause-effect repertoire as in the last section, is to make sure that any measure (normalized or not) is an element of . The theory is flexible enough to do this by setting if either μ or ν is not in , and otherwise. Here, denotes the total variation of the signed measure , and is the volume thereof [10, 32]. While not a metric space any more, the tuple , with denoting all measures on St(S), can still be turned into a space of proto-experiences as in Example 3. This givesand finally allows one to construct cause-effect repertoires as in the last section.

11.3 Non-canonical Metrics

Another criticism of IIT’s mathematical structure mentioned [3] is that the metrics used in IIT’s algorithm are, to a certain extend, chosen arbitrarily. Different choices indeed imply different results of the algorithm, both concerning the quantity and quality of conscious experience, which can be considered problematic.

The resolution of this problem is, however, not so much a technical as a conceptual or philosophical task, for what is needed to resolve this issue is a justification of why a particular metric should be used. Various justifications are conceivable, e.g. identification of desired behavior of the algorithm when applied to simple systems. When considering our mathematical reconstruction of the theory, the following natural justification offers itself.

Implicit in our definition of the theory as a map from systems to experience spaces is the idea that the mathematical structure of experiences spaces (Definition 2) reflects the phenomenological structure of experience. This is so, most crucially, for the distance function d, which describes how similar two elements of experience spaces are. Since every element of an experience space corresponds to a conscious experience, it is naturally to demand that the similarly of the two mathematical objects should reflect the similarity of the experiences they describe. Put differently, the distance function d of an experience space should in fact mirror (or “model”) the similarity of conscious experiences as experienced by an experiencing subject.

This suggests that the metrics d used in the IIT algorithm should, ultimately, be defined in terms of the phenomenological structure of similarity of conscious experiences. For the case of color qualia, this is in fact feasible [18, Example 3.18], [21, 38]. In general, the mathematical structure of experience spaces should be intimately tied to the phenomenology of experience, in our eyes.

12 Summary and Outlook

In this article, we have propounded the mathematical structure of Integrated Information Theory. First, we have studied which exact structures the IIT algorithm uses in the mathematical description of physical systems, on the one hand, and in the mathematical description of conscious experience, on the other. Our findings are the basis of definitions of a physical system class and a class of experience spaces, and allowed us to view IIT as a map .

Next, we needed to disentangle the essential mathematics of the theory from auxiliary formal tools used in the contemporary definition. To this end, we have introduced the precise notion of decomposition of elements of an experience space required by the IIT algorithm. The pivotal cause-effect repertoires are examples of decompositions so defined, which allowed us to view any particular choice, e.g. the one of ‘classical’ IIT developed by Tononi et al., or the one of ‘quantum’ IIT recently introduced by Zanardi et al. as data provided to a general IIT algorithm.

The formalization of cause-effect repertoires in terms of decompositions then led us to define the essential ingredients of IIT’s algorithm concisely in terms of integration levels, integration scalings and cores. These definitions describe and unify recurrent mathematical operations in the contemporary presentation, and finally allowed to define IIT completely in terms of a few lines of definition.

Throughout the paper, we have taken great care to make sure our definitions reproduce exactly the contemporary version of IIT 3.0. The result of our work is a mathematically rigorous and general definition of Integrated Information Theory. This definition can be applied to any meaningful notion of systems and cause-effect repertoires, and we have shown that this allows one to overcome most of the mathematical problems of the contemporary definition identified to date in the literature.

We believe that our mathematical reconstruction of the theory can be the basis for refined mathematical and philosophical analysis of IIT. We also hope that this mathematisation may make the theory more amenable to study by mathematicians, physicists, computer scientists and other researchers with a strongly formal background.

12.1 Process Theories

Our generalization of IIT is axiomatic in the sense that we have only included those formal structures in the definition which are necessary for the IIT algorithm to be applied. This ensured that our reconstruction is as general as possible, while still true to IIT 3.0. As a result, several notions used in classical IIT, e.g., system decomposition, subsystems or causation, are merely defined abstractly at first, without any reference to the usual interpretation of these concepts in physics.

In the related article [44], we show that these concepts can be meaningfully defined in any suitable process theory of physics, formulated in the language of symmetric monoidal categories. This approach can describe both classical and Quantum IIT and yields a complete formulation of contemporary IIT in a categorical framework.

12.2 Further Development of IIT

IIT is constantly under development, with new and refined definitions being added every few years. We hope that our mathematical analysis of the theory might help to contribute to this development. For example, the working hypothesis that IIT is a fundamental theory, implies that technical problems of the theory need to be resolved. We have shown that our formalization allows one to address the technical problems mentioned in the literature. However, there are others which we have not addressed in this paper.

Most crucially, the IIT algorithm uses a series of maximalization and minimalization operations, unified in the notion of core subsystems in our formalization. In general, there is no guarantee that these operations lead to unique results, neither in classical nor Quantum IIT. Using different cores has major impact on the output of the algorithm, including the value, which is a case of ill-definedness.2

Furthermore, the contemporary definition of IIT as well as our formalization rely on there being a finite number of subsystems of each system, which might not be the case in reality. Our formalisation may be extendable to the infinite case by assuming that every system has a fixed but potentially infinite indexing set , so that each is the image of a mapping , but we have not considered this in detail in this paper.

Finally, concerning more operational questions, it would be desirable to develop the connection to empirical measures such as the Perturbational Complexity Index (PCI) [7, 9] in more detail, as well as to define a controlled approximation of the theory whose calculation is less expensive. Both of these tasks may be achievable by substituting parts of our formalization with simpler mathematical structure.

On the conceptual side of things, it would be desirable to have a more proper understanding of how the mathematical structure of experiences spaces corresponds to the phenomenology of experience, both for the general definition used in our formalization—which comprises the minimal mathematical structure which is required for the IIT algorithm to be applied—and the specific definitions used in classical and Quantum IIT. In particular, it would be desirable to understand how it relates to the important notion of qualia, which is often asserted to have characteristic features such as ineffability, intrinsicality, non-contextuality, transparency or homogeneity [24]. For a first analysis toward this goal, cf [18]. A first proposal to add additional structure to IIT that accounts for relations between elements of consciousness in the case of spatial experiences was recently given in [14].

Statements

Data availability statement

The original contributions presented in the study are included in the article/Supplementary Material, further inquiries can be directed to the corresponding author.

Author contributions

JK and ST conceived the project together and wrote the article together.

Acknowledgments

We would like to thank the organizers and participants of the Workshop on Information Theory and Consciousness at the Center for Mathematical Sciences of the University of Cambridge, of the Modeling Consciousness Workshop in Dorfgastein and of the Models of Consciousness Conference at the Mathematical Institute of the University of Oxford for discussions on this topic. Much of this work was carried out while Sean Tull was under the support of an EPSRC Doctoral Prize at the University of Oxford, from November 2018 to July 2019, and while Johannes Kleiner was under the support of postdoctoral funding at the Institute for Theoretical Physics of the Leibniz University of Hanover. We would like to thank both institutions.

Conflict of interest

Author ST was employed by company Cambridge Quantum Computing Limited.

The remaining author declares that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Footnotes

1.^If the maximum does not exist, we define the core to be the empty system I.

2.^The problem of ‘unique existence’ has been studied extensively in category theory using universal properties and the notion of a limit. Rather than requiring that each come with a metric, it may be possible to alter the IIT algorithm into a well-defined categorical form involving limits to resolve this problem.

References

1.

BarrettAB. An Integration of Integrated Information Theory with Fundamental Physics. Front Psychol(2014)5:63. 10.3389/fpsyg.2014.00063

2.

TimB. On the Axiomatic Foundations of the Integrated Information Theory of Consciousness. Neurosci Conscious (2018). 2018(1) niy007. 10.1093/nc/niy007

3.

BarrettABMedianoPAM. The Phi Measure of Integrated Information Is Not Well-Defined for General Physical Systems. J Conscious Stud (2019). 21:133. 10.1021/acs.jpcb.6b05183.s001

4.

AdamBSethA. Practical Measures of Integrated Information for Time-Series Data. Plos Comput Biol (2011). 7(1):e1001052. 10.1371/journal.pcbi.1001052

5.

BalduzziDTononiG. Integrated Information in Discrete Dynamical Systems: Motivation and Theoretical Framework. Plos Comput Biol (2008). 4(6):e1000091. 10.1371/journal.pcbi.1000091

6.

ChangAYCBiehlMYenYKanaiR. Information Closure Theory of Consciousness. Front Psychol (2020). 11:121. 10.3389/fpsyg.2020.01504

7.

CasarottoSComanducciARosanovaMSarassoSFecchioMNapolitaniMet alStratification of Unresponsive Patients by an Independently Validated Index of Brain Complexity. Ann Neurol (2016). 80(5):718–29. 10.1002/ana.24779

8.

BolyMA. The Problem with Phi: a Critique of Integrated Information Theory. Plos Comput Biol (2015). 11(9):e1004286. 10.1371/journal.pcbi.1004286

9.

CasaliAGGosseriesORosanovaMBolyMSarassoSCasaliKRet alA Theoretically Based Index of Consciousness Independent of Sensory Processing and Behavior. Sci Translat Med (2015). 5:198ra105. 10.1126/scitranslmed.3006294

10.

HalmosPR. Measure TheoryBerlin: Springer (1974).

11.

Hardy.L. Proposal to Use Humans to Switch Settings in a Bell Experiment (2017). arXiv preprint arXiv:1705.04620.

12.

HaunAMOizumiMKovachCKKawasakiHOyaHHowardMAet alContents of consciousness investigated as integrated information in direct human brain recordings. (2016). bioRxiv.

13.

HoffmanDDPrakashC. Objects of Consciousness. Front Psychol (2014). 5:577. 10.3389/fpsyg.2014.00577

14.

HaunATononiG. Why Does Space Feel the Way it Does? towards a Principled Account of Spatial Experience. Entropy (2019). 21(12):1160. 10.3390/e21121160

15.

KentA. Quanta and Qualia. Found Phys (2018). 48(9):1021–37. 10.1007/s10701-018-0193-9

16.

KentA. Toy Models of Top Down Causation. (2019). arXiv preprint arXiv:1909.12739.

17.

KleinerJHoelE. Falsification and Consciousness. Neurosci Consciousness (2021). 2021(1):niab001. 10.1093/nc/niab001

18.

KleinerJ. Mathematical Models of Consciousness. Entropy (2020). 22(6):609. 10.3390/e22060609

19.

KochCMassiminiMBolyMTononiG. Neural Correlates of Consciousness: Progress and Problems. Nat Rev Neurosci (2016). 17(5):307, 21. 10.1038/nrn.2016.22

20.

KremnizerKRanchinA. Integrated Information-Induced Quantum Collapse. Found Phys (2015). 45(8):889–99. 10.1007/s10701-015-9905-6

21.

KuehniR. Color Spaces. Scholarpedia (2010). 5(3):9606. 10.4249/scholarpedia.9606

22.

MasonJWD. Quasi‐conscious Multivariate Systems. Complexity (2016). 21(S1):125–47. 10.1002/cplx.21720

23.

KelvinJ. Interpretation-neutral Integrated Information Theory. J Conscious Stud (2019). 26(1-2):76–106. 10.1007/978-1-4419-9707-4_13

24.

MetzingerT. Grundkurs Philosophie des Geistes, Band 1. Berlin: Phänomenales Bewusstsein (2006).

25.

MarshallWGomez-RamirezJTononiG. Integrated Information and State Differentiation. Front Psychol (2016). 7:926. 10.3389/fpsyg.2016.00926

26.

WilliamGMaynerPMarshallWAlbantakisLFindlayGMarchmanRet alPyPhi: A Toolbox for Integrated Information Theory. Plos Comput Biol (2018). 14(7):e1006343–21. 10.1371/journal.pcbi.1006343

27.

PedroAMRosasFCarhart-HarrisRLSethAAdamB. Beyond Integrated Information: A Taxonomy of Information Dynamics Phenomena. (2019). arXiv preprint arXiv:1909.02297.

28.

PedroAMSethAAdamB. Measuring Integrated Information: Comparison of Candidate Measures in Theory and Simulation. Entropy (2019). 21(1):17. 10.3390/e21010017

29.

MuellerMP. Could the Physical World Be Emergent Instead of Fundamental, and Why Should We Ask?(short Version). (2017). arXiv preprint arXiv:1712.01816.

30.

NorthoffGTsuchiyaNSaigoH. Mathematics and the Brain. A Category Theoretic Approach to Go beyond the Neural Correlates of Consciousness. (2019). bioRxiv.

31.

OizumiMAlbantakisLTononiG. From the Phenomenology to the Mechanisms of Consciousness: Integrated Information Theory 3.0. PLoS Comput Biol (2014). 10(5):e1003588. 10.1371/journal.pcbi.1003588

32.

Encyclopedia of Mathematics. Signed Measure. Berlin: Springer (2013).

33.

AnthonyP. Consciousness as Integrated Information a Provisional Philosophical Critique. J Conscious Stud (2013). 20(1–2):180–206. 10.2307/25470707

34.

PedroR. Proceedings of the Workshop on Combining Viewpoints in Quantum Theory, 19–22. Edinburgh, UK: ICMS (2018).

35.

WalterR. Real and Complex Analysis. London: Tata McGraw-hill education (2006).

36.

SalamonD. Measure and Integration. London: European Mathematical Society (2016). 10.4171/159

37.

SethAAdamBBarnettL. Causal Density and Integrated Information as Measures of Conscious Level. Philos Trans A Math Phys Eng Sci (1952). 369:3748–67. 10.1098/rsta.2011.0079

38.

SharmaGWuWEdulN. Dalal. The CIEDE2000 Color-Difference Formula: Implementation Notes, Supplementary Test Data, and Mathematical Observations. London: COLOR Research and Application (2004).

39.

Miguel SignorelliCWangQKhanI. A Compositional Model of Consciousness Based on Consciousness-Only. (2020). arXiv preprint arXiv:2007.16138.

40.

TononiGBolyMMassiminiMKochC. Integrated Information Theory: from Consciousness to its Physical Substrate. Nat Rev Neurosci (2016). 17(7):450, 61. 10.1038/nrn.2016.44

41.

TegmarkM. Consciousness as a State of Matter. Chaos, Solitons Fractals (2015). 76:238–70. 10.1016/j.chaos.2015.03.014

42.

TegmarkM. Improved Measures of Integrated Information. PLoS Comput Biol (2016). 12(11). 10.1371/journal.pcbi.1005123

43.

TsuchiyaNHaunACohenDOizumiM. Empirical Tests of the Integrated Information Theory of consciousnessThe Return of Consciousness: A New Science on Old Questions. London: Axel and Margaret Ax. son Johnson Foundation (2016). p. 349–74.

44.

TullSKleinerJ. Integrated Information in Process Theories. J Cognit Sci (2021). 22:135–55.

45.

TononiG. An Information Integration Theory of Consciousness. BMC Neurosci (2004). 5(1):42. 10.1186/1471-2202-5-42

46.

TononiG. Consciousness, Information Integration, and the Brain. Prog Brain Res (2005). 150:109–26. 10.1016/s0079-6123(05)50009-8

47.

TononiG. Consciousness as Integrated Information: a Provisional Manifesto. Biol Bull (2008). 215(3):216–42. 10.2307/25470707

48.

TononiG. Integrated Information Theory. Scholarpedia (2015). 10(1):4164. 10.4249/scholarpedia.4164

49.

TsuchiyaNTaguchiSSaigoH. Using Category Theory to Assess the Relationship between Consciousness and Integrated Information Theory. Neurosci Res (2016). 107(1–7):133. 10.1016/j.neures.2015.12.007

50.

ZanardiPTomkaMVenutiLC. Quantum Integrated Information Theory. (2018). arXiv preprint arXiv:1806.01421, 2018 Comparison with Standard Presentation of IIT 3.0.

Appendix A: Comparison with Standard Presentation of IIT 3.0

In Section 9, we have defined the system class and cause-effect repertoires which underlie classical IIT. The goal of this appendix is to explain in detail why applying our definition of the IIT algorithm yields IIT 3.0 defined by Tononi et al. In doing so, we will mainly refer to the terminology used in [25, 26, 31, 48]. We remark that a particularly detailed presentation of the algorithm of the theory, and of how the cause and effect repertoire are calculated, is given in the Supplementary Material S1 of [26].

A.1 Physical Systems

The systems of classical IIT are given in Section 9.1. They are often represented as graphs whose nodes are the elements and edges represent functional dependence, thus describing the time evolution of the system as a whole, which we have taken as primitive in Eq. 18. This is similar to the presentation of the theory in terms of a transition probability functionin [25]. For each probability distribution over , this relates to our time evolution operator T via

A.2 Cause-Effect Repertoires

In contemporary presentations of the theory ([25], p. 14] or [48]), the effect repertoire is defined asandHere, denotes a state of the mechanism M at time t. denotes the complement of the mechanism, denoted in our case as , denotes the state space of the complement, and an element thereof. denotes an element of the purview Z (designated by P in our case), denotes the state space of this element, a state of this element and z a state of the whole purview. denotes the cardinality of the state space of , and equals the number of elements in the purview. Finally, the expression denotes a variant of the so-called “do-operator”. It indicates that the state of the system, here at time t, is to be set to the term in brackets. This is called perturbing the system into the state . The notation then gives the probability of finding the purview element in the state at time given that the system is prepared in the state at time t.

In our notation, the right hand side of Eq. 35 is exactly given by the right-hand side of Eq. 25, i.e. . The system is prepared in a uniform distribution on (described by the sum and prefactor in Eq. 35) and with the restriction of the system state, here denoted by , on M. Subsequently, T is applied to evolve the system to time , and the marginalization throws away all parts of the states except those of the purview element (denoted above as ). In total, Eq. 25 is a probability distribution on the states of the purview element. When evaluating this probability distribution at one particular state of the element, one obtains the same numerical value as Eq. 35. Finally, taking the product in Eq. 36 corresponds exactly to taking the product in Eq. 26.

Similarly, the cause repertoire is defined as ([25], p. 14] or [48])andwhere denotes the state of one element of the mechanism M, with the subscript t indicating that the state is considered at time t. Z again denotes a purview, z is a state of the purview and denotes the state space of the purview, where the subscript indicates that the state is considered at time . K denotes a normalization constant and gives the number of elements in M.

Here, the whole right hand side of Eq. 37 gives the probability of finding the purview in state z at time t − 1 if the system is prepared in state mi,t at time t. In our terminology this same distribution is given by Eq. 27, where λ is the denominator in Eq. 37. Taking the product of these distributions and re-normalising is then precisely Eq. 28.

As a result, the cause and effect repertoire in the sense of [31] correspond precisely in our notation to and , each being distributions over . In (Supplementary Material S1 of [26]), it is explained that these need to be extended by the unconstrained repertoires before being used in the IIT algorithm, which in our formalization is done in Eq. 29, so that the cause-effect repertoires are now distributions over . These are in fact precisely what are called the extended cause and effect repertoires or expansion to full state space of the repertoires in [31].

The behavior of the cause- and effect-repertoires when decomposing a system is described, in our formalism, by decompositions (Definition 5). Hence a decomposition is what is called a parition in the classical formalism. For the case of classical IIT, a decomposition is given precisely by a partition of the set of elements of a system, and the cause-effect repertoires belonging to the decomposition are defined in Eq. 30, which corresponds exactly to the definitionin [25], when expanded to the full state space, and equally so for the effect repertoire.

A.3 Algorithm: Mechanism Level

Next, we explicitly unpack our form of the IIT algorithm to see how it compares in the case of classical IIT with [31]. In our formalism, the integrated information φ of a mechanism M of system S when in state s isdefined in Eq. 10. This definition conjoins several steps in the definition of classical IIT. To explain why it corresponds exactly to classical IIT, we disentangle this definition step by step.

First, consider in Eq. 9. This is, by definition, a decomposition map. The calculation of the integration level of this decomposition map, cf. Eq. 5, amounts to comparing to the cause-effect repertoire associated with every decomposition using the metric of the target space , which for classical IIT is defined in Eq. 24 and Example 3, so that the metric d used for comparison is indeed the Earth Mover’s Distance. Since cause-effect repertoires have, by definition, unit intensity, the factor r in the definition (1) of the metric does not play a role at this stage. Therefore, the integration level of is exactly the integrated cause information, denoted asin [48], where denotes the (induced state of the) mechanism M in this notation, and denotes the purview P. Similarly, the integration level of is exactly the integrated effect information, denoted as

The integration scaling in Eq. 10 simply changes the intensity of an element of to match the integration level, using the scalar multiplication, which is important for the system level definitions. When applied to , this would result in an element of whose intensity is precisely .

Consider now the collections (9) of decomposition maps. Applying Definition 9, the core of is that purview P which gives the decomposition with the highest integration level, i.e. with the highest . This is called the core cause of M, and similarly the core of is called the core effect of M.

Finally, to fully account for Eq. 10, we note that the integration scaling of a pair of decomposition maps rescales both elements to the minimum of the two integration levels. Hence the integration scaling of the pair fixes the scalar value of both elements to be exactly the integrated information, denoted asin [48], where and .

In summary, the following operations are combined in Eq. 10. The core of picks out the core cause and core effect . The core integration scaling subsequently considers the pair , called maximally irreducible cause-effect repertoire, and determines the integration level of each by analysing the behavior with respect to decompositions. Finally, it rescales both to the minimum of the integration levels. Thus it gives exactly what is called in [48]. Using, finally, the definition of the intensity of the product in Definition 4, this implies (39). The concept of M in our formalization is given by the tuplei.e., the pair of maximally irreducible repertoires scaled by . This is equivalent to what is called a concept, or sometimes quale sensu stricto, in classcial IIT [48], and denoted as .

We finally remark that it is also possible in classical IIT that a cause repertoire value vanishes (Remark 12). In our formalization, it would hence be represented by in , so that for all according to (1), which certainly ensures that .

A.4 Algorithm: System Level

We finally explain how the system level definitions correspond to the usual definition of classical IIT.

The Q-shape is the collection of all concepts specified by the mechanisms of a system. Since each concept has intensity given by the corresponding integrated information of the mechanism, this makes what is usually called the conceptual structure or cause-effect structure. In [31], one does not include a concept for any mechanism M with . This manual exclusion is unnecessary in our case because the mathematical structure of experience spaces implies that mechanisms with should be interpreted as having no conscious experience, and the algorithm in fact implies that they have ‘no effect’. Indeed we will now see that they do not contribute to the distances in or any values, and so we do not manually exclude them.

When comparing with the Q-shape Eq. 13 obtained after replacing S by any of its cuts, it is important to note that both are elements of defined in Eq. 12, which is a product of experience spaces. According to Definition 4, the distance function on this product isUsing Definition 3 and the fact that each concept’s intensity is according to the mechanism level definitions, each distance is equal towhere denotes the integrated information of the concept in the original system S, and where the right-hand cause and effect repertoires are those of at its own core causes and effects for M. The factor ensures that the distance used here corresponds precisely to the distance used in [31], there called the extended Earth Mover’s Distance. If the integrated information of a mechanism is non-zero, it follows that as mentioned above, so that this concept does not contribute.

We remark that in Supplementary Material S1 of [26], an additional step is mentioned which is not described in any of the other papers we consider. Namely, if the integrated information of a mechanism is non-zero before cutting but zero after cutting, what is compared is not the distance of the corresponding concepts as in Eq. 40, but in fact the distance of the original concept with a special null concept, defined to be the unconstrained repertoire of the cut system. We have not included this step in our definitions, but it could be included by adding a choice of distinguished point to Example 3 and redefining the metric correspondingly.

In Eq. 14 the above comparison is being conducted for every subsystem of a system S. The subsystems of S are what is called candidate systems in [31], and which describe that ‘part’ of the system that is going to be conscious according to the theory (cf. below). Crucially, candidate systems are subsystems of S, whose time evolution is defined in Eq. 22. This definition ensures that the state of the elements of S which are not part of the candidate system are fixed in their current state, i.e., constitute background conditions as required in the contemporary version of classcial IIT [26].

Eq. 14 then compares the Q-shape of every candidate system to the Q-shape of all of its cuts, using the distance function described above, where the cuts are defined in Eq. 23. The cut system with the smallest distance gives the system-level minimum information partition and the integrated (conceptual) information of that candidate system, denoted as in [48].

The core integration scaling finally picks out that candidate system with the largest integrated information value. This candidate system is the major complex M of S, the part of S which is conscious according to the theory as part of the exclusion postulate of IIT. Its Q-shape is the maximally irreducible conceptual structure (MICS), also called quale sensu lato. The overall integrated conceptual information is, finally, simply the intensity of as defined in Eq. 14,

A.5 Constellation in Qualia Space

Expanding our definitions, and denoting the major complex by M with state , in our terminology the experience of system S state s isThis encodes the Q-shape , i.e. the maximally irreducible conceptual structure of the major complex, sometimes called quale sensu lato, which is taken to describe the quality of conscious experience. By construction it also encodes the integrated conceptual information of the major complex, which captures its intensity, since we have . The rescaling of in Eq. 41 leaves the relative intensities of the concepts in the MICS intact. Thus is the constellation of concepts in qualia space of [31].

Summary

Keywords

Integrated Information Theory, experience spaces, mathematical consciousness science, IIT 3.0, IIT 3.x, generalized IIT

Citation

Kleiner J and Tull S (2021) The Mathematical Structure of Integrated Information Theory. Front. Appl. Math. Stat. 6:602973. doi: 10.3389/fams.2020.602973

Received

07 September 2020

Accepted

23 December 2020

Published

04 June 2021

Volume

6 - 2020

Edited by

Heng Liu, Guangxi University for Nationalities, China

Reviewed by

Guangming Xue, Guangxi University of Finance and Economics, China

Shumin Ha, Shaanxi Normal University, China

Updates

Copyright

© 2021 Kleiner and Tull.

This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Johannes Kleiner, johannes.kleiner@lmu.de; Sean Tull, sean.tull@cambridgequantum.com

This article was submitted to Dynamical Systems, a section of the journal Frontiers in Applied Mathematics and Statistics

Disclaimer

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article or claim that may be made by its manufacturer is not guaranteed or endorsed by the publisher.