- ¹Long Marine Laboratory, Institute of Marine Sciences, University of California Santa Cruz, Santa Cruz, CA, USA

- ²Department of Psychology, Emory University, Atlanta, GA, USA

- ³Department of Psychological Sciences, University of Connecticut, Storrs, CT, USA

Human capacity for entraining movement to external rhythms—i.e., beat keeping—is ubiquitous, but its evolutionary history and neural underpinnings remain a mystery. Recent findings of entrainment to simple and complex rhythms in non-human animals pave the way for a novel comparative approach to assess the origins and mechanisms of rhythmic behavior. The most reliable non-human beat keeper to date is a California sea lion, Ronan, who was trained to match head movements to isochronous repeating stimuli and showed spontaneous generalization of this ability to novel tempos and to the complex rhythms of music. Does Ronan's performance rely on the same neural mechanisms as human rhythmic behavior? In the current study, we presented Ronan with simple rhythmic stimuli at novel tempos. On some trials, we introduced “perturbations,” altering either tempo or phase in the middle of a presentation. Ronan quickly adjusted her behavior following all perturbations, recovering her consistent phase and tempo relationships to the stimulus within a few beats. Ronan's performance was consistent with predictions of mathematical models describing coupled oscillation: a model relying solely on phase coupling strongly matched her behavior, and the model was further improved with the addition of period coupling. These findings are the clearest evidence yet for parity in human and non-human beat keeping and support the view that the human ability to perceive and move in time to rhythm may be rooted in broadly conserved neural mechanisms.

Introduction

Auditory-motoric entrainment—the coordination of motor movement with simple and complex rhythmic sounds—has a strong presence in human culture and is found across all human societies (Clayton et al., 2005). This phenomenon of “beat keeping” was believed to be unique to humans (Wallin et al., 2000; Bispham, 2006; Zatorre et al., 2007), but new findings in non-human animals have decisively put that idea to rest. Evidence for some faculty to flexibly entrain movement to simple metronome-like stimuli has been found in bonobos (Large and Gray, 2015), chimpanzees (Hattori et al., 2013), and budgerigars (Hasegawa et al., 2011). The ability to entrain to more complex musical stimuli has been shown in cockatoos (Patel et al., 2009), parrots (Schachner et al., 2009), and most reliably, a California sea lion (Cook et al., 2013). Preliminary evidence suggesting beat-keeping behavior has also been identified for elephants (Schachner et al., 2009) and horses (Bregman et al., 2013). While hypotheses have been advanced suggesting that beat keeping is dependent on specialized and relatively rare neural adaptations (Patel et al., 2009; Merchant and Honing, 2013), or exposure to auditory rhythm during critical developmental periods (Schachner, 2012), it is increasingly difficult to identify candidate traits exclusive to the phylogenetically distant species now shown capable of rhythmic entrainment. This suggests that rather than being a derived ability, this faculty is instead broadly conserved, supported by mechanisms of domain-general sensorimotor synchronization found across the animal kingdom (see Wilson and Cook, 2016).

Performance dynamics and variability in human rhythmic behavior have been extensively and carefully studied (see Repp, 2005; Large, 2008; Repp and Su, 2013 for reviews). Although beat-keeping behavior has now been ascribed to a number of non-human species, the mechanisms have not yet been explored outside of humans. If human rhythm is broadly conserved, beat keeping in other animals should be consistent with the principles governing the behavior in humans. One parsimonious and well-established theory of beat keeping is that of neural resonance. This theory proposes that the perception of pulse in simple and complex rhythms, and associated behavioral synchronization to those rhythms, arise from intrinsic properties of neural oscillation (Large and Snyder, 2009; Large et al., 2015). Unlike information-processing theories, in which beat perception and synchronization are separate computational processes that require specialized neural circuitry (Vorberg and Wing, 1996; Repp and Keller, 2004; Patel, 2006; Patel and Iversen, 2014), the theory of neural resonance states that both phenomena are byproducts of the physical principles of coupled oscillation (Large, 2008), and does not presuppose any specialized and potentially restricted neural adaptations beyond auditory-motor coupling to explain auditory motor entrainment.

Neural resonance theory is supported by the well-established finding of neural oscillation: interaction between excitatory and inhibitory neuronal populations gives rise to population rhythms throughout the brain, including across sensory and motor networks (Brunel, 2003; Börgers and Kopell, 2003; Buzsáki and Draguhn, 2004; Stefanescu and Jirsa, 2008). In brief, when acoustic stimuli are presented in a periodic pattern, auditory oscillations spontaneously entrain to the structure of the stimulus stream (Will and Berg, 2007; Nozaradan et al., 2011, 2012). Presumably, these auditory oscillations then induce synchronized neural oscillations in coupled motor systems, leading to rhythmic behavior with a strong phase and tempo relationship to the auditory stimulus (e.g., Loehr et al., 2011). Models of neural resonance are neurologically plausible and fully compatible with widely accepted models of functional connectivity in the brain (see Biswal et al., 1995). The brain can be described as a complicated set of overlapping networks linking neural populations into functional units (Bullmore and Sporns, 2009), and connectivity between these units can be described in terms of synchrony of firing rates between neural populations (Biswal et al., 1995; Greicius et al., 2003). Perception and cognition are then posited to emerge out of the action and interaction of these networks (Sporns et al., 2004; Bressler and Menon, 2010). Importantly, although neural resonance does not require specialized neural mechanisms beyond linked auditory and motor networks, beat-keeping behavior is not necessarily obligate and automatic. Learning clearly changes the properties of coupling between auditory and motor networks, and attention and intention play important roles in producing or inhibiting beat-keeping behavior (Large and Jones, 1999; Repp and Keller, 2004).

An advantage of this sort of theoretical analysis is the ability to link complex oscillation of high dimensional neuronal populations with simpler lower dimensional population- and behavior-level models that capture much of the behavioral richness observed in high dimensional systems (Wilson and Cowan, 1973; Stefanescu and Jirsa, 2008) and that are amenable to theoretical and computational analysis (Aronson et al., 1990; Hoppensteadt and Izhikevich, 1996).

Neural resonance models have been used extensively to accurately describe rhythmic entrainment in humans, for both simple and complex stimuli. A common experimental approach uses behavioral paradigms that involve perturbations in both phase and tempo (Michon, 1967; Large and Palmer, 2002; Large et al., 2002; Repp and Keller, 2004; Loehr et al., 2011). Animal synchronization studies have historically attempted to demonstrate synchronization using statistical methods designed to show a nonrandom phase relationship between stimulus and movement (e.g., Patel et al., 2009, see Fisher, 1993; Pikovsky et al., 2001). However, to probe the underlying mechanisms, a different approach is required. One avenue is to perturb the stimulus and observe relaxation back to steady state behavior (a stable phase relationship).

Behavioral responses to stimulus perturbations can be modeled using the same discrete-time model of coupled oscillation that has been applied to both perception of rhythmic auditory sequences (Large and Jones, 1999) and perception-action coordination with rhythmic auditory sequences (deGuzman and Kelso, 1991; Loehr et al., 2011). Conceptually, a neural/behavioral oscillation is coupled to a rhythmic auditory stimulus that consists of brief acoustic events. The model assumes that the behavioral oscillation is temporally continuous, and the stimulus sequence is temporally discrete, illustrated in Equation (1).

$$\phi_{n+1} = \phi_n + \Omega - \alpha \sin \phi_n \tag{1}$$

Here, $\phi_n$ is the phase of the behavioral oscillation at which acoustic event $n$ occurs. The model predicts the phase of the behavioral oscillation $\phi_{n+1}$ at the next acoustic event, given the relative frequency of the behavioral oscillation and stimulus, $\Omega = 2\pi f_{osc}/f_{stim}$, and the coupling between the two, $-\alpha \sin \phi_n$. Generic models such as Equation (1) are particularly powerful because they make strong predictions regarding both steady state (synchronization) and transient (relaxation) behavior and are easily implemented and analyzed. However, they have not yet been applied to examine rhythmic behavior in non-human animals.
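As a rough illustration of how Equation (1) behaves, the sketch below iterates a sine circle map of the form described in the text, with the phase wrapped to the interval [−π, π). The function names, the wrapping convention, the starting phase, and the value α = 0.9 are assumptions chosen for illustration and are not values from the study.

```python
import math

def wrap_phase(phi):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (phi + math.pi) % (2 * math.pi) - math.pi

def circle_map(phi0, omega_rel, alpha, n_events):
    """Iterate phi[n+1] = phi[n] + Omega - alpha*sin(phi[n]) for n_events events."""
    phases = [wrap_phase(phi0)]
    for _ in range(n_events):
        phi = phases[-1]
        phases.append(wrap_phase(phi + omega_rel - alpha * math.sin(phi)))
    return phases

# Oscillator and stimulus at the same rate: Omega = 2*pi*f_osc/f_stim = 2*pi.
f_osc = f_stim = 85 / 60  # Hz, illustrative
omega_rel = 2 * math.pi * f_osc / f_stim

# Start a quarter cycle away from the beat and watch the phase relax toward 0.
trajectory = circle_map(phi0=math.pi / 2, omega_rel=omega_rel, alpha=0.9, n_events=10)
for n, phi in enumerate(trajectory):
    print(f"event {n:2d}: phase = {phi:+.4f} rad")
```

With matched rates the frequency term contributes exactly one full cycle per event, so only the coupling term changes the wrapped phase, and the trajectory shows the transient (relaxation) followed by the steady state (synchronization) that the text refers to.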

The most reliable and precise non-human beat keeper known is Ronan, a California sea lion (Zalophus californianus) who was trained using operant methods to match her head movement to a simple isochronous stimulus, thus “bobbing” her head in time to the rhythmic beats. Once she had learned to bob in time to simple stimuli at set tempos, she successfully transferred to novel tempos and stimuli, including music at multiple tempos (Cook et al., 2013). To date, Ronan's results represent the most extensive and robust dataset of beat keeping in a non-human animal. This sea lion's ability to entrain her body movement to sound makes her a valuable candidate for cross-species testing of theories and allows the examination of the underlying mechanisms in a broader comparative approach.

Here, we apply neural resonance theory to an experimental study of Ronan's entrainment behavior, in which we tested her ability to adapt to sudden changes in both the phase and tempo of an isochronous repeating stimulus. Although—like humans—Ronan had previously shown strong entrainment to complex musical stimuli, we used simple stimuli comparable to those presented to humans in similar studies. To determine whether her performance was consistent with theories of neural resonance as is seen in humans, we evaluated her performance with a discrete-time model of coupled oscillation (see Equation 1). We hypothesized that Ronan's beat-keeping performance, and her response to phase and tempo perturbations, would be well fit by simple models of phase and period coupling.

Methods

Subject

The subject was “Ronan,” a 7-year-old female California sea lion (NOA0006602), who was housed at Long Marine Laboratory at the University of California Santa Cruz. Ronan was a healthy individual that was placed into captivity around age one after repeated stranding incidents and rescues. She previously participated in a study examining her ability to synchronize to auditory rhythms (Cook et al., 2013). In brief, Ronan was trained to match regular head movement to a simple isochronous stimulus at tempos of 80 and 120 beats per minute (bpm). She then successfully generalized the behavior to novel tempos of 72, 88, 96, 108, and 132 bpm with the simple stimulus, and to novel musical stimuli at tempos of 104, 108, 117, 124, 130, 137, and 143 bpm. Following data collection for Cook et al. (2013), Ronan received intermittent “practice” sessions (typically no more than one per week) with familiar simple stimuli and several novel musical stimuli. During this time, Ronan also participated in several other cognitive and perceptual studies unrelated to rhythm (Reichmuth et al., 2013; Cunningham et al., 2014a,b; Cook et al., 2015; Cunningham and Reichmuth, 2016).

The current experiment occurred from September 2015 to January 2016. During this time, Ronan received a daily diet of 5.7–6.6 kg of freshly thawed, cut herring and capelin fish. She was maintained at a healthy weight of ~72 kg, and her diet was not constrained for experimental purposes. Ronan typically participated in five sessions per week, receiving ~40% of her diet during these experimental sessions.

The study was conducted without harm under National Marine Fisheries Service marine mammal research permits 14535 and 18902, with the approval and oversight of the Institutional Animal Care and Use Committee at the University of California Santa Cruz.

Apparatus

Testing occurred in a 3.6 × 5.2 m enclosure containing a 1.2 m deep, 2.25 m square pool, and surrounding deck space. The experimental setup (similar to that used in Cook et al., 2013) consisted of a 1.1 × 1.5 m painted wooden panel mounted vertically in the doorway of the enclosure. A 0.8 × 0.3 m raised wooden platform was placed on the deck facing the panel, 0.4 m away. Ronan used this platform to find and maintain a consistent stationing position prior to each trial. She rested her foreflippers on the platform while directly facing the panel, and could then move her head freely without touching the panel. An assistant sitting quietly outside the enclosure and behind the panel delivered fish rewards through a short length of PVC pipe mounted in the panel. The experimenter observed Ronan's real-time performance from behind the panel through a 9 cm diameter convex mirror placed 2 m to the side of the flipper station. Both the experimenter and the assistant were concealed from Ronan's view during all trials.

Each session was recorded on a GoPro Hero 2 camera mounted inside the enclosure, 0.25 m above the convex mirror. The auditory stimuli were projected from an Advent AV570 amplified speaker placed ~1 m from Ronan and from the camera. The absolute broadband received level of the brief auditory stimuli presented through the speaker was ~100 dBpeak (re 20 μPa); the equivalent sensation level was 60–80 dB at the frequency of the test stimuli based on species-typical hearing sensitivity (Reichmuth et al., 2013). The level of the stimulus was established to ensure saliency of the auditory cues in an outdoor, coastal environment.

Stimuli

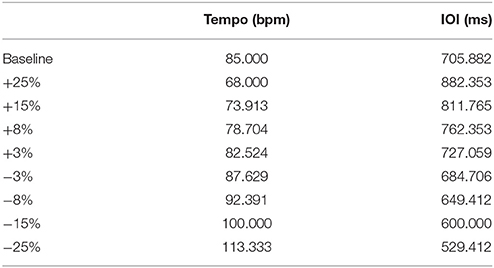

Stimuli were repetitive click tracks created in Audacity, an open-source audio editing program. Each click comprised two overlaid pure tones of 659 and 1319 Hz with a duration of 10 ms, as in Cook et al. (2013). Each track began with a series of beats at a steady rate followed by a single perturbation of either phase or tempo at a magnitude of ±25, ±15, ±8, or ±3% of the inter-onset interval (IOI, in seconds, equivalent to 60 divided by the tempo in beats per minute). For example, a +15% tempo shift in the 85 bpm condition lengthens the IOI from 705.88 to 811.76 ms, which corresponds to 73.913 bpm. The perturbations were introduced at a different beat for each condition (between 16 and 25 beats after the beginning of the trial) to prevent prediction of the onset location. Primary testing was completed at a base tempo of 85 bpm (705.88 ms IOI), to which Ronan had not previously been exposed; Table 1 lists the perturbation tempos and their corresponding IOI values. We also tested Ronan with stimulus perturbations at two additional novel base tempos (94.444 and 77.273 bpm, i.e., IOIs 10% shorter and longer than in the 85 bpm condition; see Supplementary Materials) independently of each other and the main tempo.
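The relationship between perturbation magnitude, IOI, and tempo can be checked with a few lines of arithmetic. The sketch below assumes only what the text states (perturbations are percentages of the IOI, and IOI in seconds is 60 divided by tempo in bpm); the function names are hypothetical.

```python
def ioi_ms(tempo_bpm):
    """Inter-onset interval in milliseconds for a tempo in beats per minute."""
    return 60_000.0 / tempo_bpm

def perturbed_tempo(base_bpm, percent_of_ioi):
    """Tempo after the IOI is lengthened (+) or shortened (-) by a percentage."""
    new_ioi = ioi_ms(base_bpm) * (1 + percent_of_ioi / 100.0)
    return 60_000.0 / new_ioi

base_bpm = 85.0
magnitudes = [-25, -15, -8, -3, 3, 8, 15, 25]  # percent of the IOI

print(f"base: {base_bpm:.3f} bpm, IOI {ioi_ms(base_bpm):.2f} ms")
for pct in magnitudes:
    bpm = perturbed_tempo(base_bpm, pct)
    print(f"{pct:+3d}%: {bpm:8.3f} bpm, IOI {ioi_ms(bpm):7.2f} ms")
```

Because the percentage is applied to the interval rather than to the rate, a positive perturbation slows the tempo and a negative one speeds it up.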

Table 1. Tempo and inter-onset interval perturbation values referenced to the baseline (no perturbation) condition.

General Procedure

The auditory stimulus was started after Ronan calmly positioned at the flipper station and oriented toward the panel. The trial was ended after a predetermined performance criterion of 20 or 40 consecutive, apparently entrained bobs (termed “good” bobs) as judged by the experimenter in real time, similar to the procedure used in Cook et al. (2013). Transfer trials (i.e., trials when a perturbation was presented) were run to a criterion of 20 good bobs following the perturbation, and baseline trials (those with no perturbation) were run to a criterion of 40 good bobs starting at the beginning of the trial. At the beginning of each session, two “warm-up” trials (at the base tempo with no perturbation) were presented to confirm stimulus control of the behavior and run to a criterion of 15–30 apparently entrained beats. Each trial was terminated with a previously conditioned reinforcer (a sharp whistle blown by the experimenter that marked the last bob in the criterial run) followed by a reward of two whole capelin fish offered to Ronan through the feeding port in the panel. Ronan then entered the pool for a small fish reward and returned to the flipper station to begin the next trial.

One experimental replicate at a given base tempo encompassed 24 trials: one trial at each test condition (eight phase changes and eight tempo changes for a total of 16 perturbations) and eight unperturbed trials at the base rate. One session, equivalent to one half of a replicate series, consisted of two warm-up trials at the base rate followed by a randomly-shuffled sequence consisting of four baseline (unperturbed) trials, four tempo perturbations, and four phase perturbations. The perturbations for both phase and tempo were each counterbalanced to ensure an equal number of positive and negative shifts per session. Sessions were broken into three blocks of four stimulus presentations each (not including the two warm-up trials), with a short 30 s break between each block, in which Ronan received four to five half capelin while swimming calmly in the water.

Ronan completed 10 replicates of the 85 bpm test series during 20 sessions; that is, she completed 10 trials with each of the 16 phase and tempo perturbations (n = 160 perturbation trials, n = 80 baseline trials). Subsequently, she completed a single replicate with the two additional base tempos over a total of four sessions (n = 32 perturbation trials, n = 16 baseline trials).

During two sessions, trials were interrupted by external factors before the perturbation occurred: once by vocalizations from an animal in a neighboring enclosure, and once by beeping from a truck in close proximity to the testing facility. In these cases, the experimenter immediately stopped the interrupted trial and proceeded as though the trial had been completed. The aborted trial was retested at the end of the session, and the interrupted trials were not included in any analysis.

Video Analysis

We recorded each session at a frame rate of 120 frames per second (equivalent to a resolution of 8.333 ms per frame). Although Ronan's behavior was continuous, the video data is necessarily binned into windows of 8.333 ms, which introduces some small margin of error into any analysis of precise timing. Nevertheless, a single frame represents, at most, 2% of the IOI.

The primary measure of Ronan's performance was selected as the coincidence of the nadir of her head position with the onset of an auditory beat. Specifically, the height of the tip of the nose was used as the marker for the inflection point. An observer using frame-by-frame analysis determined the time of the lowest point for each head bob, with trials viewed in AvsPmod, an open-source video editing program. When more than one frame appeared to show the lowest point, the first of these frames was selected.

Video footage was analyzed independently by two observers. Inter-observer reliability was determined using a common subset of practice trials. Out of 136 bobs, the observers agreed on the frame number corresponding to the lowest point on 127 of them, and the nine disagreements fell within one frame of each other; this means that 100% of the observations were within 8.333 ms. Considering the 10,777 frames over these 136 bobs, the calculated Cohen's kappa (0.933) indicated very high inter-observer agreement.
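One way to arrive at a kappa of this size from the counts above is to treat every video frame as a binary rating (lowest point of a bob, or not) by each observer. The sketch below makes that assumption, and further assumes that each of the nine disagreements contributes one frame marked only by the first observer and one marked only by the second; the exact tabulation used in the study is not described, so this is an illustration rather than the authors' procedure.

```python
def cohens_kappa(both_yes, only_a, only_b, both_no):
    """Cohen's kappa for two raters and a binary rating, from a 2x2 count table."""
    total = both_yes + only_a + only_b + both_no
    observed = (both_yes + both_no) / total
    a_yes = (both_yes + only_a) / total
    b_yes = (both_yes + only_b) / total
    expected = a_yes * b_yes + (1 - a_yes) * (1 - b_yes)
    return (observed - expected) / (1 - expected)

total_frames = 10_777
agreed_bobs = 127      # both observers marked the same frame
disagreed_bobs = 9     # observers marked adjacent frames (assumed one frame each)
both_no = total_frames - agreed_bobs - 2 * disagreed_bobs

kappa = cohens_kappa(agreed_bobs, disagreed_bobs, disagreed_bobs, both_no)
print(f"kappa = {kappa:.3f}")
```

Under these assumptions the result agrees with the reported value to three decimal places.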

To compare the observed head movements to the timing of the auditory stimuli, we combined the movement data from video analysis with the timing of beat onset determined from the corresponding camera audio, sampled at 48 kHz. For each trial, we located the onset of the first beat by visual analysis of the waveform to the nearest millisecond using Audacity, and then calculated times of subsequent beats based on the stimulus tempo and perturbation location. Because the speaker, the camera, and Ronan were approximately equidistant, we considered the sound travel time from the speaker to Ronan as approximately equal to the sound travel time from the speaker to the camera. Therefore, we used the audio from the camera for timing and did not consider the sound travel time in any subsequent calculations.

Statistical Analysis

We quantified Ronan's performance with circular statistics. As the analysis was focused on performance following perturbation rather than overall statistical similarity between movement and beat, analysis of each transfer trial was restricted to a subset of bobs: specifically, the 10 bobs preceding the perturbation and the 20 bobs following. In baseline trials, where there was no perturbation, the entire trial was included.

In several trials (n = 10), Ronan exhibited “double bobs” where she bobbed twice for a given beat. This typically occurred during the +25% tempo condition of the 85 bpm base tempo, when she continued moving at the original rate such that when the first shifted beat occurred, her head was near the highest point of the bob rather than the lowest. In response to this beat, Ronan immediately nodded her head in a smaller bobbing motion before slowing her overall movement to adapt to the new tempo. These outlier bobs were easily identified by the shift in angle of her head in relation to her neck, and were excluded from the analysis. An example of a double bob can be seen in the first trial of Supplementary Video 1.

First, the relative phase angle between each head bob and the nearest stimulus beat was calculated using Equation (2).

$$\phi_n = 2\pi \, \frac{t_{\mathrm{bob},n} - t_{\mathrm{beat},n}}{I_n} \tag{2}$$

The phase is expressed, in radians, as the time between a head bob ($t_{\mathrm{bob},n}$) and the nearest stimulus beat ($t_{\mathrm{beat},n}$), as a proportion of the inter-onset interval of the beats surrounding the head bob ($I_n$). For all bobs, the relative phase angle was inherently restricted to a range of −π to π radians, as no bob occurred more than π radians away from its nearest beat.
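The calculation described for Equation (2) can be sketched as follows. The function name, the use of a sorted list of beat times, and the handling of bobs that fall before the first or after the last beat are assumptions made for illustration; positive values correspond to bobs that trail the beat.

```python
import math
from bisect import bisect_left

def relative_phase(t_bob, beat_times):
    """Relative phase (radians) of one bob with respect to its nearest beat.

    beat_times must be sorted and contain at least two beats. Positive values
    mean the bob trails the beat; negative values mean it leads the beat.
    """
    i = bisect_left(beat_times, t_bob)
    # Candidate beats on either side of the bob, clamped to the track.
    lo = max(i - 1, 0)
    hi = min(i, len(beat_times) - 1)
    nearest = lo if abs(t_bob - beat_times[lo]) <= abs(beat_times[hi] - t_bob) else hi
    # Inter-onset interval of the beats surrounding the bob.
    if lo == hi:  # bob falls before the first or after the last beat
        if lo == 0:
            ioi = beat_times[1] - beat_times[0]
        else:
            ioi = beat_times[-1] - beat_times[-2]
    else:
        ioi = beat_times[hi] - beat_times[lo]
    return 2 * math.pi * (t_bob - beat_times[nearest]) / ioi

# Illustrative beat times (seconds) and a bob a quarter interval after a beat.
beats = [0.0, 0.7, 1.4, 2.1]
print(relative_phase(0.875, beats))  # about +pi/2: the bob trails the beat
```

In practice the bob time would come from the video frame index divided by the frame rate, and the beat times from the audio track.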

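We calculated the mean relative phase angle ($\bar{\phi}$) and mean vector length ($r$) for each trial of $N$ bobs using the argument (Equation 3) and modulus (Equation 4), respectively, of the sum of the angles expressed as complex numbers.

$$\bar{\phi} = \arg\left(\sum_{n=1}^{N} e^{i\phi_n}\right) \tag{3}$$

$$r = \frac{1}{N}\left|\sum_{n=1}^{N} e^{i\phi_n}\right| \tag{4}$$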

The mean vector length, which indicates the concentration of phases around the mean angle, ranges from 0 (no mean angle) to 1 (perfect concordance of angles). Together, the mean relative phase angle and mean vector length specify the strength of Ronan's performance on a given trial. For each trial, we used the V-test to determine whether Ronan's relative phases were significantly clustered around a mean relative phase of 0, the value that would indicate perfect synchrony with the stimulus (Zar, 1999). As described above, we included both pre-perturbation (10 preceding) bobs and post-perturbation (20 following) bobs in this analysis to provide an evaluation of her synchronization across each transfer trial; all bobs within each baseline trial were included.
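The circular summary statistics and the V-test statistic can be sketched in a few lines. The version below computes the mean angle and mean vector length from the sum of unit complex numbers, and uses the common textbook form of the V-test, u = V·sqrt(2/n) with V = n·r·cos(mean angle − expected angle). The function names and the example phases are hypothetical and serve only as an illustration.

```python
import cmath
import math

def circular_summary(phases):
    """Return (mean angle, mean vector length r) for phases in radians."""
    resultant = sum(cmath.exp(1j * phi) for phi in phases)
    mean_angle = cmath.phase(resultant)   # argument of the summed unit vectors
    r = abs(resultant) / len(phases)      # modulus, normalized to the range 0..1
    return mean_angle, r

def v_test_statistic(phases, expected_angle=0.0):
    """V-test statistic u for clustering of phases around expected_angle."""
    n = len(phases)
    mean_angle, r = circular_summary(phases)
    v = n * r * math.cos(mean_angle - expected_angle)
    return v * math.sqrt(2.0 / n)

# Hypothetical relative phases (radians) for one trial, for illustration only.
example_phases = [-0.35, -0.20, -0.28, -0.41, -0.15, -0.30, -0.22, -0.33]
mean_angle, r = circular_summary(example_phases)
u = v_test_statistic(example_phases)
print(f"mean angle = {mean_angle:+.3f} rad, r = {r:.3f}, u = {u:.2f}")
```

The statistic grows when phases are tightly concentrated and their mean lies close to the expected angle, so a large u indicates clustering around 0 rather than merely a nonuniform distribution.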

Relative phase angles for each beat were also averaged across replicates to obtain an average trial for the baseline and each perturbation type. We used these averaged trials to fit the oscillator models.

Model Fitting

The nonlinear equations that describe rhythmic behavior are often explained using a “circle map,” an equation that produces a set of phases which predict the phase of a stimulus event relative to the onset of the behavioral oscillation (Pikovsky et al., 2001; Large and Palmer, 2002).

$$\phi_{n+1} = \phi_n + \omega_n (t_{n+1} - t_n) - \alpha \sin \phi_n \pmod{2\pi} \tag{5}$$

Equation (5) states that the phase of each successive auditory event ($\phi_{n+1}$), in this case the onset of the click stimulus, is determined by three terms: the current auditory event's relative phase ($\phi_n$); the frequency of the stimulus relative to the oscillator's frequency, expressed as the product of the current period of the stimulus ($t_{n+1} - t_n$) and the current radian frequency of the oscillator ($\omega_n = 2\pi f_{ronan}$); and a stimulus coupling (i.e., the sine of the current auditory event's relative phase modulated by a coupling factor, α). The coupling factor indicates how strongly the relative phase of the oscillator is affected by the stimulus. Because phase is a circular value, the resulting phase is taken modulo 2π (the remainder after dividing the phase by 2π) and normalized to the range of −π to π.

If Ronan were to bob her head at exactly the same rate as the stimulus, the number of bobs to correct for being ahead of or behind the beat would depend solely on the phase coupling factor, α: a high coupling factor would mean a very quick adaptation to the stimulus, while a low coupling factor would mean a slower adaptation. The optimal value for the phase coupling factor is 1.0, which is the largest value that does not result in overcorrection. A value >2.0 causes the equation to become unstable (Pikovsky et al., 2001).
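One way to see where these thresholds come from is to linearize the map around the synchronized state. The short derivation below assumes Equation (5) has the sine circle map form described above and that the oscillator and stimulus rates match exactly, so that the frequency term contributes one full cycle and drops out modulo 2π.

$$
\phi_{n+1} = \phi_n - \alpha \sin\phi_n \;\approx\; (1-\alpha)\,\phi_n \quad (|\phi_n| \ll 1)
\qquad\Rightarrow\qquad
\phi_n \approx (1-\alpha)^{n}\,\phi_0
$$

$$
\begin{cases}
0 < \alpha < 1: & \text{the phase error shrinks monotonically, without changing sign} \\
\alpha = 1: & \text{a small phase error is removed in a single step} \\
1 < \alpha < 2: & \text{each correction overshoots, so the error alternates in sign while shrinking} \\
\alpha > 2: & |1-\alpha| > 1, \text{ so small errors grow and the synchronized state is unstable}
\end{cases}
$$

Under this approximation, the number of bobs needed to recover from a small phase error depends only on how close $|1-\alpha|$ is to zero.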

If, on the other hand, Ronan's period were different from the stimulus period, she still might be able to adapt to a steady phase, but it would be at a non-zero phase. This non-zero phase can be calculated with Equation (5) by assuming that $\phi_{n+1} = \phi_n$ and solving for $\phi_n$. However, if the phase coupling factor (α) was not sufficiently large, she might never adapt to a steady phase and instead would start to “phase-wrap.” Therefore, phase adaptation alone does not guarantee perfect synchronization; period adaptation is required as well. This is described by a supplemental equation to the circle map.
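The steady-state offset and the condition for phase wrapping can be written out explicitly. The sketch below assumes Equation (5) has the sine circle map form described above, a constant stimulus inter-onset interval $I$, and a fixed oscillator frequency $\omega$ (no period adaptation). The detuning symbol $\Delta$ and the worked numbers are introduced here for illustration only.

$$
\begin{aligned}
\phi^{*} &= \phi^{*} + \omega I - \alpha \sin\phi^{*} \pmod{2\pi} \\
\Rightarrow\quad \alpha \sin\phi^{*} &= \Delta, \qquad \Delta \equiv \omega I - 2\pi \\
\Rightarrow\quad \phi^{*} &= \arcsin\!\left(\frac{\Delta}{\alpha}\right), \qquad \text{which exists only if } |\Delta| \le \alpha
\end{aligned}
$$

For instance, if the oscillator stays at the 85 bpm rate while the IOI lengthens by 3%, then $\omega I = 1.03 \cdot 2\pi$ and $\Delta \approx 0.19$ rad; with an illustrative α = 0.9 this gives $\phi^{*} \approx 0.21$ rad in the model's sign convention. A 25% lengthening gives $\Delta \approx 1.57$ rad, which exceeds α = 0.9, so no steady phase exists and the phase wraps unless the period also adapts.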

From Equation (6), we can see that each successive oscillator radian frequency ($\omega_{n+1}$) depends on the oscillator radian frequency of the current beat ($\omega_n$) and a different stimulus coupling (the sine of the relative phase of the current beat, modulated by a different coupling factor, β). Here, the oscillator radian frequency is 2π multiplied by the inverse of the time between two successive head bobs. Again, this coupling factor indicates how strongly the oscillator period is affected by the stimulus.

Thus, in order to completely synchronize, an oscillator needs to adapt both phase and period. Together, Equations (5) and (6) accurately model the entrainment of human performers not only to a simple repeating stimulus, but also to stimuli with multiple changes in phase and tempo over the course of the stimulus.

We fit a deterministic version of Equations (5) and (6) to the circularly averaged trial from each condition. The parameters α and β were varied to minimize the root mean squared error (RMSE) of the test model compared to Ronan's data. The range used for fitting α was 0.4–2.0 in increments of 0.1, and the range used for fitting β was 0.01–0.70 in increments of 0.01. Because Equation (5) describes the phase of the stimulus relative to the oscillator, the phases produced by the model are opposite in sign to the measured behavioral data, which indicate the phase of the oscillator relative to the stimulus. In other words, $\phi_{model} = -\phi_{measured}$.
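The grid search described above can be sketched as follows. The phase update follows the description of Equation (5). The period update used here, ω[n+1] = ω[n] − β·sin(ϕ[n]), is an assumed form based on the verbal description of Equation (6) and may differ in scaling from the model actually fitted. The synthetic "measured" trial, the function names, and the wrapped-difference RMSE are likewise illustrative assumptions.

```python
import math

def wrap_phase(phi):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (phi + math.pi) % (2 * math.pi) - math.pi

def simulate(alpha, beta, iois, omega0, phi0=0.0):
    """Model phases at each stimulus event for a list of inter-onset intervals (s)."""
    phi, omega = phi0, omega0
    phases = [phi]
    for ioi in iois:
        s = math.sin(phi)
        phi = wrap_phase(phi + omega * ioi - alpha * s)  # phase coupling
        omega = omega - beta * s                         # ASSUMED period-coupling form
        phases.append(phi)
    return phases

def rmse(model, measured):
    """RMSE between model and data, using phi_model = -phi_measured."""
    errs = [wrap_phase(m + d) for m, d in zip(model, measured)]
    return math.sqrt(sum(e * e for e in errs) / len(errs))

def fit(measured, iois, omega0):
    """Exhaustive search over the alpha and beta grids given in the text."""
    alphas = [round(0.4 + 0.1 * i, 1) for i in range(17)]  # 0.4 .. 2.0
    betas = [round(0.01 * j, 2) for j in range(1, 71)]     # 0.01 .. 0.70
    best = None
    for a in alphas:
        for b in betas:
            err = rmse(simulate(a, b, iois, omega0), measured)
            if best is None or err < best[2]:
                best = (a, b, err)
    return best

# Synthetic trial: 10 beats at 85 bpm, then 20 beats with the IOI lengthened by 8%.
base_ioi = 60.0 / 85.0
iois = [base_ioi] * 10 + [base_ioi * 1.08] * 20
omega0 = 2 * math.pi / base_ioi
synthetic_measured = [-p for p in simulate(0.9, 0.05, iois, omega0)]

best_alpha, best_beta, best_err = fit(synthetic_measured, iois, omega0)
print(f"alpha = {best_alpha}, beta = {best_beta}, RMSE = {best_err:.4f}")
```

Because the synthetic data are generated by the same model, the search should recover the generating parameters; with real averaged trials the minimum RMSE would be non-zero and would serve as the goodness-of-fit measure.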

Parameter Regression

To identify any potential relationship between the coupling parameters and perturbation magnitude, we regressed the fitted α and β values for each condition against perturbation magnitude.

Results

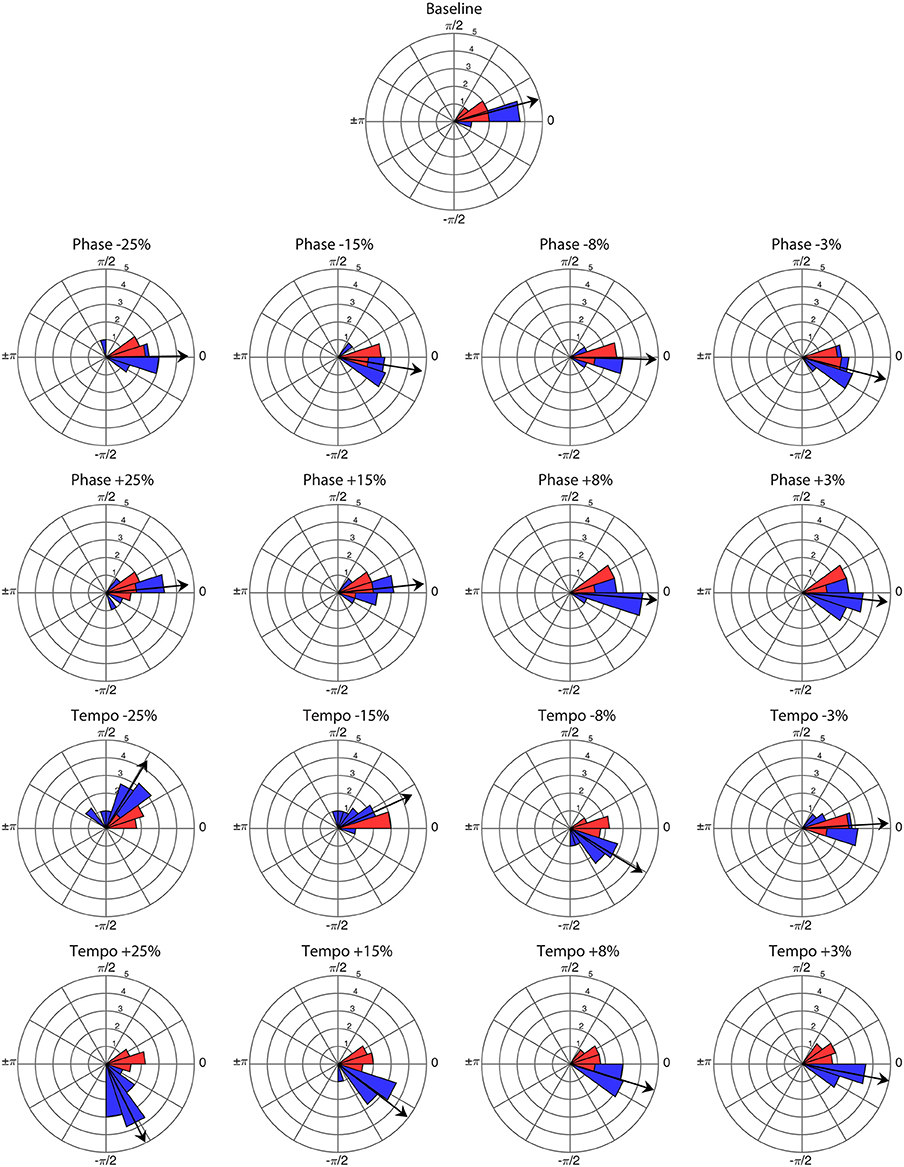

Ronan successfully entrained to all stimuli and perturbations at the base tempo of 85 bpm (see Supplementary Video 1). For all trials, the V-test statistic was >3.4, indicating that the distribution of bobs was significantly nonrandom (p < 0.001) with respect to 0 radians. Thus, Ronan's head movements were strongly correlated with the beat on both baseline trials and transfer trials containing a perturbation event. Figure 1 displays the correspondence of the angular distributions of the initial presentations of each condition, and Figure 2 displays the similarity between performances in all trials. Her subsequent performance on the two additional base tempos (94.444 and 77.273 bpm) showed similar results: she successfully entrained to all stimuli and perturbations (see Supplementary Tables 1, 2 and Supplementary Figures 1–3).

Figure 1. Angular distribution of phase angles for the first presentation of each perturbation condition (n = 16) and baseline trial (n = 1) at a base tempo of 85 bpm. Red wedges show 10 bobs prior to the perturbation and blue wedges show 25 bobs following. The radius of each wedge represents the square root of the number of bobs in that wedge, so that the area of each wedge is equal to the number of bobs. The arrow indicates the mean vector of the post-perturbation bobs, with length normalized to the outer radius of the plot. Note that angles >0 represent bobs trailing the stimulus beat, and angles <0 represent bobs leading the stimulus beat.

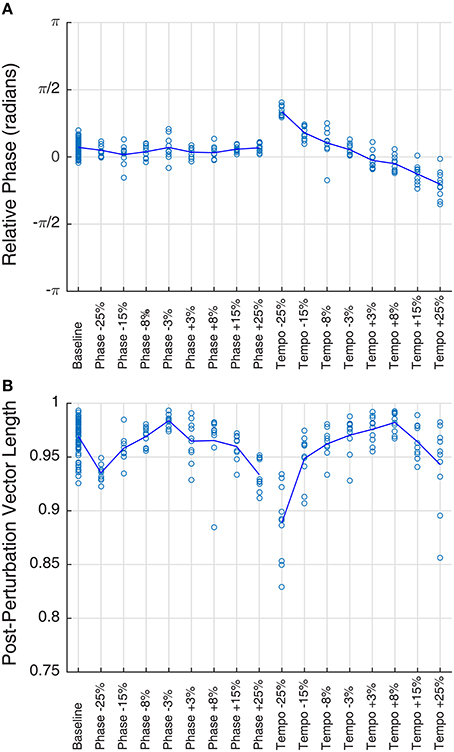

Figure 2. Mean phase (A) and vector length (B) of Ronan's post-perturbation bobs at a base tempo of 85 bpm, grouped by condition. Each trial is plotted as a circle, and the mean for each condition is represented by the line. Mean phase shows a linear trend with tempo changes (right portion of plot A), a trend described in a prior study of Ronan's rhythmic entrainment ability (Cook et al., 2013). In all cases, mean vector length falls between 0.88 and 0.99, indicating a very high concordance of phases within the trial.

Ronan's performance on baseline and transfer trials revealed rapid entrainment to the base tempo, with performance stabilizing within the first four beats (Supplementary Video 1), as previously observed by Cook et al. (2013). Unexpectedly, her performance with all tempos and all stimuli showed a slight phase progression over the course of each trial: the average slope of relative phase per beat across all trials was −0.0206, and the slope was different from zero for the majority of trials (n = 80 baseline trials, n = 62 phase perturbation trials, n = 55 tempo perturbation trials, p < 0.05). This represents a deviation from her previous performance (Cook et al., 2013). However, this deviation was consistent across replicates and conditions.

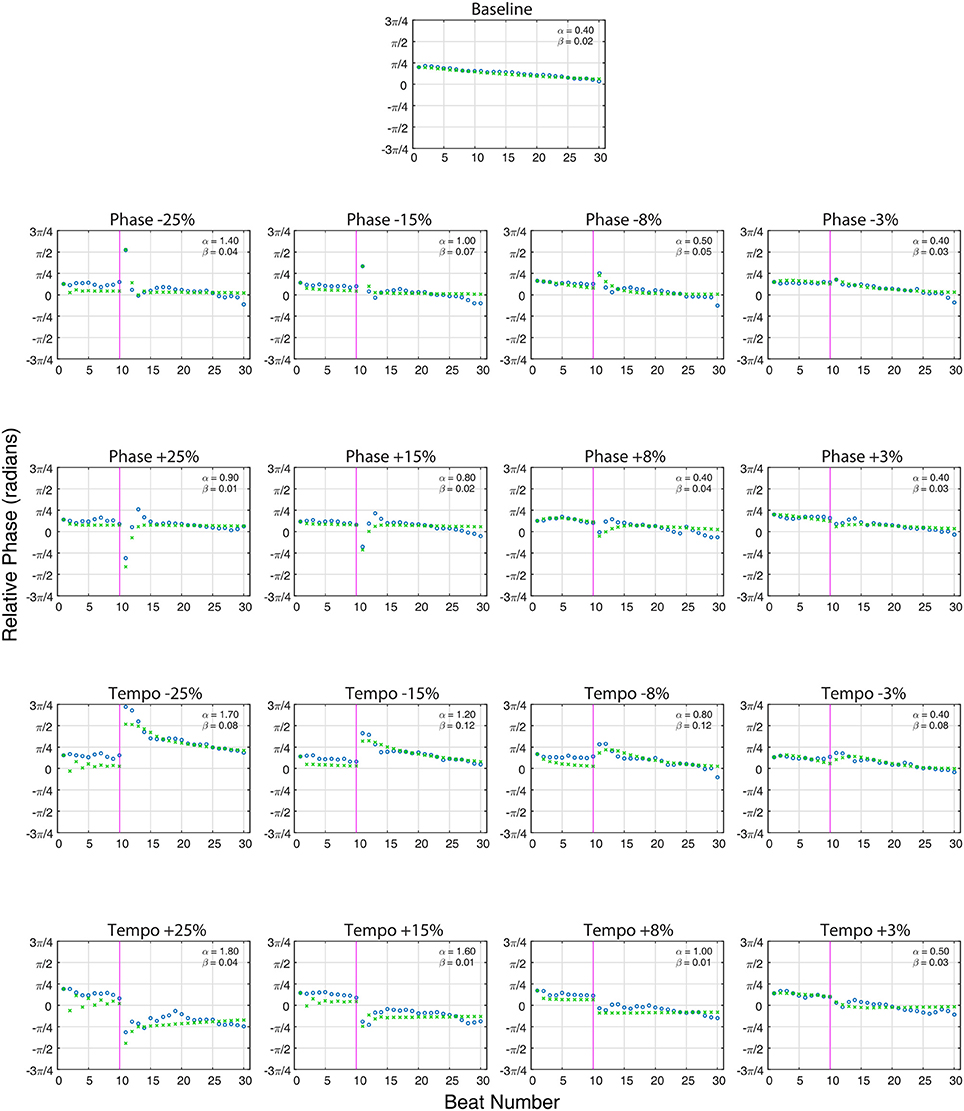

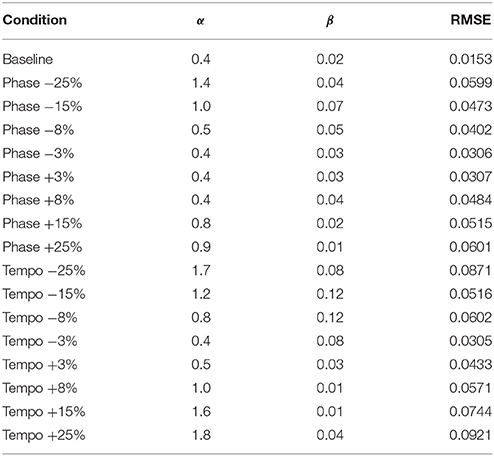

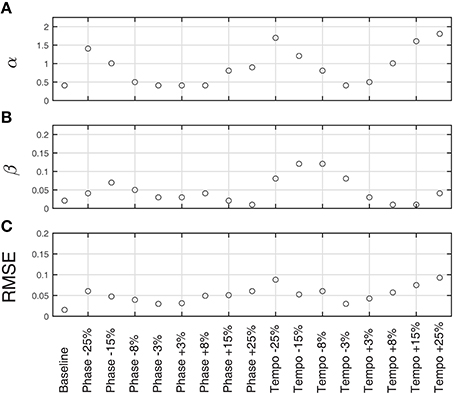

Figure 3 shows the model fit compared to Ronan's pooled performance for each condition. Table 2 and Figure 4 show the fitted coupling parameter values and final RMSE for each condition. RMSE was very low for all conditions, with an average value of 0.0518 radians. Phase coupling was strong; across all conditions, the average parameter value was 0.894. Loehr et al. (2011) found that human subjects performing a comparable task (playing a piano keyboard to a metronome with a changing tempo) had an average phase coupling parameter value of 0.875, quite close to Ronan's. Ronan's observed period coupling was much weaker, averaging 0.0471 across all conditions. This is quite low compared to the subjects in the Loehr study, who had an average period coupling parameter value of 0.450.

Figure 3. The averaged relative phase in radians (Y-axis) over successive beats (X-axis) for averaged baseline trials (n = 80, top) and each averaged perturbation condition (n = 10 per condition) at a base tempo of 85 bpm. Ronan's results (circles) are plotted against the model predictions (x's). The close alignment of the model predictions with the experimentally obtained values indicates that Ronan's responses to these perturbations are consistent with the model of coupled oscillation. On perturbation conditions, the vertical line at 10 beats indicates the onset of the tempo or phase shift indicated at the top of the plot. Phase (α) and period (β) coupling factors are noted in the upper right portion of each plot.

Table 2. Phase (α) and Period (β) coupling parameter values and Root Mean Squared Error (RMSE) for model fits of Ronan's experimental data at 85 bpm.

Figure 4. Fitted model parameters and RMSE for averaged trials for each condition at 85 bpm (n = 80 baseline trials, n = 10 perturbation trials per condition). α (A) represents the strength of phase coupling, or how strongly the stimulus affects the phase of the model's behavior. β (B) represents the strength of the period coupling. On average, the magnitude of phase coupling was 20 times stronger than the magnitude of the period coupling, suggesting that Ronan is primarily using phase adaptation to match the stimulus. Also of note is that α is strongly affected by perturbation magnitude. RMSE (C) is the root mean squared error of the fitted model compared to Ronan's behavior.

We also observed a significant positive linear relationship between phase coupling magnitude and absolute perturbation magnitude [RMSE = 0.2800, F(1, 15) = 35.1, p < 0.0001]. Period coupling, on the other hand, showed a significant negative linear relationship with perturbation magnitude [RMSE = 0.0288, F(1, 15) = 9.02, p < 0.01].
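The reported F statistics can be translated into the proportion of variance explained. The sketch below assumes an ordinary least-squares simple regression with an intercept, which the source does not spell out; under that assumption R² = F·df₁ / (F·df₁ + df₂), and F(1, 15) would correspond to 17 fitted data points. The function name is hypothetical.

```python
def r_squared_from_f(f_stat, df_model, df_resid):
    """Recover R^2 from an overall regression F statistic.

    Uses the identity F = (R^2 / df_model) / ((1 - R^2) / df_resid),
    which holds for ordinary least-squares models with an intercept.
    """
    if f_stat < 0 or df_model <= 0 or df_resid <= 0:
        raise ValueError("F must be non-negative and df must be positive")
    return (f_stat * df_model) / (f_stat * df_model + df_resid)


# Values taken from the text above.
r2_phase = r_squared_from_f(35.1, 1, 15)   # approximately 0.70
r2_period = r_squared_from_f(9.02, 1, 15)  # approximately 0.38
```

Read this way, perturbation magnitude would account for roughly 70% of the variance in α but only about 38% of the variance in β, which fits the emphasis the Discussion places on variable phase coupling.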

Discussion

Ronan's performance with novel tempos containing embedded tempo and phase perturbations showed a remarkable ability to adapt quickly and accurately, synchronizing her body motion to the temporal features of the auditory stimulus stream. Moreover, her beat keeping (ranging from 61.818 to 125.925 bpm across the three base tempos) and adaptation (±25% of the IOI) closely fit models of co-oscillation, drawn from physics and validated in human beat-keeping experiments. The findings show a strong similarity between the dynamics of Ronan's performance and human performance, and parsimony suggests these are rooted in similar and conserved neural mechanisms rather than species-specific adaptations. However, these results by themselves are not dispositive, and more comparative data are needed to fully resolve the debate over underlying mechanisms.

Through the lens of neural resonance, we see that Ronan's beat-keeping behavior in response to stimulus perturbations compared strongly to that measured in humans in four ways: (1) flexibility in tempo matching was evident in her behavior throughout testing, (2) changes in phase and tempo were matched through both phase and period adaptation, (3) phase adaptation was stronger than tempo adaptation, and (4) reduced sensitivity to smaller perturbations was observed (discussed below).

Ronan's performance in this study differed from human performance in two important ways, related to (1) phase coupling, and (2) period adaptation. For most perturbation trials, phase coupling (α) varied based on perturbation magnitude, dramatically increasing for larger magnitude shifts (perturbations >8%). The significant linear relationship between α and absolute perturbation magnitude suggests that the more noticeable alterations induced a larger change in coupling. In most human studies, phase coupling has been considered more or less constant (for review see Repp, 2005; Large, 2008), so Ronan's variable coupling strength is a novel discovery. However, her performance does align with findings in humans that larger perturbations are more noticeable because they represent more significant violations of expectation of where the next beat should occur (Large and Jones, 1999). It also suggests another similarity to humans: the just-noticeable difference in humans for tempo changes of a single interval (equivalent to a change in phase) is ~6% (Drake and Botte, 1993). Ronan's results here imply that she did not readily perceive the ±3 or ±8% phase perturbations or the ±3% tempo perturbations, similar to what might be expected in human performance based on available research.

The second divergence in Ronan's behavior relative to that of humans is decreased period adaptation. While human studies have shown typical period coupling values between 0.3 and 0.8 (Loehr et al., 2011), Ronan's period coupling values did not exceed 0.2. Again, it is important to note that the human comparison is imperfect. Human subjects played a melody on the keyboard with a metronome, as opposed to a single discrete repeating movement. Furthermore, rather than a single sudden shift, the tempo changed continuously following the shift onset.

Additionally, Ronan had a larger phase/tempo offset than typically seen in humans (e.g., Repp, 2005; Repp and Su, 2013): her starting relative phase showed a direct linear correlation with IOI, with faster tempos producing a starting phase farther behind the beat, a trend described previously in Cook et al. (2013).

These differences relative to human subjects may be rooted in behavioral aspects of Ronan's performance. The gradual phase progression on all trials and changing phase coupling strength for larger perturbations suggest that Ronan used a specific strategy to entrain to these stimuli. Although she showed reliable phase and tempo matching throughout the experiment, her precision dramatically increased following relatively large perturbations. One possible interpretation is that basic beat keeping with simple metronomic stimuli is quite easy for Ronan following her extensive training with these and more complicated stimuli. Perhaps she uses a motor heuristic to produce “good enough” entrainment without employing any significant attentive effort. However, following a perturbation, realigning her movement with the beat may require greater attention. This could then drive an up-regulation of auditory motor networks, leading to increased coupling and greater performance. There is extensive evidence that human beat-keeping performance is heavily reliant on intent and attention (see Large and Jones, 1999; Repp, 2005). Furthermore, “task-positive” networks in humans—attention-driven brain networks that up-regulate in-network functional connectivity (i.e., co-synchrony across nodes) during rigorous mental action—include motor and motor planning regions (Fox et al., 2005; Bardouille and Boe, 2012). Increased attention to the stimulus following perturbation could therefore lead to increased resonance between the neural oscillators of interest.

In most respects, Ronan's beat-keeping performance was as precise and reliable as that observed in human studies, and was well fit by models of coupled oscillation. Ronan's obvious ability to adaptively entrain her body movements to auditory rhythms extends the findings reported for this subject by Cook et al. (2013). Although the current experiment did not explicitly test adaptation to phase or tempo change in more complex stimuli, Ronan has successfully entrained to human-generated music that contains natural variability in both phase and tempo (Cook et al., 2013). Not only does this support the likelihood of shared mechanisms, it emphasizes Ronan's usefulness as a comparative model to study other aspects of rhythmic entrainment. In addition, Ronan's beat keeping did not emerge de novo—she received explicit and extensive operant (positive reinforcement) training. Therefore, she may serve as a model for training other non-human beat keepers. Supplemental testing with Ronan and with additional non-human subjects should clarify the mechanisms supporting beat-keeping ability and resolve whether these mechanisms are evolutionarily conserved. Further exploration of these results may also improve understanding of other facets of human musical ability. Resonance of neural oscillators with an external stimulus has been proposed as the foundation for many areas of music perception and cognition, including pitch and meter perception (see Large, 2008 for a review).

Patterns of neural oscillations have been observed in every nervous system examined (Glass, 2001). The basic physics of the structure of neural oscillators shows that if stimulated rhythmically, they will synchronize. The finding that non-human as well as human beat keeping is consistent with models of neural resonance supports a parsimonious explanation of beat-keeping behavior as arising from basic principles of nervous system behavior. That being said, the tendency of linked neural populations to co-oscillate could be only the beginning of an understanding of sensorimotor synchronization. While coupled oscillation between neural populations may be necessary and sufficient for supporting a general faculty for beat keeping, great potential still exists for variability in the dynamics of beat-keeping behavior. First and foremost, animals may differ in connection strengths between relevant neural populations. This could be due to differences in anatomical connectivity, or differences in functional connectivity in these brain circuits, which can change with learning, across development, and dynamically with attention, intention, and other psychological factors. To date, the field of comparative rhythm has focused on answering the question “which species can keep a beat?” If basic and conserved neural mechanisms support entrainment intrinsically, the more productive question is this: “How can we use sensorimotor synchronization paradigms as a comparative tool to better understand brain function and behavior across species and contexts?”

Author Contributions

AR, PC, and CR designed the study; AR conducted all experiments; and AR, PC, CR, and EL analyzed the data, interpreted the results, and wrote the manuscript.

Funding

Support for this work was provided in part by the Special Projects Fund of the Pinniped Cognition and Sensory Systems Laboratory, and a grant to CR from the International Association of Oil and Gas Producers through the Exploration and Production (E&P) Sound and Marine Life Joint Industry Programme (Award 22-07-23).

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

The authors thank the dedicated research team of the Pinniped Cognition and Sensory Systems Laboratory. We are especially grateful to Lima Kayello and Jacob Linsky for their assistance with data analysis. We also thank Ronan for her enthusiastic participation and cooperation in this behavioral research.

Supplementary Material

The Supplementary Material for this article can be found online at: http://journal.frontiersin.org/article/10.3389/fnins.2016.00257

References

Aronson, D. G., Ermentrout, G. B., and Kopell, N. (1990). Amplitude response of coupled oscillators. Phys. D 41, 403–449. doi: 10.1016/0167-2789(90)90007-C

Bardouille, T., and Boe, S. (2012). State-related changes in MEG functional connectivity reveal the task-positive sensorimotor network. PLoS ONE 7:e48682. doi: 10.1371/journal.pone.0048682

Bispham, J. (2006). Rhythm in music: what is it? Who has it? And Why? Music Percept. 24, 125–134. doi: 10.1525/mp.2006.24.2.125

Biswal, B. B., Yetkin, F. Z., Haughton, V. M., and Hyde, J. S. (1995). Functional connectivity in the motor cortex of resting human brain using echo-planar MRI. Magn. Reson. Med. 34, 537–541. doi: 10.1002/mrm.1910340409

Börgers, C., and Kopell, N. (2003). Synchronization in networks of excitatory and inhibitory neurons with sparse, random connectivity. Neural Comput. 15, 509–538. doi: 10.1162/089976603321192059

Bregman, M. R., Iversen, J. R., Lichman, D., Reinhart, M., and Patel, A. D. (2013). A method for testing synchronization to a musical beat in domestic horses (Equus ferus caballus). Empir. Musicol. Rev. 7, 144–156.

Bressler, S. L., and Menon, V. (2010). Large-scale brain networks in cognition: emerging methods and principles. Trends Cogn. Sci. 14, 277–290. doi: 10.1016/j.tics.2010.04.004

Brunel, N. (2003). Dynamics and plasticity of stimulus-selective persistent activity in cortical network models. Cereb. Cortex 13, 1151–1161. doi: 10.1093/cercor/bhg096

Bullmore, E., and Sporns, O. (2009). Complex brain networks: graph theoretical analysis of structural and functional systems. Nat. Rev. Neurosci. 10, 186–198. doi: 10.1038/nrn2575

Buzsáki, G., and Draguhn, A. (2004). Neuronal oscillations in cortical networks. Science 304, 1926–1929. doi: 10.1126/science.1099745

Clayton, M., Sager, R., and Will, U. (2005). In time with the music: the concept of entrainment and its significance for ethnomusicology. Eur. Semin. Ethnomusicol. 1, 3–75. doi: 10.1093/acprof:oso/9780195339680.001.0001

Cook, P. F., Reichmuth, C., Rouse, A. A., Libby, L. A., Dennison, S. E., Carmichael, O. T., et al. (2015). Algal toxin impairs sea lion memory and hippocampal connectivity, with implications for strandings. Science 350, 1545–1547. doi: 10.1126/science.aac5675

Cook, P., Rouse, A., Wilson, M., and Reichmuth, C. (2013). A California sea lion (Zalophus californianus) can keep the beat: motor entrainment to rhythmic auditory stimuli in a non vocal mimic. J. Comp. Psychol. 127, 412–427. doi: 10.1037/a0032345

Cunningham, K. A., Hayes, S. A., Wargo Rub, A. M., and Reichmuth, C. J. (2014a). Auditory detection of ultrasonic coded transmitters by seals and sea lions. J. Acoust. Soc. Am. 135, 1978–1985. doi: 10.1121/1.4868371

Cunningham, K. A., and Reichmuth, C. (2016). High-frequency hearing in seals and sea lions. Hear. Res. 331, 83–91. doi: 10.1016/j.heares.2015.10.002

Cunningham, K. A., Southall, B. L., and Reichmuth, C. (2014b). Auditory sensitivity of seals and sea lions in complex listening scenarios. J. Acoust. Soc. Am. 136, 3410. doi: 10.1121/1.4900568

deGuzman, G. C., and Kelso, J. A. S. (1991). Multifrequency behavioral patterns and the phase attractive circle map. Biol. Cybern. 64, 485–495. doi: 10.1007/BF00202613

Drake, C., and Botte, M.-C. C. (1993). Tempo sensitivity in auditory sequences: evidence for a multiple-look model. Percept. Psychophys. 54, 277–286. doi: 10.3758/BF03205262

Fox, M. D., Snyder, A. Z., Vincent, J. L., Corbetta, M., Van Essen, D. C., and Raichle, M. E. (2005). The human brain is intrinsically organized into dynamic, anticorrelated functional networks. Proc. Natl. Acad. Sci. U.S.A. 102, 9673–9678. doi: 10.1073/pnas.0504136102

Greicius, M. D., Krasnow, B., Reiss, A. L., and Menon, V. (2003). Functional connectivity in the resting brain: a network analysis of the default mode hypothesis. Proc. Natl. Acad. Sci. U.S.A. 100, 253–258. doi: 10.1073/pnas.0135058100

Hasegawa, A., Okanoya, K., Hasegawa, T., and Seki, Y. (2011). Rhythmic synchronization tapping to an audio–visual metronome in budgerigars. Sci. Rep. 1, 1–8. doi: 10.1038/srep00120

Hattori, Y., Tomonaga, M., and Matsuzawa, T. (2013). Spontaneous synchronized tapping to an auditory rhythm in a chimpanzee. Sci. Rep. 3:1566. doi: 10.1038/srep01566

Hoppensteadt, F. C., and Izhikevich, E. M. (1996). Synaptic organizations and dynamical properties of weakly connected neural oscillators. II. Learning phase information. Biol. Cybern. 75, 129–135. doi: 10.1007/s004220050280

Large, E. W. (2008). “Resonating to musical rhythm: theory and experiment,” in Psychology of Time, ed S. Grondin (Bingley: Emerald Group Publishing), 189–231.

Large, E. W., Fink, P., and Kelso, J. A. S. (2002). Tracking simple and complex sequences. Psychol. Res. 66, 3–17. doi: 10.1007/s004260100069

Large, E. W., and Gray, P. M. (2015). Spontaneous tempo and rhythmic entrainment in a bonobo (Pan paniscus). J. Comp. Psychol. 129, 317–328. doi: 10.1037/com0000011

Large, E. W., Herrera, J. A., and Velasco, M. J. (2015). Neural networks for beat perception in musical rhythm. Front. Syst. Neurosci. 9:159. doi: 10.3389/fnsys.2015.00159

Large, E. W., and Jones, M. R. (1999). The dynamics of attending: how people track time-varying events. Psychol. Rev. 106, 119–159. doi: 10.1037/0033-295X.106.1.119

Large, E. W., and Palmer, C. (2002). Perceiving temporal regularity in music. Cogn. Sci. 26, 1–37. doi: 10.1207/s15516709cog2601_1

Large, E. W., and Snyder, J. S. (2009). Pulse and meter as neural resonance. Ann. N.Y. Acad. Sci. 1169, 46–57. doi: 10.1111/j.1749-6632.2009.04550.x

Loehr, J. D., Large, E. W., and Palmer, C. (2011). Temporal coordination and adaptation to rate change in music performance. J. Exp. Psychol. Hum. Percept. Perform. 37, 1292–1309. doi: 10.1037/a0023102

Merchant, H., and Honing, H. (2013). Are non-human primates capable of rhythmic entrainment? Evidence for the gradual audiomotor evolution hypothesis. Front. Neurosci. 7:274. doi: 10.3389/fnins.2013.00274

Nozaradan, S., Peretz, I., Missal, M., and Mouraux, A. (2011). Tagging the neuronal entrainment to beat and meter. J. Neurosci. 31, 10234–10240. doi: 10.1523/JNEUROSCI.0411-11.2011

Nozaradan, S., Peretz, I., and Mouraux, A. (2012). Steady-state evoked potentials as an index of multisensory temporal binding. Neuroimage 60, 21–28. doi: 10.1016/j.neuroimage.2011.11.065

Patel, A. D. (2006). Musical rhythm, linguistic rhythm, and human evolution. Music Percept. 24, 99–103. doi: 10.1525/mp.2006.24.1.99

Patel, A. D., and Iversen, J. R. (2014). The evolutionary neuroscience of musical beat perception: the Action Simulation for Auditory Prediction (ASAP) hypothesis. Front. Syst. Neurosci. 8:57. doi: 10.3389/fnsys.2014.00057

Patel, A. D., Iversen, J. R., Bregman, M. R., and Schulz, I. (2009). Experimental evidence for synchronization to a musical beat in a nonhuman animal. Curr. Biol. 19, 827–830. doi: 10.1016/j.cub.2009.03.038

Pikovsky, A., Rosenblum, M. G., and Kurths, J. (2001). Synchronization: A Universal Concept in Nonlinear Sciences. Cambridge: Cambridge University Press. doi: 10.1063/1.1554136

Reichmuth, C., Holt, M. M., Mulsow, J., Sills, J. M., and Southall, B. L. (2013). Comparative assessment of amphibious hearing in pinnipeds. J. Comp. Physiol. A. Neuroethol. Sens. Neural. Behav. Physiol. 199, 491–507. doi: 10.1007/s00359-013-0813-y

Repp, B. H. (2005). Sensorimotor synchronization: a review of the tapping literature. Psychon. Bull. Rev. 12, 969–992. doi: 10.3758/BF03206433

Repp, B. H., and Keller, P. E. (2004). Adaptation to tempo changes in sensorimotor synchronization: effects of intention, attention, and awareness. Q. J. Exp. Psychol. A 57, 499–521. doi: 10.1080/02724980343000369

Repp, B. H., and Su, Y.-H. (2013). Sensorimotor synchronization: a review of recent research (2006-2012). Psychon. Bull. Rev. 20, 403–452. doi: 10.3758/s13423-012-0371-2

Schachner, A. (2012). If horses entrain, don't entirely reject vocal learning: An experience-based vocal learning hypothesis. Empir. Musicol. Rev. 7, 157–159.

Schachner, A., Brady, T. F., Pepperberg, I. M., and Hauser, M. D. (2009). Spontaneous motor entrainment to music in multiple vocal mimicking species. Curr. Biol. 19, 831–836. doi: 10.1016/j.cub.2009.03.061

Sporns, O., Chialvo, D. R., Kaiser, M., and Hilgetag, C. C. (2004). Organization, development and function of complex brain networks. Trends Cogn. Sci. 8, 418–425. doi: 10.1016/j.tics.2004.07.008

Stefanescu, R. A., and Jirsa, V. K. (2008). A low dimensional description of globally coupled heterogeneous neural networks of excitatory and inhibitory neurons. PLoS Comput. Biol. 4:e1000219. doi: 10.1371/journal.pcbi.1000219

Vorberg, D., and Wing, A. (1996). “Modeling variability and dependence in timing,” in Handbook of Perception and Action, eds H. Heuer and S. W. Keele (San Diego, CA: Academic Press), 181–262.

Wallin, N. L., Merker, B., and Brown, S. (eds.). (2000). The Origins of Music. Cambridge, MA: MIT Press.

Will, U., and Berg, E. (2007). Brain wave synchronization and entrainment to periodic acoustic stimuli. Neurosci. Lett. 424, 55–60. doi: 10.1016/j.neulet.2007.07.036

Wilson, H. R., and Cowan, J. D. (1973). A mathematical theory of the functional dynamics of cortical and thalamic nervous tissue. Kybernetik 13, 55–80. doi: 10.1007/BF00288786

Wilson, M., and Cook, P. F. (2016). Rhythmic entrainment: why humans want to, fireflies can't help it, pet birds try, and sea lions have to be bribed. Psychon. Bull. Rev. doi: 10.3758/s13423-016-1013-x. [Epub ahead of print]. Available online at: http://link.springer.com/article/10.3758%2Fs13423-016-1013-x

Keywords: sensorimotor synchronization, rhythmic entrainment, neural oscillators, sea lions, music cognition and perception, non-human models

Citation: Rouse AA, Cook PF, Large EW and Reichmuth C (2016) Beat Keeping in a Sea Lion As Coupled Oscillation: Implications for Comparative Understanding of Human Rhythm. Front. Neurosci. 10:257. doi: 10.3389/fnins.2016.00257

Received: 05 March 2016; Accepted: 23 May 2016;

Published: 03 June 2016.

Edited by:

Andrea Ravignani, Vrije Universiteit Brussel, Belgium

Reviewed by:

Shinya Fujii, The University of Tokyo, Japan

Angela S. Stoeger, University of Vienna, Austria

Copyright © 2016 Rouse, Cook, Large and Reichmuth. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) or licensor are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Andrew A. Rouse, arouse@ucsc.edu
