- 1 Department of Psychological Medicine, Institute of Psychiatry, Psychology and Neuroscience, King's College London, London, United Kingdom

- 2 Department of Biostatistics and Health Informatics, Institute of Psychiatry, Psychology and Neuroscience, King's College London, London, United Kingdom

- 3 Health Service and Population Research Department, Institute of Psychiatry, Psychology and Neuroscience, King's College London, London, United Kingdom

- 4 Lived Experience Advisor, London, United Kingdom

- 5 School of Electronic Engineering and Computer Science, Queen Mary University of London, London, United Kingdom

- 6 Qntfy, Arlington, VA, United States

- 7 Centre for Psychiatry and Mental Health, Wolfson Institute of Population Health, Queen Mary University of London, London, United Kingdom

- 8 Department of Child and Adolescent Psychiatry, Institute of Psychiatry, Psychology and Neuroscience, King's College London, London, United Kingdom

- 9 MRC Cognition and Brain Sciences Unit, Cambridge, United Kingdom

- 10 Department of Psychiatry, University of Cambridge, Cambridge, United Kingdom

- 11 South London and Maudsley NHS Foundation Trust, London, United Kingdom

Social media use affects the mental health and wellbeing of young people, yet there is not enough evidence to determine who is affected, how, and to what extent. While social media has widened and strengthened communication networks for many, the dangers it poses to at-risk youth are serious. Social media data offers unique insights into the minute details of a user's online life. Timely, consented access to these data could offer many opportunities to transform our understanding of the effects of social media on mental wellbeing in different contexts. However, researchers' limited access to data is preventing such advances from being made. Our multidisciplinary authorship includes a lived experience adviser, academic and practicing psychiatrists, academic psychologists, and computational, statistical, and qualitative researchers. In this Perspective article, we propose a framework to support secure and confidential access to social media platform data for research, so that progress can be made toward better public mental health.

Introduction

Viewing self-harm-related images and posts has been cited as a factor in the suicides of young people across the world (1). Not all social media use is detrimental to mental health, however; social media is increasingly harnessed to provide support and even suicide prevention strategies (2, 3).

Testimony to the US Congress in October 2021 by a former Facebook employee provided evidence that the social media platform concealed internal research findings regarding the potential negative impact of its Instagram platform on some young people (4). Furthermore, social media platforms have been blocking external researchers' access to data, potentially delaying the development of life-saving advances and discoveries (5). Social media data offers the scientific community unique insights into the details of a person's digitally mediated life. Near-real-time access to these data, paired with the informed consent of the individual, could provide many positive opportunities in a clinical setting.

Following the Cambridge Analytica scandal of 2018, where the personal data of millions of Facebook users was harvested without their consent, the platform tightened access to its Application Programming Interface (API), which served as the main tool by which legitimate researchers collected behavioral and digital trace data (6). Facebook's current complex and lengthy guidelines for data access are aimed at commercial organizations and centred on a notion of “enhancing user experience,” including by means of targeted personal advertising (7). Research does not usually intend to improve the individual “user experience,” but instead has wider societal implications. It is important to note that in light of prior misuses of Facebook's API, all use of the API is required to be verified by Facebook and must meet Facebook's own guidelines.

This difference in focus can leave researchers without access to platform products offered widely to the commercial sector, such as the open authorization protocol (OAuth) that allows login and access to user content via a user's Facebook login. This disparity in data accessibility between commercial and academic interests, with commercial gain prioritized over research for public benefit, raises a vital question for scientific research and data ownership: how might we conduct independent, academic research into the impact of social media use on behavioral health and wellbeing when access to data is so limited?

Researchers often fail when they try to build bespoke data collection pipelines (i.e., systems designed to regularly extract and store data from consented users) that comply with a platform's terms of service for collecting publicly available data, even though such pipelines are expensive and difficult to maintain. The platform reviews their requests and frequently decides that they violate the terms because they do not enhance the "user experience." There is no independent review or appeals process outside the organization, and there is limited engagement and consultation with academic or lived experience researchers to develop systems that meet the needs of all parties.

Moreover, researchers encounter increasingly negative scenarios when they attempt to access social media data from fully consented and ethically approved studies with active participants. Even when informed user consent is carefully documented, social media platforms do not permit data access, or they place technical roadblocks in its way. As an example, our US colleagues created a system for the consented donation of online data (OurDataHelps.org) to screen for suicide risk and various mental health diagnoses using natural language processing (3, 8). By January 2021, more than 4,000 individuals had provided self-report mental health data and social media content, yet the platforms revoked the agreed access to and collection of these data. There were neither complaints from participants nor data breaches; the platforms simply made it impossible for the project to continue, despite its long track record of peer-reviewed research with qualified support from leading Universities.

For us, the answer is simple. Just as users must provide consent to social media platforms for the processing of their personal data, it should be for users to decide how else their data are processed and used (9). Barriers should not be placed in the way of users making informed decisions about this.

This view is supported by legislation and data regulation. In the European Union and the European Economic Area, the use of personal data is regulated by the General Data Protection Regulation 2016/679 (commonly known as GDPR), which sets out a number of conditions under which data processing may be considered lawful. The most transparent way for academics to process data while complying with the regulation is to obtain informed consent.

GDPR also specifies that data processing may be lawful if it is "necessary for the performance of a task carried out in the public interest." Since academic research by accredited Universities can generally be defended as being in the public interest, data collection, analysis, and publication for scientific purposes are protected by the GDPR. This is particularly the case when participants have provided informed consent for the use of their data. Yet social media platforms cite a presumed incompatibility with data privacy and accessibility requirements as justification to deflect or deny access requests from qualified researchers.

By default, academic studies rely heavily on self-reported social media use, which is known to be an inaccurate proxy for logged media use (10). Alternatively, participants are burdened with the unwieldy task of requesting and accessing a copy of their data and providing it to researchers (11). The systems the platforms provide to users for this purpose, while compliant with the law, are not user-friendly, which complicates data completeness and participant retention for researchers. It is imperative that we move beyond self-report and use behavioral data from platforms including Apple iOS, Google Android, Facebook, Instagram, TikTok, Twitter, and YouTube, in ways that are acceptable to participants, to understand more objectively how young people interact online and how and when this affects their mental wellbeing.

To address the research community's concerns about users' safety and security, the UK government's response to the Online Harms White Paper consultation (12), published in 2020, pledged to provide "researchers with access to company data to support research into online harms." The government proposal also included a 2% "turnover tax" levy on the UK revenues of major technology companies to fund independent research and training packages for clinicians, teachers, and other professionals working with children and young people. This levy has since been reversed following the Organization for Economic Co-operation and Development (OECD) taxation agreement earlier this year.

Neither recommendation has been realized. Instead, both proposals have been removed from the delayed Online Safety Bill and replaced only by a requirement for the government-approved regulator, the Office of Communications (OFCOM), to prepare a report on independent researchers' access to information, with the following remit: "(a)…currently able to obtain information from providers of regulated services to inform their research, (b) exploring the legal and other issues which currently constrain the sharing of information for such purposes, and (c) assessing the extent to which greater access to information for such purposes might be achieved" (13).

A framework of recommendations

The voluntary best practice framework suggested in the UK government's Online Harms White Paper (12) has not been included in the Draft Online Safety Bill (13), and in any case it would not ensure that social media platforms meet their ethical responsibilities (e.g., data protection, participant health and safety).

Over the last 20 years, social media platforms have been able to set their own rules on what user data an individual, organization, or researcher can access, and how and why. These rules often change without prior notice and irrespective of the potential harm this may cause. We therefore propose a framework to facilitate regulated and monitored access to social media platform data for researchers, in order to make long-term progress toward better public mental health.

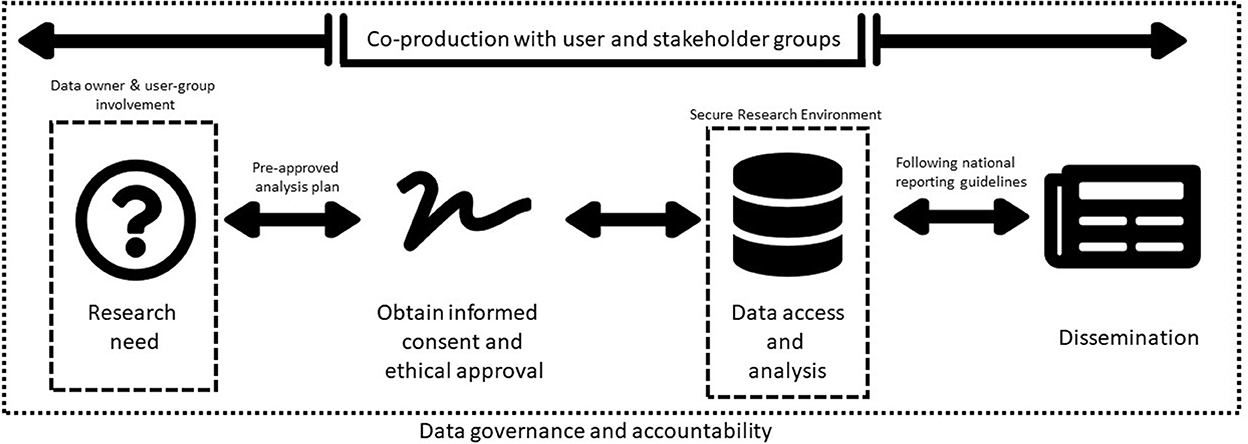

Our framework has four core elements and a cross-cutting theme integral to each stage (refer to Figure 1).

Figure 1. Proposed framework to regulate and monitor researchers' access to social media platform data.

Co-production with user and stakeholder groups is the cross-cutting theme embedded and incorporated into each element of the framework. Using established public and patient involvement standards (e.g., UK Standards for Public Involvement, NIHR: https://sites.google.com/nihr.ac.uk/pi-standards/home), researchers should work collaboratively with those with lived experience, carers and members of the public to first identify the research priorities and then co-produce research protocols and methods.

Research need

Qualified researchers at accredited Universities who intend to use social media data to understand and improve youth mental health should co-produce their research with patients, carers, and members of the public. Throughout the study, researchers should undertake user-centred engagement in line with established public and patient involvement criteria, justify the rationale for data access, and engage data owners in the proposed research (14). The data owner and user group should also review and approve analysis plans to ensure the approach is acceptable, ethically sound, feasible, and of value.

Ethical approval and informed consent

Participants should always be empowered to understand why and how their data will be used for research. This information should be provided in accessible and acceptable formats that user groups co-produce with researchers. Ethically approved informed consent procedures will state exactly what is being collected, how it will be processed, and how results will be reported. This will include clear, accessible guidance on how data will be managed in accordance with the GDPR.

Data access and analysis

Some social media data cannot be fully anonymised because they contain free text, images, and videos. Therefore, robust data governance guidelines and well-defined individual and institutional accountability should be established, on a par with current protocols for medical research. This would include analyzing data in a Secure Research Environment (SRE), where access is intensively monitored and controlled. Data owners should agree data sharing agreements with SRE providers. Exemplars in the UK include the Office for National Statistics Secure Research Service (SRS), which records each interaction with the data and restricts what researchers can do with it. Involving a trained service user group with lived experience in qualitative data analysis can correct researchers' misinterpretations and challenge the ways in which findings are reported, adding value to the products of research analysis.

Open dissemination

We recommend the Registered Report format, in which peer review is conducted before data collection; this standardization would improve both the peer review process and the public dissemination of research findings. Lived experience advisers or service user researchers should be included in the authorship of documents, briefings, and research papers arising from the research. This would promote accessibility, transparency, and collaboration for the public, the academic community, and other interested groups, in accordance with the Open Science Framework.

Conclusions

Gaining informed consent for social media data access to study youth mental health has the potential for significant benefits in public mental health. Data collected via social media platforms provide us with a unique opportunity to gather vital insights into participants' actions and activities. This unparalleled access will help researchers understand the intricate social constructs of user interactions, perceptions, mental state and health.

At present, the poorly defined notion of "enhancing user experience" is the main criterion that social media platforms apply when deciding whether to grant access. However, accredited researchers' use of social media platform data does not usually improve the user "experience" in the commercial sense; rather, it has the potential for wider public benefits that are unlikely to be of primary interest to social media platforms.

Harmful and negative content is a global problem, and one step toward tackling it is to give researchers access to the data they need to understand it. It is important that we unlock the potential of social media data for research and leverage these data for societal good. We hope this framework will act as a "call to action" that encourages social media platforms, policymakers, and researchers to work collaboratively toward positive change.

Data availability statement

Publicly available datasets were analyzed in this study. As this is a Perspective article, the original contribution relates to https://gtr.ukri.org/projects?ref=MR%2FS020365%2F1.

Author contributions

DL, AB, BC, KT, SB-F, ML, AW, TF, and RD contributed to the conception and planning of this Perspective article. DL and RD wrote the first draft of the manuscript. All authors contributed sections of the manuscript according to their expertise, contributed to editing and revision, and approved the submitted version.

Funding

DL was partly funded by the Forces in Mind Trust (Project number: FIMT/0323KCL), a funding scheme run by the Forces in Mind Trust using an endowment awarded by the National Lottery Community Fund. AB, BC, KT, ML, and RD are partly funded by the Medical Research Council (Grant award: MR/S020365/1) and the Medical Research Foundation. BC was also partly funded by the Nuffield Trust. ML was partly funded by the Engineering and Physical Sciences Research Council (Grant award: EP/V030302/1) and the Alan Turing Institute. DO receives funds from the National Institute for Health Research (NIHR127408 1221154), the Medical Research Council (MR/W002493/1), and Barts Charity. AO was partly funded by the Medical Research Council (Grant award: SWAG/076.G101400), the Jacobs Foundation, and an Emmanuel College Research Fellowship. TF receives MRC and NIHR funding and is supported by the NIHR Applied Research Centre for the East of England and the Cambridge Biomedical Research Centre. RD was also funded by a Clinician Scientist Fellowship from the Health Foundation in partnership with the Academy of Medical Sciences, and her work is supported by the National Institute for Health Research (NIHR) Biomedical Research Centre at South London and Maudsley NHS Foundation Trust and King's College London.

Conflict of interest

AW was Chair of the Board of the American Association of Suicidology. DO was a Board member of the Association for Child and Adolescent Mental Health. TF consults to Place2Be, a third-sector organization that provides mental health services to schools, and is Vice Chair of the Association for Child and Adolescent Mental Health.

The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher's note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

References

1. Luxton DD, June JD, Fairall JM. Social media and suicide: a public health perspective. Am J Public Health. (2012) 102(Suppl 2):S195–200. doi: 10.2105/AJPH.2011.300608

2. Sedgwick R, Epstein S, Dutta R, Ougrin D. Social media, internet use and suicide attempts in adolescents. Curr Opin Psychiatry. (2019) 32:534–41. doi: 10.1097/YCO.0000000000000547

3. Coppersmith G, Ngo K, Leary R, Wood A. “Exploratory analysis of social media prior to a suicide attempt.” In: Proceedings of the 3rd Workshop on Computational Linguistics and Clinical Psychology. (2016). doi: 10.18653/v1/W16-0311

4. Paul K. Facebook whistleblower's testimony could finally spark action in Congress. Guardian Online (2021). Available online at: https://www.theguardian.com/technology/2021/oct/05/facebook-frances-haugen-whistleblower-regulation (accessed October 15, 2021).

5. Mahase E. Social media companies should be forced to share data for harms and benefits research, say psychiatrists. BMJ. (2020) 368:m209. doi: 10.1136/bmj.m209

6. Mancosu M, Vegetti F. What you can scrape and what is right to scrape: A proposal for a tool to collect public Facebook data. Soc Media Soc. (2020) 6:2056305120940703. doi: 10.1177/2056305120940703

7. Dwivedi YK, Ismagilova E, Hughes DL, Carlson J, Filieri R, Jacobson J, et al. Setting the future of digital and social media marketing research: Perspectives and research propositions. Int J Informat Manage. (2021) 59:102168. doi: 10.1016/j.ijinfomgt.2020.102168

8. Kelly DL, Spaderna M, Hodzic V, Coppersmith G, Chen S, Resnik P. Can language use in social media help in the treatment of severe mental illness? Curr Res Psychiatry. (2021) 1:1–4. doi: 10.46439/Psychiatry.1.001

9. Rieger A, Gaines A, Barnett I, Baldassano CF, Connolly Gibbons MB, Crits-Christoph P. Psychiatry outpatients' willingness to share social media posts and smartphone data for research and clinical purposes: Survey study. JMIR Form Res. (2019) 3:e14329. doi: 10.2196/14329

10. Parry DA, Davidson BI, Sewall CJR, Fisher JT, Mieczkowski H, Quintana DS. A systematic review and meta-analysis of discrepancies between logged and self-reported digital media use. Nat Hum Behav. (2021) 5:1535–47. doi: 10.31234/osf.io/f6xvz

11. Huckvale K, Venkatesh S, Christensen H. Toward clinical digital phenotyping: a timely opportunity to consider purpose, quality, and safety. NPJ Digit Med. (2019) 2:88. doi: 10.1038/s41746-019-0166-1

12. Online Harms White Paper: Full Government Response to the Consultation. London (2020). Available online at: https://www.gov.uk/government/consultations/online-harms-white-paper/outcome/online-harms-white-paper-full-government-response

13. Draft Online Safety Bill Part 4. OFCOM's powers and duties in relation to regulated services, Chapter 7 - Committees, research and reports, section. (2021).

Keywords: social media, data protection, research ethics, risk, Facebook

Citation: Leightley D, Bye A, Carter B, Trevillion K, Branthonne-Foster S, Liakata M, Wood A, Ougrin D, Orben A, Ford T and Dutta R (2023) Maximizing the positive and minimizing the negative: Social media data to study youth mental health with informed consent. Front. Psychiatry 13:1096253. doi: 10.3389/fpsyt.2022.1096253

Received: 11 November 2022; Accepted: 16 December 2022;

Published: 10 January 2023.

Edited by:

Chao Guo, Peking University, China

Reviewed by:

Elaine Stasiulis, Rotman Research Institute (RRI), Canada

Copyright © 2023 Leightley, Bye, Carter, Trevillion, Branthonne-Foster, Liakata, Wood, Ougrin, Orben, Ford and Dutta. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Rina Dutta, rina.dutta@kcl.ac.uk
