- College of Law, Prince Sultan University, Riyadh, Saudi Arabia

ChatGPT empowers instructors to provide interactive, individualized attention and to enhance student engagement. It can also help instructors understand their learners so that teaching materials and assessments can be contextualized. ChatGPT can enrich the learning experience, motivate learners, and improve academic performance. No previous study in Saudi Arabia has surveyed law educators on their intention to use ChatGPT. To fill this gap, this research investigated the intention of law educators to use ChatGPT. To achieve the research objective, the researcher used a survey method to collect information from law educators in Saudi Arabia. The research revealed that law educators are likely to use ChatGPT in legal education, as the constructs of performance expectancy, effort expectancy, social influence, facilitating conditions, and behavioral intention were found to be significant. The findings have policy, practical, and theoretical implications. They can be used to understand the factors that influence ChatGPT adoption by law educators. Accordingly, teaching and learning policies can be strengthened, and learning institutions can introduce training on the proper and acceptable use of ChatGPT in legal education. The research also extends the technology adoption model to the intention to use ChatGPT among law educators in a developing country.

1 Introduction

ChatGPT (Chat Generative Pre-trained Transformer) is a conversational artificial intelligence chatbot. Its release created a storm of reactions, both positive and negative. There have been many positive responses to its use in various fields, including education. Others are cautious about its use in education, as they fear possible abuses. There are concerns about privacy, personality, manipulation, and ethics (McCallum, 2023; Eke, 2023; Asad et al., 2024). ChatGPT can enhance traditional teaching methods through student engagement and machine-aided intelligent learning. It provides an enhanced learning platform that could improve the learning experience and support students' academic performance. It can also empower instructors to provide interactive, individualized attention (Chiu, 2024; García-Peñalvo, 2023).

Given adequate and appropriate input, ChatGPT is an interactive intelligent chatbot that can answer questions with correct responses (Clarizia et al., 2018). The size and accuracy of the underlying database determine the reliability of its responses (Aleedy et al., 2022). ChatGPT has been tested on medical, law, and business school examinations, and its performance was found to be impressive (Terwiesch, 2023; Choi et al., 2023). AI has been a significant research area since the 1980s (Williamson et al., 2020), and ChatGPT has had a profound effect on education (Zhou et al., 2023). However, technology alone cannot revolutionize the education sector; the learning philosophy must also change to achieve the desired benefits of the technology (Castañeda and Selwyn, 2018).

When learning is planned around technology, the technology can support it. Learners may be recipients of technology services, they may collaborate with technology, or technology may empower them to take control of their learning and collaborate with other stakeholders (Ouyang and Jiao, 2021; Xia et al., 2022). Whichever role the technology plays, the learners, the context of use, the assigned tasks, the pedagogical methods, the level of integration, and the application of the technology need to be considered (Zheng et al., 2021). Doing so could enhance learning, motivate learners, and improve academic performance. ChatGPT can also assist students with disabilities. Studies showed that AI-based teaching improved comprehension among students with dyslexia (Llorente et al., 2021) and enhanced learning among autistic students (Zhang et al., 2022). The technology could help control students' anxiety, inculcate a positive attitude toward learning, and improve skills (Jaiswal and Arun, 2021; Alotaibi et al., 2025). It could also build teaching competence through inspiration and self-reflection (Aldeman et al., 2021). Instructors would be able to monitor students' performance and take diagnostic steps to reduce dropout rates, and a rich database could be built to predict students' performance, behaviors, and related information.

However, caution should be exercised, as ChatGPT could diminish critical thinking and innovation capacities. It could also affect educational quality, as students may outsource their work to ChatGPT, facilitating cheating and plagiarism. ChatGPT's answers depend on the data it was trained on; where a subject is poorly represented in that data, it could provide inaccurate information. It is used to answer questions, generate ideas, content, and stories, solve problems, and create music. Because it is designed to be interactive, ChatGPT can also create social interaction. ChatGPT need not make classical skills obsolete; paradoxically, it could improve skills related to critical thinking, problem-solving, and critical analysis.

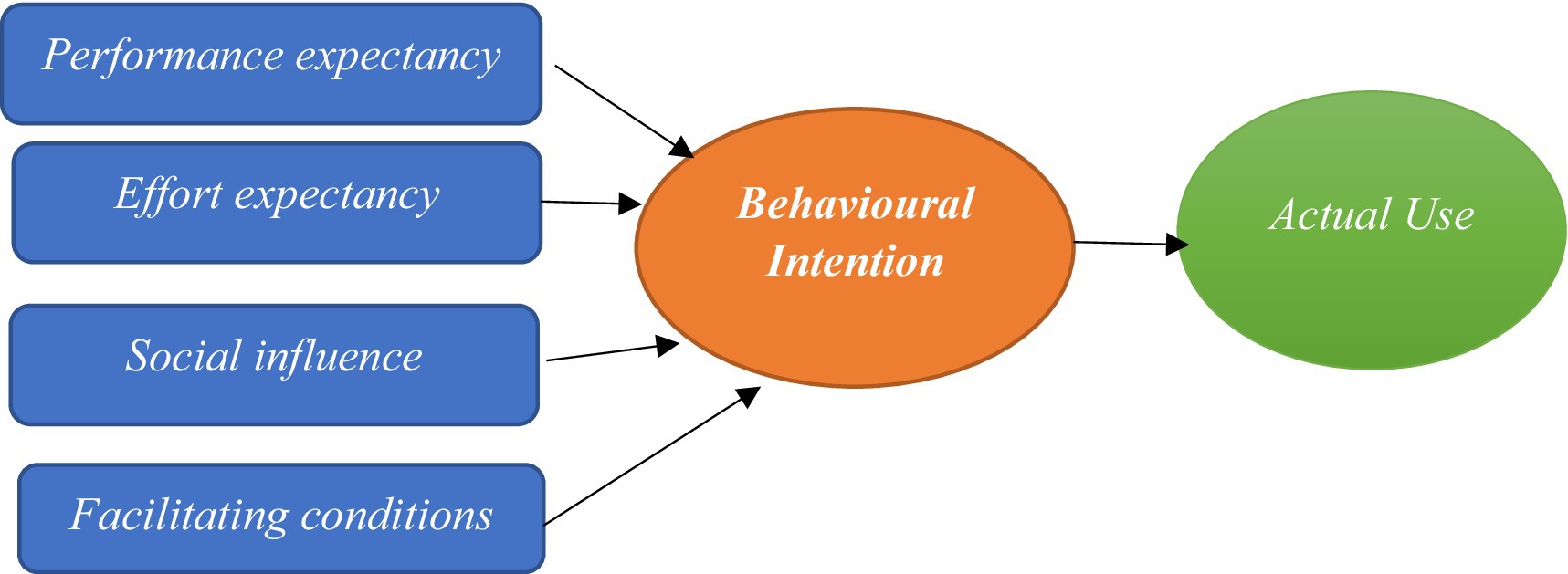

Considering the positive and negative sentiments in the literature, studying law educators' intention to use ChatGPT will be beneficial. The objective of this research was to collect data on the intention to use ChatGPT among law educators. Research in this area is new, as no study has examined this question among law educators in Saudi Arabia, so this research could contribute to existing knowledge. The research employed the Unified Theory of Acceptance and Use of Technology (UTAUT) model to investigate the intention to use and actual use of ChatGPT among law educators. The UTAUT model was introduced in 2003, and since then, over 800 research papers have employed it in various technology adoption studies (Morsi, 2024). The model is considered complete since it covers all the variables of technology adoption (Venkatesh et al., 2011), and it is the most widely accepted model in technology adoption research (Rodrigues et al., 2016; Raffaghelli et al., 2022; Rodrigues et al., 2024). It can also investigate technology adoption among users with dissimilar personal characteristics, such as age and IT skills. Therefore, the research employed the UTAUT model to achieve the research objective.

The remainder of the article is organized as follows. Section 2 presents the literature review and hypothesis development. Section 3 explains the method used. Section 4 presents the results of the survey. Section 5 discusses the policy, practical, and theoretical implications of the findings. Section 6 covers the conclusion, limitations, and future research directions.

2 Literature review and hypotheses development

2.1 ChatGPT and education

The concept of robots was introduced in 1921 (Capek, 1921). In the 1940s, the Three Laws of Robotics were proposed as a protocol for machine behavior, and Turing (1950) later examined whether machines can think. ChatGPT is considered revolutionary because it uses natural language processing (NLP) and deep learning to create human-like interaction with users. ChatGPT can answer questions, correct mistakes, and reject inappropriate requests. It could transform the education sector through innovative AI technology. The literature reports various benefits of ChatGPT in education (Firat, 2023; Susnjak et al., 2022; Zhai, 2022); some studies examined its benefits and proposed guidelines for its use in classrooms (Mollick and Mollick, 2022; Firat, 2023; Susnjak et al., 2022), while others discussed possible concerns about chatbots (Janssen et al., 2021).

ChatGPT, like any other technology, has positive and negative attributes; however, its potential for education is considerable (Kasneci et al., 2023; King, 2023). ChatGPT could be used to prepare course specifications, lesson plans, and assessments, reducing educators' workload, and to provide feedback to students. An investigation by Tlili et al. (2023) showed that ChatGPT could create a paradigm shift in course delivery. The literature contends that ChatGPT offers five main advantages to the education sector: help in creating outlines, brainstorming, learning assessment, enhancement of pedagogy, and virtual tutoring (Kasneci et al., 2023; King, 2023; Stokel-Walker and Van Noorden, 2023). On creative writing, Baidoo-Anu and Ansah (2023) confirmed that ChatGPT could help, and they offered suggestions to overcome its negative impact. Accordingly, educators could opt for open-ended questions and introduce semi-automated evaluation of students' work using ChatGPT. These approaches could help identify both the weaknesses and strengths of students in the assigned task and support tailor-made assessments in the future (Kasneci et al., 2023). Cotton et al. (2024) also suggested formative assessment that includes discussion, debate, teamwork, and presentation. Lo (2023) conducted a rapid review of the literature on ChatGPT and found that it could help with assessment, although caution is needed to achieve the desired learning outcomes. ChatGPT could thus serve as an aid in setting assessments. Students can use ChatGPT for brainstorming and for defining ideas and applications, as well as for questioning and reasoning with its answers (Gilson et al., 2023).

Research applying social exchange theory (Cook et al., 2013), Levinger's ABCDE model (Croes and Antheunis, 2021), and social penetration theory (SPT) (Altman and Taylor, 1973) found that ChatGPT can improve social relationships and enhance learning. Additionally, virtual relationships could be created using virtual assistants (Hudlicka, 2016). Durall and Kapros (2020) suggested that chatbot developers should consider inclusion, usability, ease of use, ethics, and best practices; Tlili et al. (2023) showed that these considerations were not fully adopted in ChatGPT. According to Kuhail et al. (2023), technology used for education should be user-centric and should consider factors such as emotion, cognition, and pedagogy. The efficacy of ChatGPT was demonstrated when it passed four separate law exams at the University of Minnesota Law School (Choi et al., 2021). ChatGPT was also tested on the US medical licensing exam and met the minimum passing requirement (Gilson et al., 2023).

Kung et al. (2023) and Jalil et al. (2023) used ChatGPT to solve software-related problems and found the answers to be correct or partially correct. Malinka et al. (2023) studied ChatGPT in computer programming and found it usable. Rahman and Watanobe (2023) showed that ChatGPT could easily be used for lesson planning, answering questions, personal support, quick assessment evaluation, language improvement, and research. They surveyed postgraduate students about their programming experiences with ChatGPT and found that the students rated it between 1/5 and 3/5. The instructors surveyed supported the use of ChatGPT in teaching programming and were also satisfied with its use in research.

Hosseini et al. (2023) studied the intended use of ChatGPT among health care educators. The results showed that medical and research trainees were willing to use ChatGPT for education but not for other purposes, while the other medical professionals who participated were neutral about using ChatGPT for medical and educational purposes. The research further revealed that ChatGPT is a great help to those with language difficulties and could be used to summarize existing texts and produce first drafts. It could also be used to write clinical notes, centralize and organize patient records, and flag areas needing improvement. ChatGPT could improve documentation efficiency, since automatically populating forms and records into clinical and patient notes could save considerable time and effort.

Policies should therefore be introduced to explain acceptable use so that miscommunication can be avoided. Participants who had used the technology before were positive about ChatGPT, whereas those who had not used it had more concerns. Extra caution should be taken to ensure that wrong or inaccurate data are not entered into the training data set, as they could lead to incorrect information. Bidirectional communication with expert users is also recommended to help refine the output. Since ChatGPT is not available in some countries, feedback from researchers in those countries is unavailable, so the data could be biased toward certain locations.

After analyzing 18 articles, Mhlanga (2023) found some concerns among educators and emphasized including guidance on ethical use rather than banning ChatGPT. Similarly, Sallam (2023), after reviewing 60 articles on ChatGPT, argued that users have concerns regarding plagiarism, inaccuracy, and authenticity. The negative effects of using ChatGPT should be mitigated to encourage its adoption.

The literature on the negative impact of ChatGPT discusses violations of user privacy, as conversations are usually stored; necessary steps should therefore be taken to prevent the misuse of private information (Wu et al., 2024). Another negative impact discussed in the literature is incorrect citation or bibliographic information (Walters and Wilder, 2023). There are also concerns about accuracy, such as inaccurate code and the inability to detect errors (Megahed et al., 2024). Another potential concern is bypassing plagiarism detection, as software like Turnitin may not capture all ChatGPT-generated content (Ventayen, 2023). Bašić et al. (2023) found that students who used ChatGPT were more likely to commit plagiarism than others. Mbakwe et al. (2023) stated that bias could arise because the research and datasets behind ChatGPT are based on the West, and other cultures were left out during development. This could affect the answers and their accuracy and introduce bias. To mitigate these biases, the output should be proofread, reviewed by experts, and edited. Halaweh (2023) suggested erring on the side of disclosure and transparency, since AI-generated texts are difficult to detect.

Due to ethical, privacy, and other concerns, some countries banned the use of ChatGPT, while organizations such as the European Consumer Organization (BEUC) cautioned against its use because of its potential for manipulation and deception (McCallum, 2023). Jobin et al. (2019) suggested including transparency, nonmaleficence, privacy, justice, fairness, responsibility, freedom, autonomy, beneficence, dignity, trust, solidarity, and sustainability in the ethical ecosystem of technology. Eke (2023) investigated possible ethical issues in the use of ChatGPT and found that accuracy, reliability, bias, privacy, lack of human interaction, technology dependency, plagiarism, transparency, and accountability were relevant.

In the regulatory sphere, the European Union's proposed law on AI classifies AI systems according to their level of risk. For example, applications that pose an unacceptable risk, such as government social scoring, are prohibited, while high-risk applications, such as CV-scanning tools that rank job applicants, are subject to specific legal requirements (European Commission, 2021). The regulation is timely, as commentators have predicted adverse effects of AI chatbots on democracy (Cowen, 2023) and employment (Krugman et al., 2022). Although the emergence of text generation technology will not make text-based assessment obsolete, such assessment should be made challenging through critical analysis and proper disclosure (Miernicki and Ng, 2021).

2.2 Technology acceptance model for ChatGPT adoption in education

The UTAUT model, developed by Venkatesh et al. (2003), is considered a synthesis that unifies eight theories: the Theory of Reasoned Action (TRA) (Ajzen and Fishbein, 1977), the Technology Acceptance Model (TAM) (Davis, 1989), the Theory of Planned Behavior (TPB) (Ajzen, 1991), the Model of PC Utilization (MPCU) (Thompson et al., 1991), the Motivational Model (MM) (Davis et al., 1992), the combined TAM and TPB (C-TAM-TPB) (Taylor and Todd, 1995), Innovation Diffusion Theory (IDT) (Rogers, 2003), and Social Cognitive Theory (SCT) (Williams et al., 2015). Technology adoption has also been tested through other models, but the UTAUT model could be considered complete since it covers all the variables in those models (Venkatesh et al., 2011). The literature suggests that UTAUT is the most predictive model (Rodrigues et al., 2016) and that it can examine technology adoption among users with dissimilar personal characteristics, such as age and IT skills (Wang and Shih, 2009). UTAUT comprises four key predictors of behavioral intention and use behavior, together with four moderator variables that condition the strength of those relationships. The four predictors are:

Performance Expectancy: This refers to the degree to which a user believes that using a technology or innovation will help achieve better results. This construct is believed to be the strongest predictor of intention to use (Venkatesh et al., 2003; Venkatesh and Davis, 2000). Therefore, Hypothesis 1 reads as:

H1: Performance expectancy can positively influence behavioral intention to use ChatGPT in education.

Effort Expectancy: This is defined as the degree of ease in the use of the technology, and studies suggested that this construct is also significant in determining intention to use the technology (Venkatesh et al., 2003; Davis, 1989; Moore and Benbasat, 1991). The ease of use of new technologies not only predicts intention to use but also could provide satisfaction in the use of technology (Morsi, 2024). Accordingly, Hypothesis 2 states:

H2: Effort expectancy can positively influence behavioral intention to use ChatGPT among law educators.

Social Influence: This is defined as peer influence on the use of a certain technology or system. It is promoted as one of the strongest predictors of intention (Ajzen, 1991; Venkatesh and Davis, 2000; Rogers, 2003; Venkatesh et al., 2003). Chau and Hu (2002) found it to be nonsignificant in a voluntary context; however, it is significant when technology use is mandated (Venkatesh et al., 2003). Social influence operates through users' social circles (Goldstein and Cialdini, 2011), leading users to adopt products and services that reflect social norms and their identities (Choi et al., 2018). Accordingly, Hypothesis 3 states that:

H3: Social influence can positively influence behavioral intention to use ChatGPT.

Facilitating Conditions: This is considered a significant predictor of the intention to use technology (Moore and Benbasat, 1991; Thompson et al., 1991; Teng et al., 2024). According to Venkatesh et al. (2003), facilitating conditions capture users' perception of the technical and organizational resources available to support their use of technology. Nonetheless, this construct becomes insignificant in predicting intention when performance expectancy and effort expectancy are present in the model (Venkatesh et al., 2003). Based on the above literature, Hypothesis 4 reads as:

H4: Facilitating conditions have a significant influence on behavioral intention to use ChatGPT among law educators.

According to Ajzen (1991), the intention to use predicts actual use behavior. Behavioral intention is the readiness to perform a certain behavior; the stronger the intention, the stronger the will to perform that behavior. According to Venkatesh et al. (2003), the presence of all four predictors will greatly influence behavioral intention (BI) and technology use. Studies have also shown that users' intention to continue using a particular system depends on their satisfaction with various conditions (Hidayat-ur-Rehman et al., 2021; Wu et al., 2022). The potential moderating factors are gender, age, experience, and voluntariness of use; they may alter the strength or direction of the relationship between the predictors and the outcome variables (Sharma et al., 1981). As such, Hypothesis 5 states that:

H5: Behavioral intention to use ChatGPT in education will have a significant influence on the actual use of ChatGPT in legal education.

3 Methodology

This research used a survey method with items from the UTAUT model as developed by Venkatesh et al. (2003). Numerous studies have adopted the same measurement items with some modification. During the research development process, some items were removed because they were not relevant to the objective of the current study. The instrument covers five major UTAUT variables, each measured with a few items. The performance expectancy variable has four items: the usefulness of ChatGPT, information collection, overall productivity, and the ability to increase information and efficiency. Effort expectancy also has four items: clear and understandable interaction, ease of learning, ease of use, and ease of operation. The third variable, social influence, has four items, and the fourth variable, facilitating conditions, also has four items, relating to the availability of resources, having the necessary knowledge, technology compatibility, and support from others. The final variable, behavioral intention, has three items relating to the intention to use ChatGPT frequently in education in the future. The questionnaire is provided in Appendix A. As suggested by Churchill (1979), three steps were taken to validate the measurement items and the survey questionnaire. The validated survey questionnaire from Venkatesh et al. (2003) was used after its suitability for the current research was assessed. A pilot test with seven experienced educators was conducted to ensure that the questions conveyed the intended meaning, and the experts' opinions helped remove ambiguity from the questions. The target population was law educators who used or intended to use ChatGPT in education (Figure 1).
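To make the structure of the instrument concrete, the sketch below encodes the five constructs and their item counts as described above (four items each for performance expectancy, effort expectancy, social influence, and facilitating conditions; three for behavioral intention, 19 items in total). Averaging a respondent's items into one construct score is an assumption introduced here for illustration; the study itself reports item-level percentages computed in Excel. The construct codes, the function name `construct_scores`, and the example answers are hypothetical, not survey data.

```python
from statistics import mean

# Item counts per UTAUT construct as described in the methodology.
# The two-letter construct codes are hypothetical labels.
ITEMS_PER_CONSTRUCT = {
    "PE": 4,  # performance expectancy
    "EE": 4,  # effort expectancy
    "SI": 4,  # social influence
    "FC": 4,  # facilitating conditions
    "BI": 3,  # behavioral intention
}


def construct_scores(response):
    """Average one respondent's 1-5 Likert answers within each construct.

    `response` maps a construct code to the list of that respondent's
    item scores. Raises ValueError if an item count or score is invalid.
    """
    scores = {}
    for construct, n_items in ITEMS_PER_CONSTRUCT.items():
        items = response[construct]
        if len(items) != n_items:
            raise ValueError(f"{construct}: expected {n_items} items, got {len(items)}")
        if any(not 1 <= x <= 5 for x in items):
            raise ValueError(f"{construct}: scores must lie between 1 and 5")
        scores[construct] = mean(items)
    return scores


# Hypothetical respondent (illustrative values only, not survey data).
example = {
    "PE": [5, 4, 5, 4],
    "EE": [5, 5, 4, 4],
    "SI": [4, 3, 4, 4],
    "FC": [4, 4, 5, 3],
    "BI": [5, 4, 5],
}
print(construct_scores(example))
```

Validating the item count per construct guards against misaligned spreadsheet columns, a common source of error when questionnaire exports are reshaped by hand.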

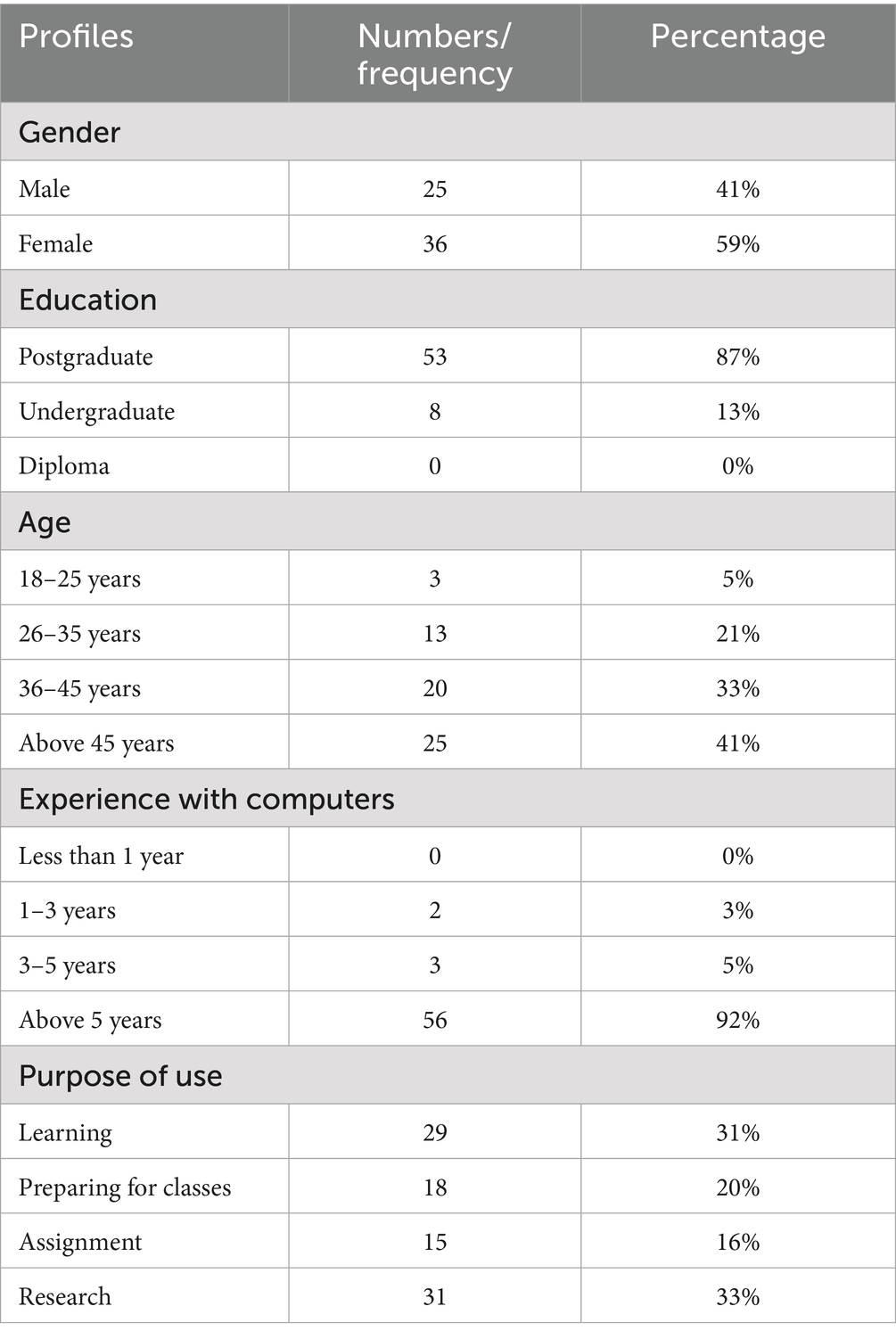

Participants were selected using convenience sampling, as no official published registry of residents is available (Shah Alam et al., 2024; Fatma and Khan, 2023). They were drawn from those who teach law and have experience using the internet and technology. Males and females of different nationalities, educational levels, and age groups were contacted. The selected participants were reached online and informed about the purpose of the survey. The researcher obtained participants' consent; responses were anonymous, and participants could leave the survey at any time. Participants were given 3 months to complete the survey, and a reminder was sent after 1 month. After 3 months, the researcher had received 70 responses, of which 61 were complete; the nine incomplete surveys were removed.

The questionnaire was closed-ended and used a five-point Likert scale, where "1" was "strongly disagree" and "5" was "strongly agree," with items adapted from the original UTAUT model. It had two parts: the first included demographic questions, and the second consisted of questions on ChatGPT adoption in law education. The second part was divided into five areas corresponding to the UTAUT components: performance expectancy, effort expectancy, social influence, facilitating conditions, and behavioral intention. The researcher used Microsoft Excel for the analyses, as it was sufficient for the research objective, and applied descriptive analysis, which was adequate to achieve the objective and to provide practical and policy suggestions that encourage adoption of ChatGPT by legal educators.
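The descriptive analysis reported in the results consists mainly of the share of respondents at each scale point and the combined "agreed or strongly agreed" share for an item. The following Python sketch reproduces that kind of tabulation as an alternative to the Excel workflow the study used. Only the labels for points 1 and 5 come from the text; the labels for points 2 to 4, the function names, and the ten example answers are assumptions for illustration, not survey data.

```python
from collections import Counter

# Scale-point labels. Points 1 and 5 follow the questionnaire;
# the labels for 2, 3 and 4 are assumed here.
LIKERT_LABELS = {
    1: "strongly disagree",
    2: "disagree",
    3: "neutral",
    4: "agree",
    5: "strongly agree",
}


def likert_distribution(answers):
    """Percentage of answers falling on each point of a 1-5 Likert scale."""
    if not answers:
        raise ValueError("no answers to summarize")
    if any(a not in LIKERT_LABELS for a in answers):
        raise ValueError("answers must be integers from 1 to 5")
    counts = Counter(answers)  # missing points count as 0
    n = len(answers)
    return {point: 100 * counts[point] / n for point in LIKERT_LABELS}


def agree_or_strongly_agree(answers):
    """Combined percentage of 'agree' (4) and 'strongly agree' (5) answers."""
    dist = likert_distribution(answers)
    return dist[4] + dist[5]


# Hypothetical answers from ten respondents to a single item (not survey data).
item = [5, 5, 4, 4, 4, 3, 2, 5, 4, 1]
for point, pct in likert_distribution(item).items():
    print(f"{LIKERT_LABELS[point]:>17}: {pct:.1f}%")
print(f"agree or strongly agree: {agree_or_strongly_agree(item):.1f}%")
```

With 61 usable responses, each respondent moves an item's percentage by about 1.6 percentage points, which is worth keeping in mind when comparing reported shares across items.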

4 Results and discussion

The survey was distributed to 100 potential respondents who teach various law subjects, have varying backgrounds, and work in Riyadh. The researcher received 70 responses, of which 61 were complete and usable. The demographic data showed that 41% of the respondents were male and 59% were female. Of the respondents, 87% had postgraduate qualifications, as many institutions in Saudi Arabia require a master's degree or higher for appointment as educators in colleges and universities, whereas a bachelor's degree is the minimum required for appointment as a teacher. Many of the participants were above 26 years old, which helped the researcher collect data from educated, knowledgeable, and active educators. Computer knowledge and use are important for using ChatGPT, and 92% of the respondents had used computers for more than 5 years. The respondents used ChatGPT for various purposes, namely learning, class preparation, assignments, and research, and the majority of them had used it for research. The descriptive analysis is presented in Table 1.
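The sample counts reported above (100 invitations, 70 responses, 61 complete) can be restated as rates. The labels below are descriptive names chosen here; the calculation uses only the counts given in the text.

```latex
\begin{aligned}
\text{response rate} &= \frac{70}{100} = 70\% \\
\text{share of responses that were complete} &= \frac{61}{70} \approx 87.1\% \\
\text{usable responses relative to invitations} &= \frac{61}{100} = 61\%
\end{aligned}
```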

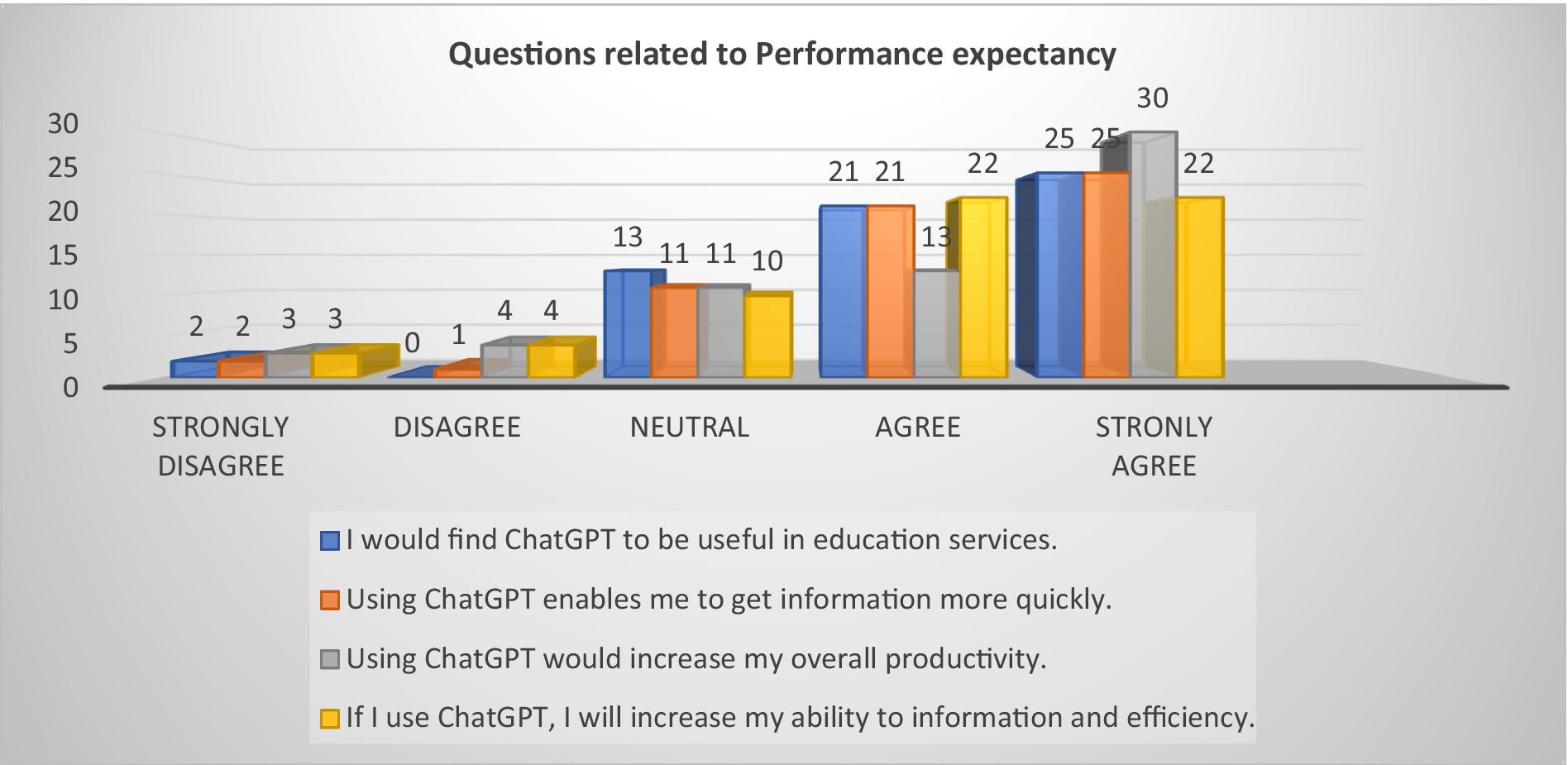

The results of the analyses show that H1, H2, H3, H4, and H5 are supported and that all the constructs contributed to the possible adoption of ChatGPT in education. H1 (performance expectancy) comprises questions on usefulness, efficiency, and productivity, and it was supported. Performance expectancy has been shown to be an important factor in technology adoption in both developing and developed nations (Venkatesh et al., 2003; Carter and Belanger, 2004; Chen et al., 2023). The higher the score on this construct, the higher the likelihood of using ChatGPT. The survey showed that 42% of the respondents strongly agreed with all four items within the construct, and 32% agreed with all four items. In total, 64% of respondents confirmed that performance expectancy is an important construct in ChatGPT use in legal education. The detailed responses are provided in Figure 2.

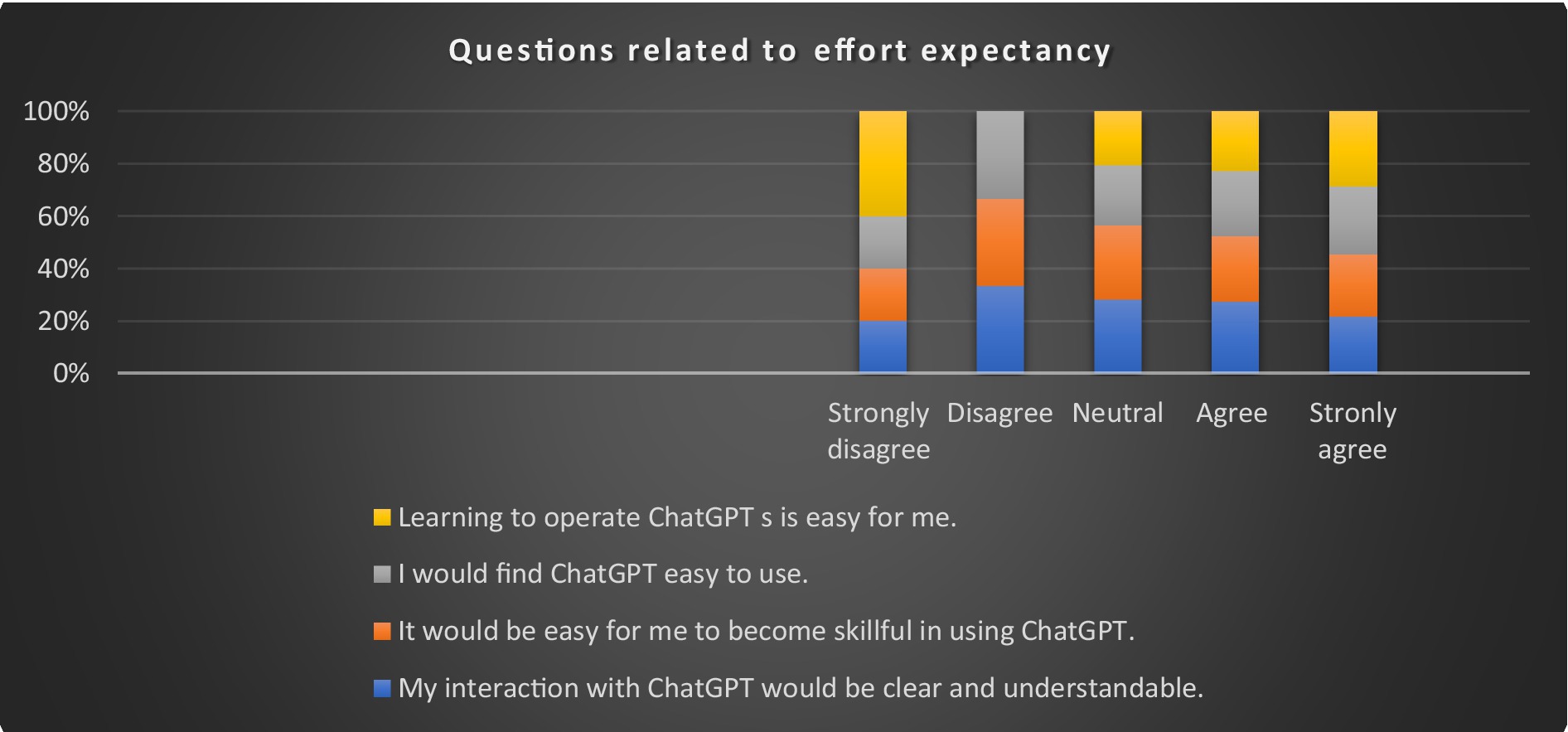

H2 (effort expectancy) is another important construct in the technology adoption model. Effort expectancy was tested to understand perceptions of ChatGPT's ease of use, that is, the simplicity of the system that could facilitate its use and adoption. The results showed that familiarity with ChatGPT is high, as many respondents were computer savvy. Participants perceived ChatGPT as easy to learn and use: about 48% strongly agreed that it is easy to learn, and 43% strongly agreed that it is easy to use. The majority also agreed that it is easy to become skilled in ChatGPT and that it is understandable. As research has shown that effort expectancy is important to the adoption of new technology, educators are expected to find ways to use ChatGPT in education. This finding is similar to those of other technology adoption studies (Wu et al., 2023), and research suggests that those who find a technology easy to use will eventually adopt it (Venkatesh et al., 2003; Chen et al., 2023). Figure 3 provides detailed results on effort expectancy.

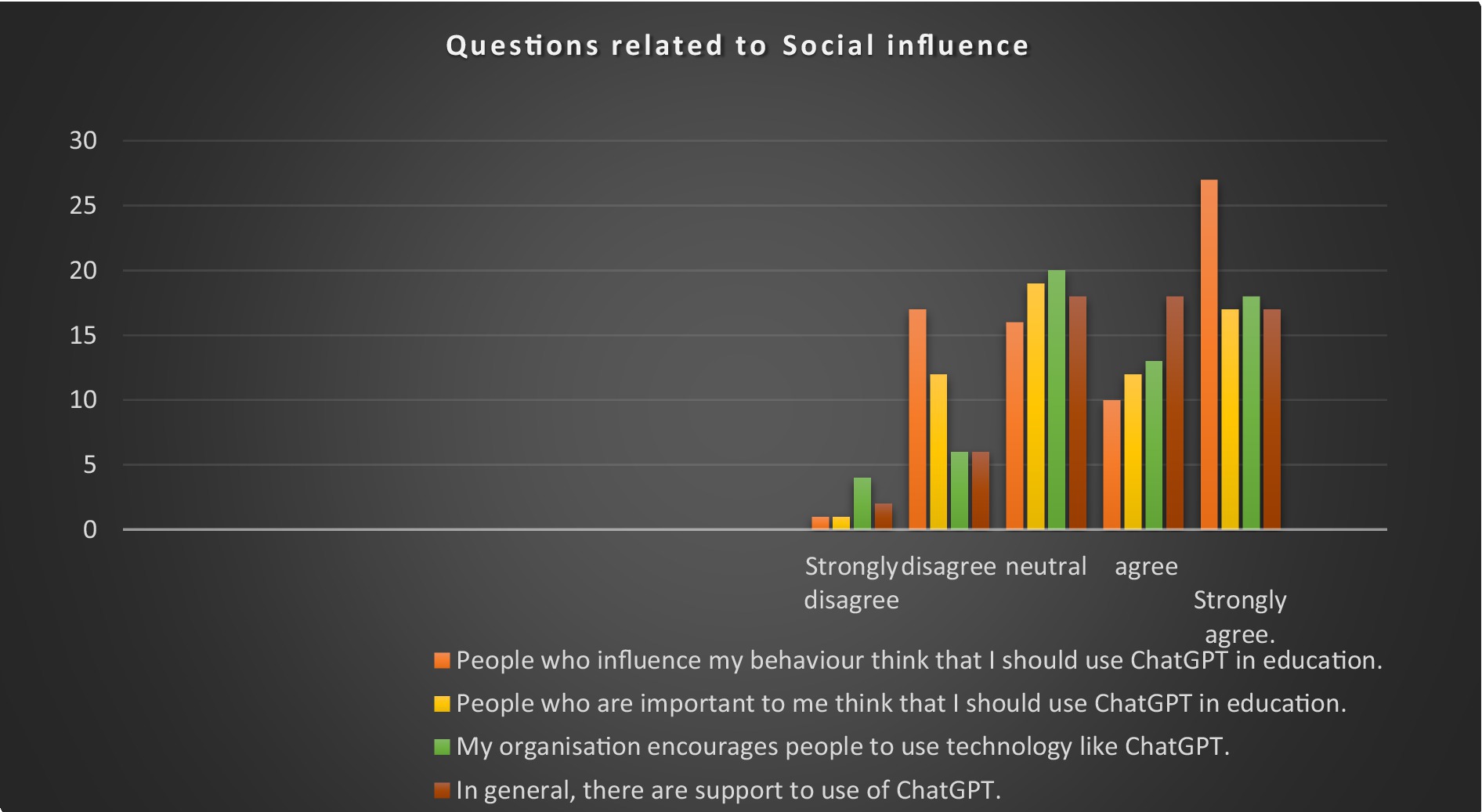

H3 (social influence) includes social factors, norms, and image that tend to influence the intention to use technology. The author tested this construct to assess its influence on the behavioral intention to use ChatGPT in education. The findings revealed that 61% of respondents agreed that others' influence on their behavior is significant in using ChatGPT, 48% stated that people important to them influence their use of ChatGPT, and 51% agreed or strongly agreed that their organization supports the use of ChatGPT in education. In addition, 57% of respondents agreed that they have support to use ChatGPT in education. This finding on social influence is similar to those of Yu (2012), who studied mobile banking adoption, and Wu et al. (2023), who assessed the adoption of medical self-service terminals among the elderly. However, some research in areas like e-government showed that social influence is insignificant in the voluntary adoption of technology (Kim et al., 2015; Mansoori et al., 2018). The detailed results are presented in Figure 4.

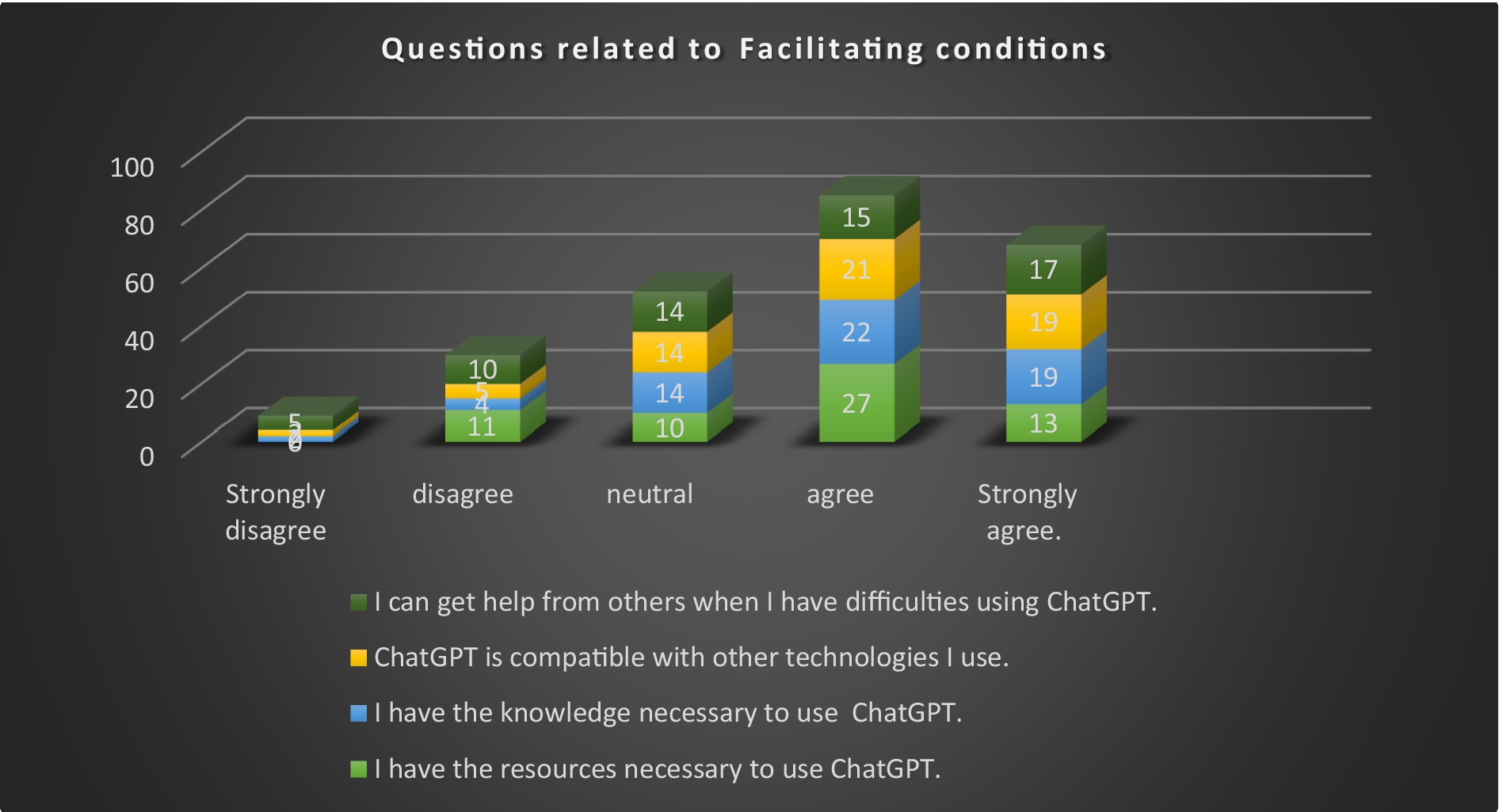

The H4 (facilitating conditions) construct is also known as perceived behavioral control or adoptability of innovation (Davis, 1989) and is considered important in adopting a technology. Facilitating conditions were tested to assess the availability of resources, knowledge, support, and compatibility of the technology, as these factors appear to be significant in predicting the behavioral intention to adopt technology. The results revealed that respondents are positive about the availability of necessary resources: 66% either strongly agreed or agreed that they have access to resources. The respondents also appear knowledgeable in using ChatGPT effectively, as 87% of the participants have a postgraduate qualification and many years of experience with technology, which made it easier for them to adopt ChatGPT. In addition, 66% agreed that ChatGPT is compatible with other technology, allowing users to work with their existing technology without upgrading their technological assets, and 52% confirmed that they would be able to get help from others if they faced difficulties. The finding is consistent with those of Alsharif et al. (2013) and Frans and Pather (2022). The detailed results are presented in Figure 5.

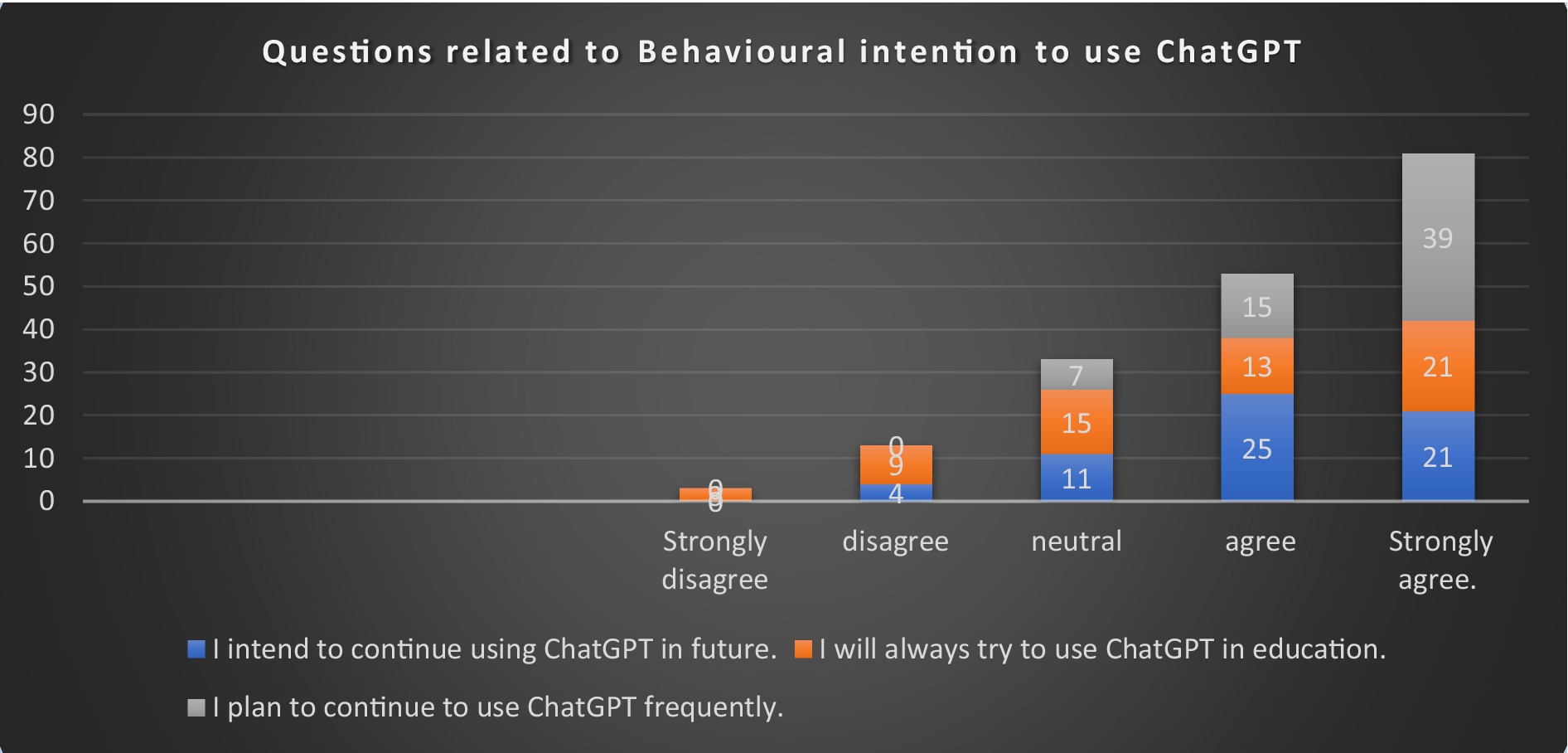

Davis (1989) explains that behavioral intention is an individual's readiness to perform a certain activity or behavior. When individuals have a stronger intention to act, the likelihood of performance is high (Ajzen, 1991), so a positive intention to use ChatGPT is expected to lead to actual use. The survey showed that 75% of the respondents planned to use ChatGPT in the future, and 89% intended to use it frequently. Overall, the results revealed that the respondents intend to use ChatGPT in the future. This finding is similar to other research on technology adoption, which established that the stronger the intention to use a particular technology, the greater its actual use (Venkatesh et al., 2003; Frans and Pather, 2022). The results for this construct are presented in Figure 6.

5 Policy, practical, and theoretical implications

The research has several policy, practical, and theoretical implications. The present study expands the body of knowledge on ChatGPT adoption among law educators in Saudi Arabia. Because the technology is new, no prior research has investigated the factors that influence the adoption of ChatGPT among law educators, and a search of the literature revealed no study on ChatGPT adoption by law educators in Saudi Arabia. The research is therefore the first of its kind in this context and fills a gap in the literature. It can help in understanding the factors that influence technology adoption among educators, and educational institutions or departments can use the findings to understand the need to provide facilities that support the use of ChatGPT.

The results provide insight into the factors that influence the possible adoption of ChatGPT by law educators. This will guide policymakers in preparing and executing a technology adoption strategy that is academically sound and practically acceptable. The strategy should include a structured institutional policy on the use of technology and AI tools in learning and research. Training should be included as part of skill development within the strategy and should prioritize the optimal use of ChatGPT in law education. It should also enhance educators' skills in asking the right questions and creating assessments such as reflective writing and flipped learning (Rudolph et al., 2023). Dilekçi and Karatay (2023) stated that assessing information, deriving ideas, referencing, presenting, and justifying one's work could be categorized as ChatGPT-era skills, as they could augment learning capacity. Preparing educators is necessary to enhance these skills. According to Kung et al. (2023), 60% of the content sourced through ChatGPT is accurate, so careful verification is needed before using its output. The accuracy of ChatGPT's answers depends on the wording of the questions, so well-phrased questions draw more benefit from it. Asking questions and critical thinking are therefore necessary skills that should be included in learning objectives. A lack of these competencies could reduce motivation to adopt the technology (Fryer et al., 2019). ChatGPT adoption will necessitate modifying the teaching philosophy and assessment methods, making oral argument, logical and critical thinking, group projects, and hands-on activities an integral part of assessment.

Organizational policy should facilitate changes in teaching philosophy and assessment methods. The use of ChatGPT can introduce bias and may infringe intellectual property and privacy, so guidelines are needed to control bias and other violations. Rahman and Watanobe (2023) suggested taking initiatives to eliminate fake, baseless, and harmful content. As ChatGPT can generate text, educators should enhance their skills in checking the accuracy of the information presented. They can hold a viva on an assignment to confirm that students completed it themselves, or require a report or peer assessment. ChatGPT has many benefits for law educators, who should be encouraged to adopt it in their teaching and research. Educational institutions should fund research related to ChatGPT so that its benefits can be explored and fully realized (Sok and Heng, 2024). Finally, this study applied the theoretical literature on technology adoption models, particularly the UTAUT model, with some variation to suit the Saudi Arabian context of technology adoption among law educators.

6 Conclusion, limitations, and future research directions

The study aimed to identify the factors influencing the intention to use and actual use of ChatGPT by law educators. It found that all the UTAUT factors were highly significant and can positively influence behavioral intention and actual behavior. Since the behavioral intention scores were positive, the educators are likely to use ChatGPT in the future. The findings offer useful insights to educational institutions, which can review their policies to strengthen educators' intention to use and actual use of ChatGPT in educational services. Updated policies can respond to the changing dynamics of the education sector.

However, the current study has a few limitations. The research used a questionnaire-based survey to collect data from law educators, and the data were analyzed in Microsoft Excel. Further, the study was conducted among law educators based in Riyadh, Saudi Arabia. Since cities across Saudi Arabia share similar cultures, the findings could be generalized to other Saudi cities; however, they may not generalize to countries with different cultures. Another limitation is that the questionnaire was distributed at a single point in time, so it could not capture changes in participants' reactions over time. The study is also limited to ChatGPT; DeepSeek and other chatbots were not included. Finally, the research used descriptive analysis rather than inferential tests.

Future studies may cover educators who teach other disciplines and could also include students. They could apply inferential tests to examine linear relationships between the dependent and independent variables, test for differences between independent groups, and compare the independent variables. They may also use qualitative methods, study different groups, or apply other technology adoption models. Since this study covered only the capital city due to time and logistical constraints, future studies could expand to all the provinces of Saudi Arabia and beyond. Future research could also study the adoption of other chatbots and compare results across different points in time.

Data availability statement

The original contributions presented in the study are included in the article/Supplementary material; further inquiries can be directed to the corresponding author.

Ethics statement

The studies involving humans were approved by the Institutional Review Board, Prince Sultan University. The studies were conducted in accordance with the local legislation and institutional requirements. The participants provided their written informed consent to participate in this study.

Author contributions

JS: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Resources, Software, Supervision, Validation, Visualization, Writing – original draft, Writing – review & editing.

Funding

The author(s) declare that no financial support was received for the research and/or publication of this article.

Acknowledgments

The author would like to acknowledge the support of Prince Sultan University (PSU) for the research and for paying the Article Processing Charges (APC) of this publication. The author also acknowledges the support provided by the Governance and Policy Research Lab.

Conflict of interest

The author declares that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Generative AI statement

The author declares that no generative AI was used in the creation of this manuscript.

Any alternative text (alt text) provided alongside figures in this article has been generated by Frontiers with the support of artificial intelligence and reasonable efforts have been made to ensure accuracy, including review by the authors wherever possible. If you identify any issues, please contact us.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Supplementary material

The Supplementary material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/feduc.2025.1631413/full#supplementary-material

References

Ajzen, I. (1991). The theory of planned behavior. Organ. Behav. Hum. Decis. Process. 50, 179–211. doi: 10.1016/0749-5978(91)90020-T

Ajzen, I., and Fishbein, M. (1977). Attitude-behavior relations: a theoretical analysis and review of empirical research. Psychol. Bull. 84, 888–918. doi: 10.1037/0033-2909.84.5.888

Aldeman, N. L. S., de Sá Urtiga Aita, K. M., Machado, V. P., da Mata Sousa, L. C. D., Coelho, A. G. B., da Silva, A. S., et al. (2021). Smartpathk: a platform for teaching glomerulopathies using machine learning. BMC Med. Educ. 21:248. doi: 10.1186/s12909-021-02680-1

Aleedy, M., Atwell, E., and Meshoul, S. (2022). “Using AI chatbots in education: recent advances, challenges, and use case” in Artificial intelligence and sustainable computing: proceedings of ICSISCET 2021, 661–675.

Alotaibi, H. M., Sonbul, S. S., and El-Dakhs, D. A. (2025). Factors influencing the acceptance and use of ChatGPT among English as a foreign language learners in Saudi Arabia. Human. Soc. Sci. Commun. 12, 1–13. doi: 10.1057/s41599-025-04945-2

Alsharif, F., Siewe, F., Fidler, C., and Bella, G. (2013). “Extend the UTAUT to measure the adoption of online shopping in the Saudi environment” in The proceedings of IADIS international conference e-society, Lisbon, Portugal, 185–192.

Altman, I., and Taylor, D. A. (1973). Social penetration: the development of interpersonal relationships. New York: Holt, Rinehart & Winston.

Asad, M. M., Shahzad, S., Shah, S. H. A., Sherwani, F., and Almusharraf, N. M. (2024). ChatGPT as artificial intelligence-based generative multimedia for English writing pedagogy: challenges and opportunities from an educator’s perspective. Int. J. Inf. Learn. Technol. 41, 490–506. doi: 10.1108/IJILT-02-2024-0021

Baidoo-Anu, D., and Ansah, L. O. (2023). Education in the era of generative artificial intelligence (AI): understanding the potential benefits of ChatGPT in promoting teaching and learning. J. AI 7, 52–62. doi: 10.61969/jai.1337500

Bašić, Ž., Banovac, A., Kružić, I., and Jerković, I. (2023). ChatGPT-3.5 as writing assistance in students’ essays. Human. Soc. Sci. Commun. 10, 1–5. doi: 10.1057/s41599-023-02269-7

Capek, T. (1921). The Čech bohemian community of New York: with introductory remarks on the Čechoslovaks in the United States. New York: Czechoslovak section of America's making.

Carter, L., and Belanger, F. (2004). “Citizen adoption of electronic government initiatives” in Proceedings of the 37th annual Hawaii international conference on system sciences, 2004 (Hawaii: IEEE), 10.

Castañeda, L., and Selwyn, N. (2018). More than tools? Making sense of the ongoing digitizations of higher education. Int. J. Educ. Technol. High. Educ. 15, 1–10. doi: 10.1186/s41239-018-0109-y

Chau, P. Y., and Hu, P. J. (2002). Examining a model of information technology acceptance by individual professionals: an exploratory study. J. Manag. Inf. Syst. 18, 191–229. doi: 10.1080/07421222.2002.11045699

Chen, L., Jia, J., and Wu, C. (2023). Factors influencing the behavioral intention to use contactless financial services in the banking industry: an application and extension of UTAUT model. Front. Psychol. 14:1096709. doi: 10.3389/fpsyg.2023.1096709

Chiu, T. K. F. (2024). Future research recommendations for transforming higher education with generative AI. Comput. Educ. Artif. Intell. 6:100197. doi: 10.1016/j.caeai.2023.100197

Choi, J. H., Hickman, K. E., Monahan, A. B., and Schwarcz, D. (2021). ChatGPT goes to law school. J. Legal Educ. 71:387.

Choi, J., Park, J., and Suh, J. (2023). Evaluating the current state of ChatGPT and its disruptive potential: an empirical study of Korean users. Asia Pac. J. Inf. Syst. 33, 1058–1092. doi: 10.14329/apjis.2023.33.4.1058

Choi, N. H., Xu, H., and Teng, Z. (2018). Roles of social identity verification in the effects of symbolic and evaluation relevance on Chinese consumers' brand attitude. J. Bus. Econ. Environ. Stud. 8, 17–27. doi: 10.13106/eajbm.2018.vol8.no4.17

Churchill, G. A. Jr. (1979). A paradigm for developing better measures of marketing constructs. J. Mark. Res. 16, 64–73. doi: 10.1177/002224377901600110

Clarizia, F., Colace, F., Lombardi, M., Pascale, F., and Santaniello, D. (2018). “Chatbot: An education support system for student” in Cyberspace safety and security: 10th international symposium, CSS 2018, Amalfi, Italy, October 29–31, 2018, proceedings 10 (Cham, Switzerland: Springer International Publishing), 291–302.

Cook, K. S., Cheshire, C., Rice, E. R., and Nakagawa, S. (2013). Social exchange theory. Netherlands: Springer, 61–88.

Cotton, D. R., Cotton, P. A., and Shipway, J. R. (2024). Chatting and cheating: ensuring academic integrity in the era of ChatGPT. Innov. Educ. Teach. Int. 61, 228–239. doi: 10.1080/14703297.2023.2190148

Cowen, R. (2023). Comparative education: and now? Comp. Educ. 59, 326–340. doi: 10.1080/03050068.2023.2240207

Croes, E. A., and Antheunis, M. L. (2021). Can we be friends with Mitsuku? A longitudinal study on the process of relationship formation between humans and a social chatbot. J. Soc. Pers. Relat. 38, 279–300. doi: 10.1177/0265407520959463

Davis, F. D. (1989). “Technology acceptance model: TAM” in Information seeking behavior and technology adoption. eds. M. N. Al-Suqri and A. S. Al-Aufi, vol. 205, 5.

Davis, F. D., Bagozzi, R. P., and Warshaw, P. R. (1992). Extrinsic and intrinsic motivation to use computers in the workplace. J. Appl. Soc. Psychol. 22, 1111–1132. doi: 10.1111/j.1559-1816.1992.tb00945.x

Dilekçi, A., and Karatay, H. (2023). The effects of the 21st century skills curriculum on the development of students’ creative thinking skills. Think. Skills Creat. 47:101229. doi: 10.1016/j.tsc.2022.101229

Durall, E., and Kapros, E. (2020). “Co-design for a competency self-assessment chatbot and survey in science education” in Learning and collaboration technologies. Human and technology ecosystems: 7th international conference, LCT 2020, held as part of the 22nd HCI international conference, HCII 2020, Copenhagen, Denmark, July 19–24, 2020, proceedings, part II 22 (Cham, Switzerland: Springer International Publishing), 13–24.

Eke, D. O. (2023). ChatGPT and the rise of generative AI: threat to academic integrity? J. Respons. Technol. 13:100060. doi: 10.1016/j.jrt.2023.100060

European Commission. (2021). 2030 digital compass: The European way for the digital decade [COM(2021) 118 final]. Office for Official Publications of the European Communities.

Fatma, M., and Khan, I. (2023). An integrative framework to explore corporate ability and corporate social responsibility association’s influence on consumer responses in the banking sector. Sustainability 15:7988. doi: 10.3390/su15107988

Firat, M. (2023). What ChatGPT means for universities: perceptions of scholars and students. J. Appl. Learn. Teach. 6, 57–63. doi: 10.37074/jalt.2023.6.1.22

Frans, C., and Pather, S. (2022). Determinants of ICT adoption and uptake at a rural public-access ICT Centre: a South African case study. Afr. J. Sci. Technol. Innov. Dev. 14, 1575–1590. doi: 10.1080/20421338.2021.1975354

Fryer, L. K., Nakao, K., and Thompson, A. (2019). Chatbot learning partners: connecting learning experiences, interest and competence. Comput. Human Behav. 93, 279–289. doi: 10.1016/j.chb.2018.12.023

García-Peñalvo, F. J. (2023). The perception of artificial intelligence in educational contexts after the launch of ChatGPT: Disruption or Panic?

Gilson, A., Safranek, C. W., Huang, T., Socrates, V., Chi, L., Taylor, R. A., et al. (2023). How does ChatGPT perform on the United States medical licensing examination (USMLE)? The implications of large language models for medical education and knowledge assessment. JMIR Med. Educ. 9:e45312. doi: 10.2196/45312

Goldstein, N. J., and Cialdini, R. B. (2011). “Using social norms as a lever of social influence” in The science of social influence (England: Psychology Press), 167–191.

Halaweh, M. (2023). ChatGPT in education: strategies for responsible implementation. Contemp. Educ. Technol. 15:ep421. doi: 10.30935/cedtech/13036

Hidayat-ur-Rehman, I., Ahmad, A., Khan, M. N., and Mokhtar, S. A. (2021). Investigating mobile banking continuance intention: a mixed-methods approach. Mob. Inf. Syst. 2021, 1–17. doi: 10.1155/2021/9994990

Hosseini, M., Gao, C. A., Liebovitz, D. M., Carvalho, A. M., Ahmad, F. S., Luo, Y., et al. (2023). An exploratory survey about using ChatGPT in education, healthcare, and research. PLoS One 18:e0292216. doi: 10.1371/journal.pone.0292216

Hudlicka, E. (2016). “Virtual affective agents and therapeutic games” in Artificial intelligence in behavioral and mental health care (London, United Kingdom: Academic Press), 81–115.

Jaiswal, A., and Arun, C. J. (2021). Potential of artificial intelligence for the transformation of the education system in India. Int. J. Educ. Dev. Using Inf. Commun. Technol. 17, 142–158.

Jalil, S., Rafi, S., LaToza, T. D., Moran, K., and Lam, W. (2023). “ChatGPT and software testing education: promises & perils” in 2023 IEEE international conference on software testing, verification and validation workshops (ICSTW) (Dublin, Ireland: IEEE), 4130–4137.

Janssen, A. H. A., Grützner, L., and Breitner, M. H. (2021). “Why do Chatbots fail? A critical success factors analysis” in ICIS.

Jobin, A., Ienca, M., and Vayena, E. (2019). The global landscape of AI ethics guidelines. Nat. Mach. Intell. 1, 389–399. doi: 10.1038/s42256-019-0088-2

Kasneci, E., Seßler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., et al. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learn. Individ. Differ. 103:102274. doi: 10.1016/j.lindif.2023.102274

Kim, S., Lee, K. H., Hwang, H., and Yoo, S. (2015). Analysis of the factors influencing healthcare professionals’ adoption of mobile electronic medical record (EMR) using the unified theory of acceptance and use of technology (UTAUT) in a tertiary hospital. BMC Med. Inform. Decis. Mak. 16, 1–12. doi: 10.1186/s12911-016-0249-8

King, M. R., and ChatGPT (2023). A conversation on artificial intelligence, chatbots, and plagiarism in higher education. Cell. Mol. Bioeng. 16, 1–2. doi: 10.1007/s12195-022-00754-8

Krugman, D. W., Manoj, M., Nassereddine, G., Cipriano, G., Battelli, F., Pillay, K., et al. (2022). Transforming global health education during the COVID-19 era: perspectives from a transnational collective of global health students and recent graduates. BMJ Glob. Health 7:e010698. doi: 10.1136/bmjgh-2022-010698

Kuhail, M. A., Al Katheeri, H., Negreiros, J., Seffah, A., and Alfandi, O. (2023). Engaging students with a chatbot-based academic advising system. Int. J. Hum. Comput. Interact. 39, 2115–2141. doi: 10.1080/10447318.2022.2074645

Kung, T. H., Cheatham, M., Medenilla, A., Sillos, C., De Leon, L., Elepaño, C., et al. (2023). Performance of ChatGPT on USMLE: potential for AI-assisted medical education using large language models. PLOS Digit. Health 2:e0000198. doi: 10.1371/journal.pdig.0000198

Llorente, A. M., Ladera, V., Contreras, P., and Conde, L. (2021). Efficacy of a computerized intervention based on artificial intelligence for improving reading comprehension in students with dyslexia. Front. Psychol. 12:641005. doi: 10.1016/j.chb.2016.05.071

Lo, C. K. (2023). What is the impact of ChatGPT on education? A rapid review of the literature. Educ. Sci. 13:410. doi: 10.3390/educsci13040410

Malinka, K., Peresíni, M., Firc, A., Hujnák, O., and Janus, F. (2023). “On the educational impact of ChatGPT: is artificial intelligence ready to obtain a university degree?” in Proceedings of the 2023 conference on innovation and technology in computer science education, vol. 1, 47–53.

Mansoori, K. A. A., Sarabdeen, J., and Tchantchane, A. L. (2018). Investigating Emirati citizens’ adoption of e-government services in Abu Dhabi using modified UTAUT model. Inf. Technol. People 31, 455–481. doi: 10.1108/ITP-12-2016-0290

Mbakwe, A. B., Lourentzou, I., Celi, L. A., Mechanic, O. J., and Dagan, A. (2023). ChatGPT passing USMLE shines a spotlight on the flaws of medical education. PLOS Digit. Health 2:e0000205. doi: 10.1371/journal.pdig.0000205

McCallum, L. (2023). New takes on developing intercultural communicative competence: using AI tools in telecollaboration task design and task completion. J. Multicult. Educ. 18, 153–172. doi: 10.1108/JME-06-2023-0043

Megahed, F. M., Chen, Y. J., Ferris, J. A., Knoth, S., and Jones-Farmer, L. A. (2024). How generative AI models such as ChatGPT can be (mis) used in SPC practice, education, and research? An exploratory study. Qual. Eng. 36, 287–315. doi: 10.1080/08982112.2023.2206479

Mhlanga, D. (2023). “Open AI in education, the responsible and ethical use of ChatGPT towards lifelong learning” in FinTech and artificial intelligence for sustainable development: the role of smart technologies in achieving development goals (Cham: Springer Nature Switzerland), 387–409.

Miernicki, M., and Ng, I. (2021). Artificial intelligence and moral rights. AI Soc. 36, 319–329. doi: 10.1007/s00146-020-01027-6

Mollick, E. R., and Mollick, L. (2022). New modes of learning enabled by AI chatbots: three methods and assignments. SSRN J. :4300783. doi: 10.2139/ssrn.4300783

Moore, G. C., and Benbasat, I. (1991). Development of an instrument to measure the perceptions of adopting an information technology innovation. Inf. Syst. Res. 2, 192–222. doi: 10.1287/isre.2.3.192

Morsi, S. M. (2024). An empirical study on the factors influencing the usage intention of metaverse for e-commerce. Acad. J. Contemp. Comm. Res. 4, 76–97. doi: 10.21608/ajccr.2024.227715.1077

Ouyang, F., and Jiao, P. (2021). Artificial intelligence in education: the three paradigms. Comput. Educ. Artif. Intell. 2:100020. doi: 10.1016/j.caeai.2021.100020

Raffaghelli, J. E., Rodríguez, M. E., Guerrero-Roldán, A. E., and Bañeres, D. (2022). Applying the UTAUT model to explain the students' acceptance of an early warning system in higher education. Comput. Educ. 182:104468. doi: 10.1016/j.compedu.2022.104468

Rahman, M. M., and Watanobe, Y. (2023). ChatGPT for education and research: opportunities, threats, and strategies. Appl. Sci. 13:5783. doi: 10.3390/app13095783

Rodrigues, T. R. C., Reijnders, T., Breeman, L. D., Janssen, V. R., Kraaijenhagen, R. A., Atsma, D. E., et al. (2024). Use intention and user expectations of human-supported and self-help eHealth interventions: internet-based randomized controlled trial. JMIR Form. Res. 8:e38803. doi: 10.1097/PSY.0000000000001242

Rodrigues, G., Sarabdeen, J., and Balasubramanian, S. (2016). Factors that influence consumer adoption of e-government services in the UAE: a UTAUT model perspective. J. Internet Commer. 15, 18–39. doi: 10.1080/15332861.2015.1121460

Rudolph, J., Tan, S., and Tan, S. (2023). ChatGPT: bullshit spewer or the end of traditional assessments in higher education? J. Appl. Learn. Teach. 6, 342–363. doi: 10.37074/jalt.2023.6.1.9

Sallam, M. (2023). The utility of ChatGPT as an example of large language models in healthcare education, research and practice: systematic review on the future perspectives and potential limitations. Healthcare 11:887. doi: 10.3390/healthcare11060887

Shah Alam, S., Ahsan, M. N., Masukujjaman, M., Kokash, H. A., and Ahmed, S. (2024). Adoption of big data analytics intelligence among hospitality and tourism companies: perceive performance perspective. J. Qual. Assur. Hospital. Tour., 1–35. doi: 10.1080/1528008X.2024.2442674

Sharma, S., Durand, R. M., and Gur-Arie, O. (1981). Identification and analysis of moderator variables. J. Mark. Res. 18, 291–300.

Sok, S., and Heng, K. (2024). Generative AI in higher education: The need to develop or revise academic integrity policies to ensure the ethical use of AI. Cambodia Development Center, Cambodia.

Stokel-Walker, C., and Van Noorden, R. (2023). What ChatGPT and generative AI mean for science. Nature 614, 214–216. doi: 10.1038/d41586-023-00340-6

Susnjak, T., Ramaswami, G. S., and Mathrani, A. (2022). Learning analytics dashboard: a tool for providing actionable insights to learners. Int. J. Educ. Technol. High. Educ. 19:12. doi: 10.1186/s41239-021-00313-7

Taylor, S., and Todd, P. (1995). Assessing IT usage: the role of prior experience. MIS Q. 19, 561–570. doi: 10.2307/249633

Teng, Q., Bai, X., and Apuke, O. D. (2024). Modelling the factors that affect the intention to adopt emerging digital technologies for a sustainable smart world city. Technol. Soc. 78:102603. doi: 10.1016/j.techsoc.2024.102603

Terwiesch, C. (2023). Would Chat GPT3 get a Wharton MBA? A prediction based on its performance in the operations management course.

Thompson, R. L., Higgins, C. A., and Howell, J. M. (1991). Personal computing: toward a conceptual model of utilization. MIS Q. 15, 125–143. doi: 10.2307/249443

Tlili, A., Shehata, B., Adarkwah, M. A., Bozkurt, A., Hickey, D. T., Huang, R., et al. (2023). What if the devil is my guardian angel: ChatGPT as a case study of using chatbots in education. Smart Learn. Environ. 10:15. doi: 10.1186/s40561-023-00237-x

Venkatesh, V., and Davis, F. D. (2000). A theoretical extension of the technology acceptance model: four longitudinal field studies. Manag. Sci. 46, 186–204. doi: 10.1287/mnsc.46.2.186.11926

Venkatesh, V., Morris, M. G., Davis, G. B., and Davis, F. D. (2003). User acceptance of information technology: toward a unified view. MIS Q. 27, 425–478. doi: 10.2307/30036540

Venkatesh, V., Sykes, T. A., and Zhang, X. (2011). “'Just what the doctor ordered': a revised UTAUT for EMR system adoption and use by doctors” in 2011 44th Hawaii international conference on system sciences. (Kauai, HI, USA: IEEE) 1449, 1–10.

Ventayen, R. J. M. (2023). ChatGPT by OpenAI: students' viewpoint on cheating using artificial intelligence-based application. SSRN J. :4361548. doi: 10.2139/ssrn.4361548

Walters, W. H., and Wilder, E. I. (2023). Fabrication and errors in the bibliographic citations generated by ChatGPT. Sci. Rep. 13:14045. doi: 10.1038/s41598-023-41032-5

Wang, Y. S., and Shih, Y. W. (2009). Why do people use information kiosks? A validation of the unified theory of acceptance and use of technology. Gov. Inf. Q. 26, 158–165. doi: 10.1016/j.giq.2008.07.001

Williams, M. D., Rana, N. P., and Dwivedi, Y. K. (2015). The unified theory of acceptance and use of technology (UTAUT): a literature review. J. Enterp. Inf. Manag. 28, 443–488. doi: 10.1108/JEIM-09-2014-0088

Williamson, B., Eynon, R., and Potter, J. (2020). Pandemic politics, pedagogies and practices: digital technologies and distance education during the coronavirus emergency. Learn. Media Technol. 45, 107–114. doi: 10.1080/17439884.2020.1761641

Wu, X., Duan, R., and Ni, J. (2024). Unveiling security, privacy, and ethical concerns of ChatGPT. J. Inf. Intell. 2, 102–115. doi: 10.1016/j.jiixd.2023.10.007

Wu, Q., Huang, L., and Zong, J. (2023). User Interface characteristics influencing medical self-service terminals behavioral intention and acceptance by Chinese elderly: an empirical examination based on an extended UTAUT model. Sustainability 15:14252. doi: 10.3390/su151914252

Wu, W., Zhang, B., Li, S., and Liu, H. (2022). Exploring factors of the willingness to accept AI-assisted learning environments: an empirical investigation based on the UTAUT model and perceived risk theory. Front. Psychol. 13:870777. doi: 10.3389/fpsyg.2022.870777

Xia, Q., Chiu, T. K., Lee, M., Sanusi, I. T., Dai, Y., and Chai, C. S. (2022). A self-determination theory (SDT) design approach for inclusive and diverse artificial intelligence (AI) education. Comput. Educ. 189:104582. doi: 10.1016/j.compedu.2022.104582

Yu, C. S. (2012). Factors affecting individuals to adopt mobile banking: empirical evidence from the UTAUT model. J. Electron. Commer. Res. 13:104.

Zhai, X. (2022). ChatGPT user experience: implications for education. SSRN J. :4312418. doi: 10.2139/ssrn.4312418

Zhang, M., Ding, H., Naumceska, M., and Zhang, Y. (2022). Virtual reality technology as an educational and intervention tool for children with autism spectrum disorder: current perspectives and future directions. Behav. Sci. 12:138. doi: 10.3390/bs12050138

Zheng, W., Ma, Y. Y., and Lin, H. L. (2021). Research on blended learning in physical education during the COVID-19 pandemic: a case study of Chinese students. SAGE Open 11:21582440211058196. doi: 10.1177/21582440211058196

Keywords: ChatGPT, intention to use, law educators, UTAUT model, Saudi Arabia

Citation: Sarabdeen J (2025) Intention to use ChatGPT among law educators in Saudi Arabia. Front. Educ. 10:1631413. doi: 10.3389/feduc.2025.1631413

Edited by:

Eugène Loos, Utrecht University, Netherlands

Reviewed by:

Loredana Ivan, National School of Political Studies and Public Administration, Romania
Janeth Díaz Vera, University of Guayaquil, Ecuador

Copyright © 2025 Sarabdeen. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Jawahitha Sarabdeen, jsarabdeen@psu.edu.sa

Jawahitha Sarabdeen