- 1Micro Air Vehicle Laboratory (MAVLab), Department of Control and Simulation, Faculty of Aerospace Engineering, Delft University of Technology, Delft, Netherlands

- 2Department of Space Systems Engineering, Faculty of Aerospace Engineering, Delft University of Technology, Delft, Netherlands

This work presents a review and discussion of the challenges that must be solved in order to successfully develop swarms of Micro Air Vehicles (MAVs) for real world operations. From the discussion, we extract constraints and links that relate the local level MAV capabilities to the global operations of the swarm. These should be taken into account when designing swarm behaviors in order to maximize the utility of the group. At the lowest level, each MAV should operate safely. Robustness is often hailed as a pillar of swarm robotics, and a minimum level of local reliability is needed for it to propagate to the global level. An MAV must be capable of autonomous navigation within an environment with sufficient trustworthiness before the system can be scaled up. Once the operations of the single MAV are sufficiently secured for a task, the subsequent challenge is to allow the MAVs to sense one another within a neighborhood of interest. Relative localization of neighbors is a fundamental part of self-organizing robotic systems, enabling behaviors ranging from basic relative collision avoidance to higher level coordination. This ability, at times taken for granted, also must be sufficiently reliable. Moreover, herein lies a constraint: the design choice of the relative localization sensor has a direct link to the behaviors that the swarm can (and should) perform. Vision-based systems, for instance, force MAVs to fly within the field of view of their camera. Range or communication-based solutions, alternatively, provide omni-directional relative localization, yet can be victim to unobservable conditions under certain flight behaviors, such as parallel flight, and require constant relative excitation. At the swarm level, the final outcome is thus intrinsically influenced by the on-board abilities and sensors of the individual. 
The real-world behavior and operations of an MAV swarm intrinsically follow in a bottom-up fashion as a result of the local level limitations in cognition, relative knowledge, communication, power, and safety. Taking these local limitations into account when designing a global swarm behavior is key in order to take full advantage of the system, enabling local limitations to become true strengths of the swarm.

1. Introduction

Micro Air Vehicles (MAVs), or “small drones,” are becoming commonplace in the modern world. The term refers to small, light-weight, flying robots. Several MAV designs exist, including multirotors (Kumar and Michael, 2012), flapping wing (Michelson and Reece, 1998; Wood et al., 2013; de Croon et al., 2016), fixed wing (Green and Oh, 2006), morphing designs (Falanga et al., 2019b), or “hybrid” vehicles (Itasse et al., 2011). Of these, quadrotors have enjoyed the spotlight due to their high maneuverability, their ability to take-off vertically (as opposed to most fixed wing MAVs, for instance), and their relative simplicity in design (Gupte et al., 2012; Kumar and Michael, 2012). MAVs can be used for surveillance and mapping (Mohr and Fitzpatrick, 2008; Scaramuzza et al., 2014; Saska et al., 2016b), infrastructure inspection (Sa and Corke, 2014), load transport and delivery (Palunko et al., 2012), or construction (Lindsey et al., 2012; Augugliaro et al., 2014). Such applications are particularly useful in areas that are not easily accessible by humans, like forests or disaster sites (Alexis et al., 2009; Achtelik et al., 2012). Smaller and lighter designs push the boundaries of their applications further. Aside from the asset of increased portability, smaller MAVs can also navigate through tighter spaces, such as narrow indoor environments with higher agility (Mohr and Fitzpatrick, 2008). They also cause less damage to their surroundings (including people) in the event of a collision, making them intrinsically safer tools (Kushleyev et al., 2013).

Unfortunately, smaller size comes at the expense of more limited capabilities. The interplay between limited flight time, limited sensing, and limited power hinders an MAV from performing grander tasks on its own. This has created a strong interest in developing MAV swarms (Yang et al., 2018). The paradigm of swarm robotics aims to transcend the limitations of a single robot by enabling cooperation in larger teams. This is inspired by the animal kingdom, where animals and insects have been observed to unite forces toward a common goal that is otherwise too complex or challenging for the lone individual (Garnier et al., 2007). Using several robots at once can bring several advantages and possibilities, such as redundancy, faster task completion due to parallelization, or the execution of collaborative tasks (Martinoli and Easton, 2003; Trianni and Campo, 2015; Nedjah and Junior, 2019). The control of robotic swarms is envisioned to be fully distributed. The individual robots perceive and process their environment locally and then act accordingly without global awareness or direct awareness of the final goal of the swarm. Nevertheless, by means of collaboration, the robots can achieve an objective that they would not have been able to achieve by themselves. As they say: there is strength in numbers.

It is easy to imagine swarms of MAVs jointly carrying a load that is too heavy for a single one to lift, or persistently exploring an area without interruption. As is often the case, however, putting such visions into practice is another story altogether. Developing self-organizing swarms of MAVs in the real world is a multi-disciplinary challenge that can be coarsely divided into two main aspects. One aspect is that of the individual MAV design, where the local abilities of a single MAV are defined. The second aspect is the swarm design, whereby we need to develop controllers with which the global goal can be efficiently achieved, autonomously, by the swarm. To make matters more complicated, the two are not decoupled. As we shall explore in this paper, there exist fundamental links between the local limitations of an MAV and the behaviors that a swarm of MAVs could, or should, execute as a result. Vice versa, in order to realize certain swarm behaviors, there are local requirements that the individual MAVs must meet. This bond between the local and the global cannot be ignored if MAV swarms are to be brought to the real world. In this paper, we aim to reconcile these two aspects and present a discussion of the fundamental challenges and constraints linking local MAV properties and global swarm behaviors.

2. Co-dependence of Swarm Design and Individual MAV Design

Let us begin from the primary challenge of swarm robotics: to design local controllers that successfully lead to global swarm behaviors (Şahin et al., 2008). Concerning MAVs, these global behaviors include, but are not limited to: collaborative transport, collaborative construction, distributed sensing, collaborative object manipulation, and parallelized exploration and mapping of environments. Although an individual MAV may be limited in its ability to successfully perform these tasks (for instance, as areas get larger or loads get heavier), the tasks can be tackled by collaborating in a swarm. Generally, swarms of robots are expected to feature the following inherent advantages (Şahin et al., 2008; Brambilla et al., 2013):

• Robustness: The swarm is robust to the loss or failure of individual robots.

• Flexibility: The swarm can reconfigure to tackle different tasks.

• Scalability: The swarm can grow and shrink in size depending on the needs of the global task.

When designing a swarm of MAVs, we must then ask ourselves: how can we design a swarm that is robust, flexible, and scalable? It is true that these properties pertain to the swarm rather than the individual, but if the swarm is composed of individual units, then it follows that they must also be present (although perhaps not always apparent) at the local level. We cannot use individual robots that are not robust and merely expect the swarm as a whole to be immune or tolerant to individual failures (Bjerknes and Winfield, 2013). If there is a high probability of errors at the local level, such as erroneous observations, poorly executed commands, or failure of a unit, then this may have a repercussion on the swarm's performance; an effect that Bjerknes and Winfield (2013) have shown can worsen with the number of robots in a swarm. There is a point after which the individual robots are too unreliable and the swarm can fail to achieve its goal, or it can be shown to be outperformed by smaller teams with more reliable units (Stancliff et al., 2006) or even by a single reliable system (Engelen et al., 2014). The further complication with MAVs is that local failures do not remain local, but are likely to cause collisions with, and damage to, other nearby MAVs and/or objects. For some tasks, such as collective transport, the impact may be even more severe as the MAVs are mechanically attached to the load (Tagliabue et al., 2019). It thus follows that, to develop a robust swarm for real world deployment, we must also ensure robustness at the local level.

Of equal importance is to make sure that the robots have satisfactory tools and sensors to carry out their individual components of a global task. The more capable the sensors are, the more likely it is that the swarm can be flexible and adjust to different tasks or unexpected changes. When performing pure swarm intelligence research, we can afford to abstract away from lower level issues (Brutschy et al., 2015). For instance, in a study on making a decision about selecting a new location for a swarm's nest, one can abstract away from actually evaluating the quality of a nest location, and instead focus the analysis on a particular aspect of the system, such as the decision making process. However, when dealing with real-world applications, this is not an option. If we want to develop nest selection capabilities for a swarm in the real world, each robot should be capable of: flying and operating safely, recognizing the existence of a site, evaluating the quality of a site with a certain reliability, exchanging this information with its neighbors, and more. All these lower level requirements need to be appropriately realized for the global level outcome to emerge, or otherwise need to be accepted as limitations of the system. The way in which they are implemented shapes the final behavior of the swarm.

Last but not least, unless properly accounted for, there are scalability problems that may also occur as the swarm grows in size. Examples of issues are: a congested airspace whereby the MAVs are unable to adhere to safety distances, a cluttered visual environment as a result of several MAVs (thus obstructing the task), or poor connectivity as a result of low-range communication capabilities. To achieve scalability, the MAV design must be such that these properties are appropriately accommodated, from the appropriate hardware design all the way to the higher level controllers which make up the swarm behavior.

2.1. The Challenge of Local Sensing and Control

When flying several MAVs at once, the control architecture can be of two types: (1) centralized, or (2) decentralized. In the centralized case, all MAVs in a swarm are controlled by a single computer. This “omniscient” entity knows the relevant states of all MAVs and can (pre-)plan their actions accordingly. The planning can be done a-priori and/or online. In the decentralized case, the MAVs make their decisions locally.

A second dichotomy can also be defined for how the MAVs sense their environment: (1) using external position sensing, or (2) locally. External positioning is typically achieved with a Global Navigation Satellite System (GNSS) or with a Motion Capture System (MCS), depending on whether the MAVs are flying outdoors or indoors, respectively. Local sensing, by contrast, relies only on the sensors that are on board the MAV.

Currently, the combination of centralized architecture and external positioning has achieved the highest stage of maturity, allowing for flights with several MAVs. Kushleyev et al. (2013) showed a swarm of 20 micro quadrotors that could re-organize in several formations. Lindsey et al. (2012), Augugliaro et al. (2014), and Mirjan et al. (2016) developed impressive collaborative construction schemes using a team of MAVs. Preiss et al. (2017) showcased “Crazyswarm,” an indoor display of 49 small quadrotors flying together. The strategy of centralized planning and external positioning has also attracted large industry investments, leading to shows with record-breaking numbers of MAVs flying simultaneously. In 2015, Intel and Ars Electronica Futurelab first flew 100 MAVs, setting a Guinness World Record (Swatman, 2016a). In 2016, Intel beat its own record by flying 500 MAVs simultaneously (Swatman, 2016b). In 2018, EHang claimed the record with 1,374 MAVs flying above the city of Xi'an, China (Cadell, 2018). Later that year, Intel reclaimed the title by flying 2,066 MAVs outdoors (Guinness World Records, 2018). Meanwhile, the record for the most MAVs flying indoors (from a single computer) was recently broken by BT with 160 MAVs (Guinness World Records, 2019).

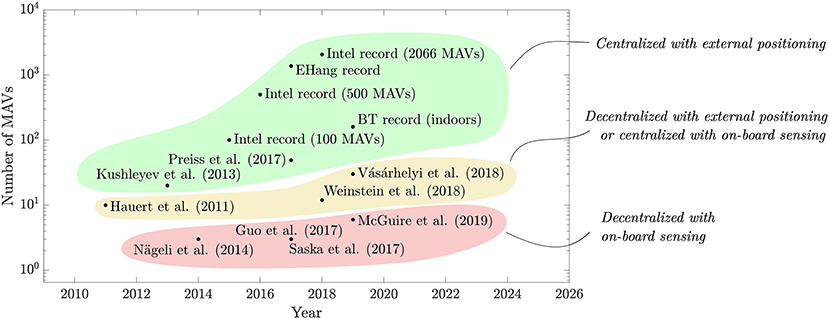

Without external positioning systems or centralized planning/control, the problem of flying several MAVs at once becomes more challenging. This is because: (1) the MAVs have to rely only on on-board perception, or (2) they have to make local decisions without the benefit of global planning, or (3) both. It is then not surprising that, as shown in Figure 1, the swarms that have been flown without external positioning and/or centralized control are significantly smaller. When the control is decentralized, but the MAVs benefit from an external positioning system, or vice versa, the largest swarms are in the dozens (Hauert et al., 2011; Vásárhelyi et al., 2018; Weinstein et al., 2018). For swarms featuring both local perception and distributed control, the highest numbers are currently in the single digits (Nägeli et al., 2014; Guo et al., 2017; Saska et al., 2017; McGuire et al., 2019). Although these numbers have been increasing in the last few years, they remain lower as operations shift away from external systems and toward on-board perception and control. If the past is any indication for the future, we expect that: (1) the numbers of drones will keep increasing for all cases, and (2) businesses will take over the records as the technologies for on-board decision making and perception become more mature.

Figure 1. Scatter plot of the number of MAVs that have been flown in sampled state of the art studies discussed in this paper. The combination of centralized planning/control with external positioning has allowed significantly larger swarms to be flown. The numbers are lower for the works featuring decentralized control with external positioning, or centralized control with local sensing. The works that use decentralized control and do not rely on external positioning can be seen to feature the fewest MAVs due to the increased complexity of the control and perception task.

Although we can fly a high number of MAVs when using centralized planning and external positioning, swarming is not just a numbers game. Flying with many MAVs does not automatically imply that we are achieving the benefits of swarm robotics (Hamann, 2018). A centralized system relies on a main computer to take all decisions. This means that prompt online re-planning is needed in order to achieve robustness and flexibility. This re-planning grows in complexity with the size of the swarm, making the system unscalable. Moreover, the central computer represents a single point of failure. Instead, a swarm adopts a distributed strategy whereby each robot takes a decision independently. The fact that each MAV needs to take its own decisions, possibly without relying on external infrastructure, introduces a new layer of difficulty. However, this is also what brings new advantages: redundancy, scalability, and adaptability to changes (Şahin, 2005; Bonabeau and Théraulaz, 2008)1.

When we analyze swarms of MAVs with local on-board sensing and control, we can observe two trends: (1) as the size of the swarm increases, the relative knowledge that each MAV will have of its global environment, which includes the remainder of the swarm, decreases (Bouffanais, 2016); (2) as the individual MAV's size and/or mass decreases, its capability to sense its own local environment decreases (Kumar and Michael, 2012). This creates an interesting challenge. On the one hand, we aim to design smaller, lighter, cheaper, and more efficient MAVs. On the other hand, as we make these MAVs smaller, the gap between the microscopic and macroscopic widens further. Designing the swarm becomes a more challenging task because each MAV has less information about its environment and is also less capable of acting on it. This can be generalized to other robotic platforms as well, but MAVs feature the increased difficulty of having a tightly bound relationship between their on-board capabilities, their dynamics, their processing power, and their sensing (Chung et al., 2018). This is sometimes referred to as the SWaP (Size, Weight, and Power) trade-off (Mahony et al., 2012; Liu et al., 2018). The relationship is often non-linear. For instance, if we add a sensor that results in 5% more power usage, it not only consumes more energy per second, but also affects the total energy that can be extracted from the battery, as the battery will be operating in a different regime (de Croon et al., 2016). For many MAVs, grams and milliwatts matter. This makes the design of autonomous decentralized swarms of MAVs a unique challenge.
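
As a rough illustration of this non-linearity, the sketch below uses Peukert's law, an empirical battery model that is not taken from the works cited here and that only approximates the behavior of the lithium-based cells typical of MAVs. The pack capacity, the current draw, and the Peukert exponent are hypothetical values, and the 5% increase in power is treated as a 5% increase in current at a fixed voltage.

```python
def peukert_runtime_h(capacity_ah, rated_time_h, current_a, k):
    """Runtime in hours predicted by Peukert's law (an empirical approximation).

    capacity_ah  -- capacity delivered when discharged over rated_time_h
    rated_time_h -- discharge time at which the capacity is rated
    current_a    -- actual discharge current
    k            -- Peukert exponent (k = 1 would be an ideal battery)
    """
    return rated_time_h * (capacity_ah / (current_a * rated_time_h)) ** k


# Hypothetical values, chosen only for illustration.
CAPACITY_AH = 2.0
RATED_TIME_H = 1.0
PEUKERT_K = 1.1

base_current_a = 10.0
more_current_a = 1.05 * base_current_a  # assumed: 5% more power = 5% more current

t_base = peukert_runtime_h(CAPACITY_AH, RATED_TIME_H, base_current_a, PEUKERT_K)
t_more = peukert_runtime_h(CAPACITY_AH, RATED_TIME_H, more_current_a, PEUKERT_K)

# Charge actually delivered before the battery is empty (Ah).
charge_base = base_current_a * t_base
charge_more = more_current_a * t_more

print(f"runtime ratio:          {t_more / t_base:.4f}")
print(f"ideal-battery ratio:    {1 / 1.05:.4f}")
print(f"delivered charge ratio: {charge_more / charge_base:.4f}")
```

Under these assumptions the flight time shrinks by more than the ideal factor of 1/1.05, because the battery also delivers slightly less total charge at the higher discharge rate.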

2.2. Overview of Design Challenges Throughout the Design Chain

Throughout this paper, we shall review the state of the art in MAV technology from the swarm robotics perspective. To facilitate our discussion, we will break down the challenges for the design and control of an MAV swarm in the following four levels, from “local” to “global.”

1. MAV design. This defines the processing power, flight time, dynamics, and capabilities of the single MAV. Most importantly from a swarm engineering perspective, it defines the sensory information available on each unit, from which it can establish its view of the world. This is discussed in section 3.

2. Local ego-state estimation and control. At the lowest level, an MAV must be capable of controlling its motion with sufficient accuracy. This lower level layer handles basic flight operations of the MAV. This includes attitude control, height control, and velocity estimation and control. Moreover, the MAV should be capable of safely navigating in its environment. Minimally, it should detect and avoid potential obstacles. The challenges and state of the art for these methods are discussed in section 4.

3. Intra-swarm relative sensing and avoidance. There are two key enabling technologies for swarming. The first is the knowledge of (the location of) nearby neighbors. This is particularly important for MAV swarms as it not only enables several higher level swarming behaviors, but it also ensures that MAVs do not collide with one another in mid-air. The second enabling technology is communication between MAVs, such that they can share information and thus expand their knowledge of the environment via their neighborhood. These are discussed in section 5.

4. Swarm behavior. This is the higher level control policy that the robots follow to generate the global swarm behavior. Examples of higher level controllers in swarms range from attraction and repulsion forces for flocking (Gazi and Passino, 2002; Vásárhelyi et al., 2018) to neural networks for aggregation, dispersion, or homing (Duarte et al., 2016). We discuss how MAV swarm behaviors can be designed in section 6.
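
To make the notion of a higher level controller in item 4 more concrete, the following is a minimal sketch of an attraction and repulsion rule of the kind used in the flocking literature. The specific interaction function, the gains `A`, `B`, and `C`, and the single-integrator motion model are assumptions made for illustration, not the controllers of the cited works. Each agent only uses the relative positions of its neighbors, which is exactly the information that the lower levels of the chain must provide.

```python
import math

# Hypothetical gains: A scales attraction, B and C shape the repulsion.
A, B, C = 1.0, 20.0, 0.2


def interaction(dx, dy):
    """Velocity contribution for an agent, given its offset (own - neighbor).

    Far away the attraction term dominates, close by the repulsion term does.
    """
    gain = A - B * math.exp(-(dx * dx + dy * dy) / C)
    return -dx * gain, -dy * gain


def step(positions, dt):
    """Advance all agents by one Euler step of the single-integrator model."""
    updated = []
    for i, (xi, yi) in enumerate(positions):
        vx = vy = 0.0
        for j, (xj, yj) in enumerate(positions):
            if i != j:
                gx, gy = interaction(xi - xj, yi - yj)
                vx += gx
                vy += gy
        updated.append((xi + dt * vx, yi + dt * vy))
    return updated


def simulate(positions, dt=0.01, steps=2000):
    for _ in range(steps):
        positions = step(positions, dt)
    return positions


# Distance at which attraction and repulsion cancel for a pair of agents.
equilibrium = math.sqrt(C * math.log(B / A))

final = simulate([(0.0, 0.0), (2.0, 0.0)])
spacing = math.hypot(final[0][0] - final[1][0], final[0][1] - final[1][1])
print(f"final spacing {spacing:.3f} m, predicted {equilibrium:.3f} m")
```

In this sketch the inter-agent distance is a design parameter of the behavior, so it has to be chosen with the range and accuracy of the relative localization sensor in mind.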

Other similar taxonomies have been defined. Floreano and Wood (2015) describe three levels of robotic cognition: sensory-motor autonomy, reactive autonomy, and cognitive autonomy. Meanwhile, de Croon et al. (2016) divide the control process for autonomous flight into four levels: attitude control, height control, collision avoidance, and navigation. Although the taxonomies above are conceptually similar (generally going from low level sensing and control to a higher level of cognition), the re-definition that we provide here is designed to better organize our discussion within the context of swarm robotics. Moreover, we also include the design of the MAV within the chain. As we will explain in this manuscript, this has a fundamental impact on the higher level layers.

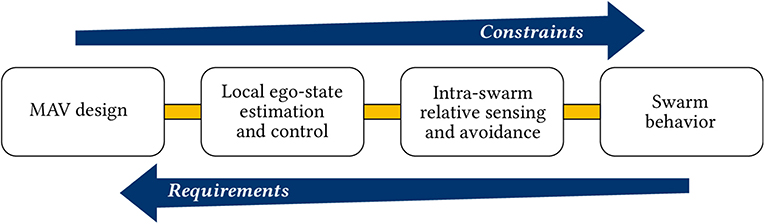

The four stages that have been defined have an increasing level of abstraction. The lower levels enable the robustness, flexibility, and scalability properties expected at the higher level, while the higher levels dictate, accommodate, and make the most out of the capabilities set at the lower level. From a more systems perspective, the MAV design poses constraints on what the higher level controllers can expect to achieve, while the higher level controllers create requirements that the MAV must be able to fulfill. A simplified view of the flow of requirements and constraints is shown in Figure 2.

Figure 2. Generalized depiction of the flow of requirements and constraints for the design of MAV swarms. The lower level design choices create constraints on the higher level properties of the swarm. Higher level design choices create requirements for the lower levels, down to the physical design of the MAV. Note that, for the specific case, the flow of requirements and constraints is likely to be more intricate than this picture makes it out to be. However, the general idea remains.

Throughout the remainder of this paper, as we discuss the state of the art at each level, we will highlight the major constraints that flow upwards and the requirements that flow downwards. Naturally, each sub-topic that we will treat features a plethora of solutions, challenges, and methods, each deserving of a review paper of its own. It is beyond the scope (and probably far beyond any acceptable word limit, too) to present an exhaustive review about each topic. Instead, we keep our focus to highlighting the main methodologies and how they can be used to design swarms of MAVs. Where possible, we will refer the reader to more in-depth reviews on a specific topic.

3. MAV Design

The differentiating challenge faced by a flying robot, namely (and somewhat trivially) the fact that it has to carry its own mass around, creates a strong design driver toward minimalism. Despite the battery accounting for up to 20–30% of the total system mass, the flight time of quadrotor MAVs still remains limited to the order of magnitude of minutes (Kumar and Michael, 2012; Mulgaonkar et al., 2014; Oleynikova et al., 2015). To increase the carrying capabilities of an MAV, enabling it to carry more/better sensors, processors, or actuators, while keeping flight time constant, means that the size of the battery should also increase. In turn, this leads to a new increase in mass, and so on. This type of spiral, often referred to as the “snowball effect,” is a well-known issue for the design of any flying vehicle, from MAVs to trans-Atlantic airliners (Obert, 2009; Lammering et al., 2012; Voskuijl et al., 2018). It then becomes paramount for an MAV design to be as minimalist as possible relative to its task, such that it may fulfill the mission requirements with a minimum mass (or, at the very least, there is a trade-off to be considered). This design driver has been taken to the extreme and has led to the development of miniature MAV systems, popular examples of which include the Ladybird drone and the Crazyflie (Lehnert and Corke, 2013; Remes et al., 2014; Giernacki et al., 2017). These MAVs have a mass of <50 g, making them attractive due to their low cost and the fact that they are safer to operate around people. This makes them appealing for swarming, especially in indoor environments (Preiss et al., 2017).
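
The snowball effect can be sketched as a fixed-point iteration on the battery mass. The example below is a simplified model under stated assumptions: hover power is taken to scale with total mass to the power 1.5 (the momentum-theory scaling for a fixed rotor area), and the power coefficient, battery specific energy, masses, and flight time are hypothetical values rather than data from the cited works.

```python
def hover_power_w(total_mass_kg, power_coeff=170.0):
    """Hover power in watts, assumed proportional to mass^1.5."""
    return power_coeff * total_mass_kg ** 1.5


def size_mav(fixed_mass_kg, flight_time_s,
             specific_energy_j_per_kg=540e3, tol=1e-9, max_iter=1000):
    """Iterate battery mass until it supplies the hover energy for its own weight.

    fixed_mass_kg is everything except the battery (frame, motors, payload).
    Returns (total_mass_kg, battery_mass_kg).
    """
    battery_kg = 0.0
    for _ in range(max_iter):
        total_kg = fixed_mass_kg + battery_kg
        energy_j = hover_power_w(total_kg) * flight_time_s
        new_battery_kg = energy_j / specific_energy_j_per_kg
        if abs(new_battery_kg - battery_kg) < tol:
            return fixed_mass_kg + new_battery_kg, new_battery_kg
        battery_kg = new_battery_kg
    raise RuntimeError("no feasible design: battery mass keeps growing")


FLIGHT_TIME_S = 600.0      # hypothetical 10 minute requirement
EXTRA_PAYLOAD_KG = 0.05    # hypothetical extra sensor

base_total, base_battery = size_mav(0.30, FLIGHT_TIME_S)
new_total, new_battery = size_mav(0.30 + EXTRA_PAYLOAD_KG, FLIGHT_TIME_S)

growth_factor = (new_total - base_total) / EXTRA_PAYLOAD_KG
print(f"total mass: {base_total:.3f} kg -> {new_total:.3f} kg")
print(f"each added gram of payload costs {growth_factor:.2f} g of total mass")
```

With these numbers the iteration converges and the total mass grows by more than the payload that was added. For longer flight times or heavier platforms the same loop may not converge at all, which is the point at which the requirement cannot be met with this battery technology.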

A substantial body of literature already exists on single MAV design, the specifics of which largely vary depending on the type of MAV in question. We refer the reader to the works of Mulgaonkar et al. (2014) and Floreano and Wood (2015) and the sources therein for more details. From the swarming perspective, it is important to understand that, independently of the type of MAV in question, the following constraints are intertwined during the design phase: (1) flight time, (2) on-board sensing, (3) on-board processing power, and (4) dynamics. This means that the choice of MAV directly constrains the application as well as the swarming behavior that can be achieved (or, vice versa, a desired swarming behavior requires a specific type of MAV). For example, fixed wing MAVs benefit from longer autonomy. This makes them ideal candidates for long term operations, and also gives the operators more time to launch an entire fleet and replace members with low batteries (Chung et al., 2016). However, fixed wing MAVs also have limited agility in comparison to quadrotors or flapping wing MAVs. The latter, for instance, can have a very high agility (Karásek et al., 2018), but also come with more limited endurance and payload constraints (Olejnik et al., 2019). The MAV design impacts the number and type of sensors that can be taken on-board. It can also impact how these sensors are positioned and their eventual disturbances and noise. In turn, this affects the local sensing and control properties of the MAV and can also impact its ability to sense neighbors and operate in a team more effectively. We will return to this where relevant in the following sections, whereby we discuss how an MAV can estimate and control its motion, sense its neighbors, and navigate in an environment together with the rest of the swarm.

A special note is made to designs that are intended for collaboration. Oung and D'Andrea (2011) introduced the Distributed Flight Array, a design whereby multiple single rotors can attach and detach from each other to form larger multi-rotors. More recently, Saldaña et al. (2018) introduced the ModQuad: a quadrotor with a magnetic frame designed for self-assembly with its neighbors. This design provides a solution for collaborative transport by creating a more powerful rigid structure with several drones. Gabrich et al. (2018) have shown how the ModQuad design can be used to form an aerial gripper. Because of the frame design, one of the difficulties of the ModQuad was in the disassembly back to individual quadrotors. This was tackled with a new frame design which enabled the quadrotors to disassemble by moving away from each other with a sufficiently high roll/pitch angle (Saldaña et al., 2019).

4. Local Ego-State Estimation and Control

The primary objective for a single MAV operating in a swarm is to remain in flight and perform higher level tasks with a given accuracy. This requires a robust estimation of the on-board state as well as robust lower level control, preferably while minimizing the size, power, and processing required. The design choices made here dictate the accuracy (i.e., noise, bias, and disturbances) with which each MAV will know its own state, as well as which variables the state is actually comprised of. In turn, this affects the type of maneuvers and actions that an MAV can execute. For instance, aggressive flight maneuvers likely require relatively accurate real-time state estimation (Bry et al., 2015). Of equal importance are the considerations for the processing power that remains for higher level tasks. While it can be attractive to implement increasingly advanced algorithms to achieve a more reliable ego-state estimate, these can be too computationally expensive to run on-board even by modern standards (Ghadiok et al., 2012; Schauwecker and Zell, 2014). This limits the MAV, as processing power is diverted from tasks at a higher level of cognition. If not properly handled, it can lead to sub-optimal final performances by the MAVs and by the swarm2.

4.1. Low-Level State Estimation and Control

This section outlines the main sensors and methods that can be used by MAVs to measure their on-board states, laying the foundations for our swarm-focused discussion in later sections. We organize the discussion by focusing on the following parameters: attitude (section 4.1.1), velocity and odometry (section 4.1.2), and height and altitude (section 4.1.3). Moreover, we restrict our overview to on-board sensing, as this is in line with the swarming philosophy and the relevant applications.

4.1.1. Attitude

It is essential for an MAV to estimate and control its own attitude in order to control its flight (Beard, 2007; Bouabdallah and Siegwart, 2007). Accelerations and angular rotation rates are typically measured through the on-board Inertial Measurement Unit (IMU) sensor (Bouabdallah et al., 2004; Gupte et al., 2012). The IMU measurements can be fused together to both estimate and control the attitude of an MAV (Shen et al., 2011; Schauwecker et al., 2012; Macdonald et al., 2014; Mulgaonkar et al., 2015). In addition to the IMU, MAVs equipped with cameras can also use vision to infer the attitude with respect to certain reference features or planar surfaces, as in Schauwecker and Zell (2014). Thurrowgood et al. (2009), Dusha et al. (2011), de Croon et al. (2012), and Carrio et al. (2018) estimate the roll and pitch angles of an MAV based on the horizon line (outdoors). The measurements from the IMU and vision can then be filtered together to improve the estimate as well as filter out the accumulating bias from the IMU (Martinelli, 2011). Once the attitude is known, it can be controlled with a variety of controllers. For a recent survey that treats the topic of attitude control in more detail, we refer the reader to the review by Nascimento and Saska (2019). Of particular interest to swarming are controllers that can provide robustness to disturbances or mishaps. One interesting example is the scheme devised by Faessler et al. (2015), which can automatically re-initialize the leveled flight of an MAV in mid-air.
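
One simple way to fuse the gyroscope and accelerometer readings of an IMU is a complementary filter. The sketch below is a generic textbook scheme, not the estimator of any of the works cited above. It assumes a body z axis pointing up (so that a level, stationary MAV reads roughly (0, 0, +g) on the accelerometer), near-level flight so that body rates can be used as Euler angle rates, and a hypothetical blending factor `alpha`.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def accel_to_roll_pitch(ax, ay, az):
    """Roll and pitch (rad) from the measured gravity direction.

    Only valid when the vehicle is not accelerating strongly.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch


def complementary_update(roll, pitch, gyro_p, gyro_q, accel, dt, alpha=0.98):
    """Blend integrated gyro rates (short term) with accelerometer angles (long term)."""
    acc_roll, acc_pitch = accel_to_roll_pitch(*accel)
    roll = alpha * (roll + gyro_p * dt) + (1.0 - alpha) * acc_roll
    pitch = alpha * (pitch + gyro_q * dt) + (1.0 - alpha) * acc_pitch
    return roll, pitch


# Stationary, level MAV with a constant (hypothetical) gyro bias on the roll axis.
GYRO_BIAS = 0.01  # rad/s
DT = 0.01         # s
roll_est, pitch_est = 0.0, 0.0
roll_gyro_only = 0.0
for _ in range(1000):  # 10 s of data
    roll_est, pitch_est = complementary_update(
        roll_est, pitch_est, GYRO_BIAS, 0.0, (0.0, 0.0, G), DT)
    roll_gyro_only += GYRO_BIAS * DT

print(f"gyro integration only: {roll_gyro_only:.4f} rad")
print(f"complementary filter:  {roll_est:.4f} rad")
```

The gyro-only estimate drifts without bound, whereas the filtered estimate settles at a small constant offset. A lightweight filter of this kind also leaves most of the on-board processing budget available for higher level tasks.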

Measuring and controlling the heading (for instance, with respect to North) is not strictly needed for basic flight. However, it can be an enabler for collective motion by providing a common reference that can be measured locally by all MAVs (Flocchini et al., 2008). Heading with respect to North can be measured with a magnetometer, which is a common component for MAVs (Beard, 2007). A main limitation of this sensor is that it is highly sensitive to disturbances in the environment (Afzal et al., 2011). The disturbances can be corrected for with the use of other attitude sensors. For example, Pascoal et al. (2000) fused gyroscope measurements with the magnetometer in order to filter out disturbances from the magnetometer while also reducing the noise from the gyroscope. Another sensor that has been explored is the celestial compass, which extracts the orientation based on the Sun (Jung et al., 2013; Dupeyroux et al., 2019). Although this sensor is not subject to electro-magnetic disturbances, it is limited to outdoor scenarios and performs best under a clear sky, which may also not always be the case.

4.1.2. Velocity and Odometry

A tuned sensor fusion filter with an accurate prediction model can estimate velocity based only on the IMU readings (Leishman et al., 2014). However, additional, dedicated velocity sensors are commonly used to achieve a more robust system without bias. Fixed wing MAVs can be equipped with a pitot tube in order to measure airspeed (Chung et al., 2016). For other designs, such as quadrotors, a popular solution is to measure the optic flow, i.e., the motion of features in the environment, from which an MAV can extract its own velocity (Santamaria-Navarro et al., 2015). To observe velocity, the flow needs to be scaled with the help of a distance measurement, such as height (although this assumes that the ground is flat, which may be untrue in cluttered/outdoor environments). Optic flow can be measured with a camera or with dedicated sensors, such as PX4FLOW (Honegger et al., 2013) or the PixArt sensor3. Using optical mouse sensors, Briod et al. (2013) were able to make a 46 g quadrotor fly based on only inertial and optical-flow sensors, even without the need to scale the flow by a distance measurement. This was achieved by only using the direction of the optic flow and disregarding its magnitude. In nature, optic flow has also been shown to be directly correlated with how insects control their velocity in an environment (Portelli et al., 2011; Lecoeur et al., 2019). Similar ideas have also been ported to the drone world, whereby the optic flow detection is directly correlated to a control input, without even necessarily extracting states from it (Zufferey et al., 2010). This can be an attractive property in order to create a natural correlation between a sensor and its control properties. State estimates improve when optic flow is fused with other sensors, such as IMU readings or pressure sensors (Kendoul et al., 2009a,b; Santamaria-Navarro et al., 2015), or with the control input of the drone (Ho et al., 2017).
As opposed to optic flow sensors, a camera has the advantage that it can observe both optic flow as well as other features in the environment, thus enabling an MAV to get more out of a single sensor. Although this is more computationally expensive, it also provides versatility.
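
The scaling of optic flow by a distance measurement can be sketched as follows, assuming a downward-facing sensor over flat ground, for which the translational flow (in rad/s) is approximately the horizontal velocity divided by the height. The function name and the sign convention for the rotation compensation are illustrative assumptions.

```python
def velocity_from_flow(flow_rate, height, body_rate=0.0):
    """Estimate horizontal velocity (m/s) from optic flow.

    flow_rate -- measured optic flow along one image axis (rad/s)
    height    -- distance to the (assumed flat) ground (m)
    body_rate -- angular rate about the matching body axis (rad/s),
                 subtracted so that only translational flow remains
    """
    if height <= 0.0:
        raise ValueError("height must be positive")
    # Remove the flow induced by the rotation of the MAV itself.
    translational_flow = flow_rate - body_rate
    # Flat-ground assumption: flow = velocity / height.
    return translational_flow * height
```

For example, a flow of 0.5 rad/s at a height of 2 m corresponds to 1 m/s. The same flow at 4 m would correspond to 2 m/s, which is why an error in the height estimate translates directly into a velocity error.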

The use of vision also enables the tracking of features in the environment, which a robot can use to estimate its odometry. Using Visual Odometry (VO), a robot integrates vision-based measurements during flight in order to estimate its motion. The inertial variant of VO, known as Visual Inertial Odometry (VIO), further fuses visual tracking together with IMU measurements. This makes it possible for an MAV to move accurately relative to an initial position (Scaramuzza and Zhang, 2019). VIO has been exploited for swarm-like behaviors, such as in the work by Weinstein et al. (2018), whereby twelve MAVs form patterns by flying pre-planned trajectories and use VIO to track their motion. A step beyond VO and its variants is to use Simultaneous Localization And Mapping (SLAM). The advantage of SLAM is that it can mitigate the integration drift of VO-based methods. When solving the full SLAM problem, a robot estimates its odometry in the environment and then corrects it by recognizing previously visited places and optimizing the result accordingly, so as to make a consistent map (Cadena et al., 2016; Cieslewski and Scaramuzza, 2017). Yousif et al. (2015) and Cadena et al. (2016) provide more in-depth reviews of VO and SLAM algorithms. Within the swarming context, a map can also be shared so as to make use of places and features that have been seen by other members of the swarm. One common drawback of VO and SLAM methods is that they are computationally intensive and thus reserved for larger MAVs (Ghadiok et al., 2012; Schauwecker and Zell, 2014). However, recent developments have also seen the introduction of more light-weight solutions, such as Navion (Suleiman et al., 2018).

Odometry and SLAM are not limited to the use of vision. A viable alternative sensor is the LIDAR (Light Detection and Ranging) scanner, more commonly referred to as “laser scanner.” LIDAR-based SLAM follows the same philosophy as its vision counterparts, but instead of a camera it uses LIDAR to measure depth information and build a map (Bachrach et al., 2011; Opromolla et al., 2016; Doer et al., 2017; Tripicchio et al., 2018). A LIDAR is generally less dependent on lighting conditions and needs less computation, but it is also heavier, more expensive, and consumes more on-board power (Opromolla et al., 2016). Vision and LIDAR can also be used together to further enhance the final estimates (López et al., 2016; Shi et al., 2016).

4.1.3. Height and Altitude

In an abstract sense, the ground represents an obstacle that the MAV must avoid, much like walls, objects, or other MAVs. It does not need to be explicitly known in order to control an MAV, as shown in the work of Beyeler et al. (2009). Unlike other obstacles, however, gravity continuously pulls the MAV toward the ground, meaning that measuring and controlling height and altitude often requires special attention.

Note that we differentiate here between height and altitude. Height is the distance to the ground surface, which can vary when there is a high building, a canyon, or a table. The height of an MAV can be measured with an ultrasonic range finder (or “sonar”). Sonar provides accurate data at the cost of power, mass, and size, and it has a limited range. Its accuracy has nevertheless made it a part of several designs (Krajník et al., 2011; Ghadiok et al., 2012; Abeywardena et al., 2013). Infra-red or laser range finders have also been used as an alternative (Grzonka et al., 2009; Gupte et al., 2012). The advantage of an infra-red sensor is that it can be very power efficient, although it is only reliable up to a range of a few meters and under favorable light conditions (Laković et al., 2019). Altitude is the distance to a fixed reference point, such as sea level or a take-off position. A pressure sensor is commonly used to obtain this measurement (Beard, 2007), but it can be subject to large noise and disturbances in the short term, which can be reduced via low pass filters (Sabatini and Genovese, 2013; Shilov, 2014). If flying outdoors, a Global Navigation Satellite System (GNSS) can also be used to obtain altitude.
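
As a minimal sketch of pressure-based altitude estimation, the snippet below converts pressure to altitude with the standard-atmosphere barometric formula and smooths the result with a first-order low pass filter. The function names and the filter gain are illustrative assumptions, and the cited works may use different filters.

```python
def pressure_to_altitude(pressure_pa, reference_pa=101325.0):
    """Altitude (m) above the level at which the pressure is reference_pa.

    Uses the standard-atmosphere barometric formula. Taking the pressure
    at take-off as reference_pa gives altitude relative to take-off.
    """
    return 44330.0 * (1.0 - (pressure_pa / reference_pa) ** (1.0 / 5.255))


def low_pass(samples, alpha=0.1):
    """First-order low pass filter (exponential smoothing).

    alpha -- assumed smoothing gain in (0, 1]; lower values filter more
             noise but respond more slowly to true altitude changes
    """
    filtered = []
    state = None
    for sample in samples:
        # Initialize on the first sample, then blend in each new one.
        state = sample if state is None else state + alpha * (sample - state)
        filtered.append(state)
    return filtered
```

Note that every MAV needs the same `reference_pa` for the resulting altitudes to be comparable across the swarm.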

The choice of height/altitude sensor has an impact on the swarm behaviors that can be programmed. GNSS and pressure sensors provide a measurement of the altitude of the MAV with respect to a certain position. This is an attractive property, although, as previously discussed, GNSS is limited to outdoor environments, while pressure sensors can be noisy. Moreover, the pressure sensors of all MAVs in the swarm should be equally calibrated. Unlike pressure sensors, ultrasonic sensors or laser range finders do not require this calibration step, since the measurement is made from the MAV to the nearest surface. However, one must then assume that the MAVs all fly over a flat plane with no objects (or other MAVs) below them, which may turn out not to be a valid assumption. SLAM and VIO methods, previously discussed in section 4.1.2, can also estimate altitude/height as part of the odometry/mapping procedure, provided that a downwards facing camera is available.

Just as for the use of a common heading like North, the measurements of height and/or altitude can provide a common reference plane for a swarm of MAVs. If the vertical distance between the MAVs is sufficient, it can provide a relatively simple solution for intra-swarm collision avoidance (albeit with constraints—we return to this in section 5.2). It can also enable self-organized behaviors, such as in the work of Chung et al. (2016), where the MAVs are made to follow the one with the highest altitude within their sub-swarm. In this way, the leader is automatically elected in a self-organized manner by the swarm. For example, should a current leader MAV need to land as a result of a malfunction, a new leader can be automatically re-elected so that the rest of the swarm can keep operating.
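
The altitude-based leader election described above can be sketched in a few lines. This is an illustration of the idea only and not the implementation of Chung et al. (2016). The function name, the dictionary representation, and the tie-breaking rule (lowest ID wins) are assumptions.

```python
def elect_leader(altitudes):
    """Return the ID of the MAV with the highest altitude.

    altitudes -- dict mapping integer MAV IDs to altitude (m) for all
                 MAVs currently flying in the sub-swarm
    Ties are broken in favor of the lowest ID (an assumed convention).
    """
    if not altitudes:
        return None
    return max(altitudes, key=lambda mav_id: (altitudes[mav_id], -mav_id))


# If the current leader lands and drops out of the set, running the same
# rule again automatically yields a new leader.
swarm = {1: 10.0, 2: 12.5, 3: 11.0}
leader = elect_leader(swarm)        # MAV 2 is highest
del swarm[leader]                   # MAV 2 lands due to a malfunction
new_leader = elect_leader(swarm)    # MAV 3 takes over
```

Because every MAV applies the same rule to locally available altitude data, no explicit negotiation is needed, provided that the altitude measurements share a common reference.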

4.2. Achieving Safe Navigation

It is important that each MAV remains safe and that it does not collide with its surroundings, or that damages remain limited in case this happens. This safety requirement can be satisfied in two ways. The first, which is more “passive” and brings us back to MAV design, is to develop MAVs that are mechanically collision resilient. This allows the MAV to hit obstacles without risking significant damage to itself or its environment. With this rationale, Briod et al. (2012), Mulgaonkar et al. (2015, 2018), and Kornatowski et al. (2017) placed protective cages around an MAV. However, the additional mass of a cage can negatively impact flight time and the cage can also introduce drag and controllability issues (Floreano et al., 2017). Instead, Mintchev et al. (2017) developed a flexible design for miniature quadrotors in order to be more collision resilient upon impact with walls. The use of airships has also been proposed as a more collision resilient solution (Melhuish and Welsby, 2002; Troub et al., 2017). The limitations of airships, however, are in their lower agility and restricted payload capacity. More recently, Chen et al. (2019) demonstrated insect scale designs that use soft artificial muscles for flapping flight. The soft actuators, combined with the small scale of the MAV, are such that the MAVs can be physically robust to collisions with obstacles and with each other. Collision resistant designs can even be exploited to improve on-board state estimation, such as in the recent work by Lew et al. (2019), whereby collisions are used as pseudo velocity measurement under the assumption that the velocity perpendicular to an obstacle, at the time of impact, is null. The alternative, or complementary, solution to passive collision resistance is “active” obstacle sensing and avoidance, whereby an MAV uses its on-board sensors to identify and avoid obstacles in the environment.

Collision-free flight can be achieved via two main navigation philosophies: (1) map-based navigation, and (2) reactive navigation. With the former, a map of the environment can be used to create a collision-free trajectory (Shen et al., 2011; Weiss et al., 2011; Ghadiok et al., 2012). The map can be generated during flight (using SLAM) and/or, for known environments, it can be provided a-priori. The advantage of a map-based approach is that obstacle avoidance can be directly integrated with higher level swarming behaviors (Saska et al., 2016b). Instead, a reactive control strategy uses a different philosophy whereby the MAV only reacts to obstacles in real-time as they are measured, regardless of its absolute position within the environment. In this case, if an MAV detects an obstacle, it reacts with an avoidance maneuver without taking its higher level goal into account. The trajectories pursued with a reactive controller may be less optimal, but the advantage of a reactive control strategy is that it naturally accounts for dynamic obstacles and it is not limited to a static map. The two can also operate in a hierarchical manner, such that the reactive controller takes over if there is a need to avoid an obstacle, and the MAV is otherwise controlled at a higher level by a path planning behavior. Regardless of the navigation philosophy in use, if the MAV needs to sense and avoid obstacles during flight, it will require sensors that can provide it with the right information in a timely manner.

Of all sensors, vision provides a vast amount of information from which an MAV can interpret its direct environment. By using a stereo-camera, the disparity between two images gives depth information (Heng et al., 2011; Matthies et al., 2014; Oleynikova et al., 2015; McGuire et al., 2017). Alternatively, a single camera can also be used. For example, the work of de Croon et al. (2012) exploited the decrease in the variance of features when approaching obstacles. Ross et al. (2013) used a learning routine to map monocular camera images to a pilot command in order to teach obstacle avoidance by imitating a human pilot. Kong et al. (2014) proposed edge detection to detect the boundary of potential obstacles in an image. Saha et al. (2014) and Aguilar et al. (2017) used feature detection techniques in order to extract potential obstacles from images. Alvarez et al. (2016) used consecutive images to extract a depth map (a technique known as “motion parallax”), albeit the accuracy of this method is dependent on the ego-motion estimation of the quadrotor. Learning approaches have also been investigated in order to overcome the limitations of monocular vision. By exploiting the collision resistant design of a Parrot AR Drone, Gandhi et al. (2017) collected data from 11,500 crashes and used a self-supervised learning approach to teach the drone how to avoid obstacles from only a monocular camera. Self-supervised learning of distance from monocular images can also be accomplished without the need to crash, but with the aid of an additional sensor. Lamers et al. (2016) did this by exploiting an infrared range sensor, and van Hecke et al. (2018) applied this to see distances with one single camera by learning a behavior that used a stereo-camera. This is useful if the stereo-camera were to malfunction and suddenly become monocular. Alternative camera technologies have also been developed, providing new possibilities. 
RGB-D sensors are cameras that also provide a per-pixel depth map, a mainstream example of which is the Microsoft Kinect camera (Newcombe et al., 2011). This particular sensor augments one RGB camera with an IR camera and an IR projector, which together are capable of measuring depth (Smisek et al., 2013). RGB-D sensors have been used on MAVs to navigate in an environment and avoid obstacles (Shen et al., 2014; Stegagno et al., 2014; Odelga et al., 2016; Huang et al., 2017). One of the disadvantages of these RGB-D sensors over a stereo-camera set-up (whereby depth is inferred from the disparity) is that RGB-D sensors can be more sensitive to natural light, and may thus perform less well in outdoor environments (Stegagno et al., 2014). Finally, in recent years, the introduction of Dynamic Vision Sensor (DVS) cameras has also enabled new possibilities for reactive obstacle sensing. A DVS camera only measures changes in the brightness, and can thus provide a higher data throughput. This enables a robot to quickly react to sudden changes in the environment, such as the appearance of a fast moving obstacle (Mueggler et al., 2015; Falanga et al., 2019a).
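
For the stereo-camera case mentioned above, depth follows from disparity through the pinhole relation Z = f B / d, where f is the focal length in pixels, B is the baseline between the two cameras, and d is the disparity in pixels. The sketch below assumes a rectified stereo pair. The function name and the example values are illustrative.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth (m) of a point seen by a rectified stereo pair.

    disparity_px -- horizontal pixel shift between left and right image
    focal_px     -- focal length expressed in pixels
    baseline_m   -- distance between the two camera centers (m)
    """
    if disparity_px <= 0.0:
        # Zero disparity corresponds to a point at infinity.
        return float("inf")
    return focal_px * baseline_m / disparity_px
```

Since depth is inversely proportional to disparity, a one-pixel disparity error matters far more for distant obstacles than for nearby ones, and a short baseline (as on small MAVs) reduces the usable range.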

The capabilities of a vision algorithm will depend on the resolution of the on-board cameras, the number of the on-board cameras, as well as the processing power on-board. On very lightweight MAVs, such as flapping wings, even carrying a small stereo-camera can be challenging (Olejnik et al., 2019). A further known disadvantage of vision is the limited Field of View (FOV) of cameras. Omni-directional sensing can only be achieved with multiple sets of cameras (Floreano et al., 2013; Moore et al., 2014) at the cost of additional mass, the impact of which is dependent on the design of the MAV.

Although vision is a rich sensor, in that it can provide different types of information, other sensors can also be used for reactive collision avoidance. LIDAR, for instance, has the advantage that it is less dependent on lighting conditions and can provide more accurate data for localization and navigation (Bachrach et al., 2011; Tripicchio et al., 2018). Alternatively, time-of-flight laser ranging sensors have also been proposed for reactive obstacle avoidance algorithms on small drones (Laković et al., 2019). These uni-directional sensors can sense whether an object appears along their line of sight (typically up to a few meters). Due to their small size and low power requirements, they can be used on tiny MAVs (Bitcraze, 2019).

5. Intra-Swarm Relative Sensing and Collision Avoidance

Once we have an MAV design that can perform basic safe flight, we begin to expand its capabilities toward collaboration in a swarm. Two fundamental challenges need to be considered in this domain. The first is relative localization. This is not only required to ensure intra-swarm collision avoidance, which is a basic safety requirement, but also to enable several swarm behaviors (Bouffanais, 2016). The design choice used for intra-swarm relative localization defines and constrains the motion of the MAVs relative to one another, which affects the swarming behavior that can be implemented. The second challenge is intra-swarm communication. Much like knowing the position of neighbors, the exchange of information between MAVs can help the swarm to coordinate (Valentini, 2017; Hamann, 2018). In this section, we explore the state of the art for relative localization (section 5.1), reactive collision avoidance maneuvers (section 5.2), and we discuss intra-swarm communication technologies (section 5.3).

5.1. Relative Localization

In outdoor environments, relative position can be obtained via a combination of GNSS and intra-swarm communication. Global position information obtained via GNSS is communicated between MAVs and then used to extract relative position information. This has enabled connected swarms that can operate in formations or flocks (Chung et al., 2016; Yuan et al., 2017). An impressive recent display of this in the real world was put into practice by Vásárhelyi et al. (2018), who programmed a swarm of 30 MAVs to flock. The same concept can be applied to indoor environments if pre-fitted with, for example: external markers (Pestana et al., 2014), motion-tracking cameras (Kushleyev et al., 2013), antenna beacons (Ledergerber et al., 2015; Guo et al., 2016), or ultrasound beacons (Vedder et al., 2015). However, this dependency on external infrastructure limits the swarm to being operable only in areas that have been properly fitted to the task. Several tasks, especially the ones that involve exploration, cannot rely on these methods. In order to remove the dependency on external infrastructure, there is a need for technologies that allow the MAVs themselves to obtain a direct MAV-to-MAV relative location estimate. This is still an open challenge, with several technologies and sensors currently being developed.
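
The extraction of relative position from communicated GNSS fixes can be sketched with a local flat-Earth (equirectangular) approximation, which is adequate over the short distances between neighboring MAVs. The function name, the tuple layout, and the spherical Earth radius are illustrative assumptions.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius, spherical approximation


def relative_position(own, neighbor):
    """Relative position (north, east, up) in meters of a neighbor.

    own, neighbor -- tuples (latitude_deg, longitude_deg, altitude_m),
                     e.g., the own GNSS fix and one received over the
                     intra-swarm communication link
    """
    lat0, lon0, alt0 = own
    lat1, lon1, alt1 = neighbor
    d_lat = math.radians(lat1 - lat0)
    d_lon = math.radians(lon1 - lon0)
    mean_lat = math.radians((lat0 + lat1) / 2.0)
    north = EARTH_RADIUS_M * d_lat
    # Meridians converge toward the poles, hence the cosine factor.
    east = EARTH_RADIUS_M * d_lon * math.cos(mean_lat)
    up = alt1 - alt0
    return north, east, up
```

The accuracy of the result is bounded by the accuracy of the two GNSS fixes and by the delay of the communication link, neither of which is modeled here.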

One of the earlier solutions for direct relative localization on flying robots proposed the use of infrared sensors (Roberts et al., 2012). However, since infrared sensors are uni-directional, this used an array of sensors (both emitting and receiving) placed around the MAV in order to approach omni-directionality, making for a relatively heavy system. Alternatively, vision-based algorithms have once again been extensively explored. However, the robust visual detection of neighboring MAVs is not a simple task. The object needs to be recognized at different angles, positions, speeds, and sizes. Moreover, the image can be subject to blur or poor lighting conditions. One way to address this challenge is with the use of visual aids mounted on the MAVs, such as visual markers (Faigl et al., 2013; Krajník et al., 2014; Nägeli et al., 2014), colored balls (Roelofsen et al., 2015; Epstein and Feldman, 2018), or active markers, such as infrared markers (Faessler et al., 2014; Teixeira et al., 2018) or ultraviolet (UV) markers (Walter et al., 2018, 2019). Visual aids simplify the task and improve the detection accuracy and reliability. However, they are not equally feasible on all designs, such as flapping wing MAVs or smaller quadrotors. Marker-less detection of other MAVs is very challenging, since other MAVs have to be detected against cluttered, possibly dynamic backgrounds while the detecting MAV is itself moving as well. A successful current approach is to rely on stereo vision, where other drones can be detected because they “float” in the air unlike other objects like trees or buildings. Carrio et al. (2018) explored a deep learning algorithm for the detection of other MAVs in stereo-based disparity images. An alternative is to detect other MAVs in monocular still images.
Like the detection in stereo disparity images, this removes the difficulty of interpreting complex motion fields between frames, but it introduces the difficulty of detecting other, potentially (seemingly) small MAVs against background clutter. To solve the challenge, Opromolla et al. (2019) used a machine learning framework that exploited the knowledge that the MAVs were supposed to fly in formation. Their scheme used the knowledge of the formation in order to predict the expected position of a neighboring MAV and focus the vision-based detection on the expected region, thus simplifying the task. Employing a more end-to-end learning technique, Schilling et al. (2019) used imitation learning to autonomously learn a flocking behavior from camera images. Following the attribution method by Selvaraju et al. (2017), Schilling et al. studied the influence that each pixel of an input image had on the predicted velocity. It was shown that the parts of the image where neighboring MAVs could be seen were more influential, demonstrating that the network had implicitly learned to localize its neighbors. Despite the promising preliminary results, it is yet to be seen how this approach handles other MAV sizes or more cluttered backgrounds. Finally, it is possible to use the optic flow field for detecting other MAVs. This approach could have the benefit of generality, but it would require the calculation and interpretation of a complex, dense optic flow field. To our knowledge, this method has not yet been investigated.

From a swarming perspective, it may also be desirable to know the ID of a neighbor. However, IDs may be difficult to detect using vision without the aid of markers. This issue was explored by Stegagno et al. (2011), Cognetti et al. (2012), and Franchi et al. (2013) with fusion filters that infer IDs over time with the aid of communication. Moreover, cameras have a limited FOV. This limits the behaviors that can be achieved by the swarm. For instance, it may be limiting for surveillance tasks where quadrotors need to look away from each other but cannot do so without risking a collision or the dispersion of the swarm. This can be addressed by placing several cameras around the MAVs (Schilling et al., 2019), but at the cost of additional mass, size, and power, which in turn creates new repercussions.

The use of vision is not only limited to directly recognizing other drones in the environment. With the aid of communication, two or more MAVs can also estimate their relative location indirectly by matching mutually observed features in the environment. The MAVs can compare their respective views and infer their relative location. In the most complete case, each MAV uses a SLAM algorithm to construct a map of its environment, which is then compared in full (as discussed in section 4, this can also be accomplished using other sensors, such as LIDAR, so this approach is not only reserved for vision). Although SLAM is a computationally expensive task, more easily handled centrally (Achtelik et al., 2012; Forster et al., 2013), it can also be run in a distributed manner, making for an infrastructure free system (Cunningham et al., 2013; Cieslewski et al., 2018; Lajoie et al., 2019). For a survey of collaborative visual SLAM, we refer the reader to the paper by Zou et al. (2019) and the sources therein. An additional benefit of collective map generation is that the MAVs benefit from the observations of their team-mates and can thus achieve a better collective map. However, if the desired objective is only to achieve relative localization, the computations can be simplified. Instead of computing and matching an entire map, the MAVs need only to concern themselves with the comparison of mutually observed features in order to extract their relative geometric pose (Achtelik et al., 2011; Montijano et al., 2016). This requires that the images compared by the MAVs have sufficient overlap and can be uniquely identified.

An alternative stream of research leverages only communication between MAVs to achieve relative localization, while also using the antennas as relative range sensors. Here, we will refer to these methods as communication-based ranging. The advantage of this method is that it offers omni-directional information at a relatively low mass, power, and processing penalty, leveraging a technology that is likely available on even the smallest of MAVs. Szabo (2015) first proposed the use of signal strength to detect the presence of nearby MAVs and engage in avoidance maneuvers. Also for the purposes of collision avoidance, Coppola et al. (2018) implemented a beacon-less relative localization approach based on the signal strength between antennas, using the Bluetooth Low Energy connectivity already available on even the smaller drones. Guo et al. (2017) proposed a similar solution using Ultra-Wideband (UWB) antennas for relative ranging, which offer a higher resolution even at larger distances. However, this work used one of the drones as a reference beacon for the others. One commonality between the solutions by Guo et al. (2017) and Coppola et al. (2018) was that the MAVs were required to have a knowledge of North, which enabled them to compare each other's velocities along the same global axis. However, in practice this is a significant limitation due to the difficulties of reliably measuring North, especially if indoors, as already discussed in section 4.1.1. To tackle this, van der Helm et al. (2019) showed that, if using a high accuracy ranging antenna, such as UWB, then it is not necessary for the MAVs to measure a common North. However, selecting communication-based ranging creates fundamental constraints on the high-level behaviors of the swarm. This issue is present both when North is known and when it is not, although the requirements when North is not known are more stringent.
If North is known, at least one of the MAVs must be moving relative to the other for the relative localization to remain theoretically observable. If North is not known, all MAVs must be moving. The MAVs remain bound to trajectories that excite the filter (van der Helm et al., 2019). For the case where North is known, Nguyen et al. (2019) proposed that a portion of MAVs in the swarm should act as “observers” and perform trajectories that persistently excite the system.
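
As a rough illustration of how signal strength can serve as a range measurement, the sketch below inverts the commonly used log-distance path-loss model. It does not reproduce the models or filters of the works cited above. The function name and the default parameter values are assumptions that would need to be calibrated for a given antenna and environment.

```python
def rssi_to_range(rssi_dbm, rssi_at_1m_dbm=-60.0, path_loss_exponent=2.0):
    """Estimate the range (m) from a received signal strength (dBm).

    Inverts the log-distance model
        rssi = rssi_at_1m - 10 * n * log10(distance),
    where n is the path-loss exponent (about 2 in free space, and
    typically different indoors). Both defaults are assumed values.
    """
    exponent = (rssi_at_1m_dbm - rssi_dbm) / (10.0 * path_loss_exponent)
    return 10.0 ** exponent
```

A single range gives no bearing, which is why such measurements are fused over time with the relative motion of the MAVs, and why the observability conditions discussed above arise.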

Another solution is to use sound. Early research in this domain was performed by Tijs et al. (2010), who used a microphone to hear nearby MAVs. This was explored in more depth by Basiri (2015) using full microphone arrays for relative localization. A primary issue encountered was that the sound emitted by the listening quadrotor would mask the similar sound of the neighboring MAVs. This was addressed with the use of a “chirp” sound, which can be easily heard by neighbors (Basiri et al., 2014, 2016). In recent work, Cabrera-Ponce et al. (2019) proposed the use of a Convolutional Neural Network to detect the presence of nearby MAVs. This is done using a large scale microphone array (Ruiz-Espitia et al., 2018) featuring eight microphones based on the ManyEars framework (Grondin et al., 2013). Specific to sound sensors, the accuracy of the detection depends on how similar the sounds of other MAVs are. Moreover, the localization accuracy depends on the microphone setup. Most works use a microphone array, where the localization accuracy depends on the length of the baseline between microphones, which is inherently limited on small MAVs.
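
The dependence on the microphone baseline can be made concrete with the textbook time-difference-of-arrival relation for a single microphone pair, sin(θ) = c Δt / d, under a far-field assumption. This is a generic sketch and not the method of the cited works. The function names are illustrative.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, in air at roughly 20 degrees Celsius


def bearing_from_tdoa(delay_s, baseline_m):
    """Bearing (rad) of a far-field source relative to the broadside
    direction of a two-microphone pair, from the arrival time difference."""
    ratio = SPEED_OF_SOUND * delay_s / baseline_m
    # Clamp to the valid domain of asin to guard against noisy delays.
    ratio = max(-1.0, min(1.0, ratio))
    return math.asin(ratio)


def max_delay(baseline_m):
    """Largest possible arrival time difference (s) for a given baseline."""
    return baseline_m / SPEED_OF_SOUND
```

With a 5 cm baseline the full range of bearings maps onto delays of at most about 0.15 ms, so a fixed timing resolution yields a coarser bearing estimate than it would on a larger array.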

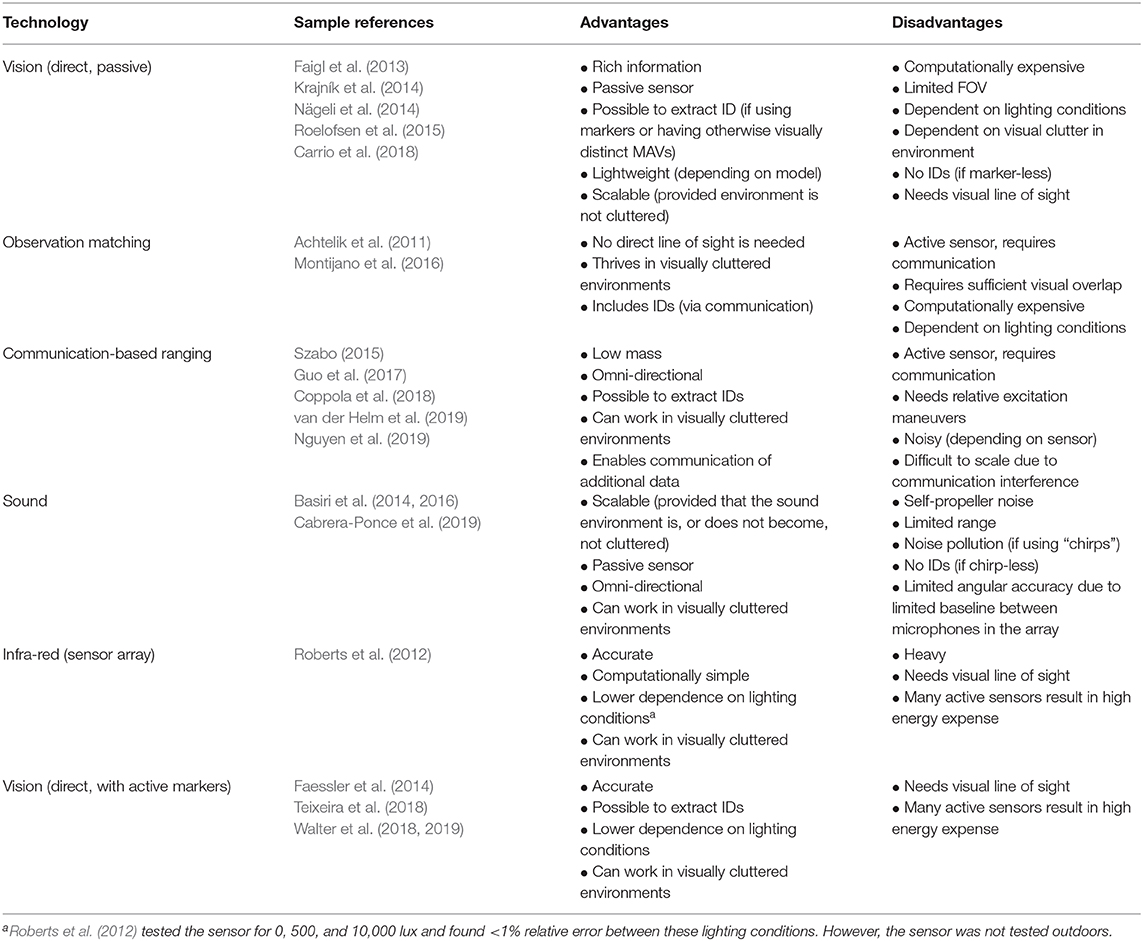

As can be seen, several different techniques exist. Minimally, these technologies should enable neighboring MAVs to avoid collisions with one another. However, the particular choice of relative localization technology creates a fundamental constraint on the swarm behavior that can be achieved. For example, communication-based ranging methods have unobservable conditions depending on the MAVs' motion, and sound-based localization with microphone arrays will be less accurate when used on smaller MAVs. Similarly, certain swarm behaviors (e.g., one that requires known IDs, or long range distances) may place certain requirements on which technology is best to be used. In Table 1, we outline the major relative localization approaches with their advantages and disadvantages.

Table 1. Current technologies in the state of the art for relative localization between MAVs, with their main advantages and disadvantages.

5.2. Intra-Swarm Collision Avoidance

Collision detection and avoidance of objects in the environment has already been discussed in section 4.2. As MAVs operate in teams, relative intra-swarm collision avoidance also becomes a safety-critical behavior that should be implemented. The complexity of this task is that it requires a collaborative maneuver between two or more MAVs.

MAVs operate in 3D space, and thus relative collision avoidance could be tackled by vertical separation. However, particularly in indoor environments where vertical space is limited, vertical avoidance maneuvers may cause undesirable aerodynamic interactions with other MAVs as well as other parts of the environment. For quadrotors, while aerodynamic influence is negligible when flying side-by-side, flying above another will create a disturbance for the lower one (Michael et al., 2010; Powers et al., 2013). Furthermore, emergency vertical maneuvers could also cause a quadrotor to fly too close to the ground, which creates a ground effect and pushes it upwards, or, if indoors, to fly too close to the ceiling, which creates a pulling effect toward the ceiling (Powers et al., 2013). Vertical avoidance may also corrupt the sensor readings of the MAV. For instance, height measurements may be compromised if another MAV obstructs a sonar sensor. Overall, horizontal avoidance maneuvers are therefore preferred.

A popular algorithm for obstacle avoidance, provided that the robots know their relative position and velocity, is the Velocity Obstacle (VO) method (Fiorini and Shiller, 1998). The core idea is for a robot to determine a set of all velocities that will lead to collisions with the obstacle (a collision cone), and then choose a velocity outside of that set, usually the one that requires minimum change from the current velocity. VO has spawned a number of variants specifically designed to deal with multi-agent avoidance, such as Reciprocal Velocity Obstacle (RVO) (van den Berg et al., 2008; van den Berg et al., 2011), Hybrid Reciprocal Velocity Obstacle (HRVO) (Snape et al., 2009), and Optimal Reciprocal Collision Avoidance (ORCA) (Snape et al., 2011). These variants alter the set of forbidden velocities in order to address reciprocity, which may otherwise lead to oscillations in the behavior. These methods have been successfully applied on MAVs, both in a decentralized way as well as via centralized re-planners. They accounted for uncertainties by artificially increasing the perceived radii of the robots. Alonso-Mora et al. (2015) showed the successful use of RVO on a team of MAVs such that they may adjust their trajectory with respect to a reference. This was done using an external MCS for (relative) positioning. Coppola et al. (2018) showed a collision cone scheme with on-board relative localization, introducing a method to adjust the cone angle in order to better account for uncertainties in the relative localization estimates. A disadvantage of the VO method and its derivatives is scalability. If the flying area is limited and the airspace becomes too crowded, then it may become difficult for MAVs to find safe directions to fly toward (Coppola et al., 2018).
Another avoidance algorithm, called Human-Like (HL), presents the advantage that the heading selection is decoupled from speed selection (Guzzi et al., 2013a; Guzzi et al., 2014), such that the MAVs only engage in a change in heading. HL has been found to be successful even when operating at relatively lower rates (Guzzi et al., 2013b). Although it has not been tested on MAVs, their tests also demonstrated generally better scalability properties.
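
The collision cone at the core of the VO method can be sketched as a 2D test on the relative position and relative velocity of two MAVs. This is a minimal geometric check under the assumption of disc-shaped robots and constant velocities. It is not the implementation of any of the cited works, and the function name is illustrative.

```python
import math


def in_collision_cone(rel_pos, rel_vel, combined_radius):
    """Return True if the current relative velocity leads to a collision.

    rel_pos         -- (x, y) position of the neighbor minus own position (m)
    rel_vel         -- (x, y) own velocity minus neighbor velocity (m/s)
    combined_radius -- sum of both robot radii (m), which can be inflated
                       to account for localization uncertainty
    """
    px, py = rel_pos
    vx, vy = rel_vel
    if math.hypot(px, py) <= combined_radius:
        return True  # already overlapping
    speed = math.hypot(vx, vy)
    if speed == 0.0:
        return False  # no relative motion, the distance stays constant
    if px * vx + py * vy <= 0.0:
        return False  # moving away from (or tangentially to) the neighbor
    # Perpendicular miss distance of the relative velocity ray.
    miss_distance = abs(px * vy - py * vx) / speed
    return miss_distance < combined_radius
```

An avoidance step would then search for the candidate velocity closest to the desired one for which this test is false for all neighbors. Inflating `combined_radius` widens the cone, which corresponds to the uncertainty handling mentioned above, at the price of fewer admissible velocities in crowded airspace.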

Alternatively, attraction and repulsion forces are also a valid approach to collision avoidance. This is a common technique which has been extensively studied in swarm research (Reynolds, 1987; Gazi and Passino, 2002; Gazi and Passino, 2004). If one wishes for the MAVs to flock, these attraction and repulsion forces can also be directly merged with the swarm controller (Vásárhelyi et al., 2018). One potential short-coming of this approach is that it can lead to equilibrium states whereby the swarm remains in a fixed final formation, although this can also be seen as a positive property that can be exploited (Gazi and Passino, 2011).
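
A minimal sketch of such an attraction and repulsion rule is given below, using linear attraction combined with bounded, exponentially decaying repulsion, similar in spirit to the aggregation models in the swarm literature cited above. The function names and the gains `a`, `b`, and `c` are illustrative assumptions.

```python
import math


def interaction_velocity(own, neighbors, a=1.0, b=5.0, c=1.0):
    """Velocity command (vx, vy) from attraction and repulsion.

    own       -- (x, y) position of this MAV
    neighbors -- list of (x, y) positions of neighboring MAVs
    a         -- attraction gain
    b, c      -- repulsion magnitude and spatial decay (requires b > a)
    """
    vx = vy = 0.0
    for nx, ny in neighbors:
        dx, dy = nx - own[0], ny - own[1]
        dist_sq = dx * dx + dy * dy
        # Positive gain attracts, negative gain repels.
        gain = a - b * math.exp(-dist_sq / c)
        vx += gain * dx
        vy += gain * dy
    return vx, vy


def equilibrium_distance(a=1.0, b=5.0, c=1.0):
    """Distance at which attraction and repulsion cancel for one pair."""
    return math.sqrt(c * math.log(b / a))
```

At `equilibrium_distance` the command for a pair of MAVs vanishes, which illustrates how such rules settle into the fixed formations mentioned above.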

In summary, multiple methods exist for intra-swarm collision avoidance. Given sufficiently accurate relative locations, these methods are very successful. The main challenges here are: (1) how to deal with uncertainties and unobservable conditions deriving from the localization mechanism used by the drones, and (2) how to keep guaranteeing successful collision avoidance when the swarm scales up to very large numbers.

5.3. Intra-Swarm Communication

Direct sharing of information between neighboring robots is an enabler for swarm behaviors as well as relative sensing (Valentini, 2017; Hamann, 2018; Pitonakova et al., 2018). To achieve the desired effect, it needs to be implemented with scalability, robustness, and flexibility in mind. Common problems that can otherwise arise are: (1) the messaging rate between robots is too low (low scalability); (2) high packet loss (low robustness); (3) communication range is too low (low scalability and flexibility); (4) inability to adapt to a switching network topology (low flexibility) (Chamanbaz et al., 2017).

Solutions to the above depend on the application. With respect to hardware, the three main technologies in the state of the art are Bluetooth, WiFi, and ZigBee (Bensky, 2019). All three operate in the 2.4 GHz band7. Bluetooth is energy efficient, but features a low maximum communication distance of ≈10–20 m (indoors, depending on the environment and version). This makes it more important to establish a network that can adapt to a switching topology, as the topology is very likely to change during operations. The latest version of the Bluetooth standard, Bluetooth 5, features a higher range and a higher data rate while keeping a low power consumption. It also has longer advertising messages, such that, without pairing, asynchronous network nodes can exchange messages of 255 bytes instead of 31 (Collotta et al., 2018). Bluetooth antennas were used in the previously discussed work of Coppola et al. (2018) on a swarm of 3 MAVs to exchange data indoors and to measure their relative range. In comparison to Bluetooth, WiFi is known to be less energy efficient, but works more reliably at longer ranges and has a higher data throughput. Chung et al. (2016) used WiFi to enable a swarm of 50 MAVs to form an ad-hoc network. WiFi was also used by Vásárhelyi et al. (2018) in combination with an XBee module8 using a proprietary communication protocol. ZigBee's primary benefits are scalability (it can theoretically support up to 64,000 nodes) and low power, although it has a low data communication rate (Bensky, 2019)9. Whether this is an issue depends on the intra-swarm communication requirements of the application. Allred et al. (2007) used a ZigBee module to enable communication within a flock of fixed-wing MAVs due to its combination of low energy consumption and long range (offering “a range of over 1 mile at 60 mW”). For comparisons of technical details of these technologies we refer the reader to the detailed book by Bensky (2019), the MAV-focused review by Zufferey et al. (2013), as well as the earlier comparisons by Lee et al. (2007).
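To give a feel for what the longer advertising messages mean in practice, the short sketch below counts how many connectionless messages are needed to broadcast a state message of a given size. The 31 and 255 byte limits are the ones quoted above. The assumption that the full message is available as payload (ignoring headers and other overhead) and the example message sizes are hypothetical simplifications.

```python
import math

LEGACY_ADV_BYTES = 31     # message size quoted in the text for earlier Bluetooth versions
EXTENDED_ADV_BYTES = 255  # message size quoted in the text for Bluetooth 5

def packets_needed(message_bytes, payload_bytes):
    """Number of advertising messages needed to carry one state message.

    Assumes (for illustration) that the whole message is usable payload.
    """
    if message_bytes < 0 or payload_bytes <= 0:
        raise ValueError("sizes must be non-negative and the payload positive")
    return math.ceil(message_bytes / payload_bytes)

# Hypothetical example: a 100-byte message holding the states of a few neighbors.
legacy = packets_needed(100, LEGACY_ADV_BYTES)      # 4 messages
extended = packets_needed(100, EXTENDED_ADV_BYTES)  # 1 message
```

Under these assumptions, fewer messages per state update leave more of the available messaging rate for actual updates, which matters when the update rate is a limiting factor.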

In addition to the technologies discussed above, there is also the possibility of enabling indirect communication via cellular networks. In the near future, 5G networks are expected to make it possible to have a reliable and high data throughput between several MAVs (Campion et al., 2018). Finally, the use of UWB can also gain more relevance in the future, especially because its additional capability to accurately measure the range between MAVs, as discussed in section 5.1, can be very helpful for swarms. One technological challenge is that communication needs power, and while this may be near-negligible for the bigger MAVs, it is not so for the smaller designs (Petricca et al., 2011). From this perspective, the communication-based relative localization discussed in section 5.1, which can also double as a communication device for MAVs, is an interesting solution if one desires a system that can achieve both goals simultaneously. However, using any relative localization approach that relies on communication means that having a stable connection among MAVs is an important requirement, and possibly a safety critical one. Moreover, high messaging rates also become important in order to have a high update rate.

6. Swarm-Level Control

We finally arrive at the “swarm” part of this paper. Once we have reliable MAVs that can safely fly in an environment, localize one another, and perhaps even communicate, we can begin to exploit them as a swarm. The complexity of this task stems from the fact that, due to the decentralized nature of the swarm, the local actions that a robot takes can have any number of repercussions at the global level. These cannot be known unless the system is fully observed and optimized for, which the individual robot cannot do.

This section discusses possible approaches to designing MAV swarm behaviors. Prominent examples of behaviors are: flocking, formation flight, distributed sensing (e.g., mapping/surveillance), and collaborative transport and object manipulation10. Of these, formation flight receives significant attention. It can be useful for several applications, such as surveillance, mapping, or cinematography so as to collaboratively observe a scene (Mademlis et al., 2019). It can also be used for collaborative transport (de Marina and Smeur, 2019), and it has even been shown that certain formations lead to energy-efficient flight for groups (Weimerskirch et al., 2001). Flocking behaviors bear similar properties to formation flight, but with more “fluid” inter-agent behaviors that allow the swarm to re-organize according to its current neighborhood and the environment. Distributed sensing behaviors may require the swarm to travel in a formation or flock, but may also include behaviors in which the swarm distributes over pre-specified areas (Bähnemann et al., 2017) or disperses (McGuire et al., 2019). Collaborative transport and object manipulation takes two forms. The first is that of MAVs individually foraging for different objects and bringing them to base (Bähnemann et al., 2017); the second is that of jointly carrying a load that is too heavy for the individual MAV to carry (Tagliabue et al., 2019). In order to achieve the behaviors above, and others, the MAVs can also engage in a number of more general swarm behaviors, such as distributed task allocation or collective decision making. For all cases, the challenge is to endow the MAVs with a controller that achieves the desired swarm behavior while also avoiding undesired results (Winfield et al., 2005, 2006).

Similarly to the review by Brambilla et al. (2013) (which the reader is referred to for a general overview of swarm robotics and engineering), we divide the design methods into two categories. The first, which we call “manual design methods,” refers to hand-crafted controllers that instigate a particular behavior in the swarm. These are discussed in section 6.1, where we provide an overview of the state of the art for different swarm behaviors. The second, which we refer to as “automatic design methods,” uses machine learning techniques in order to design and/or optimize the controller for an arbitrary goal. This is discussed in section 6.2. We discuss the advantages and disadvantages of the two, from the perspective of designing swarms of MAVs, in section 6.3.

6.1. Manual Design Methods

This is the “classical” approach to control design, whereby a swarm designer develops the controllers so as to achieve a desired global behavior. For swarm robotics, we differentiate between two approaches. One approach is to design local behaviors, analyze them, and then manually iterate until the swarm behaves as desired. Another approach is to make mathematical models of the robots and their interactions and then design a suitable controller that comes with a certain proof of convergence. The latter approach has some obvious advantages if one succeeds, but it makes the designer face the full complexity of swarm systems. Hence, such methods typically have limited applicability. For example, in the work of Izzo and Pettazzi (2007), the behavior is restricted to symmetrical formations of a limited number of agents. The preferred approach is dependent on the swarm behavior that the designer wishes to achieve, under the constraints of the local properties of each MAV.

A large portion of methods focuses on formation control algorithms, whereby the goal is for the MAVs to form and/or keep a tight formation during flight. To hold a formation, the MAVs must hold a relative position or distance between given neighbors, such that they can move as one unit through space. See, for instance, the works of Quintero et al. (2013), Schiano et al. (2016), de Marina et al. (2017), Yuan et al. (2017), and de Marina and Smeur (2019). One advantage of flying in formation for MAV swarms is their predictability during operations. Several methods provide robust controllers with mathematical proofs that the formation can be achieved and maintained during flight. A review dedicated to formation control algorithms for MAVs is provided by Oh et al. (2015). Chung et al. (2018) also discuss different methods.
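To illustrate how holding relative positions between neighbors can yield a formation, the sketch below implements a simple displacement-based consensus law, one of several families of formation controllers. It is a generic textbook-style example and not the controller of any of the works cited above. The single-integrator agent model, the gain, the time step, and the function name `formation_step` are assumptions for illustration.

```python
def formation_step(positions, offsets, neighbors, gain=1.0, dt=0.05):
    """One Euler step of a displacement-based formation law.

    positions: list of current positions, one tuple per agent
    offsets:   list of desired positions in a common formation frame
    neighbors: dict mapping agent index -> list of neighbor indices
    Each agent only uses the relative positions of its own neighbors.
    """
    new_positions = []
    for i, p_i in enumerate(positions):
        command = [0.0] * len(p_i)
        for j in neighbors[i]:
            for k in range(len(p_i)):
                measured = positions[j][k] - p_i[k]       # sensed relative position
                desired = offsets[j][k] - offsets[i][k]   # relative position in the formation
                command[k] += gain * (measured - desired)
        new_positions.append(tuple(x + dt * u for x, u in zip(p_i, command)))
    return new_positions
```

With a connected, undirected neighbor graph, the relative positions converge to the desired ones under this model, while the absolute location of the formation is left free.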

There are applications for which a rigid formation is sub-optimal, undesired, or unnecessary, and it is better for the MAVs to move through space in a flock. Flocking behaviors were originally synthesized from the motion of animals in nature (Aoki, 1982), and were most famously formalized by Reynolds (1987) with the intent of simulating swarms in computer animations. The behavior is typically characterized by a combination of simple local rules: attraction forces, repulsion forces, heading alignment with neighbors, and speed agreement with neighbors. This behavior naturally incorporates collision avoidance via the repulsion rule, and it has also been explored as a means to collectively navigate in an environment with obstacles, whereby the obstacles provide additional repulsion forces (Saska et al., 2014; Saska, 2015). Alternatively, the local rules can also be exploited to achieve formations by making use of equilibrium points between attraction and repulsion forces (Gazi, 2005). Depending on the way in which the rules are used, they can be incorporated into an iterative approach, or they can be made part of a mathematical regime combined with the model of the robot. An early real-world demonstration of distributed flocking was achieved by Hauert et al. (2011) with a swarm of ten fixed-wing MAVs. The more recent work by Vásárhelyi et al. (2018) demonstrated outdoor flocking for a swarm of 30 quadrotors.
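The local rules listed above can be sketched as a single velocity command per agent. The example below is a minimal illustration and not the controller of any cited work. Heading alignment and speed agreement are merged into one velocity-matching term, and all gains, the sensing radius, and the minimum distance are hypothetical values.

```python
import math

def flocking_velocity(i, positions, velocities, radius=5.0, min_dist=1.0,
                      k_att=0.05, k_rep=0.5, k_align=0.2):
    """Velocity command for agent i from its neighbors within `radius`."""
    p_i, v_i = positions[i], velocities[i]
    neighbors = [j for j in range(len(positions))
                 if j != i and math.dist(p_i, positions[j]) <= radius]
    if not neighbors:
        return tuple(v_i)  # no neighbors sensed: keep the current velocity
    n, dim = len(neighbors), len(p_i)
    centroid = [sum(positions[j][k] for j in neighbors) / n for k in range(dim)]
    mean_vel = [sum(velocities[j][k] for j in neighbors) / n for k in range(dim)]
    command = list(v_i)
    for k in range(dim):
        command[k] += k_att * (centroid[k] - p_i[k])    # attraction to neighbors
        command[k] += k_align * (mean_vel[k] - v_i[k])  # heading and speed agreement
    for j in neighbors:
        d = math.dist(p_i, positions[j])
        if 0.0 < d < min_dist:  # repulsion only when a neighbor is too close
            for k in range(dim):
                command[k] += k_rep * (min_dist - d) * (p_i[k] - positions[j][k]) / d
    return tuple(command)
```

Each agent uses only information about neighbors within its sensing radius, so the same function can run independently on every MAV.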

Concerning behaviors such as distributed sensing, exploration, or mapping, several different types of solutions have been developed specifically for MAVs. These typically vary depending on the nature of the task, requiring the designer to make careful choices on the best algorithm to be used. Bähnemann et al. (2017) and Spurný et al. (2019), aided by GNSS for positioning, divided a search area into multiple regions so that a team of three MAVs could efficiently explore it with a pre-planned trajectory. The recent work of McGuire et al. (2019) demonstrated a swarm of six Crazyflie MAVs performing an autonomous exploration task in an unknown indoor environment. Each MAV acted entirely locally based on a manually designed bug algorithm which enabled exploration as well as homing to a reference beacon.
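As a simple illustration of dividing a search area among a team with pre-planned trajectories, the sketch below splits a rectangular area into equal strips and generates a back-and-forth sweep for each strip. This is a generic coverage pattern and is not claimed to be the method of the works cited above. The rectangular area, the function names, and the pass spacing are assumptions.

```python
import math

def split_into_strips(x_min, x_max, n_mavs):
    """Divide the x-extent of a rectangular area into one equal strip per MAV."""
    width = (x_max - x_min) / n_mavs
    return [(x_min + i * width, x_min + (i + 1) * width) for i in range(n_mavs)]

def lawnmower(x_lo, x_hi, y_min, y_max, spacing):
    """Back-and-forth waypoints covering one strip.

    `spacing` is the largest allowed distance between passes (e.g., set by
    the sensor footprint); passes are spread evenly across the strip.
    """
    n_passes = max(1, math.ceil((x_hi - x_lo) / spacing))
    pass_width = (x_hi - x_lo) / n_passes
    waypoints = []
    for k in range(n_passes):
        x = x_lo + (k + 0.5) * pass_width
        # Alternate the sweep direction so consecutive passes connect.
        start, end = (y_min, y_max) if k % 2 == 0 else (y_max, y_min)
        waypoints.append((x, start))
        waypoints.append((x, end))
    return waypoints

# Hypothetical usage: three MAVs covering a 30 m by 50 m area.
plans = [lawnmower(lo, hi, 0.0, 50.0, spacing=2.0)
         for lo, hi in split_into_strips(0.0, 30.0, 3)]
```

Because each MAV stays inside its own strip, the trajectories do not cross, at the cost of requiring a shared global position reference such as GNSS.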

6.2. Automatic Methods for Behavior Design and Optimization

Over the last few decades, machine learning methods have become increasingly powerful, with multiple examples in robotics, autonomous driving, smart homes, and more. Machine learning techniques offer a way to automatically extract the local controller that can fulfill a task, relieving us from the need to design it ourselves. However, the problem shifts to devising algorithms that can efficiently and effectively discover the controllers for us. In this section, we discuss the possibilities based on two primary machine learning approaches in swarm intelligence research: Evolutionary Robotics (ER) and Reinforcement Learning (RL).

6.2.1. Evolutionary Robotics

ER uses the concept of survival of the fittest in order to efficiently search through the design space for an effective controller (Nolfi, 2002)11. It has been widely adopted in swarm robotics literature in order to evolve local robot controllers that optimize the performance of the swarm with respect to a global, swarm-level objective (Trianni, 2008). ER bypasses the analysis of the relation between the local controllers and the global behavior of the swarm. Instead, it optimizes the controllers “blindly” by means of several evaluations in an evolutionary process, which most often happens in simulation, but can also be performed in the real world (Eiben, 2014). Evolved solutions often exploit the robots' bodies and environment, including the behaviors of other swarm members. Moreover, thanks to the blind optimization, not only the controller can be evolved, but also other factors, such as the communication between robots (Ampatzis et al., 2008). Likewise, ER offers a generic approach to generate swarm controllers of different types, including, but not limited to: neural networks (Trianni et al., 2003; Silva et al., 2015), grammar rules (Ferrante et al., 2013), behavior trees (Scheper et al., 2016; Jones et al., 2018, 2019), and state machines (Francesca et al., 2014). Although neural network architectures can be very powerful, the advantage of the latter methods is that they can be better understood by a designer, which makes it easier to cross the “reality gap” between simulation and the real world when deploying the controllers on the real robots (Jones et al., 2019). Crossing the reality gap is a major challenge in the field of ER and many different approaches have been investigated, also for neural networks. See Scheper (2019) for a more extensive discussion on these methods.
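The “blind” optimization loop described above can be sketched in a few lines. The example below is a minimal evolutionary loop with truncation selection, elitism, and Gaussian mutation over a vector of controller parameters. It is an illustrative sketch and not the algorithm of any cited work. In particular, `toy_swarm_fitness` is a hypothetical stand-in for what would normally be a full swarm simulation scored against a global objective, and all hyperparameters are assumptions.

```python
import random

def evolve(fitness, genome_len, pop_size=20, generations=50,
           sigma=0.1, elite=5, seed=0):
    """Evolve a parameter vector that maximizes `fitness`."""
    rng = random.Random(seed)  # seeded for repeatable runs
    population = [[rng.uniform(-1.0, 1.0) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Evaluate and rank: only the global score is used, with no model
        # of how local parameters influence the swarm behavior.
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[:elite]  # survival of the fittest
        children = [[g + rng.gauss(0.0, sigma) for g in rng.choice(parents)]
                    for _ in range(pop_size - elite)]
        population = parents + children  # elitism keeps the best found so far
    return max(population, key=fitness)

def toy_swarm_fitness(genome):
    """Hypothetical stand-in for a swarm simulation: higher is better."""
    target = [0.5, -0.2, 0.8]  # arbitrary 'ideal' controller parameters
    return -sum((g - t) ** 2 for g, t in zip(genome, target))

best = evolve(toy_swarm_fitness, genome_len=3)
```

In a real setting, each fitness evaluation would involve running the whole swarm, in simulation or on real robots, which is why the number of evaluations is a central cost of this approach.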