- 1Advanced Telecommunications Research Institute International, Kyoto, Japan

- 2National Hospital Organization Tokyo Medical Center, Tokyo, Japan

- 3Graduate School of Informatics, Kyoto University, Kyoto, Japan

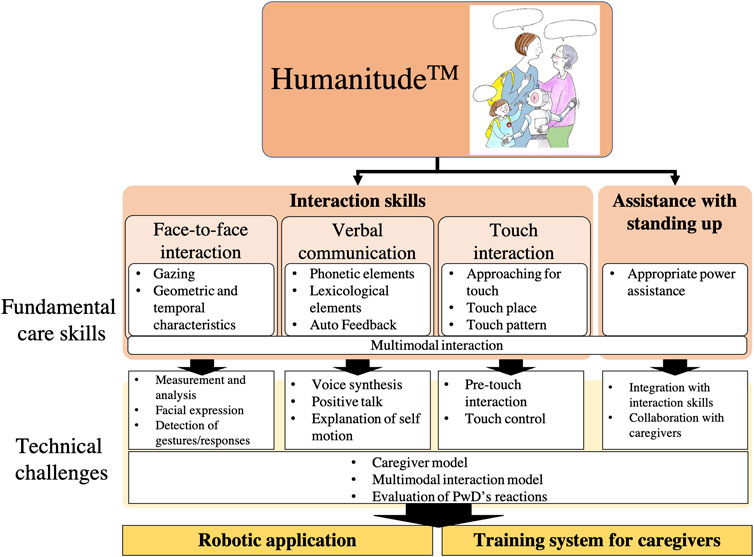

Due to cognitive and socio-emotional decline and mental diseases, senior citizens, especially people with dementia (PwDs), struggle to interact smoothly with their caregivers. Therefore, various care techniques have been proposed to develop good relationships with seniors. Among them, Humanitude is one promising technique that provides caregivers with useful interaction skills to improve their relationships with PwDs, from four perspectives: face-to-face interaction, verbal communication, touch interaction, and helping care receivers stand up (physical interaction). Despite advances in elderly care techniques, current social robots interact with seniors in the same manner as they do with younger adults and therefore lack several important functions. For example, Humanitude emphasizes the importance of interaction at a relatively intimate distance to facilitate communication with seniors. Unfortunately, few studies have developed an interaction model for clinical care communication. In this paper, we discuss the current challenges in developing a social robot that can smoothly interact with PwDs and overview the interaction skills used in Humanitude as well as the existing technologies.

Introduction

Dementia is a leading cause of disability and dependency in senior citizens worldwide (Livingston et al., 2017). This syndrome not only weakens such cognitive functions as memory and reasoning but also induces various problems collected under the rubric of the behavioral and psychological symptoms of dementia (BPSD), such as agitation, aberrant motor behavior, anxiety, depression, apathy, delusions, and hallucinations. Although guidelines for BPSD recommend that caregivers pay more attention to such people, strict adherence to these guidelines complicates caregiving and increases caregivers’ burden and costs. Therefore, BPSD reduction is an important issue for caregivers who want to build good relationships with people with dementia (PwDs) and reduce their own burden (Selwood et al., 2007; Cerejeira et al., 2012).

Although pharmacological interventions can reduce BPSDs, non-pharmacological interventions are generally preferred as the first option to avoid adverse events and polypharmacy (Cerejeira et al., 2012). Among the many non-pharmacological interventions that have been proposed, researchers have identified the following consistent characteristics in the more effective ones: they are usually multimodal, provide individualized care, and train caregivers in skills that include optimizing communication with PwDs (Livingston et al., 2017).

Robotic technologies, which have mainly been introduced in elderly care to provide physical and mental support for seniors in multimodal individualized care, are usually used in rehabilitation and physical support. More recently, robot therapy is attracting attention as a form of mental support to reduce BPSDs in PwDs through interaction with pets or humanoid social robots (Broadbent et al., 2009). Previous studies identified various positive effects (Broekens et al., 2009; Robinson et al., 2013; Shibata and Coughlin, 2014; Pu et al., 2019), including improved psychological and physiological well-being (Mordoch et al., 2013; Costescu et al., 2014), better QoL (Chu et al., 2017), fewer problematic behaviors (Moyle et al., 2014; Jøranson et al., 2015), and less depression and anxiety (Petersen et al., 2016). Many of these studies use pet-like robots; such non-humanoid robots are preferred by seniors with mild dementia (Wu et al., 2012). But since PwDs and their caregivers want social robots that have verbal communication functions (Korchut et al., 2017), achieving social robots that satisfy the desire of PwDs for human interaction is essential. This idea is also supported by the fact that human interaction is the most enjoyable and long-lasting intervention for PwDs (Cohen-Mansfield et al., 2010). Therefore, creating social robots that can satisfactorily interact with humans is a major challenge in dementia care. However, it remains unclear how to design interactions with PwDs to reduce BPSD and what the technical challenges are.

Communication with PwDs is often difficult, resulting in caregivers who grudgingly provide treatment without much respect for their patients. Inappropriate communication is a main cause of BPSD (Cohen-Mansfield, 2000), and teaching caregivers how to effectively communicate with dementia patients ameliorates BPSDs (Selwood et al., 2007; Livingston et al., 2017). Improved communication skills among caregivers with their dementia patients can also reduce their own burdens (Adelman et al., 2014). This reduction is a critical factor in maintaining good relationships between seniors and their caregivers. Various approaches have been proposed for communication techniques to improve relationships with seniors to provide care based on personal dignity.

Humanitude™ is a multimodal method of comprehensive care that is attracting attention because it provides specific techniques for communicating with PwDs in actual care settings (Gineste and Pellissier, 2007; Honda et al., 2014). Humanitude consists of four care elements: three for communication (seeing, talking, and touching) and one that assists the physical act of standing. BPSDs and refusal of care can be reduced in PwDs with this methodology (Biquand and Zittel, 2012; Simões et al., 2012; Ito et al., 2015; Honda et al., 2016; Melo et al., 2017b; Melo et al., 2017a; 2019; 2020; Figueiredo et al., 2018; Henriques et al., 2019; 2020). Humanitude provides systematic care techniques to communicate with PwDs in nursing care. If we analyze, model, and implement such techniques in social robots, we can create interactions that reduce such symptoms in PwDs. By analyzing Humanitude, we also believe we can clarify the differences between skilled and novice human caregivers and create a training tool for human caregivers. However, although Humanitude has been recognized for its effects and has already been implemented in many nursing homes, the scientific validation of its effectiveness is still in its infancy. Therefore, there is limited information for researchers to understand Humanitude care. As a result, it is difficult to analyze, model, and implement Humanitude techniques in robots.

The purpose of this study is to summarize the key features of Humanitude’s communication skills and focus on the technical challenges that must be solved to realize them in robots, based on books written by the developers of Humanitude (Gineste and Pellissier, 2007) and the latest research by researchers who use it for care. We aim to develop social robots that have a good relationship with PwDs as well as evaluation systems that facilitate caregivers’ learning of communication skills in Humanitude. In the following, we first overview Humanitude and identify the key features of its care techniques. Then we summarize the technical challenges in achieving social robots that incorporate these techniques. Finally, we discuss two other key challenges: evaluating the internal states of dementia patients and enabling collaboration between human caregivers and robots in Humanitude care.

Humanitude™

Humanitude™ refers to a concept proposed in 1979 by Y. Gineste and R. Marescotti and the care techniques based on it. It emphasizes respect for the human dignity of the individuals who are receiving care and provides technical solutions for their caregivers and families. To date, Humanitude, which has been introduced in more than 600 hospitals and nursing homes in Europe, is beginning to carve out inroads in North America as well (Melo et al., 2017b). Several studies have shown significant reductions of aggressive behavior in patients (88.5%), less reliance on neuroleptics as well as cost effectiveness with a social return of investment (SROI: 4.07) (Ito et al., 2015; Pimentel and Martins, 2015; Honda et al., 2016).

Humanitude emphasizes the development of good relationships between caregivers and care receivers. The following are its three key communication elements: seeing (face-to-face interaction), talking (verbal communication), and touching (touch interaction) (Figure 1). For humans, maintaining a standing posture is important for spatial recognition, positive physiological effects, and individual self-consciousness (Lopez et al., 2008; Lopez and Blanke, 2010; Wilson et al., 2013). For this reason, the fourth element, assistance with standing up, is also crucial for care. Caregivers are directed to assess the stage of care needed by each PwD and assist him/her to stand and walk on his/her own as much as possible during care. In the following, we discuss these care elements in detail.

Face-to-Face Interaction

When we fail to obtain enough information about our environment due to a decline of sensory modalities, we tend to feel anxious and passive (Gineste and Pellissier, 2007). Therefore, the characteristics of sensory processing must be understood in seniors to communicate with them. In particular, face-to-face interaction plays an important role in initiating communication and conveying the impressions of another person. Humanitude facilitates communication and good relationships with PwDs by emphasizing three aspects in face-to-face interaction: eye contact, its geometric characteristics, and its duration.

Gazing (Eye Contact)

Gazing is a core element in the Humanitude skill system, which is mainly related to the following eye contact (mutual gaze) states: 1) extending eye contact, 2) maintaining it during communication, and 3) reducing durations without it. The objective of these skills is to help PwDs continue to pay attention to caregivers since the former are easily distracted by other stimuli, such as other individuals or objects. In Humanitude, these efforts to preserve continuous communication can lead to smoother interaction with PwDs.

In psychology, eye contact is the most basic state that indicates a readiness for communication with another person (Senju and Johnson, 2009). Doctors who exhibit patient-centered behavior make more eye contact with their patients during consultations than those who do not (Gorawara-Bhat and Cook, 2011). Caregivers must make eye contact with PwDs when they start caring for them and maintain it during their care. Establishing eye contact with another person in a social relationship also facilitates the memory of that person’s face (Conty, 2016; Lopis and Conty, 2019) and shared conversation (Fullwood and Doherty-Sneddon, 2006). Eye contact activates conversations with PwDs and supports their memories (Lopis et al., 2019). As a baseline, older people have difficulty with contrast vision and reduced binocular summation (Gillespie-Gallery et al., 2013), and they required four times longer than younger participants to recognize the target in a functional visual field test (Coeckelbergh et al., 2004). In addition to these normal physiological changes, the visual sensitivity of PwDs is reduced throughout the visual field (Trick, 1995). Therefore, Humanitude suggests that caregivers proactively engage in eye contact with them.

Geometric and Temporal Characteristics in Face-to-Face Interaction

Humanitude points out the importance of geometric properties when caregivers make eye contact with PwDs. The impression perceived by PwDs depends on how the eye contact is made. When eye contact is established from the front and at the same level, it creates a positive impression that conveys security, trust, and friendship. Eye contact from the side of the face without directly looking at the patient or from above creates a negative impression, such as anxiety, frustration, and anger. Physicians who behave in a patient-friendly manner often make eye contact at the same level as the patient (Gorawara-Bhat and Cook, 2011). Humanitude emphasizes the importance of making such eye contact with patients from the front and at the same level or from below.

Humanitude also discusses the distance between caregivers and PwDs during eye contact. Close proximity communicates intimacy and trust to PwDs. It also draws the attention of a PwD who has poor visual and attentional conditions to the caregiver. Humanitude recommends an eye contact distance that is closer than the distance generally observed in adult communication: around 20–30 cm, depending on the cognitive level of the care receiver. Caregivers should approach PwDs from the front because this is a reasonable way to show their face to patients, based on findings about face recognition in Alzheimer’s disease, which is the most common type of dementia (Adduri and Marotta, 2009).

Humanitude also defines eye contact duration. Caregivers should maintain it for longer than 0.5 s so that PwDs become aware that they are being watched. Although prolonged eye contact is recommended, silence can also become uncomfortably eerie to seniors. Caregivers should respond by talking to a PwD within 2 s after establishing eye contact (Honda et al., 2014).
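The two timing rules above (eye contact held for longer than 0.5 s, and speech starting within 2 s of establishing it) can be checked mechanically once eye contact episodes have been timestamped. The following is a minimal sketch under stated assumptions: the `EyeContactEpisode` structure and the function name are hypothetical and introduced only for illustration; only the two thresholds come from the text.

```python
from dataclasses import dataclass
from typing import Optional

# Thresholds taken from the text; everything else here is illustrative.
MIN_EYE_CONTACT_S = 0.5   # eye contact should last longer than 0.5 s
MAX_SPEECH_DELAY_S = 2.0  # caregiver should start talking within 2 s


@dataclass
class EyeContactEpisode:
    """One annotated mutual-gaze episode (hypothetical data structure)."""
    start_s: float                   # time at which mutual gaze was established
    end_s: float                     # time at which mutual gaze was broken
    speech_onset_s: Optional[float]  # time the caregiver began speaking, if at all


def check_episode(ep: EyeContactEpisode) -> dict:
    """Compare one episode against the two timing rules."""
    duration = ep.end_s - ep.start_s
    long_enough = duration > MIN_EYE_CONTACT_S
    spoke_in_time = (
        ep.speech_onset_s is not None
        and 0.0 <= ep.speech_onset_s - ep.start_s <= MAX_SPEECH_DELAY_S
    )
    return {
        "duration_s": duration,
        "long_enough": long_enough,
        "spoke_in_time": spoke_in_time,
    }


if __name__ == "__main__":
    # Caregiver holds eye contact for 3 s and starts speaking 1.2 s in.
    print(check_episode(EyeContactEpisode(10.0, 13.0, 11.2)))
    # A brief glance with no speech fails both checks.
    print(check_episode(EyeContactEpisode(0.0, 0.3, None)))
```

Such a check could serve as one feedback signal in a caregiver training system; how the episodes are detected in the first place is a separate problem.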

Verbal Communication

The second communicative element is verbal communication, which has two categories. One consists of phonetic elements such as tone, speed, and volume; the other consists of lexicological elements, i.e., vocabulary. In care settings, all verbal communication is conveyed together with non-verbal information. Generally, most of the topics raised by caregivers concern their work, and their conversations are brief. In fact, one report described that verbal communication lasted only 2 min each day for a bedridden dementia patient in a long-term care facility (Gineste and Pellissier, 2007; Honda et al., 2014). Caregivers often become discouraged by laconic PwDs who fail to respond or give irrelevant answers. Humanitude argues that good communication skills must be developed based on both phonetic and lexicological elements as well as a technique called Auto Feedback, in which caregivers talk continuously even when care receivers respond inadequately.

Phonetic Elements: Tone, Speed, and Volume

Phonetic information includes tone, speed, and volume. PwDs have disproportionate atrophy in the medial, basal, and lateral temporal lobes and the medial parietal cortex, which leads to difficulty in understanding the meaning of linguistic information (McKhann et al., 2011). However, their amygdala essentially maintains its function and analyzes the emotional meaning of paralinguistic information. Hearing loss is the risk factor with the highest population attributable fraction for dementia (Livingston et al., 2017, 2020). Because of this, people taking care of PwDs tend to speak in a loud, high-pitched voice. Such tones convey negative emotional prosody to PwDs. To avoid this, the United Kingdom National Health Service¹ recommends a calm, slow, gentle, and low voice, which is also recommended in Humanitude.

Lexicological Elements: Positive Words

Another category of verbal communication is lexicological elements. Vocabulary is critical for conveying positive information. The words used by caregivers generally communicate requests such as “Please open your mouth” or “Hold still,” or apologies like “I’m sorry that my procedure is causing you pain.” To establish good relationships with PwDs, selecting positive words is key. The same care-related message can be delivered in different words that add a positive emphasis: “Thank you for keeping your mouth open, that is a big help.”

During and after the caregiving process, the caregiver must continue to give positive feedback to seniors about the care’s content and their reactions. For example, after the caregiver helps a PwD change clothes, a positive explanation can connect this action to a good feeling: “New pajamas make you feel so comfortable, don’t they?” “I like to see you smiling at me.”

Auto Feedback

Maintaining verbal communication is difficult when the other person fails to respond. Silence between patients and healthcare professionals falls into three categories: awkward, invitational, and compassionate (Back et al., 2009). Failed verbal communication with PwDs belongs to the awkward category. Sensory deprivation speeds up the degenerative changes normally associated with aging, enhances the loss of functional cells in the central nervous system (Oster, 1976), and worsens dementia (Humes and Young, 2016). To minimize sensory deprivation, the verbal information given to PwDs should be maximized. Even with no response from PwDs, a caregiver must keep talking with positive language to avoid silence. Proposed by Humanitude, “Auto Feedback” is a skill for maintaining verbal communication during caregiving in which caregivers describe their own caregiving actions. By talking about their own actions, caregivers can present verbal stimuli to the PwD without depending on her/his responses, creating a chance to elicit a response. In addition, by explaining their own actions during care, caregivers can increase the feelings of security sensed by PwDs about their care.

Auto Feedback consists of three phases. Caregivers first ask the PwDs to act voluntarily since a basic principle of Humanitude is to encourage them to move as much as possible by themselves. If the person does not respond to the caregiver’s words, the caregiver next tells the care receiver what is going to be done. For example, caregivers must clearly describe to their patients exactly what they are going to do before actually doing it: “I’m going to wash your arms now.” Then they present explanatory information about what is being done, for example, “Now I’m raising your left arm.” Although such a concise and clear communication method appears quite simple, caregivers require an average of one to three months of training to master it (Gineste and Pellissier, 2007). Auto Feedback can increase verbal communication during daily care by four to six times, which can prevent sensory deprivation in PwDs.
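The three phases described above (request, announcement, description) can be sketched as a simple utterance planner, for instance as a starting point for a robot dialogue script or a training checklist. This is only an illustrative sketch: the function name and the request sentence are hypothetical, and the simplification that the sequence ends when the PwD responds to the request is an assumption, not part of Humanitude as described in the source.

```python
from typing import List


def auto_feedback_utterances(
    request: str,
    announcement: str,
    descriptions: List[str],
    responded: bool,
) -> List[str]:
    """Return the utterances for one care action, in spoken order.

    Phase 1: ask the person to act voluntarily.
    Phase 2: if there is no response, announce what will be done.
    Phase 3: describe what is being done while doing it.
    Assumption (for illustration only): if the person responds to the
    request, they perform the action themselves and phases 2-3 are skipped.
    """
    utterances = [request]            # phase 1 is always spoken
    if responded:
        return utterances
    utterances.append(announcement)   # phase 2
    utterances.extend(descriptions)   # phase 3
    return utterances


if __name__ == "__main__":
    script = auto_feedback_utterances(
        request="Could you raise your left arm for me?",   # hypothetical wording
        announcement="I'm going to wash your arms now.",    # example from the text
        descriptions=["Now I'm raising your left arm."],    # example from the text
        responded=False,
    )
    for line in script:
        print(line)
```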

Touch Interaction

Caregivers commonly touch PwDs in various daily support activities, such as changing clothes, bathing, and feeding. Touching plays an important role in communication with them through a mechanism in which the activation of c-tactile afferents leads to oxytocin release (Walker et al., 2017). In Humanitude, there are three touch categories. The first is a validating touch (pleasant touch) that builds and maintains good relationships with others, such as hugs and handshakes. The second is an aggressive touch (unpleasant touch), such as hitting or pulling, which causes pain. The last type is a necessary touch (unpleasant but acceptable in the context), which is often uncomfortable but required during medical examinations or dental treatments.

Touch interaction is another opportunity for communication to develop good relationships. A touch conveys positive or negative meaning to the other person, depending on the kind of touch and how it is performed (Hertenstein et al., 2009). Since touching behavior may infringe on a person’s privacy, e.g., changing diapers or potentially invasive medical procedures, a friendly attitude and an intimate relationship must be maintained before and during such touching. For this reason, Humanitude specifies techniques for how to touch PwDs during care.

Approaching for Touch

Due to their cognitive decline, PwDs have difficulty understanding necessary touches. Caregivers must therefore consider what message is being conveyed by their touch. Aggressive touches are never acceptable and must be avoided, and necessary touches must be made as comfortable as possible. For example, to avoid conveying negative information, caregivers should not grab the arm of a PwD from above. Instead, they should approach the arm from below to support it.

Touch Place

The place that is touched is important in Humanitude. Tactile stimuli are received by the brain’s somatosensory cortex. As Penfield describes, the size of the receptive area depends on the body part. The area corresponding to the hands, face, and mouth is large; the area corresponding to the legs and arms is small (Penfield and Boldrey, 1937). Even if we touch a person in the same way, the effect on his/her brain will differ depending on which part is touched. Therefore, to avoid startling a PwD with too much sudden information, caregivers should first touch the parts that convey less information, such as the upper arms, shoulders, and back. Sensitive areas (the hands, the face, and the genital region) should only be touched when absolutely necessary.

Touch Pattern

Like caressing a baby or loved one, a slow, gentle touch over a large area is fundamental in Humanitude; it is intended to activate c-tactile neurons (Walker et al., 2017). Such an approach to touching is necessary so that PwDs have positive impressions about caregivers. Humanitude recommends touching with the fingertips first, followed by the palm, like an airplane landing. If a touch is too light, it might connote an unwelcome sexual implication or an awkward reluctance to touch; a certain amount of force should be applied. In Humanitude, the applied force during a touch should fall within a certain range. It is also recommended that one of the hands always remain in contact with the PwD during care to keep conveying a positive relationship. When the touch is finished, the hands should leave the body in the reverse of the order in which the touch was started, like an airplane taking off.

Assistance With Standing up

Humans have the ability to stand up and walk. The harmful effects of prolonged bed rest have been pointed out (Allen et al., 1999), and healthy older adults showed significant functional decline after 10 days of bed rest (Kortebein et al., 2008). More information about position and perception is obtained from peripheral receptors in an upright position than in a supine position (Lopez and Blanke, 2010). In addition, patients with altered mental status showed significantly more arousal and awareness in an upright position than when bedridden. The main goal of Humanitude is to maintain the health of seniors and allow them to live a life with dignity by helping them stand and walk and accumulate a total of 20 min of standing per day to prevent them from becoming bedridden. For this purpose, daily care provides important opportunities for maintaining health since caregivers can assist seniors with standing and walking during care. In fact, Humanitude offers many techniques that provide walking assistance. The key is letting seniors stand and walk by themselves to maximize their muscle strength.

During standing and walking assistance, the caregiver should always use at least two of the three communication modes (visual, verbal, and tactile interaction) to present consistent and positive stimuli. Consistent presentation of positive stimuli among multiple sensory inputs is crucial. Since PwDs often suffer cognition and perception declines, information that is presented in a single modality may be insufficient. Information must be presented in a multimodal manner to facilitate the transmission of positive information to seniors.
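As a small illustration of this rule, the check below reads the requirement as "at least two of the three modalities are active at any moment during assistance." The function and modality labels are hypothetical names chosen for this sketch; how the active modalities would be sensed (e.g., gaze detection, voice activity detection, touch sensors) is outside its scope.

```python
# Modality labels are illustrative names for the three communication modes.
MODALITIES = frozenset({"visual", "verbal", "tactile"})


def is_multimodal(active: set) -> bool:
    """Return True if at least two of the three modalities are active."""
    unknown = set(active) - MODALITIES
    if unknown:
        raise ValueError(f"unknown modalities: {sorted(unknown)}")
    return len(set(active)) >= 2


if __name__ == "__main__":
    print(is_multimodal({"visual", "verbal"}))  # eye contact while talking
    print(is_multimodal({"tactile"}))           # touch only: insufficient
```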

Multitasking ability declines with age, especially in PwDs (Clapp et al., 2011). When multiple caregivers talk to a PwD simultaneously during care, the person becomes confused by the overwhelming amount of information. Therefore, in Humanitude, the roles must be divided so that the stimulus of each modality comes from the same person. For example, in bathing care, caregiver A is in charge of face-to-face interaction and verbal communication to draw the attention of the care receiver, while caregiver B washes the body using the touch techniques without speaking. This strategy of Humanitude is called “the master and the hidden player.”

Care Procedure to Build Intimate Relationships With Seniors With Dementia

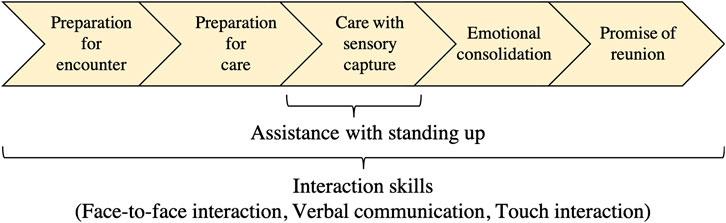

To make care more acceptable, Humanitude provides all of its care in one sequence that consists of five stages: 1) preparation for an encounter, 2) preparation for care, 3) care with sensory capture (providing care with a multimodal approach), 4) emotional consolidation, and 5) a promise of reunion. In all the stages, the interaction techniques play an important role in building good relationships with PwDs and conducting care smoothly, while the assistive techniques for standing up during care are valuable for providing somatosensory input and maintaining muscle strength (Figure 2). First, during preparation for an encounter, caregivers make the PwDs aware of the caregiver’s presence. After they become more aware, the caregiver prepares for the care at the second stage by using multimodal communication to reach an agreement on care. The key point in the first and second stages is that the caregiver should give the PwD the impression that he/she has come to see them not as a work responsibility but to spend a good time together. The third stage provides the actual care by presenting positive stimuli with multimodal communication to make them realize that the care is a good experience. When the third stage is completed, the fourth and fifth stages build positive impressions of the care in the memory of the dementia patients and express that the caregiver also enjoyed spending the time with them. Since emotional memories last longer even for PwDs, adequately providing stages four and five increases the likelihood that the next care will be more readily accepted.
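For an artificial system, the five-stage sequence can be represented as an ordered enumeration, which makes it straightforward to step through a care session or to verify that an annotated session followed the prescribed order. The sketch below is an illustration only; the class and function names are hypothetical, and the stage labels are taken from the list above.

```python
from enum import IntEnum
from typing import List, Optional


class CareStage(IntEnum):
    """The five Humanitude care stages, numbered as in the text."""
    PREPARATION_FOR_ENCOUNTER = 1
    PREPARATION_FOR_CARE = 2
    CARE_WITH_SENSORY_CAPTURE = 3
    EMOTIONAL_CONSOLIDATION = 4
    PROMISE_OF_REUNION = 5


def next_stage(stage: CareStage) -> Optional[CareStage]:
    """Return the stage that follows `stage`, or None after the last one."""
    if stage is CareStage.PROMISE_OF_REUNION:
        return None
    return CareStage(stage + 1)


def follows_full_sequence(observed: List[CareStage]) -> bool:
    """Check that an observed session contains all five stages in order."""
    return observed == list(CareStage)


if __name__ == "__main__":
    stage: Optional[CareStage] = CareStage.PREPARATION_FOR_ENCOUNTER
    while stage is not None:
        print(stage.value, stage.name)
        stage = next_stage(stage)
```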

Humanitude also emphasizes the importance of the caregiver’s contingent responses in interacting with PwDs throughout all stages. Adaptive/contingent interaction, in which the caregiver pays attention to the PwD’s behavior and responds contingently, is one of the keys to realizing good care interaction with PwDs, especially those who are non-verbal. Recent studies on interventions for PwDs show that adaptive interaction that uses non-verbal modalities for communication has the potential to promote and support communication between people living with dementia who cannot speak and those who care for them (Ellis and Astell, 2017).

Technical Challenges Toward Artificial Systems That Incorporate Humanitude Techniques

We have identified and described the basic elements of the care techniques proposed in Humanitude. Caregivers usually acquire them through special training. If we analyze and model these techniques, we can develop social robots that provide more elderly-centered support as well as a supportive system that helps human caregivers acquire them.

To implement Humanitude techniques in social robots, researchers can address the development of three types of robotic systems: a robot as a caring teacher, a robot as a second caregiver in a team with a human, and a robot as a primary caregiver. As a first step, robots would work as caring teachers to improve human caregivers’ abilities by sensing their actions and providing feedback based on modeled knowledge of Humanitude techniques. The reason is that current robot systems do not yet have sufficient physical support capabilities for caregiving, although they have rich sensing abilities to observe people’s behaviors. Next, as hardware capabilities advance, robots would behave as second caregivers, supporting human caregivers through physical collaboration. Once their physical capabilities have increased further, robots will work as primary caregivers, but this is still far from the current situation. Based on these considerations, modeling Humanitude techniques will first provide the essential knowledge for robots to behave as caring teachers.

However, it remains unclear to what extent current technologies can satisfy the techniques and what technologies should be developed to implement Humanitude-based care into artificial systems. In this section, we discuss the technical issues that must be solved to achieve Humanitude care techniques and know-how in artificial systems.

Technical Challenges for Face-to-Face Interaction Analysis

Measurement of Face-to-Face Interactions

For the purpose of human communication analysis [e.g., detection of Autism Spectrum Disorder (ASD) or social interactions], several computer-vision-based methods have been developed for detecting eye contact (mutual gaze) (Marin-Jimenez et al., 2019) or joint attention (Recasens et al., 2015). These methods use third-person videos recorded by a camera operator.

Another approach is the use of first-person videos, which are taken from the viewpoint of a caregiver from a frontal direction using a head-mounted wearable camera (first-person camera). For a social robot, first-person videos can be captured by a camera embedded in the eye-pupil or the forehead. From these videos, face-to-face postures (distances or angles) or eye contact states can be obtained using facial detection and/or machine learning techniques (Chong et al., 2020; Mitsuzumi et al., 2017). Similar systems such as wearable eye trackers (Chong et al., 2017) or proximity sensors (Hachisu et al., 2018) can be used for face-to-face interaction analysis as well. One advantage of a first-person camera is that it requires no third-person camera operator. This is quite important for communication analysis with PwDs.
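To illustrate how face-to-face distance and angle might be derived from a first-person video frame, the sketch below applies the standard pinhole camera model to the bounding box returned by a face detector. This is a generic geometric approximation, not the method of the studies cited above. The assumed physical face width, the focal length, and all function names are assumptions made for this example, and a real system would need camera calibration and a more robust pose estimate.

```python
import math

# Assumption: a nominal adult face width used to convert pixels to distance.
ASSUMED_FACE_WIDTH_CM = 15.0


def estimate_face_distance_cm(
    face_width_px: float,
    focal_length_px: float,
    face_width_cm: float = ASSUMED_FACE_WIDTH_CM,
) -> float:
    """Pinhole model: distance = focal_length * real_width / image_width."""
    if face_width_px <= 0:
        raise ValueError("face_width_px must be positive")
    return focal_length_px * face_width_cm / face_width_px


def horizontal_offset_deg(
    face_center_x_px: float,
    image_center_x_px: float,
    focal_length_px: float,
) -> float:
    """Angle of the face center relative to the camera's optical axis."""
    return math.degrees(
        math.atan2(face_center_x_px - image_center_x_px, focal_length_px)
    )


if __name__ == "__main__":
    # Hypothetical values: 600 px focal length, detected face 300 px wide,
    # centered in a 640 px wide image.
    print(estimate_face_distance_cm(face_width_px=300, focal_length_px=600))
    print(horizontal_offset_deg(320, 320, 600))
```

An estimate of this kind could then be compared with the close interaction distance that Humanitude recommends, although the accuracy at such short range would have to be validated.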

Analysis of Face-to-Face Interaction

Finding essential elements in face-to-face communication with PwDs is vital for smooth interaction for both caregivers and social robots. Thus, a number of studies have analyzed conversations with PwDs. O’Brien et al. described the conversational analysis approach for evaluating the skills of care learners before and after taking care-training courses. They compared the occurrence of communication techniques before/after training through video analysis and showed their effectiveness (O’Brien et al., 2018). Ishikawa et al. developed an analysis and training system for interaction with PwDs where an annotator rated the quality of the skill elements (gaze, speech, touch, and comprehensibility) for a conversational video. Trainees learn current interaction behavior through the system’s visualized feedback (Ishikawa et al., 2016).

Little work has been conducted on automated analysis of PwD interactions. Nakazawa et al. used first-person video recorded from the caregiver’s viewpoint during communication with a PwD (Nakazawa et al., 2020). The relative facial posture (distance and angles) was automatically detected from the video, and skill levels were distinguished using statistical analysis. They identified behavioral differences among care experts, intermediate-level caregivers, and novices. Expert and intermediate-level caregivers looked at the faces of care receivers more frequently; the face-to-face distances and rotations also differed among the three groups.

Facial Expression of PwD and Caregivers

Since facial expressions play a key role in PwD interactions, their automated recognition is a vital technique both for evaluating levels of care skills and for building communication robots that have true interactivity. Facial expression analysis has two roles.

One is the facial expression analysis of care receivers/PwDs for sensing their status/mood. However, existing facial-expression recognition algorithms are mainly tuned for younger adults and posed (non-natural) conditions (Ebner et al., 2010). Therefore, few reliable techniques are applicable for recognizing the spontaneous facial expressions of PwDs. Collecting and annotating a facial expression dataset of PwDs is an important task to encourage such studies. The second role is capturing the facial expressions of caregivers as they interact with PwDs. Although much of the research literature has described the importance of positive facial expressions while communicating with PwDs, the relationship between the existence/magnitude of caregivers’ positive/negative facial expressions and the reactions of care receivers remains unknown. Further studies must explore the effects of caregivers’ facial expressions on smooth communication with PwDs.

Detection of Facial Gestures/Responses of PwDs

Since PwDs suffer from impaired motor skills, the amplitudes of their gestures and facial expressions are quite small. Therefore, distinguishing between purposive and non-purposive movements is quite difficult, even for human caregivers. Moreover, PwDs sometimes express their responses in such non-typical ways as blinking. Thus, novice caregivers often overlook these signals, a situation that leads to miscommunication with PwDs. Automated detection of such nuanced PwD responses is crucial and challenging for AI and robotics. We have identified the following three key technical points for these tasks: 1) constructing well-annotated datasets, including videos from multiple viewpoints, 2) developing algorithms that detect slight movements and discriminate between true signals and non-purposive behavior, and 3) temporal interaction analysis that takes the actions of caregivers into account for recognition.

Technical Challenges for Verbal Communication

One of the crucial challenges for verbal communication is synthesizing a voice that makes PwDs feel comfortable, since Humanitude suggests that a robot must speak in a calm, slow, gentle, and low voice. There have been various efforts to synthesize emotional speech (Schröder, 2001). Some commercial software can already synthesize emotional speech that expresses such positive emotions as happiness and calmness. However, it is unclear whether these synthesized expressions help make PwDs feel comfortable, so the effect of existing emotional speech on PwDs must be verified. Another issue is synthesizing emotional speech based on the speech of caregivers who have learned Humanitude. To achieve this, we need to collect their speech during interactions with PwDs and build a “Humanitude speech” corpus. Such a corpus would help us build speech synthesis systems for care robots using such machine learning methods as hidden Markov models (Yamagishi et al., 2005; Nose and Kobayashi, 2013; Lorenzo-Trueba et al., 2015) and deep neural networks (DNNs) (Lorenzo-Trueba et al., 2018).

So that seniors experience their care positively, the robot also needs to express appropriate positive evaluations for each element of care. A database of care situations and their corresponding positive comments is required. Honda et al. developed a system that analyzes the multimodal behavior of Humanitude experts while they perform such care (Ishikawa et al., 2016; Honda et al., 2017). Such a system helps model positive utterances based on specific situations.

To achieve Auto Feedback, which is one of the main techniques for verbal communication in Humanitude, the robot needs to explain its actions. The simplest implementation of this function is to prepare predefined scripts for each task in advance and play them back, since daily care tasks are determined to some extent. This approach is especially effective for informing PwDs about what the caregivers are going to do. But such an implementation cannot adapt to accidents during care. Another approach is to give the robot the ability to explain its own actions. Little research has investigated the possibility of learning the relationship between a robot’s actions and their corresponding explanations (Taniguchi et al., 2019). Plappert et al. proposed bidirectional mapping between the whole-body motion of a humanoid robot and language using deep recurrent networks (Plappert et al., 2018). Yamada et al. proposed paired recurrent autoencoders that bidirectionally translate a robot’s actions and language (Yamada et al., 2018). Although these studies use the robot’s joint information as sensory input to the networks, a robot could be expected to explain the caregiver’s behavior and provide Auto Feedback by combining their networks with recent methods that estimate human posture from camera images, such as OpenPose (Cao et al., 2021).
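The script-playback approach can be sketched as a simple lookup table. The task names, phases, and phrases below are hypothetical placeholders invented for this illustration; they are not taken from Humanitude materials. The sketch also makes the limitation explicit: an event with no prepared script yields no utterance.

```python
# Hypothetical care tasks and phrases, for illustration only; real scripts
# would have to be authored with trained Humanitude caregivers.
AUTO_FEEDBACK_SCRIPTS = {
    ("wash_arm", "before"): "I am going to wash your right arm now.",
    ("wash_arm", "during"): "I am washing your arm. The water is nice and warm.",
    ("stand_up", "before"): "I am going to help you stand up.",
    ("stand_up", "during"): "You are standing up. You are doing very well.",
}

def auto_feedback(task, phase):
    """Return the scripted utterance for a task phase, or None if none exists."""
    return AUTO_FEEDBACK_SCRIPTS.get((task, phase))

def narrate(task):
    """Yield the scripted utterances for a task in order ('before', then 'during')."""
    for phase in ("before", "during"):
        utterance = auto_feedback(task, phase)
        if utterance is not None:
            yield utterance
```

For example, `list(narrate("stand_up"))` returns the two standing-up phrases in order, while an unplanned event such as `auto_feedback("spilled_water", "before")` returns `None`, which is exactly the case that a learned action-to-language model would need to cover.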

In addition to these challenges for verbal communication in Humanitude, robots also require the basic ability to chat with PwDs to improve their communication. A robot needs to recognize questions, replies, and other statements from them. Since the speech features of PwDs differ from those of the general population (Toth et al., 2018), a speech recognition system must be created specifically for them (Russo et al., 2019).

Technical Challenges for Touch Interaction

Due to the recent development of various robotic hands and machine learning approaches, social robots will be able to physically and safely touch people in the near future. However, social robots also need to consider what kinds of touch behaviors are acceptable to people, which is a separate issue from whether a touch is physically safe. In fact, caregivers need to consider various factors to make their touches acceptable to patients, as described in section Touch Interaction. For social robots to apply Humanitude techniques, similar factors must be addressed. This section summarizes several essential factors for acceptable touch interactions from robots to people in the Humanitude context.

First, we focus on the situation in which one party is approaching to touch another, i.e., the before-touch situation. Based on the manners and considerations described in section Approaching for Touch, we surveyed related works on before-touch interaction between people and robots. These past studies suggest that social robots should consider pre-touch situations for natural and acceptable touch interactions, just as they do for conversational interactions. For example, before talking with another person, people adjust their positional relationships based on personal space (Hall, 1966). Many social robots also consider such positional relationships before interacting with people as pre-conversational interactions (Obaid et al., 2016; Li et al., 2019; Saunderson and Nejat, 2019). Following such concepts, robotics researchers investigated the pre-touch reaction distance around the face between people and reported that the average distance is about 20 cm (Shiomi et al., 2018). They developed an android robot that reacted to the touch behaviors of people and concluded that a 20-cm threshold as a pre-touch reaction distance is more natural and human-like than using personal space as the pre-touch reaction distance. This knowledge is useful for designing a robot’s approaching behaviors before touch-based care.
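A pre-touch reaction rule of this kind reduces to a distance check. The sketch below uses the roughly 20-cm value reported in the text as a default threshold; the function name, the coordinate inputs, and the assumption that hand and face positions are available in metres in a shared frame are ours.

```python
import math

# About 20 cm, the average pre-touch reaction distance around the face
# reported in the text (Shiomi et al., 2018).
PRE_TOUCH_DISTANCE_M = 0.20

def within_pre_touch_distance(hand_pos, face_pos, threshold_m=PRE_TOUCH_DISTANCE_M):
    """Return True when an approaching hand is inside the pre-touch distance.

    hand_pos, face_pos: (x, y, z) positions in metres in a shared coordinate
    frame (an assumption of this sketch; any tracker could supply them).
    """
    return math.dist(hand_pos, face_pos) <= threshold_m
```

A robot could use the same check in the opposite direction, for instance by slowing its hand or announcing the touch before its hand crosses this boundary around the care receiver's face.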

In the before-touch situation, as described in sections Face-to-Face Interaction and Technical Challenges for Face-to-Face Interaction Analysis, gaze behaviors are also important because related works reported that a robot’s gaze during touch interaction conveys its intention and changes the impressions of the people being touched. For example, Hirano et al. investigated the effects of gaze behaviors and touch timing when a robot touches participants (Hirano et al., 2018). They reported that participants preferred a gaze behavior that looked only at their faces during a touch over one that looked at their faces, then their hands, and then returned to their faces. Although this study did not investigate the effects of the duration of looking at faces, its results suggest the importance of face-to-face interaction as promulgated in Humanitude. Another study investigated the effects of the robot’s gaze height and speech timing for touching in a nursing context (Shiomi et al., 2020a). Before-touch speech timing was preferred to after-touch speech timing, although the gaze height did not significantly improve impressions of the robot-initiated touches. Interestingly, this speech timing result contradicted a past study that concluded that after-touch timing was preferred by participants in robot-initiated touch situations in a nursing context (Chen et al., 2014). Although these studies reported different results on speech timing in touch situations, we believe that before-touch speech timing is better for a Humanitude context because after-touch speech timing might convey negative impressions to patients (Gineste and Pellissier, 2007; Honda et al., 2014).

We described the importance of considering touch place and patterns in the context of Humanitude in sections Touch Place and Touch Pattern. In fact, several human-human and human-robot touch interaction studies investigated how touch place and patterns influence perceived emotions, intimacy, and comfort. For example, human science literature has investigated the effects of touching speed on impressions of comfort and reported that a rate of 5 cm/s was evaluated more positively than 0.5 or 50 cm/s (Essick et al., 1999). This knowledge is widely used in the human-robot interaction research field (Shiomi et al., 2017a). Another study developed a human-imitation hand to investigate the subjective and physiological effects of gentle stroking motions by a robot and reported positive effects of stroke speed and rate (Ishikura et al., 2020). To create comfortable touches from robots, several researchers have focused on the effects of warmth. For example, Block et al. developed a robot that provided a warm hug using chemical warming packs and reported that the warmth improved the perceived impressions of its hugs (Block and Kuchenbecker, 2019). Another study reported that a robot’s skin temperature influences users’ perceptions and evaluations (Park and Lee, 2014). These studies provide rich knowledge for designing the body temperature of robots, particularly those that need to touch patients.
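The reported speed preference translates directly into timing for a stroking motion. The following minimal sketch computes how long a stroke should last at a target speed and estimates the mean speed of a recorded stroke; the function names and the sample format of (time, x, y) contact points are assumptions for illustration, and only the 5 cm/s value comes from the text.

```python
import math

# Stroking speed evaluated most positively in the cited study (Essick et al., 1999).
PREFERRED_SPEED_CM_S = 5.0

def stroke_duration(length_cm, speed_cm_s=PREFERRED_SPEED_CM_S):
    """Return the time in seconds needed to stroke a given length at a given speed."""
    if speed_cm_s <= 0:
        raise ValueError("speed must be positive")
    return length_cm / speed_cm_s

def mean_stroke_speed(samples):
    """Return the mean speed in cm/s of a recorded stroke.

    samples: list of (t_seconds, x_cm, y_cm) contact points ordered by time
    (a hypothetical recording format).
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    # Sum the straight-line distances between consecutive contact points.
    path = sum(
        math.hypot(x1 - x0, y1 - y0)
        for (_, x0, y0), (_, x1, y1) in zip(samples, samples[1:])
    )
    elapsed = samples[-1][0] - samples[0][0]
    if elapsed <= 0:
        raise ValueError("samples must span a positive time interval")
    return path / elapsed
```

For instance, a 20-cm stroke along the forearm at 5 cm/s takes 4 s, which gives a concrete target when planning a robot's stroking trajectory.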

Touch place and patterns also influence how emotions are conveyed, which is essential for social robots to interact smoothly with PwDs. Robotics researchers have already focused on this topic, i.e., conveying emotions by touch, building on past studies about touch place and patterns in human-robot touch interaction. Past studies defined the relationships between arousal/valence emotion maps and touch characteristics (Russell, 1978) and enabled robots to convey emotions naturally by changing touch characteristics (Meng et al., 2019; Zheng et al., 2020b; Teyssier et al., 2020). Another study focused on conveying intimacy by touch (Zheng et al., 2020a), which will also be useful in caregiving contexts. Related to this topic, researchers have also investigated which body locations are touched depending on the emotion being expressed. One study investigated the relationship between the body locations that were touched and the emotions that were conveyed: e.g., hands/forearms express happiness, and hands/shoulders express sadness (Hertenstein et al., 2006). Another study extended this work to robots and reported that participants mainly touched the hands/forearms for both happy and sad emotions when interacting with robots (Andreasson et al., 2018; Lowe et al., 2018). This knowledge will help design the touch behaviors of social robots, particularly how robots convey emotions to patients during care. However, human science literature has shown that acceptable touch places differ depending on personal relationships (Suvilehto et al., 2015; Suvilehto et al., 2019). Therefore, social robots should also consider what PwDs find acceptable during touch interaction with them.

Finally, as described in section Touch Interaction, touch behavior conveys positive or negative meaning to people even if the touchers are social robots; moreover, we need to consider its effects on behavior change as well as on perceived impressions. Related works in human-robot touch interaction reported positive effects of robots’ touches on people’s behaviors, such as improved motivation for monotonous tasks (Shiomi et al., 2017a), persuasion (Bevan and Stanton Fraser, 2015), encouraging self-disclosure and pro-social behaviors (Shiomi et al., 2017b; 2020b), and stress-buffering (Shiomi and Hagita, 2017), similar to human-human touch interactions. Since these studies were conducted with relatively young participants and not PwDs, additional evaluations with PwDs under Humanitude contexts are critical for applying such knowledge in care contexts.

Technical Challenges for Assistance With Standing up

Many efforts have investigated using robots to assist seniors to stand and walk on their own, which is a major goal of Humanitude (Martins et al., 2012; Cifuentes and Frizera, 2016; Geravand et al., 2017; Ferrari et al., 2020; Takeda et al., 2020). Robotic technologies already exist that help PwDs stand or walk by themselves. However, few studies exist on systems that assist PwDs using the interaction techniques in Humanitude to produce feelings of comfort and positive impressions of the care. For example, Takeda et al. proposed a sit-to-stand support system that used verbal guidance to inform users when assistance would begin. Healthy adult users felt comfortable when the start of the robot’s movement was indicated by voice (Takeda et al., 2020), although the system was not evaluated with healthy seniors or PwDs. Providing a feeling of comfort through multimodal information in standing and walking is critical for PwDs.

If a robot conveys a feeling of comfort in a multimodal manner, it can provide Humanitude care by collaborating with human caregivers. This allows caregivers to divide interaction techniques with it; the robot is responsible for the skills that are difficult for a caregiver to acquire but easy for the robot to implement. For example, while assisting a PwD to stand or walk, the robot can perform Auto Feedback, which describes what the human caregiver is going to do and will soon be doing while the human caregiver makes eye contact and gently touches the person.

Technical Challenges for Modeling Care Procedure in Humanitude

To date, no robot can perform all of the stages of the care procedure in Humanitude. Therefore, care techniques must be implemented in social robots to cover all stages, in addition to such advanced cognitive functions as the recognition of seniors and their environments and the physical functions needed to conduct actual care. Humanitude provides appropriate communication strategies at each stage, which could be implemented in a robot. However, people have different impressions of a robot depending on its appearance and size. In fact, a study that presented pictures of various social robots to PwDs concluded that robots that share some traits with humans/animals and machines are more attractive than humanoid robots (Wu et al., 2012). For robots whose appearances differ from humans, their interactions with PwDs may differ from those between PwDs and caregivers. As researchers have done in various HRI studies (Kahn et al., 2008; Mutlu and Forlizzi, 2008; Lee et al., 2010), new interaction patterns must be investigated with PwDs based on Humanitude.

We also need to address the development of a system that enables contingent interaction with PwDs since the caregiver’s contingent response is essential, as described in section Care Procedure to Build Intimate Relationships With Seniors With Dementia. Such a system also has the potential to give a good impression to users (Yamaoka et al., 2007). Yamazaki et al. analyzed the interaction between caregivers and healthy older people in elderly day-care centers in Japan to develop service robots for elderly care, focusing on verbal and non-verbal behaviors surrounding requests (Yamazaki et al., 2007). Their qualitative analysis suggests that service robots in elderly day-care centers should display availability to multiple visitors simultaneously and respond contingently to individual visitors through such non-verbal information as gaze, head, and body orientation. We must analyze contingent interaction between caregivers and PwDs with quantitative methods to design the robot’s responses. However, we should also consider the possibility that the “effective” modality (speech, eye contact, facial expression, touch, or laughter) depends heavily on the individual characteristics of each PwD. This adaptability and reactive contingency in care interaction lies at the heart of tender-care communication. But so far, research on AI and robotics has not reached this stage.
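One simple quantitative measure of contingency is the fraction of PwD signals that are followed by a caregiver response within a short time window. The sketch below is only an illustration of such a measure, not a method from the cited studies; the function name, the event-time inputs, and the 2-second default window are all assumptions.

```python
from bisect import bisect_left

def contingency_rate(signal_times, response_times, window_s=2.0):
    """Return the fraction of signals followed by a response within window_s.

    signal_times: times in seconds of annotated PwD signals (e.g., a blink or
        a small gesture); response_times: times of caregiver responses.
    window_s: maximum delay counted as contingent (2.0 s is an arbitrary
        default chosen for this sketch).
    """
    if not signal_times:
        return 0.0
    responses = sorted(response_times)
    hits = 0
    for t in signal_times:
        # Find the first response at or after the signal.
        i = bisect_left(responses, t)
        if i < len(responses) and responses[i] - t <= window_s:
            hits += 1
    return hits / len(signal_times)
```

Computed separately per modality and per individual, a rate like this could help reveal which response channels are effective for a given PwD.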

Discussion

Possible Caregiving Applications for Social Robots With Humanitude Techniques

In this paper, we focused on Humanitude, an effective comprehensive care technique in elderly care, to create a robot that contributes to reducing BPSDs in PwDs and improves their QoL. As people age, their sensory and cognitive functions generally decline. This is especially evident in PwDs, with whom healthy adults often struggle to communicate. Gaze, touch, and speech, which are fundamental behaviors in our communication, are more crucial for PwDs because they often have difficulty understanding the meaning of linguistic information (McKhann et al., 2011). However, we cannot use the same behaviors as we do with healthy adults. We need to learn appropriate behaviors to communicate smoothly with PwDs. These appropriate behaviors are also helpful for social robots that communicate with PwDs. However, there is a paucity of studies about them in the existing HRI literature. Humanitude provides the care techniques necessary to facilitate smooth communication with PwDs in care and suggests several technical challenges for social robots that interact with them, an idea that has received little attention in robot-assisted elderly care.

Note that we do not aim to implement Humanitude techniques in social robots to replace human caregivers. Rather, we propose developing such robots as a tool to help caregivers build better relationships with PwDs and provide improved care for them. In Humanitude, one person can perform all the seeing, talking, touching, and standing assistance tasks, but multiple people can also share them. Therefore, by introducing at least a part of the Humanitude care techniques into the design of the interaction between robots and PwDs, robots can provide care in collaboration with caregivers. In other words, a robot based on the Humanitude concept will not only help build good relationships with PwDs but also facilitate the division of roles in Humanitude care with caregivers, improving the quality of care provided by caregivers and robots.

The introduction of Humanitude-based robots also provides a new perspective on Humanitude itself. Recent studies in robotics report that PwDs show high acceptance of social robots; robot therapy is another method to reduce BPSDs. One advantage of using social robots is that they can be designed so that their appearance and interaction patterns are attractive to PwDs. Perhaps a robot can be designed that can easily establish good relationships with PwDs. By introducing robots into Humanitude care, we may be able to create new methods in which robots and caregivers work together. Unfortunately, ethical issues emerge when robots are used in elderly care (Feil-Seifer and Matarić, 2011). To protect the dignity of PwDs, these issues must be discussed in actual care situations in cooperation with the caregivers and the families of PwDs (Stahl and Coeckelbergh, 2016).

Based on these considerations and the challenges described in section Technical Challenges Toward Artificial Systems That Incorporate Humanitude Techniques, we expect social robots to be used first as care teachers for training novice caregivers, given their current caregiving capabilities and their limited acceptance by PwDs and their families. In fact, some of the systems described in that section would help to analyze the behaviors of caregivers quantitatively. As the physical capabilities of social robots advance and PwDs and their families become more accepting of them, the next step is a second caregiver that supports human caregivers through physical collaboration. Even if such robots lacked sufficient physical capabilities for caregiving, they could work with human caregivers by acting as conversation partners for PwDs based on knowledge from Humanitude, e.g., eye-contact modeling. Finally, social robots may work as main caregivers once technical and ethical problems are overcome, but that stage is still far from the current situation. In other words, implementing Humanitude techniques in social robots is still at an early stage, and developers first need to solve the technical issues. Thus, in the following subsection we discuss what kinds of problems remain in the three interaction skills essential to the Humanitude context.

Issues of Implementing Humanitude Techniques in Social Robots

We showed that although robot technologies already exist that assist PwDs to stand and walk (the main objective of Humanitude care), many issues must be addressed in the three interaction skills. For face-to-face interaction, we showed that care robots have to autonomously enter the PwD’s field of vision to establish eye contact, since PwDs, unlike healthy adults, cannot spontaneously adjust it. Although Humanitude proposes quantitative indexes, the gaze behavior of trained caregivers must be analyzed and modeled in more detail so that robots and novice caregivers can learn how to successfully make eye contact with PwDs. First-person assessment systems for Humanitude skills (Nakazawa et al., 2020) are another useful approach for this purpose. Such models of the eye contact behavior of trained caregivers are expected to be implemented in robots to achieve smooth interaction with PwDs in future nursing homes.

Compared with the guidelines for face-to-face interaction, many of the guidelines for verbal communication and touch interaction are qualitative. Therefore, we have to quantitatively analyze verbal communication and touch interaction between well-trained caregivers and PwDs to implement such interaction in artificial systems. For example, in verbal communication, we have to investigate how often a robot should conduct Auto Feedback during care and what words are appropriate to create positive impressions in PwDs about caregivers and care itself. Multimodal behavior analysis (Ishikawa et al., 2016; Honda et al., 2017) can provide significant insight into these issues. For touch interaction, few quantitative studies have analyzed how caregivers decide to touch a PwD or how much force should be applied. Studies that model touch behavior and verify the effects of touch interaction have begun to focus on robots that can perform touch interactions with people. However, since these studies use data measured on healthy adults, data must be collected on touch interactions between PwDs and well-trained caregivers. In fact, such efforts have already begun. For example, Hiramatsu et al. developed a full-body tactile sensor system that is capable of capturing touch information from a skilled caregiver (Hiramatsu et al., 2020). Sumioka et al. developed a system that measured not only such light touches as stroking but also approaching behaviors before touching (Sumioka et al., 2019). Data from these systems will eventually be used to model the pre-touch and post-touch behaviors of skilled human caregivers, and the resulting models can be implemented in robots and caregiver evaluation systems.

The interaction techniques proposed by Humanitude are effective for evoking positive impressions in PwDs about their caregivers and care contents. However, even if these methods are modeled, and robots perform them, and caregivers learn them, they might not necessarily positively affect PwDs. Skilled caregivers perform interaction techniques while confirming that PwDs have positive emotions based on their responses. As mentioned in section Facial Expression of PwD and Caregivers, the facial expressions of PwDs are one way to obtain their emotional information. What PwDs say, their reactions to being touched, and such biological signals as their heartbeats and temperature are also useful information. An important challenge is estimating the emotions of PwDs from the multimodal information obtained from their behavior.

Author Contributions

All authors listed have made a substantial, direct, and intellectual contribution to the work and approved it for publication.

Funding

This work was supported by JST CREST Grant Number JPMJCR18A1 and JPMJCR17A5, Japan.

Conflict of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

All authors thank Prof. Yves Gineste for helpful discussions and valuable comments on several points in the paper.

Footnotes

1https://www.nhs.uk/conditions/dementia/

References

Adduri, C. A., and Marotta, J. J. (2009). Mental Rotation of Faces in Healthy Aging and Alzheimer's Disease. PLOS ONE 4, e6120. doi:10.1371/journal.pone.0006120

Adelman, R. D., Tmanova, L. L., Delgado, D., Dion, S., and Lachs, M. S. (2014). Caregiver Burden. JAMA 311, 1052. doi:10.1001/jama.2014.304

Allen, C., Glasziou, P., and Mar, C. D. (1999). Bed Rest: a Potentially Harmful Treatment Needing More Careful Evaluation. The Lancet 354, 1229–1233. doi:10.1016/S0140-6736(98)10063-6

Andreasson, R., Alenljung, B., Billing, E., and Lowe, R. (2018). Affective Touch in Human-Robot Interaction: Conveying Emotion to the Nao Robot. Int. J. Soc. Rob. 10, 473–491. doi:10.1007/s12369-017-0446-3

Back, A. L., Bauer-Wu, S. M., Rushton, C. H., and Halifax, J. (2009). Compassionate Silence in the Patient-Clinician Encounter: A Contemplative Approach. J. Palliat. Med. 12, 1113–1117. doi:10.1089/jpm.2009.0175

Bevan, C., and Stanton Fraser, D. (2015). “Shaking Hands and Cooperation in Tele-Present Human-Robot Negotiation,” In Proceedings of the Tenth Annual ACM/IEEE International Conference on Human-Robot Interaction - HRI ’15. Portland, Oregon, USA: ACM Press, 247–254. doi:10.1145/2696454.2696490

Biquand, S., and Zittel, B. (2012). Care Giving and Nursing, Work Conditions and Humanitude. Work 41, 1828–1831. doi:10.3233/wor-2012-0392-1828

Block, A. E., and Kuchenbecker, K. J. (2019). Softness, Warmth, and Responsiveness Improve Robot Hugs. Int. J. Soc. Robotics 11, 49–64. doi:10.1007/s12369-018-0495-2

Broadbent, E., Stafford, R., and MacDonald, B. (2009). Acceptance of Healthcare Robots for the Older Population: Review and Future Directions. Int. J. Soc. Rob. 1, 319–330. doi:10.1007/s12369-009-0030-6

Broekens, J., Heerink, M., and Rosendal, H. (2009). Assistive Social Robots in Elderly Care: a Review. Gerontechnology 8, 94–103. doi:10.4017/gt.2009.08.02.002.00

Cao, Z., Hidalgo, G., Simon, T., Wei, S.-E., and Sheikh, Y. (2021). OpenPose: Realtime Multi-Person 2D Pose Estimation Using Part Affinity Fields. IEEE Trans. Pattern Anal. Mach. Intell. 43, 172–186. doi:10.1109/TPAMI.2019.2929257

Cerejeira, J., Lagarto, L., and Mukaetova-Ladinska, E. B. (2012). Behavioral and Psychological Symptoms of Dementia. Front. Neur. 3. doi:10.3389/fneur.2012.00073

Chen, T. L., King, C.-H. A., Thomaz, A. L., and Kemp, C. C. (2014). An Investigation of Responses to Robot-Initiated Touch in a Nursing Context. Int. J. Soc. Robotics 6, 141–161. doi:10.1007/s12369-013-0215-x

Chong, E., Chanda, K., Ye, Z., Southerland, A., Ruiz, N., Jones, R. M., et al. (2017). Detecting Gaze towards Eyes in Natural Social Interactions and its Use in Child Assessment. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 1 (43), 1–20. doi:10.1145/3131902

Chong, E., Clark-Whitney, E., Southerland, A., Stubbs, E., Miller, C., Ajodan, E. L., et al. (2020). Detection of Eye Contact with Deep Neural Networks Is as Accurate as Human Experts. Nat. Commun. 11, 6386. doi:10.1038/s41467-020-19712-x

Chu, M.-T., Khosla, R., Khaksar, S. M. S., and Nguyen, K. (2017). Service Innovation through Social Robot Engagement to Improve Dementia Care Quality. Assistive Techn. 29, 8–18. doi:10.1080/10400435.2016.1171807

Cifuentes, C. A., and Frizera, A. (2016). Human-Robot Interaction Strategies for Walker-Assisted Locomotion. Cham: Springer International Publishing. doi:10.1007/978-3-319-34063-0

Clapp, W. C., Rubens, M. T., Sabharwal, J., and Gazzaley, A. (2011). Deficit in Switching between Functional Brain Networks Underlies the Impact of Multitasking on Working Memory in Older Adults. Proc. Natl. Acad. Sci. 108, 7212–7217. doi:10.1073/pnas.1015297108

Coeckelbergh, T. R. M., Cornelissen, F. W., Brouwer, W. H., and Kooijman, A. C. (2004). Age-Related Changes in the Functional Visual Field: Further Evidence for an Inverse Age X Eccentricity Effect. J. Gerontol. Ser. B: Psychol. Sci. Soc. Sci. 59, P11–P18. doi:10.1093/geronb/59.1.P11

Cohen-Mansfield, J., Marx, M. S., Dakheel-Ali, M., Regier, N. G., and Thein, K. (2010). Can Persons with Dementia Be Engaged with Stimuli? Am. J. Geriatr. Psychiatry 18, 351–362. doi:10.1097/JGP.0b013e3181c531fd

Cohen-Mansfield, J. (2000). Nonpharmacological Management of Behavioral Problems in Persons with Dementia: The TREA Model. Alzheimers Care Today 1, 22–34.

Conty, L., George, N., and Hietanen, J. K. (2016). Watching Eyes Effects: When Others Meet the Self. Conscious. Cogn. 45, 184–197. doi:10.1016/j.concog.2016.08.016

Costescu, C. A., Vanderborght, B., and David, D. O. (2014). The Effects of Robot-Enhanced Psychotherapy: A Meta-Analysis. Rev. Gen. Psychol. 18, 127–136. doi:10.1037/gpr0000007

Ebner, N. C., Riediger, M., and Lindenberger, U. (2010). FACES-A Database of Facial Expressions in Young, Middle-Aged, and Older Women and Men: Development and Validation. Behav. Res. Methods 42, 351–362. doi:10.3758/BRM.42.1.351

Ellis, M., and Astell, A. (2017). Communicating with People Living with Dementia Who Are Nonverbal: The Creation of Adaptive Interaction. PLOS ONE 12, e0180395. doi:10.1371/journal.pone.0180395

Essick, G. K., James, A., and McGlone, F. P. (1999). Psychophysical Assessment of the Affective Components of Non-painful Touch. NeuroReport 10, 2083–2087. doi:10.1097/00001756-199907130-00017

Feil-Seifer, D., and Mataric, M. (2011). Socially Assistive Robotics. IEEE Robot. Automat. Mag. 18, 24–31. doi:10.1109/MRA.2010.940150

Ferrari, F., Divan, S., Guerrero, C., Zenatti, F., Guidolin, R., Palopoli, L., et al. (2020). Human-Robot Interaction Analysis for a Smart Walker for Elderly: The ACANTO Interactive Guidance System. Int. J. Soc. Rob. 12, 479–492. doi:10.1007/s12369-019-00572-5

Figueiredo, A., Melo, R., and Ribeiro, O. (2018). Humanitude Care Methodology: Difficulties and Benefits from its Implementation in Clinical Practice. Rev. Enf. Ref. IV Série, 53–62. doi:10.12707/RIV17063

Fullwood, C., and Doherty-Sneddon, G. (2006). Effect of Gazing at the Camera during a Video Link on Recall. Appl. Ergon. 37, 167–175. doi:10.1016/j.apergo.2005.05.003

Geravand, M., Korondi, P. Z., Werner, C., Hauer, K., and Peer, A. (2017). Human Sit-To-Stand Transfer Modeling towards Intuitive and Biologically-Inspired Robot Assistance. Auton. Robot 41, 575–592. doi:10.1007/s10514-016-9553-5

Gillespie-Gallery, H., Konstantakopoulou, E., Harlow, J. A., and Barbur, J. L. (2013). Capturing Age-Related Changes in Functional Contrast Sensitivity with Decreasing Light Levels in Monocular and Binocular Vision. Invest. Ophthalmol. Vis. Sci. 54, 6093–6103. doi:10.1167/iovs.13-12119

Gineste, Y., and Pellissier, J. (2007). Humanitude : Comprendre la vieillesse, prendre soin des Hommes vieux. Armand Colin. doi:10.4000/adlfi.7588

Gorawara-Bhat, R., and Cook, M. A. (2011). Eye Contact in Patient-Centered Communication. Patient Educ. Couns. 82, 442–447. doi:10.1016/j.pec.2010.12.002

Hachisu, T., Pan, Y., Matsuda, S., Bourreau, B., and Suzuki, K. (2018). FaceLooks: A Smart Headband for Signaling Face-To-Face Behavior. Sensors 18, 2066. doi:10.3390/s18072066

Hall, E. T. (1966). The Hidden Dimension. Doubleday & Company Inc. Available at: https://ci.nii.ac.jp/naid/10000012348/ (Accessed December 18, 2020).

Henriques, L. V. L., Dourado, M. d. A. R. F., Melo, R. C. C. P. d., and Tanaka, L. H. (2019). Implementation of the Humanitude Care Methodology: Contribution to the Quality of Health Care. Rev. Latino-am. Enfermagem 27, e3123. doi:10.1590/1518-8345.2430-3123

Henriques, L., Dourado, M., Melo, R., Araújo, J., and Inácio, M. (2020). Methodology of Care Humanitude Implementation at an Integrated Continuing Care Unit: Benefits for the Individuals Receiving Care. Ojn 10, 960–972. doi:10.4236/ojn.2020.1010067

Hertenstein, M. J., Keltner, D., App, B., Bulleit, B. A., and Jaskolka, A. R. (2006). Touch Communicates Distinct Emotions. Emotion 6, 528–533. doi:10.1037/1528-3542.6.3.528

Hertenstein, M. J., Holmes, R., McCullough, M., and Keltner, D. (2009). The Communication of Emotion via Touch. Emotion 9, 566–573. doi:10.1037/a0016108

Hiramatsu, T., Kamei, M., Inoue, D., Kawamura, A., An, Q., and Kurazume, R. (2020). “Development of Dementia Care Training System Based on Augmented Reality and Whole Body Wearable Tactile Sensor,” in IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) (Las Vegas: IEEE), 4148–4154. doi:10.1109/IROS45743.2020.9341039

Hirano, T., Shiomi, M., Iio, T., Kimoto, M., Tanev, I., Shimohara, K., et al. (2018). How Do Communication Cues Change Impressions of Human-Robot Touch Interaction? Int. J. Soc. Rob. 10, 21–31. doi:10.1007/s12369-017-0425-8

Honda, M., Marescotti, R., and Gineste, Y. (2014). Introduction To Humanitude. Japanese. Tokyo, Japan: Igaku Shoin.

Honda, M., Ito, M., Ishikawa, S., Takebayashi, Y., and Tierney, L. (2016). Reduction of Behavioral Psychological Symptoms of Dementia by Multimodal Comprehensive Care for Vulnerable Geriatric Patients in an Acute Care Hospital: A Case Series. Case Rep. Med. 2016, 1–4. doi:10.1155/2016/4813196

Honda, M., Ishikawa, S., Okino, Y., Nakazawa, A., Takebayashi, Y., Delmas, C., et al. (2017). A Video-Based Education and Analysis System for Professional Caregivers of Cognitive Impaired Elderlies. ASM Sci. J. 83–45.

Humes, L. E., and Young, L. A. (2016). Sensory-Cognitive Interactions in Older Adults. Ear Hear 37, 52S–61S. doi:10.1097/AUD.0000000000000303

Ishikawa, S., Ito, M., Honda, M., and Takebayashi, Y. (2016). “The Skill Representation of a Multimodal Communication Care Method for People with Dementia,” in Proc. 14th Int. Conf. on Global Research and Education, Inter-Academia 2015 (Hamamatsu, Japan: Japan Society of Applied Physics), 011616. doi:10.7567/JJAPCP.4.011616

Ishikura, T., Yuguchi, A., Kitamura, Y., Cho, S.-G., Ding, M., Takamatsu, J., et al. (2020). Toward an Affective Touch Robot: Subjective and Physiological Evaluation of Gentle Stroke Motion Using a Human-Imitation Hand. arXiv:2012.04844 [cs]. Available at: http://arxiv.org/abs/2012 (Accessed December 16, 2020).

Ito, M., Honda, M., Gineste, Y., Marescotti, R., Hirayama, R., Shimada, C., et al. (2015). An Examination of the Influence of Humanitude® Caregiving on the Behavior of Older Adults with Dementia in Japan, 1.

Jøranson, N., Pedersen, I., Rokstad, A. M. M., and Ihlebæk, C. (2015). Effects on Symptoms of Agitation and Depression in Persons with Dementia Participating in Robot-Assisted Activity: A Cluster-Randomized Controlled Trial. J. Am. Med. Directors Assoc. 16, 867–873. doi:10.1016/j.jamda.2015.05.002

Kahn, P. H., Freier, N. G., Kanda, T., Ishiguro, H., Ruckert, J. H., Severson, R. L., et al. (2008). “Design Patterns for Sociality in Human-Robot Interaction,” in Proceedings of the 3rd International Conference on Human Robot Interaction - HRI ’08, 97. Amsterdam, Netherlands: ACM Press. doi:10.1145/1349822.1349836

Korchut, A., Szklener, S., Abdelnour, C., Tantinya, N., Hernández-Farigola, J., Ribes, J. C., et al. (2017). Challenges for Service Robots-Requirements of Elderly Adults with Cognitive Impairments. Front. Neurol. 8, 228. doi:10.3389/fneur.2017.00228

Kortebein, P., Symons, T. B., Ferrando, A., Paddon-Jones, D., Ronsen, O., Protas, E., et al. (2008). Functional Impact of 10 Days of Bed Rest in Healthy Older Adults. J. Gerontol. Ser. A: Biol. Sci. Med. Sci. 63, 1076–1081. doi:10.1093/gerona/63.10.1076

Lee, M. K., Kiesler, S., Forlizzi, J., Srinivasa, S., and Rybski, P. (2010). “Gracefully Mitigating Breakdowns in Robotic Services,” in Proceedings of ACM/IEEE Int. Conf. on Human-Robot Interaction (HRI 2010) (Osaka, Japan: IEEE), 203–210. doi:10.1109/HRI.2010.5453195

Li, R., van Almkerk, M., van Waveren, S., Carter, E., and Leite, I. (2019). “Comparing Human-Robot Proxemics between Virtual Reality and the Real World,” in 2019 14th ACM/IEEE International Conference on Human-Robot Interaction (HRI), 431–439. doi:10.1109/HRI.2019.8673116

Livingston, G., Sommerlad, A., Orgeta, V., Costafreda, S. G., Huntley, J., Ames, D., et al. (2017). Dementia Prevention, Intervention, and Care. The Lancet 390, 2673–2734. doi:10.1016/S0140-6736(17)31363-6

Livingston, G., Huntley, J., Sommerlad, A., Ames, D., Ballard, C., Banerjee, S., et al. (2020). Dementia Prevention, Intervention, and Care: 2020 Report of the Lancet Commission. The Lancet 396, 413–446. doi:10.1016/S0140-6736(20)30367-6

Lopez, C., and Blanke, O. (2010). How Body Position Influences the Perception and Conscious Experience of Corporeal and Extrapersonal Space. Rev. Neuropsychol. 2, 195–202. doi:10.3917/rne.023.0195

Lopez, C., Lacour, M., Léonard, J., Magnan, J., and Borel, L. (2008). How Body Position Changes Visual Vertical Perception after Unilateral Vestibular Loss. Neuropsychologia 46, 2435–2440. doi:10.1016/j.neuropsychologia.2008.03.017

Lopis, D., and Conty, L. (2019). Investigating Eye Contact Effect on People's Name Retrieval in Normal Aging and in Alzheimer's Disease. Front. Psychol. 10, 1218. doi:10.3389/fpsyg.2019.01218

Lopis, D., Baltazar, M., Geronikola, N., Beaucousin, V., and Conty, L. (2019). Eye Contact Effects on Social Preference and Face Recognition in Normal Ageing and in Alzheimer's Disease. Psychol. Res. 83, 1292–1303. doi:10.1007/s00426-017-0955-6

Lorenzo-Trueba, J., Barra-Chicote, R., San-Segundo, R., Ferreiros, J., Yamagishi, J., and Montero, J. M. (2015). Emotion Transplantation through Adaptation in HMM-Based Speech Synthesis. Comp. Speech Lang. 34, 292–307. doi:10.1016/j.csl.2015.03.008

Lorenzo-Trueba, J., Eje Henter, G., Takaki, S., Yamagishi, J., Morino, Y., and Ochiai, Y. (2018). Investigating Different Representations for Modeling and Controlling Multiple Emotions in DNN-Based Speech Synthesis. Speech Commun. 99, 135–143. doi:10.1016/j.specom.2018.03.002

Lowe, R., Andreasson, R., Alenljung, B., Lund, A., and Billing, E. (2018). Designing for a Wearable Affective Interface for the NAO Robot: A Study of Emotion Conveyance by Touch. Mti 2, 2. doi:10.3390/mti2010002

Marin-Jimenez, M. J., Kalogeiton, V., Medina-Suarez, P., and Zisserman, A. (2019). LAEO-net: Revisiting People Looking at Each Other in Videos. Available at: https://openaccess.thecvf.com/content_CVPR_2019/html/Marin-Jimenez_LAEO-Net_Revisiting_People_Looking_at_Each_Other_in_Videos_CVPR_2019_paper.html (Accessed December 22, 2020). 3477–3485. doi:10.1109/cvpr.2019.00359

Martins, M. M., Santos, C. P., Frizera-Neto, A., and Ceres, R. (2012). Assistive Mobility Devices Focusing on Smart Walkers: Classification and Review. Robotics Autonomous Syst. 60, 548–562. doi:10.1016/j.robot.2011.11.015

McKhann, G. M., Knopman, D. S., Chertkow, H., Hyman, B. T., Jack, C. R., Kawas, C. H., et al. (2011). The Diagnosis of Dementia Due to Alzheimer's Disease: Recommendations from the National Institute on Aging-Alzheimer's Association Workgroups on Diagnostic Guidelines for Alzheimer's Disease. Alzheimer's Demen. 7, 263–269. doi:10.1016/j.jalz.2011.03.005

Melo, R. C. C. P., Fernandes, D. S. C., Albuquerque, J. S., and Duarte, M. N. (2017a). “Methodology of Care Humanitude in Promoting Self-Care in Dependent People: An Integrative Review,” in Advances In Human Factors And Ergonomics In Healthcare Advances in Intelligent Systems and Computing. Editors V. G. Duffy, and N. Lightner (Cham: Springer International Publishing), 187–193. doi:10.1007/978-3-319-41652-6_18

Melo, R., Queirós, P., Tanaka, L., Salgueiro, N., Alves, R., Araújo, J., et al. (2017b). State-of-the-art in the Implementation of the Humanitude Care Methodology in Portugal. Rev. Enf. Ref. IV Série, 53–62. doi:10.12707/RIV17019

Melo, R. C. C. P. d., Costa, P. J., Henriques, L. V. L., Tanaka, L. H., Queirós, P. J. P., and Araújo, J. P. (2019). Humanitude in the Humanization of Elderly Care: Experience Reports in a Health Service. Rev. Bras. Enferm. 72, 825–829. doi:10.1590/0034-7167-2017-0363

Melo, R. C. C. P., Queirós, P. J. P., Tanaka, L. H., Henriques, L. V. L., and Neves, H. L. (2020). Nursing Students' Relational Skills with Elders Improve through Humanitude Care Methodology. Ijerph 17, 8588. doi:10.3390/ijerph17228588

Meng, X., Yoshida, N., Wan, X., and Yonezawa, T. (2019). “Emotional Gripping Expression of a Robotic Hand as Physical Contact,” in Proceedings of the 7th International Conference on Human-Agent Interaction (Kyoto, Japan: ACM), 37–42. doi:10.1145/3349537.3351884

Mitsuzumi, Y., Nakazawa, A., and Nishida, T. (2017). “DEEP Eye Contact Detector: Robust Eye Contact Bid Detection Using Convolutional Neural Network,” in Proceedings of the British Machine Vision Conference. Editors T. K. Kim, S. Zafeiriou, G. Brostow, and K. Mikolajczyk (London, United Kingdom: BMVA Press), 1–12. doi:10.5244/C.31.59

Mordoch, E., Osterreicher, A., Guse, L., Roger, K., and Thompson, G. (2013). Use of Social Commitment Robots in the Care of Elderly People with Dementia: A Literature Review. Maturitas 74, 14–20. doi:10.1016/j.maturitas.2012.10.015

Moyle, W., Jones, C., Cooke, M., O’Dwyer, S., Sung, B., and Drummond, S. (2014). Connecting the Person with Dementia and Family: a Feasibility Study of a Telepresence Robot. BMC Geriatr. 14, 7. doi:10.1186/1471-2318-14-7

Mutlu, B., and Forlizzi, J. (2008). “Robots in Organizations: The Role of Workflow, Social, and Environmental Factors in Human-Robot Interaction,” in Proceedings of ACM/IEEE Int. Conf. on Human-Robot Interaction (HRI 2008) (Amsterdam, Netherlands: IEEE), 287–294. doi:10.1145/1349822.1349860

Nakazawa, A., Mitsuzumi, Y., Watanabe, Y., Kurazume, R., Yoshikawa, S., and Honda, M. (2020). First-person Video Analysis for Evaluating Skill Level in the Humanitude Tender-Care Technique. J. Intell. Robot. Syst. 98, 103–118. doi:10.1007/s10846-019-01052-8

Nose, T., and Kobayashi, T. (2013). An Intuitive Style Control Technique in HMM-Based Expressive Speech Synthesis Using Subjective Style Intensity and Multiple-Regression Global Variance Model. Speech Commun. 55, 347–357. doi:10.1016/j.specom.2012.09.003

O'Brien, R., Goldberg, S. E., Pilnick, A., Beeke, S., Schneider, J., Sartain, K., et al. (2018). The VOICE Study - A before and after Study of a Dementia Communication Skills Training Course. Plos One 13, e0198567. doi:10.1371/journal.pone.0198567

Obaid, M., Sandoval, E. B., Zlotowski, J., Moltchanova, E., Basedow, C. A., and Bartneck, C. (2016). “Stop! That Is Close Enough. How Body Postures Influence Human-Robot Proximity,” in 25th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN) (New York, NY, USA: IEEE), 354–361. doi:10.1109/ROMAN.2016.7745155

Oster, C. (1976). Sensory Deprivation in Geriatric Patients. J. Am. Geriatr. Soc. 24, 461–464. doi:10.1111/j.1532-5415.1976.tb03261.x

Park, E., and Lee, J. (2014). I Am a Warm Robot: the Effects of Temperature in Physical Human-Robot Interaction. Robotica 32, 133–142. doi:10.1017/S026357471300074X

Penfield, W., and Boldrey, E. (1937). Somatic Motor and Sensory Representation in the Cerebral Cortex of Man as Studied by Electrical Stimulation. Brain 60, 389–443. doi:10.1093/brain/60.4.389

Petersen, S., Houston, S., Qin, H., Tague, C., and Studley, J. (2016). The Utilization of Robotic Pets in Dementia Care. Jad 55, 569–574. doi:10.3233/JAD-160703

Pimentel, A., and Martins, A. (2015). “Humanitude Social Value,” in Social Impact International Conference. Lisbon.

Plappert, M., Mandery, C., and Asfour, T. (2018). Learning a Bidirectional Mapping between Human Whole-Body Motion and Natural Language Using Deep Recurrent Neural Networks. Robotics Autonomous Syst. 109, 13–26. doi:10.1016/j.robot.2018.07.006

Pu, L., Moyle, W., Jones, C., and Todorovic, M. (2019). The Effectiveness of Social Robots for Older Adults: A Systematic Review and Meta-Analysis of Randomized Controlled Studies. The Gerontologist 59, e37–e51. doi:10.1093/geront/gny046

Recasens, A., Khosla, A., Vondrick, C., and Torralba, A. (2015). Where Are They Looking? Adv. Neural Inf. Process. Syst. 28, 199–207.

Robinson, H., MacDonald, B., Kerse, N., and Broadbent, E. (2013). The Psychosocial Effects of a Companion Robot: A Randomized Controlled Trial. J. Am. Med. Directors Assoc. 14, 661–667. doi:10.1016/j.jamda.2013.02.007

Russell, J. A. (1978). Evidence of Convergent Validity on the Dimensions of Affect. J. Personal. Soc. Psychol. 36, 1152–1168. doi:10.1037/0022-3514.36.10.1152

Russo, A., D'Onofrio, G., Gangemi, A., Giuliani, F., Mongiovi, M., Ricciardi, F., et al. (2019). Dialogue Systems and Conversational Agents for Patients with Dementia: The Human-Robot Interaction. Rejuvenation Res. 22, 109–120. doi:10.1089/rej.2018.2075

Saunderson, S., and Nejat, G. (2019). How Robots Influence Humans: A Survey of Nonverbal Communication in Social Human-Robot Interaction. Int. J. Soc. Rob. 11, 575–608. doi:10.1007/s12369-019-00523-0

Schröder, M. (2001). “Emotional Speech Synthesis: A Review,” in EUROSPEECH 2001 (Aalborg, Denmark: ISCA Archive), 4, 561–564.

Selwood, A., Johnston, K., Katona, C., Lyketsos, C., and Livingston, G. (2007). Systematic Review of the Effect of Psychological Interventions on Family Caregivers of People with Dementia. J. Affective Disord. 101, 75–89. doi:10.1016/j.jad.2006.10.025

Senju, A., and Johnson, M. H. (2009). The Eye Contact Effect: Mechanisms and Development. Trends Cogn. Sci. 13, 127–134. doi:10.1016/j.tics.2008.11.009

Shibata, T., and Coughlin, J. F. (2014). Trends of Robot Therapy with Neurological Therapeutic Seal Robot, PARO. J. Robot. Mechatron. 26, 418–425. doi:10.20965/jrm.2014.p0418

Shiomi, M., and Hagita, N. (2017). “Do Audio-Visual Stimuli Change Hug Impressions?,” in Social Robotics Lecture Notes in Computer Science. Editors A. Kheddar, E. Yoshida, S. S. Ge, K. Suzuki, J.-J. Cabibihan, F. Eyssel, et al. (Cham: Springer International Publishing), 345–354. doi:10.1007/978-3-319-70022-9_34

Shiomi, M., Nakagawa, K., Shinozawa, K., Matsumura, R., Ishiguro, H., and Hagita, N. (2017a). Does A Robot's Touch Encourage Human Effort? Int. J. Soc. Robotics 9, 5–15. doi:10.1007/s12369-016-0339-x