- 1 Department of Medical Ultrasound, China Resources & Wisco General Hospital, Academic Teaching Hospital of Wuhan University of Science and Technology, Wuhan, China

- 2 Department of Medical Ultrasound, Hubei Cancer Hospital, Tongji Medical College, Huazhong University of Science and Technology, Wuhan, China

- 3 Department of Artificial Intelligence, Julei Technology, Wuhan, China

- 4 Department of Medical Ultrasound, The First Affiliated Hospital of Medical College, Shihezi University, Xinjiang, China

- 5 Department of Medical Ultrasound, Tongji Hospital, Tongji Medical College, Huazhong University of Science and Technology, Wuhan, China

- 6 Department Allgemeine Innere Medizin (DAIM), Kliniken Beau Site, Salem und Permanence, Bern, Switzerland

Artificial intelligence (AI) has become part of daily life, and over the last decade it has found very promising applications in medicine, including medical imaging, in vitro diagnosis, intelligent rehabilitation, and prognosis. Breast cancer is one of the most common malignant tumors in women and seriously threatens women’s physical and mental health. Early screening for breast cancer via mammography, ultrasound and magnetic resonance imaging (MRI) can significantly improve the prognosis of patients. AI has shown excellent performance in image recognition tasks and has been widely studied in breast cancer screening. This paper introduces the background of AI and its application in breast medical imaging (mammography, ultrasound and MRI), such as in the identification, segmentation and classification of lesions; breast density assessment; and breast cancer risk assessment. We also discuss the challenges and future perspectives of the application of AI in medical imaging of the breast.

Introduction

Artificial intelligence (AI) is commonly defined as “a system’s ability to correctly interpret external data, to learn from such data, and to use those learnings to achieve specific goals and tasks through flexible adaptation”. Over the past 50 years, the dramatic growth in computing power, together with the influx of big data, has pushed AI applications into new areas (1). Currently, AI can be found in voice recognition, face recognition, driverless cars and other new technologies, and the application of AI in medical imaging has gradually become an important topic of research. AI algorithms, particularly deep learning (DL) algorithms, have demonstrated remarkable progress in image recognition tasks. Methods ranging from convolutional neural networks to variational autoencoders have found myriad applications in medical image analysis and have promoted the rapid development of medical imaging (2). AI has made great contributions to early detection, disease evaluation and treatment response assessments in the field of medical image analysis for diseases such as pancreatic cancer (3), liver disease (4), breast cancer (5), chest disease (6), and neurological tumors (7).

Approximately 2.1 million newly diagnosed cases of breast cancer occurred in 2018 worldwide, accounting for almost 1 in 4 of all cases of cancer among women (8). Breast cancer is the most frequently diagnosed cancer in most countries (154 of 185) and is the leading cause of death due to cancer in over 100 countries (9). Breast cancer has a marked impact on women’s physical and mental health and seriously threatens their lives. The early screening and treatment of breast diseases have become major health problems worldwide. A correct diagnosis, especially the early detection and treatment of breast cancer, has a decisive impact on the prognosis. The clinical cure rate of early breast cancer can reach more than 90%; in the middle stage, it is 50-70%, and in the late stage, the treatment effect is very poor. Currently, mammography, ultrasound and MRI are invaluable screening and supplemental diagnostic tools for breast cancer, and they have also become important means of detection, staging, efficacy evaluation and follow-up examination of breast cancer (10).

At present, breast images are mainly read, analyzed and diagnosed by radiologists. Under a large and long-term workload, radiologists are more likely to misjudge images due to fatigue, resulting in a misdiagnosis or missed diagnosis, a risk that AI may help to reduce. To limit such human errors, computer-aided diagnosis (CAD) has been implemented. In CAD systems, a suitable algorithm completes the processing and analysis of an image (11). The latest breakthrough is DL, especially convolutional neural networks (CNNs), which have made significant progress in the field of medical imaging (12). This article briefly introduces the background of AI and mainly reviews its application in breast mammography, ultrasound and MRI image analysis. It also discusses the prospects for the application of AI in medical imaging.

Brief Overview of AI

AI refers to the ability of machines to imitate humans or human brain functions in order to learn and solve problems (13). It has been more than 60 years since John McCarthy put forward the concept of AI in 1956. Over the past ten years, AI technology has made explosive progress. As a branch of computer science, it attempts to produce a new kind of intelligent machine that responds like a human brain; its field of application is very wide and includes robots, image recognition, language recognition, natural language processing, data mining, pattern recognition and expert systems (14, 15). In the medical field, AI can be applied to health management, clinical decision support, medical imaging, disease screening and early disease prediction, medical records/literature analysis, and hospital management. AI can analyze medical images and information for disease screening and prediction and assist doctors in making diagnoses. In breast imaging, Al-antari MA et al. studied a complete integrated CAD system in 2018 that can be used for the detection, segmentation, and classification of masses in mammography, and its accuracy was more than 92% in all aspects (16). Alejandro Rodriguez-Ruiz et al. gathered 2654 exams and readings by 101 radiologists and used a trained AI system to score the possibility of cancer between 1 and 10. They found that using an AI score of 2 as the threshold could reduce the workload by 17%, which indicates that automatic AI preselection can significantly reduce the workload of radiologists (17).

Machine learning (ML) is one of the most important ways to realize AI. ML is divided into unsupervised and supervised learning. Unsupervised ML classifies the radiomics features without using any information provided by or determined from an available historical set of imaging data of the same kind as the one under investigation. Supervised ML methods are first trained by means of an available data archive, i.e. all parameters in the algorithm are tuned until the method provides an optimal trade-off between its ability to fit the training set and its generalization power when a new data example arrives. Within supervised ML, sparsity-enhancing regularization networks are able to make the prediction while, at the same time, identifying the extracted features that most strongly affect that prediction (18). In this context, ML denotes the computational algorithms that take the image features extracted by radiomics as input and provide predictions concerning disease outcomes on follow-up as output; examples include linear regression, K-means, decision trees, random forest, principal component analysis (PCA), support vector machines (SVMs), and artificial neural networks (ANNs).

DL, a family of AI methods based on neural networks, builds models that imitate the human brain and is currently considered the state of the art for image classification. Neural networks first simulate nerve cells and then try to simulate the human brain using a simulation model called a perceptron. A neural network consists of successive layers, including the input layer, the hidden layers, and the output layer. In a CNN, the input layer can process multi-dimensional data, and the hidden layers include convolutional, pooling and fully connected layers. The feature map created in the convolutional layer is first passed through a non-linear activation function and is then transferred to the pooling layer for down-sampling. The output is then passed into the fully connected layer to classify the overall outcome, and the output layer directly outputs the results. A multilayer perceptron is constructed by stacking layers of perceptrons in which all nodes are fully connected, which allows more complex problems to be solved (19). The learning paradigms of CNNs include supervised and unsupervised learning; supervised learning refers to a training procedure in which both the observed training data and the associated ground truth labels (sometimes referred to as “targets”) are required for training the model. In contrast, unsupervised learning involves training data that have no diagnosis or normal/abnormal labels. Currently, supervised learning seems to be the most popular approach in image classification tasks (20).
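The layer sequence described above (convolution, non-linear activation, pooling, fully connected layer, output) can be illustrated with a minimal forward pass. This is only a sketch in plain Python: the function names, the 5 x 5 toy image, the edge-detecting kernel and the weights are all illustrative assumptions, nothing is trained, and none of it is taken from the cited studies.

```python
import math

def conv2d(image, kernel):
    """Valid 2D convolution (cross-correlation form) producing a feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(fmap):
    """Non-linear activation: negative responses are set to zero."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Down-sample with non-overlapping windows; incomplete windows are dropped."""
    return [
        [
            max(fmap[i + a][j + b] for a in range(size) for b in range(size))
            for j in range(0, len(fmap[0]) - size + 1, size)
        ]
        for i in range(0, len(fmap) - size + 1, size)
    ]

def fully_connected(features, weights, biases):
    """One score per output class: weighted sum of all input features plus a bias."""
    return [sum(w * x for w, x in zip(row, features)) + b
            for row, b in zip(weights, biases)]

def softmax(scores):
    """Turn class scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forward(image, kernel, weights, biases):
    """Convolution -> activation -> pooling -> fully connected -> output."""
    fmap = max_pool(relu(conv2d(image, kernel)))
    flat = [v for row in fmap for v in row]
    return softmax(fully_connected(flat, weights, biases))

# Toy input: a bright vertical band on a dark background (hypothetical data).
image = [[0, 0, 1, 1, 0] for _ in range(5)]
kernel = [[-1, 1], [-1, 1]]                   # responds to dark-to-bright edges
weights = [[0.5, 0, 0.5, 0], [0, 0, 0, 0]]    # untrained, hand-picked weights
biases = [0.0, 0.0]
probabilities = forward(image, kernel, weights, biases)
```

In a real CNN the kernel and the fully connected weights are learned from labeled images, and many kernels and layers are stacked; the sketch only shows how data flow through one instance of each layer type.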

Applications of AI in Mammography

Mammography is one of the most widely used methods for breast cancer screening (21, 22). It is a non-invasive detection method associated with relatively little pain, easy operation, high resolution, and good repeatability. Retained images can be compared over time, and the examination is not limited by age or body shape. Mammography can detect breast masses that cannot be palpated by doctors and can reliably distinguish benign lesions from malignant tumors of the breast. Mammograms are currently acquired with full-field digital mammography (DM) systems and are provided in both for-processing (the raw imaging data) and for-presentation (a postprocessed version of the raw data) image formats (23, 24). To date, most studies have used AI to analyze mammography images mainly for the detection and classification of breast masses and microcalcifications, breast mass segmentation, breast density assessment, breast cancer risk assessment and image quality improvement.

Detection and Classification of Breast Masses

Among the different abnormalities seen on mammograms, masses are one of the most common signs of breast cancer. Masses are difficult to detect and diagnose because of variation in their shape, size, and margins, especially in dense breasts. Therefore, mass detection is an essential step in CAD. Some researchers proposed a Crow search optimization based intuitionistic fuzzy clustering approach with neighborhood attraction (CrSA-IFCM-NA), which effectively separated the masses from mammogram images and gave good results in terms of cluster validity indices, indicating clear segmentation of the regions (24). Others developed a complete integrated CAD system to detect, segment, and classify masses in mammograms, which included a regional DL approach, You-Only-Look-Once (YOLO), a new deep network model, the full resolution convolutional network (FrCN), and a deep CNN. Using the INbreast dataset, they verified that the detection accuracy reached 98.96%, effectively assisting radiologists in making an accurate diagnosis (16, 25, 26).

Detection and Classification of Microcalcifications

Breast calcifications are small spots of calcium salts in the breast tissue, and they appear as small white spots on mammography. There are two different types of calcifications: microcalcifications and macrocalcifications. Macrocalcifications are large and coarse and are mostly benign and age-related. Microcalcifications may be early signs of breast cancer, with sizes ranging from 0.1 mm to 1 mm, with or without visible masses (27). At present, several CAD systems have been developed to detect calcifications in mammography images. Cai H et al. developed a CNN model for the detection, analysis and classification of microcalcifications in mammography images and confirmed that the features extracted by the CNN model outperformed handcrafted features; using filtered deep features, they achieved a classification precision of 89.32% and a sensitivity of 86.89% (28). Zobia Suhail et al. developed a novel method for the classification of benign and malignant microcalcifications using an improved Fisher linear discriminant analysis approach for the linear transformation of segmented microcalcification data in combination with an SVM variant to distinguish between the two classes; 288 regions of interest (ROIs) (139 malignant and 149 benign) in the Digital Database for Screening Mammography (DDSM) were classified with an average accuracy of 96% (29). Jian W et al. developed a CAD system to detect breast microcalcifications based on the dual-tree complex wavelet transform (DT-CWT) (30). To detect microcalcifications in mammograms, Guo Y et al. proposed a new hybrid method combining the contourlet transform and a non-linking simplified pulse-coupled neural network (31).
Neural networks can automatically detect, segment and classify masses and microcalcifications in mammography, providing a reference for radiologists and improving their work efficiency and accuracy.
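The precision, sensitivity and accuracy values quoted in these studies are all derived from the counts of true and false positives and negatives produced by a classifier. A minimal sketch of those definitions follows; the function name and the example counts are hypothetical and are not taken from any of the cited papers.

```python
def classification_metrics(tp, fp, tn, fn):
    """Return sensitivity, specificity, precision and accuracy from confusion counts."""
    def ratio(numerator, denominator):
        if denominator == 0:
            raise ValueError("metric undefined: denominator is zero")
        return numerator / denominator

    return {
        "sensitivity": ratio(tp, tp + fn),   # malignant ROIs correctly flagged
        "specificity": ratio(tn, tn + fp),   # benign ROIs correctly cleared
        "precision": ratio(tp, tp + fp),     # flagged ROIs that are truly malignant
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
    }

# Hypothetical counts for 200 ROIs, chosen only to illustrate the arithmetic.
metrics = classification_metrics(tp=90, fp=10, tn=80, fn=20)
```

Because accuracy depends on the proportion of benign and malignant cases in the test set, it is usually reported together with sensitivity and specificity, as most of the studies above do.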

Breast Mass Segmentation

Accurate segmentation of masses is directly related to the effective treatment of the patient. Some researchers used the method of fuzzy contours to automatically segment breast masses from mammograms and evaluated the ROIs extracted from the mini-MIAS database. The results showed that the average true positive rate was 91.12%, and the precision was 88.08% (32). Global segmentation of masses on mammograms is a complex process due to low-contrast mammogram images, irregular shapes of masses, spiculated margins, and the presence of intensity variations in pixels. Others used a mesh-free radial basis function collocation approach for the evolution of a level set function to segment the breast as well as suspicious mass regions. An SVM classifier was then used to classify the suspicious areas into abnormal and normal areas. The results showed that the sensitivity and specificity for the DDSM dataset were 97.12% and 92.43%, respectively (33). When plane fitting and dynamic programming were applied to detect and classify breast masses in mammography, the accuracy of breast lesion segmentation improved greatly (34). The correct segmentation of breast lesions provides a basis for accurate disease classification and diagnosis (35). The use of automatic image segmentation algorithms shows the potential of DL in precision medical systems.

Breast Density Assessment

Breast density is a strong risk factor for breast cancer and is usually evaluated on two-dimensional (2D) mammograms. Women with higher breast density have a two- to six-fold higher risk of developing breast cancer than women with low breast density (36). Mammographic density has traditionally been assessed as the absolute or relative amount (as a percentage of the total breast size) occupied by dense tissue, which appears on mammographic images as white “cotton-like” patches (37). Accurate and consistent breast density assessment is highly desirable to provide clinicians and patients with better informed clinical decision-making support. Many studies have shown that AI technology can assist in the evaluation of mammographic breast density (BD). Mohamed AA et al. studied a CNN model based on the Breast Imaging Reporting and Data System (BI-RADS) for BD categorization, using a large DM dataset (22000 images) to distinguish the two BI-RADS categories “scattered density” and “heterogeneous density”; they showed that increasing the number of training samples yielded the highest area under the receiver operating characteristic curve (AUC), 0.94-0.98 (38). They also used a CNN model to show that radiologists mostly used the mediolateral oblique (MLO) view rather than the craniocaudal (CC) view to determine the category of BD (39). Le Boulc’h M and others evaluated the agreement between DenSeeMammo (an AI-based automatic BD assessment software approved by the Food and Drug Administration) and visual assessment by a senior and a junior radiologist, and found that the BD assessments of the senior radiologist and the AI model were largely in agreement on DM (weighted κ = 0.79; 95% CI: 0.73-0.84) (40). Lehman CD et al. developed and tested a DL model to assess BD by using 58 894 randomly selected digital mammograms, and implemented the model by using a deep CNN, ResNet-18, with PyTorch. They concluded that the agreement between density assessments with the DL model and those of the original interpreting radiologist was good (κ = 0.67; 95% CI: 0.66, 0.68), and in the four-way BI-RADS categorization, 9729 of 10763 (90%; 95% CI: 90%, 91%) DL assessments were accepted by the interpreting radiologist (41). The assessment of BD by AI can reduce the variation between radiologists, better predict the risk of breast cancer and provide a basis for further detection and treatment.
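Agreement between an AI model and a radiologist on BI-RADS density categories is typically summarized with Cohen's kappa, which corrects the observed agreement for agreement expected by chance. The sketch below computes both the unweighted statistic and a linearly weighted variant for ordinal categories. The function name, the choice of linear weights and the eight example assessments are assumptions made for illustration; the cited studies may have used a different weighting scheme.

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b, categories, weighted=False):
    """Cohen's kappa for two raters; linear disagreement weights if weighted=True."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must assess the same non-empty set of cases")
    k = len(categories)
    index = {c: i for i, c in enumerate(categories)}

    def disagreement(i, j):
        # Unweighted: any mismatch counts fully. Weighted: penalty grows with distance.
        if weighted:
            return abs(i - j) / (k - 1)
        return 0.0 if i == j else 1.0

    count_a = Counter(index[x] for x in rater_a)
    count_b = Counter(index[x] for x in rater_b)
    observed = sum(disagreement(index[a], index[b])
                   for a, b in zip(rater_a, rater_b)) / n
    expected = sum(disagreement(i, j) * count_a[i] * count_b[j]
                   for i in range(k) for j in range(k)) / (n * n)
    if expected == 0:
        raise ValueError("kappa is undefined when both raters use a single category")
    return 1.0 - observed / expected

# Hypothetical BI-RADS density categories (a-d) for eight mammograms.
density_categories = ["a", "b", "c", "d"]
radiologist = ["a", "b", "b", "c", "c", "c", "d", "d"]
model = ["a", "b", "c", "c", "c", "d", "d", "d"]
kappa = cohen_kappa(radiologist, model, density_categories)
weighted_kappa = cohen_kappa(radiologist, model, density_categories, weighted=True)
```

In this toy example the two disagreements are between adjacent categories, so the weighted kappa (about 0.77) is higher than the unweighted one (about 0.65); this is why weighted kappa is often preferred for ordinal scales such as breast density.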

Breast Cancer Risk Assessment

The high incidence and mortality of breast cancer seriously threaten women’s physical and mental health. Many risk factors for breast cancer are now known. As Sun YS et al. concluded in 2017, aging, family history, reproductive factors (early menarche, late menopause, late age at first pregnancy and low parity), estrogen (endogenous and exogenous estrogens), and lifestyle (excessive alcohol consumption, too much dietary fat intake, smoking) are all risk factors for breast cancer (42). The early detection and prevention of breast cancer can be promoted by increasing the overall understanding and recognition of breast cancer risk.

The literature shows that research on AI in breast cancer risk prediction is also extensive. Nindrea RD et al. conducted a systematic review of the ML algorithms for breast cancer risk prediction published between January 2000 and May 2018, summarized and compared five ML algorithms, namely SVM, ANN, decision tree (DT), naive Bayes, and K-nearest neighbor (KNN), and concluded that the SVM algorithm was able to calculate breast cancer risk with better accuracy than the other ML algorithms (43). Some studies have shown that an ANN can learn from mammography results, risk factors, and clinical findings, combined with cytopathological diagnosis, to evaluate the risk of breast cancer, helping doctors to estimate the probability of malignancy and improving the positive predictive value (PPV) of the decision to perform biopsy (44). Yala A and his team also developed a hybrid DL model that operates on both the full-field mammogram and traditional risk factors, and found that it was more accurate than a current clinical standard, i.e. the Tyrer-Cuzick model (45). These results suggest that AI can predict breast cancer risk more accurately than some conventional methods, which in turn may help physicians guide high-risk populations toward appropriate interventions to reduce the incidence of breast cancer.

Image Quality Improvement

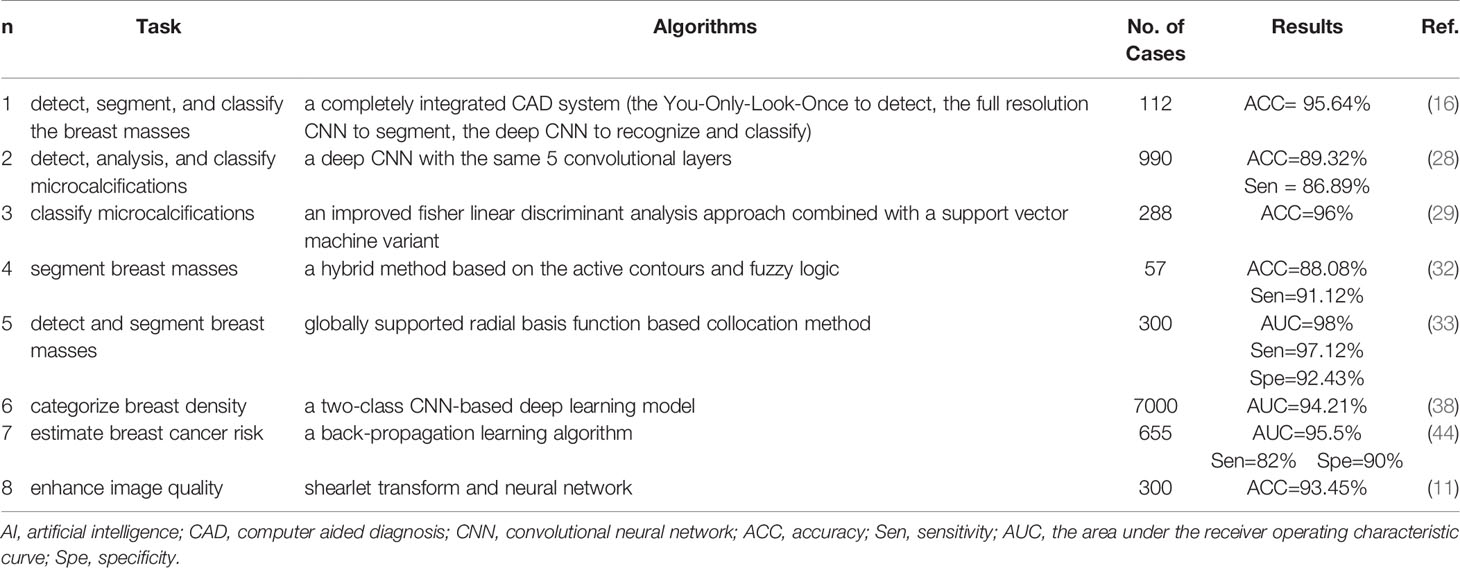

Good image quality is the basis of accurate diagnoses of diseases. Image quality has a significant impact on the detection rate and accuracy of AI for assessing breast diseases on mammography, and clear images are conducive to the detection and diagnosis of microscopic lesions. A number of computer algorithms for improving image quality have been proposed. Because it provides more details on the data phase, directionality and shift invariance, the multi-scale shearlet transform can yield multi-resolution results, which is helpful for detecting cancer cells, particularly those with small contours. Shenbagavalli P and his colleagues enhanced mammogram image quality by using a shearlet transform image enhancement method and classified images from the DDSM database as benign or malignant with an accuracy of up to 93.45% (11). Teare P et al. used a novel form of false color enhancement to optimize the characteristics of mammography through contrast-limited adaptive histogram equalization (CLAHE) and utilized dual deep CNNs at different scales for the classification of mammogram images and derivative patches, combined with a random forest gating network; they achieved a sensitivity of 0.91 and a specificity of 0.80 (46). Image quality is the premise of an accurate diagnosis. Therefore, strict image quality evaluation and improvement measures must be carried out to effectively assist radiologists and ANN systems in further analysis and diagnosis (Table 1).

Applications of AI in Breast Ultrasound

As a diagnostic method with a high utilization rate, ultrasound has many advantages, such as simple operation, no radiation, and real-time operation. Therefore, ultrasound imaging has gradually become a common imaging method for the detection and diagnosis of breast cancer. To avoid a missed diagnosis or misdiagnosis caused by lack of physician experience or subjective influence and to achieve the quantification and standardization of ultrasound diagnosis, AI systems have been developed to detect and diagnose breast lesions in ultrasound images (47). Related studies (48, 49) have shown that AI systems are mainly used for the identification and segmentation of ROIs, feature extraction and classification of benign and malignant lesions in breast ultrasound imaging.

Identification and Segmentation of ROIs

To accurately represent and diagnose breast lesions, the lesions should first be segmented from the background. In current clinical work, the manual segmentation of breast images is mainly carried out by ultrasound doctors; this process not only depends on the doctors’ working experience but also takes time and effort. In addition, breast ultrasound images have low contrast, blurry boundaries, and a large amount of shadowing. Therefore, automatic AI-based methods for segmenting lesions in breast ultrasound images have been proposed. The segmentation process of breast ultrasound images mainly includes the detection of an ROI containing the lesion and the delineation of its contours. Hu Y et al. proposed an automatic tumor segmentation method that combined a dilated fully convolutional network (DFCN) with a phase-based active contour (PBAC) model. After training, 170 breast ultrasound images were identified and segmented, and they achieved a mean Dice similarity coefficient (DSC) of 88.97%, which showed that the proposed segmentation method could partly replace manual segmentation in medical analysis (50). Kumar V. et al. proposed a multi-U-net algorithm and segmented masses from 258 women’s breast ultrasound images; they achieved a mean Dice coefficient of 0.82, a true positive fraction (TPF) of 0.84, and a false positive fraction (FPF) of 0.01, which are clearly better than the results with the original U-net algorithm (51). Feng Y. et al. combined a Hausdorff-based fuzzy c-means (FCM) algorithm with an adaptive region selection scheme to segment ultrasound images of breast tumors. Based on the mutual information between regions, the neighborhood around each pixel is adaptively selected for Hausdorff distance measurement. The results showed that the adaptive Hausdorff-based FCM algorithm performed better than the Hausdorff-based and traditional FCM algorithms (52).
The automatic identification and segmentation of lesions in breast ultrasound images saves ultrasound physicians a considerable amount of time, helps them to quickly identify and diagnose diseases, and provides a foundation for the development of AI for the automatic diagnosis of breast diseases.
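The Dice coefficient, TPF and FPF reported for these segmentation methods compare a predicted lesion mask with a manually drawn reference mask pixel by pixel. The sketch below uses the standard pixel-wise definitions, TPF = TP / (TP + FN) and FPF = FP / (FP + TN); this is an assumption, because individual papers sometimes normalize FPF differently (for example by the reference lesion area). The function name and the 4 x 4 toy masks are hypothetical.

```python
def segmentation_scores(predicted, reference):
    """Dice, TPF and FPF for two binary masks given as equally sized 2D lists of 0/1."""
    tp = fp = fn = tn = 0
    for pred_row, ref_row in zip(predicted, reference):
        for p, r in zip(pred_row, ref_row):
            if p and r:
                tp += 1      # lesion pixel correctly segmented
            elif p and not r:
                fp += 1      # background pixel wrongly marked as lesion
            elif r:
                fn += 1      # lesion pixel missed
            else:
                tn += 1      # background pixel correctly left out
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("reference mask must contain both lesion and background pixels")
    return {
        "dice": 2 * tp / (2 * tp + fp + fn),
        "tpf": tp / (tp + fn),
        "fpf": fp / (fp + tn),
    }

# Toy masks: the reference lesion is a 2 x 2 square; the prediction is one column too wide.
reference_mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
predicted_mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 1],
    [0, 1, 1, 1],
    [0, 0, 0, 0],
]
scores = segmentation_scores(predicted_mask, reference_mask)
```

Here every lesion pixel is found (TPF of 1.0), but the two extra pixels lower the Dice coefficient to 0.8, which shows why overlap measures and error fractions are usually reported together.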

Feature Extraction

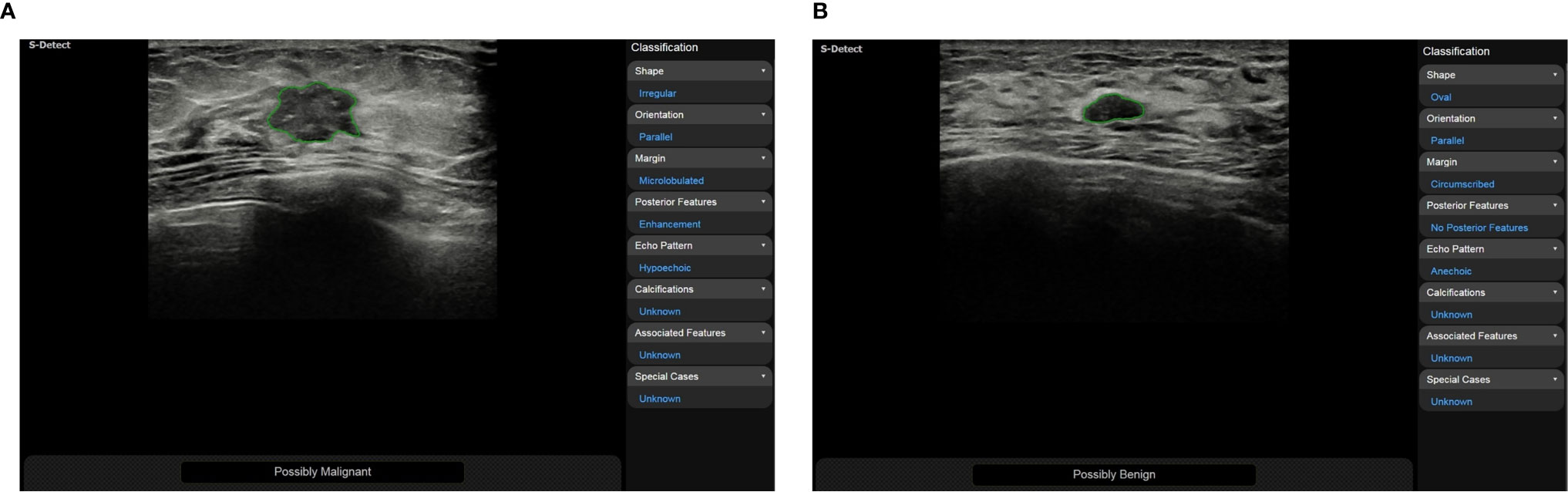

Ultrasound doctors usually identify and segment suspicious masses based on the morphological and texture features of the breast images. These features include shape, orientation, margin, echo pattern, posterior features, calcification location and hardness. Then, they classify suspicious masses according to the BI-RADS scale to quantify the degree of cancer suspicion in breast masses. The morphological features are essential for the diagnosis of benign and malignant masses, and obtaining them correctly places high demands on the ultrasound examiner. To reduce the dependence on the physician’s experience, AI systems have been applied to the feature extraction of breast ultrasound images. Hsu SM et al. combined morphological-feature parameters (e.g., standard deviation of the shortest distance), texture features (e.g., variance), and the Nakagami parameter to extract the physical features of breast ultrasound images; they classified the data using FCM clustering and achieved an accuracy of 89.4%, a specificity of 86.3%, and a sensitivity of 92.5%. In comparisons with logistic regression and SVM classifiers, the maximum discrimination performance of the optimal feature collection was independent of the type of classifier, indicating that the combination of different feature parameters should be functionally complementary to improve the performance of breast cancer classification (53). Zhang et al. constructed a two-layer DL architecture to extract shear-wave elastography (SWE) features by combining feature learning and feature selection. Compared with the statistical features of quantified image intensity and texture, the DL features had better classification performance, with an accuracy of 93.4%, a sensitivity of 88.6%, a specificity of 97.1%, and an area under the receiver operating characteristic curve of 0.947 (54).
Relevant studies have shown that using CAD systems (S-Detect, Samsung RS80A ultrasound system) to analyze the ultrasound features of breast masses can significantly improve the diagnostic performance of experienced and inexperienced radiologists (Figure 1). CAD systems may be helpful in refining breast lesion descriptions and in making management decisions, and they improve the consistency among observers in characterizing breast masses (49, 55).

Figure 1 (A) A 50-year-old woman was diagnosed with invasive cancer, and the results of CAD (S-Detect, Samsung RS80A ultrasound system) were “possibly malignant”; (B) A 48-year-old woman was diagnosed with adenosis, and the results of CAD were “possibly benign”.

Benign and Malignant Classification

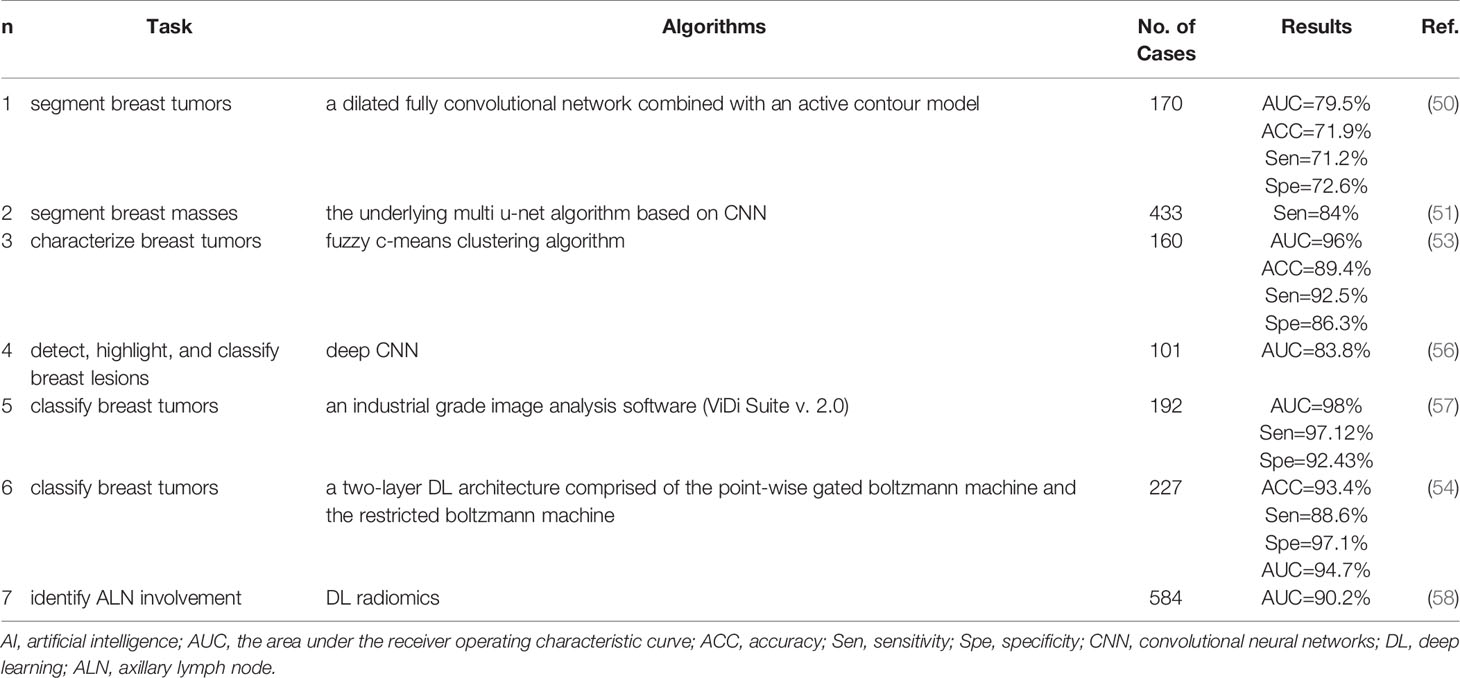

Breast cancer has a high incidence and mortality among women all over the world. Therefore, many countries have carried out breast cancer screening for women of appropriate age. In breast disease screening, the most important task is to distinguish breast cancer from benign breast diseases. Physicians mainly classify the lesions in breast ultrasound images based on BI-RADS. To allow doctors with different levels of experience to reach a consistent conclusion, AI systems that classify lesions as benign or malignant have gradually been developed. Ciritsis A et al. used a deep convolutional neural network (dCNN) to classify breast ultrasound images from an internal data set and an external test data set into BI-RADS 2-3 and BI-RADS 4-5. The results showed that the dCNN reached a classification accuracy of 93.1% (external 95.3%), whereas the classification accuracy of radiologists was 91.6 ± 5.4% (external 94.1 ± 1.2%). This shows that dCNNs may be used to mimic human decision making (56). Becker AS et al. used DL software to analyze 637 breast ultrasound images (84 malignant and 553 benign lesions). A randomly chosen subset of the images (n=445, 70%) was used for the training of the software, and the remaining cases (n=192) were used to validate the resulting model during the training process. The results were compared with those of three readers with variable expertise (a radiologist, a resident, and a trained medical student), and the findings showed that the neural network, which was trained on only a few hundred cases, exhibited accuracy comparable to the reading of a radiologist. There was a tendency for the neural network to perform better than a medical student who was trained with the same training data set (57). These findings indicate that AI-assisted classification and diagnosis of breast diseases can significantly shorten the diagnostic time of physicians and improve the diagnostic accuracy of inexperienced doctors (Table 2).
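A random 70/30 partition such as the one described for the 637 ultrasound images can be sketched as follows. The function name, the fixed seed and the rounding rule are assumptions made for illustration; the review does not describe how the original authors implemented their split, and this sketch does not stratify by diagnosis.

```python
import random

def split_indices(n_items, train_fraction=0.7, seed=0):
    """Randomly partition item indices into a training set and a validation set."""
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must lie strictly between 0 and 1")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)        # seeded for a reproducible shuffle
    n_train = int(n_items * train_fraction)     # rounds down: 637 images -> 445
    return indices[:n_train], indices[n_train:]

train_ids, validation_ids = split_indices(637)
```

With 84 malignant and 553 benign lesions, a purely random split can leave few malignant cases in the validation set, so a split stratified by diagnosis may be preferable when class imbalance is a concern.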

Applications of AI in Breast MRI

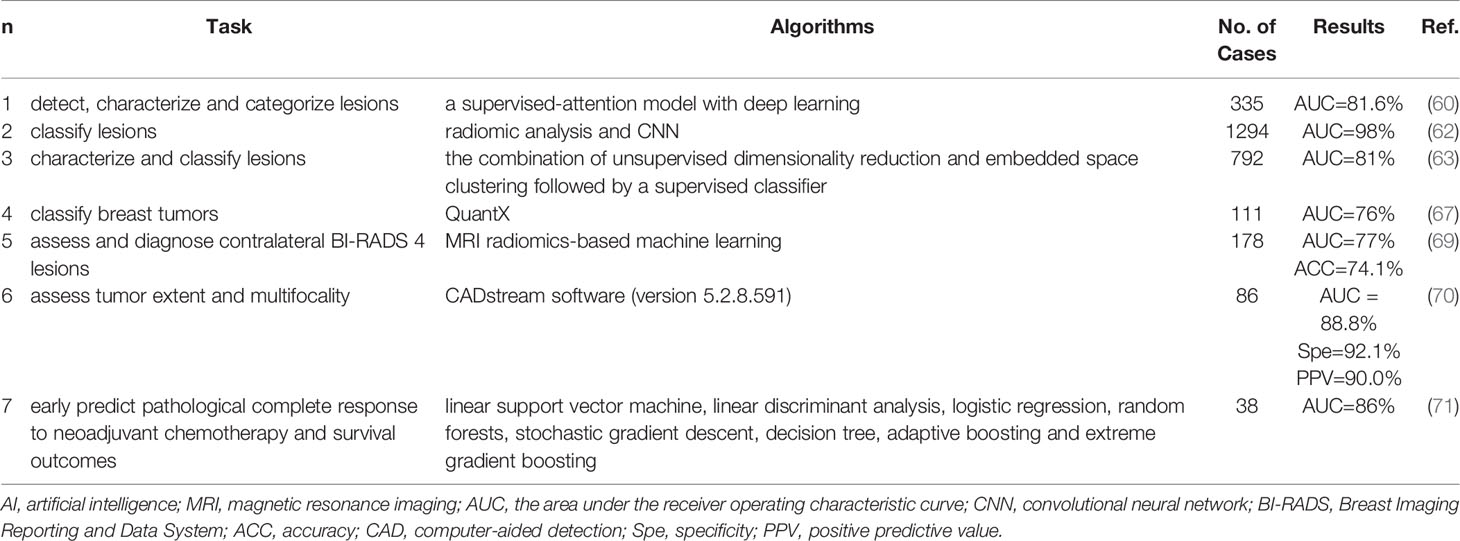

MRI is the most sensitive modality for breast cancer detection and is currently indicated as a supplement to mammography for patients at high risk (59). MRI can comprehensively evaluate the shape, size, scope and blood perfusion of breast masses through a variety of scanning sequences. However, it has the disadvantages of low specificity, high cost, long examination time and selectivity for patients; therefore, it is not as widely used as mammography and ultrasound examinations. Most studies on breast imaging and DL have focused on mammography, and less evidence is available concerning breast MRI (60). The study of DL in breast MRI mainly focuses on the detection, segmentation, characterization and classification of breast lesions (61–64). Ignacio Alvarez Illan et al. detected and segmented non-mass-enhanced lesions on dynamic contrast-enhanced magnetic resonance imaging (DCE-MRI) of the breast with a CAD system, and the optimized CAD system reduced and controlled the false positive rate and finally achieved satisfactory results (65). Herent P. et al. developed a DL model to detect, characterize and classify lesions on breast MRI (mammary glands, benign lesions, invasive ductal carcinoma and other malignant lesions) and achieved good performance (60). Antropova N. et al. incorporated the dynamic and volumetric components of DCE-MRIs into breast lesion classification with DL methods using maximum intensity projection images. The results showed that incorporating both volumetric and dynamic DCE-MRI components can significantly improve CNN-based lesion classification (66). Jiang Y. et al. asked 19 breast imaging radiologists (eight from academic and eleven from private practices) to classify lesions on DCE-MRI as benign or malignant, and compared their performance when using only conventionally available CAD evaluation software, including kinetic maps, with their performance when that software was supplemented with AI analytics.
It was found that the use of AI systems improved radiologists’ performance in differentiating benign and malignant breast lesions on MRI (67). Breast MRI is still necessary to screen patients at high risk of breast cancer. CAD systems can improve the sensitivity of the examination, decrease the false positive rate, and reduce unnecessary biopsies and the psychological burden on patients (68) (Table 3).

Conclusion

AI, particularly DL, is increasingly used in medical imaging and shows excellent performance in medical image analysis tasks. With its advantages of fast computing speed, good repeatability and no fatigue, AI can provide objective and effective information to doctors and reduce both their workload and the rates of missed diagnosis and misdiagnosis (72). At present, CAD systems for breast cancer screening have been widely studied. In mammography, ultrasound, MRI and other imaging examinations, these systems can identify and segment breast lesions, extract features, classify them, estimate BD and the risk of breast cancer, and evaluate treatment effect and prognosis (39, 73–78). These systems show great advantages and potential in relieving pressure on doctors, optimizing resource allocation and improving accuracy.

Challenges and Prospects

AI is still in the stage of “weak AI”. Although it has developed rapidly in the medical field in the past decade, it is far from being fully integrated into the work of clinicians and applied on a large scale around the world. At present, CAD systems for breast cancer screening have many limitations, such as the lack of large-scale public datasets, the dependence on ROI annotation, high image quality requirements, regional differences, overfitting, and restriction to binary classification. In addition, most AI systems are trained for a single task and cannot solve multiple tasks at the same time. These are the challenges and difficulties that DL faces in the development of breast imaging. At the same time, they provide a new impetus for the development of breast imaging diagnostics and show the broad prospects of intelligent medical imaging in the future.

In addition to their application in traditional imaging methods, DL-based CAD systems are developing rapidly in digital breast tomosynthesis (79–81), ultrasound elastography (82), and contrast-enhanced mammography, ultrasound and MRI, among others (83, 84). We believe that AI in breast imaging can be used not only for the detection, classification and prediction of breast diseases, but also to further classify specific breast diseases (e.g., breast fibroplasia) and to predict lymph node metastasis (85) and disease recurrence (86). As AI technology progresses, we expect radiologists to work with higher accuracy and efficiency, and to classify breast diseases and determine adjuvant treatment more precisely, so that breast cancer can be detected, diagnosed and treated early, to the benefit of most patients.

Author Contributions

MY, M-HY, JY, and W-ZL contributed to the collection of relevant literature. S-EZ, JL, and CFD contributed significantly to literature analysis and manuscript preparation. Y-ML organized the literature and wrote the manuscript. JY helped substantially with the revision of the manuscript. H-RY and X-WC were responsible for the design of the review and for data acquisition, analysis, and interpretation. All authors contributed to the article and approved the submitted version.

Funding

This work was supported by the Key Research and Development Project in Hubei Province (2020BCB022), the Joint Fund Project of Hubei Provincial Health and Family Planning Commission (WJ2018H0104), the Natural Science Foundation of Hubei Province (2019CFB2876), and the Science and Technology Bureau of Shihezi, China (No. 2019ZH11).

Conflict of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

I would like to extend my sincere gratitude to my colleagues for their help in the completion of this article and the reviewers for reviewing my article.

References

1. Bouletreau P, Makaremi M, Ibrahim B, Louvrier A, Sigaux N. Artificial Intelligence: Applications in Orthognathic Surgery. J Stomatol Oral Maxillofac Surg (2019) 120(4):347–54. doi: 10.1016/j.jormas.2019.06.001

2. Hosny A, Parmar C, Quackenbush J, Schwartz LH, Aerts H. Artificial Intelligence in Radiology. Nat Rev Cancer (2018) 18(8):500–10. doi: 10.1038/s41568-018-0016-5

3. Korn RL, Rahmanuddin S, Borazanci E. Use of Precision Imaging in the Evaluation of Pancreas Cancer. Cancer Treat Res (2019) 178:209–36. doi: 10.1007/978-3-030-16391-4_8

4. Zhou LQ, Wang JY, Yu SY, Wu GG, Wei Q, Deng YB, et al. Artificial Intelligence in Medical Imaging of the Liver. World J Gastroenterol (2019) 25(6):672–82. doi: 10.3748/wjg.v25.i6.672

5. Arieno A, Chan A, Destounis SV. A Review of the Role of Augmented Intelligence in Breast Imaging: From Automated Breast Density Assessment to Risk Stratification. AJR Am J Roentgenol (2019) 212(2):259–70. doi: 10.2214/AJR.18.20391

6. Qin C, Yao D, Shi Y, Song Z. Computer-Aided Detection in Chest Radiography Based on Artificial Intelligence: A Survey. BioMed Eng Online (2018) 17(1):113. doi: 10.1186/s12938-018-0544-y

7. Villanueva-Meyer JE, Chang P, Lupo JM, Hess CP, Flanders AE, Kohli M. Machine Learning in Neurooncology Imaging: From Study Request to Diagnosis and Treatment. AJR Am J Roentgenol (2019) 212(1):52–6. doi: 10.2214/AJR.18.20328

8. Cardoso F, Kyriakides S, Ohno S, Penault-Llorca F, Poortmans P, Rubio IT, et al. Early Breast Cancer: ESMO Clinical Practice Guidelines for Diagnosis, Treatment and Follow-Up. Ann Oncol (2019) 30(8):1194–220. doi: 10.1093/annonc/mdz173

9. Bray F, Ferlay J, Soerjomataram I, Siegel RL, Torre LA, Jemal A. Global Cancer Statistics 2018: GLOBOCAN Estimates of Incidence and Mortality Worldwide for 36 Cancers in 185 Countries. CA Cancer J Clin (2018) 68(6):394–424. doi: 10.3322/caac.21492

10. Fiorica JV. Breast Cancer Screening, Mammography, and Other Modalities. Clin Obstet Gynecol (2016) 59(4):688–709. doi: 10.1097/GRF.0000000000000246

11. Shenbagavalli P, Thangarajan R. Aiding the Digital Mammogram for Detecting the Breast Cancer Using Shearlet Transform and Neural Network. Asian Pac J Cancer Prev (2018) 19(9):2665–71. doi: 10.22034/APJCP.2018.19.9.2665

12. Abdelhafiz D, Yang C, Ammar R, Nabavi S. Deep Convolutional Neural Networks for Mammography: Advances, Challenges and Applications. BMC Bioinf (2019) 20(Suppl 11):281. doi: 10.1186/s12859-019-2823-4

13. Hamet P, Tremblay J. Artificial Intelligence in Medicine. Metabolism (2017) 69S:S36–40. doi: 10.1016/j.metabol.2017.01.011

14. Viceconti M, Hunter P, Hose R. Big Data, Big Knowledge: Big Data for Personalized Healthcare. IEEE J BioMed Health Inform (2015) 19(4):1209–15. doi: 10.1109/JBHI.2015.2406883

15. Obermeyer Z, Emanuel EJ. Predicting the Future - Big Data, Machine Learning, and Clinical Medicine. N Engl J Med (2016) 375(13):1216–9. doi: 10.1056/NEJMp1606181

16. Al-Antari MA, Al-Masni MA, Choi MT, Han SM, Kim TS. A Fully Integrated Computer-Aided Diagnosis System for Digital X-Ray Mammograms via Deep Learning Detection, Segmentation, and Classification. Int J Med Inform (2018) 117:44–54. doi: 10.1016/j.ijmedinf.2018.06.003

17. Rodriguez-Ruiz A, Lang K, Gubern-Merida A, Teuwen J, Broeders M, Gennaro G, et al. Can We Reduce the Workload of Mammographic Screening by Automatic Identification of Normal Exams With Artificial Intelligence? A Feasibility Study. Eur Radiol (2019) 29(9):4825–32. doi: 10.1007/s00330-019-06186-9

18. Tagliafico AS, Piana M, Schenone D, Lai R, Massone AM, Houssami N. Overview of Radiomics in Breast Cancer Diagnosis and Prognostication. Breast (2020) 49:74–80. doi: 10.1016/j.breast.2019.10.018

19. Yanagawa M, Niioka H, Hata A, Kikuchi N, Honda O, Kurakami H, et al. Application of Deep Learning (3-Dimensional Convolutional Neural Network) for the Prediction of Pathological Invasiveness in Lung Adenocarcinoma: A Preliminary Study. Med (Baltimore) (2019) 98(25):e16119. doi: 10.1097/MD.0000000000016119

20. Le EPV, Wang Y, Huang Y, Hickman S, Gilbert FJ. Artificial Intelligence in Breast Imaging. Clin Radiol (2019) 74(5):357–66. doi: 10.1016/j.crad.2019.02.006

21. Welch HG, Prorok PC, O’Malley AJ, Kramer BS. Breast-Cancer Tumor Size, Overdiagnosis, and Mammography Screening Effectiveness. N Engl J Med (2016) 375(15):1438–47. doi: 10.1056/NEJMoa1600249

22. McDonald ES, Oustimov A, Weinstein SP, Synnestvedt MB, Schnall M, Conant EF. Effectiveness of Digital Breast Tomosynthesis Compared With Digital Mammography: Outcomes Analysis From 3 Years of Breast Cancer Screening. JAMA Oncol (2016) 2(6):737–43. doi: 10.1001/jamaoncol.2015.5536

23. Alsheh Ali M, Eriksson M, Czene K, Hall P, Humphreys K. Detection of Potential Microcalcification Clusters Using Multivendor for-Presentation Digital Mammograms for Short-Term Breast Cancer Risk Estimation. Med Phys (2019) 46(4):1938–46. doi: 10.1002/mp.13450

24. S P, NK V, S S. Breast Cancer Detection Using Crow Search Optimization Based Intuitionistic Fuzzy Clustering With Neighborhood Attraction. Asian Pac J Cancer Prev (2019) 20(1):157–65. doi: 10.31557/APJCP.2019.20.1.157

25. Jiang Y, Inciardi MF, Edwards AV, Papaioannou J. Interpretation Time Using a Concurrent-Read Computer-Aided Detection System for Automated Breast Ultrasound in Breast Cancer Screening of Women With Dense Breast Tissue. AJR Am J Roentgenol (2018) 211(2):452–61. doi: 10.2214/AJR.18.19516

26. Fan M, Li Y, Zheng S, Peng W, Tang W, Li L. Computer-Aided Detection of Mass in Digital Breast Tomosynthesis Using a Faster Region-Based Convolutional Neural Network. Methods (2019) 166:103–11. doi: 10.1016/j.ymeth.2019.02.010

27. Cruz-Bernal A, Flores-Barranco MM, Almanza-Ojeda DL, Ledesma S, Ibarra-Manzano MA. Analysis of the Cluster Prominence Feature for Detecting Calcifications in Mammograms. J Healthc Eng (2018) 2018:2849567. doi: 10.1155/2018/2849567

28. Cai H, Huang Q, Rong W, Song Y, Li J, Wang J, et al. Breast Microcalcification Diagnosis Using Deep Convolutional Neural Network From Digital Mammograms. Comput Math Methods Med (2019) 2019:2717454. doi: 10.1155/2019/2717454

29. Suhail Z, Denton ERE, Zwiggelaar R. Classification of Micro-Calcification in Mammograms Using Scalable Linear Fisher Discriminant Analysis. Med Biol Eng Comput (2018) 56(8):1475–85. doi: 10.1007/s11517-017-1774-z

30. Jian W, Sun X, Luo S. Computer-Aided Diagnosis of Breast Microcalcifications Based on Dual-Tree Complex Wavelet Transform. BioMed Eng Online (2012) 11:96. doi: 10.1186/1475-925X-11-96

31. Guo Y, Dong M, Yang Z, Gao X, Wang K, Luo C, et al. A New Method of Detecting Micro-Calcification Clusters in Mammograms Using Contourlet Transform and Non-Linking Simplified PCNN. Comput Methods Prog BioMed (2016) 130:31–45. doi: 10.1016/j.cmpb.2016.02.019

32. Hmida M, Hamrouni K, Solaiman B, Boussetta S. Mammographic Mass Segmentation Using Fuzzy Contours. Comput Methods Prog BioMed (2018) 164:131–42. doi: 10.1016/j.cmpb.2018.07.005

33. Kashyap KL, Bajpai MK, Khanna P. Globally Supported Radial Basis Function Based Collocation Method for Evolution of Level Set in Mass Segmentation Using Mammograms. Comput Biol Med (2017) 87:22–37. doi: 10.1016/j.compbiomed.2017.05.015

34. Song E, Jiang L, Jin R, Zhang L, Yuan Y, Li Q. Breast Mass Segmentation in Mammography Using Plane Fitting and Dynamic Programming. Acad Radiol (2009) 16(7):826–35. doi: 10.1016/j.acra.2008.11.014

35. Anderson NH, Hamilton PW, Bartels PH, Thompson D, Montironi R, Sloan JM. Computerized Scene Segmentation for the Discrimination of Architectural Features in Ductal Proliferative Lesions of the Breast. J Pathol (1997) 181(4):374–80. doi: 10.1002/(SICI)1096-9896(199704)181:4<374::AID-PATH795>3.0.CO;2-N

36. Fowler EEE, Smallwood AM, Khan NZ, Kilpatrick K, Sellers TA, Heine J. Technical Challenges in Generalizing Calibration Techniques for Breast Density Measurements. Med Phys (2019) 46(2):679–88. doi: 10.1002/mp.13325

37. Hudson S, Vik Hjerkind K, Vinnicombe S, Allen S, Trewin C, Ursin G, et al. Adjusting for BMI in Analyses of Volumetric Mammographic Density and Breast Cancer Risk. Breast Cancer Res (2018) 20(1):156. doi: 10.1186/s13058-018-1078-8

38. Mohamed AA, Berg WA, Peng H, Luo Y, Jankowitz RC, Wu S. A Deep Learning Method for Classifying Mammographic Breast Density Categories. Med Phys (2018) 45(1):314–21. doi: 10.1002/mp.12683

39. Mohamed AA, Luo Y, Peng H, Jankowitz RC, Wu S. Understanding Clinical Mammographic Breast Density Assessment: A Deep Learning Perspective. J Digit Imaging (2018) 31(4):387–92. doi: 10.1007/s10278-017-0022-2

40. Le Boulc’h M, Bekhouche A, Kermarrec E, Milon A, Abdel Wahab C, Zilberman S, et al. Comparison of Breast Density Assessment Between Human Eye and Automated Software on Digital and Synthetic Mammography: Impact on Breast Cancer Risk. Diagn Interv Imaging (2020) 101(12):811–9. doi: 10.1016/j.diii.2020.07.004

41. Lehman CD, Yala A, Schuster T, Dontchos B, Bahl M, Swanson K, et al. Mammographic Breast Density Assessment Using Deep Learning: Clinical Implementation. Radiology (2019) 290(1):52–8. doi: 10.1148/radiol.2018180694

42. Sun YS, Zhao Z, Yang ZN, Xu F, Lu HJ, Zhu ZY, et al. Risk Factors and Preventions of Breast Cancer. Int J Biol Sci (2017) 13(11):1387–97. doi: 10.7150/ijbs.21635

43. Nindrea RD, Aryandono T, Lazuardi L, Dwiprahasto I. Diagnostic Accuracy of Different Machine Learning Algorithms for Breast Cancer Risk Calculation: A Meta-Analysis. Asian Pac J Cancer Prev (2018) 19(7):1747–52. doi: 10.22034/APJCP.2018.19.7.1747

44. Sepandi M, Taghdir M, Rezaianzadeh A, Rahimikazerooni S. Assessing Breast Cancer Risk With an Artificial Neural Network. Asian Pac J Cancer Prev (2018) 19(4):1017–9. doi: 10.31557/APJCP.2018.19.12.3571

45. Yala A, Lehman C, Schuster T, Portnoi T, Barzilay R. A Deep Learning Mammography-Based Model for Improved Breast Cancer Risk Prediction. Radiology (2019) 292(1):60–6. doi: 10.1148/radiol.2019182716

46. Teare P, Fishman M, Benzaquen O, Toledano E, Elnekave E. Malignancy Detection on Mammography Using Dual Deep Convolutional Neural Networks and Genetically Discovered False Color Input Enhancement. J Digit Imaging (2017) 30(4):499–505. doi: 10.1007/s10278-017-9993-2

47. Akkus Z, Cai J, Boonrod A, Zeinoddini A, Weston AD, Philbrick KA, et al. A Survey of Deep-Learning Applications in Ultrasound: Artificial Intelligence-Powered Ultrasound for Improving Clinical Workflow. J Am Coll Radiol (2019) 16(9 Pt B):1318–28. doi: 10.1016/j.jacr.2019.06.004

48. Wu GG, Zhou LQ, Xu JW, Wang JY, Wei Q, Deng YB, et al. Artificial Intelligence in Breast Ultrasound. World J Radiol (2019) 11(2):19–26. doi: 10.4329/wjr.v11.i2.19

49. Park HJ, Kim SM, La Yun B, Jang M, Kim B, Jang JY, et al. A Computer-Aided Diagnosis System Using Artificial Intelligence for the Diagnosis and Characterization of Breast Masses on Ultrasound: Added Value for the Inexperienced Breast Radiologist. Med (Baltimore) (2019) 98(3):e14146. doi: 10.1097/MD.0000000000014146

50. Hu Y, Guo Y, Wang Y, Yu J, Li J, Zhou S, et al. Automatic Tumor Segmentation in Breast Ultrasound Images Using a Dilated Fully Convolutional Network Combined With an Active Contour Model. Med Phys (2019) 46(1):215–28. doi: 10.1002/mp.13268

51. Kumar V, Webb JM, Gregory A, Denis M, Meixner DD, Bayat M, et al. Automated and Real-Time Segmentation of Suspicious Breast Masses Using Convolutional Neural Network. PLoS One (2018) 13(5):e0195816. doi: 10.1371/journal.pone.0195816

52. Feng Y, Dong F, Xia X, Hu CH, Fan Q, Hu Y, et al. An Adaptive Fuzzy C-Means Method Utilizing Neighboring Information for Breast Tumor Segmentation in Ultrasound Images. Med Phys (2017) 44(7):3752–60. doi: 10.1002/mp.12350

53. Hsu SM, Kuo WH, Kuo FC, Liao YY. Breast Tumor Classification Using Different Features of Quantitative Ultrasound Parametric Images. Int J Comput Assist Radiol Surg (2019) 14(4):623–33. doi: 10.1007/s11548-018-01908-8

54. Zhang Q, Xiao Y, Dai W, Suo J, Wang C, Shi J, et al. Deep Learning Based Classification of Breast Tumors With Shear-Wave Elastography. Ultrasonics (2016) 72:150–7. doi: 10.1016/j.ultras.2016.08.004

55. Choi JH, Kang BJ, Baek JE, Lee HS, Kim SH. Application of Computer-Aided Diagnosis in Breast Ultrasound Interpretation: Improvements in Diagnostic Performance According to Reader Experience. Ultrasonography (2018) 37(3):217–25. doi: 10.14366/usg.17046

56. Ciritsis A, Rossi C, Eberhard M, Marcon M, Becker AS, Boss A. Automatic Classification of Ultrasound Breast Lesions Using a Deep Convolutional Neural Network Mimicking Human Decision-Making. Eur Radiol (2019) 29(10):5458–68. doi: 10.1007/s00330-019-06118-7

57. Becker AS, Mueller M, Stoffel E, Marcon M, Ghafoor S, Boss A. Classification of Breast Cancer in Ultrasound Imaging Using a Generic Deep Learning Analysis Software: A Pilot Study. Br J Radiol (2018) 91(1083):20170576. doi: 10.1259/bjr.20170576

58. Zheng X, Yao Z, Huang Y, Yu Y, Wang Y, Liu Y, et al. Deep Learning Radiomics Can Predict Axillary Lymph Node Status in Early-Stage Breast Cancer. Nat Commun (2020) 11(1):1236. doi: 10.1038/s41467-020-15027-z

59. Sheth D, Giger ML. Artificial Intelligence in the Interpretation of Breast Cancer on MRI. J Magn Reson Imaging (2020) 51(5):1310–24. doi: 10.1002/jmri.26878

60. Herent P, Schmauch B, Jehanno P, Dehaene O, Saillard C, Balleyguier C, et al. Detection and Characterization of MRI Breast Lesions Using Deep Learning. Diagn Interv Imaging (2019) 100(4):219–25. doi: 10.1016/j.diii.2019.02.008

61. Xu X, Fu L, Chen Y, Larsson R, Zhang D, Suo S, et al. Breast Region Segmentation Being Convolutional Neural Network in Dynamic Contrast Enhanced MRI. Annu Int Conf IEEE Eng Med Biol Soc (2018) 2018:750–3. doi: 10.1109/EMBC.2018.8512422

62. Truhn D, Schrading S, Haarburger C, Schneider H, Merhof D, Kuhl C. Radiomic Versus Convolutional Neural Networks Analysis for Classification of Contrast-Enhancing Lesions at Multiparametric Breast MRI. Radiology (2019) 290(2):290–7. doi: 10.1148/radiol.2018181352

63. Gallego-Ortiz C, Martel AL. A Graph-Based Lesion Characterization and Deep Embedding Approach for Improved Computer-Aided Diagnosis of Nonmass Breast MRI Lesions. Med Image Anal (2019) 51:116–24. doi: 10.1016/j.media.2018.10.011

64. Vidic I, Egnell L, Jerome NP, Teruel JR, Sjobakk TE, Ostlie A, et al. Support Vector Machine for Breast Cancer Classification Using Diffusion-Weighted MRI Histogram Features: Preliminary Study. J Magn Reson Imaging (2018) 47(5):1205–16. doi: 10.1002/jmri.25873

65. Illan IA, Ramirez J, Gorriz JM, Marino MA, Avendano D, Helbich T, et al. Automated Detection and Segmentation of Nonmass-Enhancing Breast Tumors With Dynamic Contrast-Enhanced Magnetic Resonance Imaging. Contrast Media Mol Imaging (2018) 2018:5308517. doi: 10.1155/2018/5308517

66. Antropova N, Abe H, Giger ML. Use of Clinical MRI Maximum Intensity Projections for Improved Breast Lesion Classification With Deep Convolutional Neural Networks. J Med Imaging (Bellingham) (2018) 5(1):014503. doi: 10.1117/1.JMI.5.1.014503

67. Jiang Y, Edwards AV, Newstead GM. Artificial Intelligence Applied to Breast MRI for Improved Diagnosis. Radiology (2021) 298(1):38–46. doi: 10.1148/radiol.2020200292

68. Meyer-Base A, Morra L, Meyer-Base U, Pinker K. Current Status and Future Perspectives of Artificial Intelligence in Magnetic Resonance Breast Imaging. Contrast Media Mol Imaging (2020) 2020:6805710. doi: 10.1155/2020/6805710

69. Hao W, Gong J, Wang S, Zhu H, Zhao B, Peng W. Application of MRI Radiomics-Based Machine Learning Model to Improve Contralateral BI-RADS 4 Lesion Assessment. Front Oncol (2020) 10:531476. doi: 10.3389/fonc.2020.531476

70. Song SE, Seo BK, Cho KR, Woo OH, Son GS, Kim C, et al. Computer-Aided Detection (CAD) System for Breast MRI in Assessment of Local Tumor Extent, Nodal Status, and Multifocality of Invasive Breast Cancers: Preliminary Study. Cancer Imaging (2015) 15(1):1. doi: 10.1186/s40644-015-0036-2

71. Tahmassebi A, Wengert GJ, Helbich TH, Bago-Horvath Z, Alaei S, Bartsch R, et al. Impact of Machine Learning With Multiparametric Magnetic Resonance Imaging of the Breast for Early Prediction of Response to Neoadjuvant Chemotherapy and Survival Outcomes in Breast Cancer Patients. Invest Radiol (2019) 54(2):110–7. doi: 10.1097/RLI.0000000000000518

72. Morgan MB, Mates JL. Applications of Artificial Intelligence in Breast Imaging. Radiol Clin North Am (2021) 59(1):139–48. doi: 10.1016/j.rcl.2020.08.007

73. Hupse R, Samulski M, Lobbes MB, Mann RM, Mus R, den Heeten GJ, et al. Computer-Aided Detection of Masses at Mammography: Interactive Decision Support Versus Prompts. Radiology (2013) 266(1):123–9. doi: 10.1148/radiol.12120218

74. Quellec G, Lamard M, Cozic M, Coatrieux G, Cazuguel G. Multiple-Instance Learning for Anomaly Detection in Digital Mammography. IEEE Trans Med Imaging (2016) 35(7):1604–14. doi: 10.1109/TMI.2016.2521442

75. Mendelson EB. Artificial Intelligence in Breast Imaging: Potentials and Limitations. AJR Am J Roentgenol (2019) 212(2):293–9. doi: 10.2214/AJR.18.20532

76. Qi X, Zhang L, Chen Y, Pi Y, Chen Y, Lv Q, et al. Automated Diagnosis of Breast Ultrasonography Images Using Deep Neural Networks. Med Image Anal (2019) 52:185–98. doi: 10.1016/j.media.2018.12.006

77. Kooi T, Litjens G, van Ginneken B, Gubern-Merida A, Sanchez CI, Mann R, et al. Large Scale Deep Learning for Computer Aided Detection of Mammographic Lesions. Med Image Anal (2017) 35:303–12. doi: 10.1016/j.media.2016.07.007

78. Kim J, Kim HJ, Kim C, Kim WH. Artificial Intelligence in Breast Ultrasonography. Ultrasonography (2021) 40(2):183–90. doi: 10.14366/usg.20117

79. Sechopoulos I, Teuwen J, Mann R. Artificial Intelligence for Breast Cancer Detection in Mammography and Digital Breast Tomosynthesis: State of the Art. Semin Cancer Biol (2020) 72:214–25. doi: 10.1016/j.semcancer.2020.06.002

80. Skaane P, Bandos AI, Niklason LT, Sebuodegard S, Osteras BH, Gullien R, et al. Digital Mammography Versus Digital Mammography Plus Tomosynthesis in Breast Cancer Screening: The Oslo Tomosynthesis Screening Trial. Radiology (2019) 291(1):23–30. doi: 10.1148/radiol.2019182394

81. Lotter W, Diab AR, Haslam B, Kim JG, Grisot G, Wu E, et al. Robust Breast Cancer Detection in Mammography and Digital Breast Tomosynthesis Using an Annotation-Efficient Deep Learning Approach. Nat Med (2021) 27(2):244–9. doi: 10.1038/s41591-020-01174-9

82. Zhang Q, Song S, Xiao Y, Chen S, Shi J, Zheng H. Dual-Mode Artificially-Intelligent Diagnosis of Breast Tumors in Shear-Wave Elastography and B-Mode Ultrasound Using Deep Polynomial Networks. Med Eng Phys (2019) 64:1–6. doi: 10.1016/j.medengphy.2018.12.005

83. Adachi M, Fujioka T, Mori M, Kubota K, Kikuchi Y, Xiaotong W, et al. Detection and Diagnosis of Breast Cancer Using Artificial Intelligence Based Assessment of Maximum Intensity Projection Dynamic Contrast-Enhanced Magnetic Resonance Images. Diag (Basel) (2020) 10(5):330. doi: 10.3390/diagnostics10050330

84. Dalmis MU, Gubern-Merida A, Vreemann S, Bult P, Karssemeijer N, Mann R, et al. Artificial Intelligence-Based Classification of Breast Lesions Imaged With a Multiparametric Breast MRI Protocol With Ultrafast DCE-MRI, T2, and DWI. Invest Radiol (2019) 54(6):325–32. doi: 10.1097/RLI.0000000000000544

85. Zhou LQ, Wu XL, Huang SY, Wu GG, Ye HR, Wei Q, et al. Lymph Node Metastasis Prediction From Primary Breast Cancer US Images Using Deep Learning. Radiology (2020) 294(1):19–28. doi: 10.1148/radiol.2019190372

Keywords: artificial intelligence, machine learning, deep learning, breast, imaging

Citation: Lei Y-M, Yin M, Yu M-H, Yu J, Zeng S-E, Lv W-Z, Li J, Ye H-R, Cui X-W and Dietrich CF (2021) Artificial Intelligence in Medical Imaging of the Breast. Front. Oncol. 11:600557. doi: 10.3389/fonc.2021.600557

Received: 30 August 2020; Accepted: 07 July 2021;

Published: 22 July 2021.

Edited by:

Emanuele Neri, University of Pisa, Italy

Reviewed by:

Alberto Stefano Tagliafico, University of Genoa, Italy

Subathra Adithan, Jawaharlal Institute of Postgraduate Medical Education and Research (JIPMER), India

Copyright © 2021 Lei, Yin, Yu, Yu, Zeng, Lv, Li, Ye, Cui and Dietrich. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Hua-Rong Ye, yehuarong@hotmail.com; Xin-Wu Cui, cuixinwu@live.cn
