Abstract

Individuals who have suffered neurotrauma such as a stroke or brachial plexus injury often experience reduced limb function. Soft robotic exoskeletons have been successful in assisting rehabilitative treatment and improving activities of daily living, but restoring dexterity for tasks such as playing musical instruments has proven challenging. This research presents a soft robotic hand exoskeleton coupled with machine learning algorithms to aid in relearning how to play the piano by ‘feeling’ the difference between correct and incorrect versions of the same song. The exoskeleton features piezoresistive sensor arrays with 16 taxels integrated into each fingertip. The hand exoskeleton was created as a single unit, with polyvinyl alcohol (PVA) used as a stent that was later dissolved to form the internal pressure chambers for the five individually actuated digits. Ten variations of a song were produced: one correct and nine containing rhythmic errors. To classify these song variations, Random Forest (RF), K-Nearest Neighbor (KNN), and Artificial Neural Network (ANN) algorithms were trained with data from the 80 taxels combined across the fingertip tactile sensors. The exoskeleton ‘felt’ the differences between correct and incorrect versions of the song both on its own and while worn by a person. The ANN algorithm achieved the highest classification accuracy: 97.13% ± 2.00% with the human subject and 94.60% ± 1.26% without. These findings highlight the potential of the smart exoskeleton to aid disabled individuals in relearning dexterous tasks like playing musical instruments.

1 Introduction

Patients with neuromuscular disorders commonly face challenges in everyday activities. For instance, after a stroke, their ability to carry out daily tasks can be affected by decreased coordination and strength in one or both upper limbs (Lai et al., 2019). As a result, they may experience asymmetric function caused by unilateral hand weakness (Patel and Lodha, 2019). Moreover, spasticity can develop over time and affect their ability to perform personal hygiene tasks, leading to further deterioration of the affected limb’s function (Kerr et al., 2020). This pattern of disability is also evident in conditions like cerebral palsy (Gordon et al., 2013). These problems have spurred the development of robotic devices to enhance the abilities of patients recovering from these debilitating disorders (Lum et al., 2012; Polotto et al., 2012; Squeri et al., 2013; Aubin et al., 2014).

Exoskeletons are a relatively new solution for providing support and enhancing user movement (Tran et al., 2021). Traditionally, exoskeleton systems have consisted of inflexible mechanical structures that are often powered by electric motors (Herder and Antonides, 2006; Rahman et al., 2006; Rahman et al., 2015; Gopura et al., 2016). These systems are precise and support numerous control techniques (Gopura and Kiguchi, 2008; Kiguchi and Hayashi, 2012; Li et al., 2013; Ambrosini et al., 2014; Jarrassé et al., 2014; Leonardis et al., 2015; Lambelet et al., 2017). They have proven especially beneficial in stable scenarios, such as when attached to wheelchairs or used as standing aids in physical therapy (Iwamuro et al., 2008). Nonetheless, the rigid nature of such assistive devices can create problems of its own. Properly distributing force for user safety and comfort can be challenging (Mohamaddan and Komeda, 2010; Pons, 2010; Rahman et al., 2015). Although various approaches have been suggested to address this issue, it remains an active area of research (Jarrassé and Morel, 2011).

Surgical interventions to restore upper limb function operate in a manner akin to inflexible exoskeletons. When dealing with upper limb spasticity, surgical options may involve fractional lengthening and release of tendons or targeted neurotomies to reduce the debilitating effects of spasticity. In severe cases, bone surgery such as wrist fusion may improve joint alignment, which could facilitate residual hand function, but this comes at the cost of sacrificing the range of motion in the affected joint. In some neurological conditions, such as brachial plexus injuries, surgery cannot restore motor and sensory function equivalent to that of the uninjured upper extremity. In total brachial plexus injuries, surgery is targeted at restoring the key movements of elbow flexion and shoulder abduction or external rotation; restoration of sensation and motor function in the hand is not possible at that point. Exoskeletons may, therefore, have a complementary role to surgery in managing these patients.

Supportive exoskeletons have played a vital role in helping patients manage and recover from neurotrauma. Cable- and spring-based mechanical exoskeletons have proven beneficial in rehabilitation, but their size and complexity often make them impractical for meeting patients’ needs (Arata et al., 2013; In et al., 2015; Nycz et al., 2015; Kang et al., 2016; Nycz et al., 2016; Yang et al., 2016; Jarrett and McDaid, 2017; Li et al., 2018). The fabrication and maintenance of these systems are challenging because custom parts are required to accommodate the unique anatomy of each patient (Hussain et al., 2016; Kang et al., 2016). Furthermore, the structures used are often not ergonomic, and rigid exoskeletons tend to become excessively bulky.

The adoption of soft pneumatic actuators has revolutionized the development of exoskeletons that meet patients’ requirements for lightweight, pliable, and practical support (Andrikopoulos et al., 2015; Al-Fahaam et al., 2016; Haghshenas-Jaryani et al., 2016; Al-Fahaam et al., 2018; Cappello et al., 2018; Li et al., 2020; Lin et al., 2020; Abd et al., 2021). However, the flexibility of these actuators creates a challenge that necessitates the use of flexible sensor technology capable of accommodating and compensating for significant deformation (Yeo et al., 2016). By implementing effective and adaptable sensor techniques, this challenge can be overcome (Haghshenas-Jaryani et al., 2016; Abd et al., 2021).

Relearning tasks involves the restoration and retraining of specific movements or skills. Soft robotic exoskeletons, utilizing the properties of flexible materials and sensors, provide gentle support and assistance to individuals in relearning and regaining their motor abilities (Polygerinos et al., 2015). By monitoring and responding to users’ movements, soft robotic exoskeletons can offer real-time feedback and adjustments, making it easier for patients to grasp the correct movement techniques (Deimel and Brock, 2016). Playing the piano requires complex and highly skilled movements. For individuals who have lost the ability to play due to neurotrauma, soft robotic exoskeletons can serve as powerful assistive tools (Hoang et al., 2021). The flexibility of soft materials and sensors enables the exoskeletons to adapt to the shape and motion of the hand, providing precise force and guidance to aid patients in recovering the fine finger movements required for piano playing (Takahashi et al., 2020). However, achieving precise force control and adaptability requires the development of highly intelligent algorithms to address motion planning issues (Wang and Chortos, 2022).

The objective of this study is to introduce a smart assistive hand exoskeleton that comprises five soft pneumatic actuators, each fitted with a 16-taxel flexible sensor at the fingertip (Figures 1A–C). The design and fabrication process of the hand exoskeleton is novel and could be customized to the unique anatomy of different patients. The completely soft design is intended to improve user comfort and yield a lightweight, convenient exoskeleton. Furthermore, the fabrication is significantly simpler than that of most designs because all the actuators and sensors are combined in a single molding process. As a demonstration of the exoskeleton’s capability to serve as an intelligent assistant, it was employed to ‘feel’ the difference between correct and incorrect versions of a song played on the piano. Sensing systems have been implemented less frequently in soft exoskeletons, and here the sensors are used to a much greater extent than usual. Experiments were conducted with the exoskeleton operating independently and while worn by a human subject, to show that one of the many possible applications of this new device could be to aid relearning how to play the piano after neurotrauma. The fingertip tactile sensor signals were used to train three machine learning algorithms: RF, KNN, and ANN. The accuracy of these algorithms in classifying the correct and incorrect song variations was compared with and without the human subject.

FIGURE 1

(A) Soft actuator with sensor arrays; (B) CAD model for the new sensorized soft hand exoskeleton (i) top view, (ii) bottom view; (C) The new soft hand exoskeleton (i) top view, (ii) bottom view.

2 Experimental methods

A soft robotic exoskeleton for the hand was designed to offer active flexion and passive extension assistance to all five digits. The exoskeleton was constructed using Dragon Skin-30 material, which provides passive freedom for transverse movements along the palmar plane for each finger. Additionally, flexible sensor arrays comprising sixteen taxels were incorporated into each fingertip to enable pattern recognition of piano-playing performance.

2.1 Sixteen-channel flexible tactile sensor fabrication

The flexible tactile sensor array employs two primary piezoresistive elements: velostat and stainless-steel thread. Velostat is a composite film made from polyethylene and carbon black. Velostat changes conductivity when pressure is applied, and the stainless-steel thread likewise exhibits increased conductivity under force. When pressure is applied to the velostat, the distance between the carbon black particles decreases and their contact points increase, changing the conductivity across the affected area of the film (Dzedzickis et al., 2020). Similarly, applying force to the stainless-steel thread increases contact both between the thread and the film and within the thread itself, enhancing conductivity (Choudhry et al., 2022). By arranging the threads in a grid pattern connected to the conductive film, it is possible to create a sensor array that measures the pressure distribution across the grid’s surface.

The sensor was created by assembling seven layers: an outer layer, an adhesive layer, longitudinal wires, a conductive layer, transverse wires, another adhesive layer, and a second outer layer. Plastic wrap was used for the outer layers (MRP Corp, Philadelphia, PA), while the adhesive layers were made of 3M double-sided adhesive (3M™ Adhesive Transfer Tape 468MP, United States). The wires were made of stainless-steel yarn (Stainless Thin Conductive Thread - 2 ply, Adafruit Industries LLC), and the conductive layer was made of velostat, a pressure-sensitive conductive sheet (Velostat 1361, Adafruit Industries LLC). The assembly process involved cutting rectangles out of the velostat to match the sensor’s size and cutting the plastic wrap and adhesive into 2 cm × 1.5 cm rectangles. To aid assembly, the wires were placed in a 3D-printed holster that maintained an even spacing of 0.2 cm between the longitudinal wires and 0.3 cm between the transverse wires. The wires were then lightly pressed onto one of the adhesive layers, and the assembly was placed on top of the conductive layer. The adhesive was trimmed to match the conductive layer’s size, and its protective liner was removed to expose the other side of the adhesive. The outer layer was wrapped around a finger and then rolled onto the adhesive to prevent air pockets. This process was repeated on the other side of the conductive layer with the transverse wires. The longitudinal wires were soldered and encapsulated separately in heat-shrink tubing, while all the wiring along each finger was encapsulated together in heat-shrink tubing (Electriduct, 3.18 mm 3:1 Polyolefin Tubing). The horizontal channels were soldered after the Dragon Skin-30 had cured to prevent unnecessary strain on the steel thread during fabrication. The finished size of the sensor was 1.5 cm × 1 cm.
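The relationship between wire count and taxel count can be made concrete with a short sketch. It assumes, based on the eight wires per sensor and 16 taxels per fingertip described in this section and in Section 2.3, that each sensor uses four longitudinal and four transverse threads whose crossings form a 4 × 4 grid; the constant and function names are illustrative and are not taken from the authors' firmware.

```python
# Minimal sketch of the row/column addressing of one fingertip sensor.
# ASSUMPTION: the eight wires per sensor are split into 4 longitudinal and
# 4 transverse threads, and each crossing point forms one taxel (4 x 4 = 16).
LONG_WIRES = 4           # longitudinal threads, spaced 0.2 cm apart
TRANS_WIRES = 4          # transverse threads, spaced 0.3 cm apart
LONG_PITCH_CM = 0.2
TRANS_PITCH_CM = 0.3
N_FINGERS = 5


def taxel_layout():
    """Map taxel index -> (x_cm, y_cm) of the wire crossing that forms it."""
    layout = {}
    for t in range(TRANS_WIRES):        # transverse thread -> grid row
        for l in range(LONG_WIRES):     # longitudinal thread -> grid column
            layout[t * LONG_WIRES + l] = (l * LONG_PITCH_CM, t * TRANS_PITCH_CM)
    return layout


def total_taxels():
    """Taxels across all five fingertips."""
    return N_FINGERS * LONG_WIRES * TRANS_WIRES


def total_wires():
    """A grid needs one wire per thread, not one wire per taxel."""
    return N_FINGERS * (LONG_WIRES + TRANS_WIRES)


layout = taxel_layout()
x_span, y_span = layout[len(layout) - 1]            # farthest crossing from taxel 0
print(len(layout), total_taxels(), total_wires())   # 16 80 40
print(round(x_span, 2), round(y_span, 2))           # 0.6 0.9
```

Under this assumption the wire grid spans 0.6 cm × 0.9 cm, which fits inside the finished 1.5 cm × 1 cm sensor, and the 40 wires for five sensors match the 40-pin connector described in Section 2.3.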

2.2 Designing the molds to cast the soft exoskeleton

SolidWorks 2019 software was utilized to create molds for the hand exoskeleton, taking into account measurements obtained from prior anthropometric studies (Garrett, 1971). A second mold was produced that allowed for an additional 0.3 cm of width and 0.4 cm of thickness to accommodate the wiring of the sensor array; slots were included for the wire conduits. To ensure proper placement of the actuators, semi-cylindrical rods with a 0.35 cm cavity at one end were designed and inserted into the molds. Caps were printed to cover the openings of the fingers in the first mold, featuring an opening for the rods to maintain their alignment. Both molds and caps were printed using the Ultimaker S5 (Ultimaker, Netherlands) and made of PLA (Overture, PLA, 2.85 mm filament). The rods acted as placeholders for the air channels and were 3D printed using the Ultimaker S5 and dissolvable PVA (Polymaker, PolyDissolve S1, 2.85 mm filament).

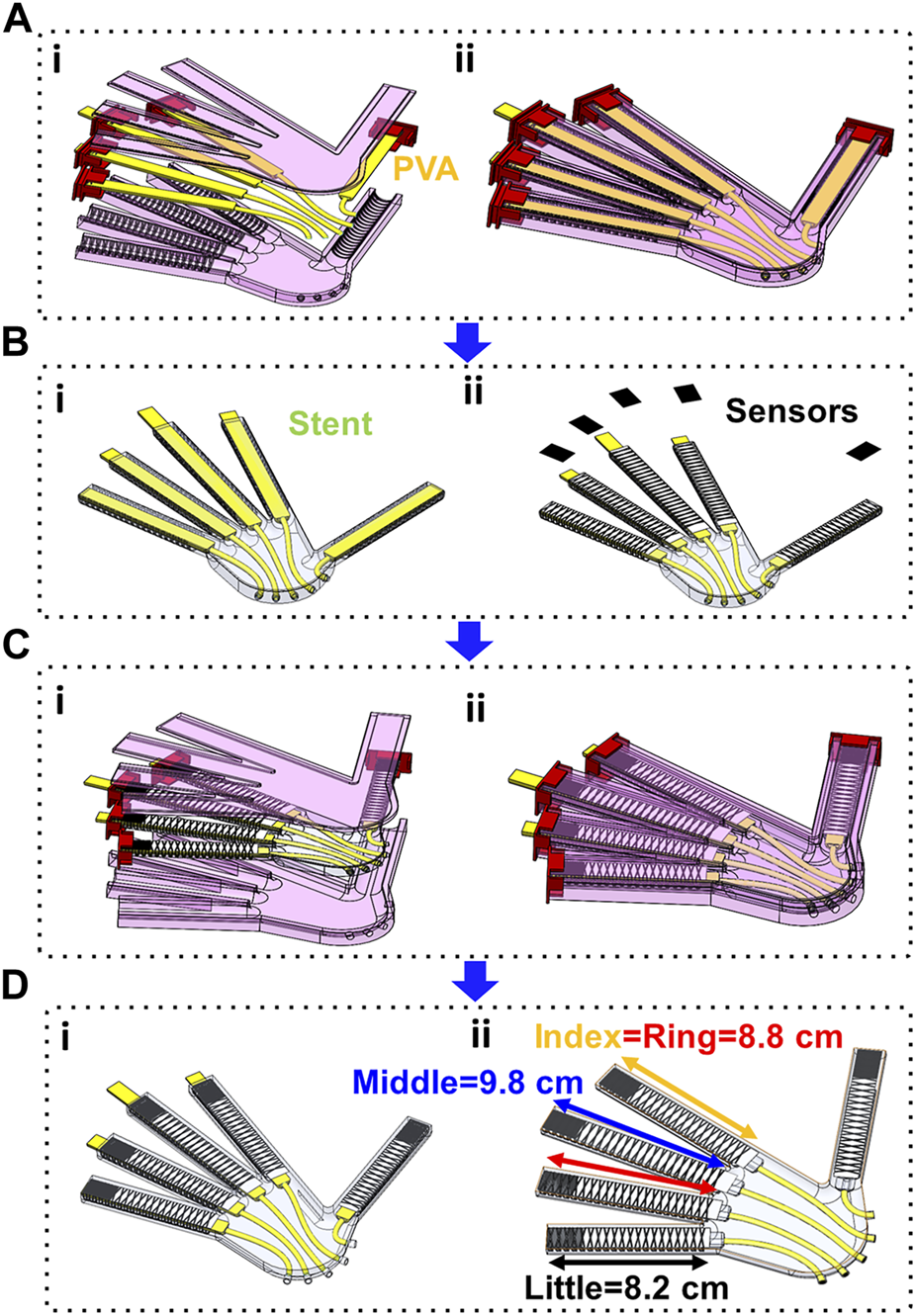

2.3 Fabricating the hand exoskeleton

The process began by inserting polyurethane tubing (1.59 mm × 3.18 mm) through the mold’s holes and then into the stents (Figure 2A). To ease later removal, the stents were wrapped with Teflon tape (Sklety, PTFE Pipe Sealant Tape). The stents were positioned inside the caps such that their bottom edge aligned with the base of each finger and ran parallel to the molding. Hot glue was used to temporarily secure the tubing and stents in place as required. The mold was then filled with 120 g of silicone rubber (Smooth-On, Dragon Skin-30) and allowed to cure for 4 h before any excess material was cleaned off. Before the outer layer of Dragon Skin was molded, a rectangular piece of fabric (100% Blackout Grommet Banton Window Curtain Panel, S.L. Home Fashions Inc.) was placed over the flat base of each finger for reinforcement. Carbon fiber tow (1K 3800 MPa 50/100 m Length Carbon Fiber Fibre Tow Filament Yarn Thread Tape, AliExpress) was then wrapped in a helical pattern around the silicone cast. The fiber was wrapped first from the base of the finger to the tip and then from the tip back to the base, crossing itself at the apex of the dorsal side and along the palmar side (Figure 2B (ii)).

FIGURE 2

Manufacturing Process: (A) (i) All printed components in CAD assembly, cast made from mold 1, PVA stents, and tubing, (ii) Complete stage 1 cast, shown after filling with Dragon Skin and sealing shut; (B) (i) Result of stage 1 cast, (ii) Cast is equipped with strain-limiting layers and pressure sensor arrays; (C) (i) Fully equipped cast is laid in the stage 2 mold to encase the strain-limiting layers and sensors as part of the exoskeleton, (ii) Complete stage 2 cast, shown after filling with Dragon Skin and sealing shut; (D) (i) Result of the stage 2 cast, (ii) PVA stents are dissolved, and stage 3 casting is done to seal the pressure chambers.

A tactile sensor array was installed at the end of each finger, with all vertical wires already soldered in place. To provide greater mobility during pre-mold placement and actuation, the wires for each sensor were separated into two bundles (transverse and longitudinal) and covered with heat-shrink tubing. Each tactile sensor comprised eight wires that ran through the palm to a 40-pin cable connector (Micro SATA wiring, IDE cable). The tubing and wiring were placed in the slots in the mold, which was filled with 80 g of Dragon Skin and left to cure for 4 h (Figure 2C). Once curing was complete, the horizontal wires of the sensors were soldered to the corresponding wires of the 40-pin cable connector.

In stage 3 of the molding process, the excess rubber around each finger was trimmed to a uniform length of 3 mm from the sensor array’s edge (Figure 2D). The PVA stents were dissolved in water, and the Teflon was removed from the inside surface. Next, the end of each finger was dipped in a cup filled with 10 g of Dragon Skin, and after a 4-h curing period, the excess material was removed to leave a Dragon Skin cap that completely sealed the pneumatic chambers. The palm side of the hand containing the tubing was cut open, leaving a layer of silicone rubber around the tubing, and a zip tie (Outus Nylon Cable Ties) was fastened around the Dragon Skin at the inlet of each finger actuator to ensure an airtight seal. Any gaps were filled with Dragon Skin using an open molding process.

2.4 Soft actuator characterization

To evaluate the force response and hysteresis of the soft actuators, three internal pressures were tested. The fingertip forces of the little, ring, and middle fingers were measured individually using a 2 kg load cell (LSP-2, Transducer Techniques, Temecula, United States); each test comprised 16 cycles of 3 s of actuation followed by 7 s of rest. The 16 cycles were recorded three times at pressures of 0.14, 0.21, and 0.28 MPa. The resulting force and pressure data were used to plot the hysteresis loops at each of the three pressures, and the maximal force at each pressure was obtained from the force-pressure relationship for each finger.
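The cyclic protocol and one common way to quantify hysteresis can be sketched as follows. This is an illustration under stated assumptions: the 100 Hz command rate is arbitrary, and the hysteresis metric (the area enclosed by the loading and unloading branches of the force-pressure loop, computed with the shoelace formula) is a standard choice rather than one the study specifies. The loop values are synthetic, not measured data.

```python
def actuation_schedule(cycles=16, on_s=3.0, off_s=7.0, fs_hz=100):
    """0/1 valve command sampled at fs_hz: on_s of actuation, then off_s of rest."""
    on_n = round(on_s * fs_hz)
    off_n = round(off_s * fs_hz)
    return ([1] * on_n + [0] * off_n) * cycles


def loop_area(pressure, force):
    """Area enclosed by a closed force-pressure loop (shoelace formula).

    Samples are assumed to trace loading and then unloading in order; the
    polygon is closed automatically from the last point back to the first.
    """
    n = len(pressure)
    twice_area = 0.0
    for i in range(n):
        j = (i + 1) % n
        twice_area += pressure[i] * force[j] - pressure[j] * force[i]
    return abs(twice_area) / 2.0


schedule = actuation_schedule()
print(len(schedule) / 100, sum(schedule) / 100)  # 160.0 s total, 48.0 s actuated

# Synthetic loop for illustration only (not measured data): the unloading
# branch sits up to 0.1 N above the loading branch between 0 and 0.28 MPa.
p = [0.0, 0.14, 0.28, 0.28, 0.14, 0.0]
f = [0.0, 0.70, 1.45, 1.45, 0.80, 0.10]
print(round(loop_area(p, f), 4))  # enclosed area in N*MPa
```

A larger enclosed area indicates more energy lost per cycle; comparing it across the three pressures is one way to summarize the hysteresis plots numerically.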

2.5 System configuration for perception and action

A Teensy 4.0 microcontroller and a multiplexer board were used to sample the 16 taxels on each fingertip. Each taxel formed part of a non-inverting op-amp circuit, and the op-amp output voltage was measured for each of the 16 taxels on every digit of the exoskeleton at 74 Hz. The multiplexer (Sundaram et al., 2019) was used to cycle through the 80 available taxels, and the Teensy sampled the op-amp output voltage ten times per taxel and averaged the readings. The data were then published from the Teensy to a Robot Operating System (ROS) network, and Simulink was linked to the same ROS network for real-time data visualization and storage (Figure 3A).
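A minimal sketch of the scan-and-average loop follows. The `read_voltage` callback is hypothetical and stands in for the multiplexer channel selection and single ADC read performed on the Teensy. The throughput helper assumes that 74 Hz is the rate at which the full 80-taxel frame is refreshed, which the text does not state explicitly.

```python
N_FINGERS = 5
TAXELS_PER_FINGER = 16
SAMPLES_PER_TAXEL = 10   # readings averaged per taxel, as described above


def scan_frame(read_voltage):
    """Return a 5 x 16 frame of averaged op-amp output voltages.

    read_voltage(finger, taxel) is a HYPOTHETICAL callback standing in for
    "select this multiplexer channel and take one ADC reading".
    """
    frame = []
    for finger in range(N_FINGERS):
        row = []
        for taxel in range(TAXELS_PER_FINGER):
            readings = [read_voltage(finger, taxel) for _ in range(SAMPLES_PER_TAXEL)]
            row.append(sum(readings) / len(readings))   # simple oversampling average
        frame.append(row)
    return frame


def adc_reads_per_second(frame_rate_hz=74.0):
    """ADC throughput needed if every taxel is refreshed at frame_rate_hz."""
    return frame_rate_hz * N_FINGERS * TAXELS_PER_FINGER * SAMPLES_PER_TAXEL


def fake_reader(finger, taxel):
    # Placeholder values so the sketch runs without hardware.
    return 0.1 * finger + 0.01 * taxel


frame = scan_frame(fake_reader)
print(len(frame), len(frame[0]))     # 5 16
print(adc_reads_per_second())        # 59200.0
```

If the 74 Hz figure instead refers to a per-finger rate, the required throughput scales accordingly; averaging ten readings trades ADC bandwidth for lower noise on each taxel.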

FIGURE 3

Control system. (A) The control scheme for the exoskeleton and sensors; (B) The valve control signals of each finger for playing “Mary Had A Little Lamb.” Illustrative examples are shown of the correct song and the song variations that had errors introduced.

Simulink was used to implement the 10 song variations by controlling the valves of the four fingers. The control inputs for the four valves were created with the Simulink signal builder block and set as four separate digital outputs. These outputs were then used as a 1 V input to a MOSFET (NTE2389), which controlled the 24 V signals for the 5 Festo solenoid valves (MHE2-MS1H-3) that were responsible for controlling the fingers. A single pressure reservoir at 0.28 MPa was connected to all five valves, and the open/closed state of the valves was determined by the 1 V outputs from Simulink, which controlled the pressure applied within the pneumatic actuators.
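The signal-builder step amounts to turning a melody into one on/off timeline per valve. The sketch below assumes a hypothetical note-to-finger mapping, a uniform 0.5 s beat, and a 0.3 s press within each beat, and it encodes only the opening phrase of the song with one note per beat; none of these values come from the study.

```python
# HYPOTHETICAL note-to-finger mapping: the four notes of the opening phrase
# are assigned to the four actuated fingers.
NOTE_TO_FINGER = {"C": "index", "D": "middle", "E": "ring", "G": "little"}

# Opening phrase of "Mary Had a Little Lamb", simplified to one note per beat.
OPENING = ["E", "D", "C", "D", "E", "E", "E", "D", "D", "D", "E", "G", "G"]


def valve_timelines(notes, beat_s=0.5, press_s=0.3, fs_hz=100):
    """Per-finger 0/1 valve commands, one key press at the start of each beat.

    press_s < beat_s leaves the valve closed briefly between repeated notes,
    so consecutive presses of the same key stay distinct.
    """
    beat_n = round(beat_s * fs_hz)
    press_n = round(press_s * fs_hz)
    total = beat_n * len(notes)
    lines = {finger: [0] * total for finger in NOTE_TO_FINGER.values()}
    for k, note in enumerate(notes):
        finger = NOTE_TO_FINGER[note]
        start = k * beat_n
        for i in range(start, start + press_n):
            lines[finger][i] = 1
    return lines


lines = valve_timelines(OPENING)
for finger, cmd in lines.items():
    # Count rising edges, i.e., distinct key presses per finger.
    presses = sum(1 for i in range(1, len(cmd)) if cmd[i] and not cmd[i - 1]) + cmd[0]
    print(finger, presses)
```

Each timeline corresponds to one digital output driving one valve, so at most one valve is open at any instant for a single-note melody like this one.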

2.6 Exoskeleton to (Re)Learn playing the piano

To assess the potential of using the smart hand exoskeleton for rehabilitation purposes, we programmed it to play ten different versions of the well-known tune “Mary Had a Little Lamb.” To introduce variations in the performance, we created a pool of 12 error types: errors could occur at the beginning or end of a note, could be premature or delayed, and persisted for 0.1, 0.2, or 0.3 s. Combining these options (2 × 2 × 3) yielded the 12 unique error scenarios included in the different song variations. We created three groups of three songs each based on the proportion of erroneous notes: in the first group, 75% of the keys were played with some type of error; in the second, 50%; and in the third, 25%. Within each group, the three variations had errors on the same notes, but with the error type randomly selected from the pool. The ten song variations thus consisted of the three groups of three variations each, plus the correct song played with no errors (Figure 3B).
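The error pool and grouping scheme can be sketched as below. Reading the 12 error types as every combination of note boundary (beginning or end), direction (premature or delayed), and duration (0.1, 0.2, or 0.3 s) is our interpretation. The per-note random draw, the fixed seed, and the 20-note melody length in the example are assumptions for illustration only.

```python
import itertools
import random

# Our reading of the 12-type pool: 2 note boundaries x 2 directions x 3 durations.
BOUNDARIES = ("onset", "release")     # error at the beginning or end of a note
DIRECTIONS = (-1, +1)                 # premature (-1) or delayed (+1)
DURATIONS_S = (0.1, 0.2, 0.3)
ERROR_POOL = list(itertools.product(BOUNDARIES, DIRECTIONS, DURATIONS_S))


def apply_error(start_s, end_s, error):
    """Shift the onset or release of one note by the error's signed duration."""
    boundary, direction, duration = error
    shift = direction * duration
    if boundary == "onset":
        return start_s + shift, end_s
    return start_s, end_s + shift


def make_variations(n_notes, fractions=(0.75, 0.50, 0.25), per_group=3, seed=0):
    """Correct song plus three groups of three erroneous variations.

    Within a group every variation has errors on the same notes; only the
    error types, drawn from ERROR_POOL, differ between variations.
    """
    rng = random.Random(seed)
    variations = [{}]                       # class 0: the correct song
    for fraction in fractions:
        n_err = round(fraction * n_notes)
        error_notes = sorted(rng.sample(range(n_notes), n_err))
        for _ in range(per_group):
            variations.append({i: rng.choice(ERROR_POOL) for i in error_notes})
    return variations


variations = make_variations(n_notes=20)            # 20 notes: hypothetical length
print(len(ERROR_POOL), len(variations))            # 12 10
print([len(v) for v in variations])                 # [0, 15, 15, 15, 10, 10, 10, 5, 5, 5]
```

Keeping the erroneous notes fixed within a group means the three variations differ only in error type, which makes the classification task harder than if the error locations also changed.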

The study included 20 repetitions of each of the ten song variations in two settings: with the hand exoskeleton worn by a human subject (25-year-old male, able-bodied) and with the exoskeleton playing the songs independently (without a person). The study was conducted with institutional review board oversight, and the human subject provided written informed consent in accordance with the Declaration of Helsinki. The resulting datasets, comprising 200 song repetitions per setting, were used to train three machine learning classification algorithms (KNN, RF, ANN), which were then evaluated on their ability to distinguish between the different song variations. This approach could potentially provide real-time feedback to individuals recovering from a stroke or other neurotrauma who are (re)learning to play a musical instrument.

2.7 Machine learning classification methods

To design the machine learning problem, 10 different song alterations were programmed for the hand to perform (Figure 3B), and 20 repetitions of each alteration were collected. The collected data were preprocessed and labeled to train the RF, KNN, and ANN algorithms to separate the data into 10 classes corresponding to the 10 song variations. The KNN algorithm computes the distance between a query sample and every training sample, selects the k closest samples, and assigns the most frequent class label among them (Zhang et al., 2017a). The RF algorithm is an ensemble of decision trees, each trained separately on a bootstrap sample of the data with a random subset of features considered at each split, whose votes are combined to perform the classification (Breiman, 2001). In general, adding trees makes the prediction more robust, although accuracy typically plateaus once the forest is large enough. The last algorithm was the ANN (Basu et al., 2010), which was trained by minimizing cross-entropy and evaluated using confusion matrices. A two-layer feedforward network with sigmoid hidden neurons and softmax output neurons was used to classify the collected data into the ten song alteration classes. The network was trained with scaled conjugate gradient backpropagation.
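For readers less familiar with these classifiers, the sketch below shows the KNN decision rule and the forward pass of a two-layer network with a sigmoid hidden layer and softmax output, together with the cross-entropy loss. It is written in plain Python for clarity. The 80-feature input (one value per taxel), the 10 hidden units, and the toy data are assumptions, and neither the RF nor the scaled conjugate gradient training used in the study is reproduced here.

```python
import math
from collections import Counter


def knn_predict(train_x, train_y, query, k=3):
    """Label of the majority among the k training samples nearest to query."""
    nearest = sorted(
        (math.dist(x, query), label) for x, label in zip(train_x, train_y)
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def softmax(z):
    m = max(z)                      # subtract the max to avoid overflow
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [v / total for v in exps]


def forward(x, w1, b1, w2, b2):
    """Two-layer network: sigmoid hidden layer, softmax output layer."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    logits = [sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(w2, b2)]
    return softmax(logits)


def cross_entropy(probs, target):
    """Loss minimized during training for one sample with true class target."""
    return -math.log(probs[target])


# Toy usage with made-up 2-D features (not sensor data).
train_x = [(0, 0), (0, 1), (5, 5), (6, 5)]
train_y = [0, 0, 1, 1]
print(knn_predict(train_x, train_y, (0.2, 0.3)))    # 0

# With all-zero weights every class is equally likely, so the loss is ln(10).
n_in, n_hidden, n_classes = 80, 10, 10
w1 = [[0.0] * n_in for _ in range(n_hidden)]
b1 = [0.0] * n_hidden
w2 = [[0.0] * n_hidden for _ in range(n_classes)]
b2 = [0.0] * n_classes
probs = forward([0.5] * n_in, w1, b1, w2, b2)
print(round(cross_entropy(probs, 3), 4))           # 2.3026
```

KNN stores the training set and defers all work to prediction time, whereas the network compresses the training data into its weights; this difference underlies part of the accuracy comparison discussed in Section 4.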

To train the KNN and RF classifiers, the collected datasets were divided into two sets: a training dataset containing 80% of the data and a testing dataset containing the remaining 20%. To train and test the ANN, however, the collected data were divided into three subsets: 70% for training, 15% for validation, and 15% for testing. The training subset was presented to the network during training, and the network was adjusted according to its error. The validation subset was used to measure network generalization and to halt training automatically when generalization stopped improving, as indicated by an increase in the cross-entropy error on the validation samples. The testing subset did not affect training and therefore provided an independent measure of network performance during and after training.
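The data partitioning and the validation-based stopping rule can be sketched as follows. The shuffling seed and the `patience` of six epochs are hypothetical parameters; the text specifies only that training stopped once the validation cross-entropy stopped improving.

```python
import random


def split_indices(n, fractions, seed=0):
    """Shuffle sample indices and cut them into disjoint subsets."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    parts, start = [], 0
    for i, fraction in enumerate(fractions):
        # The last subset takes the remainder so no sample is dropped by rounding.
        end = n if i == len(fractions) - 1 else start + round(fraction * n)
        parts.append(idx[start:end])
        start = end
    return parts


def early_stop_epoch(val_losses, patience=6):
    """Epoch whose weights are kept: training halts once the validation
    cross-entropy has not improved for `patience` consecutive epochs."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break
    return best_epoch


train, test = split_indices(200, (0.80, 0.20))                  # KNN and RF
ann_train, ann_val, ann_test = split_indices(200, (0.70, 0.15, 0.15))
print(len(train), len(test))                         # 160 40
print(len(ann_train), len(ann_val), len(ann_test))   # 140 30 30
```

Re-running `split_indices` with a different seed gives the kind of randomized re-selection of training and testing data used for the repeated runs described in the next paragraph.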

To verify the accuracy of each algorithm, it was run ten times for each song variation with a randomized selection of the training and testing data, and the mean and standard deviation of the classification accuracy were calculated. A two-factor analysis of variance (ANOVA) was performed with classification accuracy as the dependent variable. The first independent variable was the classification algorithm (RF, KNN, ANN), and the second was whether the hand exoskeleton was worn by a person or used independently. A significance level of p < 0.01 was used.
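A minimal sketch of these statistics follows: the mean and sample standard deviation over repeated runs, and the F statistics of a balanced two-factor ANOVA with replication (here, algorithm × use condition). Whether the study used the sample or population standard deviation is not stated, so the sample form is an assumption, and the toy numbers are not the study's accuracies. Converting each F value to a p-value requires the survival function of the F distribution with the returned degrees of freedom, for example `scipy.stats.f.sf` if SciPy is available.

```python
from statistics import mean, stdev


def summarize(accuracies):
    """Mean and sample standard deviation over repeated runs."""
    return mean(accuracies), stdev(accuracies)


def two_way_anova(cells):
    """F statistics for a balanced two-factor ANOVA with replication.

    cells[(a, b)] lists the accuracies for level a of factor A (algorithm)
    and level b of factor B (worn vs. independent); every cell must hold
    the same number of runs.
    """
    levels_a = sorted({a for a, _ in cells})
    levels_b = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))           # runs per cell
    grand = mean(v for vals in cells.values() for v in vals)
    mean_a = {a: mean(v for b in levels_b for v in cells[(a, b)]) for a in levels_a}
    mean_b = {b: mean(v for a in levels_a for v in cells[(a, b)]) for b in levels_b}
    mean_ab = {key: mean(vals) for key, vals in cells.items()}

    # Sums of squares for the main effects, the interaction, and the error term.
    ss_a = len(levels_b) * n * sum((mean_a[a] - grand) ** 2 for a in levels_a)
    ss_b = len(levels_a) * n * sum((mean_b[b] - grand) ** 2 for b in levels_b)
    ss_ab = n * sum(
        (mean_ab[(a, b)] - mean_a[a] - mean_b[b] + grand) ** 2
        for a in levels_a for b in levels_b
    )
    ss_err = sum((v - mean_ab[key]) ** 2 for key, vals in cells.items() for v in vals)

    df_a, df_b = len(levels_a) - 1, len(levels_b) - 1
    df_ab = df_a * df_b
    df_err = len(levels_a) * len(levels_b) * (n - 1)
    ms_err = ss_err / df_err
    return {
        "F_A": ss_a / df_a / ms_err,
        "F_B": ss_b / df_b / ms_err,
        "F_AB": ss_ab / df_ab / ms_err,
        "df": (df_a, df_b, df_ab, df_err),
    }


# Toy balanced data (not the study's accuracies): 2 x 2 design, 2 runs per cell.
toy = {("ann", "free"): [1, 3], ("ann", "worn"): [3, 5],
       ("knn", "free"): [5, 7], ("knn", "worn"): [7, 9]}
result = two_way_anova(toy)
print(result["F_A"], result["F_B"], result["F_AB"], result["df"])
```

For the study's design (three algorithms, two conditions, ten runs per cell), the degrees of freedom would be (2, 1, 2, 54).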

3 Results

3.1 Performance of soft actuators

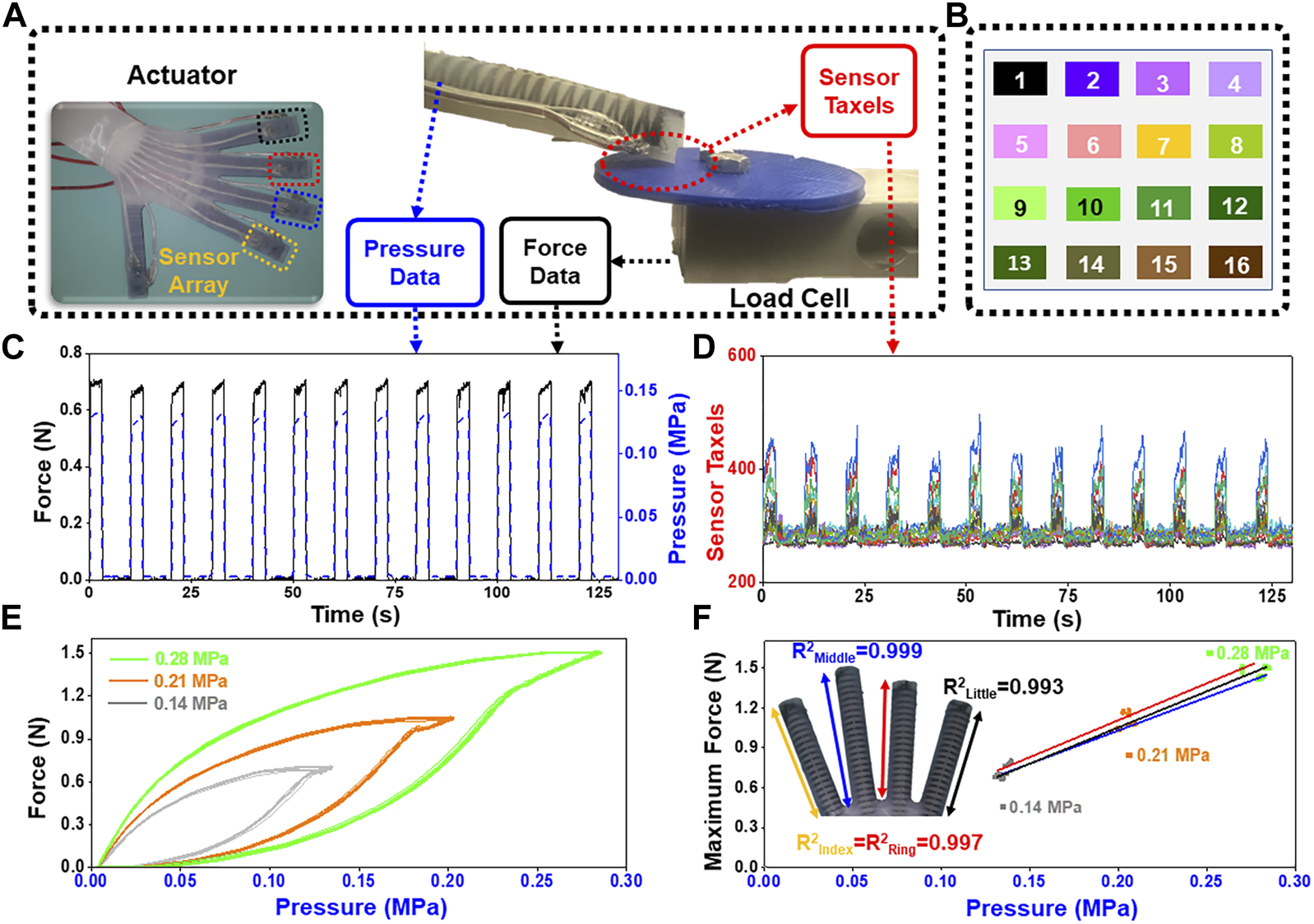

The response of each soft actuator was highly repeatable at each of the three applied pressures (Figure 4). With an internal pressure of 0.14 MPa, the mean maximum fingertip force was 0.72 ± 0.04 N; at 0.21 MPa it was 1.10 ± 0.05 N, and at 0.28 MPa it was 1.47 ± 0.04 N (Figures 4A, C, E). Illustrative data from the sixteen taxels in one fingertip (Figure 4D) show the response of the tactile sensor when the finger was internally pressurized to repeatedly apply force to the load cell. The hysteresis of the actuators follows a characteristic trend at each internal pressure (Figure 4E). The maximal generated fingertip forces showed a near-linear correlation with increasing pressure over the tested range (Figure 4F). A linear model fit to these data had an R² value of 0.993 for the little finger, 0.997 for the ring finger, and 0.999 for the middle finger.
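As a worked check of the near-linear force-pressure relationship, the sketch below fits an ordinary least-squares line to the three mean maximum forces reported above. This pooled three-point fit is only illustrative; it is not the fit behind the R² values above, which the text reports separately for each finger.

```python
def linear_fit(x, y):
    """Ordinary least squares y = slope * x + intercept, plus R^2."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


pressure_mpa = [0.14, 0.21, 0.28]
force_n = [0.72, 1.10, 1.47]    # mean maximum fingertip forces reported above
slope, intercept, r2 = linear_fit(pressure_mpa, force_n)
print(round(slope, 3), round(intercept, 3), round(r2, 4))   # 5.357 -0.028 0.9999
```

On these three means the slope is roughly 5.4 N/MPa, i.e., about 0.54 N of additional fingertip force per 0.1 MPa of internal pressure within the tested range.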

FIGURE 4

(A) The hand exoskeleton was equipped with an internal pressure sensor and applied forces to the load cell; (B) Color map shows the spatial location of the 16 taxels on the sensor of each finger; (C) Force measured by the load cell for the little finger at an internal pressure of 0.14 MPa; (D) Corresponding taxel signals at the pressure of 0.14 MPa; (E) Force-pressure relationship using 16 actuation cycles of the little finger for three different internal pressures; (F) the maximal generated fingertip forces correlated almost linearly to increasing pressure over the tested range.

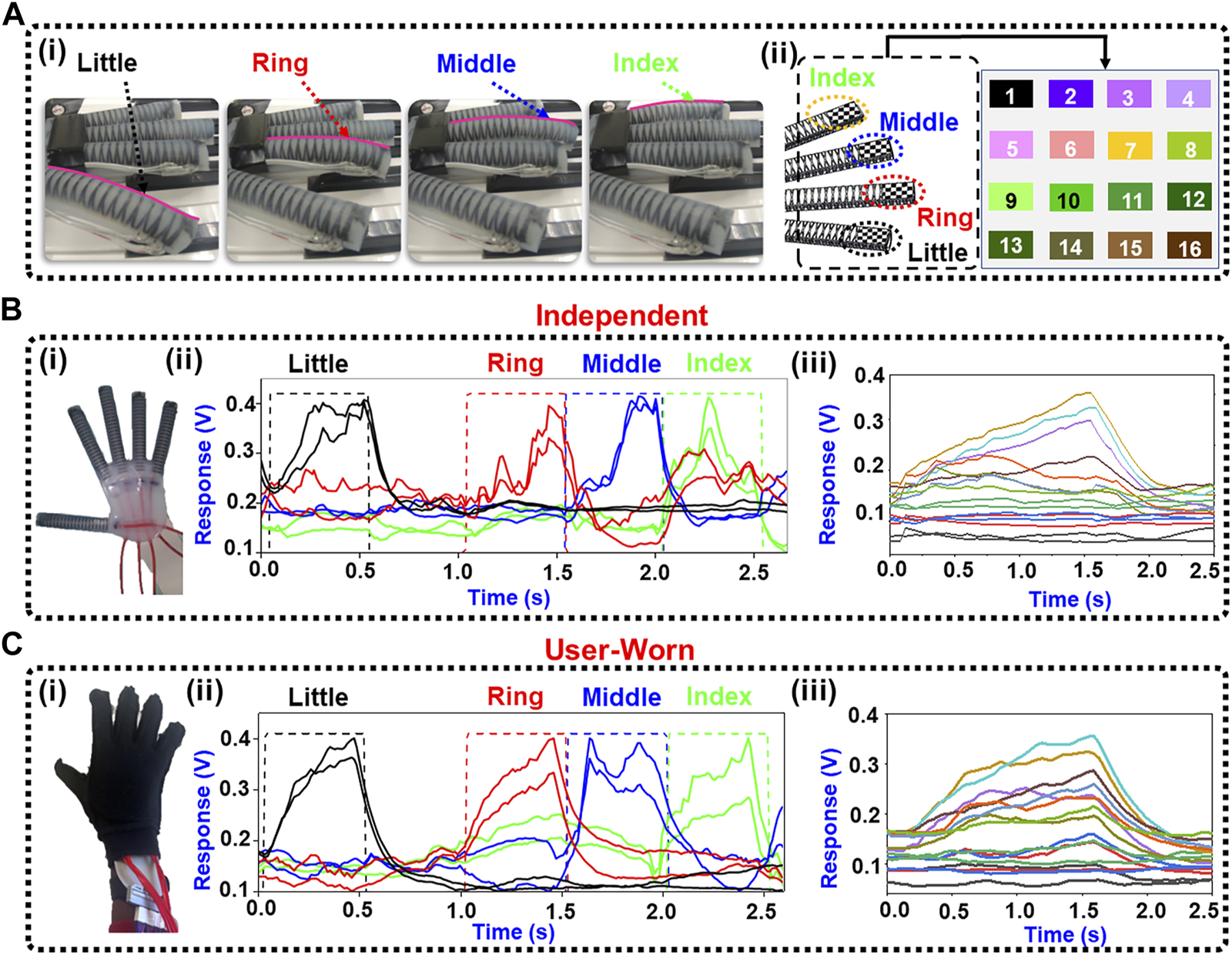

3.2 Feeling the beat: classification accuracy for piano playing

The soft robotic hand exoskeleton played the 10 song variations independently and while being worn by a user. Figure 5A(i) displays the hand operating independently to press the keyboard. The independent data are shown first in Figure 5B, followed by the user-worn data for comparison (Figure 5C). Figure 5B(ii) and Figure 5C(ii) show two illustrative taxels from each finger for clarity. The normalized response of all 16 taxels from the little finger in a single keystroke is shown in Figure 5B(iii) and Figure 5C(iii) for the independent and user-worn situations, respectively.

FIGURE 5

Exoskeleton playing the piano independently and while being worn. (A) (i) Actuation of each finger while playing a song. (ii) Color map of the tactile sensor showing the locations of taxels on each finger; (B) (i) The exoskeleton as used independently, (ii) Two illustrative taxels for each finger are shown while playing the song. (iii) The response of all the taxels for the little finger during a single keystroke; (C) (i) The exoskeleton was inserted into a glove and worn by the human subject. (ii) Two illustrative taxels for each finger. (iii) The response of all the taxels for the little finger from a single keystroke.

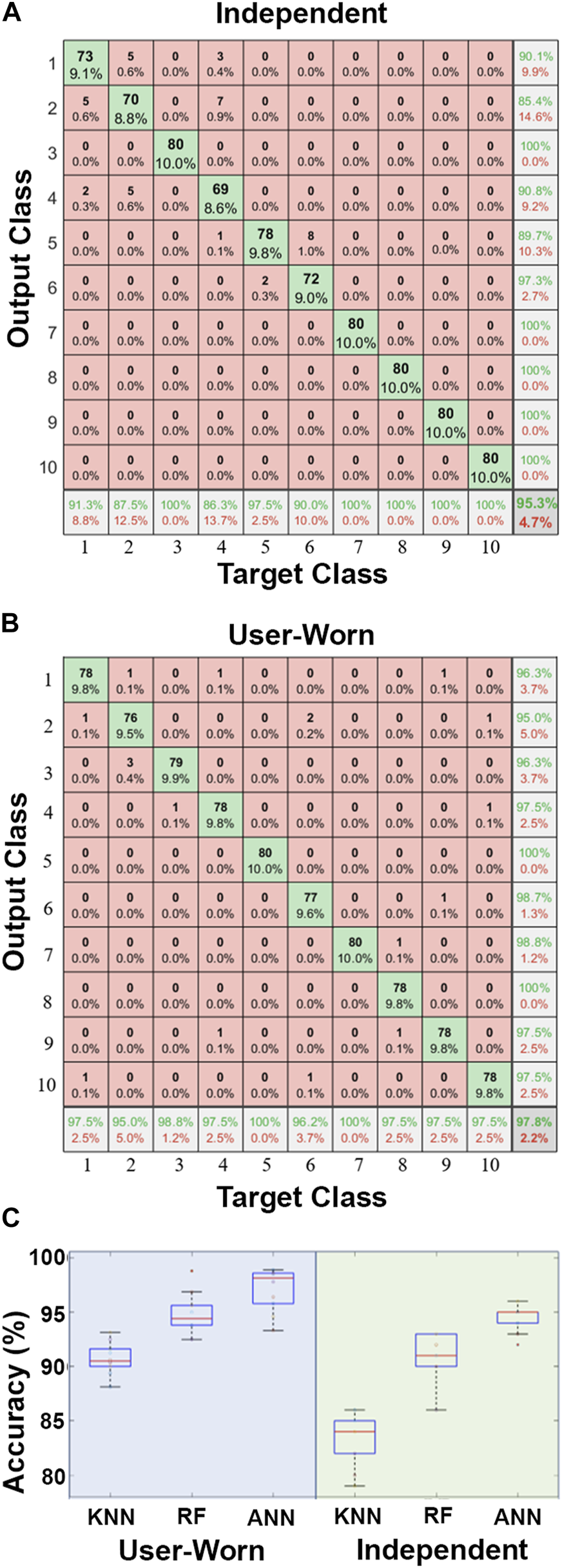

The ANN achieved the highest classification accuracy with 94.60% ± 1.26% for independent use and 97.13% ± 2.00% when worn by a person (Figures 6A, B). The RF had a classification accuracy of 91.00% ± 2.11% for independent use and 94.77% ± 1.96% when worn, while the KNN had the lowest classification accuracy with 83.30% ± 2.45% independently and 90.70% ± 1.48% when worn (Figure 6C). Results from the two-factor ANOVA indicated that the classification algorithms were significantly different from each other (p < 0.01). There was a statistically significant difference in accuracy between independent and worn usage (p < 0.01), and there was also an interaction effect between the two independent variables (p < 0.01).

FIGURE 6

(A) Illustrative confusion matrices for the ANN showed the accuracy for classifying the 10 different song alterations during independent use and (B) while user-worn; (C) Comparison of 3 classification algorithms during independent use and with a human subject wearing the soft robotic exoskeleton. The ANN had significantly higher accuracy than the KNN and RF algorithms.

4 Discussion

We designed a novel soft exoskeleton using 3D printed PVA stents and silicone casting to integrate five actuators into a single wearable device that conforms to the user’s hand. The fabrication process is new, and the form factor could be customized to the unique anatomy of individual subjects using 3D scanning technology or CT scans. We also developed a flexible tactile sensor array, containing 16 taxels, that was embedded into each fingertip of the exoskeleton. To our knowledge, these features have not previously been combined into a single hand exoskeleton. We used artificial intelligence to ‘feel’ the difference between correct and incorrect versions of a song played on the piano as one illustrative demonstration of the potential for the smart exoskeleton to be used as a rehabilitation tool to improve hand dexterity.

Our approach to designing the hand exoskeleton involved using 3D printing techniques to create a complete soft robot that could be tailored to the patient’s needs. Zhao et al. (2016) incorporated a 3D-printed rigid palm and wrist into a fiber-reinforced soft prosthetic hand. Our fully soft palm and wrist design approach could further improve the effectiveness of their application. Furthermore, the use of 3D-printed PVA stents in our new soft robotic hand provided an added advantage. Compared to the fabric-based soft robotic glove developed by Cappello et al. (2018) for individuals with upper limb paralysis following spinal cord injury, our approach involved fewer fabricated layers, making the process less complicated. The PVA stents can be easily dissolved in water to aid in the removal of Teflon from the inside surface. Additionally, we embedded the flexible sensor array during the fabrication process to provide tactile sensing, and the array could be readily adjusted to the user’s dimensions. Fras and Althoefer (2018) proposed a soft pneumatic hand capable of passively adapting to grasped objects due to its mechanical compliance. The inclusion of sensors within such a design would aid in controlling the device.

Other soft robotic actuators have been used to play the piano; however, to our knowledge, ours is the only one that has demonstrated the capability to ‘feel’ the difference between correct and incorrect versions of the same song. Takahashi et al. (2020) developed a soft hand exoskeleton that allowed pianists to move their fingers more easily and quickly while imposing as few limitations as possible on their voluntary movements. The actuator also reduced variability in the force of the keypresses even when the pianists were not wearing gloves. In contrast, our soft robotic hand uses machine learning trained on tactile sensor array data, which could provide instructive feedback to users and useful data for clinicians. This makes it a promising tool to assist disabled individuals in relearning how to play the piano correctly. This capability is enabled by a novel design at the intersection of flexible tactile sensors, soft actuators, and artificial intelligence that could be applied to many other tasks beyond musical instruments. Hoang et al. (2021) used a random access pneumatic memory device to regulate soft robotic fingers that play the piano. This approach helped to reduce the amount of hardware required to control multiple independent actuators in pneumatic soft robots. Wang et al. (2022) developed a three-fingered soft-rigid hybrid hand system with variable stiffness in each finger. Their design enabled diverse compliant behaviors for pressing piano keys. In comparison, our smart hand exoskeleton could expand the range of such applications by incorporating sensing technology and artificial intelligence to characterize the interaction between the environment and the robot.

The three classification algorithms we employed in this paper had significantly different accuracies. The classification performance of an algorithm can vary with the task, dataset, and available resources. In this study, the ANN achieved higher classification accuracy than the KNN and RF, for several likely reasons. First, ANNs possess many internal parameters (weights and biases) across interconnected neurons and layers, giving them the flexibility to fit highly complex datasets with superior classification accuracy, whereas models like KNN and RF may achieve lower accuracy in such cases (Breiman, 2001; Zhang et al., 2017b). Additionally, ANNs can automatically learn intricate feature representations from raw data (Bergen et al., 2019). By learning hierarchical representations through multiple layers, ANNs can capture complex patterns in the data, whereas KNN relies on a fixed distance metric and RF on threshold splits of individual input features, both of which may struggle to capture such complexities. ANNs excel at learning nonlinear relationships between input features and output predictions, enabling them to model intricate decision boundaries. However, the performance of these algorithms depends on the specific task, dataset characteristics, and hyperparameter tuning. In certain cases, KNN or RF may be more suitable than an ANN or outperform it, particularly for small datasets or where interpretability is prioritized. The trade-offs and characteristics of each algorithm should therefore be weighed carefully when selecting the most appropriate one for a given problem.
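To make the contrast with distance-based classification concrete, the following is a minimal KNN sketch over 80-element feature vectors (five fingertip sensors × 16 taxels). It is not the authors’ implementation: the paper does not specify the value of k, the distance metric, or any feature preprocessing here, so the Euclidean metric, `k=3`, the function names, and the two-class synthetic data are all assumptions for illustration.

```python
import math
from collections import Counter
from typing import List, Sequence

NUM_TAXELS = 5 * 16  # five fingertip sensors x 16 taxels each = 80 features


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance, the fixed similarity measure this KNN relies on."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_predict(train_x: List[Sequence[float]], train_y: List[int],
                query: Sequence[float], k: int = 3) -> int:
    """Predict the song-variant label of `query` by majority vote of its k nearest neighbours."""
    if len(query) != NUM_TAXELS:
        raise ValueError(f"expected {NUM_TAXELS} taxel features, got {len(query)}")
    # Rank training samples by distance to the query and keep the k closest.
    nearest = sorted(range(len(train_x)), key=lambda i: euclidean(train_x[i], query))[:k]
    votes = Counter(train_y[i] for i in nearest)
    # Ties in vote count go to the label that appears first among the nearest samples.
    return votes.most_common(1)[0][0]


# Synthetic example: label 0 = correct song, label 1 = a rhythmic-error variant.
train_x = [[0.10] * NUM_TAXELS, [0.15] * NUM_TAXELS,
           [0.90] * NUM_TAXELS, [0.85] * NUM_TAXELS]
train_y = [0, 0, 1, 1]
print(knn_predict(train_x, train_y, [0.2] * NUM_TAXELS))  # 0
```

Because every prediction depends only on this fixed metric over raw taxel values, a KNN cannot reweight or combine taxels the way the learned hidden layers of an ANN can, which is one plausible reason for the accuracy gap.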

All three classification algorithms had higher accuracy when the exoskeleton was worn than when it was used independently (Figure 6C). This could be caused by better pressure distribution across the surface of the tactile sensor when the exoskeleton was worn. When it was used independently, the rigid piano key made direct contact with the taxels near the sensor tip, which causes pressure concentrations in localized areas. In contrast, when the sensor was in contact with a human hand, the higher compliance was more likely to distribute the pressure evenly across the surface of the tactile sensor and consistently activate more taxels. This likely created a greater difference between the activation and rest states, which the machine learning algorithms recognized more readily when the exoskeleton was worn by a person.
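The hypothesis above can be illustrated with a small sketch on a single 4 × 4 fingertip sensor. The frames are synthetic, and the activation threshold (0.2), the helper names, and the Jaccard overlap used as a consistency measure are hypothetical choices, not the authors’ analysis. The sketch contrasts a rigid key loading two taxels near the tip, where a slight shift in contact position changes which taxels fire, with compliant contact that spreads pressure across the whole array.

```python
from typing import List, Set, Tuple

Grid = List[List[float]]  # one 4 x 4 fingertip sensor, readings normalized to [0, 1]


def active_set(frame: Grid, threshold: float = 0.2) -> Set[Tuple[int, int]]:
    """(row, col) positions of taxels whose reading exceeds the activation threshold."""
    return {(r, c) for r, row in enumerate(frame) for c, v in enumerate(row) if v > threshold}


def consistency(a: Grid, b: Grid, threshold: float = 0.2) -> float:
    """Jaccard overlap of active taxels in two repeated presses (1.0 = identical pattern)."""
    sa, sb = active_set(a, threshold), active_set(b, threshold)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 1.0


def concentrated(row: int) -> Grid:
    """Rigid key edge loading two taxels in a single row (synthetic)."""
    return [[0.8 if (r == row and c in (1, 2)) else 0.0 for c in range(4)] for r in range(4)]


def distributed(level: float) -> Grid:
    """Compliant contact spreading pressure evenly over all 16 taxels (synthetic)."""
    return [[level] * 4 for _ in range(4)]


print(len(active_set(concentrated(0))), consistency(concentrated(0), concentrated(1)))  # 2 0.0
print(len(active_set(distributed(0.4))), consistency(distributed(0.4), distributed(0.35)))  # 16 1.0
```

With these synthetic frames, the concentrated press activates 2 of 16 taxels and shares no active taxels with a press shifted by one row, whereas the distributed press activates all 16 taxels and produces the same pattern across presses of slightly different intensity.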

The successful detection of song errors can provide measurable outcomes for patients in their rehabilitation programs. Although this study’s application was playing a song, the approach could be applied to myriad tasks of daily life, so the device could facilitate intricate rehabilitation programs customized for each patient. The current machine learning algorithm can successfully determine the percentage error of a given song and identify key presses that are out of time. Clinicians could use these data to develop personalized action plans that pinpoint patient weaknesses: sections of a song that are consistently played incorrectly could indicate which motor functions require improvement. As patients progress, the rehabilitation team could prescribe more challenging songs in a game-like progression, providing a customizable path to improvement.
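The classifiers in this paper label whole song variants; the rule-based sketch below instead shows one simple way that per-note timing errors and a percentage error could be computed for a clinician-facing report. It assumes a hypothetical upstream step has already extracted key-press onset times (in seconds) and paired them one-to-one with the reference score; the 50 ms tolerance and the function name are illustrative assumptions, not part of the authors’ method.

```python
from typing import List, Sequence, Tuple


def timing_report(reference_s: Sequence[float], played_s: Sequence[float],
                  tolerance_s: float = 0.05) -> Tuple[List[int], float]:
    """Return indices of out-of-time key presses and the percentage of erroneous notes.

    Assumes played onsets are already paired one-to-one, in order, with reference
    onsets (no missed or extra notes), both in seconds.
    """
    if len(reference_s) != len(played_s):
        raise ValueError("this sketch assumes one played onset per reference note")
    if not reference_s:
        return [], 0.0
    # Measure each onset relative to the first note so a late start is not an error.
    ref0, play0 = reference_s[0], played_s[0]
    off_beat = [i for i, (r, p) in enumerate(zip(reference_s, played_s))
                if abs((p - play0) - (r - ref0)) > tolerance_s]
    return off_beat, 100.0 * len(off_beat) / len(reference_s)


# Quarter notes at 120 BPM (0.5 s apart); the third press lands about 150 ms late.
reference = [0.0, 0.5, 1.0, 1.5]
played = [0.2, 0.71, 1.35, 1.7]
print(timing_report(reference, played))  # ([2], 25.0)
```

Reporting the flagged indices alongside the percentage is what would let a clinician see whether the same section of a song is repeatedly played out of time.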

Furthermore, the rehabilitation approach using smart exoskeletons demonstrated in this study could be extended to a wide range of injuries, particularly if used in conjunction with other devices designed for the elbow and shoulder (O’Neill et al., 2017; Nassour et al., 2021). Additionally, a larger tactile sensor could readily be used to assess progress toward a normal force pattern with shoulder and elbow exoskeletons. Soft actuators offer the advantages of conformability, ergonomics, and design versatility through 3D printing and casting methods, and corresponding flexible sensing technologies are essential for putting soft exoskeletons to use. Developing and implementing new sensor arrays within these exoskeletons could significantly expand their range of applications and improve their effectiveness.

The effect of different users’ biomechanics on the soft exoskeleton’s response was not investigated in this paper; this will be pursued in future work. For future work, we have also considered several ways to convey training feedback to the user, including visual feedback on a smartphone (Yin et al., 2021) and haptic feedback with a wearable device such as a smartwatch (Heng et al., 2022). We have also considered adding vibrotactile feedback to future versions of the smart exoskeleton, which could vibrate to alert users of errors (Pan et al., 2018).

5 Conclusion

A new soft exoskeleton was designed with integrated tactile sensor arrays, which were used for the novel application of ‘feeling’ the differences between correct and incorrect versions of a song played on the piano. The RF, KNN, and ANN algorithms were trained with data from the 80 taxels in the five fingertip tactile sensors, each of which had 16 taxels. The ANN algorithm achieved the highest classification accuracy: 97.13% ± 2.00% when tested with a human subject and 94.60% ± 1.26% when the exoskeleton was used independently. Furthermore, we introduced a new hand exoskeleton fabrication technique that used 3D printed PVA stents and hydrogel casting to incorporate five actuators into a complete wearable device. The smart soft exoskeleton demonstrated high classification accuracy and could be used in the future to guide disabled people through fully customized rehabilitation programs.

Statements

Data availability statement

The original contributions presented in the study are included in the article/Supplementary Material; further inquiries can be directed to the corresponding author.

Ethics statement

The studies involving human participants were reviewed and approved by the Florida Atlantic University Institutional Review Board. The patients/participants provided their written informed consent to participate in this study.

Author contributions

Conceptualization, ML and EE; methodology, ML, RP, MA, JJ, DD, and EE; software, ML, RP, MA, JJ, DD, and EE; validation, ML, RP, and MA; formal analysis, ML, RP, and MA; investigation, ML, RP, and EE; resources, EE; data curation, ML, RP, and MA; writing—original draft preparation, ML, RP, DD, MA, HC, and EE; writing—review and editing, ML, RP, MA, HC, and EE; visualization, ML, RP, and EE; supervision, EE; project administration, EE; funding acquisition, EE. All authors contributed to the article and approved the submitted version.

Funding

Research reported in this publication was supported by the National Institute of Biomedical Imaging and Bioengineering of the National Institutes of Health under Award Number R01EB025819. This research was also supported by the National Institute on Aging under 3R01EB025819-04S1 and National Science Foundation awards #2205205 and #1950400. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health or the National Science Foundation. This research was supported in part by a seed grant from the College of Engineering at FAU and I-SENSE.

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Supplementary material

The Supplementary Material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/frobt.2023.1212768/full#supplementary-material

References

Abd M. A. Paul R. Aravelli A. Bai O. Lagos L. Lin M. et al (2021). Hierarchical tactile sensation integration from prosthetic fingertips enables multi-texture surface recognition. Sensors 21, 4324. 10.3390/s21134324

Al-Fahaam H. Davis S. Nefti-Meziani S. (2018). The design and mathematical modelling of novel extensor bending pneumatic artificial muscles (EBPAMs) for soft exoskeletons. Robotics Aut. Syst. 99, 63–74. 10.1016/j.robot.2017.10.010

Al-Fahaam H. Davis S. Nefti-Meziani S. (2016). “Wrist rehabilitation exoskeleton robot based on pneumatic soft actuators,” in 2016 international conference for students on applied engineering (ICSAE) (IEEE), 491–496.

Ambrosini E. Ferrante S. Rossini M. Molteni F. Gföhler M. Reichenfelser W. et al (2014). Functional and usability assessment of a robotic exoskeleton arm to support activities of daily life. Robotica 32, 1213–1224. 10.1017/s0263574714001891

Andrikopoulos G. Nikolakopoulos G. Manesis S. (2015). “Motion control of a novel robotic wrist exoskeleton via pneumatic muscle actuators,” in 2015 IEEE 20th conference on emerging technologies and factory automation (ETFA) (IEEE), 1–8.

Arata J. Ohmoto K. Gassert R. Lambercy O. Fujimoto H. Wada I. (2013). “A new hand exoskeleton device for rehabilitation using a three-layered sliding spring mechanism,” in 2013 IEEE international conference on robotics and automation (IEEE), 3902–3907.

Aubin P. Petersen K. Sallum H. Walsh C. Correia A. Stirling L. (2014). A pediatric robotic thumb exoskeleton for at-home rehabilitation: The isolated orthosis for thumb actuation (IOTA). Int. J. Intelligent Comput. Cybern. 7, 233–252. 10.1108/ijicc-10-2013-0043

Basu J. K. Bhattacharyya D. Kim T.-H. (2010). Use of artificial neural network in pattern recognition. Int. J. Softw. Eng. Its Appl. 4.

Bergen K. J. Johnson P. A. De Hoop M. V. Beroza G. C. (2019). Machine learning for data-driven discovery in solid Earth geoscience. Science 363, eaau0323. 10.1126/science.aau0323

Breiman L. (2001). Random forests. Mach. Learn. 45, 5–32. 10.1023/a:1010933404324

Cappello L. Meyer J. T. Galloway K. C. Peisner J. D. Granberry R. Wagner D. A. et al (2018). Assisting hand function after spinal cord injury with a fabric-based soft robotic glove. J. NeuroEngineering Rehabilitation 15, 59–10. 10.1186/s12984-018-0391-x

Choudhry N. A. Shekhar R. Rasheed A. Arnold L. Wang L. (2022). Effect of conductive thread and stitching parameters on the sensing performance of stitch-based pressure sensors for smart textile applications. IEEE Sensors J. 22, 6353–6363. 10.1109/jsen.2022.3149988

Deimel R. Brock O. (2016). A novel type of compliant and underactuated robotic hand for dexterous grasping. Int. J. Rob. Res. 35, 161–185. 10.1177/0278364915592961

Dzedzickis A. Sutinys E. Bucinskas V. Samukaite-Bubniene U. Jakstys B. Ramanavicius A. et al (2020). Polyethylene-carbon composite (Velostat®) based tactile sensor. Polymers 12, 2905. 10.3390/polym12122905

Fras J. Althoefer K. (2018). “Soft biomimetic prosthetic hand: Design, manufacturing and preliminary examination,” in 2018 IEEE/RSJ international conference on intelligent robots and systems (IROS) (IEEE), 1–6.

Garrett J. W. (1971). The adult human hand: Some anthropometric and biomechanical considerations. Hum. Factors 13, 117–131. 10.1177/001872087101300204

Gopura R. a. C. Kiguchi K. (2008). “EMG-based control of an exoskeleton robot for human forearm and wrist motion assist,” in 2008 IEEE international conference on robotics and automation (IEEE), 731–736.

Gopura R. Bandara D. Kiguchi K. Mann G. K. (2016). Developments in hardware systems of active upper-limb exoskeleton robots: A review. Robotics Aut. Syst. 75, 203–220. 10.1016/j.robot.2015.10.001

Gordon A. M. Bleyenheuft Y. Steenbergen B. (2013). Pathophysiology of impaired hand function in children with unilateral cerebral palsy. Dev. Med. Child Neurology 55, 32–37. 10.1111/dmcn.12304

Haghshenas-Jaryani M. Carrigan W. Nothnagle C. Wijesundara M. B. (2016). “Sensorized soft robotic glove for continuous passive motion therapy,” in 2016 6th IEEE international conference on biomedical robotics and biomechatronics (BioRob) (IEEE), 815–820.

Heng W. Solomon S. Gao W. (2022). Flexible electronics and devices as human–machine interfaces for medical robotics. Adv. Mat. 34, 2107902. 10.1002/adma.202107902

Herder J. L. Antonides T. Cloosterman M. Mastenbroek P. L. (2006). Principle and design of a mobile arm support for people with muscular weakness. J. Rehabilitation Res. Dev. 43, 591–604. 10.1682/jrrd.2006.05.0044

Hoang S. Karydis K. Brisk P. Grover W. H. (2021). A pneumatic random-access memory for controlling soft robots. Plos One 16, e0254524. 10.1371/journal.pone.0254524

Hussain I. Salvietti G. Spagnoletti G. Prattichizzo D. (2016). The Soft-SixthFinger: A wearable EMG controlled robotic extra-finger for grasp compensation in chronic stroke patients. IEEE Robotics Automation Lett. 1, 1000–1006. 10.1109/lra.2016.2530793

In H. Kang B. B. Sin M. Cho K.-J. (2015). Exo-glove: A wearable robot for the hand with a soft tendon routing system. IEEE Robot. Autom. Mag. 22, 97–105.

Iwamuro B. T. Cruz E. G. Connelly L. L. Fischer H. C. Kamper D. G. (2008). Effect of a gravity-compensating orthosis on reaching after stroke: Evaluation of the therapy assistant WREX. Archives Phys. Med. Rehabilitation 89, 2121–2128. 10.1016/j.apmr.2008.04.022

Jarrassé N. Morel G. (2011). Connecting a human limb to an exoskeleton. IEEE Trans. Robotics 28, 697–709. 10.1109/tro.2011.2178151

Jarrassé N. Proietti T. Crocher V. Robertson J. Sahbani A. Morel G. et al (2014). Robotic exoskeletons: A perspective for the rehabilitation of arm coordination in stroke patients. Front. Hum. Neurosci. 8, 947. 10.3389/fnhum.2014.00947

Jarrett C. Mcdaid A. (2017). Robust control of a cable-driven soft exoskeleton joint for intrinsic human-robot interaction. IEEE Trans. Neural Syst. Rehabilitation Eng. 25, 976–986. 10.1109/tnsre.2017.2676765

Kang B. B. Lee H. Jeong U. Chung J. Cho K.-J. (2016). “Development of a polymer-based tendon-driven wearable robotic hand,” in 2016 IEEE international conference on robotics and automation (ICRA) (IEEE), 3750–3755.

Kerr L. Jewell V. D. Jensen L. (2020). Stretching and splinting interventions for poststroke spasticity, hand function, and functional tasks: A systematic review. Am. J. Occup. Ther. 74, 7405205050p1–7405205050p15. 10.5014/ajot.2020.029454

Kiguchi K. Hayashi Y. (2012). An EMG-based control for an upper-limb power-assist exoskeleton robot. IEEE Trans. Syst. Man, Cybern. Part B Cybern. 42, 1064–1071. 10.1109/tsmcb.2012.2185843

Lai C.-H. Sung W.-H. Chiang S.-L. Lu L.-H. Lin C.-H. Tung Y.-C. et al (2019). Bimanual coordination deficits in hands following stroke and their relationship with motor and functional performance. J. Neuroengineering Rehabilitation 16, 101–114. 10.1186/s12984-019-0570-4

Lambelet C. Lyu M. Woolley D. Gassert R. Wenderoth N. (2017). “The eWrist—a wearable wrist exoskeleton with sEMG-based force control for stroke rehabilitation,” in 2017 international conference on rehabilitation robotics (ICORR) (IEEE), 726–733.

Leonardis D. Barsotti M. Loconsole C. Solazzi M. Troncossi M. Mazzotti C. et al (2015). An EMG-controlled robotic hand exoskeleton for bilateral rehabilitation. IEEE Trans. Haptics 8, 140–151. 10.1109/toh.2015.2417570

Li H. Yao J. Zhou P. Chen X. Xu Y. Zhao Y. (2020). High-force soft pneumatic actuators based on novel casting method for robotic applications. Sensors Actuators A Phys. 306, 111957. 10.1016/j.sna.2020.111957

Li N. Yang T. Yu P. Chang J. Zhao L. Zhao X. et al (2018). Bio-inspired upper limb soft exoskeleton to reduce stroke-induced complications. Bioinspiration Biomimetics 13, 066001. 10.1088/1748-3190/aad8d4

Li Z. Wang B. Sun F. Yang C. Xie Q. Zhang W. (2013). sEMG-based joint force control for an upper-limb power-assist exoskeleton robot. IEEE J. Biomed. Health Inf. 18, 1043–1050. 10.1109/JBHI.2013.2286455

Lin M. Vatani M. Choi J.-W. Dilibal S. Engeberg E. D. (2020). Compliant underwater manipulator with integrated tactile sensor for nonlinear force feedback control of an SMA actuation system. Sensors Actuators A Phys. 315, 112221. 10.1016/j.sna.2020.112221

Lum P. S. Godfrey S. B. Brokaw E. B. Holley R. J. Nichols D. (2012). Robotic approaches for rehabilitation of hand function after stroke. Am. J. Phys. Med. Rehabilitation 91, S242–S254. 10.1097/phm.0b013e31826bcedb

Mohamaddan S. Komeda T. (2010). “Wire-driven mechanism for finger rehabilitation device,” in 2010 IEEE international conference on mechatronics and automation (IEEE), 1015–1018.

Nassour J. Zhao G. Grimmer M. (2021). Soft pneumatic elbow exoskeleton reduces the muscle activity, metabolic cost and fatigue during holding and carrying of loads. Sci. Rep. 11, 1–14. 10.1038/s41598-021-91702-5

Nycz C. J. Bützer T. Lambercy O. Arata J. Fischer G. S. Gassert R. (2016). Design and characterization of a lightweight and fully portable remote actuation system for use with a hand exoskeleton. IEEE Robotics Automation Lett. 1, 976–983. 10.1109/lra.2016.2528296

Nycz C. J. Delph M. A. Fischer G. S. (2015). “Modeling and design of a tendon actuated soft robotic exoskeleton for hemiparetic upper limb rehabilitation,” in 2015 37th annual international conference of the IEEE engineering in medicine and biology society (EMBC) (IEEE), 3889–3892.

O’Neill C. T. Phipps N. S. Cappello L. Paganoni S. Walsh C. J. (2017). “A soft wearable robot for the shoulder: Design, characterization, and preliminary testing,” in 2017 international conference on rehabilitation robotics (ICORR) (IEEE), 1672–1678.

Pan Y.-T. Lamb Z. Macievich J. Strausser K. A. (2018). “A vibrotactile feedback device for balance rehabilitation in the EksoGT™ robotic exoskeleton,” in 2018 7th IEEE international conference on biomedical robotics and biomechatronics (BioRob) (IEEE), 569–576.

Patel P. Lodha N. (2019). Dynamic bimanual force control in chronic stroke: Contribution of non-paretic and paretic hands. Exp. Brain Res. 237, 2123–2133. 10.1007/s00221-019-05580-5

Polotto A. Modulo F. Flumian F. Xiao Z. G. Boscariol P. Menon C. (2012). “Index finger rehabilitation/assistive device,” in 2012 4th IEEE RAS and EMBS international conference on biomedical robotics and biomechatronics (BioRob) (IEEE), 1518–1523.

Polygerinos P. Wang Z. Galloway K. C. Wood R. J. Walsh C. (2015). Soft robotic glove for combined assistance and at-home rehabilitation. Rob. Auton. Syst. 73, 135–143. 10.1016/j.robot.2014.08.014

Pons J. L. (2010). Rehabilitation exoskeletal robotics. IEEE Eng. Med. Biol. Mag. 29, 57–63. 10.1109/memb.2010.936548

Rahman M. H. Rahman M. J. Cristobal O. Saad M. Kenné J.-P. Archambault P. S. (2015). Development of a whole arm wearable robotic exoskeleton for rehabilitation and to assist upper limb movements. Robotica 33, 19–39. 10.1017/s0263574714000034

Rahman T. Sample W. Jayakumar S. King M. M. Wee J. Y. Seliktar R. et al (2006). Passive exoskeletons for assisting limb movement. J. Rehabilitation Res. Dev. 43, 583. 10.1682/jrrd.2005.04.0070

Squeri V. Masia L. Giannoni P. Sandini G. Morasso P. (2013). Wrist rehabilitation in chronic stroke patients by means of adaptive, progressive robot-aided therapy. IEEE Trans. Neural Syst. Rehabilitation Eng. 22, 312–325. 10.1109/tnsre.2013.2250521

Sundaram S. Kellnhofer P. Li Y. Zhu J.-Y. Torralba A. Matusik W. (2019). Learning the signatures of the human grasp using a scalable tactile glove. Nature 569, 698–702. 10.1038/s41586-019-1234-z

Takahashi N. Furuya S. Koike H. (2020). Soft exoskeleton glove with human anatomical architecture: Production of dexterous finger movements and skillful piano performance. IEEE Trans. Haptics 13, 679–690. 10.1109/toh.2020.2993445

Tran P. Jeong S. Herrin K. R. Desai J. P. (2021). Review: Hand exoskeleton systems, clinical rehabilitation practices, and future prospects. IEEE Trans. Med. Robotics Bionics 3, 606–622. 10.1109/tmrb.2021.3100625

Wang H. Howison T. Hughes J. Abdulali A. Iida F. (2022). “Data-driven simulation framework for expressive piano playing by anthropomorphic hand with variable passive properties,” in 2022 IEEE 5th international conference on soft robotics (RoboSoft) (IEEE), 300–305.

Wang J. Chortos A. (2022). Control strategies for soft robot systems. Adv. Intell. Syst. 4, 2100165. 10.1002/aisy.202100165

Yang J. Xie H. Shi J. (2016). A novel motion-coupling design for a jointless tendon-driven finger exoskeleton for rehabilitation. Mech. Mach. Theory 99, 83–102. 10.1016/j.mechmachtheory.2015.12.010

Yeo J. C. Yap H. K. Xi W. Wang Z. Yeow C. H. Lim C. T. (2016). Flexible and stretchable strain sensing actuator for wearable soft robotic applications. Adv. Mater. Technol. 1, 1600018. 10.1002/admt.201600018

Yin J. Hinchet R. Shea H. Majidi C. (2021). Wearable soft technologies for haptic sensing and feedback. Adv. Funct. Mat. 31, 2007428. 10.1002/adfm.202007428

Zhang S. Li X. Zong M. Zhu X. Cheng D. (2017a). Learning k for kNN classification. ACM Trans. Intell. Syst. Technol. 8, 1–19. 10.1145/2990508

Zhang S. Li X. Zong M. Zhu X. Cheng D. (2017b). Learning k for kNN classification. ACM Trans. Intell. Syst. Technol. 8, 1–19. 10.1145/2990508

Zhao H. O’Brien K. Li S. Shepherd R. F. (2016). Optoelectronically innervated soft prosthetic hand via stretchable optical waveguides. Sci. Robotics 1, eaai7529. 10.1126/scirobotics.aai7529

Keywords

soft robot, exoskeleton, sensor array, hand, artificial intelligence, 3D print

Citation

Lin M, Paul R, Abd M, Jones J, Dieujuste D, Chim H and Engeberg ED (2023) Feeling the beat: a smart hand exoskeleton for learning to play musical instruments. Front. Robot. AI 10:1212768. doi: 10.3389/frobt.2023.1212768

Received

26 April 2023

Accepted

05 June 2023

Published

29 June 2023

Volume

10 - 2023

Edited by

Emanuele Lindo Secco, Liverpool Hope University, United Kingdom

Reviewed by

Mahdi Haghshenas-Jaryani, New Mexico State University, United States

Daniele Cafolla, Swansea University, United Kingdom

Copyright

© 2023 Lin, Paul, Abd, Jones, Dieujuste, Chim and Engeberg.

This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Erik D. Engeberg, eengeberg@fau.edu
