Abstract

A review of 35 peer-reviewed articles dated from 2016 to February 2021 was conducted to identify and describe the types of wayfinding devices that people who are blind, visually impaired, or deafblind use while navigating indoors and/or outdoors in dynamic travel contexts. Within this investigation, we discovered some characteristics of participants with visual impairments, routes traveled, and real-world environments that have been included in recent wayfinding research, as well as information regarding the institutions, agencies, and funding sources that enable these investigations. Results showed that 33 of the 35 studies that met inclusionary criteria integrated the use of smart device technology. Many of these devices were supplemented by Bluetooth Low Energy beacons and other sensors, with more recent studies integrating LIDAR scanning. With a few exceptions, the identified studies included scant information about participants’ visual acuities or etiologies, which limits the usability of the findings for this highly heterogeneous population. Themes derived from this study are categorized around the individual traveler’s needs; the wayfinding technologies identified and their perceived efficacy; the contexts and routes for wayfinding tasks; and the institutional support offered for sustaining wayfinding research.

Introduction

Wayfinding is an essential life function for human beings. For early hunter-gatherers, wayfinding was crucial for survival, and today it remains a complex skill that is connected with quality of life, mental health, and economic prosperity (Allen, 2007; Golledge, 2003; Scherer and Glueckauf, 2005). Wayfinding for those who are blind, have low vision, or are deafblind may also be known as “orientation and mobility” (O&M), “orienteering,” “travel,” and “visually impaired mobility.” The term “wayfinding” is used to describe orientation and navigation through an environment. It is the ability of travelers to know where they are and where they are going by understanding where they have already been. It is described by Wiener et al. (2010) as “moving purposefully through the environment toward a destination” (p. 324) while using all the cognitive, motor, and perceptual skills that the traveler has already learned.

Effective mobility devices are designed to support a person dynamically, enabling individuals to use compensatory sensory information for spatial navigation. For all devices, whether no-tech, such as a long cane, or highly technical, such as a robotic guide dog, the goodness of fit between the person, the technology, the task, and the environment is mediated by several factors that may serve as either facilitators or barriers to device use (Gray et al., 2016; Wittich et al., 2021). The World Health Organization (WHO, 2018) describes assistive products as those that “maintain or improve an individual’s functioning and independence, thereby promoting their well-being.” Because the WHO considers assistive technology (AT) to be elemental to human dignity, quality of life, and mental and physical health, it is promoting the universal funding of these devices as a part of its 2030 sustainability goals (WHO, 2018).

People who are blind, have low vision, or are deafblind are a diverse population, with worldwide estimates in the range of 285 million (Pascolini and Mariotti, 2012). Long canes and guide dogs are primary mobility devices that support people with visual impairments as they navigate a variety of obstacles and surface changes. However, cognitive processes may also be supported by secondary tools, such as wayfinding apps or tactile maps, which assist the traveler in accessing, remembering, or interpreting salient environmental knowledge (Wiener et al., 2010). For many years electronic devices for persons who are blind were designed as customized tools, often categorized as electronic travel aids (ETAs) or electronic orientation aids (EOAs) (Wiener et al., 2010). Now, more universally-designed smart devices have allowed people with visual impairments, along with others who have disabilities, to benefit from the lower costs of mainstream, mass market devices (Institute of Medicine US et al., 2007).

For those with visual impairments, blindness, or deafblindness, exploring the art involved in human wayfinding is enigmatic not only because research in the field is limited, but also because there are varied lenses, including perceptual, behavioral, attitudinal, and analytic, for examining human factors in the process of navigation. For example, research teams have explored the process of wayfinding by identifying which types of environmental information are preferred by individual travelers who are blind or have low vision (Koutsoklenis and Papadopoulos, 2014; Parkin and Smithies, 2012). By describing which auditory, olfactory, tactile, and visual clues and/or landmarks from the environment support travelers’ navigational tasks, scholars have contributed to the field’s understanding of blind travelers’ dynamic use of sensory perception for wayfinding (Koutsoklenis and Papadopoulos, 2011a; Koutsoklenis and Papadopoulos, 2011b; Koutsoklenis and Papadopoulos, 2014). Some investigators have studied the impact of constructive verbal guidance for wayfinding tasks and described traveler perceptions of the timing and clarity of such guidance (Bradley and Dunlop, 2005; Giudice et al., 2007; Havik et al., 2011; Ahmetovic et al., 2019). Others have examined which design elements in the built environment offer greater support to travelers with visual impairments as they interpret their surroundings and reach their destinations efficiently (Gokgur, 2014; Havik et al., 2015; Lukman et al., 2020). Such investigations are vital for promoting more inclusive building compositions as well as informing universal accessibility standards (Tutuncu and Lieberman, 2016; Zimmermann-Janschitz et al., 2017).

While there is an increasing amount of research focusing on the development and use of accessible wayfinding technology for those who are blind, have low vision, or are deafblind (Arditi and Tian, 2013; Lancioni et al., 2014), many studies are conducted in controlled laboratory environments that do not include an exploration of technology use in real-world scenarios. Furthermore, it is common for researchers to engage sighted participants who are blindfolded as subjects in investigations of technologies that are meant to benefit people with vision loss. While this substitution of sighted participants in blindfolds for people with visual impairments may be expedient from a recruitment standpoint, such participants do not have the same lived experiences, needs, or preferences that people who are blind, have low vision, or are deafblind may demonstrate. Other research teams have invited individuals who are blind or deafblind to reflect on their use of wayfinding tools and navigation challenges retrospectively using surveys, interviews, or focus group methodologies (Griffin-Shirley et al., 2017; Hersh, 2013; Parker et al., 2020). Such studies amplify the voices of visually impaired travelers and provide insights into the ways that tools, such as wayfinding technologies, mitigate the barriers in navigating through complex environments. Qualitative inquiry has also been used as the basis for designing more responsive wayfinding systems by incorporating participant themes into iterative technological advancements (Abdolrahmani et al., 2016; Ganz et al., 2012). Through participatory action research, the process of research, design, and development occurs in partnership with people with disabilities. Through such collaborations, we not only create design efficiencies, we also create more suitable products, enabling people to live better lives (Azenkot et al., 2016; Parker et al., 2020; Wittich et al., 2021).

Arguably, portable wayfinding technologies, such as those enabled through smart devices, have transformed travel planning and navigation for all people. From an access perspective, these personal and powerful smart devices afford people with visual impairments and deafblindness access to a myriad of mobile apps that purport to support wayfinding. During focus group conversations with adults who are blind or deafblind, participants reported that the array, the functionality, the lack of integration, and the problems with sustainability of these apps present unique challenges for travelers who wish to adopt them (Swobodzinski and Parker, 2019; Parker et al., 2020). In focus groups with O&M Specialists and with individuals who are blind, it was reported that, at times, individuals use multiple apps to plan and execute one trip due to the limitations and lack of integration across apps (Swobodzinski and Parker, 2019).

Recently, Swobodzinski and Parker interrogated both academic and marketplace literature to describe the landscape of smartphone-supported wayfinding technologies used by people who are blind or deafblind. The researchers found that the majority of reports included scant to no information about the participants with visual impairments who directly evaluated the technologies (Swobodzinski and Parker, 2019). In addition, people with deafblindness were not explicitly represented in the investigations at all. Another salient finding was that a minority of wayfinding apps addressed both indoor and outdoor navigation concerns, and still fewer addressed the challenge around seamless navigation between environments (Swobodzinski and Raubal, 2009). Finally, the original review included an exploration of academic and marketplace literature, which did not provide adequate descriptions of routes traveled while using the apps, but focused more on the features and costs of specific technologies. In order to evaluate the evidence of the utility of the wayfinding devices, we deliberately sought peer-reviewed investigations only, unlike our first efforts which included marketplace listings of smartphone apps (Swobodzinski and Parker, 2019). In this systematic review, we broadened our team and our focus by asking one primary and two secondary research questions:

1) What wayfinding aids do people who are blind, visually impaired or deafblind use while navigating indoor and/or outdoor real-world routes and what is their perceived efficacy?

2) How are the participants with visual impairments, routes traveled, and real-world environments described by the researchers within these wayfinding studies?

3) What institutions, agencies, and funding sources are supporting these investigations?

Our intent was to identify wayfinding tools that provide supplemental static or dynamic environmental information for the traveler to use during navigation, not only for obstacle avoidance but also for enhanced access to spatial knowledge. Such wayfinding tools differ from primary mobility devices such as long canes, guide dogs, or human guides, which instead support safety and efficiency when moving through the environment by providing immediate surface preview, protection from obstacles, and environmental awareness (Petrie et al., 1996; Isaksson et al., 2020).

Methods

A review of the literature (Cmar and Markowski, 2019) was conducted to collect a comprehensive list of relevant peer-reviewed studies identifying wayfinding tools used by people who are blind, visually impaired, or deafblind while navigating indoors and/or outdoors. The databases searched (Data Sheet S1) included Google Scholar, EBSCOhost, Academic Search Premier, Web of Science, and ERIC. Boolean operators were used in conjunction with the terms “wayfinding,” “mobility,” “orientation,” or “travel” combined with “visually impaired,” “blind,” or “deafblind” and “indoor,” “outdoor,” or “urban environment.” The initial date range was restricted to roughly 10 years, from 2009 to the present (February 2021), and later limited to a five-year search to focus on more recent evolutions in wayfinding.
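As an illustration only, the term groups listed above can be combined into a single Boolean search string. The exact query syntax differs between databases and is not reported in this review, so the structure below (one OR-group per concept, joined by AND) and the helper names `or_group` and `build_query` are assumptions rather than the search strings actually submitted.

```python
from itertools import product

# Term groups quoted from the search description; grouping into three
# concepts (task, population, setting) is our reading of that description.
TASK_TERMS = ["wayfinding", "mobility", "orientation", "travel"]
POPULATION_TERMS = ["visually impaired", "blind", "deafblind"]
SETTING_TERMS = ["indoor", "outdoor", "urban environment"]


def or_group(terms):
    """Quote each term, join with OR, and wrap the group in parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(*groups):
    """AND together one OR-group per concept (hypothetical helper)."""
    return " AND ".join(or_group(g) for g in groups)


query = build_query(TASK_TERMS, POPULATION_TERMS, SETTING_TERMS)
print(query)

# Number of single-term combinations (one term per concept) the query covers.
n_combinations = len(list(product(TASK_TERMS, POPULATION_TERMS, SETTING_TERMS)))
print(n_combinations)
```

A single combined string of this kind is shorthand for every pairing of one task term, one population term, and one setting term, which is why broad searches of this form tend to return many irrelevant hits that must be screened out by hand.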

Our original 10-year search parameters identified 2,238 possible articles. A first pass through the identified literature involved the removal of duplicates and irrelevant topics such as venetian blinds, animal migration, and religion. After this process, 619 articles were retained for a second level of review. In addition, during the process of evaluating the works, the team consulted with a librarian from the American Printing House for the Blind (APH), who applied the same 10-year window and search terms to recommend works from the APH international database. Our consultation with APH confirmed many of the articles identified in our original searches, and 262 additional articles were added to our study, creating a total of 881 articles for a second level of review. For the second level of review, the research team, which consisted of two faculty members and three master’s-level graduate students, reviewed the abstract of each article to identify studies that met six specific criteria created to answer our original research question. If it was not clear from the abstract whether all six inclusionary criteria were met, the individual article was examined until this was clarified. The inclusionary criteria required that the article 1) contained participants with visual impairments, without identified cognitive or memory loss, 2) involved one or more real-world indoor and/or outdoor routes that were used as the context of the study, 3) was published in a peer-reviewed academic journal or conference proceeding, 4) included a route-centered travel experience, 5) articulated a route description with a specific destination/endpoint, and 6) executed a wayfinding task. Articles that included only sighted participants who were blindfolded were excluded, as were studies that occurred in laboratory or controlled environments. Articles that focused primarily on object detection rather than wayfinding in real-world environments were also excluded. Articles exclusively regarding investigations of traditional O&M tools, such as the long cane, guide dog, or human guide, were ruled out. The second round of review yielded 35 articles.

In order to strengthen the search method, the research team decided to conduct an ancestral hand search of all 35 articles, applying the 10-year date range and all six inclusionary criteria to promising articles that were found within the 35 articles’ reference lists. From this search, 24 additional articles were identified, totaling 59 articles. To further refine the article count for analysis, and because of the rapid evolutionary cycle in wayfinding technologies, it was decided to include only the most recent articles, those from the last 5 years (2016–February 2021), which reduced the count by 19. Of the 40 remaining, an overwhelming majority of articles were app-related; however, there were also rich articles that surfaced (n = 5) related to guiding robots and physical tactile maps that included haptic feedback elements. Robotic support via guiding smart canes (e.g., Meshram et al., 2019), suitcase structures (e.g., Guerreiro et al., 2019), and dog-sized or person-sized robots (e.g., Tobita et al., 2017; Chuang et al., 2018) with navigation functions were thought to be qualitatively different from those focused on providing wayfinding information via other smart devices, because of the physical guidance given to participants. Additionally, the use of 2D or 3D printed maps (Feucht and Holmgren, 2018) or tablets with matrix pins that provide haptic interfaces for travelers to tactually scan for navigation support were deemed to be categorically different. In order for these rich topics to receive more in-depth review, it was decided to withhold them from the current systematic review and to report on them in future analyses. This resulted in a total of 35 articles retained in the current systematic review to receive full examination. These articles met all six original inclusionary criteria and were characterized as tools used for wayfinding and/or navigation, but were not characterized as tactile maps or robotic technologies.
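The screening counts reported in the two preceding paragraphs can be checked with simple arithmetic. The sketch below uses only the numbers stated in the text; the variable names are our own shorthand for each stage and are not terms used by the review team.

```python
# Counts as stated in the Methods text; names are illustrative labels only.
initial_hits = 2238            # articles returned by the 10-year search
after_first_pass = 619         # retained after removing duplicates/irrelevant topics
aph_additions = 262            # added after the APH librarian consultation
second_level_pool = after_first_pass + aph_additions

after_second_review = 35       # met all six inclusionary criteria
hand_search_additions = 24     # found through the ancestral hand search
ten_year_set = after_second_review + hand_search_additions

removed_by_five_year_limit = 19
five_year_set = ten_year_set - removed_by_five_year_limit

withheld_robot_and_tactile_map = 5  # reserved for future analyses
final_set = five_year_set - withheld_robot_and_tactile_map

print(second_level_pool, ten_year_set, five_year_set, final_set)
```

Note that the figure of 35 appears twice in the flow: once as the yield of the second round of review, and again as the final set after the hand search additions, the five-year restriction, and the withheld robotic and tactile-map articles are accounted for.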

The reviewers developed a coding form to collect and analyze the articles using open and closed measurements. The form gathered details including: the journal of publication; the year published; the country, university, or corporation leading the study; the age range of participants; the number of participants with visual impairments; and the disability demographic of participants (i.e., low vision, blind, deafblind, etc.). Additionally, the form recorded the study methods, the setting of the study (indoors, outdoors, or both), what mobility aids were used, a description of the technology used, participants’ performance and perceptions while using the devices, and any additional notes the reviewer felt were relevant to this review.

Once the form was completed and ready to use, the researchers ensured coding fidelity by reviewing the coding protocol as a team. To begin, a portion of the studies (n = 8) were read and independently coded by five researchers in the team. The codes for those same articles were then compared for similarity. The researchers discussed their answers with each other until they reached consensus on a consistent manner of completing the form. They then distributed the remaining articles for independent review and coding. When a coder was unsure about the application of the inclusionary criteria or specific variables described in a study, they consulted with another team member for their insights. The final set of articles was independently re-reviewed and re-coded by two members of the research team, achieving 100% consensus.

Results

The authors analyzed 35 articles for inclusion in the literature review. Nineteen were published in 15 different peer-reviewed professional journals, while 16 were published in peer-reviewed conference proceedings. Five were quantitative studies and 30 were mixed-method studies. Of the 35 included articles, 33 involved smart devices, largely smartphones, to integrate spatial data from a variety of infrastructures for travelers to access (see Table 1 for technology and impact summary). Researchers reported the use of widely available commercial apps as well as specially designed apps to support visually impaired travelers with wayfinding tasks. Two studies did not report the use of smart devices as a part of their navigation ecosystem. Caraiman et al. (2019) describe the use of head-mounted sensors and a haptic belt that help one “hear through” depth and sound while traveling. The second outlier was Röijezon et al.’s (2019) testing of a virtual white cane with haptic feedback, using a small vibrating loudspeaker. Twelve studies incorporated the use of Bluetooth beacons for indoor settings, with one study reporting the use of rugged outdoor beacons (Ahmetovic et al., 2016). One study described LIDAR mapping paired with beacons to increase spatial mapping capacity (Sato et al., 2019). Other researchers used RFID tags, QR codes, magnetic data from steel building infrastructure, or broadly described sensor-based wayfinding supports. Augmented reality interfaces or apps were reported in two of the studies (Yoon et al., 2019; Zhao et al., 2020). Eighteen of the 35 studies described some type of training that was offered to participants who were using the technology. These training sessions ranged widely in duration and in form, with some sessions including a preview of the technology using two routes within a “training area,” each 80 feet long with three turns, until 80% accuracy was achieved (Zhao et al., 2020), and others requiring a series of training modules on the use of augmented reality in indoor and outdoor environments for several hours (Caraiman et al., 2019).

TABLE 1

| Study | Methods | Wayfinding technology ecosystem | Device name | Device training (Y/N) | Participant results and impact of technology |

|---|---|---|---|---|---|

| Abu Doush et al. (2016) | Mixed: time, # of errors in navigation; location errors in meters; user rating scale; user feedback | WiFi access points, Samsung Galaxy Grand smartphone, Navigation apps, RFID tags, Bluetooth shelf reader. Audible feedback | ISAB: Integrated Indoor Navigation System for the Blind | Y | Preferred hands-free and instructions in advance; located objects within 10 cm of accuracy. Most preferred automatic navigation prompts |

| Ahmetovic et al. (2016) | Mixed: # of missed turns; instructions repeated; interviews | Map server, Smart Beacons (indoors), Tough Beacons (outdoors), iPhone 6 smartphone, navigation app. Audible feedback | NavCog iOS App | Y | Preferred repeat instructions; instructions provided could be clearer |

| Ahmetovic et al. (2017) | Mixed: localization fingerprinting; interviews | BLE Beacons, iOS smartphone, navigation app. Audible feedback | NavCog system | N | Desire personalization of guidance features, e.g., timing of advance directions |

| Bai et al. (2017) | Mixed: experiment across types of guidance; user feedback | Smart guiding eyeglasses with sensors. Visual and audible feedback. Audible feedback had 3 conditions: stereo tone, recorded instructions, different frequency beep | Not mentioned | N | Turning directions were challenging to follow; totally blind users found recorded instructions more efficient in unfamiliar crowded areas versus stereo tones/beeps due to competing noises. More efficient than cane across all routes, with stereo beeping most efficient overall |

| Bai et al. (2018) | Quantitative: algorithm created shortest path; measured deviation from paths | Smart guiding eyeglasses with cameras, ultrasonic rangefinder, CPU, headphone. Visual and audible feedback | Not mentioned | N | Participant feedback not given; data showed totally blind deviated less from “virtual-blind-road” than low vision users and device proved to serve as VI consumer product |

| Bai et al. (2019) | Mixed: concurrent measurement of time and # of collisions; survey on user preferences | Navigation app using Android TextToSpeech, smartphone, and eyeglasses. Visual and audible feedback | Not mentioned | N | Useful and helpful for orientation to environment; easy to wear. Wanted more tutorials, tactile feedback, staircase feedback, and more options (e.g., face, cash, and signal recognition) |

| Balata et al. (2016) | Mixed: Comparative Study, Performance Across Two conditions; Qualitative feedback; Diary study | Nokia smartphone, GoPro Hero 3, Navigation “system.” Comparative Study used Landmark system versus Metric System. Audible Feedback | Versions of a Navigation System: Landmark; Metric | N | All reached destination successfully. Error rates similar with slight favor to Landmark, completion times longer for Landmark, higher comprehension for Landmark |

| Balata et al. (2018) | Mixed: Comparative Study, Qualitative Study, and Long-term Diary study | Comparative Study used Landmark system versus Metric System with Nokia smartphone; Long-term Diary study used participant’s own mobile phone (Android OS, iOS, Symbian OS), accessible web navigation app (PesestriNet) with no GPS and connected to navigation system running on server. Audible feedback | Versions of a Navigation System: Landmark; Metric | Y | All reached destination successfully. Error rates similar with slight favor to Landmark, completion times longer for Landmark, higher comprehension for Landmark; praised feeling of independence |

| Caraiman et al. (2019) | Mixed: quantitative description of computer vision with 3-D construction and user feedback | Stereo-based acoustic and haptic wearable head mount that uses depth sensors and IMU devices. Info given to user through “hear-through” headphones and/or haptic belt sounds | Sound of Vision (SoV) | Y | Across 65 experimental scenarios, participants (n = 4) were able to use SoV with 88.5% accuracy. The tech added value to the white cane as it provided earlier feedback detecting static and dynamic objects, distinctly head-height objects, walls, negative objects (e.g., holes) and signs. |

| Cheraghi et al. (2017) | Mixed: concurrent design; algorithmic routes proximity detection; quantitative measures of distance and time; user opinion scale | Android OS smartphone, text-to-speech from Google, BLE beacons. Audible feedback | GuideBeacon system | N | Improved navigation times with system; needs improvements with user interface and navigation modules (e.g., timing of instruction); further testing for compass accuracy needed; liked step-by-step instructions, want better personal preference settings |

| Cheraghi et al. (2019) | Quantitative: route calculation; measured participant time and distance traveled comparing apps | Android OS smartphone (Samsung Galaxy S7), navigation app, BLE indoor and outdoor beacons, Google Places API. Audible feedback | CityGuide app | N | When using tech, almost all participants (n = 6) reduced end-to-end navigation times and distances |

| Cohen and Dalyot (2020) | Mixed: Participant interviews; Observation; Route comparison | Weighted network graph criteria using OSM data; OpenStreetMap (OSM), Google Map app. Audible feedback | “Our system” | N | System generated similar routes compared to Google Maps and O&M instructor; liked having options for which path to travel (park vs. road); factors in play included landmarks (e.g., value of), user perceptions (e.g., safety), and user preferences (e.g., relaxing walk) |

| Flores et al. (2016) | Quantitative: comparison of blind and sighted participants on efficiency (time and number of steps) | Android OS; smartphone IMU, compass, and barometer; Audible feedback | Programmed app called “the path recording program” | Y | System stopped working when disoriented; accuracy dependent on user training (e.g., understanding mobility language), user’s mobility skills (e.g., veering). No user feedback shared |

| Giudice et al. (2019) | Mixed: matched controls comparison between sighted and blind participants for efficiency and errors; post-experiment survey | Smartphone platform used magnetic signatures from building's steel structure. Magnetometer used in required “walk through” for database. Audible feedback through Bluetooth single-ear headset | MagNav | Y | Prefer proposed system over up-front instructions; would travel more frequently, confidently and less stressed with access to proposed system |

| Giudice et al. (2020) | Mixed: within and between subject statistical comparison; qualitative survey and user feedback | Commercial Apple devices (iPhone 5 or 5th generation iPod touch) to deliver speech-based narrative descriptions from ClickAndGo Wayfinding Maps LLC & Wayfinding with Words placed in location-specific BLE beacons. Audible feedback | Not mentioned | Y | Increased likelihood of independent exploration; Preview of the environment in the form of “pre-journeys” are preferred; Requested different directions for guide dog users versus cane users; Wanted different beacon voices |

| Guerreiro et al. (2018) | Mixed: measuring user errors and thematic analysis | Smartphone, Navigation app, BLE beacons. GoPro cameras. Haptic and audible feedback | NavCog | Y | Identified in situ behaviors that caused navigation errors; Personalization of app features is vital but dynamic, too many settings can have negative impact |

| Guerreiro et al. (2019) | Mixed: quantitative metrics of completion times and errors; observational analysis | iPhone 8 and adapted NavCog app (navigation app) to identify moving walkways, AfterShokz bone-conductive headphones. Audible feedback | NavCog | Y | Low number of navigation errors and reasonable route completion times; confidence, performance, and motivation for independence increased with exposure; liked contextually-relevant info about environment; greater localization accuracy required in more challenging areas |

| Guerreiro et al. (2020) | Mixed: quantitative metrics of time, missed turns, long recovery errors; observation and user feedback | Participant used own devices (all iPhone 6 or 7 except one with iPhone 5 and one borrowed iPhone 6 to replace Android) with virtual navigation app for route knowledge acquisition prior to real-world navigation. All used iPhone 7 with NavCog (navigation app) for unfamiliar in situ navigation, no apps for familiar (virtually learned) in situ navigation. AfterShokz bone-conductive headphones. GoPro Hero 4 Black. Audible feedback | NavCog | Y | Virtual app helped gain route knowledge over time, NavCog did not; No clear quantitative benefit to combine virtually learned with NavCog routes but observed benefit of virtual app for in-route problem solving; increased confidence when knew what to expect; liked both apps |

| Kim et al. (2016) | Mixed: quantitative metrics of task completion rate, time, deviation and help-seeking situations; questionnaire survey | BLE beacons, iOS platform for smartphone using built-in accelerometer and gyroscope sensors. Earphone jack and portable speaker. Audible feedback | StaNavi (navigation system) | Y | All tasks completed successfully with general independence; found useful; liked having route overview info; diagonal direction taking found difficult; wanted more points-of-interest |

| Ko and Kim (2017) | Mixed: quantitative metrics of task time and wayfinding errors; post-test interview | Camera of smartphone (iPhone 6) used to detect QR codes in environment. QR codes with visual color codes. Audible feedback of varying beeping sounds at different frequencies. Used “spatial language” (TTS) to convey directional commands with compass directions | Not mentioned | Y | Easy to use; became accustomed with practice; would need tech support; found consistency in system |

| Murata et al. (2019) | Quantitative: metrics of localization errors | Smartphone, localization system integrated into turn-by-turn navigation app on iOS devices. Audible feedback | Not mentioned | N | Proposed localization system helps independent mobility; No user feedback |

| Nair et al. (2018) | Mixed: quantitative metrics of total interventions, trip durations, and total bumps; post-experiment survey | Android smartphone (Lenovo Phab 2 Pro). Hybrid positioning and navigation system combining BLE beacons (used RSSI) and Google Tango (used RGB-D camera to estimate phone’s location and orientation without GPS or external signals). Compared to pure BLE beacons for turn-by-turn navigation. Supplemental vibrotactile sensors worn on wrist for object detection. Audible and haptic feedback | Not mentioned | N | Most felt hybrid app was helpful, safe and effective; liked supplemental vibrotactile information |

| Nair et al. (2020) | Mixed: quantitative metrics of time, walking speed, collisions and navigation errors; user evaluation data | Navigation app that uses existing floorplans (can be downloaded and used offline). BLE beacons, Google Tango. Integrates ARCore for Android and ARKit on iOS. Android smartphone w/ Google Tango 3D sensor built in (Lenovo Phab 2 Pro). Wrist-wearable, proximity-based vibrotactile device. Audible, visual, vibrotactile or mixed feedback | ASSIST app | N | All found easy to use; most found helpful and destinations easy to reach; liked voice feedback and vibratory features. Wanted more info on elevators |

| Ohn-Bar et al. (2018) | Quantitative: metrics of completion time and route knowledge | iPhone 7 smartphone with turn-by-turn navigation app; iBeacons; AfterShokz bone-conductive headphones; GoPro cameras | NavCog3 (open source indoor navigation system) | N | Route knowledge and route completion time gradually improve; no user feedback |

| Rodriguez-Sanchez and Martinez-Romo (2017) | Mixed: 3-year case study; usability and feasibility questionnaire; behavioral observation on routes | Navigation software. GAWA generated navigation apps. Apps tested with Android devices, iOS devices, and GAWA system web. Created tailored path for traveler. Visual, audible, and haptic feedback | GAWA (platform generates/manages wayfinding apps); AMWA (wayfinding app) | Y | Useful and appreciative of continuity of guidance |

| Röijezon et al. (2019) | Mixed: motion capture movement behavior; interviews | 300 g hand-held device using laser to measure distance to objects (optical system, not ultrasonic). Device acts as a “virtual” white cane. Haptic feedback to index finger placed on small vibrating loudspeaker | Laser Navigator | Y | Maintaining device positioning was challenging. More useful outdoors than indoors; Liked ability to vary virtual “cane length” |

| Saha et al. (2019) | Mixed: formative study to develop Landmark AI app; qualitative analysis of user feedback | GPS-based iOS navigation app (Microsoft Soundscape) used to get close to a particular business. Camera-based iOS app used to gather info about immediate space including channels called Landmarks, Signage and Places. Audible feedback | Landmark AI (the camera-based iOS app) | Y | Rich user feedback; all valued environmental info provided by app; “Landmark channel” most useful, “Place channel” most liked; shared landmark preferences; accuracy needs improvement; wanted app integration |

| Sato et al. (2019) | Mixed: behavioral description and qualitative comments | iPhone 6 smartphone, BLE beacons. LIDAR sensor to map buildings. Bone conduction headphones. Audible feedback | NavCog3 app | Y | Increased cognitive load for spatial mapping and decreased cognitive load for dynamic travel. Prefer having a preview setting for travel planning |

| van der Bie (2016) | Mixed: Likert scale, free comments and interview | Smartphone, custom made iPhone app, BLE beacons placed outdoors on lampposts, trees and other obstacles in public space. Audible and visual feedback | Not mentioned | N | Felt supported and safer using app than without; would use with new routes; split views on instruction length |

| van der Bie (2019a) | Mixed: quantitative metrics of user feedback with scale and task load index; open ended questions | Wearable Apple Watch (smartwatch) wayfinding app via Bluetooth connected to bone conduction headphone. Sidewalk wayfinding syntax. EyeBeacons system, “wizard-of-Oz” phone. Visual, haptic, and audio/voice feedback. Vibration patterns differed | Not mentioned | Y | 3 satisfied with feedback, 1 wanted less and shorter messages; varied cognitive load; felt sufficient timing of messages |

| van der Bie (2019b) | Mixed: quantitative metrics of user feedback with scale and task load index; open ended questions | Wayfinding app connecting auditory messages from BLE beacon placements; auditory wayfinding messages provided by EyeBeacons (wayfinding app designed for PVI). Bone-conducting headset. Audible feedback | Sidewalk (new wayfinding message syntax) | N | Proposed system provided better info than traditional turn-by-turn instructions; liked additional environmental and orientation info; easy to use and understand; appreciate different types of messages |

| Velázquez et al. (2018) | Mixed: quantitative metrics of GPS coordinates (to calculate path efficiency) and navigation time; user feedback | Electronic travel device using shoe insert with tactile display, electronic module worn on ankle. GPS localization through a Samsung Android smartphone using OpenStreetMap. Haptic feedback | Not mentioned | Y | Info displayed was intuitive and recognizable; felt low cognitive load |

| Yoon et al. (2019) | Mixed: longitudinal design with co-design partners; experimental testing of app; large-scale metrics from mass market release with quantitative and qualitative data | Augmented reality iOS wayfinding app that provides continuous guidance | Clew (system uses digital bread-crumbs to find way back) | N (parts. were co-designers) | Proposed guidelines for tech development; Phone positioning is important; most positive ratings in routes 9–15 m long; ratings less positive as tracking errors increased |

| Zaib et al. (2019) | Mixed: quantitative metrics of time; # of steps; accuracy on paths; non-directed interviews | Samsung Galaxy S6 smartphone and Motorola Droid. Indoor navigation app either receiving or providing service to other components using Application Programming Interface (API). Audible feedback | Not mentioned | Y | Desired braille output, not audio only, for increased accuracy; app decreased cognitive load; prefer longer and safer routes rather than most efficient routes (algorithm) |

| Zhao et al. (2020) | Mixed: ANOVA across augmented reality conditions; structured interviews | Built prototype on the wearable head mount Microsoft HoloLens v1 which uses augmented binocular displays and has spatial audio support. Wearable Motorola 360 smartwatch for secondary task vibration feedback with varying vibration patterns. Smartphone app to control HoloLens via TCP and communicate with smartwatch, toggling between the two. Spatialized audio turn-by-turn directions. Audio, haptic, and visual feedback | Not mentioned | Y | Visual feedback features lowered cognitive load and increased performance measures over audio unless user had limited field of vision. Training times varied more for visual feedback trials due to differing levels of visual abilities |

Technologies and Impact.

Across the 35 studies, participant performance and perceptions while using the various apps, devices, and systems varied (see Table 1, Technology and Impact). The efficacy and impact of the wayfinding tools were measured quantitatively through participant performance data and qualitatively through participant interviews or rating scales. All 35 studies reported observed travel behaviors, but these were often defined differently. For example, efficiency might be measured as the time spent reaching a destination, the number of steps, or the number of errors. Other studies reported not only efficiency but also usability, which was largely measured through travelers’ comments, expressed preferences, or reported challenges. Qualitative measures included interviews, rating scales, diaries, or functionality feedback on the features of the device. Overall, the majority of travelers found the devices useful for offering supplemental preview and real-time environmental information, although the timing, clarity, and accessibility of the information were less helpful in specific conditions.

The studies included anywhere from 2 to 43 participants with low vision or blindness. Age was not mentioned in nine of the studies. The ages in the remaining 26 studies ranged from 18 to 82 years. None of the studies reported any participants with deafblindness; however, one study mentioned a visually impaired participant who used hearing aids (van der Bie et al., 2019a). Together, the 35 studies included a combined total of 469 participants who executed routes and were visually impaired (see Table 1 for a summary). Across the studies, participants were most commonly described as “blind” or having “low vision.” Other terms were also used, such as “blind with light perception” or “blind with no light perception.” Of the 35 articles, six offered information about etiologies; others merely said “various disorders.” Some used “congenital” or “late onset” to describe visual impairments. Only one study mentioned including participants with other disabilities, namely individuals who are Deaf and two people who used wheelchairs, within the wayfinding tasks (Rodriguez-Sanchez and Martinez-Romo, 2017).

Eleven of the 35 studies, together with two large-scale user evaluations within two of the remaining studies, represented 172 participants and did not clarify the participants’ primary mobility tools. Of the remaining 297 participants, 202 (68.0%) used a mobility cane as their primary mobility tool, 52 (17.5%) used dog guides, and 5 (1.7%) used dog guides and canes. The remaining 38 (12.8%) participants used other types of primary tools, including their remaining functional vision. Only one study mentioned two participants who used wheelchairs, and these individuals were not identified as having a visual disability (see Table 2 for participant descriptions).
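The percentages in the preceding paragraph can be rechecked from the stated counts. The following is a minimal sketch in Python, assuming only the figures given in the text; the variable and function names are illustrative and do not come from the reviewed studies.

```python
# Counts as stated in the paragraph on primary mobility tools.
# All names here are illustrative, not taken from the reviewed studies.
total_participants = 469
unspecified_tool = 172  # participants whose primary mobility tool was not reported

tool_counts = {
    "mobility cane": 202,
    "dog guide": 52,
    "dog guide and cane": 5,
    "other primary tool": 38,  # includes remaining functional vision
}

# Participants for whom a primary mobility tool was reported.
specified = total_participants - unspecified_tool

# The four categories should account for every participant with a reported tool.
assert specified == sum(tool_counts.values())


def share(count, denominator):
    """Return count as a percentage of denominator, rounded to one decimal."""
    return round(100 * count / denominator, 1)


tool_shares = {tool: share(n, specified) for tool, n in tool_counts.items()}

for tool, pct in tool_shares.items():
    print(f"{tool}: {tool_counts[tool]} of {specified} = {pct}%")
```

Using the 297 participants with a reported tool as the denominator, rather than all 469, is what reproduces the percentages given in the text.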

TABLE 2

| Reference | # of VI Participants | Age range in years (Average) | Cause of blindness (COB) | No. of cane users | No. of guide dog users |

|---|---|---|---|---|---|

| Abu Doush et al. (2016) | 20 | 20–34 (23) | | | |

| Ahmetovic et al. (2016) | 6 | 35–73 | | 4 | 2 (guide dog and cane user) |

| Ahmetovic et al. (2017) | 6 | 35–73 | | 4 | 2 (guide dog and cane user) |

| Bai et al. (2017) | 20 | | Amblyopia | 10 | |

| Bai et al. (2018) | Undefined | | | | |

| Bai et al. (2019) | 20 | | | 10 | |

| Balata et al. (2016) | 16 for quantitative study; 6 for qualitative study | 23–66 (35.75); 30–68 (48.67) | | | |

| Balata et al. (2018) | 16 for quantitative study; 6 for qualitative study; 18 for long-term diary study | 23–66 (35.75); 30–68 (48.67); 23–66 (36.78) | | | |

| Caraiman et al. (2019) | 4 | | | 4 | |

| Cheraghi et al. (2017) | 7 | | | 6 | 1 |

| Cheraghi et al. (2019) | 6 | | | 5 | 1 |

| Cohen and Dalyot (2020) | 14 | | | | |

| Flores et al. (2016) | 3 | | | 3 | |

| Giudice et al. (2019) | 12 | 20–59 | Retinitis Pigmentosa, Glaucoma, Leber’s Congenital Amaurosis, bilateral retinoblastoma, optic demyelination with cortical damage, fever | 4 | 8 |

| Giudice et al. (2020) | 7; 7 | “older group” of 60–70; “younger group” of 28–54 | Retinitis Pigmentosa, Stargardt Disease, Glaucoma, Cataracts, Retrolental Fibroplasia, Meningitis, Toxoplasmosis, Leber’s Congenital Amaurosis, ROP, Keratis | 11 | 3 |

| Guerreiro et al. (2018) | 13 | | | 7 | 6 |

| Guerreiro et al. (2019) | 10 | 37–70 | | 5 | 4 |

| Guerreiro et al. (2020) | 14 | 41–69 | | 8 | |

| Kim et al. (2016) | 8 | (24.4) | | 8 | |

| Ko and Kim (2017) | 4 | 24–28 | | | |

| Murata et al. (2019) | 10 | 33–54 | | 9 | 1 |

| Nair et al. (2018) | 11 | 18–55+ | | | |

| Nair et al. (2020) | 11 in usability test; 6 in performance test | 25–55+ | | 9 (usability test); 5 (performance test) | 2 (usability test); 1 (performance test) |

| Ohn-Bar et al. (2018) | 8 | 43–76 (65.63) | | 8 | |

| Rodriguez-Sanchez and Martinez-Romo (2017) | 7 | 20–75 | | | |

| Röijezon et al. (2019) | 3 | 60, 72, 78 | | 2 | 1 (guide dog and cane user) |

| Saha et al. (2019) | 22 (Study 1); 13 (Study 2) | 31–50; 24–55 | | 13 (Study 1); 6 (Study 2) | 6 (Study 1); 6 (Study 2) |

| Sato et al. (2019) | 10 (Study 1); 43 (Study 2); 37 (Study 3) | 33–54a | | 9 (Study 1); 35 (Study 2) | 1 (Study 1); 5 (Study 2) |

| van der Bie (2016) | 5 | 30–78 | | | |

| van der Bie (2019a) | 4 | 25–46 | | | |

| van der Bie (2019b) | 6 | 44–69 | | 6 | |

| Velázquez et al. (2018) | 2 | 31–35 | Retinitis Pigmentosa, Congenital blindness | 2 | |

| Yoon et al. (2019) | 4 co-designers; Large-scale user study | | Retinitis Pigmentosa | 3 | 1 |

| Zaib et al. (2019) | 8 | 30–50 | | | |

| Zhao et al. (2020) | 16 | 27–82 (56) | Retinitis Pigmentosa, Diabetic Retinopathy, Stargardt Disease, Glaucoma, Doyne Honeycomb Retinal Dystrophy, Albinism, Fuch’s Dystrophy, Myopic Choroidal Neovascularization, Optic Neuritis Multiple Sclerosis, Brain Tumor/Glioma, Cone Dystrophy | 6 | |

Participants with visual impairments who executed routes.

Note. If a space was left blank, the information was not provided in the study.

VI = visual impairments.

a = as reported.

Settings of the studies were predominantly either indoors (57.1%) or outdoors (28.6%). One research team conducted one indoor-only and one outdoor-only evaluation (Bai et al., 2017). Only four studies included single routes that transitioned between indoor and outdoor settings (Ahmetovic et al., 2016; Ahmetovic et al., 2017; Rodriguez-Sanchez and Martinez-Romo, 2017; Cheraghi et al., 2019). Urban cityscapes, university campuses, basements of office complexes, supermarkets, retail stores, multi-level malls, airports, train stations, and community parks were contexts for the travel tasks. All studies included the number of routes used with participants. Twenty-five studies, or 71.4%, provided a range or average length for individual routes. Twenty-three studies (65.7%) recorded the number or average number of turns in each route. Almost half of the 35 studies (17) provided preview or training time prior to the measured wayfinding tasks so that participants could learn how to operate secondary supports. This time was not necessarily restricted to technology and ranged from 5 min to as long as needed to get comfortable with the task (see Table 3 for route information). While not represented in the tables directly, 26 of the studies mentioned landmarks, although not always using that term. For example, other terms such as “points of interest,” “features,” “environmental features,” or “action points” were sometimes used. A variety of terms were presented in the articles for study participants to use in supporting travel in authentic environments.
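The shares reported in the preceding paragraph can be cross-checked against the 35 included studies. The sketch below, in Python, recomputes the percentages from the counts stated in the text and backs out the study counts implied by the two setting percentages. The implied counts, the grouping used in the final tally, and all names are assumptions made for illustration; the text itself does not state how many studies were indoor-only or outdoor-only.

```python
TOTAL_STUDIES = 35


def pct(count, total=TOTAL_STUDIES):
    """Percentage of studies, rounded to one decimal place."""
    return round(100 * count / total, 1)


def implied_count(percent, total=TOTAL_STUDIES):
    """Nearest whole number of studies corresponding to a reported percentage."""
    return round(percent / 100 * total)


# Counts stated directly in the text.
stated_counts = {
    "route length reported": 25,
    "turns reported": 23,
    "preview or training time": 17,
}
reported_shares = {label: pct(n) for label, n in stated_counts.items()}

# Assumption: counts inferred from the reported setting percentages.
implied = {
    "indoor only": implied_count(57.1),
    "outdoor only": implied_count(28.6),
}

# Assumption: the four indoor-to-outdoor route studies and the one study with
# separate indoor-only and outdoor-only evaluations make up the remainder.
mixed_route_studies = 4
separate_indoor_outdoor_studies = 1
accounted = (
    implied["indoor only"]
    + implied["outdoor only"]
    + mixed_route_studies
    + separate_indoor_outdoor_studies
)

print(reported_shares)
print(implied, "accounted for:", accounted, "of", TOTAL_STUDIES)
```

Under these assumptions the four setting groups sum to the 35 studies, which suggests the reported percentages are internally consistent.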

TABLE 3

| Study | Route Setting | Conditions | No. of routes per participant | Actual length of test route(s) | No. of turns in test route(s) |

|---|---|---|---|---|---|

| Abu Doush et al. (2016) | First floor of University library | Indoor | 2 | 11 m | 2 (average) |

| Ahmetovic et al. (2016) | University path covered 2 buildings, 2 bridges, a flight of stairs, and campus quad area with paths containing both indoors and outdoors areas | Indoor and Outdoor | 2 practice; 1 test | 350 m | 12 |

| Ahmetovic et al. (2017) | University path traversed 2 buildings, a set of stairs, 2 bridges, and outdoor quad area | Indoor and Outdoor (route 1); Indoor only (route 2) | 2 | 390 m (route 1); 230 m (route 2) | 12; 10 |

| Bai et al. (2017) | Home; office; supermarket | Indoor | 6 (3 routes; 2 conditions) | 40 m; 150 m; 1,000 m | 20a; 11a; 14a |

| Bai et al. (2018) | Hall to washroom; lounge to bar; Room 3308 to washroom | Indoor | 6b | Not mentioned | 2 |

| Bai et al. (2019) | Indoor routes in same office building: desk to reception, desk to meeting room, desk to washroom; Outdoor routes: office to Walmart, office to bank, bank to bus station | Indoor, Outdoor | 6 (3 indoor; 3 outdoor) | Indoor: ∼21 m, ∼52 m, ∼30 m Outdoor: ∼860 m | Indoor: 2a; 2a; 4a Outdoor: 4, 4, 1 |

| Balata et al. (2016) | Comparative Study: Quiet area in city center of Prague, Czech Republic; Qualitative Study: busy square in city center ending in quiet area | Outdoor | Comparative Study: 4 (2 routes walked twice—once for test, once for retrospective walkthrough); Qualitative Study: 1 | Comparative Study: ∼350 m; Qualitative Study: 670 m | Comparative Study: 8; Qualitative Study: 13 |

| Balata et al. (2018) | Comparative Study: Quiet area in city center of Prague, Czech Republic; Qualitative Study: busy square in city center ending in quiet area; Long-Term Diary Study: Urban, city center environment | Outdoor | Comparative Study: 4 (2 routes walked twice—once for test, once for retrospective walkthrough); Qualitative Study: 1; Long-Term Diary Study: varied. | Comparative Study: ∼350 m; Qualitative Study: 670 m; Long-Term Diary Study: Covered area of 0.8 km2 with 51.9 km of sidewalks covered by PedestriNet geodatabase | Comparative Study: 8; Qualitative Study: 13; Long-Term Diary Study: Varied |

| Caraiman et al. (2019) | University setting. Predefined routes. Semi-controlled natural setting with short routes and no or light traffic. Uncontrolled environments in public areas, with varying, uncontrollable traffic. Video link shows route to include walking on crowded sidewalks and parking lot exits with downcurbs, upcurbs and traffic present. | Outdoor | Unclear | Semi-controlled: “usually” 15–30 m; Uncontrolled: distance not defined in paper, but link to video of research shows 250 m route | Not mentioned |

| Cheraghi et al. (2017) | University campus—entrance of building to research lab located on 2nd floor of building | Indoor | 1–4 | Not mentioned | Not mentioned |

| Cheraghi et al. (2019) | Unfamiliar campus setting. Indoor starting location, outdoor destination Route with various vertices and edges | Indoor and Outdoor | 1–2 | Not mentioned | Not mentioned |

| Cohen and Dalyot (2020) | Technion campus in Haifa, Israel—bordered a park (sound and direction of oncoming traffic), through a park (more complex but more relaxing and calming); New York City, NY, United States—along border of Madison Square Park, through Madison Square Park (crowded, fountains, playing musicians, food carts, sounds and smells) | Outdoor | 2; 2 | 366 m, 205 m; 404 m, 351 m | 2, 2; 1, 4 |

| Flores et al. (2016) | College campus in New York City. Starting at either building entrance or a classroom. Going through corridors and passing from one floor to another. Stairs. (figures referred to but not accessible) | Indoor | Unclear. There were 2 for “training” and 3 for “final tests.” Participant could repeat test routes up to 3 times each | 50 m–100 m | Not mentioned (figures referred to but not accessible) |

| Giudice et al. (2019) | Mall of America, all on same floor level. Carpet underfoot, sound of fountain, round cement pillar | Indoor | 1 practice; 4 experiments | 196.6 m–245.4 m | 5–8 |

| Giudice et al. (2020) | College complex in New York City. Similar in complexity but differ in topology. Half traversed 2 floors and used elevator with 5–8 decision points | Indoor | 1 practice; 4 experiment | 68.6 m; 114.3 m; 30.5 m; 56.4 m | 7; 5; 4; 4 |

| Guerreiro et al. (2018) | Instrumented 3 buildings in university setting with NavCog environment (58,800 m2). Short route was on one floor with 6 POIs (landmarks) and long route was on 2 floors with 22 POIs (landmarks) | Indoor | 1 short; 1 long | 61 m; 210.3 m | 4; 11 |

| Guerreiro et al. (2019) | Natural airport setting including ticketing area, moving walkways, and airside terminals. Routes—Entrance to ticketing counter; Train to gate; Gate to nearest restroom | Indoor | 4 | 120 m; 310 m; 30–40 m; 230 m | 4; 3; Undefined; 2 |

| Guerreiro et al. (2020) | Short routes include one floor and 6 POIs (landmarks); Long routes include 2 floors, an elevator, and 22 POIs (landmarks) | Indoor | 2 short; 2 long (1 short and 1 long had been taught for 3 days using virtual app to become “familiar”; for the real-world task, participant performed 2 “familiar” and 2 unfamiliar routes) | 60 m; 210 m | 4; 11 |

| Kim et al. (2016) | Very high activity traveling in key locations inside Tokyo Station on one floor. Routes—Central gate to Transfer gate; Transfer gate to Keiyo Street; Keiyo Street to South Gate; South gate to Gransta entrance | Indoor | 4 | “Average route lengths”—153 m; 114 m; 171 m; 138 m | Not mentioned |

| Ko and Kim (2017) | Two buildings on university campus, one 14-story building and one 6-story building with similar structure except first floor | Indoor | 2 | Not mentioned | 8–11a |

| Murata et al. (2019) | Shopping mall that includes three multi-story buildings and large open underground passageway and elevator use. Routes—Basement floor subway station to 3rd floor movie theater; movie theater to candy shop; candy shop to subway station | Indoor | 3 | 177 m; 54 m; undefined | 26 total. 11a; 4a; 11a |

| Nair et al. (2018) | Lighthouse Guild of New York City | Indoor | 6 | Defined as “virtually identical” but undefined | Defined as “virtually identical” but undefined1a |

| Nair et al. (2020) | 6-story building in New York City | Indoor | 3 in Usability Study; 3 in Performance Study | 24.3–33.5 m; ∼20 m | 3–7; 3 |

| Ohn-Bar et al. (2018) | Three buildings in university campus (58,800 m2). Route A was executed with navigation app sometimes and executed using memory for others. Route B was executed using only navigation app and not from memory (due to fatigue) | Indoor | 14 (2 routes walked several times—Route A walked 6 times; Route B walked 4 times) | 152.4 m; 76.2 m (check) | 8; 7 |

| Rodriguez-Sanchez and Martinez-Romo (2017) | Indoor trial used one council building with two floors (each floor 175 m2) using StepByStep app; Outdoor trial used city setting (Madrid) with 8 points of interest on tourism route called “Guadalupe” route; Indoor/Outdoor trial used an educational environment moving between indoors and outdoors including university, library, and laboratories | Indoor; Outdoor; Indoor and Outdoor | 21 (9 indoors; 6 outdoors; 6 indoor/outdoor) | Not mentioned | Not mentioned |

| Röijezon et al. (2019) | One indoor route; one outdoor route. Indoor route not analyzed due to lab setting (not real-world). Outdoor route on university campus with 2 crossings among a cluster of buildings | Outdoor | 2 (same route done twice) | 385 m | 4 |

| Saha et al. (2019) | Large, outdoor two-story shopping center. Tasks—Find elevator near restrooms/ice cream; Find table near entrance of candy shop; Find box office counter for theater | Outdoor | 3 | Not mentioned | Not mentioned |

| Sato et al. (2019) | A shopping mall with 3 buildings (21,000 m2) that connects to a subway station on the first basement level. Study 1: Fixed Route in Shopping Mall; Study 2: Free Route in Shopping Mall; Study 3: Ad hoc NavCog3 system installment at a hotel. Accessible guest-room areas where guests were staying to attend a 4-day conference for PVI were mapped with LIDAR. NavCog3 app available for download on iOS App Store for the four days | Indoor | 3 (Study 1); Average was 4 (Study 2, only 5 participants walked less than 3); 280 (included all users’ traveling records) | Average for “all routes and participants,” 450 m; Average per participant, 120 m; Average per user, 108 m | 26; undefined; undefined |

| van der Bie (2016) | Walked city route with and without app. Route from Amsterdam train station to office including stairway, crossing traffic light, parking exit, and crossing train station | Outdoor | 2 (same route under 2 conditions) | Undefined | Undefined |

| van der Bie (2019a) | Urban setting in Amsterdam designed to include noisy roads, stairs, road crossings, squares, construction work and obstacles on the road | Outdoor | 1 | 1 km (1,000 m) | Undefined (although there were 23 “wayfinding instructions”) |

| van der Bie (2019b) | Urban center of Amsterdam with “challenging” obstacles, crossroads, and squares | Outdoor | 1 | Undefined | Undefined |

| Velázquez et al. (2018) | Two urban environments carefully chosen due to low vehicle traffic with static and dynamic obstacles (objects and people) | Outdoor | 1 | Undefined | Undefined |

| Yoon et al. (2019) | Released Clew on iOS app store to 156 countries | Indoor | 5,789b | Undefined | Undefined |

| Zaib et al. (2019) | University setting with classrooms, corridors, elevator, and stairs | Indoor | 11 | Range: 42.89–60 m | 0 (5 routes); 1 (3 routes); 2 (3 routes) |

| Zhao et al. (2020) | One floor of campus building. Well-lit area | Indoor | 8 | Each ∼51.8 m | 4 |

Real-world routes.

a = The number of turns per route was not explicitly recorded; therefore, this number represents the total number of turns shown in the figure of the route.

b = The number of routes per participant was not quantified in the study; therefore, this number represents the total number of routes quantified for the whole study.

The studies took place in 14 different countries, with the majority taking place in the United States (44.4%), the Netherlands (8.33%), China (8.33%), Japan (8.33%), and the Czech Republic (5.55%). Twenty-four universities were represented across the 35 studies. Twenty-six of the studies (74.3%) were declared as funded, with a mixture of support from national grants, corporate sponsorships, and private foundations (see Table 4, Research Institutions and Financial Support).

TABLE 4

| Reference | Institution & Partners | Funding Sources | Country |

|---|---|---|---|

| Abu Doush et al. (2016) | Yarmouk University | Deanship of Research and Graduate Studies in Yarmouk University under grant number (15/2013) | Jordan |

| Ahmetovic et al. (2016) | Carnegie Mellon University & IBM Research, Japan | Sponsored in part by Shimizu Corporation | United States |

| Ahmetovic et al. (2017) | Carnegie Mellon University & Indiana University—Purdue & IBM Research—Tokyo University | Partially supported by Shimizu Corporation | United States |

| Bai et al. (2017) | Beihang University & CloudMinds Technologies, Inc., Beijing and DT-LinkTech Inc., Beijing | Not mentioned | China |

| Bai et al. (2018) | Beihang University & CloudMinds Technologies, Inc., Beijing and MorningCore Technology Company Ltd., Beijing | Supported by the CloudMinds Technologies, Inc. | China |

| Bai et al. (2019) | School of Electronic Information Engineering, Beijing, CloudMinds Technologies, Inc., Beijing and China Academy of Telecommunication Technology, Beijing | Not mentioned | China |

| Balata et al. (2016) | Czech Technical University & Central European Data Agency | Supported by the project Navigation of handicapped people funded by grant no. SGS16/236/OHK3/3T/13 (FIS 161–1611663C000) and by the Technology Agency of the Czech Republic through project Route4all (TA04031574) | Czech Republic |

| Balata et al. (2018) | Czech Technical University, Prague | Navigation of handicapped people funded by grant no. SGS16/236/ OHK3/3T/13 (FIS 161–1611663C000) | Czech Republic |

| Caraiman et al. (2019) | Gheorghe Asachi Technical University of Iasi | European Union's Horizon 2020 research and innovation program under grant # 643636 “Sound of Vision” and by the “Gheorghe Asachi” Technical University of Iasi, Romania under project # TUIASI-GI-2018-2392 | Romania |

| Cheraghi et al. (2017) | Wichita State University & Envision Research Institute | This work was funded in part by the Carl and Rozina Cassat Regional Institute on Aging at Wichita State University and the Envision Research Institute | United States |

| Cheraghi et al. (2019) | Wichita State University | Funded in part by the U.S. National Science Foundation (NSF) grant #1737433 | United States |

| Cohen and Dalyot (2020) | Technion-Israel Institute of Technology | Supported by the Ruch Exchange Grant | United States |

| Flores et al. (2016) | Capgemini Engineering, France; University of Strasbourg, France; Universite Paris-Sud | Capgemini Engineering, France; University of Strasbourg, France; Universite Paris-Sud | France |

| Giudice et al. (2019) | University of Maine, Orono; Koronis Biomedical Technologies, Maple Grove, MN; Clickandgo Wayfinding Maps, Inc., Minneapolis, MN | NIH grants: R44EY021412-02 and R01EY019924-07 | United States |

| Giudice et al. (2020) | University of Maine, Orono; Koronis Biomedical Technologies, Maple Grove, MN; Clickandgo Wayfinding Maps, Inc., Minneapolis, MN | NIH grant Phase II SBIR: R44EY021412-02 and NSF grant: IIS-1822800 supported technical development of the system and funded this research | United States |

| Guerreiro et al. (2018) | Carnegie Mellon University & IBM Research, Tokyo | Support of Shimizu Corporation, JST CREST (JPMJCR14E1) and NSF (1637927) | |

| Guerreiro et al. (2019) | Carnegie Mellon University & IBM | This work was sponsored in part by NSF NRI award (1637927), NIDILRR (90DPGE0003), Allegheny County Airport Authority, and Shimizu Corporation | United States |

| Guerreiro et al. (2020) | Carnegie Mellon University, PA & IBM Research | This work was sponsored in part by Shimizu Corp, JST CREST grant (JPMJCR14E1) and NSF NRI grant (1637927) | United States |

| Kim et al. (2016) | The University of Tokyo; YRP Ubiquitous Networking Laboratory | Not mentioned | Japan |

| Ko and Kim (2017) | Konkuk University, Seoul | Not mentioned | Korea |

| Murata et al. (2019) | Shimizu Corporation, Japan & IBM Research, Japan; Carnegie Mellon University, PA | Shimizu Corporation and Mitsui Fudosan for their collaboration | Japan |

| Nair et al. (2018) | City College of New York & the Lighthouse Guild, New York | Research was administered by the Oak Ridge Institute for Science and Education, managed under US Dept. of Energy contract #DE-AC05-06OR23100 and #DE-SC0014664. “This work is also supported by the U.S. National Science Foundation (NSF) through Award #EFRI-1137172, Award #CBET-1160046 and Award #CNS-1737533, the VentureWell (formerly NCIIA) Course and Development Program (Award #10087-12), a Bentley-CUNY Collaborative Research Agreement 2017–2020, as well as NYSID via the CREATE (Cultivating Resources for Employment with Assistive Technology) Program.” | United States |

| Nair et al. (2020) | City College of New York & the Lighthouse Guild, New York | VentureWell [#10087-12]; NYSID [CREATE 2017-2018]; Bentley Systems, Inc. [Bentley-CUNY Collaborative Research Agreement 2017]; U.S. National Science Foundation [#CBET-1160046,#CNS-1737533,#EFRI-1137172,# IIP-1827505]; U.S. Department of Homeland Security [#DE-AC05-06OR23100,#DE-SC0014664]; the U.S. Office of the Director of National Intelligence via IC CAE at Rutgers [Awards #HHM402-19-1-0003 and #HHM402-18-1-0007] | United States |

| Ohn-Bar et al. (2018) | Carnegie Mellon University & IBM Tokyo | Shimizu Corporation, JST CREST (JPMJCR14E1) and NSF (1637927) | United States |

| Rodriguez-Sanchez and Martinez-Romo (2017) | Universidad Rey Juan Carlos, Madrid; Universidad Nacional de Educacion a Distancia, Madrid | Not mentioned | Spain |

| Röijezon et al. (2019) | Lulea University of Technology, Lulea, Sweden | Not mentioned | Sweden |

| Saha et al. (2019) | University of Washington, WA; Microsoft Research, Redmond, WA | Not mentioned | United States |

| Sato et al. (2019) | IBM Research, Tokyo; Carnegie Mellon University, PA; Shimizu Corporation, Japan | This work was sponsored in part by JST CREST (Grant No. JPMJCR14E1) and NSF NRI (Grant No. 1637927) | Japan and the United States |

| van der Bie (2016) | Digital Life Centre, Amsterdam University of Applied Sciences, Amsterdam, Netherlands; Royal Dutch Visio, Centre of expertise for visually impaired people, Amsterdam, Netherlands | Amsterdam University of Applied Sciences grant Urban Vitality and Amsterdam Creative Industries Network | Netherlands |

| van der Bie (2019a) | Amsterdam University of Applied Sciences, Netherlands | “Supported by” the ZonMW InZicht program, project nr. 94312006 | Netherlands |

| van der Bie (2019b) | Amsterdam University of Applied Sciences; Saxion University of Applied Sciences | ZonMW InZicht program, project nr. 94312006 | Netherlands |

| Velázquez et al. (2018) | Universidad Panamericana, Mexico; Universite de Rouen, France; Universidad Adolfo Ibanez, Chile; Universita de Salento, Italy | Not mentioned | Mexico |

| Yoon et al. (2019) | Stanford University & Olin College of Engineering | Peabody Foundation of Boston, MA | United States |

| Zaib et al. (2019) | University of Peshawar & University of Malakand | Not mentioned | Pakistan |

| Zhao et al. (2020) | Cornell University, NY; University of Washington, WA | Supported in part by the National Science Foundation under Grant No. IIS-1657315 | United States |

Research institutions and financial support.

Discussion

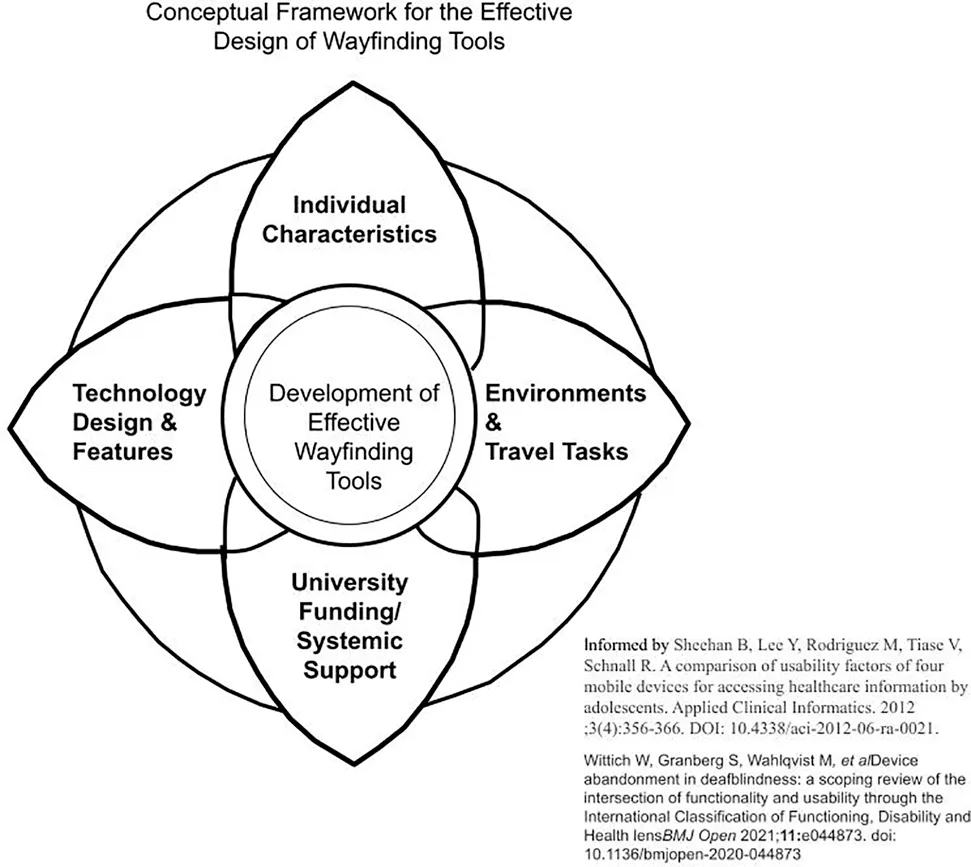

The objective of this systematic review is to identify research published in peer-reviewed academic journals within the last 5 years that focuses on the wayfinding tools used by people who are blind, visually impaired, or deafblind on routes in real-world environments. In an initial review of included articles, the research team deemed that studies investigating the use of guiding robots or tactile maps were qualitatively different from studies of smart device wayfinding tools and set these articles aside for future in-depth analysis. Thirty-five studies were analyzed for this report. Most of the studies identified mirror the larger-than-life role that smart device technology, including phones, watches, and glasses, assumes in our world. Our goals included not only understanding what types of participants and technologies were represented in these studies but also describing the ways that participants evaluate those technologies in authentic travel contexts. To organize our discussion, we explored: the interconnections of participant descriptions; the technologies that supported the wayfinding tasks and their efficacy; the characteristics of the environments and routes where wayfinding occurred; and the university partners and funding sources that supported the wayfinding research. The linkages between these findings offer opportunities for understanding the complex nature of effective design for the important human task of wayfinding. Our findings are organized into a conceptual framework, which is influenced by the FITT model for technology adoption (Sheehan et al., 2012; Gray et al., 2016).

Participants With Visual Impairments in Studies

Within the 35 studies in this systematic review, approximately 447 people with visual impairments were represented. This number is an approximation because it is likely that the same participants joined more than one route-based evaluation with teams of researchers. The descriptions of participants’ visual acuity and etiologies were minimal, with a few notable exceptions (see Table 1). Within the six studies that included information about participants, a wide range of etiologies (n = 25) was mentioned. This finding is in harmony with demographic data regarding the heterogeneity of the causes of visual impairments; however, this modest sample of etiologies should be considered from the perspective of specific etiologies that are more prevalent in certain countries (Pascolini and Mariotti, 2012). While the way that each person experiences vision loss is unique, there are some common challenges that people with specific etiologies face, particularly when traveling in various environments. For example, depending upon the person’s age and the progression of the disease, people with retinitis pigmentosa experience difficulty in transitioning between light and dark environments, as well as sensitivity to glare (National Eye Institute, 2019a). Because wayfinding devices have not only audio but also visual features, knowing about participants’ visual acuities and etiologies is important for researchers and practitioners alike to be able to make connections between what types of devices may be more supportive for different types of individuals. People with diabetic retinopathy may experience neuropathy in their hands and feet, as well as low vision (National Eye Institute, 2019b). Individuals with Stargardt’s have reported challenges with depth perception and in negotiating curbs and stairs with varying levels of contrast during mobility tasks (Zhao et al., 2018).
For technology developers and for researchers, understanding the reason that surface changes or stairs may be more challenging for some travelers with low vision is important; therefore, more information on participant etiologies is helpful for exploring the utility of devices for people with specific constraints.

Another opportunity afforded to our team through this systematic review was the chance to learn which primary mobility devices (long canes or guide dogs) travelers were using and how participants evaluated secondary devices in light of their primary mobility devices. Several research teams noted that travelers who were teamed with guide dogs had a more rapid pace during the wayfinding process, which impacted the use of the wayfinding apps (Cheraghi et al., 2017; Guerreiro et al., 2018; Sato et al., 2019). In one study, participants with guide dogs were found to have a faster pace “than other cane users, and even sighted users” causing dog users to move “ahead of a turn point faster” and re-route back (Cheraghi et al., 2017, p. 8). Another research team noted that guide dogs stopped at a decision point before the navigation system announced the turn (Guerreiro et al., 2018). In another example, a participant shared that the timing of wayfinding app messages regarding turns disrupted her pacing with her guide dog as she tried to coordinate information from the app with commands given to the dog (Ahmetovic et al., 2017). The developers used this information to build in more control over the speed and timing of messages, which helped the participant communicate with her guide dog to anticipate turns or route changes (Ahmetovic et al., 2017). Still others noted that travelers who were teamed with guide dogs needed less information about obstacles and slight turns than those traveling with long canes (Guerreiro et al., 2018). In response to the differences in travel needs across long cane and guide dog travelers, some researchers devised a different set of directions for guide dog users to account for the differences in pacing and in making slight turns along the routes during wayfinding tasks (Sato et al., 2019; Giudice et al., 2020).
In a study conducted in an outdoor two-story shopping center, Saha et al. reported that two participants, who were guide dog users, decided to change their primary mobility tools during the wayfinding tasks because “they felt their canes were better suited to the task” (Saha et al., 2019, p. 227). Other cane-based travelers offered feedback on their need for having better information about stairs (Flores et al., 2016), illuminating the interconnected nature of individual characteristics, travel demands, and mobility tools.

From global reporting across the 35 studies, we get a sense of the wide age range of participants, from 18 to 82 years of age, and some basic information about visual status. There is little mention of people who may have additional challenges in terms of disability or mobility. This finding represents a gap in our research as the bulk of the population, as they age, have one or more disabilities (Gray et al., 2016). On the other hand, the youngest participant was 18 years of age. One research team mentioned the need to include youth in future wayfinding studies, which could be particularly meaningful for young adults who are honing their wayfinding skills to explore college and career goals (Ahmetovic et al., 2016). Consider the Individuals with Disabilities Education Act (IDEA), which states that school-age students with disabilities need to start planning for transition no later than their 16th year of age, earlier if determined appropriate by the current Individualized Education Program team (U.S. Department of Education, 2017). Concurrently, the U.S. Census Bureau (n.d.) defines the “elderly” population as 65 years old or older. Twelve studies (34%) in our review included participants over 70. None of the studies within this review reported including deafblind participants, although when someone has a significant visual impairment and concomitant hearing loss they may be recognized as being deafblind because of the combined effects of vision and hearing loss in accessing information, communication, and full participation in community life (Jaiswal et al., 2018). This is a highly underrepresented population in the research and, as demographics trend towards people living longer, there will be more people who experience vision and hearing loss (Perfect et al., 2019; Wittich et al., 2021).
The exclusion of individuals with vision loss and additional disabilities not only fails to represent the population but also limits what we can know about the application of wayfinding tools.

Technologies and Devices Evaluated

Researchers within the corpus of studies used mixed-methods designs as well as quantitative approaches to evaluate the efficiency and usability of technologies during routes. Technologies supported a blend of virtual and physical interactions with the traveler for the cognitive and bodily aspects of wayfinding. Our systematic review found that the positioning of these wayfinding devices on the traveler’s body had a profound impact on the way that participants were able to use information dynamically. Travelers, at times, expressed an interest in holding the wayfinding device in their hands in order to receive real-time haptic feedback during wayfinding tasks (Sato et al., 2019). Other participants desired a hands-free option during dynamic tasks (Abu Doush et al., 2016; van der Bie et al., 2019a; Saha et al., 2019). Some research teams provided ways for participants to hold the phone in their hands, release it on a lanyard, or store it within a belt around their waists (Balata et al., 2018; Sato et al., 2019). Still other travelers expressed challenges in managing the position of the device while traveling (Röijezon et al., 2019) or found that positioning errors of the device hindered navigation (Flores et al., 2016; Nair et al., 2018; Yoon et al., 2019). Guerreiro et al. (2019) described the tradeoffs travelers made when it was uncomfortable to hold the phone in their hands while using escalators, but then experienced a reduction in location accuracy with the phone in their pocket or on a lanyard.

For some, the use of haptic information delivered through belts was combined with additional information to create richer navigation support, “like gaming” (Caraiman et al., 2019). Bone conduction ear buds allowed others to continue to receive ambient environmental sound and specific auditory navigation information from their wayfinding devices (Ohn-Bar et al., 2018; van der Bie et al., 2019a; Sato et al., 2019). The goodness of fit between the technology and an individual’s body in motion cannot be overlooked, particularly for individuals who are visually impaired and using long canes or guide dogs for safety and navigation.

The types of sensory support afforded by the technologies are also worth noting because of the way travelers confirm intended routes using multiple forms of environmental information. Ross and Kelly (2009) discuss how travelers use a “combination of cues” when “orienting a route” such as “residual vision, sounds, smells, temperature sensations, air movement, and proprioceptive-haptic sensations” (p. 229). Giudice et al. (2019) consider cues as important for user interfaces to include in order to “adopt universal design.” They propose that all user interfaces should include visual, speech, spatialized audio, and haptic or vibration cues. In Zhao et al.’s (2020) work with Microsoft’s HoloLens, a smartwatch was used to allow for easy toggling, while the person received audio, visual, and haptic feedback for wayfinding. Roentgen et al. (2011) compared the preferred sensory-related features of four wayfinding devices with participants, finding that these elements were meaningful according to the specific needs of the person and to their travel tasks.

From a cognitive load perspective, it is not surprising that real-time route information notably increases the rate of success among participants who are visually impaired or blind (Ko and Kim, 2017; Rodriguez-Sanchez and Martinez-Romo, 2017; Bai et al., 2018; Balata et al., 2018; Giudice et al., 2019). When information is provided in real time, travelers with visual impairments prefer landmark-based information (Balata et al., 2018), egocentric directions (Giudice et al., 2019), and tailored guidance (Rodriguez-Sanchez and Martinez-Romo, 2017). Such findings are in alignment with previous studies that have looked at the temporal aspects of just-in-time support when navigating (Kalia et al., 2010; Ganz et al., 2012). Another theme from the systematic review was the impact of apps on reducing or sometimes increasing cognitive load while traveling routes. In some instances, participants voiced that the app was easy for them because the information from the device reduced what they needed to remember along an indoor route (Zaib et al., 2019). While most participants found the level of environmental information offered by the apps helpful, some individuals reported an increase in their cognitive load while using the devices (van der Bie et al., 2019b; Sato et al., 2019).

Environments, Settings, and Wayfinding Tasks

In Swobodzinski and Parker’s original examination of the literature, very few studies included real-world routes or participants with visual impairments. By design, our research team sought studies where a real-world environment was the context for the routes and wayfinding tasks. Our systematic investigation creates windows into the realities of human-centered design within dynamic travel environments. In one study, which was set in the city of Prague, the challenges of conducting research in authentic contexts were richly described. Researchers observed the ways in which participants were approached by other pedestrians or were distracted by sudden noises during the wayfinding task (Balata et al., 2016). Rather than detracting from the research, reviewing this study in depth helped the coding team apply our exclusionary criteria when we examined studies that were conducted in more controlled environments. For example, one study that we excluded met all other inclusionary criteria and described a practical and well-designed route-based application that enabled participants who were blind or had low vision to retrace their routes, but according to the researchers it was conducted in a controlled setting, where there was less chance of encountering other people (Flores and Manduchi, 2018a). After deeper review, discussion, and consensus, the coding team reluctantly excluded the study. Wayfinding tasks are complex because of these uncontrolled but regularly encountered travel distractions, and a traveler’s evaluation of the wayfinding device’s utility is more true to life when researchers allow for in situ consideration of the way the device supports travelers.
Several studies that we considered were also excluded because of their focus on obstacle detection or identification tasks (Patil et al., 2018), their emphasis on shopping tasks for people with low vision (Szpiro et al., 2016), or their focus on participants’ preferences for descriptive annotations of landmarks (Gleason et al., 2018) rather than wayfinding. Although such studies provide invaluable insights and include people with visual impairments in real-world settings, we were guided by the stated purposes of the researchers who led the studies and our desire to stay true to our investigation of wayfinding.