- ¹Computer Science, Gallogly College of Engineering, The University of Oklahoma, Norman, OK, United States

- ²Engineering Pathways, Gallogly College of Engineering, The University of Oklahoma, Norman, OK, United States

Background: Deep learning (DL), a subset of machine learning and artificial intelligence (AI), is transforming engineering by addressing complex problems with innovative solutions. Given its growing influence, a comprehensive review of current trends, applications, and research gaps across engineering disciplines is needed to understand its potential, limitations, and educational implications.

Purpose: This study systematically explores the state, trends, and future directions of deep learning applications in engineering, along with their potential educational implications. The primary research question is: “What are the current applications, trends, and research gaps in the use of deep learning across engineering disciplines, and how can these insights guide future innovations in engineering practice?”

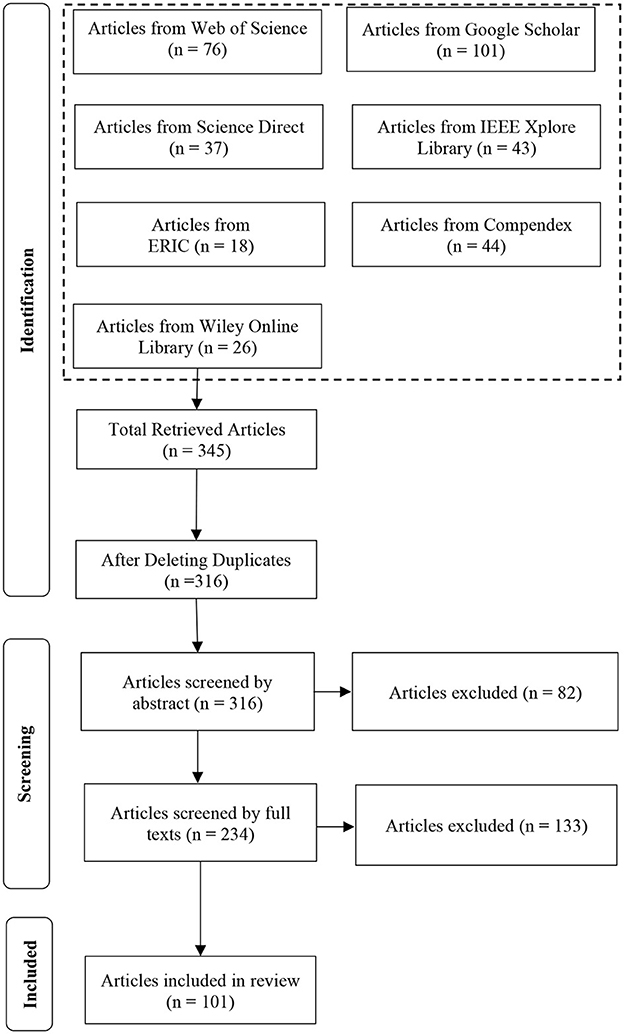

Method: A systematic literature review (SLR) was conducted in three phases: identification, screening, and synthesis. Articles were retrieved using the search term “deep learning + engineering” from databases like IEEE Xplore, Web of Science, and Google Scholar. After removing duplicates from an initial pool of 346 articles, abstracts and full texts were screened based on predefined exclusion criteria, narrowing the selection to 101 relevant studies. The synthesis categorized data into four themes: strategic methodologies, practical implementation, system optimization, and emerging applications.

Results: The analysis revealed DL's significant impact on engineering disciplines, especially mechanical and electrical engineering, with applications such as predictive maintenance and automated grid management. Key trends include strategic deep learning model development, practical evaluation frameworks, and the optimization of efficiency. However, research gaps remain in scalability, model interpretability, and real-world implementation.

Conclusions: This study underscores DL's transformative potential in engineering while identifying critical research gaps and opportunities. It provides a framework for future research and industry applications, emphasizing the importance of strategic innovation and interdisciplinary collaboration to advance deep learning in engineering.

Introduction

In today's rapidly evolving technological landscape, deep learning (DL), a subset of machine learning (ML) and artificial intelligence (AI), has gained significant traction across multiple disciplines. Deep learning mimics the way the human brain processes information, utilizing neural networks to analyze large and complex datasets with impressive accuracy and efficiency (Sarker, 2021). This ability has enabled deep learning to excel in areas like natural language processing and image recognition (LeCun et al., 2015). However, despite its successes, the application of deep learning in engineering fields has yet to be fully explored, leaving room for deeper analysis.

While DL's role in fields such as mechanical and electrical engineering is growing, existing literature often lacks the specificity or depth needed to capture the evolving nature of deep learning in these disciplines. Previous reviews of AI in engineering focus on general approaches, often leaving out the distinct challenges and innovations brought about by deep learning technologies. This gap highlights the need for a comprehensive review of how deep learning is currently being applied in engineering, as well as the future directions for its use in this field.

While this study focuses on deep learning applications in engineering, it is important to recognize that other AI-driven approaches, such as reinforcement learning and natural language processing, have also been applied in educational and engineering contexts. However, deep learning stands out for its ability to automatically extract hierarchical features from complex datasets without the need for manual feature engineering, offering advantages in fields like image analysis, predictive modeling, and automated decision-making. This capability distinguishes DL from traditional AI methods that often require more explicit programming and domain-specific heuristics.

This study aims to address this gap by answering the question: “What are the current applications, trends, and research gaps in the use of deep learning across engineering disciplines, and how can these insights guide future innovations in engineering practice?” Understanding deep learning's evolving role in engineering is critical for advancing innovation, improving system efficiencies, and solving complex real-world challenges across diverse disciplines. Specifically, this research will explore the algorithms, frameworks, and designs employed in deep learning studies, as well as the methods used for data collection and analysis. It will also identify key research gaps and propose potential directions for future investigations. The significance of this research lies in its potential to influence both academic and practical applications of deep learning in engineering. By providing an overview of the current landscape, this study aims to contribute to a deeper understanding of how deep learning can enhance problem-solving, innovation, and efficiency in various engineering fields.

Methods

This study's research framework was adapted from the model proposed by Borrego et al. (2014). The research process was divided into three phases (Identification, Screening, and Synthesis), which are outlined in Figure 1 and described below:

Identification

In the first phase, relevant articles were retrieved from multiple databases using the search term “deep learning + engineering”. The databases were IEEE Xplore, Compendex, Scopus, Google Scholar, Wiley Online Library, Web of Science, and ERIC. Retrieved articles were merged into a single dataset, and duplicates were removed to create a distinct pool of publications for further analysis. A total of 345 articles were initially retrieved using this search term, and this number was reduced to 316 after removing duplicates.
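The merge-and-deduplicate step described above can be sketched in a few lines. This is only an illustration under stated assumptions: the record fields (`title`, `doi`, `source`) and the matching rule (DOI first, normalized title as a fallback) are hypothetical and are not taken from the study, which does not report how duplicates were detected.

```python
import re


def normalize_title(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each DOI, or for each normalized title when no DOI exists."""
    seen: set[str] = set()
    unique = []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        key = doi if doi else normalize_title(rec["title"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


# Hypothetical records exported from different databases (not the study's data).
records = [
    {"title": "Deep Learning for Crack Detection", "doi": "10.1000/abc", "source": "Scopus"},
    {"title": "Deep learning for crack detection.", "doi": "10.1000/ABC", "source": "Web of Science"},
    {"title": "Traffic Flow Prediction", "doi": None, "source": "Google Scholar"},
    {"title": "traffic flow  prediction", "doi": "", "source": "ERIC"},
]
unique_records = deduplicate(records)
print(len(records), "->", len(unique_records))
```

A rule this simple would miss a duplicate pair in which only one copy carries a DOI, so in practice a manual check of the merged pool would still be needed.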

Screening

Following the identification phase, articles were screened to determine their relevance to the research topic. Abstracts were initially reviewed against a set of exclusion criteria to filter out irrelevant publications. These eight exclusion criteria are:

• EC1: Articles published before 2014 were excluded, as this year marked a major shift in deep learning with the rise of modern architectures like CNNs. Limiting the scope ensures relevance to current DL practices.

• EC2: Articles not published in English were excluded.

• EC3: Articles that are not peer-reviewed conference or journal articles were excluded.

• EC4: Articles that focus on other AI technologies such as traditional machine learning, without specific emphasis on deep learning, were excluded.

• EC5: Articles that do not explicitly focus on the application of deep learning in engineering fields were excluded.

• EC6: Articles that do not mention specific deep learning algorithms or frameworks used in their research were excluded.

• EC7: Books and theses were excluded to focus on peer-reviewed studies, ensuring methodological rigor and consistent empirical validation.

• EC8: Unfinished articles were excluded.

Subsequently, the full texts of the remaining articles were examined against the same criteria, further reducing the pool. A total of 101 articles remained and proceeded to the final phase of the SLR process.
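One way to keep screening decisions auditable is to record, for every article, which exclusion criteria it failed. The sketch below assumes that a reviewer has already judged each article and entered the outcome as simple fields; the `Article` class, its field names, and the sample entries are hypothetical, and EC3 and EC7 are merged into one check because both concern publication type.

```python
from dataclasses import dataclass


@dataclass
class Article:
    title: str
    year: int
    language: str
    peer_reviewed_paper: bool      # journal or conference paper, not a book or thesis
    dl_focused: bool               # emphasizes deep learning rather than other AI methods
    engineering_application: bool  # applies deep learning in an engineering field
    names_dl_method: bool          # names a specific algorithm or framework
    complete: bool                 # finished article


# Each rule returns True when the article should be EXCLUDED.
EXCLUSION_RULES = {
    "EC1": lambda a: a.year < 2014,
    "EC2": lambda a: a.language.lower() != "english",
    "EC3/EC7": lambda a: not a.peer_reviewed_paper,
    "EC4": lambda a: not a.dl_focused,
    "EC5": lambda a: not a.engineering_application,
    "EC6": lambda a: not a.names_dl_method,
    "EC8": lambda a: not a.complete,
}


def failed_criteria(article: Article) -> list[str]:
    """Return the codes of all criteria that exclude the article (empty list means retained)."""
    return [code for code, rule in EXCLUSION_RULES.items() if rule(article)]


# Hypothetical reviewer judgments for two candidate articles.
candidates = [
    Article("CNN-based crack detection", 2017, "English", True, True, True, True, True),
    Article("SVM fault diagnosis", 2012, "English", True, False, True, False, True),
]
retained = [a for a in candidates if not failed_criteria(a)]
for a in candidates:
    print(a.title, failed_criteria(a))
```

Applying the same rules at both the abstract and the full-text stage mirrors the two-pass screening described above; the judgments themselves remain a human task.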

Synthesis

Articles that passed the full-text screening proceeded to the synthesis phase, in which the final articles were reviewed comprehensively. Key details such as the title, publication year, research questions, research design, sampling strategies and sizes, data collection methods, and analysis techniques were compiled into a document. Initially, this was done manually by reading each article and extracting the relevant information. Later, an AI tool, Elicit AI, was used to assist with information extraction (Elicit: The AI Research Assistant, 2024). With targeted extraction prompts, the tool retrieved detailed and useful information from each article, producing outputs comparable in quality to the manual process but in less time. The authors manually reviewed the outputs generated by Elicit AI to ensure their accuracy and reliability. To complement this qualitative synthesis, which captured key patterns and thematic insights, a formal bibliometric analysis covering citation frequency and journal impact metrics was also conducted. These quantitative techniques can inform future research by providing a more structured assessment of scholarly influence and topic evolution. The extracted details were analyzed to answer the overarching question, “What are the current applications, trends, and research gaps in the use of deep learning across engineering disciplines, and how can these insights guide future innovations in engineering practice?”

Findings

Publication type and publication outlet of included articles

Among the 101 articles reviewed for this study, 23.3% were published as conference proceedings, while 76.7% were journal articles. The conference papers were predominantly published in IEEE-sponsored conferences, such as the 2019 International Conference on Deep Learning and Machine Learning in Emerging Applications (Deep-ML), 2021 International Symposium on Artificial Intelligence and its Application on Media (ISAIAM), and 2022 International Conference on Engineering Education and Information Technology (EEIT). Collectively, these IEEE venues accounted for 13.6% of the total publications.

The remaining 76.7% of journal articles were distributed across various outlets, with notable contributions from Computer-Aided Civil and Infrastructure Engineering (4.9%), IEEE Access (4.9%), Chemical Engineering Science (2.9%), Neural Networks (2.9%), and Journal of Neural Engineering (2.9%). Other journals, such as IEEE Transactions on Cybernetics, Applied Energy, Automation in Construction, and Sensors, each contributed 1.9–2.9% of the total reviewed articles. These percentages highlight a strong emphasis on journal publications, reflecting the in-depth and scholarly nature of deep learning research in engineering and related fields.

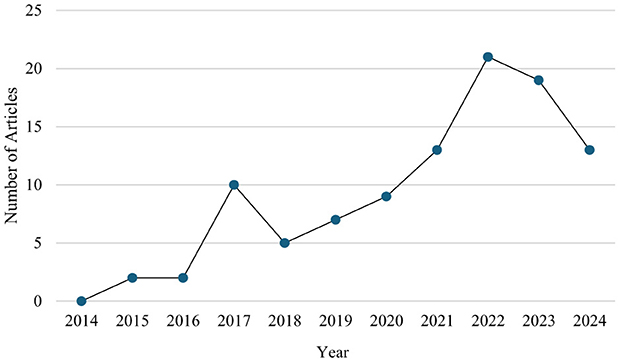

Number of publications in deep learning by year

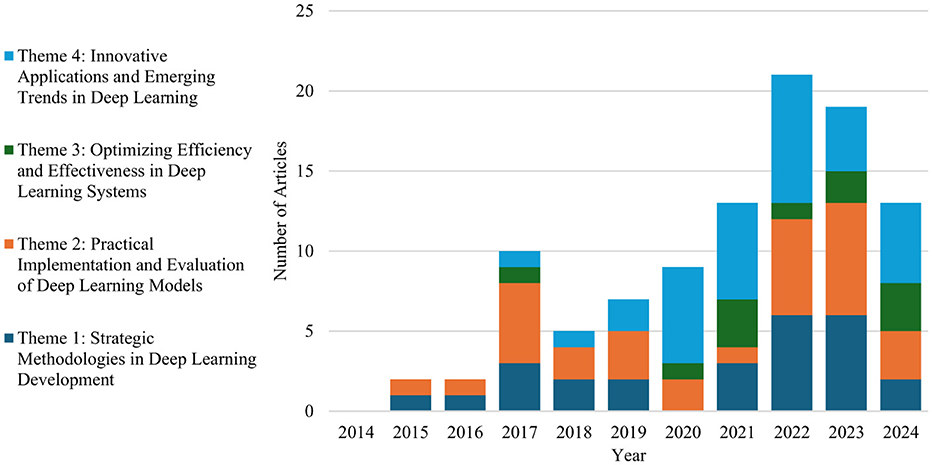

From 2014 through 2024, there has been a notable upward trend in the number of deep learning articles published per year, with a peak in 2022 (Figure 2). The number of publications started modestly between 2014 and 2016, followed by a sharp increase in 2017, indicating a growing interest in deep learning research. Although there was a slight dip in 2018, the trend continued to rise steadily from 2019 to 2022, reaching its highest point. In the subsequent years, a decline is observed in 2023 and 2024, though publication numbers remain higher than earlier years. This trend reflects the increased adoption and exploration of deep learning technologies during the past decade, with a slight tapering off in recent years. The observed decline in the number of articles in 2024 is attributed to the authors concluding their data collection during the summer of that year, and it does not fully represent the total articles published in 2024.

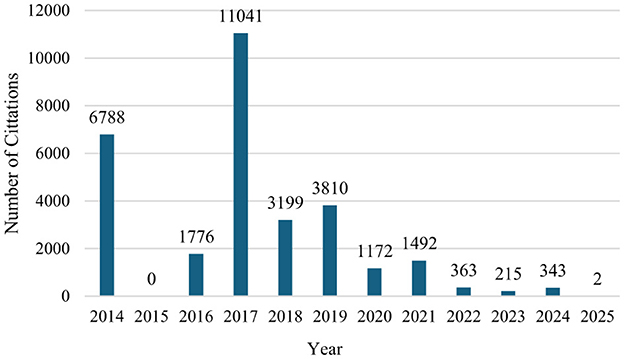

Bibliometric analysis

Figure 3 illustrates the temporal distribution of citations from 2014 to 2025. The data reveals a pronounced peak in 2017, with 11,041 citations, suggesting the publication of several high-impact studies during that year. Other notable years include 2014 and 2019, which garnered 6,788 and 3,810 citations respectively, indicating sustained academic influence. From 2020 onward, a declining trend in citations is observed, with more recent years such as 2023 and 2024 showing markedly lower citation counts (215 and 343, respectively), which is expected due to the citation time lag typically associated with newer publications. The minimal citation count in 2025 (only 2 citations) further supports this temporal lag. Interestingly, 2015 registered no citations, potentially indicating either a gap in publication or indexing for that year. Overall, the chart highlights the temporal dynamics of scholarly impact, with a concentration of influential publications between 2014 and 2019.

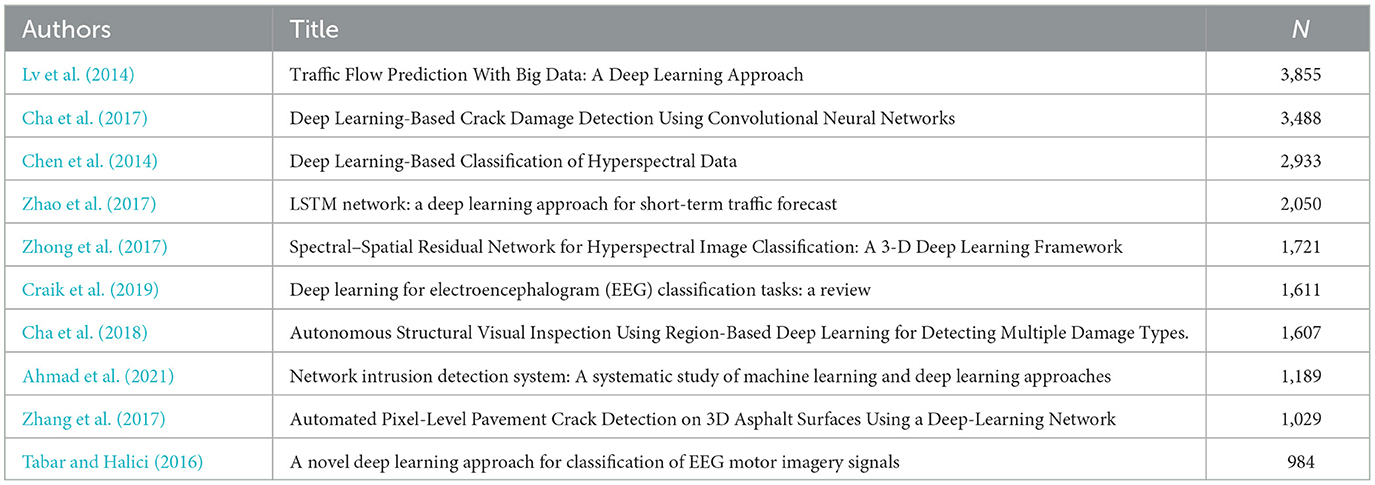

Table 1 presents the ten most cited articles among the sampled studies, highlighting key contributions to the application of deep learning in various engineering domains. The most highly cited work is by Lv et al. (2014), which focuses on traffic flow prediction using big data and deep learning, accumulating 3,855 citations. This is followed by Cha et al. (2017), whose study on crack damage detection using convolutional neural networks received 3,488 citations. Other notable articles include Chen et al. (2014) on hyperspectral data classification and Zhao et al. (2017) on short-term traffic forecasting using LSTM networks, cited 2,933 and 2,050 times, respectively. The list features significant advancements in structural health monitoring, image classification, EEG analysis, and cybersecurity, underscoring the diverse applicability and growing influence of deep learning across disciplines. The high citation counts reflect the substantial impact these studies have had in shaping subsequent research and technological development in their respective fields.

Additionally, the impact factors of the journals in which the sampled articles were published ranged from 0.7 to 34.4. A review of the sampled studies reveals that several high-impact journal publications have significantly contributed to the scholarly discourse on deep learning in engineering applications. As summarized in Table B, at least 18 studies were published in journals with impact factors exceeding 9.0, underscoring the quality and visibility of the research. Notably, multiple articles appeared in Computer-Aided Civil and Infrastructure Engineering and Automation in Construction, both with an impact factor of 9.6, highlighting their influence within the civil and structural engineering community. Additionally, Applied Energy (IF: 11.45), IEEE Journal of Selected Topics in Signal Processing (IF: 11.48), and Composites Part B: Engineering (IF: 12.7) featured prominently in energy forecasting, signal processing, and materials engineering applications of deep learning. The highest impact factor in the dataset was recorded for an article published in IEEE Communications Surveys & Tutorials (IF: 34.4), reflecting the widespread relevance of deep reinforcement learning in network traffic engineering. Collectively, these publications demonstrate that deep learning research in engineering not only spans diverse domains but also garners attention in top-tier, multidisciplinary journals.

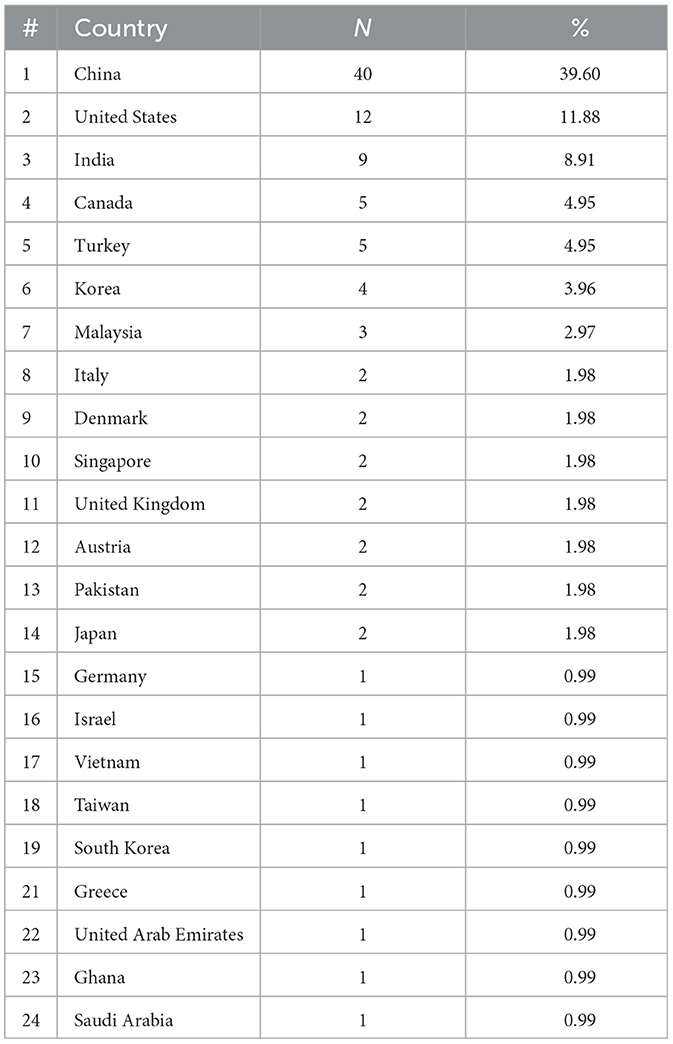

Country affiliation of first author

Table 2 shows that the articles selected for this review featured first authors from 24 countries. The majority of first authors were from China (39.6%), followed by the United States (11.9%) and India (8.9%). Canada and Turkey each contributed 4.95%, while Korea accounted for 3.96%. Malaysia contributed 2.97%, with Italy, Denmark, Singapore, and 6 other countries each representing 1.98%. An additional 9 countries accounted for 0.99% each. These results indicate a significant contribution from China and suggest that the predominance of articles from English-speaking or widely published countries may reflect publication trends or accessibility within our search criteria.

Notably, China and the United States dominate the authorship landscape, accounting for over 50% of the reviewed articles. This geographic concentration may influence research priorities, with China showing a strong focus on infrastructure and materials applications, while the U.S. contributions emphasize cybersecurity, automation, and optimization. The comparative scarcity of papers from other regions suggests a need for more globally inclusive research and may indicate disparities in funding, access to technology, or publishing networks.

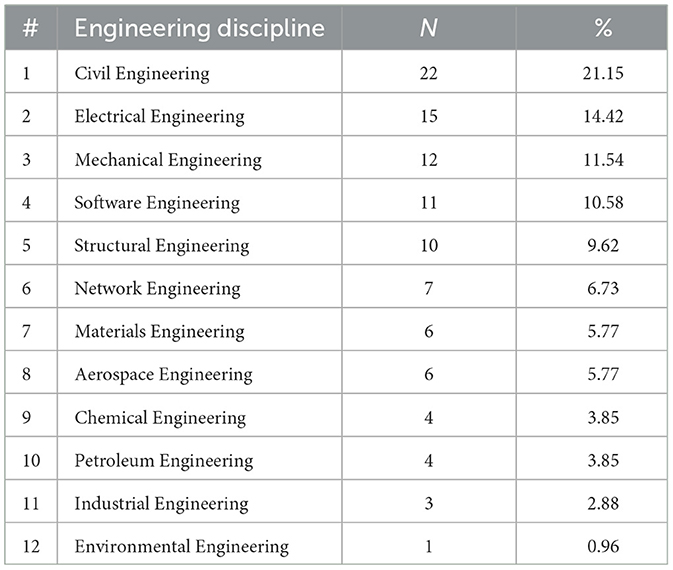

Deep learning applications by discipline

Table 3 lists the engineering disciplines studied across the 101 articles that explore the application of deep learning techniques. Civil engineering (21.57%) was the most frequently studied discipline, followed by electrical engineering (14.71%), mechanical engineering (11.76%), software engineering (10.78%), and structural engineering (10.78%). Additional disciplines such as network engineering (6.86%), materials engineering (5.88%), and aerospace engineering (5.88%) also contributed to the research, with smaller contributions from fields like chemical engineering, petroleum engineering, and industrial engineering. Only one article (0.98%) focused on environmental engineering. The diversity of engineering disciplines shown in Table 3 highlights how broadly deep learning is being applied across engineering domains. From more traditional areas such as civil and mechanical engineering to newer fields like network engineering, deep learning is proving its worth. This shows how versatile the technology is in helping to tackle complex problems across engineering specialties.

Table 3. Breakdown of engineering disciplines represented in the research articles applying deep learning techniques, including the count (N) and percentage (%) of articles in each discipline.

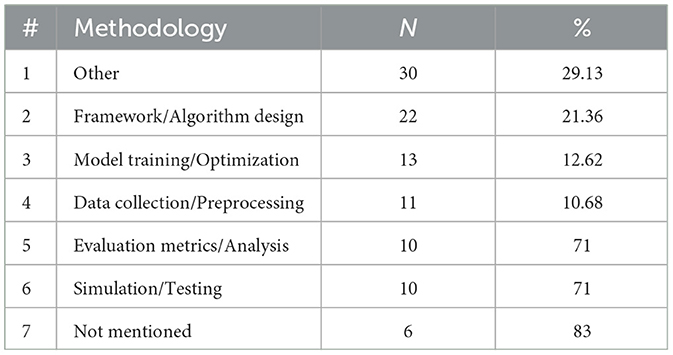

Research methodology

Table 4 categorizes the methodologies researchers employed in their studies. The most common categories were framework/algorithm design (21.36%), where new models or frameworks were created or adapted for specific applications, and model training/optimization (12.62%), which involved techniques to improve model performance through tuning or optimization. Data collection/preprocessing (10.68%) and evaluation metrics/analysis (9.71%) also played significant roles in various studies. The "other" category (30.10%) includes methodologies that do not fit neatly into the main categories, encompassing unique, specialized, or highly specific approaches that are less common or more difficult to generalize. These methods often involve niche technical approaches, variations of deep learning that were not fully captured by the primary categories, or techniques used in very specific contexts or applications.

Table 4. Breakdown of methodologies used in the reviewed studies, categorized by the most common research approaches and techniques employed.

Thematic analysis

This section presents the four key themes that emerged following the screening and analysis of the 101 sampled articles. These themes represent the primary areas of focus within the reviewed literature and were identified by grouping related codes. For each theme, we provide a description and two exemplar studies, chosen because they closely aligned with the topics within the theme. Each theme also includes practical implications for professionals in the deep learning field and research implications for future studies in this area. This thematic analysis structure is informed by approaches used in recent systematic literature reviews (Harris and Kittur, 2024; Kittur et al., 2024; Nguyen and Kittur, 2024).

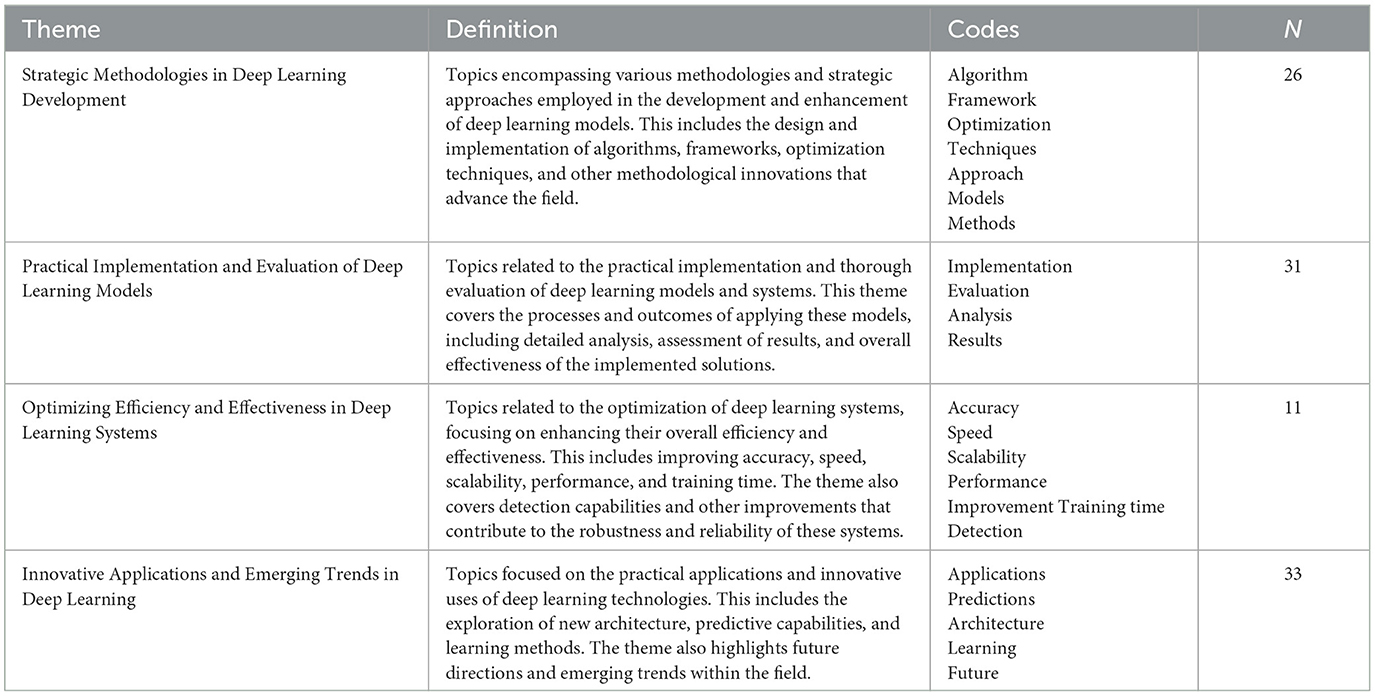

Coding and thematic grouping

Once the information was extracted from the articles, the data analysis phase began. Through a comprehensive review of the articles and extracted data, a total of 23 codes were identified, which were then grouped into four overarching themes. These themes, along with their associated codes and definitions, are presented in Table 5.

The distribution of articles across the four themes provides valuable insights into the progression and focus of deep learning research over the years. By examining these themes through time, we can observe shifts in the field's priorities, emerging trends, and areas that have garnered increased attention. Figure 4 illustrates the temporal distribution of articles corresponding to each theme, highlighting key periods of growth and evolution within the deep learning domain.

Figure 4. Distribution of articles by theme (2014–2024), highlighting trends and evolving research focus in deep learning.

Theme 1: strategic methodologies in deep learning development

This theme focuses on the various strategies and methodologies used to develop and improve deep learning models. It encompasses a broad range of topics, from algorithm design and framework creation to optimization techniques aimed at advancing the field. Of the 101 reviewed articles, 26 fell under this theme, highlighting the critical role of structured approaches and innovative methods in pushing deep learning forward.

Exemplar study 1

The first exemplar study presents a strategic approach to enhancing the accuracy of deep learning models for non-stationary time series prediction (Li et al., 2022). In this complex task, traditional statistical methods and existing machine-learning models often fall short. Li et al. (2022) propose a novel framework that combines time series decomposition and different techniques to extract more useful features, which are then utilized by a deep learning model. This approach involves the application of first-order and second-order differencing to reduce volatility, followed by the decomposition of the time series into periodic, trend, and residual components. The framework employs a two-stage training process, with a GRU network predicting the trend and residual components, and an FCN network refining the predictions. The study's findings indicate that this methodology significantly outperforms traditional methods like ARIMA and other deep learning models, achieving a 43% decrease in MSE, a 24% decrease in RMSE, a 35% decrease in MAE, and a 3% increase in R-squared. This research exemplifies the theme through its innovative use of algorithmic design, optimization techniques, and methodological advancements. It contributes to the ongoing optimization of deep learning models for complex, non-stationary time series data.
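To make the preprocessing and evaluation terms in this summary concrete, the following sketch shows first-order and second-order differencing and the four reported error measures (MSE, RMSE, MAE, and R-squared). It is not the implementation of Li et al. (2022): the function names and the toy numbers are assumptions for illustration, and the decomposition and GRU/FCN stages are omitted.

```python
import math


def difference(series, order=1):
    """Apply differencing `order` times, where each pass computes x[t] - x[t-1]."""
    out = list(series)
    for _ in range(order):
        out = [b - a for a, b in zip(out, out[1:])]
    return out


def regression_metrics(y_true, y_pred):
    """Compute MSE, RMSE, MAE, and R-squared for paired observations and predictions."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - (mse * n) / ss_tot
    return {"mse": mse, "rmse": math.sqrt(mse), "mae": mae, "r2": r2}


# Toy series with a quadratic trend (illustrative values only).
series = [1, 4, 9, 16, 25, 36]
first = difference(series, order=1)   # linear trend remains
second = difference(series, order=2)  # trend removed, series is constant

# Hypothetical predictions compared against hypothetical observations.
metrics = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.5, 5.0, 7.5, 9.0])
print(first, second, metrics)
```

The toy series shows why differencing can help: one pass turns a quadratic trend into a linear one, and a second pass leaves a constant series that is far easier to model.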

Exemplar study 2

In their study, Tabar and Halici (2016) address a significant gap in the application of deep learning within brain-computer interface (BCI) systems, an area in which traditional methods have often fallen short. They propose an innovative solution leveraging convolutional neural networks (CNNs) combined with stacked autoencoders (SAEs) to enhance the classification of EEG motor imagery signals—a critical component for improving BCI performance. By introducing a novel data representation that integrates time, frequency, and location information from EEG signals, the researchers use a 1D CNN to capture activation patterns, which are further refined by the SAE to boost classification accuracy. Their approach outperforms previous state-of-the-art methods, achieving a 9% improvement in kappa value on the BCI Competition IV dataset 2b and superior accuracy on the BCI Competition II dataset III. Despite these promising results, the study also highlights some limitations, including the small training dataset and the limited number of electrodes, leaving room for future research to explore larger datasets and more complex CNN architectures. This study is a strong example of the theme, demonstrating how innovative algorithmic approaches can push the boundaries of BCI applications, particularly in enabling faster, real-time classification for practical use (Tabar and Halici, 2016).

Research implications

Regarding the theme of strategic methodologies in deep learning development, future research could focus on advancing algorithm design to enhance model robustness and adaptability across diverse application domains. For instance, exploring novel optimization techniques, such as those employed in IoT-enabled smart manufacturing, could uncover ways to improve data processing pipelines and integrate domain-specific insights to refine model accuracy and scalability (Shah et al., 2020). Similarly, studies investigating the implementation of hybrid frameworks, like gated recurrent units combined with fully connected networks, could provide valuable strategies to mitigate issues such as lag and overfitting in non-stationary time series predictions, pushing the boundaries of temporal data modeling (Li et al., 2022).

Another promising direction involves the development of spectral-spatial feature extraction frameworks, exemplified in hyperspectral image classification, to address challenges related to high-dimensional data while maintaining computational efficiency (Zhong et al., 2017). Additionally, research into architectural innovations for complex tasks, such as those applied to engineering drawings, can inspire the creation of targeted methodologies that effectively handle intricate datasets and domain-specific requirements (Bhanbhro et al., 2022). These investigations collectively deepen our understanding of how structured approaches and innovative strategies drive the evolution of deep learning methodologies, ultimately fostering advancements in model performance and application versatility.

Practice implications

Practical applications of the methodologies discussed under Theme 1 suggest several promising directions for real-world implementation. For instance, leveraging deep learning frameworks for symbol detection in complex engineering drawings can streamline processes in fields like architecture and industrial design by automating the interpretation of intricate diagrams (Bhanbhro et al., 2022). Similarly, advancements in EEG motor imagery classification using deep learning highlight potential applications in brain-computer interface systems, which could improve accessibility for individuals with disabilities through more accurate signal recognition (Tabar and Halici, 2016).

The development of frameworks for non-stationary time series prediction addresses challenges in dynamic environments such as energy systems and weather forecasting, offering practical solutions to lag and prediction accuracy issues (Li et al., 2022). In smart manufacturing, deep learning paired with effective feature engineering enables enhanced data processing and decision-making, paving the way for IoT-driven production systems that are more efficient and adaptive (Shah et al., 2020). Finally, the spectral-spatial residual network designed for hyperspectral image classification provides a foundation for advancements in remote sensing and agricultural monitoring by improving the analysis of high-dimensional data (Zhong et al., 2017). These practical uses illustrate the transformative potential of structured methodologies in deep learning, driving innovation across diverse industries and addressing real-world challenges effectively.

Theme 2: practical implementation and evaluation of deep learning models

This theme delves into the hands-on application and rigorous assessment of deep learning models across various contexts. With 31 articles included, the focus is on how these models are applied, the processes involved in applying them, and how their performance is measured. The studies under this theme emphasize the practical challenges and successes in implementing deep learning systems, offering insights into the tools and techniques used to evaluate their overall effectiveness.

Exemplar study 1

The study by Chen et al. (2014) introduces a novel application of deep learning for hyperspectral data classification, emphasizing the practical challenges of feature extraction and classification accuracy. The authors argue that existing methods fail to effectively extract deep, hierarchical features, leading to less robust classification outcomes. By employing autoencoders (AEs) and stacked autoencoders (SAEs) to learn deep spectral features in an unsupervised manner, this study pioneers the use of deep learning for hyperspectral data. The methodology combines these deep spectral features with spatial-dominated features, forming a joint spectral-spatial deep learning framework. The key findings indicate that this deep learning-based approach significantly outperforms traditional methods like support vector machines (SVMs), particularly when incorporating both spectral and spatial information. However, the study also notes that the deep learning approach is computationally expensive and time-consuming, which may limit its practical applications. The authors recommend further exploration of this joint framework, highlighting its potential to advance the field of hyperspectral data classification and opening new avenues for research (Chen et al., 2014). This study exemplifies the effective application and thorough evaluation of deep learning techniques in a complex real-world scenario.

Exemplar study 2

The Cha et al. (2017) study addresses the challenges associated with traditional methods of structural health monitoring, particularly for large-scale civil infrastructure, where environmental effects and uncertainties can complicate detection. The authors propose a vision-based method using deep learning, specifically convolutional neural networks (CNNs), to detect cracks in concrete structures, providing a more robust and adaptable solution compared to conventional image processing techniques. The methodology involves collecting a dataset of 332 raw concrete surface images under various conditions, annotating them as “crack” or “intact,” and training a CNN classifier on a cropped image database. The trained CNN, coupled with a sliding window technique, demonstrated high accuracy (over 97%) in detecting cracks, even under challenging conditions such as strong lighting, shadows, and blur. However, the study also highlights limitations, such as the inability to detect internal structural features and the significant amount of training data required. The authors recommend expanding this approach to detect other types of structural damage and integrating it with autonomous drones for more comprehensive monitoring (Cha et al., 2017). This research showcases a practical application of CNNs in civil engineering, where thorough evaluation and adaptation of deep learning methods significantly enhance the detection of structural defects.
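The sliding window technique mentioned above can be illustrated independently of the network itself. In the sketch below, a trivial dark-pixel rule stands in for the trained CNN; that rule, the 6x6 toy image, and the window and stride values are all assumptions made for illustration and do not reproduce the settings of Cha et al. (2017).

```python
def sliding_windows(height, width, window, stride):
    """Yield the (top, left) corner of every square window that fits inside the image."""
    for top in range(0, height - window + 1, stride):
        for left in range(0, width - window + 1, stride):
            yield top, left


def placeholder_classifier(patch):
    """Stand-in for a trained CNN: report 'crack' when a patch holds at least two dark pixels."""
    dark = sum(1 for row in patch for px in row if px < 50)
    return dark >= 2


def scan(image, window, stride, classifier):
    """Classify each window of a grayscale image and return the corners flagged as cracked."""
    height, width = len(image), len(image[0])
    hits = []
    for top, left in sliding_windows(height, width, window, stride):
        patch = [row[left:left + window] for row in image[top:top + window]]
        if classifier(patch):
            hits.append((top, left))
    return hits


# Toy 6x6 grayscale image: bright surface with a dark diagonal line in the lower-right corner.
image = [[200] * 6 for _ in range(6)]
for i in range(3, 6):
    image[i][i] = 10

hits = scan(image, window=3, stride=3, classifier=placeholder_classifier)
print(hits)
```

Swapping `placeholder_classifier` for a real model's prediction function would leave the scanning logic unchanged, which is what lets a patch-level classifier be applied to images larger than its input size.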

Research implications

Future research should explore the integration of hybrid evaluation frameworks that merge explainability with high-performance deep learning techniques, addressing challenges in understanding model decisions and scalability. Studies like Gulmez et al. (2024) highlight the potential of Explainable Artificial Intelligence (XAI) in enhancing trust and interpretability in deep learning applications. Similarly, He et al. (2017) demonstrate the need for adaptive models capable of addressing diverse attack scenarios in real-time. Expanding these methodologies to broader contexts, such as environmental monitoring or transportation systems, could help address domain-specific challenges like variable data quality and high computational demands. Additionally, integrating dynamic learning mechanisms, inspired by Chen et al. (2014), may enable more robust feature extraction and improve performance under limited training data scenarios.

Practice implications

From a practical perspective, tools leveraging convolutional neural networks (CNNs) for specific tasks, such as real-time fault detection or damage assessment, can transform operational workflows. For instance, Cha et al. (2017) illustrate the effectiveness of CNNs in monitoring infrastructure, achieving high accuracy under diverse environmental conditions. The explainable XRan framework (Gulmez et al., 2024) offers actionable insights into ransomware detection, potentially enhancing cybersecurity defenses through transparent decision-making processes. Moreover, the methods for spatio-temporal data analysis reviewed by Wang C. et al. (2022) and Wang S. et al. (2022) highlight scalable approaches for urban planning and public safety. Tailoring these applications to address domain-specific needs, such as predictive maintenance in energy grids or real-time alerts in transportation systems, could significantly advance the adoption of deep learning models in critical sectors.

Theme 3: optimizing efficiency and effectiveness in deep learning systems

Eleven articles fall under the theme of optimizing efficiency and effectiveness in deep learning systems, emphasizing their transformative role across industries. From advancements in fault diagnosis, traffic engineering, and cybersecurity to innovations in feature engineering and overfitting mitigation, these works demonstrate how deep learning optimizes processes and addresses complex challenges. By solving problems in areas like financial fraud detection, communication systems, and industrial applications, the theme underscores deep learning's growing influence and its potential to shape the future of technology and industry.

Exemplar study 1

The 2020 study by Zhai and Qiao addresses the critical issue of prolonged training times in deep learning models, particularly within manufacturing fault diagnosis. The authors introduce an adaptive learning rate strategy that adjusts weight and bias parameters individually, enhancing both training efficiency and classification accuracy. Leveraging a Deep Belief Network (DBN) and stochastic gradient descent (SGD), this strategy notably reduces training time and reconstruction error, while also boosting the model's fault classification performance. Specifically, the adaptive learning rate for weights accelerates convergence, and the power exponential learning rate for bias improves classification accuracy. While these advances are promising, the authors suggest that the approach may require modification for low-dimensional datasets and advocate for further research into broader practical applications. This study exemplifies the theme by offering a concrete method to improve both the speed and accuracy of deep learning models, particularly in industrial settings where efficiency is critical.
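The idea of giving weights and biases separate learning-rate schedules can be sketched in plain Python. The example below is an illustration under stated assumptions, not the method of Zhai and Qiao (2020): it trains a one-variable linear model instead of a DBN, and both schedule formulas (`weight_lr`, which grows the rate while the gradient keeps its sign, and `bias_lr`, a decaying exponential in a power of the step count) are hypothetical stand-ins chosen only to show the mechanism.

```python
from typing import List, Sequence, Tuple


def weight_lr(prev_lr: float, grad: float, prev_grad: float,
              up: float = 1.2, down: float = 0.5, max_lr: float = 0.1) -> float:
    """Assumed rule: grow the rate while the gradient keeps its sign, shrink on a flip."""
    factor = up if grad * prev_grad >= 0 else down
    return min(prev_lr * factor, max_lr)


def bias_lr(initial_lr: float, step: int, decay: float = 0.9, power: float = 0.5) -> float:
    """Assumed decaying schedule: initial_lr * decay ** (step ** power)."""
    return initial_lr * decay ** (step ** power)


def mse_and_grads(w: float, b: float, xs: Sequence[float],
                  ys: Sequence[float]) -> Tuple[float, float, float]:
    """Mean squared error of y = w*x + b and its gradients with respect to w and b."""
    n = len(xs)
    errors = [w * x + b - y for x, y in zip(xs, ys)]
    loss = sum(e * e for e in errors) / n
    grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
    grad_b = 2 * sum(errors) / n
    return loss, grad_w, grad_b


def train(xs: Sequence[float], ys: Sequence[float],
          steps: int = 200) -> Tuple[float, float, List[float]]:
    """Gradient descent in which the weight and the bias follow different schedules."""
    w, b = 0.0, 0.0
    lr_w, prev_grad_w = 0.01, 0.0
    losses = []
    for step in range(steps):
        loss, grad_w, grad_b = mse_and_grads(w, b, xs, ys)
        losses.append(loss)
        lr_w = weight_lr(lr_w, grad_w, prev_grad_w)  # adaptive rate for the weight
        w -= lr_w * grad_w
        b -= bias_lr(0.1, step) * grad_b             # separately scheduled rate for the bias
        prev_grad_w = grad_w
    return w, b, losses


# Toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b, losses = train(xs, ys)
```

Capping the weight rate at `max_lr` keeps the toy problem stable; the cap and all default constants are illustrative choices, not values from the paper.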

Exemplar study 2

Elkhatib et al.'s (2024) study presents a cutting-edge approach to signal modulation classification, a challenge often encountered in telecommunications. The study proposes a hybrid deep learning architecture combining Convolutional LSTM (ConvLSTM) and Transformer-block neural networks, enabling the model to directly process raw signals without denoising. This approach significantly improves classification accuracy for signals across both low and high Signal-to-Noise Ratio (SNR) conditions, achieving 66% accuracy for low SNR signals and 93.5% for high SNR signals. Furthermore, the adaptive weighted focal loss function introduced in the study addresses class imbalance and underflow issues, optimizing the classification process even in challenging conditions. Although the study demonstrates substantial improvements, the authors recognize that the model's performance could be further refined, particularly for noisy signals. This research is a clear representation of the theme, showcasing how innovative deep learning architectures can bolster both the accuracy and robustness of complex classification tasks in real-world telecommunications scenarios (Elkhatib et al., 2024).
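For readers unfamiliar with focal loss, the following minimal Python sketch shows the standard class-weighted form, which scales cross-entropy by a per-class weight and by a factor that shrinks for well-classified samples. It is offered only as background: the adaptive weighting scheme of Elkhatib et al. (2024) is not reproduced here, and the function name, the `gamma` default, and the `eps` clamp used to guard against taking the logarithm of zero are assumptions of this sketch.

```python
import math
from typing import Sequence


def weighted_focal_loss(
    probs: Sequence[float],
    target: int,
    class_weights: Sequence[float],
    gamma: float = 2.0,
    eps: float = 1e-12,
) -> float:
    """Focal loss for one sample: -w_c * (1 - p_c) ** gamma * log(p_c).

    probs         predicted class probabilities (assumed to sum to 1)
    target        index of the true class
    class_weights per-class weights, e.g. larger for rare classes
    gamma         focusing parameter; gamma = 0 gives weighted cross-entropy
    eps           lower clamp on p_c so that log(0) is never evaluated
    """
    p_true = min(max(probs[target], eps), 1.0)
    return -class_weights[target] * (1.0 - p_true) ** gamma * math.log(p_true)


uniform_weights = [1.0, 1.0]
easy_loss = weighted_focal_loss([0.9, 0.1], 0, uniform_weights)  # confident and correct
hard_loss = weighted_focal_loss([0.1, 0.9], 0, uniform_weights)  # confident and wrong
```

Because the modulating factor `(1 - p_true) ** gamma` approaches zero for confident correct predictions, training effort concentrates on hard or minority-class samples.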

Research implications

Future research in optimizing efficiency and effectiveness in deep learning systems can explore hybrid methodologies and adaptive strategies for performance enhancement. For instance, Zhai and Qiao (2020) emphasize the importance of adaptive learning rate strategies in reducing training time and improving model accuracy, suggesting the need to investigate parameter-specific adjustments across diverse applications. Similarly, Chang et al. (2022) demonstrate the potential of feature engineering in reducing computational complexity while maintaining high performance in adversarial environments. Exploring the integration of these approaches with automated feature selection techniques, as highlighted by Lee et al. (2023) for carbon emissions prediction, could lead to more generalizable and efficient models. Furthermore, the development of combinatorial techniques, such as Elkhatib et al.'s (2024) transformer-based modulation classification, opens avenues for cross-domain applications, particularly in scenarios requiring high accuracy under noisy conditions.

Practice implications

In practice, optimizing deep learning systems can significantly advance real-world applications by improving process efficiency and decision-making. For example, Dinh et al. (2022) highlight the effectiveness of combining feature engineering with deep learning for robust stereo vision in adverse driving conditions, which could revolutionize autonomous vehicle navigation. Similarly, Chang et al. (2022) propose feature-engineered reinforcement learning for anti-jamming strategies, paving the way for resilient communication systems in hostile environments. These innovations can also extend to industrial fault diagnosis, as demonstrated by Zhai and Qiao (2020), who present adaptive learning strategies that enhance model responsiveness and accuracy. Leveraging such advancements in sectors like energy management, as noted in Lee et al.'s (2023) work on carbon emissions prediction, could lead to significant environmental and operational benefits. Lastly, applying dynamic optimization techniques, like Elkhatib et al.'s (2024) approach to signal modulation classification, could enhance wireless communication networks, addressing challenges in signal quality and bandwidth allocation.

Theme 4: innovative applications and emerging trends in deep learning

Thirty-three articles were categorized under the theme “Innovative Applications and Emerging Trends in Deep Learning.” These articles explore a variety of practical applications of deep learning across different fields, including automated systems, molecular engineering, and product innovation. They also highlight advancements in deep reinforcement learning for optimization and traffic engineering, as well as innovations in feature engineering and deep learning frameworks for specific challenges in engineering and technology.

Exemplar study 1

The study by Nguyen et al. (2023) offers a comprehensive review of how deep learning (DL) and deep reinforcement learning (DRL) are shaping the future of 6G wireless networks. The paper explores various DL and DRL techniques, such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), graph neural networks (GNNs), and deep Q-networks (DQN), and how they are applied to solve critical challenges in 6G networks. These challenges include resource management, spectrum optimization, mobility management, and security. While primarily reviewing current applications, the study emphasizes the potential of these technologies to meet the demanding requirements of 6G systems, such as ultra-low latency, high reliability, and energy efficiency. The authors underscore the successful application of DL and DRL in tasks like resource allocation and channel prediction and propose further exploration in areas like energy-efficient model training, federated learning, and multi-agent DRL frameworks. This research exemplifies the theme by illustrating how advanced deep learning techniques are being tailored to the evolving demands of next-generation wireless communication systems, paving the way for continued innovation in the field (Nguyen et al., 2023).

Exemplar study 2

The 2024 study by Tsinganos et al. introduces a system designed to detect and mitigate chat-based social engineering (CSE) attacks using deep learning. The study highlights that traditional cybersecurity measures are insufficient against the increasingly sophisticated manipulation tactics deployed in social engineering, which exploit human psychology and behavior. To address this, the authors developed CSE-ARS, a system that utilizes a late fusion strategy to combine the outputs of several specialized deep learning models, each focused on recognizing specific enablers of social engineering attacks, such as information leakage, personality traits, dialogue acts, persuasion techniques, and persistent behavior. By integrating insights from social psychology and personality theory, including Cialdini's principles of persuasion and the Big Five Personality Traits, CSE-ARS significantly improves the detection and mitigation of CSE attacks. The study finds that the system outperforms individual models by using multimodal fusion, making it a robust defense against these threats. However, the authors acknowledge certain limitations, such as the lack of deepfake detection and advanced multimedia processing capabilities, which could further enhance its effectiveness. This research exemplifies the theme by showcasing how interdisciplinary approaches combining deep learning with psychological theory can address emerging cybersecurity threats, offering a more comprehensive and resilient defense mechanism (Tsinganos et al., 2024).
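Late fusion, in general terms, means that each specialized model produces its own output and the outputs are combined only at the decision stage. The sketch below shows one common variant, a weighted average of per-model scores followed by a threshold. It is an assumption-laden illustration rather than the CSE-ARS fusion mechanism: the dictionary keys mirror the enablers named above, while the weights, the threshold, and the function names are hypothetical.

```python
from typing import Dict

# Hypothetical per-model weights; keys mirror the enablers named in the text.
ENABLER_WEIGHTS: Dict[str, float] = {
    "information_leakage": 2.0,
    "personality_traits": 1.0,
    "dialogue_acts": 1.0,
    "persuasion": 2.0,
    "persistence": 1.0,
}


def late_fusion_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-model attack scores, each assumed to lie in [0, 1]."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("at least one model with positive weight is required")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


def is_attack(scores: Dict[str, float], weights: Dict[str, float],
              threshold: float = 0.5) -> bool:
    """Flag the conversation when the fused score reaches the (assumed) threshold."""
    return late_fusion_score(scores, weights) >= threshold


# Illustrative outputs from five independent recognizers for one chat message.
example_scores = {
    "information_leakage": 1.0,
    "personality_traits": 0.0,
    "dialogue_acts": 0.5,
    "persuasion": 1.0,
    "persistence": 0.5,
}
fused = late_fusion_score(example_scores, ENABLER_WEIGHTS)
```

A practical appeal of this design is that each recognizer can be retrained or replaced independently, since only its output score enters the fusion step.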

Research implications

Future research should focus on interdisciplinary advancements that combine domain-specific challenges with emerging deep learning trends. For example, Nguyen et al. (2023) highlight the transformative potential of deep reinforcement learning (DRL) in optimizing 6G network architectures, while Chang and Zhu (2024) demonstrate how deep learning frameworks can address the complexities of defect and strain engineering in materials science. Investigating the cross-application of such techniques to other domains, such as disaster management or precision agriculture, could yield innovative methodologies. Additionally, the integration of late fusion strategies for multimodal data, as applied by Tsinganos et al. (2024) in social engineering attack detection, suggests that similar approaches could be tailored to fields like medical diagnostics or smart city infrastructure. Further exploration into these methodologies could refine their adaptability and robustness, enabling better scalability across diverse fields.

Practice implications

From a practical perspective, the methodologies discussed in these studies could revolutionize real-world applications. Jin et al. (2023) demonstrate how deep learning-driven computer vision frameworks can significantly improve post-earthquake structural assessments, offering faster and more accurate damage evaluations. Similarly, Sujatha et al. (2023) emphasize the efficacy of DRL for network intrusion detection, suggesting that such systems could enhance cybersecurity protocols in dynamic environments. These innovations also extend to fields like telecommunications, where Nguyen et al. (2023) propose DRL-enabled resource allocation for 6G networks. Practical applications in materials science, such as the strain and defect engineering frameworks developed by Chang and Zhu (2024), could expedite the development of advanced materials for industrial use. By adopting these advanced tools and techniques, industries can address specific operational challenges, enhance efficiency, and foster technological growth in critical areas.

Pedagogical implications

The thematic findings of this review offer valuable insights for instructional design in engineering and computer science education. For instance, the theme on Strategic Methodologies in Deep Learning Development can inform the structure of project-based learning modules, where students design and optimize algorithms or frameworks to solve real-world problems. The Practical Implementation and Evaluation theme supports the integration of case-based teaching strategies, enabling students to critically assess the effectiveness of deep learning solutions across domains such as infrastructure, cybersecurity, and urban planning. Additionally, content from the Efficiency and Optimization theme can be leveraged to teach model tuning, system performance analysis, and resource-aware computing—key skills in advanced AI curricula. Finally, the Emerging Applications theme could inspire cross-disciplinary capstone projects, where students explore cutting-edge applications of deep learning in fields ranging from materials science to wireless networks. Incorporating these themes into instructional strategies fosters applied learning, encourages interdisciplinary thinking, and helps students bridge theory with industry-relevant practice.

Conclusion

This systematic literature review provides a comprehensive analysis of the current applications, trends, research gaps, and future directions of deep learning across engineering disciplines. By examining 101 peer-reviewed articles published between 2014 and 2024, the study identifies key disciplines, methodologies, and thematic areas where deep learning is making significant impacts. The findings reveal that deep learning is extensively applied across various engineering disciplines, with civil engineering (21.15%) being the most represented, followed by electrical engineering (14.42%) and mechanical engineering (11.54%). This widespread adoption underscores the versatility and transformative potential of deep learning techniques in solving complex engineering problems. Methodologically, the reviewed studies predominantly focus on framework and algorithm design (21.36%), model training and optimization (12.62%), and data collection and preprocessing (10.68%). These approaches highlight the ongoing efforts to enhance model performance, efficiency, and applicability in real-world scenarios.

The thematic analysis uncovers four key themes:

1. Strategic Methodologies in Deep Learning Development: Emphasizes innovative strategies for developing and improving deep learning models. Exemplar studies demonstrate how novel frameworks and algorithmic approaches can significantly enhance prediction accuracy and classification performance in complex tasks.

2. Practical Implementation and Evaluation of Deep Learning Models: Focuses on the hands-on application and rigorous assessment of deep learning models. Studies under this theme showcase successful implementations in areas like hyperspectral data classification and structural health monitoring, highlighting both the potential and challenges of applying deep learning in practice.

3. Optimizing Efficiency and Effectiveness in Deep Learning Systems: Addresses the need for balancing efficiency and effectiveness in deep learning applications. Exemplary research illustrates how adaptive strategies and hybrid architectures can reduce training times and improve accuracy, particularly in industrial and telecommunications contexts.

4. Innovative Applications and Emerging Trends in Deep Learning: Explores the frontier of deep learning applications and future directions. Studies in this theme reveal how deep learning is being leveraged to address emerging challenges in 6G networks and cybersecurity, demonstrating the field's adaptability and potential for interdisciplinary integration.

Implications, limitations, and future directions

The synthesis of the reviewed literature underscores the transformative impact of deep learning on engineering research. The integration of advanced deep learning methodologies offers significant potential for solving complex engineering problems more effectively. Researchers are encouraged to focus on developing robust and efficient models that can handle real-world challenges such as data variability, computational constraints, and the need for scalability.

Persistent gaps such as scalability, interpretability, and real-world implementation challenges remain. These are often due to computational limitations, the black-box nature of complex deep learning models, lack of interpretability tools, and difficulties in transferring research models to diverse engineering environments. The absence of standardized benchmarking and domain-specific constraints further complicates these issues, underscoring the need for collaborative interdisciplinary research to overcome these barriers.

The importance of interdisciplinary approaches is evident, suggesting that combining deep learning with insights from other fields can lead to innovative solutions and new applications. By addressing these aspects, future research can contribute to the advancement of engineering knowledge, fostering models that are not only theoretically sound but also practically viable across diverse engineering domains.

For practitioners, the findings highlight the considerable benefits of adopting deep learning techniques in engineering applications. Implementing these advanced models can lead to improvements in accuracy, efficiency, and overall performance in tasks ranging from predictive maintenance to resource optimization and cybersecurity. The versatility of deep learning allows engineers to tackle complex problems that were previously difficult to address with traditional methods. However, practical adoption requires awareness of potential challenges, such as the need for substantial computational resources and high-quality data. By embracing deep learning technologies, engineering professionals can enhance innovation, drive operational excellence, and maintain a competitive edge in an increasingly technology-driven landscape.

Limitations

While this review offers a comprehensive overview, it is not without limitations. The exclusion of books and dissertations may omit some theoretical frameworks, but this decision was made to prioritize peer-reviewed studies with clearly documented methodologies and empirical evidence. Similarly, while pre-2014 research may contain foundational work, the focus on post-2014 publications ensures alignment with current deep learning technologies. Also, the sampled studies were not assessed for quality, which might have introduced some bias in our synthesis. Future studies should assess the quality of the included studies using standard frameworks such as GRADE-based evaluation.

Additionally, the rapid evolution of deep learning technologies means that new developments may quickly emerge beyond the scope of this review. Furthermore, this review does not include a formal bibliometric analysis, such as impact factor metrics, nor does it apply a systematic quality assessment framework to evaluate the methodological rigor of the included studies. Incorporating such analyses in future research could provide more nuanced insights into the influence, credibility, and scholarly impact of deep learning applications in engineering. Finally, this review is limited to studies published in English, which may have introduced regional and language bias by excluding potentially relevant research conducted in other languages or regions.

Future directions

Future research should consider expanding the scope to include a broader range of publications and exploring the impact of emerging technologies such as explainable AI, federated learning, and quantum computing on deep learning applications in engineering. There is also a need for longitudinal studies to assess the long-term effectiveness and sustainability of deep learning solutions in engineering practice. While this review focused on established deep learning applications within engineering, future studies may consider expanding the scope to include emerging areas such as model interpretability, federated learning, and graph neural networks, which are poised to play a significant role in advancing the field. To address challenges related to reproducibility and scalability, future research should also focus on establishing collaborative initiatives and standardized benchmarking practices across engineering domains to ensure consistency, transparency, and wider applicability of deep learning models.

Since this review focuses on the applications of deep learning in engineering domains, most of the sampled studies did not explicitly address pedagogical strategies or educational frameworks. As such, this paper does not evaluate the effectiveness of deep learning in enhancing student learning outcomes compared to traditional methods. Future studies are encouraged to explore how deep learning can be integrated into engineering education through real-world instructional tools such as AI-driven tutoring systems, adaptive learning platforms, and simulations. In doing so, scholars should consider applying pedagogical models like Bloom's Taxonomy and constructivist learning theory to evaluate the impact of deep learning on student learning. Moreover, as DL-based tools become more common in educational settings, ethical concerns such as bias in automated assessment, transparency in feedback, and the need for faculty training must be addressed. These considerations are critical to ensuring fair, responsible, and effective implementation of AI-driven educational technologies.

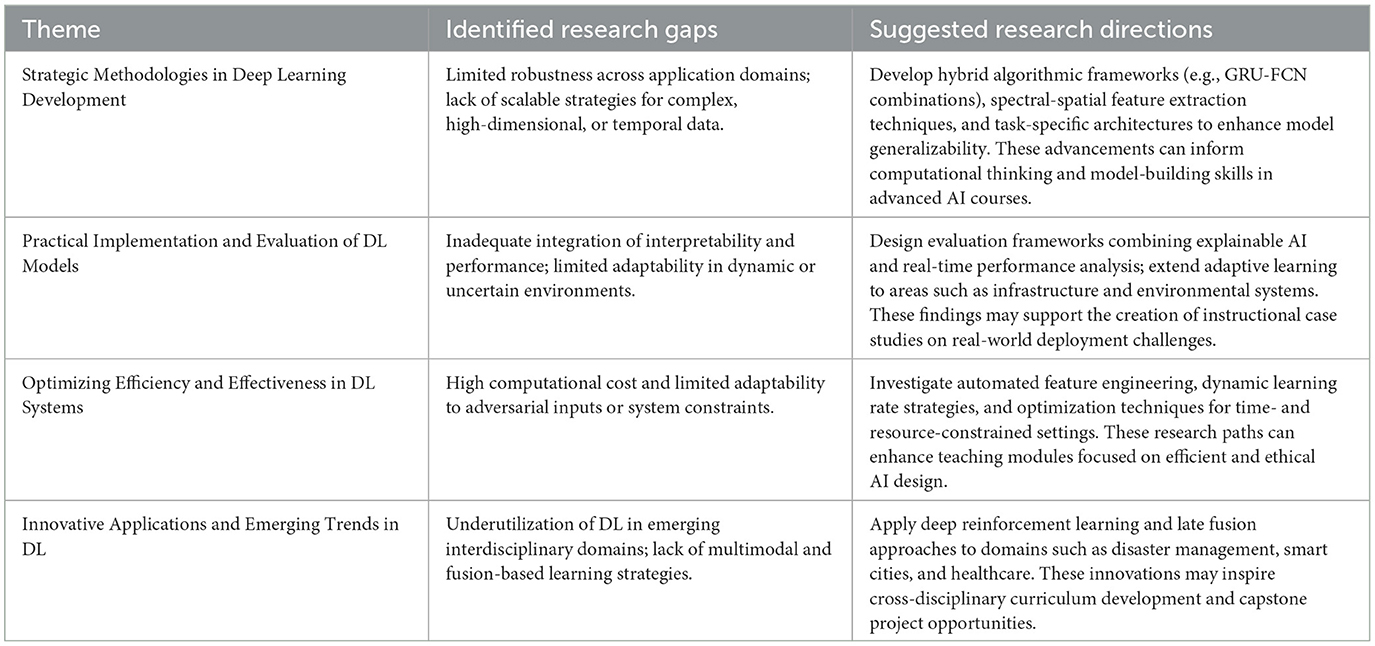

To synthesize the thematic findings and guide future work, Table 6 outlines key research gaps and corresponding directions across the four identified themes. Each theme reflects distinct but interconnected challenges in deep learning research, from methodological development to real-world application and optimization. The suggested directions highlight opportunities for advancing model robustness, interpretability, efficiency, and interdisciplinary integration. Additionally, these insights can inform curriculum design, instructional case studies, and student-led projects, reinforcing the educational relevance of emerging deep learning research in engineering contexts.

Table 6. Summary of research gaps and suggested future directions in deep learning for engineering contexts.

In conclusion, deep learning is profoundly influencing engineering research and practice, offering innovative solutions to complex problems across multiple disciplines. The identified trends and themes reflect a vibrant and evolving field, characterized by methodological advancements, practical implementations, optimization efforts, and emerging applications. By synthesizing current knowledge and highlighting future directions, this study contributes to a deeper understanding of deep learning's role in engineering and serves as a valuable resource for researchers and practitioners aiming to leverage these technologies for continued innovation and improvement.

Author contributions

AT: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Project administration, Validation, Writing – original draft, Writing – review & editing. JK: Conceptualization, Investigation, Methodology, Project administration, Supervision, Validation, Writing – review & editing.

Funding

The author(s) declare that financial support was received for the research and/or publication of this article. This project was supported by the Provost's Summer Undergraduate Research and Creative Activities (UReCA) Fellowship. Financial support was provided by the University of Oklahoma Libraries' Open Access Fund to cover the article processing charges.

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Generative AI statement

The author(s) declare that Gen AI was used in the creation of this manuscript. During the preparation of this work the author(s) used ChatGPT 4o to improve the language and readability. After using this tool/service, the author(s) reviewed and edited the content as needed and take full responsibility for the content of the publication.

Publisher's note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

Author disclaimer

The contents of this article, including findings, conclusions, opinions, and recommendations, are solely attributed to the author(s) and do not necessarily represent the views of the Provost's Office.

Supplementary material

The Supplementary Material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/feduc.2025.1583404/full#supplementary-material

References

Ahmad, Z., Shahid Khan, A., Wai Shiang, C., Abdullah, J., and Ahmad, F. (2021). Network intrusion detection system: a systematic study of machine learning and deep learning approaches. Trans. Emerg. Telecommun. Technol. 32:e4150. doi: 10.1002/ett.4150

Bhanbhro, H., Hooi, Y. K., Hassan, Z., and Sohu, N. (2022). “Modern deep learning approaches for symbol detection in complex engineering drawings,” in 2022 International Conference on Digital Transformation and Intelligence (ICDI) (Piscataway, NJ: IEEE), 121–126. doi: 10.1109/ICDI57181.2022.10007281

Borrego, M., Foster, M. J., and Froyd, J. E. (2014). Systematic literature reviews in engineering education and other developing interdisciplinary fields. J. Eng. Educ. 103, 45–76. doi: 10.1002/jee.20038

Cha, Y.-J., Choi, W., and Büyüköztürk, O. (2017). Deep learning-based crack damage detection using convolutional neural networks. Comp. Aided Civil Infrastruct. Eng. 32, 361–378. doi: 10.1111/mice.12263

Cha, Y.-J., Choi, W., Suh, G., Mahmoudkhani, S., and Büyüköztürk, O. (2018). Autonomous structural visual inspection using region-based deep learning for detecting multiple damage types. Comp. Aided Civil Infrastruct. Eng. 33, 731–747. doi: 10.1111/mice.12334

Chang, J., and Zhu, S. (2024). Deep learning on atomistic physical fields of graphene for strain and defect engineering. Adv. Intell. Syst. 6:2300601. doi: 10.1002/aisy.202300601

Chang, X., Li, Y., Zhao, Y., Du, Y., and Liu, D. (2022). An improved anti-jamming method based on deep reinforcement learning and feature engineering. IEEE Access 10, 69992–70000. doi: 10.1109/ACCESS.2022.3187030

Chen, Y., Lin, Z., Zhao, X., Wang, G., and Gu, Y. (2014). Deep learning-based classification of hyperspectral data. IEEE J. Sel. Topics Appl. Earth Obs. Remote Sens. 7, 2094–2107. doi: 10.1109/JSTARS.2014.2329330

Craik, A., He, Y., and Contreras-Vidal, J. L. (2019). Deep learning for electroencephalogram (EEG) classification tasks: a review. J. Neural Eng. 16:031001. doi: 10.1088/1741-2552/ab0ab5

Dinh, V. Q., Nguyen, P. H., and Nguyen, V. D. (2022). Feature engineering and deep learning for stereo matching under adverse driving conditions. IEEE Trans. Intell. Transp. Syst. 23, 7855–7865. doi: 10.1109/TITS.2021.3073557

Elkhatib, Z., Kamalov, F., Moussa, S., Mnaouer, A. B., Yagoub, M. C. E., Yanikomeroglu, H., et al. (2024). Radio modulation classification optimization using combinatorial deep learning technique. IEEE Access 12, 17552–17570. doi: 10.1109/ACCESS.2024.3357628

Gulmez, S., Gorgulu Kakisim, A., and Sogukpinar, I. (2024). XRan: explainable deep learning-based ransomware detection using dynamic analysis. Comp. Secur. 139:103703. doi: 10.1016/j.cose.2024.103703

Harris, H. J., and Kittur, J. (2024). “Generative artificial intelligence in undergraduate engineering: a systematic literature review,” in 2024 ASEE Annual Conference and Exposition (Washington, DC: ASEE). doi: 10.18260/1-2-47492

He, Y., Mendis, G. J., and Wei, J. (2017). Real-time detection of false data injection attacks in smart grid: a deep learning-based intelligent mechanism. IEEE Trans. Smart Grid 8, 2505–2516. doi: 10.1109/TSG.2017.2703842

Jin, T., Ye, X. W., Que, W. M., and Ma, S. Y. (2023). Computer vision and deep learning-based post-earthquake intelligent assessment of engineering structures: technological status and challenges. Smart Struct. Syst. 31, 311–323. doi: 10.12989/sss.2023.31.4.311

Kittur, J., Brunhaver, S. R., Bekki, J. M., and Thomas, K. (2024). A systematic literature review of trends in research and practice among asynchronous online course offerings in formal engineering curricula. Stud. Eng. Educ. 5, 67–91. doi: 10.21061/see.122

LeCun, Y., Bengio, Y., and Hinton, G. (2015). Deep learning. Nature 521, 436–444. doi: 10.1038/nature14539

Lee, Z.-J., Lin, Y., Yang, Z., Chen, Z.-Y., Fan, W.-G., Lee, C.-H., et al. (2023). “Novel automatic feature engineering for carbon emissions prediction base on deep learning,” in 2023 IEEE 6th Eurasian Conference on Educational Innovation (ECEI) (Piscataway, NJ: IEEE), 203–206. doi: 10.1109/ECEI57668.2023.10105367

Li, L., Huang, S., Ouyang, Z., and Li, N. (2022). “A deep learning framework for non-stationary time series prediction,” in 2022 3rd International Conference on Computer Vision, Image and Deep Learning and International Conference on Computer Engineering and Applications (CVIDL and ICCEA) (Piscataway, NJ: IEEE), 339–342. doi: 10.1109/CVIDLICCEA56201.2022.9824863

Lv, Y., Duan, Y., Kang, W., Li, Z., and Wang, F.-Y. (2014). Traffic flow prediction with big data: a deep learning approach. IEEE Trans. Intell. Transp. Syst. 16, 865–873. doi: 10.1109/TITS.2014.2345663

Nguyen, A., and Kittur, J. (2024). “Exploring the use of artificial intelligence in racing games in engineering education: a systematic literature review,” in Proceedings of 2024 ASEE Annual Conference and Exposition (Washington, DC: ASEE). doi: 10.18260/1-2-47040

Nguyen, T.-H., Park, H., Seol, K., So, S., and Park, L. (2023). “Applications of deep learning and deep reinforcement learning in 6G networks,” in 2023 Fourteenth International Conference on Ubiquitous and Future Networks (ICUFN) (Washington, DC: IEEE), 427–432. doi: 10.1109/ICUFN57995.2023.10200390

Sarker, I. H. (2021). Deep learning: a comprehensive overview on techniques, taxonomy, applications and research directions. SN Comput. Sci. 2, 1–20. doi: 10.1007/s42979-021-00815-1

Shah, D., Wang, J., and He, Q. P. (2020). Feature engineering in big data analytics for IoT-enabled smart manufacturing - Comparison between deep learning and statistical learning. Comp. Chem. Eng. 141:106970. doi: 10.1016/j.compchemeng.2020.106970

Sujatha, V., Prasanna, K. L., Niharika, K., Charishma, V., and Sai, K. B. (2023). “Network intrusion detection using deep reinforcement learning,” in 2023 7th International Conference on Computing Methodologies and Communication (ICCMC) (Piscataway, NJ: IEEE), 1146–1150. doi: 10.1109/ICCMC56507.2023.10083673

Tabar, Y. R., and Halici, U. (2016). A novel deep learning approach for classification of EEG motor imagery signals. J. Neural Eng. 14:016003. doi: 10.1088/1741-2560/14/1/016003

Tsinganos, N., Fouliras, P., Mavridis, I., and Gritzalis, D. (2024). CSE-ARS: deep learning-based late fusion of multimodal information for chat-based social engineering attack recognition. IEEE Access 12, 16072–16088. doi: 10.1109/ACCESS.2024.3359030

Wang, C., Song, L., and Fan, J. (2022). End-to-end structural analysis in civil engineering based on deep learning. Autom. Constr. 138:104255. doi: 10.1016/j.autcon.2022.104255

Wang, S., Cao, J., and Yu, P. S. (2022). Deep learning for spatio-temporal data mining: a survey. IEEE Trans. Knowl. Data Eng. 34, 3681–3700. doi: 10.1109/TKDE.2020.3025580

Zhai, X., and Qiao, F. (2020). “A deep learning model with adaptive learning rate for fault diagnosis,” in 2020 IEEE 9th Data Driven Control and Learning Systems Conference (DDCLS) (Piscataway, NJ: IEEE), 668–673. doi: 10.1109/DDCLS49620.2020.9275094

Zhang, A., Wang, K. C. P., Li, B., Yang, E., Dai, X., Peng, Y., et al. (2017). Automated pixel-level pavement crack detection on 3d asphalt surfaces using a deep-learning network. Comp. Aided Civil Infrastruct. Eng. 32, 805–819. doi: 10.1111/mice.12297

Zhao, Z., Chen, W., Wu, X., Chen, P. C. Y., and Liu, J. (2017). LSTM network: a deep learning approach for short-term traffic forecast. IET Intell. Transp. Syst. 11, 68–75. doi: 10.1049/iet-its.2016.0208

Keywords: artificial intelligence, deep learning, engineering, systematic literature review, neural networks

Citation: Tobias AG and Kittur J (2025) Strategic innovations and future directions in deep learning for engineering applications: a systematic literature review. Front. Educ. 10:1583404. doi: 10.3389/feduc.2025.1583404

Received: 25 February 2025; Accepted: 18 July 2025; Published: 12 August 2025.

Edited by:

Yu-Tung Kuo, North Carolina Agricultural and Technical State University, United States

Reviewed by:

Madhu Puttegowda, Malnad College of Engineering, India

M. Dolores Ramirez, Autonomous University of Madrid, Spain

Abdulelah S. Alshehri, King Saud University, Saudi Arabia

Copyright © 2025 Tobias and Kittur. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Javeed Kittur, jkittur@ou.edu
