Abstract

A new meta-heuristic algorithm called the like-attracts-like optimizer (LALO) is proposed in this article. It is inspired by the fact that an excellent person (i.e., a high-quality solution) easily attracts like-minded people to approach him or her. The algorithm is intended to support video robotics cluster control. First, the searching individuals are dynamically divided into multiple clusters by a growing neural gas (GNG) network according to their positions, which also determines the topological relations between different clusters. Second, each individual approaches a better individual from its own cluster and the adjacent clusters. The performance of LALO is evaluated on seven unimodal benchmark functions against nine well-known meta-heuristic algorithms, which reveals that it is competitive: its average rank is second only to that of the equilibrium optimizer (EO).

1 Introduction

Optimization arises almost everywhere and usually aims to find the best solution to a given problem. To deal with such problems, various optimization methods have been proposed and have shown good performance. Most of them fall into two categories: mathematical programming methods and meta-heuristics (Dai et al., 2009). Methods of the first type, such as quadratic programming (Wang et al., 2014) and the Newton method (Kazemtabrizi and Acha, 2014), can rapidly converge to an optimum with high convergence stability by utilizing gradient information (Guo et al., 2014). However, mathematical programming methods are highly dependent on the mathematical model of the optimization problem (Mirjalili, 2016). Furthermore, they are incapable of addressing a black-box optimization for which only input and output measurements are available. In contrast, meta-heuristic algorithms are more flexible across different optimization problems since they are largely independent of the mathematical model of the specific problem (Zhang et al., 2017). They can therefore be applied to an optimization using only input and output measurements, without any gradient information or convex transformation. Meanwhile, by balancing global search and local search, they can effectively avoid being trapped in a low-quality local optimum (Alba and Dorronsoro, 2005). Consequently, meta-heuristic algorithms have become a popular and powerful tool for engineering optimization problems.

So far, most meta-heuristic algorithms have been designed from different natural phenomena (Askari et al., 2020), e.g., animal hunting and human learning. According to the No-Free-Lunch theorem (Wolpert and Macready, 1997), none of these meta-heuristic algorithms can tackle every optimization problem with good performance. In fact, each meta-heuristic algorithm shows superior performance over most of the other algorithms in only some kinds of optimization problems (Zhao et al., 2019). This is also the main reason why so many different meta-heuristic algorithms have been designed and proposed.

According to the number of searching individuals, meta-heuristic algorithms can be divided into two categories: single-individual-based and population-based algorithms (Mirjalili et al., 2014). Single-individual-based algorithms, such as simulated annealing (SA) (Kirkpatrick et al., 1983) and tabu search (TS) (Glover, 1989), use simple searching operations and require fewer fitness evaluations and less computation time than those of the second category (Mas et al., 2009). However, they are easily trapped in a low-quality local optimum, since a single individual can hardly increase the solution diversity while guaranteeing an efficient search. Population-based methods (Mirjalili et al., 2014), on the other hand, can effectively improve the optimization efficiency and global searching ability via a specific cooperation between different individuals. In contrast to the single-individual-based algorithms, they consume more computation time to execute adequate fitness evaluations for exploration and exploitation.

The video robot system has the benefits of high strength, high precision, and good repeatability. It also withstands extreme environments well, so it can complete a variety of tasks reliably (Mas et al., 2009). Although the majority of video robots execute these tasks in an isolated mode, increasing attention is being paid to the use of closely interacting clustered robotic systems. The potential benefits of clustered robotic systems include redundancy, increased coverage and throughput, flexible reconfigurability, and spatially diverse functionality. A key consideration among these is the technique used to coordinate the movement of the individual robots.

In this article, a new machine learning-based hybrid algorithm named like-attracts-like optimizer (LALO) is proposed based on solution clustering. Video clustering robots require optimization algorithms to plan their routes during population coordination in order to achieve higher efficiency, and the proposed LALO algorithm can perform the corresponding optimization. It essentially mimics the social behavior of human beings, in which an excellent person easily attracts like-minded people to approach them (Fritzke, 1995; Fišer et al., 2013). Similarly, all the searching individuals in LALO are divided into multiple clusters by a growing neural gas (GNG) network (Mirjalili et al., 2014) according to their positions. Different from the conventional clustering methods, the topological relations between different clusters are also generated to guide the optimization (See Figure 1).

FIGURE 1

The rest of this article is organized as follows: Section 2 provides the principle and the detailed operations of LALO. The optimization results for unimodal benchmark functions are discussed in Section 3. Finally, Section 4 concludes this work.

2 Like-attracts-like optimizer

2.1 Inspiration

Like-attracts-like is one of the main patterns of social interaction, in which an excellent person easily attracts like-minded people to approach them; thus, a group of people with similar features is formed, as illustrated in Figure 2 (Gutkin et al., 1976). Inspired by this social behavior, a searching individual represents a person in like-attracts-like, while each cluster can be regarded as a group of people. In this work, the proposed LALO is mainly designed according to the interaction network of different clusters.

FIGURE 2

2.2 Optimization principle and mathematical model

LALO is mainly composed of two stages: the clustering stage and the searching stage. The clustering stage is carried out by the GNG network, and the searching stage combines the encircling-prey mechanism of the gray wolf optimizer (GWO) with the interaction network of the different clusters.

2.2.1 Clustering phase by the GNG network

As a form of unsupervised learning, the GNG network (Fišer et al., 2013) can dynamically adjust its topological structure to match the input data without any desired outputs. It not only achieves fast data clustering but also keeps a topological structure to guide the optimization. In general, the GNG network contains a set of nodes V and a set of unweighted edges E, i.e., $G = (V, E)$, in which each node represents a cluster; $V = \{1, 2, \ldots, n\}$; and $E \subseteq V \times V$. The nodes and the edges are dynamically changed to adapt to the input data (i.e., the solutions at the current iteration). Overall, the GNG network-based clustering phase contains ten steps as follows (Fritzke, 1995):

Step 1: Initialize the network with two nodes, whose positions are randomly generated within the lower and upper bounds of the input data, as

$$\mathbf{w}_c = \mathbf{x}^{lb} + \mathbf{r} \odot \left(\mathbf{x}^{ub} - \mathbf{x}^{lb}\right), \quad c = 1, 2$$

where $\mathbf{x}^{lb}$ and $\mathbf{x}^{ub}$ are the vectors of the lower and upper bounds of the input data, respectively, with $x^{lb}_d = \min_{i=1,\ldots,N} x^{k}_{i,d}$ and $x^{ub}_d = \max_{i=1,\ldots,N} x^{k}_{i,d}$; $x^{k}_{i,d}$ represents the position of the dth dimension of the ith individual in LALO; $\mathbf{r}$ is a random vector within the range from 0 to 1; k denotes the kth iteration of LALO; N is the population size of LALO; d denotes the dth dimension of the solution, and D is the number of dimensions.

Step 2: Randomly select a solution $\mathbf{x}$ from the input data and determine the nearest and the second nearest nodes according to their distances as

$$s_1 = \arg\min_{c \in V} \lVert \mathbf{x} - \mathbf{w}_c \rVert, \quad s_2 = \arg\min_{c \in V \setminus \{s_1\}} \lVert \mathbf{x} - \mathbf{w}_c \rVert$$

where $s_1$ and $s_2$ represent the indices of the nearest and the second nearest nodes, respectively; and $\mathbf{w}_c$ is the position of the cth node.

Step 3: Update the age of all the edges linked with the nearest node as

$$age_{(s_1, j)} \leftarrow age_{(s_1, j)} + 1, \quad \forall (s_1, j) \in E$$

where $age_{(i,j)}$ denotes the age of the edge $(i, j)$.

Step 4: Update the accumulated error of the nearest node as

$$e_{s_1} \leftarrow e_{s_1} + \lVert \mathbf{x} - \mathbf{w}_{s_1} \rVert^2$$

where $e_{s_1}$ denotes the accumulated error of the node $s_1$.

Step 5: Update the positions of the nearest node and its linked nodes as

$$\mathbf{w}_{s_1} \leftarrow \mathbf{w}_{s_1} + \varepsilon_b \left(\mathbf{x} - \mathbf{w}_{s_1}\right), \quad \mathbf{w}_j \leftarrow \mathbf{w}_j + \varepsilon_n \left(\mathbf{x} - \mathbf{w}_j\right), \quad \forall (s_1, j) \in E$$

where $\varepsilon_b$ and $\varepsilon_n$ represent the learning rates of the nearest node and its linked nodes, respectively.

Step 6: If $(s_1, s_2) \in E$, then $age_{(s_1, s_2)} = 0$; otherwise, create the edge $(s_1, s_2)$ in the network.

Step 7: If $age_{(i,j)} > age_{max}$, then remove the edge $(i, j)$ from the network, where $age_{max}$ is the maximum allowable age of each edge. If a node does not have any adjacent nodes, then remove this node from the network.

Step 8: Insert a new node if the number of network learning steps T is an integral multiple of the inserting rate $\lambda$, i.e., $T \bmod \lambda = 0$. Particularly, the new node should be inserted halfway between the node with the largest accumulated error and its adjacent node with the largest accumulated error, as

$$q = \arg\max_{c \in V} e_c, \quad f = \arg\max_{c \in N_q} e_c, \quad \mathbf{w}_r = \frac{\mathbf{w}_q + \mathbf{w}_f}{2}$$

where $N_i$ is the set of the neighborhood of node i. Along with the new node $r$, the original edge $(q, f)$ should be removed, while the new edges $(q, r)$ and $(r, f)$ should be added. At the same time, the accumulated errors of these three nodes are updated as

$$e_q \leftarrow \alpha e_q, \quad e_f \leftarrow \alpha e_f, \quad e_r \leftarrow e_q$$

where $\alpha$ is the error-decreasing factor for the new node and its neighborhood.

Step 9: Decrease the accumulated errors of all the nodes, as

$$e_c \leftarrow \beta e_c, \quad \forall c \in V$$

where $\beta$ is the error-decreasing factor for all the nodes.

Step 10: Export the network if the conditions for termination are fulfilled; otherwise, switch to Step 2.
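The ten steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `gng_cluster` and `assign`, all parameter names, and all default values are hypothetical; the squared distance is used as the accumulated error, as in Fritzke's original GNG; a cap `max_nodes` on the number of nodes is assumed; and the termination condition is simply a fixed number of learning steps `t_max`.

```python
import math
import random


def gng_cluster(data, t_max=150, eps_b=0.5, eps_n=0.005, age_max=5,
                lam=5, alpha=0.1, beta=0.9, max_nodes=12, seed=0):
    """Cluster `data` (a list of equal-length lists) with a small GNG network.

    Returns (nodes, edges): nodes maps node id -> position, and edges is a
    set of frozenset({i, j}) pairs giving the topology between clusters.
    All parameter names and default values are illustrative assumptions.
    """
    rng = random.Random(seed)
    dim = len(data[0])
    lb = [min(x[d] for x in data) for d in range(dim)]
    ub = [max(x[d] for x in data) for d in range(dim)]
    # Step 1: two nodes at random positions inside the data bounds.
    nodes = {c: [lb[d] + rng.random() * (ub[d] - lb[d]) for d in range(dim)]
             for c in range(2)}
    error = {0: 0.0, 1: 0.0}
    age = {}          # frozenset({i, j}) -> age of that edge
    next_id = 2

    def neighbours(i):
        return [j for e in age if i in e for j in e if j != i]

    for t in range(1, t_max + 1):
        # Step 2: pick a sample and find the two nearest nodes.
        x = rng.choice(data)
        ranked = sorted(nodes, key=lambda c: math.dist(x, nodes[c]))
        s1, s2 = ranked[0], ranked[1]
        # Step 3: age every edge that touches the nearest node.
        for e in age:
            if s1 in e:
                age[e] += 1
        # Step 4: accumulate the squared error of the nearest node.
        error[s1] += math.dist(x, nodes[s1]) ** 2
        # Step 5: move the nearest node and its neighbours towards x.
        for c, eps in [(s1, eps_b)] + [(j, eps_n) for j in neighbours(s1)]:
            nodes[c] = [w + eps * (xd - w) for w, xd in zip(nodes[c], x)]
        # Step 6: create the edge (s1, s2), or reset its age if it exists.
        age[frozenset((s1, s2))] = 0
        # Step 7: drop edges that are too old, then isolated nodes.
        for e in [e for e, a in age.items() if a > age_max]:
            del age[e]
        for c in [c for c in nodes if not neighbours(c)]:
            del nodes[c], error[c]
        # Step 8: every `lam` steps, insert a node where the error is largest.
        if t % lam == 0 and len(nodes) < max_nodes:
            q = max(nodes, key=lambda c: error[c])
            f = max(neighbours(q), key=lambda c: error[c])
            r, next_id = next_id, next_id + 1
            nodes[r] = [(a + b) / 2 for a, b in zip(nodes[q], nodes[f])]
            del age[frozenset((q, f))]
            age[frozenset((q, r))] = age[frozenset((r, f))] = 0
            error[q] *= alpha
            error[f] *= alpha
            error[r] = error[q]
        # Step 9: decay all accumulated errors.
        for c in error:
            error[c] *= beta
    # Step 10: export the network once the step budget is used up.
    return nodes, set(age)


def assign(data, nodes):
    """Map each sample to the id of its nearest node (its cluster)."""
    return [min(nodes, key=lambda c: math.dist(x, nodes[c])) for x in data]
```

Calling `nodes, edges = gng_cluster(population)` followed by `assign(population, nodes)` yields one cluster label per individual, and `edges` records which clusters are adjacent, which is the information the searching phase relies on.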

2.2.2 Searching phase

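In LALO, each individual approaches a better individual (i.e., the learning target) with a high-quality solution; thus, a potentially better solution can be found with a higher probability. This process is based on the encircling-prey mechanism of the gray wolf optimizer (GWO) (Mirjalili et al., 2014). To guarantee high solution diversity (Gutkin et al., 1976), the learning target (See Figure 3) is selected from the interactive clusters or the best solution so far, according to the solution comparison between adjacent clusters, as follows:

$$\mathbf{x}_j^{best} = \arg\min_{\mathbf{x} \in X_j} Fit(\mathbf{x})$$

$$\mathbf{x}_j^{sbest} = \arg\min_{\mathbf{x} \in \{\mathbf{x}_c^{best} \,:\, c \in N_j \cup \{j\}\}} Fit(\mathbf{x})$$

$$\mathbf{x}_i^{target} = \begin{cases} \mathbf{x}_j^{sbest}, & \text{if } Fit(\mathbf{x}_j^{sbest}) < Fit(\mathbf{x}_j^{best}) \\ \mathbf{x}^{best}, & \text{otherwise} \end{cases} \quad \text{for } \mathbf{x}_i \in X_j$$

where $\mathbf{x}_j^{best}$ is the best solution in the jth cluster; $\mathbf{x}_j^{sbest}$ is the best solution in the social network of the jth cluster, i.e., the jth cluster and its adjacent clusters $N_j$; $\mathbf{x}_i^{target}$ is the learning target of the ith individual; $\mathbf{x}^{best}$ is the best solution so far by LALO; $X_j$ is the solution set of all the individuals in the jth cluster; and Fit denotes the fitness function for a minimum optimization.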

FIGURE 3
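The selection of the learning target can be illustrated with a short Python sketch. The exact selection rule is an assumption here: an individual learns from the best solution in the social network of its cluster when an adjacent cluster holds a better solution than its own cluster, and from the best solution so far otherwise. The function name `select_targets` and its arguments are hypothetical.

```python
def select_targets(positions, fitness, labels, adjacency, global_best):
    """Return one learning target per individual (assumed selection rule).

    positions   : list of position vectors
    fitness     : list of fitness values (lower is better)
    labels      : labels[i] is the cluster id of individual i
    adjacency   : dict mapping a cluster id to the set of adjacent cluster ids
    global_best : best position found so far
    """
    # Index of the best individual of every non-empty cluster.
    cluster_best = {}
    for i, j in enumerate(labels):
        if j not in cluster_best or fitness[i] < fitness[cluster_best[j]]:
            cluster_best[j] = i

    targets = []
    for i, j in enumerate(labels):
        # Social network of cluster j: itself plus its non-empty neighbours.
        social = [j] + [c for c in adjacency.get(j, ()) if c in cluster_best]
        s = min(social, key=lambda c: fitness[cluster_best[c]])
        if s == j:
            # No adjacent cluster is better: learn from the best so far.
            targets.append(global_best)
        else:
            # An adjacent cluster is better: learn from its best solution.
            targets.append(positions[cluster_best[s]])
    return targets
```

With two adjacent clusters, the individuals of the weaker cluster are pulled towards the best solution of the stronger one, while the individuals of the stronger cluster are pulled towards the best solution so far, which keeps several attraction points active at the same time.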

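Based on the selected learning target, the position of each individual can be updated as follows (Zeng et al., 2017):

$$\mathbf{x}_i^{k+1} = \mathbf{x}_i^{target} - \mathbf{A} \odot \mathbf{D}_i$$

$$\mathbf{A} = 2a\,\mathbf{r}_1 - a$$

$$\mathbf{D}_i = \left| 2\,\mathbf{r}_2 \odot \mathbf{x}_i^{target} - \mathbf{x}_i^{k} \right|$$

where $\mathbf{A}$ is the coefficient vector to approach the learning target; $\mathbf{D}_i$ is the difference vector between the individual and its learning target; $\mathbf{r}_1$ and $\mathbf{r}_2$ are the random vectors ranging from 0 to 1; and a is a coefficient.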
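A minimal Python sketch of this update is given below. It assumes the encircling-prey form of GWO (Mirjalili et al., 2014), in which the step coefficient is `2 * a * r1 - a` and the difference is taken to a randomly scaled target; the function name `update_position` and its arguments are hypothetical, and no bound handling is included.

```python
import random


def update_position(x, target, a, rng=random):
    """Move `x` towards `target` with a GWO-style encircling step."""
    new_x = []
    for xd, td in zip(x, target):
        r1, r2 = rng.random(), rng.random()
        A = 2 * a * r1 - a             # step coefficient in [-a, a)
        D = abs(2 * r2 * td - xd)      # difference to the scaled target
        new_x.append(td - A * D)
    return new_x
```

When `a` is large, the new position can land far from the learning target (exploration); when `a` reaches 0, the individual lands exactly on its target (exploitation).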

2.2.3 Dynamic balance between exploration and exploitation

Like many meta-heuristic algorithms, LALO also requires wide exploration in the early phase (Heidari et al., 2019). As the iteration number increases, the exploration weight should be gradually weakened, while the exploitation weight should be gradually enhanced. To achieve a dynamic balance between them, the cluster number and the coefficient a are decreased as the iteration number increases, as follows:

$$n^{k} = \operatorname{round}\left(n_{max} - \left(n_{max} - n_{min}\right)\frac{k}{k_{max}}\right)$$

$$a = 2\left(1 - \frac{k}{k_{max}}\right)$$

where $n^{k}$ is the cluster number at the kth iteration; $n_{max}$ and $n_{min}$ are the maximum and minimum number of clusters, respectively; and $k_{max}$ is the maximum iteration number of LALO.
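The schedule can be sketched in Python as below. A linear decrease is assumed for both quantities, the initial value 2 of the coefficient follows the usual GWO setting, and the function name `schedule` and the default values are hypothetical.

```python
def schedule(k, k_max, n_max=12, n_min=4, a_init=2.0):
    """Return (cluster number, coefficient a) at iteration k.

    Both quantities are assumed to decrease linearly with k / k_max.
    """
    frac = k / k_max
    n_clusters = round(n_max - (n_max - n_min) * frac)
    a = a_init * (1 - frac)
    return n_clusters, a
```

For example, with the defaults above and `k_max = 100`, the schedule moves from 12 clusters and `a = 2.0` at `k = 0`, through 8 clusters and `a = 1.0` at `k = 50`, to 4 clusters and `a = 0.0` at `k = 100`.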

2.2.4 Pseudocode of LALO

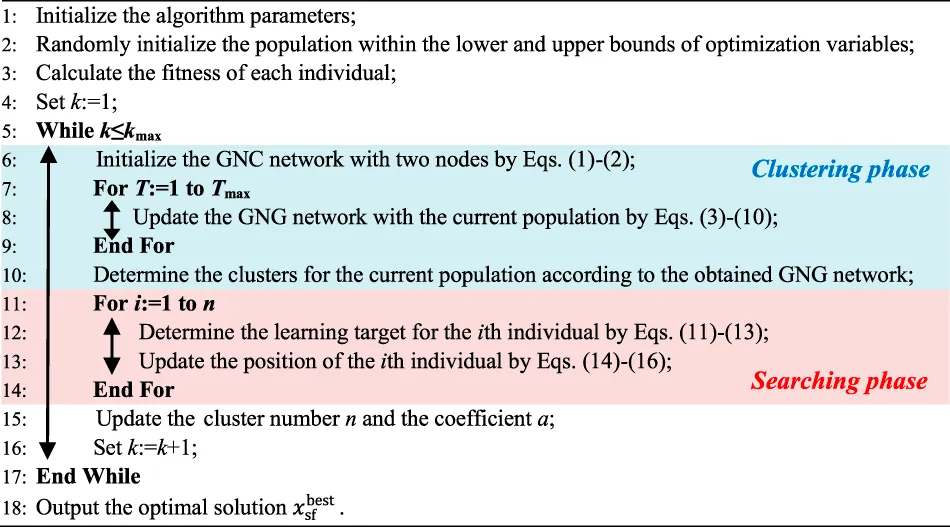

To summarize, the pseudocode for LALO is provided in Algorithm 1, where T is the iteration number in the GNG network and Tmax is the corresponding maximum iteration number (i.e., the termination condition of the GNG network).

Algorithm 1 Pseudocode of LALO

3 Like-attracts-like optimizer for unimodal benchmark functions

In this section, unimodal benchmark functions are adopted to test the capability of LALO. Meanwhile, nine well-known meta-heuristic algorithms, namely, GWO (Mirjalili et al., 2014), genetic algorithm (GA) (Holland, 1992), equilibrium optimizer (EO) (Faramarzi et al., 2020), particle swarm optimization (PSO) (Kennedy and Eberhart, 1995), salp swarm algorithm (SSA) (Mirjalili et al., 2017), gravitational search algorithm (GSA) (Rashedi et al., 2009), evolution strategy with covariance matrix adaptation (CMA-ES) (Hansen et al., 2003), success-history-based parameter adaptation differential evolution (SHADE) (Tanabe and Fukunaga, 2013), and SHADE with linear population size reduction hybridized with the semi-parameter adaption of CMA-ES (LSHADE-SPACMA) (Faramarzi et al., 2020), are used for performance comparisons; their parameters can be found in Gutkin et al. (1976). For a fair comparison, the maximum number of fitness evaluations for each algorithm is set to 15,000. Consequently, the population size and maximum iteration number of LALO are set to 20 and 750, respectively (20 × 750 = 15,000), while the other parameters are determined via trial and error, as shown in Table 1. To clearly illustrate the searching process for the two-dimensional benchmark functions, the population size and maximum iteration number of LALO are set to 50 and 100, respectively, and the maximum cluster number is set to 8.

TABLE 1

| 0.5 | 0.005 | 5 | 5 | 0.1 | 0.9 | 12 | 4 | 150 |

Main parameters of LALO.

The unimodal benchmark functions F1 to F7 are given in Table 2, where range denotes the searching space of each optimization variable and $f_{\min}$ denotes the global optimum. Since each of them has only one global optimum, they are suitable for evaluating the exploitation ability of each meta-heuristic algorithm.

TABLE 2

| Function | D | Range | $f_{\min}$ |
|---|---|---|---|
| F1 | 30 | [−100, 100] | 0 |
| F2 | 30 | [−10, 10] | 0 |
| F3 | 30 | [−100, 100] | 0 |
| F4 | 30 | [−100, 100] | 0 |
| F5 | 30 | [−30, 30] | 0 |
| F6 | 30 | [−100, 100] | 0 |
| F7 | 30 | [−1.28, 1.28] | 0 |

Unimodal benchmark functions.

Figure 4 provides the searching space of the two-dimensional unimodal benchmark functions and the searching process of LALO, where the initial solutions and interactive clusters of LALO are also given. The initial interactive clusters are widely dispersed, which enables wide exploration in the initial phase of LALO. As the iteration number increases, LALO gradually finds a better solution with an enhanced exploitation weight (Storn and Price, 1997; Regis, 2014).

FIGURE 4

Table 3 shows the average fitness and rank obtained by different algorithms for the unimodal benchmark functions in 30 runs. It can be seen that LALO obtains high-quality optimums on the whole for the unimodal benchmark functions, especially for function F2, on which it ranks first. In particular, the average rank of the average fitness obtained by LALO (3.43) is the best among all the algorithms except EO (1.86) for the seven unimodal benchmark functions (Rao et al., 2011; Jin, 2011; El-Abd, 2017; Jin et al., 2019). This indicates the competitive exploitation ability of LALO relative to the other nine meta-heuristic algorithms.

TABLE 3

| Function | Metrics | LALO | EO | PSO | GWO | GA | GSA | SSA | CMA-ES | SHADE | LSHADE-SPACMA |
|---|---|---|---|---|---|---|---|---|---|---|---|
| F1 | Avg. | 1.31E-37 | 3.32E-40 | 9.59E-06 | 6.59E-28 | 0.55492 | 2.53E-16 | 1.58E-07 | 1.42E-18 | 1.42E-09 | 0.2237 |
| | Rank | 2 | 1 | 8 | 3 | 10 | 5 | 7 | 4 | 6 | 9 |
| F2 | Avg. | 4.09E-27 | 7.12E-23 | 0.02560 | 7.18E-17 | 0.00566 | 0.05565 | 2.66293 | 2.98E-07 | 0.0087 | 21.1133 |
| | Rank | 1 | 2 | 7 | 3 | 5 | 8 | 9 | 4 | 6 | 10 |
| F3 | Avg. | 1.84E-05 | 8.06E-09 | 82.2687 | 3.29E-06 | 846.344 | 896.534 | 1709.94 | 1.59E-05 | 15.4352 | 88.7746 |
| | Rank | 4 | 1 | 6 | 2 | 8 | 9 | 10 | 3 | 5 | 7 |
| F4 | Avg. | 7.27E-05 | 5.39E-10 | 4.26128 | 5.61E-07 | 4.55538 | 7.35487 | 11.6741 | 2.01E-06 | 0.9796 | 2.1170 |
| | Rank | 4 | 1 | 7 | 2 | 8 | 9 | 10 | 3 | 5 | 6 |
| F5 | Avg. | 26.02845 | 25.32331 | 92.4310 | 26.81258 | 268.248 | 67.5430 | 296.125 | 36.7946 | 24.4743 | 28.8255 |
| | Rank | 3 | 2 | 8 | 4 | 9 | 7 | 10 | 6 | 1 | 5 |
| F6 | Avg. | 0.137603 | 8.29E-06 | 8.89E-06 | 0.816579 | 0.5625 | 2.50E-16 | 1.80E-07 | 6.83E-19 | 5.31E-10 | 0.2489 |
| | Rank | 7 | 5 | 6 | 10 | 9 | 2 | 4 | 1 | 3 | 8 |
| F7 | Avg. | 0.002606 | 0.001171 | 0.02724 | 0.002213 | 0.04293 | 0.08944 | 0.1757 | 0.0275 | 0.0235 | 0.0047 |
| | Rank | 3 | 1 | 6 | 2 | 8 | 9 | 10 | 7 | 5 | 4 |
| Average rank | | 3.43 | 1.86 | 6.86 | 3.71 | 8.14 | 7.00 | 8.57 | 4.00 | 4.43 | 7.00 |

Average fitness and rank obtained by different algorithms for unimodal benchmark functions in 30 runs.

EO: Equilibrium optimizer; PSO: Particle Swarm Optimization; GWO: Grey wolf optimizer; GA: Genetic algorithm; GSA: Gravitational search algorithm; SSA: Salp swarm algorithm; CMA-ES: Evolution strategy with covariance matrix adaptation; SHADE: Success-History based Adaptive Differential Evolution; LSHADE-SPACMA: SHADE with linear population size reduction hybridized with semi-parameter adaption of CMA-ES.
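As a small worked example of how the ranks in Table 3 are obtained, the Python snippet below ranks the ten algorithms by their average fitness on F1 (lower is better) and averages the seven ranks of LALO. The data are copied from Table 3; the helper name `rank` is hypothetical, and ties are not handled because none occur in these rows.

```python
def rank(values):
    """Rank values in ascending order (1 = smallest, i.e., best fitness)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0] * len(values)
    for position, i in enumerate(order, start=1):
        ranks[i] = position
    return ranks


algorithms = ["LALO", "EO", "PSO", "GWO", "GA", "GSA", "SSA",
              "CMA-ES", "SHADE", "LSHADE-SPACMA"]
# Average fitness on F1, in the column order of Table 3.
f1_avg = [1.31e-37, 3.32e-40, 9.59e-06, 6.59e-28, 0.55492,
          2.53e-16, 1.58e-07, 1.42e-18, 1.42e-09, 0.2237]
print(dict(zip(algorithms, rank(f1_avg))))

# Ranks of LALO on F1 to F7, taken from the Rank rows of Table 3.
lalo_ranks = [2, 1, 4, 4, 3, 7, 3]
print(round(sum(lalo_ranks) / len(lalo_ranks), 2))  # 3.43
```

The first line printed reproduces the F1 rank row (LALO second, EO first, GA last), and the second reproduces the average rank of 3.43 reported for LALO.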

Table 4 gives the fitness standard deviation and rank obtained by different algorithms for the unimodal benchmark functions in 30 runs. Here, EO again achieves the best average rank of the fitness standard deviation (1.71), followed by LALO (3.29). This reveals the high optimization stability of LALO compared with the other algorithms for the unimodal benchmark functions.

TABLE 4

| Function | Metrics | LALO | EO | PSO | GWO | GA | GSA | SSA | CMA-ES | SHADE | LSHADE-SPACMA |
|---|---|---|---|---|---|---|---|---|---|---|---|
| F1 | Std. | 2.06E-37 | 6.78E-40 | 3.35E-05 | 1.58E-28 | 1.2301 | 9.67E-17 | 1.71E-07 | 3.13E-18 | 3.09E-09 | 0.148 |
| | Rank | 2 | 1 | 8 | 3 | 10 | 5 | 7 | 4 | 6 | 9 |
| F2 | Std. | 4.70E-27 | 6.36E-23 | 0.04595 | 7.28E-17 | 0.01443 | 0.19404 | 1.66802 | 1.7889 | 0.0213 | 9.5781 |
| | Rank | 1 | 2 | 6 | 3 | 4 | 7 | 8 | 9 | 5 | 10 |
| F3 | Std. | 2.35E-05 | 1.60E-08 | 97.2105 | 1.61E-05 | 161.499 | 318.955 | 11242.3 | 2.21E-05 | 9.9489 | 47.23 |
| | Rank | 4 | 1 | 7 | 2 | 8 | 9 | 10 | 3 | 5 | 6 |
| F4 | Std. | 7.50E-05 | 1.38E-09 | 0.6773 | 1.04E-06 | 0.59153 | 1.74145 | 4.1792 | 1.25E-06 | 0.7995 | 0.4928 |
| | Rank | 4 | 1 | 7 | 2 | 6 | 9 | 10 | 3 | 8 | 5 |
| F5 | Std. | 0.187512 | 0.169578 | 74.4794 | 0.793246 | 337.693 | 62.2253 | 508.863 | 33.4614 | 11.208 | 0.8242 |
| | Rank | 2 | 1 | 8 | 3 | 9 | 7 | 10 | 6 | 5 | 4 |
| F6 | Std. | 0.118325 | 5.02E-06 | 9.91E-06 | 0.482126 | 1.71977 | 1.74E-16 | 3.00E-07 | 6.71E-19 | 6.35E-10 | 0.1131 |
| | Rank | 8 | 5 | 6 | 9 | 10 | 2 | 4 | 1 | 3 | 7 |
| F7 | Std. | 0.000805 | 0.000654 | 0.00804 | 0.001996 | 0.00594 | 0.04339 | 0.0629 | 0.0079 | 0.0088 | 0.0019 |
| | Rank | 2 | 1 | 7 | 4 | 5 | 9 | 10 | 6 | 8 | 3 |
| Average rank | | 3.29 | 1.71 | 7.00 | 3.71 | 7.43 | 6.86 | 8.43 | 4.57 | 5.71 | 6.29 |

Fitness standard deviation and ranks obtained by different algorithms for unimodal benchmark functions in 30 runs.

EO: Equilibrium optimizer; PSO: Particle Swarm Optimization; GWO: Grey wolf optimizer; GA: Genetic algorithm; GSA: Gravitational search algorithm; SSA: Salp swarm algorithm; CMA-ES: Evolution strategy with covariance matrix adaptation; SHADE: Success-History based Adaptive Differential Evolution; LSHADE-SPACMA: SHADE with linear population size reduction hybridized with semi-parameter adaption of CMA-ES.

Figure 5 provides the box-and-whisker plots of the ranks obtained by different algorithms for the benchmark functions F1 to F7. It can be seen from Figure 5A that the ranks of the average fitness obtained by LALO are mainly distributed in the top four for the benchmark functions, which is second only to EO among all the algorithms. In contrast, the ranks of the average fitness obtained by GA are mainly distributed from 8 to 10, which indicates that it is easily trapped in a low-quality optimum due to premature convergence. Similarly, LALO is second only to EO on the ranks of the fitness standard deviation, as shown in Figure 5B. This indicates that LALO is highly competitive compared with the other nine meta-heuristic algorithms for the benchmark functions.

FIGURE 5

4 Conclusion

This article proposes a novel machine learning-based meta-heuristic algorithm, whose main contributions can be summarized as follows:

1) The proposed LALO is a novel meta-heuristic algorithm inspired by the social behavior of human beings, in which an excellent person easily attracts like-minded people to approach him or her. Compared with traditional clustering-based meta-heuristic algorithms, LALO not only divides the searching individuals into multiple clusters by using the GNG network but also generates the interaction topology between different clusters. Hence, each individual can select its learning target from the interactive clusters; thus, a wide exploration can be implemented in the searching phase.

2) The exploration and exploitation of LALO can be dynamically balanced via adjusting the number of interactive clusters and the searching coefficient. As a result, it can enhance the exploration of LALO in the initial phase, while the exploitation can be gradually strengthened as the iteration number increases.

3) Case studies are carried out to evaluate the optimization performance of LALO against nine well-known meta-heuristic algorithms. On the whole, LALO is highly competitive in optimum quality and optimization stability for the seven unimodal benchmark functions, ranking second only to EO in the average rank of both the average fitness and the fitness standard deviation.

Owing to its competitive optimization performance, LALO can be applied to various real-world optimizations. Furthermore, it can be extended into a multi-objective optimization algorithm to search for the Pareto-optimal solutions of different kinds of multi-objective optimization problems.

Statements

Data availability statement

The data analyzed in this study are subject to the following licenses/restrictions: Internal enterprise Data. Requests to access these datasets should be directed to Biao_Tang@outlook.com.

Author contributions

XH, BT, and MZ: writing the original draft and editing. YM and XM: discussion of the topic. LT, XW, and DZ: supervision and funding.

Funding

The authors gratefully acknowledge the support of the research and innovative application of key technologies of image processing and knowledge atlas for complex scenes in the Smart Grid (202202AD080004), the research and innovative applications of key technologies of image processing and knowledge atlas for complex scenes in the Smart Grid (YNKJXM20220019), the practical maintenance of substation inspection robot master station management platform of Yunnan Electric Power Research Institute in 2022–2023 (056200MS62210005), and the Yunnan technological innovation talent training object project (No. 202205AD160005).

Conflict of interest

Authors XH, BT, MZ, YM, XM, and LT are employed by the Electric Power Research Institute of Yunnan Power Grid Co., Ltd., China Southern Power Grid. XW is employed by Yunnan Power Grid Co., Ltd., China Southern Power Grid. DZ is employed by Zhejiang Guozi Robotics Co., Ltd.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors, and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

References

1. Alba, E., and Dorronsoro, B. (2005). The exploration/exploitation tradeoff in dynamic cellular genetic algorithms. IEEE Trans. Evol. Comput. 9, 126–142. doi:10.1109/TEVC.2005.843751

2. Askari, Q., Younas, I., and Saeed, M. (2020). Political optimizer: A novel socio-inspired meta-heuristic for global optimization. Knowl. Based Syst. 195, 105709. doi:10.1016/j.knosys.2020.105709

3. Dai, C., Chen, W., Zhu, Y., and Zhang, X. (2009). Seeker optimization algorithm for optimal reactive power dispatch. IEEE Trans. Power Syst. 24, 1218–1231. doi:10.1109/TPWRS.2009.2021226

4. El-Abd, M. (2017). Global-best brain storm optimization algorithm. Swarm Evol. Comput. 37, 27–44. doi:10.1016/j.swevo.2017.05.001

5. Faramarzi, A., Heidarinejad, M., Stephens, B., and Mirjalili, S. (2020). Equilibrium optimizer: A novel optimization algorithm. Knowl. Based Syst. 191, 105190. doi:10.1016/j.knosys.2019.105190

6. Fišer, D., Faigl, J., and Kulich, M. (2013). Growing neural gas efficiently. Neurocomputing 104, 72–82. doi:10.1016/j.neucom.2012.10.004

7. Fritzke, B. (1995). "A growing neural gas network learns topologies," in Advances in Neural Information Processing Systems, eds G. Tesauro, D. S. Touretzky, and T. K. Leen (Cambridge, MA, USA: MIT Press), 7, 625–632. doi:10.1007/978-3-642-40925-7_38

8. Glover, F. (1989). Tabu search-Part I. ORSA J. Comput. 1, 190–206. doi:10.1287/ijoc.1.3.190

9. Guo, L., Wang, G.-G., Gandomi, A. H., Alavi, A. H., and Duan, H. (2014). A new improved krill herd algorithm for global numerical optimization. Neurocomputing 138, 392–402. doi:10.1016/j.neucom.2014.01.023

10. Gutkin, T. B., Gridley, G. C., and Wendt, J. M. (1976). The effect of initial attraction and attitude similarity-dissimilarity on interpersonal attraction. Cornell J. Soc. Relat. 11, 153–160. doi:10.1080/00224545.1978.9924167

11. Hansen, N., Müller, S. D., and Koumoutsakos, P. (2003). Reducing the time complexity of the derandomized evolution strategy with covariance matrix adaptation (CMA-ES). Evol. Comput. 11, 1–18. doi:10.1162/106365603321828970

12. Heidari, A. A., Mirjalili, S., Faris, H., Aljarah, I., Mafarja, M., and Chen, H. (2019). Harris hawks optimization: Algorithm and applications. Future Gener. Comput. Syst. 97, 849–872. doi:10.1016/j.future.2019.02.028

13. Holland, J. H. (1992). Genetic algorithms. Sci. Am. 267, 66–72. doi:10.1038/scientificamerican0792-66

14. Jin, Y. (2011). Surrogate-assisted evolutionary computation: Recent advances and future challenges. Swarm Evol. Comput. 1, 61–70. doi:10.1016/j.swevo.2011.05.001

15. Jin, Y., Wang, H., Chugh, T., Guo, D., and Miettinen, K. (2019). Data-driven evolutionary optimization: An overview and case studies. IEEE Trans. Evol. Comput. 23, 442–458. doi:10.1109/TEVC.2018.2869001

16. Kazemtabrizi, B., and Acha, E. (2014). An advanced STATCOM model for optimal power flows using Newton's method. IEEE Trans. Power Syst. 29, 514–525. doi:10.1109/TPWRS.2013.2287914

17. Kennedy, J., and Eberhart, R. (1995). "Particle swarm optimization," in Proceedings of ICNN'95 - International Conference on Neural Networks (IEEE). doi:10.1007/978-0-387-30164-8_630

18. Kirkpatrick, S., Gelatt, C. D., and Vecchi, M. P. (1983). Optimization by simulated annealing. Science 220, 671–680. doi:10.1126/science.220.4598.671

19. Mas, I., Li, S., and Acain, J. (2009). "Entrapment/escorting and patrolling missions in multi-robot cluster space control," in Proceedings of the IEEE/RSJ International Conference on Intelligent Robots & Systems (St. Louis, MO, USA: IEEE).

20. Mirjalili, S., Gandomi, A. H., Mirjalili, S. Z., Saremi, S., Faris, H., and Mirjalili, S. M. (2017). Salp swarm algorithm: A bio-inspired optimizer for engineering design problems. Adv. Eng. Softw. 114, 163–191. doi:10.1016/j.advengsoft.2017.07.002

21. Mirjalili, S., Mirjalili, S. M., and Lewis, A. (2014). Grey wolf optimizer. Adv. Eng. Softw. 69, 46–61. doi:10.1016/j.advengsoft.2013.12.007

22. Mirjalili, S. (2016). SCA: A sine cosine algorithm for solving optimization problems. Knowl. Based Syst. 96, 120–133. doi:10.1016/j.knosys.2015.12.022

23. Rao, R., Savsani, V., and Vakharia, D. (2011). Teaching–learning-based optimization: A novel method for constrained mechanical design optimization problems. Computer-Aided Des. 43, 303–315. doi:10.1016/j.cad.2010.12.015

24. Rashedi, E., Nezamabadi-pour, H., and Saryazdi, S. (2009). GSA: A gravitational search algorithm. Inf. Sci. 179, 2232–2248. doi:10.1016/j.ins.2009.03.004

25. Regis, R. G. (2014). Evolutionary programming for high-dimensional constrained expensive black-box optimization using radial basis functions. IEEE Trans. Evol. Comput. 18, 326–347. doi:10.1109/TEVC.2013.2262111

26. Storn, R., and Price, K. (1997). Differential evolution-a simple and efficient heuristic for global optimization over continuous space. J. Glob. Optim. 11, 341–359. doi:10.1023/A:1008202821328

27. Tanabe, R., and Fukunaga, A. (2013). "Success-history based parameter adaptation for differential evolution," in IEEE Congr. Evol. Comput. (Kraków, Poland), 71–78. doi:10.1007/s00500-015-1911-2

28. Wang, M. Q., Gooi, H. B., Chen, S. X., and Lu, S. (2014). A mixed integer quadratic programming for dynamic economic dispatch with valve point effect. IEEE Trans. Power Syst. 29, 2097–2106. doi:10.1109/TPWRS.2014.2306933

29. Wolpert, D. H., and Macready, W. G. (1997). No free lunch theorems for optimization. IEEE Trans. Evol. Comput. 1, 67–82. doi:10.1109/4235.585893

30. Zeng, Y., Chen, X., Ong, Y.-S., Tang, J., and Xiang, Y. (2017). Structured memetic automation for online human-like social behavior learning. IEEE Trans. Evol. Comput. 21, 102–115. doi:10.1109/TEVC.2016.2577593

31. Zhang, Q., Wang, R., Yang, J., Ding, K., Li, Y., and Hu, J. (2017). Collective decision optimization algorithm: A new heuristic optimization method. Neurocomputing 221, 123–137. doi:10.1016/j.neucom.2016.09.068

32. Zhao, W., Wang, L., and Zhang, Z. (2019). Atom search optimization and its application to solve a hydrogeologic parameter estimation problem. Knowl. Based Syst. 163, 283–304. doi:10.1016/j.knosys.2018.08.030

Keywords

like-attracts-like optimizer, meta-heuristic algorithm, growing neural gas network, dynamic clustering, video robotics cluster control

Citation

Huang X, Tang B, Zhu M, Ma Y, Ma X, Tang L, Wang X and Zhu D (2023) Like-attracts-like optimizer-based video robotics clustering control design for power device monitoring and inspection. Front. Energy Res. 10:1030034. doi: 10.3389/fenrg.2022.1030034

Received: 28 August 2022

Accepted: 20 September 2022

Published: 09 January 2023

Volume: 10 - 2022

Edited by: Bin Zhou, Hunan University, China

Reviewed by: Yaxing Ren, University of Lincoln, United Kingdom; Yiyan Sang, Shanghai University of Electric Power, China

Copyright

© 2023 Huang, Tang, Zhu, Ma, Ma, Tang, Wang and Zhu.

This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Biao Tang, Biao_Tang@outlook.com

This article was submitted to Process and Energy Systems Engineering, a section of the journal Frontiers in Energy Research
