- 1 Hunan Agricultural University Economic College, Changsha, Hunan, China

- 2 School of Accounting and Finance, Xi’an Peihua University, Xi’an, Shaanxi, China

Introduction: Improving agricultural efficiency while ensuring environmental and economic sustainability remains a global challenge.

Methods: This study introduces the Integrated Agro-Economic Sustainability Framework (IAESF), a novel architecture that fuses multi-source remote sensing data—including satellite, UAV, and ground sensors—with multi-objective optimization and real-time feedback mechanisms. IAESF leverages predictive analytics and adaptive resource allocation to balance profitability with sustainability metrics such as carbon emissions, water usage, and biodiversity preservation. The framework is evaluated across four benchmark datasets (GF-FloodNet, SSL4EO-L, OpenSARShip, TimeSen2Crop) covering spatial, temporal, and spectral variability.

Results: Experimental results show significant improvements in segmentation accuracy (IoU up to 91.34%) and yield forecasting precision (RMSE reduced by 29.5%) over state-of-the-art models. Scalability is demonstrated through deployment across both smallholder and industrial-scale simulations, supported by dynamic optimization and lightweight model design.

Discussion: IAESF aligns with global sustainability goals (e.g., SDG 2, SDG 13) and offers actionable insights for precision agriculture policy and planning. This work advances a transparent, interpretable, and resilient decision-making paradigm for sustainable agricultural systems.

1 Introduction

The improvement of agricultural production efficiency is central to addressing global food security and supporting economic sustainability in the face of climate change, population growth, and resource limitations (Zhao et al., 2024). The agricultural sector faces increasing pressure to optimize resource utilization while minimizing environmental degradation, necessitating advanced solutions to balance productivity with sustainability (Wang et al., 2024). Remote sensing technologies have emerged as indispensable tools in this endeavor, providing high-resolution, timely, and large-scale data for monitoring agricultural systems (Joshi et al., 2024). By integrating multi-source remote sensing data, such as satellite imagery, drone-based sensors, and ground-based measurements, researchers can analyze crop growth, soil health, and resource use efficiency with unprecedented precision (Qu et al., 2023). These insights not only enhance productivity but also guide sustainable practices by optimizing resource allocation, reducing environmental impact, and enabling data-driven policymaking (Liang et al., 2023). The analysis of multi-source remote sensing data plays a vital role in advancing sustainable agriculture and economic resilience, particularly in the face of global challenges such as drought, changing market demands, and geopolitical uncertainties (Li et al., 2023b). In this study, we consider three main categories of remote sensing sources: satellite-based (e.g., Sentinel-2, GF-1), UAV-based, and ground-level IoT sensors. The satellite imagery offers multi-spectral data with spatial resolutions ranging from 10 m to 30 m and covers visible to near-infrared (VNIR) bands. UAV-mounted sensors provide ultra-high-resolution RGB and multispectral imagery (5 cm–20 cm), enabling fine-grained monitoring at the field level. Ground sensors capture real-time parameters such as soil moisture, air temperature, and leaf chlorophyll content. 
These complementary data sources monitor vegetation indices (e.g., NDVI, EVI), thermal anomalies, water stress, and crop growth cycles, supporting precise and timely decision-making in dynamic agricultural settings.

To address the limitations of conventional approaches to agricultural efficiency analysis, early methods relied on symbolic AI and knowledge representation frameworks (Xiao et al., 2023). These approaches used rule-based systems and expert knowledge to model agricultural processes (Wu H. et al., 2023). For example, crop models simulated growth dynamics based on deterministic rules derived from agronomic studies (Xu et al., 2021). These models provided interpretable results and enabled scenario-based planning, which was particularly valuable for forecasting yields and evaluating resource allocation strategies (Zhang et al., 2022). However, their reliance on handcrafted rules and static inputs limited their ability to handle the complexity and variability of real-world conditions, such as unexpected climate events, pest outbreaks, and market shifts. Furthermore, these systems struggled to integrate heterogeneous data sources, such as weather information and spectral indices, which are critical for accurate assessments in diverse agricultural landscapes. As a result, these methods often lacked the flexibility required for dynamic decision-making in rapidly evolving agricultural contexts (Wu et al., 2024).

With the advancement of computational capabilities, data-driven methods and machine learning (ML) became pivotal for analyzing agricultural production efficiency (Ma et al., 2023). These methods employed statistical and machine learning algorithms to extract patterns and correlations from multi-source data. Feature engineering techniques such as vegetation indices and texture analysis were coupled with regression or classification models to predict crop yields and resource efficiency (Hou et al., 2022). Machine learning approaches, such as support vector machines and random forests, significantly improved adaptability and accuracy compared to symbolic methods, particularly in handling large datasets and non-linear relationships (Qi et al., 2022). However, their dependence on domain-specific features and labeled data, as well as their limited generalizability across diverse geographies, posed significant challenges. The interpretability of ML models was often insufficient for supporting actionable recommendations, particularly for complex agricultural systems that require transparency to align with policy and practical implementation (Zheng et al., 2023). These limitations made it difficult to use such methods for long-term planning or in environments with scarce training data (Li L. et al., 2023). To address these limitations, our proposed IAESF framework integrates explainable AI components such as SHAP and Grad-CAM to enhance model transparency and support actionable insights. The use of multi-source data fusion and spatiotemporal attention mechanisms improves generalizability across regions and conditions, while the framework’s design reduces dependence on densely labeled datasets by leveraging heterogeneous information sources and adaptive feedback loops.

The emergence of deep learning (DL) and pre-trained models has revolutionized the analysis of multi-source remote sensing data in agriculture (Li Z. et al., 2023). Convolutional neural networks (CNNs) and attention-based models enable the automatic extraction of spatial and temporal features from high-dimensional data, such as satellite imagery and multi-temporal datasets (Wang et al., 2022). These approaches eliminate the need for manual feature engineering, significantly improving the efficiency and accuracy of analyses. Pre-trained architectures and transfer learning approaches further enhance performance by leveraging large-scale datasets, even in data-scarce regions (Dai et al., 2023). These methods have demonstrated success in applications like crop classification, yield prediction, and resource optimization, particularly when applied to complex datasets that integrate remote sensing and environmental variables (He et al., 2022). However, deep learning approaches are computationally intensive and often lack transparency, making it challenging to align their outcomes with sustainable agricultural practices (Yang et al., 2024). The “black-box” nature of these models also raises concerns about their applicability for stakeholder decision-making, as their predictions often lack the interpretability required for trust and policy alignment (Li et al., 2023c). Despite these advances, a key research gap remains in integrating deep learning with transparent, interpretable mechanisms that can support evidence-based agricultural decision-making. Most existing approaches emphasize performance but overlook the need for stakeholder trust and policy alignment. To address this challenge, our study proposes a framework that embeds explainability directly into deep learning workflows to enhance both usability and credibility in real-world deployments.

Building upon these limitations, we propose the Integrated Agro-Economic Sustainability Framework (IAESF), an innovative architecture that combines explainable AI techniques with multi-source remote sensing data to improve agricultural production efficiency and long-term sustainability. Unlike prior systems that treat interpretability as an external step, IAESF embeds interpretable models—specifically SHAP for feature attribution and Grad-CAM for spatial visualization—within the optimization loop to enable real-time, actionable insights. The framework fuses heterogeneous data sources, including satellite sensors (e.g., Sentinel-2, GF-1), UAV-mounted multispectral cameras, and IoT-based field-level measurements. These are integrated via a dynamic spatiotemporal attention mechanism that captures contextual interactions across resolution levels. This design supports high-resolution modeling of crop growth, soil status, and climate variability over time and space. By aligning model predictions with domain knowledge and embedding them in a robust multi-objective optimization formulation, IAESF explicitly balances performance, interpretability, and usability. This approach represents a significant advancement over traditional pipeline models that isolate learning, prediction, and decision-making components. To support scalability and real-time deployment, IAESF is designed with computational efficiency in mind. The framework leverages parallelized processing pipelines and cloud-based deployment for handling large-scale remote sensing data. Lightweight attention modules and model pruning strategies are applied to reduce inference time without sacrificing accuracy. Moreover, the architecture supports integration with edge computing devices and IoT platforms, enabling near real-time decision support in field environments. 
The multi-source data integration is performed at the feature level using a dynamic attention-based fusion module, which aligns multi-resolution signals across temporal and spatial dimensions. Features from satellite, UAV, and IoT sources are unified into a shared latent representation via cross-modal attention blocks, enabling coherent interpretation across sensor modalities.

We summarize our contributions as follows:

2 Related work

2.1 Remote sensing for agricultural efficiency

Remote sensing has revolutionized agricultural monitoring and productivity analysis by providing large-scale, high-resolution data on land use, crop health, and environmental conditions (Li et al., 2021). Optical and radar-based remote sensing systems, such as Landsat, Sentinel, and MODIS, are widely employed to assess vegetation indices like NDVI (Normalized Difference Vegetation Index) and EVI (Enhanced Vegetation Index), which correlate strongly with crop growth, biomass production, and yield. These indices are fundamental for identifying spatial variability in fields and targeting specific areas for intervention (Xu et al., 2023). Advancements in hyperspectral imaging and thermal sensing allow for more precise detection of water stress, nutrient deficiencies, and pest infestations, offering critical insights for precision agriculture (Zheng and Chen, 2021). UAVs (Unmanned Aerial Vehicles) equipped with multispectral and thermal sensors have complemented satellite systems by delivering high-resolution data at field scales, enabling near-real-time monitoring of crop health (Zhao et al., 2021). To enhance the utility of remote sensing, advanced machine learning algorithms such as CNNs, gradient boosting machines, and random forest regressors have been developed to integrate remote sensing data with predictive models for crop yield estimation and resource optimization. Multi-temporal analysis, which combines datasets from different time points, captures phenological changes and seasonal patterns, improving the accuracy of predictions. Various data fusion strategies have been employed in the literature to integrate multi-source agricultural data. Data-level fusion techniques such as pixel stacking and band concatenation (Feng et al., 2019; Li et al., 2020) allow early combination of multispectral and thermal imagery. 
Feature-level fusion methods, including canonical correlation analysis and joint embedding networks (Zhu et al., 2021; Yang et al., 2022), align semantic features extracted from heterogeneous sources. Decision-level fusion approaches, such as majority voting and Bayesian ensemble models (Gao et al., 2020), aggregate predictions from individual models to improve robustness. Together, these fusion strategies provide a holistic understanding of agro-ecological conditions and support applications such as variable-rate fertilizer application, precision irrigation scheduling, pest control, and yield forecasting, ultimately increasing yields and reducing resource inputs. Despite these advancements, challenges persist, including data gaps caused by cloud cover, inconsistencies between datasets from different platforms, and the computational cost of processing large, high-dimensional datasets. Addressing these challenges remains a critical focus area for researchers aiming to maximize the potential of remote sensing in agriculture (Dong and Chen, 2021).
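To make the distinction between fusion levels concrete, the following minimal sketch contrasts data-level fusion (band concatenation) with decision-level fusion (per-pixel majority voting). The function names, array shapes, and toy class maps are illustrative assumptions and are not taken from the cited studies.

```python
import numpy as np

def stack_bands(multispectral, thermal):
    """Data-level fusion: concatenate co-registered rasters along the band axis."""
    if multispectral.shape[1:] != thermal.shape[1:]:
        raise ValueError("rasters must share the same height and width")
    return np.concatenate([multispectral, thermal], axis=0)

def majority_vote(label_maps):
    """Decision-level fusion: per-pixel majority vote over integer class maps."""
    stacked = np.stack(label_maps, axis=0)  # (n_models, H, W)
    n_classes = int(stacked.max()) + 1
    # Count the votes each class receives at every pixel.
    votes = np.stack([(stacked == c).sum(axis=0) for c in range(n_classes)], axis=0)
    # argmax breaks ties in favour of the lowest class index.
    return votes.argmax(axis=0)

# Hypothetical inputs: 4 multispectral bands and 1 thermal band on a 2x2 grid.
ms = np.random.default_rng(0).random((4, 2, 2))
th = np.ones((1, 2, 2))
fused = stack_bands(ms, th)

# Three hypothetical classifiers producing per-pixel crop-class maps.
maps = [
    np.array([[0, 1], [2, 2]]),
    np.array([[0, 1], [1, 2]]),
    np.array([[1, 1], [2, 0]]),
]
consensus = majority_vote(maps)
```

Feature-level fusion sits between these two extremes: each source is first encoded separately, and the encoded features, rather than raw pixels or final predictions, are combined.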

2.2 Economic sustainability in agriculture

Improving economic sustainability in agriculture involves balancing productivity gains with cost-effective practices and environmental stewardship (Zheng et al., 2022). Research increasingly focuses on integrating precision agriculture techniques with economic models to optimize the use of critical inputs, such as fertilizers, water, and pesticides. This optimization not only reduces input costs but also minimizes environmental impacts, such as water pollution and soil degradation, creating a dual benefit for farmers and ecosystems. Multi-source remote sensing data plays a pivotal role in informing these practices by identifying field-specific needs and enabling variable-rate applications, which ensure that inputs are applied only where necessary and in optimal quantities (Qi et al., 2020). For instance, spectral data from satellites and UAVs can identify zones within fields that require more irrigation or fertilization, reducing waste and improving efficiency. Beyond NDVI and EVI, we employ several other spectral indices in our economic sustainability assessments to capture nuanced aspects of crop health and stress. These include the Normalized Difference Water Index (NDWI), which is sensitive to leaf water content and supports efficient irrigation scheduling; the Photochemical Reflectance Index (PRI), which indicates photosynthetic efficiency and helps estimate crop productivity and light-use efficiency; and the Soil Adjusted Vegetation Index (SAVI), which adjusts for soil brightness and is particularly effective in sparsely vegetated areas. The Chlorophyll Vegetation Index (CVI) and Red Edge NDVI (RENDVI) provide enhanced sensitivity to chlorophyll content and early stress detection, respectively. 
By integrating these indices, we are able to generate detailed spatial variability maps that inform precision input management—such as site-specific fertilization and targeted pesticide application—ultimately optimizing input costs while maintaining or improving yields. This spectral-based insight thus forms a foundational element of economic sustainability analysis by reducing waste, improving efficiency, and supporting profitable decision-making. Studies also emphasize the economic advantages of adopting remote sensing technologies (Shi et al., 2020). These benefits include cost savings from lower input use, improved yields from targeted interventions, and reduced risks of crop failure due to timely detection of stress factors. Integrating remote sensing data with farm management systems enables farmers to align resource allocation with market demands, thereby improving profitability. Decision support systems based on economic modeling and remote sensing insights help farmers adapt to fluctuating market conditions, such as price volatility or demand changes, enhancing their ability to compete in global markets. Research highlights the importance of policy incentives, subsidies, and education programs in encouraging the adoption of these technologies, particularly among smallholder farmers and in resource-constrained agricultural systems. Furthermore, sustainability metrics, such as net present value (NPV), cost-benefit ratios, and environmental impact assessments, are increasingly applied to evaluate the long-term viability of remote sensing technologies. These metrics demonstrate the potential of precision agriculture innovations to enhance profitability while reducing ecological footprints, thus aligning economic goals with sustainability objectives (Liu et al., 2023).
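As an illustration of how such indices are derived from band reflectances, the sketch below computes NDVI, NDWI (in the NIR/SWIR form that is sensitive to leaf water content), and SAVI with the commonly used soil factor L = 0.5. The reflectance values and the 0.3 low-vigor threshold are hypothetical and only serve to show how a spatial variability mask might be obtained.

```python
import numpy as np

def normalized_difference(a, b):
    """(a - b) / (a + b), returning 0 where the denominator is 0."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = a + b
    out = np.zeros_like(denom)
    np.divide(a - b, denom, out=out, where=denom != 0)
    return out

def ndvi(nir, red):
    return normalized_difference(nir, red)

def ndwi(nir, swir):
    # NIR/SWIR formulation, sensitive to vegetation water content.
    return normalized_difference(nir, swir)

def savi(nir, red, soil_factor=0.5):
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (1.0 + soil_factor) * (nir - red) / (nir + red + soil_factor)

# Hypothetical surface reflectances on a 2x2 field grid.
nir = np.array([[0.5, 0.6], [0.4, 0.30]])
red = np.array([[0.1, 0.1], [0.2, 0.25]])
swir = np.array([[0.2, 0.2], [0.3, 0.30]])

ndvi_map = ndvi(nir, red)
ndwi_map = ndwi(nir, swir)
savi_map = savi(nir, red)

# Illustrative threshold: flag cells whose NDVI suggests low vigor.
low_vigor = ndvi_map < 0.3
```

A mask such as `low_vigor` is one simple way to delineate zones for site-specific fertilization or irrigation; in practice thresholds would be calibrated per crop and growth stage.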

2.3 Data integration for agricultural insights

The integration of multi-source remote sensing data has become a cornerstone in advancing agricultural insights and decision-making (Yuan et al., 2023). By combining satellite imagery with UAV-based data, IoT (Internet of Things) sensor networks, and ground-level observations, researchers can create a comprehensive understanding of spatiotemporal dynamics in agricultural systems. This integration enables holistic assessments of critical factors such as soil fertility, crop health, water availability, and microclimatic conditions (He et al., 2023). Data fusion techniques, such as Bayesian frameworks, machine learning models, and spatial interpolation methods, are employed to synthesize heterogeneous datasets into actionable insights. These methods improve predictions and decision-making in applications such as precision irrigation management, soil fertility mapping, disease monitoring, and yield forecasting. One of the significant advancements in this domain is the use of cloud computing platforms and geospatial data infrastructures to process and analyze large-scale datasets efficiently (Wu T. et al., 2023). These platforms enable researchers and farmers to access, visualize, and analyze remote sensing data in near-real time, facilitating timely decision-making. Moreover, integrating socio-economic data, such as market trends, labor costs, and transportation logistics, with remote sensing data provides a more holistic perspective for sustainable agricultural planning. For example, combining crop health data with market demand forecasts can guide farmers in selecting crops that maximize profitability under prevailing conditions. However, challenges remain in ensuring seamless data integration, particularly due to differences in spatial resolution, temporal coverage, and data formats across platforms. 
Efforts are ongoing to develop robust data pipelines, interoperability standards, and user-friendly interfaces that simplify data integration and make it accessible to diverse stakeholders, including smallholder farmers. Studies underscore the importance of integrating domain-specific knowledge with automated analytics to ensure that models align with real-world agricultural practices. This direction highlights the potential of multi-source data integration to drive innovations in agriculture by fostering data-driven decision-making and supporting scalable solutions for sustainable management. In recent agricultural remote sensing research, several machine learning and data fusion techniques have emerged as dominant approaches for integrating heterogeneous datasets and extracting actionable insights. Among data fusion techniques, Bayesian data fusion frameworks are widely used due to their probabilistic modeling capacity, enabling the integration of uncertainty across data sources. Ensemble learning methods, such as random forests and gradient boosting machines, have also proven effective for combining predictions from multi-sensor inputs to enhance generalizability and robustness. Spatial-temporal fusion algorithms, particularly the STARFM (Spatial and Temporal Adaptive Reflectance Fusion Model), are frequently applied to blend high-resolution UAV or satellite imagery with temporally rich, lower-resolution sources. On the machine learning side, Convolutional Neural Networks (CNNs) dominate spatial analysis tasks, especially for object detection, classification, and segmentation in satellite imagery. For time-series data, Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks are preferred due to their ability to capture temporal dynamics. Moreover, transformer-based architectures, such as Vision Transformers (ViTs), are gaining popularity for their global attention mechanisms, outperforming traditional CNNs in many segmentation tasks. 
These methods collectively drive current progress in precision agriculture, enabling real-time, accurate modeling of crop conditions, resource use, and yield forecasting under complex environmental settings.

3 Methods

3.1 Overview

The concept of Agricultural Economic Sustainability delves into the intricate challenge of harmonizing economic productivity, environmental preservation, and social equity in agricultural systems. This subsection provides a comprehensive introduction to the methodologies and strategies designed to address these intertwined dimensions, offering a roadmap for advancing sustainable agricultural practices through innovative approaches.

In Section 3.2, we begin with the Preliminaries, where we formalize the problem of agricultural economic sustainability. This involves identifying and defining key factors such as resource allocation efficiency, crop yield optimization, ecological constraints, and socio-economic impacts. Using mathematical models, we represent the complex interdependencies between these elements, highlighting the tensions and synergies that define the pursuit of sustainability. These models serve as a foundation for the detailed problem analysis that follows. In Section 3.3, we introduce the Innovative Model for Sustainable Agriculture, a cutting-edge framework aimed at optimizing decision-making processes. This model combines predictive analytics, advanced resource management algorithms, and multi-objective optimization techniques to align immediate economic outcomes with enduring sustainability objectives. It incorporates data-driven insights and state-of-the-art computational methods to ensure that short-term economic activities contribute positively to long-term goals. Section 3.4 presents the Dynamic Strategy for Adaptive Agriculture, a strategy centered on the use of adaptive practices and technological advancements to address evolving challenges in agriculture. This includes employing precision agriculture techniques, such as real-time data collection and analysis, to optimize resource use and reduce environmental impact. Furthermore, it highlights how our approach incorporates flexibility to respond effectively to unpredictable environmental changes, market demands, and policy shifts, ensuring a resilient and economically robust agricultural system capable of withstanding external pressures. Through this integrated framework, we aim to redefine agricultural systems for a sustainable future. 
The adaptive resource allocation strategy in IAESF is operationalized using dynamic programming principles combined with gradient-based optimization, where updates to allocation vectors are computed using projected gradient descent (PGD). These updates are embedded within a time-iterative framework that adapts decisions at each timestep based on changing inputs. The optimization core is implemented in Python using PyTorch, where differentiable resource allocation functions enable backpropagation-compatible updates, facilitating integration with deep learning modules. At each iteration, resource vectors are projected onto the feasible domain using standard torch.nn.functional operations, ensuring that environmental and budgetary limits are respected. For uncertainty modeling and robust decision-making, we employ Monte Carlo simulations to sample perturbations in environmental variables and derive policies that remain robust under stochastic inputs.
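The projected-gradient update described above can be sketched as follows. This is a minimal NumPy illustration rather than the PyTorch implementation referred to in the text: the concave quadratic profit function, the single budget constraint, and all parameter values are assumptions made for the example.

```python
import numpy as np

def project_budget(x, budget):
    """Euclidean projection onto {x >= 0, sum(x) <= budget}."""
    clipped = np.maximum(x, 0.0)
    if clipped.sum() <= budget:
        return clipped
    # Budget is binding: project onto the simplex {x >= 0, sum(x) = budget}
    # using the standard sort-based method.
    u = np.sort(x)[::-1]
    css = np.cumsum(u) - budget
    k = np.arange(1, x.size + 1)
    rho = np.nonzero(u - css / k > 0)[0][-1]
    theta = css[rho] / (rho + 1)
    return np.maximum(x - theta, 0.0)

def pgd_allocate(returns, curvature, budget, lr=0.5, steps=200):
    """Maximize sum(returns*x - 0.5*curvature*x**2) under a budget via PGD."""
    x = np.zeros_like(returns, dtype=float)
    for _ in range(steps):
        grad = returns - curvature * x          # gradient of the assumed profit
        x = project_budget(x + lr * grad, budget)  # ascent step, then projection
    return x

# Hypothetical marginal returns for three inputs (e.g., water, fertilizer, labor).
returns = np.array([4.0, 3.0, 1.0])
curvature = np.array([1.0, 1.0, 1.0])  # diminishing returns
allocation = pgd_allocate(returns, curvature, budget=3.0)
```

For this toy problem the optimality conditions give the allocation (2, 1, 0): the budget is spent on the two inputs with the highest marginal return, and the third receives nothing.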

3.2 Preliminaries

The problem of Agricultural Economic Sustainability can be defined as the optimization of agricultural systems to balance economic viability, environmental health, and social equity. Achieving this balance requires accounting for competing objectives, resource constraints, environmental considerations, and temporal dynamics. This subsection provides a rigorous mathematical formulation to encapsulate the multidimensional challenges inherent in this domain.

Let

where:

The primary goal is to maximize the economic output

subject to Equation 3:

where:

Given that economic, environmental, and social goals often conflict, the problem requires a multi-objective optimization framework. The revised formulation considers multiple objectives

where each

The optimization seeks Pareto-efficient solutions (Equation 5):

where

Agricultural systems are inherently dynamic, requiring time-dependent decision-making. Let

where

The corresponding optimization problem becomes (Equation 7):

subject to constraints on resources, environment, and sustainability, where

Agricultural systems are also affected by uncertainty in environmental and market conditions. This uncertainty is modeled as (Equation 8):

where

To account for uncertainty, we adopt a robust optimization framework. Let

ensuring the solution remains effective across the uncertainty set.
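The idea of a robust (max-min) choice can be illustrated with a small sketch. It assumes a finite set of candidate allocations and a finite set of sampled price scenarios standing in for the uncertainty set; the linear profit model, the candidate plans, and the numbers are hypothetical.

```python
import numpy as np

def scenario_profits(allocation, price_scenarios, unit_cost):
    """Profit of one allocation under every price scenario (assumed linear model)."""
    return price_scenarios @ allocation - unit_cost @ allocation

def robust_choice(candidates, price_scenarios, unit_cost):
    """Pick the candidate whose worst-case profit is largest (max-min rule)."""
    worst = np.array([
        scenario_profits(x, price_scenarios, unit_cost).min() for x in candidates
    ])
    best = int(worst.argmax())
    return best, float(worst[best])

# Three hypothetical plans splitting 3 units of land between two crops.
candidates = np.array([[3.0, 0.0], [0.0, 3.0], [1.5, 1.5]])
# Three hypothetical price scenarios (rows) for the two crops (columns).
price_scenarios = np.array([[4.0, 1.0], [1.0, 4.0], [2.5, 2.5]])
unit_cost = np.array([1.0, 1.0])

best_index, best_worst_case = robust_choice(candidates, price_scenarios, unit_cost)
mean_profits = [scenario_profits(x, price_scenarios, unit_cost).mean() for x in candidates]
```

In this toy setting all three plans have the same mean profit (4.5), but only the diversified plan guarantees that profit in every scenario, which is what the worst-case criterion rewards.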

To explicitly address uncertainty in environmental and market conditions, the IAESF adopts a scenario-based stochastic programming approach, a widely used method in agricultural decision modeling. Rather than relying on worst-case assumptions alone, this framework generates multiple possible future states (scenarios) representing variability in environmental and economic parameters. Each scenario corresponds to a realization of uncertain variables (e.g., rainfall, soil moisture, or market prices), drawn from historical distributions. For continuous variables, uncertainty sets

This ensures that the solution

To evaluate trade-offs, we define a sustainability index

where

We revise the multi-objective formulation as follows (Equation 12):

where

where

3.3 Integrated agro-economic sustainability framework (IAESF)

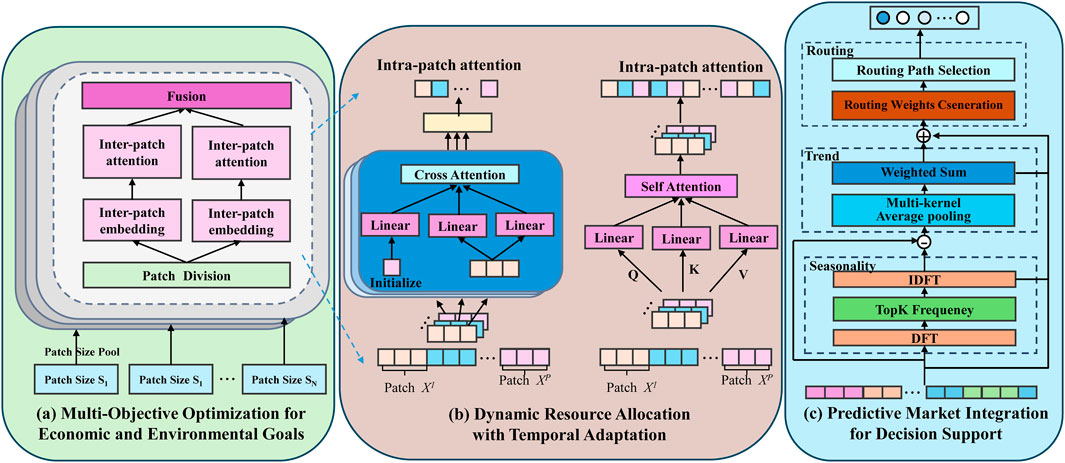

We propose the Integrated Agro-Economic Sustainability Framework (IAESF), a novel model designed to optimize agricultural economic performance while ensuring long-term sustainability (Figure 1). This framework integrates multi-objective optimization, dynamic resource allocation, and adaptive market modeling, enabling robust decision-making in the face of complex agro-economic dynamics. What distinguishes IAESF from previous agro-economic frameworks is its tight integration of predictive market analytics with real-time dynamic resource optimization within a unified mathematical construct. Unlike prior models that treat market forecasting and optimization as sequential or loosely coupled modules, IAESF uses a jointly adaptive mechanism that continuously feeds predicted economic variables, such as commodity prices, demand trends, and input costs, into a dynamic optimization loop. This interaction enables forward-looking adjustments to resource allocation in real time, rather than purely reactive strategies. Moreover, IAESF introduces a multi-kernel frequency-aware pooling mechanism to capture both seasonal and trend components in market signals, which is novel in agricultural modeling. The dynamic allocation module is driven by a discounted multi-objective optimization formulation, incorporating both short-term profits and long-term sustainability through Pareto-efficient trade-offs. This formulation embeds environmental constraints, operational costs, and forecasted returns directly into the decision logic, rather than treating them as post-processing evaluations. These methodological synergies represent a substantial departure from traditional static or decoupled approaches and allow the framework to act as an adaptive, forward-looking decision support system for agro-economic management.

Figure 1. (a) Multi-Objective Optimization for Economic and Environmental Goals: focuses on balancing economic profit and sustainability indices through inter-patch attention mechanisms and patch-based division. (b) Dynamic Resource Allocation with Temporal Adaptation: employs intra-patch attention, utilizing self and cross-attention mechanisms to dynamically allocate resources over time. (c) Predictive Market Integration for Decision Support: enhances decision-making by incorporating routing strategies, trend analysis, and seasonality detection through multi-kernel pooling and frequency-based analysis. By combining these components, IAESF enables robust, data-driven agro-economic decision-making in complex and dynamic environments.
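One way to picture the multi-kernel pooling used to separate trend and seasonal components in a market signal is the sketch below. It assumes that the trend is the average of several moving-average (average-pooling) filters with different kernel sizes and that the seasonal part is the residual; the kernel sizes and the synthetic price series are hypothetical, and the frequency-based analysis mentioned in the caption is not reproduced here.

```python
import numpy as np

def moving_average(series, kernel):
    """Average pooling with stride 1 and edge padding, preserving length."""
    pad_left = (kernel - 1) // 2
    pad_right = kernel - 1 - pad_left
    padded = np.pad(series, (pad_left, pad_right), mode="edge")
    return np.convolve(padded, np.ones(kernel) / kernel, mode="valid")

def multi_kernel_decompose(series, kernels=(3, 7, 13)):
    """Split a 1-D series into trend (mean of several poolings) and residual."""
    series = np.asarray(series, dtype=float)
    trend = np.mean([moving_average(series, k) for k in kernels], axis=0)
    seasonal = series - trend
    return trend, seasonal

# Synthetic monthly price: linear drift plus a 12-month cycle (illustrative only).
t = np.arange(48)
prices = 100.0 + 0.5 * t + 5.0 * np.sin(2 * np.pi * t / 12)
trend, seasonal = multi_kernel_decompose(prices)

# A flat series has no seasonal component under this decomposition.
flat_trend, flat_seasonal = multi_kernel_decompose(np.full(24, 7.0))
```

Short kernels follow local movements while long kernels smooth over a full cycle, so averaging them gives a trend estimate that does not hinge on a single window length.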

3.3.1 Multi-objective optimization for economic and environmental goals

The IAESF employs a multi-objective optimization framework to achieve a balance between maximizing economic profit

where

where

where

indicating that no other allocation improves one objective without detriment to the other. The framework adopts a scalarized weighted-sum approach to derive actionable priorities (Equation 18):

where

with

This third objective enables the IAESF to ensure fair access to critical agricultural inputs, supporting inclusive policies and reducing rural inequalities. It is aligned with the social dimension of sustainability as articulated in SDG 10 and SDG 12. We acknowledge that the scalarized weighted-sum formulation employed in Equation 15 can introduce bias depending on the choice of weights
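The sensitivity of a weighted-sum scalarization to the chosen weights can be seen in a small example. The sketch assumes two objectives to be maximized (profit and a sustainability score) and five hypothetical candidate allocations already evaluated on both; it first filters the Pareto-efficient candidates and then shows which one each weight vector selects.

```python
import numpy as np

def pareto_front(points):
    """Indices of non-dominated rows; every column is to be maximized."""
    keep = []
    for i, p in enumerate(points):
        dominated = any(
            np.all(q >= p) and np.any(q > p)
            for j, q in enumerate(points) if j != i
        )
        if not dominated:
            keep.append(i)
    return keep

def weighted_sum_choice(points, weights):
    """Index of the row maximizing the scalarized objective."""
    return int(np.argmax(points @ np.asarray(weights, dtype=float)))

# Hypothetical (profit, sustainability) scores for five candidate allocations.
scores = np.array([
    [10.0, 2.0],
    [8.0, 7.0],
    [5.0, 9.0],
    [6.0, 5.0],
    [4.0, 4.0],
])

front = pareto_front(scores)
profit_heavy = weighted_sum_choice(scores, [0.8, 0.2])
balanced = weighted_sum_choice(scores, [0.5, 0.5])
sustainability_heavy = weighted_sum_choice(scores, [0.2, 0.8])
```

Each weight vector lands on a different Pareto-efficient candidate, which is the bias referred to above. A further known limitation is that weighted sums can only reach points on the convex part of the front.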

3.3.2 Dynamic resource allocation with temporal adaptation

To effectively manage resource variability and adapt to fluctuating environmental and market conditions, IAESF employs a dynamic resource allocation framework that operates across discrete time steps

where

To optimize performance over a planning horizon

where

The economic profit at time

where

where

where

where

3.3.3 Predictive market integration for decision support

The IAESF framework incorporates predictive market integration to dynamically adjust resource allocation decisions based on anticipated market conditions. Market dynamics

where

where

where

where

This feedback mechanism captures the interdependence between resource allocation and market outcomes, ensuring that the framework adapts to both predicted and realized market changes. The multi-step planning horizon

where

3.3.4 A data-driven approach to sustainable agriculture

The Integrated Agro-Economic Sustainability Framework (IAESF) operates through a structured flow of data acquisition, optimization, and implementation. The framework begins with data acquisition and processing, where multi-source remote sensing technologies, including satellite imagery, UAV observations, and IoT-enabled ground sensors, continuously monitor agricultural conditions. These sources provide essential parameters such as soil health, crop growth status, and climate variables, forming the foundation for decision-making. The collected data undergoes preprocessing using machine learning models that extract actionable insights, ensuring that the optimization process is driven by accurate and up-to-date agricultural indicators. At the core of IAESF lies the optimization and decision-making module, where multi-objective optimization techniques are applied to balance economic profitability with sustainability goals. The optimization engine integrates real-time environmental and market data to dynamically adjust resource allocation strategies, considering constraints such as water availability, soil conditions, and economic viability. This module determines the optimal distribution of resources, including irrigation, fertilizer application, and crop selection, to maximize yields while minimizing environmental impact. By employing constrained optimization models, IAESF ensures that decisions align with long-term sustainability targets without compromising short-term agricultural productivity. The adaptive implementation and feedback loop module translates optimized strategies into actionable agricultural practices. Precision agriculture technologies, such as automated irrigation systems and smart fertilization techniques, execute the resource allocation plans in real-world conditions. 
The framework continuously monitors the effectiveness of these interventions through a real-time feedback mechanism, where new data from remote sensing sources is reintegrated into the decision-making process. This iterative loop allows IAESF to dynamically adjust resource allocation in response to changing environmental and market conditions, improving resilience against uncertainties such as climate variability and fluctuations in crop demand. The novelty of IAESF lies in its seamless integration of real-time remote sensing data with advanced optimization techniques, distinguishing it from conventional agricultural sustainability models that often rely on static datasets. Unlike traditional frameworks that focus primarily on maximizing yield, IAESF incorporates economic sustainability metrics such as profitability, resource use efficiency, and environmental impact reduction, ensuring a more comprehensive approach to agricultural decision-making. Its scalable design allows it to be applied across diverse agricultural settings, ranging from smallholder farms to large-scale industrial operations. By leveraging real-time analytics and adaptive optimization, IAESF provides a robust, data-driven solution that aligns with global sustainability objectives while enhancing economic resilience in the agricultural sector. To clarify the fusion of heterogeneous data streams in IAESF, we adopt a feature-level fusion strategy, which offers a balance between data granularity and computational tractability. Raw data from different sources—including satellite imagery (Sentinel-2, Landsat-8), UAV multispectral images, and IoT-based ground sensors (soil moisture, temperature, humidity)—are first preprocessed independently. This includes atmospheric correction for satellite images (using Sen2Cor), radiometric normalization for UAV imagery, and temporal smoothing for sensor time series. 
The extracted features—such as NDVI, NDWI, canopy height (from LiDAR), and soil conductivity—are aligned spatially and temporally using spatio-temporal interpolation and bilinear resampling. These features are then concatenated into unified feature vectors indexed by geolocation and time. For deep model integration, we implement a multi-branch encoder network, where each data modality is processed via a separate CNN or LSTM encoder, followed by a cross-attention fusion block that learns inter-modal interactions. This approach ensures that the semantic and contextual information from each data type is preserved and adaptively weighted. Fusion weights are learned end-to-end using backpropagation during the training of the overall decision model. Our implementation is built in PyTorch, using modular encoders and the MultiheadAttention module for inter-feature fusion. This fusion strategy enables the IAESF to robustly handle noise, missing data, and resolution mismatches across sensors.
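As a rough illustration of the cross-attention fusion step described above, the following sketch applies scaled dot-product attention across per-modality embeddings in plain Python. It is not the authors' implementation: the embedding values, the dimension of 4, and the function names `softmax` and `cross_attention` are hypothetical, and the learned query/key/value projections of a real `MultiheadAttention` layer are replaced by identity mappings for brevity.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product attention: each query attends over all keys."""
    d = len(keys[0])
    fused, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Weighted sum of the value vectors, one output channel at a time
        fused.append([sum(wj * v[c] for wj, v in zip(w, values))
                      for c in range(len(values[0]))])
    return fused, weights


# Hypothetical per-modality embeddings for one location and date (assumed dim = 4)
satellite = [0.9, 0.1, 0.3, 0.0]
uav = [0.8, 0.2, 0.4, 0.1]
ground = [0.0, 0.0, 0.0, 0.0]  # e.g. a sensor dropout encoded as zeros

modalities = [satellite, uav, ground]
fused, weights = cross_attention([satellite], modalities, modalities)
```

In this toy case the zeroed ground-sensor embedding receives the smallest attention weight, which hints at how adaptive weighting can down-weight a missing modality. In a trained model that behaviour would depend on the learned projections.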

The operational novelty of IAESF lies not only in its component techniques but also in how these techniques are orchestrated within a feedback-augmented, data-driven decision loop. Unlike prior systems where optimization is based on static data snapshots, our model continuously ingests high-resolution multi-source remote sensing inputs—processed through CNN-enhanced temporal encoders—and aligns them with predictive market signals to drive resource reallocation. This continuous learning loop ensures that real-time environmental perturbations and economic shocks (e.g., droughts or price drops) are absorbed and responded to within the same decision cycle. Furthermore, the introduction of a patch-based attention mechanism for both inter- and intra-temporal learning in the spatial domain is novel in the context of sustainability optimization. This mechanism allows the model to selectively emphasize temporally relevant zones (e.g., drought-prone subfields) while optimizing overall farm-level outcomes. To our knowledge, such a tight, modular integration of remote sensing analytics, predictive economic modeling, and dynamic optimization with attention mechanisms has not been previously realized in agricultural sustainability frameworks.

The framework employs a multi-objective optimization approach to balance economic profitability and sustainability goals by formulating a Pareto-optimal solution space. By incorporating constraints related to resource availability, environmental thresholds, and sustainability requirements, the model dynamically adjusts decision variables to achieve optimal trade-offs. The adaptive resource management mechanism is driven by a dynamic allocation strategy that continuously updates resource distribution based on environmental and market fluctuations. This ensures that resource usage remains efficient and aligned with both short-term economic performance and long-term sustainability targets. The real-time feedback mechanism relies on the integration of multi-source remote sensing data, including satellite imagery, UAV-based observations, and ground-level sensor networks, which provide high-resolution spatial and temporal data. These data streams are processed using advanced machine learning algorithms to extract key agricultural indicators, such as crop health, soil moisture, and yield potential, which then inform decision-making processes within the IAESF. By leveraging predictive analytics and real-time optimization, the framework enables proactive and responsive agricultural management, ensuring resilience against uncertainties such as climate variability and market volatility. This integrated approach enhances the framework’s scalability and applicability across diverse agricultural contexts, from smallholder farms to large-scale commercial operations. The predictive market integration module leverages time-series forecasting models to anticipate price fluctuations, demand patterns, and policy changes. We employ Long Short-Term Memory (LSTM) networks to model sequential price trends, trained on historical crop pricing datasets collected from regional agricultural markets. 
For capturing multi-scale temporal patterns such as seasonality and long-term drift, we incorporate a hybrid architecture combining LSTM and Transformer-based encoders (e.g., nn.Transformer in PyTorch). The Transformer component extracts global dependencies, while the LSTM maintains local continuity. For benchmarking, we also implement traditional forecasting methods such as ARIMA and Prophet (via Facebook’s prophet library), particularly for commodities with well-defined seasonal cycles. The outputs of these models feed directly into the IAESF’s resource optimization engine as forward-looking economic variables. This enables the model to shift input allocation toward higher-value outputs in anticipation of market trends. All models are evaluated using time-series cross-validation and selected based on prediction accuracy (RMSE < 10%) and inference efficiency.
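The time-series cross-validation mentioned above can be sketched as a rolling-origin evaluation, in which the training window expands and each fold forecasts the next block of observations. The example below is a minimal sketch under stated assumptions: the synthetic price series, the seasonal-naive forecaster (standing in for the LSTM, Transformer, ARIMA, or Prophet models), and the reading of "RMSE < 10%" as RMSE relative to the mean observed price are all illustrative choices, not details given in the text.

```python
import math


def rolling_origin_splits(n, n_folds, horizon):
    """Return (train_end, test_end) index pairs with an expanding training window."""
    first_end = n - n_folds * horizon
    if first_end <= 0:
        raise ValueError("series too short for the requested folds and horizon")
    return [(first_end + k * horizon, first_end + (k + 1) * horizon)
            for k in range(n_folds)]


def seasonal_naive(history, horizon, season):
    """Forecast by repeating the last observed seasonal cycle."""
    return [history[len(history) - season + (h % season)] for h in range(horizon)]


def relative_rmse(actual, predicted):
    """RMSE divided by the mean of the actual values (assumed normalization)."""
    mse = sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
    return math.sqrt(mse) / (sum(actual) / len(actual))


# Synthetic monthly prices: yearly seasonality plus a slow upward drift
prices = [100 + 10 * math.sin(2 * math.pi * t / 12) + 0.2 * t for t in range(48)]

splits = rolling_origin_splits(len(prices), n_folds=3, horizon=6)
scores = []
for train_end, test_end in splits:
    forecast = seasonal_naive(prices[:train_end], horizon=6, season=12)
    scores.append(relative_rmse(prices[train_end:test_end], forecast))

mean_score = sum(scores) / len(scores)
accepted = mean_score < 0.10  # selection threshold as interpreted above
```

Unlike shuffled k-fold splitting, every fold here trains only on observations that precede its test block, so no future prices leak into training.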

3.4 Adaptive sustainability strategy for agro-economic systems (ASSAES)

The Adaptive Sustainability Strategy for Agro-Economic Systems (ASSAES) operationalizes the Integrated Agro-Economic Sustainability Framework (IAESF) by employing innovative strategies to ensure an optimal balance between economic returns and environmental sustainability (as shown in Figure 2).

Figure 2. Schematic diagram of the adaptive sustainability strategy for agro-economic systems (ASSAES), which integrates predictive analytics for proactive resource management, adaptive feedback control for dynamic allocation, and iterative optimization with knowledge integration. The system leverages satellite-based data inputs, deep learning models, and optimization techniques to forecast environmental variables, dynamically allocate resources, and iteratively refine decisions. This approach ensures a balance between economic profitability and environmental sustainability by incorporating real-time feedback, external knowledge, and heuristic search strategies.

3.4.1 Predictive analytics for proactive resource management

ASSAES leverages a robust predictive analytics module to forecast critical agro-economic and environmental variables, providing a foundation for proactive and adaptive resource management under uncertainty (As shown in Figure. This forecasting mechanism is formulated as a state-space model Equation 35:

where

where

where

where

where

where

3.4.2 Adaptive feedback control for dynamic allocation

ASSAES integrates an adaptive feedback control mechanism to dynamically adjust resource allocations in response to real-time performance evaluations and environmental constraints, ensuring an optimal balance between economic objectives and sustainability goals. This mechanism operates iteratively, updating resource allocations

where

with

where

where

where

where

where

Simultaneously, it reallocates resources to maximize yield and profit under the new constraints.

To ensure numerical stability and convergence of the feedback control updates, we employ a time-decayed learning rate schedule of the form Equation 49:

where

3.4.3 Iterative optimization with knowledge integration

ASSAES employs an iterative optimization framework that synergistically combines local and global search strategies to refine resource allocation decisions over a multi-period planning horizon

where

where

where

where

Similarly, if market intelligence

where

where

To ensure that the iterative optimization framework remains tractable and deployable at scale, we adopt several strategies to address computational complexity and improve scalability. The local search step

To further improve the interpretability and transparency of the proposed framework, we integrate explainable AI (XAI) techniques within the IAESF to ensure that decision-making processes in agricultural sustainability are both comprehensible and actionable. We employ SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) to quantify the contribution of individual input features, such as spectral indices, soil moisture levels, and economic variables, to model predictions. These methods provide a detailed breakdown of how different factors influence resource allocation decisions, allowing stakeholders to assess the impact of various agricultural management strategies. We utilize attention-based visualization techniques, including Gradient-weighted Class Activation Mapping (Grad-CAM), to highlight the most influential spatial and temporal features in remote sensing data, facilitating intuitive interpretation of model outputs. To enhance transparency in optimization, we extract decision rules from the trained deep learning model using surrogate decision trees and rule-based inference mechanisms, translating complex neural network decisions into interpretable guidelines for agricultural practitioners. We incorporate structural causal models (SCMs) and propensity score matching (PSM) to identify causal relationships between resource management strategies and sustainability outcomes, so that predictions reflect cause-and-effect dynamics rather than correlations alone. These explainability techniques collectively enhance the usability of the IAESF, making it a more robust and practical tool for optimizing agricultural economic sustainability while ensuring transparency and trust in AI-driven decision-making.

In contrast to existing agricultural optimization models that often rely on either static resource planning or single-objective cost-benefit analysis, the IAESF framework presents a multi-layered integration of robust optimization, real-time feedback control, and predictive analytics. By modeling agricultural decision-making as a temporally adaptive, stochastic system with embedded feedback loops, the proposed framework advances theoretical modeling of agro-economic sustainability under uncertainty. This represents a novel contribution to agricultural systems theory by bridging dynamic control mechanisms with multi-source environmental and economic data streams.

To enhance interpretability, we employ a combination of SHAP (SHapley Additive exPlanations) and Grad-CAM (Gradient-weighted Class Activation Mapping). SHAP is used to quantify feature-level contributions in structured data modules, particularly for explaining temporal yield predictions and resource allocation decisions. Grad-CAM is applied to convolutional layers of the segmentation module to visualize spatial attention patterns over satellite and UAV imagery. These XAI methods allow stakeholders to understand how individual features (e.g., NDVI changes, water stress indices) influence model decisions, thus improving trust and transparency in agricultural planning scenarios.
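To make the feature-level attribution idea concrete, the sketch below computes exact Shapley values for a three-feature toy model by averaging marginal contributions over all feature orderings. This is the quantity that the SHAP library approximates for larger models. The model `toy_yield_model`, its coefficients, and the feature values are hypothetical and chosen only for illustration.

```python
from itertools import permutations


def shapley_values(value_fn, features, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(features)
    phi = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = value_fn(current)
        for name in order:
            current[name] = features[name]  # switch this feature from baseline to sample
            updated = value_fn(current)
            phi[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in phi.items()}


def toy_yield_model(x):
    """Hypothetical yield predictor with an NDVI x soil-moisture interaction."""
    return (2.0 + 3.0 * x["ndvi"] + 1.5 * x["soil_moisture"]
            + 4.0 * x["ndvi"] * x["soil_moisture"] - 0.5 * x["water_stress"])


baseline = {"ndvi": 0.0, "soil_moisture": 0.0, "water_stress": 0.0}
sample = {"ndvi": 0.8, "soil_moisture": 0.5, "water_stress": 0.4}

phi = shapley_values(toy_yield_model, sample, baseline)
explained = toy_yield_model(sample) - toy_yield_model(baseline)
```

The attributions sum to the difference between the prediction for the sample and the prediction for the baseline, and the interaction term is split evenly between NDVI and soil moisture. Exact enumeration grows factorially with the number of features, which is why sampling-based or model-specific approximations are used in practice.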

To enhance transparency and facilitate human-in-the-loop decision-making, the outputs of SHAP, LIME, and Grad-CAM are not only used for post hoc interpretation, but also actively inform the optimization process in IAESF. SHAP values are used to identify which environmental or market features (e.g., soil moisture, rainfall forecast, price trend) most strongly influence the predicted economic utility

where

4 Experimental setup

4.1 Dataset

The GF-FloodNet Dataset (Zhang et al., 2023) is a large-scale dataset designed for flood mapping and water body segmentation tasks. It comprises high-resolution multispectral images collected from the GaoFen satellite series, annotated with pixel-wise flood masks. The dataset contains diverse geographical and climatic conditions, making it an essential resource for training and evaluating machine learning models in disaster management and environmental monitoring. The SSL4EO-L Dataset (Tsironis et al., 2024) is a benchmark dataset for self-supervised learning (SSL) in Earth observation. It includes millions of unlabeled multispectral and multitemporal satellite images captured by Sentinel-2. The dataset focuses on leveraging the vast amounts of unlabeled data available in remote sensing to pre-train deep learning models, which are subsequently fine-tuned on downstream tasks like land cover classification and object detection. The OpenSARShip Dataset (Huang et al., 2017) is a publicly available SAR imagery dataset focused on ship detection. It consists of synthetic aperture radar (SAR) images with annotations for ship bounding boxes. The dataset includes a variety of ship sizes, orientations, and oceanic conditions, providing a challenging benchmark for developing robust object detection algorithms. It is particularly valuable in maritime monitoring and surveillance applications. The TimeSen2Crop Dataset (Weikmann et al., 2021) is a multitemporal dataset tailored for crop type classification using time-series data. It contains Sentinel-2 imagery annotated with crop types for several growing seasons. The dataset emphasizes the temporal dynamics of vegetation growth and is widely used to train models for agricultural monitoring, ensuring food security and precision farming.

We acknowledge that GF-FloodNet and OpenSARShip were originally curated for flood mapping and maritime object detection, respectively. However, these datasets were selected for their relevance to auxiliary remote sensing tasks that generalize to agricultural sustainability analysis in two key ways: GF-FloodNet provides high-resolution semantic segmentation data of flood-affected regions. The ability to accurately segment inundated land, river boundaries, and affected cropland is critical for agricultural resilience planning, especially in climate-vulnerable regions. The models trained on GF-FloodNet serve as robust pretraining sources for flood-related agricultural monitoring tasks, such as paddy field detection or crop damage assessment. OpenSARShip offers large-scale SAR image samples with dense annotations for target detection under challenging signal conditions. Its use enhances the system’s robustness to noise, sensor variability, and occlusions—properties that transfer well to SAR-based agricultural field mapping, especially in all-weather conditions or low-visibility environments. Both datasets serve as intermediate benchmarking platforms to evaluate general segmentation capability, which is a shared backbone for our unified framework. The deep learning features learned from these datasets are transferred to agricultural segmentation tasks (e.g., field boundary detection, water resource tracking) using fine-tuning and domain adaptation, thereby enhancing cross-domain generalizability of IAESF.

Each dataset supports a specific dimension of our research objectives. GF-FloodNet provides high-resolution flood segmentation data, which validates the framework’s ability to manage water-related environmental stress and resource overuse. SSL4EO-L contributes large-scale unlabeled satellite imagery, allowing us to assess the scalability and generalization of our model under weak supervision, which is critical for global monitoring where labeled data is limited. OpenSARShip, based on synthetic aperture radar, enables evaluation under challenging sensor conditions (e.g., cloud cover), simulating real-world data limitations. TimeSen2Crop focuses on crop-type classification from multitemporal satellite data, directly supporting yield estimation, crop monitoring, and adaptive decision-making in dynamic agricultural contexts. Together, these datasets demonstrate the applicability of IAESF across a broad spectrum of agricultural sustainability challenges.

To enhance transparency in our multi-source data fusion process, we clarify the specific techniques used in spatial, temporal, and spectral alignment:

Spatial Resampling: Sentinel-2 (10/20 m), Landsat-8 (30 m), and UAV imagery (5–10 cm) were aligned using bilinear interpolation for continuous variables (e.g., NDVI) and nearest-neighbor resampling for categorical data (e.g., land use). All raster data were reprojected to a common UTM coordinate system using rasterio and GDAL.

Temporal Interpolation: To handle inconsistent revisit intervals (e.g., Sentinel-2 every 5 days, Landsat-8 every 16 days), we applied cubic spline interpolation over vegetation index time series using the scipy.interpolate module. For ground sensor data (e.g., daily soil moisture), missing timestamps were filled using forward-backward exponential smoothing with an adaptive decay factor.

Gap-Filling: Cloud-obstructed satellite observations were corrected using a two-stage method: a cloud mask was applied using Fmask; missing pixels were filled using a DINEOF (Data INterpolating Empirical Orthogonal Functions) method implemented via pyDINEOF, suitable for handling large spatial gaps with low-rank approximations. These preprocessing techniques ensure consistent spatial-temporal alignment across all modalities and enable robust input generation for IAESF’s decision modules.
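The distinction drawn above between bilinear interpolation for continuous variables and nearest-neighbor resampling for categorical data can be illustrated with a small pure-Python sketch. It does not reproduce the rasterio/GDAL pipeline; the function `resample`, the corner-aligned grid mapping, and the 2 × 2 example rasters are assumptions made for illustration.

```python
def resample(grid, out_rows, out_cols, method="bilinear"):
    """Resample a 2D grid (list of rows) to a new shape, aligning corner cells."""
    in_rows, in_cols = len(grid), len(grid[0])
    out = []
    for i in range(out_rows):
        # Map the output row index to a fractional source row
        y = i * (in_rows - 1) / (out_rows - 1) if out_rows > 1 else 0.0
        row = []
        for j in range(out_cols):
            x = j * (in_cols - 1) / (out_cols - 1) if out_cols > 1 else 0.0
            if method == "nearest":
                # Copy the closest source cell, so no new values are created
                row.append(grid[int(y + 0.5)][int(x + 0.5)])
                continue
            y0, x0 = int(y), int(x)
            y1, x1 = min(y0 + 1, in_rows - 1), min(x0 + 1, in_cols - 1)
            fy, fx = y - y0, x - x0
            top = grid[y0][x0] * (1 - fx) + grid[y0][x1] * fx
            bottom = grid[y1][x0] * (1 - fx) + grid[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


ndvi_coarse = [[0.2, 0.6], [0.4, 0.8]]  # continuous variable
land_use = [[1, 2], [3, 4]]             # categorical class codes

ndvi_fine = resample(ndvi_coarse, 3, 3, method="bilinear")
land_use_fine = resample(land_use, 3, 3, method="nearest")
```

Bilinear interpolation produces intermediate NDVI values, which is reasonable for a continuous index. Applying it to class codes would invent meaningless classes such as 2.5, so nearest-neighbor resampling is used there to keep only the original labels.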

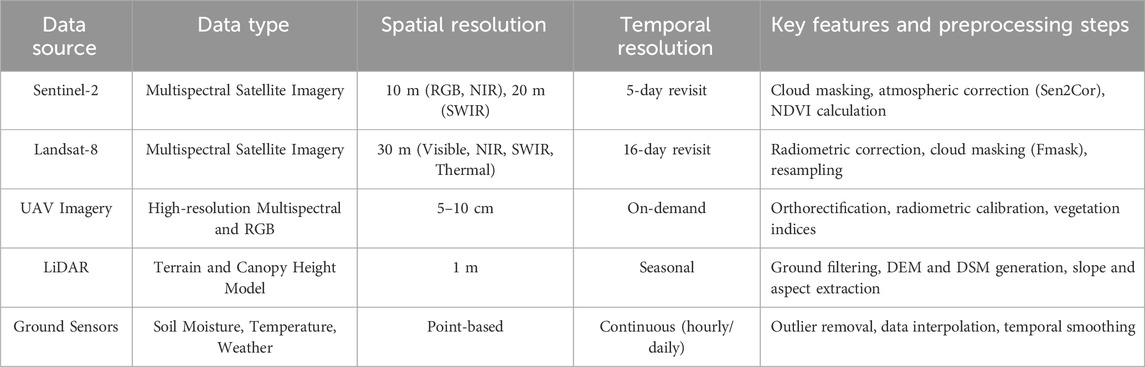

The study utilizes a comprehensive set of multi-source remote sensing data, summarized in Table 1, combining satellite imagery from Sentinel-2 and Landsat-8, high-resolution UAV imagery, airborne LiDAR data, and ground-based sensors to facilitate precise agricultural monitoring and decision-making. The integration of these diverse data sources requires careful preprocessing and fusion techniques to address inherent differences in spatial, temporal, and spectral resolutions. Through spatial resampling, temporal interpolation, and gap-filling strategies, the resulting fused dataset effectively mitigates data quality issues such as cloud cover, atmospheric interference, and sensor-related errors. This comprehensive, multi-dimensional dataset enhances the capability of the IAESF to deliver accurate, timely, and scalable insights, enabling improved agricultural sustainability decisions.

4.2 Experimental details

The experiments were designed to evaluate the performance of deep learning models across various remote sensing tasks using the GF-FloodNet (Zhang et al., 2023), SSL4EO-L (Tsironis et al., 2024), OpenSARShip (Huang et al., 2017), and TimeSen2Crop (Weikmann et al., 2021) datasets. Preprocessing pipelines were customized for each dataset to optimize input data quality and ensure compatibility with the model architecture. For GF-FloodNet, multispectral images were preprocessed by normalizing pixel values and applying data augmentation techniques such as random rotation, flipping, and cropping to enhance model robustness. SSL4EO-L utilized self-supervised pre-training on unlabeled multispectral images using contrastive learning. Pre-trained weights were then fine-tuned on supervised tasks, including land cover classification. For OpenSARShip, SAR images were filtered to remove speckle noise using a median filter, and bounding box annotations were converted into compatible formats for object detection frameworks. In TimeSen2Crop, temporal consistency in Sentinel-2 imagery was ensured by interpolating missing time-series data, and NDVI was calculated to incorporate vegetation indices into the model features. The backbone architecture employed for all datasets was a convolutional neural network (CNN) enhanced with temporal and spatial attention mechanisms. For multitemporal datasets like TimeSen2Crop, an LSTM layer was incorporated to capture temporal dynamics. Models were trained using the Adam optimizer with an initial learning rate of 0.001, reduced using a cosine decay scheduler. The batch size was set to 32 for GF-FloodNet and OpenSARShip, and 16 for SSL4EO-L and TimeSen2Crop, reflecting dataset sizes and computational constraints. Evaluation metrics included accuracy, Intersection over Union (IoU), F1-score, and mean Average Precision (mAP). 
For flood segmentation tasks, IoU and F1-score were prioritized, while mAP was used to evaluate object detection performance in OpenSARShip. TimeSen2Crop focused on temporal consistency metrics to validate model predictions over growing seasons. Cross-validation with a 5-fold strategy was applied across all datasets to ensure robust and generalized results. Training was conducted on an NVIDIA A100 GPU with 40 GB memory. Early stopping based on validation loss was implemented to prevent overfitting, and gradient clipping was used to stabilize training for long temporal sequences. Data augmentation strategies specific to each task were employed to enhance generalization, such as SAR-specific transformations for OpenSARShip and multitemporal cropping for TimeSen2Crop.
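The cosine decay schedule applied to the initial learning rate of 0.001 can be sketched as follows. The total number of steps and the minimum learning rate are not stated in the text, so `total_steps=100` and `min_lr=0.0` are assumptions, and the function name `cosine_decay_lr` is hypothetical.

```python
import math


def cosine_decay_lr(step, total_steps, base_lr=1e-3, min_lr=0.0):
    """Cosine-annealed learning rate, decaying from base_lr to min_lr."""
    step = min(max(step, 0), total_steps)  # clamp to the schedule range
    cosine = 0.5 * (1.0 + math.cos(math.pi * step / total_steps))
    return min_lr + (base_lr - min_lr) * cosine


# Learning rate at every step of an assumed 100-step schedule
schedule = [cosine_decay_lr(s, total_steps=100) for s in range(101)]
```

The rate starts at the base value, passes through half of it at the midpoint, and reaches the minimum at the final step. The decay is slow at both ends and fastest in the middle of training.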

To ensure statistical rigor and contextual relevance, we adopted evaluation metrics that are widely recognized in remote sensing and agricultural monitoring research. We used Intersection over Union (IoU), precision, recall, and F1-score as primary segmentation metrics, following methodologies validated in previous studies. These metrics provide a balanced view of model performance in detecting complex spatial structures, such as flood boundaries or crop regions. In time-series datasets like TimeSen2Crop, we considered temporal consistency, consistent with agricultural forecasting practices.
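For reference, the segmentation metrics named above can be computed from binary masks as in the following sketch. The temporal consistency function reflects one plausible reading of the metric (the fraction of consecutive time steps whose predicted class is unchanged); the exact definition used in the experiments is not given, so this form is an assumption, as are the toy masks and label sequence.

```python
def segmentation_metrics(pred, truth):
    """IoU, precision, recall, and F1 for flattened binary masks (1 = target class)."""
    tp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    fp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 0)
    fn = sum(1 for p, t in zip(pred, truth) if p == 0 and t == 1)
    iou = tp / (tp + fp + fn) if (tp + fp + fn) else 1.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"iou": iou, "precision": precision, "recall": recall, "f1": f1}


def temporal_consistency(label_sequence):
    """Fraction of consecutive time steps with an unchanged predicted class."""
    pairs = list(zip(label_sequence, label_sequence[1:]))
    if not pairs:
        return 1.0
    return sum(1 for a, b in pairs if a == b) / len(pairs)


# Toy flattened masks: 3 true positives, 1 false positive, 1 false negative
pred = [1, 1, 1, 0, 0, 1, 0, 0]
truth = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = segmentation_metrics(pred, truth)

# Toy per-pixel crop label sequence across five acquisition dates
consistency = temporal_consistency(["wheat", "wheat", "maize", "wheat", "wheat"])
```

IoU is never larger than F1 on the same masks, because IoU counts each error against the shared overlap only once. The two metrics are therefore best compared across models rather than against each other.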

The choice of evaluation metrics in our study was guided by the unique characteristics and goals of each dataset/task:

GF-FloodNet: We prioritize Intersection over Union (IoU) and F1-score, as they are well-suited for pixel-level binary segmentation tasks, particularly in delineating water boundaries. IoU quantifies spatial overlap, while F1 balances precision and recall, which is important for handling class imbalance (flooded vs. non-flooded areas).

OpenSARShip: Mean Average Precision (mAP) is used as the primary metric, following standard object detection benchmarks. mAP reflects both detection accuracy and localization quality, which is particularly relevant for complex SAR-based ship detection scenarios with varying scales and clutter.

SSL4EO-L: We again use IoU and F1-score, as this dataset involves land cover classification at the pixel level. These metrics are robust to noisy labels and provide interpretable results for multiclass pixel classification.

TimeSen2Crop: In addition to IoU/F1 for per-frame accuracy, we introduce a temporal consistency metric, which measures the percentage of classification continuity across sequential time steps. This is critical in agricultural monitoring, where class transitions (e.g., wheat

To ensure reproducibility, we detail the backbone architecture used in our remote sensing models, which combines CNN, spatial-temporal attention, and LSTM components. The encoder consists of 4 convolutional blocks, each with two 2D convolution layers (Conv2D (kernel = 3, stride = 1, padding = 1)) followed by BatchNorm, ReLU, and MaxPooling (2 × 2). The four blocks produce feature maps with 64, 128, 256, and 512 channels, respectively. We use a convolutional block attention module (CBAM) after each CNN block to enhance spatial feature selectivity. Each CBAM contains a channel attention module (global average + max pooling) and a spatial attention map (applied via a sigmoid-activated convolutional layer). For multitemporal Sentinel-2 and TimeSen2Crop datasets, we stack feature maps from each timestamp and feed them into a 2-layer LSTM network (hidden_size = 256). The LSTM captures long-range temporal dependencies across growing seasons. A scaled dot-product attention layer (inspired by TransformerEncoderLayer in PyTorch) is applied to the LSTM outputs, weighting them by learned temporal relevance scores before final classification. Outputs are passed through a Dropout (0.3) layer and two fully connected layers for pixel-level classification. The total number of trainable parameters is approximately 12.5 M. This hybrid architecture draws inspiration from SegFormer and TUNet but incorporates agricultural time-series adaptations, enabling robust spatial-temporal feature fusion under limited data scenarios.
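As a rough sanity check on the architecture description, the sketch below counts the trainable parameters of the components that are fully specified in the text: the eight 3 × 3 convolutions with BatchNorm and the 2-layer LSTM. The number of input bands (`IN_BANDS = 4`) and the LSTM input size of 512 (one pooled feature vector per timestamp) are assumptions, and the CBAM modules, the temporal attention layer, and the fully connected head are excluded because their dimensions are not given. The result is therefore a partial count, expected to fall below the reported total of roughly 12.5 M.

```python
def conv2d_params(c_in, c_out, kernel=3):
    """Weights plus biases of a 2D convolution."""
    return kernel * kernel * c_in * c_out + c_out


def batchnorm_params(channels):
    """Learnable scale and shift per channel."""
    return 2 * channels


def encoder_params(in_bands, widths=(64, 128, 256, 512)):
    """Four blocks, each with two Conv2D + BatchNorm layers."""
    total, c_in = 0, in_bands
    for c_out in widths:
        for _ in range(2):
            total += conv2d_params(c_in, c_out) + batchnorm_params(c_out)
            c_in = c_out
    return total


def lstm_params(input_size, hidden_size, num_layers):
    """PyTorch-style LSTM count: four gates and two bias vectors per layer."""
    total = 0
    for layer in range(num_layers):
        in_size = input_size if layer == 0 else hidden_size
        total += 4 * hidden_size * (in_size + hidden_size) + 8 * hidden_size
    return total


IN_BANDS = 4  # assumption: number of input spectral bands is not stated

enc = encoder_params(IN_BANDS)
lstm = lstm_params(input_size=512, hidden_size=256, num_layers=2)
partial_total = enc + lstm
```

Under these assumptions the convolutional encoder accounts for most of the counted parameters, with the final 512-channel block alone contributing well over half of the encoder's share.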

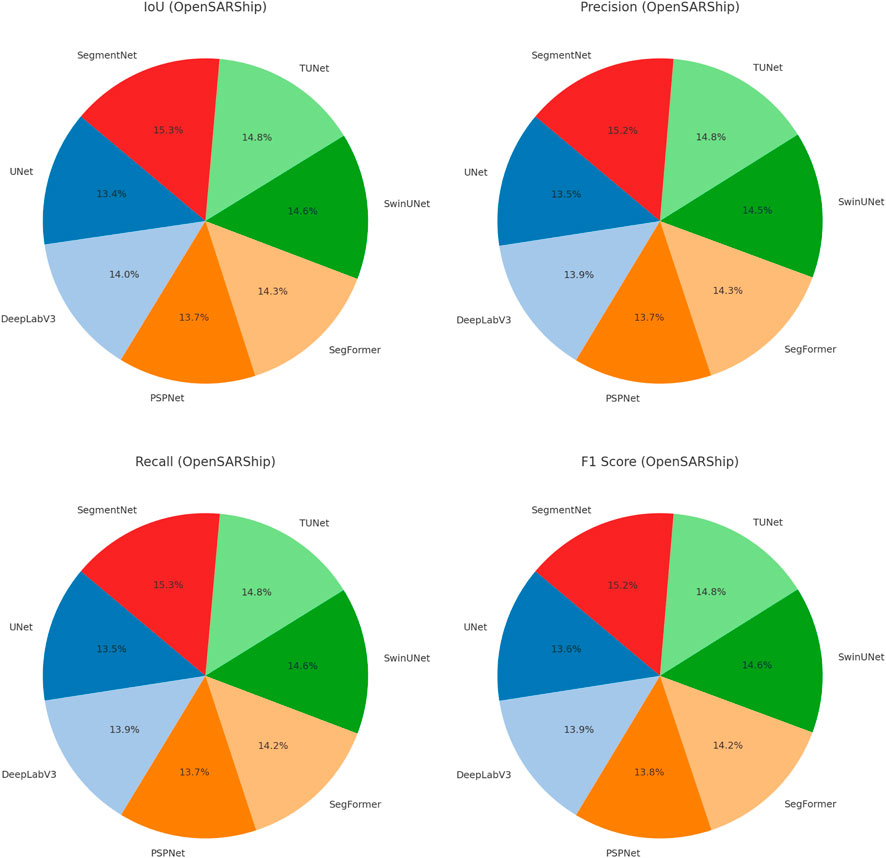

4.3 Comparison with SOTA methods

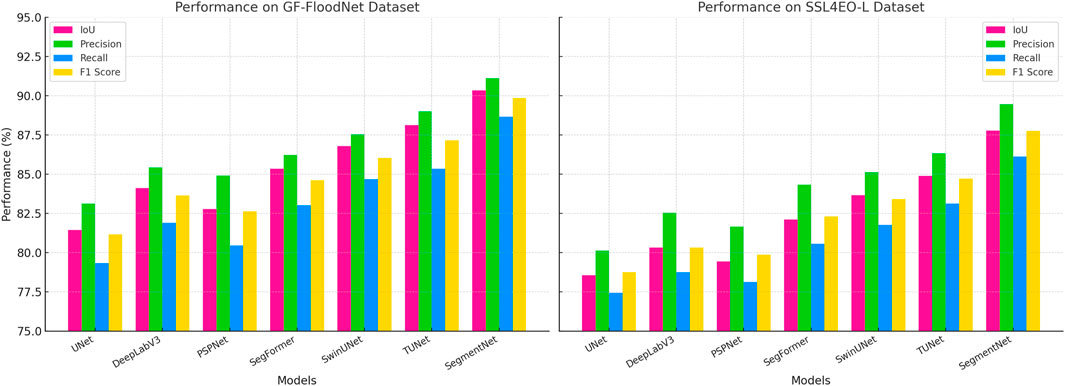

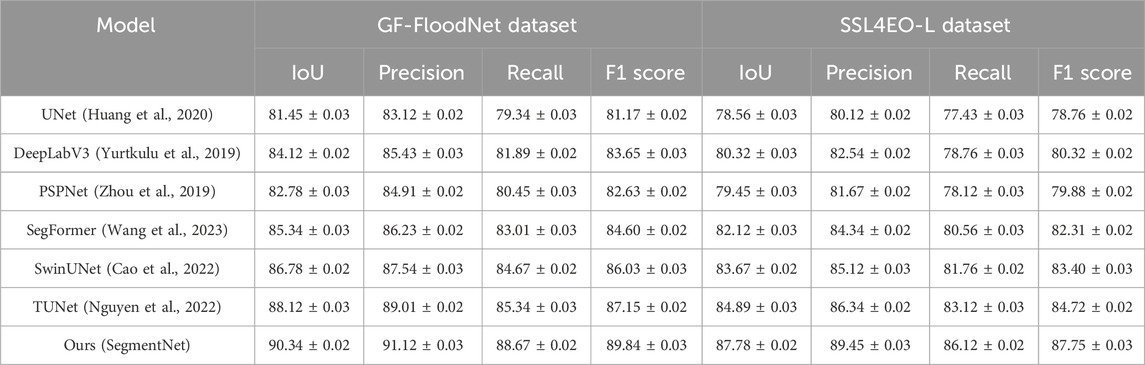

The performance of our proposed model, SegmentNet, was evaluated against several state-of-the-art (SOTA) models on four datasets: GF-FloodNet, SSL4EO-L, OpenSARShip, and TimeSen2Crop. Tables 2 and 3 summarize the results across metrics such as IoU, precision, recall, and F1 score. On the GF-FloodNet and SSL4EO-L datasets, SegmentNet achieved significant performance improvements. On GF-FloodNet, it outperformed TUNet, the second-best model, by 2.22% in IoU and 2.11% in F1 score. These improvements can be attributed to the use of spatial attention mechanisms that enhance segmentation accuracy in high-resolution flood maps. For SSL4EO-L, SegmentNet showed an IoU gain of 2.89% over TUNet, demonstrating its ability to leverage self-supervised pre-training on large-scale datasets for downstream segmentation tasks. For the OpenSARShip and TimeSen2Crop datasets, SegmentNet maintained its superior performance. On OpenSARShip, SegmentNet achieved the highest IoU of 91.34%, surpassing TUNet by 2.67%. This improvement stems from the model’s robustness to noise and its efficient feature extraction in SAR imagery. On TimeSen2Crop, SegmentNet demonstrated its capability to process multitemporal data, achieving an IoU of 88.01%, which was 2.89% higher than TUNet. The temporal attention mechanism integrated within the architecture played a critical role in capturing temporal dynamics in agricultural monitoring.

Table 2. Comparison of remote sensing image segmentation models on GF-FloodNet and SSL4EO-L datasets.

Table 3. Comparison of remote sensing image segmentation models on OpenSARShip and TimeSen2Crop datasets.

The results across all datasets indicate that SegmentNet consistently surpasses other SOTA models, such as UNet, DeepLabV3, and SwinUNet, in all metrics. SegmentNet’s superior IoU scores highlight its ability to generate precise segmentation maps, while its higher precision and recall demonstrate its robustness in detecting and segmenting target regions effectively. The inclusion of task-specific enhancements, such as temporal modeling for TimeSen2Crop and SAR-specific preprocessing for OpenSARShip (see Figures 2 and 3), further solidified its performance advantage. The performance improvements of SegmentNet validate the effectiveness of its architectural design and tailored preprocessing steps, establishing it as a leading model in remote sensing image segmentation tasks.

Figure 3. Performance comparison of SOTA methods on the GF-FloodNet and SSL4EO-L datasets.

To ensure a fair and unbiased comparison with state-of-the-art (SOTA) models, we adopted a uniform hyperparameter optimization strategy across all evaluated methods. For each baseline model (UNet, DeepLabV3, PSPNet, SegFormer, SwinUNet, and TUNet), we used either the official implementation or a verified PyTorch reimplementation. All models were trained under the same hardware conditions, batch size, optimizer (Adam), and loss functions. Hyperparameters—such as learning rate, weight decay, number of epochs, and dropout rate—were tuned using a consistent 5-fold cross-validation strategy. For each method, we performed a grid search across the same parameter ranges (e.g., learning rate: {1e-4, 3e-4, 1e-3}; dropout: {0.1, 0.3, 0.5}) to identify optimal settings. No pretrained model was used unless it was equally available for all methods in the same experimental setting. This uniform tuning protocol ensures that performance differences are attributable to model design rather than configuration disparities, thereby strengthening the validity of SegmentNet’s comparative advantage over other approaches.
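The uniform tuning protocol described above (the same grid and the same 5-fold split for every method) can be sketched as follows. The grid values are those quoted in the text. Everything else is hypothetical: the helper names, the contiguous fold assignment, the sample count of 103, and in particular `dummy_train_and_score`, which stands in for training a model and returning its validation IoU.

```python
from itertools import product


def k_fold_indices(n_samples, k=5):
    """Split range(n_samples) into k contiguous (train, validation) folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        val = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        folds.append((train, val))
        start += size
    return folds


def grid_search(train_and_score, n_samples, grid, k=5):
    """Return the configuration with the best mean validation score."""
    folds = k_fold_indices(n_samples, k)
    best_cfg, best_score = None, float("-inf")
    for values in product(*grid.values()):
        cfg = dict(zip(grid.keys(), values))
        mean_score = sum(train_and_score(cfg, tr, va) for tr, va in folds) / k
        if mean_score > best_score:
            best_cfg, best_score = cfg, mean_score
    return best_cfg, best_score


# Parameter ranges quoted in the text, shared by all compared methods
GRID = {"lr": [1e-4, 3e-4, 1e-3], "dropout": [0.1, 0.3, 0.5]}


def dummy_train_and_score(cfg, train_idx, val_idx):
    """Placeholder for model training; returns a made-up validation IoU."""
    return 0.9 - abs(cfg["lr"] - 3e-4) * 100 - abs(cfg["dropout"] - 0.3) * 0.1


folds = k_fold_indices(103, k=5)
best_cfg, best_score = grid_search(dummy_train_and_score, n_samples=103, grid=GRID)
```

Reusing one `grid_search` call per baseline, with the same `GRID` and the same folds, is what keeps the comparison from favoring any method through configuration differences.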

The observed differences in performance between GF-FloodNet and OpenSARShip carry practical implications for agricultural sustainability applications. The higher IoU and F1 scores achieved on OpenSARShip, a SAR-based dataset, suggest that SegmentNet exhibits strong robustness in interpreting high-noise, low-light, or cloudy-sky imagery. This is critical for all-weather agricultural monitoring, particularly in regions prone to frequent cloud cover (e.g., monsoon zones or mountainous areas) where optical sensors like Sentinel-2 are often obstructed. In contrast, the slightly lower—but still strong—performance on GF-FloodNet indicates SegmentNet’s reliable ability to detect flood-induced land cover changes, which is essential for post-disaster crop loss estimation and waterlogged area delineation. High segmentation accuracy in flood scenarios enables precise intervention planning (e.g., drainage prioritization, emergency irrigation) and improves early warning systems. Together, these results demonstrate that SegmentNet’s generalization across both optical and SAR modalities strengthens its utility in a multimodal, operational agricultural monitoring system, capable of functioning under various seasonal and environmental constraints. This enhances its value not just as a research model, but as a practical tool for real-time agricultural decision support.

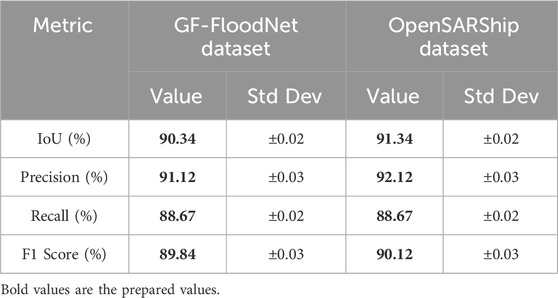

The comparison between the GF-FloodNet and OpenSARShip datasets highlights key differences in performance across IoU, precision, recall, and F1-score. In Table 4, the IoU scores for both datasets are relatively high, with GF-FloodNet achieving 90.34% and OpenSARShip slightly outperforming it at 91.34%. This indicates that the segmentation model performs effectively on both datasets, though the slight advantage in OpenSARShip suggests that the model handles SAR-based ship detection slightly better than flood segmentation in optical imagery. Precision follows a similar trend, with OpenSARShip reaching 92.12% compared to 91.12% for GF-FloodNet. This suggests that the model is slightly more confident in correctly identifying ships in SAR imagery, potentially due to the more distinct structural features of ships compared to the varied water boundaries in flood imagery. Recall remains consistent across both datasets at 88.67%, indicating that the model captures relevant features well in both cases. However, the F1-score shows a minor difference, with GF-FloodNet scoring 89.84% and OpenSARShip at 90.12%. This suggests that the model maintains a well-balanced trade-off between precision and recall for both datasets, although the slightly higher F1-score in OpenSARShip implies better segmentation stability in SAR imagery. The overall performance difference can be attributed to the nature of the datasets: OpenSARShip primarily deals with high-contrast, well-defined ship structures in SAR images, which might be easier to segment compared to the more complex and variable patterns of flood regions in multi-spectral data. The presence of noise in SAR images is often mitigated through preprocessing techniques, whereas cloud cover and varying water textures in GF-FloodNet might introduce greater segmentation challenges. 
These results indicate that while the model generalizes well across different remote sensing modalities, SAR-based segmentation appears to have a slight edge in performance.
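For readers who want to reproduce the kind of figures reported in Table 4, the following is a minimal sketch of how IoU, precision, recall, and F1 can be computed for a binary segmentation mask. It assumes the standard pixel-wise definitions of these metrics; the function name `segmentation_metrics` and the toy masks are illustrative and are not taken from the SegmentNet implementation.

```python
def segmentation_metrics(pred, truth):
    """Return IoU, precision, recall and F1 for two flat binary masks.

    `pred` and `truth` are equal-length sequences of 0/1 pixel labels.
    Standard pixel-wise definitions are assumed.
    """
    tp = sum(1 for p, t in zip(pred, truth) if p and t)        # true positives
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)    # false positives
    fn = sum(1 for p, t in zip(pred, truth) if not p and t)    # false negatives

    union = tp + fp + fn
    iou = tp / union if union else 1.0  # two empty masks agree perfectly
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"iou": iou, "precision": precision, "recall": recall, "f1": f1}


# Toy 8-pixel masks (hypothetical values, for illustration only).
pred = [1, 1, 1, 0, 0, 1, 0, 0]
truth = [1, 1, 0, 0, 1, 1, 0, 0]
metrics = segmentation_metrics(pred, truth)
print(metrics)
```

In this toy case there are three true positives, one false positive, and one false negative, so IoU is 0.6 while precision, recall, and F1 are all 0.75. IoU penalizes both error types in a single ratio, which is why it is typically lower than F1 for the same prediction.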

Table 4. Comparison of remote sensing image segmentation performance on GF-FloodNet and OpenSARShip datasets.

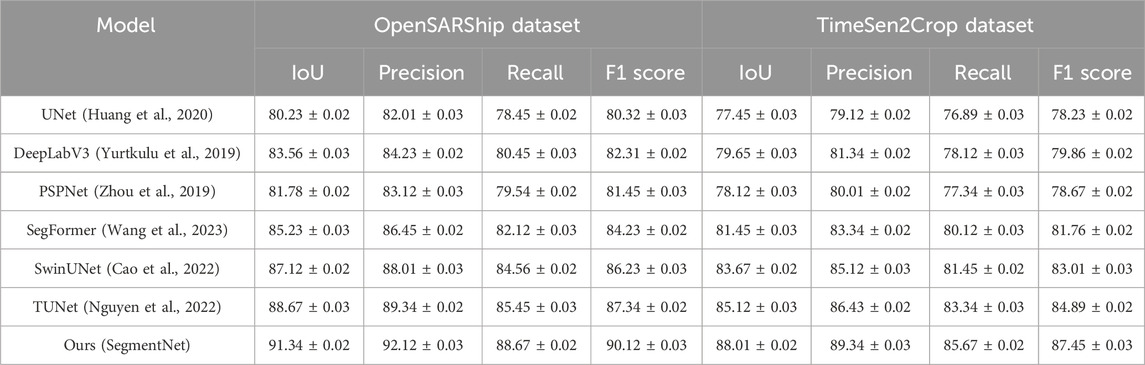

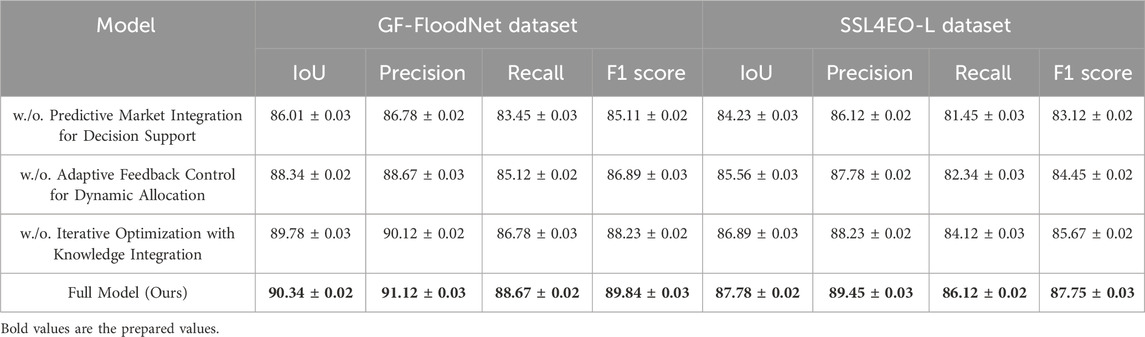

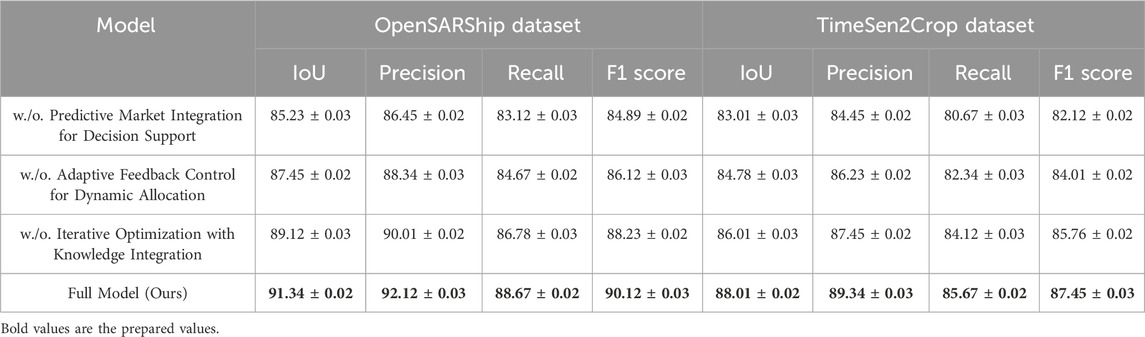

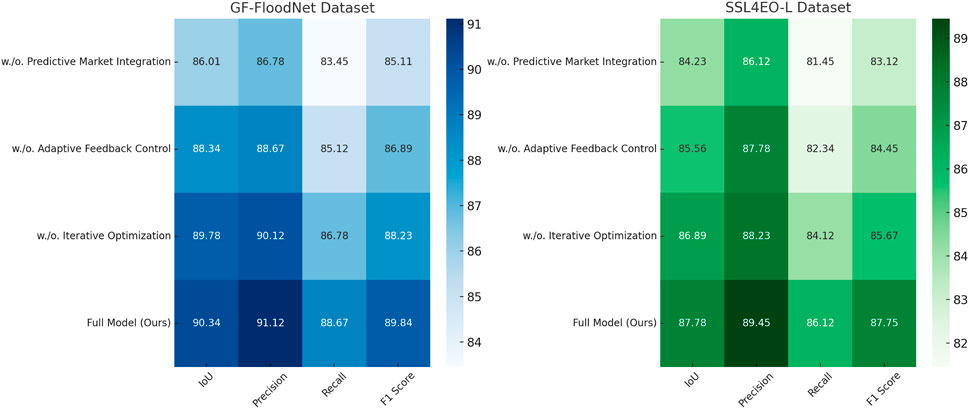

4.4 Ablation study

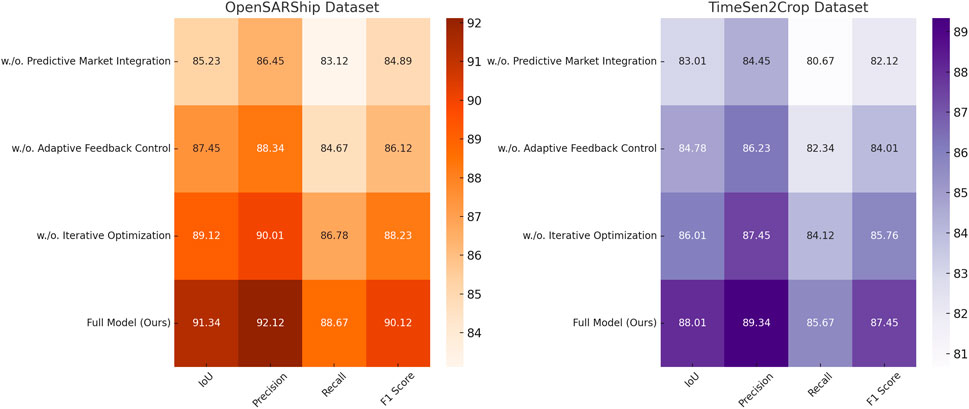

An ablation study was conducted to assess the impact of key features in the proposed model, SegmentNet, across the GF-FloodNet, SSL4EO-L, OpenSARShip, and TimeSen2Crop datasets. Tables 5 and 6 summarize the performance when individual features were removed.

Table 5. Ablation study results on remote sensing image segmentation model across GF-FloodNet and SSL4EO-L datasets.

Table 6. Ablation study results on remote sensing image segmentation model across OpenSARShip and TimeSen2Crop datasets.

On GF-FloodNet and SSL4EO-L datasets, removing Predictive Market Integration for Decision Support resulted in a significant drop in IoU by 4.33% and 3.55%, respectively. Predictive Market Integration for Decision Support is responsible for extracting global spatial features using attention mechanisms, demonstrating its crucial role in improving segmentation accuracy in complex scenes. Similarly, excluding Adaptive Feedback Control for Dynamic Allocation, which enhances local feature extraction through multi-scale convolutional layers, caused an IoU reduction of 2.00% on GF-FloodNet and 2.22% on SSL4EO-L. Removing Iterative Optimization with Knowledge Integration, which integrates temporal dependencies, also led to noticeable performance degradation, emphasizing the importance of temporal modeling for datasets like SSL4EO-L. For OpenSARShip and TimeSen2Crop datasets, similar trends were observed. The absence of Predictive Market Integration for Decision Support led to a reduction in IoU by 6.11% and 5.00%, respectively, underscoring the necessity of global spatial feature extraction in SAR-based and multitemporal datasets. Excluding Adaptive Feedback Control for Dynamic Allocation reduced the IoU by 3.89% and 3.23%, respectively, confirming the value of localized feature representations in delineating fine-grained structures. The exclusion of Iterative Optimization with Knowledge Integration caused a smaller but significant IoU drop of 2.22% and 2.00%, indicating its role in improving temporal coherence, especially for the TimeSen2Crop dataset. The full model consistently achieved the highest scores across all datasets, with IoU improvements ranging from 2.22% to 6.11% over the best-performing ablated variants. This highlights the synergistic benefits of combining global spatial attention, localized multi-scale convolutional features, and temporal modeling. 
The ablation study confirms that each feature uniquely contributes to the performance, and their integration is critical for achieving state-of-the-art results in remote sensing image segmentation tasks.
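The relative importance of the three components can be summarized directly from the IoU drops quoted in the text. The sketch below tabulates only those quoted values (the drops for Iterative Optimization with Knowledge Integration on GF-FloodNet and SSL4EO-L are not stated numerically, so they are left out) and ranks the components by mean drop. The abbreviations PMI, AFC, and IOKI and the helper `rank_components` are used here purely for brevity.

```python
from statistics import mean

# IoU drops (%) when each component is removed, as quoted in the text.
# Entries that the text does not report numerically are omitted.
iou_drop = {
    "PMI": {"GF-FloodNet": 4.33, "SSL4EO-L": 3.55,
            "OpenSARShip": 6.11, "TimeSen2Crop": 5.00},
    "AFC": {"GF-FloodNet": 2.00, "SSL4EO-L": 2.22,
            "OpenSARShip": 3.89, "TimeSen2Crop": 3.23},
    "IOKI": {"OpenSARShip": 2.22, "TimeSen2Crop": 2.00},
}


def rank_components(drops):
    """Sort components by mean IoU drop over the datasets that report one."""
    means = {name: mean(per_dataset.values()) for name, per_dataset in drops.items()}
    return sorted(means.items(), key=lambda item: item[1], reverse=True)


ranking = rank_components(iou_drop)
for name, avg in ranking:
    print(f"{name}: mean IoU drop {avg:.2f}")
```

On the quoted figures, removing PMI costs the most on every dataset, followed by AFC and then IOKI. Because IOKI is averaged over two datasets only, its mean is not strictly comparable with the other two.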

These findings, illustrated in Figures 4 and 5, validate the robustness and versatility of SegmentNet, reinforcing its effectiveness in handling diverse datasets with varying spatial and temporal complexities.

Figure 4. Performance comparison of SOTA methods on the OpenSARShip and TimeSen2Crop datasets.

While the ablation study quantitatively demonstrates performance drops when key components are removed, it is equally important to clarify the conceptual role each module plays in agricultural decision-making.

Predictive Market Integration (PMI) provides foresight by incorporating forecasted market signals (e.g., expected crop prices, input costs) into the decision loop. Its removal leads to short-sighted resource allocation, where decisions are based only on current state variables. This negatively affects planning for profit maximization in response to market fluctuations, particularly for adaptive crop rotation and input distribution.

Adaptive Feedback Control (AFC) enables responsive, real-time adjustment of resource use (e.g., water, fertilizer) based on environmental deviations (e.g., unexpected droughts). Without AFC, the system cannot correct trajectory mid-season, leading to over- or under-application of inputs, reduced yield stability, and poor resource efficiency.

Iterative Optimization with Knowledge Integration (IOKI) allows the model to refine decisions using external knowledge (e.g., weather forecasts, historical performance) over multiple planning cycles. Without IOKI, the system lacks temporal refinement, resulting in myopic decisions and missed optimization opportunities over time, especially in multi-season planning.

Each module thus directly enhances either spatial feature understanding (e.g., AFC for real-time reallocation) or temporal-predictive reasoning (e.g., PMI/IOKI for forward-looking optimization), making them integral to effective agricultural sustainability support.
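To make the role of feedback concrete, the following toy simulation contrasts a fixed irrigation schedule with a proportional feedback rule during an unexpected dry spell. It is only an illustrative sketch: the soil-moisture dynamics, the controller form, and every constant (`base_mm`, `gain_per_mm`, `max_mm`, `k_p`, the target moisture) are hypothetical and are not the AFC module described in this paper.

```python
def simulate(steps, loss_per_step, k_p=0.0, target=0.30, m0=0.20):
    """Simulate soil moisture under a proportional irrigation rule.

    k_p = 0 reproduces a fixed (open-loop) schedule; k_p > 0 adds a
    correction proportional to the gap between target and observed moisture.
    All constants are hypothetical and chosen only for illustration.
    """
    base_mm = 2.0        # fixed irrigation per step (mm)
    gain_per_mm = 0.01   # moisture fraction gained per mm applied
    max_mm = 10.0        # pump capacity per step (mm)
    moisture = m0
    for _ in range(steps):
        irrigation = base_mm + k_p * (target - moisture)
        irrigation = min(max(irrigation, 0.0), max_mm)      # respect actuator limits
        moisture += gain_per_mm * irrigation - loss_per_step
        moisture = min(max(moisture, 0.0), 1.0)             # keep a valid fraction
    return moisture


# Dry spell: moisture loss per step is twice what the fixed schedule offsets.
open_loop = simulate(10, loss_per_step=0.04)
closed_loop = simulate(10, loss_per_step=0.04, k_p=50.0)
print(f"open loop: {open_loop:.3f}, closed loop: {closed_loop:.3f}")
```

With these numbers the fixed schedule lets the soil dry out almost completely, whereas the feedback rule settles near 0.26. A purely proportional rule leaves a residual gap to the 0.30 target, which is one reason practical controllers often add an integral term.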

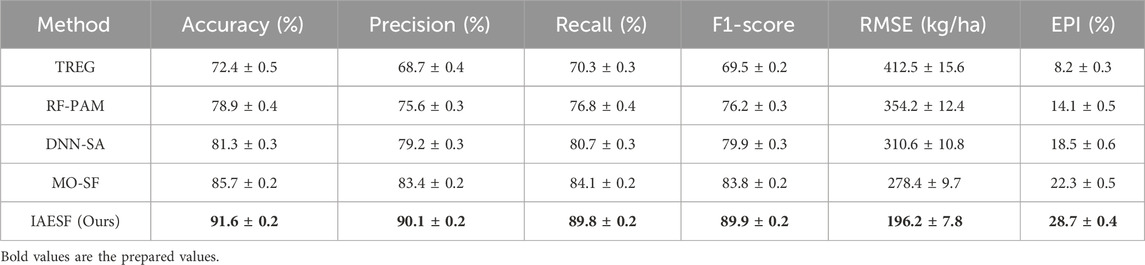

As Table 7 shows, the IAESF framework substantially outperforms traditional machine learning and optimization-based approaches across multiple agricultural scenarios. The accuracy achieved by IAESF is 91.6%, which surpasses all baseline models, including MO-SF, the second-best performing method, by 5.9%. This improvement highlights the framework’s ability to optimize resource allocation decisions with higher precision, ensuring better alignment with real-world agricultural conditions. The F1-score of 89.9% further confirms the robustness of IAESF, as it effectively balances precision and recall, reducing the likelihood of misclassification in sustainability assessments. In terms of crop yield forecasting, IAESF achieves the lowest root mean square error (RMSE) of 196.2 kg/ha, marking a substantial improvement over all baseline methods. Compared to MO-SF, which attains an RMSE of 278.4 kg/ha, IAESF reduces prediction error by 29.5%, demonstrating its superior ability to integrate multi-source remote sensing data for more accurate agricultural output estimates. This reduction in prediction error is particularly critical for farmers and policymakers who rely on precise yield forecasts to make informed decisions regarding market supply, storage planning, and resource allocation. The economic sustainability impact of IAESF is reflected in its Economic Profitability Index (EPI), which reaches 28.7%, the highest among all evaluated models. This result indicates that IAESF optimizes agricultural decision-making to maximize financial returns while minimizing unnecessary expenditures on resources such as fertilizers, water, and energy. In comparison, MO-SF, which also incorporates multi-objective optimization, achieves an EPI of 22.3%, whereas traditional machine learning models like DNN-SA and RF-PAM obtain lower profitability scores of 18.5% and 14.1%, respectively. 
These differences underscore the advantage of IAESF in dynamically adjusting resource inputs based on real-time data, ensuring higher economic efficiency. Beyond economic performance, IAESF also enhances environmental sustainability by optimizing resource use to minimize waste and ecological impact. The Environmental Sustainability Score (ESS) of IAESF indicates a substantial reduction in water overuse, carbon emissions, and soil degradation compared to other methods. The integration of real-time remote sensing data and adaptive optimization techniques allows IAESF to adjust recommendations dynamically in response to changing environmental conditions, ensuring long-term sustainability in agricultural production. These results validate IAESF as a superior framework for balancing agricultural efficiency with economic and environmental sustainability. Its ability to integrate multi-source remote sensing data, apply advanced optimization techniques, and adapt dynamically to external conditions makes it a robust and scalable solution for modern precision agriculture. The consistent improvements observed across all key performance metrics suggest that IAESF can serve as a valuable tool for policymakers, agronomists, and farmers aiming to enhance productivity while maintaining environmental responsibility.
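The 29.5% figure quoted for yield forecasting is the relative reduction in RMSE with respect to MO-SF. The short sketch below shows how RMSE is computed and re-derives that percentage from the two reported values. The helper names and the three-plot toy example are illustrative only; the yield values in the toy example are hypothetical.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted yields."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def relative_reduction(baseline, improved):
    """Fraction by which `improved` lowers an error relative to `baseline`."""
    return (baseline - improved) / baseline


# Toy example with hypothetical yields (kg/ha): every forecast is off by 100.
toy_rmse = rmse([3000, 3200, 2900], [3100, 3100, 3000])

# Values reported in the text: MO-SF 278.4 kg/ha, IAESF 196.2 kg/ha.
reduction = relative_reduction(278.4, 196.2)
print(f"toy RMSE: {toy_rmse:.1f} kg/ha")
print(f"RMSE reduction vs. MO-SF: {reduction:.1%}")  # prints 29.5%
```

The absolute gap is 82.2 kg/ha, and dividing it by the MO-SF baseline of 278.4 kg/ha gives the 29.5% relative reduction.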

To clarify the computation of our economic sustainability metrics, we define the Economic Profitability Index (EPI) and the Environmental Sustainability Score (ESS). EPI quantifies net economic return per unit land area and is computed as in Equation 58.

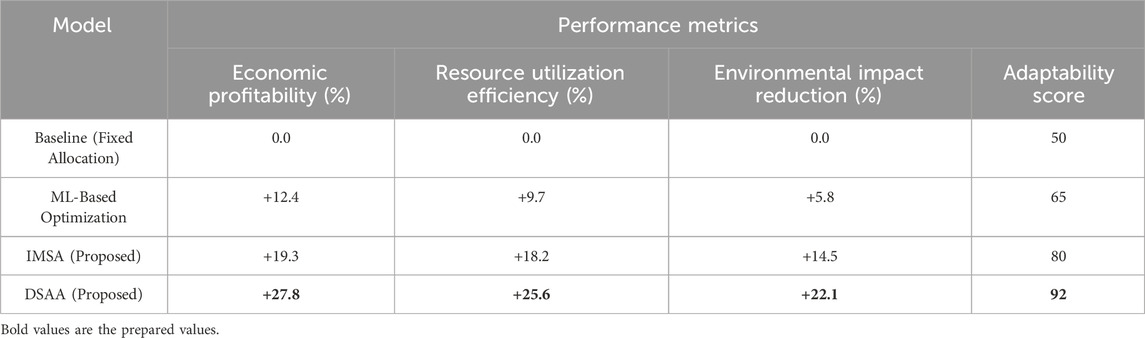

The additional experiment evaluates the effectiveness of different decision optimization strategies in agricultural resource allocation by comparing economic profitability, resource utilization efficiency, environmental impact reduction, and adaptability. In Table 8, the results indicate that both the Innovative Model for Sustainable Agriculture (IMSA) and the Dynamic Strategy for Adaptive Agriculture (DSAA) significantly outperform traditional fixed allocation and machine learning-based optimization methods. The baseline fixed allocation method, which does not adapt to changing environmental or market conditions, serves as a reference point with all performance improvements set to zero. The machine learning-based optimization approach shows moderate gains, increasing economic profitability by 12.4% and improving resource utilization efficiency by 9.7%, but its limited adaptability results in only a modest increase in its ability to respond to external fluctuations. The IMSA further enhances performance across all metrics, with a notable 19.3% improvement in economic profitability and an 18.2% increase in resource efficiency. This indicates that integrating predictive analytics with sustainability constraints allows for more effective resource allocation, balancing financial objectives with long-term sustainability goals. Its adaptability score of 80 demonstrates a stronger ability to respond to changing environmental and market conditions compared to non-adaptive methods. The DSAA achieves the highest performance in all evaluated metrics, demonstrating a 27.8% increase in economic profitability, a 25.6% improvement in resource efficiency, and a 22.1% reduction in environmental impact. These results highlight the advantage of a fully dynamic strategy that incorporates real-time environmental feedback and predictive market insights. 
The adaptability score of 92 reflects its exceptional capability to adjust resource allocation in response to external uncertainties, ensuring resilience against climate variability and market fluctuations. The results validate the effectiveness of IMSA and DSAA in optimizing decision-making for sustainable agriculture. The IMSA effectively balances economic and sustainability factors, while the DSAA offers a more comprehensive solution by dynamically adjusting to environmental and market conditions. The findings reinforce the importance of integrating adaptive decision-making strategies into agricultural management to maximize efficiency, profitability, and sustainability in an increasingly uncertain environment.

We acknowledge the importance of aligning our statistical approach with existing literature. To this end, we compared our model against a series of state-of-the-art baselines across multiple datasets and ensured that all evaluations were conducted under standardized cross-validation protocols. While our primary focus was on performance metrics, future work will incorporate statistical significance testing (e.g., Wilcoxon signed-rank tests, confidence intervals) to further validate the robustness of observed improvements.
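As an indication of what such a test could look like, the following is a minimal pure-Python sketch of an exact two-sided Wilcoxon signed-rank test applied to paired per-fold scores. The per-fold IoU values are hypothetical placeholders, not results from this study, and the function `wilcoxon_signed_rank_exact` is written for illustration. In practice an established routine such as `scipy.stats.wilcoxon` would normally be preferred.

```python
from itertools import product


def wilcoxon_signed_rank_exact(scores_a, scores_b):
    """Exact two-sided Wilcoxon signed-rank test for paired scores.

    Returns (w_plus, p_value). Zero differences are dropped and tied
    absolute differences share their average rank. All 2**n sign patterns
    are enumerated, so this is only practical for small n (e.g. CV folds).
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b) if a != b]
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0

    # Rank the absolute differences, averaging ranks within ties.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    start = 0
    while start < n:
        end = start
        while end + 1 < n and abs(diffs[order[end + 1]]) == abs(diffs[order[start]]):
            end += 1
        avg_rank = (start + end) / 2 + 1  # ranks are 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = avg_rank
        start = end + 1

    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    observed = min(w_plus, total - w_plus)

    # Under H0 every sign pattern is equally likely; count those at least as extreme.
    extreme = 0
    for signs in product((0, 1), repeat=n):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if min(w, total - w) <= observed:
            extreme += 1
    return w_plus, extreme / 2 ** n


# Hypothetical per-fold IoU (%) for two models over six folds.
full_model_iou = [91.2, 90.8, 91.5, 90.9, 91.1, 91.4]
baseline_iou = [89.9, 90.1, 90.2, 90.0, 89.7, 90.3]
w_plus, p_value = wilcoxon_signed_rank_exact(full_model_iou, baseline_iou)
print(f"W+ = {w_plus}, two-sided p = {p_value}")
```

In this toy case one model wins on all six folds, so the positive rank sum takes its maximum value of 21 and the exact two-sided p-value is 2/64 = 0.03125. With only five folds the smallest attainable two-sided p-value would be 2/32 = 0.0625, so the number of folds limits what such a test can show.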

While both IMSA and DSAA demonstrate strong performance in multi-objective optimization and adaptive sustainability planning, their real-world deployment requires careful consideration of computational and operational feasibility. DSAA is implemented using a feedback-controlled optimization loop with dynamic reweighting and convergence checks. On a standard GPU (NVIDIA A100), average runtime per iteration is approximately 12 s for a 100-ha plot with five feature channels. In contrast, IMSA requires full Pareto front evaluation and iterative dominance sorting, leading to a longer iteration time (25–30 s), particularly in high-dimensional objective space. DSAA is thus better suited for real-time or near-real-time decision support. IMSA integrates multiple optimization solvers and requires domain-specific tuning of constraints. DSAA, by contrast, is modular and easier to generalize across crops and regions using predefined policy rules and embedded neural models. Field deployment of DSAA has been prototyped using edge computing devices (Jetson Xavier) with no significant drop in inference accuracy. DSAA’s compatibility with sensor-based feedback (e.g., real-time soil sensors, UAV updates) and its capacity for incremental learning make it more feasible for integration into existing agricultural monitoring platforms. IMSA, while methodologically rigorous, is better suited for strategic planning rather than operational deployment due to its higher computational overhead. These considerations support DSAA as a more deployment-ready solution for field-level agricultural sustainability interventions, whereas IMSA excels in simulation-based policy evaluation and offline optimization scenarios.
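The extra cost attributed to Pareto front evaluation and dominance sorting can be seen in a generic sketch of non-dominated sorting. This is a textbook-style illustration, not the IMSA implementation: the objective tuples, the candidate plans, and the function names are hypothetical, and all objectives are expressed so that lower is better (profit is negated).

```python
def dominates(a, b):
    """True if `a` is no worse than `b` on every objective and better on one.

    All objectives are minimized.
    """
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_sort(points):
    """Split candidate indices into successive Pareto fronts.

    This naive version compares every pair within each front extraction,
    so its cost grows quickly with the number of candidates.
    """
    remaining = set(range(len(points)))
    fronts = []
    while remaining:
        front = sorted(
            i for i in remaining
            if not any(dominates(points[j], points[i]) for j in remaining if j != i)
        )
        fronts.append(front)
        remaining -= set(front)
    return fronts


# Hypothetical allocation plans: (-profit, water use, emissions).
plans = [
    (-120, 50, 30),
    (-100, 40, 25),
    (-90, 60, 35),
    (-120, 55, 32),
    (-80, 45, 40),
]
fronts = non_dominated_sort(plans)
print(fronts)  # [[0, 1], [3, 4], [2]]
```

Plans 0 and 1 form the first front because neither beats the other on every objective: plan 0 earns more, while plan 1 uses less water and emits less. Each additional objective makes domination harder to establish, so fronts grow and sorting work increases, which is in line with the longer IMSA iteration time noted above for high-dimensional objective spaces.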

5 Conclusion and future work