Abstract

The present study investigated the role of a Higher-Order Adjunct Question Package (HAQP) in supporting learners’ germane cognitive load (GCL) within digital learning environments, using two complementary indicators: a self-reported measure of germane cognitive load and knowledge-transfer performance. A total of 126 Mathematics and English teachers participated in the study and were randomly assigned to either an experimental group (n = 64) or a control group (n = 62). Through online instruction delivered via Google Forms, all participants viewed four analogical paintings accompanied by explanatory text illustrating principles of effective learning. In the control condition, the interpretation of each painting was directly provided, whereas in the experimental condition the interpretation was replaced by a higher-order multiple-choice question requiring participants to infer the correct interpretation. The question was embedded within a package that involved active responding, feedback, and guidance away from guessing, rather than functioning as a question alone. This package was designed to promote deeper engagement with the visual information. Findings showed that participants in the experimental group reported higher levels on the self-reported GCL measure and demonstrated superior knowledge-transfer performance compared to those in the control group. These results suggest that the HAQP contributes to learning conditions that support germane cognitive load in online instruction, likely by guiding learners’ attention toward conceptually relevant information and reducing engagement with extraneous details.

Introduction

According to Cognitive Load Theory (CLT), the cognitive processing required for learning places demands on the learner's limited working memory (Anderson, 1977; Atkinson and Shiffrin, 1968; Paas and Ayres, 2014; Sweller, 1988; Sweller et al., 2019). Within this framework, cognitive load is commonly categorized into intrinsic, extraneous, and germane load. Intrinsic cognitive load (ICL) arises from the inherent complexity of the material and must be managed so that it remains at an optimal level, whereas extraneous cognitive load (ECL) stems from suboptimal instructional design and should be minimized. Germane cognitive load (GCL), by contrast, reflects learners’ intentional investment of mental effort in constructing and refining schemas (Jordan et al., 2020; Sweller, 2010, 2020; Sweller et al., 1998; Sweller et al., 2019). GCL is therefore best conceptualized as a process variable (“germane processing”): the allocation of cognitive resources to learning-relevant activities, that is, activities directly related to dealing with intrinsic load (Paas and van Merriënboer, 2020; Sweller et al., 2019). The total cognitive load across these components must remain within working memory capacity for learning to be effective (Sweller et al., 2011). Learning is most effective when learners devote most of their cognitive resources to germane processing and the total load does not exceed working memory capacity; when load is extraneous to the learning goal or excessive, learning is hindered (Gerjets et al., 2009; Sweller, 1988; Sweller et al., 1998). Accordingly, researchers increasingly emphasize the importance of instructional designs that maximize GCL by guiding learners to allocate their cognitive resources to schema-relevant processing (Paas et al., 2003, 2004; Paas and Van Gog, 2006; Sweller, 2020; van Merriënboer and Sweller, 2005).

One instructional technique that may help create conditions conducive to germane cognitive load is the use of adjunct questions (AQs)—questions inserted into instructional materials to guide learners’ attention toward information that is central to learning (Mory et al., 1991). Their value lies in their ability to highlight conceptually important elements, which is particularly beneficial in asynchronous online learning environments where instructor presence is limited or absent. In such settings, learners may unintentionally focus on peripheral or irrelevant aspects of the material, and instructors cannot actively monitor or redirect their attention (Ahmed, 2024; Mayer et al., 2020). As a result, instructional materials must shoulder this role by prompting learners to engage with tasks that align closely with the intended learning outcomes.

Adjunct questions are commonly categorized into factual and higher-order forms (Andre, 1979; Winne, 1979). While factual questions direct learners to recall explicitly stated information, higher-order questions require deeper engagement, prompting learners to analyse, infer, or explain relationships within the content—for example, by asking “why” or “how.” A substantial body of research shows that these higher-order questions elicit greater cognitive effort and yield stronger learning outcomes compared with factual questions (Cerdan et al., 2009; Hamaker, 1986; Jensen et al., 2014; Sun et al., 2024; Watts and Anderson, 1971; Zhu et al., 2024). Because this added cognitive effort reflects mental activities directly tied to learning, it is likely to support conditions that facilitate germane cognitive load (Sweller et al., 1998).

Recent research has drawn attention to an important limitation of asynchronous online learning: without ongoing instructor monitoring, learners are less likely to engage in the kind of purposeful cognitive processing that supports effective learning (Ahmed, 2024; Mayer et al., 2020). Although higher-order questions are known to prompt deeper cognitive effort and consistently outperform factual questions in promoting learning (Sun et al., 2024; Zhu et al., 2024), most work on adjunct questions (AQs) has focused primarily on reading comprehension tasks (Li et al., 2024; Liu, 2021). While these studies demonstrate the value of AQs in guiding attention and supporting effective learning, they do not address whether such questions can be leveraged to foster the schema-relevant cognitive effort associated with germane cognitive load (GCL) in online multimedia learning environments. This gap suggests the need to explore how AQs might be used not only to highlight important information but also to create instructional conditions that actively support deeper, schema-building processes.

Furthermore, although the literature clearly distinguishes between factual and higher-order adjunct questions, contemporary research has yet to examine how higher-order AQs—relative to basic factual questions—might support the conditions necessary for fostering germane cognitive load in digital learning environments. This gap indicates that the specific contribution of higher-order adjunct questions to promoting schema-relevant cognitive effort remains under-investigated, thereby providing a clear and timely rationale for the present study.

The present experiment aimed to examine whether a Higher-Order Adjunct Question Package (HAQP)—consisting of a higher-order question, active responding, non-revealing feedback, and guidance away from guessing—can support the conditions that foster germane cognitive load in digital learning environments. To evaluate this, the study employed two complementary indicators: a self-reported measure of GCL and learners’ performance on a knowledge-transfer task.

Knowledge transfer is treated in this study as a learning outcome that complements the self-report measure of GCL by indicating whether learners’ schema-relevant cognitive effort has translated into meaningful learning. When learners invest higher levels of germane cognitive load, they construct richer and more abstract schemas that can be flexibly applied to new situations. Thus, transfer performance serves as an observable outcome of germane cognitive processing: the more effectively learners engage in such processing during learning, the more capable they become of activating and applying their schemas across different contexts (DeLeeuw and Mayer, 2008; Hajian, 2019; Sweller et al., 2019).

The findings of the present study may offer practical guidance for educators seeking to design more effective online instructional materials that maximise learners’ productive cognitive load (i.e., GCL). These results also provide insight into how instructional design can help learners manage their cognitive resources more efficiently by directing their attention toward learning-relevant activities and reducing engagement with irrelevant information—ultimately supporting more effective learning. The following sections present a review of germane cognitive load and adjunct questions.

Germane cognitive load

Until the mid-1990s, cognitive load theory concentrated exclusively on reducing ECL in order to design effective instruction (Mayer and Moreno, 2003). The focus on GCL emerged after Paas and van Merriënboer (1994) found that students learned more effectively when they studied worked examples with high rather than low variability. In addition to learning more, the students who studied high-variability worked examples experienced a greater cognitive load than those who studied low-variability worked examples. The researchers interpreted this as indicating that the students exposed to high variability experienced a positive cognitive load, which they identified as germane to learning. This positive cognitive load later came to be referred to as ‘germane cognitive load’ (GCL; Sweller et al., 1998).

Recent discussions in cognitive load research argue that germane cognitive load (GCL) should not be treated as an independent load type, but rather as the proportion of working-memory resources intentionally devoted to intrinsic cognitive processing (Kalyuga, 2011; Sweller et al., 2019). In response to long-standing debates concerning the definition and function of GCL, Sweller et al. (2019) introduced a revised formulation of Cognitive Load Theory (CLT). A central modification in this update is the removal of germane load from the additive cognitive load model proposed in 1998. Instead, GCL is reconceptualized as “germane processing”—a set of productive mental activities that facilitate the construction, strengthening, and refinement of schemas, rather than a separate category of cognitive burden.
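The contrast between the two formulations can be summarised schematically. The symbols below are our own shorthand, introduced only for illustration and not taken from Sweller et al. (1998, 2019): \(C_{\text{WM}}\) stands for working memory capacity, and \(R_{\text{germane}}\) for the working-memory resources a learner actually devotes to dealing with intrinsic load.

$$
\begin{aligned}
\text{1998 additive model:}\quad & \text{total load} = \text{ICL} + \text{ECL} + \text{GCL} \le C_{\text{WM}} \\
\text{2019 revision:}\quad & \text{total load} = \text{ICL} + \text{ECL}, \qquad R_{\text{germane}} \le \min\left(\text{ICL},\; C_{\text{WM}} - \text{ECL}\right)
\end{aligned}
$$

Read this way, germane processing is no longer a third term in the sum; it is bounded both by what the intrinsic load requires and by the capacity left over after extraneous load, so lowering ECL raises the ceiling on germane resources.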

In line with this revision, the present study adopts the view that germane cognitive load represents the productive component of intrinsic processing: the portion of working-memory resources that learners deliberately invest in interpreting, integrating, and reorganizing information in ways that contribute to schema construction. Under this perspective, GCL is not treated as a separate load type; instead, it reflects the learner-driven enhancement of intrinsic processing that emerges when instructional conditions successfully foster deeper engagement with the learning material.

Schnotz and Kürschner (2007) identified three important characteristics of this type of cognitive load: (a) GCL requires working memory capacity (otherwise, it would not be a cognitive load); (b) GCL aims to establish learning (otherwise, it would not be germane); and (c) GCL refers to cognitive processing in working memory, not to learning itself. GCL therefore occurs when a learner dedicates mental effort to processing information relevant to the intended learning. Because this processing is directed toward schema construction and/or schema automation, GCL should be increased as far as possible (Sweller et al., 1998). In other words, GCL is primarily associated with engaging in activities relevant to the intended learning (Gerjets et al., 2009; Sweller, 1988; Sweller et al., 1998). Accordingly, cognitive load researchers have shifted their focus toward identifying and developing instructional methods that encourage learners to invest their cognitive resources in activities related to the intended learning, thereby supporting conditions that facilitate germane cognitive load (Paas et al., 2003, 2004; Paas and Van Gog, 2006; van Merriënboer and Sweller, 2005).

Research has indicated that, while GCL is associated with effective learning, ECL imposes an ineffective load (Gerjets et al., 2009; Sweller, 1988; Sweller et al., 1998). As a result, when learners invest their mental effort in activities related to the intended learning, they effectively increase their GCL, which leads to better learning outcomes. Conversely, investing effort in irrelevant activities increases ECL, resulting in ineffective learning. Moreover, GCL cannot increase when working memory capacity is fully occupied by ECL. Therefore, to maximise GCL, instructional designers must simultaneously work to minimise ECL (Gerjets et al., 2009; Sweller, 2005; Sweller et al., 1998; Sweller et al., 2019). Reducing ECL (unproductive load) frees cognitive resources, paving the way for increased GCL (productive load). Thus, instructional design should focus on reducing extraneous load while increasing GCL (Sweller et al., 2019). Building on this distinction between productive and unproductive cognitive load, online learning environments introduce additional challenges that demand even more deliberate instructional management of cognitive load.

Germane cognitive load and instructional design in online learning

Effective instructional design plays a compensatory role in online learning by offsetting the absence of physical instructor presence and sustaining learners’ focus, engagement, and deeper cognitive processing (Al Qahtani and Higgins, 2013; Cole et al., 2014). A central mechanism underlying this influence is germane cognitive load, described as the mental effort allocated to productive intrinsic processing that supports schema construction and refinement (De Jong, 2010). Empirical findings show that well-designed instructional materials reliably support GCL, leading to improved comprehension and stronger learning outcomes (Cierniak et al., 2009; Sweller et al., 1998).

A substantial body of research demonstrates that high-quality instructional design is essential for managing cognitive load in online learning environments, particularly through strategies that support germane cognitive load (GCL). Poorly designed materials increase extraneous cognitive load and consume working-memory resources that should otherwise support learning (Chandler and Sweller, 1991; Sweller et al., 2011). In contrast, instructional approaches that deliberately foster GCL, such as worked examples, scaffolding, and the gradual fading of instructional guidance, have consistently been shown to facilitate schema construction and improve learning efficiency (Paas and Van Merriënboer, 1994).

The challenge is especially acute for novice learners. Research indicates that novices are more susceptible to cognitive overload in digital environments, making the enhancement of GCL a critical design priority (Ayres, 2015; Kalyuga, 2007).

Investigations of massive open online courses (MOOCs) similarly show that technological complexity, interface fragmentation, and navigational difficulties elevate extraneous load and undermine learning performance (Zheng et al., 2020). These findings emphasize the need for intentional design interventions that reduce extraneous processing while stimulating deeper cognitive engagement.

With respect to learning performance, higher levels of GCL are consistently associated with superior learning outcomes, as GCL reflects the active and purposeful allocation of cognitive resources to learning-relevant processes (Klepsch and Seufert, 2021; Sweller, 2010). Consequently, instructional environments, whether offline or online, should be structured to engage learners cognitively and motivate them to work meaningfully with the material (Mayer, 2010). Building on this premise, several scholars have advocated deliberately supporting GCL through strategies that stimulate deep cognitive engagement (Paas and Van Merriënboer, 1994). Since the primary purpose of instruction is to foster learning, the literature converges on the expectation that greater GCL is associated with enhanced learning performance, particularly in online learning environments where cognitive demands are inherently higher (Ahmed, 2024). Taken together, these findings underscore the need for instructional techniques that may help guide learners’ attention, reduce unnecessary processing, and potentially strengthen germane cognitive engagement within digital learning environments. Adjunct questions, which are specifically designed to direct learners’ attention and deepen cognitive engagement, are therefore a promising candidate for empirical investigation.

Adjunct questions

Adjunct questions (AQs) are embedded within instructional materials to draw learners’ attention to the most important elements of the content (Hamaker, 1986; Winne, 1979). Their function is not simply to ask for an answer but to encourage learners to pause, revisit the text, and consider what is most important. In this sense, AQs operate as gentle instructional cues that help shape the learner's engagement with the content.

Wittrock (1990) situated AQs within the broader category of Generative Learning Activities—activities that invite learners to construct meaning rather than merely receive information (Brod, 2021). From the perspective of generative learning theory (Fiorella and Mayer, 2016; Mayer, 2009, 2014), learning involves three interrelated processes: selecting relevant ideas, organizing them into meaningful structures, and integrating them with prior knowledge. AQs are designed to activate these processes by encouraging learners to interact with the content in a more intentional and reflective manner.

In practical terms, AQs serve as attentional guides that help learners identify what deserves deeper consideration. By prompting learners to return to specific portions of the text, AQs help learners direct their cognitive effort more intentionally, reducing engagement with information that is irrelevant to the learning task.

A considerable body of research demonstrates that such prompts enhance comprehension by fostering purposeful engagement with key ideas (Hamaker, 1986; Li et al., 2024; Liu, 2021; Medina et al., 2017; Winne, 1997). Rather than spreading attention across the entire instructional material, learners are encouraged to prioritize the most relevant segments. This targeted engagement is expected to reduce extraneous cognitive load (ECL) and support germane cognitive load (GCL), as learners direct mental effort toward processes that directly contribute to achieving the intended learning outcomes while ignoring irrelevant activities.

Several studies have shown that AQs can enhance learning when inserted within web-based materials (Chang, 2021; Dornisch and Sperling, 2004, 2006; Valdez, 2013). For example, Valdez (2013) found that inserting AQs into science lessons delivered through PowerPoint improved retention and comprehension. Similarly, Chang (2021) found that AQs aligned students’ knowledge structures more closely with those of the instructor. Most studies on AQs have assessed their effects on reading comprehension (Li et al., 2024; Liu, 2021; Medina et al., 2017), consistently showing their effectiveness in this domain.

AQs are commonly divided into two types. Factual questions ask learners to recognise or repeat information from learning materials and require less complex cognitive processing, because there is no need to manipulate the instructional content (Andre, 1979). Higher-order adjunct questions, by contrast, require learners to manipulate instructional content, for example through comprehension, inferential reasoning, or application (Winne, 1979).

Most studies indicate that higher-order adjunct questions positively affect learners’ performance compared with factual questions (Cerdan et al., 2009; Hamaker, 1986; Jensen et al., 2014; Sun et al., 2024; Watts and Anderson, 1971; Zhu et al., 2024). For instance, synthesis-type higher-order questions lead to better learning on complex tasks than factual questions (Zhu et al., 2024), and “why” questions have stronger effects on learning than “what” questions (Sun et al., 2024). Higher-order questions require learners to identify relationships between ideas that are implied but not explicitly stated (Hamaker, 1986), thereby demanding extra mental effort. Since such productive cognitive effort enhances learning, higher-order questions are likely to support conditions that facilitate germane cognitive load (Sweller et al., 1998).

Taken together, prior findings suggest that higher-order adjunct questions enhance learning, likely because they require learners to engage in additional productive cognitive processing that supports schema construction. Building on this evidence, the present study extends previous work by examining not an isolated higher-order question but a structured Higher-Order Adjunct Question Package (HAQP): a package consisting of a higher-order question, active responding, feedback, and guidance away from guessing. This design shifts the focus from isolated higher-order questions to a more comprehensive instructional mechanism intended to support conditions that facilitate germane processing. Accordingly, this study investigated whether the HAQP supports instructional conditions that facilitate germane cognitive load. GCL was assessed using two complementary approaches: self-report, which captures perceived mental effort allocated to productive intrinsic processing, and knowledge-transfer performance, which reflects the successful construction and application of schemas in new contexts (Hajian, 2019). Because transfer is a learning outcome rather than a direct indicator of cognitive load (DeLeeuw and Mayer, 2008), using both measures provides a more valid and comprehensive assessment of the extent to which the HAQP fosters germane processing. Based on the reviewed literature, this study addresses the following research questions:

Does a Higher-Order Adjunct Question Package (HAQP) support instructional conditions that facilitate germane cognitive load?

Does a Higher-Order Adjunct Question Package (HAQP) improve learners’ performance on knowledge-transfer tasks?

Materials and methods

Participants

A total of 131 male teachers were initially selected through random sampling from schools located in an urban district in Ha’il, Saudi Arabia. However, after participants had been randomly assigned to the experimental and control groups, five teachers withdrew from the study, reducing the final sample to 126 teachers (67 mathematics teachers and 59 English teachers). Because this withdrawal occurred after random assignment, the final group composition no longer fully reflected the original randomisation. Consequently, the study does not meet the criteria for a true randomized experiment and is therefore classified as quasi-experimental.

This study was limited to male teachers because of the gender segregation system in Saudi Arabia. Participants’ ages ranged from 25 or younger to 51 or older, and their teaching experience ranged from less than 5 years to 26 years or more (see Tables 1 and 2). Based on the descriptive information presented in Tables 1 and 2, the experimental and control groups appear broadly similar in age distribution and teaching experience. Table 1 shows that the frequencies across the four age categories are closely aligned between the two groups, with no noticeable differences that would suggest a demographic imbalance. Similarly, Table 2 indicates that the distribution of teaching experience follows almost the same pattern in both groups, particularly in the predominant range of 6–15 years, and the remaining categories are also comparably represented. Together, these descriptive patterns suggest that the two groups share similar backgrounds, making it unlikely that age or teaching experience introduced systematic variation that could influence the study's outcomes.

Table 1

| Group | 25 or less | 26–40 | 41–50 | 51 or more |
|---|---|---|---|---|
| Experimental group | 11 | 23 | 21 | 9 |
| Control group | 9 | 24 | 19 | 10 |
| Total | 20 | 47 | 40 | 19 |

Sample age (years).

Table 2

| Group | Less than 5 years | 6–15 | 16–25 | 26 or more |
|---|---|---|---|---|
| Experimental group | 10 | 24 | 20 | 10 |
| Control group | 8 | 25 | 19 | 10 |
| Total | 18 | 49 | 39 | 20 |

Sample teaching experience (years).
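The descriptive comparison of Tables 1 and 2 could be complemented by a chi-square test of homogeneity, which asks whether the two groups’ category frequencies are compatible with a single shared distribution. The sketch below is our illustration, not an analysis reported in this study; the function name is hypothetical, and the critical value 7.815 is the standard upper 5% point of the chi-square distribution with 3 degrees of freedom, which applies to both 2 × 4 tables.

```python
"""Chi-square test of homogeneity for the group-by-category counts in Tables 1 and 2.

Illustrative sketch only: this test is not part of the study's reported analysis.
"""

# Counts copied from Tables 1 and 2 (rows: experimental group, control group).
AGE_COUNTS = [
    [11, 23, 21, 9],
    [9, 24, 19, 10],
]
EXPERIENCE_COUNTS = [
    [10, 24, 20, 10],
    [8, 25, 19, 10],
]

# Upper 5% critical value of the chi-square distribution with 3 degrees of freedom
# (valid here because both tables are 2 x 4, giving (2 - 1) * (4 - 1) = 3 df).
CRITICAL_DF3_ALPHA_05 = 7.815


def chi_square_homogeneity(table):
    """Return (statistic, degrees_of_freedom) for a contingency table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if both groups shared one category distribution.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return statistic, dof


if __name__ == "__main__":
    for label, table in [("age", AGE_COUNTS), ("experience", EXPERIENCE_COUNTS)]:
        stat, dof = chi_square_homogeneity(table)
        verdict = "no evidence of imbalance" if stat < CRITICAL_DF3_ALPHA_05 else "possible imbalance"
        print(f"{label}: chi2 = {stat:.3f}, df = {dof}, {verdict} at alpha = .05")
```

All expected counts in these two tables exceed 5, so the usual large-sample chi-square approximation is reasonable; with sparser cells an exact test would be the safer choice.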

The study employed a quasi-experimental design with two groups: an experimental group and a control group. Sixty-four teachers (33 mathematics, 31 English) were randomly assigned to the experimental group, and 62 teachers (34 mathematics, 28 English) to the control group (see Table 3). All participants were volunteers; they provided informed consent and were informed that they could withdraw from the study at any time without giving a reason. Mathematics and English teachers were selected because these two subjects constitute a significant portion of students’ schooling.

Table 3

| Group | Mathematics teachers | English teachers | Total |
|---|---|---|---|
| Experimental group | 33 | 31 | 64 |
| Control group | 34 | 28 | 62 |
| Total | 67 | 59 | 126 |

Sample description.

Topic and study design

As part of a professional development initiative, mathematics and English teachers were introduced to four important learning principles: (a) assessing and building upon relevant prior knowledge, (b) implementing continuous assessment, (c) applying differentiated instruction, and (d) setting clear objectives. To convey these principles, we used analogical paintings integrated with explanatory text as instructional materials. Analogical paintings are visual metaphors that use familiar imagery to represent abstract concepts; in other words, they make abstract concepts concrete. We used them here to make the concepts more relatable (see Gray and Holyoak, 2021).

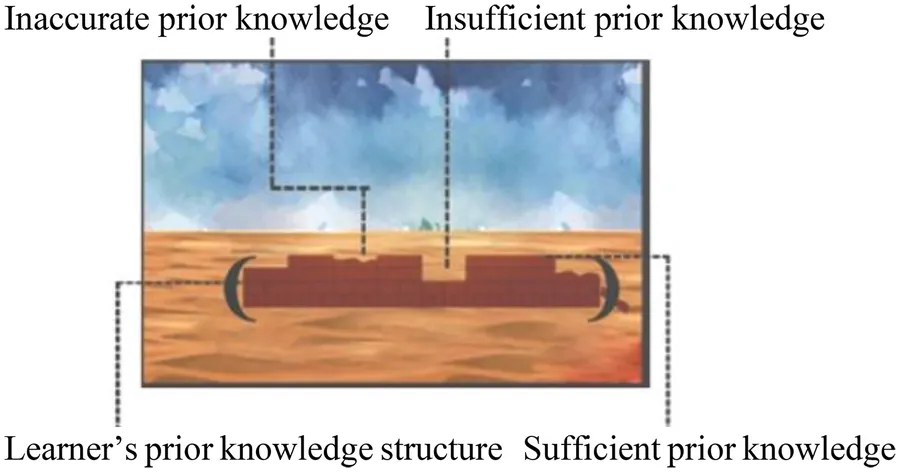

Four paintings were included, each one illustrating one of the four learning principles, and the accompanying text provided further explanation. For example, Figure 1 represents the learning principle of ‘assessing and building upon relevant prior knowledge’. The painting uses architectural metaphors to represent different types of prior knowledge a learner might have before engaging with new instructional material.

Figure 1

In Figure 1, each structure symbolises one type of prior knowledge.

The distorted structure represents “inaccurate prior knowledge”, which cannot be built upon and therefore needs to be changed (i.e., conceptual change). In other words, the learner holds misconceptions or false understandings that must be corrected before new learning can occur.

The incomplete structure represents “insufficient prior knowledge”. The learner has a partial understanding but lacks the depth or connections needed to fully grasp new concepts (i.e., conceptual growth).

The complete structure represents “sufficient prior knowledge”. The learner is well-prepared and can readily integrate new information with existing understanding.

All participants were drawn from different schools and assembled in a single location under the supervision of the authors. The instructional materials were delivered using Google Forms. All participants completed a knowledge-transfer pre-test to assess their prior knowledge of the learning content; this served as a baseline to control for prior knowledge. The same test was administered after the intervention to assess the influence of the HAQP on participants’ knowledge transfer (see an example in Appendix A).

In the experimental group, participants examined each painting and answered a multiple-choice question asking them to identify its correct interpretation. They could view the painting as many times as needed before or after answering and were encouraged to review it to extract the correct interpretation. Correct responses were reinforced through feedback. Incorrect responses received feedback that guided participants in the right direction without revealing the correct answer; for example, the feedback explained why the chosen option was not correct. This approach was intended to prompt participants to return to the painting and work out the correct interpretation rather than guess. The questions were designed to encourage cognitive engagement by prompting participants to actively analyse the paintings and derive the correct answers (i.e., the relevant learning activities). The aim of this technique was to encourage participants to invest their working memory resources in learning-relevant activities (i.e., interpreting the paintings) and to reduce attention to unnecessary information (see Appendix B).
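The question-and-feedback cycle described above can be sketched as a small program. This is a minimal, hypothetical model for illustration only: the study delivered items through Google Forms, and the names `HAQPItem`, `give_feedback`, and `run_item`, the default hint text, and the three-attempt limit are our assumptions, not details reported by the authors. The one rule the sketch enforces explicitly is the design constraint from the text: feedback for a wrong option must not reveal the correct interpretation.

```python
"""Hypothetical sketch of one HAQP item: higher-order question, active response,
non-revealing feedback.

Assumptions (not specified in the study): the class and function names, the
default hint text, and the retry limit are illustrative placeholders.
"""
from dataclasses import dataclass, field


@dataclass
class HAQPItem:
    prompt: str
    options: list[str]
    correct_index: int
    # Feedback for incorrect options: explains why that choice does not fit the
    # painting without naming the correct interpretation.
    hints: dict[int, str] = field(default_factory=dict)
    praise: str = "Correct: this interpretation fits the painting."

    def __post_init__(self) -> None:
        if not 0 <= self.correct_index < len(self.options):
            raise ValueError("correct_index is out of range")
        answer = self.options[self.correct_index].lower()
        for index, hint in self.hints.items():
            if index == self.correct_index:
                raise ValueError("hints are only defined for incorrect options")
            # Guard against feedback that simply reveals the correct answer.
            if answer in hint.lower():
                raise ValueError(f"hint for option {index} reveals the correct answer")


def give_feedback(item: HAQPItem, choice: int) -> tuple[bool, str]:
    """Return (is_correct, feedback text) for a single response."""
    if choice == item.correct_index:
        return True, item.praise
    default_hint = "Look at the painting again and compare its elements with this option."
    return False, item.hints.get(choice, default_hint)


def run_item(item: HAQPItem, responses: list[int], max_attempts: int = 3) -> list[str]:
    """Process responses in order until one is correct or attempts run out.

    Returns the feedback messages the participant would have seen.
    """
    shown = []
    for choice in responses[:max_attempts]:
        correct, message = give_feedback(item, choice)
        shown.append(message)
        if correct:
            break
    return shown


if __name__ == "__main__":
    # Placeholder item loosely modelled on Figure 1; wording is illustrative only.
    item = HAQPItem(
        prompt="What does the distorted structure represent?",
        options=["sufficient prior knowledge", "inaccurate prior knowledge",
                 "insufficient prior knowledge", "no prior knowledge"],
        correct_index=1,
        hints={0: "A sound base could be built on directly; is that true here?"},
    )
    for line in run_item(item, [0, 1]):
        print(line)
```

Validating hints when the item is constructed, rather than at response time, means a revealing hint is caught while the material is being authored instead of surfacing in front of a participant.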

In contrast to the experimental group, the control group viewed the paintings without HAQP. Instead, they were presented with statements interpreting the paintings (see Appendix C).

After either answering the questions (experimental group) or reviewing the content (control group), participants’ GCL and knowledge-transfer performance were measured (see Figure 2).

Figure 2

For both groups, ECL was kept low. First, there was no split-attention effect, as the paintings were integrated with explanatory texts to minimise unnecessary cognitive load. Second, participants were not required to generate their own interpretations and test their validity; instead, they were encouraged to focus entirely on the interpretation of the painting. In the experimental group, participants chose what they considered to be the correct answer from four provided interpretations, whereas in the control group, participants read the interpretive statement and simply viewed the painting.

In the experimental condition, the HAQP was implemented as a pedagogically coherent package comprising a higher-order multiple-choice question, active responding, non-revealing diagnostic feedback, and guidance away from guessing. Participants could revisit the same painting as needed; no additional instructional content was provided beyond the original materials. In the control condition, participants viewed the same paintings but received the interpretation directly, without a question or active response. Hence, the conditions differed not only in the presence of a question versus a statement but also in whether interpretive processing was externally prompted and sustained. This contrast aligns with our theoretical interest in encouraging cognitive engagement in schema-relevant processing.

Higher-order adjunct question package

The Higher-Order Adjunct Question Package aims to encourage deeper cognitive processing by prompting learners to recognise relationships among elements within the paintings in order to select the most appropriate interpretation (see Appendix B). This technique is intended to focus learners’ attention on learning-relevant elements while reducing attention to irrelevant information, thereby supporting more efficient cognitive engagement.

The higher-order nature of the intervention lies in the interpretive analysis of the analogical paintings rather than in the multiple-choice format itself. The HAQP functions as a cue that encourages learners to engage in schema-relevant interpretive processing rather than surface-level recognition.

The HAQP was delivered as an integrated pedagogical package that included a higher-order multiple-choice question, opportunities for active responding, non-revealing diagnostic feedback, and support designed to discourage guessing.
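The package can be pictured as a small question-and-feedback loop. The Python sketch below is a hypothetical illustration, not the authors’ Google Forms configuration: the class name `AdjunctQuestion`, the `diagnostic_hint` field, the retry wording, and the rule that triggers the anti-guessing prompt are all assumptions. It shows the properties the text names: every response is recorded (active responding), feedback on an incorrect choice points back to the painting without disclosing the correct option (non-revealing feedback), and repeated errors trigger a prompt that discourages cycling through the options.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AdjunctQuestion:
    """Hypothetical model of one HAQP item (illustrative, not the study's implementation)."""
    stem: str
    options: List[str]
    correct_index: int
    diagnostic_hint: str  # assumed: directs attention to the painting without naming the answer
    attempts: List[int] = field(default_factory=list)

    def respond(self, choice: int) -> str:
        """Record an active response and return feedback that never reveals the key."""
        if not 0 <= choice < len(self.options):
            raise ValueError(f"choice must be between 0 and {len(self.options) - 1}")
        self.attempts.append(choice)
        if choice == self.correct_index:
            return "Correct."
        feedback = f"Not quite. {self.diagnostic_hint}"
        wrong_attempts = sum(1 for a in self.attempts if a != self.correct_index)
        if wrong_attempts >= 2:
            # Assumed anti-guessing rule: after repeated errors, discourage trial and error.
            feedback += " Re-examine the painting before choosing again rather than trying each option in turn."
        return feedback


item = AdjunctQuestion(
    stem="Choose the most correct interpretation of the painting.",
    options=["interpretation 1", "interpretation 2", "interpretation 3", "interpretation 4"],
    correct_index=0,
    diagnostic_hint="Look again at how the elements of the painting relate to each other.",
)
print(item.respond(2))  # Not quite. Look again at how the elements of the painting relate to each other.
print(item.respond(3))
print(item.respond(0))  # Correct.
```

Keeping the correct option text out of every feedback string is what makes the feedback "non-revealing" in this sketch; the learner must still return to the painting to resolve the error.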

Instruments

Germane cognitive load measurement

After the participants were exposed to the four paintings, whether with a question or a statement, they were asked to complete a self-report measure of germane cognitive load adopted from Krieglstein et al. (2023). The measure comprised five items rated on a 9-point Likert-type scale (1 = not at all applicable to 9 = fully applicable). Participants rated how applicable each of the following statements was to them:

I actively reflected upon the learning content.

I made an effort to understand the learning content.

I achieved a comprehensive understanding of the learning content.

I was able to expand my prior knowledge with the learning content.

I can apply the knowledge that I acquired through the learning material quickly and accurately.
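The article does not state how the five item ratings were combined into one GCL score. Because the group means reported in Table 4 exceed the 9-point item maximum, a summed score (possible range 5–45) is the most plausible reading, and the minimal Python sketch below assumes it; the function name `gcl_sum_score` and the example ratings are hypothetical.

```python
from typing import Sequence

N_ITEMS = 5
SCALE_MIN, SCALE_MAX = 1, 9  # 1 = not at all applicable, 9 = fully applicable


def gcl_sum_score(ratings: Sequence[int]) -> int:
    """Summed GCL score for one participant (ASSUMED aggregation rule; range 5-45)."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    for r in ratings:
        if not SCALE_MIN <= r <= SCALE_MAX:
            raise ValueError(f"rating {r} is outside {SCALE_MIN}-{SCALE_MAX}")
    return sum(ratings)


# Hypothetical participant (not study data): ratings for the five items in order.
print(gcl_sum_score([3, 4, 2, 5, 3]))  # 17
```

If an item mean were preferred instead, dividing the sum by five returns the score to the original 1–9 metric.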

The present study adopts the GCL scale from Krieglstein et al. (2023). The authors explicitly noted that GCL should be understood “not as a load per se, but rather as germane processing, whereby working memory resources are devoted to dealing with intrinsic load to construct and automate schemata in long-term memory” (p. 9). They further acknowledged that “an accurate measurement, as is the case with the ICL and ECL, is all the more difficult with GCL,” and that the theoretical separation of load types “does not always show up in reality.” Given these conceptual and empirical ambiguities, and the recognition that understanding the nature of GCL “remains the ‘holy grail’ in CLT research,” the present study interprets the GCL score as an indicator of self-reported schema-relevant effort, reflecting learners’ cognitive investment in schema construction, rather than as a direct measure of germane load.

Knowledge transfer

To assess participants’ performance on the transfer task, we designed 16 multiple-choice questions (four for each learning principle) that evaluated participants’ ability to apply the learning principles. Transfer questions require learners to apply what they have learned in a new or different context (see Hajian, 2019); an example is provided in Appendix A.

Each correct answer was scored 1 and each incorrect answer 0, giving a possible total of 0–16. This assessment complemented the self-report GCL measure by providing a performance-based indicator of schema application (DeLeeuw and Mayer, 2008).
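The dichotomous scoring rule is straightforward to automate. The minimal Python sketch below assumes responses are stored as option numbers 1–4 and that an unanswered item (`None`) counts as incorrect; the article does not say how missing responses were handled. The per-principle subscores further assume that the 16 items are ordered in consecutive blocks of four, which is also an assumption. The answer key and responses are made up.

```python
from typing import List, Optional, Sequence

ITEMS_PER_PRINCIPLE = 4  # 16 items = 4 principles x 4 items (from the text)


def score_items(responses: Sequence[Optional[int]], key: Sequence[int]) -> List[int]:
    """Dichotomous scoring: 1 if the chosen option matches the key, else 0.
    Unanswered items (None) score 0."""
    if len(responses) != len(key):
        raise ValueError("responses and key must have the same length")
    return [1 if given == correct else 0 for given, correct in zip(responses, key)]


def principle_subscores(item_scores: Sequence[int]) -> List[int]:
    """Hypothetical: assumes items are ordered in consecutive blocks of four per principle."""
    return [sum(item_scores[i:i + ITEMS_PER_PRINCIPLE])
            for i in range(0, len(item_scores), ITEMS_PER_PRINCIPLE)]


# Made-up answer key and responses (options numbered 1-4), not study data.
key = [1, 3, 2, 4] * 4
responses = [1, 3, 2, 1] * 3 + [1, 3, None, 1]
item_scores = score_items(responses, key)
print(sum(item_scores))                  # 11
print(principle_subscores(item_scores))  # [3, 3, 3, 2]
```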

Transfer was used as a learning outcome, not as an indicator of cognitive load. It complements the self-reported GCL measure by indicating whether learners’ schema-relevant processing was reflected in their performance, but it is not interpreted as a direct measure of GCL.

The same transfer test was used at pre-test and post-test to ensure that both groups were assessed on identical criteria. Because the items required applying learning principles to new situations, score gains were intended to reflect conceptual application rather than memorization of specific items.

Validity of the instruments

The content used in this study—including the paintings, the HAQP materials, the knowledge-transfer test, and the GCL self-report items—was reviewed by five experts in the field of science education and instructional design. They evaluated the materials for adequacy, clarity, and relevance, and no modifications were recommended, indicating satisfactory content validity.

To assess reliability, a test–retest procedure was conducted with a sample of 32 participants drawn from the same population, with a one-week interval between administrations. The test–retest coefficient was 0.89, indicating high temporal stability. In addition, internal consistency was examined using Cronbach's alpha, which yielded a coefficient of 0.84 for the knowledge-transfer test, indicating good reliability for use in this study. Internal consistency for the GCL scale was also acceptable (Cronbach's α = 0.76).
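Both reliability indices can be computed from item-level data. The following minimal Python sketch uses only the standard library. The article does not state which coefficient was used for test–retest reliability, so Pearson’s r between the two administrations is an assumption, and the small data sets are made up for illustration. For 0/1-scored items such as the transfer test, Cronbach’s alpha computed this way coincides with KR-20, provided item and total variances use the same denominator.

```python
from statistics import mean, variance
from typing import Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a participants x items score matrix.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    Sample variances (n - 1 denominator) are used throughout.
    """
    k = len(scores[0])
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two participants")
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation, e.g. between test and retest total scores."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Made-up examples (not study data):
print(cronbach_alpha([[1, 1], [2, 2], [3, 3]]))  # 1.0: identical items
print(pearson_r([10, 12, 14], [11, 13, 15]))     # 1.0: perfectly linear retest
```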

Results

The aim of this study was to investigate the role of a Higher-Order Adjunct Question Package (HAQP) in supporting learners’ germane cognitive load (GCL) within digital learning environments, using two complementary indicators: a self-reported measure of germane cognitive load and knowledge-transfer performance. Normality and homogeneity of variance (Levene's test) were checked, and all statistical assumptions were satisfied.

The results indicated that participants in the HAQP condition reported higher scores on the GCL measure and achieved better knowledge-transfer performance compared with those who viewed the instructional materials without the HAQP. The following sections present these findings in detail.

Germane cognitive load measurement

Table 4 shows the GCL scores for the two groups. Participants in the experimental group reported higher GCL scores (M = 15.78, SD = 10.32) than those in the control group (M = 12.10, SD = 6.18). An independent-samples t-test indicated that this difference was statistically significant, t(124) = 2.44, p < .05, with an effect size approaching the medium range (Cohen's d = 0.44). These results suggest that participants who received the Higher-Order Adjunct Question Package (HAQP) reported engaging more deeply in schema-relevant processing than participants who viewed the materials without the HAQP.

Table 4

| Variable | Group | N | M | SD | t | d |
|---|---|---|---|---|---|---|
| GCL (post-intervention) | Exp. | 64 | 15.78 | 10.32 | 2.44* | 0.44 |
| | Cont. | 62 | 12.10 | 6.18 | | |

GCL measurement results (independent-samples t-test).

*p < .05.
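The t statistic and effect size in Table 4 can be approximately recomputed from the summary statistics alone. The Python sketch below uses Student’s pooled-variance t-test, which matches the reported df = 124 (64 + 62 - 2), and Cohen’s d with the pooled standard deviation as denominator; both are assumptions about how the reported values were computed. Because the inputs are rounded to two decimals, the results (t ≈ 2.42, d ≈ 0.43) differ slightly from the reported t = 2.44 and d = 0.44.

```python
import math
from typing import Tuple


def pooled_t_and_d(m1: float, sd1: float, n1: int,
                   m2: float, sd2: float, n2: int) -> Tuple[float, int, float]:
    """Student's independent-samples t (pooled variance) and Cohen's d from summary statistics."""
    df = n1 + n2 - 2
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    diff = m1 - m2
    t = diff / (pooled_sd * math.sqrt(1 / n1 + 1 / n2))
    d = diff / pooled_sd
    return t, df, d


t, df, d = pooled_t_and_d(15.78, 10.32, 64, 12.10, 6.18, 62)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")  # t(124) = 2.42, d = 0.43
```

With standard deviations as unequal as 10.32 and 6.18, Welch’s unequal-variance t-test is a common alternative; it uses the same mean difference but a different standard error and non-integer degrees of freedom.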

Performance in knowledge transfer

An independent-samples t-test showed no significant difference between the experimental and control groups on the pre-test scores, t(124) = −0.165, p > .05, suggesting that both groups started with comparable levels of prior knowledge.

Table 5 presents the results of a one-way ANCOVA conducted to examine group differences in post-test performance while controlling for prior knowledge. The pre-test score was included as a covariate to adjust for initial variability, and all statistical assumptions were met, indicating that ANCOVA was appropriate for analysis. After adjusting for pre-test performance, the analysis revealed a statistically significant difference between the groups, F(1, 123) = 13.271, p < .001. Participants in the experimental group obtained higher post-test scores (M = 8.42, SD = 2.68) than those in the control group (M = 6.94, SD = 2.52). The effect size (Cohen's d = 0.66) indicated a medium-sized difference.

Table 5

| Variable | Group | N | M | SD | Adjusted M | F | d |
|---|---|---|---|---|---|---|---|
| Transfer post-test | Exp. | 64 | 8.42 | 2.68 | 8.44 | 13.271** | 0.66 |
| | Cont. | 62 | 6.94 | 2.52 | 6.92 | | |

One-way ANCOVA results for transfer post-test scores, with pre-test score as covariate.

**p < .01.
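The text does not specify how Cohen’s d = 0.66 was derived for the ANCOVA. One common conversion for a two-group comparison treats the 1-df F statistic as t² and uses d ≈ 2t/√df_error; with F(1, 123) = 13.271 this gives about 0.66. The Python sketch below contrasts it with d computed from the unadjusted means and pooled SD in Table 5, which comes out smaller (about 0.57), partly because the F-based value reflects error variance already reduced by the pre-test covariate. Which convention the authors used is an assumption here.

```python
import math


def d_from_f(f_value: float, df_error: int) -> float:
    """Two-group approximation: t = sqrt(F) for a 1-df effect, d = 2t / sqrt(df_error)."""
    return 2 * math.sqrt(f_value) / math.sqrt(df_error)


def d_from_raw_means(m1: float, sd1: float, n1: int,
                     m2: float, sd2: float, n2: int) -> float:
    """Cohen's d from unadjusted means, with the pooled SD as denominator."""
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))
    return (m1 - m2) / pooled_sd


print(f"{d_from_f(13.271, 123):.2f}")                              # 0.66
print(f"{d_from_raw_means(8.42, 2.68, 64, 6.94, 2.52, 62):.2f}")  # 0.57
```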

These findings show that participants exposed to the HAQP demonstrated superior performance on the knowledge-transfer task compared with those who received the instructional materials without HAQP. This pattern aligns with the higher levels of self-reported GCL observed in the experimental group, suggesting that the HAQP contributes to learning conditions that support germane cognitive load in online instruction.

Discussion

Supporting learners in asynchronous online environments requires instructional materials that help them focus on what matters. When learners’ attention is directed toward processes that build meaningful mental structures, they are more likely to benefit from the learning experience. Consistent with Cognitive Load Theory (Sweller, 2010; Sweller et al., 1998), effective instructional design should minimise unnecessary cognitive effort and encourage learners to invest their mental resources in activities that deepen understanding, namely those associated with germane cognitive load (GCL). This study contributes to that goal by exploring how a Higher-Order Adjunct Question Package (HAQP) can guide learners toward richer, more meaningful processing while interpreting analogical representations.

The HAQP used in this research was intentionally crafted to help learners notice, analyse, and articulate the relationships embedded within the analogical paintings that illustrated the targeted learning principles. The package included higher-order multiple-choice questions, opportunities for active responding, non-revealing feedback, and prompts designed to discourage guessing. Together, these elements were intended to nudge learners toward more thoughtful engagement with the material. This approach echoes previous work showing that adjunct questions can act as scaffolds that redirect attention to conceptually important information (Hamaker, 1986; Winne, 1979). In this way, the HAQP functioned not just as a support tool, but as a mechanism for encouraging the kind of relational thinking essential for interpreting analogies.

The findings are consistent with this interpretation. Learners who worked with the HAQP reported higher levels of GCL and performed better on the transfer task than those who simply read an explanatory statement. The effect size for GCL, approaching the medium range (Cohen's d = 0.44), suggests that the package encouraged greater self-reported mental effort, and the larger effect size for transfer performance (Cohen's d = 0.66) is consistent with this effort translating into more meaningful learning. Even so, these results should be viewed with some caution: they are based on a limited number of analogical tasks that share similar structures, and it remains unclear how well these outcomes might extend to different or more complex materials.

Performance patterns offer further insight. Learners exposed to the HAQP appeared to focus more on meaningful relationships within the paintings and less on extraneous details. This interpretation is consistent with earlier work showing that adjunct questions help channel attention toward key instructional elements (Li et al., 2024; Liu, 2021; Medina et al., 2017). Although attention was inferred indirectly rather than measured directly, the overall pattern aligns with theoretical expectations.

Existing literature also reinforces the idea that higher-order adjunct questions can enhance cognitive engagement and improve learning (Dornisch and Sperling, 2004, 2006; Sun et al., 2024; Zhu et al., 2024). Such questions typically require learners to infer underlying relationships instead of relying on explicitly stated information (Hamaker, 1986), prompting the deeper processing associated with germane cognitive load. Still, the extent to which the HAQP supports this deeper processing may vary depending on task difficulty, learners’ familiarity with the content, and the nature of the instructional materials. Further research using a wider variety of tasks and formats would help clarify the stability of these effects.

The HAQP may have been particularly valuable in this study because adjunct questions can help compensate for the lack of immediate instructor presence in asynchronous environments. Chang (2021) found that such questions can move learners’ understanding closer to the conceptual structure intended by instructors. Since schema construction benefits from structured guidance that helps learners allocate their cognitive effort effectively (Jordan et al., 2020; Sweller, 2010), the HAQP may serve as a practical tool for supporting deeper learning in self-paced settings.

Overall, the findings of this study suggest that the HAQP offers a promising way to direct learners’ attention toward essential elements of a learning task while reducing the cognitive noise created by irrelevant information. However, additional research is needed to determine how well these benefits hold across different types of content, more complex tasks, and longer periods of learning. Future work should explore long-term retention, incorporate direct measures of learners’ cognitive processes, and examine how variations of the HAQP perform across diverse instructional contexts.

Conclusion and limitations

This study set out to explore how a Higher-Order Adjunct Question Package (HAQP) might support learners’ germane cognitive load (GCL) in digital learning environments. Using both self-reported GCL and performance on a knowledge-transfer task, the findings pointed in the same direction: learners who received the HAQP seemed to engage more deeply with the instructional content. They reported higher levels of germane cognitive effort and performed better when asked to apply what they learned. Taken together, these results suggest that HAQP can help guide learners’ attention toward the elements that matter and encourage the kind of productive cognitive activity that supports meaningful learning.

At the same time, these interpretations must be considered within the limits of the study. The present study interprets GCL as an indicator of self-reported schema-relevant effort, representing learners’ cognitive investment in constructing schemas rather than a direct measure of germane load. In contrast, the transfer task is viewed as an outcome of learning rather than a measure of germane processing itself. Accordingly, the findings indicate differences between groups, but they should not be interpreted as demonstrating definitive causal effects.

Several limitations also shape how the findings should be understood. The instructional design used here included several features—such as a higher-order question format, active responses, feedback, and opportunities for review—that worked together as a package. While this approach mirrors authentic instructional practice, it does not reveal which specific components are most influential. Future research could use factorial designs to examine each element on its own.

Another limitation concerns the exclusive reliance on multiple-choice questions within the HAQP. Although these questions may have encouraged learners to interpret the analogical paintings, the fixed response options might not have fully captured the depth of reasoning typically associated with higher-order generative tasks. In particular, the conceptual strength and attractiveness of the distractors may not have been evenly balanced, which could limit the extent to which the task elicited genuinely deep engagement and may pose a potential threat to internal validity. Future research should consider employing open-ended or constructed-response adjunct questions that require learners to generate their own interpretations, as such formats may better reflect the generative and higher-order cognitive processes central to the theoretical aims of HAQP-based interventions.

The study also relied on a single type of representational material: analogical paintings. This consistency supports internal validity, but it limits the extent to which the findings can be applied to other types of instructional materials or longer learning sequences. Similarly, using identical items in both the pre- and post-tests raises the possibility of testing effects, although the transfer-based nature of the items makes memorization an unlikely explanation for the observed gains.

Finally, the participants were all in-service teachers from a single region. Educational environments with different structures, such as settings with mixed-gender classrooms or different instructional cultures, may produce different outcomes. Expanding research to include a wider range of instructional conditions, content areas, and learner characteristics would provide a clearer picture of the HAQP’s potential. Investigating long-term retention and the durability of transfer effects also represents an important next step.

Statements

Data availability statement

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation.

Ethics statement

The studies involving humans were approved by Ethical Approval of Scientific Research University of Ha'il. The studies were conducted in accordance with the local legislation and institutional requirements. The participants provided their written informed consent to participate in this study. Written informed consent was obtained from the individual(s) for the publication of any potentially identifiable images or data included in this article.

Author contributions

NA: Conceptualization, Data curation, Formal analysis, Funding acquisition, Investigation, Methodology, Project administration, Resources, Software, Supervision, Validation, Visualization, Writing – original draft, Writing – review & editing. OA: Conceptualization, Data curation, Formal analysis, Funding acquisition, Investigation, Methodology, Project administration, Resources, Software, Supervision, Validation, Visualization, Writing – original draft, Writing – review & editing.

Funding

The author(s) declared that financial support was not received for this work and/or its publication.

Conflict of interest

The author(s) declared that this work was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Generative AI statement

The author(s) declared that generative AI was not used in the creation of this manuscript.

Any alternative text (alt text) provided alongside figures in this article has been generated by Frontiers with the support of artificial intelligence and reasonable efforts have been made to ensure accuracy, including review by the authors wherever possible. If you identify any issues, please contact us.

Publisher’s note

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

References

Ahmed, S. (2024). Cognitive load and academic performance in online learning: evidence from undergraduate students. JERL 1 (1), 5–9.

Al Qahtani, A., and Higgins, S. (2013). Effectiveness of mobile learning in developing learners’ performance. Comput. Educ. 61, 379–390.

Anderson, R. C. (1977). “The notion of schemata and the educational enterprise: general discussion of the conference,” in Schooling and the Acquisition of Knowledge, eds. R. Anderson, R. Spiro, and W. Montague (Lawrence Erlbaum Associates), 415–431.

Andre, T. (1979). Does answering higher-level questions while reading facilitate productive learning? Rev. Educ. Res. 49, 280–318. doi: 10.3102/00346543049002280

Atkinson, R. C., and Shiffrin, R. M. (1968). “Human memory: a proposed system and its control processes,” in The Psychology of Learning and Motivation, eds. K. W. Spence and J. T. Spence (Stanford, CA: Academic Press), 89–195.

Ayres, P. (2015). State of the art research into cognitive load theory. Comput. Human Behav. 45, 21–24. doi: 10.1016/j.chb.2008.12.007

Brod, G. (2021). Generative learning: which strategies for what age? Educ. Psychol. Rev. 33 (4), 1295–1318. doi: 10.1007/s10648-020-09571-9

Cerdán, R., Vidal-Abarca, E., Martínez, T., Gilabert, R., and Gil, L. (2009). Impact of question-answering tasks on search processes and reading comprehension. Learn. Instr. 19 (1), 13–27. doi: 10.1016/j.learninstruc.2007.12.003

Chandler, P., and Sweller, J. (1991). Cognitive load theory and the format of instruction. Cogn. Instr. 8 (4), 293–332. doi: 10.1207/s1532690xci0804_2

Chang, H. K. (2021). The effect of embedded interactive adjunct questions in instructional videos. Doctoral dissertation. The Pennsylvania State University.

Cierniak, G., Scheiter, K., and Gerjets, P. (2009). Explaining the split-attention effect: is the reduction of extraneous cognitive load accompanied by an increase in germane load? Comput. Human Behav. 25 (2), 315–324. doi: 10.1016/j.chb.2008.12.020

Cole, M. T., Shelley, D. J., and Swartz, L. B. (2014). Online instruction, e-learning, and student satisfaction: a three-year study. Int. Rev. Res. Open Dist. Learn. 15 (6), 111–131. doi: 10.19173/irrodl.v15i6.1748

De Jong, T. (2010). Cognitive load theory, educational research, and instructional design: some food for thought. Instr. Sci. 38 (2), 105–134. doi: 10.1007/s11251-009-9110-0

DeLeeuw, K. E., and Mayer, R. E. (2008). A comparison of three measures of cognitive load. J. Educ. Psychol. 100 (1), 223–234. doi: 10.1037/0022-0663.100.1.223

Dornisch, M. M., and Sperling, R. A. (2004). Elaborative questions in web-based text materials. Int. J. Instr. Media 31 (1), 49–59.

Dornisch, M. M., and Sperling, R. A. (2006). Facilitating learning from technology-enhanced text: effects of prompted elaborative technology. J. Educ. Res. 99 (3), 156–165. doi: 10.3200/JOER.99.3.156-166

Fiorella, L., and Mayer, R. E. (2016). Eight ways to promote generative learning. Educ. Psychol. Rev. 28 (4), 717–741. doi: 10.1007/s10648-015-9348-9

Gerjets, P., Scheiter, K., and Cierniak, G. (2009). The scientific value of cognitive load theory. Educ. Psychol. Rev. 21, 43–54. doi: 10.1007/s10648-008-9096-1

Gray, M. E., and Holyoak, K. J. (2021). Teaching by analogy: from theory to practice. Mind Brain Educ. 15 (3), 250–263. doi: 10.1111/mbe.12288

Hajian, S. (2019). Transfer of learning and teaching: a review of transfer theories and effective instructional practices. IAFOR J. Educ. 7 (1), 93–111. doi: 10.22492/ije.7.1.06

Hamaker, C. (1986). The effects of adjunct questions on prose learning. Rev. Educ. Res. 56, 212–242. doi: 10.3102/00346543056002212

Jensen, J. L., McDaniel, M. A., Woodard, S. M., and Kummer, T. A. (2014). Exams requiring higher order thinking skills encourage greater conceptual understanding. Educ. Psychol. Rev. 26, 307–329. doi: 10.1007/s10648-013-9248-9

Jordan, J., Wagner, J., Manthey, D. E., Wolff, M., Santen, S., and Cico, S. J. (2020). Optimizing lectures from a cognitive load perspective. AEM Educ. Train. 4 (3), 306–312. doi: 10.1002/aet2.10389

Kalyuga, S. (2007). Expertise reversal effect and its implications for learner-tailored instruction. Educ. Psychol. Rev. 19, 509–539. doi: 10.1007/s10648-007-9054-3

Kalyuga, S. (2011). Cognitive load theory: how many types of load does it really need? Educ. Psychol. Rev. 23 (1), 1–19. doi: 10.1007/s10648-010-9150-7

Klepsch, M., and Seufert, T. (2021). Understanding the role of cognitive load in the educational process. Learn. Instr. 71, 101398.

Krieglstein, F., Beege, M., Rey, G. D., Sanchez-Stockhammer, C., and Schneider, S. (2023). Development and validation of a theory-based questionnaire to measure different types of cognitive load. Educ. Psychol. Rev. 35 (1), 9. doi: 10.1007/s10648-023-09738-0

Li, Y., Brantmeier, C., Gao, Y., and Strube, M. (2024). The effects of strategic adjunct questions on L2 reading and strategy use.

Liu, H. (2021). Does questioning strategy facilitate L2 reading comprehension? J. Res. Read. 44 (2), 339–359. doi: 10.1111/1467-9817.12339

Mayer, R. E. (2009). Multimedia Learning, 2nd Edn. Cambridge University Press. doi: 10.1017/CBO9780511811678

Mayer, R. E. (2010). “Techniques that reduce extraneous cognitive load and manage intrinsic cognitive load during multimedia learning,” in Cognitive Load Theory, eds. J. L. Plass, R. Moreno, and R. Brünken (Cambridge University Press), 131–152. doi: 10.1017/CBO9780511844744.009

Mayer, R. E. (2014). “Cognitive theory of multimedia learning,” in The Cambridge Handbook of Multimedia Learning, 2nd Edn, ed. R. E. Mayer (Cambridge University Press), 43–71.

Mayer, R. E., Fiorella, L., and Stull, A. (2020). Five ways to increase the effectiveness of instructional video. Educ. Technol. Res. Dev. 68, 837–852. doi: 10.1007/s11423-020-09749-6

Mayer, R. E., and Moreno, R. (2003). Nine ways to reduce cognitive load in multimedia learning. Educ. Psychol. 38 (1), 43–52. doi: 10.1207/S15326985EP3801_6

Medina, A., Callender, A. A., Brantmeier, C., and Schultz, L. (2017). Inserted adjuncts, working memory capacity, and L2 reading. System 66, 69–86. doi: 10.1016/j.system.2017.03.002

Mory, E. H., Chen, S. J., and Saad, A. M. (1991). The effects of adjunct questions on learning text material: the time factor (ERIC Document No. ED333344).

Paas, F., and Ayres, P. (2014). Cognitive load theory. Educ. Psychol. Rev. 26 (2), 191–195. doi: 10.1007/s10648-014-9263-5

Paas, F. G., and Van Merriënboer, J. J. (1994). Variability of worked examples and transfer of problem-solving skills. J. Educ. Psychol. 86 (1), 122. doi: 10.1037/0022-0663.86.1.122

Paas, F., Renkl, A., and Sweller, J. (2003). Cognitive load theory and instructional design. Educ. Psychol. 38 (1), 1–4. doi: 10.1207/S15326985EP3801_1

Paas, F., Renkl, A., and Sweller, J. (2004). Instructional implications of cognitive load theory. Instr. Sci. 32 (1–2), 1–8. doi: 10.1023/B:TRUC.0000021806.17516.d0

Paas, F., and Van Gog, T. (2006). Optimising worked example instruction. Learn. Instr. 16, 87–91. doi: 10.1016/j.learninstruc.2006.02.004

Paas, F., and Van Merriënboer, J. J. G. (2020). Cognitive-load theory. Curr. Dir. Psychol. Sci. 29, 394–398. doi: 10.1177/0963721420922183

Schnotz, W., and Kürschner, C. (2007). A reconsideration of cognitive load theory. Educ. Psychol. Rev. 19, 469–508. doi: 10.1007/s10648-007-9053-4

Sun, Y., Zhou, W., and Tang, S. (2024). Effects of adjunct questions on L2 reading. Behav. Sci. 14 (2), 138.

Sweller, J. (1988). Cognitive load during problem solving. Cogn. Sci. 12, 275–285. doi: 10.1207/s15516709cog1202_4

Sweller, J. (2005). “The redundancy principle in multimedia learning,” in The Cambridge Handbook of Multimedia Learning (Cambridge University Press), 159–167. doi: 10.1017/CBO9780511816819.011

Sweller, J. (2010). Element interactivity and intrinsic, extraneous, and germane cognitive load. Educ. Psychol. Rev. 22, 123–138. doi: 10.1007/s10648-010-9128-5

Sweller, J. (2020). Cognitive load theory and educational technology. Educ. Technol. Res. Dev. 68 (1), 1–16. doi: 10.1007/s11423-019-09701-3

Sweller, J., Ayres, P., and Kalyuga, S. (2011). Cognitive Load Theory. Springer. doi: 10.1007/978-1-4419-8126-4

Sweller, J., Ayres, P., and Kalyuga, S. (2019). Cognitive Load Theory. Springer. doi: 10.1007/978-3-030-20969-8_5

Sweller, J., Van Merriënboer, J. J., and Paas, F. G. (1998). Cognitive architecture and instructional design. Educ. Psychol. Rev. 10, 251–296. doi: 10.1023/A:1022193728205

Valdez, A. (2013). Multimedia learning from PowerPoint: use of adjunct questions. Psychology Journal 10 (1), 35–44.

Van Merriënboer, J. J., and Sweller, J. (2005). Cognitive load theory and complex learning. Educ. Psychol. Rev. 17 (2), 147–177. doi: 10.1007/s10648-005-3951-0

Watts, G. H., and Anderson, R. C. (1971). Effects of inserted questions on learning from prose. J. Educ. Psychol. 62 (5), 387. doi: 10.1037/h0031633

Winne, P. (1979). Experiments relating teachers’ use of higher cognitive questions to student achievement. Rev. Educ. Res. 49, 13–50. doi: 10.3102/00346543049001013

Wittrock, M. C. (1990). Generative processes of comprehension. Educ. Psychol. 24, 345–376. doi: 10.1207/s15326985ep2404_2

Zheng, S., Rosson, M. B., Shih, P. C., and Carroll, J. M. (2020). Understanding student motivation in MOOCs. Comput. Educ. 125, 191–201.

Zhu, P., Câmara, A., Roy, N., Maxwell, D., and Hauff, C. (2024). “On the effects of automatically generated adjunct questions,” in Proceedings of the 2024 Conference on Human Information Interaction and Retrieval, 266–277.

Appendix

Appendix A: an example of the test for knowledge transfer

Which of the following examples represents the lack of prior knowledge for adding numbers?

1. A student who does not know how to count attends a lesson on adding numbers.
2. A student who writes six as two and writes two as six attends a lesson on adding numbers.
3. A student who knows how to count numbers and what each number represents attends a lesson on adding numbers.
4. All of the above are correct.

Appendix B: an example of the paintings and question for Exp. group

View the painting carefully below, and answer the following question: choose the statement that constitutes the most correct interpretation of the painting:

1. Prior knowledge relevant to new learning comes in one of three forms: either correct and sufficient knowledge, correct and insufficient knowledge, or incorrect knowledge. The role of the teacher is to help students to complete what is missing and correct the error to build upon it.
2. Prior knowledge is not the same as construction.
3. Previous knowledge is always correct.
4. Prior knowledge is independent.

Appendix C: an example of the paintings and the statement for Con. group

Prior knowledge relevant to new learning comes in one of three forms: either correct and sufficient knowledge, correct and insufficient knowledge, or incorrect knowledge. The role of the teacher is to help students to complete what is missing and correct the error to build upon it.


Keywords

digital learning environments, germane cognitive load, higher-order adjunct question package, instructional design, knowledge transfer

Citation

Alreshidi N and Alrashidi O (2026) Investigating the role of a higher-order adjunct question package in supporting learners’ germane cognitive load. Front. Educ. 11:1819989. doi: 10.3389/feduc.2026.1819989

Received

28 February 2026

Revised

07 April 2026

Accepted

08 April 2026

Published

01 May 2026

Volume

11 - 2026

Edited by

Sami Heikkinen, LAB University of Applied Sciences, Finland

Reviewed by

Bahadir Yildiz, Hacettepe University, Türkiye

Aan Hendrayana, Sultan Ageng Tirtayasa University, Indonesia


Copyright

© 2026 Alreshidi and Alrashidi.

This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Nawaf Alreshidi nawwaf2012@hotmail.com
