Abstract

This review treats the mathematical and algorithmic foundations of non-reversible Markov chains in the context of event-chain Monte Carlo (ECMC), a continuous-time lifted Markov chain that employs the factorized Metropolis algorithm. It analyzes a number of model applications and then reviews the formulation as well as the performance of ECMC in key models in statistical physics. Finally, the review reports on an ongoing initiative to apply ECMC to the sampling problem in molecular simulation, i.e., to real-world models of peptides, proteins, and polymers in aqueous solution.

1. Introduction

Markov-chain Monte Carlo (MCMC) is an essential tool for the natural sciences. It is also the subject of a research discipline in mathematics. Ever since its invention in 1953, MCMC [1] has focused on reversible algorithms, those that satisfy a detailed-balance condition. These algorithms are particularly powerful when the Monte Carlo moves (from one configuration to the next) can be customized for each configuration of a given system. Such a priori choices [2] allow for big moves to be accepted and for sample space to be explored rapidly. Prominent examples of reversible methods with custom-built, large-scale moves are path-integral Monte Carlo and the cluster algorithms for spin systems [3].

In many important problems, insightful a priori choices for moves are not yet available. MCMC then often consists of a sequence of unbiased local moves, such as tiny displacements of one out of N particles, or flips of one spin out of many. Local reversible Monte Carlo schemes are easy to set up for these problems. The Metropolis or the heatbath (Gibbs-sampling) algorithms are popular choices. They generally compute acceptance probabilities from the changes in the total potential (the system's energy) and thus mimic the behavior of physical systems in thermodynamic equilibrium. However, such algorithms are often too slow to be useful. Examples are the hard-disk model [4, 5], where local reversible MCMC methods failed for several decades to obtain independent samples in large systems, and the vast field of molecular simulation [6], which considers classical models of polymers, proteins, etc., in aqueous solution. Local reversible MCMC algorithms were for a long time without alternatives in molecular simulation, but they remained of little use because of their lack of efficiency.

Event-chain Monte Carlo (ECMC) [7, 8] is a family of local, non-reversible MCMC algorithms developed over the last decade. Its foundations, applications, and prospects are at the heart of the present review. In contrast to its local reversible counterparts (which are all essentially equivalent to each other and to the physical overdamped equilibrium dynamics), different representatives of ECMC present a wide spread of behaviors for a given model system. Two issues explain this spread. First, any decision to accept or reject a move is made on the basis of a multitude of statistically independent decisions of parts of the system, so-called factors (see the Glossary). Usually, there is a choice between inequivalent factorizations. In the Metropolis algorithm, in contrast, all decisions to accept a move are made on the basis of changes in the total energy. Second, ECMC is fundamentally a non-reversible “lifted” version of an underlying reversible algorithm. In a lifting (a lifted Markov chain), some of the randomly sampled moves of the original (“collapsed”) Markov chain are rearranged. Again, there are many possible liftings and each of these can give rise to a specific dynamic behavior. The development of ECMC is still in its infancy. Its inherent variability may, however, bring to life the use of local MCMC in the same way as reversible energy-based Monte Carlo has been empowered through the a priori probabilities.

Section 2 discusses the mathematical foundations underlying ECMC, namely global balance, factorization, liftings, and thinning (making statistically correct decisions with minimal effort). Section 3 reviews exact results for various Markov chains in the simplest setting of a path graph. Section 4 studies one-dimensional N-body systems. Section 5 provides an overview of findings on N-particle systems in higher than one dimension. Section 6 reviews a recent proposal to apply ECMC to molecular simulations. Section 7, finally, discusses prospects for event-chain Monte Carlo and other non-reversible Markov chains in the context of molecular simulation. The Glossary contains non-technical introductions to the main terms used in this review.

2. Markov Chains, Factorization, Liftings, and Thinning

The present section reviews fundamentals of ECMC. Non-reversibility modifies the positioning of MCMC from an analog of equilibrium physics toward the realization of a non-equilibrium system with steady-state flows. Section 2.1 discusses transition matrices, the objects that express MCMC algorithms in mathematical terms. The balance conditions for the steady state of Markov chains are reviewed, as well as the performance limits of MCMC in terms of basic characteristics of the sample space and the transition matrix. Section 2.2 discusses factorization, the break-up of the interaction into terms that, via the factorized Metropolis algorithm, make independent decisions on the acceptance or the rejection of a proposed move. Section 2.3 reviews the current understanding of lifted Markov chains, the mathematical concept used by ECMC to replace random moves by deterministic ones without modifying the stationary distribution. This remains within the framework of (memory-less) Markov chains. Section 2.4 reviews thinning, the way used in ECMC to make complex decisions with minimum computations.

2.1. Transition Matrices and Balance Conditions

For a given sample space Ω, a Markov chain essentially consists of a time-independent transition matrix $P$ and a distribution of initial configurations. Any non-negative element $P_{ij}$ gives the conditional probability for the configuration $i$ to move to configuration $j$ in one time step. The transition matrix $P$ is stochastic: each of its rows $i$ sums to one, $\sum_{j \in \Omega} P_{ij} = 1$. Commonly, the probability $P_{ij}$ is composed of two parts, $P_{ij} = \mathcal{A}_{ij} \mathcal{P}_{ij}$, where $\mathcal{A}_{ij}$ is the a priori probability to propose a move (mentioned in section 1), and $\mathcal{P}_{ij}$ the so-called “filter” to accept the proposed move. Any term $P_{ii}$ in the transition matrix is the probability to move from $i$ to $i$. If non-vanishing, it may result from the probability that all proposed moves from $i$ to other configurations $j \neq i$ (by the a priori probability $\mathcal{A}$) are rejected (by the filter $\mathcal{P}$). In the lifted sample space that will be introduced in subsection 2.3, ECMC is without rejections.
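As a minimal sketch of this decomposition (not taken from the works reviewed here), the following Python fragment builds a transition matrix from a uniform a priori probability and the Metropolis filter for a hypothetical three-configuration sample space. The weights and the function name `metropolis_matrix` are illustrative assumptions. The diagonal elements collect the rejected proposals, so that each row sums to one.

```python
def metropolis_matrix(weights):
    """Return P with P[i][j] = (a priori probability) * (filter), rejections on the diagonal."""
    n = len(weights)
    P = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                a_priori = 1.0 / (n - 1)                      # uniform proposal among the other configurations
                accept = min(1.0, weights[j] / weights[i])    # Metropolis filter
                P[i][j] = a_priori * accept
        P[i][i] = 1.0 - sum(P[i])                             # rejected proposals leave the chain at i
    return P


weights = [1.0, 2.0, 4.0]          # hypothetical unnormalized stationary weights
P = metropolis_matrix(weights)
row_sums = [sum(row) for row in P]
```

In this example, the least probable configuration never rejects a proposal (its diagonal element vanishes), whereas the most probable one stays put with probability 0.625.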

2.1.1. Irreducibility and Convergence—Basic Properties of the Transition Matrix

A (finite) Markov chain is irreducible if any configuration $j$ can be reached from any other configuration $i$ in a finite number of time steps [9, section 1.3]. As the matrix $P^t = (P^t)_{ij}$ gives the conditional probability to move from $i$ to $j$ in exactly $t$ time steps, an irreducible matrix has $(P^t)_{ij} > 0 \; \forall i, j$, but the time $t$ may depend on $i$ and $j$.

The transition matrix $P$ connects not only configurations but also probability distributions $\pi^{\{t-1\}}$ and $\pi^{\{t\}}$ at subsequent time steps $t - 1$ and $t$. By extension, the matrix $P^t$ connects the distribution $\pi^{\{t\}}$ with the (user-provided) distribution of initial configurations $\pi^{\{0\}}$ at time $t = 0$:

$$\pi^{\{t\}}_i = \sum_{j \in \Omega} \pi^{\{t-1\}}_j P_{ji} \quad \Rightarrow \quad \pi^{\{t\}}_i = \sum_{j \in \Omega} \pi^{\{0\}}_j (P^t)_{ji} \quad \forall i \in \Omega. \tag{1}$$

The initial distribution $\pi^{\{0\}}$ can be concentrated on a single initial configuration. In that case, $\pi^{\{0\}}$ is a discrete Kronecker δ-function for a Markov chain in a finite sample space, and a Dirac δ-function in a continuous sample space.

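An irreducible Markov chain has a unique stationary distribution π that satisfies

$$\pi_i = \sum_{j \in \Omega} \pi_j P_{ji} \quad \forall i \in \Omega. \tag{2}$$

Equation (2) allows one to define the flow $F_{ji}$ from $j$ to $i$ as the stationary probability $\pi_j$ to be at $j$ multiplied with the conditional probability to move from $j$ to $i$:

$$F_{ji} \equiv \pi_j P_{ji} \quad \Rightarrow \quad \sum_{k \in \Omega} F_{ik} = \sum_{j \in \Omega} F_{ji} \quad \forall i \in \Omega, \tag{3}$$

where the left-hand side of Equation (2) was multiplied with the stochasticity condition $\sum_{k \in \Omega} P_{ik} = 1$.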

Although any irreducible transition matrix has a unique π, this distribution is not guaranteed to be the limit π{t} for t → ∞ for all initial distributions π{0}. But even in the absence of convergence, ergodicity follows from irreducibility alone, and ensemble averages of an observable agree with the time averages (see [9, Theorem 4.16]).

Convergence toward π of an irreducible Markov chain requires that it is aperiodic, i.e., that the return times from a configuration $i$ back to itself are not all multiples of a period larger than one. For example, if the set of return times is {2, 4, 6, … }, then the period is 2, but if it is {1000, 1001, 1002, … }, then it is 1. These periods do not depend on the configuration $i$. For irreducible, aperiodic transition matrices, $P^t$ is a positive matrix for some fixed $t$, and MCMC converges toward π from any starting distribution $\pi^{\{0\}}$. The existence and uniqueness of the stationary distribution π follows from the irreducibility of the transition matrix, but if its value is imposed (for example to be the Boltzmann distribution or the diagonal density matrix [3]), then Equation (2) becomes a necessary “global-balance” condition for the transition matrix. For ECMC, this global-balance condition of Equation (2) must be checked for all elements of the lifted sample space $\hat{\Omega}$.
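The definition of the period can be illustrated with a short Python sketch. It collects the times at which a return to a configuration has non-zero probability and takes their greatest common divisor. The two-configuration matrices `flip` and `lazy` and all function names are hypothetical choices made for this illustration, and only a finite sample of return times is inspected.

```python
from functools import reduce
from math import gcd


def mat_mul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def return_times(P, i, t_max):
    """Times t <= t_max at which a return from i to i has non-zero probability."""
    times, Pt = [], P
    for t in range(1, t_max + 1):
        if Pt[i][i] > 0.0:
            times.append(t)
        Pt = mat_mul(Pt, P)
    return times


def period(times):
    """Greatest common divisor of a finite sample of return times."""
    return reduce(gcd, times)


flip = [[0.0, 1.0], [1.0, 0.0]]    # always hops: returns only at even times
lazy = [[0.5, 0.5], [0.5, 0.5]]    # may stay put: returns at all times
period_flip = period(return_times(flip, 0, 8))
period_lazy = period(return_times(lazy, 0, 8))
period_text_example = period([1000, 1001, 1002])
```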

A reversible transition matrix is one that satisfies the detailed-balance condition

$$F_{ij} = F_{ji}, \quad \text{that is,} \quad \pi_i P_{ij} = \pi_j P_{ji} \quad \forall i, j \in \Omega. \tag{4}$$

Detailed balance implies global balance (Equation 4 yields Equation 2 by summing over $j$, considering that $\sum_{j \in \Omega} P_{ij} = 1$), and the flow into a configuration $i$ coming from a configuration $j$ goes right back to $j$. In ECMC, the reality of the global-balance condition is quite the opposite, because the entering flow $F_{ji}$ is compensated by flows to other configurations than $j$ ($F_{ji} > 0$ usually implies $F_{ij} = 0$). Checking the global-balance condition is more complicated than checking detailed balance, as it requires monitoring all the configurations $j$ that contribute to the flow into $i$, in a larger (lifted) sample space.
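The difference between the two balance conditions can be checked numerically on a small example. The sketch below uses a hypothetical three-site ring with uniform stationary distribution, on which hops in the two directions have different probabilities. The ring, the hop probabilities, and the function names are assumptions made for illustration only.

```python
def flows(pi, P):
    """Stationary flows F[i][j] = pi[i] * P[i][j]."""
    n = len(pi)
    return [[pi[i] * P[i][j] for j in range(n)] for i in range(n)]


def satisfies_global_balance(pi, P, tol=1e-12):
    """Check that the flows entering each configuration i sum to pi[i]."""
    F, n = flows(pi, P), len(pi)
    return all(abs(sum(F[j][i] for j in range(n)) - pi[i]) < tol for i in range(n))


def satisfies_detailed_balance(pi, P, tol=1e-12):
    """Check F[i][j] == F[j][i] for all pairs of configurations."""
    F, n = flows(pi, P), len(pi)
    return all(abs(F[i][j] - F[j][i]) < tol for i in range(n) for j in range(n))


def ring_matrix(n, forward, backward):
    """Hop i -> i+1 with probability `forward`, i -> i-1 with `backward`, else stay."""
    P = [[0.0] * n for _ in range(n)]
    for i in range(n):
        P[i][(i + 1) % n] += forward
        P[i][(i - 1) % n] += backward
        P[i][i] += 1.0 - forward - backward
    return P


pi = [1.0 / 3.0] * 3
reversible = ring_matrix(3, 0.3, 0.3)
non_reversible = ring_matrix(3, 0.6, 0.1)
```

The biased ring satisfies global balance with a net flow circulating around the ring, so that detailed balance is violated while the stationary distribution is unchanged.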

For a reversible Markov chain, the matrix

$$A_{ij} = \pi_i^{1/2} P_{ij} \pi_j^{-1/2} \tag{5}$$

is symmetric, as trivially follows from the detailed-balance condition. The spectral theorem then assures that $A$ has only real eigenvalues and that its eigenvectors form an orthonormal basis. The transition matrix $P$ has the same eigenvalues as $A$, as well as closely related eigenvectors:

$$\sum_{j \in \Omega} A_{ij} x_j = \lambda x_i \quad \Leftrightarrow \quad \sum_{j \in \Omega} P_{ij} \left[\pi_j^{-1/2} x_j\right] = \lambda \left[\pi_i^{-1/2} x_i\right]. \tag{6}$$

The eigenvectors of $P$ must be multiplied with $\sqrt{\pi}$ to be mutually orthogonal. They provide a basis on which any initial probability distribution $\pi^{\{0\}}$ can be expanded. An irreducible and aperiodic transition matrix $P$ (reversible or not) has one eigenvalue $\lambda_1 = 1$, and all others satisfy $|\lambda_k| < 1 \; \forall k \neq 1$. The unit eigenvalue $\lambda_1$ corresponds to a constant right eigenvector of $P$ because of the stochasticity condition $\sum_{j \in \Omega} P_{ij} = 1$, and to the left eigenvector π of $P$, because of the global-balance condition of Equation (2).

A non-reversible transition matrix may belong to a mix of three different classes, and this variety greatly complicates their mathematical analysis. P may have only real eigenvalues and real-valued eigenvectors, but without there being an associated symmetric matrix A, as in Equation (5) (see [10, section 2.3] for an example). A non-reversible transition matrix may also have only real eigenvalues but with geometrical multiplicities that not all agree with the algebraic multiplicities. Such a matrix is not diagonalizable. Finally, P may have real and then pairs of complex eigenvalues [10]. This generic transition matrix can be analyzed in terms of its eigenvalue spectrum, and expanded in terms of a basis of eigenvectors [11–13]. As non-reversible transition matrices may well have only real eigenvalues, the size of their imaginary parts does not by itself indicate the degree of non-reversibility [14]. Reversibilizations of non-reversible transition matrices have been much studied [15], but they modify the considered transition matrix.

2.1.2. Total Variation Distance, Mixing Times

In real-world applications, irreducibility and aperiodicity of a Markov chain can usually be established beyond doubt. The stationary distribution π of a transition matrix constructed to satisfy a global-balance condition is also known explicitly. The time scale for the exponential approach of $\pi^{\{t\}}$ to π (which always exists) is much more difficult to establish. In principle, the difference between the two distributions is quantified through the total variation distance:

$$\|\pi^{\{t\}} - \pi\|_{\mathrm{TV}} = \max_{A \subset \Omega} |\pi^{\{t\}}(A) - \pi(A)| = \frac{1}{2} \sum_{i \in \Omega} |\pi^{\{t\}}_i - \pi_i|. \tag{7}$$

The distribution $\pi^{\{t\}}$ depends on the initial distribution, but for any choice of $\pi^{\{0\}}$, for an irreducible and aperiodic transition matrix, the total variation distance is smaller than an exponential bound $C \alpha^t$ with α ∈ (0, 1) (see the convergence theorem for Markov chains [9, Theorem 4.9]). At the mixing time, the distance $d(t)$ from the most unfavorable initial configuration drops below a certain value ϵ:

$$t_{\mathrm{mix}}(\epsilon) = \min\{t : d(t) \le \epsilon\}, \qquad d(t) = \max_{\pi^{\{0\}}} \|\pi^{\{t\}}(\pi^{\{0\}}) - \pi\|_{\mathrm{TV}}. \tag{8}$$

Usually, ϵ = 1/4 is taken, with $t_{\mathrm{mix}} = t_{\mathrm{mix}}(1/4)$ (see [9]). The value of ϵ is arbitrary, but smaller than 1/2 in order for convergence in a small part of Ω (without exploration of its complement) not to be counted as a success (see subsection 3.2.1 for an example). Once such a value smaller than 1/2 is reached, exponential convergence in $t/t_{\mathrm{mix}}$ sets in (see [9, Equation 4.36]). The mixing time is thus the time to obtain a first sample of the stationary distribution from a most unfavorable initial configuration. It does not require the existence of a spectrum of the transition matrix $P$, and it can be much larger than the correlation time $t_{\mathrm{corr}}$ given by the absolute inverse spectral gap of $P$, if it exists (the time it takes to decorrelate samples in the stationary regime). Related definitions of the mixing time take into account such thermalized initial configurations [16].
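For a small finite chain, the total variation distance and the mixing time can be evaluated by brute force. The following sketch propagates point-mass initial distributions (it suffices to maximize over these, as the distance is convex in the initial distribution) and returns the first time at which the worst-case distance drops below ϵ. The two-configuration matrix and the function names are hypothetical.

```python
def step(dist, P):
    """One time step: new_dist[i] = sum_j dist[j] * P[j][i]."""
    n = len(dist)
    return [sum(dist[j] * P[j][i] for j in range(n)) for i in range(n)]


def tv_distance(mu, nu):
    """Total variation distance as half the L1 distance."""
    return 0.5 * sum(abs(a - b) for a, b in zip(mu, nu))


def mixing_time(P, pi, eps=0.25, t_max=10_000):
    """Smallest t at which the worst point-mass start is within eps of pi (None if not reached)."""
    n = len(pi)
    dists = [[1.0 if i == k else 0.0 for i in range(n)] for k in range(n)]
    for t in range(1, t_max + 1):
        dists = [step(d, P) for d in dists]
        if max(tv_distance(d, pi) for d in dists) <= eps:
            return t
    return None


P = [[0.9, 0.1], [0.1, 0.9]]       # hypothetical slow two-configuration chain
pi = [0.5, 0.5]
t_mix = mixing_time(P, pi)
```

For this chain, the distance from a point mass decays as 0.5 × 0.8^t, so that the threshold 1/4 is first crossed at t = 4.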

2.1.3. Diameter Bounds, Bottleneck, Conductance

An elementary lower bound for the mixing time on a graph $G = (\Omega, E)$ (with the elements of Ω as vertices, and the non-zero elements of the transition matrix as edges) is given by the graph diameter $L_G$, that is, the minimal number of time steps it takes to connect any two vertices $i, j \in \Omega$. The mixing time, for any ϵ < 1/2, trivially satisfies

$$t_{\mathrm{mix}} \ge \frac{L_G}{2}. \tag{9}$$

For the Metropolis or heatbath single-spin flip dynamics in the Ising model with $N$ spins (in any dimension $D$), mixing times throughout the high-temperature phase are logarithmically close to the diameter bound with the graph diameter $L_G = N$, the maximum number of spins that differ between $i$ and $j$ (see [17]).

Mixing and correlation times of an MCMC algorithm can become very large if there is a bottleneck in the sample space Ω. Two remarkable insights are that in MCMC there is but a single such bottleneck (rather than a sequence of them), and that the mixing time is bracketed (rather than merely bounded from below) by functions of the conductance [18]. Also called “bottleneck ratio” [9] or “Cheeger constant” [19], the conductance is defined as the flow across the bottleneck:

$$\Phi \equiv \min_{S \subset \Omega,\ \pi_S \le 1/2} \frac{F_{S \to \bar{S}}}{\pi_S} = \min_{S \subset \Omega,\ \pi_S \le 1/2} \frac{\sum_{i \in S,\, j \in \bar{S}} \pi_i P_{ij}}{\pi_S}, \tag{10}$$

where $\bar{S} = \Omega \setminus S$ and $\pi_S = \sum_{i \in S} \pi_i$. Although it can usually not be computed in real-world applications, the conductance is of importance because the liftings which are at the heart of ECMC leave it unchanged (see subsection 2.3).
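On a very small sample space, the conductance can be obtained by enumerating all subsets S with stationary weight of at most 1/2. The sketch below does this for a hypothetical four-site path graph with uniform stationary distribution and the Metropolis algorithm. The exhaustive enumeration is exponential in the size of Ω and serves only to illustrate the definition.

```python
from itertools import combinations


def conductance(pi, P, tol=1e-12):
    """Minimum over subsets S with pi(S) <= 1/2 of (flow from S to its complement) / pi(S)."""
    n = len(pi)
    best = float("inf")
    for size in range(1, n):
        for S in combinations(range(n), size):
            pi_S = sum(pi[i] for i in S)
            if pi_S > 0.5 + tol:
                continue
            inside = set(S)
            flow_out = sum(pi[i] * P[i][j] for i in S for j in range(n) if j not in inside)
            best = min(best, flow_out / pi_S)
    return best


def path_metropolis(n):
    """Metropolis algorithm on an n-site path with uniform weights: hop left or right with probability 1/2."""
    P = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in (i - 1, i + 1):
            if 0 <= j < n:
                P[i][j] = 0.5
        P[i][i] = 1.0 - sum(P[i])       # rejected hops off the ends of the path
    return P


pi = [0.25] * 4
phi = conductance(pi, path_metropolis(4))
```

The minimum is reached for the cut in the middle of the path, with a flow of 1/8 out of a set of weight 1/2.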

For reversible Markov chains, the correlation time is bounded by the conductance as [18]:

$$\frac{1}{\Phi} \le t_{\mathrm{corr}} \le \frac{8}{\Phi^2}. \tag{11}$$

The lower bound follows from the fact that to cross from $S$ into $\bar{S}$, the Markov chain must pass through the boundary of $S$, but this cannot happen faster than through direct sampling within $S$, i.e., with probability $\pi_i / \pi_S$ for $i$ on the boundary of $S$. The upper bound was proven in [20, Lemma 3.3]. For arbitrary MCMC, one has the relation

$$\frac{1}{4 \Phi} \le t_{\mathrm{set}} \le \frac{20}{\Phi^2}, \tag{12}$$

where $t_{\mathrm{set}}$ is the “set time,” the maximum over all sets $S$ of the expected time to hit a set $S$ from a configuration sampled from π, multiplied with the stationary distribution $\pi(S)$. In addition, the mixing time, defined with the help of a stopping rule [18], satisfies:

$$\frac{\mathrm{const}}{\Phi} < t_{\mathrm{mix}} < \frac{\mathrm{const}'}{\Phi^2} \log \frac{1}{\pi_{\min}}, \tag{13}$$

where the constants are different for reversible and for non-reversible Markov chains, and $\pi_{\min}$ is the smallest weight of all configurations.

The above inequalities strictly apply only to finite Markov chains, where the smallest weight πmin of all configurations is well-defined. A continuous system may have to be discretized in order to allow a discussion in its terms. Also, in the application to ECMC, which is event-driven, mixing and correlation times may not reflect computational effort, which roughly corresponds to the number of events, rather than of time steps. Nevertheless, the conductance yields the considerable diffusive-to-ballistic speedup that may be reached by a non-reversible lifting (see [18]) if the collapsed Markov chain is itself close to the ~ 1/Φ2 upper bound of Equations (11) and (13).

2.2. Factorization

In generic MCMC, each proposed move is accepted or rejected based on the change in total potential that it entails. For hard spheres, a move in the Metropolis algorithm [3, Chapter 2] can also be pictured as being accepted “by consensus” over all pairs of spheres (none of which may present an overlap) or else rejected “by veto” of at least one pair of spheres (the pair which presents an overlap). The “potential landscape” and the “consensus–veto” interpretations are here equivalent as the pair potential of two overlapping hard spheres is infinite, and therefore also the total potential as soon as there is one such pair.

The factorized Metropolis filter [8, 21] generalizes the “consensus–veto” picture to an arbitrary system whose stationary distribution breaks up into a set $\mathcal{M}$ of factors $M = (I_M, T_M)$. Here, $I_M$ is an index set and $T_M$ a type (or label), such as “Coulomb,” “Lennard-Jones,” “Harmonic” (see [21, section 2A]). The total potential $U$ of a configuration $c$ is then written as the sum over factor potentials $U_M$ that only depend on the factor configurations $c_M$:

$$U(c) = \sum_{M \in \mathcal{M}} U_M(c_M), \tag{14}$$

and the stationary distribution appears as a product over exponentials of factor potentials:

$$\pi(c) = \exp[-\beta U(c)] = \prod_{M \in \mathcal{M}} \pi_M(c_M) = \prod_{M \in \mathcal{M}} \exp[-\beta U_M(c_M)], \tag{15}$$

where β is the inverse temperature. Energy-based MCMC considers the left-hand side of Equation (14) and molecular dynamics its derivatives (the forces on particles). All factorizations are then equivalent. In contrast, ECMC concentrates on the right-hand side of Equation (14), and different factorizations now produce distinct algorithms.

2.2.1. Factorized Metropolis Algorithm, Continuous-Time Limit

The Metropolis filter [1] is a standard approach for accepting a proposed move from configuration $c$ to configuration $c'$:

$$\mathcal{P}^{\mathrm{Met}}(c \to c') = \min[1, \exp(-\beta \Delta U)] = \min\Big[1, \prod_{M \in \mathcal{M}} \exp(-\beta \Delta U_M)\Big], \tag{16}$$

where $\Delta U_M = U_M(c'_M) - U_M(c_M)$ and $\Delta U = \sum_{M} \Delta U_M$. The factorized Metropolis filter [8] plays a crucial role in ECMC. It inverts the order of product and minimization, and it factorizes as its name indicates:

$$\mathcal{P}^{\mathrm{Fact}}(c \to c') = \prod_{M \in \mathcal{M}} \min[1, \exp(-\beta \Delta U_M)]. \tag{17}$$

The factorized Metropolis filter satisfies detailed balance for any symmetric a priori probability of proposed moves. This is because, for a single factor, $\mathcal{P}^{\mathrm{Fact}}$ reduces to $\mathcal{P}^{\mathrm{Met}}$ (which obeys detailed balance for a symmetric a priori probability [3]) and because the Boltzmann weight of Equation (15) and the factorized filter of Equation (17) factorize along the same lines. The detailed-balance property is required for proving the correctness of ECMC (see [21, Equation 27]), although ECMC is never actually run in reversible mode.
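The two filters can be compared on a hypothetical move that changes three factor potentials. The sketch below evaluates both acceptance probabilities and checks the detailed-balance ratio of the factorized filter, which must equal the ratio of Boltzmann weights exp(−βΔU). The numerical values and function names are illustrative assumptions.

```python
from math import exp


def metropolis_filter(delta_us, beta):
    """Energy-based filter: depends only on the total change of the potential."""
    return min(1.0, exp(-beta * sum(delta_us)))


def factorized_filter(delta_us, beta):
    """Factorized filter: product of single-factor Metropolis filters."""
    p = 1.0
    for du in delta_us:
        p *= min(1.0, exp(-beta * du))
    return p


beta = 1.0
delta_us = [-0.7, 0.5, 0.4]                      # hypothetical factor-potential changes for c -> c'
reverse_delta_us = [-du for du in delta_us]      # the same factors for the return move c' -> c

p_met = metropolis_filter(delta_us, beta)
p_fact = factorized_filter(delta_us, beta)
ratio = p_fact / factorized_filter(reverse_delta_us, beta)
boltzmann_ratio = exp(-beta * sum(delta_us))
```

The factorized filter never accepts with a larger probability than the Metropolis filter, because a factor whose potential decreases cannot compensate for the increase of another one.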

The Metropolis filter of Equation (16) is implemented by sampling a Boolean random variable:

$$X^{\mathrm{Met}}(c \to c') = \big(\mathrm{ran}(0, 1) < \min[1, \exp(-\beta \Delta U)]\big), \tag{18}$$

where “True” means that the move $c \to c'$ is accepted. In contrast, the factorized Metropolis filter appears as a conjunction of Boolean random variables:

$$X^{\mathrm{Fact}}(c \to c') = \bigwedge_{M \in \mathcal{M}} X_M(c_M \to c'_M). \tag{19}$$

The left-hand side of Equation (19) is “True” if and only if all the independently sampled Booleans $X_M$ on the right-hand side are “True,” each with probability $\min[1, \exp(-\beta \Delta U_M)]$. The above conjunction thus generalizes the consensus–veto picture from hard spheres to general interactions, where it is inequivalent to the energy-based filters.
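A sampling-based illustration of the conjunction, under the assumption of three hypothetical factor-potential changes: each factor draws its own uniform random number, and the move is accepted only by consensus. The observed acceptance frequency can then be compared with the product of the single-factor probabilities.

```python
import random
from math import exp


def sample_factorized_filter(delta_us, beta, rng):
    """Accept only if every factor independently accepts (consensus); any failure is a veto."""
    return all(rng.random() < min(1.0, exp(-beta * du)) for du in delta_us)


rng = random.Random(1)                 # fixed seed so that the illustration is reproducible
beta = 1.0
delta_us = [-0.7, 0.5, 0.4]            # hypothetical factor-potential changes
n_samples = 200_000

accepted = sum(sample_factorized_filter(delta_us, beta, rng) for _ in range(n_samples))
acceptance_rate = accepted / n_samples
expected = exp(-beta * 0.5) * exp(-beta * 0.4)   # the factor with a decreasing potential always accepts
```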

In the continuous-time limit, for continuously varying potentials, the acceptance probability of a factor $M$ becomes:

$$\min[1, \exp(-\beta \Delta U_M)] \to \exp\left(-\beta [dU_M]^+\right) \to 1 - \beta [dU_M]^+, \tag{20}$$

where

$$x^+ = \max(0, x) \tag{21}$$

is the unit ramp function. The acceptance probability of the factorized Metropolis filter then becomes

$$\mathcal{P}^{\mathrm{Fact}}(c \to c') = 1 - \beta \sum_{M \in \mathcal{M}} [dU_M(c_M \to c'_M)]^+, \tag{22}$$

and the total rejection probability for the move turns into a sum over factors:

$$1 - \mathcal{P}^{\mathrm{Fact}}(c \to c') = \beta \sum_{M \in \mathcal{M}} [dU_M(c_M \to c'_M)]^+. \tag{23}$$

In ECMC, the infinitesimal limit is used to break possible ties, so that a veto can always be attributed to a unique factor $M$ and then transformed into a lifting move. The MCMC trajectory in between vetoes appears deterministic, and the entire trajectory as piecewise deterministic [22, 23] (see also [24]). In the event-driven formulation of ECMC, rather than checking consensus for each move $c \to c'$, factors sample candidate events (candidate vetoes), looking ahead on the deterministic trajectory. The earliest candidate event is then realized as an event [25].
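The event-driven formulation can be sketched for a one-dimensional toy case, assuming harmonic factor potentials U(x) = k x²/2 in which x is the coordinate of the active particle relative to the minimum of the factor, and assuming motion in the +x direction. Each factor samples an admissible cumulative increase of its potential, −log(ran)/β, and converts it into a candidate event distance. The earliest candidate is realized. The setting and all names are assumptions made for this illustration and are not taken from a specific ECMC implementation.

```python
import random
from math import log, sqrt


def candidate_event_distance(x0, k, beta, rng):
    """Distance traveled in +x before the harmonic factor U = k x^2 / 2 vetoes the motion."""
    delta_e = -log(1.0 - rng.random()) / beta      # admissible cumulative increase of U
    x_start = max(x0, 0.0)                         # U only increases for x > 0; downhill motion is free
    x_event = sqrt(x_start ** 2 + 2.0 * delta_e / k)
    return x_event - x0


def next_event(x0s, ks, beta, rng):
    """Each factor samples a candidate event; the earliest one is realized."""
    candidates = [candidate_event_distance(x0, k, beta, rng) for x0, k in zip(x0s, ks)]
    distance = min(candidates)
    return distance, candidates.index(distance)


rng = random.Random(2)
distance, vetoing_factor = next_event([-0.3, 0.1], [1.0, 4.0], beta=1.0, rng=rng)
```

The returned index identifies the unique factor that triggers the veto, which is the information needed for the subsequent lifting move.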

2.2.2. Stochastic Potential Switching

Whenever the factorized Metropolis filter signals a consensus, all interactions appear as effectively turned off, while a veto makes interactions appear hard-sphere like. These impressions are confirmed by a mapping of the factorized Metropolis filter onto a hamiltonian that stochastically switches factor potentials, in our case between zero (no interaction) and infinity (hard-sphere interactions).

Stochastic potential switching [26] replaces a Markov chain for a given potential $U$ (or, equivalently, a stationary distribution) with a chain with the same stationary distribution for another potential $\tilde{U}$, sometimes compensated by a pseudo-potential $\bar{U}$. The possible application to factor potentials was pointed out in the original paper. The factor potential $U_M$ (see [26, section V]) for a move $c \to c'$ is then switched to $\tilde{U}_M$ with probability

$$S_M(c^\dagger) = \exp\left\{\beta \left[U_M(c^\dagger) - \tilde{U}_M(c^\dagger) - \Delta U_M^*\right]\right\} \tag{24}$$

with $c^\dagger = c$. The switching affects both configurations, but is done with probability $S_M(c)$ (see [26]). The constant $\Delta U_M^*$ is chosen so that $S_M < 1$ for both $c$ and $c'$, for example as $\Delta U_M^* = \max\{[U_M(c) - \tilde{U}_M(c)], [U_M(c') - \tilde{U}_M(c')]\} + \epsilon$ with ϵ ≳ 0. If the potential is not switched, the move $c \to c'$ is subject to a pseudo-potential $\bar{U}_M(c^\dagger) = U_M(c^\dagger) - \beta^{-1} \log[1 - S_M(c^\dagger)]$ for both configurations $c^\dagger \in \{c, c'\}$. ECMC considers “zero-switching” toward $\tilde{U}(c) = \tilde{U}(c') = 0$. If $U_M(c') < U_M(c)$, the zero-switching probability is ~ 1 − βϵ → 1 for ϵ → 0.

If $U_M(c') > U_M(c)$, the zero-switching probability is smaller than 1. If the potential is not switched so that the pseudo-potential $\bar{U}_M$ is used at $c$ and $c'$, then $\bar{U}_M(c)$ remains finite while $\bar{U}_M(c') \to \infty$ for ϵ → 0. In that limit, the pseudo-potential barrier $\bar{U}_M(c') - \bar{U}_M(c)$ diverges toward the hard-sphere limit. For $U_M(c') = U_M(c) + dU_M$ with $dU_M > 0$, the zero-switching probability approaches ~ 1 − β$dU_M$ and, together with the case $U_M(c') < U_M(c)$, this yields $S_M(c) \to 1 - \beta [dU_M]^+$ with the unit ramp function of Equation (21). In the infinitesimal limit $dU_M \to 0 \; \forall M$, at most one factor fails to zero-switch. The factorized Metropolis filter of Equation (22) follows naturally.

2.2.3. Potential Invariants, Factor Fields

Invariants in physical systems originate in conservation laws and topological or geometrical characteristics, among others. Potentials $V$ that are invariant under ECMC time evolution play a special role if they can be expressed as a sum over factor potentials $V_M$. The total system potential is then written as $\tilde{U} = U + V$ with constant $V = \sum_{M} V_M$, which results in

$$\tilde{U} = \sum_{M \in \mathcal{M}} (U_M + V_M) = \sum_{M \in \mathcal{M}} \tilde{U}_M.$$

Although $V$ is constant, the factor terms $V_M$ can vary between configurations. In energy-based MCMC and in molecular dynamics, such constant terms play no role. Subsection 4.3.3 reviews linear factor invariants that drastically reduce mixing times and dynamical critical exponents in one-dimensional particle systems.

2.3. Lifting

Generic MCMC proposes moves that at each time are randomly sampled from a time-independent set. Under certain conditions, the moves from this set can be proposed in a less-random order without changing the stationary distribution. This is, roughly, what is meant by “lifting.” Lifting formulates the resulting algorithm as a random process without memory, i.e., a Markov chain. Sections 3 and 4 will later review examples of MCMC, including ECMC, where lifting reduces the scaling of mixing and autocorrelation times with system size.

Mathematically, a lifting $\hat{\Pi}$ of a Markov chain Π (with its opposite being a “collapsing”) consists of a mapping $f$ from a “lifted” sample space $\hat{\Omega}$ to the “collapsed” one, Ω, such that each collapsed configuration $v \in \Omega$ splits into lifted copies $i \in f^{-1}(v)$. There are two requirements [18, section 3]. First, the total stationary probability of all lifted copies must equal the stationary probability of the collapsed configuration:

$$\pi_v = \hat{\pi}\left[f^{-1}(v)\right] = \sum_{i \in f^{-1}(v)} \hat{\pi}_i. \tag{28}$$

Second, in any lifted transition matrix $\hat{P}$ on $\hat{\Omega}$, the sum of the flows between the lifted copies of two collapsed configurations $u, v \in \Omega$ must equal the flow between the collapsed configurations:

$$\pi_v P_{vu} = \sum_{i \in f^{-1}(v),\ j \in f^{-1}(u)} \hat{\pi}_i \hat{P}_{ij}. \tag{29}$$

There are usually many inequivalent choices for $\hat{P}$ for a given lifted sample space $\hat{\Omega}$. A move between two lifted copies of the same collapsed configuration is called a lifting move.
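The two requirements can be verified explicitly on a small example. The sketch below assumes a uniform stationary distribution on a path of n sites, the Metropolis algorithm with forward and backward hops as the collapsed chain, and a lifted chain on configurations (i, s) that keeps its direction s = ±1 until it reaches an end of the path, where a lifting move reverses s. This particular lifting (without resampling) and all function names are assumptions chosen for illustration.

```python
def index(i, s):
    """Position of the lifted configuration (i, s), s in {+1, -1}, in the lifted matrix."""
    return 2 * i + (0 if s == +1 else 1)


def lifted_path_matrix(n):
    """Persistent motion in direction s; the direction is reversed at the ends of the path."""
    P_hat = [[0.0] * (2 * n) for _ in range(2 * n)]
    for i in range(n):
        for s in (+1, -1):
            j = i + s
            if 0 <= j < n:
                P_hat[index(i, s)][index(j, s)] = 1.0     # move, keeping the direction
            else:
                P_hat[index(i, s)][index(i, -s)] = 1.0    # lifting move at the boundary
    return P_hat


def collapsed_path_matrix(n):
    """Metropolis algorithm for uniform weights: hop left or right with probability 1/2."""
    P = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in (i - 1, i + 1):
            if 0 <= j < n:
                P[i][j] = 0.5
        P[i][i] = 1.0 - sum(P[i])
    return P


def is_lifting(n, tol=1e-12):
    """Check the weight condition and the flow condition for all pairs of collapsed configurations."""
    P, P_hat = collapsed_path_matrix(n), lifted_path_matrix(n)
    pi, pi_hat = [1.0 / n] * n, [1.0 / (2 * n)] * (2 * n)
    copies = lambda v: [index(v, +1), index(v, -1)]
    weights_ok = all(abs(sum(pi_hat[i] for i in copies(v)) - pi[v]) < tol for v in range(n))
    flows_ok = all(
        abs(sum(pi_hat[i] * P_hat[i][j] for i in copies(v) for j in copies(u)) - pi[v] * P[v][u]) < tol
        for v in range(n) for u in range(n))
    return weights_ok and flows_ok


lifting_ok = is_lifting(5)
```

In this example, the rejected moves of the collapsed chain at the two ends of the path reappear as lifting moves, so that the lifted chain is rejection-free.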

Event-chain Monte Carlo considers a more restricted class of lifted sample spaces that can be written as $\hat{\Omega} = \Omega \times \mathcal{L}$, with $\mathcal{L}$ a set of lifting variables. The lifted copies $i$ of a collapsed configuration $v$ are then written as $i = (v, \sigma)$ with $\sigma \in \mathcal{L}$, and each collapsed configuration has the same number of lifted copies. Moves that only modify σ are called lifting moves, and lifting moves that are not required by the global-balance condition are called resamplings. The lifting variables usually have no bearing on the stationary distribution:

$$\frac{\hat{\pi}(u, \sigma)}{\hat{\pi}(v, \sigma)} = \frac{\pi(u)}{\pi(v)} \quad \forall u, v \in \Omega,\ \forall \sigma \in \mathcal{L}. \tag{30}$$

A lifted Markov chain cannot have a larger conductance than its collapsed counterpart because the weights and the flows in Equations (28) and (29) remain unchanged, and therefore also the bottlenecks in Equation (10). Also, from Equation (29), the reversibility of the lifting $\hat{\Pi}$ implies reversibility of the collapsed chain Π. In this case, lifting can only lead to marginal speedups [18]. Conversely, a non-reversible collapsed Markov chain necessarily goes with a non-reversible lifting. Within the bounds of Equation (12) and the corresponding expression for mixing times, a lifting $\hat{\Pi}$ may be closer to the ~ 1/Φ lower “ballistic” mixing-time limit, if its collapsed Markov chain Π is near the ~ 1/Φ² upper “diffusive” limit.

2.3.1. Particle Lifting

The earliest example of non-reversible MCMC for N-particle systems involves performing single-particle moves as sweeps through indices rather than in random order. This was proposed in 1953, in the founding publication of MCMC, which states “…we move each of the particles in succession …” [1, p.22]. Particle liftings all have a sample space $\hat{\Omega} = \Omega \times \mathcal{N}$, where $\Omega = \{x = (x_1, …, x_N)\}$ is the space of the N-particle positions and $\mathcal{N} = \{1, …, N\}$ denotes the particle indices. The specific particle-sweep transition matrix $\hat{P}$, for lifted configurations $(x, i)$ with the active particle $i \in \mathcal{N}$ and with stationary distribution $\hat{\pi}(x, i) = \pi(x)/N$, satisfies

$$\hat{P}_{(x,k),(x',l)} = N P_{x,x'}\, \delta(x_1, x'_1) \cdots \delta(x_{k-1}, x'_{k-1})\, \delta(x_{k+1}, x'_{k+1}) \cdots \delta(x_N, x'_N)\, \delta_{l,k+1}, \tag{31}$$

where periodicity in the indices is understood. In this expression, the final δ-function implements the sweep (particle $l = k + 1$ moves after particle $k$), while the other δ-functions isolate a move of $k$ in the collapsed transition matrix $P$. The multiplicative factor $N$ on the right-hand side of Equation (31) compensates for the a priori probability of choosing the active particle $k$, which is $1/N$ in the collapsed Markov chain and 1 in its lifting. A particle-sweep lifting satisfies the global-balance condition if the collapsed Markov chain Π is reversible, a conclusion that can be generalized considerably. However, if Π is non-reversible, the corresponding particle-sweep can be incorrect (see subsection 4.2.2 for an example).

In particle systems with central potentials, particle-sweep liftings lead to slight speedups [27–29]. Likewise, in the Ising model and related systems, the analogous spin sweeps (updating spin i + 1 after spin i, etc.) again appear marginally faster than the random sampling of spin indices [30]. Particle sweeps appear as a minimal variant of reversible Markov chains, yet their deliberative choice of the active particle, common to all particle liftings, is a harbinger of ECMC.

2.3.2. Displacement Lifting

In a local reversible Markov chain Π, say, for an N-particle system, the displacements are randomly sampled from a set $\mathcal{D}$ (and usually applied to a particle that is itself chosen randomly). Displacement liftings of a Markov chain Π with sample space Ω all live in a lifted sample space $\hat{\Omega} = \Omega \times \hat{\mathcal{D}}$, where $\hat{\mathcal{D}}$ is either the displacement set $\mathcal{D}$ itself (for discrete systems) or some quotient set of $\mathcal{D}$, describing, for example, the directions of displacements. Again, there can be different lifted sample spaces $\hat{\Omega}$ for any collapsed Markov chain Π and many inequivalent displacement liftings for a given $\hat{\Omega}$ and collapsed transition matrix.

Displacement liftings of the Metropolis algorithm on a path graph $P_n$ with $\mathcal{D} = \{-1, +1\}$ (corresponding to forward or backward hops) are reviewed in subsection 3.1.1 (see [31]). Among the many lifted transition matrices $\hat{P}$, those that preserve the once chosen element of $\hat{\mathcal{D}}$ for $\mathcal{O}(n)$ time steps are the most efficient. Displacement lifting applies in more than one dimension, for example, for systems of dipole particles [32], whose dynamics is profoundly modified with respect to that of its collapsed counterpart, and also in ECMC, where the same infinitesimal displacement is repeated many times for the same particle.

2.3.3. Event-Chain Monte Carlo

Event-chain Monte Carlo [7, 8] combines particle and displacement liftings. For a given sample space Ω (describing, for example, all N-particle coordinates), the lifted sample space is $\hat{\Omega} = \Omega \times \mathcal{N} \times \hat{\mathcal{D}}$. The lifted “transport” transition matrices correspond to the same particle (or spin) using the same infinitesimal element of $\mathcal{D}$ an infinite number of times. The persistent ECMC trajectories are piecewise deterministic. In between lifting moves, they appear non-interacting (compare with subsection 2.2.1). Resampling, implemented in a separate transition matrix, ensures irreducibility and aperiodicity of the lifted Markov chain and speeds up mixing.

In ECMC, factorization and the consensus principle of the factorized Metropolis algorithm ensure that a single factor triggers the veto that terminates the effectively non-interacting trajectory (as discussed in subsection 2.2.2). This factor is solely responsible for the ensuing lifting move $c \to c'$. The triggering factor has at least one active particle in its in-state $c$. The out-state $c'$ can be chosen to have exactly the same number of active elements. In homogeneous particle systems (and in similarly invariant spin systems), the lifting move does not require a change of displacement vector and concerns only the particle sector of the lifting set $\mathcal{L}$. For a two-particle factor, the lifting-move scheme is deterministic, and the active and the passive particle interchange their roles. For a three-particle factor, the active particle may have to be sampled from a unique probability distribution, so that the lifting-move scheme is stochastic but uniquely determined [33]. For larger factors, the next active particle may be sampled following many different stochastic lifting-move schemes that can have inequivalent dynamics [33] (see also [21, section II.B]).

2.4. Thinning

Thinning [34, 35] denotes a strategy where an event in a complicated time-dependent random process (here, the decision to veto a move) is first provisionally established on the basis of an approximate “fatter” but simpler process, before being thinned (confirmed or invalidated) in a second step. Closely related to the rejection method in sampling [3], thinning doubly enables all but the simplest ECMC applications. First, it is used when the factor event rates of Equation (23) cannot be integrated easily along active-particle trajectories, while appropriate bounding potentials allow integration. Second, thinning underlies the cell-veto algorithm [36], which ascertains consensus and identifies vetoes among ~ N factors (the number of particles) with an $\mathcal{O}(1)$ effort. The random process based on the unthinned bounding potential is time-independent and pre-computed, and it can be sampled very efficiently.

Thinning thus uses approximate bounding potentials for the bulk of the decision-making in ECMC while, in the end, allowing the sampling to remain approximation-free. This contrasts with molecular dynamics, whose numerical imperfections—possible violations of energy, momentum, and phase-space conservation under time-discretization—cannot be fully repaired (see [37]). ECMC also eliminates the rounding errors for the total potential that cannot be avoided in molecular dynamics for long-range Coulomb interactions.

2.4.1. Thinning and Bounding Potentials

Thinning is unnecessary for hard spheres and for potentials with simple analytic expressions that can be integrated analytically. In other cases, it may be useful to compare a factor event rate to a (piecewise) upper bound, as $\beta [\partial U_M / \partial x_k]^+ \le q_M^{\max}$. In the case where, for simplicity, the active particle $k$ moves along the $x$ direction, the bounding factor event rate $q_M^{\max}$ allows one to write

$$\beta \left[\frac{\partial U_M}{\partial x_k}\right]^+ dx_k = \underbrace{q_M^{\max}\, dx_k}_{\text{constant-rate}} \; \underbrace{\frac{\beta \left[\partial U_M / \partial x_k\right]^+}{q_M^{\max}}}_{\text{simple rejection}}.$$

The factor $M$ triggers the veto with the factor event rate on the left-hand side of this equation, which varies with the configuration. On the right-hand side, this is expressed as a product of independent probabilities corresponding to a conjunction of random variables, the first of which corresponds to a constant-rate Poisson process and the second to a simple rejection rate. The second factor must be checked only when the first one is “True.” Thinning thus greatly simplifies ECMC when the mere evaluations of $U_M$ and of its derivatives are time-consuming. The JeLLyFysh application of subsection 6.2 implements thinning for potentials in a variety of settings (see [38]).
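A minimal sketch of thinning for a generic time-dependent event rate may help to fix ideas. Candidate events are drawn from a constant-rate Poisson process at the bounding rate, and each candidate is confirmed with probability rate/bound. The rate function `ramp_rate`, which mimics the unit ramp of an oscillating derivative, and the function names are hypothetical, and the sketch is unrelated to the JeLLyFysh implementation.

```python
import random
from math import log, sin


def first_event_by_thinning(rate, rate_bound, rng):
    """First event of a Poisson process with rate(t) <= rate_bound, sampled by thinning."""
    t = 0.0
    while True:
        t += -log(1.0 - rng.random()) / rate_bound     # candidate from the constant-rate process
        if rng.random() < rate(t) / rate_bound:        # confirmation step (simple rejection)
            return t


def ramp_rate(t):
    """Hypothetical event rate: unit ramp of an oscillating derivative, bounded by 1."""
    return max(0.0, sin(t))


rng = random.Random(4)
event_time = first_event_by_thinning(ramp_rate, 1.0, rng)
```

The true rate is evaluated only at the candidate times, which is the source of the savings when its evaluation is expensive. The sampling itself remains exact as long as the bound holds everywhere along the trajectory.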

2.4.2. Thinning and the Cell-Veto Algorithm, Walker's Algorithm

The cell-veto algorithm [36] (see also [39]) permanently tiles two- or three-dimensional (3D) sample space (with periodic boundary conditions) into cells with pre-computed cell bounds for the factor event rates of any long-ranged factor that touches a pair of these cells. In this way, for example, a long-range Coulomb event rate for a pair of distant atoms (or molecules) can be bounded by the cell event rate of the pair of cells containing these atoms. For an active atom in a given cell, the total event rate due to other far-away atoms can also be bounded by the sum over all the cell-event rates. Identifying the vetoing atom (factor) then requires provisionally sampling a cell among all those contributing to the total cell-event rate. This problem can be solved in $\mathcal{O}(1)$ using Walker's algorithm [35, 40]. After identification of the cell, the confirmation step involves at most a single factor. The cell-veto algorithm is heavily used in the JeLLyFysh application (see subsection 6.2). In essence, it thus establishes consensus and identifies a veto among ~ N factors in $\mathcal{O}(1)$ in a way that installs neither cutoffs nor discretizations.
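The constant-time sampling step can be sketched with a generic alias table (Walker's method in the construction usually attributed to Vose). This is an illustration of the sampling primitive only, not of the cell-veto code itself; the weights stand in for hypothetical pre-computed cell-event rates, and all function names are assumptions.

```python
import random


def build_alias_table(weights):
    """Alias table for non-negative weights (O(n) setup, done once)."""
    n = len(weights)
    total = float(sum(weights))
    scaled = [w * n / total for w in weights]
    prob = [1.0] * n
    alias = list(range(n))
    small = [i for i, s in enumerate(scaled) if s < 1.0]
    large = [i for i, s in enumerate(scaled) if s >= 1.0]
    while small and large:
        s = small.pop()
        l = large.pop()
        prob[s] = scaled[s]          # column s keeps itself with this probability
        alias[s] = l                 # and otherwise hands over to column l
        scaled[l] -= 1.0 - scaled[s]
        (small if scaled[l] < 1.0 else large).append(l)
    return prob, alias


def sample_alias(prob, alias, rng):
    """Draw one index in O(1): pick a column, then keep it or take its alias."""
    i = rng.randrange(len(prob))
    return i if rng.random() < prob[i] else alias[i]


def implied_probabilities(prob, alias):
    """Reconstruct the distribution encoded by the table (for checking)."""
    n = len(prob)
    p = [0.0] * n
    for i in range(n):
        p[i] += prob[i] / n
        p[alias[i]] += (1.0 - prob[i]) / n
    return p
```

Because the cell bounds do not depend on the configuration, the table is built once and each provisional cell is then drawn with two random numbers, independently of the number of cells.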

3. Single Particles on a Path Graph

The present section reviews liftings of the Metropolis algorithm for a single particle on a path graph Pn = (Ωn, En), with a sample space Ωn = {1, …, n}, and a set of edges En = {(1, 2), …, (n − 1, n)} that indicate the non-zero elements of the transition matrix. The path graph Pn thus forms a one-dimensional n-site lattice without periodic boundary conditions. The stationary distribution is {π1, …, πn}. Two virtual vertices 0 and n + 1 and virtual edges (0, 1) and (n, n + 1), with π0 = πn+1 = 0, avoid the separate discussion of the boundaries. The Metropolis algorithm on Pn (with the virtual additions, but an unchanged sample space) proposes a move from vertex i ∈ Ωn to j = i ± 1 with probability 1/2 each. This move is accepted with the Metropolis filter min(1, πj/πi) so that the flow is $\mathcal{F}_{ij} = \frac{1}{2} \pi_i \min(1, \pi_j/\pi_i) = \frac{1}{2} \min(\pi_i, \pi_j)$. If rejected, the particle remains at i. The flows:

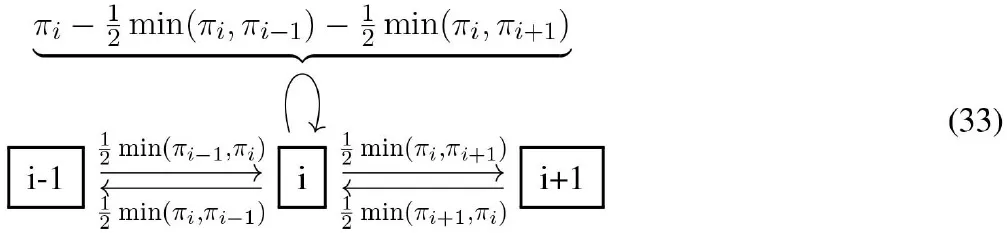

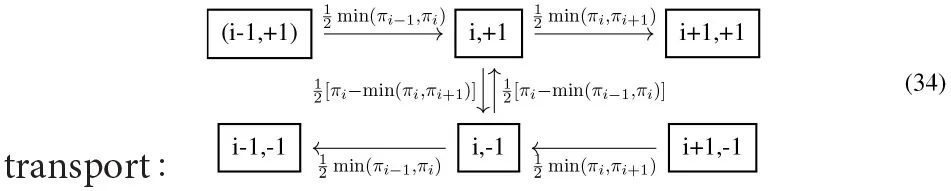

satisfy detailed balance, and the total flow into each configuration i (including from i itself) satisfies the global-balance condition of Equation (2), as already follows from detailed balance.
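The balance conditions for the Metropolis algorithm on the path graph can be checked exactly for any small example. The sketch below uses rational arithmetic and 0-based vertex indices; the function names are illustrative, and moves beyond the two ends are simply rejected, which plays the role of the virtual vertices of zero weight.

```python
from fractions import Fraction


def metropolis_matrix(pi):
    """Transition matrix P[i][j] of the Metropolis algorithm on the path graph.

    pi is a list of positive integer (or rational) weights, not necessarily
    normalized.
    """
    n = len(pi)
    P = [[Fraction(0)] * n for _ in range(n)]
    for i in range(n):
        for j in (i - 1, i + 1):
            if 0 <= j < n:
                ratio = Fraction(pi[j]) / Fraction(pi[i])
                P[i][j] = Fraction(1, 2) * min(Fraction(1), ratio)
        P[i][i] = 1 - sum(P[i])      # rejected proposals stay at i
    return P


def flow_matrix(pi, P):
    """Flows F[i][j] = pi_i * P[i][j]."""
    n = len(pi)
    return [[Fraction(pi[i]) * P[i][j] for j in range(n)] for i in range(n)]
```

For weights such as `[3, 1, 4, 1, 5]`, the flow matrix is symmetric (detailed balance) and each of its columns sums to the corresponding weight (global balance).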

The displacement-lifted Markov chains in this section, with the lifted sample space $\hat{\Omega}_n = \Omega_n \times \{-1, +1\}$, split the Metropolis moves i → i ± 1 into two copies (the virtual vertices and edges are also split). The lifted transition matrix $\hat{P} = \hat{P}^{\text{trans}} \hat{P}^{\text{res}}$ is a product of a transport transition matrix $\hat{P}^{\text{trans}}$ and a resampling transition matrix $\hat{P}^{\text{res}}$. $\hat{P}^{\text{trans}}$ describes moves from lifted configuration (i, σ) to (i + σ, σ) that are proposed with probability 1 and accepted with the Metropolis filter min(1, πi+σ/πi) given that $\hat{\pi}_{(i,\sigma)} = \frac{1}{2}\pi_i$. Crucially, in the transport transition matrix, if the transport move (i, σ) → (i + σ, σ) is rejected, the lifting move (i, σ) → (i, −σ) takes place instead. $\hat{P}^{\text{trans}}$ satisfies the global-balance condition for each lifted configuration, as can be checked by inspection:

[the flow into (i, σ) equals the lifted stationary weight $\hat{\pi}_{(i,\sigma)} = \frac{1}{2}\pi_i$].

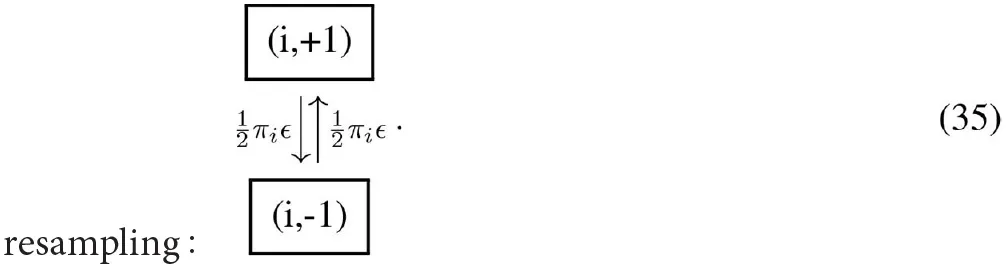

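In the resampling transition matrix $\hat{P}^{\text{res}}$, the lifting variable in the configuration (i, σ) is simply switched to (i, −σ) with a small probability ϵ. $\hat{P}^{\text{res}}$ satisfies detailed balance, and the resampling flows are:

$$\hat{\mathcal{F}}^{\text{res}}_{(i,\sigma),(i,-\sigma)} = \frac{\epsilon}{2} \pi_i, \qquad \hat{\mathcal{F}}^{\text{res}}_{(i,\sigma),(i,\sigma)} = \frac{1 - \epsilon}{2} \pi_i.$$

The lifted transition matrix $\hat{P} = \hat{P}^{\text{trans}} \hat{P}^{\text{res}}$ satisfies global balance. $\hat{P}^{\text{res}}$ guarantees aperiodicity of $\hat{P}$ for any choice of π.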
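Global balance of the displacement-lifted chain can be verified exactly on a small example. The sketch below assumes lifted weights equal to half the collapsed weights and stores each transition matrix as a dictionary of rows; vertex indices are 0-based, and the helper names are illustrative.

```python
from fractions import Fraction


def lifted_transport(pi):
    """Transport: (i, s) -> (i + s, s) if accepted, else lifting move to (i, -s)."""
    n = len(pi)
    T = {}
    for i in range(n):
        for s in (+1, -1):
            j = i + s
            if 0 <= j < n:
                acc = min(Fraction(1), Fraction(pi[j]) / Fraction(pi[i]))
            else:
                acc = Fraction(0)        # virtual vertex of zero weight
            row = {}
            if acc > 0:
                row[(j, s)] = acc
            if acc < 1:
                row[(i, -s)] = 1 - acc
            T[(i, s)] = row
    return T


def lifted_resampling(n, eps):
    """Resampling: flip the lifting variable with probability eps."""
    return {(i, s): {(i, s): 1 - eps, (i, -s): eps}
            for i in range(n) for s in (+1, -1)}


def compose(A, B):
    """Transition matrix of 'first A, then B'."""
    C = {}
    for a, row in A.items():
        out = {}
        for b, p in row.items():
            for c, q in B[b].items():
                out[c] = out.get(c, 0) + p * q
        C[a] = out
    return C


def inflow(weights, P):
    """Total flow into each lifted configuration."""
    flow = {state: Fraction(0) for state in P}
    for a, row in P.items():
        for b, p in row.items():
            flow[b] += weights[a] * p
    return flow
```

With `weights[(i, s)] = pi[i] / 2`, the inflow reproduces the weights for the transport matrix alone and for its product with the resampling matrix, although the transport matrix does not satisfy detailed balance.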

The speedups that can be achieved by lifting on the path graph Pn depend on the choice of π and on the resampling rate ϵ. Bounded stationary distributions are reviewed in subsection 3.1 and unbounded ones in subsection 3.2. All Markov chains on Pn naturally share a diameter bound n, which is independent of π and that (up to a constant) is the same for the lifted chains.

3.1. Bounded One-Dimensional Distributions

In real-world applications of MCMC, many aspects of the stationary distribution π remain unknown. Even the estimation of the maximum-weight (minimum-energy) configuration $\arg\max_{i \in \Omega} \pi_i$ is often a non-trivial computational problem that may itself be treated by simulated annealing, a variant of MCMC [3, 41]. Also, crucial characteristics such as the conductance are usually unknown, and the mixing time can at best be inferred from the running simulation. Simplified models, such as a particle on a path graph, offer more control. The present subsection reviews MCMC on a path graph for stationary distributions for which the ratio $\max_{i,j \in \Omega_n} (\pi_i/\pi_j)$ remains finite for n → ∞. The conductance bound then scales as $\mathcal{O}(n)$ as n → ∞, and reversible local MCMC mixes in $\mathcal{O}(n^2)$ time steps.

3.1.1. Constant Stationary Distribution, Transport-Limited MCMC

For the constant stationary distribution πi = 1/n on Pn (see [31]), the collapsed Markov chain Π moves with probability 1/2 to the left and to the right. Rejections take place only at the boundaries i = 1 and i = n and assure aperiodicity. Π performs a diffusive random walk. Its $\mathcal{O}(n^2)$ mixing time is close to the upper mixing-time bound of Equation (13). As tmix is on larger time scales than the lower conductance bound, Π is limited by transport.

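In the lifted sample space $\hat{\Omega}_n = \Omega_n \times \{-1, +1\}$, the transport transition matrix $\hat{P}^{\text{trans}}$ describes a deterministic rotation on the lifted graph, which has the topology of a ring with 2n vertices. $\hat{P}^{\text{trans}}$ is not aperiodic as its period is 2n (see subsection 2.1.1). Resampling with rate ϵ ~ 1/n with the resampling transition matrix $\hat{P}^{\text{res}}$ leads to a mixing time of the combined transition matrix $\hat{P}^{\text{trans}} \hat{P}^{\text{res}}$ as $\mathcal{O}(n)$ (see [31]).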
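The difference between diffusive and ballistic exploration can be made visible by evolving the probability distributions of both chains on the flat path graph. The following sketch is an illustration under simple assumptions (both chains start at vertex 1, the lifted chain starts with σ = +1 and uses the resampling rate ϵ = 1/n); the function names are not taken from the literature.

```python
def total_variation(p, q):
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def metropolis_step(p):
    """One step of the reversible random walk on the flat path graph."""
    n = len(p)
    new = [0.0] * n
    for i, w in enumerate(p):
        for j in (i - 1, i + 1):
            if 0 <= j < n:
                new[j] += 0.5 * w
            else:
                new[i] += 0.5 * w       # rejected move at a boundary
    return new


def lifted_step(plus, minus, eps):
    """Transport (move or reflect) followed by resampling with probability eps."""
    n = len(plus)
    t_plus, t_minus = [0.0] * n, [0.0] * n
    for i in range(n):
        if i + 1 < n:
            t_plus[i + 1] += plus[i]
        else:
            t_minus[i] += plus[i]       # lifting move (n, +) -> (n, -)
        if i >= 1:
            t_minus[i - 1] += minus[i]
        else:
            t_plus[i] += minus[i]       # lifting move (1, -) -> (1, +)
    new_plus = [(1 - eps) * a + eps * b for a, b in zip(t_plus, t_minus)]
    new_minus = [(1 - eps) * b + eps * a for a, b in zip(t_plus, t_minus)]
    return new_plus, new_minus


def compare_mixing(n, steps):
    """TVD from uniform after `steps` moves (reversible, collapsed lifted)."""
    uniform = [1.0 / n] * n
    p = [1.0] + [0.0] * (n - 1)
    plus, minus = list(p), [0.0] * n
    for _ in range(steps):
        p = metropolis_step(p)
        plus, minus = lifted_step(plus, minus, 1.0 / n)
    collapsed = [a + b for a, b in zip(plus, minus)]
    return total_variation(p, uniform), total_variation(collapsed, uniform)
```

Calling `compare_mixing(64, 256)` compares the two total variation distances after a number of steps proportional to n, a time scale on which the reversible walk has only explored a region of size proportional to the square root of n.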

The resampling, that is, the application of $\hat{P}^{\text{res}}$, need not take place after each transport step, with a small probability ϵ. One may also resample after a given number ℓ of transport moves, where ℓ may itself have a probability distribution. Such resamplings can also be formulated as Markov chains. In ECMC, resamplings are often required to ensure irreducibility whereas, on the path graph, they merely render the Markov chain aperiodic.

3.1.2. Square-Wave Stationary Distribution

On the path graph Pn with the square-wave stationary distribution, which alternates between two values on odd and even vertices (for even n), the conductance for n → ∞ is of the same order of magnitude as for the flat distribution. Its bottleneck (the vertex where the conductance is realized) is again at i = n/2. The Metropolis algorithm proposes moves from vertex i with probabilities 1/2 to i ± 1 but rejects them with a finite probability if i is odd (see Equation 16). Its mixing time is $\mathcal{O}(n^2)$, on the same order as in subsection 3.1.1. The displacement-lifted Metropolis algorithm generates lifting moves with finite probability on odd-numbered vertices, and the persistence of the lifting variable is lost on finite time scales. In consequence, the displacement lifting is inefficient for the square-wave stationary distribution, with the mixing time still as $\mathcal{O}(n^2)$. More elaborate liftings, using height-type lifting variables that decompose π into a constant “lane” and another one that only lives on even sites, recover $\mathcal{O}(n)$ mixing [42].

The success of displacement lifting on the path graph thus crucially depends on the details of the stationary distribution. ECMC in real-world applications is likewise simpler for homogeneous systems with a global translational invariance (or with a similar invariance for spin systems). Remarkably, however, ECMC can apply the same rules to any continuous interparticle potential in homogeneous spaces. It achieves considerable speedups similar to what the lifting of subsection 3.1.1 achieves for the flat stationary distribution on the path graph.

3.2. Unbounded One-Dimensional Stationary Distributions

For unbounded ratios of the stationary distribution ($\max_{i,j}(\pi_i/\pi_j) \to \infty$ for n → ∞), the conductance as well as the benefits that can be reaped through lifting vary strongly. Exact results for a V-shaped distribution and for the Curie–Weiss model are reviewed.

3.2.1. V-Shaped Stationary Distribution, Conductance-Limited MCMC

The V-shaped stationary distribution on the path graph Pn of even length n is given by $\pi_i = \text{const}\, |(n+1)/2 - i|$ for i ∈ {1, …, n}, where const = 4/n² (see [43]). The stationary distribution π thus decreases linearly from i = 1 to the bottleneck i = n/2, with $\pi_{n/2} = \pi_{n/2+1} = \text{const}/2$, and then increases linearly from i = n/2 + 1 to i = n. The Metropolis algorithm again proposes a move from vertex i to i ± 1 with probabilities 1/2 and then accepts them with the filter of Equation (16). The virtual end vertices ensure the correct treatment of boundary conditions. The conductance equals $\Phi = 2/n^2$, for the minimal subset S = {1, …, n/2} (see Equation 10). The Metropolis algorithm mixes in S on an $\mathcal{O}(n^2)$ diffusive timescale, but requires $\mathcal{O}(n^2 \log n)$ time steps to mix in Ωn (see [43]). However, even a direct sampling in S, that is, perfect equilibration, samples the boundary vertex n/2 between S and $\bar{S}$ only on an $\mathcal{O}(n^{-2})$ inverse time scale. For the V-shaped distribution, the benefit of lifting is thus at best marginal [from $\mathcal{O}(n^2 \log n)$ to $\mathcal{O}(n^2)$], as the collapsed Markov chain is already conductance-limited, up to a logarithmic factor.

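The optimal speedup for the V-shaped distribution is indeed realized with the lifted sample space $\hat{\Omega}_n = \Omega_n \times \{-1, +1\}$, and the transition matrices $\hat{P}^{\text{trans}}$ and $\hat{P}^{\text{res}}$. The lifted Markov chain reaches a total variation distance of 1/2 and mixes in S on an $\mathcal{O}(n)$ timescale. A total variation distance of ε < 1/2, and mixing in $\hat{\Omega}_n$, is reached in $\mathcal{O}(n^2)$ time steps only [43]. This illustrates that ε < 1/2 is required in the definition of Equation (7).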

3.2.2. Curie–Weiss Model, Mixing Times vs. Correlation Times

The Curie–Weiss model (or mean-field Ising model [44]) for N spins si = ±1 has the particularity that its total potential $U = -\frac{J}{2N} \sum_{i,j} s_i s_j = -\frac{J}{2N} M^2$, with J > 0, only depends on the magnetization $M = \sum_i s_i$. The mapping i = (M + N)/2 + 1, n = N + 1, places it on the path graph Pn. The heatbath algorithm (where a single spin is updated) can be interpreted as the updating of the global magnetization state. The model was studied in a number of papers [22, 45–47].
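The mapping of the Curie–Weiss model onto the path graph can be made concrete by computing the stationary weights of the magnetization states. The sketch below assumes the normalization U = −J M²/(2N), for which the critical point is at βJ = 1; each magnetization M carries the binomial number of spin configurations. The function name and the 0-based list index k = i − 1 (the number of up spins) are illustrative choices.

```python
import math


def curie_weiss_weights(N, beta_J):
    """Normalized weights of the N + 1 magnetization states M = 2k - N.

    Entry k of the returned list corresponds to vertex i = k + 1 of the
    path graph with n = N + 1 vertices.
    """
    log_w = []
    for k in range(N + 1):                       # k = number of up spins
        M = 2 * k - N
        log_binom = (math.lgamma(N + 1) - math.lgamma(k + 1)
                     - math.lgamma(N - k + 1))
        log_w.append(log_binom + beta_J * M * M / (2.0 * N))
    shift = max(log_w)                           # avoid overflow in exp
    w = [math.exp(x - shift) for x in log_w]
    total = sum(w)
    return [x / total for x in w]
```

For βJ < 1 the weights are peaked at zero magnetization, whereas for βJ > 1 two symmetric peaks appear and the zero-magnetization vertex becomes a bottleneck.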

The conductance bound of Equation (10) for the Curie–Weiss model derives from a bottleneck at i = n/2 where U = 0. In the paramagnetic phase, one has $\Phi^{-1} = \mathcal{O}(n^{1/2})$, and at the critical point βJ = 1, $\Phi^{-1} = \mathcal{O}(n^{3/4})$ (see [45]). The corresponding conductance bounds are smaller than n because the magnetization is strongly concentrated around M = 0 (i.e., i = n/2). In the ferromagnetic phase, βJ > 1, the magnetizations are concentrated around the positive and negative magnetizations (i ≳ 1 and i ≲ n) and in consequence Φ−1 grows exponentially with n, and so do all mixing times.

The reversible Metropolis algorithm mixes in $\mathcal{O}(n \log n)$ time steps for T > Tc (see [45]). This saturates the upper bound of Equation (13). At the critical point T = Tc, the mixing time is $\mathcal{O}(n^{3/2})$. As is evident from the small conductance bound, the Metropolis algorithm is again limited by a slow diffusive transport and not by a bottleneck in the potential landscape.

The displacement lifting of the Metropolis algorithm improves mixing times both for T > Tc and for T = Tc to the optimal $\mathcal{O}(n)$ scaling allowed by the diameter bound. The correlation time can be shorter than $\mathcal{O}(n)$ because of the strong concentration of the probability density on a narrow range of magnetizations [22, 46, 47], while the mixing time on the path graph is always subject to the ~ n diameter bound. By contrast, in the ferromagnetic phase βJ > 1, the conductance bound is exponential in n, and all mixing times are limited by the bottleneck at i = n/2, that is, the zero-magnetization state.

4. N Particles in One Dimension

The present section reviews MCMC for N hard-sphere particles on a one-dimensional graph with periodic boundary conditions [the path graph Pn with an added edge (n, 1)], and on continuous intervals of length L with and without periodic boundary conditions. In all cases, moves respect the fixed order of particles (x1 < x2 < … < xN−1 < xN, possibly with periodic boundary conditions in positions and particle indices). With periodic boundary conditions, uniform rotations of configurations are ignored, as they mix very slowly [48]. The hard-sphere MCMC dynamics is essentially independent of the density for the discrete cases (see [49, Equation 2.18]) as well as in the continuum (see [28] and [29, Figure 1]). Most of the hard-sphere results are numerically found to generalize to a class of continuous potentials (see [28, Supplementary Item 5]).

Subsection 4.1 reviews exact mixing and correlation-time results for reversible Markov chains and subsection 4.2 those for non-reversible ones, including the connection with the totally asymmetric simple exclusion process (TASEP). Subsection 4.3 discusses particle-lifted Markov chains, like the PL-TASEP, the lifted-forward Metropolis algorithm as well as ECMC, for which rigorous mixing times were obtained. In many cases, non-reversible liftings including ECMC mix on smaller time scales than their collapsed Markov chains.

4.1. Reversible MCMC in One-Dimensional N-Particle Systems

Although local hard-sphere MCMC was introduced many decades ago [1], its mixing times were obtained rigorously only in recent years, and then only in one dimension. In the discrete case, the symmetric simple exclusion process (SSEP), the mixing times (and not only their scalings) are known rigorously.

4.1.1. Reversible Discrete One-Dimensional MCMC

The SSEP implements the local hard-sphere Metropolis algorithm in the discrete sample space $\Omega^{\text{SSEP}} = \{x_1 < x_2 < \cdots < x_N\}$ with xi ∈ {1, …, n} (periodic boundary conditions understood for positions and indices). All legal hard-sphere configurations c = (x1, …, xN) have the same weight $\pi_c = \text{const}$, and the displacement set is $\mathcal{D} = \{-1, +1\}$. At each time step t, a random element of $\mathcal{D}$ is applied to a randomly sampled particle i, for a proposed move from c toward one of the 2N neighboring configurations that may, however, not all be legal:

$$c_i^{\pm} = (x_1, \ldots, x_i \pm 1, \ldots, x_N), \quad i \in \{1, \ldots, N\}. \tag{37}$$

If the sampled configuration is legal, the move is accepted. Otherwise, it is rejected and c remains the configuration for time step t + 1. The algorithm trivially satisfies detailed balance. Global balance follows from detailed balance for the SSEP, but the validity of Equations (2) and (3) may also be checked explicitly.1 The total flow into c, which must equal πc, arrives from the configuration c itself and again from the 2N neighboring configurations of Equation (37) (see [28]): If the configuration $c_i^-$ is legal, it contributes forward accepted flow $\mathcal{A}_i^+ = \pi_c/(2N)$, and $\mathcal{A}_i^+ = 0$ otherwise. If, on the contrary, $c_i^-$ is illegal, there is backward rejected flow $\mathcal{R}_i^- = \pi_c/(2N)$ from c to c through the rejected backward move $c \to c_i^-$. Therefore, $\mathcal{A}_i^+ + \mathcal{R}_i^- = \pi_c/(2N)$, and likewise $\mathcal{A}_i^- + \mathcal{R}_i^+ = \pi_c/(2N)$. The total flow into configuration c is:

$$\sum_{i=1}^{N} \left( \mathcal{A}_i^+ + \mathcal{R}_i^- + \mathcal{A}_i^- + \mathcal{R}_i^+ \right) = \pi_c, \tag{38}$$

so that global balance is satisfied.
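The flow argument for the SSEP can be checked by brute-force enumeration on a small ring. The sketch below sums, for every configuration, the transition probabilities arriving from all configurations (including rejected moves from the configuration itself); with equal weights, global balance holds when every sum equals one. Positions are 0-based, all labeled configurations with distinct positions are enumerated (the dynamics never mixes different cyclic orders), and the function name is an illustrative choice.

```python
from fractions import Fraction
from itertools import permutations


def ssep_inflow(n, N):
    """Sum of transition probabilities into each configuration of the SSEP.

    n : number of sites of the ring, N : number of particles (N < n).
    """
    configs = list(permutations(range(n), N))
    inflow = {c: Fraction(0) for c in configs}
    p = Fraction(1, 2 * N)                  # particle i and direction d
    for c in configs:
        occupied = set(c)
        for i in range(N):
            for d in (-1, +1):
                target = (c[i] + d) % n
                if target in occupied:
                    inflow[c] += p          # rejected move: flow from c to c
                else:
                    new = c[:i] + (target,) + c[i + 1:]
                    inflow[new] += p        # accepted move
    return inflow
```

For example, all entries of `ssep_inflow(6, 3)` equal one, so that the uniform distribution is stationary.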

The mixing time of the SSEP from the most unfavorable initial distribution π{0} (the compact configuration) is known to scale as $\mathcal{O}(N^3 \log N)$, whereas the mixing time from an equilibrium configuration scales only as $\mathcal{O}(N^3)$, i.e., as the correlation time [49]. These behaviors are recovered by numerical simulation.

4.1.2. Continuous One-Dimensional Reversible MCMC

In the one-dimensional continuum, the scaling for the mixing times of reversible local hard-sphere MCMC has been obtained rigorously for the heatbath algorithm only. In this dynamics, at each time step, the position of a randomly sampled sphere i is updated to a uniform random value in between spheres i − 1 and i + 1. The heatbath algorithm mixes in $\mathcal{O}(N^3 \log N)$ moves [50] on an interval with fixed boundary conditions. The mixing time for the same model with periodic boundary conditions is between $\mathcal{O}(N^3)$ and $\mathcal{O}(N^3 \log N)$ [48]. Numerical simulations suggest $\mathcal{O}(N^3 \log N)$ mixing on the continuous interval with periodic boundary conditions both for the heatbath and for the Metropolis algorithm [28].

4.2. Non-reversible MCMC in One-Dimensional N-Particle Systems

Non-reversible MCMC in one-dimensional particle systems has a long history in physics and mathematics in the study of the totally asymmetric simple exclusion process (TASEP) [51], which can be interpreted as a displacement lifting of the SSEP. For that model, faster mixing-time scaling than for the SSEP was proven recently. The “particle-lifted” TASEP (PL-TASEP) [28] (with particle lifting on top of the TASEP's displacement lifting) is a lattice version of ECMC. With its chains of particles moving forward and a suitable choice for resampling, it can mix on even faster time scales than the TASEP. Extensions of the hard-sphere case to nearest-neighbor, monotonic potentials with a no-hopping condition have been discussed [28, Supplementary Item 5].

4.2.1. Discrete Non-reversible MCMC in One Dimension—TASEP, Particle-Lifted TASEP

The TASEP can be interpreted as a displacement lifting of the SSEP (see subsection 4.1.1) with a displacement-lifted sample space $\hat{\Omega}^{\text{TASEP}} = \Omega^{\text{SSEP}} \times \mathcal{D}$, with $\mathcal{D} = \{-1, +1\}$. Its lifted transport transition matrix randomly proposes a move of sphere i in direction σ, from (c, σ) toward $(c_i^{\sigma}, \sigma)$, where $c_i^{\sigma}$ denotes the configuration c with sphere i displaced by σ. This move is accepted if $c_i^{\sigma}$ is legal. Otherwise, the system remains at (c, σ) [without performing a lifting move toward (c, −σ)]. A resampling transition matrix could perform such a lifting move on a random particle i. Because of the periodic boundary conditions, the TASEP, for its collapsed configurations, is irreducible and aperiodic even for a vanishing resampling rate ϵ between the elements of $\mathcal{D}$. One may thus neglect resamplings and retain only one of the elements of $\mathcal{D}$, say, the +1. The TASEP then simply consists in the +1 half of the SSEP. The effective halving of the lifted sample space for small ϵ carries over, in homogeneous spaces, to higher-dimensional diffusion processes and to ECMC.

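The global-balance condition may be checked through the flows into a lifted configuration (c, σ), using the notations of Equation (37), where $c_i^{\pm}$ denotes the configuration c with sphere i displaced by ±1.2 For the TASEP, the configuration c = (x1, …, xN) can evolve into the configurations $c_i^+$, as mentioned above. The flows into c now arrive from c itself and possibly from the N neighboring configurations $c_i^-$ of Equation (37). If the configuration $c_i^-$ is legal, then it contributes accepted flow $\mathcal{A}_i^+ = \pi_c/N$ toward c, and $\mathcal{A}_i^+ = 0$ otherwise. If, on the contrary, $c_i^-$ is illegal, then there is forward rejected flow $\mathcal{R}_{i-1}^+ = \pi_c/N$ from c to c through the rejected forward move $c \to c_{i-1}^+$, and $\mathcal{R}_{i-1}^+ = 0$ otherwise. Therefore, $\mathcal{A}_i^+ + \mathcal{R}_{i-1}^+ = \pi_c/N$, and the total flow into configuration c is [28]:

$$\sum_{i=1}^{N} \left( \mathcal{A}_i^+ + \mathcal{R}_{i-1}^+ \right) = \pi_c, \tag{39}$$

so that global balance is satisfied (periodic boundary conditions understood).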
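The same bookkeeping can be carried out by enumeration for the forward-only dynamics. In the sketch below (0-based positions on a ring, illustrative function name), each sphere is chosen with probability 1/N and attempts a move by +1; the uniform distribution is stationary if the probabilities arriving at each configuration sum to one, even though the individual moves are not balanced by their reverse moves.

```python
from fractions import Fraction
from itertools import permutations


def tasep_inflow(n, N):
    """Sum of transition probabilities into each configuration of the TASEP."""
    configs = list(permutations(range(n), N))
    inflow = {c: Fraction(0) for c in configs}
    p = Fraction(1, N)                      # probability to choose sphere i
    for c in configs:
        occupied = set(c)
        for i in range(N):
            target = (c[i] + 1) % n
            if target in occupied:
                inflow[c] += p              # rejected forward move
            else:
                new = c[:i] + (target,) + c[i + 1:]
                inflow[new] += p            # accepted forward move
    return inflow
```

The check succeeds because, on the ring, the number of spheres blocked in front equals the number of spheres blocked from behind, which is the content of the pairing of accepted and rejected flows with shifted indices.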

The TASEP is proven to mix in $\mathcal{O}(N^{5/2})$ time steps [52], a factor of $N^{1/2} \log N$ faster than its collapsed Markov chain, the SSEP. The equilibrium correlation time (the inverse gap of the transition matrix that, in this case, can be diagonalized) has the same scaling [12, 13]. Numerical simulations (with an operational definition of mixing times [28, Supplementary Item S2]) recover this behavior.

The SSEP allows for particle lifting with respect to the indices $\mathcal{N} = \{1, \ldots, N\}$, with the lifted sample space $\Omega^{\text{SSEP}} \times \mathcal{N}$. Transition matrices, for example, the particle-sweep transition matrices of subsection 2.3.1, then constitute valid liftings of the SSEP, as the latter is a reversible Markov chain with single-particle moves. In contrast, the PL-TASEP, with a lifted sample space

$$\hat{\Omega}^{\text{PL-TASEP}} = \Omega^{\text{SSEP}} \times \mathcal{D} \times \mathcal{N}, \tag{40}$$

is subtle. The particle-sweep transition matrix (with forward moves, and the sample space of Equation 40) violates the global-balance condition because, in essence, the accepted flow $\mathcal{A}_i^+$ and the rejected flow $\mathcal{R}_{i-1}^+$ add up to a constant in Equation (39), while $\mathcal{A}_i^+$ and $\mathcal{R}_i^+$ do not. This is not in contradiction with subsection 2.3.1 as the collapsed Markov chain of the particle-sweep (the TASEP) is non-reversible. The PL-TASEP [28] lives in the doubly lifted sample space of Equation (40). Its transport transition matrix advances the lifted configuration (c, σ, i) toward $(c_i^{\sigma}, \sigma, i)$ (see Equation 37) if the configuration $c_i^{\sigma}$, with sphere i displaced by σ, is legal. Otherwise, the configuration at time t + 1 is (c, σ, i + 1) (where periodic boundary conditions are understood, sphere i + 1 blocks sphere i). In essence, the PL-TASEP thus consists in repeatedly advancing the same sphere. When such a forward move is rejected, a particle-lifting move results in the sphere causing the rejection to become the new moving sphere.

The transport transition matrix of the PL-TASEP satisfies global balance, as can again be checked by computing the flows into a particle-lifted configuration (c, σ, i). With $c_i^{-\sigma}$ denoting the configuration c with sphere i displaced by −σ, the flows into (c, σ, i) arrive either from (c, σ, i − 1), if $c_i^{-\sigma}$ is illegal, or else from $(c_i^{-\sigma}, \sigma, i)$, if $c_i^{-\sigma}$ is legal. The two flows sum up to a constant. The transport transition matrix of the PL-TASEP is deterministic, and particle resamplings (c, σ, i) → (c, σ, k) with random choices of k ∈ {1, …, N} are required for irreducibility and aperiodicity [28]. Displacement resamplings (c, σ, i) → (c, −σ, i) are not required, and the lifted sample space can again be halved.
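Since the transport step of the PL-TASEP is deterministic, global balance for equal weights amounts to the statement that the transport map is a bijection of the lifted sample space: every lifted configuration has exactly one predecessor. The sketch below checks this by enumeration for σ = +1 on a small ring and contrasts it with a naive particle sweep. Positions and indices are 0-based, the lifting move goes to the sphere that occupies the blocked site, and the function names are illustrative.

```python
from collections import Counter
from itertools import permutations


def lifted_states(n, N):
    return [(c, i) for c in permutations(range(n), N) for i in range(N)]


def pl_tasep_move(state, n):
    """Advance the active sphere, or pass the activity to the blocking sphere."""
    c, i = state
    target = (c[i] + 1) % n
    if target in c:
        return (c, c.index(target))          # particle-lifting move
    return (c[:i] + (target,) + c[i + 1:], i)


def sweep_move(state, n):
    """Naive sweep: try to advance sphere i, then always activate sphere i + 1."""
    c, i = state
    target = (c[i] + 1) % n
    if target not in c:
        c = c[:i] + (target,) + c[i + 1:]
    return (c, (i + 1) % len(c))


def in_degrees(move, n, N):
    """Number of predecessors of every lifted configuration under `move`."""
    states = lifted_states(n, N)
    counts = Counter(move(s, n) for s in states)
    return [counts[s] for s in states]
```

For the PL-TASEP, all in-degrees equal one, whereas the sweep produces lifted configurations with zero or two predecessors, so that the uniform distribution is not stationary for it.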

With appropriate resamplings, the PL-TASEP mixes on an $\mathcal{O}(N^2 \log N)$ time scale, much faster than its (doubly) collapsed reversible Markov chain, the SSEP [which features $\mathcal{O}(N^3 \log N)$ mixing], and even considerably faster than its particle-collapsed non-reversible Markov chain, the TASEP [with $\mathcal{O}(N^{5/2})$].

4.2.2. Continuous Non-reversible MCMC in One Dimension: Forward, Lifted Forward

The discrete liftings of the SSEP, of subsection 4.2.1, can be generalized to continuous-space liftings of the Metropolis algorithm (with a non-hopping condition) with its collapsed sample space ΩMet = {c = (x1, …, xN)} with xi ∈ [0, L] (periodic boundary conditions understood for positions and indices).3 The displacements ±δ with δ > 0 are proposed from a symmetric distribution that may stretch out to infinity, so that the displacement set is $\mathcal{D} = \mathbb{R}$. The two possible directions in the quotient set $\hat{\mathcal{D}} = \{-1, +1\}$ are used for the displacement lifting. Displacement liftings with respect to the elements of $\mathcal{D}$ rather than $\hat{\mathcal{D}}$ have not been studied.

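The global-balance condition can again be verified for the Metropolis algorithm separately for each δ > 0, with configurations $c_i^+(\delta)$ and $c_i^-(\delta)$ defined as

$$c_i^{\pm}(\delta) = (x_1, \ldots, x_i \pm \delta, \ldots, x_N), \tag{41}$$

generalizing the 2N neighboring configurations $c_i^+$ and $c_i^-$ of Equation (37).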

The forward Metropolis (“Forward”) algorithm is a displacement lifting of the Metropolis algorithm, with a sample space

$$\hat{\Omega}^{\text{Forward}} = \Omega^{\text{Met}} \times \{-1, +1\}. \tag{42}$$

Its lifted transport transition matrix randomly advances sphere i in direction σ by a random step δ on the scale of the mean inter-particle spacing. This advances the configuration from (c, σ) to $(c_i^{\sigma}(\delta), \sigma)$, where $c_i^{\sigma}(\delta)$ denotes c with sphere i displaced by σδ, if the latter is legal. Otherwise the system remains at (c, σ) [without performing a lifting move toward (c, −σ)]. The effective halving of the sample space (to only “forward” moves) carries over from the TASEP. The Forward algorithm is irreducible and aperiodic for appropriate choices of the step-size distribution, and it requires no resampling.

The particle-lifted Forward (PL-Forward) algorithm has a sample space

$$\hat{\Omega}^{\text{PL-Forward}} = \Omega^{\text{Met}} \times \{-1, +1\} \times \{1, \ldots, N\}. \tag{43}$$

The particle-sweep lifting of the Forward algorithm is again incorrect, as for the TASEP. The transport transition matrix of the PL-Forward algorithm is the continuous-space variant of the PL-TASEP. The same sphere i advances, with random displacements at each time step, until it is blocked by the succeeding sphere i + 1 (periodic boundary conditions understood). In that latter case, a lifting move (c, σ, i) → (c, σ, i + 1) takes place.

The Forward algorithm is numerically found to mix in $\mathcal{O}(N^{5/2})$ time steps, down from $\mathcal{O}(N^3 \log N)$ for its collapsed Markov chain (the rigorous results [52] for the TASEP have not been generalized). This reduction is achieved without resampling. The PL-Forward algorithm also mixes in $\mathcal{O}(N^{5/2})$ time steps, and resampling reduces the mixing-time scaling to $\mathcal{O}(N^2 \log N)$, as for the PL-TASEP.

4.3. Event-Chain Monte Carlo in One-Dimensional N-Particle Systems

For hard spheres on a one-dimensional line with periodic boundary conditions, ECMC lives in the particle-lifted sample space of the PL-Forward algorithm, itself being a displacement lifting of the Metropolis algorithm (see subsection 4.2.2). It realizes its continuous-time limit, with an infinitesimal expectation of δt and a rescaling of time t such that finite times correspond to finite net displacements, usually with a “velocity” equal to one. ECMC is thus a lifting of the Metropolis algorithm with infinitesimal time steps. With the sample-space halving discussed previously, the ECMC lifted transport transition matrix advances the “active” sphere i by infinitesimal δt (with all other spheres stationary) until it collides with the succeeding sphere i + 1 (periodic boundary conditions in N understood), at which time sphere i + 1 becomes the sole active sphere. The transport transition matrix of one-dimensional hard-sphere ECMC is thus equivalent to molecular dynamics (the solution of Newton's equations) for an initial condition with a single non-zero velocity +1, and it is purely deterministic.
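The deterministic transport of one-dimensional hard-sphere ECMC can be written as a short event-driven loop that never discretizes time. The following sketch is an illustration under simple assumptions: positions are stored in cyclic order, there are at least two spheres, the free length L − Nd is positive, and the function name and argument order are arbitrary choices.

```python
def event_chain(x, i, ell, d, L):
    """Run one event chain of length ell on a periodic line of length L.

    x : positions in cyclic order (sphere i + 1 is ahead of sphere i)
    i : index of the initially active sphere
    d : sphere diameter
    Returns the new positions and the index of the final active sphere.
    """
    x = list(x)
    N = len(x)
    remaining = ell
    while True:
        j = (i + 1) % N
        gap = (x[j] - x[i]) % L - d        # free distance ahead of sphere i
        if gap >= remaining:
            x[i] = (x[i] + remaining) % L  # chain ends before the next collision
            return x, i
        x[i] = (x[i] + gap) % L            # advance to contact (one event)
        remaining -= gap
        i = j                              # lifting move: sphere j becomes active
```

For `x = [0.0, 2.0, 5.0]`, `d = 1.0`, `L = 10.0` and a chain of length 3 started at the first sphere, the first sphere advances by 1 to contact, and the second sphere then uses the remaining length 2, which brings it into contact with the third sphere.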

Particle resamplings assure irreducibility and aperiodicity of the ECMC. It is convenient to have these resamplings take place after each (eponymous) “event chain,” of length ℓ = ∑δt, where ℓ can itself have a probability distribution. Such resamplings generalize the resampling transition matrix of subsection 3.1. For specific distributions of ℓ, ECMC relaxation can be described exactly. Non-trivial relabelings and factor-field variants illustrate in this exactly solvable case that ECMC is a family of algorithms with different scalings of the mixing times. These times are now to be counted in the number of “events” (i.e., in lifting moves), rather than in the number of infinitesimal moves δt. Very generally, ECMC is implemented with a computational effort of $\mathcal{O}(1)$ per event, and without any discretization in time.

4.3.1. Event-Chain Monte Carlo Stopping Rule, Coupon-Collector Problem

For one-dimensional hard-sphere ECMC, the transport transition matrix is deterministic, and particle resamplings are crucial. The ECMC dynamics of N hard spheres of diameter d on a periodic line of length L is equivalent to the dynamics of N point particles on a reduced line of length Lfree = L − Nd. In consequence, one event chain of chain length ℓ effectively advances the sphere that initiates the event chain by ℓ (up to relabeling of spheres). If ℓ is sampled from any uniform distribution in [const, const + Lfree], the initial sphere of the event chain is placed (up to a relabeling) at a random position on the ring. ECMC thus obtains a perfect sample when each sphere has once initiated an event chain, allowing the definition of a stopping rule. The solution of the mathematical coupon-collector problem shows that it takes $\mathcal{O}(N \log N)$ random samples of N possible event-chain starting spheres to touch each of them at least once. In consequence, the number of event chains required for mixing scales as $\mathcal{O}(N \log N)$, and the total number of events as $\mathcal{O}(N^2 \log N)$ (see [29, 53]).
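The coupon-collector count behind the stopping rule is elementary: the expected number of uniform draws needed to see each of N labels at least once is N times the N-th harmonic number, which grows as N log N. A minimal sketch, with illustrative function names, gives the exact expectation and a direct simulation of the stopping time.

```python
import random
from fractions import Fraction


def expected_draws(N):
    """Exact mean number of uniform draws needed to see all N labels."""
    return N * sum(Fraction(1, k) for k in range(1, N + 1))


def simulate_draws(N, rng):
    """Draw starting spheres uniformly until each one has been drawn once."""
    seen = set()
    draws = 0
    while len(seen) < N:
        seen.add(rng.randrange(N))
        draws += 1
    return draws
```

For three spheres, the expectation is 3 (1 + 1/2 + 1/3) = 11/2 event chains.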

4.3.2. Event-Chain Monte Carlo With Local Relabeling

The logarithm in the mixing-time scaling of ECMC originates in the coupon-collector probability to repeatedly sample the same starting sphere for different event chains. Naive particle sweeps, however, break the global-balance condition. A correct modified lifted transition matrix incorporates local relabelings as follows: It advances the active sphere i (with all other spheres stationary) until it collides with a sphere j, at which time i and j exchange their labels. The newly relabeled sphere i continues as the sole active sphere. When the event chain terminates (after a continuous time interval ℓ), a new event chain starts with sphere i + 1 as active sphere [29]. With the sweep through active spheres, the coupon-collector-related logarithmic slowdown is avoided so that the choice of random chain lengths ℓ = ran[const, const + Lfree] remains as the only random element in the algorithm. It produces a perfect sample after N chains and $\mathcal{O}(N^2)$ events rather than the $\mathcal{O}(N^2 \log N)$ events of regular ECMC. ECMC with local relabeling again illustrates that reducing the randomness of moves can speed up mixing, in other words, bring about faster overall randomization. In higher-dimensional hard-sphere systems, the relabeling appears to speed up the approach to equilibrium by only a constant factor [53].

4.3.3. Factor-Field ECMC in One Dimension

The factorized Metropolis filter separates the total potential into independent terms (the factors). Large variations in one factor potential then remain uncompensated by those in other factors, as would be the case for the total potential (for energy-based filters). As a consequence, ECMC may possess event rates that are too high for efficient mixing. This potential shortcoming of standard ECMC was remarked at low temperature in one-dimensional N-particle systems [54] (see also [29, Figure 2]). It is overcome in one-dimensional particle systems through the addition of invariants and their subsequent breakup into factor fields. Such terms may decrease the event rate. Moreover, they can profoundly modify the characteristics of the event-chain dynamics and decrease mixing-time and correlation-time exponents.

For N particles on a one-dimensional interval of length L, the quantity $\sum_{i=1}^{N} (x_{i+1} - x_i) = L$ (with periodic boundary conditions in L and in N) is an invariant. A linear function f(x) = ax can transform it into a sum of factor fields:

$$\sum_{i=1}^{N} f(x_{i+1} - x_i) = \sum_{i=1}^{N} a\,(x_{i+1} - x_i) = aL = \text{const}. \tag{44}$$

The factor field of Equation (44) may be added and its linear parameter a adjusted to any pair-factor potential. Attractive factor fields may thus be added to hard-sphere factors or to Lennard-Jones factors [55]. With the linear factor adjusted to compensate the virial pressure, autocorrelation times $\mathcal{O}(N^{3/2})$ [rather than $\mathcal{O}(N^2)$ without factor fields] and mixing times $\mathcal{O}(N^2)$ [rather than $\mathcal{O}(N^2 \log N)$] are found. One particular feature of event-chain dynamics at the optimal value of the factor field is that the chains have zero linear drift, and therefore also vanishing virial pressure [8, 55].

5. Statistical-Mechanics Models in More Than One Dimension

The present section reviews ECMC for statistical-physics models in more than one dimension. In contrast to the lifted Markov chains considered in section 4, the displacement of an active particle impacts several factors, and the next veto arises from one of them (an active particle may, for example, collide with one out of several other particles and form separate factors with each of them). Subsection 5.1 reviews mathematical and algorithmic aspects of the two-dimensional (2D) hard-disk model, the first application of ECMC [5, 7, 56], where vetoes are determined deterministically. Hard-disk ECMC is contrasted with related algorithms that were applied to this system. Subsection 5.2 reviews ECMC for the harmonic model of a solid, which is also the low-temperature effective model for continuous-spin systems, such as the XY or the Heisenberg models. In these spin systems, as in the harmonic model, ECMC can be thoroughly analyzed. Subsection 5.3 reviews the interplay between spin waves and topological excitations for local Markov chains including ECMC, whose behavior there is much better understood than for particle systems.

5.1. Two-Dimensional Hard Disks

Hard-sphere ECMC can be analyzed without reference to the factorized Metropolis algorithm, as is necessary for more general interactions. Most studies have concentrated on the 2D case, the hard-disk model, whose phase behavior was clarified using ECMC [5, 57] after decades of uncertainty (the original Fortran90 implementation used in [5] is publicly available [58]). Strictly speaking, hard-disk ECMC is not generally irreducible in the NVT ensemble of fixed volume and number of disks, because of the existence of locally stable configurations at arbitrarily small density in the thermodynamic limit [59]. Such configurations can be formally excluded, and irreducibility established, in the NPT ensemble of constant pressure, although the locally stable configurations are too rare to play a role for a large number of disks N. Hard-disk ECMC is found to be very fast, but elementary questions such as, for example, the scaling of correlation times with system size are still without even a clear empirical answer in the ordered phases. The simplifications related to the existence of a constraint graph of possible lifting moves have enabled a successful implementation of hard-disk multithreaded ECMC [58].

5.1.1. Characterization of Hard-Disk ECMC

Hard-disk ECMC lives in a lifted sample space $\hat{\Omega} = \Omega^{\text{Met}} \times \hat{\mathcal{D}} \times \mathcal{N}$, where ΩMet is the sample space of the Metropolis algorithm (the configuration space of N hard disks, say, in a square box with periodic boundary conditions), $\hat{\mathcal{D}}$ is the quotient set of the displacements (see subsection 2.3.2), and $\mathcal{N}$ the set of particle indices. For the “straight” ECMC [7] used in all large-scale simulations, $\hat{\mathcal{D}} = \{\pm\hat{e}_x, \pm\hat{e}_y\}$. The halving of the homogeneous lifted sample space (see subsection 4.2.1) again allows one to restrict displacements to the positive x and the positive y directions, but this applies to homogeneous hard-disk systems only. The lifted transport transition matrix of straight hard-sphere ECMC advances the active disk i until it collides with another disk j, at which time a particle-lifting move takes place, and j becomes the active disk. This effectively one-dimensional process is equivalent to the one described in subsection 4.3. Particle and displacement resamplings assure irreducibility of straight ECMC (with the above-mentioned caveat related to locally stable configurations). Placed at the beginning of each event chain, they may select the chain's random starting disk and overall direction. The correctness of straight ECMC follows from the continuum limit of a hard-disk lattice version as well as from a mapping onto molecular dynamics with 2D sphere positions but with one-dimensional sphere velocities (see [58, Lemma 1]). A variant of straight ECMC with a larger quotient space and a transition matrix that slowly changes directions was found to bring no improvements for hard disks [60], while it has drastic effects for hard-sphere objects with internal degrees of freedom [32].
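The elementary computation in straight hard-disk ECMC is the distance that the active disk can travel before touching another disk. The sketch below treats motion in the +x direction and, as a simplifying assumption, ignores periodic images and cell systems; the function names and the brute-force loop over all disks are illustrative only.

```python
import math


def distance_to_collision(active, other, sigma):
    """Distance the active disk can travel in +x before touching `other`.

    sigma is the disk diameter. Returns math.inf if the disks never touch.
    """
    dx = other[0] - active[0]
    dy = other[1] - active[1]
    if abs(dy) >= sigma:
        return math.inf                     # the disks pass each other
    contact = dx - math.sqrt(sigma * sigma - dy * dy)
    return contact if contact >= 0.0 else math.inf


def straight_event(positions, i, sigma):
    """Return (distance, j) for the next lifting move of the active disk i."""
    best = (math.inf, None)
    for j, pos in enumerate(positions):
        if j != i:
            dist = distance_to_collision(positions[i], pos, sigma)
            if dist < best[0]:
                best = (dist, j)
    return best
```

The disk j that realizes the minimal distance becomes the new active disk, and the procedure repeats until the chain length is used up.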

The “reflected” ECMC is also compatible with the necessary conservation of phase-space volume [7]. Its quotient set consists of the 2D unit vectors in all directions ϕ. The lifted transport transition matrix of reflected ECMC only differs in the particle-lifting moves at a collision between a disk i and a disk j. To define the outgoing direction, the incoming one is reflected with respect to the line (hyperplane) of incidence (see [56, Figure 2.8]). Reflected ECMC is irreducible without resamplings. Resamplings also seem to have little influence on convergence times. In hard-disk applications, however, straight ECMC is generally faster [7, Figure 6]. Variants of the reflected ECMC algorithm, such as the “obtuse reflected” and the Newtonian ECMC [61], appear to be very fast in 3D hard spheres.
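The reflection rule at a collision can be sketched in a few lines of Python. The sketch assumes that the line of incidence is the line through the centers of disks i and j at contact, so that the outgoing direction keeps its component along that line and reverses the perpendicular one. The function name and the absence of periodic boundary conditions are simplifications made for illustration.

```python
import math


def reflect_direction(e, pos_i, pos_j):
    """Outgoing unit direction for disk j after disk i, moving along e, hits it.

    Assumption: the incoming direction e is reflected about the line through
    the two disk centers, i.e. e' = 2 (e . n) n - e with n the unit vector
    from i to j. Positions are taken without periodic wrapping.
    """
    nx, ny = pos_j[0] - pos_i[0], pos_j[1] - pos_i[1]
    norm = math.hypot(nx, ny)
    nx, ny = nx / norm, ny / norm
    dot = e[0] * nx + e[1] * ny
    return (2.0 * dot * nx - e[0], 2.0 * dot * ny - e[1])
```

With this convention, the outgoing direction has the same positive component along the line of centers as the incoming one, so disk j moves away from disk i, and a head-on collision leaves the direction unchanged.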

In hard-disk and hard-sphere systems, the computation of the pressure is notoriously complicated, as the virial cannot be extracted from a finite number of equiprobable configurations. Instead, it is obtained from an extrapolation of the pair-correlation function to contact [56, section 3.3.4]. In ECMC, the infinite number of highly correlated samples between any two lifting moves allows for an estimator of the virial pressure from the expectations of basic geometrical properties of the ECMC trajectory [8, 57, 62]. This estimator generalizes from hard spheres to arbitrary potentials.

In (straight) ECMC of monodisperse disks, any particle-lifting move (at a collision) can only go from a disk i to a set of at most three other disks that remains fixed between direction liftings.4 An oriented constraint graph, with at most three outgoing arrows for every disk, encodes these relations [63]. The constraint-graph formulation of hard-sphere ECMC exposes its close connection with the harmonic model of subsection 5.2, where the neighbor relations are fixed permanently.

For 2D hard-disk MCMC algorithms, neither the total variation distance nor spectral gaps can be estimated or evaluated, and theoretical bounds cannot be used [64]. Rigorous mixing-time scaling exponents are available for low density, but only for a non-local version of the Metropolis algorithm [65]. The analysis of all recent computations builds on the hypothesis that for 2D disks the autocorrelation function of the global orientational order parameter is the slowest relaxation process in this system. Practical computations generally adopt a square box with periodic boundary conditions, where the expectation of the above autocorrelation function vanishes because of symmetry, simplifying the interpretation of time series [5, 7].

5.1.2. Hard-Disk ECMC and Other Algorithms

In general ECMC, the continuous-time limit serves only to associate each veto in the factorized Metropolis algorithm with a unique factor. In the simpler setting of hard-disk ECMC, the continuous-time limit ensures that an active disk i collides with only a single other disk j, so that the lifting move i → j is well-defined and compatible with global balance. For two hard disks in a box with periodic boundary conditions, ECMC needs only a single factor, so that the continuous-time limit is not needed. ECMC then satisfies global balance with finite displacements. Because of homogeneity, a given event chain, say, with a fixed displacement (δx, 0) then maps to the displacement-lifted transport transition matrix on a path graph (see subsection 3.1.1), with the displacement of one hard disk corresponding to the “+1” sector on the path graph, and the displacement of the other corresponding to the “−1” sector.

For any N and any spatial dimension D, the transport transition matrix of straight hard-sphere ECMC is equivalent to modified molecular dynamics with D-dimensional positions, yet one-dimensional velocities. This corresponds to hard spheres on one-dimensional constraining “rails” that remain fixed in between direction liftings. The ECMC events correspond to molecular-dynamics collisions, which conserve energy and momenta for a phase-space configuration with only a single non-zero velocity.

A discrete-time precursor algorithm of hard-disk ECMC [66, section 5] also moves chains of disks. For each chain, it samples a random initial disk, a random direction of displacement (such that a direction and its inverse are equally likely), and a total number nc of disks to be displaced. A chain move is then constructed (not unlike ECMC) by displacing nc − 1 disks (starting with the initial one) along the direction of displacement until they hit their successor disks. The (final) disk nc is placed randomly between its initial position and its (hypothetical) event position with a successor disk. In order to satisfy detailed balance, this algorithm requires a Metropolis rejection step for the entire chain by the ratio of the intervals available for the first and for the final disks. One chain of the precursor algorithm resembles the transport stage of ECMC between particle resamplings, although it can be interpreted neither as a continuous-time Markov chain nor as a lifting. The convergence properties of this algorithm have not been analyzed.

5.1.3. Parallel Hard-Disk ECMC

The hard-disk model was successfully simulated in parallel using domain decomposition, overcoming some of the problems of such a scheme in molecular dynamics [67]. Within ECMC [63, 68], domain decomposition leads to residual interactions that destroy space homogeneity and therefore the convenient halving of the sample space. Convergence is then slower. Within a four-color checkerboard scheme, a cell of any one color touches only differently colored cells [57, 69]. At any time, disks in cells of one given color can thus be updated in parallel. Irreducibility is assured by frequently translating the four-color checkerboard by a random vector. With the collapsed Metropolis algorithm as a sampling algorithm inside cells, this algorithm overcomes its considerable speed handicap (roughly two orders of magnitude in single-processor mode) through massive parallelization on GPUs. Massively parallel MCMC has been tested to high precision against ECMC and against event-driven molecular dynamics for the pressure and the orientational and positional order parameters [57]. Related work was performed with ECMC replacing the Metropolis algorithm inside each cell [68].
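The coloring rule of the four-color checkerboard can be made concrete with a short Python sketch. It is an illustration under assumptions, not the scheme of [57, 69]: the function names, the parity-based color formula, and the requirement of an even number of cells per side (so that the coloring closes under periodic boundary conditions) are choices made here.

```python
import random


def cell_color(ix, iy):
    """Color 0..3 of cell (ix, iy); edge and corner neighbors always differ."""
    return (ix % 2) + 2 * (iy % 2)


def assign_cells(pos, box, n_cells, shift):
    """Group disk indices by color and by cell for a grid translated by shift.

    pos: list of (x, y) centers in a periodic square box of side `box`;
    n_cells: cells per side (assumed even); shift: (sx, sy) grid translation.
    Returns {color: {(ix, iy): [disk indices]}}.
    """
    if n_cells % 2:
        raise ValueError("n_cells must be even for a periodic four-coloring")
    cell_size = box / n_cells
    groups = {color: {} for color in range(4)}
    for k, (x, y) in enumerate(pos):
        ix = int(((x - shift[0]) % box) / cell_size) % n_cells
        iy = int(((y - shift[1]) % box) / cell_size) % n_cells
        groups[cell_color(ix, iy)].setdefault((ix, iy), []).append(k)
    return groups


def random_shift(box, rng):
    """Random translation vector of the checkerboard."""
    return (rng.uniform(0.0, box), rng.uniform(0.0, box))
```

All cells stored under `groups[color]` are mutually non-adjacent, so the disks they contain can be handed to independent workers, provided that the moves inside a cell are restricted so that disks in different cells of the same color cannot interact. Redrawing the shift between sweeps moves the cell boundaries.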

Hard-disk ECMC remains valid for more than one active particle [58], and it then resembles event-driven molecular dynamics, albeit with a much smaller number of moving particles. In single-processor mode, both identify the earliest one of a number of candidate events, the one that will be realized as an event and lead to a lifting move (for ECMC) or to a collision (for molecular dynamics). In both approaches, the event then generates new candidate events, while some of the old ones continue to exist and yet others disappear.

Modern event-driven molecular dynamics codes optimize the management of a very large number of such candidate events [70, 71]. In event-driven molecular dynamics, an extensive number ∝N of candidate events are present at any given moment. The possible scheduling conflicts among this large number of candidate events have long stymied attempts to parallelize the algorithm and to handle several events independently from each other [72–76]. In ECMC, one may freely choose the number of active particles and keep it fixed throughout a simulation. If this number is much smaller than √N, the mathematical birthday problem shows that the candidate events are usually disjoint for any two active particles, cutting down on the degree of interference. A framework of local times has led to a multithreaded implementation for hard-disk ECMC in which scheduling conflicts appear with small finite probability for active disks in the N → ∞ limit for finite run times [58].
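The birthday-problem argument can be checked with a few lines of Python. The sketch makes a strong simplification that is not part of the multithreaded algorithm of [58]: each active particle is assumed to involve one disk drawn uniformly and independently among the N disks, and the function name is hypothetical.

```python
def prob_all_distinct(n_active, n_disks):
    """Probability that n_active uniform draws among n_disks are all distinct."""
    p = 1.0
    for k in range(n_active):
        p *= 1.0 - k / n_disks
    return p
```

For N = 10^6 disks, the probability of no coincidence is above 0.99 for 100 active particles and about 0.6 for 1000 active particles, which illustrates that conflicts become likely only when the number of active particles reaches the order of √N.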

5.2. Harmonic Model

The harmonic model [77] describes spin-wave excitations for N spins on a lattice, with a total potential U = ½ Σ〈i,j〉 (ϕi − ϕj)² summed over pairs of neighbors 〈i, j〉, with non-periodic angles ϕi ∈ ℝ, which approximate the small elongations |ϕi − ϕj| ≪ 1 in the XY model with its total potential U = −Σ〈i,j〉 cos(ϕi − ϕj). Spin waves are the dominant excitations in the XY model at low temperatures, notably in two dimensions [78]. The harmonic model also provides the quintessence of phonon excitations in particle systems, for small displacements from perfect lattice positions. In the harmonic particle model, each particle interacts with a fixed set of neighbors so that disclinations, dislocations, and stacking faults cannot develop.

Besides its role in isolating the phonon and spin-wave excitations of many systems, the harmonic model is of importance for hard-sphere ECMC, whose sequence of constraint graphs [58, 63], between displacement resamplings, effectively defines a sequence of models with fixed neighborhoods.

5.2.1. Physics of the Harmonic Model

The harmonic model has been solved exactly [77]. It has a single phase for all finite temperatures, but the nature of this phase depends on the spatial dimension D. The differences in angle for two spins at positions distant by r for the harmonic spin model (and similarly the difference in elongations with respect to the lattice positions for the harmonic particle model) in equilibrium are given by:

$$\langle \Delta\phi^2 \rangle \propto \langle \Delta r^2 \rangle \propto \begin{cases} r & (D = 1), \\ \log r & (D = 2), \\ \text{const} & (D \geq 3). \end{cases} \qquad (46)$$

For a system of size L, the mismatch of two typical spins or particles is thus of the order of √L in one dimension, grows as the square root of the logarithm of L in two dimensions, and remains constant in three dimensions and higher. The harmonic particle model, in two dimensions, features long-range orientational order but only power-law decay of positional order [79]. Only in more than two dimensions does it have long-range orientational and positional order.

5.2.2. ECMC Algorithm for the Harmonic Model

For the harmonic spin model, ECMC takes place in a particle and displacement-lifted sample space. It is built from the sample space of N generalized angles (although only differences of angles are important), for which the periodicity of angles is abandoned, and from the quotient set of (infinitesimal) displacements. The homogeneity in ϕi again allows the lifted sample space to be halved and only the positive direction of displacement to be retained. ECMC monotonically increases each of the ϕi, in contrast to Metropolis MCMC, which must allow for changes of the angles in both directions. In event-driven harmonic-model ECMC, for a finite number of neighbors per spin, the displacement per event of each active spin is finite. It can be expected that the total displacement of each spin or particle corresponding to the Δϕ or Δr in Equation (46) decorrelates the system. It thus follows that from one independent sample to the next one each particle or spin must be displaced ∝ √L times in one dimension, ∝ √(log L) times in two dimensions, and a constant number of times in more than two dimensions. Reversible local MCMC, in contrast, requires ∝ L² displacements per spin (or particle) for all dimensions. With N = L^D spins or particles, this translates into ECMC correlation times of ∝ L^(3/2), ∝ L² √(log L), and ∝ L³ single events, in dimensions D = 1, 2, and 3. Numerical simulations are in excellent agreement with these expectations [80, Figure 7].
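Event-driven ECMC for the harmonic spin model can be sketched in Python for the simplest case, an open one-dimensional chain. The sketch assumes pair factors U(x) = x²/2 with x = ϕi − ϕj, an inverse temperature `beta`, and a fixed total displacement per chain; these choices and all function names are illustrative assumptions and are not taken from [80]. Each factor is handled by the factorized Metropolis rule: the active angle moves freely while the factor potential decreases, and the veto occurs once the potential has risen by an exponentially distributed amount.

```python
import math
import random


def harmonic_event_displacement(x0, beta, rng):
    """Displacement of the active angle before the factor U(x) = x**2 / 2 vetoes.

    x0 is the current difference phi_active - phi_neighbor. The allowed
    energy rise is exponentially distributed with mean 1 / beta.
    """
    delta_e = -math.log(1.0 - rng.random()) / beta
    x_start = max(x0, 0.0)            # downhill part (x < 0) costs nothing
    x_event = math.sqrt(x_start ** 2 + 2.0 * delta_e)
    return x_event - x0


def harmonic_ecmc(phi, beta, total_displacement, rng):
    """Run one event chain on an open 1D chain (len(phi) >= 2), in place.

    Returns the number of lifting moves. Angles only ever increase.
    """
    n = len(phi)
    active = rng.randrange(n)         # particle resampling
    remaining = total_displacement
    n_events = 0
    while True:
        neighbors = [j for j in (active - 1, active + 1) if 0 <= j < n]
        # One candidate event per factor; the earliest one is realized.
        step, target = min(
            (harmonic_event_displacement(phi[active] - phi[j], beta, rng), j)
            for j in neighbors
        )
        if step >= remaining:
            phi[active] += remaining  # chain ends before the next event
            return n_events
        phi[active] += step
        remaining -= step
        active = target               # lifting move to the vetoing neighbor
        n_events += 1
```

The sum of all angles increases by exactly `total_displacement` per chain, which reflects the monotonic increase of the ϕi. Only differences of angles carry information, so that observables should be built from ϕi − ϕj.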

5.3. Event-Chain Monte Carlo for Continuous Spin Models

Event-chain Monte Carlo readily applies to XY and Heisenberg-type spin models because their spin–spin pair potentials on neighboring sites i and j can be written as U(ϕi − ϕj) with continuous spin angles ϕi and ϕj. The particle and direction-lifted sample space for these models is analogous to that of the harmonic model, but with angular variables ϕ ∈ [0, 2π) (the spins of the Heisenberg model must be projected onto a plane in spin space). The homogeneity of the potential with respect to the absolute spin angles again allows the halving of lifted sample space. The particle and direction-lifted transition matrix rotates a given spin i in one direction until the factorized Metropolis algorithm calls for a veto by a neighbor j of i, followed by a lifting move from i to j. The spin j then starts to rotate in the same sense, with the cumulative rotation corresponding to the MCMC time.

In the XY model, the 2D fixed-length continuous spins live on a D-dimensional spatial lattice. The pair potential Uij = −cos(ϕi − ϕj) favors the alignment of neighboring spins i and j, and in ECMC, the activity (i.e., the rotation) passes from one spin to one of its neighbors. XY-model ECMC is irreducible without any resamplings. Its sequence of active spins realizes an anomalous 2D diffusion process [81]. ECMC on the Heisenberg model, where spins are 3D, can be reduced to this case, with resamplings through rotations in 2D sub-planes in spin space. In both models, ECMC lowers the scaling of relaxation times with respect to local reversible MCMC. Although more efficient algorithms are available [82–84] for these particular models, the comparison of ECMC with reversible MCMC illustrates what can be achieved through non-reversible local Markov chains in real-world applications.

In the 2D XY model, ECMC autocorrelation functions can be fully explained in terms of spin waves and topological excitations. The 2D hard-disk system similarly features phonons and two types of topological excitations, but the precise relaxation dynamics of MCMC algorithms has yet to be clarified (for a synopsis, see [56, Chapter 1]).

5.3.1. Spin Waves and Topological Excitations in the 2D XY Model