- Psicología Cognitiva, University of La Laguna, La Laguna, Spain

This study investigates whether understanding up/down metaphors as well as semantically homologous literal sentences activates embodied representations online. Participants read orientational literal sentences (e.g., she climbed up the hill), metaphors (e.g., she climbed up in the company), and abstract sentences with similar meaning to the metaphors (e.g., she succeeded in the company). In Experiments 1 and 2, participants were asked to perform a speeded upward or downward hand motion while they were reading the sentence verb. The hand motion either matched or mismatched the direction connoted by the sentence. The results showed a meaning-action effect for metaphors and literals, that is, faster hand motion responses in the matching conditions. Notably, the matching advantage was also found for homologous abstract sentences, indicating that some abstract ideas are conceptually organized in the vertical dimension, even when they are expressed by means of literal sentences. In Experiment 3, participants responded to an upward or downward visual motion associated with the sentence verb by pressing a single key. In this case, the facilitation effect for matching visual motion-sentence meaning faded, indicating that the visual motion component is less important than the action component in conceptual metaphors. Most up and down metaphors convey emotionally positive and negative information, respectively. We suggest that metaphorical meaning elicits upward/downward movements because they are grounded on the bodily expression of the corresponding emotions.

Introduction

People use language to refer literally to perceptual objects or events, in sentences such as “the balloon rose.” However, they can also refer to abstract events and entities using the indirect pathway of metaphors. For example, “his mood rose” expresses the abstract concept of “arriving at a good mood” in terms of the more concrete concept of “rising.” In other words, metaphors help us to understand abstract or relatively unclear concepts such as mental states in terms of concrete sensory-motor experiences. Two related features emerge from the current literature on metaphorical meaning: metaphors are conceptual, and metaphors are embodied. The conceptual nature of metaphors means that metaphorical expressions are tied to metaphorical concepts in a systematic way (Lakoff and Johnson, 1980; Johnson, 1987; Lakoff, 1987). In other words, concepts are primarily metaphorical (rather than symbolic or propositional) and linguistic metaphors are systematically derived from these concepts. On the other hand, conceptual metaphors are grounded in embodied representations; that is to say, we use sensory-motor experience to conceptualize abstract domains such as time, feelings, interpersonal relationships, etc. (Lakoff and Johnson, 1980; Gibbs, 1994, 2006; Johnson and Lakoff, 2002; Casasanto, 2009). Thus, given the prominence of space in our perceptual and motor experience, spatial dimensions are frequently used to support rich metaphorical conceptual systems (Lakoff and Johnson, 1980). For instance, speakers of English and other languages have been found to conceptualize time by mapping the future in front and the past behind themselves (e.g., Casasanto and Boroditsky, 2008; Sell and Kaschak, 2010) or, alternatively, the future to the right and the past to the left (Torralbo et al., 2006; Santiago et al., 2007). There is also a rich orientational metaphor system in the up/down spatial dimension, which is the main concern of this paper. 
According to Lakoff and Johnson (1980), some metaphorical notions (good, virtue, happiness, consciousness, health, wealth, high status, power, etc.) are mapped onto the “up” pole of the vertical dimension, whereas the opposite notions (evil, vice, sadness, unconsciousness, illness, poverty, low status, etc.) are mapped onto the “down” pole of the vertical dimension.

Some recent studies have supported the embodied character of up/down conceptual metaphors. Thus, Schubert (2005) has found evidence that the concept of power is partially mapped onto the physical vertical dimension; in other words, the “control is up – lack of control is down” conceptual metaphor is embodied. Thus, when participants were asked to judge which one was the more powerful of two groups (e.g., master or servant), their response was faster if the word for the powerful group was in the upper part of the screen than if it was in the lower part. Furthermore, Moeller et al. (2008), using a spatial attention paradigm, found that individuals with dominant personalities favored the vertical dimension of space more than individuals with low dominance, being faster to respond to probes along a vertical axis, while both groups performed similarly over a horizontal axis.

In the same vein, the “more is up – less is down” orientational metaphor was investigated by Joseph et al. (1994), who found that participants’ judgments of their performance in a proofreading task were influenced by the size of the pile of documents they were required to read. Individuals who were required to read pages inside journals (high pile) judged that they had done more and a better job than those who were asked to read single pages (low pile), despite the fact that the two conditions demanded the same amount of work. Similarly, Langston (2002) found that texts that violate the “more is up-less is down” conceptual metaphor are more difficult to comprehend than texts that are consistent with such metaphor, yielding slower response times and higher error rates in a semantic task. Moreover, Ito and Hatta (2004) reported a SNARC-like effect (Spatial-Numerical Association of Response Codes) for the vertical dimension: they found that participants were faster to respond to large numbers with the top choice key than with the bottom choice key, whereas the reverse was true for small numbers.

Finally, concerning the “positive is up – negative is down” metaphor, Meier and Robinson (2004) reported that evaluations of positive words were faster when the words appeared in the upper rather than the lower part of the screen, whereas negative words showed the opposite pattern. They also found that participants with higher neuroticism or depressive symptoms responded faster to spatial attention targets placed at the bottom, which suggests that negative affect biases selective attention in a direction that favors lower regions of physical space (Meier and Robinson, 2006). Memory tasks are also sensitive to the “positive is up – negative is down” metaphor. Thus, Crawford et al. (2006) asked participants to remember images with an emotionally positive or negative valence, and found that positive items were remembered better when presented at the top of the screen, while negative images were biased downward. Casasanto and Dijkstra (2010) also reported that participants retrieved more positive memories of their life when instructed to move marbles up, and more negative memories when instructed to move them down, demonstrating that positive and negative life experiences are implicitly associated with schematic representations of upward and downward motion.

The above studies investigated how the up/down metaphorical conceptualization modulates different semantic tasks, such as semantic classifications in bipolar categories, but they did not directly investigate whether the motions in the vertical dimension are activated online during ordinary comprehension of metaphors. To test the embodied meaning approach to language comprehension, some researchers have used an action-sentence compatibility effect (ACE) paradigm. The basic ACE procedure consists of asking participants to listen to or read sentences describing motor events while they perform a motor task designed to match or mismatch the meaning of the sentences (Glenberg and Kaschak, 2002; Buccino et al., 2005; Borreggine and Kaschak, 2006; Zwaan and Taylor, 2006; Glenberg et al., 2008; Kaschak and Borreggine, 2008; de Vega et al., in revision). In most cases a facilitatory ACE has been reported in the literature; that is, the meaning-action matching condition produces faster motor responses than the mismatching condition. Thus, Glenberg and Kaschak (2002) asked people to judge the sensibility of sentences describing a transfer motion toward or away from them (e.g., “Andy delivered the pizza to you” or “You delivered the pizza to Andy”). The judgment time for sensible sentences was faster for the matching conditions (e.g., sentences describing a transfer toward oneself with the “yes” response being a hand motion toward oneself) than for the mismatching conditions.

Surprisingly, in spite of the abundant literature on metaphors, no study has yet addressed whether the comprehension of up/down orientational metaphors activates embodied representations online. To our knowledge, the only ACE study on metaphorical comprehension to date demonstrated that the comprehension of “future is in front – past is behind” spatial metaphors activates body action simulations in the front/back spatial dimension (Sell and Kaschak, 2010). The present research aims to fill this gap, testing whether the comprehension of up/down metaphors activates vertical body actions or visual motions online. Given that orientational metaphors are ubiquitous in everyday language, it is possible that they have become “frozen” or “dead”; if such were the case, they would not activate embodied representations online. Conversely, if embodied representations were part of metaphorical meaning, then they would be activated in online comprehension. Moreover, not only would explicit orientational metaphors activate embodied representations but, as we will argue, abstract literal sentences that describe the same conceptual domains as orientational metaphors would also activate embodied representations in the same way. Assuming that metaphors do activate embodied representations online, another goal of this study was to determine whether these representations have a motor component, a visuo-spatial component, or both. Notice that most up/down orientational metaphors employ verbs of motion (e.g., rising, going down, falling, jumping, etc.), and these motions could potentially be understood as visual or motor events. For instance, the metaphor “made him rise with victory” could be represented either as an upward visual motion (e.g., as watching a movie), as an upward body action, or as a combination of the two.

In this research, the ACE procedure was modified to test how orientational metaphors modulate vertical body actions (Experiments 1 and 2). Participants performed either upward or downward hand motions while they read orientational metaphors and other types of sentences. If orientational metaphors involved a simulation of a vertical action, then they would interact with the enactment of a matching body vertical motion. Thus, one might expect faster responses in matching as compared to mismatching conditions: up metaphors (“climbing up in the company”) would facilitate upward hand motions, and down metaphors (“falling into depression”) would facilitate downward hand motions. Moreover, given that the conceptual system supposedly is itself metaphoric (Lakoff and Johnson, 1980), the same meaning-action facilitation should be observed with literal sentences referring to the same concepts as orientational metaphors. For instance, “succeeding in business” would facilitate upward hand motions and “becoming sick” would facilitate downward hand motions. By contrast, if the ACE were restricted to orientational metaphors, this would mean that it might be a lexical phenomenon triggered by the motion verb, rather than a conceptual phenomenon.

The purpose of Experiment 3 was to test whether metaphorical meaning involves a purely visual motion component. Rather than using the ACE paradigm of the previous experiments, participants were asked to press a single key in response to either an upward or a downward visual animation. In this way, the visual motion matched or mismatched the orientational meaning of the sentences, but participants did not perform any upward or downward motion. In spite of this, it is possible that a visual motion-sentence compatibility effect would emerge; namely, we might expect faster responses for matching conditions (e.g., up metaphors and upward visual animation) than for mismatching conditions. In fact, some studies have used a dual-task paradigm to demonstrate a visual motion-sentence compatibility effect. For instance, Kaschak et al. (2005) asked participants to make semantic judgments on auditory sentences that described motions toward them (e.g., “the car approached you”) or away from them (e.g., “the horse ran away from you”), while simultaneously viewing dynamic stimuli that produced an illusory motion toward or away from them. Semantic judgments were faster in the mismatching condition than in the matching condition, suggesting that processing a given visual motion (e.g., away from you) and processing the sentence meaning compete for the same neuronal resources. Furthermore, a neuroimaging study observed that during the comprehension of visual motion-related sentences, there was activation in a brain region responsible for processing dynamic visual stimuli (Rueschemeyer et al., 2010).

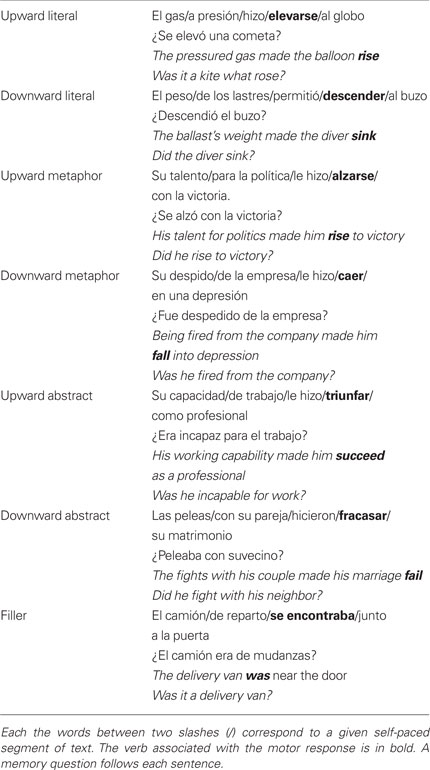

To test these ideas, three kinds of sentences were created (see examples in Table 1). First, literals describing upward or downward physical movements were included as a baseline condition, in which an ordinary ACE should be expected. The second group comprised metaphors, including upward motion verbs (e.g., “climbing,” “flying,” “jumping”), and downward motion verbs (e.g., “falling,” “sinking,” “burying”). Typically, upward metaphors referred to abstract positive events like “success,” “gain,” “improvement,” or “happiness,” while downward metaphors referred to negative events such as “defeat,” “loss,” “worsening,” or “sadness.” The third group, abstract sentences, referred to similar concepts as the orientational metaphors, although in this case employing literal verbs such as “succeeding,” “improving,” “failing,” or “winning.” While reading the critical verb (Table 1, in bold), participants were asked to perform a hand motion that either matched or mismatched the direction of the motion described by the sentence. The reaction times in the matching and mismatching conditions provided the main ACE measure. In Experiment 1, the cue prompting the motor response was a visual upward or downward animation of the target word. In Experiment 2, the cue prompting the motor response was a color switch (from black to red or blue) of the target word, the purpose being to observe the meaning-action interaction in the absence of visual motion. In Experiment 3 there was again an upward/downward animation of the target word, but in this case participants were not asked to move their hand in these directions. They simply performed a go/no-go task: pressing a single key when the target word moved and not pressing any key otherwise.

Experiment 1

In this experiment, the motor responses were cued by an upward/downward visual animation of the sentence verb, which was easily interpreted by participants as a prompt to immediately move their finger in the same direction. The visual animation was set 200 ms after the verb onset, because action-verbs more strongly activate the motor cortex within this temporal range, according to magnetoencephalography studies (e.g., Pulvermüller et al., 2005). Furthermore, in a previous study with literal transfer sentences de Vega et al. (in revision) found that this interval was optimal to obtain ACE. In addition to the motor responses, participants were asked to respond to a simple yes/no memory question after reading each sentence, providing later measures of meaning-action effects.

The procedure used here was the same as the one employed by de Vega et al. (in revision), and differs from the typical ACE studies (e.g., Glenberg and Kaschak, 2002; Glenberg et al., 2008) in which the directional motor responses were associated with sensibility judgments and were collected after understanding the whole sentence. By contrast, here the motor action was a simple psychophysical response to a visual cue, and did not require the burden of a semantic judgment. The procedure allows collecting meaning-action effects at a relatively early stage of sentence processing (in the verb), and also dissociating the motor effects (measured on the motor response) from the semantic effects (measured on the memory task).

Method

Participants

Sixty students of Psychology of the University of La Laguna, all native speakers of Spanish, took part in the experiment in exchange for academic credits.

Materials

Ninety-six experimental sentences (32 literals, 32 metaphors, and 32 abstract sentences) were constructed, as well as 50 filler sentences describing static scenarios (e.g., The delivery van was near the door), and eight practice sentences. The experimental and the sample sentences, illustrated in Table 1, shared the following structure: Subject/noun complement/verbal periphrasis/main verb/verb complement. The main verb, which described an upward or downward motion or an abstract event, was always the fourth segment. All five segments had approximately the same number of words in each sentence: 2 in the first one, 2 or 3 in the second and fifth, and 1 or 2 in the third and fourth, depending on the particular periphrasis. This periphrastic structure was not suitable for the filler sentences, which described static situations. As a result, the filler sentences had four segments and the critical verb was placed in the third position. Finally, each sentence had a corresponding yes/no question that could refer to any segment.

Design and Procedure

The experiment manipulated 3 Sentence type (literals, metaphors, and abstract sentences) × 2 Motor direction (upward, downward) × 2 Semantic direction (upward, downward) in a factorial within-participant design. The experimental session started with instructions for the task, followed by eight practice trials similar to the experimental ones; finally, the 96 experimental trials, mixed with the 50 fillers, were presented in random order in two blocks. The experimental trials included eight sentences for each of the 12 experimental conditions resulting from the combination of the three factors: upward literal-upward motor, upward metaphor-upward motor, upward abstract-upward motor, downward literal-upward motor, downward metaphor-upward motor, downward abstract-upward motor, upward literal-downward motor, upward metaphor-downward motor, upward abstract-downward motor, downward literal-downward motor, downward metaphor-downward motor, downward abstract-downward motor. There were two counterbalanced conditions, in which the assignment of motor direction to trials was reversed.
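The factorial structure described above can be sketched in a few lines of Python. This is only an illustration of the 3 × 2 × 2 crossing and of the motor-direction reversal between the two counterbalanced lists; the function names, the dictionary keys, and the `match` flag are hypothetical labels introduced here, not part of the original materials.

```python
from itertools import product

SENTENCE_TYPES = ("literal", "metaphor", "abstract")
DIRECTIONS = ("upward", "downward")
SENTENCES_PER_CELL = 8  # 96 experimental sentences spread over 12 cells


def build_design():
    """Return one dict per cell of the 3 x 2 x 2 within-participant design."""
    cells = []
    for stype, semantic, motor in product(SENTENCE_TYPES, DIRECTIONS, DIRECTIONS):
        cells.append({
            "sentence_type": stype,
            "semantic_direction": semantic,
            "motor_direction": motor,
            # Hypothetical convenience flag: matching vs. mismatching trial.
            "match": semantic == motor,
            "n_sentences": SENTENCES_PER_CELL,
        })
    return cells


def counterbalance(cells):
    """Build the second list by reversing the motor direction of every cell."""
    flip = {"upward": "downward", "downward": "upward"}
    reversed_cells = []
    for cell in cells:
        motor = flip[cell["motor_direction"]]
        reversed_cells.append({
            **cell,
            "motor_direction": motor,
            "match": cell["semantic_direction"] == motor,
        })
    return reversed_cells


design = build_design()
print(len(design), sum(cell["n_sentences"] for cell in design))
```

Reversing the motor direction turns every matching cell into a mismatching one and vice versa, so across the two lists each sentence contributes to both conditions.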

An ordinary computer keyboard was used for the recording of motor responses. The keyboard was fixed in an upright position, remaining thus during the whole experimental session, with all keys removed except the letters A, G, and L, which were placed in a downward, central, and upward position, respectively. Their distances to the table surface were 10, 18, and 26 cm, respectively. Upward and downward keys were covered by a 5-mm red square with icon arrows of the corresponding directions. A set of concentric circles, like a small target, covered the central (resting) key. The rest of the keyboard was covered with white cardboard. Participants were seated in front of the computer screen, with their elbows resting on the table, and were instructed to use the response keyboard with their dominant hand, and the mouse with the other.

The experimental trials consisted of the following sequence: a fixation point in the middle of the screen prompted participants to press the resting key and keep it pressed while the first three segments appeared automatically on the screen, remaining for 800 ms each. Then, the fourth segment with the critical verb appeared and remained for 200 ms, before “jumping” upward or downward. This apparent motion was a cue for participants to release the resting key and move their dominant hand’s index finger to press the key either above or below it. After the motor response, the final segment was presented, and remained on the screen for 800 ms. Finally, a memory question referring to the sentence was given, and participants responded yes or no by using one of the two keys of the mouse with their non-dominant hand. The practice trials were similar to the experimental ones, except that the former were followed by motor response feedback.
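As a rough illustration, the trial sequence can be written down as an ordered list of events with their durations. The sketch below only encodes the timings stated in the text (800 ms per segment, 200 ms of static verb before the cue); the event labels and function names are hypothetical, and events that last until the participant responds are given a duration of `None`.

```python
SEGMENT_MS = 800       # duration of segments 1-3 and of the final segment
VERB_STATIC_MS = 200   # the verb stays still this long before "jumping"


def trial_timeline(cue_direction):
    """Return (event, duration_ms) pairs; None means 'until the participant responds'."""
    if cue_direction not in ("upward", "downward"):
        raise ValueError("cue_direction must be 'upward' or 'downward'")
    return [
        ("fixation_until_resting_key_pressed", None),
        ("segment_1", SEGMENT_MS),
        ("segment_2", SEGMENT_MS),
        ("segment_3", SEGMENT_MS),
        ("verb_static", VERB_STATIC_MS),
        (f"verb_jumps_{cue_direction}", None),  # cue for the motor response
        ("segment_5", SEGMENT_MS),
        ("memory_question", None),
    ]


def cue_onset_ms(timeline):
    """Time from the onset of the first segment to the onset of the motion cue."""
    elapsed = 0
    for event, duration in timeline[1:]:  # skip the self-paced fixation
        if event.startswith("verb_jumps"):
            return elapsed
        elapsed += duration
    raise ValueError("timeline contains no motion cue")


print(cue_onset_ms(trial_timeline("upward")))
```

Under these timings the cue always arrives a fixed interval after the sentence starts, so the motor response is locked to the verb rather than to the end of the sentence.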

Results

Four participants were discarded from the analyses because they gave more than 10% wrong responses in the memory task. The average error rate for the motor response was very small (less than 1%). Outliers exceeding the mean reaction times by two SDs were also excluded from the analyses (4.2% of data). Several measures were collected for analysis. The releasing time (from the animation onset to the release of the resting key) and the key-pressing time (from the release to the pressing of one directional key) are components of the same response, and so we decided to use the sum of the two as a single dependent measure, which we called motor response time. We also analyzed the time and accuracy of the responses to the memory questions. Repeated measures analyses of variance (ANOVAs) including Sentence type, Semantic direction, and Motor direction were performed for each of the above dependent measures, both by participant (F1) and by item (F2). Additional Semantic direction × Motor direction ANOVAs were also run for each Sentence type separately, as well as t-tests between pairs of conditions sharing the same directional motor response. We will only report significant effects.
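The dependent measure and the trimming rule can be illustrated with a short Python sketch. It rests on assumptions that the text does not settle: the cutoff is taken to be the mean plus two sample SDs of whatever list of times is passed in (the grouping used for trimming, e.g., per participant or per condition, is not specified), and the function names and trial fields are hypothetical.

```python
from statistics import mean, stdev


def motor_response_time(release_ms, press_ms):
    """Motor response time = releasing time + key-pressing time."""
    return release_ms + press_ms


def trim_outliers(times_ms, n_sd=2.0):
    """Drop times above mean + n_sd * SD (assumed cutoff; grouping is up to the caller)."""
    if len(times_ms) < 2:
        return list(times_ms)
    cutoff = mean(times_ms) + n_sd * stdev(times_ms)
    return [t for t in times_ms if t <= cutoff]


def matching_advantage(trials):
    """Mean mismatching time minus mean matching time (positive = facilitation)."""
    match = [t["rt"] for t in trials if t["semantic"] == t["motor"]]
    mismatch = [t["rt"] for t in trials if t["semantic"] != t["motor"]]
    return mean(mismatch) - mean(match)


# Made-up numbers, purely to show the calls; they are not data from the study.
example_trials = [
    {"semantic": "upward", "motor": "upward", "rt": motor_response_time(400, 300)},
    {"semantic": "downward", "motor": "downward", "rt": motor_response_time(410, 310)},
    {"semantic": "upward", "motor": "downward", "rt": motor_response_time(430, 330)},
    {"semantic": "downward", "motor": "upward", "rt": motor_response_time(440, 340)},
]
print(matching_advantage(example_trials))
```

A positive value from `matching_advantage` corresponds to the matching < mismatching pattern that the ANOVAs test through the Semantic direction × Motor direction interaction.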

Motor Response Times

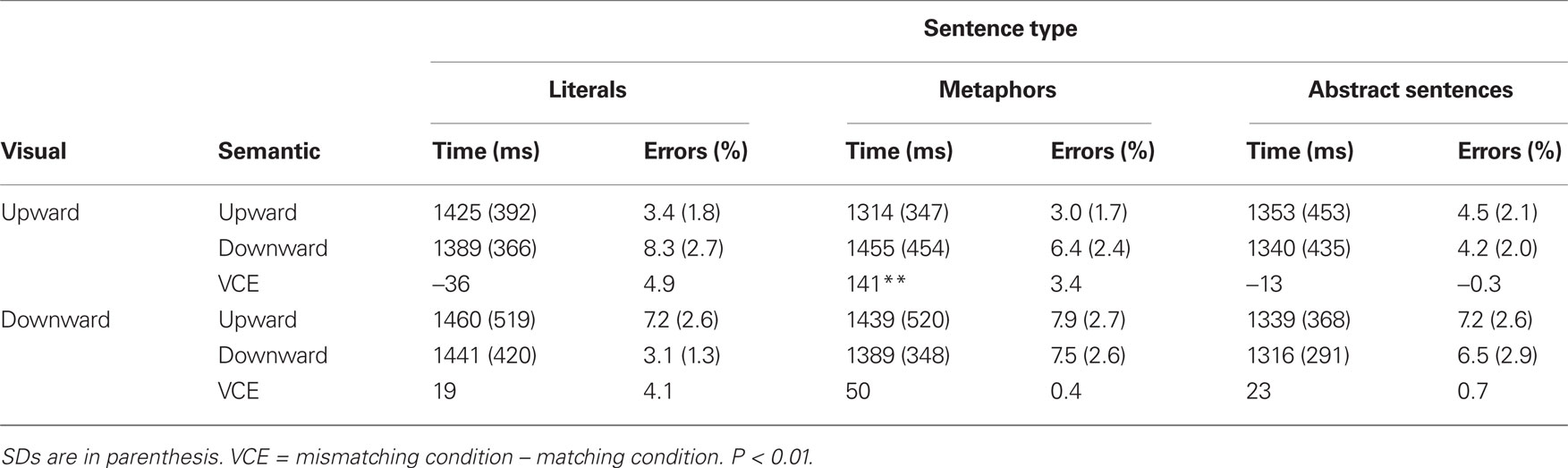

There was a main effect of Motor direction: [F1(1, 55) = 40.188, MSe = 86877.74; F2(1, 90) = 9.15, MSe = 19773.01, p ≤ 0.0001]; specifically, downward responses were faster (M = 749 ms) than upward responses (M = 785 ms). However, this effect was modulated by the important Motor direction × Semantic direction interaction: F1(1, 55) = 21.79, MSe = 87347.48, p < 0.001; F2(1, 90) = 9.15, MSe = 19773.01, p ≤ 0.003. This interaction consists of a matching < mismatching pattern, as shown in Figure 1. Moreover, the Motor direction × Semantic direction interaction was significant by participants for each type of sentence analyzed separately, although by items it reached significance only for metaphors: literals [F1(1, 55) = 4.58; MSe = 4798.17, p ≤ 0.037; F2(1, 30) = 2.13, n.s.], metaphors [F1(1, 55) = 10.63; MSe = 4090.46, p ≤ 0.002; F2(1, 30) = 6.62, MSe = 2444.53, p ≤ 0.015], and abstract sentences [F1(1, 55) = 6.99; MSe = 3438.67, p ≤ 0.01; F2(1, 30) = 1.70, n.s.], indicating that the matching < mismatching pattern was shared by the three types of sentences. Further t-tests performed for each pair of matching-mismatching conditions revealed significant effects both for upward [t(55) = 2.38, p ≤ 0.021] and downward [t(55) = 2.60, p ≤ 0.012] motor responses in metaphors; for upward motor responses [t(55) = 2.181, p ≤ 0.033] in abstract sentences, and for upward motor responses [t(55) = 2.332, p ≤ 0.023] in literals.

Figure 1. Experiment 1: Mean motor response times as a function of Sentence type, Sentence direction, and Motor direction. The vertical lines indicate the SDs, and the stars (*) correspond to significant matching-mismatching pairwise comparisons (p < 0.05).

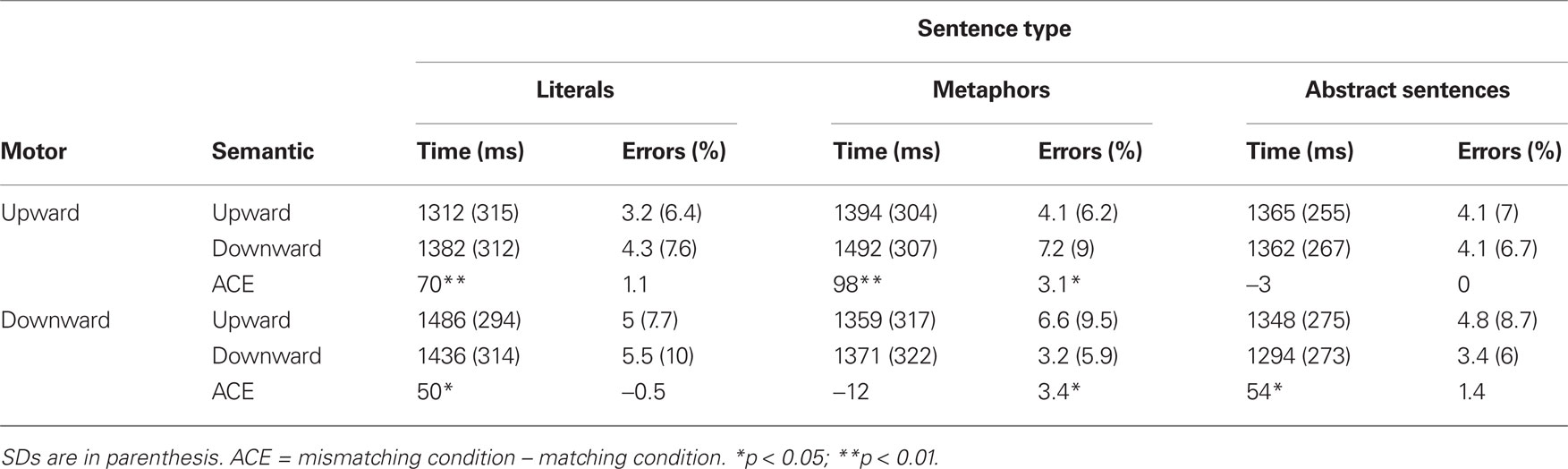

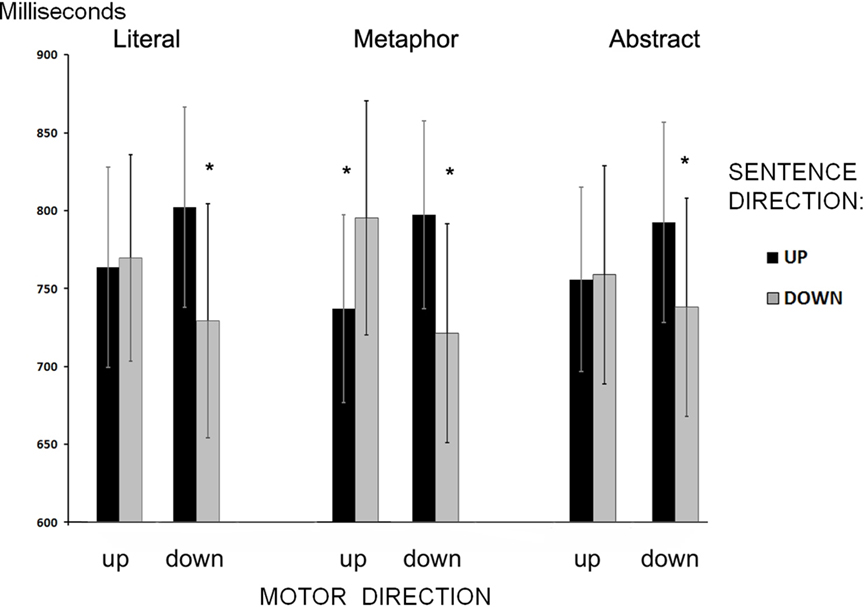

Response Times in the Memory Task

The most important result was the significant Semantic direction × Motor direction interaction [F1(1, 55) = 3.83, MSe = 284478.48, p ≤ 0.05; F2(1, 90) = 19.19, MSe = 14988.06, p ≤ 0.0001]. This interaction was significant for literals [F1(1, 55) = 6.07, MSe = 17154.01, p < 0.02; F2(1, 30) = 11.22, MSe = 14821.93, p ≤ 0.002], and for abstract sentences [F2(1, 30) = 6.63, MSe = 8353.65, p ≤ 0.016] showing the matching < mismatching pattern. The t-tests confirmed this pattern for upward motor direction [t(55) = 3.48, p ≤ 0.0001] in metaphors, for downward motor direction [t(55) = 2.08, p ≤ 0.042] in abstract sentences, and for both upward [t(55) = 3.58, p ≤ 0.001] and downward [t(55) = 2.08, p ≤ 0.042] motor direction in literals. These results are shown in Table 2.

Other results of less theoretical interest for this paper were the main effect of Sentence type [F1(2, 110) = 17.77, MSe = 20289.54, p ≤ 0.0001], as abstract sentences produced faster responses (M = 1342 ms) than metaphors (M = 1404 ms) and literals (M = 1404 ms). This effect, however, was modulated by the Sentence type × Semantic direction interaction [F1(2, 110) = 9.122, MSe = 195866.86, p ≤ 0.0001] and by the Sentence type × Motor direction interaction [F1(2, 110) = 6.15, MSe = 582186.81, p ≤ 0.01].

Errors in the Memory Task

The mean percentages of errors are shown in Table 2. The ANOVA revealed a Semantic direction × Motor direction interaction [F1(1, 55) = 5.64, MSe = 58.53, p ≤ 0.021], consisting of a smaller number of errors for matching than for mismatching conditions. Separate analyses performed for each sentence type confirmed a significant Semantic direction × Motor direction interaction only for metaphors [F1(1, 55) = 9.78, MSe = 59.44, p ≤ 0.003]. The t-tests confirmed statistically significant differences for upward [t(55) = 2.63, p ≤ 0.01] and downward motor responses [t(55) = 2.12, p ≤ 0.04] in metaphors.

Discussion

Using a dual-task paradigm, Experiment 1 obtained a robust ACE. Several aspects of the results are remarkable. First, the ACE consists of the standard pattern observed in other studies: the matching conditions resulted in better performance than the mismatching conditions, confirming that the reading of the experimental sentences activates embodied representations of vertical motions online. Second, the meaning-action effects were observed at two different moments, modulating the speed of the finger motion task that immediately followed the sentence verb, as well as the speed and accuracy of the memory task recorded at the end of the sentence. This fact suggests a symmetric meaning-action modulation, as will be argued in the general discussion. In other words, not only does the sentence meaning modulate the performance of a motor action, but the motor action also modulates the comprehension of the sentence. Third, the ACE was obtained in all three types of sentences: in literal sentences describing upward/downward motions, in metaphors, and even in abstract sentences that shared meaning with metaphors, indicating that the effects were not specifically associated with the action verbs, but with the metaphorical domain underlying these sentences. Interestingly, the ACE was apparently more conspicuous in metaphors (significant both for upward and downward movements) than in the other types of sentences (only significant for upward movements), although the Sentence type × Motor direction × Semantic direction interaction was not significant [F(1, 55) < 1].

Experiment 2

Experiment 1 confirmed our predictions for meaning-action effects, supporting the embodied cognition approach to metaphorical meaning. Moreover, the activation of embodied representations was found to occur online while participants were reading the sentence verb and extended to the end of the sentence. Notice, however, that an apparent motion of the sentence verb cued the upward/downward finger motion in the same direction. Consequently, the visual motion effects and the motor response effects were potentially confounded. The apparent motion might produce a compatibility effect itself, indistinguishable from the meaning-action interaction. In this respect, some papers have reported that the apparent motion of visual stimuli could affect the comprehension of a simultaneous sentence with a meaning matching or mismatching the direction of the visual motion (e.g., Kaschak et al., 2005). To avoid this confound, in Experiment 2 the upward/downward finger motion was prompted by a color change of the critical verb, rather than its apparent motion. This ensured that the static visual cue could not produce any compatibility effect itself, and the obtained effects, if any, could only be attributed to the meaning-action compatibility.

Method

Participants

Thirty-seven native Spanish-speaking undergraduates took part, in exchange for academic credits.

Materials, Design, and Procedure

The experimental sentences and their distribution in the experimental session were the same as in Experiment 1. The design was exactly the same as in the previous experiment: a 3 Sentence type × 2 Motor direction × 2 Semantic direction repeated measures factorial design. The procedure was also the same, except that in this case the target word did not move, but rather changed its color as a prompt to move the hand upward or downward. Thus, the first three segments were presented in black, each lasting 800 ms, while participants kept the resting key pressed. Then the target word appeared in black and after 200 ms it turned blue or red and remained so until the participant’s response. For half of the participants the red color was a cue to move their hand upward, and the blue color was a cue to move it downward, while for the remaining participants the assignment of colors to directions was reversed. Given the novelty of the color response cues, participants received 40 training trials to learn how to use the motor response system at the beginning of the session, before the practice and the experimental trials. Each training item mimicked the sequence of events of the experimental trials, except that each visual segment consisted of sets of pseudowords rather than words and, consequently, there were no comprehension questions.

The response keyboard was arranged vertically as in Experiment 1, and the keys assigned to the upward or downward direction were covered with the assigned red or blue colors. The resting key was covered with concentric circles like a small target. Each participant was randomly assigned to one of the four counterbalancing conditions, resulting from crossing the motor direction and color assignments. Each of the counterbalancing conditions of Experiment 1, thus, produced two conditions in Experiment 2: “red-up, blue-down” and “red-down, blue-up.”
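The four counterbalancing conditions can be sketched as the crossing of the two motor-direction lists of Experiment 1 with the two color mappings. The list labels `list_A` and `list_B`, the function names, and the seeded random assignment are hypothetical choices made for this illustration; the text only states that assignment was random.

```python
import random
from itertools import product

# Hypothetical labels for the two motor-direction lists of Experiment 1.
MOTOR_LISTS = ("list_A", "list_B")
COLOR_MAPPINGS = (
    {"red": "upward", "blue": "downward"},   # "red-up, blue-down"
    {"red": "downward", "blue": "upward"},   # "red-down, blue-up"
)


def counterbalancing_conditions():
    """Cross the two motor-direction lists with the two color mappings."""
    return [
        {"motor_list": motor_list, "color_map": color_map}
        for motor_list, color_map in product(MOTOR_LISTS, COLOR_MAPPINGS)
    ]


def assign_participants(participant_ids, seed=0):
    """Randomly assign each participant to one of the four conditions."""
    rng = random.Random(seed)  # seeded only to make the sketch reproducible
    conditions = counterbalancing_conditions()
    return {pid: rng.choice(conditions) for pid in participant_ids}


assignment = assign_participants(range(1, 38))
print(len(counterbalancing_conditions()), len(assignment))
```

Simple random choice does not guarantee equal group sizes; a design that needs balanced cells would instead shuffle a repeated list of the four conditions.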

Results

One participant was excluded from the analyses for giving more than 10% wrong responses on the memory task. Outliers were also discarded, following the same trimming criteria as in Experiment 1. ANOVAs for motor response times (releasing time + key-pressing time) and for semantic response times and accuracy were conducted by participants (F1) and by items (F2).

Motor Response Times

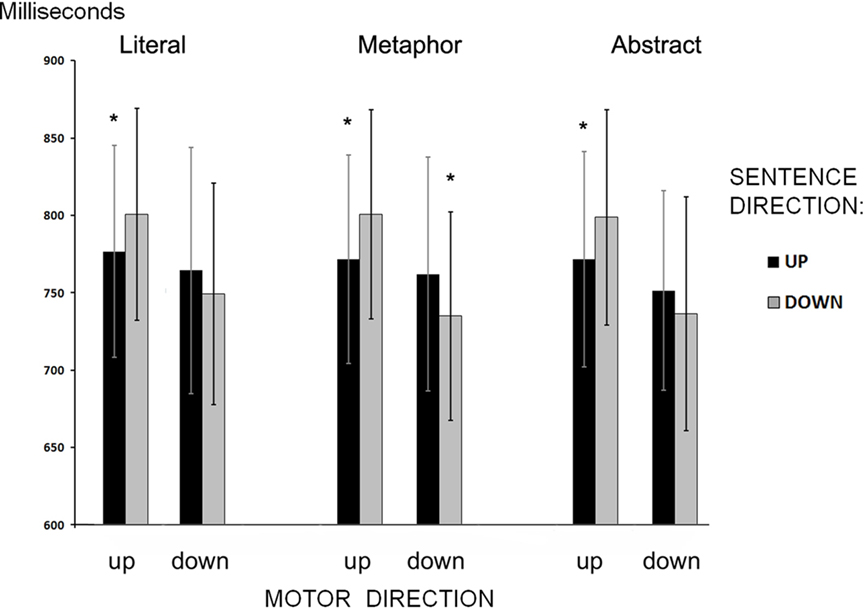

There was a main effect of Motor direction, consisting of faster responses for the downward than for the upward direction: F2(1, 90) = 18.29, MSe = 1771.88, p ≤ 0.0001. However, this effect was modulated by the important Motor direction × Semantic direction interaction: F1(1, 35) = 28.18, MSe = 7942.08, p ≤ 0.0001; F2(1, 90) = 54.48, MSe = 1771.88, p ≤ 0.0001. This interaction consisted of the matching < mismatching pattern observed in the previous experiment (see Figure 2). Furthermore, the Motor direction × Semantic direction interaction was also significant for each of the sentences types analyzed separately: literals: F1(1, 35) = 10.75, MSe = 5401.41, p ≤ 0.02; F2(1, 30) = 17.30, MSe = 22351.15, p ≤ 0.0001; metaphors: F1(1, 35) = 27.43, MSe = 5982.05, p ≤ 0.0001; F2(1, 30) = 38.12, MSe = 65440.09, p ≤ 0.0001; and abstract sentences: F1(1, 35) = 6.65, MSe = 4514.99, p ≤ 0.014; F2(1, 30) = 7.65, MSe = 17644.88, p ≤ 0.010. The t-test pairwise comparisons confirmed the matching < mismatching pattern both for upward [t(35) = 2.54, p ≤ 0.016] and downward [t(35) = 3.67, p ≤ 0.0001] motor direction in metaphors, and only for downward motor responses in abstract sentences [t(35) = 2.44, p ≤ 0.019] and in literals [t(35) = 3.86, p ≤ 0.0001].

Figure 2. Experiment 2: Mean motor response times as a function of Sentence type, Sentence direction, and Motor direction. The vertical lines indicate the SDs, and the stars (*) correspond to significant matching-mismatching pairwise comparisons (p < 0.05).

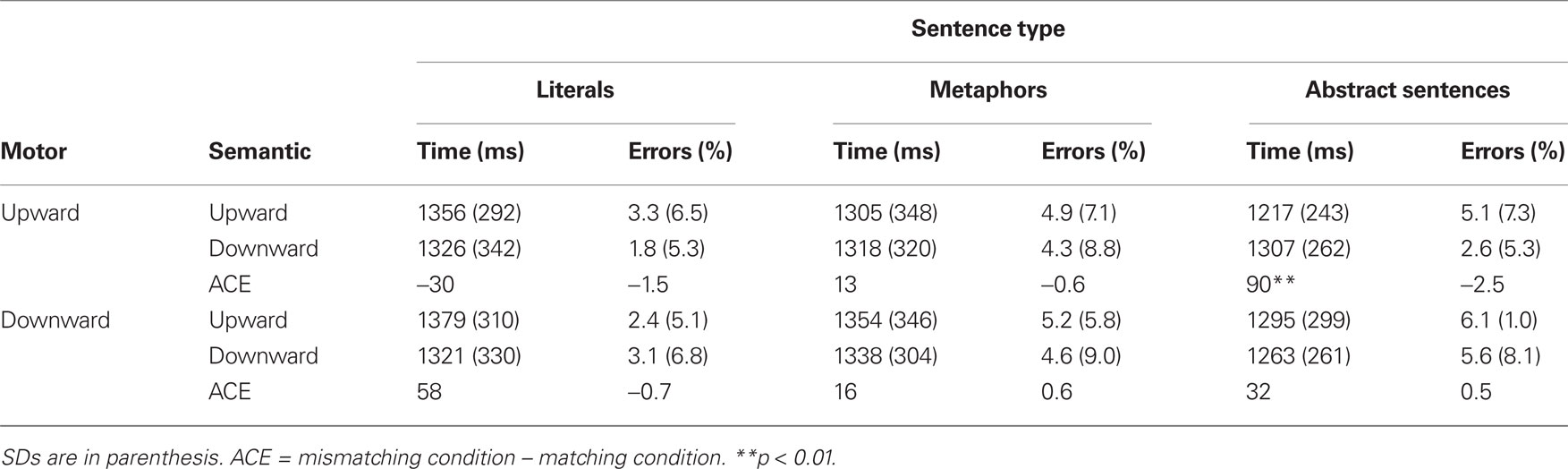

Response Times in the Memory Task

A main effect of Sentence type was observed: F1(2, 70) = 9.91, MSe = 22570.52, p ≤ 0.0001, consisting of faster responses for abstract sentences (M = 1270) than for metaphors (M = 1328) and literals (M = 1345). However, the most important result was once again the significant Motor direction × Semantic direction interaction, illustrated in Table 3: F1(1, 35) = 5.01, MSe = 19302.44, p ≤ 0.032; F2(1, 90) = 6.24, MSe = 7241.86, p ≤ 0.014. When each sentence type was analyzed separately, the matching < mismatching pattern was only confirmed for abstract sentences (see Table 3): Motor direction × Semantic direction interaction F1(1, 35) = 8.24, MSe = 16187.65, p ≤ 0.007; F2(1, 30) = 6.71, MSe = 52970.06, p ≤ 0.015. The t-tests confirmed a significant effect for upward motor responses [t(35) = 3.16, p ≤ 0.003].

Errors in the Memory Task

Error analyses only showed a main effect of Sentence type: F1(2, 70) = 3.67, MSe = 0.006, p ≤ 0.031. Specifically, literals produced fewer errors (M = 2.5%) than metaphors (M = 4.75%) and abstract sentences (M = 5%).

Discussion

In Experiment 2 the visual motion was removed from the task, whereas the vertical hand motion was preserved. In spite of this, the meaning-action effects were virtually the same as in the previous experiment. The matching < mismatching pattern was found in the motor response times for all three sentence types: literals, metaphors, and abstract sentences. Again, metaphors showed a significant ACE for both upward and downward movements, whereas the abstract and literal sentences only showed an ACE for downward movements. The matching advantage was also observed in the response times to the memory questions, but only for abstract sentences. Thus, we can conclude that there is a genuine ACE in metaphorical meaning, which is independent of visual motion. The next experiment will test the role of visual motion alone when the directional hand motion is suppressed.

Experiment 3

The previous experiment demonstrated that the meaning-action interaction persisted after the influence of visual motion was ruled out. In this experiment, we tested whether the visual motion component alone interacts with the sentence meaning. To do so, we suppressed the directional motor response while keeping the vertical visual motion, in a go/nogo paradigm: the participants’ task was to press a single key only if the critical verb had moved, regardless of the direction of its motion. If the response times were faster in the meaning-motion matching conditions than in the mismatching conditions, we might conclude that the activation of a visual motion (not only a motor motion) is associated with the sentence’s meaning.

Method

Participants

Thirty-four native Spanish-speaking undergraduates took part in the experiment voluntarily, in exchange for academic credits.

Materials, Design, and Procedure

This experiment employed the same experimental sentences and fillers (static scenarios) as Experiments 1 and 2. In addition, 24 experimental-like fillers (eight literals, eight metaphors, and eight abstract sentences) were created for this experiment. The experimental task and procedure, however, differed considerably from those used in the previous experiments, because in this experiment the motor task always consisted of the same key-pressing motion, without any vertical displacement of the hand. Participants were instructed to press the key (go trials) when the critical verb moved upward or downward, and to refrain from pressing the key otherwise (nogo trials). All of the experimental trials (96) and half of the static scenario fillers (25) were go trials. The remaining static scenario fillers (25) and all of the experimental-like fillers (24) were nogo trials. The experimental design was similar to the one used in the previous experiments, except that, as explained above, Visual direction replaced Motor direction. Thus, a 3 Sentence type × 2 Visual direction × 2 Semantic direction repeated measures factorial design was employed.
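The trial composition described above can be tallied in a short worked example. The counts come from the text; the dictionary layout and variable names are illustrative, and the per-cell figure assumes that the 96 experimental trials are spread evenly over the design cells, which the text does not state explicitly.

```python
# Trial counts taken from the description above; the layout is illustrative.
go_trials = {"experimental": 96, "static_fillers": 25}
nogo_trials = {"static_fillers": 25, "experimental_like_fillers": 24}

n_go = sum(go_trials.values())      # trials that require a key press
n_nogo = sum(nogo_trials.values())  # trials that require withholding it
n_total = n_go + n_nogo
go_proportion = n_go / n_total

# 3 Sentence types x 2 Visual directions x 2 Semantic directions.
n_cells = 3 * 2 * 2
# Assumption: experimental trials are distributed evenly across cells.
trials_per_cell = go_trials["experimental"] // n_cells

print(n_go, n_nogo, n_total, n_cells, trials_per_cell)
```

Under these counts, roughly 71% of the trials call for a response.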

As in the previous experiments, each trial started with a fixation point in the middle of the screen, following which the sentence was presented automatically in three segments that remained visible for 800 ms each. In the go trials, the fourth segment, which contained the critical verb, “jumped” up or down 200 ms after its onset. This apparent motion was a cue for pressing the key (the spacebar on the keyboard) with the participant’s dominant hand; after the participant’s response, the final segment was presented for another 800 ms. Finally, the yes/no question was given, and participants were required to answer using the mouse with their non-dominant hand.

Results

One participant was discarded from the analyses because she did not produce any motor response. Outlying data were also excluded, following the same trimming criteria as in Experiment 1 (4.1% of data).

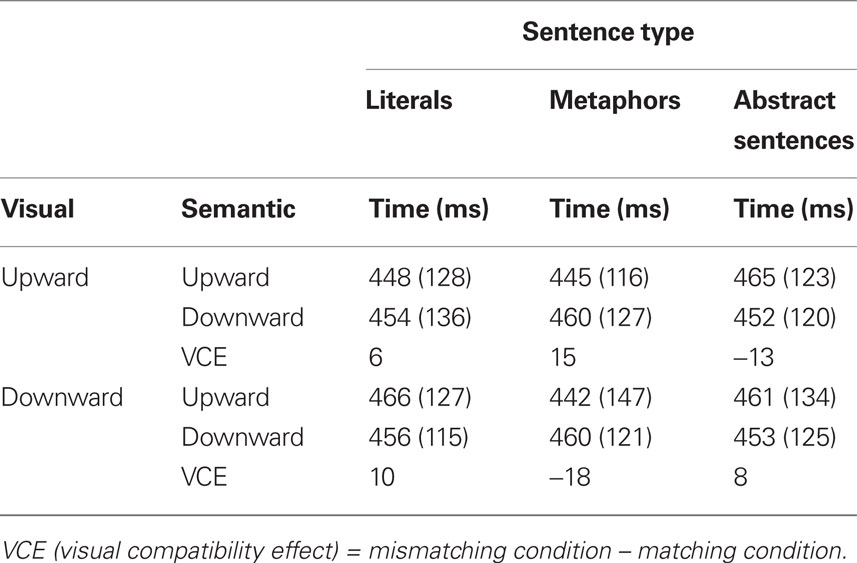

Motor Response Times

Only a significant Sentence type × Semantic direction interaction was found: F1(2, 64) = 3.54, MSe = 1766.02, p ≤ 0.035. The t-tests between pairs of conditions did not show any significant difference (see Table 4).

Response Times in the Memory Task

A main effect of Sentence type was found: F1(2, 64) = 9.12, MSe = 31528.34, p ≤ 0.0001 (literals M = 1429; metaphors M = 1388; abstract sentences M = 1337). However, this was qualified by a triple Sentence type × Visual direction × Semantic direction interaction: F1(1, 32) = 5.03, MSe = 35315.13, p ≤ 0.032; F2(2, 90) = 3.50, MSe = 1501.09, p ≤ 0.034. In the simple effects analysis there was only a significant Visual direction × Semantic direction interaction for metaphors: F1(1, 32) = 6.023, MSe = 49825.58, p ≤ 0.02; F2(1, 30) = 10.37, MSe = 151469.77, p ≤ 0.003. This interaction consisted of a matching < mismatching pattern (see Table 5), which was most remarkable for upward motor responses [t(32) = 3.80, p ≤ 0.001].

Errors in the Memory Task

Error analyses only revealed a main effect of Visual direction, F1(1, 32) = 14.42, MSe = 0.006, p ≤ 0.001, resulting from higher error rates for downward visual directions.

Discussion

Experiment 3 did not show the robust and general matching < mismatching pattern obtained in the previous experiments. The motor reaction times in the go trials were not sensitive either to the semantic direction implicit in the sentences, or to the visual direction of the animation, or to the interaction between the two. In other words, none of the sentence types showed meaning-motion compatibility effects in the motor response. Concerning the response times to the memory questions, there was a significant meaning-motion interaction confined to metaphors. This interaction, which involves the usual matching advantage, is rather surprising because it was not observed in the motor responses themselves but was delayed until the memory task. In other words, the mismatching of the sentence meaning and the visual animation interferes with sentence comprehension, but only for metaphors. Combining the results of this experiment with those of the previous ones, we may conclude that visual motion is a less important component of metaphorical conceptualization than motor motion. This is an apparent limitation to the hypothesis that metaphors are grounded on sensory-motor processes. The current literature on embodied meaning indicates that activations could be multimodal; that is, not only motor traces, but also visual traces and traces from other modalities could be activated in comprehension (e.g., Kaschak et al., 2005; Barsalou et al., 2008; Glenberg et al., 2008; Rueschemeyer et al., 2010). One possibility, however, is that the visual motion stimuli used here for each trial (a single up or down hand motion) were too weak to produce effects on the concurrent comprehension task. 
Compare, for instance, this procedure with the study by Kaschak et al. (2005), who obtained the expected meaning-motion effects for literal sentences by presenting participants with continuous apparent visual motion for 35 s while they listened to and judged the sensibility of motion-related sentences. Further research will be necessary before drawing definite conclusions on the role of visual motion in metaphorical meaning.

General Discussion

In this study, the participants’ task was simply to read sentences for comprehension without making any bipolar categorical judgments. Consequently, the observed effects demonstrated that ordinary comprehension of up/down metaphors or equivalent abstract sentences elicited body motions along the vertical axis (Experiments 1 and 2). By contrast, the role of a visual motion component associated with the comprehension of these sentences was less clear, except for the delayed effect obtained with metaphors (Experiment 3). The body movement along the up/down axis, not the perceptual movement, was thus critical to the representation of metaphorical meaning. This is consistent with similar observations in the comprehension of “time as space” metaphors. Thus, Sell and Kaschak (2010) found that participants were faster to respond to future time shifts (e.g., “Tomorrow she will learn about paint brushes”) when performing motions away from their body, and faster to respond to past time shifts (e.g., “Yesterday she learned about paint brushes”) when performing motions toward their body. However, in another version of the task participants were asked to respond without any hand motion (the finger was always ready on the appropriate response key) and the meaning-action effect disappeared. The action component in “time as space” metaphors derives from the fact that time, quantity, and actions in the peripersonal space are correlated in human experience, and even share brain mechanisms (Walsh, 2003; Casasanto and Boroditsky, 2008). However, the correspondence between meaning and action in up/down metaphors obtained here is less obvious. Why should the phrase “…made him rise to victory” activate an upward body action? Even assuming, as Lakoff and Johnson (1980) did, that some metaphorical concepts are organized along the up/down axis, they could involve just a visual motion or even a static position on the up/down axis. 
Lakoff and Johnson seem to think of a static up/down organization of metaphorical domains rather than up/down body motions when they say: “happy is up; sad is down,” “good is up; bad is down,” “power is up,” etc.

One possible explanation for the motoric component of metaphors (and homologous literals) is that most refer to human characters that reach an upward or downward state as a consequence of their own actions, rather than being passively taken there by external uncontrolled forces. A more substantial explanation is that most up and down metaphors (and homologous literals) convey positive and negative emotional valences, respectively, and emotional valences, in turn, could be associated with vertical body actions and postures. According to Darwin’s (1872/1998) evolutionary approach, communication of emotion by body movements occupies a privileged position, as emotions embody action schemes that have evolved in the service of survival. Partial empirical support for this idea comes from the field of bodily expressions of emotions. For instance, the expressions of joy and pride sometimes involve upward body movements, whereas the expressions of sadness and disgust are more closely associated with downward body actions or postures (e.g., Wallbott, 1998; Atkinson et al., 2004). If so, it is possible that up metaphors elicit upward body movements because of their positive valence, and down metaphors elicit downward body movements as a consequence of their negative valence. In sum, we could tentatively posit that up/down metaphors are grounded on bodily emotional expressions, thus providing a biological explanation of the origins of metaphors. However, this claim requires additional research that is beyond the scope of this paper. Meanwhile, caution is necessary before carrying conclusions too far. Bodily expressions of positive and negative emotions are rather complex and do not solely rely upon the vertical dimension. 
More frequently it has been reported that positive/negative stimuli automatically trigger approach/avoidance movements (e.g., Chen and Bargh, 1999; Wentura et al., 2000; Rotteveel and Phaf, 2004; Rinck and Becker, 2007), which clearly differ from the upward/downward movements observed here for metaphors.

The current results are also comparable to those reported by Wilson and Gibbs (2007) supporting embodied meaning activation during the comprehension of action-related metaphors. They found that performing or imagining a body motion appropriate to the metaphorical sentences improved their comprehension. Thus, participants were faster to read “grasp a concept” after they had previously made or imagined making a grasping movement than after first making a mismatching body action or no movement at all. However, the present study differs from that of Wilson and Gibbs (hereinafter W&G) in important respects. First, the effects observed by W&G consisted of action-meaning priming, whereas the current ACE was meaning-action priming. W&G used the body action as an antecedent event that influenced metaphor comprehension; consequently they conclude: “appropriate body action enhances people’s embodied, metaphorical construal of abstract concepts that are referred to in metaphorical phrases” (p. 721). By contrast, in our ACE experiments metaphor comprehension occurred first and influenced a motor event that followed. Consequently, our conclusion differs from that arrived at by W&G: processing the meaning of up/down metaphors (and homologous literals) involves an unprimed motor activation that modifies the performance of a following physical action. Second, W&G used specific actions (grasping, pushing, chewing, etc.) as primes for metaphorical phrases, and therefore their action-meaning priming could force the activation of specific motor programs. By contrast, in our experiments the actions referred to by the sentences and the body action only shared the up/down directional feature. Thus, the sentences varied considerably in the sensory-motor features of the vertical motions being referred to (compare “falling sick” and “burying hopes”), whereas the requested body action was always a simple upward/downward finger motion. In spite of that, a meaning-action interaction occurred. 
This suggests that actions are simulated at a relatively abstract level of motor processing (e.g., gross directional parameters) rather than in detail, e.g., at the level of specific motor programs. The relatively “abstract” character of motor simulations was also observed in other ACE studies involving sentences referring to concrete actions with different effectors (“throwing a stone” vs “kicking a football”) and even abstract events like information transfer (“telling the story”), and in all cases the ACE with a concurrent away/toward hand motion occurred (Glenberg and Kaschak, 2002; Glenberg et al., 2008).

In the traditional ACE paradigm the motor response was associated with a semantic task, such as a sensibility judgment generally placed at the end of a sentence (e.g., Glenberg and Kaschak, 2002; Borreggine and Kaschak, 2006; Glenberg et al., 2008; Sell and Kaschak, 2010). By contrast, the procedure used here allowed for the testing of meaning-action effects at two different loci, dissociating the motor response task from the memory task. The motor task immediately followed the motion verb providing a first glance at early meaning-action effects, whereas the memory task placed at the end of the sentence showed long-term meaning-action effects. Furthermore, the two meaning-action effects might have different theoretical value. As mentioned above, the ACE in the motor task indicates that the sentence meaning modulates a subsequent body action. By contrast, the ACE in the memory task might offer information on the reversed process: how a previous body action modulates the comprehension of the sentence. In other words, the two ACEs support the idea of a mutual influence between the meaning of orientational sentences and the corresponding actions. These two-way effects in orientational sentences depart from other spatial metaphors. Particularly, temporal metaphors are asymmetric: the representation of time is modulated by spatial information, whereas the representation of space is not modulated by temporal information (Lakoff and Johnson, 1980; Casasanto and Boroditsky, 2008; Merritt et al., 2010).

The meaning-action effects obtained here were remarkably similar for the three types of sentences, indicating that all are grounded on embodied representations. Let us first consider the literal sentences, which provide a baseline condition. Basically, the results for literal orientational sentences confirm other ACEs reported in the literature, supporting the idea that the comprehension of concrete motion-related language activates sensory-motor representations (Glenberg and Kaschak, 2002; Buccino et al., 2005; Zwaan and Taylor, 2006; Glenberg et al., 2008). On the other hand, the ACE obtained for metaphors clearly shows that the abstract meaning of these sentences also activates sensory-motor representations of vertical motions. The metaphors employed in this study represent a broad sample of typical metaphors, referring to abstract events such as changes in mood, power, status, health, wealth, etc. However, metaphors such as those used in this study are a sort of concrete-abstract hybrid, because their “tenor” or meaning is abstract whereas their “vehicle” includes concrete words, such as the motion verbs employed in this study. It is possible that the ACE obtained here was simply a lexically-driven phenomenon associated with the processing of motion verbs. However, we must remember that one important claim of Lakoff and Johnson (1980) was that metaphors are not just linguistic expressions, but conceptualizations. The strongest test of the notion of conceptual metaphors comes from the results observed here with abstract sentences, in which action and motion verbs were absent. We can consider these sentences as literal descriptions of underlying orientational concepts; for instance, “succeeding” is a literal expression corresponding to the “good is up” conceptual metaphor, and “failing” is a literal expression of the “bad is down” conceptual metaphor. 
With this assumption in mind we selected for the experiments literal sentences belonging to either the up or down pole of the orientational metaphorical system. When the upward/downward codes for abstract sentences were entered in the statistical analysis we obtained exactly the same meaning-action matching advantage as for the other sentences. This result supports the strong argument that metaphors are not just “nice sentences” but rather expressions of a deeper conceptual organization that not only underlies metaphorical utterances but also penetrates literal language. In other words, bipolar notions such as power-lack of power, health-sickness, good-bad, success-failure, and the like are primarily conceptualized as upward-downward body actions. This metaphorical conceptualization determines the emergence and use of many up/down metaphorical expressions in English and other languages, but even when we use literal sentences to express ideas of a metaphorical domain vertical body actions are activated, suggesting that the mapping occurs between concepts and actions rather than between action-related words and actions.

This paper has demonstrated that understanding metaphors (and homologous literals) activates motor representations online, which modulate performance on a concurrent motor task. However, skeptical arguments are still possible. For instance, it might be the case that the activation of body actions associated with orientational sentences does not constitute the core meaning of metaphors. Thus, the semantics of motion verbs employed in metaphors is complex, conveying not only motion features, but also more abstract, disembodied components such as sources, goals, pathways, changes of state, etc. (Jackendoff, 1996; Chatterjee, 2010). Consider the metaphor: “…made him rise to victory;” its core meaning could be based on the abstract notion “arrival at a new state” conveyed by the verb, and the body action component could play a marginal role (Chatterjee, 2010). However, this epiphenomenal explanation has some drawbacks. First, some of these “abstract” components of verb meaning could themselves be considered metaphorical extensions of action parameters. For instance, sources, goals, pathways, and changes of state are sensory-motor components of human performance. Second, as we have already argued, not only does meaning modulate the performance of an incoming action, but the reverse is also true. In other words, a previous matching or mismatching action modulates the comprehension of orientational metaphors, which strongly suggests that the motor event is a functional part of metaphorical meaning. Finally, the fact that non-orientational literals also activate vertical motions cannot be easily explained by an epiphenomenal motor component of the verbs, because the verbs in question (e.g., succeed, fail) are not action verbs.

In sum, this paper has demonstrated that the ordinary comprehension of metaphors enacts vertical body actions online. Space is not only a static organizer for metaphorical domains in semantic memory, as Lakoff and Johnson (1980) claim, but also determines simulated body actions along the vertical axis during online comprehension of metaphorical utterances. Most importantly, abstract sentences sharing the meaning of metaphors also elicit body actions, providing a strong argument for the conceptual character of metaphors. The meaning-action modulation was two-way: the comprehension of the sentences’ meaning modulated the performance on a concurrent motion task and the motion task modified performance in the comprehension of the sentences, suggesting that the motor component is a functional feature of metaphorical meaning rather than epiphenomenal.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

This research was supported by the Spanish Ministry of Science and Innovation to Manuel de Vega (Grant SEJ2007-66916/PSIC), and by the Canary Agency of Research, Innovation and Information Society (NEUROCOG project), and the European Regional Development Fund.

References

Atkinson, A. P., Dittrich, W. H., Gemmell, A. J., and Young, A. W. (2004). Emotion perception from dynamic and static body expressions in point-light and full-light displays. Perception 33, 717–746.

Barsalou, L. W., Santos, A., Simmons, W. K., and Wilson, C. D. (2008). “Language and simulation in conceptual processing,” in Symbols, Embodiment, and Meaning, eds M. De Vega, A. M. Glenberg and A. C. Graesser (Oxford: Oxford University Press), 245–283.

Borreggine, K. L., and Kaschak, M. P. (2006). The action-sentence compatibility effect: it’s all in the timing. Cogn. Sci. 30, 1097–1112.

Buccino, G., Riggio, L., Melli, G., Binkofski, F., Gallese, V., and Rizzolatti, G. (2005). Listening to action-related sentences modulates the activity of the motor system: a combined TMS and behavioural study. Cogn. Brain Res. 24, 355–363.

Casasanto, D. (2009). Embodiment of abstract concepts: good and bad in right- and left-handers. J. Exp. Psychol. Gen. 138, 351–367.

Casasanto, D., and Boroditsky, L. (2008). Time in the mind: using space to think about time. Cognition 106, 579–593.

Chen, S., and Bargh, J. A. (1999). Consequences of automatic evaluation: immediate behavior predispositions to approach or avoid the stimulus. Pers. Soc. Psychol. Bull. 25, 215–224.

Crawford, L. E., Margolies, S. M., Drake, J. T., and Murphy, M. E. (2006). Affect biases memory of location: evidence for the spatial representation of affect. Cogn. Emot. 20, 1153–1163.

Darwin, C. (1872). The Expression of the Emotions in Man and Animals. London: Murray (Reprinted, Oxford: Oxford University Press, 1998).

Gibbs, R. W. (1994). The Poetics of Mind: Figurative Thought, Language, and Understanding. New York: Cambridge University Press.

Glenberg, A. M., and Kaschak, M. P. (2002). Grounding language in action. Psychon. Bull. Rev. 9, 558–565.

Glenberg, A. M., Sato, M., Cattaneo, L., Riggio, L., Palumbo, D., and Buccino, G. (2008). Processing abstract language modulates motor system activity. Q. J. Exp. Psychol. 61, 905–919.

Ito, Y., and Hatta, T. (2004). Spatial structure of quantitative representation of numbers: evidence from the SNARC effect. Mem. Cognit. 32, 662–673.

Jackendoff, R. (1996). “The architecture of the linguistic-spatial interface,” in Language and Space, eds P. Bloom, M. A. Peterson, L. Nadel and M. F. Garrett (Cambridge, MA: The MIT Press), 1–30.

Johnson, M. (1987). The Body in the Mind: The Bodily Basis of Meaning, Imagination, and Reason. Chicago: University of Chicago Press.

Johnson, M., and Lakoff, G. (2002). Why cognitive linguistics requires embodied realism. Cogn. Linguist. 13, 245–263.

Joseph, R. A., Giesler, R. B., and Silvera, D. H. (1994). Judgment by quantity. J. Exp. Psychol. Gen. 123, 21–32.

Kaschak, M. P., and Borreggine, K. L. (2008). Is long-term structural priming affected by patterns of experience with individual verbs? J. Mem. Lang. 58, 862–878.

Kaschak, M. P., Madden, C. J., Therriault, D. J., Yaxley, R. H., Aveyard, M., Blanchard, A. A., and Zwaan, R. A. (2005). Perception of motion affects language processing. Cognition 94, B79–B89.

Lakoff, G. (1987). Women, Fire, and Dangerous Things: What Categories Reveal About the Mind. Chicago: University of Chicago Press.

Meier, B. P., and Robinson, M. D. (2004). Why the sunny side is up: associations between affect and vertical position. Psychol. Sci. 15, 243–247.

Meier, B. P., and Robinson, M. D. (2006). Does “feeling down” mean seeing down?: depressive symptoms and vertical selective attention. J. Res. Pers. 40, 451–461.

Merritt, D. J., Casasanto, D., and Brannon, E. M. (2010). Do monkeys think in metaphors? Representations of space and time in monkeys and humans. Cognition 117, 191–202.

Moeller, S. K., Robinson, M. D., and Zabelina, D. L. (2008). Personality dominance and preferential use of the vertical dimension of space: evidence from spatial attention paradigms. Psychol. Sci. 19, 355–361.

Pulvermüller, F., Shtyrov, Y., and Ilmoniemi, R. J. (2005). Brain signatures of meaning access in action word recognition. J. Cogn. Neurosci. 17, 884–892.

Rinck, M., and Becker, E. S. (2007). Approach and avoidance in fear of spiders. J. Behav. Ther. Exp. Psychiatry 38, 105–120.

Rotteveel, M., and Phaf, R. H. (2004). Automatic affective evaluation does not automatically predispose for arm flexion and extension. Emotion 4, 156–172.

Rueschemeyer, S. A., Pfeiffer, C., and Bekkering, H. (2010). Body schematics: on the role of the body schema in embodied lexical–semantic representations. Neuropsychologia 48, 774–781.

Santiago, J., Lupiáñez, J., Pérez, E., and Funes, M. J. (2007). Time (also) flies from left to right. Psychon. Bull. Rev. 14, 512–516.

Schubert, T. W. (2005). Your highness: vertical positions as perceptual symbols of power. J. Pers. Soc. Psychol. 89, 1–21.

Sell, A. J., and Kaschak, M. P. (2010). Processing time shifts affects the execution of motor responses. Brain Lang. 1, 39–44.

Torralbo, A., Santiago, J., and Lupiáñez, J. (2006). Flexible conceptual projection of time onto spatial frames of reference. Cogn. Sci. 30, 745–757.

Walsh, V. (2003). A theory of magnitude: common cortical metrics of time, space and quantity. Trends Cogn. Sci. 7, 483–488.

Wentura, D., Rothermund, K., and Bak, P. (2000). Automatic vigilance: the attention-grabbing power of approach and avoidance-related social information. J. Pers. Soc. Psychol. 78, 1024–1037.

Wilson, N., and Gibbs, R. (2007). Real and imagined body movement primes metaphor comprehension. Cogn. Sci. 31, 721–731.

Keywords: embodied cognition, orientational metaphors, language comprehension, action-related language, positive valence, negative valence

Citation: Santana E and de Vega M (2011) Metaphors are embodied, and so are their literal counterparts. Front. Psychology 2:90. doi: 10.3389/fpsyg.2011.00090

Received: 09 February 2011; Paper pending published: 02 March 2011; Accepted: 26 April 2011; Published online: 10 May 2011.

Edited by:

J. Toby Mordkoff, University of Iowa, USA

Reviewed by:

Anna M. Borghi, University of Bologna, Italy

Sascha Topolinski, Universität Würzburg, Germany

Max Louwerse, University of Memphis, USA

Copyright: © 2011 Santana and de Vega. This is an open-access article subject to a non-exclusive license between the authors and Frontiers Media SA, which permits use, distribution and reproduction in other forums, provided the original authors and source are credited and other Frontiers conditions are complied with.

*Correspondence: Manuel de Vega, Facultad de Psicología, Campus de Guajara, 38205 La Laguna, Tenerife, Spain. e-mail: bWRldmVnYUB1bGwuZXM=