- 1 Rotman Research Institute at Baycrest, Toronto, ON, Canada

- 2 Department of Psychology, University of Toronto, Toronto, ON, Canada

- 3 Department of Psychiatry, University of Toronto, Toronto, ON, Canada

Face recognition is impaired when changes are made to external face features (e.g., hairstyle), even when all internal features (i.e., eyes, nose, mouth) remain the same. Eye movement monitoring was used to determine the extent to which altered hairstyles affect processing of face features, thereby shedding light on how internal and external features are stored in memory. Participants studied a series of faces, followed by a recognition test in which novel, repeated, and manipulated (altered hairstyle) faces were presented. Recognition was higher for repeated than manipulated faces. Although eye movement patterns distinguished repeated from novel faces, viewing of manipulated faces was similar to that of novel faces. Internal and external features may be stored together as one unit in memory; consequently, changing even a single feature alters processing of the other features and disrupts recognition.

Introduction

Identifying faces is critical for everyday life. However, eyewitness identification research has shown that our memory for previously viewed faces is often imperfect, and can be highly malleable (Weingardt et al., 1995), such that erroneous eyewitness identification of the “culprit” has been reported as the most common reason for convicting the innocent (Wells et al., 1998). One potential factor underlying this effect may be the change in the culprit’s appearance from initial viewing to subsequent identification in a police line-up. Indeed, previous research has reported that transformations made to a face across viewings (Shapiro and Penrod, 1986), such as changing a face’s viewing angle, expression (Woodhead et al., 1979; Krouse, 1981; Bruce, 1982; Bartlett and Leslie, 1986), or facial hair (Patterson and Baddeley, 1977), or adding or removing glasses (Terry, 1993), can each impair recognition.

Interestingly, however, while such changes alter the way in which the internal features of the face are portrayed – and thus it would seem intuitive that subsequent recognition would be impaired – changes to external features, such as one’s hairstyle, have also been found to impair recognition of faces (Patterson and Baddeley, 1977). For example, face recognition was disrupted when the set of internal features for a previously studied face was, during the recognition test, placed onto a new face structure that included a different chin, face outline, and hair (Sinha and Poggio, 1996, 2002). Such findings suggest that the internal and external features of a face are stored holistically; that is, internal and external face features are stored together in memory as one unit (e.g., Andrews et al., 2010; Axelrod and Yovel, 2010). As a result, changing either an internal or external feature across viewings could result in processing of the face as if it were entirely new, thereby negatively impacting recognition. Alternatively, internal and external face features may be maintained separately in memory, such that altering one does not impact the processing of the other. In particular, it has been suggested that there are specialized neural mechanisms devoted to processing the eyes (Itier and Batty, 2009). In that case, a face with a manipulated hairstyle may still be processed as a face that has been previously viewed (or at least some face features, such as the eyes, would be processed as previously viewed), even if explicit recognition is impaired. Finally, a manipulated face may be processed as if the face were repeated, but this processing may not be used to guide explicit recognition. For instance, the processing of an altered external feature may signal a “novel” response, whereas the processing of the internal features signals a “repeated” response. Such conflicting response signals may produce interference and ultimately result in impaired recognition.

In the current study, we used eye movement monitoring to determine the extent to which altering an external feature would influence processing of a previously viewed face, including viewing to internal face features. Eye movements are sensitive to prior exposure; for instance, previously viewed faces are sampled with fewer eye fixations compared to novel faces (Althoff and Cohen, 1999). Differences in viewing of repeated versus novel faces can be observed early, and prior to explicit recognition responses (e.g., Althoff and Cohen, 1999; Stacey et al., 2005; Ryan et al., 2007). Moreover, eye movements are sensitive to changes that have occurred to a previously viewed stimulus, early during viewing (Riggs et al., 2010), and even in the absence of explicit awareness for what has changed (e.g., Ryan et al., 2000; Ryan and Cohen, 2004). Consequently, eye movement monitoring was used in the current work to determine the extent to which viewers’ eye movements revealed memory for previously viewed, but manipulated faces, separate from explicit recognition reports.

Participants studied a set of novel younger and older faces, followed by a recognition test during which participants viewed novel faces, faces that had been previously viewed in the same form (repeated faces), or faces that had an altered hairstyle from a previous viewing (manipulated faces). Participants were asked to identify the faces that had been previously viewed, even if the hairstyle had been altered. Eye movement patterns were analyzed with respect to the entire face, as well as to the altered external (hair) and internal features, to determine the influence of prior exposure and manipulation on face processing. Additionally, changes in eye movement patterns as a result of prior exposure were assessed with respect to the explicit recognition reports to determine the relationship between face processing and recognition.

If manipulated faces were viewed in the same manner as novel faces, this would suggest that the internal and external facial features may be stored together as one unit in memory; consequently, changing one feature would result in a face being processed as “novel.” However, if the internal features of manipulated faces were viewed in the same manner as repeated faces, and there were a change in the viewing of the external feature (hair) between manipulated and repeated faces, such findings would suggest that internal and external face features are stored separately in memory. If viewing of manipulated faces were distinguished from viewing of both repeated and novel faces, two explanations could account for this finding. First, this could suggest that the internal and external facial features are stored separately, but the memory for the altered face feature may influence the processing of other, unaltered, face features. Alternatively, this could suggest that the face features are stored together in memory, and viewers are matching the holistic representations against the presented stimulus in an effort to obtain an overall “similarity” signal. To distinguish between these two possibilities, it would be useful to examine viewing to the specific face features. If viewers are maintaining features separately in memory, eye movements may reveal that the hair is the manipulated feature, whereas other features are repeated. However, if viewers are obtaining an overall similarity signal, viewers may not have specific, detailed memory for which distinct features have been altered versus repeated. Ultimately, the findings from the current work will contribute to our understanding of how faces are processed and stored in memory.

Materials and Methods

Participants

Twenty-four younger adults [age range = 19–27 (mean: 22.5, SD: 2.5)] from the Rotman Research Institute participant pool participated in exchange for monetary compensation. All participants had normal or corrected-to-normal vision.

Apparatus

Stimuli were presented on a 19′′ Dell M991 monitor (resolution 1024 × 768) from a distance of 24′′. A head-mounted SR Research Ltd. EyeLink II system monitored eye movements with a temporal resolution of 2 ms. Eye movement calibration was repeated if the error at any calibration point was greater than 1° or if the mean error for all nine calibration points was greater than 0.5°.
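The calibration acceptance rule described above can be expressed as a short check. The following is a minimal sketch, not the EyeLink software's own procedure; the function name `needs_recalibration` and the input format (one error value in degrees per calibration point) are assumptions made for illustration.

```python
from typing import Sequence


def needs_recalibration(
    point_errors_deg: Sequence[float],
    max_point_error_deg: float = 1.0,
    max_mean_error_deg: float = 0.5,
) -> bool:
    """Return True when calibration should be repeated.

    point_errors_deg holds one error value (degrees of visual angle) per
    calibration point; this name and format are hypothetical.
    """
    if len(point_errors_deg) == 0:
        raise ValueError("at least one calibration point is required")
    worst = max(point_errors_deg)
    mean = sum(point_errors_deg) / len(point_errors_deg)
    # Repeat if any single point exceeds 1 deg OR the mean exceeds 0.5 deg.
    return worst > max_point_error_deg or mean > max_mean_error_deg
```

Under this sketch, a nine-point calibration with every point at 0.3° would be accepted, whereas a single point at 1.2° would trigger recalibration even if the mean error stayed below 0.5°.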

Stimuli and Design

Twenty younger and 20 older female faces were created, each with its own unique hairstyle, using FACES 4.0, a photo composite program. All faces were grayscale and were displayed from the neck upward; none had glasses or jewelry.

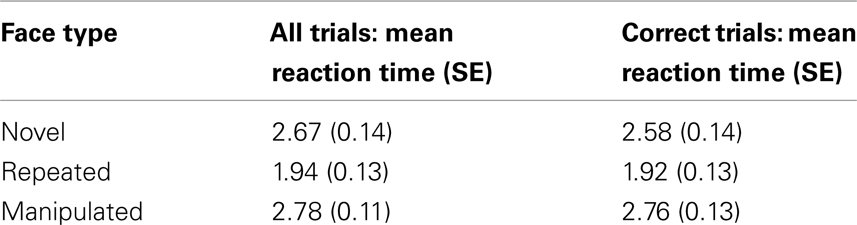

An equal number of younger faces were displayed with hair that was pinned up, short, medium, or long, and an equal number of older faces were displayed with hair that was short and curly, short and straight, medium, or long (Figure 1). Hair for the older faces was always presented as light in shade, whereas hair for the younger faces was presented equally often across faces and hairstyles as light, medium, and dark-colored in shade. Faces and hairstyles were counterbalanced such that across participants, hairstyles and faces were each shown as novel, repeated, and manipulated; within each participant, each hairstyle was seen on one face in one condition. Hairstyles were not shared across faces, and all hairstyles within each age group were unique.

Figure 1. Examples of stimuli – (top row from left to right): younger stimuli with hair put up, short, medium, and long hair; (bottom row from left to right): older stimuli with short and curly hair, short and straight hair, medium and long hair.

The experiment consisted of five study blocks followed by a recognition test block. Participants viewed 20 faces singly, once in each of the five study blocks. Equal numbers of younger versus older faces were presented. The order of the faces within each study block was randomized. During the recognition test, participants were shown 30 faces: 10 faces were new (novel), 10 faces were presented in the same form as in the study blocks (repeated), and 10 faces were presented with an altered hairstyle from previous viewings during the study blocks (manipulated). Within each face type (novel, repeated, manipulated), half of the faces were younger and half were older faces. Each face was presented once within the test block, and the order of the faces was randomized. The presentation of the faces was counterbalanced such that each version (original, manipulated) of a face was viewed as a novel, repeated, or manipulated face equally often across participants, so that comparisons of eye movements are made across physically identical stimuli (e.g., Ryan et al., 2000).

Procedure

During the study block, participants were instructed to imagine themselves as a border official who was required to examine and later recognize faces of people wanted for questioning. Participants were asked to study the faces without reference to, or in exclusion of, any particular feature. Each face was viewed singly for 5 s in each of the study blocks. To ensure that participants remained engaged in studying the faces, participants were asked to judge the age of each face on a scale from 1 to 5 (1 = 21–30; 2 = 31–40; 3 = 41–50; 4 = 51–60; 5 = 61–70) following the presentation of each face. After all five study blocks were complete, the Extended Range Vocabulary Test (ERVT) was administered.

In the recognition test block, participants were shown faces of people who were in line to cross the border, and were told to identify which were wanted for questioning (i.e., identify the faces that had been viewed during the study blocks) via a button press and verbal response. Participants viewed each face singly for 7 s and were instructed to respond that a face was “old” for previously viewed faces from the study block in either the same or altered form, or respond “new” for completely novel faces. Participants were told that the faces could have changed since the previous viewing, but no specific details were provided regarding the nature of the change. In addition, participants were asked to make their response during the 7-s presentation of the face. The face remained on the screen even after the decision was made, such that the faces were always viewed for the full 7 s. If the trial time elapsed and no button press response was recorded, the participant’s verbal recognition response was recorded after the trial was complete. Participants’ eye movements were recorded during both the study and recognition test blocks, but for the current work, which examines the influence of prior exposure on eye movements, only the eye movement data from the recognition block are presented.

Reaction Time and Eye Movement Analyses

A repeated-measures analysis of variance (ANOVA) with one within-subject factor of face type (novel, repeated, manipulated) was used to examine differences in participants’ response times (measured from stimulus onset) for the recognition judgment. The same analysis was conducted on a set of eye movement measures. These eye movement measures were analyzed separately for the period prior to and following the recognition response. To examine viewing to the entire face, the measures of rate of fixations made to the stimuli (number of fixations made per second), and the rate of transitions made between face features (number of transitions made per second) were used. Viewing was also examined with respect to the critical external feature that could be altered (hair), and to internal features (eyes, nose, mouth); each was defined through an experimenter-drawn region of interest. Viewing to the features was examined using two measures: the time, from stimulus onset, at which participants first directed their viewing to the feature (time of first fixation), and the time spent viewing the feature once participants first arrived at the region, prior to leaving it, as a proportion of total viewing time to the face (proportion of viewing time in the first gaze). These measures were used to indicate the extent to which changes to the hair were identified via eye movements, and the extent to which changes to the hair influenced viewing to internal features. A fixation was defined as the absence of any saccade (e.g., the velocity of two successive eye movement samples exceeds 22°/s over a distance of 0.1°) or blink (e.g., pupil is missing for three or more samples) activity.
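To make the four eye movement measures concrete, the sketch below computes them from a list of fixations for a single analysis window. It is an illustration under stated assumptions rather than the analysis code used in the study: the `(start_s, end_s, region)` fixation format and all function names are hypothetical, a transition is counted whenever two successive fixations carry different region labels, and total viewing time is taken as the summed duration of the fixations supplied.

```python
from typing import List, Optional, Tuple

# Hypothetical fixation record: (start time in s, end time in s, region label).
Fixation = Tuple[float, float, str]


def fixation_rate(fixations: List[Fixation], window_s: float) -> float:
    """Number of fixations per second within an analysis window."""
    return len(fixations) / window_s


def transition_rate(fixations: List[Fixation], window_s: float) -> float:
    """Number of region-to-region transitions per second (assumed definition)."""
    regions = [region for _start, _end, region in fixations]
    transitions = sum(1 for a, b in zip(regions, regions[1:]) if a != b)
    return transitions / window_s


def time_of_first_fixation(fixations: List[Fixation], region: str) -> Optional[float]:
    """Onset of the first fixation in the region, or None if never viewed."""
    for start, _end, label in fixations:
        if label == region:
            return start
    return None


def first_gaze_proportion(fixations: List[Fixation], region: str) -> Optional[float]:
    """Time in the region from first arrival until leaving, over total viewing time."""
    total = sum(end - start for start, end, _label in fixations)
    gaze = 0.0
    entered = False
    for start, end, label in fixations:
        if label == region:
            entered = True
            gaze += end - start
        elif entered:
            break  # the first gaze ends when viewing leaves the region
    if not entered or total == 0:
        return None
    return gaze / total


# Illustrative trial (made-up values), analyzed over a 2-s window.
trial = [
    (0.00, 0.30, "eyes"),
    (0.35, 0.60, "eyes"),
    (0.65, 0.90, "nose"),
    (0.95, 1.45, "hair"),
    (1.50, 1.75, "eyes"),
]
```

Returning `None` when a region was never fixated mirrors the situation noted for some participants, who did not view the hair region in certain conditions and so contributed no data to those analyses.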

The eye movement analyses as noted above were repeated using the within-subject factor of response type (novel-correct, repeated-correct, manipulated-correct, manipulated-incorrect), instead of face type, to examine the relationship between changes in eye movement patterns and explicit recognition (Ryan et al., 2000; Ryan and Cohen, 2004). Novel-incorrect and repeated-incorrect trials were not included in the analyses due to the limited number of trials for these response types. In addition, a number of participants did not have trials in every response condition – one participant correctly classified each manipulated face as “repeated,” one participant did not view the hair region of manipulated-correct faces, and three participants did not view the hair region of manipulated-incorrect faces. As a result, these five participants were excluded from the respective analyses.
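The mapping from face type and recognition response to response type can be sketched as follows. This is an illustrative reconstruction of the classification described above, not the authors' code; the function name and the string labels for responses (“old”, “new”) are assumptions based on the instructions given to participants.

```python
# Response types retained for analysis, as listed in the text.
ANALYZED_RESPONSE_TYPES = {
    "novel-correct",
    "repeated-correct",
    "manipulated-correct",
    "manipulated-incorrect",
}


def response_type(face_type: str, response: str) -> str:
    """Label a trial, e.g. ("manipulated", "new") -> "manipulated-incorrect".

    "old" is the correct response for repeated and manipulated faces;
    "new" is the correct response for novel faces.
    """
    if face_type not in {"novel", "repeated", "manipulated"}:
        raise ValueError(f"unknown face type: {face_type}")
    if response not in {"old", "new"}:
        raise ValueError(f"unknown response: {response}")
    correct = "new" if face_type == "novel" else "old"
    outcome = "correct" if response == correct else "incorrect"
    return f"{face_type}-{outcome}"


def is_analyzed(face_type: str, response: str) -> bool:
    """True if the trial falls in one of the four analyzed response types."""
    return response_type(face_type, response) in ANALYZED_RESPONSE_TYPES
```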

Note that analyses of recognition accuracy, time of first fixation, and rate of fixations and transitions were initially conducted with the additional within-subject factor of face age (younger, older) although face age was not the primary factor of interest for the current research questions. Analyses of the proportion of viewing time in the first gaze to either the hair or eyes were not conducted with face age because this limited the number of participants who could be included, as some did not have data in each condition. Face age interacted with either face type or response type for only one measure. Therefore, face age was removed from subsequent analyses and only significant interactions with face type or response type will be reported in the results section. In addition, it is important to note that the nose and mouth each received less than 12 and 6% of total viewing time, respectively, across all three face types, and due to the use of analyses that examined viewing before/after the recognition responses, there were too few trials to properly examine viewing effects to these regions.

Results

Recognition Accuracy

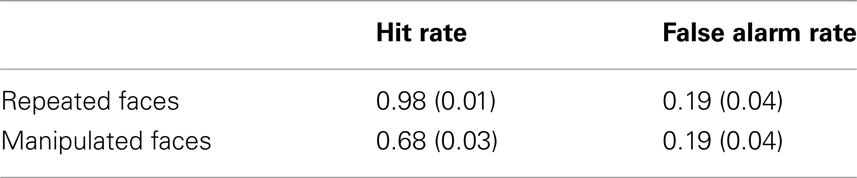

Trials for which a button press response was not recorded, but for which a verbal response was provided were included in this analysis (3% of total trials). Corrected accuracy was calculated as hit rate (repeated or manipulated faces called “old”) minus false alarm rate (novel faces called “old”), and was analyzed using a repeated-measures ANOVA with the within-subject factor of face type (repeated, manipulated). Recognition memory was impaired for faces that had undergone a change in an external face feature from a previous viewing; participants were significantly more accurate at identifying repeated (M = 0.80, SE = 0.04) versus manipulated (M = 0.50, SE = 0.05) faces as having been previously viewed [F(1, 23) = 92.00, p < 0.001; Table 1].
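The corrected accuracy score can be illustrated with a short calculation. The sketch below assumes each participant's responses are available as lists of booleans (True when the face was called “old”); the function name and input format are hypothetical, and the example values are invented for illustration rather than taken from the data.

```python
from typing import Sequence


def corrected_accuracy(
    called_old_studied: Sequence[bool],
    called_old_novel: Sequence[bool],
) -> float:
    """Hit rate minus false alarm rate.

    called_old_studied: one entry per repeated (or manipulated) face,
        True if the participant responded "old" (a hit).
    called_old_novel: one entry per novel face,
        True if the participant responded "old" (a false alarm).
    """
    if not called_old_studied or not called_old_novel:
        raise ValueError("both trial lists must be non-empty")
    hit_rate = sum(called_old_studied) / len(called_old_studied)
    false_alarm_rate = sum(called_old_novel) / len(called_old_novel)
    return hit_rate - false_alarm_rate


# Made-up participant: 9 of 10 repeated faces and 1 of 10 novel faces called "old".
example_score = corrected_accuracy([True] * 9 + [False], [True] + [False] * 9)
```

Because the same false alarm rate is subtracted for both face types, the difference between a participant's repeated and manipulated scores reflects the difference in hit rates alone.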

Reaction Time

A significant main effect of face type (novel, repeated, manipulated) was found for participant response times [F(2, 46) = 19.05, p < 0.001]. Participants were fastest to respond to repeated faces [M = 1.94 s, SE = 0.13 s; repeated versus novel faces: t(23) = −4.71, p < 0.001; repeated versus manipulated faces: t(23) = −6.35, p < 0.001], and no differences were observed between novel (M = 2.67 s, SE = 0.14 s) and manipulated faces [M = 2.77 s, SE = 0.11 s; t(23) = −0.69, p > 0.45]. The same pattern of results emerged when only correct trials were considered (see Table 2 for relevant means and SE).
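The degrees of freedom reported for this test follow from the one-way repeated-measures design: with 24 participants and three face types, df = (3 − 1, (3 − 1)(24 − 1)) = (2, 46). The sketch below shows how such an F ratio could be computed from a participants × conditions table. It is a textbook formulation given for illustration, not the software used in the study; the function name is hypothetical, and no sphericity correction is applied.

```python
from typing import Sequence, Tuple


def rm_anova_oneway(scores: Sequence[Sequence[float]]) -> Tuple[float, int, int]:
    """One-way repeated-measures ANOVA.

    scores[i][j] is the value for participant i in condition j.
    Returns (F, df_condition, df_error).
    """
    n = len(scores)
    k = len(scores[0]) if n else 0
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need >= 2 participants and >= 2 conditions, no missing cells")

    grand = sum(sum(row) for row in scores) / (n * k)
    cond_means = [sum(row[j] for row in scores) / n for j in range(k)]
    subj_means = [sum(row) / k for row in scores]

    # Partition total variability into condition, participant, and error terms.
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_cond - ss_subj

    df_cond = k - 1
    df_error = (k - 1) * (n - 1)
    f_value = (ss_cond / df_cond) / (ss_error / df_error)
    return f_value, df_cond, df_error


# Toy data (three participants, three conditions), invented for illustration.
toy = [
    [1.0, 2.0, 4.0],
    [2.0, 3.0, 4.0],
    [3.0, 4.0, 7.0],
]
```

Removing between-participant variability from the error term is what distinguishes this analysis from a between-subjects ANOVA on the same values.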

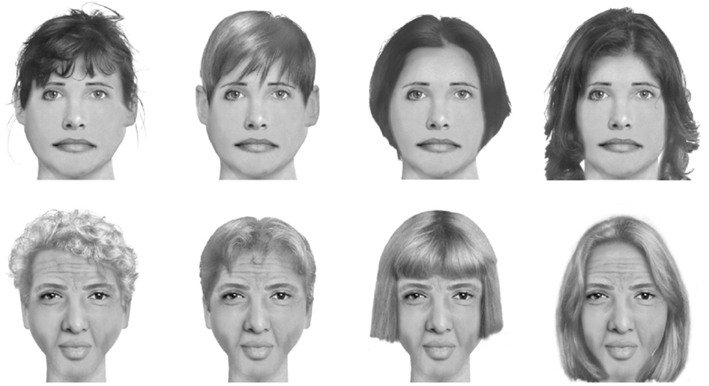

Arrival to the Hair and Eyes

There was no significant main effect of face type (novel, repeated, manipulated) or response type (novel-correct, repeated-correct, manipulated-correct, manipulated-incorrect) for the measure of time of first fixation to either the hair [face type: F(2, 46) = 1.54, p > 0.20; response type: F(3, 54) = 1.36, p > 0.25] or eyes (Fs < 1; Figure 2). Planned comparisons between response types with respect to first fixation time to the hair yielded no significant differences.

Figure 2. Time (seconds) of the first fixation made to the eye and hair regions for (A) face type: novel, repeated, and manipulated faces; (B) response type: novel-correct, repeated-correct, manipulated-correct, manipulated-incorrect. Error bars represent SEM.

Viewing Prior to the Response

Viewing of the face

A significant effect of face type (novel, repeated, manipulated) was found for the fixation rate prior to the recognition response [F(2, 46) = 6.22, p < 0.01]. Repeated faces were sampled at a lower fixation rate compared to either novel [t(23) = −2.31, p < 0.05] or manipulated faces [t(23) = −3.58, p < 0.01]. The fixation rate for novel and manipulated faces did not significantly differ [t(23) = −0.73, p > 0.45; Figure 3A]. Similarly, a marginal main effect of face type was found for the transition rate prior to the response [F(2, 46) = 2.89, p = 0.07]; fewer transitions per second were made toward repeated (M = 1.44, SE = 0.14) compared to either novel [M = 1.57, SE = 0.10; t(23) = −1.76, p = 0.09] or manipulated faces [M = 1.61, SE = 0.11; t(23) = −1.98, p = 0.06], with no difference between novel and manipulated faces [t(23) = −0.55, p > 0.55]. When face age was included in the analysis, a significant interaction between face type and face age emerged for the transition rate prior to the recognition response [F(2, 46) = 3.89, p < 0.05]. The pattern as described above was observed when participants viewed older faces [repeated versus novel: t(23) = −2.62, p < 0.05; repeated versus manipulated: t(23) = −2.16, p < 0.05; novel versus manipulated: t(23) = 0.59, p > 0.55]; however, when viewing younger faces, no significant differences were found between face types [repeated versus novel: t(23) = −0.09, p > 0.90; repeated versus manipulated: t(23) = −1.24, p > 0.20; novel versus manipulated: t(23) = −1.23, p > 0.20].

Figure 3. Mean fixation rate (fixations per second) before and after participants’ recognition response across (A) face type: novel, repeated, and manipulated faces; and (B) response type: novel-correct, repeated-correct, manipulated-correct, manipulated-incorrect. Error bars represent SEM.

When considering participants’ explicit responses, a similar pattern was observed [F(3, 66) = 4.14, p < 0.01]. Repeated-correct faces were sampled at a significantly lower fixation rate compared to novel-correct [t(22) = −2.33, p < 0.05], manipulated-correct [t(22) = −3.32, p < 0.01] or manipulated-incorrect faces [t(22) = −2.74, p < 0.05; Figure 3B]. There was no significant difference in the fixation rate made to novel versus manipulated faces, regardless of whether the manipulated face was correctly identified as having been previously viewed [novel-correct versus manipulated-correct: t(22) = −0.65, p > 0.50; novel-correct versus manipulated-incorrect: t(22) = −0.75, p > 0.45; manipulated-correct versus manipulated-incorrect: t(22) = −0.28, p > 0.75]. Similarly, a marginal effect of response type was found for the transition rate prior to the response [F(3, 66) = 2.43, p = 0.07]. Repeated-correct faces (M = 1.44, SE = 0.15) were sampled at a lower transition rate compared to novel-correct [M = 1.59, SE = 0.11; t(22) = −1.82, p = 0.08], manipulated-correct [M = 1.60, SE = 0.12; t(22) = −1.70, p = 0.10] or manipulated-incorrect faces [M = 1.66, SE = 0.10; t(22) = −2.12, p < 0.05]. There was no significant difference in the transition rate made to novel versus manipulated faces, regardless of whether the manipulated face was correctly identified as having been previously viewed [novel-correct versus manipulated-correct: t(22) = −0.19, p > 0.80; novel-correct versus manipulated-incorrect: t(22) = −0.93, p > 0.35; manipulated-correct versus manipulated-incorrect: t(22) = −0.67, p > 0.50].

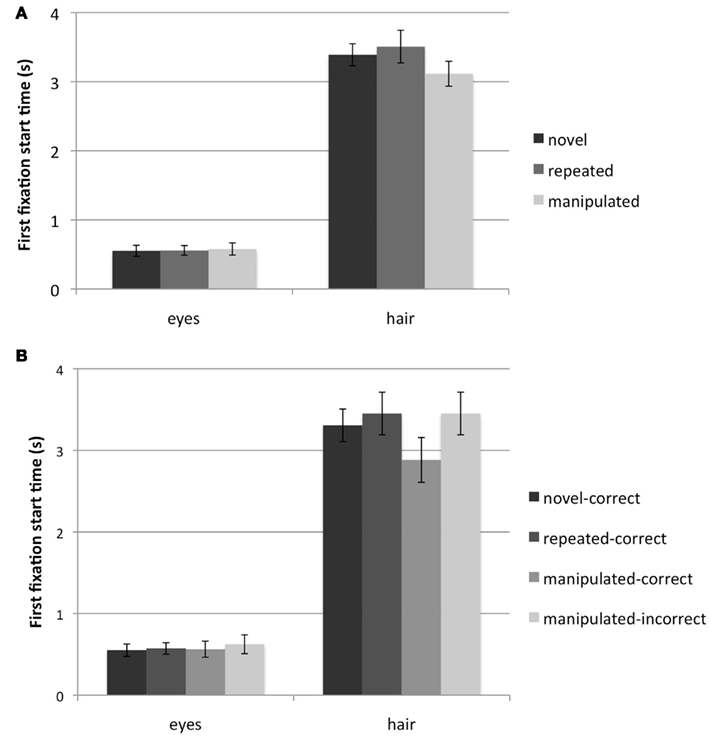

Viewing of the hair

When considering only those trials where the first gaze of the hair was completed before the recognition response (32% of all trials), there was no significant effect of face type or response type for the proportion of viewing time in the first gaze to the hair (Fs < 1; Figures 4A,B).

Figure 4. Proportion of total viewing time spent within the eye and hair regions during the first gaze to these regions. Results are shown for when the first gaze ended prior to the recognition response across (A) face type: novel, repeated, and manipulated faces; (B) response type: novel-correct, repeated-correct, manipulated-correct, manipulated-incorrect; and following the recognition response across (C) face type; (D) response type. Error bars represent SEM.

Viewing of the eyes

When considering only those trials where the first gaze to the eyes was completed before the recognition response (82% of all trials), there was no significant effect of face type for the proportion of viewing time in the first gaze [F(2, 44) = 1.70, p > 0.15; Figure 4A]; however there was a significant effect of response type [F(3, 60) = 3.18, p < 0.05]. Participants spent a significantly higher proportion of viewing time in the first gaze to the eyes of manipulated-correct faces compared to repeated-correct [t(20) = 2.34, p < 0.05] or manipulated-incorrect faces [t(20) = 2.03, p = 0.06], with no difference found when compared to novel-correct faces [t(20) = 1.50, p > 0.15; Figure 4B]. There was no difference in viewing between novel-correct and repeated-correct faces [t(20) = 1.23, p > 0.20], and there was a marginal difference between novel-correct and manipulated-incorrect faces [t(20) = 1.77, p = 0.09]. There was no difference between viewing of repeated-correct and manipulated-incorrect faces [t(22) = 0.35, p > 0.70]. Thus, viewing of the eyes of manipulated faces that would go on to be endorsed as “repeated” was largely similar to that of novel faces, whereas viewing of manipulated faces called “novel” was largely similar to that of repeated faces.

Interim summary

Consistent with previous work (e.g., Althoff and Cohen, 1999), the rate of fixations and transitions distinguished repeated from novel faces prior to the explicit response. Although participants had prior exposure to the manipulated faces during the study blocks, participants viewed manipulated faces in the same manner as novel faces. There was increased viewing of the eyes of manipulated faces that were correctly endorsed as “repeated” compared to the eyes of repeated faces, suggesting that information obtained from the eyes may contribute to explicit recognition of the face; however, this finding should be interpreted with caution as viewing to the eyes of manipulated faces was not distinguished from that of novel faces, and in turn, viewing of novel faces was not significantly different from viewing of repeated faces.

Viewing after the Response

Viewing of the face

A significant main effect of face type was found for fixation rate following the recognition response [F(2, 46) = 3.60, p < 0.05]. Repeated faces were sampled at a lower fixation rate compared to either novel [t(23) = −2.37, p < 0.05] or manipulated faces [t(23) = −2.43, p < 0.05]. The fixation rate for novel and manipulated faces did not significantly differ [t(23) = 0.87, p > 0.35; Figure 3A]. Similarly, a significant main effect of face type was found for the transition rate after the response [F(2, 46) = 9.83, p < 0.001]; fewer transitions per second were made toward repeated (M = 1.19, SE = 0.10) compared to either novel [M = 1.40, SE = 0.10; t(23) = −4.03, p < 0.05] or manipulated faces [M = 1.38, SE = 0.09; t(23) = −3.42, p < 0.05], with no difference between novel and manipulated faces [t(23) = 0.37, p > 0.70].

When considering participants’ explicit responses, a significant main effect of response type was not observed for fixation rate [F(3, 66) = 1.69, p > 0.15; Figure 3B]. However, a significant main effect of response type emerged for the transition rate [F(3, 66) = 6.74, p < 0.001]; repeated-correct faces (M = 1.19, SE = 0.10) were sampled at a significantly lower transition rate compared to novel-correct [M = 1.39, SE = 0.11; t(22) = −4.55, p < 0.001], manipulated-correct [M = 1.33, SE = 0.10; t(22) = −2.04, p = 0.05], or manipulated-incorrect faces [M = 1.52, SE = 0.11; t(22) = −4.04, p = 0.001]. Manipulated-correct faces were also sampled at a significantly lower transition rate compared to manipulated-incorrect faces [t(22) = −2.12, p < 0.05]. There was no significant difference in the transition rate made to novel versus manipulated faces, regardless of whether the manipulated face was correctly identified as having been previously viewed [novel-correct versus manipulated-correct: t(22) = 1.04, p > 0.30; novel-correct versus manipulated-incorrect: t(22) = −1.35, p > 0.15].

Viewing of the hair

When considering only those trials for which the first gaze to the hair began following the recognition response (68% of all trials), there was no significant main effect of face type [F(2, 40) = 1.99, p > 0.15] or response type (F < 1) on the proportion of viewing time in the first gaze to the hair (Figures 4C,D).

Viewing of the eyes

When considering only those trials for which the first gaze to the eyes began following the recognition response (18% of all trials), a significant main effect of face type was found [F(2, 16) = 7.05, p < 0.01]. Upon first arriving to the eye region, participants spent a larger proportion of time in the eye region of repeated compared to novel [t(8) = 2.67, p < 0.05] or manipulated faces [t(8) = 2.73, p < 0.05], with no difference between novel and manipulated faces [t(8) = −1.50, p > 0.15; Figure 4C]. A marginal main effect of response type was found [F(3, 12) = 2.99, p = 0.07]; viewing was again longer for the repeated faces (Figure 4D).

Interim summary

Prior exposure influenced viewing to the face and to the eyes following the recognition response. Specifically, viewers had a lower rate of fixations and transitions for repeated compared to novel or manipulated faces, and more viewing was directed to the eyes during the first gaze for repeated compared to novel or manipulated faces. The transition rate also distinguished manipulated faces that were endorsed as “novel” versus “repeated”; specifically, more transitions were made between face features when manipulated faces were endorsed as novel, although neither type of manipulated trial significantly differed from novel faces. Thus, across the trial, before and after the recognition response, viewing was distinguished largely for the repeated faces; manipulated faces were viewed most similarly to the novel faces.

Discussion

Although accurate recognition of faces is readily achieved, recognition memory becomes considerably impaired if faces have undergone some kind of change from initial to subsequent viewings; in particular, merely changing a person’s hairstyle can negatively impact face recognition (Patterson and Baddeley, 1977). These findings suggest that external and internal features may be stored as a single unit within memory, such that changing a single feature creates a “novel” face. The present study was conducted to determine the influence of altered features on face processing and recognition. We examined the extent to which changing an external feature (hair) would alter eye movement sampling to the face as a whole, and to particular face features, such as the manipulated external feature and the internal feature that typically receives the most viewing (the eyes). Additionally, we examined the relationship between face processing and recognition, by analyzing the eye movement measures prior to, and following, the recognition response and by examining eye movements based on whether the correct recognition response was made.

Although participants were highly accurate at recognizing repeated faces, recognition was significantly impaired for previously viewed, but manipulated, faces. These results replicate previous findings that demonstrated a recognition deficit for faces that had been altered from a previous viewing (e.g., Patterson and Baddeley, 1977; Shapiro and Penrod, 1986) and suggest that recognition for newly learned faces may not withstand changes to features across viewings, even when changes are made to external features.

Viewing of manipulated faces was predominantly similar to viewing of novel faces. Viewing to the eyes before the recognition response distinguished between manipulated faces that were endorsed as “novel” versus “repeated,” suggesting that some processing of an individual feature was used to support the recognition judgment. However, viewing was not significantly distinguished from viewing of novel faces for either type of manipulated trial, again suggesting that the faces, even when endorsed as “repeated,” are instead processed as novel faces, and perhaps are consciously appraised as “not exactly a repeated face.” Further, the rate of transitions made between face features following the recognition response also distinguished manipulated faces that were endorsed as “repeated” versus “novel,” but again, viewing was not significantly different from viewing of novel faces for either type of manipulated trial. Altogether, this suggests that the representation used to predominantly support face recognition may be holistic in nature, and that processing of a manipulated face as “novel” may reflect the ongoing update of the face representation in memory (McClelland and Chappell, 1998).

Processing of Faces prior to Recognition Responses

Consistent with previous research (Althoff and Cohen, 1999; Stacey et al., 2005; Ryan et al., 2007; Heisz and Shore, 2008), viewing distinguished repeated faces from novel faces. Participants made significantly fewer fixations and transitions (a lower rate of fixations and transitions per second) to repeated compared to novel faces prior to the recognition response. Such eye movement differences between repeated and novel faces likely reflect a kind of perceptual fluency that arises when previously stored representations are retrieved and compared with the externally presented stimulus (Althoff and Cohen, 1999; Ryan et al., 2007). The fact that eye movements are altered as a result of prior viewing history suggests that the memories for the previously viewed faces must have been retrieved and made accessible to the participants for comparison to the currently presented stimulus. If the memories were not accessible and/or not compared to current perceptual input, then there would be no difference in viewing across the conditions – no influence of prior viewing history. The purpose of eye movements is to extract the most meaningful information from the environment (Loftus and Mackworth, 1978); therefore, during comparison, if there is redundancy detected between what is maintained in memory and what is presented, then there should consequently be a decrease in eye movement sampling; that is, perceptual fluency may be evidenced by a decrease in eye movement behavior that occurs as a function of repetition. When viewing novel faces, no face representation would exist in memory with which to compare the facial stimulus; as a result, all of the face features would need to be examined in order to form a representation of that face in memory. This resulted in participants making more fixations and transitions within novel compared to repeated faces, in order to view and integrate the face features, and thus inform an explicit response.

The critical question here was whether manipulated faces, for which participants also had prior exposure in the study blocks, would be viewed in a manner similar to repeated or novel faces as a result of the altered hairstyle. Predominantly, participants viewed manipulated faces similarly to novel faces, suggesting that the internal and external facial features may be stored holistically, as one unit in memory. Further, such findings suggest that even if there are specialized neuronal mechanisms dedicated to processing the eyes (e.g., Itier and Batty, 2009), the change to an external feature changes the manner by which other, internal, face features are processed, suggesting that there is a holistic representation that governs face recognition.

However, prior to the recognition response, there was some indication that manipulated faces may be processed differently depending on whether they would later be endorsed as “repeated” or “novel.” Specifically, for manipulated faces that would correctly be called “repeated,” the first gaze of viewing to the eyes resembled that of novel faces; whereas viewing to the eyes of manipulated faces that would be incorrectly called “novel” was similar to that of repeated faces. This pattern of findings may be counterintuitive as one might expect that viewing to the eyes would divide simply on whether any face, regardless of prior viewing history, was endorsed as “repeated” versus “novel.” However, the results should be interpreted with caution as there were no significant differences between viewing of novel and repeated faces. Moreover, more than 50% of the trials that were excluded for this analysis were trials of repeated faces, for which the first gaze to the eyes was occurring as the recognition response was being made, and thus the first gaze could not be considered as having begun and been completed before the response. Nonetheless, the present results hint that participants may have relied on information obtained from the eyes early in viewing to inform the recognition response. Early eye movement contributions to subsequent recognition have been reported elsewhere; for example, Hsiao and Cottrell (2008) showed that accurate face recognition judgments can be made in only two fixations and Matthews (1978) suggested that viewers initially fixate on the eyes to obtain a holistic view of the face to guide further processing.

Although changes were made to the hair of manipulated faces, overt viewing to the hair itself did not distinguish manipulated faces from either novel or repeated faces, nor did overt viewing to the hair distinguish repeated from novel faces. Our previous work has shown that within scenes, viewing is increased to regions where an object has been altered (e.g., Ryan et al., 2000; Riggs et al., 2010). The fact that altered viewing toward the critical region was not observed in the present study may suggest that participants were nonetheless directing covert attention to the hair, and were able to sufficiently encode information regarding the hair within their peripheral vision such that direct foveation was not required. Alternatively, the absence of foveal fixation of the hair may support the uniqueness of face processing compared to other stimuli such as scenes (Tanaka and Farah, 1993). Specifically, face features may be stored as a single unit, rather than each of the features being stored as separate “objects” that are then associated in memory, as with scenes. Maintaining objects separately in memory would allow a viewer to detect a change to one object, while simultaneously appreciating the repetition of the surrounding objects (e.g., Ryan et al., 2000). However, if the features are maintained in a single unit, a change to one feature would result in the processing of the stimulus as a novel unit. In addition, the fact that in many trials, participants did not make a fixation to the hair prior to making the recognition response, even when the hair was manipulated, suggests that the hair may not have been used to make recognition decisions. This further emphasizes the holistic representation of faces in memory. This is not to suggest that internal and external features cannot be represented simultaneously and separately in memory. Rather, the current findings suggest that it is the holistic representation of internal and external features that predominantly influences processing and recognition.

Processing of Faces after Recognition Responses

Similar to what was observed prior to the recognition response, viewing distinguished repeated from novel faces following the recognition response: fewer fixations and transitions per second were made toward repeated compared to novel faces. In addition, participants spent a significantly larger proportion of time in the first gaze to the eyes of repeated compared to novel faces. This suggests that participants may have been continuously scanning and integrating face features to form a new face representation in memory for novel faces, and as a consequence, spent less time in the first gaze to the eyes. By contrast, repeated faces would not require this continued processing given that a memory representation already existed.
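As an illustration of the first-gaze measure, the sketch below computes the proportion of viewing time spent in the first gaze to a region. It is a hypothetical reconstruction rather than the procedure used in this study: it assumes that a "gaze" is an uninterrupted run of consecutive fixations within one region, and that the proportion is taken over the summed fixation time in the analysis window.

```python
from typing import List, Tuple


def first_gaze_proportion(fixations: List[Tuple[str, float]], region: str = "eyes") -> float:
    """Proportion of total fixation time spent in the first gaze to `region`.

    `fixations` is an ordered list of (region label, duration in ms).
    The first gaze is taken to be the first uninterrupted run of fixations
    on `region` (an assumption of this sketch).
    """
    total = sum(duration for _, duration in fixations)
    if total <= 0:
        return 0.0
    first_gaze = 0.0
    in_gaze = False
    for label, duration in fixations:
        if label == region:
            first_gaze += duration
            in_gaze = True
        elif in_gaze:
            break  # the first gaze ends as soon as viewing leaves the region
    return first_gaze / total


# Made-up trial: the first gaze to the eyes spans two fixations (300 + 300 ms)
# out of 1500 ms of total fixation time; the later return to the eyes is ignored.
trial = [("nose", 200.0), ("eyes", 300.0), ("eyes", 300.0), ("mouth", 200.0), ("eyes", 500.0)]
proportion = first_gaze_proportion(trial)
print(proportion)
```

Under these assumptions, a viewer who keeps scanning across features, as described for novel faces, would tend to produce a short first gaze and therefore a lower proportion.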

Manipulated faces were still viewed similarly to novel faces following the recognition response; both manipulated and novel faces were viewed with an increased fixation and transition rate, and a lower proportion of time spent in the first gaze to the eyes, compared to repeated faces. This again suggests that internal and external facial features may be stored holistically, since a change in an external feature resulted in the face being viewed as “novel.” Interestingly, however, after the recognition response participants made fewer transitions per second toward manipulated faces that were endorsed as “repeated” than toward those that were endorsed as “novel,” although viewing of each was similar to that of novel faces. Such findings suggest that although manipulated faces were treated as “novel,” subsequent processing differed depending on whether the viewer thought there was an existing match in memory.

Access and Update of Holistic Representations

Viewing of manipulated faces across the trial period was ultimately most similar to that of novel faces, suggesting that internal and external features are stored as a single unit within memory. There is increasing evidence from behavioral and neuroimaging studies suggesting that external features are stored alongside internal features within a holistic face representation (Sinha and Poggio, 1996, 2002; Andrews et al., 2010; Axelrod and Yovel, 2010). As a result, although accurate face recognition can be achieved when only the internal features are presented (e.g., Anaki et al., 2007), altering external features disrupts face recognition. Additionally, Andrews et al. (2010) showed a significant decrease in neural responses (neural adaptation) in the fusiform face area (FFA) when participants viewed repeated images of faces; however, if either the internal or external features were altered within the face (leaving the complementary set of features repeated), there was a release from neural adaptation, suggesting that internal and external features are represented holistically by the FFA rather than being maintained separately. While these findings support the notion that internal and external features are stored holistically in memory, the present study demonstrates that the very processing of previously viewed faces, as evidenced by eye movement monitoring, differs with an external feature change, and consequently results in a subsequent recognition deficit for such faces.

Interestingly, viewing of manipulated faces was similar to that of novel faces even for those manipulated faces that were accurately recognized as “repeated.” Despite recognizing the face as “repeated,” participants may have been processing manipulated faces as “novel” as a means of updating the face representation in memory. If manipulated faces had been viewed similarly to repeated faces, due to repetition of the internal features, such findings would align with general memory models that suggest judgments of recognition are based on the “strength” or familiarity of a given target item (Murdock, 1982; Hintzman, 1988). When a probe is presented, traces within memory that are similar to, or contain, the probe will be activated, and the similarity of the trace to the probe will be evaluated. The trace will be recalled from memory upon exceeding a particular threshold of similarity, and the target item would then be endorsed as “repeated.” Under this account of recognition, manipulated faces should have been processed and recognized as “repeated” prior to the recognition response given that the manipulated faces retained the same internal features of the face from the previous viewing and therefore would have been highly similar to traces stored in memory. It might then be expected, under these memory models, that manipulated faces would be processed similarly to novel faces following the recognition response, as a new “copy” of the representation is laid down in memory.
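The strength-based account can be illustrated with a toy global-matching computation in the spirit of multiple-trace models such as Hintzman (1988). The sketch below is not a model fitted to the present data: the feature vectors, the split into six "internal" and two "hair" features, and the response criterion are arbitrary assumptions made purely for illustration. Similarity is the normalized dot product of probe and trace, activation is similarity cubed, and familiarity ("echo intensity") is the summed activation across traces.

```python
from typing import List, Sequence


def similarity(probe: Sequence[int], trace: Sequence[int]) -> float:
    """Dot product divided by the number of features that are nonzero in either vector."""
    relevant = sum(1 for p, t in zip(probe, trace) if p != 0 or t != 0)
    if relevant == 0:
        return 0.0
    return sum(p * t for p, t in zip(probe, trace)) / relevant


def echo_intensity(probe: Sequence[int], memory: List[Sequence[int]]) -> float:
    """Summed cubed similarity of the probe to every stored trace."""
    return sum(similarity(probe, trace) ** 3 for trace in memory)


def recognize(probe: Sequence[int], memory: List[Sequence[int]], criterion: float = 0.1) -> str:
    """Endorse 'repeated' if familiarity exceeds a criterion (arbitrary value, assumed here)."""
    return "repeated" if echo_intensity(probe, memory) > criterion else "novel"


# Toy faces: the first six features stand for internal features, the last two for hair.
studied_a = [1, -1, 1, 1, -1, 1, 1, -1]
studied_b = [1, 1, -1, 1, -1, -1, 1, 1]
memory = [studied_a, studied_b]

repeated_probe = studied_a
manipulated_probe = [1, -1, 1, 1, -1, 1, -1, 1]   # same internal features, hair flipped
novel_probe = [1, 1, 1, -1, 1, 1, -1, -1]

for name, probe in [("repeated", repeated_probe),
                    ("manipulated", manipulated_probe),
                    ("novel", novel_probe)]:
    print(name, echo_intensity(probe, memory), recognize(probe, memory))
```

With these made-up vectors, the manipulated probe yields a familiarity value between those of the repeated and novel probes and, given the lenient criterion assumed here, is endorsed as "repeated." This is the pattern a pure strength account would predict for faces whose internal features are unchanged.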

Instead, manipulated faces were processed as “novel” both prior to, and following, the recognition response and were endorsed via recognition responses as “novel” nearly as often as “repeated.” Such findings may align more readily with models of recognition that suggest that memory traces of items may be updated with each encounter (McClelland and Chappell, 1998; Criss and McClelland, 2006). Under these accounts, recognizing a target item relies on the ability to understand the characteristics or properties within the memory trace and to be able to differentiate these properties from the presented target item. This ability to differentiate the presented face from a trace in memory may allow viewers to identify the differences, endorse the manipulated face as “repeated,” and ultimately display processing of the face akin to processing a novel face. Processing of the manipulated face as “novel” (e.g., increased transitions, fixations) may reflect the ongoing update of the memory representation based on the currently presented information, and such updates could occur throughout the viewing period.

In summary, processing of internal face features was influenced by changes made to external features, such that faces with altered hairstyles were predominantly viewed as “novel.” Such findings suggest that internal and external face features may be stored together as one unit in memory. Applied in the context of eyewitness testimony, the present research suggests that eyewitness identification may be adversely affected by seemingly slight changes in the appearance of the previously viewed culprit. This underscores the importance of investigating the use and reliability of eyewitness testimony as evidence in a court of law, and developing additional objective measures of recognition that can be used in conjunction with eyewitness reports.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Acknowledgments

We thank Daphne Kamino for assistance in data collection, Jiye Shen for technical assistance, Malcolm Binns for statistical assistance and Jennifer Heisz for comments on a previous version of this manuscript. Funding from the Canadian Institutes of Health Research (CIHR) and the Canada Research Chairs Program and the Canadian Foundation for Innovation (CRC/CFI) was provided to Jennifer D. Ryan.

References

Althoff, R. R., and Cohen, N. J. (1999). Eye-movement-based memory effect: a reprocessing effect in face perception. J. Exp. Psychol. Learn. Mem. Cogn. 25, 997–1010.

Anaki, D., Boyd, J., and Moscovitch, M. (2007). Temporal integration in face perception: evidence of configural processing of temporally separated face parts. J. Exp. Psychol. Hum. Percept. Perform. 33, 1–19.

Andrews, T. J., Davies-Thompson, J., Kingstone, A., and Young, A. W. (2010). Internal and external features of the face are represented holistically in face-selective regions of visual cortex. J. Neurosci. 30, 3544–3552.

Axelrod, V., and Yovel, G. (2010). External facial features modify the representation of internal facial features in the fusiform face area. Neuroimage 52, 720–725.

Bartlett, J. C., and Leslie, J. E. (1986). Aging and memory for faces versus single views of faces. Mem. Cognit. 14, 371–381.

Bruce, V. (1982). Changing faces: visual and non-visual processes in face recognition. Br. J. Psychol. 73, 105–111.

Criss, A., and McClelland, J. L. (2006). Differentiating the differentiation models: a comparison of the retrieving effectively from memory model (REM) and the subjective likelihood model (SLiM). J. Mem. Lang. 55, 447–460.

Hintzman, D. L. (1988). Judgments of frequency and recognition memory in a multiple-trace memory model. Psychol. Rev. 95, 528–551.

Hsiao, J. H., and Cottrell, G. (2008). Two fixations suffice in face recognition. Psychol. Sci. 19, 998–1006.

Itier, R. J., and Batty, M. (2009). Neural bases of eye and gaze processing: the core of social cognition. Neurosci. Biobehav. Rev. 33, 843–863.

Krouse, F. L. (1981). Effects of pose, pose change, and delay on face recognition performance. J. Appl. Psychol. 66, 651–654.

Loftus, G. R., and Mackworth, N. H. (1978). Cognitive determinants of fixation location during picture viewing. J. Exp. Psychol. Hum. Percept. Perform. 4, 565–572.

Matthews, M. L. (1978). Discrimination of identi-kit constructions of faces: evidence for a dual processing strategy. Percept. Psychophys. 23, 153–161.

McClelland, J. L., and Chappell, M. (1998). Familiarity breeds differentiation: a subjective-likelihood approach to the effects of experience in recognition memory. Psychol. Rev. 105, 724–760.

Murdock, B. B. (1982). A theory of the storage and retrieval of item and associative information. Psychol. Rev. 89, 609–626.

Patterson, K. E., and Baddeley, A. D. (1977). When face recognition fails. J. Exp. Psychol. Hum. Learn. 3, 406–417.

Riggs, L., McQuiggan, D. A., Anderson, A. K., and Ryan, J. D. (2010). Eye movement monitoring reveals differential influences of emotion on memory. Front. Psychol. 1:205. doi: 10.3389/fpsyg.2010.00205

Ryan, J. D., Althoff, R. R., Whitlow, S., and Cohen, N. J. (2000). Amnesia is a deficit in relational memory. Psychol. Sci. 11, 454–461.

Ryan, J. D., and Cohen, N. J. (2004). The nature of change detection and online representations of scenes. J. Exp. Psychol. Hum. Percept. Perform. 30, 988–1015.

Ryan, J. D., Hannula, D. E., and Cohen, N. J. (2007). The obligatory effects of memory on eye movements. Memory 15, 508–525.

Shapiro, P. N., and Penrod, S. D. (1986). Meta-analysis of facial identification studies. Psychol. Bull. 100, 139–156.

Sinha, P., and Poggio, T. (2002). United we stand: the role of head-structure in face recognition. Perception 31, 133.

Stacey, P. C., Walker, S., and Underwood, J. D. M. (2005). Face processing and familiarity: evidence from eye-movement data. Br. J. Psychol. 96, 407–422.

Tanaka, J. W., and Farah, M. J. (1993). Parts and wholes in face recognition. Q. J. Exp. Psychol. 46A, 225–245.

Weingardt, K. R., Loftus, E. F., and Lindsay, D. S. (1995). Misinformation revisited: new evidence on the suggestibility of memory. Mem. Cognit. 23, 72–82.

Wells, G. L., Small, M., Penrod, S. D., Malpass, R. S., Fulero, S. M., and Brimacombe, C. A. E. (1998). Eyewitness identification procedures: recommendations for lineups and photospreads. Law Hum. Behav. 22, 603–607.

Keywords: eye movements, aging, recognition, memory, face perception

Citation: Chan JPK and Ryan JD (2012) Holistic representations of internal and external face features are used to support recognition. Front. Psychology 3:87. doi: 10.3389/fpsyg.2012.00087

Received: 18 August 2011; Accepted: 07 March 2012; Published online: 23 March 2012.

Edited by:

Andrea Bender, University of Freiburg, Germany

Reviewed by:

Alan C.-N. Wong, The Chinese University of Hong Kong, Hong Kong

Andreas Glöckner, Max Planck Institute for Research on Collective Goods, Germany

Copyright: © 2012 Chan and Ryan. This is an open-access article distributed under the terms of the Creative Commons Attribution Non Commercial License, which permits non-commercial use, distribution, and reproduction in other forums, provided the original authors and source are credited.

*Correspondence: Jennifer D. Ryan, Rotman Research Institute at Baycrest, 3560 Bathurst Street, Toronto, ON, Canada M6A 2E1. e-mail: jryan@rotman-baycrest.on.ca