Abstract

Is there something special about the way music communicates feelings? Theorists since Meyer (1956) have attempted to explain how music could stimulate varied and subtle affective experiences by violating learned expectancies or by mimicking other forms of social interaction. Our proposal is that music speaks to the brain in its own language; it need not imitate any other form of communication. We review recent theoretical and empirical literature suggesting that all conscious processes consist of dynamic neural events produced by spatially dispersed processes in the physical brain. Intentional thought and affective experience arise as dynamical aspects of neural events taking place in multiple brain areas simultaneously. At any given moment, this content comprises a unified “scene” that is integrated into a dynamic core through synchrony of neuronal oscillations. We propose that (1) neurodynamic synchrony with musical stimuli gives rise to musical qualia, including tonal and temporal expectancies, and that (2) music-synchronous responses couple into core neurodynamics, enabling music to directly modulate core affect. Expressive music performance, for example, may recruit rhythm-synchronous neural responses to support affective communication. We suggest that the dynamic relationship between musical expression and the experience of affect presents a unique opportunity for the study of emotional experience. This may help elucidate the neural mechanisms underlying arousal and valence, and offer a new approach to exploring the dynamics of how and why we experience emotion.

INTRODUCTION

Every known civilization creates unique and sophisticated musical forms to communicate affect, and humans consciously seek out musical experiences because of the feelings they evoke. There is widespread agreement that music induces emotional experiences, and that at least some aspects of this phenomenon are universal. But what is the nature of musical feelings and what is the relationship between musical feelings and emotions? Over the years, competing theories have been developed to address these issues, and sophisticated experimental paradigms have been devised to investigate them. However, incommensurate claims and variable findings leave many open questions. Are affective responses to music accidents of evolution? Is there something special about musical communication? Can the study of emotion teach us anything about the nature of music? And what, if anything, can music teach us about the nature of emotional experience?

Since Meyer’s (1956) pioneering work in music and emotion, theorists have struggled to explain how music could stimulate emotional experiences by somehow triggering basic, evolutionarily ancient psychological processes tied to survival. Empirical approaches have sought specific behavioral and/or physiological responses to music (Juslin and Sloboda, 2001). One specific problem that arises is the question of how an object-directed emotion can be evoked by music with no external referent. Another is how a sophisticated, non-referential form of cultural expression, learned over many years of exposure, could engender innate, survival-related responses.

The goal of this paper is to stake out some new territory in the debate on musical emotion. First, we will ask whether emotional experiences are really “basic” (Allport, 1924; Izard, 1971), or whether they are psychologically constructed from domain-general processes (Barrett, 2009a). Next, we will explore the idea that all conscious systems consist of multiple neural processes, produced by spatially dispersed events in the physical brain and integrated into a seamless neurodynamic whole through synchrony of neural oscillations (Edelman, 2003). Core affect is thought to be one primitive, domain-general aspect of the dynamic core of consciousness (Russell, 2003) especially relevant to emotional experience (Barrett, 2009a). Then, we will review recent evidence that neuronal synchrony with music gives rise to musical qualia including tonal and temporal expectancies (Large and Jones, 1999; Lerdahl, 2001; Huron, 2005; Large, 2010a). Finally, we will argue that music-synchronous responses couple into the dynamic core of consciousness, directly modulating core affect.

THEORIES OF MUSIC AND EMOTION

Music does not have obvious survival value (cf. Pinker, 1997), and yet it is able to elicit strong emotional reactions. Many biological perspectives treat the primary function of emotion as a response to behavioral demands that may require mobilization for action: on this view, emotions evolved to prepare an individual to deal with situations that were significant for survival and reproduction (Darwin, 1872/1965; Cosmides and Tooby, 2000; Huron, 2005, 2006). Darwin (1872/1965) proposed that the origin of the musical communication of emotion was to be found in the evolutionary process of sexual selection.

Psychological approaches to musical emotions have been heavily influenced by the theory of basic emotion. Basic emotion theorists have sought to identify categories of emotions that share distinct collections of properties, such as patterns of autonomic nervous system activity and behavioral responses or action tendencies (Allport, 1924; Izard, 1971; Ekman, 1972; Panksepp, 1998). Other approaches consider emotions to be constructed from more primitive processes, including affect (Irons, 1987; Ortony et al., 1988; Russell, 2003; Barrett, 2006, 2009b; Duncan and Barrett, 2007). Mechanisms of musically induced emotion have been explored at great length, with divergent accounts of causation and interpretation, and with varying results.

Meyer’s (1956) approach to musical emotion has been highly influential, in part because he was the first to seriously take into account both philosophical works (e.g., Langer, 1951) and psychological theories of emotion (cf. Dewey, 1895; MacCurdy, 1925; Angier, 1927; Rapaport, 1950). Meyer observed that namable emotions are – unlike music – event-directed, and that emotional experiences are much more subtle than the “crude and standardized words we use to denote them.” He also observed that emotional responses are not innate; they are highly variable and depend on learning and enculturation (Meyer, 1956). He therefore concluded that, for the most part, music does not communicate genuine emotions. “That which we wish to consider,” wrote Meyer, “is that which is most vital and essential in emotional experience, the feeling-tone accompanying emotional experience, that is, the affect” (Meyer, 1956, p. 12).

Meyer was also the first to suggest that what is now called statistical learning applies to music and determines musical feelings. For example, a passage of tonal music leads to the feeling that some pitches are more stable than others. More stable pitches are felt as points of repose, and less stable pitches are felt to point toward, or be attracted to, the more stable ones (Lerdahl, 2001). Such relationships are reflected in the naming conventions of many musical cultures, including Western, Indian, and Chinese (Meyer, 1956). Citing the failure of earlier attempts to account for musical communication in terms of vibrations, ratios of intervals, and so on, he argued that feelings of stability and attraction are learned through experience with the music of a particular culture. Moreover, the associations of musical moods, such as happy and sad, with major or minor harmonies, or the affective qualities associated with ragas in North Indian tonal systems, are conventional designations having little to do with the sound itself (Meyer, 1956).
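Meyer's proposal that stability is learned from the statistics of a musical culture can be made concrete with a toy calculation. The sketch below assumes that the relative frequency of each scale degree is a usable proxy for felt stability, which is a simplification; the melodies and the helper names `degree_distribution` and `stability_ranking` are hypothetical. It pools scale-degree counts across a few invented melodies and ranks the degrees.

```python
from collections import Counter

# Hypothetical toy corpus: melodies written as scale degrees (1 = tonic, 5 = dominant).
# These sequences are invented for illustration; they are not corpus data.
MELODIES = [
    [1, 2, 3, 1, 5, 4, 3, 2, 1],
    [5, 3, 1, 2, 3, 4, 5, 5, 1],
    [3, 2, 1, 7, 1, 5, 1],
]


def degree_distribution(melodies):
    """Relative frequency of each scale degree, pooled over all melodies."""
    counts = Counter(degree for melody in melodies for degree in melody)
    total = sum(counts.values())
    return {degree: n / total for degree, n in sorted(counts.items())}


def stability_ranking(melodies):
    """Degrees ordered from most to least frequent (a crude stand-in for felt stability).
    Ties keep ascending degree order because Python's sort is stable."""
    dist = degree_distribution(melodies)
    return sorted(dist, key=dist.get, reverse=True)


print(stability_ranking(MELODIES))  # [1, 3, 5, 2, 4, 7]
```

In this invented corpus the tonic is most frequent, followed by the third and fifth degrees; with a real corpus the ranking would depend on the repertoire sampled.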

Finally, Meyer (1956) argued that the frustration of expectancy is the basis for affective responses to music. He believed that affect is aroused when an action tendency is inhibited. Music, unlike other emotional stimuli, is not referential; it both creates and inhibits expectancies, thereby providing meaningful and relevant resolutions within itself. Music thus communicates affect through violations and resolutions of learned expectancies.

The latter two points were taken up by modern empiricists and theorists, who studied musical expectancy and statistical learning in a variety of musical domains and from various points of view (e.g., Krumhansl, 1990; Narmour, 1990; Large and Jones, 1999; Tillmann et al., 2000; Lerdahl, 2001; Huron, 2005; Temperley, 2007). Perhaps the most comprehensive attempt to extend Meyer’s expectancy theory of musical emotion is Huron’s “Sweet Anticipation” (2006). Huron argues that a fundamental job of the brain is to make predictions about the world, and that successful predictions are rewarded. Within a tonal context, the most stable pitches are experienced as most pleasant; within a metrical context, events that occur at expected times are more pleasurable. Thus, Huron argues, music is fundamentally a hedonic experience.
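One common way to formalize "prediction" in expectancy research is information content (surprisal): the negative log probability of an event given its context. The following is a minimal sketch, assuming a first-order (bigram) model over scale degrees with add-one smoothing; it is not presented as Huron's own model, and the training sequences are hypothetical. Lower surprisal means a better-predicted event; whether better prediction feels more pleasant is Huron's hypothesis, which the code does not test.

```python
import math
from collections import Counter, defaultdict


def train_bigram(sequences):
    """Count how often each event follows each other event in the training sequences."""
    transitions = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            transitions[prev][nxt] += 1
    return transitions


def surprisal(transitions, prev, nxt, alphabet_size, alpha=1.0):
    """Information content -log2 P(nxt | prev) in bits, with add-alpha smoothing so that
    continuations never seen in training get a large but finite value."""
    counts = transitions.get(prev, Counter())
    total = sum(counts.values())
    p = (counts[nxt] + alpha) / (total + alpha * alphabet_size)
    return -math.log2(p)


# Hypothetical training data in scale degrees: the dominant (5) usually resolves to the tonic (1).
model = train_bigram([[5, 1], [5, 1], [5, 1], [5, 4, 3, 2, 1]])
print(round(surprisal(model, 5, 1, alphabet_size=7), 3))  # 1.459 (expected resolution)
print(round(surprisal(model, 5, 2, alphabet_size=7), 3))  # 3.459 (unexpected continuation)
```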

Huron emphasizes that tonal and temporal expectancies in music are learned. Musical events evoke distinctive musical qualia, and Huron reviews the body of empirical evidence showing that qualia such as stability and attraction (“scale degree qualia”) correlate with statistical properties of music (Huron, 2006). He agrees with Meyer that the associations of major and minor modes with happy and sad qualia are learned associations.

In a key break from Meyer, however, Huron argues that expectancy evokes emotions, not merely affect. He adopts a two-process approach, which posits a fast time-scale reaction and a slow time-scale appraisal (LeDoux, 1996). Specific emotional responses involve primitive circuits that are conserved throughout mammalian evolution, and function relatively independently of cognitive circuits (LeDoux, 2000). He hypothesizes, for example, that unexpected events in music activate the neural circuitry for fear, leading to the feeling of surprise. He goes so far as to suggest that basic survival-related responses, including fight, flight and freezing, lead to the specific subjective musical experiences of frisson, laughter, and awe, respectively.

Juslin and Vastfjall (2008) consider emotions to be affective responses that involve subjective feelings, physiological arousal, expressions, and action tendencies. They, too, reject Meyer’s claim that music does not induce genuine emotions, because musical responses can display all of these features. They endorse the notion that emotions involve intentionality¹; emotions are “about” something. However, they claim that music induces a wide range of both basic and complex emotions because it triggers a variety of psychological mechanisms beyond expectancy. They go on to describe how brain stem reflexes, evaluative conditioning, visual imagery, episodic memory, and emotional contagion can lead to genuine emotional responses. Brain stem reflexes trigger emotional responses because certain acoustic characteristics are taken by the brain stem to signal an urgent event. Evaluative conditioning is a special kind of classical conditioning in which a stimulus without emotional meaning, e.g., music, is repeatedly paired with an emotional experience until it comes to trigger the emotional response itself. Presumably, the learned pairing of major with happy and minor with sad (Meyer, 1956; Huron, 2006) would be an example of this phenomenon. The triggering of visual imagery and of episodic memories (Janata et al., 2007) can also lead to emotional experiences (Sloboda and O’Neill, 2001). In all of these cases the emotional responses are intentional: they are about something.

Emotional contagion, in Juslin and Vastfjall’s (2008) view, is a process in which a listener perceives the emotional expression of the music and then “mimics” this expression internally, as with other forms of interpersonal interaction such as bodily gestures, facial gestures, and speech. In their conceptualization, music evokes basic emotions with distinct nonverbal expressions (Juslin and Laukka, 2003; Laird and Strout, 2007), and this process operates similarly to emotional contagion via facial and vocal expressions of emotion (Tomkins, 1962; Ekman, 1993). Emotional contagion is linked to activation of the so-called mirror neuron system (Rizzolatti and Craighero, 2004), and Juslin (2001) suggests that music can operate in this way because in some sense it imitates other forms of social interaction. Below we offer a somewhat different view of contagion.

If we consider the full spectrum of phenomena discussed by Juslin and Vastfjall (2008), it seems clear that a wide range of emotional responses can be triggered by music. However, from a musical point of view, some of these mechanisms are more interesting than others. Loud unexpected sounds can frighten us, and auditory stimuli can trigger conditioned responses. But in these examples, music serves merely as a trigger. Many other kinds of stimuli can trigger such responses equally well; they need not be musical or even auditory (e.g., LeDoux, 2000). Episodic memory and visual imagery likely account for a significant proportion of the emotional responses people experience on a day-to-day basis (Janata et al., 2007). Moreover, there are many reasons to believe that music is especially effective at eliciting episodic memories (Janata et al., 2007; Eschrich et al., 2008). Understanding the fundamental mechanisms of musical communication may help explain why music evokes episodic memories so effectively. Here, we take a different approach to understanding the ability of music to elicit feelings, one that does not treat music merely as a trigger but rather focuses on fundamental dynamic mechanisms of affective communication.

The remainder of this article is concerned primarily with musical expectancy and contagion, as these are mechanisms that seem to us to be most inherently musical. Expectancies arise in response to complex, explicitly musical structures such as tonality and meter. Contagion is a kind of empathic resonance (Molnar-Szakacs and Overy, 2006; Chapin et al., 2010) that enables music to function as a type of interpersonal communication. Our approach will be to link expectancy and contagion with the dynamics of the physical brain. This involves addressing several basic questions. What is the nature of emotional experience: are emotions basic, evolutionarily adapted and cross-cultural, or are emotions constructed from more fundamental psychological ingredients? Are musical qualia based solely on learned contingencies, or do they arise from intrinsic neurodynamics? And what is the nature of the relationship, such that music is able to elicit affective experiences?

EMOTION, AFFECT, AND CONSCIOUSNESS

Influenced by Darwin’s (1872/1965) theory of pan-cultural emotions, Tomkins (1963) and Ekman et al. (1987) argued that emotions are genetically determined products of evolution. Basic emotions are discrete, and each category shares a distinctive collection of properties, including patterns of autonomic nervous system activity, behavioral responses or action tendencies, and a set of emotion-specific brain structures that are thought to mediate these particular “basic” emotions. Each basic emotion derives from a particular causal mechanism: an evolutionarily preserved module in the brain (Tomkins, 1963; Ekman, 1992; Panksepp, 1998). Ekman (1984) proposed that the natural boundaries between types of emotion could be determined by differences in facial expression. Huron’s (2005) approach to musical emotion and Juslin and Vastfjall’s (2008) multiple mechanisms theory tend to endorse the basic emotion view, according to which basic emotions are cross-cultural, whereas non-basic emotions are specific to cultural upbringing. However, there is little agreement about which emotions are basic, how many emotions are basic, and how basic emotions are defined.

Recent behavioral, psychophysiological, and neural findings (e.g., Barrett, 2006; Pessoa, 2008; Lindquist et al., 2012) have led a number of emotion theorists to question the basic emotion view (Ortony and Turner, 1990; Russell and Barrett, 1999; Duncan and Barrett, 2007). An alternative approach holds that diverse human emotions result from the interplay of more fundamental domain-general processes (Russell, 2003; Pessoa, 2008; Barrett, 2009b). Psychological constructionists argue that emotions are culturally relative and learned, and that, although they are a product of evolution, they are not biologically basic (Russell and Barrett, 1999; Duncan and Barrett, 2007). Emotions are combinations of psychologically primitive processes that encompass both affective and intentional components. A specific emotion is not the invariable result of activation in a particular brain area; rather, neural circuitry realizes more basic processes across emotion categories (Pessoa, 2008; Wilson-Mendenhall et al., 2013). Meyer’s approach to the musical communication of affect is consistent with this view.

Contemporary neurodynamic approaches hypothesize that all conscious states are multimodal processes entailed by physical events occurring in the brain (Tononi and Edelman, 1998; Engel and Singer, 2001; Searle, 2001; Seth et al., 2006; Pessoa, 2008). The neural structures and mechanisms underlying consciousness contribute domain-general processes to many psychological phenomena. Importantly, when spatially distinct areas contribute to the contents of consciousness, they enter into a unified neurodynamic core (e.g., Edelman, 2003). Neurodynamic theories of consciousness propose that synchronous activation of the thalamocortical system gives rise to the unity of conscious experience (Edelman and Tononi, 2000; Varela et al., 2001; Cosmelli et al., 2006). Binding of spatially distinct processes is thought to occur through enhanced synchrony in gamma and beta band rhythms (Engel and Singer, 2001; Fujioka et al., 2012), and high-frequency activity is modulated by slower rhythms such as delta and theta (Lakatos et al., 2005; Buzsáki, 2006; Canolty et al., 2006).

Intentionality and affect are fundamental properties of conscious experience (Searle, 2001). Conscious processes point to or are about something (Brentano, 1973; Searle, 2000), and they possess a valence and a level of activation (Barrett, 1998, 2006; Searle, 2000). Searle’s theory of consciousness (Searle, 1992, 2004), Edelman’s dynamic core theory (Edelman, 1987; Edelman and Tononi, 2000) and Damasio’s somatic marker hypothesis (cf. Damasio, 1999) all emphasize dynamic processes that encompass both intentionality (e.g., appraisal, see Scherer, 2001; Smith and Kirby, 2001) and affect (Russell and Barrett, 1999; Davidson, 2000). An emotional experience includes affect as one important ingredient, but intentional psychological processes – perception, cognition, attention, and behavior – are also necessary (Pessoa, 2008; Barrett, 2009b). To a great extent, the difference between an emotion and a cognition depends on the level of attention paid to the core affect (Russell, 2003).

Affect can be characterized as fluctuating levels of valence (pleasure/displeasure) and arousal (activation/deactivation; Wundt, 1897; Russell, 2003; Barrett and Bliss-Moreau, 2009). It is the most elementary consciously accessible sensation evident in moods and emotions (Russell, 2003). Core affect is so called because it is thought to arise in the core of the body or in neural representations of changes in body state (Russell and Barrett, 1999; Russell, 2003). It has been observed in subjective reports (Barrett, 2004), in peripheral nervous system activation (Cacioppo et al., 2000), and in facial and vocal expression (Cacioppo et al., 2000; Russell, 2003). The experience of core affect is thought to be present in infants (Lewis, 2000) and to be psychologically universal (Russell, 1991; Mesquita, 2003).

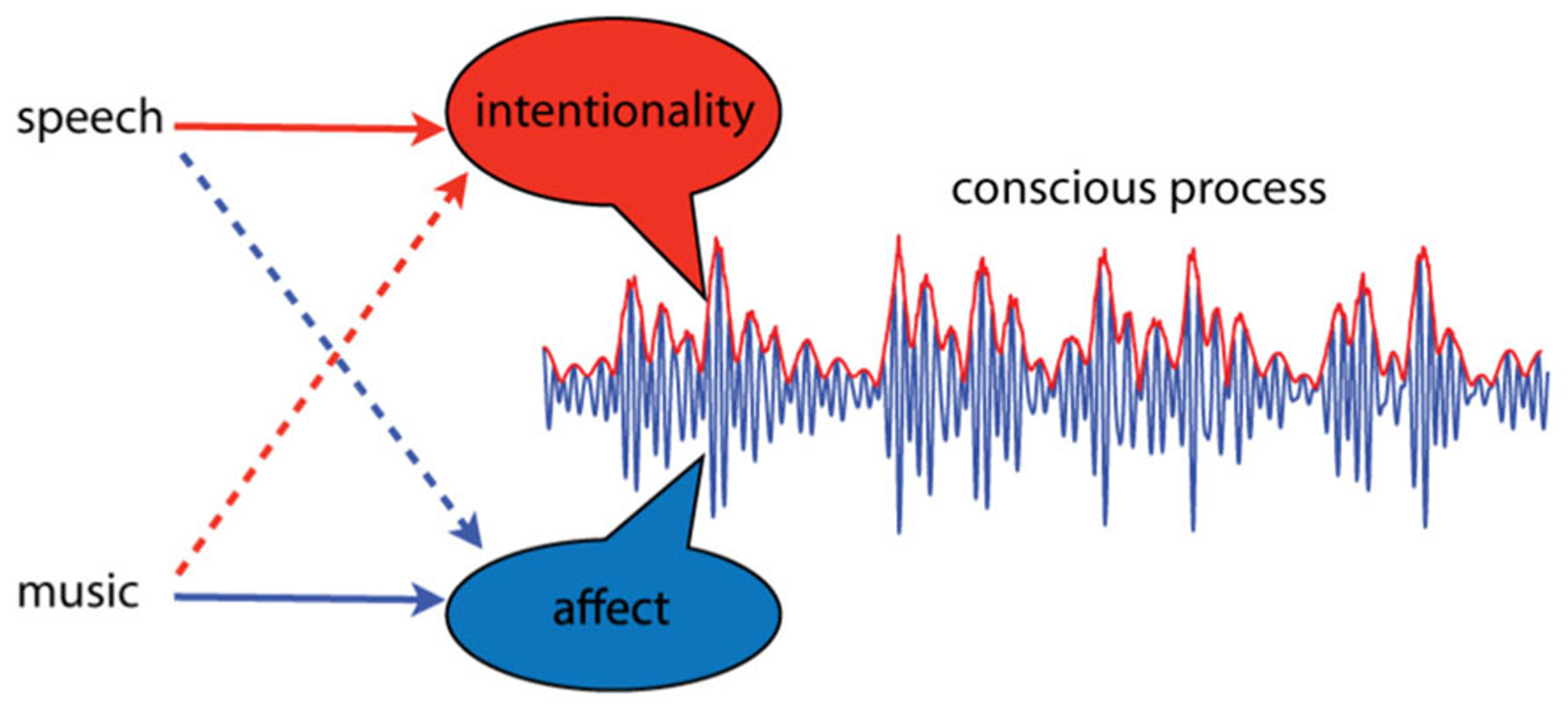

Intentional thought and affective experience are thought to arise as dynamic aspects of spatially distinct neural processes, integrated through synchrony of neural oscillations (Tononi and Edelman, 1998; Searle, 2001; Seth et al., 2006). Let us attempt to illustrate this idea, emphasizing dynamic over spatial aspects, by integrating over neural location. The result is an average summarizing the activity of multiple brain areas, as shown in Figure 1. The dynamic properties of this pattern are the critical features; intentionality and affect correspond to dynamic aspects of the integrated neural activity. In this illustration, affective aspects correspond to changes in higher-frequency activity, while intentional aspects take place at lower frequencies and appear as amplitude modulations. This is only a visual aid, of course; we do not know enough to speculate about which frequency bands or dynamic features might correspond to intentionality and affect. Here we oversimplify to illustrate the point that relevant aspects of experience may correspond to dynamical aspects of integrated neural processes. If this approach is on the right track, however, then this way of thinking about core affect may lead to a better understanding of why music is such an especially effective means of affective communication.
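The idea behind Figure 1, averaging over neural location so that only shared dynamics survive, can be mimicked with synthetic signals. In this illustrative sketch each hypothetical "region" carries a fast rhythm whose amplitude follows a shared slow rhythm; the frequencies, region count, and sampling rate are arbitrary assumptions, not claims about which bands matter. When the fast rhythms are synchronized, the average preserves both the fast activity and its slow amplitude modulation; when their phases are random, the fast activity largely cancels in the average.

```python
import numpy as np

FS = 1000                      # samples per second (assumed)
T = np.arange(2000) / FS       # 2 s of simulated activity
SLOW_HZ, FAST_HZ = 4.0, 40.0   # illustrative values only; the text assigns no bands


def region_signals(n_regions, synchronized, seed=0):
    """Hypothetical 'regions': a fast rhythm whose amplitude follows one shared slow rhythm.
    If synchronized, all fast rhythms share a phase; otherwise phases are random."""
    rng = np.random.default_rng(seed)
    envelope = 1.0 + 0.8 * np.sin(2 * np.pi * SLOW_HZ * T)
    phases = np.zeros(n_regions) if synchronized else rng.uniform(0, 2 * np.pi, n_regions)
    return np.array([envelope * np.sin(2 * np.pi * FAST_HZ * T + ph) for ph in phases])


def rms(x):
    """Root-mean-square amplitude of a signal."""
    return float(np.sqrt(np.mean(x ** 2)))


# "Integrating over neural location" here means averaging across regions (axis 0).
sync_avg = region_signals(50, synchronized=True).mean(axis=0)
desync_avg = region_signals(50, synchronized=False).mean(axis=0)
print(f"synchronized RMS:   {rms(sync_avg):.3f}")
print(f"unsynchronized RMS: {rms(desync_avg):.3f}")
```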

FIGURE 1

A conscious process is conceived as a dynamic pattern of activity. Intentionality and affect are conceived as separable aspects of such processes, and different types of communication sounds may convey more of one or the other. Language primarily communicates intentionality; it is “about” events in the external world. However, certain aspects of speech, such as prosody, can communicate affect. Music, on the other hand, communicates primarily affect; it is most often not “about” anything. However, under certain circumstances music can signify events or elicit memories.

MUSICAL NEURODYNAMICS

At any given moment, a unified neurodynamic process is shaped by exogenous sensory input such as sights or sounds, input from the body such as vestibular sensations, endogenous constructs such as autobiographical memories, and communication sounds such as music and speech. If intentionality and affect are different dynamic aspects of these spatiotemporal patterns, then it seems likely that different kinds of communication sounds may couple into different aspects of the dynamics. Indeed, it is well established that different modes of auditory communication, i.e., music and speech, convey more of one aspect or the other. Speech primarily communicates intentionality; it is “about” events in the external world. Nevertheless, certain aspects of speech, such as prosody, directly communicate affect. Music, on the other hand, communicates primarily affect; it is most often not “about” anything. However, music can signify objects or events, and it can evoke memories and images. Thus, both types of signals can induce emotions, although in different ways. What we want to suggest is that music may couple directly into affective dynamics because it causes the brain to resonate in certain ways.

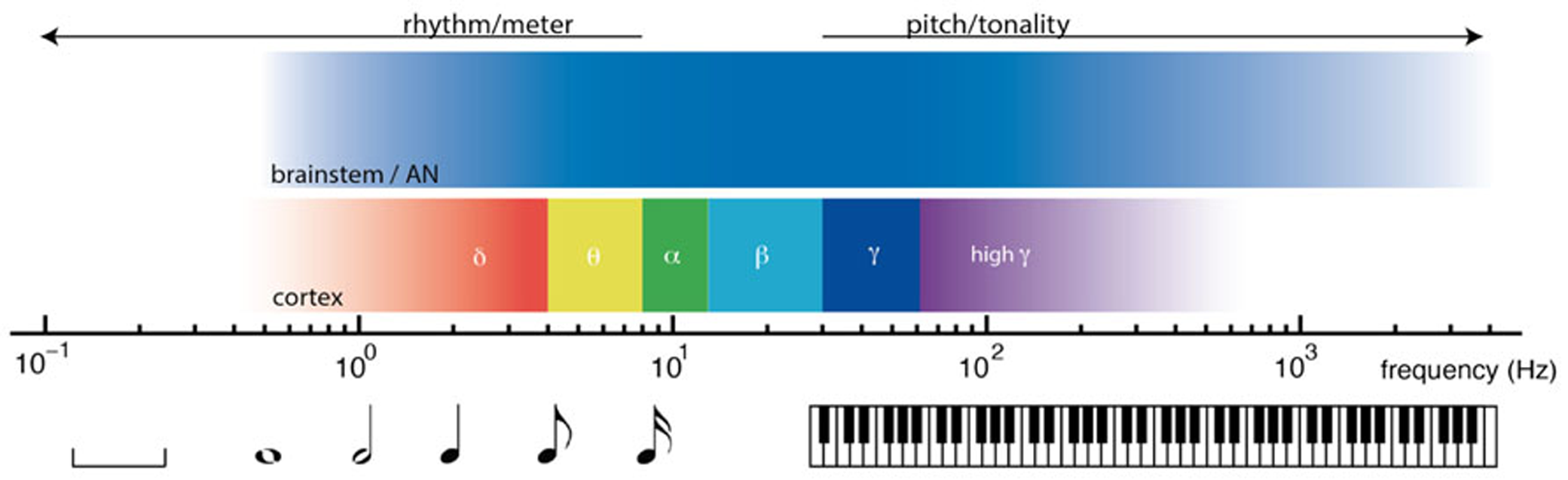

Nonlinear oscillation and resonant responses to acoustic signals are found at multiple timescales in the nervous system, from thousands of Hertz in the auditory nerve and brainstem to cortical oscillations in the delta, theta, beta, and gamma ranges. The relative timescales of these processes are illustrated in Figure 2. From the earliest stages of the auditory system, volleys of action potentials time-lock to dynamic features of acoustic waves (Joris et al., 2004; Laudanski et al., 2010). Time-locked brainstem responses are thought to be important in the perception of pitch, which is observed from 30 Hz (Pressnitzer et al., 2001) up to about 4000 Hz (Plack and Oxenham, 2005). In auditory cortex, endogenous cortical oscillations entrain to low-frequency rhythms of acoustic stimuli (Lakatos et al., 2008; Nozaradan et al., 2011). Cortical entrainment is thought to be important in the perception of rhythm, which extends from about 8 Hz (Repp, 2005a) down to ultra-low cortical frequencies (Buzsáki, 2006; Large, 2008). Between the timescales of pitch and rhythm lie the frequencies thought to be important in binding neural processes into unified conscious scenes (Engel and Singer, 2001; Seth et al., 2006; Fujioka et al., 2012).
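The perceptual ranges quoted above can be summarized as a simple lookup from repetition rate to timescale. This is a minimal sketch, assuming sharp cutoffs at the approximate values given in the text (about 8 Hz for rhythm, and about 30 to 4000 Hz for pitch); in reality the boundaries are graded, and the label for the gap between them is only a coarse placeholder. The helper names `timescale` and `rate_from_period` are hypothetical.

```python
# Approximate boundaries quoted in the paragraph above. Real boundaries are graded, and the
# intermediate label is a coarse simplification, not a claim about where binding occurs.
RHYTHM_MAX_HZ = 8.0     # rhythm: from ultra-low frequencies up to about 8 Hz
PITCH_MIN_HZ = 30.0     # pitch: from about 30 Hz ...
PITCH_MAX_HZ = 4000.0   # ... up to about 4000 Hz


def timescale(freq_hz):
    """Label a repetition rate with the perceptual timescale it falls in."""
    if freq_hz <= RHYTHM_MAX_HZ:
        return "rhythm"
    if freq_hz < PITCH_MIN_HZ:
        return "between rhythm and pitch"
    if freq_hz <= PITCH_MAX_HZ:
        return "pitch"
    return "above the pitch range cited"


def rate_from_period(period_s):
    """Convert an inter-onset interval in seconds to a repetition rate in Hz."""
    return 1.0 / period_s


for period in (0.5, 0.1, 0.005):
    f = rate_from_period(period)
    print(f"{period * 1000:g} ms -> {f:g} Hz -> {timescale(f)}")
```

A 500 ms interval (2 Hz) falls in the rhythm range and a 5 ms interval (200 Hz) in the pitch range; a 100 ms interval (10 Hz) lands in the gap between them.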

FIGURE 2

Timescales of neural dynamics and timescales of musical communication. Music engages the brain on multiple timescales, from fractions of one cycle per second (left) to thousands of cycles per second (right). At rhythmic timescales, cortical oscillations entrain to rhythmic stimuli. At tonal timescales, volleys of action potentials time-lock to acoustic waves.

PITCH AND TONALITY

In central auditory circuits, action potentials phase- and mode-lock to the fine time structure and the temporal envelope modulations of auditory stimuli at many different neural levels (Langner, 1992; Large et al., 1998; Joris et al., 2004; Laudanski et al., 2010). Neural synchrony is thought to be important in pitch perception (Cariani and Delgutte, 1996; Hartmann, 1996), consonance (Ebeling, 2008; Shapira Lots and Stone, 2008), and musical tonality (Tramo et al., 2001; Large, 2010a). While phase-locking is well established, mode-locked spiking patterns have recently been reported in the mammalian auditory system (Laudanski et al., 2010) and may explain the highly nonlinear responses to musical intervals that can be measured in the human auditory brainstem response (Lee et al., 2009; Large and Almonte, 2012; Lerud et al., 2013).
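Phase-locking of spikes to a periodic stimulus is commonly quantified with vector strength: each spike is represented as a unit vector at its stimulus phase, and the length of the mean vector is taken. The sketch below applies it to synthetic spike trains; the 200 Hz tone, spike count, and 0.2 ms jitter are assumptions chosen for illustration, not data from the cited studies.

```python
import numpy as np


def vector_strength(spike_times_s, freq_hz):
    """Phase-locking of spikes to a periodic stimulus: 1.0 if every spike falls at the
    same stimulus phase, near 0 if spikes are spread evenly over the cycle."""
    phases = 2 * np.pi * freq_hz * np.asarray(spike_times_s, dtype=float)
    return float(np.abs(np.mean(np.exp(1j * phases))))


rng = np.random.default_rng(1)
freq = 200.0                       # hypothetical 200 Hz tone (5 ms period)
n_spikes = 400
locked = np.arange(n_spikes) / freq + rng.normal(0.0, 0.0002, n_spikes)  # 0.2 ms jitter
unlocked = rng.uniform(0.0, n_spikes / freq, n_spikes)                   # phase-random spikes
print(f"locked:   {vector_strength(locked, freq):.2f}")
print(f"unlocked: {vector_strength(unlocked, freq):.2f}")
```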

Mode-locking implies binding between neural frequencies that stand in particular frequency relationships (Hoppensteadt and Izhikevich, 1997). In this form of synchrony, a periodic stimulus interacts with intrinsic neural dynamics, causing k cycles of the oscillation to lock to m cycles of the stimulus. Mode-locking leads to neural resonance at harmonics (k·f1), subharmonics (f1/m), summation frequencies (e.g., f1 + f2), difference frequencies (e.g., f2 - f1), and integer ratios (e.g., k·f1/m)². This implies feature binding based on harmonicity (Bregman, 1990) and suggests a role for mode-locking in the perception of pitch (cf. Cartwright et al., 1999). It also predicts a significant cross-cultural musical invariant (Burns, 1999), because octave frequency relationships (2:1 and 1:2) are the most stable, followed by fifths (3:2) and fourths (4:3). Mode-locking may provide a neurodynamic explanation for musical consonance and dissonance (Shapira Lots and Stone, 2008) that does not depend on interference (e.g., Plomp and Levelt, 1965).
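The list of mode-locked resonances above can be enumerated directly. The sketch below is bookkeeping only, not a simulation of oscillator dynamics or stability: it assumes integer k and m capped at 3 (the text sets no cap) and uses exact fractions so that coinciding frequencies compare equal. The function names are hypothetical. Two tones a fifth apart (200 and 300 Hz) share several resonances within this cap, whereas a pair with no small-integer relation (200 and 283 Hz) shares none, one informal way of seeing why simple ratios might be favored.

```python
from fractions import Fraction


def ratio_family(f, max_order=3):
    """All k*f/m for integers 1 <= k, m <= max_order: harmonics (m = 1),
    subharmonics (k = 1), and other integer ratios. max_order is an assumed cap."""
    return {Fraction(k, m) * f for k in range(1, max_order + 1) for m in range(1, max_order + 1)}


def resonances(f1, f2, max_order=3):
    """Candidate resonances for two tones: both ratio families plus sum and difference."""
    return sorted(ratio_family(f1, max_order) | ratio_family(f2, max_order) | {f1 + f2, abs(f2 - f1)})


def shared_resonances(f1, f2, max_order=3):
    """Frequencies that belong to the ratio families of both tones."""
    return sorted(ratio_family(f1, max_order) & ratio_family(f2, max_order))


# Use integers (or Fractions) so that coinciding frequencies compare exactly.
print([str(f) for f in shared_resonances(200, 300)])  # ['100', '200', '300', '600']
print(shared_resonances(200, 283))                     # []
```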

Perhaps most relevant to the current discussion is the issue of scale degree qualia (Huron, 2006), which has important implications for understanding musical expectancy (Meyer, 1956; Zuckerkandl, 1956). Scale degree qualia differentiate musical sound sequences from arbitrary sound sequences, and are thought to enable non-referential sound patterns to carry meaning. Most discussions of expectancy and emotion assume scale degree qualia to be learned based on the statistics of tonal sequences (e.g., Meyer, 1956; Krumhansl and Kessler, 1982; Lerdahl, 2001; Huron, 2006), and therefore culture-dependent. However, recent dynamical analyses have shown that mode-locking provides a better explanation for quantitative measurements of stability in both Western and North Indian tonal systems (Krumhansl and Kessler, 1982; Castellano et al., 1984; Large, 2010a; Large and Almonte, 2011). Thus, scale degree qualia likely depend on the interaction of the stimulus sequence with intrinsic neurodynamic properties of the physical brain.
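As a rough point of comparison, scale degrees can be ordered by the simplicity of their frequency ratio with the tonic, using k + m for a ratio k:m in lowest terms. This is a crude heuristic, not the dynamical analysis of Large (2010a) or Large and Almonte (2011), and the just-intonation ratios assigned to each degree are an assumption. The sketch only shows what ordering ratio simplicity alone produces; it does not compare that ordering with measured stability profiles.

```python
from fractions import Fraction

# Hypothetical just-intonation ratios for the 12 chromatic degrees above a tonic.
# The specific ratios (e.g., 9/5 rather than 16/9 for b7) are an assumption.
JUST_RATIOS = {
    "1": Fraction(1, 1), "b2": Fraction(16, 15), "2": Fraction(9, 8),
    "b3": Fraction(6, 5), "3": Fraction(5, 4), "4": Fraction(4, 3),
    "#4": Fraction(45, 32), "5": Fraction(3, 2), "b6": Fraction(8, 5),
    "6": Fraction(5, 3), "b7": Fraction(9, 5), "7": Fraction(15, 8),
}


def ratio_order(r):
    """k + m for a ratio k:m in lowest terms; smaller values mean simpler ratios."""
    return r.numerator + r.denominator


def rank_by_simplicity(ratios):
    """Scale degrees from simplest to most complex frequency ratio with the tonic."""
    return sorted(ratios, key=lambda degree: ratio_order(ratios[degree]))


print(rank_by_simplicity(JUST_RATIOS))
# ['1', '5', '4', '6', '3', 'b3', 'b6', 'b7', '2', '7', 'b2', '#4']
```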

RHYTHM AND METER

At the timescale of rhythm and meter, relationships between musical and neural rhythms are equally striking (Musacchia et al., 2013). In auditory cortex, brain rhythms nest hierarchically; for example, delta phase modulates theta amplitude, and theta phase modulates gamma amplitude (Lakatos et al., 2005). Like neural rhythms, musical rhythms nest hierarchically, such that faster metrical frequencies subdivide the pulse (London, 2004). Pulse perception provides a good match for the delta band (0.5–4 Hz, see London, 2004), while fast metrical frequencies occupy theta (4–8 Hz, see e.g., Repp, 2005b; Large, 2008). Importantly, acoustic stimulation in the pulse range synchronizes auditory cortical rhythms in the delta band (Will and Berg, 2007; Stefanics et al., 2010; Nozaradan et al., 2011) and modulates the amplitude of higher-frequency beta and gamma rhythms (Snyder and Large, 2005; Iversen et al., 2006; Fujioka et al., 2012). Models of synchronization to acoustic rhythms (see e.g., Large, 2008) have successfully predicted a wide range of behavioral observations in time perception (Jones, 1990; McAuley, 1995), meter perception (Large and Kolen, 1994; Large, 2000), attention allocation (Large and Jones, 1999; Stefanics et al., 2010), and motor coordination (Kelso et al., 1990; Repp, 2005c). Moreover, musical qualia including metrical expectancy (Huron, 2005), syncopation (London, 2004), and groove (Tomic and Janata, 2008; Janata et al., 2012) have all been linked to synchronization of cortical rhythms and/or bodily movements. In addition, synchronization of rhythmic movements to music (Burger et al., 2013) and synchronization between individuals (e.g., Hove and Risen, 2009) have been linked to affective responses.
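Nesting of the kind described above, in which the phase of a slow rhythm modulates the amplitude of a faster one, is often quantified with a mean-vector-length index. The following is a minimal sketch on synthetic signals, assuming a 2 Hz "pulse-rate" rhythm, a 40 Hz fast rhythm, and an idealized FFT-based band-pass filter in place of the filters a real analysis would use; all values are illustrative, and nothing here reproduces the cited recordings.

```python
import numpy as np

FS = 500.0                         # sampling rate in Hz (assumed)
t = np.arange(int(10 * FS)) / FS   # 10 s, so 2 Hz and 38-42 Hz fall on exact FFT bins


def analytic_band(x, fs, lo, hi):
    """Analytic signal of x restricted to [lo, hi] Hz, computed with an idealized FFT
    'brick-wall' filter (a simplification chosen to avoid extra dependencies)."""
    spectrum = np.fft.fft(x)
    freqs = np.fft.fftfreq(x.size, d=1 / fs)
    in_band = (freqs >= lo) & (freqs <= hi)        # positive frequencies only
    return np.fft.ifft(np.where(in_band, 2 * spectrum, 0))


def modulation_index(x, fs, slow_band, fast_band):
    """Normalized mean vector length: how strongly fast-band amplitude depends on slow-band phase."""
    phase = np.angle(analytic_band(x, fs, *slow_band))
    amp = np.abs(analytic_band(x, fs, *fast_band))
    return float(np.abs(np.mean(amp * np.exp(1j * phase))) / np.mean(amp))


slow = np.cos(2 * np.pi * 2 * t)                                # 2 Hz "pulse-rate" rhythm
coupled = slow + (1 + 0.8 * slow) * np.cos(2 * np.pi * 40 * t)  # fast amplitude follows slow phase
uncoupled = slow + np.cos(2 * np.pi * 40 * t)                   # fast amplitude is constant
print(f"coupled:   {modulation_index(coupled, FS, (1, 4), (30, 50)):.2f}")
print(f"uncoupled: {modulation_index(uncoupled, FS, (1, 4), (30, 50)):.2f}")
```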

The perception of rhythm also provides an example of synchronous time-locked patterns of activity integrating the function of multiple brain regions. When people listen to musical rhythms that have a pulse or basic beat, multiple brain regions are activated, including auditory cortices, cerebellum, basal ganglia, premotor cortex, and the supplementary motor cortex (Zatorre et al., 2007; Chen et al., 2008; Grahn and Rowe, 2009). In these areas, the amplitude of beta band activity waxes and wanes with the pulse of the acoustic stimulation (Snyder and Large, 2005; Iversen et al., 2006; Fujioka et al., 2012). The specific neural structures involved depend on the tempo of the stimulus, and it appears that the synchrony of beta band processes is what binds the neural activity (Fujioka et al., 2012). This suggests that perhaps it is not the areas per se, but the integrated neural activity that corresponds to the experience of pulse.

MUSICAL COMMUNICATION AS NEURODYNAMIC RESONANCE

We can summarize the above discussion by saying that music taps into brain dynamics at the right time scales to cause both brain and body to resonate to the patterns. This causes the formation of spatiotemporal patterns of activity on multiple temporal and spatial scales within the nervous system. The dynamical characteristics of such spatiotemporal patterns – oscillations, bifurcations, stability, attraction, and responses to perturbations – predict perceptual, attentional, and behavioral responses to music, as well as musical qualia including tonal and rhythmic expectations. Conceptualization of consciousness in similar neurodynamic terms leads to a new way to think about how music may communicate affective content. Neurodynamic responses that give rise to musical qualia also resonate with affective circuits, enabling music to directly engage the sorts of feelings that are associated with emotional experiences. In this section we ask, how might affective resonance take place, do musical qualia arise from intrinsic neurodynamics, and what exactly is communicated?

AFFECTIVE RESONANCE

We begin with an example of affective resonance to rhythm. Expressive piano performance is a kind of social interaction in which correlated fluctuations in timing and intensity transfer emotional information from the performer to the listener (Bhatara et al., 2010). Expressive tempo fluctuations display 1/f structure (Rankin et al., 2009; Hennig et al., 2011), and listeners predict such tempo changes when entraining to musical performances (Rankin et al., 2009; Rankin, 2010). A recent fMRI study (Chapin et al., 2010) compared BOLD responses to an expressive performance and to a mechanical performance, in which the piece was “performed” by computer with no fluctuations in timing and intensity. Greater activations were found in emotion- and reward-related areas for the expressive performance, consistent with a transfer of affective information. Tempo fluctuations, BOLD activations, and real-time ratings of valence and arousal were also compared for the expressive performance. Over the three-and-a-half-minute performance, fluctuations in timing correlated with BOLD changes in motor networks known to be involved in rhythmic entrainment, and in a network consistent with the human “mirror neuron” system (Chapin et al., 2010). As tempo increased, activation in these regions increased. Tempo fluctuations also correlated with real-time reports of affective arousal.
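The 1/f claim can be checked on a beat-by-beat tempo series by fitting a line to its power spectrum on log-log axes: a slope near -1 indicates 1/f structure, and a slope near 0 indicates uncorrelated fluctuations. The sketch below assumes a plain periodogram and an unweighted least-squares fit (real analyses often use smoothed or binned spectra), and it runs on synthetic series only; `pink_noise` is a hypothetical helper that stands in for performance data.

```python
import numpy as np


def spectral_exponent(series):
    """Least-squares slope of log10 power against log10 frequency.
    About -1 suggests 1/f ('pink') structure; about 0 suggests white noise."""
    x = np.asarray(series, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size)            # cycles per event (e.g., per beat)
    keep = freqs > 0                           # drop DC before taking logs
    slope, _intercept = np.polyfit(np.log10(freqs[keep]), np.log10(power[keep]), 1)
    return float(slope)


def pink_noise(n, rng):
    """Synthetic 1/f noise: shape a white spectrum so that power falls as 1/f."""
    spectrum = np.fft.rfft(rng.standard_normal(n))
    freqs = np.fft.rfftfreq(n)
    freqs[0] = freqs[1]                        # avoid dividing by zero at DC
    return np.fft.irfft(spectrum / np.sqrt(freqs), n)


rng = np.random.default_rng(0)
# Synthetic stand-ins for beat-by-beat tempo series; no performance data are used here.
print(f"pink:  {spectral_exponent(pink_noise(4096, rng)):.2f}")
print(f"white: {spectral_exponent(rng.standard_normal(4096)):.2f}")
```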

Although the tempo-correlated activations were observed in so-called mirror neuron areas, this was not motor mirroring: half the participants were not musicians, and none were familiar with the piece. Could listener responses arise from a more general form of contagion, in which the perception of affective expression directly induces the same emotion in the perceiver (Carr et al., 2003; Rizzolatti and Craighero, 2004; Molnar-Szakacs and Overy, 2006)? Based on what is known about neural responses to rhythm (Nozaradan et al., 2011; Fujioka et al., 2012), we propose a simple, if somewhat speculative, interpretation. Activation in mirror regions reflects resonance of endogenous cortical rhythms to exogenous musical rhythms. Activation increases as tempo increases because, as this neural circuit entrains to the musical rhythm, it tracks the tempo (i.e., the frequency modulations) of the performance (Herrmann et al., 2013). The frequency modulations themselves would represent violations of temporal expectancy (Large and Jones, 1999). The expressive performance also led to emotion- and reward-related neural activations (compared with a mechanical performance that precisely controlled for melody, harmony, and rhythm; see Chapin et al., 2010). We hypothesize that the frequency modulation of activity in mirror regions led to these activations (Molnar-Szakacs and Overy, 2006; Chapin et al., 2010). Thus, perhaps music couples directly into affective circuitry by exploiting resonant modes of cortical function, thereby creating the basis for affective communication.
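Tempo tracking of this kind is often modeled as a process whose phase and period are corrected after each event. The sketch below uses a deliberately simple linear phase- and period-correction rule as a stand-in; it is not the nonlinear oscillator model of Large and Jones (1999), and the gains `alpha` and `beta` are arbitrary assumptions. It shows how a single tracking process can follow the frequency modulations of a performance, with each prediction error serving as a crude index of violated temporal expectancy.

```python
from typing import List, Sequence


def track_period(onsets: Sequence[float], period0: float,
                 alpha: float = 0.5, beta: float = 0.2) -> List[float]:
    """Linear phase- and period-correction tracker (illustrative only).

    onsets:  event times in seconds (e.g., beat onsets of a performance)
    period0: initial period estimate in seconds
    alpha:   phase-correction gain (fraction of each timing error absorbed)
    beta:    period-correction gain (how strongly errors retune the period)
    Returns the period estimate after each onset following the first.
    """
    period = period0
    expected = onsets[0] + period      # predicted time of the next event
    history = []
    for t in onsets[1:]:
        error = t - expected           # > 0: event arrived later than predicted
        period += beta * error         # retune the period (tempo tracking)
        expected += alpha * error      # partially realign the phase
        expected += period             # predict the following event
        history.append(period)
    return history


steady = [0.5 * i for i in range(40)]            # isochronous beats, 120 bpm
print(f"steady: final period = {track_period(steady, 0.6)[-1]:.3f} s")

# Hypothetical accelerando: intervals shrink linearly from 0.6 s to 0.4 s.
iois = [0.6 - 0.2 * i / 39 for i in range(40)]
accel = [sum(iois[:i]) for i in range(41)]
est = track_period(accel, 0.6)
print(f"accelerando: period {est[0]:.3f} s -> {est[-1]:.3f} s")
```

During a steady accelerando the estimate lags the true interval slightly, a small, persistent expectancy violation that the tracker keeps correcting.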

INTRINSIC DYNAMICS, MUSICAL QUALIA AND COGNITIVE DEVELOPMENT

The preceding discussion suggests that at least some aspects of affective responses to music are deeply rooted in the intrinsic physics of the brain and body. If this is true, then neurodynamic investigations may ultimately explain how musical rhythms couple into neural circuits and modulate affective responses. But could the neurodynamic approach explain musical qualia more generally? Consider the fundamental qualitative difference between pitch and rhythm. A simple acoustic click, repeated at 5-ms intervals, generates a pitch percept at 200 Hz. Increase the interval to 500 ms and the percept is that of a series of discrete events, with a pulse rate of 2 Hz. From a dynamical systems point of view, it makes perfect sense that the neural mechanisms brought to bear on the two stimuli may be similar; the difference is merely one of timescale. Yet from a phenomenological point of view, the two are fundamentally different: a single continuous event versus a rhythmic sequence. Why the difference in qualia? Perhaps it is because the timescale at which distinct neural events are bound together into unified conscious scenes lies between the timescales of pitch and rhythm. If so, the difference in qualia would lie in the timescale relationship, not in the mechanism per se, and neural oscillation might explain not only rhythm-related responses, but also pitch-related responses, such as stability and attraction.
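The click example reduces to one computation applied at two timescales: repetition rate equals the reciprocal of the inter-click interval. The sketch below makes that explicit. The boundary `PITCH_FLOOR_HZ` that separates "heard as pitch" from "heard as discrete pulses" is a hypothetical, illustrative parameter, not a value taken from this article; the point is only that the same function yields qualitatively different labels depending on where the rate falls relative to such a boundary.

```python
# Hypothetical boundary (illustrative assumption only): repetition rates at or
# above this value are labeled "pitch", slower rates are labeled "pulse".
PITCH_FLOOR_HZ = 30.0


def repetition_rate(interval_s: float) -> float:
    """Rate in Hz of a click train repeated every interval_s seconds."""
    return 1.0 / interval_s


def percept(interval_s: float) -> str:
    """Crude label for the qualitative percept of a click train."""
    return "pitch" if repetition_rate(interval_s) >= PITCH_FLOOR_HZ else "pulse"


for interval in (0.005, 0.5):
    print(f"{interval * 1000:g} ms -> {repetition_rate(interval):g} Hz ({percept(interval)})")
```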

We have argued elsewhere that the terms stability and attraction, used by theorists to describe scale-degree qualia (Meyer, 1956; Zuckerkandl, 1956; Lerdahl and Jackendoff, 1983; Lerdahl, 2001), are not metaphorical. These terms refer to real dynamical stability and attraction relationships in a neural field stimulated by external frequencies (Large, 2010a; Large and Almonte, 2011). In other words, scale-degree qualia are simply what it feels like when our brains resonate to tonal sequences. This approach can explain the perception of tonal stability and attraction in Western modes (Krumhansl and Kessler, 1982; Large, 2010a) and in North Indian raga (Castellano et al., 1984; Large and Almonte, 2011). It may also shed light on the development of statistical regularities in tonal melodies, suggesting that certain pitches occur more frequently because they have greater dynamical stability in the underlying neural networks.
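In oscillator models of this kind, two tones whose frequency ratio f2/f1 lies near a ratio of small positive integers k:m can mode-lock, and lower-order resonances (small k + m) tend to be the stronger ones. The sketch below is a crude static proxy for that ordering, and it ignores the actual dynamics: it approximates each interval's frequency ratio by the nearest fraction with a small denominator (via Python's `fractions.Fraction.limit_denominator`) and reports k + m. The denominator cap of 10, the 220-Hz reference tone, and the use of k + m as a stability index are illustrative assumptions; this is not the neural field model of Large (2010a).

```python
from fractions import Fraction


def nearest_ratio(f1: float, f2: float, max_den: int = 10) -> Fraction:
    """Closest fraction k/m to f2/f1 whose denominator m is <= max_den."""
    return Fraction(f2 / f1).limit_denominator(max_den)


def resonance_order(f1: float, f2: float, max_den: int = 10) -> int:
    """k + m for the nearest k:m ratio; smaller is treated as 'more stable' here."""
    r = nearest_ratio(f1, f2, max_den)
    return r.numerator + r.denominator


# Equal-tempered intervals above a hypothetical 220-Hz reference tone.
tonic = 220.0
for name, semitones in [("unison", 0), ("minor 2nd", 1), ("major 3rd", 4),
                        ("perfect 5th", 7), ("octave", 12)]:
    f2 = tonic * 2 ** (semitones / 12)
    r = nearest_ratio(tonic, f2)
    print(f"{name:12s} {r.numerator}:{r.denominator}  order {resonance_order(tonic, f2)}")
```

Because equal-tempered intervals are only approximately integer ratios, the result depends on the denominator cap; a dynamical model replaces this hard cutoff with resonances whose strength falls off with order.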

There is now a great deal of evidence regarding the development of basic music structure cognition, including meter (Hannon and Trehub, 2005; Kirschner and Tomasello, 2009; Winkler et al., 2009) and tonality (Trainor and Trehub, 1992; Schellenberg and Trehub, 1996; Trehub et al., 1999). Such results reveal developmental trajectories that unfold over the first several years of life, as well as perceptual invariants that are consistent with intrinsic neurodynamics (Large, 2010b) tuned by Hebbian plasticity (Hoppensteadt and Izhikevich, 1996; Large, 2010a). Dalla Bella et al. (2001) asked whether children can determine if music is happy or sad. Children aged 3 to 4 years failed to distinguish happy from sad above chance, 5-year-olds’ responses were affected by tempo, and 6- to 8-year-olds used both tempo and mode. Thus, children begin to use tempo at about the same age that the ability to synchronize movements emerges, and they begin to use mode at about the same age that sensitivity to key emerges (Trainor and Trehub, 1992; Schellenberg and Trehub, 1999; McAuley et al., 2006). The fact that the two main musical dimensions, rhythm and tonality, develop on the same time course as their affective correlates strongly suggests a link between the development of neurodynamic responses and music-induced affective experience.

We do not claim that musical qualia are hard-wired; however, our argument does suggest that substantive aspects of musical expectancy and musical contagion may be explainable directly in neurodynamic terms, linking “high-level” perception with “low-level” neurodynamics. In combination with Hebbian plasticity, intrinsic neurodynamic constraints could explain the sensitivity of infant listeners to musical invariants, as well as the ability to acquire sophisticated musical knowledge. Moreover, this explanation suggests that the association of affective responses with the musical modes of diverse cultures may not be due entirely to convention, as has previously been speculated (Meyer, 1956; Huron, 2005). Indeed, cross-cultural studies of the perception of Western music suggest that happiness and sadness are communicated, at least in part, through mode (Balkwill et al., 2004; Fritz et al., 2009). Similarly, Western listeners who are not enculturated to Indian music may be able to understand the moods intended by Indian raga performances (Balkwill and Thompson, 1999; Chordia and Rae, 2008). At the very least, these cross-cultural findings suggest that associations of mood with mode have been prematurely dismissed as conventional, and that these relationships deserve to be reevaluated.

WHAT IS COMMUNICATED – BASIC EMOTION OR CORE AFFECT?

Juslin and Vastfjall (2008) propose that emotional contagion operates similarly to facial expression of basic emotions (Juslin and Laukka, 2003; Laird and Strout, 2007). However, because the theory of basic emotions has recently been called into question, it makes sense to review the body of evidence that pertains to music. In communication studies, both performances and listener judgments of intended emotion have been linked to specific musical features, including tempo, articulation, intensity, and timbre (Gabrielsson and Juslin, 1996; Peretz et al., 1998; Juslin, 2000; Juslin and Laukka, 2003). However, these studies also show that, at least for Western music, happiness and sadness are the most reliably communicated emotions (Kreutz et al., 2002; Lindström et al., 2002; Kallinen, 2005; Kreutz et al., 2008; Mohn et al., 2011), while other “basic” emotions are more often confused (Gabrielsson and Juslin, 1996; Peretz et al., 1998; Juslin, 2000; Juslin and Laukka, 2003; Kreutz et al., 2008; Mohn et al., 2011). Interestingly, Ekman (1993) and Izard et al. (2000) have both questioned the theory of facial expression of basic emotion based on variability and confusability. Moreover, analyses of physiological responses to music show that while musical stimuli elicit significant responses, physiological measures do not generally match listener self-reports using emotion terms (Krumhansl, 1997).

Basic emotion theory has been linked to an approach in which music is supposed to imitate or mimic more biologically relevant stimuli, such as speech or mother-infant interactions (Juslin and Laukka, 2003), leading to the direct perception of emotion. Such discussions generally assume that musical communication was not itself evolutionarily selected, and so must piggyback on more fundamental mechanisms. Our proposal is that music speaks to the brain in its own language; it need not imitate any other form of communication. In this sense, other forms of communication may be seen to induce or modulate emotions more indirectly, i.e., their effect is more cognitive (cf. Langer, 1951). Thus, the study of music may provide a unique window into the fundamental nature of affective communication, which might explain, for example, why music has the ability to evoke emotional memories (Janata et al., 2007).

It is tempting to try to unify core affect with basic emotion (Juslin, 2001) by assuming that each emotion category is associated with a specific core affective state (e.g., fear is unpleasant and highly arousing, sadness is unpleasant and less arousing, etc.). However, the mapping of emotion to affect is not unique; core affective states experienced during two different episodes of a given, nameable emotion (e.g., fear) typically differ depending on the situation (Meyer, 1956; Barrett, 2009b; Wilson-Mendenhall et al., 2013). By contrast, musical variables such as melodic contour, tempo, loudness, texture, and timbral sharpness predict real-time listener ratings of arousal and valence well (Schubert, 1999, 2001, 2004) and correlate with BOLD responses in a number of brain regions (Chapin et al., 2010). Neuroimaging studies have also revealed BOLD responses to parametric manipulation of pleasantness (Blood et al., 1999; Koelsch et al., 2006), and these overlap with responses to intensely pleasurable music (Blood and Zatorre, 2001; Salimpoor et al., 2010).

CONCLUSION

In summary, we believe that a coherent picture is developing, based on recent findings of nonlinear resonant responses to acoustic stimulation at multiple timescales (Ruggero, 1992; Joris et al., 2004; Lakatos et al., 2005; Lee et al., 2009; Laudanski et al., 2010; Nozaradan et al., 2011) and theoretical analyses that show how such processes could underlie complex cognitive computations as well as phenomenal and affective aspects of our musical experiences (Baldi and Meir, 1990; Hoppensteadt and Izhikevich, 1997; Izhikevich, 2002; Shapira Lots and Stone, 2008; Large, 2010b). Such results and analyses suggest that neurodynamics provides an appropriate level at which to understand not only perceptual and cognitive responses to music, but ultimately affective and emotional responses as well. We suggest that, to support affective communication, music need not mimic some other type of social interaction; it need only engage the nervous system at the appropriate timescales. Indeed, music may be a unique type of stimulus that engages the brain in ways that no other stimulus can.

Thus, we suggest that there is something special about the way music communicates emotion. Our approach recasts musical expectancy and affective contagion as nonlinear resonance to musical patterns. Resonance occurs simultaneously on multiple timescales, leading to stable or metastable patterns of neural responses. Such patterns are inherently spatiotemporal; however, temporal aspects of the stimulus determine which neural structures are involved at any given moment. Violations of expectancy, such as the occurrence of a strong rhythmic event on a weak beat (a syncopation) or the prolongation of an unstable tone where a stable tone is expected (an appoggiatura), would correspond to disruptions, or perturbations, of the ongoing pattern. Implication and realization would correspond to relaxation toward, and reestablishment of, a stable orbit. “Stable” and “unstable” in this context are determined by the intrinsic neurodynamics of the brain networks involved, which depend, in part, on tuning of the dynamics via synaptic plasticity. In this way, music may modulate affective neurodynamics directly by coupling into those aspects of the dynamic core of consciousness that govern our subjective feelings from moment to moment.
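The correspondence between expectancy violation and perturbation can be illustrated with the simplest phase-locking model, the Adler equation dφ/dt = Δω − K sin φ, where φ is the phase difference between an oscillator and its periodic input, Δω their frequency mismatch, and K the coupling strength. This is a generic textbook model chosen for illustration, not the neurodynamic model used in this article, and the parameter values are arbitrary. When |Δω| < K there is a stable locked phase φ* = arcsin(Δω/K); a brief phase "kick", standing in (hypothetically) for a syncopated event, displaces φ, and the dynamics relax back toward φ*.

```python
import math
from typing import List, Tuple


def adler_relaxation(detuning: float, coupling: float, kick: float,
                     dt: float = 0.01, steps: int = 2000) -> Tuple[float, List[float]]:
    """Euler-integrate d(phi)/dt = detuning - coupling * sin(phi) after a phase kick.

    Returns the stable locked phase phi* and the trajectory of phi.
    Requires |detuning| < coupling; otherwise no locked state exists.
    """
    if abs(detuning) >= coupling:
        raise ValueError("no phase-locked state: |detuning| must be < coupling")
    phi_star = math.asin(detuning / coupling)   # stable fixed point
    phi = phi_star + kick                       # perturbation of the locked state
    trajectory = [phi]
    for _ in range(steps):
        phi += dt * (detuning - coupling * math.sin(phi))
        trajectory.append(phi)
    return phi_star, trajectory


phi_star, traj = adler_relaxation(detuning=0.2, coupling=1.0, kick=1.0)
for t in (0.0, 1.0, 5.0, 20.0):
    i = int(round(t / 0.01))                    # index of time t with dt = 0.01
    print(f"t={t:5.1f}  phase error={traj[i] - phi_star:+.4f}")
```

A kick larger than the distance to the unstable fixed point (at π − φ* here) would instead push the system into a phase slip, one possible analogue of a stronger, pattern-breaking disruption.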

GLOSSARY

Affect/Core affect: The most elementary consciously accessible sensation evident in moods and emotions. Affect can be characterized as fluctuating levels of valence (pleasure/displeasure) and arousal (activation/deactivation; Barrett and Bliss-Moreau, 2009). Core affect is so called because it is thought to arise in the core of the body or in neural representations of body state change (Russell, 2003).

Basic emotions: A few privileged emotion kinds (e.g., anger, sadness, fear, and happiness), each of which is thought to derive from an evolutionarily preserved brain module. Basic emotions are discrete, and each category shares a distinctive collection of properties, including patterns of autonomic nervous system activity, behavioral responses, and action tendencies. A set of emotion-specific brain structures is thought to mediate these particular “basic” emotions (Allport, 1924; Izard, 1971; Ekman, 1972; Panksepp, 1998).

Dynamic core: Functional clusters of neuronal groups in the thalamocortical system that are hypothesized to underlie consciousness. Distinct neuronal groups contribute to the contents of consciousness through enhanced synchrony of neural rhythms. The boundaries of this core are suggested to shift over time, with transitions occurring under the influence of internal and external stimulation (Seth, 2007).

Empathy: A feeling that arises when the perception of an emotional gesture in another person directly induces the same emotion in the perceiver without any appraisal process (see Juslin and Vastfjall, 2008).

Emotional contagion/Affective contagion: A process that occurs between individuals in which emotional or affective information is transferred from one individual to another. The idea is that people may “catch” the emotions of others when seeing their facial expressions, hearing their vocal expressions, or hearing their musical performances (see Juslin and Vastfjall, 2008).

Emotion: Affective responses to situations that usually involve a number of sub-components – subjective feeling, physiological arousal, thought, expression, action tendency, and regulation – which are more or less synchronized (Juslin and Vastfjall, 2008). Emotions are intentional; they are about an object or event.

Feelings: The subjective phenomenal character of an experience, used informally to refer to qualia or affect.

Intentionality: The power of minds to be about, to represent, or to stand for, things, properties and states of affairs (Jacob, 2010).

Psychological constructionism: The theory that emotions result from the combination of psychologically primitive processes, which encompass both affective and intentional components. A specific emotion is not the invariable result of activation in a particular brain area; rather, neural circuitry realizes more basic processes across emotion categories. Psychological constructionists argue that emotions are culturally relative and learned (see Russell and Barrett, 1999; Barrett and Bliss-Moreau, 2009; Barrett, 2009a,b).

Qualia/musical qualia: The distinctive subjective character of a mental state; what it is like to experience each state; the introspectively accessible, phenomenal aspects of our mental lives (Tye, 2013). The term musical qualia refers to the subjective character of specific musical events, experienced within a tonal and/or temporal context.

Statements

Acknowledgments

This work was supported by NSF grant BCS-1027761 to Edward W. Large. We wish to thank Dan Levitin and all of the reviewers (with special thanks to reviewer 3) for their careful reading and excellent suggestions for improving this manuscript.

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Footnotes

1.^We use the word intentionality to refer to any mental phenomena that have referential content (see glossary).

2.^f1 and f2 denote frequencies of pure tones and k and m are positive integers.

References

Allport, F. (1924). Social Psychology. New York: Houghton Mifflin.

Anders, S., Heinzle, J., Weiskopf, N., Ethofer, T., and Haynes, J. D. (2011). Flow of affective information between communicating brains. Neuroimage 54, 439–446. doi: 10.1016/j.neuroimage.2010.07.004

Angier, R. P. (1927). The conflict theory of emotion. Am. J. Psychol. 39, 390–401. doi: 10.2307/1415425

Baldi, P., and Meir, R. (1990). Computing with arrays of coupled oscillators: an application to preattentive texture discrimination. Neural Comput. 2, 458–471. doi: 10.1162/neco.1990.2.4.458

Balkwill, L. L., and Thompson, W. F. (1999). A cross-cultural investigation of the perception of emotion in music: psychophysical and cultural cues. Music Percept. 17, 43–64. doi: 10.2307/40285811

Balkwill, L. L., Thompson, W. F., and Matsunaga, R. (2004). Recognition of emotion in Japanese, Western, and Hindustani music by Japanese listeners. Jpn. Psychol. Res. 46, 337–349. doi: 10.1111/j.1468-5584.2004.00265.x

Barrett, L. F. (1998). Discrete emotions or dimensions? The role of valence focus and arousal focus. Cogn. Emot. 12, 579–599. doi: 10.1080/026999398379574

Barrett, L. F. (2004). Feelings or words? Understanding the content in self-report ratings of experienced emotion. J. Pers. Soc. Psychol. 87, 266–281. doi: 10.1037/0022-3514.87.2.266

Barrett, L. F. (2006). Valence as a basic building block of emotional life. J. Res. Pers. 40, 35–55. doi: 10.1016/j.jrp.2005.08.006

Barrett, L. F. (2009a). The future of psychology: connecting mind to brain. Perspect. Psychol. Sci. 4, 326–339. doi: 10.1111/j.1745-6924.2009.01134.x

Barrett, L. F. (2009b). Variety is the spice of life: a psychological construction approach to understanding variability in emotion. Cogn. Emot. 23, 1284–1306. doi: 10.1080/02699930902985894

Barrett, L. F., and Bliss-Moreau, E. (2009). Affect as a psychological primitive. Adv. Exp. Soc. Psychol. 41, 167–218. doi: 10.1016/S0065-2601(08)00404-8

Bhatara, A., Tirovolas, A. K., Duan, L. M., Levy, B., and Levitin, D. J. (2010). Perception of emotional expression in musical performance. J. Exp. Psychol. Hum. Percept. Perform. 37, 921–934. doi: 10.1037/a0021922

Blood, A. J., and Zatorre, R. J. (2001). Intensely pleasurable responses to music correlate with activity in brain regions implicated in reward and emotion. Proc. Natl. Acad. Sci. U.S.A. 98, 11818–11823. doi: 10.1073/pnas.191355898

Blood, A. J., Zatorre, R. J., Bermudez, P., and Evans, A. C. (1999). Emotional responses to pleasant and unpleasant music correlate with activity in paralimbic brain regions. Nat. Neurosci. 2, 382–387. doi: 10.1038/7299

Bregman, A. S. (1990). Auditory Scene Analysis: The Perceptual Organization of Sound. Cambridge, MA: MIT Press.

Brentano, F. (1973). Psychology from an Empirical Standpoint. New York: Routledge.

Burger, B., Saarikallio, S., Luck, G., Thompson, M. R., and Toiviainen, P. (2013). Relationships between perceived emotions in music and music-induced movement. Music Percept. 30, 517–533. doi: 10.1525/mp.2013.30.5.517

Burns, E. M. (1999). “Intervals, scales, and tuning,” in The Psychology of Music, ed. D. Deutsch (San Diego: Academic Press), 215–264. doi: 10.1016/B978-012213564-4/50008-1

Buzsáki, G. (2006). Rhythms of the Brain. New York: Oxford University Press. doi: 10.1093/acprof:oso/9780195301069.001.0001

Cacioppo, J. T., Berntson, G. G., Larsen, J. T., Poehlmann, K. M., and Ito, T. A. (2000). “The psychophysiology of emotion,” in The Handbook of Emotion, eds R. Lewis and J. M. Haviland-Jones (New York: Guilford Press), 173–191.

Canolty, R. T., Edwards, E., Dalal, S. S., Soltani, M., Nagarajan, S. S., Kirsch, H. E., et al. (2006). High gamma power is phase-locked to theta oscillations in human neocortex. Science 313, 1626–1628. doi: 10.1126/science.1128115

Cariani, P. A., and Delgutte, B. (1996). Neural correlates of the pitch of complex tones. I. Pitch and pitch salience. J. Neurophysiol. 76, 1698–1716.

Carr, L., Iacoboni, M., Dubeau, M.-C., Mazziotta, J. C., and Lenzi, G. L. (2003). Neural mechanisms of empathy in humans: a relay from neural systems for imitation to limbic areas. Proc. Natl. Acad. Sci. U.S.A. 100, 5497–5502. doi: 10.1073/pnas.0935845100

Cartwright, J. H. E., González, D. L., and Piro, O. (1999). Nonlinear dynamics of the perceived pitch of complex sounds. Phys. Rev. Lett. 82, 5389–5392. doi: 10.1103/PhysRevLett.82.5389

Castellano, M. A., Bharucha, J. J., and Krumhansl, C. L. (1984). Tonal hierarchies in the music of North India. J. Exp. Psychol. Gen. 113, 394–412. doi: 10.1037/0096-3445.113.3.394

Chapin, H., Jantzen, K. J., Kelso, J. A. S., Steinberg, F., and Large, E. W. (2010). Dynamic emotional and neural responses to music depend on performance expression and listener experience. PLoS ONE 5:e13812. doi: 10.1371/journal.pone.0013812

Chen, J. L., Penhune, V. B., and Zatorre, R. J. (2008). Moving on time: brain network for auditory-motor synchronization is modulated by rhythm complexity and musical training. J. Cogn. Neurosci. 20, 226–239. doi: 10.1162/jocn.2008.20018

Chordia, P., and Rae, A. (2008). “Understanding emotion in raag: an empirical study of listener responses,” in Computer Music Modeling and Retrieval. Sense of Sounds, ed. D. Swaminathan (Berlin: Springer), 110–124. doi: 10.1007/978-3-540-85035-9_7

Cosmelli, D., Lachaux, J.-P., and Thompson, E. (2006). “Neurodynamical approaches to consciousness,” in The Cambridge Handbook of Consciousness, eds P. D. Zelazo, M. Moscovitch, and E. Thompson (Cambridge: Cambridge University Press), 731–774.

Cosmides, L., and Tooby, J. (2000). “Consider the source: the evolution of adaptations for decoupling and metarepresentations,” in Metarepresentations: A Multidisciplinary Perspective, ed. D. Sperber (Oxford: Oxford University Press), 53–115.

Dalla Bella, S., Peretz, I., Rousseau, L., and Gosselin, N. (2001). A developmental study of the affective value of tempo and mode in music. Cognition 80, B1–B10. doi: 10.1016/S0010-0277(00)00136-0

Damasio, A. (1999). The Feeling of What Happens: Body and Emotion in the Making of Consciousness. New York: Harcourt Brace.

Darwin, C. (1872/1965). The Expression of the Emotions in Man and Animals. Chicago, IL: University of Chicago Press. (Original work published 1872).

Davidson, R. J. (2000). “The functional neuroanatomy of affective style,” in Cognitive Neuroscience of Emotion, eds R. D. Lane and L. Nadel (New York: Oxford University Press), 371–388.

Dewey, J. (1895). The theory of emotion. Psychol. Rev. 2, 13–32. doi: 10.1037/h0070927

Duncan, S., and Barrett, L. F. (2007). Affect is a form of cognition: a neurobiological analysis. Cogn. Emot. 21, 1184–1211. doi: 10.1080/02699930701437931

Ebeling, M. (2008). Neuronal periodicity detection as a basis for the perception of consonance: a mathematical model of tonal fusion. J. Acoust. Soc. Am. 124, 2320–2329. doi: 10.1121/1.2968688

Edelman, G. M. (1987). Neural Darwinism: The Theory of Neuronal Group Selection. New York: Basic Books.

Edelman, G. M. (2003). Naturalizing consciousness: a theoretical framework. Proc. Natl. Acad. Sci. U.S.A. 100, 5520–5524. doi: 10.1073/pnas.0931349100

Edelman, G. M., and Tononi, G. (2000). A Universe of Consciousness: How Matter Becomes Imagination. New York: Basic Books.

Ekman, P. (1972). “Universal and cultural differences in facial expressions of emotions,” in Nebraska Symposium on Motivation, ed. J. K. Cole (Lincoln: University of Nebraska Press), 207–283.

Ekman, P. (1984). “Expression and the nature of emotion,” in Approaches to Emotion, eds K. Scherer and P. Ekman (Hillsdale, NJ: Lawrence Erlbaum Associates Inc), 319–344.

Ekman, P. (1992). An argument for basic emotions. Cogn. Emot. 6, 169–200. doi: 10.1080/02699939208411068

Ekman, P. (1993). Facial expression and emotion. Am. Psychol. 48, 384–392. doi: 10.1037/0003-066X.48.4.384

Ekman, P., Friesen, W. V., O’Sullivan, M., Chan, A., Diacoyanni-Tarlatzis, I., Heider, K., et al. (1987). Universals and cultural differences in the judgments of facial expressions of emotion. J. Pers. Soc. Psychol. 53, 712–717. doi: 10.1037/0022-3514.53.4.712

Engel, A. K., and Singer, W. (2001). Nonlinear spectrotemporal sound analysis by neurons in the auditory midbrain. J. Neurosci. 22, 4114–4131.

Eschrich, S., Münte, T. F., and Altenmüller, E. O. (2008). Unforgettable film music: the role of emotion in episodic long-term memory for music. BMC Neurosci. 9:48. doi: 10.1186/1471-2202-9-48

Fritz, T., Jentschke, S., Gosselin, N., Sammler, D., Peretz, I., Turner, R., et al. (2009). Universal recognition of three basic emotions in music. Curr. Biol. 19, 573–576. doi: 10.1016/j.cub.2009.02.058

Fujioka, T., Trainor, L. J., Large, E. W., and Ross, B. (2012). Internalized timing of isochronous sounds is represented in neuromagnetic beta oscillations. J. Neurosci. 32, 1791–1802. doi: 10.1523/JNEUROSCI.4107-11.2012

Gabrielsson, A., and Juslin, P. N. (1996). Emotional expression in music performance: between the performer’s intention and the listener’s experience. Psychol. Music 24, 68–91. doi: 10.1177/0305735696241007

Grahn, J. A., and Rowe, J. B. (2009). Feeling the beat: premotor and striatal interactions in musicians and non-musicians during beat processing. J. Neurosci. 29, 7540–7548. doi: 10.1523/JNEUROSCI.2018-08.2009

Hannon, E. E., and Trehub, S. E. (2005). Metrical categories in infancy and adulthood. Psychol. Sci. 16, 48–55. doi: 10.1111/j.0956-7976.2005.00779.x

Hartmann, W. M. (1996). Pitch, periodicity, and auditory organization. J. Acoust. Soc. Am. 100, 3491–3502. doi: 10.1121/1.417248

Hennig, H., Fleischmann, R., Fredebohm, A., Hagmayer, Y., Nagler, J., Witt, A., et al. (2011). The nature and perception of fluctuations in human musical rhythms. PLoS ONE 6:e26457. doi: 10.1371/journal.pone.0026457

Herrmann, B., Henry, M., Grigutsch, M., and Obleser, J. (2013). Oscillatory phase dynamics in neural entrainment underpin illusory percepts of time. J. Neurosci. 33, 15799–15809. doi: 10.1523/JNEUROSCI.1434-13.2013

Hoppensteadt, F. C., and Izhikevich, E. M. (1996). Synaptic organizations and dynamical properties of weakly connected neural oscillators II: learning phase information. Biol. Cybern. 75, 126–135.

Hoppensteadt, F. C., and Izhikevich, E. M. (1997). Weakly Connected Neural Networks. New York: Springer. doi: 10.1007/978-1-4612-1828-9

Hove, M. J., and Risen, J. L. (2009). It’s all in the timing: interpersonal synchrony increases affiliation. Soc. Cogn. 27, 949–960. doi: 10.1521/soco.2009.27.6.949

Huron, D. B. (2005). Exploring the musical mind: cognition, emotion, ability, function. Music Sci. 9, 217–221.

Huron, D. B. (2006). Sweet Anticipation: Music and the Psychology of Expectation. Cambridge: MIT Press.

Irons, D. (1987). The nature of emotions. Philos. Rev. 6, 242–256. doi: 10.2307/2175881

Iversen, J. R., Repp, B. H., and Patel, A. D. (2006). “Metrical interpretation modulates brain responses to rhythmic sequences,” in Proceedings of the 9th International Conference on Music Perception and Cognition (ICMPC9), eds M. Baroni, A. R. Addessi, R. Caterina, and M. Costa (Bologna), 468.

Izard, C. E. (1971). The Face of Emotion. New York: Appleton Century Crofts.

Izard, C. E., Ackerman, B. P., Schoff, K. M., and Fine, S. E. (2000). “Self-organization of discrete emotions, emotion patterns, and emotion-cognition relations,” in Emotion, Development, and Self-Organization: Dynamic Systems Approaches to Emotional Development, eds M. D. Lewis and I. Granic (Cambridge: Cambridge University Press), 15–36.

Izhikevich, E. M. (2002). Resonance and selective communication via bursts in neurons having subthreshold oscillations. Biosystems 67, 95–102. doi: 10.1016/S0303-2647(02)00067-9

Jacob, P. (2010). “Intentionality,” in The Stanford Encyclopedia of Philosophy, ed. E. N. Zalta. Available at: http://plato.stanford.edu/archives/fall2010/entries/intentionality/

Janata, P., Tomic, S. T., and Haberman, J. M. (2012). Sensorimotor coupling in music and the psychology of the groove. J. Exp. Psychol. Gen. 141, 54–75. doi: 10.1037/a0024208

Janata, P., Tomic, S. T., and Rakowski, S. K. (2007). Characterization of music-evoked autobiographical memories. Memory 15, 845–860. doi: 10.1080/09658210701734593

Jones, M. R. (1990). “Musical events and models of musical time,” in Cognitive Models of Psychological Time, ed. R. A. Block (Hillsdale, NJ: Lawrence Erlbaum), 207–240.

Joris, P. X., Schreiner, C. E., and Rees, A. (2004). Neural processing of amplitude-modulated sounds. Physiol. Rev. 84, 541–577. doi: 10.1152/physrev.00029.2003

Juslin, P. N. (2000). Cue utilization in communication of emotion in music performance: relating performance to perception. J. Exp. Psychol. Hum. Percept. Perform. 26, 1797–1813. doi: 10.1037/0096-1523.26.6.1797

Juslin, P. N. (2001). “Communicating emotion in music performance: a review and a theoretical framework,” in Music and Emotion: Theory and Research, eds P. N. Juslin and J. A. Sloboda (New York: Oxford University Press), 309–337.

Juslin, P. N., and Laukka, P. (2003). Communication of emotions in vocal expression and music performance: different channels, same code? Psychol. Bull. 129, 770–814. doi: 10.1037/0033-2909.129.5.770

Juslin, P. N., and Sloboda, J. (2001). Music and Emotion: Theory and Research. New York: Oxford University Press.

Juslin, P. N., and Vastfjall, D. (2008). Emotional responses to music: the need to consider underlying mechanisms. Behav. Brain Sci. 31, 559–621. doi: 10.1017/S0140525X08005293

Kallinen, K. (2005). Emotional ratings of music excerpts in the western art music repertoire and their self-organization in the Kohonen neural network. Psychol. Music 33, 373–393. doi: 10.1177/0305735605056147

Kelso, J. A. S., de Guzman, G. C., and Holroyd, T. (1990). “The self-organized phase attractive dynamics of coordination,” in Self-Organization, Emerging Properties, and Learning, ed. A. Babloyantz (New York: Plenum Publishing Corporation), 41–62.

Kirschner, S., and Tomasello, M. (2009). Joint drumming: social context facilitates synchronization in preschool children. J. Exp. Child Psychol. 102, 299–314. doi: 10.1016/j.jecp.2008.07.005

Koelsch, S., Fritz, T., von Cramon, D. Y., Müller, K., and Friederici, A. D. (2006). Investigating emotion with music: an fMRI study. Hum. Brain Mapp. 27, 239–250. doi: 10.1002/hbm.20180

Kreutz, G., Bongard, S., and Jussis, J. V. (2002). Cardiovascular responses to music listening: on the influences of musical expertise and emotion. Music Sci. 6, 257–278.

Kreutz, G., Ott, U., Teichmann, D., Osawa, P., and Vaitl, D. (2008). Using music to induce emotions: influences of musical preference and absorption. Psychol. Music 36, 101–126. doi: 10.1177/0305735607082623

Krumhansl, C. L. (1990). Cognitive Foundations of Musical Pitch. New York: Oxford University Press.

Krumhansl, C. L. (1997). An exploratory study of musical emotions and psychophysiology. Can. J. Exp. Psychol. 51, 336–352. doi: 10.1037/1196-1961.51.4.336

Krumhansl, C. L., and Kessler, E. J. (1982). Tracing the dynamic changes in perceived tonal organization in a spatial representation of musical keys. Psychol. Rev. 89, 334–368. doi: 10.1037/0033-295X.89.4.334

Laird, J. D., and Strout, S. (2007). “Emotional behaviors as emotional stimuli,” in Handbook of Emotion Elicitation and Assessment, eds J. A. Coan and J. J. Allen (New York: Oxford University Press), 54–64.

Lakatos, P., Karmos, G., Mehta, A. D., Ulbert, I., and Schroeder, C. E. (2008). Entrainment of neuronal oscillations as a mechanism of attentional selection. Science 320, 110–113. doi: 10.1126/science.1154735

Lakatos, P., Shah, A. S., Knuth, K. H., Ulbert, I., and Schroeder, C. E. (2005). An oscillatory hierarchy controlling neuronal excitability and stimulus processing in the auditory cortex. J. Neurophysiol. 94, 1904–1911. doi: 10.1152/jn.00263.2005

Langer, S. K. (1951). Philosophy in a New Key, 2nd Edn. New York: New American Library.

Langner, G. (1992). Periodicity coding in the auditory system. Hear. Res. 60, 115–142. doi: 10.1016/0378-5955(92)90015-F

Large, E. W. (2000). On synchronizing movements to music. Hum. Mov. Sci. 19, 527–566. doi: 10.1016/S0167-9457(00)00026-9

92

Large E. W. (2008). “Resonating to musical rhythm: theory and experiment,” inThe Psychology of Timeed.GrondinS. (Cambridge:Emerald) 189–231.

93

Large E. W. (2010a). "Dynamics of musical tonality," in Nonlinear Dynamics in Human Behavior, eds R. Huys and V. Jirsa (New York: Springer), 193–211.

Large E. W. (2010b). "Neurodynamics of music," in Springer Handbook of Auditory Research: Music Perception, eds M. Riess Jones, R. R. Fay, and A. N. Popper (New York: Springer), 201–231.

Large E. W., Almonte F. (2011). Neurodynamics and Learning in Musical Tonality. Rochester, NY: Society for Music Perception and Cognition.

Large E. W., Almonte F. V. (2012). Neurodynamics, tonality, and the auditory brainstem response. Ann. N. Y. Acad. Sci. 1252, E1–E7. doi: 10.1111/j.1749-6632.2012.06594.x

Large E. W., Jones M. R. (1999). The dynamics of attending: how people track time-varying events. Psychol. Rev. 106, 119–159. doi: 10.1037/0033-295X.106.1.119

Large E. W., Kolen J. F. (1994). Resonance and the perception of musical meter. Connect. Sci. 6, 177–208. doi: 10.1080/09540099408915723

Large E. W., Kozloski J., Crawford J. D. (1998). A dynamical model of temporal processing in the fish auditory system. Assoc. Res. Otolaryngol. Abstr. 21, 717.

Laudanski J., Coombes S., Palmer A. R., Sumner C. J. (2010). Mode-locked spike trains in responses of ventral cochlear nucleus chopper and onset neurons to periodic stimuli. J. Neurophysiol. 103, 1226–1237. doi: 10.1152/jn.00070.2009

LeDoux J. E. (1996). Emotional networks and motor control: a fearful view. Prog. Brain Res. 107, 437–446. doi: 10.1016/S0079-6123(08)61880-4

LeDoux J. E. (2000). Emotion circuits in the brain. Annu. Rev. Neurosci. 23, 155–184. doi: 10.1146/annurev.neuro.23.1.155

Lee K. M., Skoe E., Kraus N., Ashley R. (2009). Selective subcortical enhancement of musical intervals in musicians. J. Neurosci. 29, 5832–5840. doi: 10.1523/JNEUROSCI.6133-08.2009

Lerdahl F. (2001). Tonal Pitch Space. New York: Oxford University Press.

Lerdahl F., Jackendoff R. (1983). An overview of hierarchical structure in music. Music Percept. 1, 229–252. doi: 10.2307/40285257

Lerud K. D., Almonte F. V., Kim J. C., Large E. W. (2013). Mode-locking neurodynamics predict human auditory brainstem responses to musical intervals. Hear. Res. 308, 41–49. doi: 10.1016/j.heares.2013.09.010

Lewis M. (2000). "The emergence of human emotions," in Handbook of Emotions, eds M. Lewis and J. M. Haviland-Jones (New York: Guilford Press), 265–280.

Lindquist K. A., Wager T. D., Kober H., Bliss-Moreau E., Barrett L. F. (2012). The brain basis of emotion: a meta-analytic review. Behav. Brain Sci. 35, 121–143. doi: 10.1017/S0140525X11000446

Lindström E., Juslin P. N., Bresin R., Williamon A. (2002). "Expressivity comes from within your soul": a questionnaire study of music students' perspectives on expressivity. Res. Stud. Music Educ. 20, 23–47. doi: 10.1177/1321103X030200010201

London J. (2004). Hearing in Time: Psychological Aspects of Musical Meter. Oxford: Oxford University Press. doi: 10.1093/acprof:oso/9780195160819.001.0001

MacCurdy J. T. (1925). The Psychology of Emotion. Oxford: Harcourt, Brace.

McAuley J. D. (1995). Perception of Time as Phase: Toward an Adaptive-Oscillator Model of Rhythmic Pattern Processing. Unpublished doctoral dissertation. Bloomington: Indiana University.

McAuley J. D., Jones M. R., Holub S., Johnston H. M., Miller N. S. (2006). The time of our lives: life span development of timing and event tracking. J. Exp. Psychol. Gen. 135, 348–367. doi: 10.1037/0096-3445.135.3.348

Mesquita B. (2003). "Emotions as dynamic cultural phenomena," in Handbook of the Affective Sciences, eds R. Davidson and K. Scherer (New York: Oxford University Press), 871–890.

Meyer L. B. (1956). Emotion and Meaning in Music. Chicago: University of Chicago Press.

Mohn C., Argstatter H., Wilker F. W. (2011). Perception of six basic emotions in music. Psychol. Music 39, 503–517. doi: 10.1177/0305735610378183

Molnar-Szakacs I., Overy K. (2006). Music and mirror neurons: from motion to emotion. Soc. Cogn. Affect. Neurosci. 1, 235–241. doi: 10.1093/scan/nsl029

Musacchia G., Large E. W., Schroeder C. E. (2013). Thalamocortical mechanisms for integrating musical tone and rhythm. Trends Neurosci512812–2824.

Narmour E. (1990). The Analysis and Cognition of Basic Melodic Structures: The Implication-Realization Model. Chicago: University of Chicago Press.

Nozaradan S., Peretz I., Missal M., Mouraux A. (2011). Tagging the neuronal entrainment to beat and meter. J. Neurosci. 31, 10234–10240. doi: 10.1523/JNEUROSCI.0411-11.2011

Ortony A., Clore G. L., Collins A. (1988). The Cognitive Structure of Emotions. Cambridge: Cambridge University Press. doi: 10.1017/CBO9780511571299

Ortony A., Turner T. J. (1990). What's basic about basic emotions? Psychol. Rev. 97, 315–331. doi: 10.1037/0033-295X.97.3.315

Panksepp J. (1998). Affective Neuroscience. New York: Oxford University Press.

Peretz I., Gagnon L., Bouchard B. (1998). Music and emotion: perceptual determinants, immediacy, and isolation after brain damage. Cognition 68, 111–141. doi: 10.1016/S0010-0277(98)00043-2

Pessoa L. (2008). On the relationship between emotion and cognition. Nat. Rev. Neurosci. 9, 148–158. doi: 10.1038/nrn2317

Pinker S. (1997). How the Mind Works. New York: Norton.

Plack C. J., Oxenham A. J. (2005). "The psychophysics of pitch," in Pitch: Neural Coding and Perception, eds C. J. Plack, R. R. Fay, A. J. Oxenham, and A. N. Popper (New York: Springer), 7–55.

Plomp R., Levelt W. J. M. (1965). Tonal consonance and critical bandwidth. J. Acoust. Soc. Am. 38, 548–560. doi: 10.1121/1.1909741

Pressnitzer D., Patterson R. D., Krumbholz K. (2001). The lower limit of melodic pitch. J. Acoust. Soc. Am. 109, 2074–2084. doi: 10.1121/1.1359797

Rankin S. K. (2010). 1/f Structure of Temporal Fluctuation in Rhythm Performance and Rhythmic Coordination. Unpublished doctoral dissertation, Florida Atlantic University.

Rankin S. K., Large E. W., Fink P. W. (2009). Fractal tempo fluctuation and pulse prediction. Music Percept. 26, 401–413. doi: 10.1525/mp.2009.26.5.401

Rapaport D. (1950). Emotions and Memory, 2nd Edn. New York: International University Press.

Repp B. H. (2005a). Rate limits of on-beat and off-beat tapping with simple auditory rhythms: 1. Qualitative observations. Music Percept. 22, 479–496. doi: 10.1525/mp.2005.22.3.479

Repp B. H. (2005b). Rate limits of on-beat and off-beat tapping with simple auditory rhythms: 2. The roles of different kinds of accent. Music Percept. 23, 165–187. doi: 10.1525/mp.2005.23.2.165

Repp B. H. (2005c). Sensorimotor synchronization: a review of the tapping literature. Psychon. Bull. Rev. 12, 969–992. doi: 10.3758/BF03206433

Rizzolatti G., Craighero L. (2004). The mirror-neuron system. Annu. Rev. Neurosci. 27, 169–192. doi: 10.1146/annurev.neuro.27.070203.144230

Ruggero M. A. (1992). Responses to sound of the basilar membrane of the mammalian cochlea. Curr. Opin. Neurobiol. 2, 449–456. doi: 10.1016/0959-4388(92)90179-O

Russell J. A. (1991). Culture and the categorization of emotion. Psychol. Bull. 110, 426–450. doi: 10.1037/0033-2909.110.3.426

Russell J. A. (2003). Core affect and the psychological construction of emotion. Psychol. Rev. 110, 145–172. doi: 10.1037/0033-295X.110.1.145

Russell J. A., Barrett L. F. (1999). Core affect, prototypical emotional episodes, and other things called emotion: dissecting the elephant. J. Pers. Soc. Psychol. 76, 805–819. doi: 10.1037/0022-3514.76.5.805

Salimpoor V. N., Chang C., Menon V. (2010). Neural basis of repetition priming during mathematical cognition: repetition suppression or repetition enhancement? J. Cogn. Neurosci. 22, 790–805. doi: 10.1162/jocn.2009.21234

Schellenberg E. G., Trehub S. E. (1996). Natural musical intervals: evidence from infant listeners. Psychol. Sci. 7, 272–277. doi: 10.1111/j.1467-9280.1996.tb00373.x

Schellenberg E. G., Trehub S. E. (1999). Culture-general and culture-specific factors in the discrimination of melodies. J. Exp. Child Psychol. 74, 107–127. doi: 10.1006/jecp.1999.2511

Scherer K. R. (2001). "Appraisal considered as a process of multilevel sequential processing," in Appraisal Processes in Emotion: Theory, Methods, Research, eds K. R. Scherer, A. Schorr, and T. Johnstone (New York: Oxford University Press), 92–120.

Schubert E. (1999). Measuring emotion continuously: validity and reliability of the two-dimensional emotion-space. Austr. J. Psychol. 51, 154–165. doi: 10.1080/00049539908255353

Schubert E. (2001). "Continuous measurement of self-report emotional response to music," in Music and Emotion: Theory and Research, eds P. N. Juslin and J. A. Sloboda (New York: Oxford University Press), 393–414.

Schubert E. (2004). Modeling perceived emotion with continuous musical features. Music Percept. 21, 561–585. doi: 10.1525/mp.2004.21.4.561

Searle J. R. (1992). The Rediscovery of the Mind. Cambridge, MA: MIT Press.

Searle J. R. (2000). Consciousness. Annu. Rev. Neurosci. 23, 557–578. doi: 10.1146/annurev.neuro.23.1.557

Searle J. R. (2001). Free will as a problem in neurobiology. Philosophy 76, 491–514. doi: 10.1017/S0031819101000535

Searle J. R. (2004). Mind: A Brief Introduction. New York: Cambridge University Press.

Seth A. K. (2007). Models of consciousness. Scholarpedia 2, 1328. doi: 10.4249/scholarpedia.1328

Seth A. K., Izhikevich E., Reeke G. N., Edelman G. M. (2006). Theories and measures of consciousness: an extended framework. Proc. Natl. Acad. Sci. U.S.A. 103, 10799–10804. doi: 10.1073/pnas.0604347103

Shapira Lots I., Stone L. (2008). Perception of musical consonance and dissonance: an outcome of neural synchronization. J. R. Soc. Interface 5, 1429–1434. doi: 10.1098/rsif.2008.0143

Sloboda J. A., O'Neill S. A. (2001). "Emotions in everyday listening to music," in Music and Emotion: Theory and Research, eds P. N. Juslin and J. A. Sloboda (New York: Oxford University Press), 415–429.

Smith C. A., Kirby L. D. (2001). "Toward delivering on the promise of appraisal theory," in Appraisal Processes in Emotion: Theory, Methods, Research, eds K. R. Scherer and A. Schorr (New York: Oxford University Press), 121–138.

Snyder J. S., Large E. W. (2005). Gamma-band activity reflects the metric structure of rhythmic tone sequences. Cogn. Brain Res. 24, 117–126. doi: 10.1016/j.cogbrainres.2004.12.014

Stefanics G., Hangya B., Hernadi I., Winkler I., Lakatos P., Ulbert I. (2010). Phase entrainment of human delta oscillations can mediate the effects of expectation on reaction speed. J. Neurosci. 30, 13578–13585. doi: 10.1523/JNEUROSCI.0703-10.2010

Temperley D. (2007). Music and Probability. Cambridge, MA: The MIT Press.

Tillmann B., Bharucha J. J., Bigand E. (2000). Implicit learning of tonality: a self-organizing approach. Psychol. Rev. 107, 885–913. doi: 10.1037/0033-295X.107.4.885

Tomic S. T., Janata P. (2008). Beyond the beat: modeling metric structure in music and performance. J. Acoust. Soc. Am. 124, 4024–4041. doi: 10.1121/1.3006382

Tomkins S. S. (1962). Affect, Imagery, Consciousness, Volume 1: The Positive Affects. New York: Springer.

Tomkins S. S. (1963). Affect, Imagery, Consciousness, Volume 2: The Negative Affects. New York: Springer.

Tononi G., Edelman G. M. (1998). Consciousness and the integration of information in the brain. Adv. Neurol. 77, 245–279.

Trainor L. J., Trehub S. E. (1992). A comparison of infants' and adults' sensitivity to Western musical structure. J. Exp. Psychol. Hum. Percept. Perform. 18, 394–402. doi: 10.1037/0096-1523.18.2.394

Tramo M. J., Cariani P. A., Delgutte B., Braida L. D. (2001). Neurobiological foundations for the theory of harmony in Western tonal music. Ann. N. Y. Acad. Sci. 930, 92–116. doi: 10.1111/j.1749-6632.2001.tb05727.x

Trehub S. E., Schellenberg E. G., Kamenetsky S. B. (1999). Infants' and adults' perception of scale structure. J. Exp. Psychol. Hum. Percept. Perform. 25, 965–975. doi: 10.1037/0096-1523.25.4.965

Tye M. (2013). "Qualia," in The Stanford Encyclopedia of Philosophy, ed. E. N. Zalta. Available at: http://plato.stanford.edu/archives/fall2013/entries/qualia/

Varela F., Lachaux J. P., Rodriguez E., Martinerie J. (2001). The brainweb: phase synchronization and large-scale integration. Nat. Rev. Neurosci. 2, 229–239. doi: 10.1038/35067550

Will U., Berg E. (2007). Brain wave synchronization and entrainment to periodic acoustic stimuli. Neurosci. Lett. 424, 55–60. doi: 10.1016/j.neulet.2007.07.036

Wilson-Mendenhall C. D., Barrett L. F., Barsalou L. W. (2013). Neural evidence that human emotions share core affective properties. Psychol. Sci. 24, 947–956. doi: 10.1177/0956797612464242

Winkler I., Háden G., Ladinig O., Sziller I., Honing H. (2009). Newborn infants detect the beat in music. Proc. Natl. Acad. Sci. U.S.A. 106, 2468–2471. doi: 10.1073/pnas.0809035106

Wundt W. (1897). Outlines of Psychology (C. H. Judd, Trans.). Oxford: Engelman. doi: 10.1037/12908-000

Zatorre R. J., Chen J. L., Penhune V. B. (2007). When the brain plays music: auditory–motor interactions in music perception and production. Nat. Rev. Neurosci. 8, 547–558. doi: 10.1038/nrn2152

Zuckerkandl V. (1956). Sound and Symbol: Music and the External World (W. R. Trask, Trans.). Princeton: Princeton University Press.

Keywords

neurodynamics, consciousness, affect, emotion, musical expectancy, oscillation, synchrony

Citation

Flaig NK and Large EW (2014) Dynamic musical communication of core affect. Front. Psychol. 5:72. doi: 10.3389/fpsyg.2014.00072

Received

30 April 2013

Accepted

19 January 2014

Published

17 March 2014

Volume

5 - 2014

Edited by

Daniel J. Levitin, McGill University, Canada

Reviewed by