- ¹School of Education Science, Nanjing Normal University, Nanjing, China

- ²Department of Computer Science and Engineering, National Taiwan Ocean University, Keelung, Taiwan

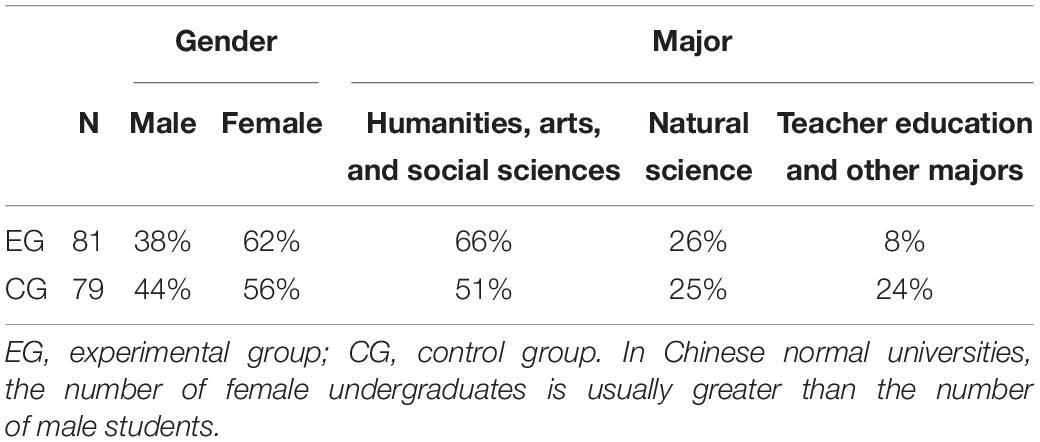

In order for higher education to provide students with up-to-date knowledge and relevant skillsets for their continued learning, it needs to keep pace with innovative pedagogy and cognitive sciences to ensure inclusive and equitable quality education for all. An adequate implementation of flipped learning, which can offer undergraduates an education appropriate to a knowledge-based society, requires moving from traditional educational models to innovative pedagogy integrated with a playful learning environment (PLE) supported by information and communications technologies (ICTs). In this paper, following a design-based research approach, a task-driven instructional approach in the flipped classroom (TDIAFC) was designed and implemented for two groups of participants in an undergraduate hands-on making course in a PLE. An experimental group (EG) of 81 students received flipped learning instruction, and a control group (CG) of 79 students received lecture-centered instruction. The EG students experienced a three-round study, with results from the first round informing the customized design of the second round and the second round informing the third round. The experimental results demonstrated that students in the EG obtained higher summative test scores and final scores than those in the CG. In particular, students’ learning performance in three domains (i.e., cognitive, affective, and psychomotor) differed significantly between the two groups.

Introduction

Collis (1998) offered a concept for pedagogical re-engineering of existing courses that is more flexible, involves more student engagement and better structure, and is more attuned to students’ responsibility for their own continued learning. Wright and Cordeaux (1996) similarly recommended a shift from instructor-transmission models to learner-oriented classrooms, taught according to the process-based model, to achieve effective learning. Innovative pedagogy can help teachers and their students develop their identities in relation to each other. With the support of information and communications technology (ICT), which plays an increasingly important role in education for sustainable development (Carrión-Martínez et al., 2020), learners in an innovative pedagogy classroom take responsibility for communicating their meanings, freely conveying socio-affective and meta-cognitive factors through social interaction (Farren, 2016). In line with these ideas, many innovative pedagogies have been proposed, for example, inquiry-based learning (Schwab, 1962), problem-based learning (PBL), first implemented in 1969 at McMaster University School of Medicine (Servant-Miklos, 2019), play-based learning (Cheng and Stimpson, 2004), and design-based learning (Nelson, 1984).

Based on concepts explored during the 1990s, Baker (2000) presented the flipped classroom pedagogy, which captures the essence of innovative pedagogy. With the support of mobile technology, such as phones and tablets, which can afford new opportunities to directly influence learning processes and outcomes (Bernacki et al., 2020), the flipped classroom is being implemented in a wide range of disciplines (mathematics, social sciences, humanities, etc.) at a variety of educational levels across many countries (Hao, 2016; Hinojo-Lucena et al., 2018; Buil-Fabregà et al., 2019; Su et al., 2019a,b, 2020). Many instructors in traditional higher education institutions have therefore considered redesigning their instruction as flipped classes. For example, Lombardini et al. (2018) designed two flipped classrooms, a partial flip and a full flip, to examine students’ learning effectiveness in a microeconomics course, and found that, because of the workload, students were less satisfied with the full flip than with the partial flip. Many studies have combined the task-driven instructional approach with the flipped classroom, and evaluations show that this combination has achieved some degree of success (Yin, 2015; Li, 2017; Hua, 2019; Su and Wu, 2021).

The term “playful learning” was defined by Kangas et al. (2017) as learning activities that are designed and implemented to promote students’ playful engagement and exploration. Playful learning has also been shown to facilitate students’ creativity and imagination (Kangas, 2010a). As an active learning method, a PLE aims to physically engage students in learning tasks (De Koning-Veenstra et al., 2014). In a PLE, novel tools and technologies can be applied to support learning, and students can learn with imagination and a playful attitude. However, few studies have examined students’ learning engagement and learning outcomes in flipped classroom hands-on making courses in a PLE. In a PLE, learning occurs through various playful and physical learning activities, including hands-on and body-on activities (Säljö, 2005, 2006). Because the content of hands-on making courses is closely related to practical knowledge, students can benefit from a PLE, which helps anchor their knowledge (Rajesh et al., 2019). Therefore, this study used a hands-on course, Media Making with Chinese Culture, to explore participants’ learning effectiveness with the flipped classroom approach in a PLE.

Empirical studies on learning effectiveness usually measure improvement in only one aspect of learning performance or a single learning outcome. To fully describe the effectiveness of a flipped classroom approach, an evaluation framework that measures overall learning performance or learning outcomes is needed. With such a framework, each learning performance dimension can be compared to identify which aspects of learning have improved in a given flipped classroom, for instance, the effect of collaborative learning strategies on learner performance (McDonough and Foote, 2015). Few studies have integrated the different dimensions of effectiveness into an evaluation framework that allows comparisons of change across dimensions. To fill this research gap, this study provided a detailed description of the empirically derived, task-driven instructional adjustments made during three rounds of pilot testing, which were customized and applied to a conventional classroom to promote students’ learning effectiveness. The study further proposed an evaluation framework for comparing the learning performance of the two groups of students to determine the effectiveness of the flipped classroom approach in a PLE.

Literature Review

Flipped Classroom

The flipped classroom was first defined in 1996 (Lage et al., 2000) as an “inverted classroom” that involved a significant change in the order of pre-class and in-class instruction. Baker (2000) suggested the name “classroom flipping” consistent with the changing role of teachers. Teachers changed from being the “Sage on the Stage” to the “Guide on the Side” in the flipped classroom, and the content to be learned was not taught by the teacher via face-to-face classroom interactions but was instead learned by the students themselves outside the classroom via online learning using various resources. In the conventional teacher-centered classroom instruction model, teachers instruct students in the classroom. Compared with this traditional model, the flipped classroom instructional model offers new possibilities in terms of incorporating digital instructional materials to increase teachers’ ability to teach concepts, motivate students to learn, and promote learning achievements (Kazanidis et al., 2019).

Many pedagogies have been implemented in flipped classroom settings, for example, task-oriented project-based teaching (Li and Ma, 2015), problem-based teaching (Feng et al., 2016), inquiry-based learning (Kim and Ahn, 2017), game-based learning (Cheng et al., 2018), and project-based learning (Fan, 2018). These new models have the potential to enhance students’ participation in the learning environment, improve the learning process, and advance performance results (Yilmaz, 2016).

Design-Based Research

Design-based research (DBR), also referred to as design research, design experiment, and development research (van den Akker, 1999), is a theoretical framework focused on real-world problems with the goal of improving learning. DBR contributes to both theoretical knowledge and societal education. Reeves (2006) summarized DBR in four phases: (1) analyzing problems in the authentic classroom, (2) designing and developing solutions according to students’ prior knowledge, (3) evaluating the effectiveness of the solutions in the authentic classroom, and (4) providing insights into the whole design process and its principles. Although few studies have reported on the entire DBR procedure, a review revealed that this approach has yielded promising results (Anderson and Shattuck, 2012). Vanderhoven et al. (2016) used the DBR approach to develop effective educational materials that teach children in secondary education (aged 12–19 years) how to act safely on social network sites, and found that DBR effectively led to practical solutions and design principles.

Task-Driven Instructional Approach in Flipped Classroom

In the task-driven method, the teacher arranges tasks for students to complete autonomously to achieve knowledge construction (Sun and Wu, 2018). By performing learning activities on their own, students gradually cultivate their capacity for independent exploration and autonomous learning (Liu and Niu, 2018). The task-driven instructional approach has been applied in subjects such as English, computer studies, and engineering. Liu and Niu (2018) showed that the task-driven method has a great advantage over traditional teaching methods. In recent years, research has focused on the task-driven instructional approach in the flipped classroom. Kakh and Mansor (2014) applied task instruction in graduate students’ writing instruction. They proposed that task preparation should consist of selecting appropriate task themes, designing task activities, validating the task, piloting the task, and engaging the participants in doing the task. Yin (2015) designed a task-driven model in a flipped classroom, and practice showed that it achieved some degree of success. Li (2017) designed a task-driven model based on the flipped classroom for a “database principles” course, while Hua (2019) constructed a task-driven teaching mode based on the concept of the flipped classroom and found that it better exploited the advantages of flipped classrooms and thus enhanced teaching effectiveness. Thus, the present study designed and implemented a task-driven instructional approach in the flipped classroom (TDIAFC) for an undergraduate hands-on making course.

Evaluation Framework of Learning Effectiveness

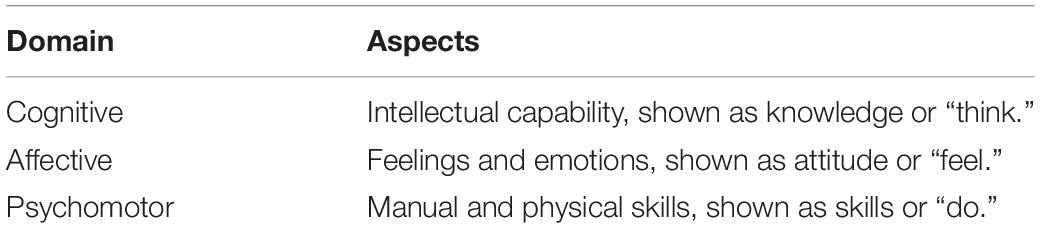

Learning outcomes are the knowledge or skills students have acquired by the end of an instructional period. Bloom’s taxonomy (Bloom et al., 1956) described three domains of learner achievement: the cognitive, affective, and psychomotor domains (see Table 1). The cognitive domain concerns knowledge and mental skills (Wilson, 2017). Shi et al. (2020) analyzed college students’ cognitive learning outcomes in the flipped classroom. In contrast to the cognitive domain, the affective domain refers to attitudes, emotions, and feelings. Recently, the affective domain has received increasing attention and has been researched in several fields, including science (Jeong et al., 2019) and medicine (Pagatpatan, 2020). The psychomotor domain was first described in 1964 (Krathwohl et al., 1964) and concerns utilizing and coordinating motor skills. Sicherl-Kafol et al. (2014) stated that, in comparison to the cognitive and affective domains, little work had been done on the psychomotor domain, although more research has been conducted on this domain in recent years. Previous studies have evaluated learning performance along many separate dimensions, but few have integrated the different dimensions of effectiveness into a single evaluation framework.

According to Rahmat and Saudi (2008), each domain can be divided into three levels, and the three domains respectively describe the content of thinking, feeling, and doing (see Table 1). All three domains have been considered in evaluations of learning performance (Handayani et al., 2018; Güneş and Yılmaz, 2019; Su and Chen, 2020). The learning performance evaluation framework in this study covered all three domains.

Playful Learning Environment

Kangas (2010a) proposed the playful learning environment (PLE), a novel and pedagogically validated learning environment that includes both indoor and outdoor learning settings supported by ICTs. The features of playful learning are associated with collaboration, playfulness, creativity, narration, emotion, embodiment, and media richness (Hyvönen, 2008; Kangas, 2010a,b). For learners, playfulness is an attitude toward learning through play or games in a PLE (Säljö, 2005, 2006). Existing studies showed that learning in a PLE had positive effects on academic achievement for school students and for learners in working life (Sawyer, 2006). Kangas et al. (2017) proposed that playful learning in a PLE constructed with ICT tools was an effective learning approach, because learners in a PLE showed an active, playful, and imaginative attitude throughout the learning process. Studies have also supported the idea that a PLE can promote engaging, insightful, and hands-on learning that usually produces a joy of learning (Csikszentmihalyi, 1990; Resnick, 2006; Kangas, 2010b).

However, although many studies support learning in a PLE, learners do not automatically benefit from the learning process. Johnson (2004) introduced the play evaluation continuum to illustrate the influence of the playful learning approach on learners. Playful learning can have both positive and negative effects on learners: play or a game may be psychologically harmful to a learner, to other learners, or to the learning environment. Therefore, it is important to set rules for play and to create tools that evaluate the effectiveness of the learning approach in a PLE. In this study, a PLE was built into the flipped classroom approach to promote learners’ learning. By combining the task-driven approach with the evaluation framework of learning effectiveness, it was expected that learners would benefit from the positive effects of both the flipped classroom and the PLE.

Research Hypotheses

The aim of the study was to design a model that embeds features of Chinese culture in media making. TDIAFC was implemented in this course.

In the flipped classroom, rather than using class time to transmit knowledge to students by lecturing, the teacher engages with the students through discussion, problem solving, hands-on activities, and scaffolding (Akçayır and Akçayır, 2018). To analyze how this flipped strategy influences students’ learning effectiveness, the following research hypotheses are proposed:

H1: Students’ learning effectiveness is significantly improved through successive revisions of the task-driven instructional approach over the three-round flipped classroom, which provides a personalized design of the flipped classroom approach.

H2: According to the evaluation framework, students’ learning performance differs significantly in three domains (i.e., cognitive, affective, and psychomotor) between students who participate in flipped learning instruction and their peers who participate in lecture-centered classroom instruction.

Materials and Methods

Participants

Participants comprised two groups of students (see Table 2) who enrolled in a hands-on course named Media Making with Chinese Culture at a university. The course, one of the most popular general education elective courses at this university and open to interested students of any major, focuses on using ICTs and mobile technology to convey the meanings of Chinese traditional culture. The course is also offered as an open online course on the iCourse platform. All study participants were sophomores or juniors, as the course is not open to first-year students; all freshmen are required to pass a computer test at the end of their first year to ensure that they have obtained the basic ICT and mobile technology skills needed to study online. Given the classroom capacity for face-to-face instruction, each class section held about 80 students. Because the course was so popular, 160 students enrolled in the same semester: 81 students enrolled in one class section, and a second section was opened for the remaining 79 students. The two class sections thus formed the EG of 81 participants and the CG of 79 participants. Participants were randomly assigned to the two groups.

In this study, both the EG and CG students were instructed in the same PLE, which was equipped with multiple technologies and rich media tools for students to create their hands-on works. Enough computers with various software and access to online tools were available for each student to design and make the works. For example, KAHOOT, a web-based platform that allows users to easily create and play interactive, multiple-choice-style games (Zucker and Fisch, 2019), was provided for playful learning in the classroom. Other equipment, such as cameras, video cameras, three-dimensional (3D) printers, and other hands-on making materials, was also provided in the classroom. Additionally, learning resources such as lectures, tutorials, and training videos on Chinese culture were provided for knowledge learning and skills training. With these tools, technologies, and materials, a playful and engaging learning environment was developed, in which students could design and create hands-on works about Chinese culture through playful learning.

Instruments

Students’ learning effectiveness was evaluated by the pre-test score, summative score, final score, and learning performance evaluation. The pre-test score was the score of the first coursework assignment. The summative score was calculated from the last four coursework assignments. The final score, calculated from all five coursework assignments, served as each student’s comprehensive course score. All scores were on a 100-point scale, as required for course grades at the university. The results for the two groups were then compared.
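As an illustration of how the three scores relate, the following Python sketch derives them from one student's five coursework scores. The study does not state how the assignments were weighted, so equal weighting (a simple mean) is assumed here; the function name `course_scores` is hypothetical.

```python
from statistics import mean


def course_scores(assignments):
    """Return (pre_test, summative, final) from five coursework scores on a 100-point scale.

    ASSUMPTION: the summative and final scores are unweighted means; the study
    does not report the weights actually used.
    """
    if len(assignments) != 5:
        raise ValueError("expected exactly five coursework scores")
    if any(not 0 <= s <= 100 for s in assignments):
        raise ValueError("scores must lie on the 100-point scale")
    pre_test = assignments[0]          # first assignment serves as the pre-test
    summative = mean(assignments[1:])  # last four assignments
    final = mean(assignments)          # all five assignments
    return pre_test, summative, final


# Hypothetical scores for one student.
print(course_scores([70, 80, 90, 85, 75]))
```

If the course used unequal weights, only the two `mean` calls would need to change to weighted sums.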

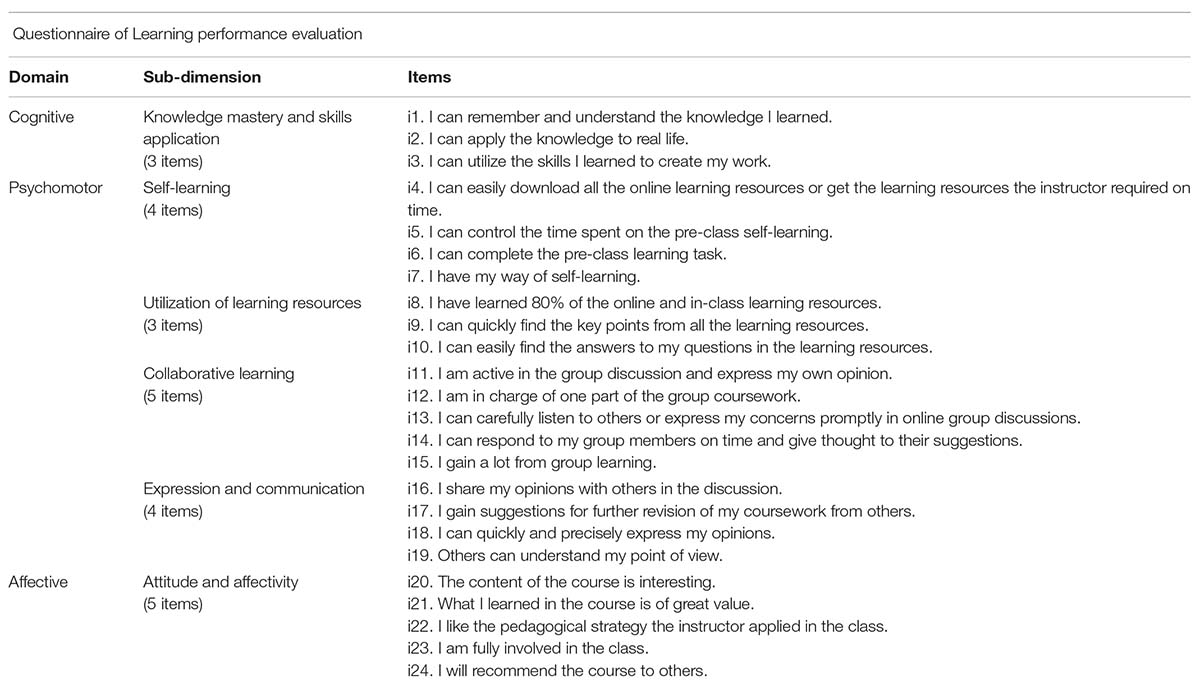

The learning performance was evaluated by the learning evaluation framework designed as a questionnaire based on Bloom’s taxonomy of domains. This framework covered the cognitive, psychomotor, and affective domains. Six variables were defined according to the three domains. The first variable, knowledge mastery and skills application (KNO), was based on the cognitive domain and “deal[t] with the recall or recognition of knowledge and the development of intellectual abilities and skills” (Bloom et al., 1956, p. 7). In Bloom’s taxonomy, the psychomotor domain was not mentioned in detail (Bloom et al., 1956, pp. 7–8). The second through fifth variables in the psychomotor domain, based on the instructional design and learning requirement of the hands-on course and previous studies (Edward, 2002; Krivickas and Krivickas, 2007), were, respectively, defined as self-learning (SELF), the utilization of learning resources (UTI), collaborative learning (COL), and expression and communication (EXP). The sixth variable, attitude and affectivity (ATT; Syaiful et al., 2019), was based on the affective domain and “describe[s] changes in interest, attitudes, and values, and the development of appreciations and adequate adjustment” (Bloom et al., 1956, p. 7).

The questionnaire (see Appendix) comprised three sections corresponding to the three domains. The cognitive domain included three items for KNO. The psychomotor domain included four items for SELF, three items for UTI, five items for COL, and four items for EXP. The affective domain included five items for ATT, giving 24 items in total. Each item was rated on a Likert-type scale from 1 (strongly disagree) to 5 (strongly agree).
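The item layout can be encoded directly. The Python sketch below maps the 24 items to the six variables, using the item numbering reported in the factor analysis results (i1 to i24, in the order KNO, SELF, UTI, COL, EXP, ATT), and averages one respondent's answers per variable. The names `ITEM_MAP` and `construct_scores`, and the choice to average rather than sum item responses, are illustrative assumptions rather than the authors' scoring procedure.

```python
from statistics import mean

# Item counts per variable, in questionnaire order.
CONSTRUCT_SIZES = [("KNO", 3), ("SELF", 4), ("UTI", 3), ("COL", 5), ("EXP", 4), ("ATT", 5)]


def build_item_map(sizes):
    """Assign consecutive item ids to each variable, e.g. KNO -> ['i1', 'i2', 'i3']."""
    item_map, next_id = {}, 1
    for name, count in sizes:
        item_map[name] = [f"i{k}" for k in range(next_id, next_id + count)]
        next_id += count
    return item_map


ITEM_MAP = build_item_map(CONSTRUCT_SIZES)


def construct_scores(response):
    """Average one respondent's 1-5 Likert answers within each variable.

    `response` is a dict such as {"i1": 4, "i2": 5, ...} covering all 24 items.
    Averaging is an assumption made for illustration.
    """
    for item, value in response.items():
        if value not in (1, 2, 3, 4, 5):
            raise ValueError(f"{item} must be an integer from 1 to 5")
    return {name: mean(response[i] for i in items) for name, items in ITEM_MAP.items()}


# Hypothetical respondent: "agree" everywhere except two KNO items.
example = {f"i{k}": 4 for k in range(1, 25)}
example.update({"i1": 5, "i2": 3})
print(construct_scores(example))
```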

Design of the Study of Three-Round TDIAFC

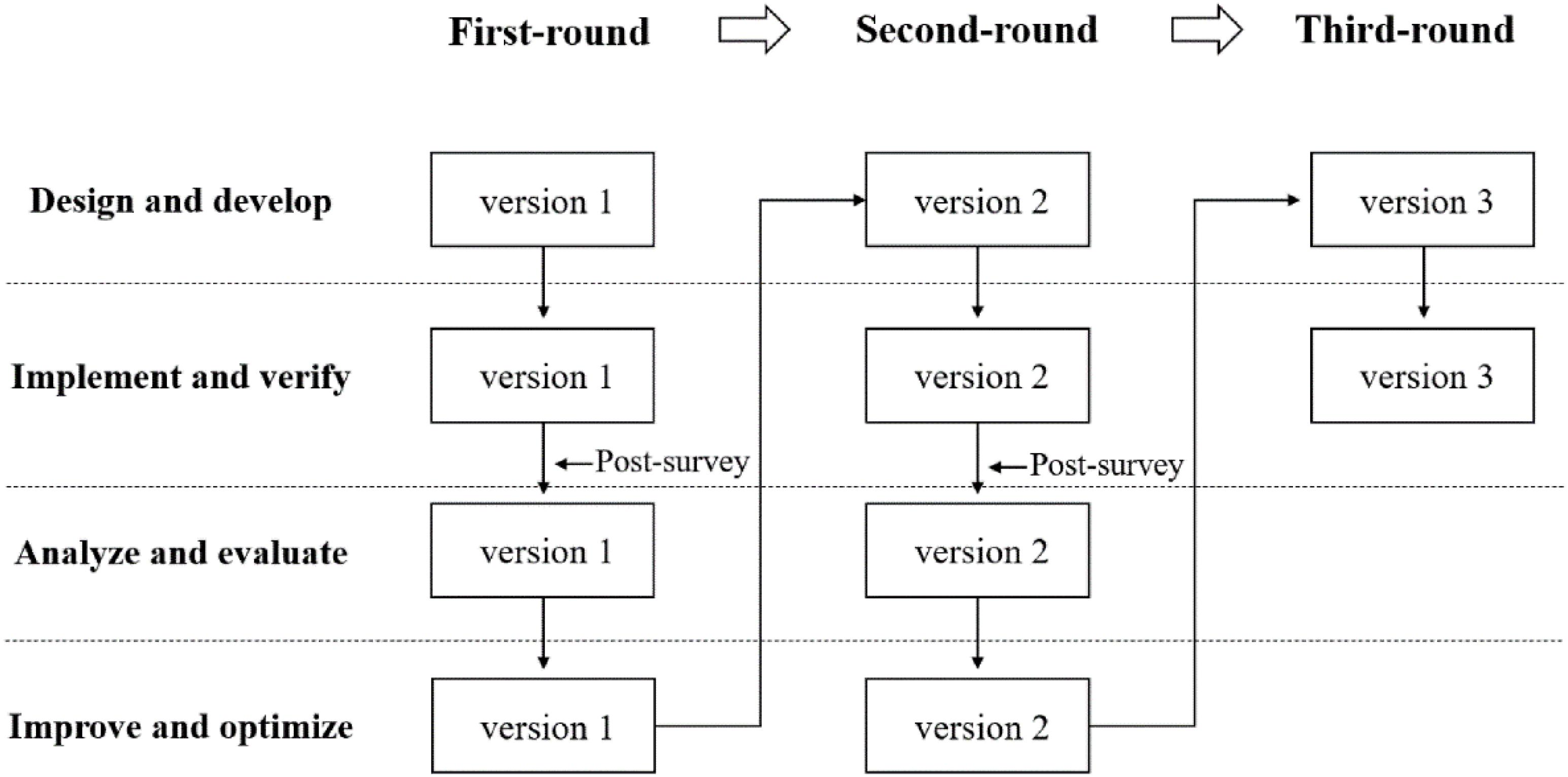

Following the design-based research and task-driven approaches, this study designed four interconnected phases: (1) design and develop TDIAFC, (2) implement and verify TDIAFC, (3) analyze and evaluate TDIAFC, and (4) improve and optimize TDIAFC. Vanderhoven et al. (2016) stated that DBR requires multiple iterations. In this study, a three-round experiment of TDIAFC was carried out (see Figure 1).

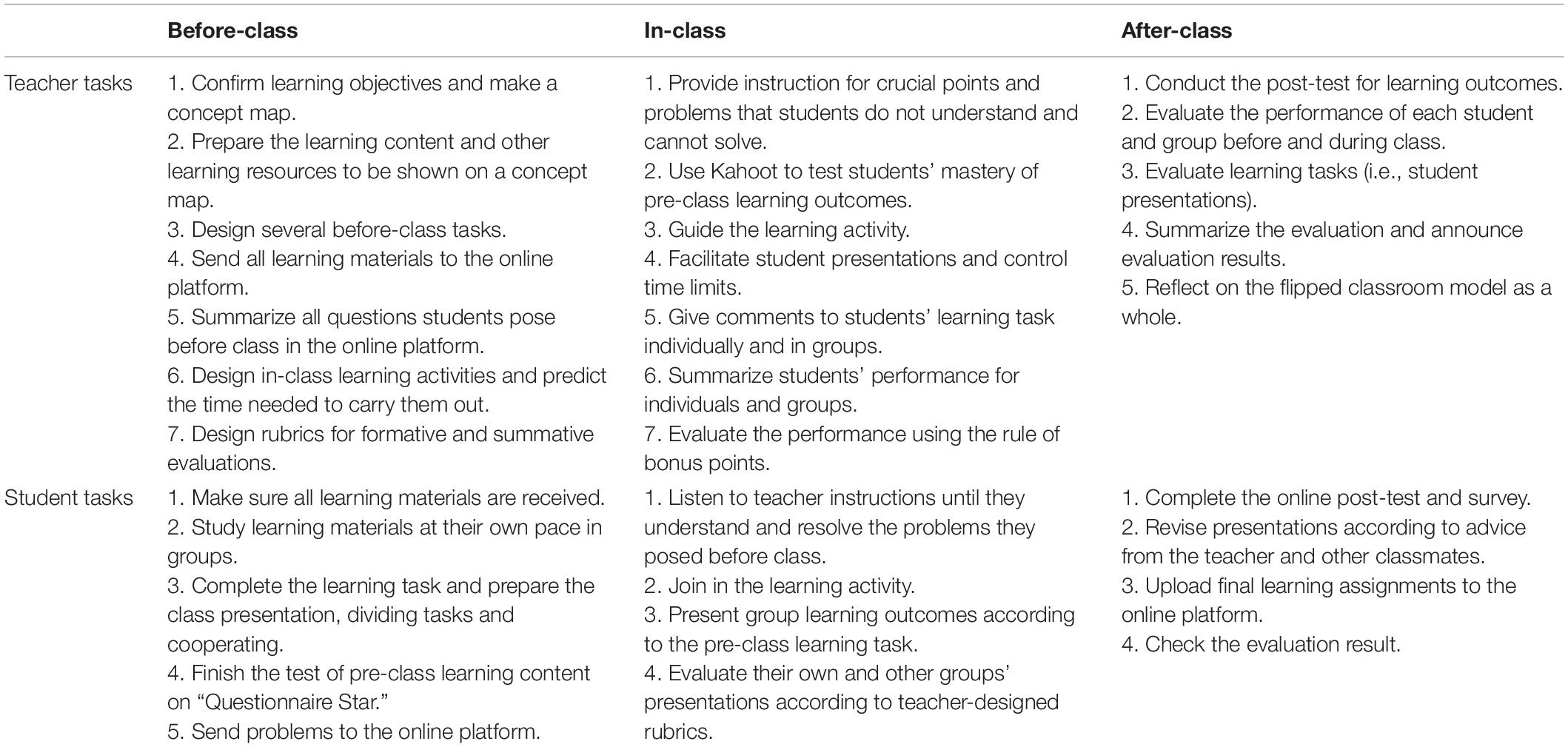

In the first round, practitioners and teachers designed version 1 of TDIAFC based on the theory of constructivism. After implementing version 1 of the experiment, a first post-experiment survey was designed to identify the problems in the first round. Based on the feedback on the first round of the study, version 1 of TDIAFC was revised and version 2 was developed. In the second round, version 2 of TDIAFC was implemented. The same post-experiment survey as used in the first round was administered to identify the problems in the second-round experiment. Based on the feedback of the second round of the study, version 2 of TDIAFC was revised and version 3 was developed. In the last round, version 3 was implemented. Version 3 (see Table 3) includes learning tasks that were specifically designed from the beginning to the end of the flipped classroom instruction.

Procedure

Both the EG and the CG students experienced 8 weeks of learning, which covered five themes. The EG students were instructed using TDIAFC and experienced a three-round instructional process. In the 1st week, the EG received basic readiness training; they then experienced the first round, version 1 of TDIAFC, in the 2nd and 3rd weeks, completing the pre-class, in-class, and after-class tasks for the first theme. In the second round, the EG participants were instructed with version 2 of TDIAFC in the 4th and 5th weeks to complete the second and third themes. In the third round, they were instructed using version 3 of TDIAFC in the 6th and 7th weeks to complete the last two themes. Thus, there were five themes and five coursework assignments for both groups.

The teacher, learning content, and coursework were the same for the CG and the EG participants, but the CG was taught via lectures, without any components of the flipped approach. The course adopted a group cooperative learning approach, consistent with peer instruction theory (Crouch and Mazur, 2001). Peer instruction makes use of student interaction and helps students increase engagement, understanding, and problem-solving ability. For each lesson, the students of each class section completed the designed tasks in groups. In the final week, the evaluation of all coursework was summarized, and the questionnaire, administered through an online survey tool named Questionnaire Star, was opened to both groups. Before submitting their responses, the participants were informed that they were participating in this study, that the study aimed to improve instructional strategies, and that the findings would be published. The data they provided were anonymous and would neither be used for commercial purposes nor influence their final course scores. All the students agreed to participate in the study.

Results

Students’ Summative and Final Score

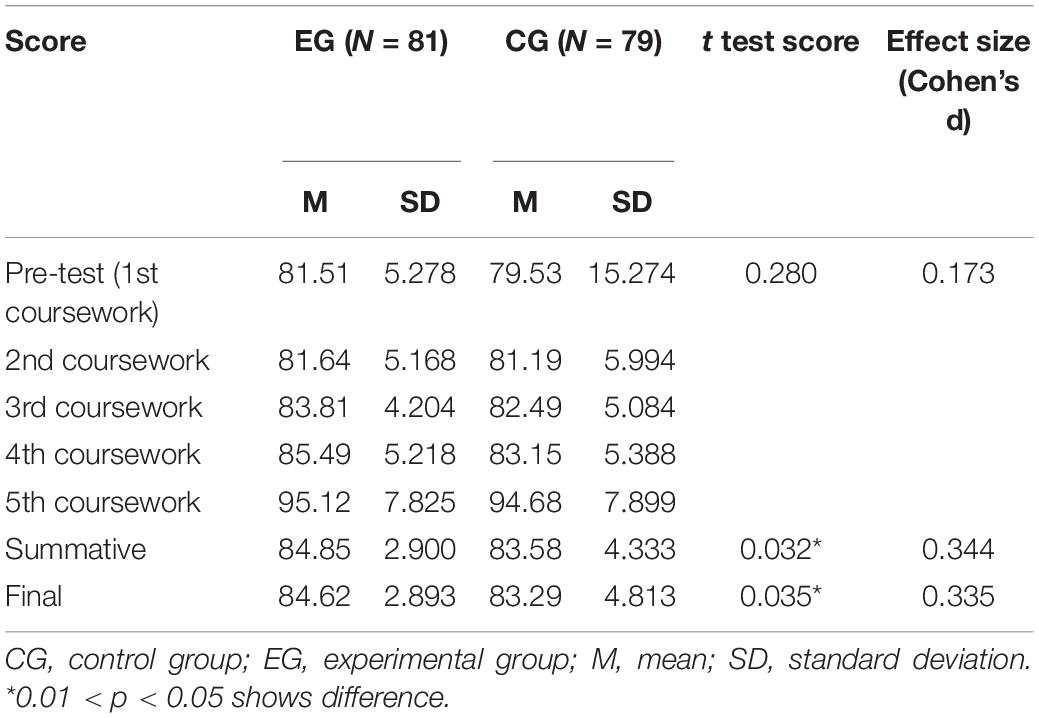

From the results (see Table 4), the EG students’ mean scores were higher than those of the CG students. In addition, the standard deviation of the final score was smaller in the EG than in the CG, indicating that the EG students’ scores were less dispersed, whereas the CG students’ scores varied more widely.

In this study, t tests and effect sizes were used to check whether the two groups differed. The score of the first coursework assignment served as the pre-test score. The t test for the pre-test showed no significant difference between the two groups (p > 0.05). The study also analyzed the summative and final scores. The t tests showed a significant difference between the two groups in both the summative score (0.01 < p < 0.05) and the final score (0.01 < p < 0.05). Cohen’s d is the most commonly used standardized effect size for the t test (Cuthill et al., 2007) and is defined as the difference between the mean values of two groups divided by the standard deviation (equation 1). It can be used to quantify effects by comparing the mean values of two groups of samples (Sullivan and Feinn, 2012). Cohen’s d is conventionally interpreted as follows: small effect (≥0.2 and <0.5), moderate effect (≥0.5 and <0.8), and large effect (≥0.8) (Cohen, 1988). The effect sizes for the summative and final scores were 0.344 and 0.335, respectively, both small effects, indicating that the flipped classroom approach had a modest impact on students’ academic performance.
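A common form of Cohen's d for two independent groups, assumed here, divides the mean difference by the pooled standard deviation: d = (M_EG − M_CG) / s_p, where s_p = sqrt(((n_EG − 1)s_EG² + (n_CG − 1)s_CG²) / (n_EG + n_CG − 2)). The standard-library Python sketch below computes d, the equal-variance independent-samples t statistic, and Cohen's (1988) labels. The p-value additionally needs the t distribution with n_EG + n_CG − 2 degrees of freedom (for example, via `scipy.stats.ttest_ind` with `equal_var=True`). The function names are hypothetical, and the scores in the usage lines are made up.

```python
import math
from statistics import mean, variance


def pooled_sd(a, b):
    """Pooled sample standard deviation of two independent groups."""
    na, nb = len(a), len(b)
    return math.sqrt(((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2))


def cohens_d(a, b):
    """Standardized mean difference: (mean_a - mean_b) / pooled SD."""
    return (mean(a) - mean(b)) / pooled_sd(a, b)


def student_t(a, b):
    """Independent-samples t statistic assuming equal variances, with its df."""
    na, nb = len(a), len(b)
    se = pooled_sd(a, b) * math.sqrt(1 / na + 1 / nb)
    return (mean(a) - mean(b)) / se, na + nb - 2


def effect_label(d):
    """Cohen's (1988) conventional thresholds, applied to |d|."""
    d = abs(d)
    if d >= 0.8:
        return "large"
    if d >= 0.5:
        return "moderate"
    if d >= 0.2:
        return "small"
    return "negligible"


# Made-up scores for two tiny groups.
eg = [80, 85, 90]
cg = [70, 75, 80]
t, df = student_t(eg, cg)
print(round(cohens_d(eg, cg), 3), round(t, 3), df, effect_label(0.344))
```

Pooling the variances matches the equal-variance t test; if the group variances differ markedly, Welch's t test is the usual alternative.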

Students’ Learning Performance Evaluations

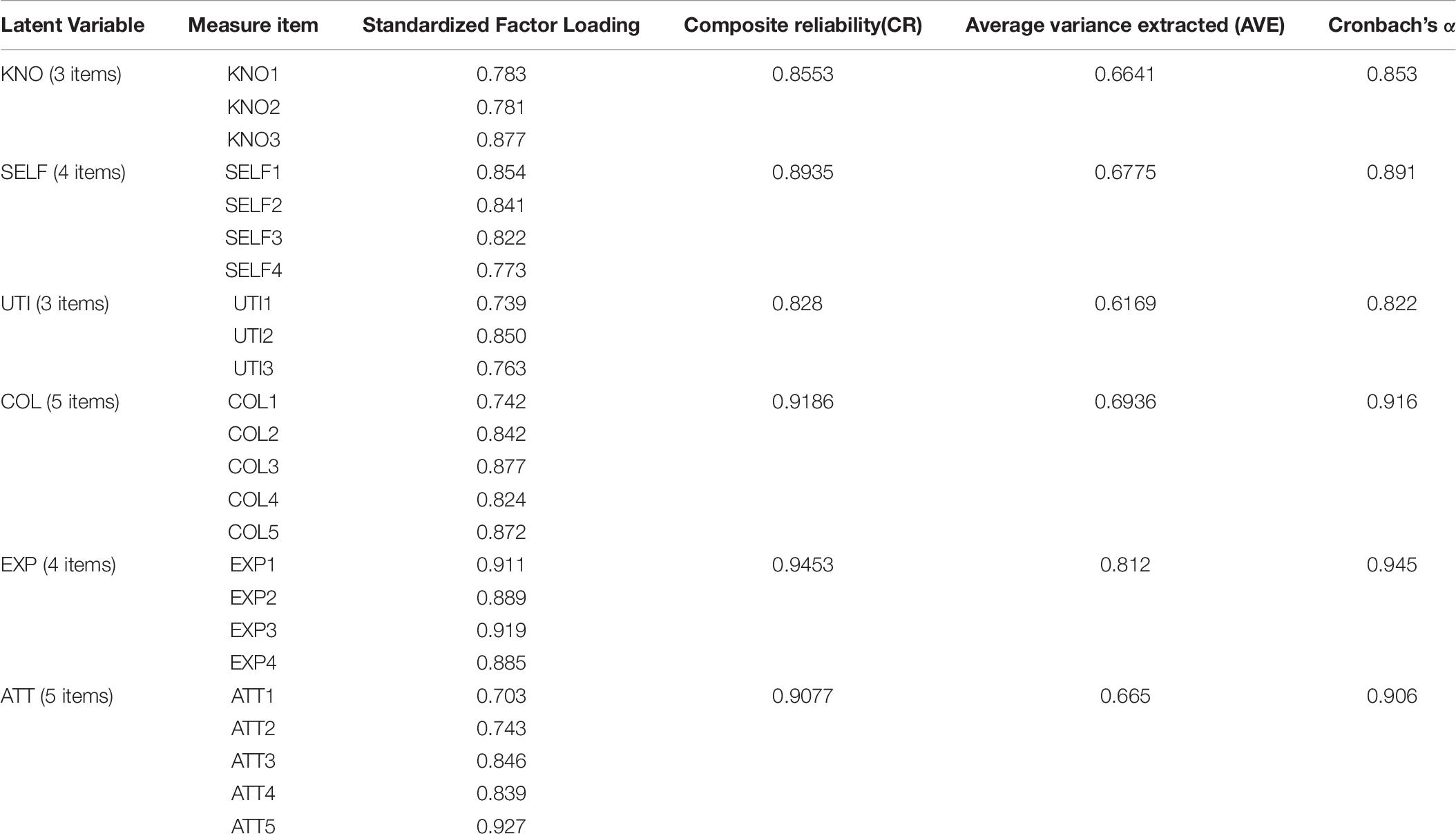

Students’ learning performance was evaluated by a questionnaire based on the evaluation framework. Because the questionnaire was designed by the authors, its reliability and validity had to be tested. Thus, exploratory factor analysis and confirmatory factor analysis were conducted on the questionnaire. The results of the exploratory factor analysis, which were used to filter items and define item dimensions, are shown in Tables 5 and 6. The confirmatory factor analysis results are shown in Tables 7 and 8: Table 7 indicates that the questionnaire had good convergent validity, and Table 8 indicates that it had good discriminant validity. In sum, the questionnaire was valid and reliable and could be used to evaluate the difference between the two groups.

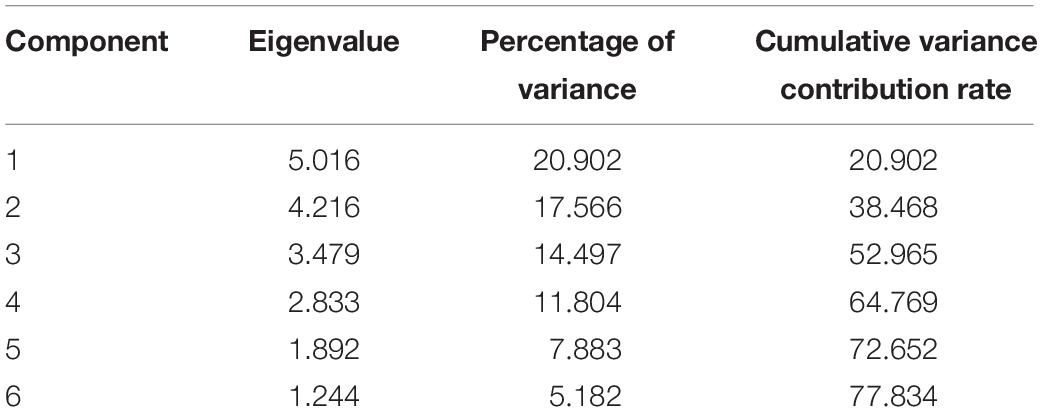

SPSS 24.0 was used to conduct exploratory factor analysis (EFA) on the scale, with factors rotated by the maximum variance (varimax) method. Cronbach’s alpha coefficient was used to test the reliability of the questionnaire. Cronbach’s alpha for the total questionnaire was 0.813, indicating high reliability. The KMO value was 0.790 (higher than 0.7), and Bartlett’s test of sphericity showed significant correlations among the variables (χ² = 2756.482, p < 0.001), indicating that the data were suitable for EFA.
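Cronbach's alpha compares the sum of the item variances with the variance of respondents' total scores. The following standard-library Python sketch computes it for a respondents × items matrix; the function name is hypothetical and the toy data are not from the study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents x items score matrix (list of lists).

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)),
    using sample variances throughout.
    """
    k = len(rows[0])
    if k < 2 or any(len(r) != k for r in rows):
        raise ValueError("need a rectangular matrix with at least two items")
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy data: three respondents answering two items.
print(round(cronbach_alpha([[1, 2], [2, 1], [3, 3]]), 3))
```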

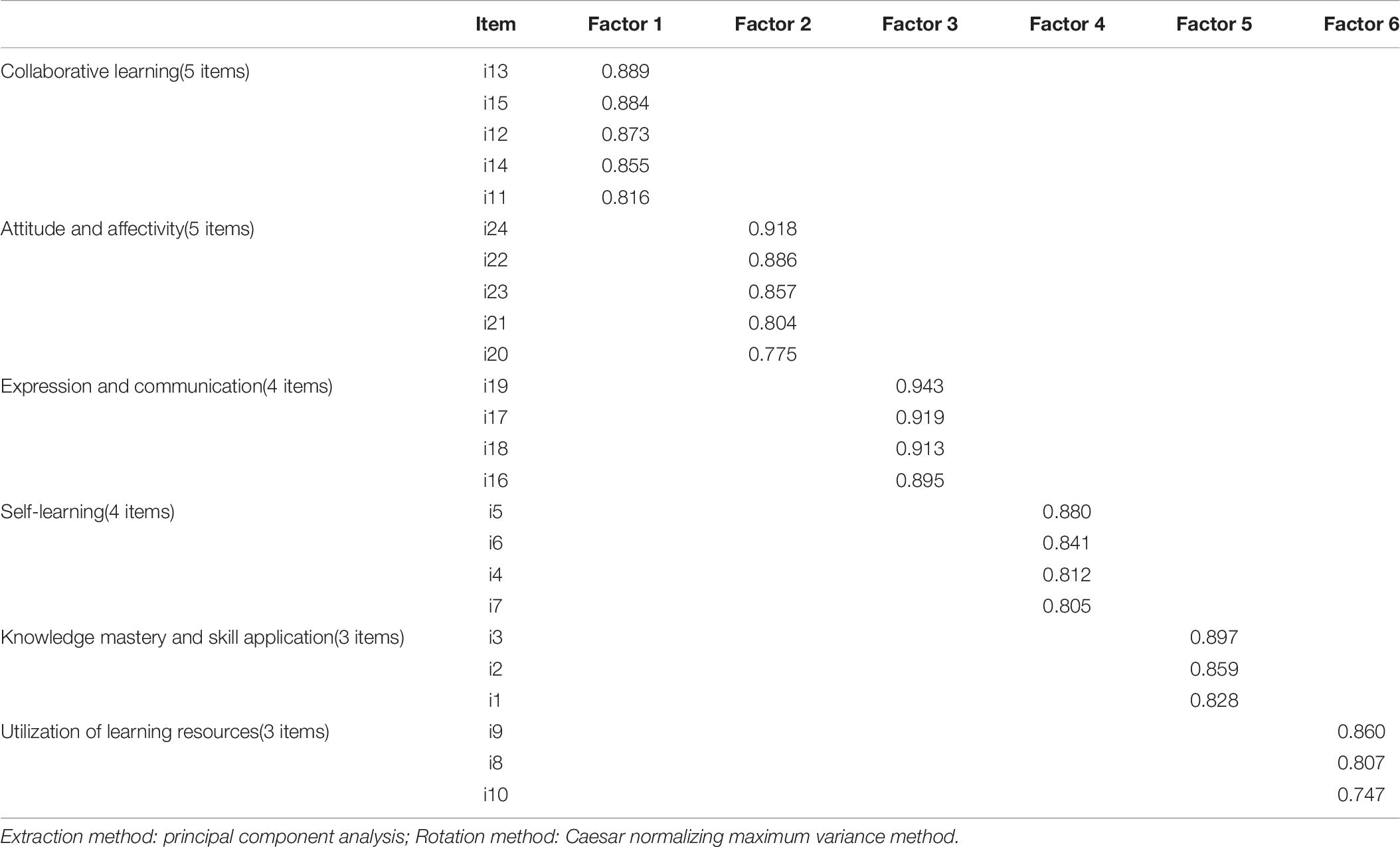

To measure the validity of the dimensions, the principal component method was used to extract factors, yielding six factors; the items of each variable loaded on the same dimension, indicating that the questionnaire dimensions were valid (Conway and Huffcutt, 2016). To determine the interpretability of the factors, the maximum variance rotation method was used to obtain the component transformation matrix, as shown in Table 5. All factor loadings were greater than 0.5, showing that each factor had good interpretability (Fabrigar et al., 1999).

In this study, principal component analysis was used to extract factors, and exploratory factor analysis was conducted with maximum variance rotation. Factors with an eigenvalue greater than 1 were retained. After multiple orthogonal rotations, items with factor loadings less than 0.4 and inconsistent content were deleted. Finally, 24 items with factor loadings greater than 0.5 on six factors with eigenvalues greater than 1 were obtained (Fabrigar et al., 1999). The six extracted factors had a cumulative variance contribution rate of 77.834% (Conway and Huffcutt, 2016). The eigenvalue, variance contribution rate, and cumulative variance contribution rate of the six factors are shown in Table 5.
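The Kaiser criterion and the cumulative variance can be checked with simple arithmetic. In the Python sketch below, the six per-factor percentages are the values reported for KNO through ATT, and their running total reproduces the 77.834% cumulative rate. With 24 standardized items the total variance is 24, so each percentage corresponds to a sum of squared loadings of 24 × percentage / 100; that back-calculation is illustrative only, since rotated variance shares are not identical to the unrotated eigenvalues. The helper names are hypothetical.

```python
from itertools import accumulate


def kaiser_retain(eigenvalues, threshold=1.0):
    """Indices of components whose eigenvalue exceeds the threshold (Kaiser criterion)."""
    return [i for i, ev in enumerate(eigenvalues) if ev > threshold]


def percent_of_variance(eigenvalues, n_items):
    """With n standardized items the total variance is n, so share = 100 * eigenvalue / n."""
    return [100.0 * ev / n_items for ev in eigenvalues]


# Per-factor variance shares reported for KNO, SELF, UTI, COL, EXP, ATT.
reported = [20.902, 17.566, 14.497, 11.804, 7.883, 5.182]
cumulative = list(accumulate(reported))

# Sums of squared loadings implied by those shares for 24 items (illustrative).
implied = [p * 24 / 100 for p in reported]
print(round(cumulative[-1], 3), kaiser_retain(implied))
```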

The rotated factor loadings are shown in Table 6. Factor 1 (knowledge mastery and skill application, KNO) contained three items (i1, i2, and i3) and explained 20.902% of the total variance. Factor 2 (self-learning, SELF) contained four items (i4, i5, i6, and i7) and explained 17.566%. Factor 3 (utilization of learning resources, UTI) contained three items (i8, i9, and i10) and explained 14.497%. Factor 4 (collaborative learning, COL) contained five items (i11, i12, i13, i14, and i15) and explained 11.804%. Factor 5 (expression and communication, EXP) contained four items (i16, i17, i18, and i19) and explained 7.883%. Factor 6 (attitude and affectivity, ATT) contained five items (i20, i21, i22, i23, and i24) and explained 5.182% of the total variance.

Table 7 shows the reliability and validity of each dimension’s items. Each dimension showed acceptable internal consistency (Cronbach’s alpha ranging from 0.822 to 0.945), which was adequate for the factor analysis (Bagozzi and Yi, 1988). An AVE greater than 0.5 for all factors of the model indicates good convergent validity of the latent variables (Fornell and Larcker, 1981). The composite reliability values were greater than 0.6 and slightly greater than Cronbach’s alpha, indicating that the collected data were highly reliable.
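Both indices follow from standardized factor loadings: the average variance extracted (AVE) is the mean squared loading, and composite reliability (CR) is (Σλ)² / ((Σλ)² + Σ(1 − λ²)), where 1 − λ² is each item's error variance. The Python sketch below uses hypothetical loadings, not the values in Table 7, and checks them against the 0.5 and 0.6 thresholds mentioned above.

```python
def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    where each standardized item's error variance is 1 - loading^2."""
    s = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return s * s / (s * s + error)


# Hypothetical standardized loadings for a three-item construct.
loadings = [0.8, 0.8, 0.8]
a, cr = ave(loadings), composite_reliability(loadings)
print(round(a, 2), round(cr, 3), a > 0.5, cr > 0.6)
```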

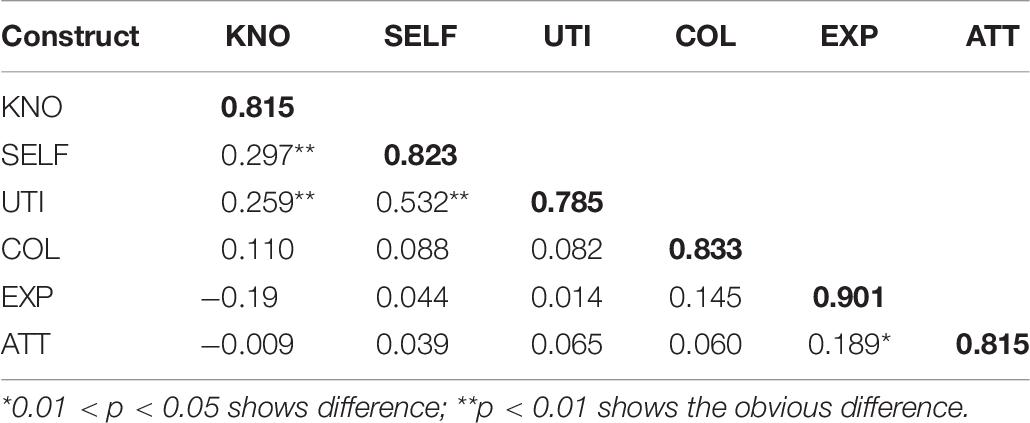

Discriminant validity was evaluated by comparing a construct’s square root of AVE with the construct’s correlation coefficient (Fornell and Larcker, 1981). As displayed in Table 8, the values of the square root of AVE of all constructs (marked as bold) were greater than the correlation coefficients, indicating that the discriminant validity between constructs was acceptable and the measurement model had good discriminant validity (Schumacker and Lomax, 2016).
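The Fornell–Larcker comparison can be automated as follows. This Python sketch flags any construct whose square root of AVE does not exceed its absolute correlation with every other construct; the function name and the AVE and correlation values in the usage lines are hypothetical, not those in Table 8.

```python
import math


def fornell_larcker_failures(ave_by_construct, correlations):
    """Return the constructs that FAIL the Fornell-Larcker criterion.

    A construct passes when sqrt(AVE) exceeds |r| for its correlation with every
    other construct. `correlations` maps (construct_a, construct_b) pairs to r.
    """
    failures = []
    for name, ave_value in ave_by_construct.items():
        root = math.sqrt(ave_value)
        others = [abs(r) for (a, b), r in correlations.items() if name in (a, b)]
        if others and root <= max(others):
            failures.append(name)
    return failures


# Hypothetical values for three constructs.
aves = {"KNO": 0.64, "SELF": 0.70, "UTI": 0.60}
corrs = {("KNO", "SELF"): 0.45, ("KNO", "UTI"): 0.30, ("SELF", "UTI"): 0.50}
print(fornell_larcker_failures(aves, corrs))  # an empty list means all constructs pass
```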

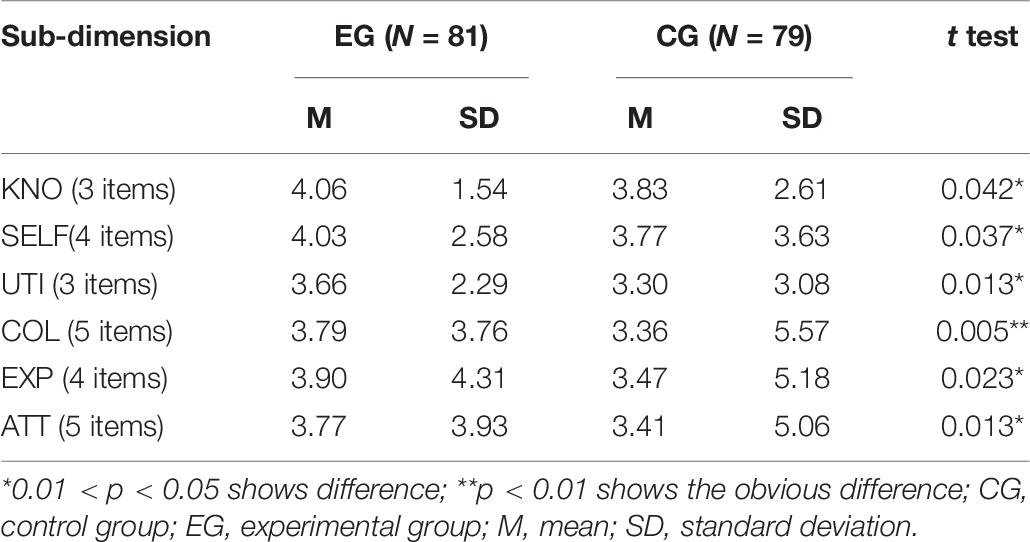

Table 9 shows that the EG students had higher mean learning performance scores than the CG students in every dimension, indicating that the EG students adapted well to the flipped learning method. The standard deviations show that the CG students’ scores were more dispersed than the EG students’ scores in all dimensions; that is, the gap between higher- and lower-scoring students was larger in the CG than in the EG. The t test results showed a significant difference between the two groups in each sub-dimension of learning performance, particularly in the psychomotor dimension of collaborative learning.

Conclusion

Problems and Redesign of Three Rounds of the Flipped Classroom Study

Based on the survey results from the first-round study of the EG students, three problems emerged. First, 95.13% of the EG reported that they had achieved high scores; however, individual students’ scores could not be obtained. Second, 68.21% of the EG reported spending 2 to 3 h on pre-class self-learning; however, the time each student spent on pre-class learning could not be verified. Third, in-class time was not managed effectively: little time remained for in-class learning activities because more time was spent discussing and answering the questions students raised during pre-class learning.

In the second round of the study, several changes were made. First, learning concept maps were provided to better align the learning objectives with the learning activities and assessments. Previous research has shown that concept maps can help learners activate prior knowledge and establish connections between concepts in later learning (Gurlitt and Renkl, 2009; Schroeder et al., 2017). With the help of concept maps, students could quickly identify the key learning requirements during pre-class learning, and they posed noticeably fewer questions than in the previous round. Second, the pre-class test was published on the Questionnaire Star platform, where students completed it and each student's score could be calculated and identified. Third, a time limit was set for in-class activities, including group presentations, discussions, and self-evaluations, to ensure that they were completed on time. Version 2 of TDIAFC was developed based on the results of the first round of instruction.

The second round addressed the problems identified in the first. Because the pre-class test was hosted on Questionnaire Star, each student's pre-class test score could be seen. The new results showed that nearly 70% of the students answered approximately 90% of the questions correctly. Time spent on pre-class self-learning also decreased: nearly 90% of students reported spending 30–50 min on pre-class learning.
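Individual scoring is what makes a statistic such as "the share of students who answered at least 90% of the questions correctly" computable. The calculation can be illustrated with the hypothetical sketch below. The answer key, the student responses, and the helper `share_meeting_threshold` are invented for illustration; they do not reflect Questionnaire Star's export format or the study's data.

```python
def share_meeting_threshold(answers, key, threshold=0.9):
    """Return the fraction of students whose proportion of correct answers
    is at least `threshold`.

    answers -- dict mapping a student ID to a string of chosen options
    key     -- string of correct options, one character per question
    """
    if not answers:
        return 0.0
    n_items = len(key)
    meeting = 0
    for responses in answers.values():
        # Count positions where the student's choice matches the key;
        # missing trailing answers count as incorrect because zip stops early.
        correct = sum(1 for given, right in zip(responses, key) if given == right)
        if correct / n_items >= threshold:
            meeting += 1
    return meeting / len(answers)


# Hypothetical 10-question test and five students (illustration only)
key = "ABCDABCDAB"
answers = {
    "s1": "ABCDABCDAB",  # 10/10 correct
    "s2": "ABCDABCDAA",  # 9/10 correct
    "s3": "ABCDABCDCC",  # 8/10 correct
    "s4": "ABCDABCDAB",  # 10/10 correct
    "s5": "AAAAAAAAAA",  # 3/10 correct
}
share = share_meeting_threshold(answers, key)
print(f"{share:.0%} of students answered at least 90% of questions correctly")
```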

However, two new problems emerged, both in the in-class presentation session. First, students appeared to listen carefully only to their own group's presentation and paid little attention to the presentations of other groups. Second, each group's presentation lasted too long, leaving insufficient time for the other in-class learning activities.

In the third round of the study, several changes were made. First, bonus points were awarded to motivate students to participate in the discussion. Second, each group's revised presentation was posted on the discussion board, and students were asked to comment on every group's presentation, so that they could learn from each other through the online posts. Version 3 of TDIAFC was designed based on the evaluation of the second round of instruction.

According to the survey conducted across the three rounds, each group's coursework score increased from the first to the last unit (see Table 4). Under the new play rules, students also paid more attention to other groups' presentations and participated more actively in the learning activities than before. Moreover, in-class time was better controlled.

The EG students' performance improved significantly over the three rounds, and the results showed that an increasing number of students adapted to the new pedagogy. Students appeared to spend less time on pre-class activities (from 2–3 h to 30–50 min) while achieving higher pre-class test scores (from unverifiable in the first round to a correct rate of about 90%). In class, students participated more actively in learning activities such as discussions and comments, and each group's scores on the five coursework assignments improved significantly.

Revision of the TDIAFC Improved Learning

This study applied three versions of TDIAFC in the same course. After each of the first two rounds, the design was revised in three main ways. First, each EG student's pre-class test score was recorded individually. Second, learning tools such as concept maps and task decomposition were added to scaffold students' learning. Third, motivation strategies were introduced and proved effective in encouraging students' active participation. The results showed that the EG students performed steadily better from round to round. In addition, through the three rounds the instructors learned how to better design learning tasks, implement learning activities, and provide scaffolding that helps students learn independently (González-Gómez and Jeong, 2019), while the students learned how to learn in the flipped classroom by becoming familiar with the process and requirements of this transformative pedagogy.

In this study, the three-round experiment provided a complete picture of how the flipped classroom design was customized. The course, a hands-on making course to which no other innovative pedagogy had previously been applied, required active participation and close cooperation among students. The participating undergraduates had established learning habits and were accustomed to the lecture-centered classroom. These factors clearly affected the implementation of the flipped classroom approach. The tasks, learning process, and learning outcomes of the three rounds were specific to this course, but they may also offer more general guidance to those wishing to design or adapt a course for the flipped classroom model. H1 was supported.

TDIAFC Facilitated Students’ Learning Effectiveness

This study applied TDIAFC in a PLE with positive results, consistent with other studies that used a task-driven model in a flipped classroom with some success (Yin, 2015; Li, 2017; Hua, 2019). The EG students' assessed learning performance was significantly better than that of the CG students, which is consistent with van Alten et al. (2019). The EG students' self-learning ability was also significantly better than that of the CG students; previous experiments have likewise shown that the flipped classroom strengthens students' self-learning ability (Ma et al., 2017). At the same time, Lai and Hwang (2016) hypothesized that students who lack self-learning capabilities might be disadvantaged in flipped classrooms. In a meta-analysis of the interdisciplinary empirical literature, Shi et al. (2020) found that flipped classroom instruction can positively influence college students' cognitive and affective achievement. By contrast, the results of Dinndorf-Hogenson et al. (2019) suggested that flipped classroom methods do not significantly affect students' psychomotor skill acquisition. In this study, however, the learning performance evaluation of the three-round study showed that all three domains (cognitive, affective, and psychomotor) differed significantly between the two groups. H2 was supported. Nevertheless, scores on the sub-dimensions of expression and communication and of attitude and affectivity were strongly polarized. Future research should therefore attend to students' expression and communication abilities and to how well students with different levels of self-learning ability adapt to flipped learning.

Limitations and Future Work

This study has several limitations that leave scope for future work on the TDIAFC model. First, both the EG and CG students learned in the same PLE, and the evaluation of the PLE was combined with that of the flipped classroom approach in the study's evaluation framework, so the effects of the PLE were not evaluated separately. Although the evaluation framework indicated that the new approach in a PLE had a positive effect on learners, the specific contribution of the PLE could not be isolated. Second, further rounds of experimentation may reveal additional problems and lead to further revisions of the TDIAFC design. Third, more personalized evaluation tools should be developed to assess the learning effectiveness of the flipped classroom approach. Fourth, this research used questionnaires to collect data, so common method bias may be present. In addition, a survey of participants' course loads showed that 56.1% were taking 9–12 courses in one semester. Because TDIAFC requires additional pre- and post-class learning, it may increase students' workload, which could reduce their interest and motivation. This limitation calls for a wider range of evidence to complement these findings.

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors, without undue reservation.

Ethics Statement

Ethical review and approval were not required for the study involving human participants in accordance with local legislation and institutional requirements. Written informed consent to participate in this study was not required from the college students in accordance with national legislation and institutional requirements.

Author Contributions

All authors contributed equally to conceiving the idea, implementing the experiment and analyzing its results, and writing the manuscript. All authors read and approved the final manuscript.

Funding

This research was funded by the National Social Science Foundation of China (Grant No. BCA200093) and Priority Academic Program Development of Jiangsu Higher Education Institutions. In addition, this study was supported by the Ministry of Science and Technology, Taiwan, under grants MOST 109-2511-H-019-004-MY2 and MOST 109-2511-H-019-001.

Conflict of Interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

References

Akçayır, G., and Akçayır, M. (2018). The flipped classroom: a review of its advantages and challenges. Comput. Educ. 126, 334–345. doi: 10.1016/j.compedu.2018.07.021

Anderson, T., and Shattuck, J. (2012). Design-based research: a decade of progress in education research. Educ. Res. 41, 16–25.

Bagozzi, R. P., and Yi, Y. (1988). On the evaluation of structural equation models. J. Acad. Mark. Sci. 16, 74–94. doi: 10.1007/bf02723327

Baker, J. W. (2000). “The ‘classroom flip’: using web course management tools to become a guide by the side,” in International Conference on College Teaching and Learning (ICTCS 2000), Jacksonville, FL, 9–17.

Bernacki, M. L., Greene, J. A., and Crompton, H. (2020). Mobile technology, learning, and achievement: advances in understanding and measuring the role of mobile technology in education. Contemp. Educ. Psychol. 60:101827. doi: 10.1016/j.cedpsych.2019.101827

Bloom, B. S., Engelhart, M. D., Furst, E. J., Hill, W. H., and Krathwohl, D. R. (1956). Taxonomy of Educational Objectives: The Classification of Educational Goals. Handbook 1: Cognitive Domain. New York, NY: David McKay, doi: 10.1080/00221546.1957.11780607

Buil-Fabrega, M., Casanovas, M. M., Ruiz-Munzon, N., and Filho, W. L. (2019). Flipped classroom as an active learning methodology in sustainable development curricula. Sustainability 11:4577. doi: 10.3390/su11174577

Carrión-Martínez, J. J., Luque-de la Rosa, A. L., Fernández-Cerero, J., and Montenegro-Rueda, M. (2020). Information and communications technologies (ICTs) in education for sustainable development: a bibliographic review. Sustainability 12:3288. doi: 10.3390/su12083288

Cheng, P. W. D., and Stimpson, P. G. (2004). Articulating contrasts in kindergarten teachers’ implicit knowledge on play-based learning. Int. J. Educ. Res. 41, 339–352. doi: 10.1016/j.ijer.2005.08.005

Cheng, Y. H., Chih-Yuan, S. J., and Jia, Y. L. (2018). Effects of flipped classrooms integrated with MOOCs and game-based learning on the learning motivation and outcomes of students from different backgrounds. Interact. Learn. Environ. 27, 1028–1046. doi: 10.1080/10494820.2018.1481103

Cohen, J. (1988). Statistical Power Analysis for the Behavioral Sciences, 2nd Edn. Hillsdale, NJ: Erlbaum.

Collis, B. (1998). New didactics for university instruction: why and how? Comput. Educ. 31, 373–393. doi: 10.1016/S0360-1315(98)00040-2

Conway, J. M., and Huffcutt, A. I. (2016). A review and evaluation of exploratory factor analysis practices in organizational research. Organ. Res. Methods 6, 147–168. doi: 10.1177/1094428103251541

Crouch, C. H., and Mazur, E. (2001). Peer instruction: ten years of experience and results. Am. J. Phys. 69, 970–977. doi: 10.1119/1.1374249

Csikszentmihalyi, M. (1990). Flow: The Psychology of Optimal Experience. New York, NY: Harper Perennial.

Cuthill, I., Nakagawa, S., and Cuthill, I. C. (2007). Effect size, confidence intervals and statistical significance: a practical guide for biologists. Biol Rev Camb Philos Soc. 82, 591–605. doi: 10.1111/j.1469-185X.2007.00027.x

De Koning-Veenstra, B., Steenbeek, H. W., Van Dijk, M. W. G., and Van Geert, P. L. C. (2014). Learning through movement: a comparison of learning fraction skills on a digital playful learning environment with a sedentary computer-task. Learn. Individ. Differ. 36, 101–109. doi: 10.1016/j.lindif.2014.10.002

Dinndorf-Hogenson, G. A., Hoover, C., Berndt, J. L., Tollefson, B., Peterson, J., and Laudenbach, N. (2019). Applying the flipped classroom model to psychomotor skill acquisition in nursing. Nurs. Educ. Perspect. 40, 99–101. doi: 10.1097/01.NEP.0000000000000411

Edward, N. (2002). The role of laboratory work in engineering education: student and staff perceptions. Int. J. Electr. Eng. Educ. 39, 11–19. doi: 10.7227/IJEEE.39.1.2

Fabrigar, L. R., Wegener, D. T., MacCallum, R. C., and Strahan, E. J. (1999). Evaluating the use of exploratory factor analysis in psychological research. Psychol. Methods 4, 272–299. doi: 10.1037/1082-989X.4.3.272

Fan, X. (2018). Research on oral English flipped classroom project-based teaching model based on cooperative learning in China. Educ. Sci. Theor. Pract. 18, 1988–1998. doi: 10.12738/estp.2018.5.098

Farren, P. (2016). “Transformative pedagogy” in the context of language teaching: being and becoming. World J. Educ. Technol. Curr Issues 3, 190–204.

Feng, X., Chen, P., Liu, Y., and Song, Q. (2016). “Using the mixed mode of flipped classroom and problem-based learning to promote college students’ learning: an experimental study,” in International Conference on Educational Innovation through Technology (EITT 2016), Tainan, 133–138. doi: 10.1109/EITT.2016.33

Fornell, C., and Larcker, D. F. (1981). Evaluating structural equation models with unobservable variables and measurement error. J. Mark. Res. 18, 39–50. doi: 10.1177/002224378101800312

González-Gómez, D., and Jeong, J. (2019). EduSciFIT: a computer-based blended and scaffolding toolbox to support numerical concepts for flipped science education. Educ. Sci. 9:116. doi: 10.3390/educsci9020116

Güneş, B., and Yılmaz, E. (2019). The effect of tactical games approach in basketball teaching on cognitive, affective and psychomotor achievement levels of high school students. Egit. Bilim. 44, 313–331. doi: 10.15390/eb.2019.8163

Gurlitt, J., and Renkl, A. (2009). Prior knowledge activation: how different concept mapping tasks lead to substantial differences in cognitive processes, learning outcomes, and perceived self-efficacy. Instr. Sci. 38, 417–433. doi: 10.1007/s11251-008-9090-5

Handayani, I., Mukhaiyar, M., and Syarif, H. (2018). The cognitive, affective, and psychomotor domain on English lesson plan in school based curriculum. Int. J. Multidiscip. Res. High. Educ. 1, 32–44.

Hao, Y. (2016). Exploring undergraduates’ perspectives and flipped learning readiness in their flipped classrooms. Comput. Hum. Behav. 59, 82–92. doi: 10.1016/j.chb.2016.01.032

Hinojo-Lucena, F. J., Mingorance-Estrada, A., Trujillo-Torres, J. M., Aznar-Díaz, I., and Reche, M. C. (2018). Incidence of the flipped classroom in the physical education students’ academic performance in university contexts. Sustainability 10:1334. doi: 10.3390/su10051334

Hua, K. (2019). “Research on task-driven teaching mode based on flipped classroom concept,” in International Conference on Social Science and Higher Education (ICSSHE 2019), Amsterdam: Atlantis Press, 539–541. doi: 10.2991/icsshe-19.2019.136

Hyvönen, P. (2008). Affordances of Playful Learning Environment for Tutoring Playing and Learning. Dissertation thesis, University of Lapland Printing Centre, Rovaniemi.

Jeong, J. S., González-Gómez, D., and Cañada-Cañada, F. (2019). How does a flipped classroom course affect the affective domain toward science course? Interact. Learn. Environ. 1–13. doi: 10.1080/10494820.2019.1636079

Johnson, J. E. (2004). “Violent interactive video games as play poison,” in Paper presented at the 23rd ICCP World Play Conference “Play and Education”, Krakow, 15–17.

Kakh, S. Y., and Mansor, W. F. (2014). Task-based writing instruction to enhance graduate students’ audience awareness. Procedia Soc. Behav. Sci. 118, 206–213. doi: 10.1016/j.sbspro.2014.02.028

Kangas, M. (2010a). Creative and playful learning: learning through game co-creation and games in a playful learning environment. Think. Skills Creat. 5, 1–15. doi: 10.1016/j.tsc.2009.11.001

Kangas, M. (2010b). The School of the Future: Theoretical and Pedagogical Approaches for Creative and Playful Learning Environments. Dissertation thesis, University of Lapland Printing Centre, Rovaniemi.

Kangas, M., Siklander, P., Randolph, J., and Ruokamo, H. (2017). Teachers’ engagement and students’ satisfaction with a playful learning environment. Teach. Teach. Educ. 63, 274–284. doi: 10.1016/j.tate.2016.12.018

Kazanidis, I., Pellas, N., Fotaris, P., and Tsinakos, A. (2019). Can the flipped classroom model improve students’ academic performance and training satisfaction in higher education instructional media design courses? Br. J. Educ. Technol. 50, 2014–2027. doi: 10.1111/bjet.12694

Kim, Y., and Ahn, C. (2017). Effect of combined use of flipped learning and inquiry-based learning on a system modeling and control course. IEEE Trans. Educ. 61, 136–142. doi: 10.1109/te.2017.2774194

Krathwohl, D. R., Bloom, B. S., and Masia, B. B. (1964). Taxonomy of Educational Objectives, the Classification of Educational Goals. Handbook II: Affective Domain. New York, NY: David McKay Co., Inc.

Krivickas, R., and Krivickas, J. (2007). Laboratory instruction in engineering education. Glob. J. Eng. Educ. 11, 191–196.

Lage, M. J., Platt, G. J., and Treglia, M. (2000). Inverting the classroom: a gateway to creating an inclusive learning environment. J. Econ. Educ. 31, 30–43. doi: 10.2307/1183338

Lai, C. L., and Hwang, G. J. (2016). A self-regulated flipped classroom approach to improving students’ learning performance in a mathematics course. Comput. Educ. 100, 126–140. doi: 10.1016/j.compedu.2016.05.006

Li, P. (2017). “Research on task driven teaching model based on flipped classroom – take the ‘database principles’ course as an example,” in 7th International Conference on Management, Education, Information and Control (MEICI 2017), Amsterdam: Atlantis Press, 549–554.

Li, Z. M., and Ma, M. (2015). “Research on task-oriented project-based flipped classroom teaching mode,” in 2nd International Conference on Education and Social Development (ICESD 2015), Changsha, 243–246.

Liu, H., and Niu, L. (2018). “The practical exploration of task-driven method in the course teaching of information technology,” in 2018 1st International Cognitive Cities Conference (IC3 2018), Okinawa, 291–294.

Lombardini, C., Lakkala, M., and Muukkonen, H. (2018). The impact of the flipped classroom in a principles of microeconomics course: evidence from a quasi-experiment with two flipped classroom designs. Int. Rev. Econ. Educ. 29, 14–28. doi: 10.1016/j.iree.2018.01.003

Ma, L., Hu, J., Chen, Y., Liu, X., and Li, W. (2017). “Teaching reform and practice of the basic computer course based on flipped classroom,” in 2017 12th International Conference on Computer Science and Education (ICCSE 2017), Houston, TX, 713–716. doi: 10.1109/ICCSE.2017.8085586

McDonough, K., and Foote, J. A. (2015). The impact of individual and shared clicker use on students’ collaborative learning. Comput. Educ. 86, 236–249. doi: 10.1016/j.compedu.2015.08.009

Nelson, D. (1984). Transformations: Process and theory. Santa Monica, CA: Center for City Building Educational Programs.

Pagatpatan, C. P. Jr., Valdezco, J. A. T., and Lauron, J. D. C. (2020). Teaching the affective domain in community-based medical education: a scoping review. Med. Teach. 42, 507–514. doi: 10.1080/0142159X.2019.1707175

Rahmat, M., and Saudi, M. M. (2008). E-learning assessment application based on bloom taxonomy. Int. J. Learn. 14, 1–11. doi: 10.18848/1447-9494/cgp/v14i09/45476

Rajesh, A., Baloul, M. S., Shaikh, N., de Azevedo, R. U., and Farley, D. R. (2019). Education website and social media to increase video-based learning of surgical trainees. Surgeon 17:381. doi: 10.1016/j.surge.2019.03.004

Reeves, T. C. (2006). “Design research from a technology perspective,” in Educational Design Research, 1st Edn., eds J. van den Akker, K. Gravemeijer, S. McKenney, and N. Nieveen (London: Routledge), 52–66.

Resnick, M. (2006). “Computer as paintbrush: technology, play and the creative society,” in Play = Learning: How Play Motivates and Enhances Children’s Cognitive and Social-Emotional Growth, eds D. Singer, R. Golinkoff, and K. Hirsh-Pasek (Oxford: Oxford University Press), 192–206. doi: 10.1093/acprof:oso/9780195304381.003.0010

Säljö, R. (2005). Lärande i Praktiken: ett Sociokulturellt Perspektiv. Stockholm: Norstedts Akademiska Förlag.

Säljö, R. (2006). “Learning and cultural tools: modelling and the evaluation of a collective memory,” in Presentation at EARLI JURE06 Conference 4th July 2006, Tartu.

Schroeder, N. L., Nesbit, J. C., Anguiano, C. J., and Adesope, O. O. (2017). Studying and constructing concept maps: a meta-analysis. Educ. Psychol. Rev. 30, 431–455. doi: 10.1007/s10648-017-9403-9

Schumacker, R. E., and Lomax, R. G. (2016). A Beginner’s Guide to Structural Equation Modeling, 4th Edn. New York, NY: Routledge, doi: 10.1007/BF02595811

Schwab, J. (1962). “The teaching of science as enquiry,” in The Teaching of Science, eds J. Schwab and P. Brandwein (Cambridge, MA: Harvard University Press).

Servant-Miklo, V. F. C. (2019). Fifty years on: a retrospective on the world’s first problem-based learning programme at McMaster University Medical School. Health Prof. Educ. 5, 3–12. doi: 10.1016/j.hpe.2018.04.002

Shi, Y., Ma, Y., Macleod, J., and Yang, H. H. (2020). College students’ cognitive learning outcomes in flipped classroom instruction: a meta-analysis of the empirical literature. J. Comput. Educ. 7, 79–103. doi: 10.1007/s40692-019-00142-8

Sicherl-Kafol, B., Denac, O., Denac, J., and Zalar, K. (2014). Music objectives planning in prevailing psychomotor domain. New Educ. Rev. 35, 101–112.

Su, Y. S., and Chen, H. R. (2020). Social Facebook with big six approaches for improved students’ learning performance and behavior: a case study of a project innovation and implementation course. Front. Psychol. 11:1166. doi: 10.3389/fpsyg.2020.01166

Su, Y. S., Chou, C. H., Chu, Y. L., and Yang, Z. F. (2019a). A finger-worn device for exploring Chinese printed text with using CNN algorithm on a micro IoT processor. IEEE Access 7, 116529–116541. doi: 10.1109/access.2019.2936143

Su, Y. S., Lin, C. L., Chen, S. Y., and Lai, C. F. (2019b). Bibliometric study of social network analysis literature. Library Hi Tech 38, 420–433. doi: 10.1108/LHT-01-2019-0028

Su, Y. S., Ni, C. F., Li, W. C., Lee, I. H., and Lin, C. P. (2020). Applying deep learning algorithms to enhance simulations of large-scale groundwater flow in IoTs. Appl. Soft Comput. 92:106298. doi: 10.1016/j.asoc.2020.106298

Su, Y. S., and Wu, S. Y. (2021). Applying data mining techniques to explore users behaviors and viewing video patterns in converged IT environments. J. Ambient Intell. Humaniz. Comput. 1–8. doi: 10.1007/s12652-020-02712-6

Sullivan, G. M., and Feinn, R. (2012). Using effect size-or why the p value is not enough. J Grad Med Educ. 4, 279–282. doi: 10.4300/JGME-D-12-00156.1

Sun, Z., and Wu, Y. (2018). “Application of task-driven teaching method in high vocational computer teaching,” in 2018 2nd International Conference on Social Sciences, Arts and Humanities (SSAH 2018), Amsterdam: Atlantis Press, 956–959.

Syaiful, L., Ismail, M., and Aziz, Z. A. (2019). “A review of methods to measure affective domain in learning,” in 2019 IEEE 9th Symposium on Computer Applications & Industrial Electronics (ISCAIE 2019), Malaysia, 282–286. doi: 10.1109/ISCAIE.2019.8743930

van Alten, D. C., Phielix, C., Janssen, J., and Kester, L. (2019). Effects of flipping the classroom on learning outcomes and satisfaction: a meta-analysis. Educ. Res. Rev. 28:100281. doi: 10.1016/j.edurev.2019.05.003

van den Akker, J. (1999). “Principles and methods of development research,” in Design Approaches and Tools in Education and Training, eds J. van den Akker, R. M. Branch, K. Gustafson, N. Nieveen, and T. Plomp (Dordrecht: Springer), 1–14. doi: 10.1007/978-94-011-4255-7_1

Vanderhoven, E., Schellens, T., Vanderlinde, R., and Valcke, M. (2016). Developing educational materials about risks on social network sites: a design-based research approach. Educ. Tech. Res. Dev. 64, 459–480. doi: 10.1007/s11423-015-9415-4

Wilson, L. O. (2017). The Three Domains of Learning: Cognitive, Affective, and Psychomotor. Available at: https://thesecondprinciple.com/instructional-design/threedomainsoflearning/ (accessed May 3, 2020).

Wright, N., and Cordeaux, C. (1996). Rethinking video-conferencing: lessons learned from initial teacher education. Innov. Educ. Train. Int. 33, 194–202. doi: 10.1080/1355800960330406

Yilmaz, R. (2016). Knowledge sharing behaviors in e-learning community: exploring the role of academic self-efficacy and sense of community. Comput. Hum. Behav. 63, 373–382. doi: 10.1016/j.chb.2016.05.055

Yin, H. L. (2015). “The design and practice of “flipped classroom” model based on task-driven and micro-lecture,” in 2015 2nd International Conference on Education, Management and Computing Technology (ICEMCT2015), Amsterdam: Atlantis Press, 1456–1460. doi: 10.2991/icemct-15.2015.304

Zucker, L., and Fisch, A. A. (2019). Play and Learning with KAHOOT!: enhancing collaboration and engagement in grades 9-16 through digital games. J. Lang. Lit. Educ. 15:1. doi: 10.4324/9781315537658-1

Appendix

Keywords: flipped learning, innovative pedagogy, higher education, playful learning environment, design based research

Citation: Zhao L, He W and Su Y-S (2021) Innovative Pedagogy and Design-Based Research on Flipped Learning in Higher Education. Front. Psychol. 12:577002. doi: 10.3389/fpsyg.2021.577002

Received: 28 June 2020; Accepted: 18 January 2021;

Published: 18 February 2021.

Edited by:

Michael S. Dempsey, Boston University, United States

Reviewed by:

Nigel Francis, Swansea University, United Kingdom
Jing Qian, Tsinghua University, China

Copyright © 2021 Zhao, He and Su. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Yu-Sheng Su, ntouaddisonsu@gmail.com
