Abstract

The Bayesian approach in cognitive science largely takes the position that evolution drives perception to produce percepts that are veridical. However, some efforts utilizing evolutionary game theory simulations have shown that perception is more likely based on a fitness function, which promotes survival rather than perceptual truth about the environment. Although these findings do not correspond well with the standard Bayesian approach to cognition, they may correspond with a behavioral functional contextual approach that is ontologically neutral (a-ontological). This approach, formalized through a post-Skinnerian account of behaviorism called relational frame theory (RFT), can, in fact, be shown to correspond well with an evolutionary fitness function, whereby contextual functions form that correspond to a fitness function interface of the world. This fitness interface approach therefore may help provide a mathematical description for a functional contextual interface of phenomenological experience. Furthermore, this more broadly fits with a neurological active inference approach based on the free-energy principle (FEP) and more broadly with Lagrangian mechanics. These assumptions of how fitness beats truth (FBT) and FEP correspond to RFT are then discussed within a broader multidimensional and evolutionary framework called the extended evolutionary meta-model (EEMM), which has emerged out of the functional contextual behavioral science literature to incorporate principles of cognition, neurobiology, behaviorism, and evolution. They are discussed in the context of a novel RFT framework called “Neurobiological and Natural Selection Relational Frame Theory” (N-frame). This framework mathematically connects RFT to FBT, FEP, and EEMM within a single framework that expands into dynamic graph networking.
This is then discussed for its implications for empirical work at the non-ergodic process-based idiographic level as applied to individual and societal level dynamic modeling and clinical work. This discussion is framed within the context of individuals who are described as evolutionary adaptive and conscious (observer-self) agents that minimize entropy and can promote a prosocial society through group-level values and psychological flexibility.

Introduction

One well-established assumption within cognitive science is that the cognitive system promotes veridical percepts and that, through evolution, natural selection drives increasingly veridical perceptions about the objective world (Marr, 1982, 2010; Palmer, 1999; Geisler and Diehl, 2003; Maloney and Zhang, 2010; Pizlo et al., 2014). There is of course some evidence supporting this claim; for example, the eye, as complex as it is today, was postulated by Darwin (1859) to have emerged from much simpler evolutionary beginnings. Initially, a prototype eye was thought to have evolved through purely stochastic means, which formed and allowed the organism to detect direct light. Given that this light detection would provide the organism a substantial survival advantage over organisms that could not detect light, this adaptation would then be selected and continue to adapt with further evolutionary variation and selection. To support Darwin’s postulate, this prototype eye has subsequently been found in the planarian species Polycelis auricularia and the trochophore planktonic marine larvae (Gehring, 2014). This, according to Darwin (1859), has led to the highly evolved eye we have today that detects not only light but also shape, color, contrast, movement, etc.

In complex organisms, the tapetum lucidum is a layer in the eye of many vertebrates, which sits behind the retina with the sole purpose of reflecting light back through the retina, thus allowing more available light to pass through the eye’s photoreceptors. This increases the amount of available light for organisms that have the tapetum lucidum, which allows them to see better in the dark and therefore has obvious survival advantages, particularly if the organism hunts at night or is hunted at night. In fact, organisms that have the tapetum lucidum are typically active at night, such as deer, dogs, cats, horses, and ferrets. Humans, other primates, squirrels, birds, and pigs do not have this structure in their eyes, and this is thought to be because they are diurnal (mainly active during the day; Ollivier et al., 2004). It therefore seems that the tapetum lucidum only evolved through selection in animals that directly benefited from it through a direct survival advantage. This gives these organisms greater veridical perception at night than organisms that do not have it (without the use of technology), as they can see light reflect off objects within the world (Bennett and Cuthill, 1994). Importantly, this natural selection advantage in perceiving a veridical world is not isolated to just visual perception; a bat, for instance, can hear ultrasound and uses echolocation to navigate (Jones et al., 2013). Likewise, rats have a keener sense of smell than humans, with a higher number of olfactory receptor neurons and therefore greater veridical olfactory perception (Duchamp-Viret et al., 1999; Keller and Vosshall, 2008). Thus, it seems that all forms of veridical phenomenology are shaped by evolutionary fitness payoffs based on the organism’s context.

However, assuming that any of these perceptions are veridical assumes a naïve realist ontology typical in cognitive psychology, which takes perceptual mapping to be exactly veridical, or at least a critical realist ontology, which assumes that at least some aspects of the environment the organism experiences through the senses are based on a “true” veridical reality. Although much of the evolutionary evidence suggests that perception is driven by fitness (contextualized given the organism’s survival or reproductive needs) and not solely by absolute veridical objective reality, many cognitive theories have still proposed a naïve realist position (that perception should be veridical and computable through Bayesian decision theory) (Marr, 1982, 2010; Palmer, 1999; Geisler and Diehl, 2003; Pizlo et al., 2014). However, certain mathematical models have also been proposed in the area of evolutionary game theory (Smith and Price, 1973; Smith, 1982; Nowak, 2006) that emphasize an entirely non-veridical nature of perception, showing that evolutionary fitness within these game simulations selects non-veridical perception in nearly all cases (Mark et al., 2010; Prakash et al., 2021).

These findings have broad implications for a philosophy of science in the psychology of the “mind” or “behavior” of an organism. They suggest that the “mind” or “behavior” is shaped at its foundation by an evolutionary fitness function rather than by specific ontologies such as physicalism, mentalism, or naïve realism, ultimately giving rise to an ontologically neutral (a-ontological) stance on these phenomena. This a-ontological position is directly opposed to the naïve (or critical) realist position of cognitive psychology. Instead, it is more aligned with a behavioral position, for example, a post-Skinnerian contextual behavioral science account based on functional contextualism, namely relational frame theory (RFT; Barnes-Holmes et al., 2001; Blackledge, 2003; Zettle et al., 2016), which is based on behavioral pragmatism and also holds an a-ontological position in explaining how complex human behavior emerges (Barnes-Holmes, 2005; Codd, 2015; Monestes and Villatte, 2015).

Given such an a-ontological alignment, and the fact that recent developments within clinical psychology are suggesting a move away from protocols for syndromes, such as those of the Diagnostic and Statistical Manual of Mental Disorders (DSM-5; American Psychiatric Association, 2013), and toward a more process-based therapeutic (PBT) approach (Hayes and Hofmann, 2018; Hayes et al., 2019, 2020) that highlights evolution as central (evolutionary variation, selection, retention, and context; Hayes et al., 2020; Hofmann et al., 2022) within therapeutic practice, it seems appropriate to explore how evolutionary game theory (Smith and Price, 1973; Smith, 1982; Nowak, 2006) can be applied within the context of this study. This specifically relates to evidence provided through mathematical simulations that show phenomenological experience (e.g., perception) to be non-veridical and instead to act through a perceptual interface based on evolutionary fitness functions (Mark et al., 2010; Hoffman and Prakash, 2014; Hoffman et al., 2015; Prakash et al., 2021).

The evolutionary approach of PBT is defined through the extended evolutionary meta-model (EEMM; Hayes and Hofmann, 2018; Hayes et al., 2019, 2020). This is a multilevel and multidimensional modeling approach that emphasizes data modeling driven at the non-ergodic idiographic individual level rather than a nomothetic population-driven approach. The EEMM dimensions include affective, cognitive, attentional, self, motivational, and overt behavior, and there are two levels of analysis, biopsychological and sociocultural. As the EEMM is an expanded contextual behavioral approach that has traditionally emphasized acceptance and commitment therapy (ACT) at a middle level (Hayes et al., 1999, 2004, 2011) and RFT at the basic level (Barnes-Holmes et al., 2001; Blackledge, 2003; Zettle et al., 2016), an exploration of RFT framing will be performed, as well as of the psychobiological level of the EEMM in the form of principles of free energy and the Markovian blanket typically associated with neuroscience (Friston, 2010; Schwartenbeck et al., 2013).

An even deeper contextual analysis is also explored at the level of entropy, related to chaotic dynamical self-adaptive behavior, in which value-directed behavior and other dimensions of EEMM and principles of free energy can lead to a reduction in entropy. This includes principles of Lagrangian mechanics and how these interface with perceptual and phenomenological experience based on an evolutionary fitness function. In addition to this, there has been, to date, little attempt to define mathematically a formal framework that directly and explicitly extends RFT’s relational frames through the EEMM and within an idiographic PBT network approach (an idiographic dynamic network analysis, IDNA) that incorporates evolution, free energy, expected utility, and entropy. The basic outline of one such formal mathematical framework implementation is given here and is called the “Neurobiological and Natural Selection Relational Frame Theory” (N-frame), which emphasizes RFT relational frames projected through the EEMM and free energy that define important mathematical structures called Markov blankets, which are important for defining an unbounded mathematical space for “self” in RFT (i.e., separating observer self from self as content).

This theory and hypothesis paper explores (1) the standard cognitive Bayesian approaches to veridical perception and simulations confirming Hoffman and colleagues’ (Mark et al., 2010; Hoffman and Prakash, 2014; Hoffman et al., 2015; Prakash et al., 2021) suggestion that phenomenological experience such as perception is based on fitness and not veridical Bayesian “truth”; (2) logical and set theory mathematical interpretations of RFT that may be applicable for time series idiographic PBT, including how to develop these computationally into graphs in the graph-theoretic sense; (3) problems with rare paradoxical self-referential strange loops that are introduced in formal atomic logical system models and could apply when modeling deictics of RFT “self” in more complex modes, and how Markovian blankets and Lagrangian mechanics may be one solution to this; and (4) the broader implications of how entropy reduction and embodied self naturally emerge when introducing personal values and Lagrangian mechanics.

The standard cognitive Bayesian framework for visual perception

A common approach to visual perception in cognitive science (which assumes a naïve realist ontology) is to use a Bayesian approach and assume that perception is veridical (Marr, 1982, 2010; Palmer, 1999; Geisler and Diehl, 2003; Pizlo et al., 2014). According to this approach, given some image $x_0$, the cognitive-visual system attempts to find the most probable interpretation of the world. To do this, it compares the posterior probability $P$ of various interpretations of the world $w$ given the image of the environment that is being seen, $x_0$, and this can be denoted as $P(w \mid x_0)$. When using Bayes’ rule (theorem), the posterior probability of a cognitive interpretation of the world $w$ when given some image $x_0$ is given by:

$$P(w \mid x_0) = \frac{P(x_0 \mid w)\,P(w)}{P(x_0)}$$

The term $P(x_0)$ is the marginal probability of the image, which does not depend on the cognitive interpretation $w$. Given this Bayes’ theorem interpretation, the posterior probability is determined by the product of two terms: (1) the likelihood $P(x_0 \mid w)$, i.e., the probability of the image given an interpretation, and (2) the probability of an interpretation prior to any image being presented (prior probability), $P(w)$. This product is then divided by $P(x_0)$.

This application of Bayes’ rule yields a probability distribution over the space of possible interpretations, called the posterior distribution. It can be interpreted as a naïve realist cognitive approach (or veridical truth strategy) to perception, whereby the interpretation with the highest posterior probability given some image $x_0$ is the one selected by the perceptual system when processing perceptual “truth.” This strategy consists of a maximum a posteriori (MAP) estimate that selects the most probable perception given the posterior distribution, according to this Bayes’ theorem approach. Here, a function $f$ can be described as the sampling distribution of observations (in this case images) $x$, with an unobserved population parameter given as $w$, so that the function is the probability of $x$ when the population parameter (or world) is $w$. This is given as $f(x \mid w)$, and the MAP estimate of $w$ is given as $\hat{w}_{\mathrm{MAP}}(x) = \arg\max_{w} f(x \mid w)\,P(w)$. The Bayes’ rule MAP strategy also depends on a gain or loss function that describes the consequences of making errors. Here, for visual perception, it uses a Dirac-delta loss function, which gives no loss consequence for a correct (or near-correct within a tolerance level) answer and an equal loss consequence for all incorrect answers (or interpretations in the case of visual perception).
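The MAP truth strategy described above can be illustrated with a minimal sketch for a discrete set of interpretations. The function names `posterior` and `map_estimate`, the two interpretations ("convex", "concave"), and all probabilities are hypothetical and chosen only for illustration; they do not come from the cited studies.

```python
from fractions import Fraction

def posterior(prior, likelihood):
    """Return P(w | x0) for every interpretation w via Bayes' rule.

    prior:      dict mapping w -> P(w)
    likelihood: dict mapping w -> P(x0 | w) for the single observed image x0
    """
    evidence = sum(prior[w] * likelihood[w] for w in prior)  # P(x0)
    return {w: prior[w] * likelihood[w] / evidence for w in prior}

def map_estimate(prior, likelihood):
    """Return the interpretation with the highest posterior probability."""
    post = posterior(prior, likelihood)
    return max(post, key=post.get)

# Hypothetical numbers for two candidate interpretations of one image.
prior = {"convex": Fraction(7, 10), "concave": Fraction(3, 10)}
likelihood = {"convex": Fraction(2, 5), "concave": Fraction(4, 5)}

post = posterior(prior, likelihood)
best = map_estimate(prior, likelihood)
print(post, best)
```

In this discrete setting, choosing the posterior mode is the choice that minimizes expected loss when every incorrect interpretation is penalized equally, which is the role the Dirac-delta loss plays in the text.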

Computational evolutionary perception

In the standard Bayesian approach of perceptual vision, the space W plays two distinct roles: (1) it corresponds to the space of objective world states, and (2) it corresponds to the space of perceptual interpretations from which the visual (or more broadly phenomenological) cognitive system must choose. One problem with this standard Bayesian approach for visual perception is that the framework conflates the Bayesian interpretation space with the real world by assuming their structures are homomorphic. However, for the many reasons already given (e.g., the examples of evolution selecting attributes for fitness rather than truth), they are unlikely to be entirely homomorphic.

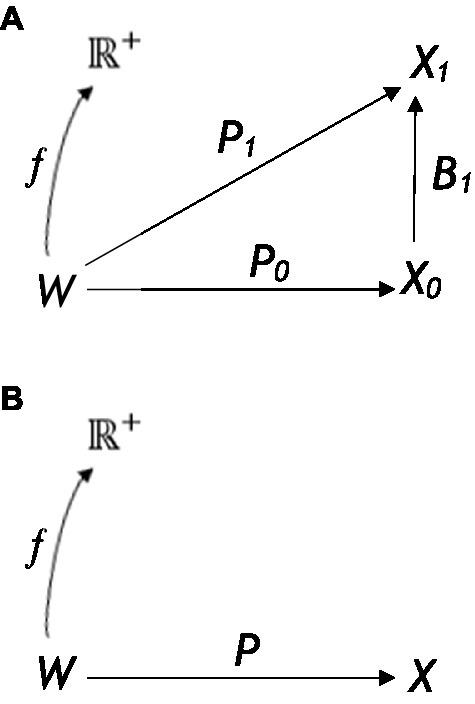

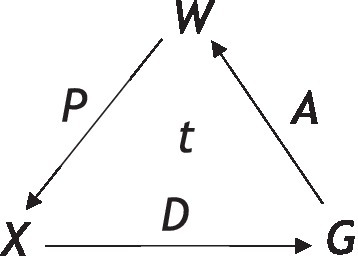

An alternative theory that does not assume any homomorphic relation between the Bayesian interpretation space and the objective world (i.e., does not assume perception is veridical) is the framework of computational evolutionary perception (CEP; see Figure 1; Hoffman and Singh, 2012; Dickinson et al., 2013; Hoffman et al., 2015), which instead assumes perception is based on fitness. Here, the probabilistic inference that results in perceptual experience takes place in a separate observer-specific space of perceptual interpretations X1 that does not need to be homomorphic to W. W is therefore entirely an observer-independent world; X0 and X1 are two perceptual interpretation spaces, while P0 and P1 are their respective perceptual channels. The fitness map of this evolutionary approach is denoted as f, and perceptual inference is given by the Bayesian posterior map that takes place in X1 and not W. Here, the relation between W and X1 does not need to be homomorphic, let alone isomorphic, unlike in the Bayes’ rule MAP truth strategy.

Figure 1

(A) The framework of computational evolutionary perception: W is the observer-independent world, X0 and X1 are two perceptual (or representational) spaces, P0 and P1 are their respective perceptual channels, and f is a fitness map. (B) A framework to define the two resource strategies. A fixed perceptual map $P: W \to X$ and a fixed fitness function $f: W \to [0, \infty)$ are assumed. The organism must select a territory associated with the greatest fitness payoff, given a choice of available territories that it identifies through sensory states [Reprinted with permission from Springer (Prakash et al., 2021)].

In the CEP framework, when an image is seen and interpreted as having a 3D shape, it is assumed that this is because of the probabilistic inference in the perceptual space X1 resulted in such a 3D shape. In this framework, the perceptual interpretation is selected by natural selection, whereby a perceptual interpretation with the highest expected fitness payoff is selected. This means that perceptual interpretations are selected when they lead to a higher expected-fitness payoff (and not those that are most veridical) in the form of more effective interactions with the environment that ultimately promote survival and reproduction. As a simple example of this, if the organism was a hungry lion and the action (behavior) was to eat, then the fitness map f may have a high value in a world w where meat is highly available (but other forms of food are not). However, if the organism was not meat-eating, such as a rabbit, then f may assign a low value to the same state of hunger in the same world w. This shows how context-dependent and crucially important functional context is in these types of frameworks (i.e., fitness is based on the specific functional context of the organism, such as meat eating or not, in selecting the correct environment to satisfy the functional cue of hunger). It is for this reason that functional contextualism as an a-ontological approach is highly relevant, particularly when complex human behavioral variation and selection are considered. CEP would, therefore, need to define itself specifically with functional contextualism and integrate itself within a broader EEMM to scale to real-world dynamics with complex human behavior.

As an example of this integration, CEP can describe how a complex higher-dimensional representational structure, such as a 3D representation in X1, is selected as the interpretation rather than a simpler 2D representation in X0, because it allows a higher expected fitness payoff (as depicted in Figure 1A). However, this 3D image does not need to resemble homomorphically or isomorphically the veridical objective world, and Hoffman and colleagues provide a fitness beats truth (FBT) mathematical theorem to demonstrate this (Hoffman, 2019; Prakash, 2020; Prakash et al., 2021). Applying CEP within a well-defined functional contextualism context and a broader EEMM can then allow for greater scalability to real-world complex behavior, as functions generally follow similar fitness payoffs rather than being of a veridical fixed “truth” nature. If a person, for instance, drives their behavior in line with fitness that is functionally contextually relevant and sensitive, such as behaving in a way aligned to arbitrary functional context rather than fixed notions of truth about the world, then the payoff is likely to be larger than if they used a simple, fixed veridical strategy. This can be seen, for instance, in the attribution, understanding, and use of concepts when using language embedded within complex conceptual learning histories, and is particularly relevant in artificial intelligence and understanding public health messages (Mulhern et al., 2018; Edwards, 2021; Edwards et al., 2022).

In evolutionary theory, fitness refers to the probability of transferring genes and associated characteristics from one generation to the next (Darwin, 1859; Smith, 1982). However, different decisions and behaviors (actions) a of an organism or population can also have a fitness value, and this can be represented as a global fitness function that depends on the state w of the world W in which the behavior takes place, the organism type o that is performing the behavior (e.g., human, lion, and dog) and that provides context to the behavioral states, and the state s of the organism (hungry, thirsty, fearful, etc.). Fitness functions can vary greatly between organisms, with little correlation between them (hence the importance of functional contextualism, as each organism will have different functional needs). For any particular organism, the complexity of the fitness function grows rapidly as the number of possible states and actions increases.
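As a minimal sketch of such a global fitness function, the lookup below maps a combination of world state, organism type, organism state, and action to a nonnegative fitness value. The name `global_fitness`, the world labels ("meat_rich", "grass_rich"), and every number are hypothetical and serve only to illustrate that the same world can carry very different fitness for different organisms.

```python
# Hypothetical fitness values, chosen only to illustrate context dependence.
FITNESS_TABLE = {
    # (world state w, organism o, organism state s, action a): fitness
    ("meat_rich", "lion", "hungry", "eat"): 9.0,
    ("meat_rich", "rabbit", "hungry", "eat"): 1.0,
    ("grass_rich", "lion", "hungry", "eat"): 1.0,
    ("grass_rich", "rabbit", "hungry", "eat"): 9.0,
}

def global_fitness(w, o, s, a):
    """Return the nonnegative fitness of action a for organism o in state s
    and world state w; combinations not listed default to 0."""
    return FITNESS_TABLE.get((w, o, s, a), 0.0)

def best_world(o, s, a, worlds):
    """Return the world state with the highest fitness for this organism,
    state, and action."""
    return max(worlds, key=lambda w: global_fitness(w, o, s, a))

worlds = ["meat_rich", "grass_rich"]
print(best_world("lion", "hungry", "eat", worlds))
print(best_world("rabbit", "hungry", "eat", worlds))
```

An explicit table like this has one entry per combination of w, o, s, and a, which makes concrete why the complexity of the fitness function grows rapidly with the number of states and actions.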

From the perspective of evolutionary game theory (Smith and Price, 1973; Smith, 1982; Nowak, 2006), the behaviors of different organism types compete for fitness points as they interact in some shared environment W. In an evolutionary-based competitive game, natural selection favors the percepts, decisions, and behaviors of these organisms that yield higher fitness points. In a very simple game, whereby all the organisms are of the same type o, have the same state s, and have a single available behavior (or action) a, this can be modeled as a specific fitness function. The function can be described as a nonnegative real-valued function defined on world W. The function can be denoted as $f: W \to [0, \infty)$, which means that only the state w of the world W (and not the state s, organism o, or behavior a) can vary, and that the fitness it yields lies between zero and a value below infinity.

Using this approach, the fitness of different perceptual and behavioral decision strategies is compared through an evolutionary resource game (Mark et al., 2010; Hoffman et al., 2013). A typical game consists of two organisms that utilize different strategies and compete over territories with a limited number of resources. In the first instance, the available territories are observed by the first “player,” who chooses the territory that they estimate to be the optimal one (the highest expected fitness payoff) and then receives a fitness payoff for that chosen territory. The second “player” then does the same with the remaining territories and receives a fitness payoff for the chosen territory. The two players then take turns in choosing among the remaining territories, receiving a fitness payoff for each chosen territory, with both trying to maximize their fitness payoffs for the territories they choose.
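The turn-taking structure of the resource game can be sketched as follows. This is an illustration under simplifying assumptions, not the simulation used in the cited studies: the function name `play_resource_game`, the payoff values, and the two estimator functions are hypothetical, and each player is reduced to a function that scores a territory.

```python
def play_resource_game(payoffs, estimate_first, estimate_second):
    """Alternate picks between two players until no territory remains.

    payoffs:         list of true fitness payoffs, one per territory
    estimate_first:  function(territory index) -> first player's estimated payoff
    estimate_second: function(territory index) -> second player's estimated payoff
    Returns [total payoff of first player, total payoff of second player].
    """
    remaining = list(range(len(payoffs)))
    estimators = [estimate_first, estimate_second]
    totals = [0.0, 0.0]
    turn = 0
    while remaining:
        player = turn % 2
        # The player picks the remaining territory it estimates to be best.
        choice = max(remaining, key=estimators[player])
        totals[player] += payoffs[choice]  # the payoff received is the true one
        remaining.remove(choice)
        turn += 1
    return totals

# Hypothetical payoffs; the first player estimates them accurately,
# the second player's estimates are systematically inverted.
payoffs = [3, 9, 5, 1]
totals = play_resource_game(
    payoffs,
    estimate_first=lambda i: payoffs[i],
    estimate_second=lambda i: -payoffs[i],
)
print(totals)
```

Each player is scored on the true payoff of what it picks, not on its own estimate, so a strategy whose estimates track fitness poorly accumulates fewer fitness points over the game.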

Here, the relevant world attribute w is the quantity of resources in some given territory, and W is the world of different quantities of some resource in different territories. A perceptual map $P: W \to X$ can then be considered, whereby X is a set of perceptual states (for instance, red, yellow, green, and blue) and W is the set of world states, which could, for example, take arbitrary values such as one to a hundred, i.e., $W = [1, 100]$, while X contains only the perceptual states available to the organism. In this approach, the perceptual map P therefore selects the perceptual state in X that is relevant to the strategy being employed.

A fitness function of the world assigns to each resource quantity for each territory a nonnegative fitness value between zero and a number below infinity. Some functions can be monotonic (whereby as resources keep increasing, fitness also increases), while others can be nonmonotonic (even if resources keep increasing, fitness peaks at a certain number of resources). Nonmonotonic fitness functions are more likely in the natural world, as having too many resources may eventually not serve any advantage (e.g., too many food resources have no advantage if the excess cannot be stored). As energy resources are limited, the organism is likely to balance the effort made in obtaining resources against the need to obtain them.
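The difference between the two kinds of fitness function can be sketched as below. The linear and bell-shaped forms, the function names, and all parameter values (peak at 50 on a 1 to 100 resource scale, width 15, maximum fitness 10) are assumptions made for illustration only.

```python
import math

def monotonic_fitness(resources, scale=0.1):
    """Fitness keeps increasing as resources increase (linear form assumed)."""
    return scale * resources

def nonmonotonic_fitness(resources, peak=50.0, width=15.0, max_fitness=10.0):
    """Fitness peaks at `peak` resources and falls off on either side
    (bell-shaped form assumed)."""
    return max_fitness * math.exp(-((resources - peak) ** 2) / (2 * width ** 2))

for r in (10, 50, 90):
    print(r, monotonic_fitness(r), round(nonmonotonic_fitness(r), 3))
```

Under the nonmonotonic function, a territory with 90 units of resource is worth less than one with 50, so an organism that simply tracks resource quantity would not be choosing the fittest territory.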

One important assumption of this evolutionary approach is that fitness does not need to correlate in any way with a fixed, veridical “objective truth” about the world. This is because, though fitness depends on the world the organism lives in, it also depends on the organism, and crucially the organism’s perceptual states, as well as the organism’s behavioral action class being considered. In other words, even if the world remains the same, if the action class, perceptual state (or functional context), or even the organism changes, then very different fitness values can be formed. Thus, fitness is entirely functionally context-dependent based on the functional context of the organism and not based on fixed veridical objective truth.

In evolutionary game theory, the organism’s behavior depends (see Figure 1B) on the following three elements: (1) the fitness function as defined by its states, action class, etc.; (2) its prior probabilities of world states; and (3) its perceptual map from a world state via sensory states (i.e., resources in territories w estimated via different sensory states x). The organism observes a number of territories in any given trial (or turn in the game), and these are available through its sensory states, $x_1, \ldots, x_n$. The goal of the organism (in this example) is to select a territory associated with the greatest fitness payoff (i.e., the greatest resource fitness payoff). In this case, two possible strategies are competing against one another: a perceptual truth strategy consistent with a naïve realist cognitive approach or a fitness-only strategy that is more consistent with a functional contextual approach.

For the standard perceptual truth strategy typical of a cognitive science approach (that assumes evolution is driving veridically true perceptions), the organism estimates the world state (territory resources in this example) for each of the n sensory states $x_1, \ldots, x_n$. Objective “truth” is given by the Bayesian MAP estimate, which is the world state with the highest probability of being the true one, given the sensory state. The fitness values of the n “true” world states are then compared, and finally, a choice of which territory to select is made. This choice is ultimately based on the sensory state $x_i$ whose estimated world state yields the highest fitness value. Crucially, the choice is mediated through the MAP estimate of the world that it considers to be absolutely true and ignores any information about other possible states of the world other than the one being selected as true.

In the fitness-only strategy, the organism does not attempt to estimate the “true” world state for each sensory state. Instead, the expected fitness payoff that results from each possible choice based on sensory states xi is utilized. Given the possible world states, there is a posterior probability distribution for each given sensory state xi and a fitness value for each given world state. These fitness values are then weighted through the posterior probability distribution. This is done so that the expected fitness values for a given choice xi can be computed, and the highest expected choice fitness is then ultimately chosen.
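The two strategies described above can be written compactly for a discrete set of world states. The symbols $\hat{w}$, $F$, $x_{\text{truth}}$, and $x_{\text{fitness}}$ are notation introduced here for illustration and are not taken from the cited papers; $P(w \mid x_i)$ is the posterior over world states given sensory state $x_i$, and $f(w)$ is the fitness of world state $w$.

```latex
% Truth strategy: take the MAP world state for each sensory state,
% then choose the sensory state whose MAP estimate has the highest fitness.
\hat{w}(x_i) = \arg\max_{w \in W} P(w \mid x_i), \qquad
x_{\text{truth}} = \arg\max_{i \in \{1, \ldots, n\}} f\big(\hat{w}(x_i)\big)

% Fitness-only strategy: weight fitness by the full posterior,
% then choose the sensory state with the highest expected fitness.
F(x_i) = \sum_{w \in W} P(w \mid x_i)\, f(w), \qquad
x_{\text{fitness}} = \arg\max_{i \in \{1, \ldots, n\}} F(x_i)
```

Written this way, the truth strategy discards all posterior mass outside the single MAP world state before fitness is consulted, whereas the fitness-only strategy uses the whole posterior distribution.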

In an example of an actual evolutionary game between two strategies, A and B, the payoff matrix is illustrated in Table 1A. Payoffs are denoted by a, b, c, and d for a row player against a column player, for example, b is the payoff to A when A plays B. Fitness payoff a is defined as “fitness-only when playing against fitness only,” fitness payoff b is defined as “fitness-only when playing against truth,” fitness payoff c is defined as “truth when playing against fitness-only,” and fitness payoff d is defined as “truth when playing against truth.” There are three main theorems from evolutionary game theory that are relevant to such an analysis, which are true through mathematical proofs (Nowak, 2006):

Table 1

(A)

| | Against A | Against B |
|---|---|---|
| A plays | a | b |
| B plays | c | d |

(B)

| World state | Likelihood of x1 given wj | Likelihood of x2 given wj | Prior | Fitness |
|---|---|---|---|---|
| w1 | 1/4 | 3/4 | 1/6 | 19 |
| w2 | 3/4 | 1/4 | 3/6 | 5 |
| w3 | 1/4 | 3/4 | 3/6 | 4 |

(C)

| World state | Likelihood of x1 given wj | Likelihood of x2 given wj | Prior | Fitness |
|---|---|---|---|---|
| w1 | 3/4 | 1/4 | 1/5 | 6 |
| w2 | 1/4 | 3/4 | 3/5 | 5 |
| w3 | 3/4 | 1/4 | 3/5 | 21 |

(A) A payoff matrix in evolutionary game theory for two strategies, A and B. Fitness payoff a is defined as “Fitness-Only when playing against Fitness-Only,” fitness payoff b is defined as “Fitness-Only when playing against Truth,” fitness payoff c is defined as “Truth when playing against Fitness-Only,” and fitness payoff d is defined as “Truth when playing against Truth.” (B) Values used to demonstrate fitness beats truth in the evolution of perception. (C) Values used to demonstrate fitness beats truth in the evolution of perception after a major environmental change.

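Theorem 1. In a game with a finite population of two types of players, A and B, if b > c, a > c, and b > d, then for all N, $\rho_A > 1/N > \rho_B$, and selection favors A. Here, N is the population size and $\rho_A$ ($\rho_B$) is the probability that a single A (B) player takes over a population of the other type.

Theorem 2. In a game with a large finite population of two types of players, A and B, and with weak selection, $a(N-2) + b(2N-1) > c(N+1) + d(2N-4)$ implies that $\rho_A > 1/N$. Therefore, if a > c and b > d for large enough N, then selection favors A.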

Theorem 3. For all possible fitness functions and a priori measures, the probability that the fitness-only strategy dominates the truth strategy is at least $(|X|-3)/(|X|-1)$, where $|X|$ is the size of the perceptual space.

For a simple example where there are three world states, $w_1$, $w_2$, and $w_3$, and two sensory state stimulations, $x_1$ and $x_2$, there is a likelihood value for each sensory stimulation given a world state, and this is given by $P(x_i \mid w_j)$ (see Supplementary material 1, which uses the example in Table 1B). To summarize, from this “truth” observer strategy calculation, for state x1, the truth (i.e., the maximum a posteriori) estimate is w2, and for state x2, the truth estimate is w3. The truth observer is thus essentially given a choice between selecting the resource w2 or w3 (when given sensory state stimulations x1 and x2) that contain food, whereby w2 has higher quality food and thus a higher fitness value than w3. Given natural selection, the food (world w) with the highest fitness is then selected, and in this case, the observer will therefore prefer w2, as its fitness value $f(w_2) = 5$ is greater than the fitness value $f(w_3) = 4$. When using this fitness payoff, and when offered a choice between x1 and x2, the truth observer will choose x1 (corresponding to w2), with an expected fitness utility of 5.85 (as this yielded the greatest direct payoff in the previous steps 3 and 4).

In contrast to this, a “fitness-only” observer is not attempting to calculate the veridical truth about the environment through a Bayesian process. Instead, the “fitness-only” observer is only concerned with which sensory experience yields a higher expected fitness (i.e., maximizing expected fitness). Thus, for a fitness-only observer, step 4 gives these fitness values for both x1 and x2. As the expected fitness utility for x1 is 5.85 and the expected fitness utility for x2 is 6.17, given a choice between sensory states x1 and x2, the organism using a fitness-only observer will choose the higher value of 6.17, and thus chooses the state x2. From this analysis, it is therefore clear that the truth observer minimizes expected fitness while the fitness-only observer maximizes expected fitness. In this type of evolutionary game theory, the fitness-only observers will therefore drive the population of truth observers into extinction. Hence, perception, given evolutionary game theory, is likely to be based on fitness and not truth. For other examples that account for more complex situations such as sudden environmental change, please refer to Supplementary material 2, which uses the example in Table 1C.
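The comparison between the two observers can be reproduced with a short sketch that takes the Table 1B values as printed. Two points are assumptions of this sketch rather than statements from the source: the priors in Table 1B sum to 7/6, so the posterior is normalized by the total joint probability, and the function names are introduced here for illustration. The supplementary calculation itself is not reproduced in this section.

```python
from fractions import Fraction as F

# Values from Table 1B as printed: likelihood P(x_i | w_j), prior, fitness.
likelihood = {
    "w1": {"x1": F(1, 4), "x2": F(3, 4)},
    "w2": {"x1": F(3, 4), "x2": F(1, 4)},
    "w3": {"x1": F(1, 4), "x2": F(3, 4)},
}
prior = {"w1": F(1, 6), "w2": F(3, 6), "w3": F(3, 6)}
fitness = {"w1": 19, "w2": 5, "w3": 4}
sensory_states = ["x1", "x2"]

def posterior(x):
    """P(w | x), normalized by the total joint probability for x."""
    joint = {w: likelihood[w][x] * prior[w] for w in prior}
    total = sum(joint.values())
    return {w: p / total for w, p in joint.items()}

def map_world(x):
    """Truth observer: the single most probable world state given x."""
    post = posterior(x)
    return max(post, key=post.get)

def expected_fitness(x):
    """Fitness-only observer: posterior-weighted fitness of choosing x."""
    post = posterior(x)
    return sum(post[w] * fitness[w] for w in post)

truth_choice = max(sensory_states, key=lambda x: fitness[map_world(x)])
fitness_choice = max(sensory_states, key=expected_fitness)

print("MAP estimates:", {x: map_world(x) for x in sensory_states})
print("Expected fitness:", {x: float(expected_fitness(x)) for x in sensory_states})
print("Truth observer chooses", truth_choice)
print("Fitness-only observer chooses", fitness_choice)
```

With these inputs the MAP estimates are w2 for x1 and w3 for x2, and the expected fitness of x1 is 76/13, or about 5.85, matching the text. For x2 the same calculation gives 36/5 = 7.2 rather than the reported 6.17, which may reflect differences in the supplementary steps; the ordering is the same, so the truth observer still chooses x1 and the fitness-only observer still chooses x2.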

However, despite the ability to account for environmental change, the games employed are very simple. When dealing with real-world scenarios, such as individuals with mental health disorders, modeling these types of evolutionary game theory simulations is much more challenging. In these scenarios, sensory states are likely to be distorted, for example through negative mood states and a lack of motivation, all of which would need to be modeled in an evolutionary game in a real-world context. These games are based on functional contextualism in the sense that fitness depends on a combination of the organism’s sensory state x (such as hunger, which is the function of that organism driving its behavior at time point t1) and the context of a world w, i.e., whether the world has what the organism needs to satisfy its functional state (if it does, then that world w has a high fitness value f for the organism’s current functional state x that drives its behavior). These games may therefore benefit from the application of a formal operational definition of functional contextualism within a broader EEMM, so that they could potentially be usefully applied to clinical settings and PBT research.

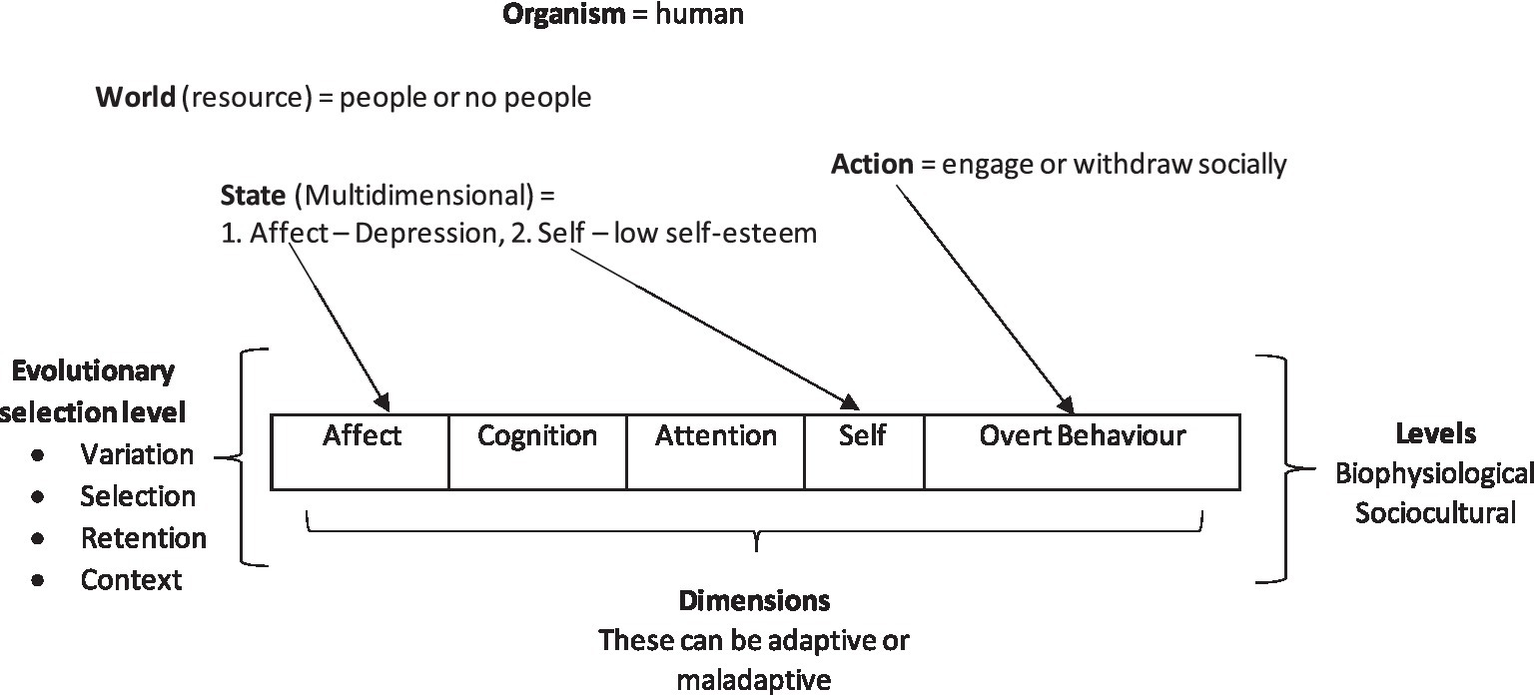

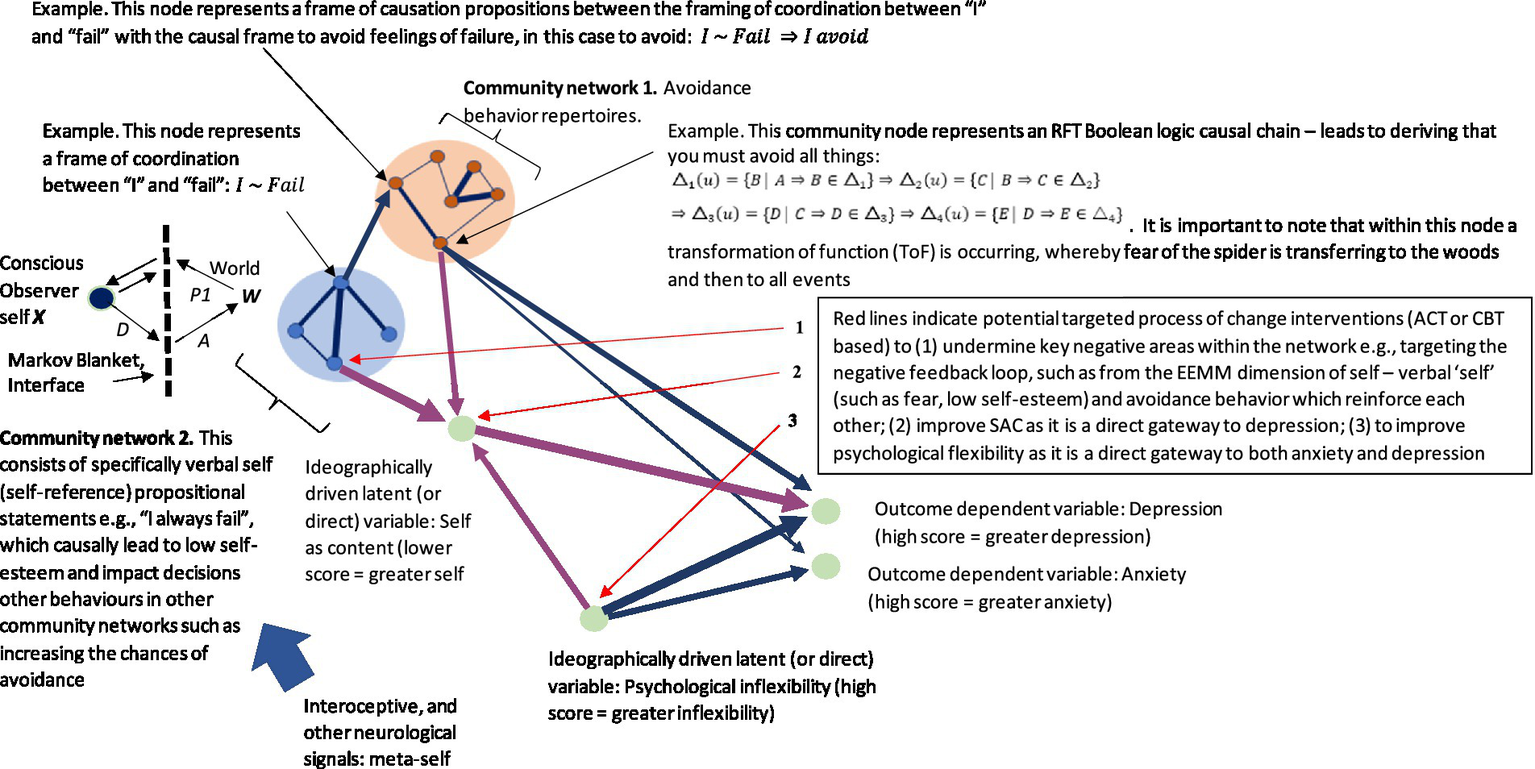

Given this assumption, greater modeling efforts that increase the dimensionality of the interface could strengthen the interface’s fitness. For example, the EEMM, an extension of the functional contextual approach, suggests that there are six dimensions (affective, cognitive, attentional, self, motivational, and overt behavior) at two levels (biopsychological and sociocultural); because the approach is an evolutionary meta-model, these represent appropriate dimensions for adaptive organism behavior. Figure 2 shows a simple example of how a human with the states of depression and low self-esteem connects to two dimensions of the EEMM. These dimensions were identified after an examination of 55,000 studies, from which 72 replicated measures that had successfully mediated intervention outcomes were extracted and summarized into the six dimensions and two levels of the EEMM (Hayes et al., 2022; as shown in Figure 2).

Figure 2

An illustration of how the evolutionary game theory constructs fit and are enhanced by EEMM in “evolutionary game simulations” that involve complex mental health outcomes.

These dimensions may provide a much richer context for modeling relevant clinical processes that could be exploited in such games. For example, the affective dimension relates to emotional states; thus, a game could be designed that defines a sensory state x as having some negative affect and some resource in a world w that brings about positive affect. Fitness f would then be determined based on wellbeing, whereby worlds that bring about greater positive affect are fitter than those that do not. Similarly, the cognitive dimension relates to problem-solving, hope, and attitude; the attentional dimension relates to mindfulness and attentional control; the motivational dimension relates to values commitment; and the overt behavior dimension relates to goal-striving persistence. These dimensions can therefore be applied as states x, and resources within worlds w are chosen based on the dimensions of the EEMM. The EEMM processes of variation, selection, retention, and context are of course relevant in these games: if the context changes, e.g., the world changes due to an environmental shift and can no longer provide positive affect, then that world is no longer retained, and selection begins again.
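The variation, selection, retention, and context cycle described above can be sketched as a toy game. Everything here is a hypothetical illustration: the world names, the payoff numbers (standing in for positive affect), and the rule that a world is dropped once its payoff falls to zero are assumptions, not part of the EEMM itself.

```python
def select_world(payoffs):
    """Selection: return the world with the highest payoff among the variants."""
    return max(payoffs, key=payoffs.get)

def play(contexts):
    """Retain the selected world until a context shift removes its payoff."""
    retained = None
    history = []
    for payoffs in contexts:
        # Assumed retention rule: a world is kept only while its payoff is positive.
        if retained is None or payoffs.get(retained, 0.0) <= 0.0:
            retained = select_world(payoffs)
        history.append(retained)
    return history

# Each dictionary is one context: world -> positive affect it provides (hypothetical).
contexts = [
    {"w1": 0.8, "w2": 0.3},
    {"w1": 0.6, "w2": 0.4},
    {"w1": 0.0, "w2": 0.4},  # environmental shift: w1 no longer provides positive affect
]
history = play(contexts)
print(history)
```

In this sketch the agent stays with w1 while it still pays off and reselects only when the context changes.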

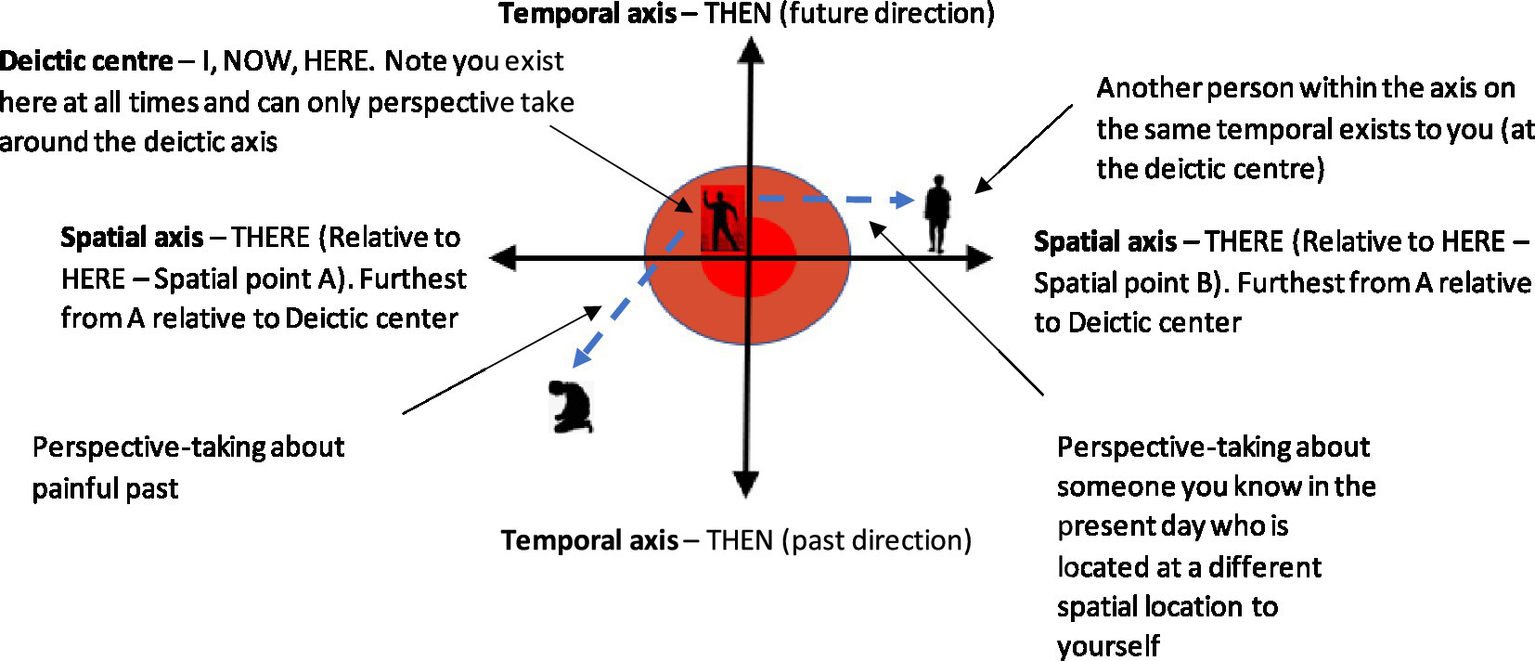

In one concrete example, the self-dimension is selected in the Figure 2 example, and this self-dimension can be further illustrated by the deictic axis (Figure 3). This breaks the complex dimension of self into its subcomponents of perspective-taking relational frames from the functional contextual RFT model, in the form of the interpersonal (I vs. YOU), the temporal (NOW vs. THEN), and the spatial (HERE vs. THERE). In an evolutionary game, this may provide rich context that can define which sensory states are accessed under what context (such as thinking about the future or past) and can potentially increase the fitness payoff as a result of this context. For example, if resources in a game are limited, then having perspective about the other player’s state space may help the player avoid direct territorial conflict and select the optimal world. Similarly, prosocial behavior may be explained as individuals tapping into collective perspective-taking states about others’ collective needs, such that shared goals emerge that increase fitness for the group as well as the individual, hence encouraging prosocial selection of behavior (Atkins et al., 2019). Crucially, fitness payoffs of this much higher magnitude would not be obtainable by the individual alone.

Figure 3

The deictic axis—a visual representation of an individual who relationally frames themselves in the present moment while mindfully observing their painful past. This would be expressed as “I” in the “HERE” and “NOW” as “I” perspective-take about my painful past in the “THERE” and “THEN.”

One current problem is that there has been little effort to mathematically define a functional contextual RFT model within the context of the EEMM and evolutionary game simulations in a way that could be visualized as graphs in graph theory and usefully applied in PBT studies. Within such graphs, nodes and edges should represent functional analytic variables and, more broadly, patterns of arbitrarily applicable relational responding that have varying influence over a broader relational network. This would then have scope to connect RFT to the dimensions of the EEMM within the context of evolutionary games. One such approach is to define in detail how relational frames can be encoded into graphs using logic and set theory.

Set theory and formal relational frame logical axioms represented within community network graphs

Within the functional contextual relational frame theory (RFT), there are several patterns of arbitrarily applicable relational responding, or types of relational frames, which include frames of coordination (stimulus x is the same as stimulus y); frames of distinction (stimulus x is not the same as stimulus y); frames of causation (if x occurs, then y will follow); frames of comparison (e.g., x is bigger than y); frames of opposition (e.g., left is the opposite of right); frames of hierarchy (e.g., Alsatian is a type of dog); and deictic relational frames (perspective-taking), which involve some self-reference of the interpersonal relational frame (I vs. YOU), the spatial relational frame (HERE vs. THERE), and the temporal relational frame (NOW vs. THEN).

These relational frames can be formalized in mathematics through the derivation or deduction of properties or propositions (formalized through logic) with respect to objects or elements belonging to a set (general properties of elements and sets can then be formalized through graph theory), and can therefore be formalized within set theory and mathematical logic (Pereyra, 2020). There is a natural relationship between set theory and logic; for example, if A is a set, then the proposition $P(x)$ is a logical formula of that set, which states that a proposition holds for some value of x in a domain (or set) associated with x. The proposition is therefore true for elements of set A and false for elements outside of set A.

Within mathematical logic, there are subbranches of propositional algebra and predicate logic. A proposition is any statement that can be assigned a unique value of either true or false (the law of excluded middle) and cannot be both true and false (the law of non-contradiction). As an example of how to apply simple logic (without set theory) to relational frames, $A \Leftrightarrow B$ denotes that A and B are equivalent, whereby $\Leftrightarrow$ is the mathematical operator for equivalence. In RFT, deriving stimulus relations is a special generalized form of relational responding (Gross and Fox, 2009), and the logic symbol ⇒ can be used to symbolize this as it is the operator for “implies” (or “derives”). Thus, if A is bigger than B, then it can be derived that B must be smaller than A, and this can be expressed as A is bigger than B ⇒ B is smaller than A.

This can be taken a step further by applying equivalence to relational frames within set theory. Equivalence has three properties, namely, reflexivity, symmetry, and transitivity. In set theory, if A is equivalent to B, then some element x of set A must also be equivalent in set B, so that $x \in A \Leftrightarrow x \in B$. A very simple equivalence relation can be given by ~, and this is the relation between elements of sets or two sets that holds three conditions: (1) reflexivity, $a \sim a$; (2) symmetry, $a \sim b \Rightarrow b \sim a$; (3) transitivity, expressed as $(a \sim b) \wedge (b \sim c) \Rightarrow a \sim c$, whereby if a and b are equivalent, and b and c are equivalent, then a and c must be equivalent. In RFT, transitivity is called combinatorial entailment. The equivalence relation ~ also applies to sets, such that an equivalence relation on set A can be used to partition the set into a series of subsets of A whose elements are equivalent to each other. The subsets of equivalent elements are called equivalence classes. According to categorization theory and RFT work, these shared relations of equivalence do not need to be of physical shape or size; they could, for instance, depend on some contextually dependent function, such as items of cutlery sharing the equivalent function “to eat with” within an equivalence class subset (such as fork, knife, and spoon).
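The partition of a set into equivalence classes by a shared contextual function can be sketched as follows. The stimuli and their functions are hypothetical examples extending the cutlery case, and the helper names `equivalence_classes` and `is_equivalent` are assumptions made for illustration.

```python
from collections import defaultdict

def equivalence_classes(function_of):
    """Partition stimuli into classes that share the same contextual function."""
    classes = defaultdict(set)
    for stimulus, function in function_of.items():
        classes[function].add(stimulus)
    return dict(classes)

def is_equivalent(a, b, function_of):
    """a ~ b when both stimuli share the same contextual function."""
    return function_of[a] == function_of[b]

# Hypothetical stimuli and their contextually dependent functions.
function_of = {
    "fork": "to eat with",
    "knife": "to eat with",
    "spoon": "to eat with",
    "pen": "to write with",
    "pencil": "to write with",
}
classes = equivalence_classes(function_of)

# Check the three conditions of an equivalence relation on this small set.
items = list(function_of)
reflexive = all(is_equivalent(a, a, function_of) for a in items)
symmetric = all(
    is_equivalent(b, a, function_of)
    for a in items for b in items
    if is_equivalent(a, b, function_of)
)
transitive = all(
    is_equivalent(a, c, function_of)
    for a in items for b in items for c in items
    if is_equivalent(a, b, function_of) and is_equivalent(b, c, function_of)
)
print(classes, reflexive, symmetric, transitive)
```

Because membership is decided by function rather than by physical form, changing the context (the `function_of` mapping) changes the partition.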

Elements here do not need to relate to other elements through equivalence alone; they can also express other relations, such as “larger than” and “faster than,” through propositional algebra. Propositional algebra is a subbranch of mathematical logic relating to propositions and logical operators. Any statement that can be assigned a unique logical value of “true” (T) or “false” (F) can be described as a proposition. Logical operators can be used to define a new proposition S from one or more given propositions, such as propositions A and B. In this type of situation, the logical value of the new proposition S depends on the logical values of the propositions A and B. All of the possible combinations of logical values for the propositions A and B can be presented in a truth table that indicates the corresponding logical value of S for each combination. A truth table, therefore, determines the value of the logical operator. As a simple example, the BOTH-FALSE operator ⊗ can be used between two propositions A and B, written A ⊗ B, which is true (T) only if A and B are both false (F).
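A truth table for the BOTH-FALSE operator can be generated directly. This is a minimal sketch; the function name `both_false` is an assumption, and the final check uses the standard logical fact that applying BOTH-FALSE to a proposition and itself yields its negation.

```python
from itertools import product

def both_false(a, b):
    """BOTH-FALSE operator: true only when a and b are both false."""
    return (not a) and (not b)

# Enumerate every combination of truth values for propositions A and B.
truth_table = [(a, b, both_false(a, b)) for a, b in product([True, False], repeat=2)]

for a, b, s in truth_table:
    print(f"A={a!s:<5} B={b!s:<5} S={s}")

# Applying the operator to a proposition and itself behaves like NOT.
negation_matches = all(both_false(a, a) == (not a) for a in (True, False))
```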

The main formal logical operators are NOT, OR, AND, IMPLIES, and EQUIVALENT. Operators such as the NOT operator can be defined in the following way: $\neg A \equiv A \otimes A$, whereby ≡ means something is either identical or similar to another element or set but not necessarily equal to it. The relational frame of opposition can be defined through negation, $\neg A$, which denotes the opposite of A; for example, the color black is equivalent to the opposite of white, expressed as $\text{black} \equiv \neg\,\text{white}$.

Going beyond simple logic, a relation of mutual entailment can be defined in logic and set theory; including sets is important when concepts are considered categories (or sets of elements) of things in the world. Mutual entailment expresses a relation between two variables or statements in which one statement or variable logically implies the other and vice versa. This means that if one of these variables is false, or is assigned some operator, the same applies to the other mutually entailed variable. A relational frame of mutual entailment between two variables A and B can be expressed in set theory as $A \subseteq B$ and $B \subseteq A$. This means that A is a subset of B and B is a subset of A, or more generally, all the elements in A also belong to B and all the elements in B also belong to A. For example, the mutual entailment between the spoken word “snake” and the concept of an actual snake within the real world can be represented in set theory as follows ({} represents the category or set of some elements contained inside): Verbal word "snake" = {x | x is the verbal word "snake"}, Actual snake = {y | y is an actual snake}, Verbal word "snake" ⊆ Actual snake, Actual snake ⊆ Verbal word "snake".

Combinatorial entailment can be given in set theory, as in the following example: If a is bigger than b and b is bigger than c, then a is bigger than c. This can be expressed using logic and set theory with the following steps: (1) Define the sets A, B, and C, where A represents the set of all elements that are bigger than element b, B represents the set of all elements that are bigger than c, and C represents the set of all elements that are smaller than b; (2) define the logical statement “a is bigger than b and b is bigger than c,” denoted as $(a > b) \wedge (b > c)$; (3) use the definitions of the sets to rewrite the logical statement as $(a \in A) \wedge (b \in B)$, which can be read as “a is an element of the set of all elements that are bigger than b, and b is an element of the set of all elements that are bigger than c.” Finally, expressing the combinatorial entailment requires simply adding the implies operator $\Rightarrow$, so that $(a \in A) \wedge (b \in B) \Rightarrow (a \in B)$, i.e., a is an element of the set of all elements that are bigger than c.
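The derivation of untrained relations from trained ones can be sketched computationally. In this hedged illustration, trained "bigger than" relations are stored as ordered pairs; combinatorial entailment is computed as a transitive closure and mutual entailment as the reversed "smaller than" relation. The function names are hypothetical.

```python
def derive_relations(trained):
    """trained: set of (p, q) pairs meaning 'p is bigger than q'."""
    bigger = set(trained)
    # Combinatorial entailment: keep adding (p, r) whenever (p, q) and (q, r) hold.
    changed = True
    while changed:
        changed = False
        for p, q in list(bigger):
            for q2, r in list(bigger):
                if q == q2 and (p, r) not in bigger:
                    bigger.add((p, r))
                    changed = True
    # Mutual entailment: 'p is bigger than q' derives 'q is smaller than p'.
    smaller = {(q, p) for p, q in bigger}
    return bigger, smaller

def bigger_than(element, bigger):
    """The set of all elements that are bigger than the given element."""
    return {p for p, q in bigger if q == element}

bigger, smaller = derive_relations({("a", "b"), ("b", "c")})
set_A = bigger_than("b", bigger)  # all elements bigger than b
set_B = bigger_than("c", bigger)  # all elements bigger than c
print(sorted(bigger), sorted(smaller), set_A, set_B)
```

Only two relations are trained here; the third "bigger than" relation and all "smaller than" relations are derived.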

For the relation of opposition between two variables, A and B, this can be expressed as $A \equiv \neg B$, which means that A and B have opposite properties, such that A could be true and B could be false, or vice versa. In set theory, this can also be represented as $A \cap B = \emptyset$, which means that A and B have no elements in common, i.e., there are no values that belong to both A and B at the same time. For example, this opposite relational frame can be used between two variables “being stronger” and “being weaker” as follows:

Stronger = {x | x is stronger}, Weaker = {y | y is weaker}, Stronger ≡ ¬Weaker, Stronger ∩ Weaker = ∅. (For a more complete list of relational frames including the transformation of function (ToF), refer to Supplementary material 3).
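The two readings of opposition, opposite truth values and sets with no shared elements, can be checked in a short sketch. The set members are hypothetical examples, and the function name `opposite_truth_values` is an assumption.

```python
def opposite_truth_values(a, b):
    """Opposition as logic: exactly one of the two propositions is true."""
    return a != b

# Hypothetical members of the two opposed categories.
stronger = {"ox", "horse"}
weaker = {"mouse", "ant"}

# Opposition as set theory: the two sets share no elements.
no_overlap = stronger.isdisjoint(weaker)
shared = stronger & weaker
print(no_overlap, shared)
```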

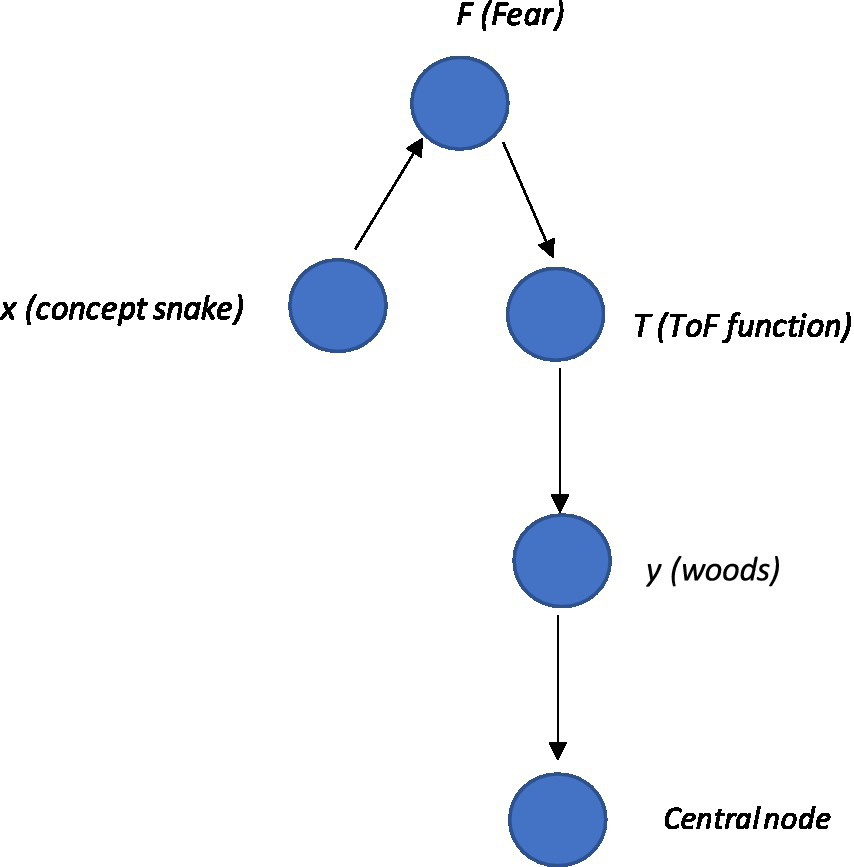

These can be represented visually in a very simple network graph with the following steps: (1) first define a set of nodes where each node represents an element in the community (e.g., snake or woods; see Supplementary material 4 for Python code as an example¹). Nodes can also represent functions such as ToF and relations in some cases; (2) then define a set of edges, where each edge represents a relational frame between two nodes. For example, the hierarchical frame of a snake in the woods can be expressed with edges that represent the hierarchical relationship between concepts represented by the nodes. The edges can be represented visually by directed lines pointing from a node representing a parent to a node representing a child (i.e., the child is a sub-element of the parent). Edges can be drawn differently, using shades, colors, and labels, to represent different frames. This can be expressed mathematically, whereby E is some relationship and n is some node, which can be denoted as $E = (n_i, n_j)$; (3) then use graph theory techniques such as traversal algorithms or centrality measures to explore the relational frames within the network community and identify patterns and trends. This can be made more specific by including a node function, T, that transfers (ToF) the set of things you are afraid of, F, to the set of woods that you are not afraid of, W. In terms of the code, one way of doing this is to create a function (see Supplementary material 5) that simply transfers “woods” directly into the set of things the individual is afraid of. Notably, within a subgraph, one community central node can receive inputs from the community and produce output that comprises the total weight within the community. (Refer to Figure 4 as an illustration of this with the ToF function node T included).

Figure 4

The basic schematic of the graph, whereby snake node x is framed with fear node F (illustrating the function of the snake as fear), and connecting with the computational function node T that expresses the ToF from fear F of snake x to local woods y. In addition, one central node receives input from the whole community, including a ToF between two nodes.

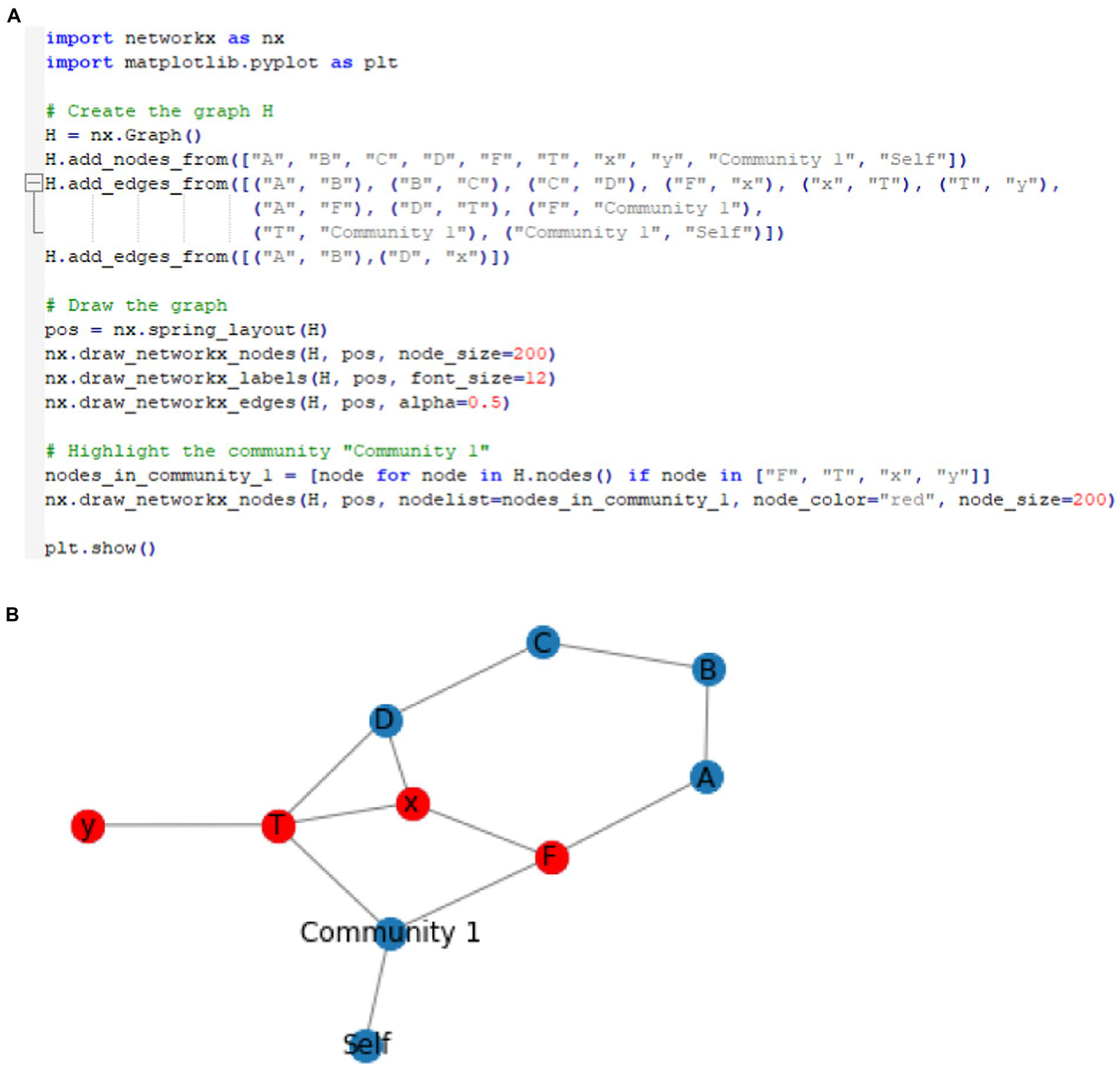

In the Python programming language, the NetworkX library can be used to create a graph. The community can be analyzed through functions such as nx.pagerank and nx.shortest_path. This can be represented as a community graph (i.e., a subset of the larger graph that includes sets that relate to one another closely, such as a network that defines the self) within a larger network graph (of multiple communities, i.e., networks of relating relations). Here, a graph of nodes represents the sets F and T as well as elements x and y. The graph could be defined as $G = (V, E)$ with $V = \{F, T, x, y\}$, where node F again represents the set of all things that you are afraid of, and node T represents the function that frames snakes with local woods. This is different from a representation that simply associates snakes with “woods” directly; the function node is a more accurate way to present the variables as the networks increase in size and complexity. Node x represents an element in set F (i.e., an instance or a stimulus that you are afraid of, such as “snake”), and node y represents an element in the set for the function T(F). Here, the edges (F, x) and (T, y) represent the membership of x in F and y in T(F), respectively, while the edge (x, T) represents the mapping of framing x with y through the function T. From this, the graph (or community network subgraph), for example, could be defined as $G = (\{F, T, x, y\}, \{(F, x), (x, T), (T, y)\})$. This is illustrated visually within a graph and Python code as depicted in Figure 5. Here, it is also important to note that certain nodes of interest within a community can be highlighted within Python, such as the ToF. This represents an important area of interest when doing a PBT analysis, as targeting the ToF function within the network with, for example, a mindfulness intervention, may undermine the function’s transfer strength and have a significant positive cascading effect across the network.
As can be seen, the ToF projects to the community node, and this has a direct causal effect on the community of “self,” potentially increasing generalized fear and ultimately increasing the likelihood of low self-esteem.
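The small community graph over F, T, x, and y can be sketched without any third-party dependency. This is not the code shown in Figure 5 or the Supplementary material; it is a minimal stand-in that uses a plain adjacency dictionary and a breadth-first search in place of NetworkX, and the helper names (`build_adjacency`, `shortest_path`, `transfer_of_function`) are assumptions.

```python
from collections import deque

# Directed edges of the community: F -> x (membership), x -> T (framing), T -> y.
edges = [("F", "x"), ("x", "T"), ("T", "y")]

def build_adjacency(edge_list):
    """Map each node to the list of nodes it points to."""
    adjacency = {}
    for source, target in edge_list:
        adjacency.setdefault(source, []).append(target)
        adjacency.setdefault(target, [])
    return adjacency

def shortest_path(adjacency, start, goal):
    """Breadth-first search; returns the node sequence or None if unreachable."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbour in adjacency[path[-1]]:
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None

def transfer_of_function(feared, source, target):
    """Assumed ToF rule: if source is feared, target becomes feared as well."""
    return feared | {target} if source in feared else set(feared)

adjacency = build_adjacency(edges)
path = shortest_path(adjacency, "F", "y")
feared = transfer_of_function({"snake"}, "snake", "woods")
print(path, feared)
```

Every path from the fear set F to the woods y passes through the function node T, which is why removing or weakening T in this sketch would disconnect fear from the woods.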

Figure 5

(A) Example of Python code for specifying two communities, and the resultant community network where the ToF community (function T node) within a broader relational network can be visualized within the graph. (B) Visual areas of interest within the network may be an area that a PBT therapist would consider targeting to undermine its negative impact across the network. It also shows in the resultant visualized network whether the ToF function T transfers fear F of snake x to local woods y.

It is also useful to note that graph G can be represented as a single community within a larger graph of other communities, i.e., representing relational network subgroups (or communities) relating to other relational network subgroups, such as the ToF within the community for “self” (see Supplementary material 6 for details).

Implementing set theory and logic of RFT into structural equation modeling and graphs

There has been some recent concern over the ergodic assumption that provided much of the rationale for nomothetic population-level statistical approaches (Hayes and Hofmann, 2018; Hayes et al., 2019, 2020; Ciarrochi et al., 2022). (For a full discussion, refer to Supplementary material 7). It is for these reasons that an individual idiographic time-series approach to an RFT implementation in SEM (an idiographically driven SEM) is explored, as opposed to less individually sensitive nomothetic approaches, with the main focus on a highly sensitive idiographic, autoregressive time-series approach that meets the criteria for assessing intra-subject variability in participants at the individual level.

Pearl’s structural causal modeling (Pearl, 2000) has been successfully applied to causal reasoning (action and change) in artificial intelligence (AI; Pearl, 1988), statistics, economics (Pearl, 2009), social sciences (Pearl, 2000), and even as Bayesian networks represented as propositional reasoning in cognition (Pearl, 2011). Here, it is used to extend the ability of classical logic to describe properties within RFT (as described in the previous section) and to allow for a more general mathematical description of causation, which will eventually allow for graph theory modeling and therefore be applicable in a clinical and PBT context.

Pearl (2000) suggests that a causal model is a triple $M = \langle U, V, F \rangle$, whereby U is a set of background exogenous variables and V is a set of endogenous variables that are determined by variables in the union $U \cup V$. F is a set of functions $\{f_1, f_2, \ldots, f_n\}$, where each $f_i$ is a mapping from $U \cup (V \setminus V_i)$ to $V_i$, and a mapping from U to V is formed from the entire set of F. F can be represented as a set of equations:

$$v_i = f_i(pa_i, u_i), \quad i = 1, \ldots, n$$

Whereby $pa_i$ is any realization of the unique minimal set of variables $PA_i$ in $V \setminus V_i$ (parent variables) that is sufficient for representing $f_i$, and similarly for $U_i \subseteq U$. According to Pearl, particular “causal worlds” are determined by instances of exogenous variables. Structural equations encode causal information, whereby anything left of the equal sign is the effect and anything right of the equal sign is the cause. Therefore, here the equal sign conveys the asymmetrical relation “is determined by.”

In Pearl’s (2012) theory, structural equations are intended to be modular, whereby one equation can be modified without changing the others. This allows the determination of three types of queries that can be asked with respect to a causal model: (1) predictions, i.e., will X happen in the event of Y? (2) interventions, i.e., will X happen if we make sure that event Y occurs? (3) counterfactuals, i.e., would X have occurred had event Y occurred, given context Z? Bochman and Lifschitz (2015) have shown that prediction questions can be answered using deductive inferences from a logical description of the causal world. Answers to intervention and counterfactual questions rely on a submodel $M_x$ of a larger model M, where x is a realization of a set of variables X from V. This is a causal model $M_x = \langle U, V, F_x \rangle$ obtained from M, whereby the set of functions F is replaced by the following set:

$$F_x = \{f_i : V_i \notin X\} \cup \{X = x\}$$

$F_x$ is therefore formed by deleting from F the functions $f_i$ that correspond to the members of set X and instead using the set of constant functions X = x. The submodel $M_x$ can be understood as the result of performing an action X = x on M that produces the minimal change required for X = x to hold true under any values u of the background exogenous variables. This is Pearl’s submodel for evaluating counterfactuals that consider alternative situations, such as “Had X been x, would Y = y still hold as true?”

Just as with the earlier examples given with RFT, Boolean logic propositions (propositional formulas) can now be proposed, except this time within a causal structural equation model, called a Boolean structural equation, and specifically within an RFT framework. Here it is assumed that a set of propositions is partitioned into a set of background exogenous propositions and a finite set of explainable endogenous propositions. A Boolean structural equation, expressed as A = F, shows that F is a propositional formula in which the endogenous proposition does not appear. It also implies that a set of Boolean structural equations A = F should exist within a Boolean causal model for each endogenous proposition. A causal world solution of a Boolean causal model M is any propositional interpretation that satisfies the equivalences for all equations A = F, in the Boolean causal model M.

As an illustration of how RFT can be applied this way, consider the classic firing squad example (Pearl, 2000). Here, let U, C, A, B, and D, respectively, represent the following five propositions: (1) “Court orders the execution,” (2) “Captain gives the signal,” (3) “Rifleman A shoots,” (4) “Rifleman B shoots,” (5) “Prisoner dies.” This story of propositions can be formalized within the causal model M, where U is the only exogenous proposition within this model, and this is stated as $\{C = U,\ A = C,\ B = C,\ D = A \vee B\}$. There are only two solutions to this: the first being that all propositions are true, and the other being that all propositions are false. Static queries can be answered about this causal model; for example, causal model M implies that $\neg A \rightarrow \neg D$, and this implication is satisfied by both solutions of M.

In a different scenario, a subset X of the endogenous propositions of the Boolean causal model M can be evaluated, whereby, for a truth-valued function I on X, the submodel of M is denoted by $M_I^X$. Here, every equation A = F is replaced with A = I(A), where $A \in X$, to form the submodel $M_I^X$ from M. Consider a new scenario in which the captain did not give the signal but rifleman A shoots anyway, so that the prisoner dies and rifleman B does not shoot. This scenario is evaluated with the submodel $M_I^{\{A\}}$ of M with I(A) = t. This is the function of A being true, which means the proposition of rifleman A shooting is also true. This can be denoted as $\{C = U,\ A = t,\ B = C,\ D = A \vee B\}$. As this submodel implies $\neg C \rightarrow D$ (the prisoner dies even when the captain does not give the signal, because rifleman A shoots anyway) and $\neg C \rightarrow \neg B$ (rifleman B does not shoot when the captain does not give the signal), and as these propositions are both true, this new situation is also justified.
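The firing squad model and its submodel can be checked by brute-force enumeration. The sketch below is a hedged illustration rather than an implementation from the cited literature: each Boolean structural equation is a small function of the world, `solutions` lists every truth assignment that satisfies all equations, and `submodel` replaces selected equations with constants. All function names are assumptions.

```python
from itertools import product

def solutions(equations, exogenous=("U",)):
    """Return every truth assignment in which each equation A = F holds."""
    names = list(exogenous) + list(equations)
    found = []
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        if all(world[var] == formula(world) for var, formula in equations.items()):
            found.append(world)
    return found

# Boolean structural equations: C = U, A = C, B = C, D = A or B.
model = {
    "C": lambda w: w["U"],
    "A": lambda w: w["C"],
    "B": lambda w: w["C"],
    "D": lambda w: w["A"] or w["B"],
}

def submodel(equations, interventions):
    """Replace the equation of each intervened proposition with a constant."""
    replaced = dict(equations)
    for var, value in interventions.items():
        replaced[var] = lambda w, value=value: value
    return replaced

all_worlds = solutions(model)

# Intervention: rifleman A shoots regardless of the captain's signal.
intervened = solutions(submodel(model, {"A": True}))
no_signal = [w for w in intervened if not w["C"]]
print(len(all_worlds), no_signal)
```

The original model has exactly two solutions (all true or all false), while in the submodel the world without a signal has the prisoner dying and rifleman B not shooting.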

This can be expanded more directly to the logical and set-theoretic RFT interpretation of relational framing properties through causal calculus, a logical theory of causal reasoning (relating to action and change) that emerged in the artificial intelligence (AI) literature but can also be applied to SEM. Largely based on work by Geffner (1994), causal calculus was introduced by McCain and Turner (1997) and generalized using first-order logic by Lifschitz (1997). The logical basis for causal calculus was first described by Bochman (2003), and its use as a general-purpose nonmonotonic formalism has also been explored (Bochman, 2004, 2007).

This allows for the establishment of propositional causal rules, such as A causes B, expressed as $A \Rightarrow B$, where both A and B are propositional formulas. A set of propositional causal rules makes up a propositional causal theory. Nonmonotonic semantics of a causal theory can also be expressed, where for a causal theory Δ and a set u of propositions, Δ(u) denotes the set of propositions that are caused by u in Δ, such as

$$\Delta(u) = \{B \mid A \Rightarrow B \in \Delta \text{ for some } A \in u\}$$

In an RFT example, the frame of causation can be explicitly represented within a structural equation model in the following set of propositions, which represents a modified version of the firing squad causal chain example and gives a maladaptive behavioral scenario. The propositions are as follows: (A) “A spider specialist explains that poisonous spiders exist in the local woods”; (B) “You fear poisonous spiders and now fear walking through the woods”; (C) “You avoid walking through the local woods”; (D) “You learn avoidance keeps you safe”; (E) “You avoid all experiences which make you feel unsafe.” This can be expressed through a causal chain, denoted as:

$$A \Rightarrow B \Rightarrow C \Rightarrow D \Rightarrow E$$

Here, there are four causal theories Δ and five propositions, whereby each causal theory consists of a set of two propositions and leads to another causal theory within the chain of the same number of propositions. This gives an example of how runaway thoughts have causal effects within an RFT network (and how possible self-isolative behavior occurs). These types of causal chains can be developed into RFT structure graphs along with functions such as ToF.
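The causal chain can be explored computationally by treating each causal rule as an ordered pair and repeatedly applying Δ(u). This is a minimal sketch under stated assumptions: the helper names `caused_by` and `causal_closure` are hypothetical, and removing the rule from C to D is only an illustrative stand-in for an intervention that undermines one link of the chain.

```python
# Causal rules of the avoidance chain: each pair (cause, effect) is one rule.
rules = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E")]

def caused_by(rules, u):
    """Delta(u): the propositions directly caused by the propositions in u."""
    return {effect for cause, effect in rules if cause in u}

def causal_closure(rules, start):
    """Everything eventually reached by repeatedly applying Delta from start."""
    reached = set(start)
    frontier = set(start)
    while frontier:
        frontier = caused_by(rules, frontier) - reached
        reached |= frontier
    return reached

direct = caused_by(rules, {"A"})
runaway = causal_closure(rules, {"A"})

# Illustrative intervention: the link from avoidance (C) to learned safety (D) is removed.
weakened = [rule for rule in rules if rule != ("C", "D")]
contained = causal_closure(weakened, {"A"})
print(direct, sorted(runaway), sorted(contained))
```

With all four rules intact, the initial proposition A propagates to generalized avoidance E; with one link removed, the chain stops at C.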

Expressing causal logic through propositional idiographic graphs of graph theory

These sets of propositional logic expressed as causal theories within an RFT framework, as well as the other forms of mathematical relational frame logic mentioned, can be visualized within a graph using graph theory. This is provided to expand on current PBT approaches within the EEMM, which use process-based networks as part of their analysis at the individual idiographic level (Hofmann et al., 2021). There are existing graph theory packages available in R, such as SEMgraph, that specialize in modeling SEM graphs (Palluzzi and Grassi, 2021), as well as specialist non-SEM graph modeling approaches that focus specifically on RFT properties such as mutual entailment bidirectionality (Smith and Hayes, 2022). However, to date, no approach combines propositional causal logic, SEM, and RFT in a way that comprehensively captures complex relational framing dynamics within causal networks and could be applied to PBT research.

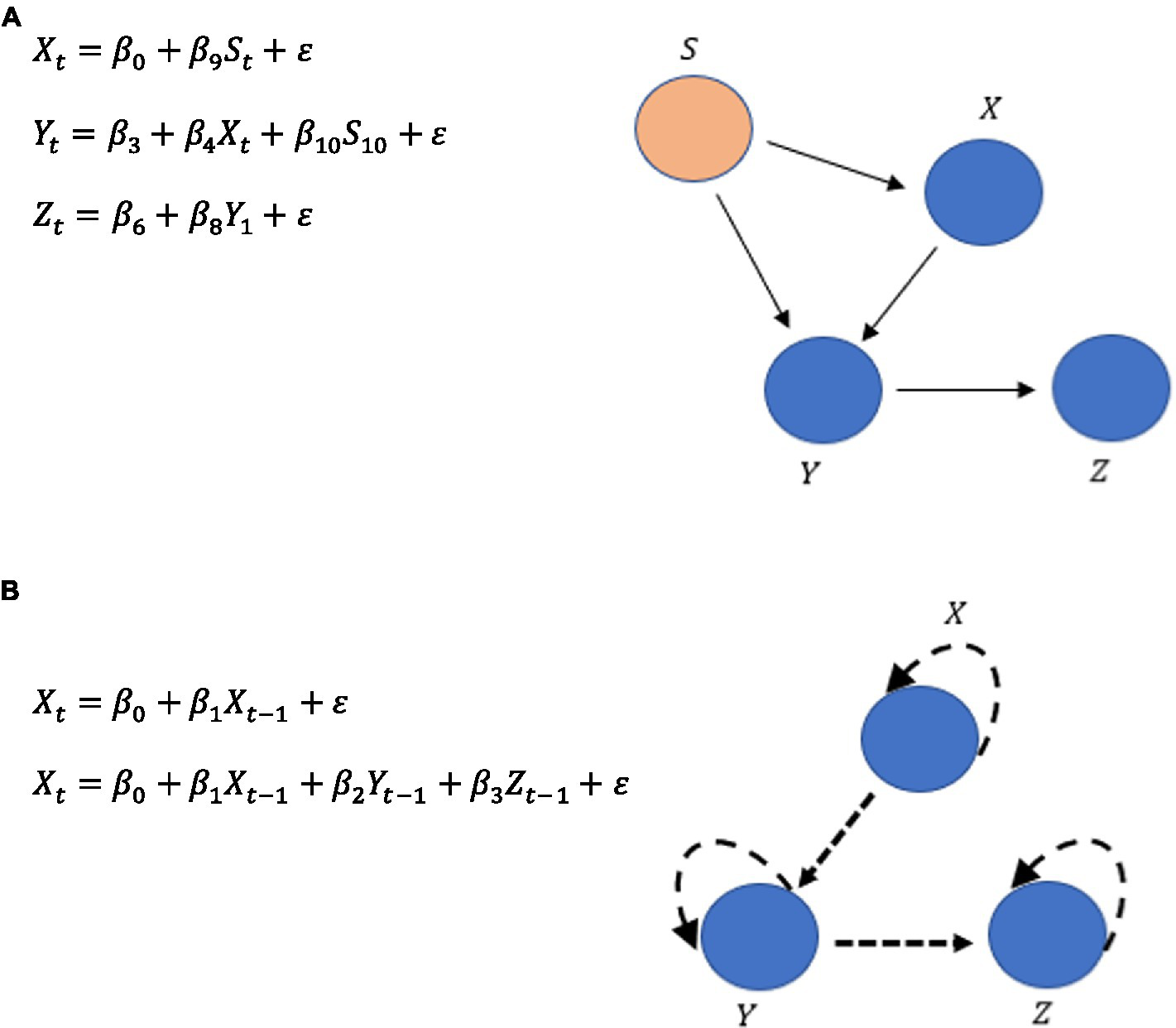

Within graph theory, there are three types of path diagrams: (1) directed acyclic graphs (DAG) with values for each path within the graph in the form of beta coefficients ($\beta_{jk} \neq 0$), giving an indication of which paths or edges within the graph have the most influence on some dependent measure (DV) within the network. In this case, all potential covariances are assumed null ($\psi_{jk} = 0$); (2) covariance models whereby only covariances can have a value other than zero ($\psi_{jk} \neq 0$) while coefficients can only equal zero ($\beta_{jk} = 0$); (3) bow-free acyclic path graphs (BAP), which have both bidirectional covariance relations ($\psi_{jk} \neq 0$) and acyclic directed edges ($\beta_{jk} \neq 0$). Bidirectional covariance only occurs when the k-th and the j-th variables do not share a directed edge, so if $\beta_{jk} \neq 0$ then $\psi_{jk} = 0$.
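The three path diagram types can be distinguished with a simple structural check. The sketch below is an assumption-laden illustration, not part of SEMgraph: directed edges stand for nonzero coefficients, bidirected edges for nonzero covariances, and the function names and return labels are hypothetical.

```python
def has_cycle(nodes, directed):
    """Depth-first search for a directed cycle."""
    children = {n: [] for n in nodes}
    for parent, child in directed:
        children[parent].append(child)
    state = {n: 0 for n in nodes}  # 0 = unvisited, 1 = in progress, 2 = finished

    def visit(n):
        state[n] = 1
        for c in children[n]:
            if state[c] == 1 or (state[c] == 0 and visit(c)):
                return True
        state[n] = 2
        return False

    return any(state[n] == 0 and visit(n) for n in nodes)

def classify(nodes, directed, bidirected):
    """Label a path diagram from its directed and bidirected edge lists."""
    if has_cycle(nodes, directed):
        return "cyclic"
    if not bidirected:
        return "DAG"
    if not directed:
        return "covariance model"
    linked = {frozenset(edge) for edge in directed}
    # Bow-free: no pair of nodes shares both a directed and a bidirected edge.
    if all(frozenset(edge) not in linked for edge in bidirected):
        return "BAP"
    return "contains a bow"

nodes = ["A", "B", "C"]
print(classify(nodes, [("A", "B"), ("B", "C")], []))
print(classify(nodes, [("A", "B")], [("B", "C")]))
```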

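An SEM path diagram consists largely of linear regression equations and can be represented by a graph G = (V, E), whereby the variables can be expressed as a set of nodes V and connections can be expressed as the set of edges E. The set of edges E can include both bidirectional edges, $(k \leftrightarrow j) \in E$ if $\psi_{jk} \neq 0$, to account for covariation, as well as directed edges, $(k \rightarrow j) \in E$ if $\beta_{jk} \neq 0$, to account for the direct path coefficients, and can be determined by the following:

$$Y_j = \sum_{k \in pa(j)} \beta_{jk} Y_k + U_j, \quad j \in V \tag{6}$$

$$\operatorname{cov}(U_j, U_k) = \begin{cases} \psi_{jk} & \text{if } j = k \text{ or } k \in sib(j) \\ 0 & \text{otherwise} \end{cases} \tag{7}$$

Here, $Y_j$ is an observed variable, and $U_j$ is an unobserved error term; $pa(j)$ denotes the parents of node j (nodes with a directed edge into j), and $sib(j)$ denotes its siblings (nodes joined to j by a bidirectional edge). The regression coefficients are expressed as $\beta_{jk}$, while covariance is expressed as $\psi_{jk}$ and indicates that the errors are dependent. This assumes that there is an unobserved latent confounder between k and j.

Equations 6 and 7 can be written in matrix form as Y = BY + U and $\operatorname{cov}(U) = \Psi$. Given random variables with a zero-mean vector, the joint distribution of the p variables Y is characterized by the covariance matrix:

$$\Sigma = (I - B)^{-1} \Psi (I - B)^{-T}$$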
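As a brief worked step, the model-implied covariance matrix follows from the matrix form Y = BY + U by solving for Y, assuming that I − B is invertible (which holds for acyclic path diagrams) and writing Ψ for the covariance matrix of the errors U. The two-variable case at the end is a hypothetical illustration with a single path coefficient β from the first variable to the second and uncorrelated errors.

```latex
\begin{aligned}
Y &= BY + U \\
(I - B)\,Y &= U \\
Y &= (I - B)^{-1} U \\
\Sigma = \operatorname{cov}(Y) &= (I - B)^{-1}\,\operatorname{cov}(U)\,(I - B)^{-T}
  = (I - B)^{-1}\,\Psi\,(I - B)^{-T} \\[6pt]
\text{Example: } B &= \begin{pmatrix} 0 & 0 \\ \beta & 0 \end{pmatrix},\quad
\Psi = \begin{pmatrix} \psi_{11} & 0 \\ 0 & \psi_{22} \end{pmatrix}
\;\Rightarrow\;
\Sigma = \begin{pmatrix} \psi_{11} & \beta\,\psi_{11} \\ \beta\,\psi_{11} & \beta^{2}\psi_{11} + \psi_{22} \end{pmatrix}
\end{aligned}
```

In the example, the variance of the second variable combines the variance transmitted along the path from the first variable with its own error variance.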

However, given that an idiographic approach is chosen here, and not a nomothetic one, certain ecological momentary assessments (EMA)-type questionnaires could capture the RFT properties (for a full discussion, see Supplementary material 8).

There are many ways EMA data can be analyzed, and one particularly useful approach at this idiographic level of assessment is the Group Iterative Multiple Model Estimation (GIMME; Gates and Molenaar, 2012). The algorithm is useful for time series data with at least 60 observations per person and currently fewer than 25 variables (Lane et al., 2019). The GIMME algorithm, at its core, searches for common and unique dynamic processes among individuals. It uses Lagrange multiplier diagnostics (Sörbom, 1975), and it considers paths that significantly exist for the majority of individuals. This can be expanded with the idiographic filter and the Model Implied Instrumental Variables with Two-Stage Least Squares Estimation (MIIV-2SLS). Latent Variable Group Iterative Multiple Model Estimation (LV-GIMME) has been suggested as optimal for individual-level time series data in psychological studies (Gates et al., 2020). This contrasts with full information estimators such as the maximum likelihood (ML) of typical normative-level SEM, which estimates the measurement model coefficients as influenced by values at the latent variable model level. MIIV-2SLS instead allows for an estimation of latent values across time at the individual level for all relations of the dynamic factor model (DFM). As such, LV-GIMME operates within an SEM in a dynamic factor analysis framework for analysis of multivariate time series data (Molenaar, 1985), and this is given as follows:

$$\eta_t = A\eta_t + \Phi\eta_{t-1} + \zeta_t$$

Whereby t indexes time, and $t-1$ denotes the previous time interval. Φ is the P × P matrix, where P is the number of variables in $\eta_t$. The matrix contains the vector autoregressive (VAR) effects (coefficients) for the variables that predict the endogenous values. Therefore, Φ contains the VAR(1) coefficients of how prior time point values relate to the subsequent point in time. In the original GIMME algorithm, $\eta_t$ and $\eta_{t-1}$ are simply observed variables, but they are latent variables in this SEM approach of LV-GIMME. The P × P-dimensioned A matrix has contemporaneous relations among the endogenous variables. $\zeta_t$ is the P × 1 vector that contains the errors or disturbances and is assumed to have a mean of zero. GIMME, therefore, allows the identification of structures of directed relations (paths) among the variables in the time-series data. These relations are contained in the Φ and A matrices, both of dimension P × P. Crucially, once the SEM path structure is established, graph modeling can follow similarly as described for the traditional SEM.
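One update step of a model with contemporaneous relations (the A matrix) and lagged VAR(1) relations (the Φ matrix) can be sketched in plain Python. This is an illustration of the equation's structure only, not the GIMME or LV-GIMME estimation procedure: the coefficient values are hypothetical, and the sketch assumes A is strictly lower triangular so that each variable depends contemporaneously only on earlier-ordered variables.

```python
def step(A, Phi, eta_prev, zeta):
    """One step of eta_t = A*eta_t + Phi*eta_{t-1} + zeta_t.

    Assumes A is strictly lower triangular, so eta_t can be filled in order.
    """
    p = len(eta_prev)
    eta = [0.0] * p
    for i in range(p):
        lagged = sum(Phi[i][j] * eta_prev[j] for j in range(p))
        contemporaneous = sum(A[i][j] * eta[j] for j in range(i))
        eta[i] = contemporaneous + lagged + zeta[i]
    return eta

# Hypothetical two-variable example.
A = [[0.0, 0.0],
     [0.5, 0.0]]     # variable 2 depends on variable 1 at the same time point
Phi = [[0.5, 0.0],
       [0.0, 0.25]]  # each variable depends on its own previous value
eta_prev = [1.0, 2.0]
zeta = [0.0, 0.0]    # disturbances set to zero to keep the step deterministic

eta_now = step(A, Phi, eta_prev, zeta)
print(eta_now)
```

Setting the disturbances to zero isolates the contribution of the autoregressive and contemporaneous paths; in a simulation they would be drawn from a zero-mean distribution.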

Notably, the difference between a traditional normative cross-sectional SEM and the idiographic SEM is that a traditional normative model would have specific directional paths such as those seen in Figure 6A, whereas an idiographic time series version such as GIMME would include a vector autoregression that updates the nodes as additional data arrive across time, as shown in Figure 6B.

Figure 6

(A) Typical SEM pathways. (B) An illustration of the vector autoregressive updating in a time series idiographic SEM.

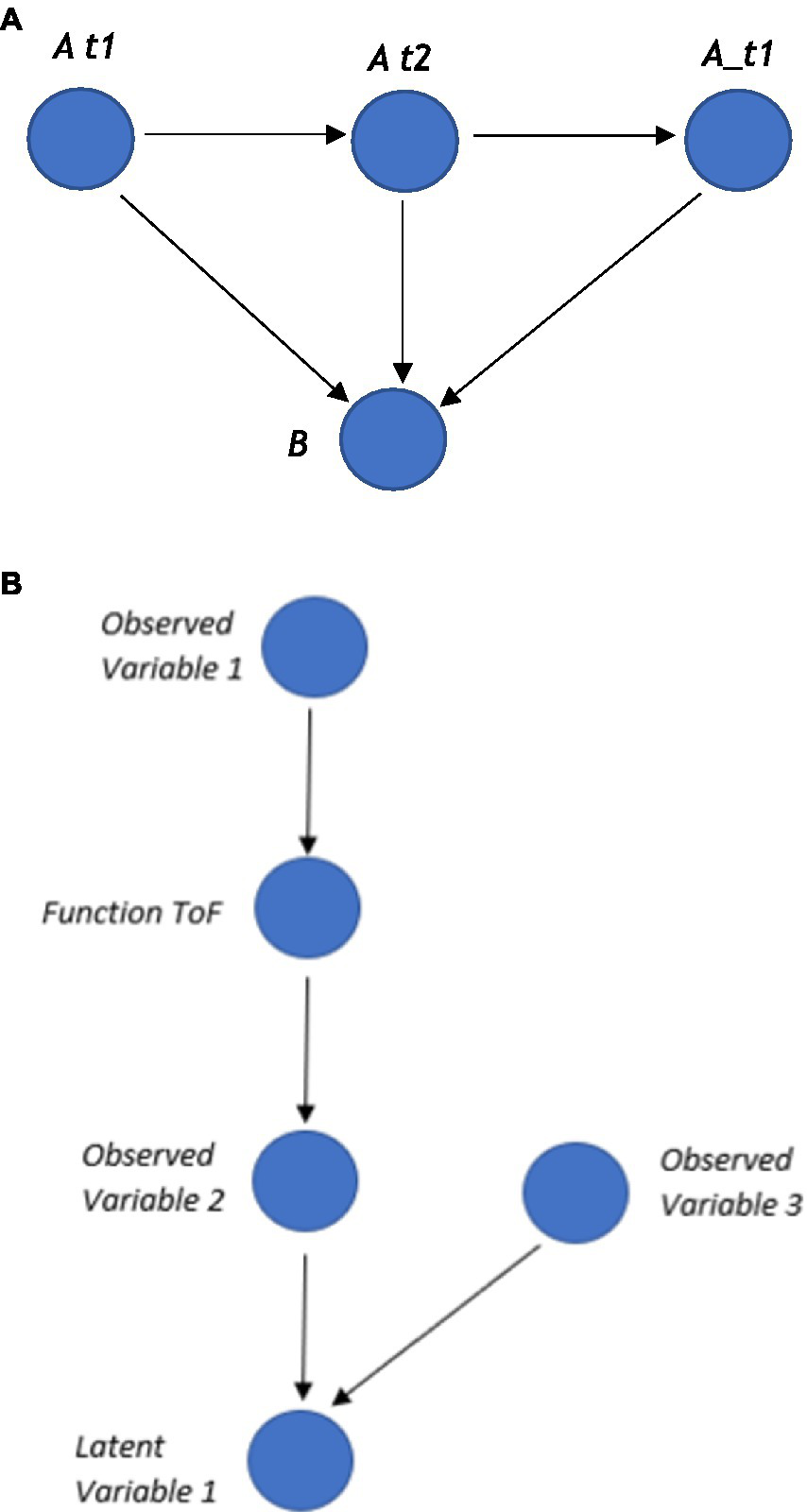

Crucially, these types of time series autoregressive SEMs can be represented within a graph, whereby nodes represent the variables and edges represent the relations between the nodes, such as the regression coefficients. In a time-series idiographic SEM, additional information about the different time points can be included. One way to represent these time points within a graph is to create a separate node for each time point and connect an edge from each time node to a variable node (as shown in Figure 7A), which can later be compressed to the final autoregressive nodes for visual simplicity. Functions, such as a ToF, can also be added to these types of time-series SEMs, as shown in Figure 7B.

Figure 7

(A) Three time points t1, t2, t3 and two variables A and B. (B) A function node between two observed variables that expresses some relation between these observed variables, such as latent_variable = f(observed_variable_1, observed_variable_2).

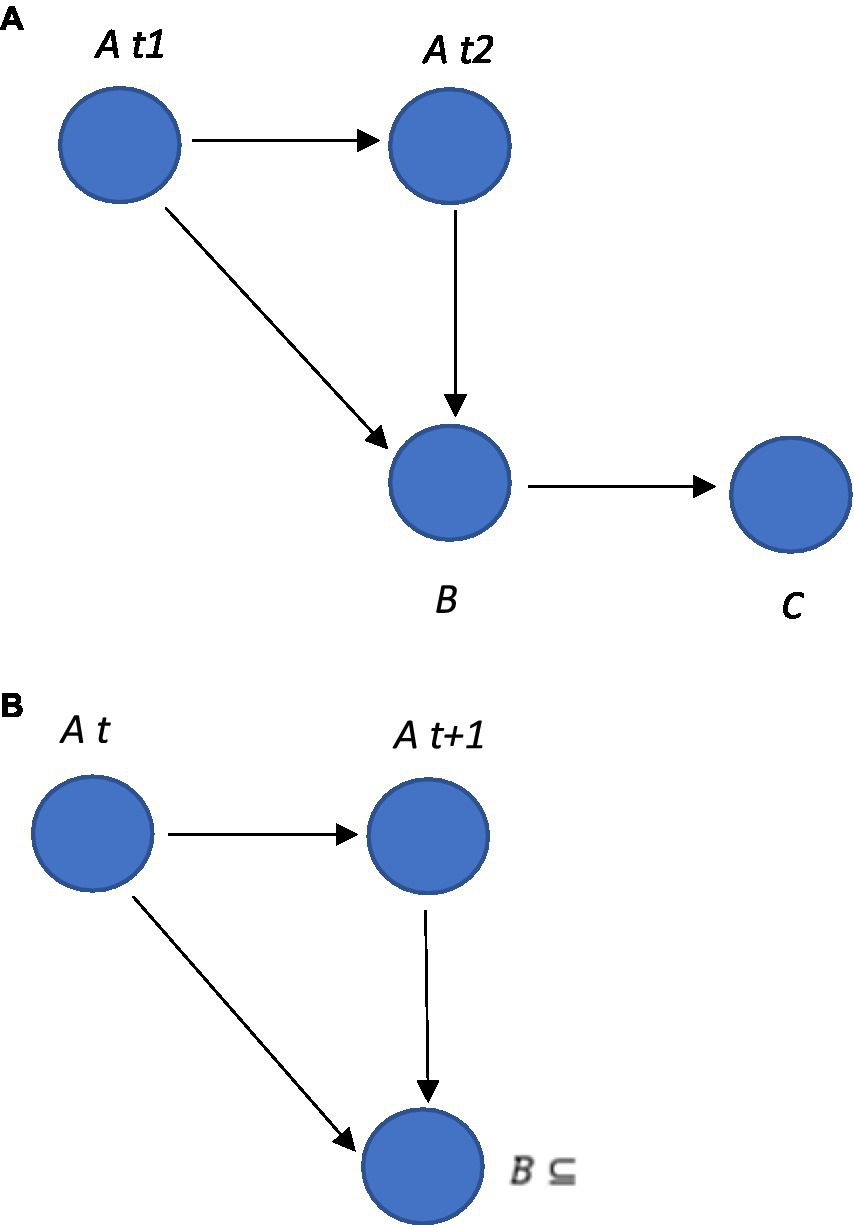

The VAR model can be represented within a graph that illustrates the autoregressive nodal relationships of an SEM, as illustrated in Figure 8A. These types of autoregressive SEM graphs can be developed in Python, as indicated by the example code in Supplementary material 9.
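To make the graph representation concrete, the sketch below converts a lagged coefficient matrix and a contemporaneous coefficient matrix into a list of directed, weighted edges. It is an illustration only and is not the code from Supplementary material 9. The function names, the node labels such as `A(t-1)`, the coefficient values, and the convention that entry [i][j] is the effect of variable j on variable i are all assumptions.

```python
def var_to_edges(names, A, Phi, tol=0.0):
    """Turn contemporaneous (A) and lagged (Phi) coefficients into edges.

    Assumed convention: entry [i][j] is the effect of variable j on
    variable i, so each edge runs from names[j] to names[i].
    """
    edges = []
    for i, target in enumerate(names):
        for j, source in enumerate(names):
            if abs(Phi[i][j]) > tol:
                edges.append((f"{source}(t-1)", f"{target}(t)",
                              {"weight": Phi[i][j], "lag": 1}))
            if abs(A[i][j]) > tol:
                edges.append((f"{source}(t)", f"{target}(t)",
                              {"weight": A[i][j], "lag": 0}))
    return edges

def adjacency(edges):
    """Build a nested-dict adjacency structure: graph[source][target] = attrs."""
    graph = {}
    for source, target, attrs in edges:
        graph.setdefault(source, {})[target] = attrs
        graph.setdefault(target, {})
    return graph

# Hypothetical three-variable model: A predicts itself over time,
# and B has a contemporaneous effect on C.
names = ["A", "B", "C"]
Phi = [[0.6, 0.0, 0.0],
       [0.0, 0.0, 0.0],
       [0.0, 0.0, 0.0]]
A_mat = [[0.0, 0.0, 0.0],
         [0.0, 0.0, 0.0],
         [0.0, 0.3, 0.0]]
edges = var_to_edges(names, A_mat, Phi)
graph = adjacency(edges)
```

The edge list uses `(source, target, attributes)` triples, which is also the form accepted by common graph libraries, so the same structure could be handed to one of them for plotting or further analysis.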

Figure 8

(A) An autoregressive VAR model within a graph. In this example, the graph includes three nodes, A, B, and C, and four edges. The edges from node A to node A represent the autoregressive relationships, indicating that variable A depends on its own past values. The edge from node B to node C represents the relationship between these variables, indicating that B depends on C. (B) The edge from node B at time t + 1 to node A could be described as $B(t+1) \subseteq A(t)$, which is interpreted as saying that the value of B at time t + 1 is a subset of the value of A at time t.

It is also possible to apply logic and set theory in an SEM time-series autoregressive model such as GIMME. In an SEM, variables can be represented using sets, and the relationships between variables can be described using logical operators such as “AND” and “OR”. For example, it is possible to specify a latent variable as a function of two observed variables (this in itself could be a function such as ToF or an operator such as AND expressed between the two observed variables) using a logical expression such as:

$$\text{latent\_variable}(t) = \text{observed\_variable\_1}(t) \land \text{observed\_variable\_2}(t)$$

This logical expression can be interpreted as stating that the latent variable is present at time t only when both of the observed variables are present at time t.

Set theory can be used in this type of autoregressive idiographic SEM to describe the relationship between variables (see Figure 8B). For example, it is possible to specify that a latent variable at time t + 1 is a subset of an observed variable at time t using set notation such as $\text{latent\_variable}(t+1) \subseteq \text{observed\_variable}(t)$. This is interpreted as saying that every element of the latent variable at time t + 1 is also an element of the observed variable at time t, which may contain some additional elements. This naturally allows the RFT relational frames (such as ToF, opposition, and mutual entailment) to be expressed as logic and sets and modeled within this time series autoregressive idiographic approach.
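The two ideas above, a logical AND between observed variables and a lagged subset relation, can be sketched in a few lines of Python. This is only an illustration under simple assumptions: variables are coded as Boolean presence indicators or as sets of elements per time point, and the function names and example data are hypothetical.

```python
def latent_and(obs1, obs2):
    """Latent variable is present at time t only if both observed variables are."""
    return [a and b for a, b in zip(obs1, obs2)]

def lagged_subset(later_sets, earlier_sets):
    """Check that later_sets[t + 1] is a subset of earlier_sets[t] for every t."""
    return all(later_sets[t + 1] <= earlier_sets[t]
               for t in range(len(earlier_sets) - 1))

# Presence (True) or absence (False) of two observed variables at t1, t2, t3.
observed_variable_1 = [True, True, False]
observed_variable_2 = [True, False, False]
latent_variable = latent_and(observed_variable_1, observed_variable_2)

# Set-valued coding of an observed and a latent variable at t1, t2, t3.
observed_sets = [{"a", "b", "c"}, {"a", "b"}, {"b"}]
latent_sets = [set(), {"a", "b"}, {"b"}]
subset_holds = lagged_subset(latent_sets, observed_sets)
```

Here `latent_variable` is true only at the first time point, and `subset_holds` is true because the latent set at each later time point contains nothing that was absent from the observed set one step earlier.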

Overcoming logical paradoxes of self-reference and why the observer self needs to be specified mathematically outside of formal axioms

Although specifying “self” leads to no problems in formal logic in the vast majority of cases, self-reference within logic can, in some limited instances, lead to statements that can be shown to be true yet are paradoxical in nature. This has important consequences when modeling the “self” within graphs. As an example, Hofstadter, in his books Gödel, Escher, Bach: An Eternal Golden Braid and I Am a Strange Loop (Hofstadter, 1979, 2007), referred to the properties of self-referential systems, as demonstrated in Gödel’s incompleteness theorems (Gödel, 1931) and other areas, as leading to paradoxes, which he refers to as strange loops. Penrose and Lucas made similar arguments, suggesting that the mind and consciousness are beyond computation given Gödel’s incompleteness theorems (Lucas, 1961; Penrose and Mermin, 1990; Penrose, 1994). The full arguments for these are made in Supplementary material 10.

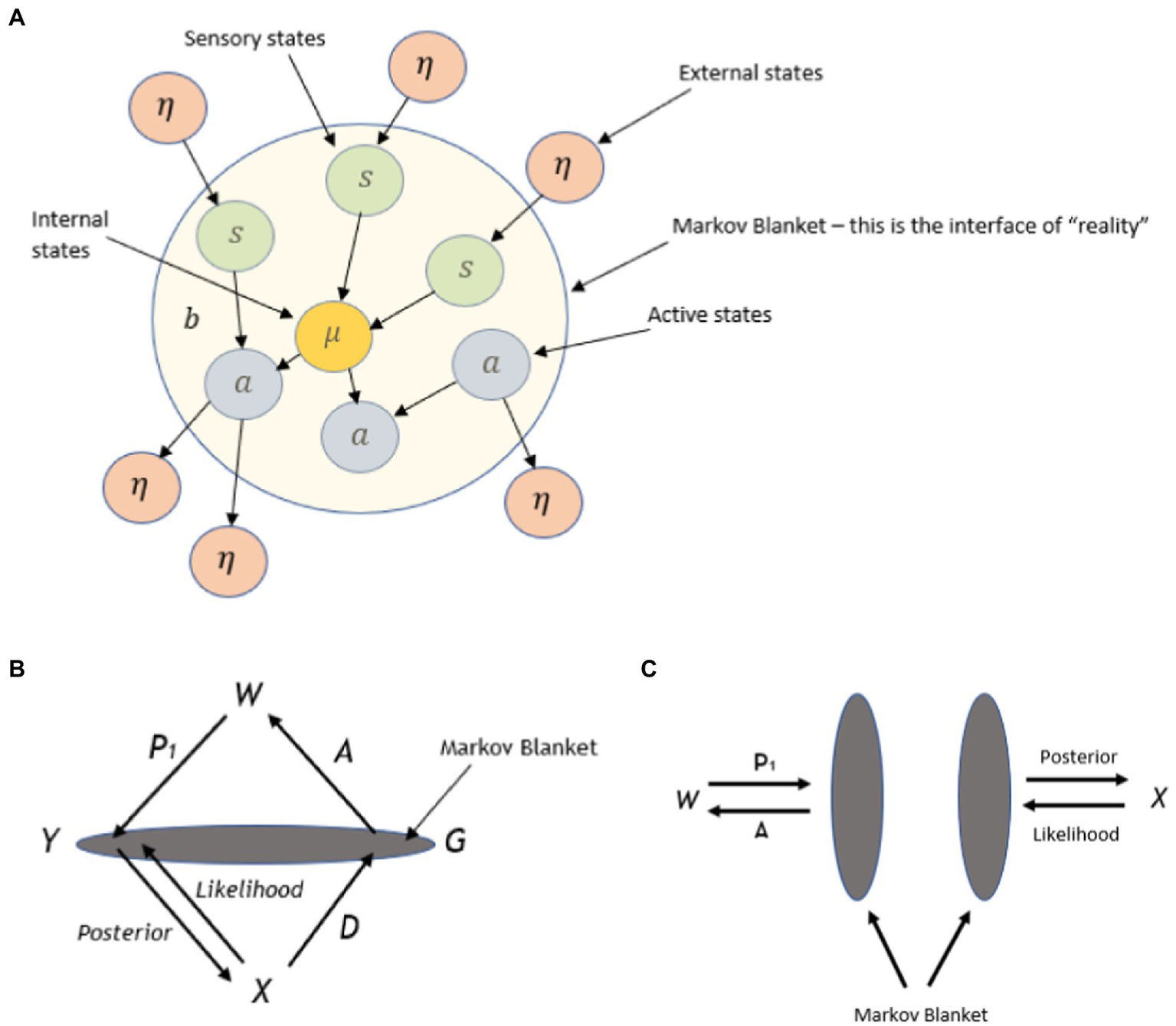

The observer self, evolutionary interface theory, and Markov kernels

One potentially useful avenue for modeling the observer self and avoiding self-referential paradoxes is the interface theory of perception (ITP) of Hoffman and colleagues (Hoffman et al., 2015; Hoffman, 2016), whereby perceptual systems provide an organism-specific user interface shaped by evolutionary fitness rather than veridical representations of the environment. Hoffman and colleagues take ITP one step further by formally defining what they call a conscious agent (CA; Hoffman and Prakash, 2014; Fields et al., 2018), which is applied as a minimal, universally applicable formal model of conscious perception and behavior, potentially including the self as an observer of experience. The CA is assumed to provide a universal representation of perceptual and cognitive processes in the context of ITP. There is also an assumption of consistency between CA and ITP, in the sense that a CA cannot respond (behave in response) to stimuli in the environment if the ITP does not detect them (Hoffman and Prakash, 2014). This mathematical expression of the CA, which uses a Markov kernel, may offer a useful fit for defining the conscious observer self in ACT and RFT in order to prevent issues with self-reference paradoxes in strange loop logical systems. Notably, this can be implemented in graphs alongside other logical RFT relational frame statements in a mathematically consistent and complete way by utilizing a Markov blanket, which would separate the observer self from entanglement with logical propositional thoughts (i.e., self as content). Notably, conscious phenomenology in this approach is intended to model human phenomenology, whereby language plays a role in shaping phenomenology through concepts and categories that are verbally learned (from a Hebbian network) from the environment within the interface.

Crucially, ITP and CA can be regarded as ontologically neutral, given that perception and phenomenological experience are more generally bound to a fitness function rather than some form of mentalism. This aligns ITP away from a cognitive naïve realism position and more in line with the behavioral pragmatism of RFT, which also holds an a-ontological position (Barnes-Holmes, 2005; Codd, 2015; Monestes and Villatte, 2015). This means that RFT could be interpreted through this (functional) interfacing approach based on evolutionary fitness rather than a cognitively veridical phenomenological experience. It is therefore conceptually a good fit for mathematically describing the observer self of ACT and RFT as well as the self within EEMM more generally.

The CA framework can be formally defined mathematically, which allows perceptions, decisions, and actions to be defined within a measurable space (definition 1 is of the Markov kernel, and definition 2 is of the CA):

Definition 1. Let $\langle B, \mathcal{B} \rangle$ and $\langle C, \mathcal{C} \rangle$ be measurable spaces, whereby $\mathcal{B}$ and $\mathcal{C}$ are σ-algebras, i.e., collections of measurable subsets (called events) of B and C, respectively. Then equip the unit interval $[0, 1]$ with its Borel σ-algebra. The function $K: B \times \mathcal{C} \to [0, 1]$ is a Markovian kernel from B to C if

(i) For each measurable set $E \in \mathcal{C}$, the function $K(\cdot, E): B \to [0, 1]$ is a measurable function.

(ii) For each $b \in B$, the function $K(b, \cdot): \mathcal{C} \to [0, 1]$ is a probability measure on C.
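For intuition, a Markovian kernel between finite sets reduces to a table that assigns to every element of B a probability distribution over C. The sketch below checks condition (ii) and evaluates the kernel on an event. It assumes finite sets with all subsets treated as events, in which case the measurability condition (i) holds automatically. The dictionary layout, the function names, and the weather example are hypothetical.

```python
import math

def is_markov_kernel(kernel, tol=1e-9):
    """Check that every b in B maps to a probability distribution over C.

    kernel: dict mapping each b to a dict {c: probability}.
    """
    for dist in kernel.values():
        if any(p < 0 for p in dist.values()):
            return False
        if not math.isclose(sum(dist.values()), 1.0, abs_tol=tol):
            return False
    return True

def kernel_of_event(kernel, b, event):
    """Probability, given b, that the outcome falls in the event (a subset of C)."""
    return sum(p for c, p in kernel[b].items() if c in event)

# Hypothetical kernel from B = {rain, sun} to C = {wet, dry}.
K = {
    "rain": {"wet": 0.9, "dry": 0.1},
    "sun": {"wet": 0.2, "dry": 0.8},
}
valid = is_markov_kernel(K)
p_wet_given_rain = kernel_of_event(K, "rain", {"wet"})
```

Each row of the table plays the role of the probability measure in condition (ii), and `kernel_of_event` sums that measure over the chosen event.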

A CA can be represented as a directed graph, as illustrated in Figure 9. The graph demonstrates a cyclic process, whereby the kernel D can be thought of in the following way: for each instantiation $g_0 \in G$ of G in the immediately previous cycle and the current instantiation $x \in X$ of X, $D(x, g_0; \cdot)$ gives the probability distribution of the $g \in G$ instantiated in the next step. Kernels A and P are interpreted in the same way. To put it more formally:

Figure 9

Illustration of the conscious agent (CA) as a three-node graph. W (world), X (experience), and G (conscious action) are measurable sets, while P (perceive), D (decision), and A (action) are Markovian kernels, and t is an integer parameter [Reprinted with permission from Elsevier (Fields et al., 2018)].

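Definition 2. Let $\langle W, \mathcal{W} \rangle$, $\langle X, \mathcal{X} \rangle$, and $\langle G, \mathcal{G} \rangle$ be measurable spaces. Let P be a Markovian kernel $P: W \times X \to X$, D be a Markovian kernel $D: X \times G \to G$, and A be a Markovian kernel $A: G \times W \to W$. A CA is a 7-tuple $[(X, \mathcal{X}), (G, \mathcal{G}), (W, \mathcal{W}), P, D, A, t]$, where t is a positive integer parameter.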

W is interpreted as the elements of the world, and X and G are interpreted as consisting of tokens that represent different conscious experiences and actions, respectively. Kernels P, D, and A represent perception, decision, and action (behavior) operators. Any operator that changes the state of X (conscious experience) is regarded as “perception,” any operator that changes the state of G (conscious action) is regarded as a “decision,” and any operator that changes the state of W (world state) is regarded as an “action.” The perception set X takes all phenomenological representations of experience, not just visual, i.e., all modalities. Set G and kernel A are similarly multimodal, and perception can also be viewed as an action performed by the world. When states W, X, and G change, kernels P, D, and A act in response, respectively. Decisions D and actions A of the CA are assumed to be freely chosen and not deterministic (particularly when directed by the observer self rather than self as content), and as such, these operators are treated as stochastic in the general case. CA-specific proper time is denoted by t and “ticks” incrementally and concurrently with the action of decisions D and the change of state of X (hence it is applicable to idiographic time series analysis such as in PBT). No assumptions are made about what X contains, for example, whether it contains tokens representing the value of t or the elements of G. Further details of this approach are given in Supplementary material 11.
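The cyclic perceive, decide, and act process can be illustrated with a small stochastic simulation. This is a toy sketch under strong assumptions, not the formal model: the sets W, X, and G are finite, each kernel is stored as a lookup table from a pair of current states to a probability distribution, and the three kernels are applied in the fixed order P, D, A within each tick of t. All state labels, probabilities, and function names are hypothetical.

```python
import random

def sample(dist, rng):
    """Draw one outcome from a dict {outcome: probability}."""
    outcomes = list(dist)
    weights = [dist[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

def run_agent(P, D, A, w, x, g, n_steps, seed=0):
    """Iterate perception (P), decision (D), and action (A) for n_steps ticks."""
    rng = random.Random(seed)
    history = []
    for t in range(1, n_steps + 1):
        x = sample(P[(w, x)], rng)  # perception: world state changes experience
        g = sample(D[(x, g)], rng)  # decision: experience changes chosen action
        w = sample(A[(g, w)], rng)  # action: chosen action changes the world
        history.append((t, w, x, g))
    return history

# Hypothetical finite state sets.
W = ["food", "no_food"]
X = ["see_food", "see_nothing"]
G = ["approach", "wait"]

# For simplicity, each kernel here depends only on the first element of the pair.
P = {(w, x): ({"see_food": 0.9, "see_nothing": 0.1} if w == "food"
              else {"see_food": 0.1, "see_nothing": 0.9})
     for w in W for x in X}
D = {(x, g): ({"approach": 0.8, "wait": 0.2} if x == "see_food"
              else {"approach": 0.2, "wait": 0.8})
     for x in X for g in G}
A = {(g, w): ({"food": 0.3, "no_food": 0.7} if g == "approach"
              else {"food": 0.6, "no_food": 0.4})
     for g in G for w in W}

history = run_agent(P, D, A, w="food", x="see_nothing", g="wait", n_steps=10)
```

Each entry of `history` records the tick t together with the world state, experience, and action after that cycle, which yields the kind of individual-level time series that the idiographic analyses discussed earlier take as input.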

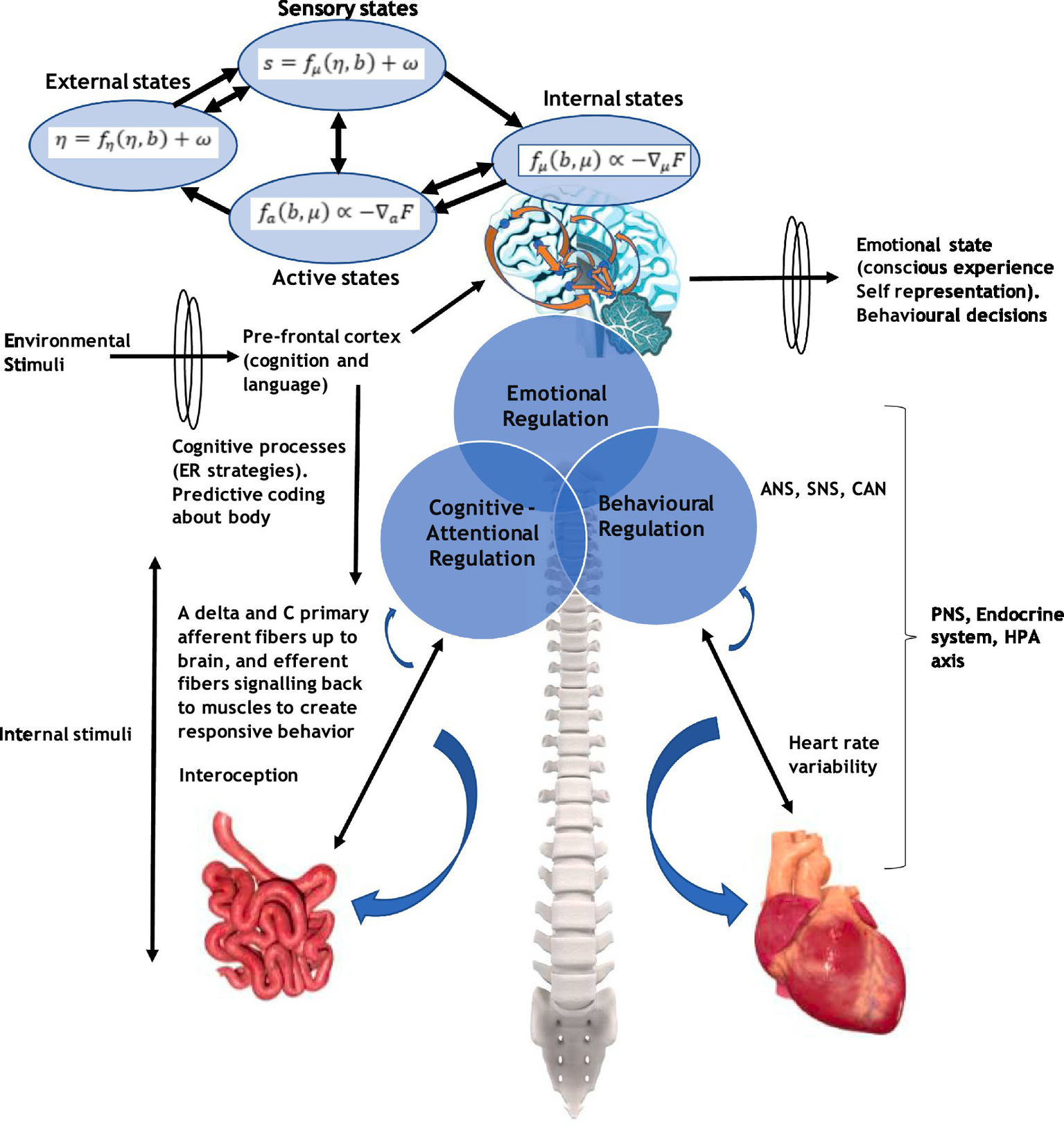

Embodied cognition, entropy, Markov blanket, CA, and self

An important construct of the EEMM is the level of psychobiology and how this relates to, for instance, the dimension of “self.” A conscious agent of “self” would receive many inputs from brain neurons and interoceptively through the body. Embodied cognition and interoception play a major role in shaping a meta-representation of “self.” These are relevant to ACT and RFT concepts as, for example, ACT embodied knowledge (such as being aware, engaged, and open) has been identified as relevant in participants naïve to ACT and used to describe (or score) several bodily postures (Falletta-Cowden et al., 2022) that could be interoceptively related. Embodiment, such as interoceptive awareness and related vagal function, has been shown to play an important role in emotional regulation and coping (Pinna and Edwards, 2020). Full details of interoception and how this relates to brain structures that form a representation of “self” are given in Supplementary material 12. Notably, the underlying learning is considered Hebbian in nature, as described in a previous study (Edwards et al., 2022).

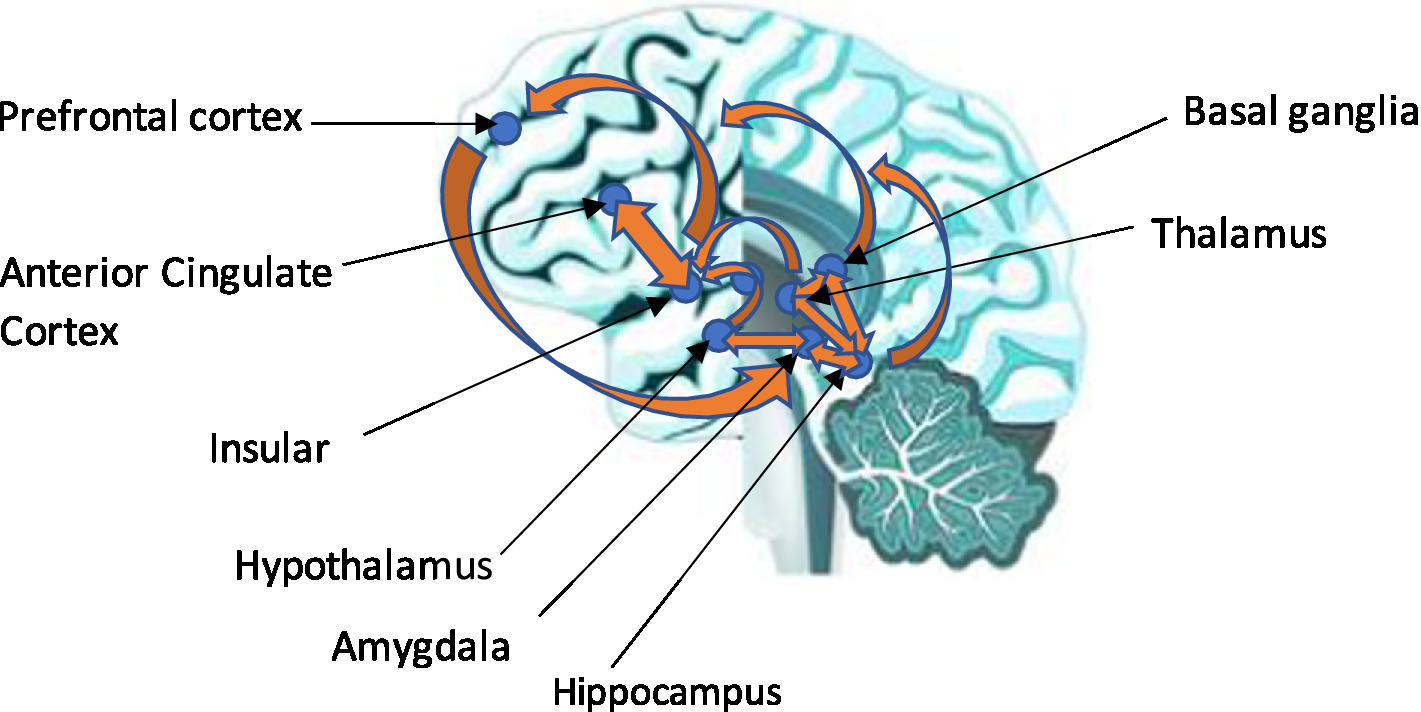

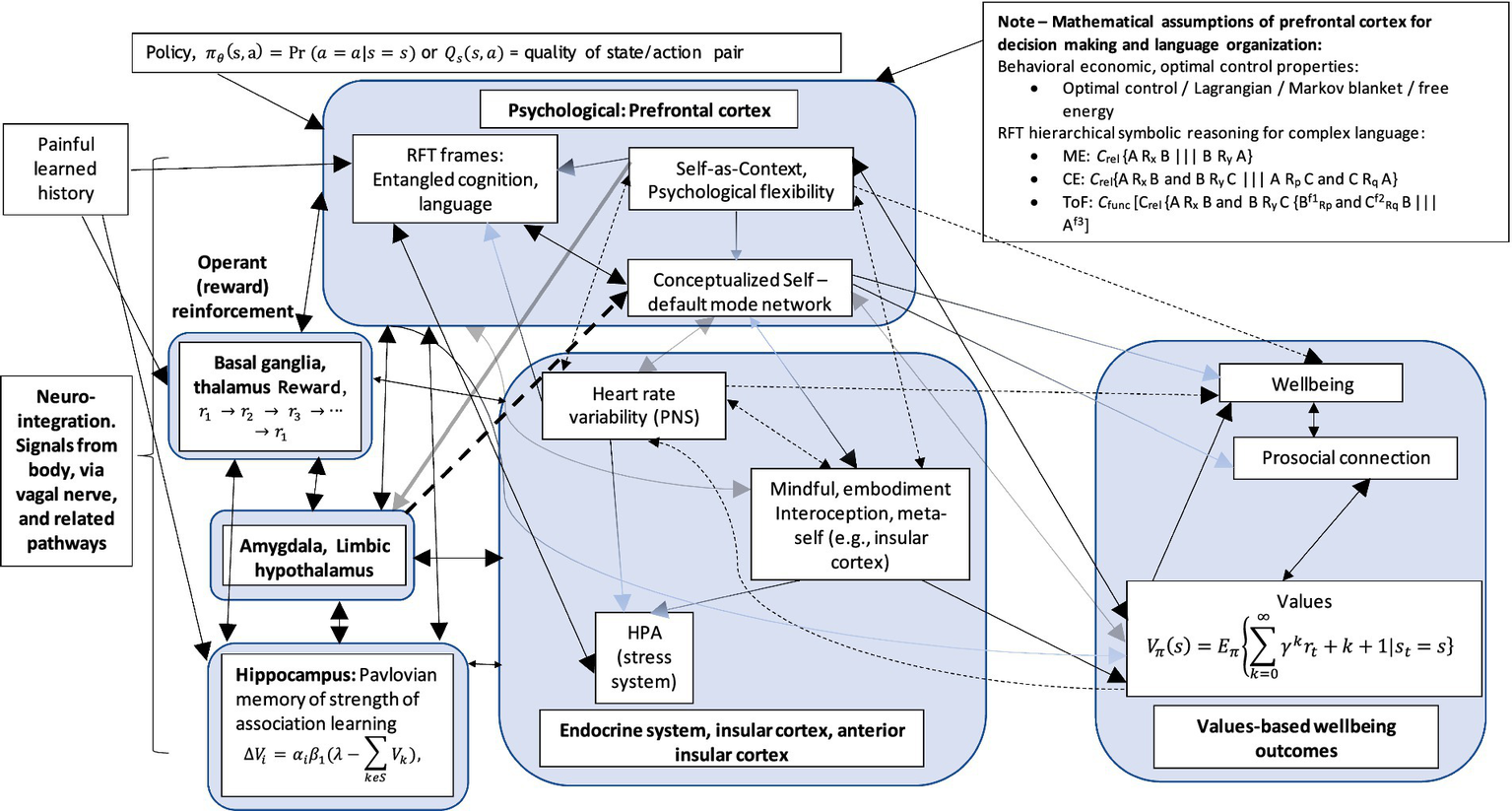

Similar connectivity has been found with major depressive disorder (MDD). For example, MDD, which is also a disorder of the regulation of mood and emotion, has been attributed to cortical–limbic circuitry (Kennedy et al., 2001). A review of the evidence suggested that abnormalities in the structure and function of the prefrontal cortex, anterior cingulate, hippocampus, and amygdala were responsible for depression (Davidson et al., 2002). It is perhaps interesting that the hippocampus was mentioned, as this is the area where associational learning, such as Pavlovian conditioning, takes place, and it may relay information to the prefrontal cortex, such as that situation “A” is scary. The prefrontal cortex can, perhaps, then make decisions about whether situation “A” should be avoided. Expanding on this, the hippocampus is thought to provide context-dependent information, as fear extinction is context-dependent and is thought to involve the inhibitory control of the prefrontal cortex over amygdala-based fear processes, whereby hippocampal-based contextual information is integrated with the prefrontal–amygdala circuitry (Sotres-Bayon et al., 2004). Central brain components and relevant feedback loops are illustrated in Figure 10. Figure 11 illustrates these connections leading to goals and values. Full mathematical accounts for generic relational frames, values, and associations can be found in the study by Edwards (2021).

Figure 10

A combined lateral and medial view of the limbic, insular cortex, and prefrontal cortex axes. The biopsychological level, neuro-integrated, and embodied network.

Figure 11

A potential example of a biopsychological level, neuro-integrated, and embodied network (NIEN). (1) Large arrowheads = greater excitatory effect; (2) Fading arrowhead = inhibitory effect; (3) Dashed line with directional arrowhead = mediating association; (4) Dashed line with bidirectional arrowheads = mediating or moderating association.